Abstract

Current challenges in early identification of autism spectrum disorder lead to significant delays in starting interventions, thereby compromising outcomes. Digital tools can potentially address this barrier as they are accessible, can measure autism-relevant phenotypes and can be administered in children’s natural environments by non-specialists. The purpose of this systematic review is to identify and characterise potentially scalable digital tools for direct assessment of autism spectrum disorder risk in early childhood. In total, 51,953 titles, 6884 abstracts and 567 full-text articles from four databases were screened using predefined criteria. Of these, 38 met inclusion criteria. Tasks are presented on both portable and non-portable technologies, typically by researchers in laboratory or clinic settings. Gamified tasks, virtual-reality platforms and automated analysis of video or audio recordings of children’s behaviours and speech are used to assess autism spectrum disorder risk. Tasks tapping social communication/interaction and motor domains most reliably discriminate between autism spectrum disorder and typically developing groups. Digital tools employing objective data collection and analysis methods hold immense potential for early identification of autism spectrum disorder risk. Next steps should be to further validate these tools, evaluate their generalisability outside laboratory or clinic settings, and standardise derived measures across tasks. Furthermore, stakeholders from underserved communities should be involved in the research and development process.

Lay abstract

The challenge of finding autistic children, and finding them early enough to make a difference for them and their families, becomes all the greater in parts of the world where human and material resources are in short supply. Poverty of resources delays interventions, translating into a poverty of outcomes. Digital tools carry potential to lessen this delay because they can be administered by non-specialists in children’s homes, schools or other everyday environments, they can measure a wide range of autistic behaviours objectively and they can automate analysis without requiring an expert in computers or statistics. This literature review aimed to identify and describe digital tools for screening children who may be at risk for autism. These tools are predominantly at the ‘proof-of-concept’ stage. Both portable (laptops, mobile phones, smart toys) and fixed (desktop computers, virtual-reality platforms) technologies are used to present computerised games, or to record children’s behaviours or speech. Computerised analysis of children’s interactions with these technologies differentiates children with and without autism, with promising results. Tasks assessing social responses and hand and body movements are the most reliable in distinguishing autistic from typically developing children. Such digital tools hold immense potential for early identification of autism spectrum disorder risk at a large scale. Next steps should be to further validate these tools and to evaluate their applicability in a variety of settings. Crucially, stakeholders from underserved communities globally must be involved in this research, lest it fail to capture the issues that these stakeholders are facing.

Keywords

Introduction

Autism spectrum disorder (ASD), which affects 1 in 132 people globally with little regional variation (Baxter et al., 2015), is characterised by persistent difficulties in social communication and behavioural flexibility (American Psychiatric Association, 2013). ASD is often comorbid with epilepsy, gastrointestinal disorders, sleep disorders and other neurodevelopmental disorders or conditions, such as intellectual disability and attention-deficit hyperactivity disorder (Doshi-Velez et al., 2014; Levy et al., 2010). Fine and gross motor atypicalities and sensory sensitivity are also commonly observed in individuals with ASD (American Psychiatric Association, 2013).

Early childhood, as a period of rapid brain development, presents great opportunity and risk in shaping the developmental potential of all children, including those with neurodevelopmental disorders, such as ASD (Black et al., 2017). Early detection of ASD and intervention when the brain is most plastic lead to the best outcomes (Estes et al., 2015; Flanagan et al., 2012; Kasari et al., 2012). However, current challenges in diagnosing ASD in low-resource settings lead to significant delays in detection, and therefore in triaging to appropriate interventions (Mukherjee et al., 2014; Patra et al., 2020). For example, inadequate parental and community awareness about the red flags of autism compromises help-seeking behaviours (Divan et al., 2021). The available diagnostic tools demand administration by skilled and trained specialists, a scarce resource in most settings (Durkin et al., 2015). Moreover, these specialists are concentrated in urban areas or expensive private clinics inaccessible to the large majority of the population. Standardised assessment methods for ASD are lengthy, proprietary, globally priced and therefore not feasible for large-scale deployment (Durkin et al., 2015). Conversely, the more scalable autism screening measures that depend on parent-report questionnaires are often unreliable, as they assume parental knowledge about autism symptoms, which is often lacking in communities with low maternal education and limited awareness about child development (Dawson & Sapiro, 2019; Khowaja et al., 2015). All these factors contribute to a failure in timely identification of children with autism, resulting in a large ‘detection gap’ (Dasgupta et al., 2016), with consequent delays in receiving a diagnosis and being placed on appropriate care pathways (Bhavnani et al., 2022).

Therefore, there is a critical need to develop scalable tools for autism risk assessment in the early years that can translate into improved outcomes throughout the life course. Digital tools have tremendous potential to address the scalability issue as portable computers and smart devices are now highly accessible across the globe, even in low-resource settings (Istepanian & AlAnzi, 2020). Over 5 billion people, representing more than two-thirds of the global population, have access to smartphones (World Health Organization Global Observatory for eHealth, 2011). The potential for these mHealth tools to be administered in children’s natural environments, such as homes and schools (Sapiro et al., 2019), and reports generated through automated analysis of objective and high-resolution dimensional data make them feasible for administration by non-specialist providers, including parents. This natural environmental setting also yields more representative behavioural observations. By leveraging the multitude of sensors, such as cameras, audio recorders and touch-sensitive displays, digital tools can measure a wide range of autism-relevant phenotypes, including differences in social-emotional, motor and language skills, helping to capture the heterogeneity of the autism phenotype and providing a comprehensive view of the child’s strengths and weaknesses. Alongside clinical practice, this potential for task-sharing for ASD risk screening (Naslund et al., 2019) reserves the time and effort of highly skilled specialists for the diagnosis and treatment of the small fraction who screen positive. Finally, direct assessment of child behaviour through performance-based tasks picks up quantitative information complementary to parent reports that depend on awareness about autism-related behaviours (Dawson & Sapiro, 2019).

Recent reviews have summarised the evidence on the use of digital tools for autism assessment based on parent-report questionnaires (Marlow et al., 2019; Stewart & Lee, 2017), and the more technologically challenging eye-tracking (Alcañiz et al., 2022; Mastergeorge et al., 2021; Papagiannopoulou et al., 2014), electroencephalography (O’Reilly et al., 2017) and magnetic resonance imaging (Sato & Uono, 2019) methods. However, these tools are not ideal for screening in low-resource settings either because of their dependence on parent reports which may be unreliable, the requirement for expensive equipment and software typically administered in controlled laboratories or the need for high levels of manual input and expertise in analysing the data. In contrast, digital tasks administered using more accessible and portable devices, such as computers, tablets and smartphones, and amenable to automated analysis of child responses and behaviours, have a much greater potential to scale since they are suitable for task-sharing approaches (Naslund et al., 2019). However, a comprehensive review of the characteristics and utility of scalable digital tools for direct assessment of autism risk during early childhood is critically missing. This omission is especially significant in terms of their potential to be further developed into valid screening tools deployable at scale in low-resource settings.

This review attempts to bridge this gap by addressing the following questions:

What types of digital tasks are being used for direct assessment of autism risk during early childhood, and which diagnostic (DSM-5) criteria and specific ASD-related phenotype do they target?

How well are these tools (and specific metrics derived therefrom) able to discriminate between ASD and typically developing (TD) groups in case–control studies?

What are the implementation strategies of these tools in relation to hardware and configuration, passive or active task, personnel and time taken for administration?

Methods

Search

While this review focuses on scalable digital tools to assess autism risk during early childhood (0–8 years), it is based on a subset of papers identified from a more comprehensive search of peer-reviewed articles describing scalable digital tools for assessment of autism and attention deficit hyperactivity disorder across 0–18 years. Four databases (PubMed, PsycInfo, Scopus and Web of Science) were searched in two phases to retrieve relevant articles. During the first phase conducted in May 2018, no date restrictions were applied. The second phase, specific to this review topic, updated the original search by including relevant articles published from June 2018 through October 2020. Specific keywords used for Phases 1 and 2 are presented in Supplementary Table 1.

Study selection and data extraction

Search results from selected databases were imported into the Rayyan software (https://rayyan.ai/) (Ouzzani et al., 2016). Titles and abstracts of the imported articles were screened by three reviewers (D.M., V.R. and J.D.) during Phase 1 and two reviewers during Phase 2 (D.M. and G.L.E.) using the inclusion/exclusion criteria described below. Screening results were ‘unblinded’ for group review weekly, and conflicts were resolved through group consensus. Full texts of included articles were downloaded and screened for eligibility. Data were extracted from included articles.

Eligibility criteria

Scalable digital tools were defined as those that collected and analysed data in a digital format using desktop or mobile devices (laptop, tablets, smartphones or any other mobile smart device). Included studies either required the child to engage actively with tasks presented on the device or used the device to acquire data from the child passively (e.g. via voice or video recording).

The inclusion criteria were (a) peer-reviewed primary research articles published in the English language; (b) case–control study design with at least two groups – ASD and TD comparison group (papers with additional atypical comparison groups, such as neurodevelopmental disorders other than ASD, were included) and (c) mean age of the participant groups ⩽ 8 years (defined as early childhood by the World Health Organization, 2020). The exclusion criteria were (a) digital tools that collected only parent-report data since this review focused on digital tools for

Analysis

For each included study, data were tabulated to describe the task(s) presented to the child, the experimental setup, device(s) used and the format in which the child’s response was recorded. The primary metric(s) used to determine group differences also were tabulated, along with the main findings (Table 1). A brief description of the participants (mean and standard deviation or range of the age distribution, sample size and gender distribution) was included in the table. Papers were grouped based on the

Characteristics and discriminating ability of scalable digital tools to assess ASD risk during early childhood.

ASD: autism spectrum disorder; TD: typically developing; AUC: area under the ROC curve; ROI: region of interest; RT: reaction time; DD: developmental delay; ROC: Receiver Operating Characteristic; FB: false belief understanding; PI: performance index (scaled); RTI: reaction time index (scaled); PCA: Principal Component Analysis; IDD: Intellectual and Developmental Disabilities.

The following colour coding has been used in the column named ‘Device specifications’ to indicate the feasibility of the device for use in low-resource settings: Green = most feasible; Red = least feasible.

Figure added to Table 1 legend: Colour coding to indicate feasibility of administering the identified digital tools in low-resource settings.

The time taken to complete the assessment, when specified in the article, is included in the column titled ‘Experimental setup’ in red font colour, along with the number of trials of the assessment and the experimental setting (laboratory, clinic, school, home, etc).

To maintain consistency, age when reported in years was converted to months by multiplying by 12.

Risk of bias

A list of questions was compiled from two risk of bias assessment tools – Joanna Briggs Institute Critical Appraisal tools: Checklist for Case–Control Studies (Joanna Briggs Institute, 2017) and the QualSyst tool: Checklist for assessing the quality of quantitative studies from the Alberta Heritage Foundation for Medical Research Health Technology Assessment Initiative Series (Kmet et al., 2004). Some questions from the compiled list were adapted; the final set of questions used is listed in Supplementary Table 3.

Community involvement statement

As the reported study is a review

Results

Study selection

A total of 51,953 titles and 6884 abstracts were screened for relevance across the two phases (Figure 1). Of these, 567 full-text articles were screened for eligibility, of which 38 met inclusion criteria. The most common reason for exclusion was the age criterion (mean age > 8 years;

Study selection flow diagram.

Description of the study participants

Together, these studies analysed results from 889 ASD participants, 1348 TD participants and 32 participants with a neurodevelopmental disorder other than ASD (intellectual disability (

Age distribution of participants across studies and types of tasks applied to them. (a) Mean (dot) and standard deviation (error bar) of the chronological age (CA) of participants in months is represented on the X-axis (red = ASD; blue = TD; green = neurodevelopmental disorders not including ASD). Y-axis lists the included studies (in the order presented in Table 1). Most studies used CA-matched samples, except for Hetzroni et al. (2019) in which the other NDD group was significantly older as a result of being developmentally age-matched to the ASD and TD groups. Some studies reported the range of one or more participant groups instead of the mean and SD. In those cases, the range was represented as a horizontal line (e.g. Zhao et al., 2020; 10th row) using the same colour scheme. (b) Box plot demonstrating the age group to which different types of tasks were applied. The vertical line within the box represents the median age of participants to which the tasks were administered. The whiskers represent the 25th and 75th percentiles of participant age, respectively.

Overview of scalable digital tools for early assessment of ASD risk

This is an emerging field: 28 of the 38 included articles (73.7%) were published in the past 5 years (Supplementary Figure 2). The tools identified were predominantly at the pilot or ‘proof-of-concept’ stage and typically administered in laboratory or clinic settings by research staff. Studies demonstrated high levels of heterogeneity across tasks used to assess diagnostic discriminative ability, the type of technology used to implement them, primary metrics evaluated and developmental domains assessed. Tasks were presented on portable technologies, such as laptops (H. Li & Leung, 2020; Lu et al., 2019), tablet computers (Anzulewicz et al., 2016; Bovery et al., 2021; Campbell et al., 2019; Carlsson et al., 2018; Carpenter et al., 2021; Chen et al., 2019; Chetcuti et al., 2019; Dawson et al., 2018; Fleury et al., 2013; Gale et al., 2019; Jones et al., 2018; Mahmoudi-Nejad et al., 2017; Ruta et al., 2017), smartphones (Mahmoudi-Nejad et al., 2017; Rafique et al., 2019; Zhao & Lu, 2020), intelligent toys (Moradi et al., 2017) and digital audio recorders (Nakai et al., 2014; Wijesinghe et al., 2019), and non-portable technologies, such as desktop computers (Aresti-Bartolome et al., 2015; Borsos & Gyori, 2017; Chaminade et al., 2015; Crippa et al., 2013; Deschamps et al., 2014; Dowd et al., 2012; Gardiner et al., 2017; Gyori et al., 2018; Hetzroni et al., 2019; J. Li et al., 2020; P. Li et al., 2016; Lin et al., 2013; Martin et al., 2018; Veenstra et al., 2012) and VR platforms of varying sophistication (Jung et al., 2006; Jyoti & Lahiri, 2020; Alcañiz Raya et al., 2020; Shahab et al., 2017).

In total, 21 studies (55.3%) used gamified tasks (Anzulewicz et al., 2016; Aresti-Bartolome et al., 2015; Carlsson et al., 2018; Chaminade et al., 2015; Chen et al., 2019; Chetcuti et al., 2019; Crippa et al., 2013; Deschamps et al., 2014; Dowd et al., 2012; Fleury et al., 2013; Gale et al., 2019; Gardiner et al., 2017; Hetzroni et al., 2019; Jones et al., 2018; P. Li et al., 2016; H. Li & Leung, 2020; Lu et al., 2019; Mahmoudi-Nejad et al., 2017; Rafique et al., 2019; Ruta et al., 2017; Veenstra et al., 2012), making these the most common type of performance-based tasks to detect autism risk in early childhood. Other types of assessments included video recording of children’s behaviours while they viewed or interacted with stimuli presented on a screen (

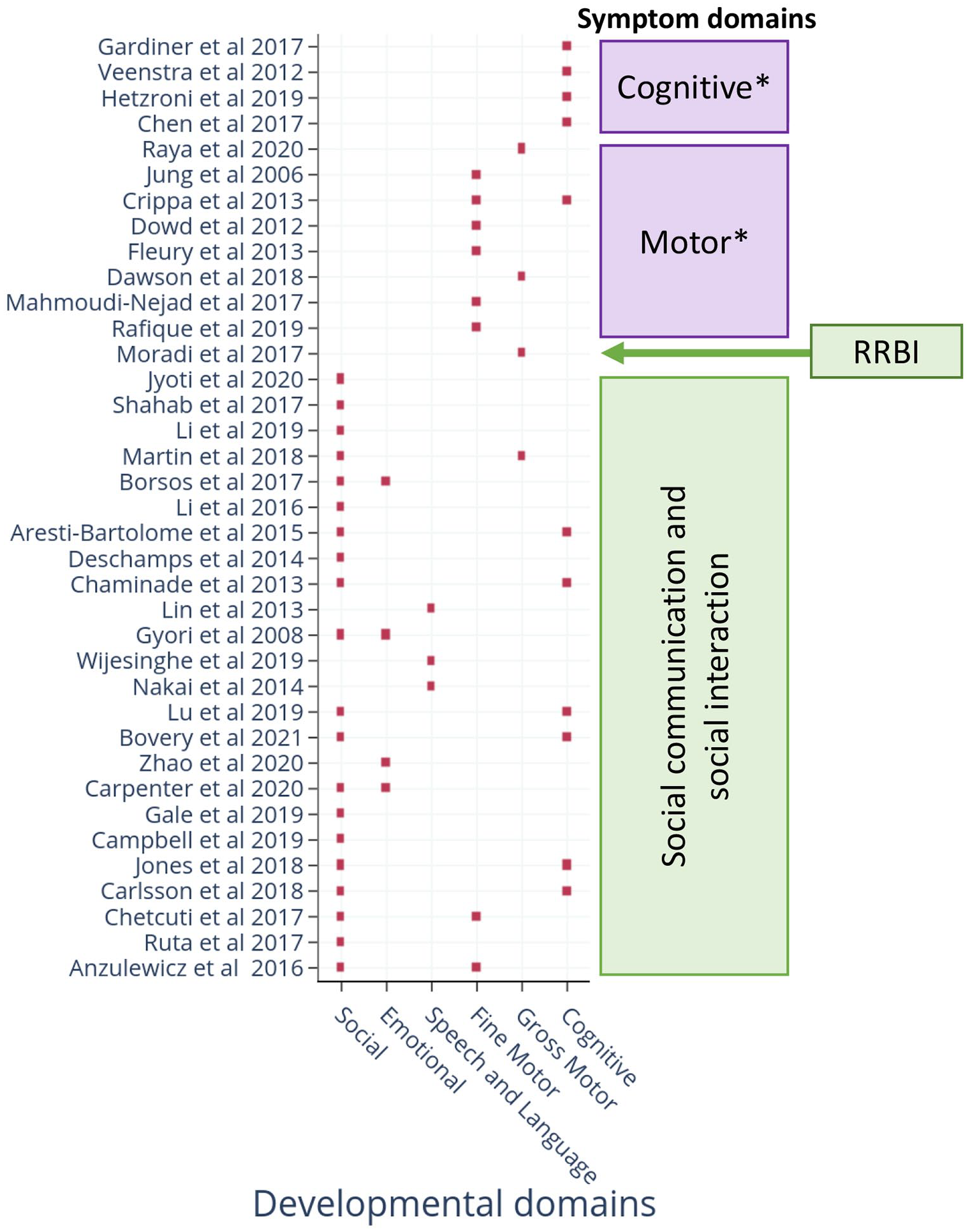

Together, these technologies targeted both criteria set within the DSM-5 for ASDs (Table 1). Several technologies also assessed neurodevelopmental domains not included within the DSM-5 criteria, but known to be affected in many children with ASD. Examples include deficits in motor and cognitive abilities (Figure 3). Two papers included a non-ASD NDD comparison group in the study design (Carpenter et al., 2021; Hetzroni et al., 2019); both demonstrated specificity to ASD symptoms. Eight studies (21.1%) used machine learning (ML) to identify nonlinear combinations of metrics as discriminants (Bovery et al., 2021; Campbell et al., 2019; Carpenter et al., 2021; Dawson et al., 2018; J. Li et al., 2020; Martin et al., 2018; Alcañiz Raya et al., 2020; Zhao & Lu, 2020).

DSM-5 criteria and developmental area(s) assessed by scalable digital tools.

The majority of studies were conducted in high-income countries (26/38); however, three recent studies from India (Jyoti & Lahiri, 2020), Pakistan (Rafique et al., 2019) and Sri Lanka (Wijesinghe et al., 2019) and three from Iran (Mahmoudi-Nejad et al., 2017; Moradi et al., 2017; Shahab et al., 2017), all low- and middle-income countries (LMICs), represent an encouraging trend for global mental health research.

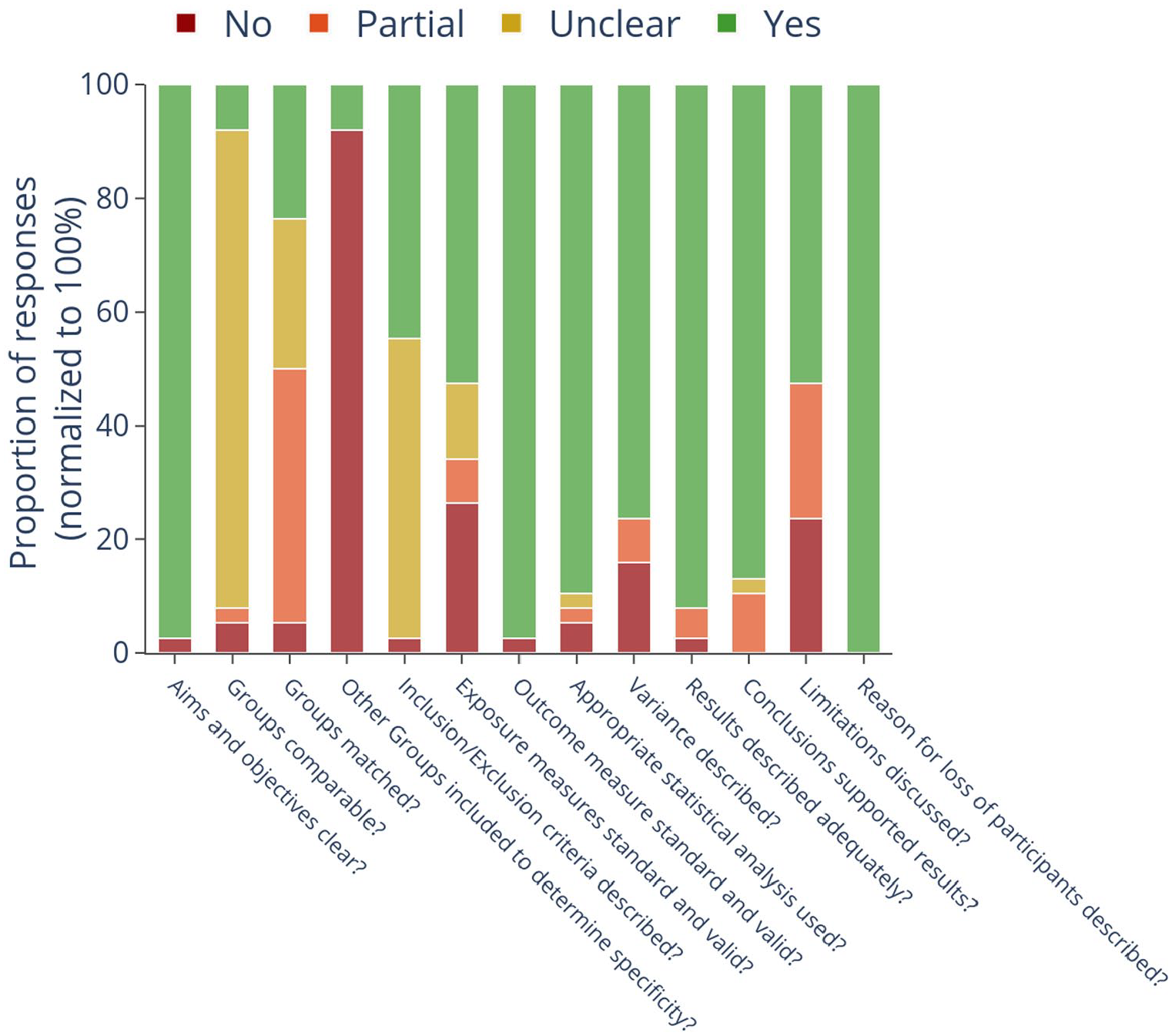

Assessment of the risk of bias

Nearly 90% of the included articles (34 of 38) clearly described the aims and objectives and the details of implementation, and used valid statistical methods to report their results (Figure 4). They also reported the reasons for the loss of participants when applicable, and the results supported the conclusions. However, participant demographic details were reported by only 3/38 (7.9%) papers (Supplementary Table 2: Additional participant details), an omission that precluded determining whether participant characteristics were adequately matched across groups in a case–control study design. In addition, 12/38 (31.6%) papers did not describe the study setting or population from which the TD group was recruited. Groups were adequately matched on gender and developmental age in only 9/38 (23.7%) studies. Gender distribution across groups was largely mismatched, with the ASD group typically having a greater proportion of males compared to the TD group (Table 1). In total, 21/38 studies did not clearly describe the inclusion/exclusion criteria for participant recruitment across both groups, whereas 17/38 articles used standardised diagnostic or screening tools to select participants in the ASD group. Meanwhile, 36/38 papers did not include an NDD group without ASD to demonstrate the specificity of the tasks and metrics to ASD symptoms. Finally, while all the included studies were at the proof-of-concept stage, limitations related to small sample sizes, lack of generalisability and inadequate matching of samples were described in only 20/38 (52.6%) studies (Figure 4). Of note, none of the included articles explicitly reported on any measure related to reliability (intra- and inter-assessor reliability, test–retest reliability) or validity (face, construct, content and criterion). While greater understanding of each tool’s validity and reliability is important for their ultimate use, this state of development is to be expected for an emerging field, as the main focus of these initial studies is to demonstrate feasibility and explore the discriminative ability of these tools.

Risk of bias of included studies.

Characteristics of digital ASD assessment tools

Detailed characteristics of individual tasks, details of implementation and their discriminative ability as reported by the studies are presented in Table 1. Detailed description and comparisons of tasks used to address the primary research questions are presented in the Supplementary material. Based on the evidence, the potential of these tools to screen for autism risk in low-resource settings is discussed below.

Tasks using portable technologies (tablet computers, smartphones, toy cars and digital audio recorders)

All tasks using mobile technology could be completed in 8 min on average (range = 1–20 min). Except for tasks assessing accuracy on executive functioning skills (Chen et al., 2019; Jones et al., 2018), all other tasks and metrics could discriminate between ASD and TD at a group level (details in Supplementary material). Tasks tapping the social and motor domains were particularly reliable, as discriminative ability was demonstrated by a total of 13 studies led by different study groups using a variety of tasks, metrics and devices. This included seven tasks tapping the social domain (Bovery et al., 2021; Campbell et al., 2019; Carlsson et al., 2018; Gale et al., 2019; H. Li & Leung, 2020; Lu et al., 2019; Ruta et al., 2017) encompassing social versus non-social stimulus preference and theory-of-mind, and six tapping fine- and gross-motor domains (Anzulewicz et al., 2016; Chetcuti et al., 2019; Dawson et al., 2018; Fleury et al., 2013; Mahmoudi-Nejad et al., 2017; Rafique et al., 2019). Also within the social domain, two studies assessed group differences in facial expressions in two ways – evoked expressions while watching animated videos (Carpenter et al., 2021) versus imitating the facial expressions presented on the screen (Zhao & Lu, 2020). Therefore, while similar data capture and analysis methods were used and significant group differences were reported by both, a direct comparison of the tasks and metrics for this particular construct was not possible. One study each used a toy car and digital audio recorder to assess autism risk. The former was moderately successful while the latter failed – however, more replications of these tasks are required to determine their utility.

Tasks using non-portable technology (desktop computers and VR platforms)

Tasks presented on desktop computers were highly heterogeneous in terms of the ASD phenotype assessed, making it difficult to synthesise results across studies. Except for three studies assessing EF, all others assessed a unique skill using different tasks and metrics. Consistent with tasks presented on mobile devices, accuracy on EF tasks showed mixed results in the desktop technology format as well. Results related to reaction time were consistent, with the ASD group reported to be slower in providing responses in EF tasks. Most tasks tapping the social (Aresti-Bartolome et al., 2015; Chaminade et al., 2015; Martin et al., 2018) and motor (Crippa et al., 2013; Dowd et al., 2012) domains continued to demonstrate significant group differences. The two studies assessing speech and language (Lin et al., 2013; Nakai et al., 2014) used very different metrics to assess group differences (pitch characteristics vs accuracy), so no comparison was possible. Similarly, the results from facial expression analysis using the Noldus FaceReader (Borsos & Gyori, 2017; Gyori et al., 2018) were too preliminary to determine their utility for use as autism screening measures.

The VR format of ASD risk assessment, although showing promising results, depended on sophisticated devices and administration in laboratory settings by trained research staff. However, the discriminative ability of these tasks tapping joint attention, motor imitation and visuomotor coordination continues to highlight the promise of the social and motor domains to identify autism risk. Tasks using desktop technology were completed in 23 min on average, about thrice as long as those on portable devices (8 min). VR tasks took 14.6 min on average (see Supplementary material).

Discussion

Tasks can be brief, portable and largely automated

This study identifies and characterises digital tools that have the potential to be applied in direct assessment of autism risk in early childhood in low-resource settings. Because the availability of skilled human resources is a major limitation in these settings (Divan et al., 2021), we focused on tools that require minimum assessor judgement during administration, and whose data analysis could be automated with minimal or no manual input. Two main modalities of direct child assessments were identified – gamified tasks and video or audio recordings of the participant while they viewed/responded to stimuli on the screen or VR platforms, or interacted with research staff or family members. Tasks were presented on both portable and non-portable technologies, namely laptops, tablet computers and smartphones on the one hand, and desktop computers and VR platforms on the other. However, some tasks presented on non-portable technologies but requiring child responses on touchscreens, or in which children’s videos were captured using webcams, are easily adaptable to portable devices (Jyoti & Lahiri, 2020). The majority of the assessments were administered in laboratory or clinic settings, but some were also deployed in homes, schools and daycares. While trained research staff administered these tasks in all studies, they typically provided only simple instructions and demonstrations before participants became able to engage with the tasks independently – extending these tasks’ promise in the hands of non-specialists. Finally, tasks delivered on portable technologies could be completed in less than 10 min on average, and most others within 30 min. Therefore, once validated, the types of tasks identified and their potential to be delivered on low-cost devices by non-specialists pave a promising path for ASD risk detection in low-resource settings, which bear the largest burden of cases worldwide (Baxter et al., 2015). That six recent studies were based in LMICs is an encouraging trend in this direction.

Social and motor skills discriminate best

While specific tasks and metrics targeted multiple developmental domains, those tapping social and motor domains were the most promising. Most studies assessing these domains reported significant group differences using a variety of technologies, tasks and metrics. Lower preference for social stimuli emerged as one of the most reliable metrics. The ASD group consistently preferred non-social stimuli irrespective of the format in which these were presented, be it static images or videos presented on tablet or desktop screens. This general result of aversion to social stimuli aligns with the literature, including decades of eye-tracking literature demonstrating reduced time spent looking at social stimuli in the ASD group (Papagiannopoulou et al., 2014). Individual studies reported unique tasks and metrics relevant to the social domain that successfully discriminated between the ASD and TD groups; examples include anthropomorphic bias and the applied theory-of-mind ability to deceive and to distrust opponents. The ASD group was also found to take longer to orient to the person calling their name, or to initiate social interactions. These are examples of digital tasks tapping literature-backed autism-relevant phenotypes that historically have been assessed by in-person interactions with trained staff (Baron-Cohen et al., 1985; Bruinsma et al., 2004; Federici et al., 2020; Zwaigenbaum et al., 2005). Their success in digital and gamified formats is encouraging for their potential to scale as screening tools in low-resource settings.

Similarly, a variety of tasks tapping the motor domain found consistent differences between the ASD and TD groups. Tasks assessing fine-motor abilities, which were largely administered on portable technology including tablets and smartphones, found differences in the pattern of interactions with smart devices. Kinematic analyses contrasting autistic versus non-autistic touchscreen movements in gamified tasks have demonstrated greater variance in speed and direction with longer, less straight movement paths, amid a less fluid, more piecewise movement style (Weisblatt et al., 2019) and greater spatiotemporal error across a range of tasks (Dubey et al., 2021). A challenge in quantifying visuomotor behaviour is the choice of specific derived metrics from a large number of possible ones, for example, acceleration, jerk, direction, variances and maxima thereof, duration and extent of movement. An important element of the future research agenda will be to combine motor and developmental literatures so as to give the autism field a standard set of parameters with which movements can be described and compared across studies. Discriminative results reviewed here focus thematically on inter-trial variability in response duration in motor planning tasks, accuracy in imitation of complex motor gestures, and visuomotor coordination, but the devil is in the details of specific derived measures.
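As an illustration of how such derived measures might be computed, the following minimal Python sketch derives a few of the candidate metrics named above (duration, extent, path straightness, speed variance, peak acceleration and peak jerk) from a single touchscreen stroke. The function name `kinematic_metrics`, the `(t, x, y)` sample format and the use of simple finite differences are assumptions made for illustration only; none of the reviewed studies is claimed to have used this exact procedure, and the sample coordinates are made up.

```python
import math
import statistics


def _rate_of_change(values, times):
    """Finite-difference derivative; returns rates and the midpoint time of each interval."""
    rates = [(v1 - v0) / (t1 - t0)
             for v0, v1, t0, t1 in zip(values, values[1:], times, times[1:])]
    mids = [(t0 + t1) / 2 for t0, t1 in zip(times, times[1:])]
    return rates, mids


def kinematic_metrics(samples):
    """Summarise one stroke given (t_seconds, x, y) samples with strictly increasing t.

    Hypothetical helper: the metric names and definitions are illustrative assumptions.
    """
    if len(samples) < 4:
        raise ValueError("need at least 4 samples to estimate jerk")
    times = [t for t, _, _ in samples]
    # Distance covered between consecutive samples.
    steps = [math.hypot(x1 - x0, y1 - y0)
             for (_, x0, y0), (_, x1, y1) in zip(samples, samples[1:])]
    speeds = [d / (t1 - t0) for d, t0, t1 in zip(steps, times, times[1:])]
    speed_times = [(t0 + t1) / 2 for t0, t1 in zip(times, times[1:])]
    # Acceleration and jerk as successive derivatives of scalar speed.
    accel, accel_times = _rate_of_change(speeds, speed_times)
    jerk, _ = _rate_of_change(accel, accel_times)
    path_length = sum(steps)
    chord = math.hypot(samples[-1][1] - samples[0][1],
                       samples[-1][2] - samples[0][2])
    return {
        "duration": times[-1] - times[0],
        "path_length": path_length,
        # 1.0 for a perfectly straight stroke, smaller for meandering ones.
        "straightness": chord / path_length if path_length else 1.0,
        "mean_speed": statistics.fmean(speeds),
        "speed_variance": statistics.pvariance(speeds),
        "peak_abs_acceleration": max(abs(a) for a in accel),
        "peak_abs_jerk": max(abs(j) for j in jerk),
    }


# Made-up strokes: one straight at constant speed, one with a detour and uneven speed.
straight = [(0.0, 0, 0), (0.25, 1, 0), (0.5, 2, 0), (0.75, 3, 0)]
wobbly = [(0.0, 0, 0), (0.25, 1, 0), (0.5, 1, 2), (0.75, 2, 2)]
print(kinematic_metrics(straight)["straightness"])
print(kinematic_metrics(wobbly)["straightness"])
```

Finite differences amplify sampling noise with each successive derivative, so real touchscreen data would likely need smoothing before acceleration and jerk are estimated; that choice is itself one of the details on which studies would need to agree.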

In contrast, studies assessing gross motor movements were typically based on video recordings of child behaviour while viewing on-screen stimuli or imitating avatars presented on VR platforms. The most consistent result was differences in head movements, indicative of poor postural head control in the ASD group. These results are consistent with the literature on autism-related motor phenotypes, for example, stride length variability (Kindregan et al., 2015), and differences across a range of behaviours including arm movements, gait (Lum et al., 2021), postural stability and oculomotor coordination (Fournier et al., 2010; Johnson et al., 2016), all of which are suggestive of an overall deficit in motor planning (Rinehart et al., 2006) and coordination. Mechanisms proposed for these observed autistic deficits are an inability to chain together sequential motor events (Cattaneo et al., 2007; von Hofsten & Rosander, 2012) and difficulty incorporating visual error feedback in an online movement process (Haswell et al., 2009).

Another consistent discriminating metric was slower reaction times in the ASD group, measured as the latency to respond selectively to pre-specified target objects. This slowing manifested in a range of tasks including tablet-based gamified tasks assessing executive functioning, VR-based tasks of joint attention and visuomotor coordination, and video recording of child behaviour to assess latency in orienting to name. The validity of this metric is supported by the literature demonstrating slower reaction times in older children (Herrero et al., 2015) and adults (Lartseva et al., 2014; Schmitz et al., 2007; van den Boomen et al., 2019) with ASD using computerised simple and choice reaction tasks. Although pathologically slowed reaction time can flag developmental issues in general, by itself it would be too blunt an instrument to discriminate autism from other neurodevelopmental conditions. Similarly, task completion and task engagement, the latter defined variously as the number of frames in which an ML algorithm was able to compute relevant metrics from children’s facial landmarks, or the duration for which the child played the game, were found to be useful discriminating metrics in six different studies, but cannot point to autism in particular.
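As an illustration of how response latency, inter-trial variability and task completion might be derived from the same trial log, consider the sketch below. The function name `latency_summary`, the `(onset, response)` trial format and the decision to treat missing or anticipatory responses as incomplete trials are assumptions made for the example, not conventions taken from the reviewed studies.

```python
from statistics import mean, median, stdev


def latency_summary(trials):
    """Summarise trials given as [(onset_s, response_s or None), ...].

    Hypothetical helper. A trial counts as completed only if a response
    was recorded at or after stimulus onset (an illustrative rule).
    """
    latencies = [resp - onset
                 for onset, resp in trials
                 if resp is not None and resp >= onset]
    n_trials = len(trials)
    summary = {
        "n_trials": n_trials,
        "completion_rate": len(latencies) / n_trials if n_trials else 0.0,
        "median_latency": median(latencies) if latencies else None,
        "mean_latency": mean(latencies) if latencies else None,
        "latency_sd": None,
        "latency_cv": None,
    }
    # Inter-trial variability needs at least two completed trials.
    if len(latencies) >= 2:
        sd = stdev(latencies)
        summary["latency_sd"] = sd
        # Coefficient of variation: variability relative to mean latency.
        if summary["mean_latency"] > 0:
            summary["latency_cv"] = sd / summary["mean_latency"]
    return summary
```

Consistent with the caveat above, none of these summaries is specific to autism; they would at most contribute features to a broader screening model.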

Accounting for heterogeneities, sex differences, ages and stages, and available resources

Arriving at a set of screening tasks that can discriminate autism in all its forms and presentations is not as simple as deciding which tasks work. One needs to know, rather, which tasks work for which subtypes of this heterogeneous condition, at what ages and developmental stages, and in which real-world circumstances dependent on culture and context. Given the early stage of development of these tools, most studies are limited in their generalisability to broader samples and contexts. The absence of demographic details in the majority of papers not only precludes assessing the comparability of the ASD and TD groups within and across studies but also obscures our understanding of the heterogeneity of the study samples and the subpopulations for whom these tools are applicable. For instance, motor tasks might be especially predictive in some individuals who are not beginning to speak on time (Belmonte et al., 2013), and conversely, assays of social responsiveness might be more predictive in others. Furthermore, while gender specificities in ASD prevalence and symptoms exist (Halladay et al., 2015), gender differences were not addressed in any of the studies. Therefore, further confirmatory studies are required to test the validity, reliability and specificity of these tools before they can be deployed as screening measures. One group has already started planning Phase 3 trials using large samples in different contexts (Millar et al., 2019), which is an important stride in the right direction. The US Food and Drug Administration (FDA) recently authorised marketing of the Cognoa ASD Diagnosis Aid, software using ML algorithms to help predict the risk of autism based on parent reports, videos of child behaviour and health provider inputs, as an adjunct to, though not a substitute for, the regular diagnostic process. A similar open-source effort has shown >78% accuracy in discriminating between autism, intellectual disability and typical development in 2- to 7-year-old children in low-resource settings (Dubey et al., 2021).

These studies demonstrate great potential pending further validation. All social and motor tasks met our minimum criteria for use in low-resource settings: (1) independence from assessor judgement, and hence the potential to be easily administered by non-specialist providers, and (2) capture of data in a digital format that could be objectively analysed. Furthermore, they could be completed in less than 20 min, largely on easily accessible and affordable devices. The potential for portability of some of the tasks administered on non-portable technology (desktops, VR) is high since most tasks were in a format that could be adapted to portable technologies (e.g. Jyoti & Lahiri, 2022). With respect to the type of tasks, computer vision analysis of child behaviour is more versatile as it is applicable to the full spectrum of children of varying ages and abilities. Gamified tasks, which formed the majority of those identified by our search, are by contrast suitable only for older or less impaired children who can understand and follow game instructions.

Advantages of digital tools for ASD risk assessment and implications for global health research

These novel tools are uncovering nuanced differences in child behaviour using objective and automated measures. Examples include inter-trial variability of a few seconds during motor planning, and differences in force, pressure and patterns of tap-and-drag gestures in tablet-based gamified tasks. The Research Domain Criteria framework theorises that early deviations from normative developmental trends may be predictive of later disorders (Cuthbert, 2014, 2020), including autism (Tunç et al., 2019). Digital tools provide the most feasible method to develop global normative trends of ASD-relevant phenotypes, which could be used to flag children with differential trajectories. Their potential to be administered by non-specialists makes them amenable to task-sharing and stepped-care approaches, paving the way for large-scale ASD screening across diverse locations including low-resource settings. Given the current state of development of the tools, magnitude of demand and heterogeneity within the ASD population, digital tools can potentially aid in the initial screening of autism risk at scale. However, a confirmatory diagnosis should only be made by a clinician.

Notwithstanding their potential, it is important to recognise that a ‘digital divide’ currently exists between high- and low-resourced settings, the developmental benefits of technological advances disproportionately accruing to educated and resource-rich communities that have access to smart devices, adequate power supplies and the know-how of Internet services (World Bank, 2016). Therefore, the feasibility of using various types of digital platforms for ASD risk assessment must be carefully considered with respect to the specific LMIC context and setting in which they will be used. Portable computers and mobile devices provide the highest levels of accessibility, affordability and potential for scale across all settings of the global South. Therefore, tools that are adapted for delivery on these platforms would be most feasible to use across all settings and ensure that the technological advances do not inadvertently increase the digital divide in the global ASD community (Kumm et al., 2022).

Methodological considerations to advance the field

Based on the key findings and limitations discussed above, we provide the following directions for future studies. Tasks tapping the social and motor domains that are available in gamified formats or that estimate metrics using computer vision analysis, and that provide objective measures analysable using standard and machine learning methods, should be prioritised for further development. The main goal should be to further validate these tasks and metrics using prospective cross-sectional study designs and larger samples, and to standardise derived measures across tasks. Studies should focus on establishing reliability (intra- and inter-assessor reliability, test–retest reliability) and validity (face, construct, content and criterion) of these tools and report these metrics in future publications. Larger samples would allow assessing heterogeneity using dimensional measures (Stevens et al., 2019) and refining ML algorithms when applicable. Once a tool or task is validated for a single population, its acceptability, feasibility and validity should be assessed in diverse settings and geographies, checking the consistency of psychometric properties across contexts. Once metrics describing reliability, validity, sensitivity and specificity are available for these tools, we can begin to make judgements about their use as screening or diagnostic tools.
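For readers less familiar with these accuracy metrics, the sketch below shows how sensitivity, specificity and predictive values could be computed once a tool's binary output is compared against a reference clinical diagnosis. The function name `screening_metrics` and its input format are hypothetical, and the sketch assumes a single binary flag per child rather than a continuous risk score.

```python
def screening_metrics(reference, flagged):
    """Compare tool output with a reference diagnosis.

    reference: iterable of bool, True if the reference standard says ASD.
    flagged:   iterable of bool, True if the tool flagged the child.
    Hypothetical helper for illustration; returns None for any metric
    whose denominator is zero.
    """
    reference, flagged = list(reference), list(flagged)
    if len(reference) != len(flagged):
        raise ValueError("inputs must have the same length")

    tp = sum(1 for r, f in zip(reference, flagged) if r and f)
    fn = sum(1 for r, f in zip(reference, flagged) if r and not f)
    fp = sum(1 for r, f in zip(reference, flagged) if not r and f)
    tn = sum(1 for r, f in zip(reference, flagged) if not r and not f)

    def ratio(numerator, denominator):
        return numerator / denominator if denominator else None

    return {
        "sensitivity": ratio(tp, tp + fn),  # flagged among true cases
        "specificity": ratio(tn, tn + fp),  # not flagged among non-cases
        "ppv": ratio(tp, tp + fp),          # true cases among flagged
        "npv": ratio(tn, tn + fn),          # non-cases among not flagged
    }
```

Predictive values in particular depend on how common the condition is in the sample, so figures obtained in balanced case–control designs should not be assumed to carry over to community screening.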

For this reason of cultural context especially, stakeholder families and community members must be involved in the design and execution of such research (Staniszewska et al., 2018), ideally with representation on the research team itself. Stakeholder and community involvement heightens recruitment and retention in general (Crocker et al., 2018) and in autism studies in particular (McKinney et al., 2021), and makes tools globally relevant, especially to low-resource and underserved communities (Witham et al., 2020). It must be an integral part of the research design process from the stage of conceptualisation, rather than left as an afterthought. None of the studies reviewed has reported a strategy for stakeholder and community involvement. This must change.

The social and motor tasks currently being administered on desktop computers and VR platforms should be translated for delivery on portable devices to improve access, and then subjected to feasibility and validation testing in different settings, riding the wave of widespread mHealth technology use worldwide (Abaza & Marschollek, 2017; Osei & Mashamba-Thompson, 2021). Since research is linked to local capacity building (Durkin et al., 2015), greater testing of these tools in diverse low-resource settings will also generate the necessary awareness and skills, in the community and in researchers, to build momentum towards universal screening of children’s development. Finally, longitudinal studies should be designed to evaluate developmental trajectories using metrics of the most promising tools and to develop a deeper understanding of the normative trends of ASD-relevant phenotypes.

We identified a few standalone studies that assessed ASD-related behaviours using very specific and unique tasks that could significantly discriminate between groups. Examples include studies assessing evoked and imitated facial expressions, and speech and language. We recommend replication of these studies by different groups in different populations to further test their validity and reliability.

Considerations for future studies evaluating digital tools for ASD risk assessments.

ASD: autism spectrum disorder; TD: typically developing; NDD: neurodevelopmental disorders; ID: Intellectual Disability.

Limitations

First, as this review was limited to case–control studies, digital tools piloted or validated using other types of study designs will have been missed. Second, since we only included peer-reviewed published articles in English, emerging technologies that may have been presented at conferences or in other languages are not included. Third, the search was last updated in October 2020. This review does not cover new tools and new data (including some of our own) published after this date, posing a limitation in view of the rapid pace at which new technologies are introduced and evaluated in this dynamic area of research.

Conclusion

This review identifies and characterises digital tools for direct observational assessment of autism risk in early childhood that have the potential to scale in low-resource settings. This characterisation encompasses tasks and their associated metrics, developmental domains assessed, discriminative ability and details of implementation. Tasks assessing social and motor domains were found to be particularly promising and reliable in discriminating between ASD and TD groups. Their implementation on readily accessible technologies – half of them on portable devices, such as tablet computers and smartphones – coupled with objective output measures, makes them suitable for task-sharing with non-specialist providers. Novel methods, such as computer vision and ML, are increasingly being coupled with these tasks to allow for objective and automated analysis of data, leading to more in-depth and nuanced understanding of ASD symptoms and furthering their potential for task-sharing approaches and identification of autism risk at the individual level. The time is ripe for the field to move beyond pilot studies and small samples to large-scale, multinational validation studies, using prospective cross-sectional or longitudinal designs conceived, developed and implemented in collaboration with stakeholders and communities.

Supplemental Material

Supplemental material, sj-pdf-1-aut-10.1177_13623613221133176 for Digital tools for direct assessment of autism risk during early childhood: A systematic review by Debarati Mukherjee, Supriya Bhavnani, Georgia Lockwood Estrin, Vaisnavi Rao, Jayashree Dasgupta, Hiba Irfan, Bhismadev Chakrabarti, Vikram Patel and Matthew K Belmonte in Autism

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: D.M. was supported by the Department of Science and Technology-INSPIRE Faculty Award (2016/DST/INSPIRE/04-I/2016/000001). G.L.E. was supported by a Sir Henry Wellcome Fellowship (Wellcome Trust Grant No. 204706/Z/16/Z). The authors acknowledge funding from the Medical Research Council UK (STREAM, MR/S036423/1 awarded to B.C.).

Ethical approval

No ethical approval was required for the conduct of this study.

Data availability statement

All relevant data used to prepare this review article are reported in this manuscript as tables and figures.

Supplemental material

Supplemental material for this article is available online.

References
