Abstract

In English as a second or foreign language (ESL/EFL) instruction, feedback plays a crucial role in students’ writing development. In authentic classroom settings, students often receive simultaneous feedback from multiple sources, such as teachers, peers, and machines, each offering its own advantages and challenges. Thoughtful integration of these diverse feedback mechanisms can enhance writing skills; however, there is still limited understanding of how to implement this approach effectively. This systematic review, drawing on third-generation activity theory (AT), investigates strategies for integrating multiple feedback sources in ESL/EFL writing classrooms. By analysing 24 empirical studies, the review highlights the synergistic benefits of multi-source feedback, identifying boundary practices (e.g. sequenced delivery, shared rubrics, class discussions, explicit peer-feedback training, and multi-draft cycles) and the productive role of contradictions (e.g. teacher workload constraints, inconsistent quality of peer feedback, the fallibility of machine systems, and the need to avoid over-reliance while promoting learner independence) as critical to successful integration. Building on these findings, we propose a four-layer pedagogical model that centers on (1) three core activity systems (teacher-mediated, peer-mediated, and machine-mediated), (2) boundary practices, (3) productive contradictions, and (4) emerging partially shared objects and expansive outcomes. The model provides educators with actionable, theory-grounded strategies for effectively incorporating different feedback sources in writing classrooms. The review concludes with recommendations for future research on integrated feedback in ESL/EFL writing.

I Introduction

Feedback, defined as the systematic provision of information regarding performance or behavior, plays an important role in the teaching and learning of English as a second or foreign language (ESL/EFL) writing (Hyland & Hyland, 2006). In authentic classrooms, students routinely encounter feedback from multiple sources simultaneously – teachers, peers, automated writing evaluation (AWE) systems, and, increasingly, generative AI tools – each mediating the writing process in distinct yet overlapping ways (Tian & Zhou, 2020; Zou et al., 2023).

Crucially, these sources do not operate in isolation; rather, they shape and are shaped by one another within a complex activity system. From a Vygotskian sociocultural perspective, feedback is a mediational means that supports learners in moving from other-regulation toward self-regulation, fosters concept development in writing, and creates dynamic zones of proximal development (ZPDs) as learners internalize and transform the assistance provided (Vygotsky, 1978). The effectiveness of this mediation depends not only on the quality of individual feedback instances but also on how the various sources interact, the affective-cognitive experiences (perezhivanie) they engender, and the extent to which learners engage in conscious reflection (praxis) and intentional appropriation during revision (Lantolf & Thorne, 2006).

When feedback sources are deliberately orchestrated rather than simply co-existing, they can form a network of interacting activity systems in which shared or partially shared objects (e.g. improved text, enhanced writing competence, greater learner autonomy) emerge. Within such a network, contradictions (e.g. conflicting advice from peers and machines) and complementarities (e.g. machine feedback clearing local errors so that teacher feedback can focus on global concerns) become visible and pedagogically actionable. ‘Integrated feedback’ is therefore defined in the present study as an intentionally designed network of interacting activity systems that orchestrates mediation from various sources in order to maximize developmental affordances, foster conscious reflection and self-regulation, and support sustained conceptual growth.

Empirical evidence increasingly suggests that combining multiple feedback sources produces stronger writing outcomes than reliance on any single source (Chong, 2017; Tan et al., 2023; D.A. Yan et al., 2025). Nevertheless, existing review studies have predominantly examined teacher, peer, or machine feedback in isolation (e.g. Ding & Zou, 2024; Torres et al., 2020; Zhang & Zou, 2023). The few studies that do address multiple sources typically report effects (e.g. Cen & Zheng, 2024) without systematically theorizing how the sources interact.

The present systematic review addresses this gap by adopting third-generation activity theory (AT; Engeström, 1987, 1999) as its analytical lens. By viewing integrated feedback as a network of interacting activity systems, we aim to illuminate how elements of teacher-, peer-, and machine-mediated feedback systems interact, generate contradictions and synergies, and collectively expand learners’ developmental potential. This approach promises a nuanced, theoretically grounded understanding of integrated feedback and offers actionable principles for its intentional design in ESL/EFL writing pedagogy.

II Activity theory

This systematic review is grounded in third-generation AT as developed by Engeström (1987, 1999), which builds directly on Vygotsky’s (1978) foundational sociocultural theory (SCT) and Leontiev’s (1978) hierarchical model of activity.

From a Vygotskian perspective, human development is mediated: higher psychological functions (e.g. deliberate revision, genre awareness, audience design) emerge through the internalization of culturally organized tools and signs, of which feedback is a prime example. Learning precedes development, meaning that carefully designed assistance can push learners into new ZPDs. Affect and cognition are inseparable, and the concept of perezhivanie captures how emotionally lived experiences refract and shape cognitive growth. Conscious reflection on one’s own actions and their outcomes is the mechanism through which everyday (spontaneous) concepts are transformed into scientific concepts.

Leontiev (1978) further distinguished three hierarchical levels of activity: the level of activity (motivated by a need or object-motive, e.g. becoming a competent, autonomous ESL/EFL writer), the level of actions (conscious, goal-oriented processes, e.g. revising a draft or interpreting feedback), and the level of operations (automatized routines that realize actions under specific conditions, e.g. clicking ‘accept’ on a suggested correction, using a familiar error code).

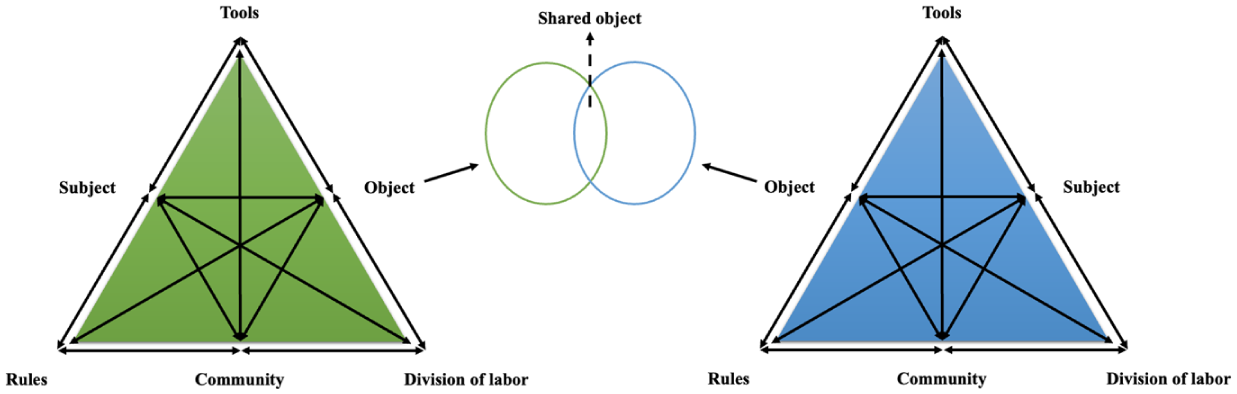

Engeström’s (1987, 1999) third-generation AT (see Figure 1) expands the basic mediated-action triangle into a collective activity system comprising six core elements:

subject: the individual or group whose viewpoint is adopted in the analysis;

object: the raw material or problem space at which the activity is directed, which simultaneously motivates and is transformed by the activity;

tools: artefacts (physical or symbolic) used by the subject to act on the object;

rules: explicit and implicit norms, conventions, and guidelines that regulate actions;

community: the broader social group that shares the object; and

division of labor: the horizontal and vertical distribution of tasks and roles among participants.

Third-generation activity theory.

Crucially, third-generation AT emphasizes networks of interacting activity systems that share or partially share objects (Engeström, 2001). When multiple activity systems are involved, contradictions (within or between systems) become the driving force of change and development. Contradictions can be experienced as dilemmas, conflicts, or double binds, yet, when recognized and worked upon, they open possibilities for expansive learning – qualitative transformation of the entire activity network.

AT has been employed in both empirical studies (e.g. Guo et al., 2024; Yu & Lee, 2015) and review articles (e.g. Zhang & Zou, 2023; Zhang et al., 2024) that explore technology-enhanced language learning and feedback in ESL/EFL writing. Building on these contributions, the present review adopts a networked third-generation AT perspective to examine the integration of feedback from multiple sources.

III The study

To address the gap identified above, namely the lack of systematic theorizing about how multiple feedback sources (teachers, peers, and machines) interact and jointly shape ESL/EFL writing development, the present study conducts a systematic review guided by the networked third-generation AT framework. This lens is well suited to the phenomenon because integrated feedback is not an additive combination of sources but an emerging network of two or three interacting activity systems (teacher-mediated, peer-mediated, and/or machine-mediated) whose objects, tools, rules, and divisions of labor continuously influence one another. The networked AT perspective can reveal the boundary practices, contradictions, partially shared objects, and expansive learning potentials that emerge when these systems are deliberately orchestrated rather than left to coexist.

Within this framework, the six core elements of AT are operationalized as follows, with special attention to their dynamic interactions across the teacher-, peer-, and machine-mediated systems:

subject: primarily the ESL/EFL student-writers;

object: the evolving motive of the networked activity, which encompasses not only an improved draft but, more fundamentally, the development of self-regulated, conceptually rich writing competence and sustained learner autonomy;

tools: the full range of artefacts (material and symbolic) that mediate feedback delivery, interpretation, and uptake (e.g. rubrics, AWE platforms, error-coding systems);

rules: explicit and implicit norms governing timing, sequencing, and focus of feedback across systems;

community: the classroom environment, which serves as the social context for interaction among multiple feedback sources; and

division of labor: the distribution of feedback responsibilities among teachers, peers, and machines.

Because third-generation AT foregrounds networks, contradictions, and expansive transformation, we group these elements into three interrelated analytical dimensions that capture both structure and developmental process:

integration strategies (division of labor and rules) focus on how feedback responsibilities, timing, sequencing, and focus are coordinated across systems, how boundary practices are formed, and how contradictions are experienced, negotiated, or resolved;

integration contexts (community, subject, and tools) pertain to the educational settings, learner characteristics, and artefacts that enable or constrain networking; and

integration effects (object) address the impact on writing development, emotional engagement, and conceptual growth.

Accordingly, the review is guided by the following research questions:

Research question 1: How do division of labor, rules, boundary practices, and the negotiation of contradictions mediate interactions among the activity systems involved in integrating teacher, peer, and/or machine feedback in ESL/EFL writing?

Research question 2: In what communities, with which learner-subjects, and through which tools has integrated feedback been implemented?

Research question 3: What effects does integrated feedback have on ESL/EFL writing development, affect, and conceptual growth?

By framing the review in this way, we move beyond descriptive cataloguing of feedback practices to a deeper examination of how teacher-, peer-, and machine-mediated systems co-evolve, generate productive tensions, and, when intentionally designed, create qualitatively new developmental possibilities for ESL/EFL writers. Ultimately, the study seeks to provide researchers and practitioners with evidence-based, theoretically informed principles for orchestrating multi-source feedback networks that maximize affordances for sustained writing development.

IV Methodology

This systematic review adhered to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Page et al., 2021), ensuring a transparent and thorough search and selection process.

1 Study search

We initiated our literature search in the Web of Science (WoS) Core Collection and Scopus databases, which were selected for their widespread use, robust indexing, and user-friendly interfaces. The query combined the following terms with Boolean operators: (‘English as a second language’ OR ‘ESL’ OR ‘English as a foreign language’ OR ‘EFL’) AND (‘writing’) AND (‘teacher feedback’ OR ‘teacher comments’ OR ‘teacher assessment’ OR ‘teacher evaluation’ OR ‘peer feedback’ OR ‘peer comments’ OR ‘peer review’ OR ‘peer assessment’ OR ‘peer evaluation’ OR ‘machine feedback’ OR ‘automated feedback’ OR ‘AI feedback’ OR ‘ChatGPT’ OR ‘automated writing evaluation’ OR ‘AWE’) AND (‘combin*’ OR ‘integrat*’ OR ‘incorporat*’ OR ‘multiple’). These key search terms were adapted from previous reviews of teacher, peer, and automated feedback (Shi & Aryadoust, 2024; Torres et al., 2020; Zhang & Zou, 2023). We included as many relevant terms as possible to maximize the coverage of the search, and the truncation symbol (*) captured variant word forms (e.g. combine, combined, combination). Given the growing body of research on ChatGPT as an automated feedback tool in ESL/EFL writing classrooms, ‘ChatGPT’ was also included as a search term.
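The query follows a simple structure: terms within each of the four groups are joined with OR, and the groups are joined with AND. The Python sketch below rebuilds the query string from those groups to make that structure explicit. It is an illustration only: it assumes the databases accept double-quoted phrases (the single quotes above are typographic), and it deliberately omits database-specific field tags, whose syntax differs between WoS and Scopus.

```python
# Term groups transcribed from the search described above.
# Terms within a group are OR-ed; the groups are AND-ed together.
POPULATION = ["English as a second language", "ESL",
              "English as a foreign language", "EFL"]
SKILL = ["writing"]
FEEDBACK = [
    "teacher feedback", "teacher comments", "teacher assessment",
    "teacher evaluation", "peer feedback", "peer comments", "peer review",
    "peer assessment", "peer evaluation", "machine feedback",
    "automated feedback", "AI feedback", "ChatGPT",
    "automated writing evaluation", "AWE",
]
INTEGRATION = ["combin*", "integrat*", "incorporat*", "multiple"]  # * = truncation


def or_group(terms: list[str]) -> str:
    """Quote each term and join the group with OR, wrapped in parentheses."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def build_query(*groups: list[str]) -> str:
    """Join the OR-groups with AND to form the full Boolean query."""
    return " AND ".join(or_group(g) for g in groups)


query = build_query(POPULATION, SKILL, FEEDBACK, INTEGRATION)
print(query)
```

Keeping the groups as separate lists makes it easy to see that adding a term to one group broadens the search, whereas adding a whole new AND-ed group narrows it.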

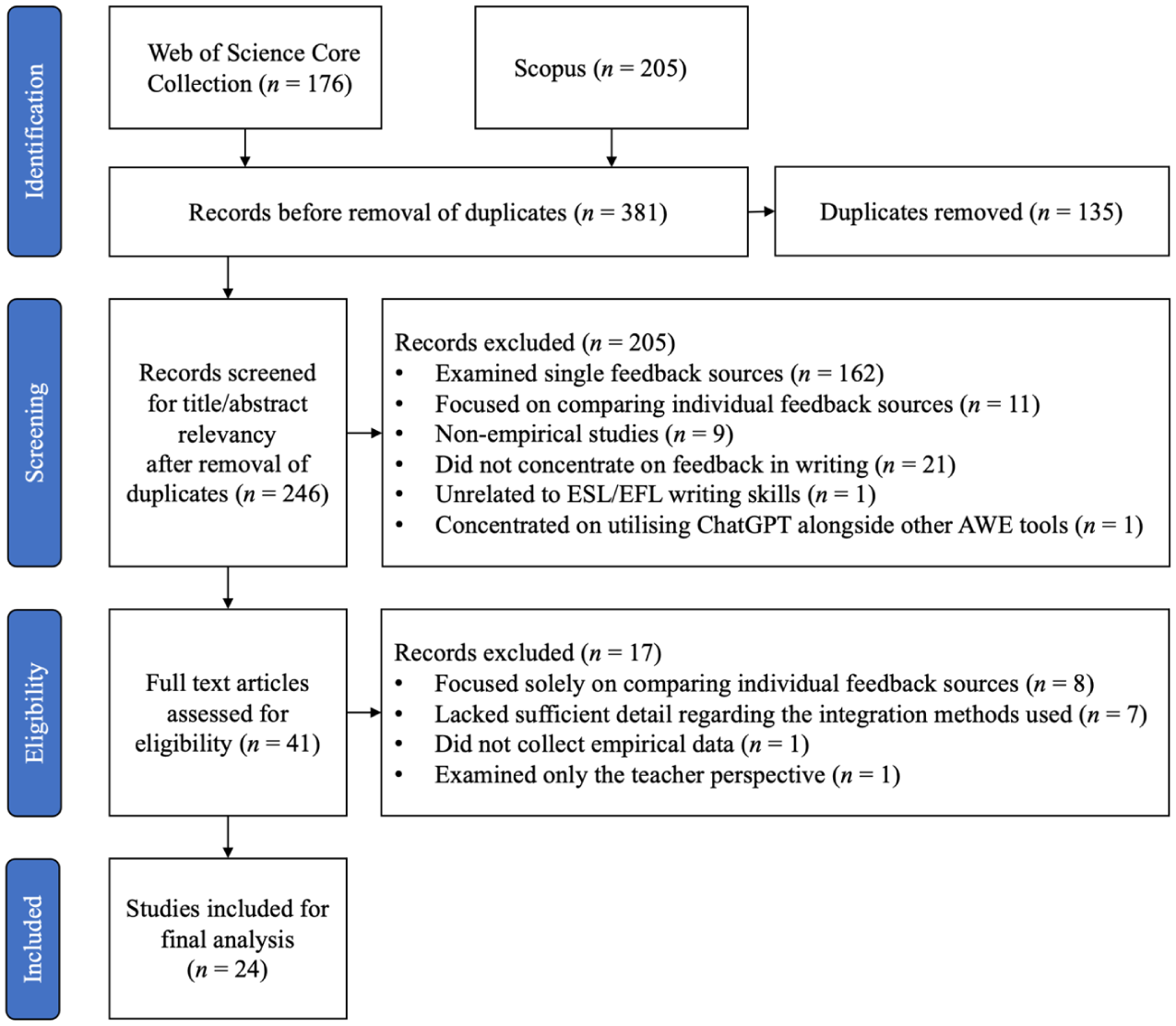

We searched these terms within the ‘topic’ (including title, abstract, keyword plus, and author keywords) of the articles in WoS Core Collection and within the ‘article title, abstract, and keywords’ of the articles in Scopus. Our initial search yielded 452 studies (205 from WoS Core Collection and 247 from Scopus). Next, we utilized the ‘Refine Results’ / ‘Refine Search’ feature in both databases to narrow our search. We restricted the document type to peer-reviewed journal articles to ensure the quality of the literature (Jandrić, 2021) and excluded publications in languages other than English. As shown in Figure 2, this process resulted in 381 studies (176 from WoS Core Collection and 205 from Scopus). We then removed 135 duplicates, leaving us with 246 studies for further examination. The search was conducted on December 9, 2024.

PRISMA flowchart.

2 Study screening

Our screening of the identified studies adhered to the following inclusion criteria: (1) the literature focused on feedback in ESL/EFL writing; (2) feedback on students’ writing was integrated from at least two of the three sources (teachers, peers, and machines); (3) the research examined the effects of integrated feedback, rather than focusing on the differences among individual feedback sources; (4) the articles provided a comprehensive description of the feedback integration methods; (5) the impact on students’ writing learning was assessed; and (6) empirical data were collected and analysed.

The rationale behind these criteria is as follows: (1) including only studies that concentrate on ESL/EFL writing ensures that the findings are relevant to the specific challenges faced by language learners, thereby enhancing the applicability of the results to our target audience; (2) by requiring the integration of feedback from at least two of the three sources, we seek to explore effective strategies for implementing integrated feedback; (3) focusing on the effects of integrated feedback allows us to assess its impact on student outcomes, rather than merely cataloging differences among feedback sources; (4) requiring a detailed description of feedback integration methods allows us to conduct a comprehensive analysis and critical evaluation of the approaches employed; (5) including only studies that assess the impact on students’ writing learning is crucial for determining the practical implications of integrated feedback; and (6) the inclusion of studies that utilize empirical data collection and analysis provides a solid foundation for drawing conclusions.

By establishing these criteria, we aimed to select empirical studies that demonstrated the effects of integrated feedback practices on ESL/EFL students’ writing learning, allowing us to identify effective strategies for combining multiple feedback sources.

The first and second authors independently screened the titles and abstracts of the 246 studies, resolving any disagreements through discussion and consensus. Applying the inclusion criteria removed 205 articles: 162 examined single feedback sources; 11 compared individual feedback sources; nine were secondary studies (meta-analyses, systematic reviews, or bibliometric analyses) rather than primary empirical studies; 21 did not focus on feedback in writing; one was unrelated to ESL/EFL writing skills; and one was excluded because it combined ChatGPT with other machine tools (Grammarly and Quillbot) rather than with teacher or peer feedback. Consequently, 41 studies remained for full-text examination.

The two authors downloaded the full texts of the 41 articles and assessed their eligibility, again using the inclusion criteria and resolving disagreements through discussion and consensus. Seventeen articles were excluded: eight focused solely on comparing individual feedback sources; seven lacked sufficient detail on the integration methods to allow relevant information to be extracted; one did not collect empirical data, focusing instead on theoretical models; and one examined only the teacher perspective (teachers’ feedback literacy) without addressing effects on students’ learning. As a result, 24 articles were included in the review.
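The counts reported across the search and screening stages can be checked for internal consistency. The short Python sketch below re-adds only the figures stated in the text (no new data) and computes the number of studies remaining after each stage; the reason labels are paraphrases of the exclusion categories above.

```python
# Record counts as reported in the search and screening paragraphs above.
identified = {"WoS Core Collection": 205, "Scopus": 247}
after_refine = {"WoS Core Collection": 176, "Scopus": 205}
duplicates = 135

# Title/abstract exclusions, by reason.
excluded_title_abstract = {
    "single feedback source": 162,
    "comparison of individual sources": 11,
    "meta-analysis, systematic review, or bibliometric study": 9,
    "not about feedback in writing": 21,
    "unrelated to ESL/EFL writing": 1,
    "ChatGPT combined only with other machine tools": 1,
}

# Full-text exclusions, by reason.
excluded_full_text = {
    "comparison of individual sources only": 8,
    "insufficient detail on integration method": 7,
    "no empirical data": 1,
    "teacher perspective only": 1,
}

n_identified = sum(identified.values())
n_refined = sum(after_refine.values())
n_screened = n_refined - duplicates
n_full_text = n_screened - sum(excluded_title_abstract.values())
n_included = n_full_text - sum(excluded_full_text.values())

print(n_identified, n_refined, n_screened, n_full_text, n_included)
# prints: 452 381 246 41 24
```

Each stage reconciles with the next, which is the property a PRISMA flowchart such as Figure 2 is meant to make visible.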

3 Coding process

Coding was guided by the networked third-generation AT framework introduced in Section II and operationalized in Section III. Rather than treating the six AT elements as isolated categories, the coding scheme was designed to capture both structural features and dynamic, cross-system processes. The final coding framework comprised the following three categories: primary structural codes, interactional and developmental codes, and additional descriptive codes.

Primary structural codes included:

subject characteristics: language proficiency and educational level;

object: reported effects on writing learning (e.g. writing quality);

community: the classroom environment (e.g. class size) in which integrated feedback was implemented;

tools: specific artefacts and platforms used for creating or delivering integrated feedback;

rules: timing, sequencing, focus, training protocols; and

division of labor: the feedback sources involved in the integrated feedback process, including who does what and how systems are linked.

Interactional and developmental codes included:

nature and management of contradictions: e.g. conflicting advice, mismatched criteria, resolution strategies;

boundary practices: e.g. shared drafts, common assessment criteria, multi-draft cycles that cross systems;

evidence of ZPD creation and expansive learning: e.g. increasing learner agency, growing ability to orchestrate multiple sources, observable transformation of the feedback network over time; and

affective-cognitive dimensions: perezhivanie-related themes such as writing anxiety, motivation, sense of ownership, trust or distrust toward different sources, and the emotional refraction of feedback experiences.

Additional descriptive codes included:

comparison with single-source feedback: the individual feedback sources, if any, examined in the studies for comparison with integrated feedback; and

research design: the methodological approach employed, whether quantitative, qualitative, or mixed methods, and instruments used for measuring writing learning effects.

This theoretically enriched coding scheme enabled the review not only to describe what integration strategies exist, but also to analyse how the three activity systems (teacher-, peer-, and machine-mediated) interact, how contradictions are experienced and negotiated, and how boundary practices and expansive cycles contribute to developmental outcomes.

Following the coding framework, the authors coded the selected articles manually. To establish coding reliability, the first and second authors coded the articles independently, achieving 95% agreement. All discrepancies were resolved through discussion until full consensus was reached, and in cases of ambiguity, a third opinion was sought.
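Simple percent agreement, the reliability figure reported here, is the share of coding decisions on which the two coders assigned the same code. A minimal Python sketch of the calculation follows; the function name and the code labels are hypothetical and do not reproduce the review's actual coding data.

```python
def percent_agreement(coder_a: list[str], coder_b: list[str]) -> float:
    """Return the percentage of items to which both coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must code the same, non-empty set of items.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * matches / len(coder_a)


# Hypothetical codes for 20 coding decisions (e.g. the feedback approach per item);
# the two coders disagree on exactly one decision (the 11th item).
coder_a = ["TF+PF"] * 11 + ["TF+MF"] * 9
coder_b = ["TF+PF"] * 10 + ["TF+MF"] * 10
print(percent_agreement(coder_a, coder_b))  # 95.0
```

With 20 decisions, a single disagreement yields 19/20 matches, which corresponds to the 95% level reported above.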

V Results

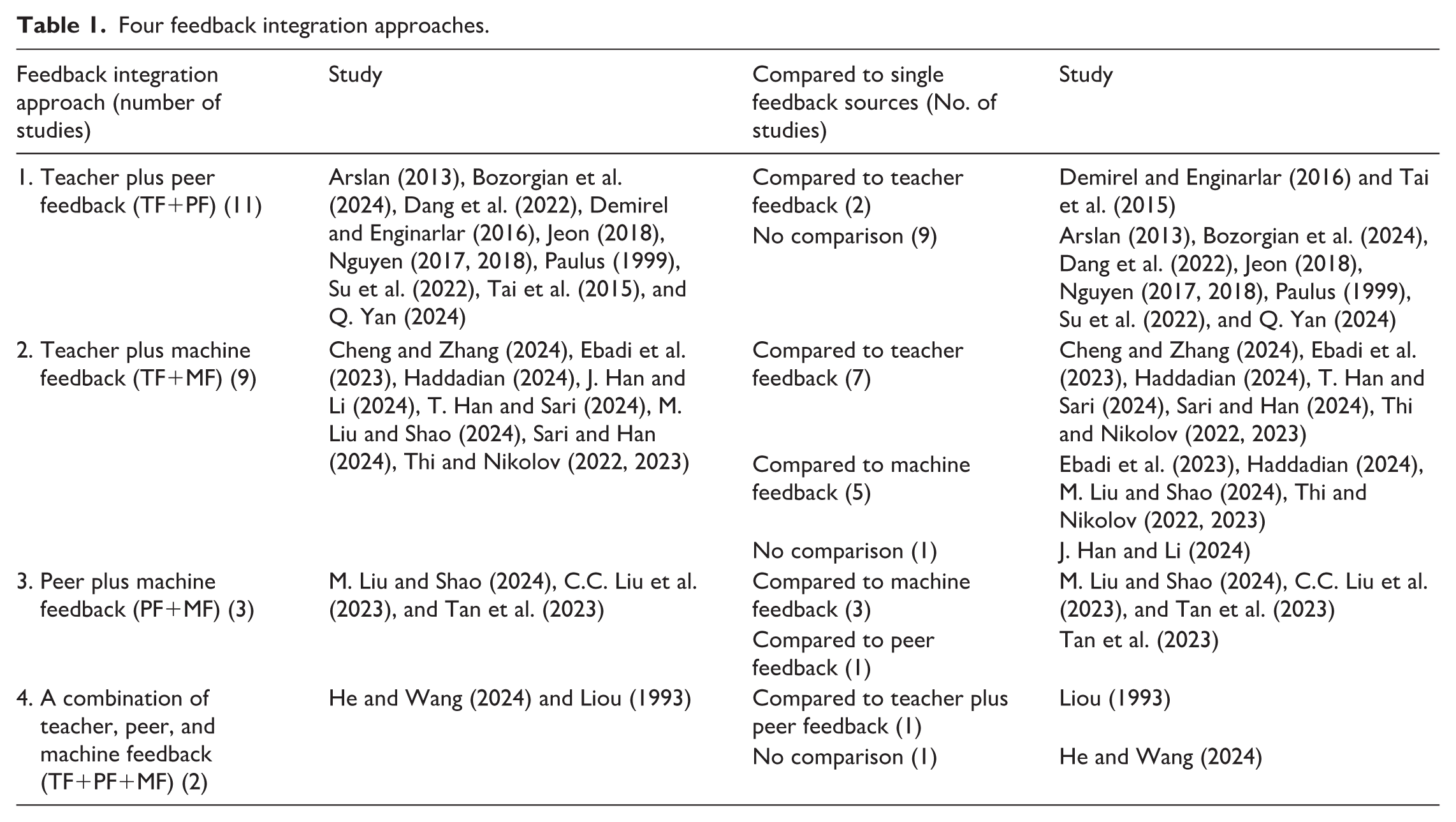

As shown in Table 1, the analysis of the 24 studies revealed four distinct approaches to integrating multiple feedback sources: teacher plus peer feedback (TF+PF; examined in 11 studies), teacher plus machine feedback (TF+MF; examined in nine studies), peer plus machine feedback (PF+MF; examined in three studies), and a combination of teacher, peer, and machine feedback (TF+PF+MF; examined in two studies). Notably, one study (M. Liu & Shao, 2024) examined both the TF+MF and PF+MF approaches.

Four feedback integration approaches.

These approaches formed networks of two or three activity systems whose interactions were mediated by boundary practices, shaped by contradictions, and directed toward partially shared objects. The following subsections present findings aligned with the three analytical dimensions: integration strategies (research question 1), contexts (research question 2), and effects (research question 3).

Research question 1: Feedback integration strategies

a TF+PF

Eleven studies investigated the combination of teacher and peer feedback, employing a range of integration strategies. Concerning sequence, most studies (e.g. Jeon, 2018; Nguyen, 2017, 2018; Paulus, 1999) implemented peer feedback first, followed by teacher feedback. Nguyen (2018) emphasized the importance of this sequence, noting that teacher feedback can identify errors overlooked in peer reviews. The subsequent teacher feedback thus fills gaps in students’ error identification and strengthens the peer review process as a whole. Such sequencing functions as a boundary practice that links the peer and teacher feedback activity systems and helps create a partially shared object: a draft that has already benefited from collaborative meaning-making before receiving expert refinement. Additionally, Nguyen (2017, 2018) noted that adding teacher feedback after peer review not only alleviated pressure on student reviewers but also sustained their enthusiasm for peer review, as they knew their comments would be validated by the teacher – an arrangement that positively refracted learners’ perezhivanie.

This sequence directly addresses a key contradiction within the TF+PF network: the tension between the subjective, often inconsistent quality of peer feedback and the need for authoritative, reliable validation. For example, Tai et al. (2015) highlighted this tension from the learner’s perspective, noting that students frequently found peer feedback ‘vague and confusing’, often focused on superficial errors while neglecting content and organization. This reveals a disconnect between the intended role of the peer feedback system and its actual effectiveness. In contrast, students viewed teacher feedback as more authoritative and accurate. By positioning teacher feedback as a final, validating layer, the sequence turns this tension from a source of student anxiety into a structured support mechanism, positively refracting learners’ perezhivanie.

Some studies also followed the peer-first, teacher-second sequence but introduced variations. In Dang et al. (2022) and Q. Yan (2024), students wrote collaboratively in groups. During the feedback phase, intra-group peer feedback was conducted first, followed by inter-group peer feedback, with teachers offering guidance and support throughout. This networked arrangement created multiple layers of interaction, allowing students to engage with peers in a supportive environment before receiving teacher input. Beginning with intra-group feedback gave students opportunities to develop self-assessment skills and a sense of community, and the subsequent inter-group phase broadened the scope of collaboration, exposing students to diverse perspectives on their writing. Teachers acted as facilitators, providing targeted interventions and keeping the feedback process constructive and aligned with learning objectives.

Bozorgian et al. (2024) and Su et al. (2022) implemented a three-stage feedback process. In Bozorgian et al. (2024), the sequence began with peer feedback, followed by oral feedback and class discussions on selected student drafts, which incorporated both teacher and peer insights; the process concluded with teacher feedback for final scoring. In this structured approach, the oral feedback and class discussions serve as a boundary-crossing moment, where insights from both peers and the teacher converge, enriching the feedback experience and fostering a more holistic view of the drafts. When contradictions arise, teacher mediation or class discussions can transform these challenges into productive opportunities for praxis and conceptual development in writing evaluation.

In Su et al. (2022), the feedback sequence started with peer review, proceeded to indirect teacher feedback, and culminated in direct teacher feedback, which gave explicit instructions for addressing any unresolved errors and helped learners produce higher-quality work. Tai et al. (2015) likewise advocated delivering indirect teacher feedback before direct teacher feedback, arguing that this order encourages students to actively seek answers rather than ‘being fed by the teacher’s knowledge’ (p. 291). The transition from indirect to direct feedback creates a scaffolded learning experience in which students first engage in exploratory problem-solving and then receive the targeted support needed to refine their skills. By requiring learners to attempt corrections before explicit answers are provided, this sequence can address the contradiction of learner dependence.

Another important rule was to provide peer feedback training before students engaged in peer review. This rule was applied in the majority of these studies (e.g. Arslan, 2013; Demirel & Enginarlar, 2016; Nguyen, 2017, 2018; Su et al., 2022; Tai et al., 2015). As Nguyen (2017) emphasized, adequate training enhances the effectiveness of peer feedback by helping students grasp the rationale behind the practice and understand how to perform the task. Research also indicates that such training leads to higher-quality comments during peer review (Rahimi, 2013). By fostering a shared understanding of expectations and evaluation criteria, training cultivates a sense of community among students and encourages collaborative efforts to improve each other’s writing. This supportive environment can nurture a culture of trust, which is vital for positively refracting students’ perezhivanie.

Interestingly, in contrast to most studies (e.g. Paulus, 1999), in which teacher feedback focused on the content of students’ writing, Demirel and Enginarlar (2016) directed teacher feedback toward form (specifically grammatical structure and mechanics), while peer feedback addressed content and organization. Their study compared the TF+PF approach (experimental group) with teacher feedback alone (control group). Peer feedback proved as effective as teacher feedback in prompting revisions to the organization of students’ essays. Although the combined feedback led to fewer content-related revisions than teacher-only feedback, the average essay scores of the two groups did not differ significantly, suggesting that the combined feedback did not disadvantage the experimental group and that peers are capable of addressing global issues in writing. However, the students in this study were at upper-intermediate and advanced levels of language proficiency. This suggests that the division of labor regarding feedback focus can be adjusted to students’ proficiency levels: learners at upper-intermediate and advanced stages may be better able to handle complex, global aspects of writing in their peer feedback. Such flexibility allows the feedback network to respond to students’ diverse needs and supports a more personalized feedback process.

b TF+MF

Nine studies explored the combination of teacher and machine feedback. In most studies (e.g. T. Han & Sari, 2024; M. Liu & Shao, 2024; Sari & Han, 2024), the division of labor between teachers and machines was clear: teachers concentrated on the content of student essays, while machines focused on their formal aspects. This division arose primarily from the limited capabilities of machines (e.g. Criterion, Grammarly, and Pigai) in analysing the content of students’ writing, although they excelled at identifying local issues such as spelling and grammatical errors. In contrast, teachers were better equipped to address global issues in students’ writing (Cheng & Zhang, 2024).

Consistent with this division of labor, most studies (e.g. Cheng & Zhang, 2024; Haddadian, 2024; T. Han & Sari, 2024; M. Liu & Shao, 2024; Sari & Han, 2024) provided machine feedback first, followed by teacher feedback. This sequence allowed machine feedback to address local issues before teachers turned to broader, more complex concerns. The complementary division established a natural boundary practice through which a partially shared object emerged: a grammatically cleaned draft ready for higher-order teacher intervention. This boundary practice made the feedback process more efficient and also saved teachers’ time and reduced their workload, enabling them to engage more deeply with the substantive aspects of student writing. The sequence also helped to mediate contradictions. Students reported that the machine system sometimes produced ‘false alarms’ or redundant feedback, leading to confusion and distrust of the tool (Sari & Han, 2024). In such cases, subsequent teacher feedback was essential for resolving these contradictions by correcting inaccuracies and clearing up misunderstandings, thereby restoring students’ confidence in the feedback process.

One study (J. Han & Li, 2024) took a different approach, using a more advanced technology, ChatGPT, to support teacher feedback. It found that ChatGPT could assist teachers in addressing global issues that traditional AWE systems could not handle effectively. The interplay between teacher and machine feedback can thus be seen as a dynamic network in which each participant contributes distinct strengths to writing evaluation. The clear division of labor seen in most studies reflects a more traditional model, with machines handling localized tasks and teachers focusing on broader, contextual concerns. As essay evaluation technologies advance, however, and particularly with tools such as ChatGPT, this network appears to be becoming more interconnected: machines are beginning to take on roles traditionally reserved for teachers, changing the nature of the collaboration. Such a shift may make the feedback loop more efficient and foster a more integrated approach to writing evaluation in which the boundaries between human and machine contributions blur, with the potential to enrich the feedback experience and promote deeper learning.

In most of the examined studies, students used machines independently to generate feedback on their essays. Such independent use can foster automatized operations (e.g. routinely accepting grammar and spelling suggestions), but without intentional orchestration it gives rise to a contradiction that becomes productive only when it is addressed: over-reliance on these largely unconscious operations may impede the transition to higher-level conscious actions (e.g. critically interpreting and negotiating teacher insights on global issues), thereby limiting opportunities for reflective praxis and conceptual development in writing. Explicit training in tool use, positioned here as a key boundary practice, can help resolve this contradiction expansively by raising operations to the level of conscious actions (e.g. Haddadian, 2024).

The approach differed in Ebadi et al. (2023) and J. Han and Li (2024). In Ebadi et al. (2023), teachers intervened in students’ use of automated feedback tools, offering explanations to help them effectively incorporate machine-generated insights. This teacher feedback facilitated students’ understanding and utilization of the automated feedback. In J. Han and Li (2024), teachers, rather than the students, employed machines – specifically ChatGPT – to generate feedback on student writing. This method allowed teachers to ensure that the feedback was relevant, accurate, and aligned with educational objectives, thereby minimizing the risk of inappropriate suggestions from the AI. The interventions by teachers in Ebadi et al. (2023) and J. Han and Li (2024) exemplify a more integrated network, where human guidance enhances the learning experience by bridging the gap between machine-generated feedback and student comprehension.

The central contradiction in TF+MF networks lay between the machine system’s limited rhetorical sensitivity and the teacher system’s holistic expertise. Studies that resolved this contradiction through teacher explanation of machine output (Ebadi et al., 2023) or teacher-controlled use of advanced AI such as ChatGPT (J. Han & Li, 2024) appeared to expand learners’ ZPDs, turning potential frustration into critical awareness of both human and algorithmic mediation.

c PF+MF

Three studies investigated the integration of peer and machine feedback (C.C. Liu et al., 2023; M. Liu & Shao, 2024; Tan et al., 2023). In terms of the division of labor between peers and machines, M. Liu and Shao (2024) assigned peer feedback to the content of student essays and machine feedback to issues of form. In contrast, C.C. Liu et al. (2023) employed machine feedback covering four dimensions: linguistic quality, content, article structure, and grammar.

Regarding feedback sequence, M. Liu and Shao (2024) provided machine feedback first, allowing students to revise their drafts before receiving peer feedback to further improve their writing. By contrast, C.C. Liu et al. (2023) had students revise their essays based on machine and peer feedback simultaneously, although machine feedback was generated before peer comments. Tan et al. (2023) adopted the reverse sequence, with peer feedback followed by machine feedback. These sequences illustrate different configurations of collaborative interaction. In M. Liu and Shao’s approach, the sequential flow emphasizes a structured progression in which machine feedback serves as a foundational layer for subsequent peer input, providing a clear pathway for revision. C.C. Liu et al.’s simultaneous approach creates a more interconnected network of feedback sources, requiring students to synthesize insights from machines and peers at the same time. Tan et al.’s peer-first sequence foregrounds social interaction in the writing process, suggesting that collaborative dialogue may make machine-generated insights more useful when they arrive later in the feedback loop.

Like the TF+PF and TF+MF approaches, the PF+MF approach also emphasized the necessity of training students in peer feedback and tool use before they participated in feedback activities (M. Liu & Shao, 2024; Tan et al., 2023).

These boundary practices foster partially shared objects, such as texts refined for both form and content by non-teacher sources. They also help learners navigate contradictions such as discrepancies in feedback scope, where machines focus on local accuracy (e.g. grammar) while peers address global coherence and topic relevance (C.C. Liu et al., 2023). Negotiating such discrepancies can promote evaluative judgment and shift learners’ perezhivanie, enhancing their confidence in managing diverse feedback sources.

d TF+PF+MF

Among the 24 selected studies, only two explored the integration of feedback from all three sources. In terms of feedback provision sequence, Liou (1993) implemented the following order: peer feedback, followed by teacher feedback, and finally machine feedback. Conversely, He and Wang (2024) utilized a different sequence: machine feedback, followed by peer feedback, and then teacher feedback. Notably, in He and Wang (2024), peer feedback was given by students working in groups of three, and the teacher also provided feedback on the students’ peer reviews to enhance their quality.

Regarding the form of feedback, He and Wang (2024) utilized a combination of written and oral peer feedback, while teacher feedback consisted of electronic individual written comments and a whole-class online feedback conference. However, the study indicated that oral peer feedback was less effective than written peer feedback.

Notably, Liou’s study was conducted in 1993, when AWE tools were far less advanced and less commonly used. Its students had no prior experience with such tools and used machine feedback (specifically Complete Writer’s Toolkit and Grammatik) under the guidance and supervision of their instructor. In Liou (1993), peer feedback emphasized clarity and structure, while the instructor provided indirect feedback on content, organization, and language. In the final stage, the AWE tools were used to supplement the instructor’s feedback on language use, primarily because of the tools’ limited capabilities at the time.

These rare three-system networks produced the richest set of boundary practices (e.g. teacher commentary on peer reviews, whole-class conferences, and multi-stage sequencing). Through these practices, teachers orchestrating all three systems transformed contradictions into expansive learning opportunities. Such contradictions included administrative constraints (e.g. large classes clashing with timely mediation; He & Wang, 2024), cultural tensions (e.g. student humility vs. peer critique; He & Wang, 2024), and system mismatches (e.g. first language bias in early tools vs. ESL/EFL needs; Liou, 1993). The resulting partially shared objects, drafts that simultaneously satisfy algorithmic, interpersonal, and expert criteria, represent the closest empirical approximation in the dataset to a fully networked, developmentally potent feedback system.

Research question 2: Feedback integration contexts

Among the 24 selected studies, 22 were conducted in higher education settings, while only one study (Dang et al., 2022) applied the TF+PF integration approach to enhance writing learning among secondary school students. The remaining study (Haddadian, 2024) implemented the TF+MF integration approach in private language schools, where students’ ages ranged from 18 to 42 years.

Class sizes varied widely, ranging from as few as 11 students (Paulus, 1999) to nearly 90 students (He & Wang, 2024). However, most studies implemented integrated feedback in classes of approximately 30 students (e.g. C.C. Liu et al., 2023).

Students’ language proficiency levels also varied across the studies, including low (e.g. Tai et al., 2015), low-intermediate (e.g. He & Wang, 2024; Thi & Nikolov, 2023), intermediate (e.g. Sari & Han, 2024; Thi & Nikolov, 2022), upper-intermediate (e.g. Haddadian, 2024; T. Han & Sari, 2024), and high (e.g. Bozorgian et al., 2024; Demirel & Enginarlar, 2016). Ten studies did not specify their participants’ language proficiency levels (e.g. J. Han & Li, 2024; Tan et al., 2023).

These contextual factors – educational settings, class sizes, and learner proficiency levels – highlight how community and subject elements dynamically constrain or afford interactions across the networked activity systems of integrated feedback. Specifically, as previously mentioned, the proficiency levels of student writers can shape the division of labor concerning feedback focus. Advanced student writers may be better equipped to provide peer feedback on global issues in their peers’ essays (Demirel & Enginarlar, 2016). Moreover, proficiency differences align with the principle that learning precedes development: lower-proficiency learners may initially engage with feedback at the level of automatized operations (e.g. routine acceptance of corrections without deep processing), while higher-proficiency learners more readily operate at the conscious action level (e.g. critical negotiation and reflection on global issues), creating differential ZPDs that influence feedback uptake and praxis (Demirel & Enginarlar, 2016; M. Liu & Shao, 2024). This hierarchy underscores the need for intentional design: integrated systems should scaffold transitions from operations to actions, fostering conceptual growth in writing.

Similarly, class size within the community element can generate productive contradictions related to teacher workload, often resolved by sequencing machine-mediated operations first (to address local errors efficiently) before teacher- or peer-mediated actions on higher-order concerns, thereby alleviating resource constraints while maintaining developmental affordances (e.g. Cheng & Zhang, 2024; Haddadian, 2024). Furthermore, cultural norms (e.g. preferences for harmony over direct critique) may surface as contradictions in peer-mediated systems; these can be expansively transformed through boundary practices such as asynchronous online orchestration via chat platforms or learning management systems, enabling face-saving dialogue and synergistic collaboration (He & Wang, 2024).

A variety of tools was employed during the integrated feedback process. Many studies that incorporated peer feedback, such as Dang et al. (2022), Demirel and Enginarlar (2016), Jeon (2018), Nguyen (2017, 2018), and Tai et al. (2015), used peer feedback forms or sheets to guide peer reviewers in assessing the target composition, thereby enhancing the quality of peer feedback. For instance, Paulus (1999) implemented a feedback form that asked students to begin with positive comments about the essay, then identify the position statement and analyse the supporting arguments. Students were then prompted to highlight areas of confusion or those needing further development, concluding with specific suggestions for improvement. Such forms served as a crucial boundary practice: they scaffolded the peer review process, gave novice reviewers a structured tool for focusing their attention, and acted as a common reference point that aligned the peer review activity with the expectations of the teacher who designed the form.

In indirect feedback, whether provided by peers or teachers, error codes (abbreviations) were used to mark students’ mistakes. For instance, in Tai et al. (2015), spelling errors were marked with S and tense errors with T, and a coding table explaining each symbol was provided for students’ reference. This strategy was adopted in several other studies, including Nguyen (2017, 2018) and Su et al. (2022). Importantly, in these studies teachers and peers used the same symbols when giving feedback, ensuring consistency and efficiency. Furthermore, Nguyen (2018) used tally sheets to help students track error frequencies by type across each draft and assignment (with three drafts for each writing task).

In studies that incorporated machine feedback, a range of tools was used. These included traditional AWE tools such as Criterion (e.g. Sari & Han, 2024), Grammarly (e.g. Ebadi et al., 2023), and Pigai (e.g. Cheng & Zhang, 2024), as well as the generative AI tool ChatGPT (J. Han & Li, 2024).

Additionally, Bozorgian et al. (2024) emphasized the effectiveness of Google Docs as a medium for providing feedback. Its interactive capabilities allow the student writer and the reviewer to be online simultaneously, enabling real-time feedback as the draft develops. This interactivity can make the experience more engaging for students, fostering motivation and encouraging them to produce high-quality work. Tan et al. (2023) likewise used Tencent Docs, a cloud-based file-collaboration tool akin to Google Docs, although as an asynchronous computer-mediated feedback tool.

These mediational tools, such as standardized error codes and shared digital platforms, serve as boundary practices that facilitate flow across systems. By giving the different feedback providers a common vocabulary (e.g. shared error codes and feedback forms) and a common workspace (e.g. shared online documents), they help separate feedback sources cohere into a single feedback ecosystem rather than a set of disconnected comments. Their thoughtful implementation therefore underscores the value of a holistic approach to feedback, in which different perspectives converge to support student development.

Research question 3: Feedback integration effects

As presented in Table 1 (see Section V), among the 24 selected studies, 12 examined the effects of integrated feedback by comparing it with single feedback sources. Of these, 11 studies showed positive effects of their feedback integration approaches, relative to single feedback sources, on at least one aspect of students’ writing learning examined in their research. One study (i.e. Thi & Nikolov, 2023) found no significant differences between integrated feedback (which combined teacher and machine feedback) and feedback from single sources (either teacher or machine) in terms of their impact on the syntactic complexity of students’ writing. This suggests that supplementing teacher feedback with machine feedback may reduce teachers’ workload without compromising this aspect of students’ writing. A further 11 studies also demonstrated positive effects of their feedback integration approaches on at least one aspect of students’ writing learning, although they did not compare their integrated feedback methods with single feedback sources. Finally, one study (Liou, 1993) compared two feedback integration methods, i.e. TF+PF+MF and TF+PF, to evaluate the effects of the former approach.

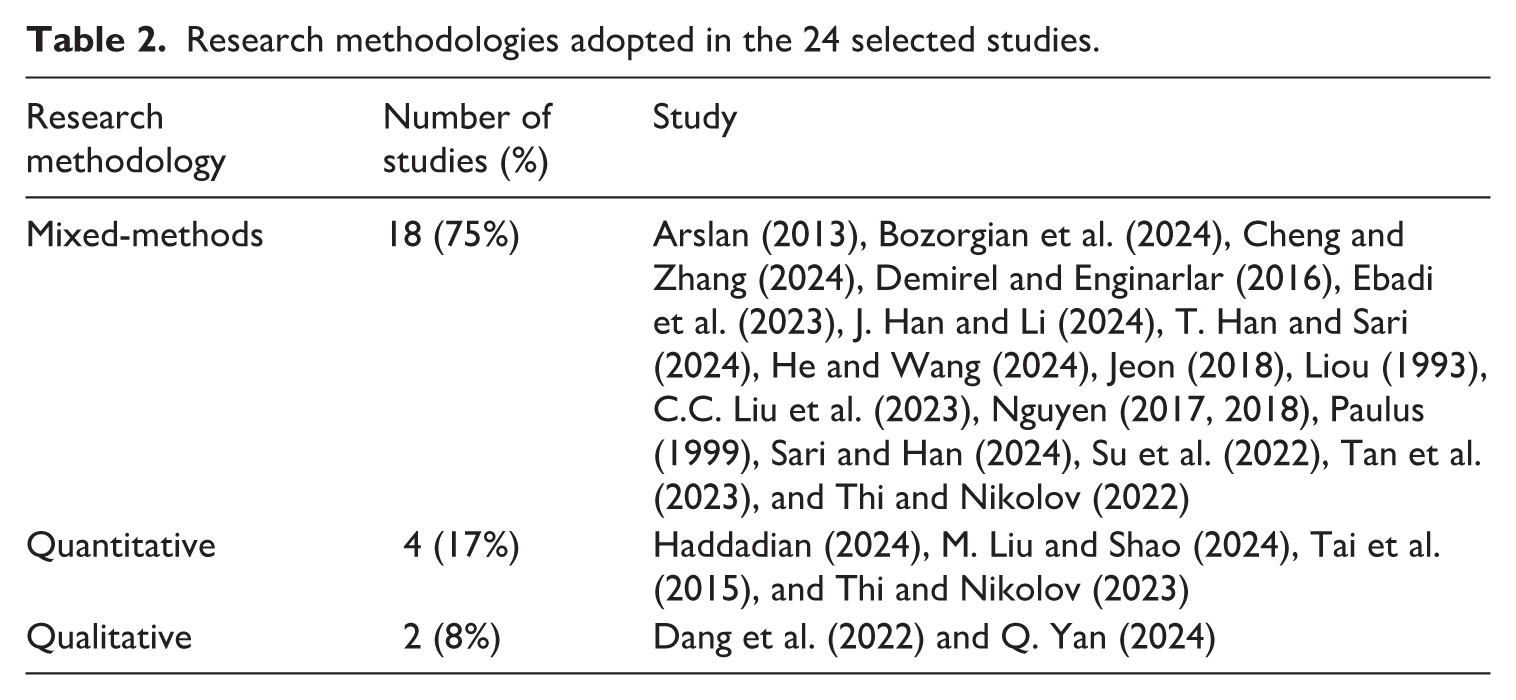

As shown in Table 2, 18 studies (75%) used mixed methods to evaluate the impact of integrated feedback, employing both quantitative and qualitative data collection techniques. Four studies (17%) relied solely on quantitative methods, while two studies (8%) relied solely on qualitative methods. In terms of measurement techniques, questionnaires (e.g. Sari & Han, 2024) and writing tasks (e.g. Ebadi et al., 2023) were commonly used to gather quantitative data, whereas interviews (e.g. Tan et al., 2023), written reflections (e.g. Demirel & Enginarlar, 2016), essay drafts (e.g. J. Han & Li, 2024), and think-aloud protocols (e.g. Paulus, 1999) were employed for qualitative data collection.

Research methodologies adopted in the 24 selected studies.

These studies indicated that integrated feedback positively impacted various aspects of students’ writing development. These included enhanced writing performance (e.g. Su et al., 2022; Tai et al., 2015), increased writing self-efficacy (e.g. Sari & Han, 2024), reduced writing anxiety (e.g. Jeon, 2018), and improved attitudes toward writing (e.g. Demirel & Enginarlar, 2016). Additionally, integrated feedback enhanced learning motivation (e.g. C.C. Liu et al., 2023), promoted student engagement (e.g. Cheng & Zhang, 2024; He & Wang, 2024), increased the sense of autonomy (e.g. He & Wang, 2024), and strengthened critical thinking skills (e.g. C.C. Liu et al., 2023).

These effects can be read as developmental rather than merely additive: enhanced writing performance reflects successful negotiation of contradictions across multiple drafts; increased self-efficacy and reduced anxiety indicate positive shifts in perezhivanie as learners experience coherent rather than fragmented mediation; and greater autonomy, engagement, and critical thinking signal internalization of diverse mediational means and the beginnings of expansive learning cycles in which students themselves start to orchestrate the feedback network within successively wider ZPDs. This interpretation offers one explanation for why integration generally outperformed single-source feedback: intentional networking turns potential conflicts into the engine of conceptual and self-regulatory growth.

VI Discussion

1 Integrated feedback as networked activity

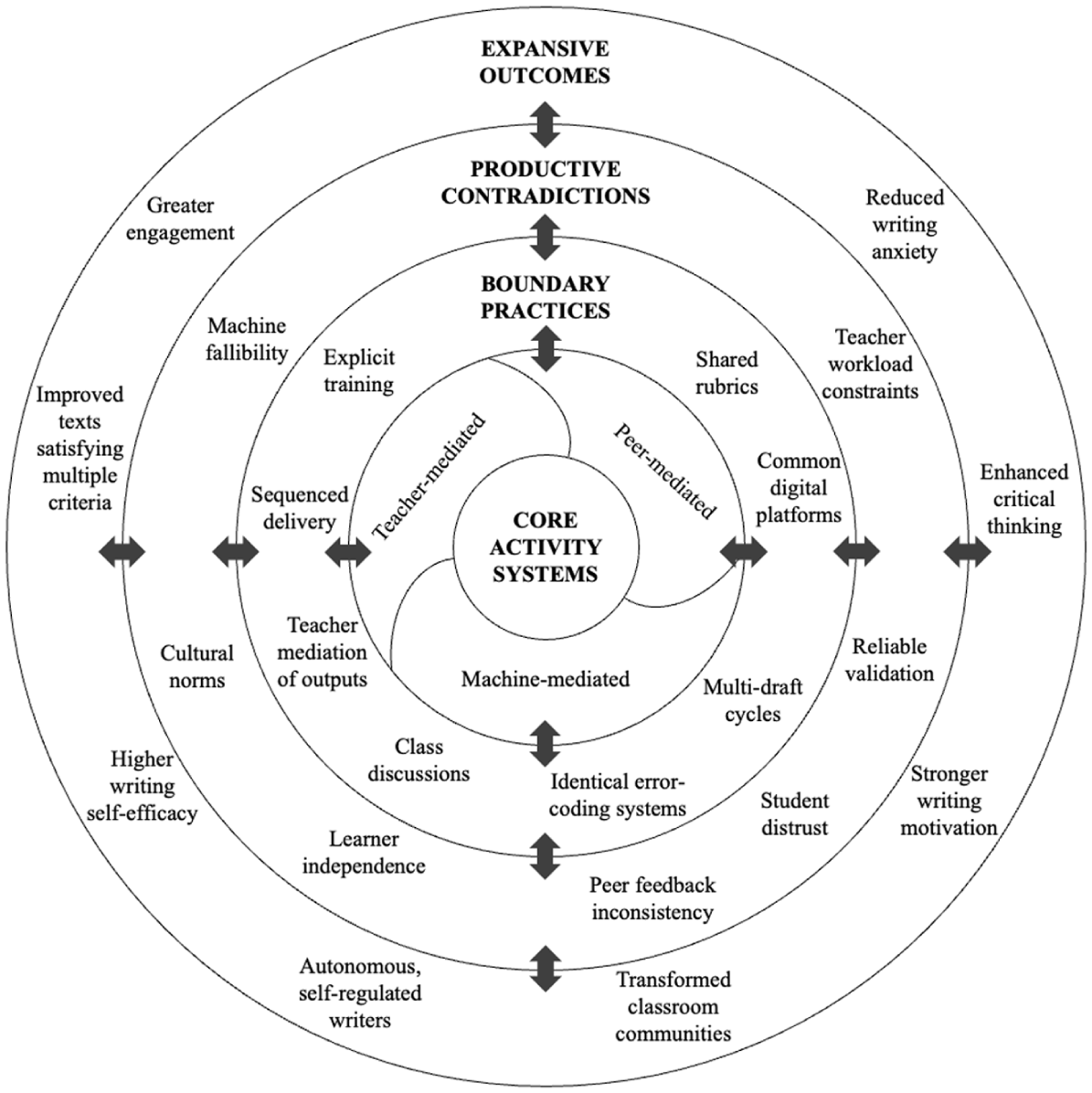

The systematic review, interpreted through a networked third-generation AT lens, reveals that effective integrated feedback is never merely the sum of teacher, peer, and machine contributions. Instead, its developmental power emerges from the quality of interactions among distinct yet overlapping activity systems. Building on the empirical patterns identified across the 24 reviewed studies, we propose a four-layer pedagogical model (see Figure 3) that conceptualizes integrated feedback as an intentionally designed, evolving network of activity systems rather than as a checklist of sources.

The four-layer pedagogical model of integrated feedback.

At the foundation of the model lie the three core activity systems, each with its historically accumulated primary object. The teacher-mediated system is oriented toward expert modelling and the development of rhetorical sophistication, genre awareness, and conceptual depth in writing, as seen in studies where teachers focused on global content issues (e.g. Cheng & Zhang, 2024; T. Han & Sari, 2024). The peer-mediated system is directed toward collaborative meaning-making, perspective-taking, and the cultivation of evaluative judgement through reciprocal interaction (e.g. Demirel & Enginarlar, 2016; M. Liu & Shao, 2024). The machine-mediated system, depending on its technological generation, primarily targets immediate and consistent improvement of linguistic accuracy and surface-level features, as with AWE tools such as Grammarly and Pigai (e.g. Cheng & Zhang, 2024; Ebadi et al., 2023), although large-language-model tools such as ChatGPT are increasingly capable of addressing organizational and even content-related concerns (J. Han & Li, 2024). When these systems operate in isolation or are only loosely connected, their objects remain distinct, leading learners to perceive feedback as fragmented or even contradictory.

The second layer of the model comprises the boundary practices that enable genuine networking among the systems. The reviewed studies suggest that integration becomes developmentally potent only when strong boundary practices are deliberately established. Sequenced delivery emerged as the most frequent linking mechanism, most commonly machine-first (followed by peer and/or teacher feedback) or peer-first followed by teacher validation. Machine-first sequencing saved teacher time while addressing local issues early (e.g. Cheng & Zhang, 2024; M. Liu & Shao, 2024), and peer-first approaches alleviated reviewer pressure through subsequent teacher validation (e.g. Nguyen, 2017, 2018). Shared rubrics, identical error-coding systems, common digital platforms, teacher commentary on peer reviews, explicit peer-feedback training, and whole-class debriefing conferences all functioned as crucial boundary practices, ensuring consistency and facilitating cross-system flow (e.g. Arslan, 2013; Su et al., 2022; Tai et al., 2015). Multi-draft writing cycles that required the same text to circulate across all active systems proved particularly powerful, because they forced contradictions to surface and demanded iterative negotiation (e.g. Bozorgian et al., 2024; Dang et al., 2022; Su et al., 2022). However, these linking mechanisms do not eliminate difference; rather, they make systemic contradictions visible and pedagogically addressable, setting the stage for the developmental work of the third layer.

The third layer recasts contradictions from systemic obstacles into the central engine of development. Within a networked AT view, contradictions are not noise to be eliminated but signals of where different activity systems intersect, and it is at these points of friction that the potential for learning is greatest. Across the 24 studies, contradictions were pervasive: the subjective and inconsistent quality of peer feedback (Tai et al., 2015), the fallibility and limited rhetorical sensitivity of machine systems (Cheng & Zhang, 2024), the demand for authoritative and reliable validation (Bozorgian et al., 2024), the necessity to avoid over-reliance and promote learner independence (Su et al., 2022), teacher workload constraints (Thi & Nikolov, 2023), student confusion and distrust in machine systems stemming from their ‘false alarms’ (Sari & Han, 2024), and the conflict between cultural humility and peer critique (He & Wang, 2024). When robust boundary practices are in place, these contradictions cease to be sources of mere confusion and instead become the sites where new ZPDs open. Learners are compelled to engage in conscious reflection, weigh competing perspectives, and gradually appropriate multiple mediational means – precisely the processes that transform everyday concepts of writing into systematic, scientific ones, as evidenced by improved critical thinking and revision behaviors in the reviewed interventions (e.g. C.C. Liu et al., 2023).

Finally, the outermost layer captures the qualitatively new, partially shared objects and developmental outcomes that arise only when the network functions coherently over time. These objects may include: (1) a revised text that simultaneously satisfies algorithmic accuracy standards, interpersonal communicative intentions, and academic-expert rhetorical expectations; (2) a writer who has internalized the ability to orchestrate diverse feedback sources critically and autonomously; and (3) a classroom community whose collective activity has itself been transformed through expansive learning cycles. The empirical effects documented in Section V.3 (e.g. superior writing performance, higher self-efficacy, reduced anxiety, stronger motivation, greater engagement, and enhanced critical thinking) can therefore be understood as manifestations of these new objects rather than incidental by-products (Cheng & Zhang, 2024; He & Wang, 2024; Jeon, 2018; C.C. Liu et al., 2023; Sari & Han, 2024; Tai et al., 2015). Reduced anxiety and improved attitudes, in particular, reflect positive shifts in perezhivanie as learners move from experiencing feedback as conflicting or overwhelming to perceiving it as a coherent, developmentally supportive network, especially in studies with effective resolution strategies (e.g. Demirel & Enginarlar, 2016; Jeon, 2018).

In practical terms, the model implies that educators should prioritize the deliberate design of boundary practices from the very first writing task, choose sequencing and tools that make contradictions visible yet negotiable, provide explicit scaffolding (especially for lower-proficiency learners and larger classes), and structure courses around multiple drafts that force circulation across systems. Only under these conditions do the inevitable tensions among teacher, peer, and machine mediation cease to be problems and become the primary mechanism driving ESL/EFL writers toward genuine self-regulation and conceptual mastery.

2 Suggestions for future research

Based on the findings from our systematic review, we provide several recommendations for future research on integrated feedback in ESL/EFL writing. First, although the reviewed studies were overwhelmingly conducted in higher-education contexts (22 out of 24 studies), the developmental dynamics of integrated feedback networks are likely to differ markedly across age groups and proficiency levels. Future research should therefore systematically explore primary and secondary classrooms, where contradictions may be experienced more intensely because younger learners’ self-regulation is still developing. In these settings, perezhivanie may become more emotionally charged, creating both challenges and opportunities for developmental growth through well-orchestrated feedback networks.

Second, only half of the studies compared integrated feedback with single-source conditions, and just one compared different integration approaches. There is an urgent need for experimental and quasi-experimental studies that manipulate specific elements of the network, such as sequencing order, the presence or absence of particular boundary practices (shared rubrics, teacher mediation of AI output), or the strength of peer-training protocols, and that measure their impact on the formation of partially shared objects, the resolution of contradictions, and the resulting expansive learning cycles.

Third, contradictions emerged as a central but under-investigated phenomenon in the current dataset. Future work should deliberately document contradictions within and between teacher, peer, and machine systems (Engeström, 2015), and investigate the boundary practices that transform them from sources of confusion into engines of development. Mixed-methods designs combining interaction analysis, stimulated recall, and interviews would be especially valuable for understanding how learners experience and resolve these tensions.

Fourth, subject and community elements such as proficiency levels and class sizes were occasionally considered (e.g. M. Liu & Shao, 2024), but other individual and cultural factors remain largely unexplored. Research should examine how motivation, learning styles, emotion-regulation strategies, and cultural expectations of authority shape perezhivanie within the network and influence learners’ ability to appropriate feedback. Specifically, studies could explore how perezhivanie – the emotionally charged, personally lived experience of navigating multi-source feedback – mediates the relationship between these individual and cultural factors and learners’ engagement with feedback. For instance, learners from cultures that prioritize respect for authority may experience perezhivanie differently when peer feedback contradicts teacher comments, potentially heightening emotional dissonance and affecting their revision choices. Cross-cultural comparative studies could shed light on how institutional rules and cultural-historical norms either constrain or expand possible networking configurations by shaping the emotional-cognitive terrain (perezhivanie) through which feedback is interpreted and acted upon.

Fifth, the present review necessarily relied on relatively short-term interventions. Longitudinal studies spanning one or more academic years are essential to capture genuine expansive learning cycles in which the feedback activity network itself is qualitatively transformed – contradictions diminish or become more sophisticated and the object of the activity shifts from ‘improving this draft’ to ‘becoming an autonomous writer who orchestrates diverse mediational resources’.

Finally, it would be valuable to examine how advanced generative AI tools (such as ChatGPT and beyond) reconfigure the division of labor in integrated feedback and generate new contradictions and resolutions. Importantly, future research must address the limitations of AI-generated feedback. For instance, prolonged exposure to non-human feedback risks creating ‘cognitive debt’ (Kosmyna et al., 2025) or superficial compliance, where students mechanically accept algorithmic corrections without engaging deeply with the material. Extended observation will help determine whether these risks materialize or, conversely, whether sustained experience within a well-designed network promotes increasingly sophisticated orchestration of human and algorithmic mediation. Studies of this nature are now urgent if integrated feedback networks are to maintain their developmental potential in an era of rapidly evolving generative AI.

By framing future investigations within a networked third-generation AT perspective, researchers can move beyond asking whether integrated feedback ‘works’ and towards understanding how contradictions, boundary practices, and expansive transformations can be intentionally designed to maximize developmental potential in diverse ESL/EFL writing contexts.

3 Limitations

The study has several limitations. First, the present review deliberately focused on the three external feedback sources (teachers, peers, and machines) that were most prominent in the existing literature. Consequently, self-feedback – an important internal mediational process – was excluded. Future reviews and empirical work should incorporate self-feedback as a fourth activity system and examine how it interacts with the external network, potentially generating new contradictions and boundary practices.

Second, like most systematic reviews of classroom-based interventions, we were constrained by the design limitations of the primary studies. The majority were relatively short-term (one semester or two writing tasks within a single course) and therefore could not capture genuine expansive learning cycles or the long-term transformation of the feedback activity network itself. Additionally, sample sizes in several studies were modest, which limits the generalizability of claims about developmental outcomes across diverse contexts.

Third, our conclusions rest on the literature available up to the review’s cutoff date. Newer research may affect the applicability and broader relevance of our findings, and including recent studies could offer fresh perspectives and make future reviews more comprehensive.

Finally, although the current review applied a networked third-generation AT framework post hoc, the original studies were not designed with this lens in mind. Detailed data on contradictions, boundary practices, partially shared objects, and learners’ perezhivanie were therefore often indirect or inferential. Moreover, only two studies integrated all three systems, and very few systematically manipulated boundary practices or documented expansive transformations of the network. Our four-layer pedagogical model is thus grounded in the available evidence but remains partly interpretive and suggestive rather than conclusive. These gaps highlight the need for future primary research specifically designed to test and refine the networked AT perspective proposed here.

VII Final remarks

Integrated feedback is now a near-universal reality in ESL/EFL writing classrooms, yet until this review it has largely been treated as a practical convenience rather than as a theoretically coherent activity network. By applying networked third-generation AT to 24 empirical studies, we have shown that the developmental power of multi-source feedback does not reside in any single source, nor even in their simple co-presence, but in the intentional orchestration of boundary practices that turn inevitable contradictions into the very engine of expansive learning.

When teachers, peers, and machines are deliberately linked through shared rubrics, sequenced delivery, teacher mediation, and multi-draft circulation, learners do not merely receive more comments; they enter a dynamic environment in which conflicting perspectives become sites for praxis, successive ZPDs open, and perezhivanie shifts from anxiety into agency. The result is not just better texts, but writers who can orchestrate diverse mediational resources long after the course ends.

This review therefore marks a conceptual turning point: from asking ‘Does integrated feedback work?’ to asking ‘How do we design feedback networks that make contradictions developmentally productive?’ The four-layer pedagogical model offers educators and researchers a concrete, evidence-based tool to answer that question in practice. We look forward to the next generation of studies, and classrooms, that will put these networked principles to work and continue expanding the developmental possibilities of ESL/EFL writing.

Footnotes

Author contributions

Kai Guo: conceptualization, investigation, methodology, formal analysis, writing – original draft, writing – review and editing; Shaoyan Zou: conceptualization, methodology, formal analysis, writing – original draft; Di Zou: conceptualization, methodology, writing – review and editing.

Data Availability

Data sharing is not applicable to this article as no new data were created or analysed in this study.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work described in this paper was partially supported by the Teaching Development and Language Enhancement Grant of the University Grants Committee, The Hong Kong Polytechnic University (Project No. PolyU/TDLEG25-28/IICA/P/02).

Declaration of generative AI and AI-assisted technologies in the writing process

During the preparation of this work the authors used ChatGPT in order to improve readability and language. After using this tool, the authors reviewed and edited the content as needed and take full responsibility for the content of the published article.