Abstract

Despite the growing interest in evaluating contextualized task-based language teaching (TBLT), evaluation studies in ‘difficult contexts’, such as monolingual English as a foreign language (EFL) contexts with low-proficiency learners, remain limited. Employing a macro-evaluation framework, this study evaluates a TBLT program implemented in English for specific purposes (ESP) classrooms with low-proficiency learners in an EFL context. The course comprised two cycles of task-based lessons, each consisting of two lessons: one based on a simple oral task and another derived from a collaborative writing task of a laboratory report. This study examined the 14-week course from three perspectives – response-based, learning-based, and student-based – using multiple data sets, including task outcomes, worksheets, pre- and post-tests, and end-of-class and end-of-course questionnaires. The findings revealed that most students successfully achieved the intended task outcomes. While writing quality improved in complexity and fluency throughout the lessons, accuracy showed little improvement. The students demonstrated positive attitudes toward the task-based lessons, appreciating the oral tasks as valuable opportunities for second language (L2) communication and perceiving the writing tasks as relevant to their future careers. However, they raised concerns regarding task difficulty, limited teacher feedback, and pairing issues. These findings demonstrate that the TBLT course was largely successful, underscoring its potential value for low-proficiency learners in an acquisition-poor environment.

I Introduction

An evaluation, distinct from an assessment, focuses on practical goals and plays a crucial role in understanding and improving educational programs (Norris, 2016). Evaluations involve ‘the gathering of information about any of the variety of elements that constitute educational programs, for a variety of purposes that primarily include understanding, demonstrating, improving, and judging program value’ (Norris, 2006, p. 579). While an assessment focuses on measuring individual learners’ knowledge, abilities, and learning outcomes, an evaluation extends beyond the individual level to examine programs, instructional approaches, and institutional contexts. Evaluations are oriented toward pragmatic applications and aim to provide evidence-based improvements for educational practices and systems. Additionally, evaluation differs from research. Whereas research is theory-driven and seeks to generate or test theoretical claims, evaluation is not solely concerned with theory. Instead, it aims to inform decision-making and guide actions within educational contexts based on empirical evidence.

Ellis (2011) distinguished macro-evaluations, which focus on whole curricula, from micro-evaluations, which address specific classroom activities. Micro-evaluations are often conducted by teachers interested in determining whether individual activities succeed in a single lesson, whereas researchers or institutional authorities typically conduct macro-evaluations externally to determine how well a program achieves its goals and how it can be improved. In other words, a macro-evaluation must include an evaluation of the program’s ‘accountability’ and the ‘development’ of the learners’ knowledge and skills as a result of the program.

Conducting a macro-evaluation requires evaluating the target instructional program from multiple perspectives (Ellis et al., 2019). Ellis (2011) proposed three types of evaluations: response-based, learning-based, and teacher-based/student-based evaluations. A response-based evaluation examines whether students respond to activities and achieve the intended goals by observing lessons and collecting records of task outcomes. A learning-based evaluation seeks evidence of learning, focusing on improvement in new linguistic knowledge, greater control over existing linguistic resources, and/or enhanced discourse skills. A teacher-based or student-based evaluation explores teachers’ and students’ perceptions of the lessons through self-report methods such as interviews and teaching or learning journals.

Task-based language teaching (TBLT) aims to promote language acquisition by providing opportunities to use a second language (L2) for communication, allowing learners to focus on meaning while attending to language forms as necessary (i.e. focus on form). Evaluating TBLT from these three perspectives allows researchers to assess:

whether focus on form occurs during tasks (response-based evaluation);

whether learners are engaged in communication (student-based evaluation);

whether the teacher perceives the principles of TBLT as effectively realized in the lesson (teacher-based evaluation); and, ultimately,

whether language acquisition consequently occurs (learning-based evaluation).

Researchers have evaluated TBLT to examine how task-based lessons (TBLs) and programs work in specific contexts, providing insights that inform educational decisions (González-Lloret & Nielson, 2015; Kim et al., 2017; McDonough & Chaikitmongkol, 2007). Many published TBLT macro-evaluations have included response-based and teacher-based/student-based evaluations (Beretta, 1990; Beretta & Davies, 1985; Carless, 2004; Hu, 2013; McDonough & Chaikitmongkol, 2007; Watson Todd, 2006). However, relatively few studies have incorporated learning-based evaluations (González-Lloret & Nielson, 2015; Nielson, 2014). These studies conducted learning-based evaluations using pre- and post-course standardized proficiency tests, such as the Versant test (González-Lloret & Nielson, 2015) and the standards-based measurement of proficiency test (Nielson, 2014). Both demonstrated significant improvements in proficiency. Meanwhile, student-based evaluations via questionnaires indicated overall satisfaction with the courses and their relevance. However, some students expressed concerns over workload and time commitment, and others felt unprepared for real-world language use with native speakers. These studies effectively evaluated the ‘accountability’ of the program and the ‘development’ of the learners’ L2 knowledge.

The paucity of research incorporating learning-based evaluation likely stems from the operational challenges of implementing pre- and post-intervention assessments using a longitudinal approach, which is inherently required for macro-evaluations. However, as Norris (2016) posits, the overarching goal of evaluation is to inform decision-making and drive the continuous enhancement of educational practices based on empirical evidence. The current study therefore incorporated all three aspects of evaluation and conducted the evaluation in a ‘difficult context’ (Shintani, 2016), specifically monolingual English as a foreign language (EFL) classrooms with low-proficiency learners, to provide further insights.

II Issues in implementing task-based lessons in Japan

The national curriculum in Japan, known as The Course of Study, aims to develop learners’ communicative competence in junior high and high schools. Since the mid-1980s, growing interest in fostering communicative skills has prompted a shift from the grammar-translation method to communicative language teaching approaches, including TBLT (Butler, 2011; Nishino, 2008; Samimy & Kobayashi, 2004). However, concerns have been raised about introducing TBLT to Japan, including its perceived incompatibility with the teacher-centered approach common in Confucian heritage cultures; the challenge of balancing the dual goals of fostering communicative competence and preparing students for high-stakes, paper-based entrance examinations that prioritize accuracy and reading comprehension; and learners’ indifference to improving their communication skills, owing to the limited opportunities to use English outside the classroom (Butler, 2011; Harris, 2018; Nishino & Watanabe, 2008; Samimy & Kobayashi, 2004; Sato, 2009). Concerns have also been raised at the classroom level, including the lack of appropriate teaching materials, large class sizes, limited instructional hours, and teachers’ lack of confidence in using English and implementing TBLT (Butler, 2011; Harris, 2018).

Some researchers point out that tasks used in TBLT do not always elicit the expected level of L2 interaction. One particularly challenging issue is the overuse of first language (L1) and limited target language use when students in the class share their L1 in a monolingual context (Ellis, 2009). Studies by Carless (2008), Cook (2001), and Macaro (2001) suggest that learners resort to using their L1 rather than a target language for communicative tasks. This trend is also evident in the Japanese context. For example, Burrows (2008) argues that TBLs frequently lead to prominent L1 use, even at the university level, during activities where students are assumed to easily use their L2, such as those that are personalized and relevant for them. Similarly, Lowe (2012) observed that Japanese university students use substantial L1 in their language lessons, arguing that it likely reduces opportunities to use the L2. However, advocates of TBLT have provided some counterarguments for these criticisms. Ellis et al. (2019) assert that some L1 use is legitimate in TBLT as the L1 can be a mediating tool for performing tasks in the L2. They argue that the L1 can be used for functions such as task management, task clarification, discussion of vocabulary and meaning, and the presentation of grammar points (Cook, 2001).

Perhaps the biggest challenge in implementing TBLT in EFL contexts is learners’ limited ability to communicate in the L2. Littlewood (2007), for example, argues that TBLT is not well-suited for low-proficiency learners who lack the language abilities to complete communicative tasks successfully. Seedhouse (1999) claims that TBLT often encourages minimal target language use, limiting linguistic development. However, several studies have shown that these challenges can be mitigated by providing adequate support for low-proficiency learners. Mochizuki and Ortega (2008) found that Japanese high school students who received guided pre-task planning, including explicit grammar instruction, produced more accurate L2 output than those who did not, while complexity and fluency remained comparable. Similarly, Lambert et al. (2017) demonstrated that immediate task repetition enhanced the fluency of university learners’ oral L2 performance. These varied findings suggest the need for a deeper investigation across different educational and cultural contexts, particularly in ‘difficult contexts’ (Shintani, 2016).

III Purpose of the study

Based on the above background, the current study evaluated a TBLT program conducted over a semester for low-proficiency engineering students in English for specific purposes (ESP) classrooms in Japan. To accommodate learners’ proficiency levels, the TBLT program was carefully designed to include pre- and post-task phases that begin with input-based tasks and provide opportunities for learners to use their L1 during the tasks. The program evaluation adopted all three evaluation types (i.e. response-based, learning-based, and student-based evaluation). Drawing from these evaluation concepts, we set the following three research questions.

Research question 1: To what extent do low-proficiency students achieve the intended goals of collaborative writing tasks?

Research question 2: Do the students develop their language skills as a result of the TBLT program?

Research question 3: What are the students’ reactions toward the TBLT program?

IV Method

1 Institutional context

The study was conducted at Fukui KOSEN, Japan, a higher education institution that seeks to educate and cultivate engineers. Fukui KOSEN has approximately 1,000 students, and they belong to one of five departments: Mechanical Engineering, Electrical and Electronic Engineering, Electronics and Information Engineering, Chemistry and Biology, or Civil Engineering. Students enter KOSEN at the age of 15 years and study engineering and liberal arts for five years. Unlike high school students who often study for university entrance examinations, many KOSEN students focus on developing practical skills for their future careers as engineers. Therefore, the issue of university entrance examinations, a common obstacle to implementing TBLT in Japan, is not likely to apply in this context. Furthermore, the national curriculum in KOSEN, called The Model Core Curriculum, emphasizes students’ ability to understand and express themselves on various topics, including those related to mathematics and science in English, making TBLT particularly suitable in this context.

2 Participants

A total of 125 students in three intact classes for English II, a compulsory course taught by the first author that provided two lessons a week for 14 weeks, participated in the study. They belonged to three different departments: the Department of Electrical and Electronic Engineering (n = 39, including 31 males and eight females), Electronics and Information Engineering (n = 44, 35 males and nine females), and Chemistry and Biology (n = 42, 26 males and 16 females). All participants were 16 or 17 years old (M = 16.09, SD = 0.29). They had few opportunities to interact with others in English outside the classroom. They also had little, if any, experience with TBLT before participating in this study: They had previously taken textbook-based lessons, which offered explicit grammar and vocabulary instruction followed by controlled exercises.

Participants’ English proficiency was assumed to be at an A1–A2 level in the Common European Framework of Reference for Languages (CEFR), as indicated by their grades on the EIKEN test (test in practical English proficiency), a standard English test in Japan. Prior to the study, 71 out of the 125 participants had taken the EIKEN test: Two students achieved second grade, six pre-second grade, 51 third grade, and 12 fourth grade. According to the conversion table between EIKEN and the CEFR (EIKEN Foundation of Japan, n.d.), the second grade in the EIKEN test corresponds to B1, pre-second grade to A2, and third and fourth grades to A1. The EIKEN test was used solely to assess participants’ English proficiency for the study, not to group or measure progress.
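The conversion described above can be tallied with a short script. The following is a minimal sketch that uses only the grade counts and the EIKEN-to-CEFR correspondence reported in this section; the variable names are illustrative and are not part of the study's materials.

```python
from collections import Counter

# Grade counts reported for the 71 participants who had taken the EIKEN test.
eiken_counts = {"2": 2, "pre-2": 6, "3": 51, "4": 12}

# Correspondence stated in the text (EIKEN Foundation of Japan, n.d.).
eiken_to_cefr = {"2": "B1", "pre-2": "A2", "3": "A1", "4": "A1"}

# Sum the participants falling into each CEFR band.
cefr_counts = Counter()
for grade, n in eiken_counts.items():
    cefr_counts[eiken_to_cefr[grade]] += n

total = sum(eiken_counts.values())
share_a1_a2 = (cefr_counts["A1"] + cefr_counts["A2"]) / total

print(dict(cefr_counts))
print(f"A1-A2 share of test takers: {share_a1_a2:.1%}")
```

Under this tally, 69 of the 71 test takers fall within A1 or A2, which is consistent with the assumed proficiency range.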

3 The TBLT course

The TBLT course in this study focused on students’ lab-report writing skills, which are essential for students across all departments at KOSEN. To achieve this goal, two cycles of TBLs were conducted within a semester, each including two preparatory lessons and a main lesson. The preparatory lessons employed picture-drawing and jigsaw tasks to help learners become familiar with vocabulary related to scientific experiments and activate relevant schemas. The main task, a collaborative writing task, aimed to develop students’ ability to write a laboratory report. All tasks were designed to meet the task criteria proposed by Ellis and Shintani (2014): The tasks primarily focused on meaning; there was some kind of gap; students had to rely on their own linguistic and non-linguistic resources to complete the tasks; and the tasks had clear non-linguistic outcomes.

The picture-drawing tasks required students to draw a picture while listening to their partner describe the picture. The predicted task outcome was an illustration that accurately represented the necessary information, including the positioning and states of the apparatus, as well as the person’s appearance, facial expressions, and movements. Four worksheets with illustrations of one or two students conducting a science experiment were prepared. A sample is provided in Appendix A.

The jigsaw tasks required students to sort a set of four pictures correctly in pairs by describing the pictures to each other. The predicted task outcomes were illustrations drawn by the students to accurately represent the necessary information and a correctly sorted series of pictures depicting a science experiment. Four worksheets were prepared: They had two blank cells and two different pictures that described a part of the procedures of a science experiment (ammonia fountain in Jigsaw 1 and the thermal decomposition of sodium bicarbonate in Jigsaw 2). These topics were chosen because the students were supposed to learn them in science classes at their junior high schools. A sample worksheet is provided in Appendix B.

The collaborative writing tasks required students to write a laboratory report in English with their partners collaboratively (ammonia fountain in Collaborative Writing 1 and the thermal decomposition of sodium bicarbonate in Collaborative Writing 2). The predicted outcome was a collaboratively written laboratory report that clearly described the experiment, including essential components such as goals, materials, and procedures, organized in the required laboratory report format. A worksheet with ruled lines and the same four small picture cards used in the jigsaw tasks were prepared.

The lessons were sequenced as picture-drawing, jigsaw, and collaborative writing lessons, following the cognition hypothesis (Robinson, 2001), to progress from simpler to complex cognitive demands. The picture-drawing tasks required students to work with only one picture and were considered the least cognitively demanding. The jigsaw tasks were more cognitively demanding than the picture-drawing tasks, as they involved arranging a sequence of four pictures and focusing on the procedural elements of a science experiment. The collaborative writing tasks required students to consider multiple elements, such as goals, materials, procedures, results, and discussions related to the experiment. This gradual progression aimed to help students with little prior experience in TBLs adapt to the increasing cognitive and linguistic demands of the lessons.

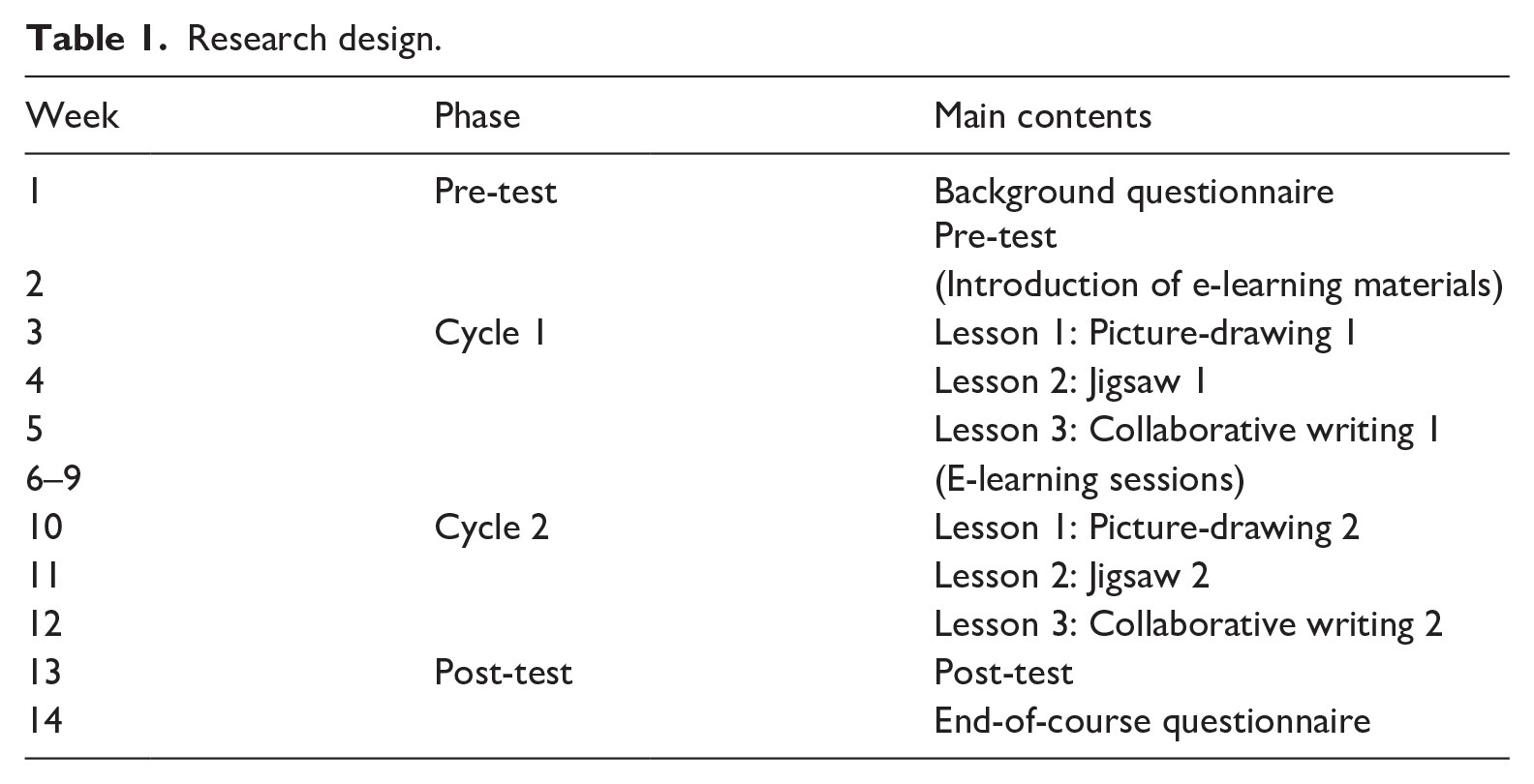

Table 1 provides an overview of the semester schedule. In Week 1, the students were introduced to the course and the study. They also completed a consent form, a background questionnaire, and a pre-test. Starting in Week 3, two cycles of TBLs were implemented, spanning Weeks 3–5 and Weeks 10–12. A post-test evaluation was conducted in Week 13, followed by an end-of-course questionnaire in Week 14.

Table 1. Research design.

E-learning sessions were incorporated between the two task-based language cycles, spanning Weeks 6–9, as contingency slots to accommodate potential class cancellations due to the Covid-19 pandemic. These sessions consisted of self-study listening and reading practice and were entirely unrelated to the tasks in the study; they were therefore not expected to affect the task outcomes.

4 Task-based lessons

The TBLs were designed based on Ellis’s (2003) framework (i.e. pre-task, main-task, post-task). Table 2 shows how each lesson was structured. The pre-tasks aimed to equip learners with useful vocabulary for performing the main tasks. The post-tasks were designed to engage learners in reflecting on their learning experiences regarding content and language. The following sections describe how the lessons were implemented in practice.

Table 2. Brief overview of lesson phases in each lesson.

Note. Preparatory lessons include picture-drawing and jigsaw task lessons.

a Preparatory lessons

A matching activity was conducted during the pre-task phase to introduce the vocabulary necessary for the main task (see Appendix C). The students were required to match pictures of the apparatus and other related items with their corresponding English words. After completing the activity, the teacher reviewed the correct answers with the entire class.

Subsequently, the students conducted the main task. For the picture-drawing tasks, they paired up and received a worksheet. The teacher instructed them to draw a picture based on their partner’s description in English without looking at the partner’s original picture. The students were given 10 minutes to plan what to say without using external resources (e.g. dictionaries and machine translations). Although they could write down some keywords on the worksheet, they were not allowed to write full sentences, in order to encourage spontaneous language use. Each student was allocated eight minutes to describe or draw a picture.

For the jigsaw tasks, the students were informed that they and their partners had different pictures of a science experiment. They needed to describe their pictures orally in English, without showing them to their partner, and work together to sort the four pictures correctly. Finally, they identified the experiment depicted. The students were given 10 minutes for planning, 16 minutes for describing the pictures, and two minutes for sequencing them, with no external resources allowed.

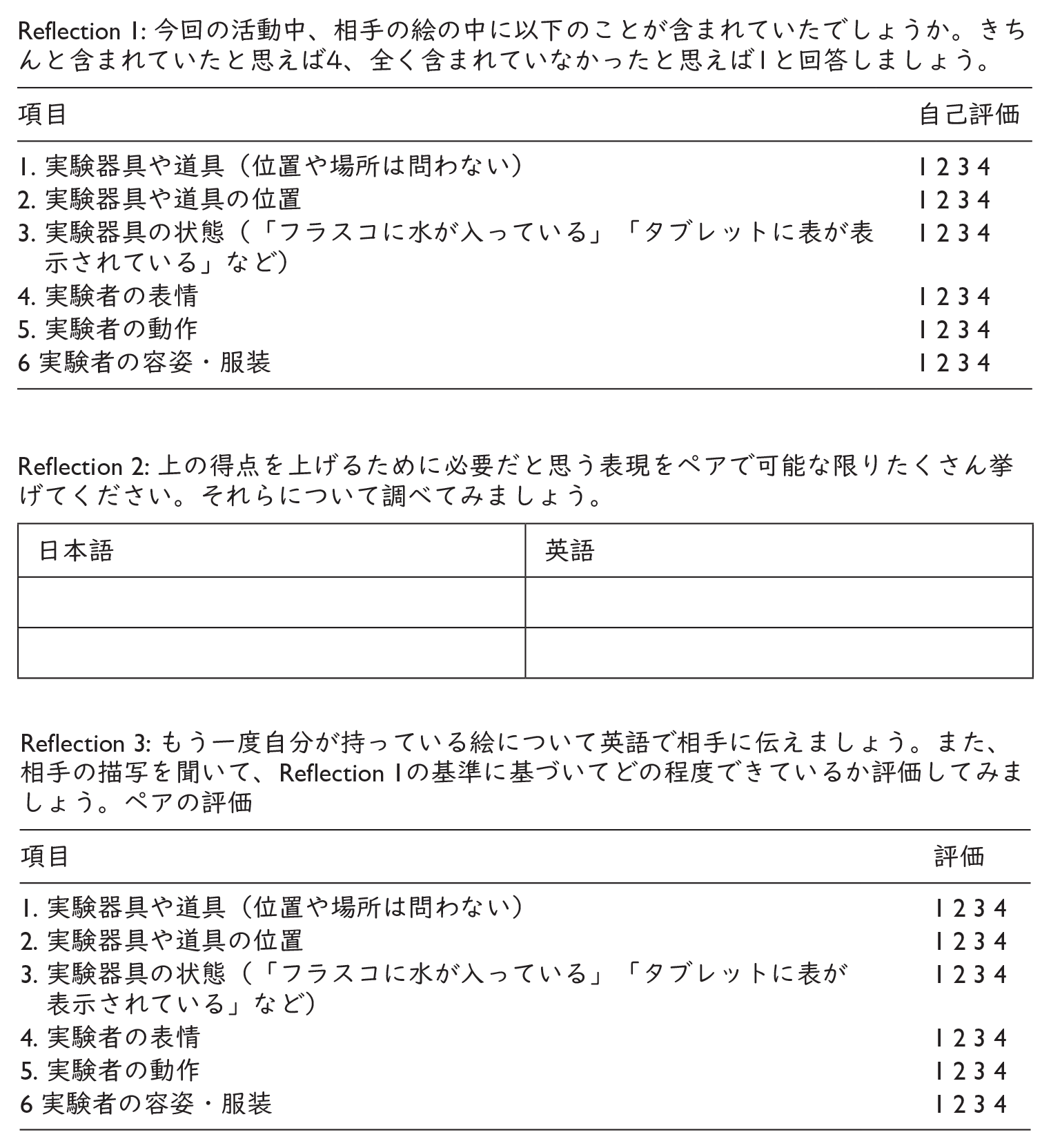

For the post-task, the students reflected on their performance using a self-reflection sheet (see Appendix D). To evaluate the extent to which they achieved the expected task outcomes, they rated their task completion on a four-point Likert scale for each criterion (e.g. whether the partner’s picture accurately represented the apparatus’s position or condition). Then, to address the linguistic challenges encountered during the main task, the students consulted external resources and listed helpful words, phrases, and sentences, some of which were shared with the entire class in the subsequent lesson. Finally, to practice speaking the language they examined, the students repeated the main task with the same partner and the same pictures and rated their performance again.

b Main lessons

The pre-task employed the same matching activity as in the preparatory lessons but with different vocabulary. In the main task, the students were instructed to write a laboratory report with their partner as if they had conducted the experiment. They were required to write it in English but were not told which language (L1 or L2) they should use for discussions. Dictionaries and machine translations were prohibited. The students were given 35 minutes to complete the main task.

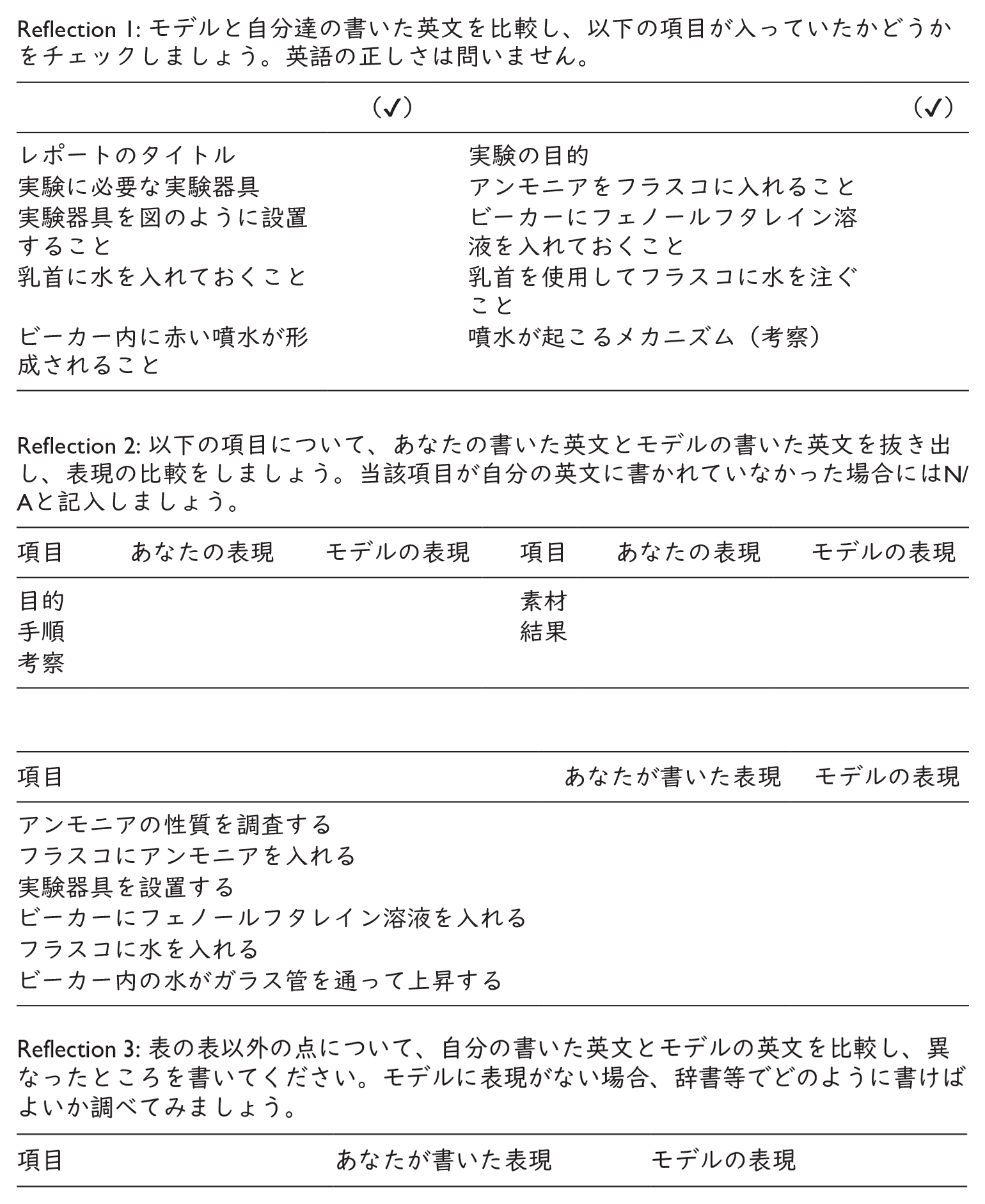

In the post-task, the students received a self-reflection sheet (see Appendix E) and a model report to review the main task (see Appendix F). First, they checked whether their writing products included the items in the checklist (e.g. a title, goals, materials, key experimental procedures, results, and discussion, which are essential components for a laboratory report, aligning with the predicted task outcomes) and marked them accordingly. Next, they compared key expressions from the model and their work. Then, they listed words, phrases, and sentences using external resources. During this phase, the teacher did not assess the students’ task outcomes, allowing them to focus on linguistic elements through comparison with the model.

5 Data collection

a Pre- and post-test

The pre-test and post-test required students to write procedures for a science experiment individually based on the illustration in as much detail as possible within 25 minutes. While the test was similar to the collaborative writing tasks, it featured a different topic (i.e. ethanol distillation). This topic was chosen because the students were familiar with it from prior learning, and it aligned with learning objectives by requiring them to organize and describe a multi-step process for the experiment. Four pictures and some essential keywords for describing the experiment (e.g. ethanol, boiling stone, and seventy-eight degrees) were presented on the test sheet to activate relevant schemas (see Appendix G). The same test was conducted in Week 1 and Week 13.

b Writing products and self-reflection sheets

The writing products from the collaborative writing lessons and self-reflection sheets were collected at the end of each lesson. They were used to examine whether the students achieved the intended task outcomes. Regarding the self-reflection sheets, students’ ratings of task completion were used as data.

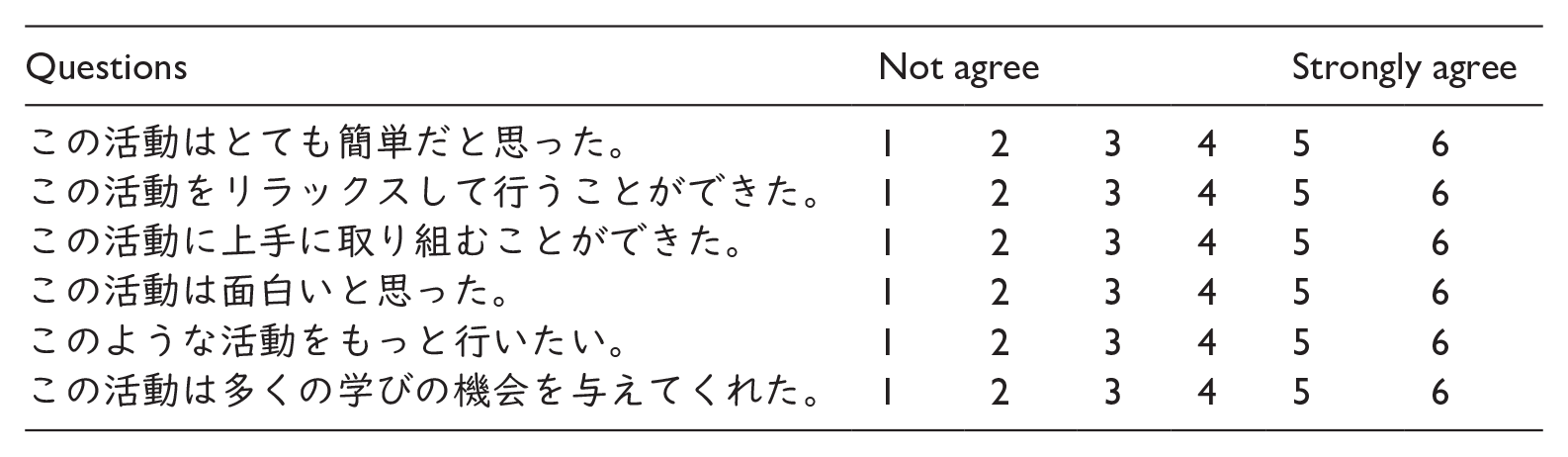

c Post-task questionnaire

A post-task questionnaire was designed to capture students’ perceptions of each task. It comprised six closed-ended and five open-ended questions developed for a larger-scale research project. For the purposes of this study, we focus only on the six closed-ended questions, which were adapted from Robinson (2001) and Kim (2009) and asked about students’ perceptions of task difficulty, level of stress, perceived ability to complete the task, interest in the task, motivation to perform the task, and learning opportunities (see Appendix H). These questions were suitable for capturing students’ perspectives from multiple viewpoints, covering a broad range of perceptions about the tasks. Furthermore, the limited number of questions allowed the students to answer them within five minutes. A six-point Likert scale was used to eliminate neutral responses. The students completed the questionnaire at the end of each lesson, resulting in six sets of response data.

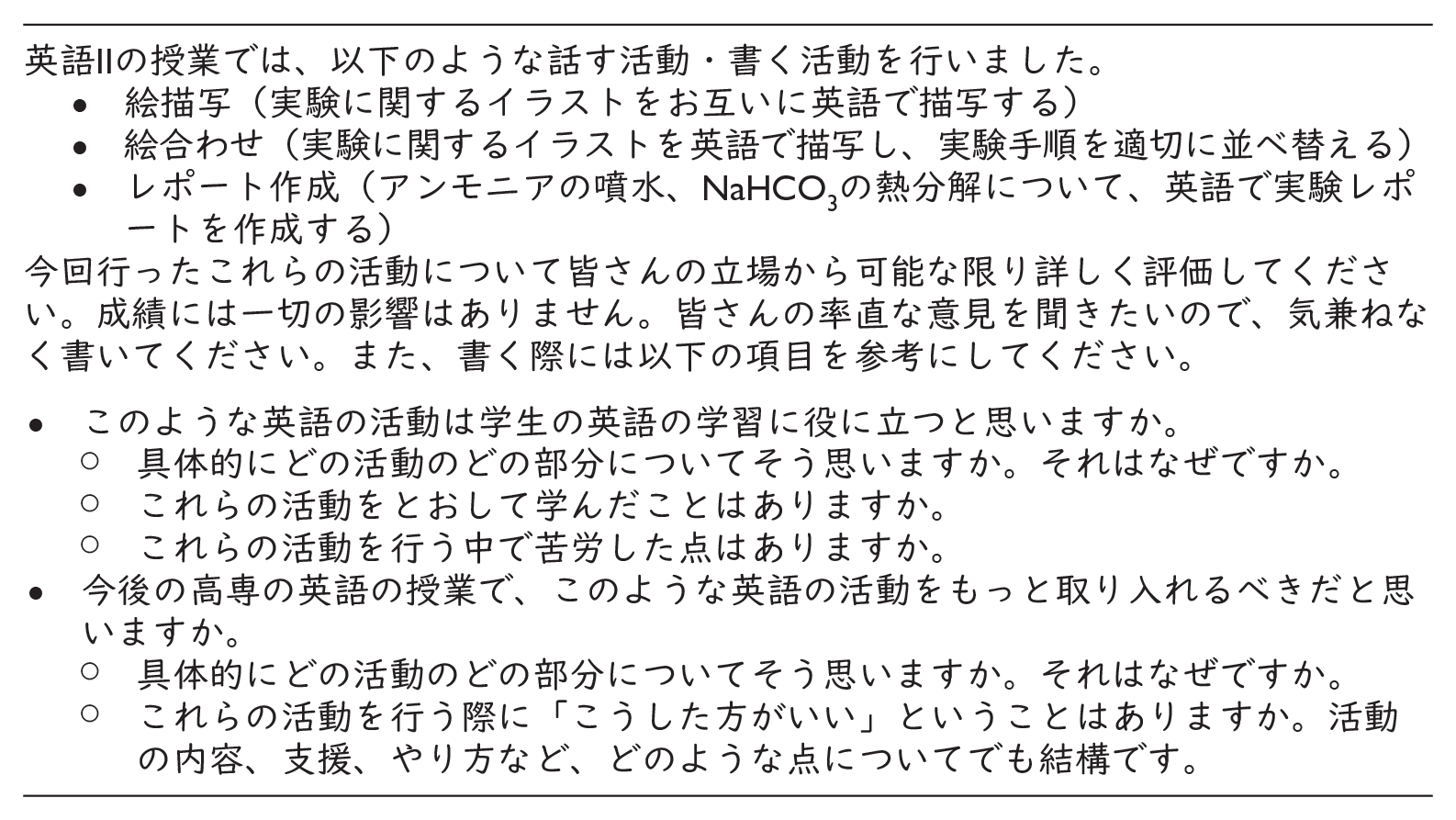

d End-of-course questionnaire

The end-of-course questionnaire was conducted to gather information about students’ perceptions of the entire TBLT course. It asked the students whether the tasks used in the lessons were valuable to them and whether they thought the tasks should be incorporated into their future English classes. The questionnaire consisted of two open-ended main questions, each accompanied by three sub-questions (see Appendix I). A short group discussion session was held before students individually completed the questionnaire. This discussion aimed to help students consider the questions in detail, organize their thoughts, and broaden their perspectives on the course. In groups of four or five, students brainstormed and freely shared their thoughts on the questions for approximately 10 minutes before returning to their seats to complete the questionnaire individually.

6 Data analysis

The students’ handwritten texts on the worksheets were electronically transcribed for analysis. The datasets used to evaluate each aspect are summarized in Table 3, followed by a detailed description of the data analysis procedures.

Table 3. Data sets for the three aspects of evaluations.

a Response-based evaluation

The data obtained from the self-reflection sheets, which the students completed in the post-task phase of the collaborative writing lessons, were analysed to measure their perceived task outcomes. The number of items marked on the checklist was counted as the self-evaluation score (one point for each item, with a maximum score of 10 points; see Reflection 1 in Appendix E), and the scores in the first and second cycles were compared. Data from students who missed one or both lessons were excluded from the analysis, leaving data from 101 students. Descriptive statistics for each cycle were calculated with 95% confidence intervals (CIs), and a Wilcoxon signed-rank test was conducted, as normality was not assumed. Effect size (r) was also calculated and interpreted as small if r was close to .25, medium if r was close to .40, and large if r was close to .60 (Plonsky & Oswald, 2014).
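To make the comparison of paired self-evaluation scores concrete, the following is a minimal sketch of a Wilcoxon signed-rank test using the normal approximation with a tie correction. It is not the software used in the study. Two choices are assumptions introduced here for illustration: zero differences are dropped before ranking, and the effect size is computed as r = |z| / √N with N taken as the number of pairs. The function name and sample scores are hypothetical.

```python
import math
from statistics import NormalDist


def wilcoxon_signed_rank(cycle1, cycle2):
    """Return (W, z, two-sided p, r) for paired scores (normal approximation)."""
    # Assumption: pairs with a zero difference are discarded before ranking.
    diffs = [b - a for a, b in zip(cycle1, cycle2) if b != a]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")

    # Rank absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    tie_term = 0.0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        t = j - i + 1
        tie_term += t**3 - t
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    w = min(w_plus, w_minus)

    mean_w = n * (n + 1) / 4
    sd_w = math.sqrt(n * (n + 1) * (2 * n + 1) / 24 - tie_term / 48)
    z = (w - mean_w) / sd_w
    p = 2 * NormalDist().cdf(-abs(z))
    # Assumption: N is the number of pairs; some authors use the number of observations.
    r = abs(z) / math.sqrt(len(cycle1))
    return w, z, p, r


# Hypothetical checklist scores (0-10) for eight students in two cycles.
scores_cycle1 = [1, 2, 3, 4, 5, 6, 7, 8]
scores_cycle2 = [2, 4, 6, 8, 10, 12, 14, 16]
w, z, p, r = wilcoxon_signed_rank(scores_cycle1, scores_cycle2)
print(f"W = {w:.2f}, z = {z:.3f}, p = {p:.4f}, r = {r:.2f}")
```

For small samples an exact test is generally preferable to the normal approximation; the approximation is used here only to keep the sketch free of external dependencies.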

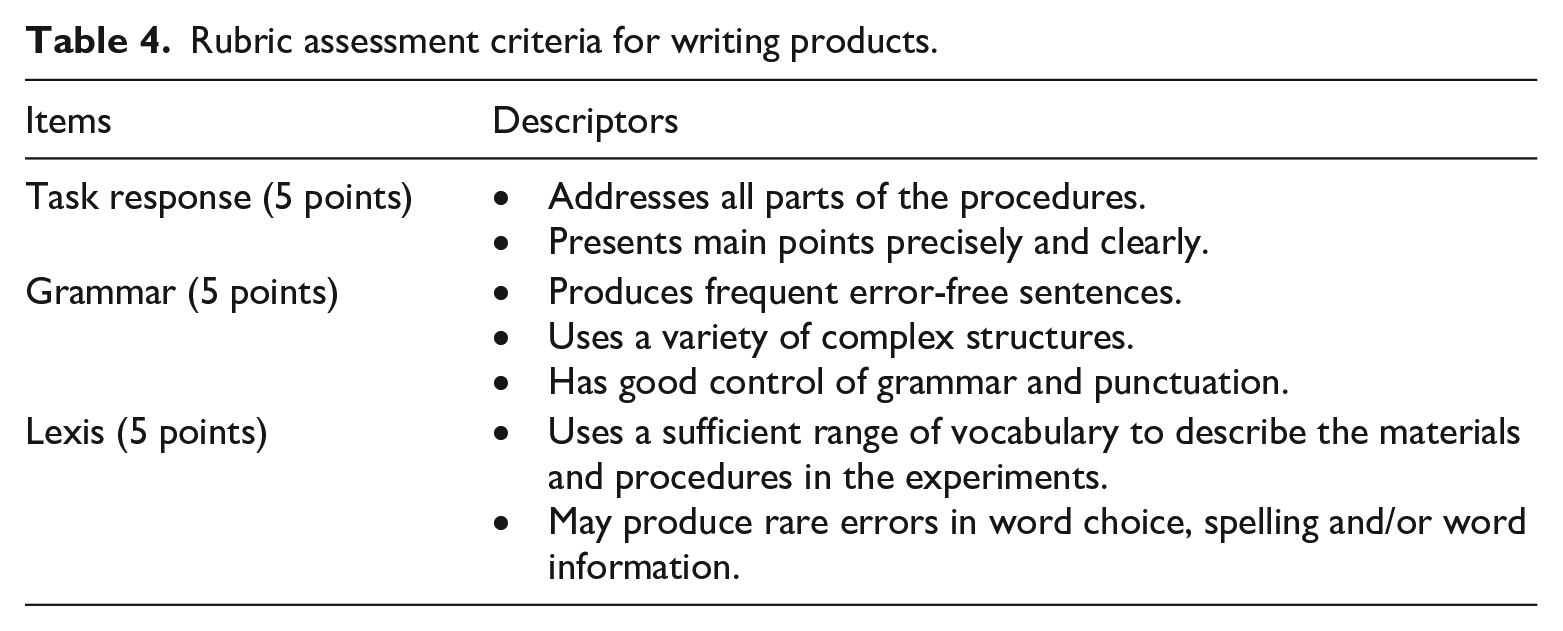

The writing products were scored based on the rubric presented in Table 4. The rubric was designed by the first author, adapting descriptors from the International English Language Testing System (IELTS), which include task achievement, coherence and cohesion, lexical resource, and grammatical range and accuracy. Considering the nature of the collaborative writing task and students’ proficiency levels, coherence and cohesion were excluded, and the descriptors were adjusted to focus more specifically on task response, grammar, and lexis to measure the predicted outcomes from both content and linguistic perspectives. The writing products were rated on a five-point scale by a teacher with over ten years of teaching experience at KOSEN. To ensure consistency in the evaluation process, the first author and the rater discussed the expected proficiency level for the students based on the teaching materials employed in the study, and the rater applied the agreed-upon criteria to rate the writing products.

Table 4. Rubric assessment criteria for writing products.

Descriptive statistics for each cycle and the effect size (d) for the difference between the cycles were calculated. Analyses using inferential statistics were not conducted, as the students took the lessons with different partners due to changes in classroom seating, making within-participant comparisons difficult. Approximately 10% of the writing products were also rated by the first author, and inter-rater reliability was calculated. The weighted kappa values were 0.84 for task response, 0.77 for grammar, and 0.72 for lexis, indicating almost perfect agreement in task response and substantial agreement in grammar and lexis.
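Weighted kappa for two raters using an ordinal rubric can be sketched as follows. The text does not state whether linear or quadratic weights were applied, so the weighting scheme is exposed as a parameter and the linear default is an assumption. The function name and sample ratings are hypothetical.

```python
def weighted_kappa(rater_a, rater_b, categories, weighting="linear"):
    """Cohen's weighted kappa for two raters on an ordered scale."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same non-empty set of items")
    k = len(categories)
    index = {category: i for i, category in enumerate(categories)}
    n = len(rater_a)

    # Joint proportions of (rater A category, rater B category).
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(rater_a, rater_b):
        observed[index[a]][index[b]] += 1 / n
    row = [sum(observed[i]) for i in range(k)]
    col = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    # Disagreement weights: 0 on the diagonal, 1 for the most distant categories.
    power = 1 if weighting == "linear" else 2
    disagreement_observed = 0.0
    disagreement_expected = 0.0
    for i in range(k):
        for j in range(k):
            weight = (abs(i - j) / (k - 1)) ** power
            disagreement_observed += weight * observed[i][j]
            disagreement_expected += weight * row[i] * col[j]
    return 1 - disagreement_observed / disagreement_expected


# Hypothetical ratings on the five-point rubric scale for six writing products.
scale = [1, 2, 3, 4, 5]
first_rater = [1, 2, 3, 4, 5, 3]
second_rater = [1, 2, 3, 4, 4, 3]
print(round(weighted_kappa(first_rater, second_rater, scale), 3))
```

Because weighted kappa penalizes a disagreement in proportion to its distance on the scale, a one-point discrepancy lowers the coefficient less than a four-point discrepancy would.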

b Learning-based evaluation

The pre- and post-tests were analysed to examine changes in students’ writing skills throughout the course. Data from students who had missed either or both tests were excluded from the analysis, leaving data from 117 students.

The written texts were analysed for syntactic complexity, lexical complexity, accuracy, and fluency. Syntactic complexity was measured by the mean length of T-unit, clauses per T-unit, and mean length of clause. These metrics were chosen based on Norris and Ortega’s (2009) recommendation to use diverse measures for assessing syntactic complexity, reflecting its multidimensional nature. The mean length of T-unit, clauses per T-unit, and mean length of clause measure overall complexity, subordination, and phrasal complexity, respectively, ensuring comprehensive coverage of syntactic complexity. Lexical complexity was measured using the measure of textual lexical diversity (MTLD; McCarthy & Jarvis, 2010). This index was chosen because it is less sensitive to text length in short L2 texts than traditional indices, such as the type-token ratio and Guiraud’s index (Koizumi & In’nami, 2012; Zenker & Kyle, 2021). MTLD was calculated using the Lexical Diversity Measurements (Reuneker, 2017).
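The three syntactic complexity ratios follow directly from counts of words, T-units, and clauses. The sketch below assumes those counts have already been obtained by manual coding; the function name and example counts are hypothetical.

```python
def syntactic_complexity(words, t_units, clauses):
    """Compute the three ratios from hand-coded counts for one text."""
    if t_units <= 0 or clauses <= 0:
        raise ValueError("t_units and clauses must be positive")
    return {
        # Overall complexity: words per T-unit.
        "mean_length_of_t_unit": words / t_units,
        # Subordination: clauses per T-unit.
        "clauses_per_t_unit": clauses / t_units,
        # Phrasal complexity: words per clause.
        "mean_length_of_clause": words / clauses,
    }


# Hypothetical counts for a single test script.
example = syntactic_complexity(words=60, t_units=8, clauses=10)
print(example)
```

The three values are linked arithmetically: mean length of T-unit equals clauses per T-unit multiplied by mean length of clause, so a change in overall complexity can be traced to subordination, phrasal elaboration, or both.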

Accuracy was quantified by calculating the percentage of error-free clauses, accounting for all syntactic and morphological errors except article errors. This exclusion was necessary due to the high prevalence of article errors among Japanese learners at this proficiency level, stemming from the linguistic distance between Japanese and English (Shirahata, 1988). Indeed, data analysis revealed that many learners frequently omitted articles in their writing (e.g. ‘The test tube is in beaker’ instead of ‘The test tube is in a beaker’). The high frequency of such errors would lower overall accuracy scores, potentially obscuring the assessment of other grammatical features. Orthographic errors were also disregarded, as errors were operationally defined as strictly grammatical deviations for the purposes of this study.
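The accuracy measure can be illustrated with a small sketch in which each clause carries a list of error tags assigned during coding. The tag labels (`article`, `spelling`, and so on) and the function name are hypothetical; only the exclusion of article and orthographic errors comes from the description above.

```python
# Error types that do not count against a clause, per the operational definition above.
EXCLUDED_ERRORS = frozenset({"article", "spelling"})


def percent_error_free_clauses(clause_errors, excluded=EXCLUDED_ERRORS):
    """Percentage of clauses with no counted (non-excluded) errors."""
    if not clause_errors:
        raise ValueError("at least one clause is required")
    error_free = sum(
        1
        for errors in clause_errors
        if not any(tag not in excluded for tag in errors)
    )
    return 100 * error_free / len(clause_errors)


# Hypothetical coding of five clauses from one script.
coded_clauses = [[], ["article"], ["tense"], ["spelling"], ["agreement", "article"]]
accuracy = percent_error_free_clauses(coded_clauses)
print(accuracy)
```

In this example, the clauses containing only an article or spelling error still count as error-free, so three of the five clauses are error-free.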

Fluency was operationalized as the amount of language students can produce within a given time (Kim et al., 2022). It was measured by counting the number of words per minute following previous studies (Kim et al., 2020; Zhang, 2018). Fluency can be measured in various ways, such as breakdown, repair, and speed fluency (Skehan, 2009; Tavakoli & Skehan, 2005). Speed fluency was chosen in this study because the nature of the writing tasks and test conditions (i.e. testing all the students simultaneously) made it difficult to measure the real-time responses required to evaluate breakdown and repair fluency. In this context, the number of words per minute provides a reliable measure of speed fluency.
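As a minimal illustration of the speed fluency measure, the sketch below divides a word count by the time allowed. Two points are assumptions made here: words are counted by splitting on whitespace, and the divisor is the 25 minutes allotted for the test rather than each student's actual writing time.

```python
TEST_MINUTES = 25  # time limit stated for the pre- and post-test


def words_per_minute(text, minutes=TEST_MINUTES):
    """Words per minute, counting whitespace-separated tokens (an assumption)."""
    if minutes <= 0:
        raise ValueError("minutes must be positive")
    return len(text.split()) / minutes


sample = "The test tube is in a beaker"
print(words_per_minute(sample))
```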

The tests were further evaluated using the rubric created for the collaborative writing tasks (see Table 4). The same teacher who assessed the writing products in the collaborative writing tasks also rated the tests on the five-point rubric scale. To ensure consistency, the first author and the rater discussed the goal of the tests and the expected achievement level prior to the evaluation.

All scores were statistically analysed to examine the differences between the pre- and post-tests. Descriptive statistics, effect size (r), and 95% CIs were calculated. While paired-sample t-tests were conducted to analyse accuracy and fluency, Wilcoxon signed-rank tests were conducted for complexity and all the rubric scores, as normality was not assumed.
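To illustrate the nonparametric comparison, the following sketch computes a Wilcoxon signed-rank statistic with a normal approximation and converts it to an effect size using r = |Z| / √N. The function name, the use of the number of non-zero pairs as N, and the omission of a tie correction to the variance are assumptions made for illustration; the statistical software used in the study may differ on each point.

```python
import math


def wilcoxon_signed_rank(pre, post):
    """Return (W, Z, r) for paired scores using a normal approximation."""
    # Zero differences are dropped, as in the classic procedure.
    diffs = [b - a for a, b in zip(pre, post) if b != a]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")

    # Rank absolute differences, averaging ranks across ties.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)

    # Normal approximation (no tie correction in this sketch).
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean) / sd
    effect_r = abs(z) / math.sqrt(n)
    return min(w_plus, w_minus), z, effect_r


# Hypothetical pre- and post-test scores for five students.
w, z, r = wilcoxon_signed_rank([1, 2, 3, 4, 5], [2, 4, 6, 8, 10])
```

With very small samples the normal approximation is rough, so the example data serve only to show the mechanics.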

c Student-based evaluation

The post-task questionnaire from the 101 students who attended all the lessons was analysed for this evaluation. Descriptive statistics for the six closed questions were calculated with 95% CIs. The end-of-course questionnaire from 119 students was analysed using thematic analysis. The analysis followed the procedures proposed by Braun and Clarke (2006): (1) reading the data multiple times to gain familiarity, (2) generating initial codes, (3) searching for themes based on the generated codes, (4) reviewing themes by re-reading themes, codes, and data to ensure they properly represent the data, (5) defining and naming themes based on their relevance to the research question, and (6) producing the report. The first author worked on the entire data analysis, and the second author checked it. When there were any disagreements, we discussed them until reaching an agreement.
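The descriptive statistics with 95% CIs for a closed questionnaire item might be obtained along the following lines. This sketch uses a normal approximation for the interval, which is close to a t-based interval at the sample size reported here; the function name and the example scores are hypothetical.

```python
import math
import statistics


def mean_with_ci(scores, confidence=0.95):
    """Return (mean, lower, upper) using a normal-approximation interval."""
    n = len(scores)
    mean = statistics.fmean(scores)
    standard_error = statistics.stdev(scores) / math.sqrt(n)
    # Two-sided critical value from the standard normal distribution.
    z = statistics.NormalDist().inv_cdf(0.5 + confidence / 2)
    return mean, mean - z * standard_error, mean + z * standard_error


# Hypothetical responses to one six-point item.
mean, lower, upper = mean_with_ci([4, 5, 6, 5, 4, 6])
```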

V Findings

Research question 1: Response-based evaluation

Research question 1 examined the extent to which low-proficiency learners complete collaborative writing tasks. To address this question, the post-task self-reflection sheets that captured students’ perceived task completion and their writing products from the collaborative writing lessons were analysed.

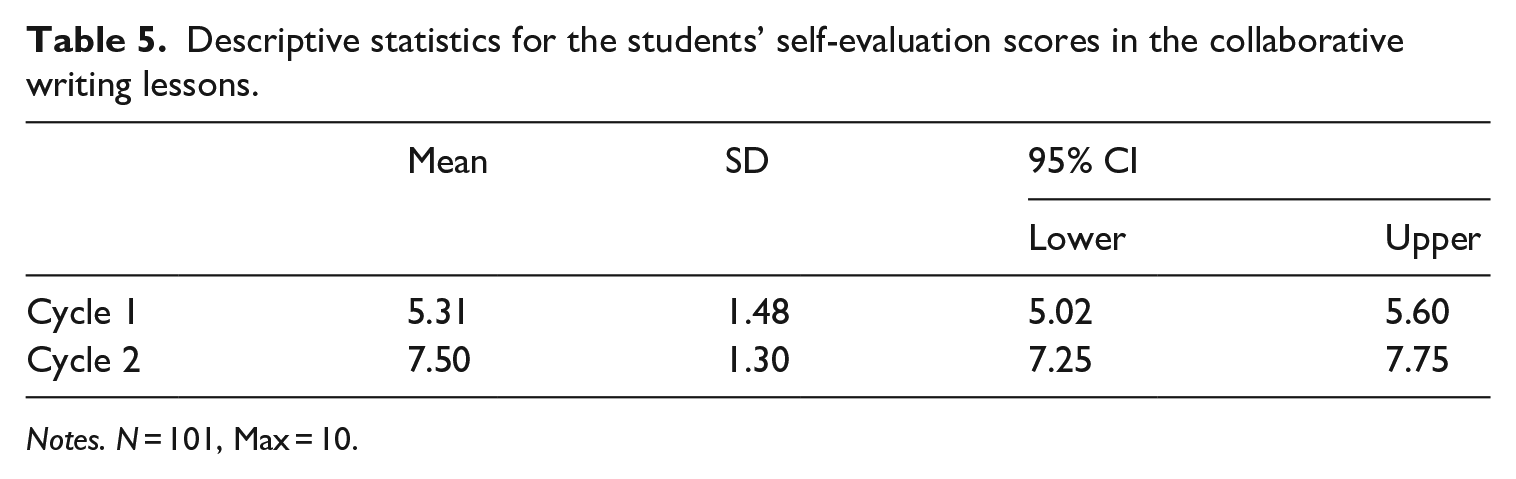

Table 5 shows the descriptive statistics for the students’ self-evaluation scores in the collaborative writing lessons. A Wilcoxon signed-rank test revealed that the difference between the two cycles was statistically significant (W = 308.00, p < .01), with a large effect size (r = .73). The results indicate that students’ perceived achievement was lower in Cycle 1 and improved in Cycle 2.

Table 5. Descriptive statistics for the students’ self-evaluation scores in the collaborative writing lessons.

Notes. N = 101, Max = 10.

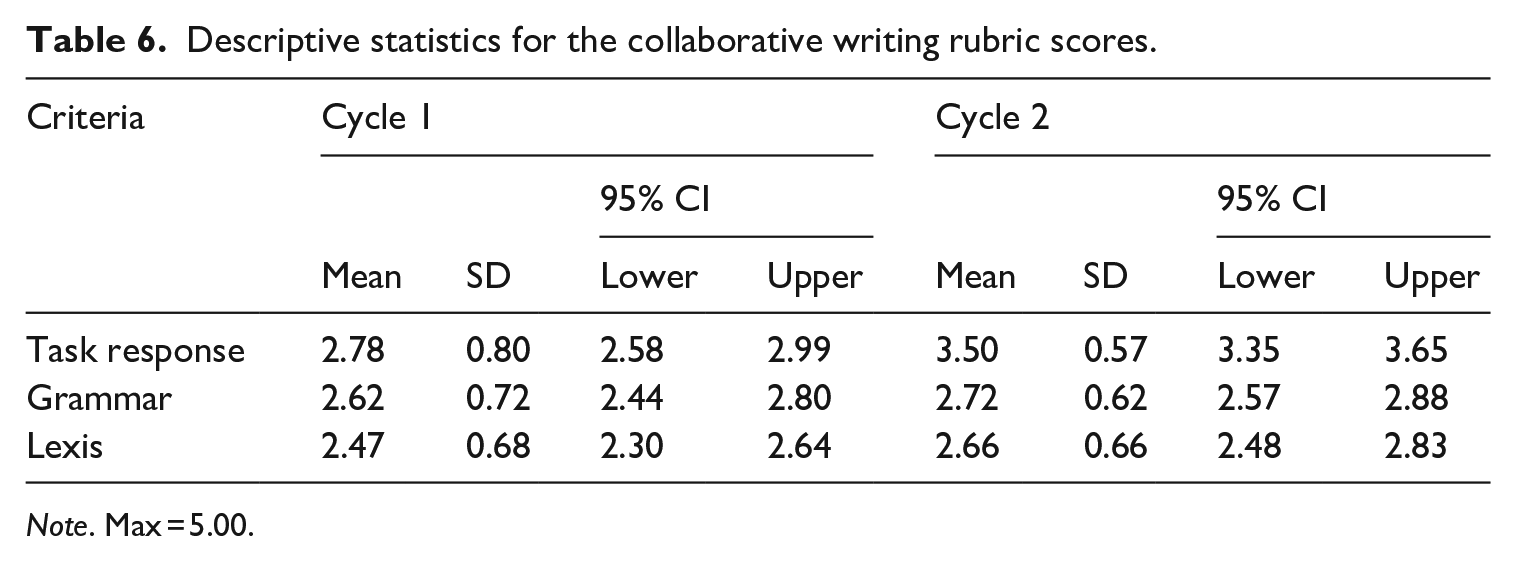

Table 6 shows the rubric scores for the writing products. The effect sizes of the improvement from Cycle 1 to Cycle 2 were medium for the task response (d = 1.04), and marginal for the grammar and lexis (d = .15 and d = .28, respectively). The results suggest that while learners’ writing skills improved in terms of organization and content after experiencing TBLT, their linguistic aspects showed little improvement.

Table 6. Descriptive statistics for the collaborative writing rubric scores.

Note. Max = 5.00.

The response-based evaluation of the writing outcomes showed improvement in performance from Cycle 1 to Cycle 2. The perceived successful task completion rate increased from 53.1% in Cycle 1 to 75% in Cycle 2. The rubric assessment revealed that the quality of students’ writing products improved in terms of task response, suggesting that the students made considerable efforts to produce higher-quality task outcomes over the course of the two instructional cycles. The post-task model report comparison activity in Cycle 1 appeared to help learners deepen their understanding of organizational structure and content requirements, resulting in improved task response and an increase in perceived task completion. However, a focus on the organization and content of the report may have diverted learners’ attention from linguistic aspects, potentially leading to only marginal improvement in linguistic features. Previous research suggests that when feedback addresses multiple language errors, learners tend to benefit more from feedback on salient linguistic items that play a crucial role in conveying meaning compared to less salient items (Shintani et al., 2014). Additional support to focus on linguistic forms, such as corrective feedback to individual work, may have helped learners notice linguistic forms in the collaborative writing lessons.

Furthermore, the seven-week interval between the cycles may have influenced the results. Given this extended gap, the awareness of linguistic forms that the learners gained in the first cycle, if any, might not have been retained. The results might differ if the syllabus was structured so that the same task was implemented in consecutive weeks (e.g. the jigsaw lessons in Week 4 and Week 5 and the collaborative writing lessons in Week 6 and Week 7).

Research question 2: Learning-based evaluation

Research question 2 examined whether the students developed their language skills through the course. The analyses involved comparisons of the pre- and post-test data using (1) measures of complexity, accuracy, and fluency and (2) a rubric-based assessment. Table 7 presents the descriptive statistics for complexity, accuracy, and fluency. Wilcoxon signed-rank tests revealed significant increases from the pre-test to the post-test in two measures of syntactic complexity, with large effect sizes (mean length of T-unit, W = 667.50, p < .01, r = .69; mean length of clause, W = 650.50, p < .01, r = .70). However, no significant differences were found between the tests in terms of clauses per T-unit and lexical complexity, both showing marginal effect sizes (clauses per T-unit, W = 2,214.00, p = .05, r = .18; lexical complexity, W = 3,587.00, p > .05, r = .03). Paired-sample t-tests showed no significant difference in accuracy, with a marginal effect size (t = −1.822, p > .05, r = .17), but a significant improvement in fluency, with a large effect size (t = −17.65, p < .01, r = .85). These results suggest that, after completing two cycles of TBLs, the students improved their ability to write scientific reports with greater syntactic complexity and fluency, while maintaining similar levels of accuracy.
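The effect sizes reported for the t-tests are consistent with the common conversion r = √(t² / (t² + df)). The sketch below applies that conversion, assuming df = N − 1 = 116 on the basis of the sample size given in the notes to Table 7; whether the authors used exactly this formula is an assumption.

```python
import math


def r_from_t(t, df):
    """Convert a t statistic to the effect size r (assumed conversion)."""
    return math.sqrt(t ** 2 / (t ** 2 + df))


# t values as reported in the text; df assumes N = 117 paired observations.
r_accuracy = round(r_from_t(-1.822, 116), 2)  # 0.17
r_fluency = round(r_from_t(-17.65, 116), 2)   # 0.85
```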

Table 7. Descriptive statistics for complexity, accuracy, and fluency in the pre- and post-tests.

Notes. N = 117, MTLD = the measure of textual lexical diversity.

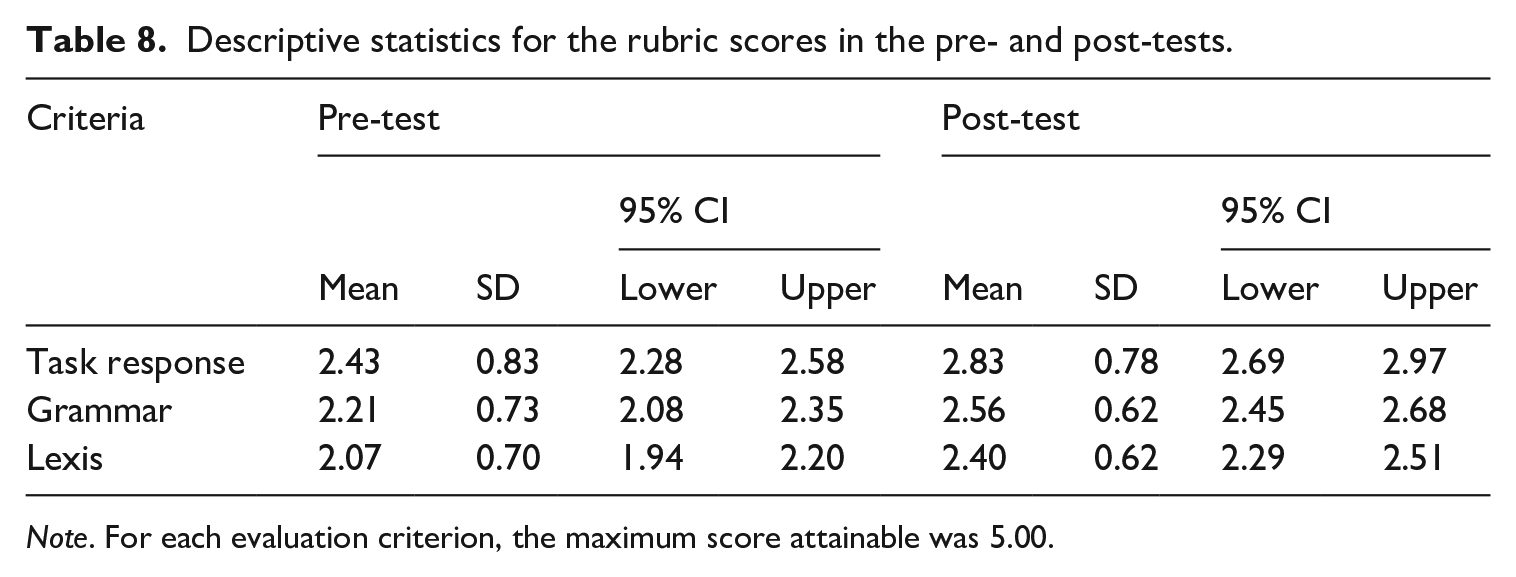

Table 8 presents the descriptive statistics for the rubric scores. Wilcoxon signed-rank tests showed a significant difference between the tests across all criteria, with effect sizes close to medium for task response and small for grammar and lexis (task response: W = 433.50, p < .01, r = .38; grammar: W = 601.50, p < .01, r = .35; lexis: W = 506.50, p < .01, r = .34). These results indicate that while the quality of the students’ writing improved to some extent in terms of achieving task outcomes, the improvements in linguistic aspects remained modest.

Table 8. Descriptive statistics for the rubric scores in the pre- and post-tests.

Note. For each evaluation criterion, the maximum score attainable was 5.00.

The results showed that the quality of students’ writing performance improved from the pre-test to the post-test over the TBLT course. The general text analyses suggest that the students’ writing improved in syntactic complexity and fluency. The rubric assessment indicates that some learning occurred in terms of new linguistic knowledge, greater control over existing linguistic resources, and discourse skills, as the quality of products improved across all criteria. These results align with other macro-evaluation studies that demonstrated the effectiveness of TBLT from a learning-based perspective (González-Lloret & Nielson, 2015; Nielson, 2014; Shintani, 2012).

The results, however, do not indicate notable improvement in writing accuracy through the TBLT course. The difference between the pre- and post-test was not statistically significant, with a marginal effect size. One possible explanation is the nature of the test. The test required students to write part of a laboratory report. As the students lacked sufficient experience in writing laboratory reports in English, they may have focused more on organization and content than on grammatical accuracy. Consequently, the test might not have accurately elicited the students’ linguistic knowledge. Discrete-point tests, such as vocabulary recall tests and grammaticality judgment tests, might have been more effective in capturing improvement in students’ linguistic knowledge gained from the course.

Nonetheless, the notable improvement in fluency and complexity, rather than accuracy, is a positive outcome, as it aligns with the objectives of TBLT and with broader language learning goals. This finding suggests that the students were able to produce a greater quantity of L2 output and take risks by making mistakes (Skehan, 1998). Japanese learners tend to minimize L2 production during TBLs due to shyness, fear of making mistakes, and concerns about negative evaluations (Wicking, 2009). However, the findings suggest that the TBLT course helped students shift their perspective, encouraging them to view language as a tool for communication rather than solely as an academic subject. This shift promoted active L2 use, supporting language learning goals focused on meaningful communication.

Research question 3: Student-based evaluation

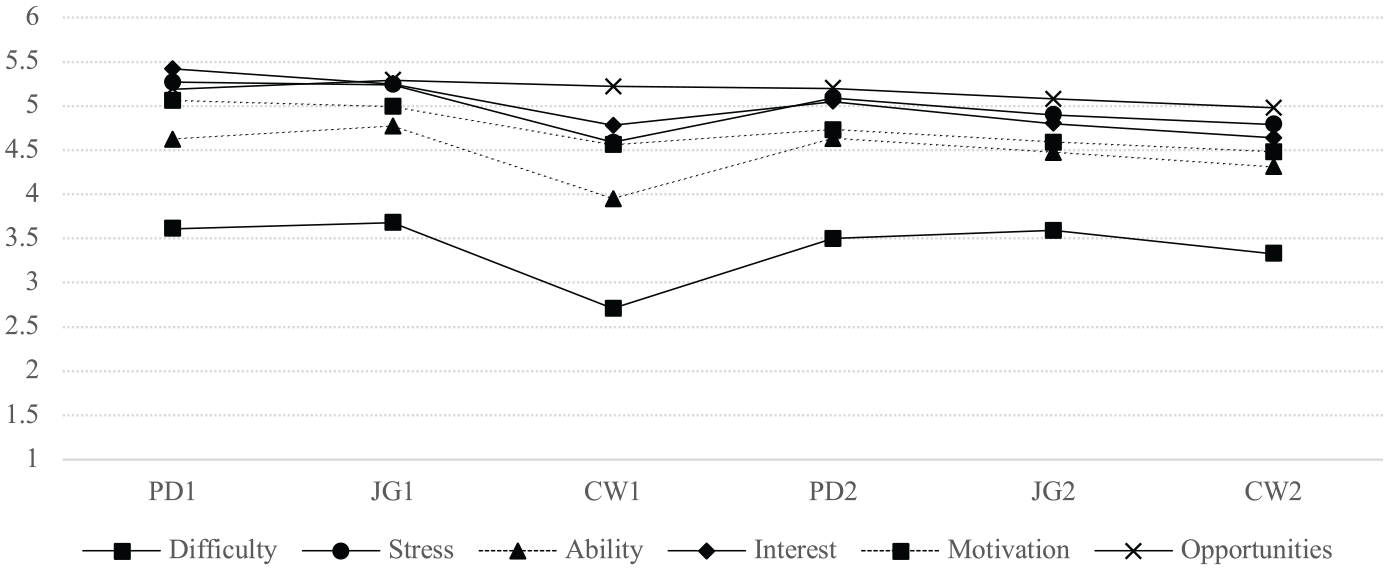

Research question 3 explored students’ perceptions of the TBLT course. The six closed-ended questions in the post-task questionnaire and the open-ended questions in the end-of-course questionnaire were analysed. Table 9 shows the descriptive statistics for the six items in the post-task questionnaire, which captured the students’ perceptions of the tasks.

Table 9. Descriptive statistics for the perceptions of each task.

Note. Max = 6.

Figure 1 illustrates the trends for each question over time. The data suggest that the students’ ratings of relaxation, interest, motivation, and perceived opportunities for learning remained consistently high across the six lessons, indicating that they held positive perceptions of the tasks from diverse perspectives and that these perceptions were maintained throughout the course. Conversely, their ratings of task ease were relatively low, indicating that they found the tasks challenging. Specifically, the collaborative writing tasks were perceived as more difficult than the two oral tasks.

Figure 1. Trends in students’ responses to each task.

The thematic analysis of the end-of-course questionnaire identified two main themes: students’ perceptions of the oral tasks and their perceptions of the collaborative writing tasks. Five subthemes emerged for the oral tasks: enhancing vocabulary and communication skills, providing opportunities for oral communication, increased engagement and enjoyment, perceived difficulties in the oral tasks, and pairing issues. For the collaborative writing tasks, two subthemes were identified: enhancing writing skills and real-world communication, and the need for teacher feedback. Each theme is described in detail below.

Regarding the oral tasks, many students reported that the TBLT course helped consolidate their vocabulary knowledge through its use in communication. For example, one student (E27) found the picture-drawing tasks and their pre-task activities (i.e. picture-matching activities) helpful because they ‘can acquire communicative abilities and different words’. The student also stated, ‘The lesson offered a good learning experience in learning scientific terms, expressions of explaining locations, and various daily verbs.’

Some students appreciated the two oral tasks because they provided valuable opportunities for oral communication. They noted that such opportunities were rare in textbook-based lessons or outside the classroom. For example, one student (I12) found picture-drawing tasks beneficial for acquiring English skills, stating, ‘Communicative skills and listening skills were difficult to acquire in regular classes.’ Another student appreciated the oral tasks for offering opportunities to practice L2 production, which is rarely available in the students’ learning environment: ‘I think it is good for me to work on English practice that requires us to generate English from scratch, such as daily conversation (not grammar practice conducted in class, etc.), because I have few opportunities to experience such conversations. I want you to keep offering it because it’s very good for me’ (C3).

One student (I13) mentioned that the lessons did not make him sleepy, suggesting that TBLs, compared to regular textbook-based lessons, created a sense of excitement for the students: ‘I can enjoy and concentrate [on TBLs] without getting sleepy.’ These comments indicate that students appreciated and enjoyed the opportunities for oral communication in L2. They particularly valued the practical content provided by the tasks, such as vocabulary relevant to specific fields.

However, several students reported challenges with the oral tasks. Some noted that the oral tasks were too difficult for low-proficiency students; for example, one student wrote, ‘I am not good at English. If my partner is not good at English either, we cannot work on [TBLs] well, so we should pair up with international students. It is difficult to work on [TBLs] between people who are not good at English’ (E14).

Other students expressed concerns that the oral tasks might not have effectively improved their English abilities because low-proficiency learners could achieve the task goals without using English. For example, one student (C30) stated that she ‘made little attempt to communicate in [English] sentences’, as she could work on the tasks with non-verbal communication, such as facial expressions and gestures. Another student reported that low-proficiency learners struggled to conduct tasks in their L2 due to difficulties with L2 production: ‘When the students conduct a picture description activity, those who are good at English can produce English sentences once they see a picture. In contrast, students who are not good at English cannot produce English sentences. As a result, they will conduct a task with words and gestures . . . their English proficiency will not improve compared with those who are good at English. So, the difference in English proficiency between them becomes bigger’ (E2).

Some students reported pairing issues. For example, one student (E11) stated, ‘There was a case where I could not proceed with the activities because my partner didn’t say anything to me even though I tried to talk to my partner.’ Another student expressed difficulties working on the oral tasks with unfamiliar partners: ‘In the lessons, I needed to pair up with other students, but when I worked on the lessons with those who didn’t know each other well, there were sometimes strange pauses during conversation, which made me feel awkward’ (E32).

Students found the collaborative writing tasks valuable and helpful for improving their writing skills. One student (E15) wrote that the collaborative writing tasks were helpful because ‘they helped us improve our ability to compose English sentences.’ Another student (E13) felt that tasks allowed them to ‘become familiar with English by writing in my own expressions’. Students also appeared to perceive the writing tasks as having practical applications for real-world use, as one student (C13) wrote, ‘I think we should keep writing a report because it will be beneficial in the future.’

The students’ positive responses in the end-of-course questionnaire suggest that they valued the tasks for their practical usefulness in the future. These results align with findings from studies of teacher perceptions, which indicate that TBLs improve students’ linguistic skills (Harris, 2016) and promote engagement and enjoyment (Harris, 2018). The findings also suggest that students did not perceive the tasks as ‘just a fun activity’ (Foster, 1998) but rather as valuable learning materials.

However, some students highlighted that the lack of feedback for their writing outcomes was problematic. One student (I22) wrote, ‘The difficulty I felt during the task was that I had to explain [the content of the task] with incorrect expressions because I wasn’t sure if my English was grammatically correct.’ Another student expressed the need for teacher feedback due to uncertainty about the accuracy of their writing: ‘I wasn’t sure if the sentences I wrote were correct. Although I looked them up, I could not find appropriate expressions. So, it would be great if you correct my report’ (C34). Additionally, a student requested more detailed guidance to improve their writing skills: ‘It will be helpful for the future if you give us more explanation on how to write an English report or models of describing pictures at the end of the activities’ (C5).

The student-based evaluation revealed that the learners approached the tasks in a relaxed manner, found the TBLs interesting, felt motivated, and perceived them as valuable learning opportunities. Their positive perceptions remained consistent throughout the course. The end-of-course questionnaire indicated that learners appreciated both oral and writing tasks but had different perceptions. They enjoyed the picture-drawing and jigsaw tasks, viewing them as valuable opportunities for communication, as such activities are rarely included in traditional textbook-based lessons. They also perceived the oral tasks as consolidating vocabulary knowledge by requiring its use in communication. Furthermore, the collaborative writing tasks were recognized for their role in improving learners’ writing skills.

Most of these findings align with prior macro-evaluations of TBLT conducted in varied contexts (González-Lloret & Nielson, 2015; Kim et al., 2017; Lai et al., 2011; McDonough & Chaikitmongkol, 2007). These studies revealed that the learners were highly satisfied with TBLT courses (Lai et al., 2011), valued communication-oriented lessons and opportunities to learn new vocabulary (Kim et al., 2017), and recognized the real-world relevance of TBLT (González-Lloret & Nielson, 2015; McDonough & Chaikitmongkol, 2007). Aligning with these findings, the students in this study demonstrated high and consistent levels of satisfaction with TBLT throughout the course. They also perceived the tasks as worthwhile, with the oral tasks providing valuable opportunities for L2 communication and the writing tasks being viewed as useful for their future careers. Thus, it is likely that TBLT was recognized not merely as an enjoyable activity but also as a meaningful learning tool (Foster & Skehan, 1996).

The results also highlighted challenges in implementing TBLs in this context. Many students found the oral tasks too difficult, and Figure 1 indicates that the collaborative writing tasks were the most challenging. The thematic analysis revealed that learners struggled with L2 production during the preparatory lessons, particularly when paired with low-proficiency peers. A possible explanation for this difficulty is the limited input before the main tasks. The pre-task activity in this study focused on matching pictures with words. Although this activity aimed to offer learners some input for the main tasks, it was restricted to lexical aspects and lacked demonstrations or models to guide task implementation. More input-based tasks (Shintani, 2016) or guided pre-task planning (Mochizuki & Ortega, 2008) may be necessary to provide richer input.

The learners also reported challenges related to pairing issues. One possible reason is the lack of relatedness among learners. This study started in April, immediately after the class changes took place, and it is possible that many students did not know each other well. Hiromori (2003), investigating Japanese high school students’ motivation for English, reported that relatedness to others greatly affects learners’ extrinsic and intrinsic motivations. Building a good relationship with others might be vital in implementing TBLs in this context. Therefore, greater teacher support could be beneficial, such as carefully assigning partners and providing a step-by-step guide to facilitate task collaboration.

VI Conclusions

The current evaluation provided evidence that a TBLT program can be effectively implemented in ESP classrooms with low-proficiency learners in an acquisition-poor environment, challenging claims that TBLT is unsuitable for such learners or contexts (Swan, 2005). The gradual steps incorporated into each of the two cycles (i.e. providing two preparatory lessons with simple tasks to prepare for the challenging collaborative writing task) and the careful preparation of the lessons, including reflection sheets and model texts, appear to have enabled the students to improve the language skills needed to achieve the course goals. In this manner, the current evaluation reiterates the importance of the teachers’ role in TBLT. In a foreign language context, particularly for learners with low L2 proficiency, the teacher’s role extends beyond merely acting as a manager and facilitator (Swan, 2005).

This study has several limitations, particularly in data collection and analysis. One limitation concerns the response-based analysis, which was confined to task achievement (writing outcomes) and the examination of retrospective data (reflection sheets). Analysing the collaborative dialogue during tasks could provide deeper insights into processes such as whether linguistic items are noticed, how any noticed linguistic items are reflected in students’ writing, and whether evidence of learning is observed in the new writing (i.e. post-test). Another limitation pertains to the end-of-course questionnaire used in the student-based analysis, where learners brainstormed and discussed ideas before responding. While this approach was designed to activate their thoughts on the lessons, it is possible that the ideas of others influenced their perceptions.

One of the most notable limitations of this study is the absence of a control group that completed pre- and post-tests without receiving the instructional treatment. Without a control group, it is difficult to address the criticism that similar results might have been achieved through instructional methods other than the TBLs. However, including a control group was not feasible due to ethical considerations. Acknowledging this limitation, we drew on multiple data sources to conduct a comprehensive evaluation from all three perspectives, including a detailed examination of the instructional process. Moreover, we argue that this limitation is inherent to the macro-evaluation approach, as its primary goal is to assess the impact of an existing program implemented within an educational context.

Despite the above limitations, we believe that the current evaluation offers valuable insights into the potential for implementing TBLT in contexts often deemed challenging. As Long (2016) argues, the difficulties encountered should not be interpreted as evidence that TBLT is unsuitable for such contexts.

Appendix A. A sample worksheet (picture-drawing)

Appendix B. A sample worksheet (jigsaw)

Appendix C. A sample matching activity (picture-drawing)

funnel, test tube, glass tube, tweezers, graduated cylinder, beaker, trough, flask, dropper, ring stand and clamp, gas burner, alcohol lamp

Appendix D. Self-reflection sheet (picture-drawing and jigsaw)

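Reflection 1: During this activity, did your partner’s picture include the following? Answer 4 if you think an item was fully included and 1 if you think it was not included at all.

| Item | Self-evaluation |
|---|---|
| 1. Laboratory equipment and tools (regardless of position or location) | 1 2 3 4 |
| 2. Positions of the laboratory equipment and tools | 1 2 3 4 |
| 3. State of the laboratory equipment (e.g. ‘There is water in the flask’, ‘A table is displayed on the tablet’) | 1 2 3 4 |
| 4. The experimenter’s facial expression | 1 2 3 4 |
| 5. The experimenter’s actions | 1 2 3 4 |
| 6. The experimenter’s appearance and clothing | 1 2 3 4 |

Reflection 2: In pairs, list as many expressions as possible that you think are needed to raise the scores above. Then look them up.

| Japanese | English |
|---|---|

Reflection 3: Describe your picture to your partner in English once more. Also, listen to your partner’s description and evaluate how well it meets the criteria in Reflection 1. (Pair evaluation)

| Item | Evaluation |
|---|---|
| 1. Laboratory equipment and tools (regardless of position or location) | 1 2 3 4 |
| 2. Positions of the laboratory equipment and tools | 1 2 3 4 |
| 3. State of the laboratory equipment (e.g. ‘There is water in the flask’, ‘A table is displayed on the tablet’) | 1 2 3 4 |
| 4. The experimenter’s facial expression | 1 2 3 4 |
| 5. The experimenter’s actions | 1 2 3 4 |
| 6. The experimenter’s appearance and clothing | 1 2 3 4 |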

Appendix E. Self-reflection sheet (collaborative writing)

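Reflection 1: Compare the model with the English text you wrote and check whether the following items were included. The correctness of the English does not matter.

| Item | (✔) | Item | (✔) |
|---|---|---|---|
| Title of the report | | Purpose of the experiment | |
| Equipment needed for the experiment | | Putting ammonia into the flask | |
| Setting up the equipment as shown in the figure | | Putting phenolphthalein solution into the beaker beforehand | |
| Filling the rubber bulb (dropper teat) with water beforehand | | Using the rubber bulb to inject water into the flask | |
| A red fountain forming in the beaker | | The mechanism by which the fountain occurs (discussion) | |

Reflection 2: For the following items, extract the English you wrote and the English in the model, and compare the expressions. If an item was not written in your own text, enter N/A.

| Item | Your expression | Model’s expression | Item | Your expression | Model’s expression |
|---|---|---|---|---|---|
| Purpose | | | Materials | | |
| Procedure | | | Results | | |
| Discussion | | | | | |

| Item | Expression you wrote | Model’s expression |
|---|---|---|
| Investigate the properties of ammonia | | |
| Put ammonia into the flask | | |
| Set up the equipment | | |
| Put phenolphthalein solution into the beaker | | |
| Put water into the flask | | |
| The water in the beaker rises through the glass tube | | |

Reflection 3: For points other than those in the table above, compare your English text with the model and write down the differences. If the model has no corresponding expression, look up how to write it in a dictionary or other resource.

| Item | Expression you wrote | Model’s expression |
|---|---|---|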

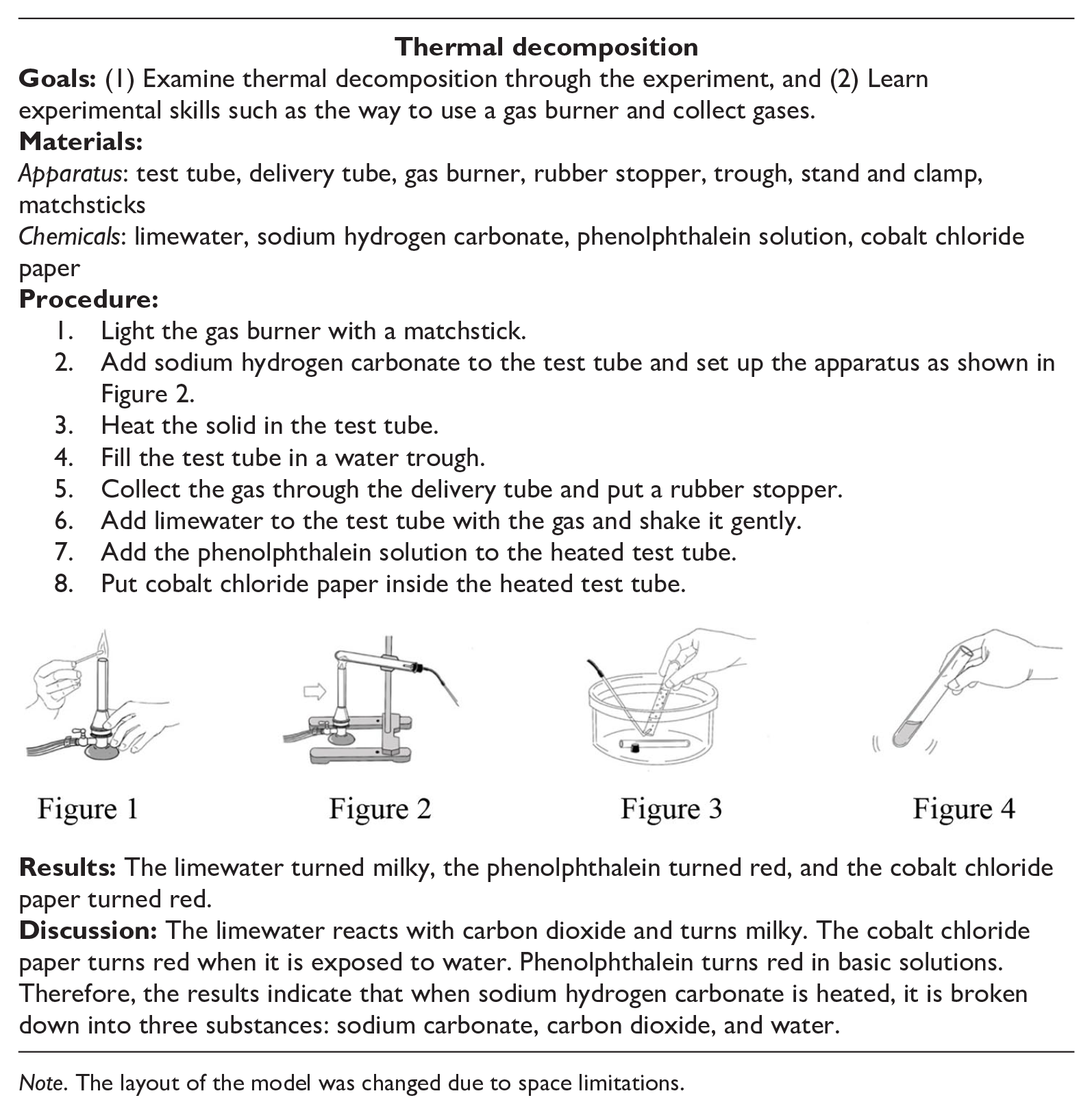

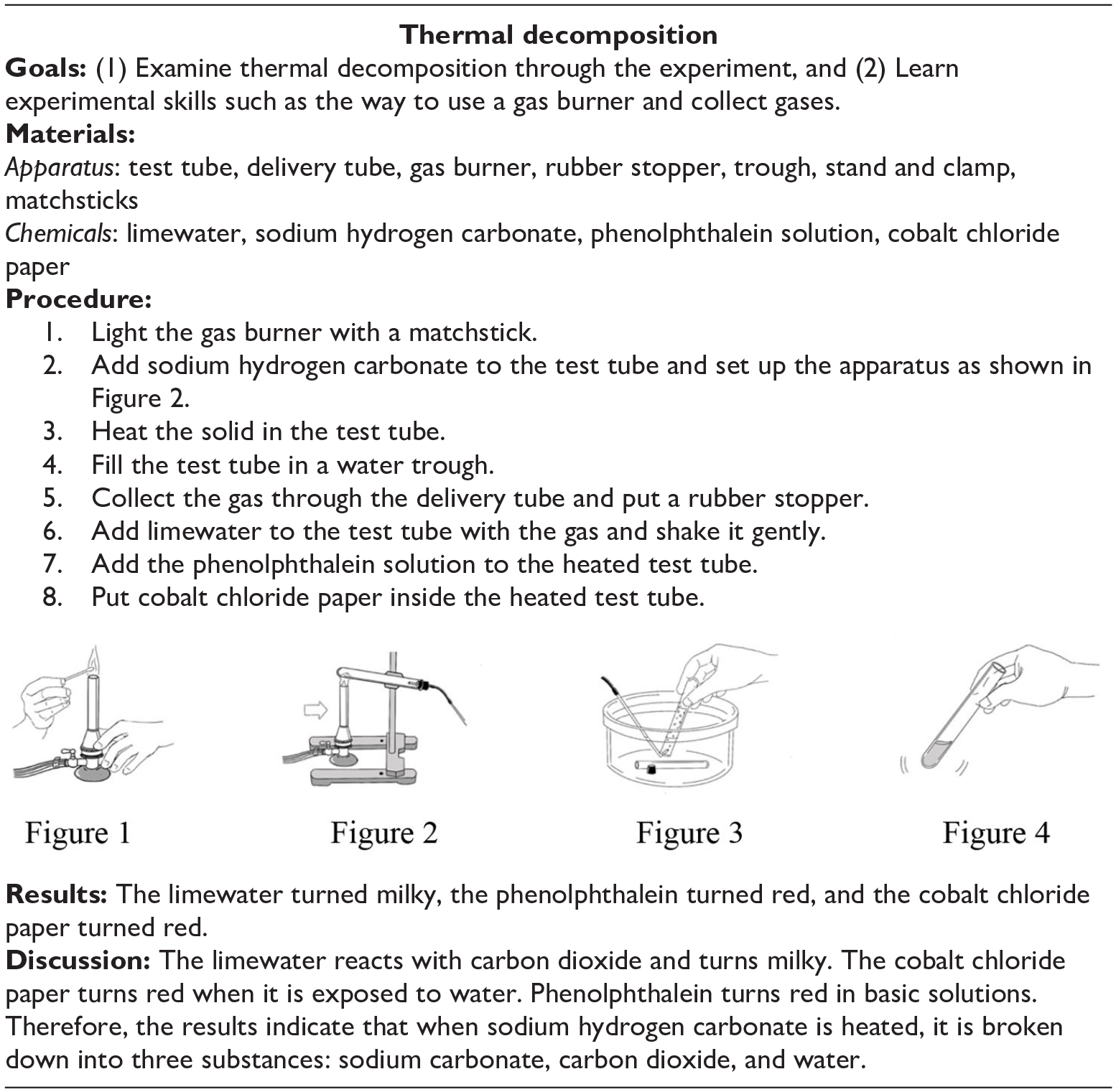

Appendix F. A sample model report in a collaborative writing task


Note. The layout of the model was changed due to space limitations.

Appendix G. Writing test (pre- and post-test)

Appendix H. Post-task questionnaire

| Questions | Do not agree | | | | | Strongly agree |
|---|---|---|---|---|---|---|
| I thought this activity was very easy. | 1 | 2 | 3 | 4 | 5 | 6 |
| I was able to do this activity in a relaxed manner. | 1 | 2 | 3 | 4 | 5 | 6 |
| I was able to perform this activity well. | 1 | 2 | 3 | 4 | 5 | 6 |
| I thought this activity was interesting. | 1 | 2 | 3 | 4 | 5 | 6 |
| I would like to do more activities like this. | 1 | 2 | 3 | 4 | 5 | 6 |
| This activity gave me many opportunities to learn. | 1 | 2 | 3 | 4 | 5 | 6 |

Appendix I. End-of-course questionnaire

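In the English II class, we carried out the following speaking and writing activities.

- Picture description (describing illustrations of experiments to each other in English)
- Picture matching (describing illustrations of experiments in English and arranging the experimental steps in the correct order)
- Report writing (writing laboratory reports in English on the ammonia fountain and the thermal decomposition of NaHCO3)

Please evaluate these activities from your own standpoint in as much detail as possible. Your responses will have no effect whatsoever on your grades. We would like to hear your honest opinions, so please feel free to write openly. When writing, please refer to the following points.

- Do you think English activities like these are useful for students’ English learning?
  - Specifically, which part of which activity makes you think so? Why?
  - Is there anything you learned through these activities?
  - Was there anything you struggled with while doing these activities?
- Do you think more English activities like these should be incorporated into English classes at KOSEN (colleges of technology) in the future?
  - Specifically, which part of which activity makes you think so? Why?
  - Do you have any suggestions for how these activities could be done better? Comments on any aspect are welcome, such as the content of the activities, the support provided, or the way they are carried out.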

Acknowledgements

We are grateful to the anonymous reviewers who provided insightful and constructive comments on earlier versions of this article. Any remaining errors are our own.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by JSPS KAKENHI Grant Number JP21K00803.