Abstract

Teachers’ conceptions of assessment are a significant indicator of teacher assessment literacy. This study contextualized pre-service teachers’ conceptions of assessment in Chinese second language education. An exploratory factor analysis generated eight first-order factors and the following confirmatory factor analysis found a hierarchical model, reflecting a noticeable influence of prior learning experiences and official assessment regimes on conceptions. A follow-up latent profile analysis showed three profiles. Improvement-oriented was the most prevalent profile, but more than 40% of the participants were categorized as negative. The study substantiated the need to develop an integrative and balanced understanding of assessment in teacher education to prepare pre-service teachers for teaching in international contexts.

I Introduction

The proliferation of assessment reforms all over the world since the late 1990s has given rise to a reconceptualization of the nexus of assessment, teaching, and learning, and considerable discussion on teachers’ assessment literacy (Charteris & Dargusch, 2018; Crusan, Plakans, & Gebril, 2016; Inbar-Lourie, 2013). Being assessment literate requires not only professional skills but also a solid conceptual understanding of the diversity and complexity of assessment. Teachers’ conceptions of assessment have become a central issue in assessment research and teacher education (Barnes, Fives, & Dacey, 2017; Brown, Gebril, & Michaelides, 2019; Coombs, Deluca, & MacGregor, 2020; Xu & He, 2019). Researchers have used teachers’ conceptions as a critical lens to scrutinize teachers’ understanding of the purposes and functions of assessment in examining the alignment between teachers’ personal dispositions and government initiatives (Brown et al., 2019; Brown, Hui, Yu, & Kennedy, 2011b; Lam, 2019; Wang, Lee, & Park, 2020). Studies tapping into teachers’ conceptions of formative assessment or assessment for learning (AfL) and their assessment practices have revealed significant inconsistencies that might be attributed to educational and cultural contexts (Deneen et al., 2019).

The present study contextualized teachers’ conceptions of assessment in Chinese second language education, with a specific focus on the Master’s program of Teaching Chinese to Speakers of Other Languages (MTCSOL). MTCSOL was launched in China in 2007 to prepare candidates for teaching Chinese in international contexts with two-year professional training in Chinese second language (L2) education that caters to the needs of foreign students in international schools or universities (Ministry of Education, 2007a, 2007b). Local teacher educators have noted the importance of pre-service teachers’ conceptions while gaining the qualifications to teach Chinese as a second language (Li, 2012, 2015). The significance of the present study lies in the fact that this particular group of teacher candidates were mostly educated in the Chinese context, where assessments are usually associated with large-scale testing with high-stakes consequences (Brown et al., 2011b; Lam, 2019; Wang et al., 2020), whereas the potential learners might have various cultural and educational backgrounds and bring to classrooms varied or even competing views about assessment from those held by their Chinese language teachers. The context-dependent nature of assessment makes the challenges faced by pre-service teachers in the emerging profession in China different from those faced by their colleagues in English language education, which forms part of the compulsory education for local students and is a more established field that has dominated the discourse of conceptions of assessment in the local context (Yuan & Lee, 2014; Xu & He, 2019).

Despite more than a decade having passed since the implementation of the MTCSOL, pre-service teachers’ conceptions of assessment remain underexplored. Given the increasing demands of assessment literacy on L2 Chinese teachers and the significance of conceptions for teachers’ attitudes towards assessment reforms, their assessment practices and, in turn, student learning, this study examined pre-service teachers’ conceptions of assessment in the context of teaching L2 Chinese in mainland China. This contextualized examination offers new insights into the construct of assessment conceptions that can enrich the discourse on assessment literacy and produce practical implications for L2 teacher education.

II Literature review

1 Teacher assessment literacy

The notion of teacher assessment literacy has evolved over time, and its history is marked by three stages (Deneen & Brown, 2016; Fulcher, 2012). The first stage was dominated by measurement and accountability. Stiggins (1991) pointed out the lack of professional training in assessment literacy at that time: neither in-service nor pre-service programs offered sufficient training to enable teachers to make critical judgments on assessment results. The second stage was characterized by the promulgation of AfL, which, to some extent, was an attempt to rectify the earlier overemphasis on summative testing. A burgeoning line of research on classroom assessment then emerged to examine the nexus of teaching, learning and assessment (Deneen & Brown, 2016; Fulcher, 2012). Unexpectedly, this growing interest in AfL has engendered a dichotomous view that considers summative and formative assessment as incompatible, particularly in education systems where summative testing has high-stakes consequences (Fulcher, 2012; Taber et al., 2011).

In response to the increasing tensions faced by teachers, in the third and current stage researchers have called for an integrative and balanced approach to assessment. Teacher education programs should prepare teacher candidates for dealing with various assessment processes of construction, administration, scoring, communication and action, for both standardized testing and classroom-based assessment (Deneen & Brown, 2016; Fulcher, 2012; Lam, 2015; Pastore & Andrade, 2019). They are also expected to negotiate the differing and sometimes competing functions of assessment (Taber et al., 2011). Researchers have indicated that the expanded definition of assessment literacy entails not only professional knowledge but also conceptual understanding on the part of teachers, which is closely associated with the sociocultural contexts in which assessment operates (Pastore, Manuti, & Scardigno, 2019).

In their comprehensive review of the literature, Xu and Brown (2016) proposed a new framework that conceptualizes teacher assessment literacy in practice as a multifaceted and integrative construct, with teachers’ conceptions of assessment playing a crucial role in explaining teachers’ assessment practices and predicting their responses to new assessment policies. In addition, teachers’ conceptions are reflective of social norms and subject to individual educational experiences, making changes to assessment conceptions a long-standing challenge in teacher education and professional development.

2 Teachers’ conceptions of assessment

Teachers’ conceptions of assessment refer to practitioners’ beliefs or dispositions towards the various purposes and functions of assessment (Brown, 2004b; Fulmer, Tan, & Lee, 2019; Harris & Brown, 2009). In their systematic review of the prerequisites of assessment for learning, Heitink and colleagues (2016) found that teacher factors, including knowledge and beliefs, were the most influential on how successfully formative assessment was implemented. Teachers’ conceptions of assessment are closely associated with their assessment practices. Sadler and Reimann (2018) indicated that teachers whose initial conceptions of assessment were learning-oriented were more likely to develop further into AfL practice over time. The researchers also found a bidirectional relationship between conceptions and practices of assessment; nevertheless, it was shown that teachers’ conceptions of assessment were more resistant to change than were their practices. Resistance to conceptual change might result in complying with the letter of AfL rather than embodying its spirit (Marshall & Drummond, 2006). Adopting a qualitative approach to school teachers’ conceptions of assessment, Remesal (2011) arrived at a four-dimensional bipolar model of conceptions of assessment. The model shows that assessment would affect various aspects of learning, teaching, certification of learning, and accountability of teaching. In addition, teachers’ conceptions were intertwined with their educational beliefs that were inclined to use assessment for pedagogical regulation or societal accreditation. Given the close relationship between teachers’ conceptions and personal and contextual factors, Remesal (2011) indicated that a dichotomous view that separates classroom assessment from standardized testing may not suffice to depict the complexity and nuance of assessment conceptions.

One of the most influential instruments for examining teachers’ conceptions of assessment is the self-report inventory developed by Brown (2004a, 2006). Brown’s inventory consists of four major conceptions of assessment: (1) assessment improves teaching and learning (improvement); (2) assessment is used to evaluate students’ progress for placement or selection purposes (student accountability); (3) assessment is used to evaluate the worth of teachers or schools (school accountability); and (4) assessment is irrelevant to teaching and learning (irrelevance). The inventory has been administered in various educational contexts, with teachers generally agreeing with the improvement conception and disagreeing with the irrelevance conception (Barnes et al., 2017; Brown, Lake, & Matters, 2011a). In addition, Barnes et al. (2017, p. 116) found that teachers may ‘hold multiple, sometimes competing beliefs about assessment’ simultaneously.

Although there might be some universal conceptions of assessment, researchers have argued that the manifestation of these conceptions would vary greatly across jurisdictions under the influence of the socio-cultural environment and education policy in which assessment is enacted (Brown, Gebril, & Michaelides, 2019). In low-stakes assessment contexts, such as New Zealand, Queensland and Cyprus, where low-stakes classroom assessment and standardized examinations are important parts of the national education standards, assessment is generally conceived of as a way of improving teaching and learning (Brown, 2011b; Brown et al., 2011a; Brown & Michaelides, 2011). In examination-dominant contexts, such as Hong Kong, mainland China and Egypt, the conception of improvement is strongly associated with accountability (Brown et al., 2009, 2011b; Gebril & Brown, 2014). Teachers facing higher demands for accountability tend to expect that educational improvement will be reflected in examination results and to believe that ‘a powerful way to improve student learning is to examine them’ (Brown et al., 2011b, p. 314). Teachers’ conceptions of assessment are context dependent; they are reflective of shared values or cultural norms in a society, and therefore should be considered as a differential and situated professional competency (DeLuca, Coombs, MacGregor, & Rasooli, 2019).

3 Pre-service teachers’ conceptions of assessment

Prior experiences as a student have a tremendous influence on pre-service teachers’ initial conceptions of assessment (Lortie, 2002; Pajares, 1992), which are then influential in their ways of interpreting what they learn in training programs and, in turn, on their assessment practices (Pajares, 1992; Taber et al., 2011). Studies examining pre-service language teachers’ assessment literacy have observed limited understanding of assessment for learning among teacher candidates and pointed out inadequacies in professional training (Lam, 2015; Lee, 2010). Pre-service teachers’ initial conceptions of assessment might be naïve or biased as a result of having held a limited understanding of assessment or having had limited experiences with assessment practices as a student (Barnes et al., 2017). However, these conceptions are not static; they develop through professional training and evolve at distinct career stages (Coombs et al., 2020; Yuan & Lee, 2014), provided that teacher candidates are offered the opportunities to reflect on their conceptions of assessment (Cabaroglu & Roberts, 2000) and to learn about the various purposes and functions of assessment in initial teacher education, which help them to develop more comprehensive and balanced conceptions of assessment (Levy-Vered & Alhija, 2018; Smith, Hill, Cowie, & Gilmore, 2014).

Using Brown’s (2004a, 2006) questionnaire, several studies investigating pre-service teachers’ conceptions of assessment have found differences in factor structures, item assignments and correlations between factors across educational regimes (Brown, 2011b; Brown & Remesal, 2012; Daniels, Poth, Papile, & Hutchison, 2014; Levy-Vered & Alhija, 2018). In low-stakes assessment contexts, Brown and Remesal (2012) found a four-factor solution from a Spanish sample (n = 672) and a five-factor solution from a New Zealand sample (n = 324). In both samples, the pre-service teachers prioritized the conceptions of improving teaching and learning. Daniels et al. (2014) collected data from 436 Canadian pre-service teachers, and their analysis generated nine first-order factors, which was different from Brown’s earlier model of four second-order factors. Significant correlations were found among school accountability, student accountability and improvement in teaching and learning, implying that Canadian pre-service teachers tended to consider school and student accountability as equal in importance to improving education. In high-stakes assessment contexts, Levy-Vered and Alhija (2018) used Brown’s (2004a, 2006) inventory to compare 297 Israeli pre-service teachers’ conceptions before and after an assessment course and discovered four second-order factors; the results were consistent with Brown’s (2006) original model. School accountability, student accountability and improvement were found positively correlated with each other in the two phases, indicating that accountability was almost synonymous with improvement. Chen and Brown (2013) used a version of Brown et al.’s (2011b) questionnaire designed for the Chinese context, which was quite different from Brown’s (2004a, 2006) original inventory, to examine 765 prospective teachers’ conceptions of assessment in mainland China and identified four factors (i.e. diagnose, irrelevant, control and life character). 
The diagnose factor was negatively correlated with the other three, suggesting that pre-service teachers who endorsed the diagnostic and formative purposes of assessment were strongly opposed to conceptions of controlling teachers’ work, assessment irrelevance and life-long moral development. The mean for the control factor was significantly higher than those of the other three factors, implying that pre-service teachers in mainland China regarded the primary purpose of assessment as controlling and evaluating teachers’ work and students’ behavior.

Pre-service teachers’ conceptions of assessment also vary at the individual level. Even within the same jurisdictions, pre-service teachers might hold multiple and sometimes competing conceptions that affect their assessment practice, shaped by their prior experiences (Barnes et al., 2017; Pajares, 1992). Most previous studies have used a variable-centered method to explore the differences of conceptions of assessment among individual pre-service teachers (Brown & Remesal, 2012; Daniels et al., 2014). Recently, a few studies have used a person-centered method to capture the multiple patterns of conceptions and practices of assessment held by teachers. Brown (2008) used cluster analysis to examine 232 New Zealand primary school teachers’ conceptions of assessment integrated with their conceptions of teaching, learning, curriculum and teacher efficacy and identified five clusters of teachers: progressives, pro-formative assessment users, traditionalists, radical social liberationists and conservatives. Coombs et al. (2020) explored 457 pre-service teachers’ approaches to assessment based on their self-perception of assessment competence, motivation for pursuing teacher education and assessment education experiences, and identified three subgroups of assessors: eager, contemporary and hesitant. Barnes et al. (2017) explored 179 teachers’ conceptions of assessment in the United States and discovered three distinct profiles of teacher groups: moderate, irrelevant and teaching and learning. Given the multidimensional and complex nature of teachers’ conceptions of assessment, more research that adopts person-centered approaches to study pre-service teachers’ conceptions of assessment is needed.

4 The context of assessment in mainland China

The education system in mainland China places a high value on high-stakes examinations as the primary approach to selecting students for limited opportunities for higher education or admission to better schools (Chen & Brown, 2013; He, Levin, & Li, 2011). Contrary to the long-standing overemphasis on high-stakes examinations, a curriculum reform that is aimed at integrating AfL in the education system has been implemented in mainland China over the past decade. The aim of the reform is to promote the all-round development of students, and it promulgates formative and authentic approaches to instruction and assessment (Ministry of Education, 2010). Enacting assessment under this socio-cultural context and education policy, Chinese teachers are pressed towards two extremes: high performance on summative assessment and formative improvement (Brown & Gao, 2015). Teachers face the challenge of balancing the relationship between summative examination-oriented assessment and formative improvement-oriented assessment in their classroom teaching. Compared with Western teachers working in relatively low-stakes assessment contexts, it is highly possible that Chinese teachers would hold different conceptions of assessment (Brown & Gao, 2015).

5 The Master’s program of Teaching Chinese to Speakers of Other Languages (MTCSOL)

MTCSOL is a two-year professional postgraduate program for students wishing to teach Chinese to non-Chinese speakers in and outside of China. The program was launched in 2007 owing to the growing interest in learning Chinese around the world (Ministry of Education, 2007a). Anyone who would like to teach L2 Chinese to foreigners in K-12 international schools and universities in China is strongly encouraged to obtain this Master’s degree. According to the curriculum guide of the program, assessment training is an elective course (Ministry of Education, 2007b). Thus, pre-service teachers receive one course on assessment at most. In many cases, they only have access to assessment knowledge and practices through a single topic in a broader course. Jin (2010) found that the majority of the assessment courses in mainland China still covered traditional testing theories and practices. Topics on alternative assessment did not receive sufficient coverage. Although pre-service teachers in this program have been educated in the Chinese high-stakes assessment context, their future international students are likely to have grown up in diverse assessment contexts, which means that these pre-service teachers are required to learn and use various assessment practices to prepare for their future teaching. It is necessary to explore pre-service teachers’ conceptions of assessment as these serve to influence assessment practices (Barnes et al., 2017; Heitink et al., 2016; Pajares, 1992). To date, most of the studies exploring pre-service language teachers’ conceptions of assessment in mainland China have been conducted with L2 English teachers, who were training to teach English to local students (Yuan & Lee, 2014; Xu & He, 2019). Xu and He (2019) found that pre-service English teachers’ initial conceptions of assessment were constrained by the regular practices deeply rooted in school assessment culture and the challenge of promoting formative assessment in an examination-dominated context.
Few studies conducted in the Chinese context have examined the conceptions of assessment of L2 Chinese pre-service teachers, who will instruct international students with diverse cultural and social backgrounds. Moreover, few studies have adopted person-centered approaches to capture the variability of conceptions of assessment that individual pre-service teachers hold.

Given that the MTCSOL pre-service teachers’ conceptions of assessment are underexplored, this study adopted a person-centered approach to address this research gap, guided by the following research questions.

What characterizes MTCSOL pre-service teachers’ conceptions of assessment in mainland China?

What profiles emerge with respect to MTCSOL pre-service teachers’ conceptions of assessment?

III Method

1 Participants

The sample comprised 279 first-year pre-service teachers who were enrolled in a two-year MTCSOL program in government-funded universities in mainland China. The majority of the participants were female (n = 265) and the mean age of the sample was 24.73 years (SD = 2.84). The gender and age distributions were consistent with those of the general pre-service teacher population in mainland China.

2 Instrument

A self-report questionnaire was used to examine pre-service teachers’ conceptions of assessment. The first part of the questionnaire collected background information, including age, gender and previous teaching and assessment experience. The second part was derived from Brown’s (2006) Teachers’ Conceptions of Assessment Abridged (TCoA-IIIA) inventory. The TCoA inventory was used in this study instead of the Chinese-Teachers’ Conceptions of Assessment (C-TCoA) because MTCSOL pre-service teachers would teach Chinese to foreigners in K-12 international schools and universities in and outside of China, and thus differed from the participants in previous Chinese studies, who would teach local students (Chen & Brown, 2013; Brown et al., 2011b). These pre-service teachers were likely to encounter diverse cultures, assessment policies and educational systems in the future, which required them to learn and use various assessment practices to prepare for teaching in an international context. Therefore, as pre-service teachers’ assessment practices are influenced by their conceptions of assessment (Barnes et al., 2017; Pajares, 1992), the TCoA inventory, which has been widely used in societies with different assessment policies and teacher groups, was chosen to explore how MTCSOL pre-service teachers understand the use and purpose of assessment. This 27-item inventory was designed to elicit teachers’ self-ratings on nine factors of conceptions regarding assessment: (1) assessment makes schools accountable, (2) assessment describes ability, (3) assessment improves learning, (4) assessment improves teaching, (5) assessment is valid, (6) assessment makes students accountable, (7) assessment is bad, (8) assessment is ignored and (9) assessment is inaccurate. These nine factors measure four inter-correlated conceptions of assessment: school accountability, student accountability, improvement and irrelevance.
Confirmatory factor analyses conducted in previous studies indicated that the TCoA-IIIA measurement model exhibited satisfactory fit for samples of primary and secondary teachers in New Zealand and Queensland (Brown, 2008). A 6-point positively packed rating scale (1 = strongly disagree, 2 = mostly disagree, 3 = slightly agree, 4 = moderately agree, 5 = mostly agree and 6 = strongly agree) was used in the questionnaire to maximize variance in the responses to items (Brown, 2004a).

The questionnaire was translated into Chinese and then back-translated into English. Two bilingual scholars were invited to examine the accuracy and clarity of the translation. The Chinese version of the questionnaire was combined with the original English version, with the Chinese item corresponding to each English item being provided to help participants understand the item precisely.

3 Data collection

Members of the research team first contacted teachers of the MTCSOL program in universities in mainland China through personal relationships and professional online communities and asked them to invite their students to complete the online TCoA-IIIA questionnaire. The researchers also asked anyone who responded to the questionnaire to share the link with his or her friends who were also studying the same Master’s program. According to the information provided by the online questionnaire tool, most participants took approximately 10 minutes to complete the questionnaire.

4 Data analyses

The data analyses were completed in three steps. First, descriptive statistics of questionnaire items, including means, standard deviations, skewness and kurtosis, were calculated to provide a preliminary description of the participants’ conceptions of assessment. Second, as the TCoA inventory had been validated in previous studies (Brown, 2006, 2011a), confirmatory factor analysis (CFA) was first adopted to test whether the data in this study fit the original model. However, the data failed to fit the pre-existing model. Exploratory factor analysis (EFA) was then carried out to identify the factor structure of conceptions of assessment. CFA was later used to validate the fit of the alternative model hypothesized based on the EFA results. EFA is a technique to explore the number of latent variables and possible underlying factor structures of a set of observed variables (Fabrigar, Wegener, MacCallum, & Strahan, 1999). Principal axis factoring (PAF), a least-squares estimation of the common factor model, was performed because it is better at recovering factors with low loadings and few indicators and at avoiding the over-extraction of factors (De Winter & Dodou, 2012; Fabrigar et al., 1999). Oblimin rotation was selected to allow natural correlation between the factors (Fabrigar et al., 1999), as the results of previous assessment studies suggested that potential factors of conceptions of assessment were correlated (Brown, 2004a, 2006). Items with factor loadings greater than .3 were retained. Once a factor structure was identified, correlations between factors were calculated. CFA is an approach to test the number of factors and the specification of factor loadings postulated by the researchers based on theoretical knowledge and/or empirical research (Thompson, 2004). Maximum likelihood (ML) was used as the estimation method. 
Several goodness-of-fit indices (χ²/df ⩽ 3, CFI ⩾ .90, GFI ⩾ .90, RMSEA ⩽ .06, SRMR ⩽ .08) were used to determine the fit of the hypothesized model (Kline, 2011; Hu & Bentler, 1999).
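
The item-retention rule described above (loadings greater than .3, with attention to cross-loadings) can be illustrated with a minimal sketch. The loading values and item names below are hypothetical and are not the study's data; comparing absolute loadings against the cutoff is also an assumption, since the text states only "greater than .3".

```python
# Hypothetical pattern loadings (rows = items, columns = factors).
# These numbers are invented for illustration; they are NOT the study's results.
LOADINGS = {
    "item_a": [0.72, 0.05, -0.02],
    "item_b": [0.10, 0.64, 0.08],
    "item_c": [0.21, 0.18, 0.12],  # no salient loading, so it would be dropped
    "item_d": [0.45, 0.41, 0.03],  # salient on two factors, so it cross-loads
}

THRESHOLD = 0.30  # retention cutoff stated in the text


def salient_factors(row, threshold=THRESHOLD):
    """Return the indices of factors whose absolute loading exceeds the cutoff."""
    return [j for j, value in enumerate(row) if abs(value) > threshold]


def screen_items(loadings, threshold=THRESHOLD):
    """Split items into retained (item -> primary factor), dropped, and cross-loading."""
    retained, dropped, cross = {}, [], []
    for item, row in loadings.items():
        hits = salient_factors(row, threshold)
        if not hits:
            dropped.append(item)
            continue
        # The primary factor is the one with the largest absolute loading.
        retained[item] = max(hits, key=lambda j: abs(row[j]))
        if len(hits) > 1:
            cross.append(item)
    return retained, dropped, cross


retained, dropped, cross = screen_items(LOADINGS)
print("retained:", retained)
print("dropped:", dropped)
print("cross-loading:", cross)
```

In this toy matrix one item falls below the cutoff and one loads on two factors; a solution with no dropped items and no cross-loadings would return empty lists for both.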

In the last step, latent profile analysis (LPA) was conducted to identify the emergent profiles of pre-service teachers across different factors of their conceptions of assessment. LPA is a probability-based technique to identify underlying groups of individuals that show similar patterns of variables (Magidson & Vermunt, 2004; Muthén, 2001). Individuals within one latent profile have identical response probabilities for the included items, while individuals from different latent profiles differ in their response probabilities. In this study, the pre-service teachers’ responses to the factors generated from the 27 questionnaire items were used to categorize them into groups that shared a similar degree of agreement on a particular combination of conceptions of assessment. ML estimation was used to find the parameter estimates that maximize the likelihood of the observed sample (McLachlan & Peel, 2000). ML is the most commonly used method to estimate model parameters in LPA (Pastor, Barron, Miller, & Davis, 2007). To identify the number of profiles, two-, three- and four-profile latent models were tested based on the criteria outlined by Nylund, Asparouhov and Muthén (2007). The best-fitting model was determined by evaluating a combination of absolute (Vuong–Lo–Mendell–Rubin likelihood ratio test [VLMR-LRT] and Lo–Mendell–Rubin adjusted likelihood ratio test [LMRA-LRT]) and relative (AIC, BIC, SSA-BIC) fit indices (Nylund et al., 2007). VLMR-LRT and LMRA-LRT compare nested models; a non-significant p value indicates that the model with one fewer profile should be accepted (Lo, Mendell, & Rubin, 2001). Generally, lower AIC, BIC and SSA-BIC values indicate better fit. Entropy, a measure of aggregated classification uncertainty, was also used to evaluate model fit in LPA. An entropy value closer to one indicates high discrimination among the latent profiles (Muthén & Muthén, 2007). 
The emergent profiles from the model were determined based on the posterior probabilities (Muthén, 2001; Tein, Coxe, & Cham, 2013). Posterior probability is the probability that an individual belongs to a given profile based on his or her response pattern to the questionnaire items. A posterior probability closer to one indicates a high probability of accurate assignment (Muthén, 2001). A series of one-way analyses of variance (ANOVAs) was conducted with profile membership serving as the independent variable and the identified underlying factors of conceptions of assessment as the dependent variables. The intent was to examine whether there were significant differences across groups with distinctive conceptions of assessment. A Bonferroni correction (α = .05) was applied in the post-hoc tests to control for Type I error. Effect sizes were reported using partial eta squared, which is frequently used in the educational research literature (Richardson, 2011). A value lower than 0.06 was interpreted as a small effect, a value of 0.06 to 0.14 a medium effect, and a value higher than 0.14 a large effect when comparing the group differences (Cohen, 1988). LPA was performed using Mplus 7 (Muthén & Muthén, 2012) and all other data analyses were conducted in SPSS 24 (IBM, 2016).
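
The relative fit indices, entropy and posterior-probability assignment described for the LPA can be sketched as follows. This is a minimal illustration and not the Mplus implementation: the function names are hypothetical, the posterior probabilities are invented toy values, and the formulas are the commonly cited definitions (the sample-size-adjusted BIC replaces n with (n + 2)/24; relative entropy is one minus the summed classification uncertainty divided by n ln K).

```python
import math


def information_criteria(log_likelihood, n_params, n_obs):
    """Return AIC, BIC and sample-size-adjusted BIC; lower values suggest better fit."""
    deviance = -2.0 * log_likelihood
    return {
        "AIC": deviance + 2 * n_params,
        "BIC": deviance + n_params * math.log(n_obs),
        "SSA-BIC": deviance + n_params * math.log((n_obs + 2) / 24),
    }


def relative_entropy(posteriors):
    """Relative entropy in [0, 1]; values near 1 indicate well-separated profiles."""
    n = len(posteriors)
    k = len(posteriors[0])
    # Sum of -p * ln(p) over all individuals and profiles (0 * ln 0 treated as 0).
    uncertainty = -sum(p * math.log(p) for row in posteriors for p in row if p > 0)
    return 1.0 - uncertainty / (n * math.log(k))


def modal_assignment(posteriors):
    """Assign each individual to the profile with the highest posterior probability."""
    return [max(range(len(row)), key=row.__getitem__) for row in posteriors]


# Toy posterior probabilities for four individuals and three profiles (invented).
toy_posteriors = [
    [0.90, 0.05, 0.05],
    [0.10, 0.85, 0.05],
    [0.02, 0.08, 0.90],
    [0.40, 0.35, 0.25],  # an ambiguous case that lowers entropy
]

print(modal_assignment(toy_posteriors))
print(round(relative_entropy(toy_posteriors), 3))
print(information_criteria(log_likelihood=-100.0, n_params=5, n_obs=279))
```

Comparing these quantities across the two-, three- and four-profile solutions, together with the likelihood ratio tests, is the kind of model comparison the paragraph describes; the log-likelihood and parameter count in the last call are placeholders, not values from the study.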

IV Results

1 Descriptive statistics

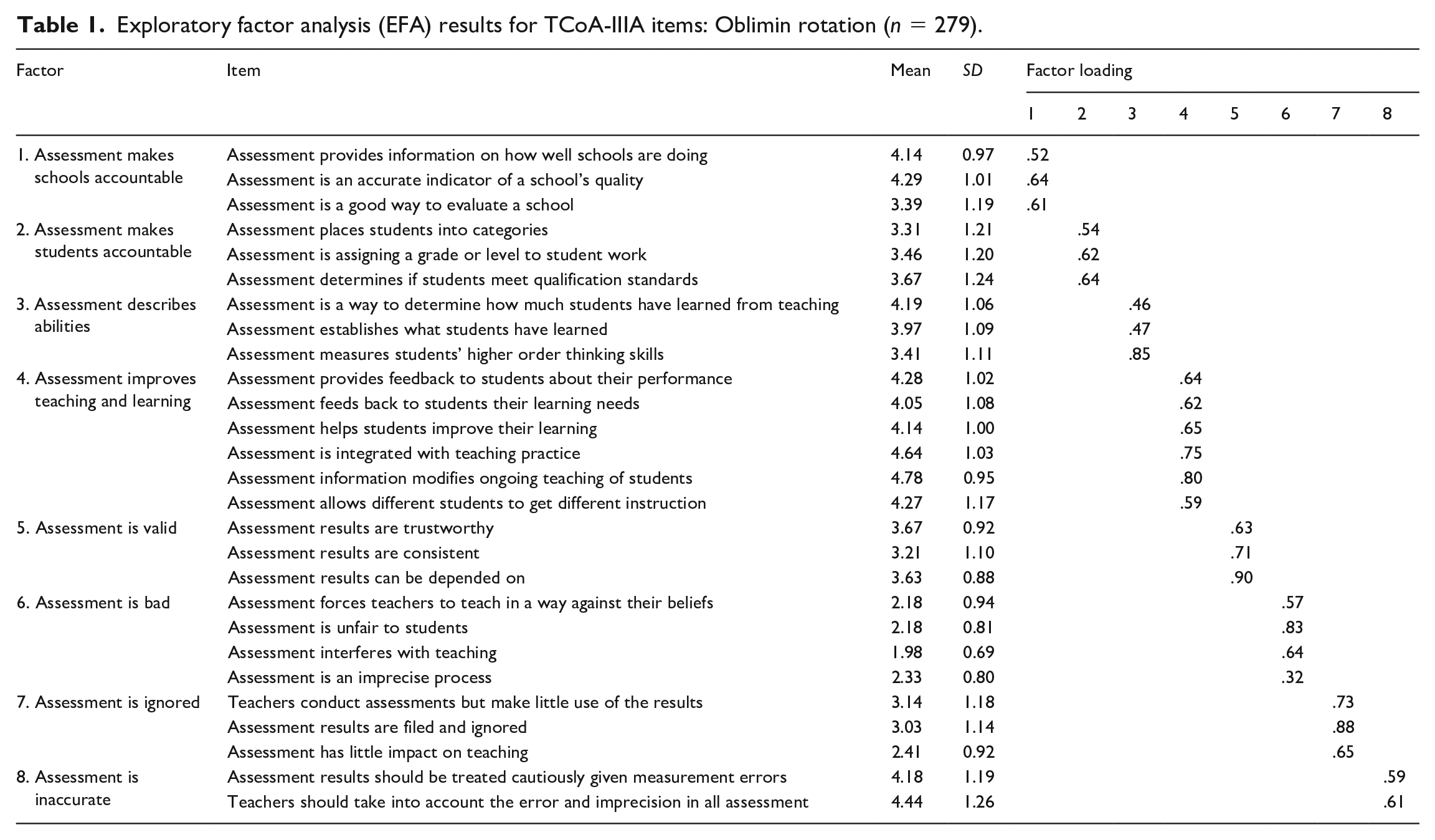

Descriptive statistics were first calculated to demonstrate MTCSOL pre-service teachers’ conceptions of assessment. The means of the questionnaire item scores ranged from 1.99 to 4.79 on a 6-point rating scale, and the standard deviations from 0.83 to 1.15, indicating that our participants held varying degrees of agreement with different conceptions of assessment. The participants slightly (score of 3) to moderately (score of 4) agreed with items that described assessment as school accountability, student accountability and improvement. A wider range of degree of agreement was found among items describing the irrelevance of assessment, as the participants mostly (score of 2) disagreed to moderately (score of 4) agreed with these items. Table 1 shows the descriptive statistics of the questionnaire responses.

Table 1. Exploratory factor analysis (EFA) results for TCoA-IIIA items: Oblimin rotation (n = 279).

2 Factor analyses

Brown’s (2006) original model with four latent second-order factors did not fit the data due to negative error variances in the first-order factors. Therefore, EFA was used to explore the pre-service teachers’ conceptions of assessment. The analysis yielded an eight-factor solution for the 27 questionnaire items measuring pre-service teachers’ conceptions of assessment, accounting for 65.26% of the total variance. All of the questionnaire items had factor loadings over .3 and there were no cross-loadings. Table 1 also presents the factor loading matrix of the eight-factor solution. These eight factors were labeled with reference to Brown’s (2006) descriptions of item assignments and interpretation. Factor 1 was named Assessment makes schools accountable (School accountability), including three items. Factor 2, Assessment makes students accountable (Student accountability), also comprised three items, as did Factor 3, Assessment describes abilities. The fourth factor, Assessment improves teaching and learning, comprised six items: all three items that Brown (2006) assigned to improving teaching and all three items assigned to improving learning loaded on this factor. Factor 5, Assessment is valid, comprised three items. Four items were assigned to Factor 6, Assessment is bad, three of which were from Brown’s (2006) Assessment is bad and one from Assessment is inaccurate (i.e. ‘Assessment is an imprecise process’). Factor 7, identified as Assessment is ignored, comprised three items. Factor 8, Assessment is inaccurate, comprised two items. All factors except for Factors 4, 6 and 8 included the same items as were assigned by Brown (2006) to similar factor titles. The eight-factor inter-correlated solution was tested in CFA and found to have acceptable fit (χ²/df = 1.51, p < .001; CFI = .94; GFI = .91; RMSEA = .043; SRMR = .058). 
Hierarchical models were examined based on the results of previous research (Brown, 2006; Brown & Remesal, 2012; Daniels et al., 2014). One simplified second-order structure, in which Assessment describes abilities, Assessment improves teaching and learning, and Assessment is valid belong to Improvement, was found to have acceptable model fit (χ²/df = 1.55, p < .001; CFI = .93; GFI = .90; RMSEA = .045; SRMR = .065). Although this hierarchical model with six inter-correlated factors, of which one had a second-order structure, had a somewhat worse fit than the eight-factor inter-correlated model, it was retained as it better explained how pre-service teachers conceived the nature and the purposes of assessment.
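
As a rough consistency check on the reported fit indices, the RMSEA point estimate can be recovered from the chi-square/df ratio and the sample size. The sketch below assumes that the CFA was run on the same 279 respondents as the EFA, and it uses the conventional formula RMSEA = sqrt(max(chi-square − df, 0) / (df × (N − 1))). The function name is illustrative, and software that divides by N rather than N − 1 would give marginally different values.

```python
import math


def rmsea_from_ratio(chi2_df_ratio: float, n: int) -> float:
    """Point estimate of RMSEA from the chi-square/df ratio and sample size.

    RMSEA = sqrt(max(chi2 - df, 0) / (df * (n - 1))), which simplifies to
    sqrt(max(ratio - 1, 0) / (n - 1)) when only the ratio is known.
    """
    return math.sqrt(max(chi2_df_ratio - 1.0, 0.0) / (n - 1))


# Assumption: the CFA sample equals the EFA sample (n = 279).
N = 279

eight_factor = rmsea_from_ratio(1.51, N)   # eight-factor inter-correlated model
hierarchical = rmsea_from_ratio(1.55, N)   # model with one second-order factor

print(f"eight-factor model: {eight_factor:.3f}")
print(f"hierarchical model: {hierarchical:.3f}")
```

Under these assumptions, both recomputed values fall within .001 of the reported RMSEA, which is about as close as the two-decimal ratio allows.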

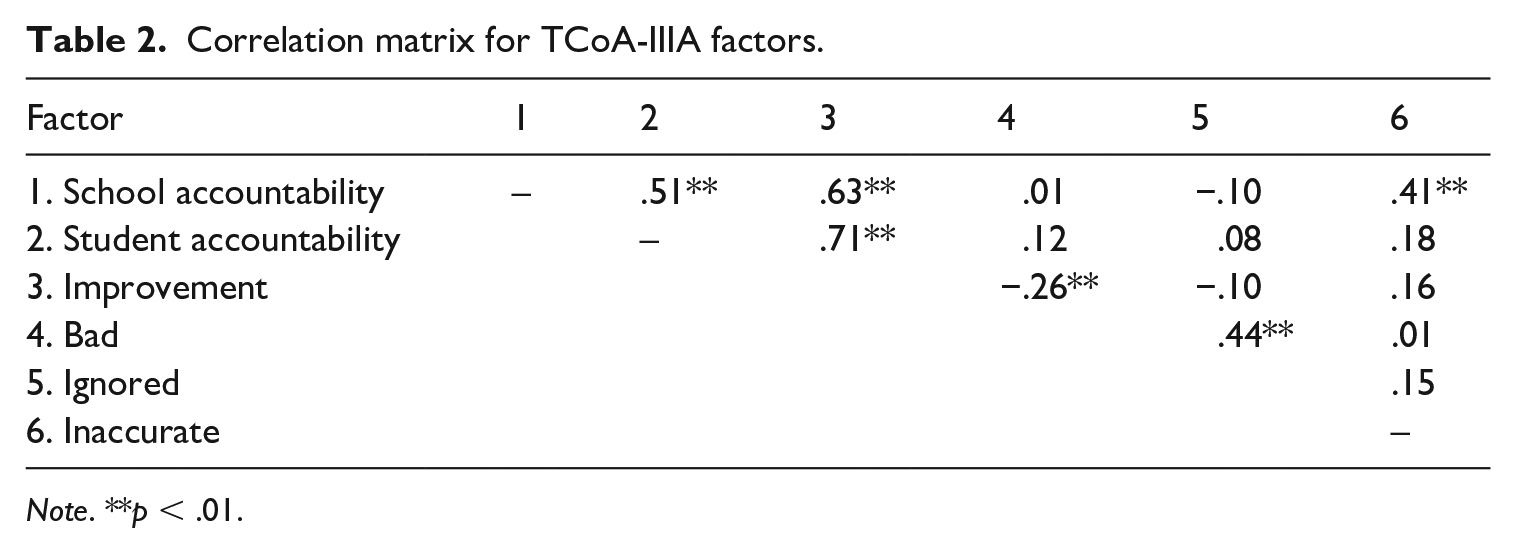

Significant correlations were found between School accountability, Student accountability and Improvement. Interestingly, Assessment is inaccurate was found to be positively correlated with School accountability. This suggests that pre-service teachers think assessments used to judge the quality of schools are inaccurate. A negative relationship was found between Improvement and Assessment is bad, suggesting that pre-service teachers who believe that assessment improves teaching and learning are less likely to believe that assessment is bad. Table 2 displays the correlations of factors generated from the questionnaire.

Correlation matrix for TCoA-IIIA factors.

Note. **p < .01.

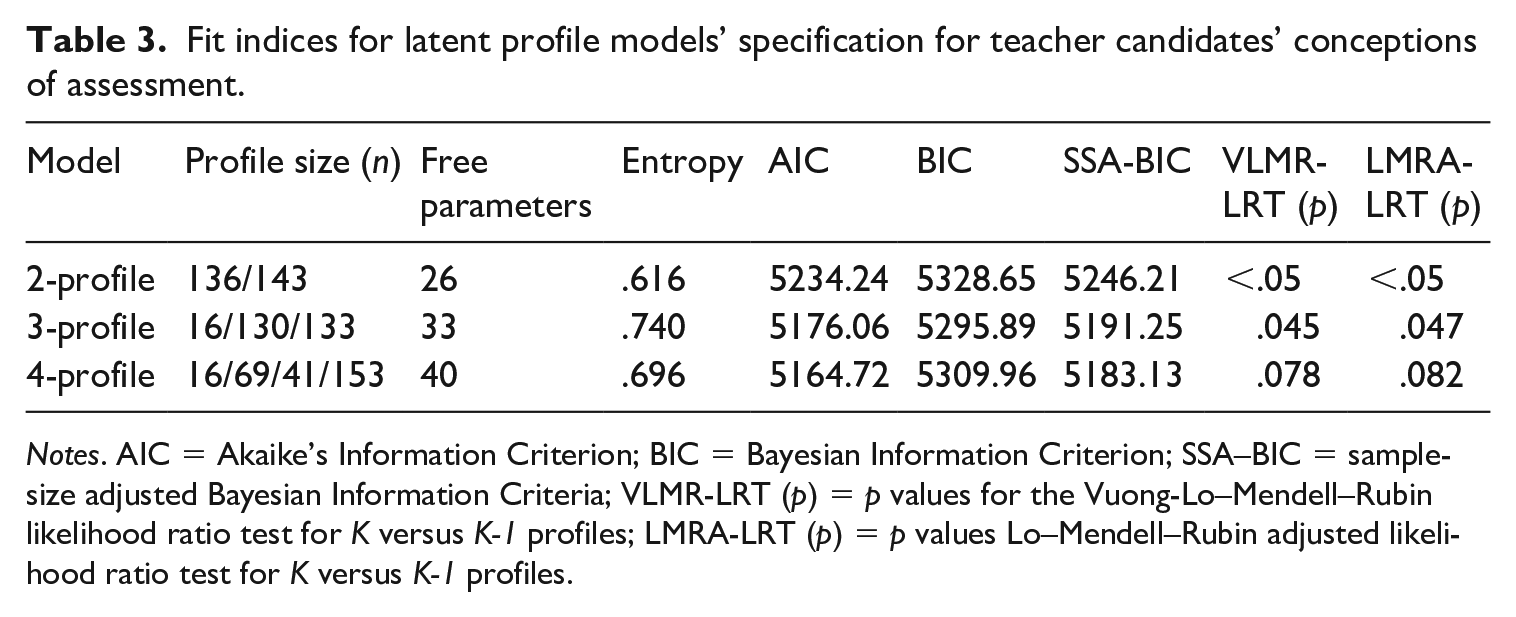

3 Latent profile analysis

Based on the results of CFA, LPA with one second-order factor was used to determine emergent profiles of MTCSOL pre-service teachers’ conceptions of assessment. A three-profile model showed the best statistical fit for the sample in that it allowed for a statistical examination of individuals from different latent profiles. A high entropy value (.740) and high posterior probabilities (.874–.919) that individuals belonged to their assigned profiles suggested the three latent profiles were highly discriminating. The values of AIC, BIC and SSA-BIC decreased substantially at the three-profile model. The results of VLMR-LRT and LMRA-LRT, used to compare the fit of models that specify different numbers of profiles, indicated that the three-profile model had a better fit than the two- and four-profile models. The fit indices for the three latent profile models are shown in Table 3. The three latent profiles were described as balanced (n = 16, 5.7% of our sample), improvement-oriented (n = 133, 47.7%) and negative (n = 130, 46.6%). Although the number of pre-service teachers in the balanced profile was quite small, the profile was kept for two reasons. First, this profile emerged from both the three- and four-profile latent models tested with high posterior probabilities (i.e. .919 in the three-profile model; .930 in the four-profile model), indicating it was statistically distinct from the other two profiles. Second, further examination of individuals’ response patterns on conceptions of assessment across the three profiles showed that pre-service teachers in this profile reported relatively higher scores on the factors of accountability and improvement, unlike their counterparts in the other two profiles, who either reported high scores only on improvement factors or reported relatively low scores on all eight factors. Thus, this profile was retained as it represented a distinct group of individuals who shared a similar degree of agreement on a particular combination of conceptions of assessment.
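
The entropy statistic reported above summarizes how cleanly respondents are assigned to profiles. A minimal sketch of the commonly used relative entropy index, 1 − Σ(−p ln p) / (n ln K), is given below. The posterior probabilities are hypothetical and are not the study's data, and the function name is illustrative.

```python
import math


def relative_entropy(posteriors):
    """Relative entropy of a latent profile classification (0 to 1).

    posteriors: list of rows, one per respondent, each holding K posterior
    class probabilities that sum to 1. Values near 1 indicate that most
    respondents are assigned to a single profile with high certainty.
    """
    n = len(posteriors)
    k = len(posteriors[0])
    total = 0.0
    for row in posteriors:
        for p in row:
            if p > 0:  # treat 0 * ln(0) as 0
                total += -p * math.log(p)
    return 1.0 - total / (n * math.log(k))


# Hypothetical posterior probabilities for four respondents, three profiles.
example = [
    [0.92, 0.05, 0.03],
    [0.10, 0.85, 0.05],
    [0.02, 0.08, 0.90],
    [0.40, 0.35, 0.25],  # an ambiguous case lowers the index
]

print(round(relative_entropy(example), 3))
```

The index equals 1 when every respondent has a posterior probability of 1 for one profile and 0 when all posteriors are uniform, so a single summary value can be compared across candidate models.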

Fit indices for latent profile models’ specification for teacher candidates’ conceptions of assessment.

Notes. AIC = Akaike’s Information Criterion; BIC = Bayesian Information Criterion; SSA–BIC = sample-size adjusted Bayesian Information Criteria; VLMR-LRT (p) = p values for the Vuong-Lo–Mendell–Rubin likelihood ratio test for K versus K-1 profiles; LMRA-LRT (p) = p values Lo–Mendell–Rubin adjusted likelihood ratio test for K versus K-1 profiles.
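
The three information criteria defined in the notes differ only in how they penalize model complexity. The sketch below computes them from a log-likelihood, a parameter count and a sample size. The log-likelihood and parameter count are hypothetical placeholders rather than values from Table 3, and the function name is illustrative.

```python
import math


def information_criteria(log_likelihood, n_params, n_obs):
    """Return AIC, BIC and sample-size adjusted BIC for a fitted model.

    AIC     = -2LL + 2k
    BIC     = -2LL + k * ln(n)
    SSA-BIC = -2LL + k * ln((n + 2) / 24)
    Lower values indicate a better trade-off between fit and complexity.
    """
    deviance = -2.0 * log_likelihood
    return {
        "AIC": deviance + 2 * n_params,
        "BIC": deviance + n_params * math.log(n_obs),
        "SSA-BIC": deviance + n_params * math.log((n_obs + 2) / 24),
    }


# Hypothetical inputs: log-likelihood of -2000 and 26 free parameters,
# with n = 279 taken from the sample size reported in this study.
ic = information_criteria(log_likelihood=-2000.0, n_params=26, n_obs=279)

for name, value in ic.items():
    print(f"{name}: {value:.2f}")
```

Because the sample-size adjusted criterion replaces n with (n + 2) / 24, its penalty per parameter is smaller than that of BIC for any sample of this size.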

A series of ANOVAs were performed to examine if there were significant associations between profile membership and the eight first-order factors of conceptions of assessment. Significant differences were found between the groups on:

Assessment makes schools accountable: F(2, 276) = 47.85, p < .001, η² = .26;

Assessment makes students accountable: F(2, 276) = 126.03, p < .001, η² = .48;

Assessment describes abilities: F(2, 276) = 64.14, p < .001, η² = .32;

Assessment improves teaching and learning: F(2, 276) = 70.01, p < .001, η² = .33;

Assessment is valid: F(2, 276) = 65.79, p < .001, η² = .32;

Assessment is bad: F(2, 276) = 65.24, p < .001, η² = .32; and

Assessment is ignored: F(2, 276) = 25.41, p < .001, η² = .16.
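
The effect sizes listed above can be cross-checked against the F statistics, since for a one-way ANOVA eta squared equals (F × df_between) / (F × df_between + df_within). The following sketch applies this identity to the reported values. The function and variable names are illustrative.

```python
def eta_squared(f_value, df_between, df_within):
    """Eta squared for a one-way ANOVA, recovered from F and its dfs.

    eta^2 = SS_between / SS_total = (F * df_b) / (F * df_b + df_w)
    """
    return (f_value * df_between) / (f_value * df_between + df_within)


# Reported (F, eta squared) pairs for the seven significant factors.
reported = {
    "Assessment makes schools accountable": (47.85, 0.26),
    "Assessment makes students accountable": (126.03, 0.48),
    "Assessment describes abilities": (64.14, 0.32),
    "Assessment improves teaching and learning": (70.01, 0.33),
    "Assessment is valid": (65.79, 0.32),
    "Assessment is bad": (65.24, 0.32),
    "Assessment is ignored": (25.41, 0.16),
}

for factor, (f_value, reported_eta) in reported.items():
    computed = eta_squared(f_value, df_between=2, df_within=276)
    print(f"{factor}: computed {computed:.3f}, reported {reported_eta:.2f}")
```

The recomputed values fall within .01 of the reported effect sizes, with small differences that are plausibly due to rounding or truncation in the reported statistics.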

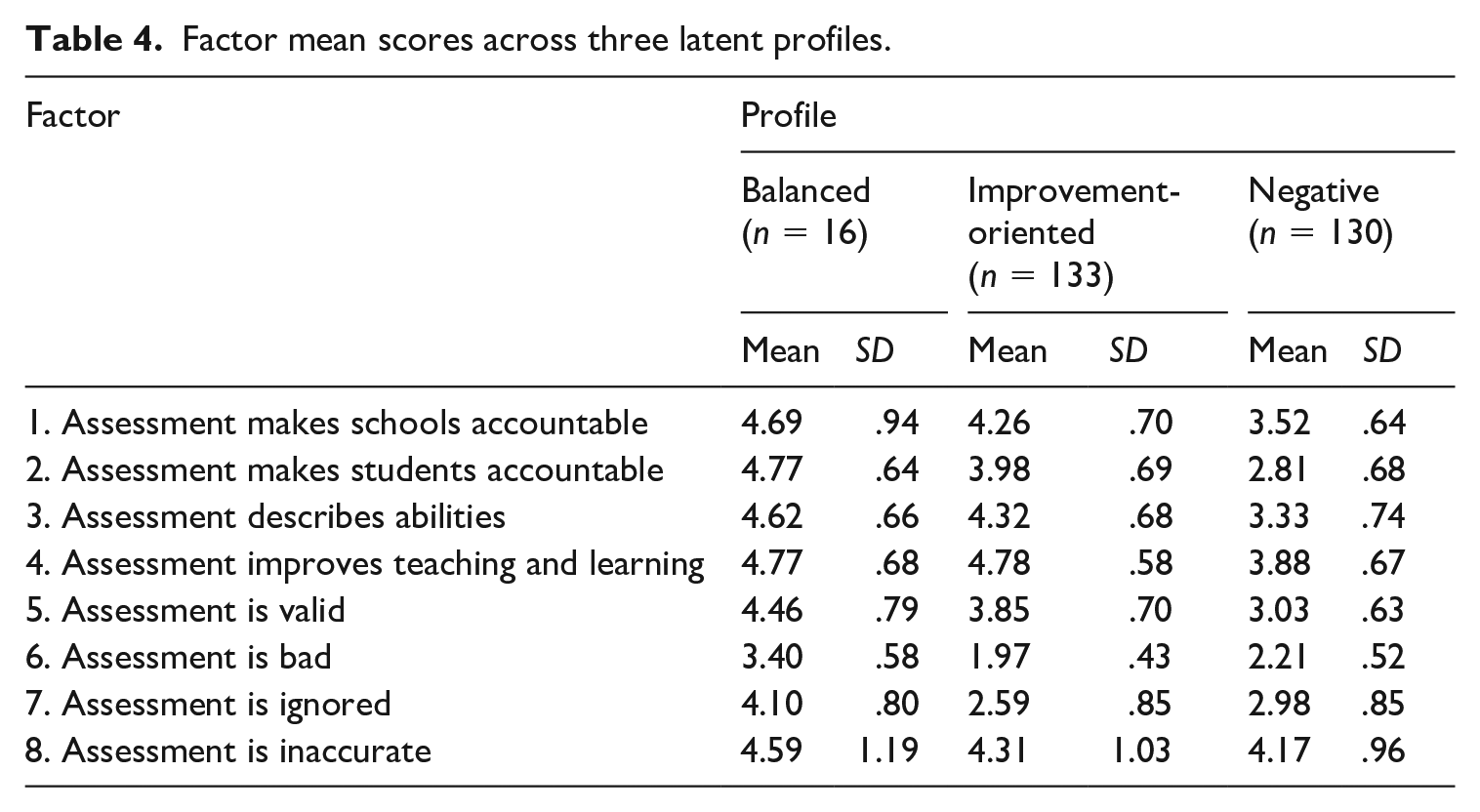

There was no statistically significant difference on Assessment is inaccurate, as pre-service teachers in all three groups moderately agreed with the inaccuracy of assessment. Table 4 displays the factor mean scores across the three latent profiles of pre-service teachers with respect to the factors measuring their conceptions of assessment.

Factor mean scores across three latent profiles.

Pre-service teachers who were assigned to the balanced profile, which was the smallest profile group, indicated higher scores for all eight conceptions of assessment factors. The Bonferroni post hoc tests revealed that these pre-service teachers had significantly stronger beliefs in all factors than those in the negative profile, except for Assessment is inaccurate. Individuals in this profile also reported significantly higher scores than those in the improvement-oriented profile on Student accountability, Assessment describes abilities, Assessment is valid, Assessment is bad and Assessment is ignored. Pre-service teachers in this profile considered formative and summative assessment comparably important. They tended to believe in the benefits of different assessment approaches and to be alert to the use of assessment and the interpretation of assessment results.

Pre-service teachers in the improvement-oriented profile reported higher scores on the factors related to improvement, especially on Assessment improves teaching and learning. The Bonferroni post hoc tests indicated that pre-service teachers in this profile had significantly lower scores for Assessment is bad and Assessment is ignored than those in either the balanced profile or the negative profile. However, pre-service teachers in this profile reported relatively high scores for Assessment is inaccurate, as did their counterparts in the other two profiles. These findings suggested that pre-service teachers in this profile were more likely to attach higher value to formative and alternative assessments and tended to agree that the fundamental purpose of assessment was to improve teaching practice and student learning. Meanwhile, they were cautious about the accuracy of assessment.

Pre-service teachers classified as negative reported relatively low scores on all eight first-order factors. Teachers in this profile on average slightly agreed with all factors except for Assessment is inaccurate, which they moderately agreed with. The Bonferroni post hoc tests indicated that pre-service teachers in this profile had significantly lower scores than those in either the balanced or improvement-oriented profiles on all the factors, except for Assessment is inaccurate. These findings indicate that teacher candidates in this profile were hesitant over the usefulness of different assessment approaches and were inclined to question the accuracy of assessment.

V Discussion

This study explored pre-service teachers’ conceptions of assessment in the context of MTCSOL, an emerging teacher education program in mainland China. The study also used a person-centered approach to examine the variability of these conceptions among the teacher candidates. The findings highlight several differences and similarities in relation to those documented in previous studies.

1 Pre-service Chinese language teachers’ conceptions of assessment

In terms of the first research question, the EFA results generated eight first-order factors: Assessment makes schools accountable, Assessment makes students accountable, Assessment describes abilities, Assessment improves teaching and learning, Assessment is valid, Assessment is bad, Assessment is ignored and Assessment is inaccurate. A hierarchical model with only one second-order factor (i.e. Improvement) was found through CFA in this study. Whereas the findings were similar to those reported in Brown and Remesal (2012) and Daniels et al. (2014), in which fewer second-order factors emerged from samples of pre-service teachers, they were different from prior work exploring in-service teachers’ conceptions of assessment (Brown, 2006; Harris & Brown, 2009). The differences between pre-service and in-service teachers might be associated with the participants’ identities. In this study, the MTCSOL pre-service teachers might consider themselves more as students than as teachers. Their conceptions of assessment were mostly influenced by their prior learning experiences, which were centered around high-stakes testing. The respondents tended to see the various purposes and functions of assessment in a discrete manner (Daniels et al., 2014). This student-based perspective may also explain why the two factors of Assessment improves teaching and Assessment improves learning in Brown’s (2004a, 2006) model were combined into a single factor of Assessment improves teaching and learning in the present study. It is necessary to facilitate a role change among pre-service teachers to help them transition from students to teachers before they embark on the teaching profession. Their conceptions of assessment may serve as a critical lens through which to examine the transition process, particularly with regard to their ability to differentiate assessment’s dual functions of improving teaching and improving learning (Barnes et al., 2017; Brown & Remesal, 2012).

In addition, improvement conceptions were found to be positively correlated with conceptions of school and student accountability. This is consistent with the findings of previous studies conducted in high-stakes assessment contexts (Brown et al., 2009; Daniels et al., 2014; Levy-Vered & Alhija, 2018; Li & Hui, 2007). The positive correlations between improvement and accountability conceptions once again substantiated the entrenched examination-dominant culture in the Chinese context. Success in high-stakes public examinations is not only a motivator for students to learn, but also an important criterion that is applied to evaluate the ability of teachers and to represent the quality and reputation of schools (Brown & Gao, 2015). The pre-service teachers, who as students had been confronted with academic competition and had striven to survive it, seemed to agree with the notion that testing is a powerful way to improve student learning and to help students become more responsible for their learning (Brown et al., 2009, 2011b).

Despite the significant influence of high-stakes assessment in the Chinese context, it is worth noting that the factor of Assessment is inaccurate was positively correlated with School accountability. Previous studies have found that teachers were concerned about measurement errors in high-stakes examinations and doubtful about the results, which play a decisive role in students’ chances of accessing higher education (He, Opposs, & Boyle, 2010). The pre-service teachers in the MTCSOL program had recently passed the National Graduate Entrance Examination, a high-stakes examination in mainland China that decides on admission to Master’s programs offered by accredited universities. Their accumulated prior experiences with high-stakes examinations from schooling through to higher education in terms of classifying students and assigning limited educational resources might have led to their sensitivities to measurement errors and formed a conception that assessment is inaccurate when it is associated with accountability.

The negative correlation between Improvement and Assessment is bad is also noteworthy. These pre-service teachers tended to agree that assessment improved the quality of teaching and student learning and that assessment was not bad for teachers or students. This may be a positive sign of the implementation of formative and authentic approaches to instruction and assessment in the curriculum reform of the past decades (Ministry of Education, 2010). However, as Improvement was significantly correlated with school and student accountability in this study, it should be noted that using assessment to prepare students to achieve high scores in high-stakes examinations was still an important facet of improvement in the Chinese context, given the serious consequences for school reputation and students’ opportunities for higher education that these pre-service teachers had experienced.

The factor structure, item assignments and correlations between factors that emerged in this study echo prior research in which pre-service teachers’ responses to the TCoA inventory varied across sociocultural contexts with different assessment policies (Brown & Remesal, 2012; Daniels et al., 2014; Levy-Vered & Alhija, 2018). The robust eight-factor first-order solution that emerged from EFA resembles Brown’s (2006) multidimensional model of the TCoA, a resemblance rarely reported in previous Chinese literature (Li & Hui, 2007). The similarity between these two models may stem from the specific teacher group in the present study, a group of pre-service teachers prepared to teach Chinese language in an international context, which differs from the teachers in previous studies, who taught local students in the entrenched examination-dominant Chinese culture. Brown and his colleagues (2019) argued that the original model of TCoA, developed in New Zealand and validated with Queensland primary teachers in low-stakes assessment contexts, varied across jurisdictions with different contextual frameworks and even with different teacher groups within societies. Future studies are recommended to compare the conceptions of assessment of pre-service teachers who would teach international or local students in the Chinese context to further explore the various models of the TCoA inventory. The present study further revealed the nexus between accountability, improvement and negative conceptions of assessment (i.e. Assessment is inaccurate and Assessment is bad), which supports the argument of Barnes et al. (2017) that teachers might hold multiple and sometimes even competing conceptions of assessment simultaneously. It is necessary to develop the ability of pre-service teachers to reconcile the multiple purposes and functions of assessment with socially entrenched practices and personal experiences.

2 Latent profile analysis

Latent profile analysis was conducted to address the second research question. Although the descriptive findings showed that MTCSOL pre-service teachers rated factors of accountability and improvement relatively high and factors of irrelevance relatively low, which is in line with previous studies (Brown, 2011a; Daniels et al., 2014; Li & Hui, 2007), the LPA results indicated variability in the conceptions of assessment held by pre-service teachers within the same culture. The most prevalent profile was improvement-oriented, with almost half of the participants fitting into this category. Pre-service teachers in this group viewed improvement in teaching and learning as the primary purpose of assessment instead of school and student accountabilities. These pre-service teachers also rated relatively low on the factors related to irrelevance of assessment (i.e. Assessment is bad; Assessment is ignored), indicating they agreed that assessment was not bad for teachers or students. This may be a good sign for the curriculum reform that was implemented recently to promote formative assessment and holistic development in mainland China.

A small number of pre-service teachers were described as balanced. The relatively high scores that pre-service teachers in this group gave to the factors of accountability and improvement suggest an integrative and balanced view of the various purposes of assessment and the potential consequences (Chen & Cowie, 2016; Stiggins, 2008). They were aware of the purposes and functions of both summative and formative assessment and were more likely to treat them as compatible. Previous studies have indicated that pre-service teachers who had learned more about assessment in teacher education programs were likely to hold more diverse and positive conceptions of assessment (Levy-Vered & Alhija, 2018; Smith et al., 2014). It is possible that these pre-service teachers had gained more knowledge about assessment through relevant courses or training, as they tended to have a better understanding of the multiple purposes of assessment, which is a learning outcome that teacher educators endeavor to help their students to achieve by the end of a teacher education program. On the other hand, teacher educators should be cautious about the balanced view of accountability and improvement held by the pre-service teachers on the purposes of assessment. Having been educated in an examination-orientated culture, pre-service teachers are likely to rate the factors of accountability and improvement highly because, in their prior experience as students, accountability assessments operated to improve teaching and learning (Brown et al., 2011b). The balanced conceptions of assessment held by pre-service teachers in this profile may be different from those found in low-stakes contexts that put less emphasis on standardized tests. Future qualitative studies are recommended to examine how pre-service teachers in this profile interpret the factors of accountability and improvement. Unpacking their beliefs about assessment may be a good starting point for introducing alternative views of the purposes of assessment advocated in other jurisdictions.

It should be noted that more than 40% of the participants in the sample were categorized into the negative profile. This finding suggests that many MTCSOL pre-service teachers still believe that assessment is irrelevant to or disconnected from teaching and learning. This may be caused by their prior experience as students in a high-stakes, public-examination-controlled system (Brown et al., 2011b). The result poses a serious challenge to the implementation of assessment-related training in the program. Chinese L2 learners, especially those who are acquainted with education systems that value formative and alternative assessment, might bring to classrooms views about assessment that vary from or compete with those of their Chinese language teachers, which is the role for which these candidate teachers are being prepared. Considering the influence of conceptions of assessment on assessment practices, it is reasonable to argue that without sufficient training in assessment, the pre-service teachers categorized into the negative profile may retain negative views of assessment in their classrooms, and that this may prevent them from utilizing the learning potential of assessment. To address this issue, teacher educators need to enhance the quality of assessment courses in teacher education programs by introducing pre-service teachers to various assessment methods and their purposes. The aim is to help teacher candidates develop a comprehensive understanding of assessment and cater to the needs of their international students by gaining the ability to integrate into classrooms assessment methods that are valued in different sociocultural contexts (Barnes et al., 2017; Levy-Vered & Alhija, 2018).

Interestingly, MTCSOL pre-service teachers across the three profiles gave relatively high ratings to items under the factor of Assessment is inaccurate. As both these items (i.e. ‘Assessment results should be treated cautiously given measurement errors’ and ‘Teachers should take into account the error and imprecision in all assessment’) were related to measurement errors in assessment, the results imply that pre-service teachers were alert to errors, which might be attributable to their prior experiences of schooling (Daniels et al., 2014). It has been noted that in high-stakes assessment contexts, students are under tremendous pressure to achieve academic success and tend to interpret assessment as inaccurate when their examination scores do not match their expectations so as to protect their self-esteem (Covington, 2000). Incorporating the foundational principles of measurement and testing and the approaches to avoiding measurement errors into the MTCSOL programs is essential. With a solid knowledge base, pre-service teachers will be more aware of the errors that could exist in assessment and able to adopt appropriate measures to deal with them.

The LPA results provide evidence for the complexities and variability of conceptions of assessment that individual pre-service teachers may hold. The different patterns of conceptions suggest the necessity of contextualized training programs or courses on assessment for MTCSOL pre-service teachers, who demonstrated differing conceptions of assessment in this study. In addition, efforts should be made to elucidate key differences between assessment of and for learning and to help pre-service teachers reconcile the competing conceptions of assessment and the tensions between formative and summative assessment that are particularly evident in examination-dominant contexts. An integrative and balanced understanding of assessment is an essential competency that MTCSOL pre-service teachers need to develop for teaching international students with various sociocultural backgrounds (Coombs et al., 2020; Lam, 2015).

VI Conclusions

This study explored the conceptions of assessment held by pre-service teachers studying in MTCSOL programs in mainland China, and further examined the variability of these conceptions using a person-centered approach. The findings indicated that there were eight distinct first-order factors, supporting the context-dependent nature of conceptions of assessment. Three groups of pre-service teachers were discernible, supporting the notion that not all pre-service teachers hold similar conceptions of assessment, even within the same country and population.

Despite the significance of its results, this study has two limitations. First, it used a non-random sampling technique, and the results cannot be generalized to the larger population of pre-service language teachers in China. Second, the questionnaire was the sole instrument used to measure pre-service teachers’ conceptions of assessment. Although self-reported questionnaires are easy to administer and analyse in large samples, it is possible that participants over- or under-reported on questionnaire items based on their own understandings (Brown, 2004b). Future studies are recommended to adopt a mixed-methods approach, in which a combination of qualitative and quantitative components would allow for cross-validation of the findings on pre-service teachers’ conceptions of assessment.

Regardless of these limitations, some implications for teacher education can be drawn from the findings. First, courses or training on assessment need to be compulsory in teacher education programs. The mandatory delivery of assessment education in the MTCSOL program would provide pre-service teachers with opportunities to develop an awareness of their conceptions of assessment and of how these conceptions influence their assessment practices. A revision of the existing curriculum of the program in this direction is urgently needed. Second, assessment education needs to be tailored to pre-service teachers’ multiple beliefs about assessment. Teacher educators are recommended to work with pre-service teachers from different latent profiles to help them narrow the gap between their conceptions and practice of assessment and the conceptions and assessment approaches advocated by local and international educational policies (Coombs et al., 2020). Third, a balanced focus on summative examination-oriented assessment and formative improvement-oriented assessment is required in courses or training on assessment. Given the diverse backgrounds of their future students, pre-service teachers must be equipped with knowledge of both summative and formative assessment and be informed of the benefits of combining the functions of both (Lam, 2015).

This study provides preliminary results in relation to MTCSOL pre-service teachers’ conceptions of assessment. Future research could investigate this group of teachers’ conceptions of assessment alongside their assessment practice in assessment education to derive a better understanding of the relationship between beliefs and practices in assessment. Another possible avenue for future research is to compare pre-service and in-service L2 Chinese language teachers’ conceptions of assessment to explore the factors contributing to conceptual changes. To explore the possible variations of models using the TCoA inventory across different teacher groups within societies, future studies may also compare the conceptions of assessment of pre-service teachers who would teach local or international students in the Chinese context.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The first author received research funding from International Culture Exchange School Research Support Scheme of Shanghai University of Finance and Economics (Project No. 2018003). The authors have no conflicts of interest to disclose in regard to this manuscript.