Abstract

Background

Early-onset depression contributes significantly to long-term disability and suicide, making high-quality healthcare for young people with depression a critical concern. Medical record review (MRR) is widely used to assess healthcare quality. However, its application to depression care for children and adolescents appears underexplored, with no consensus on conceptualising or measuring quality. This systematic review aimed to evaluate how quality was operationalised in primary studies using MRR in this context.

Methods

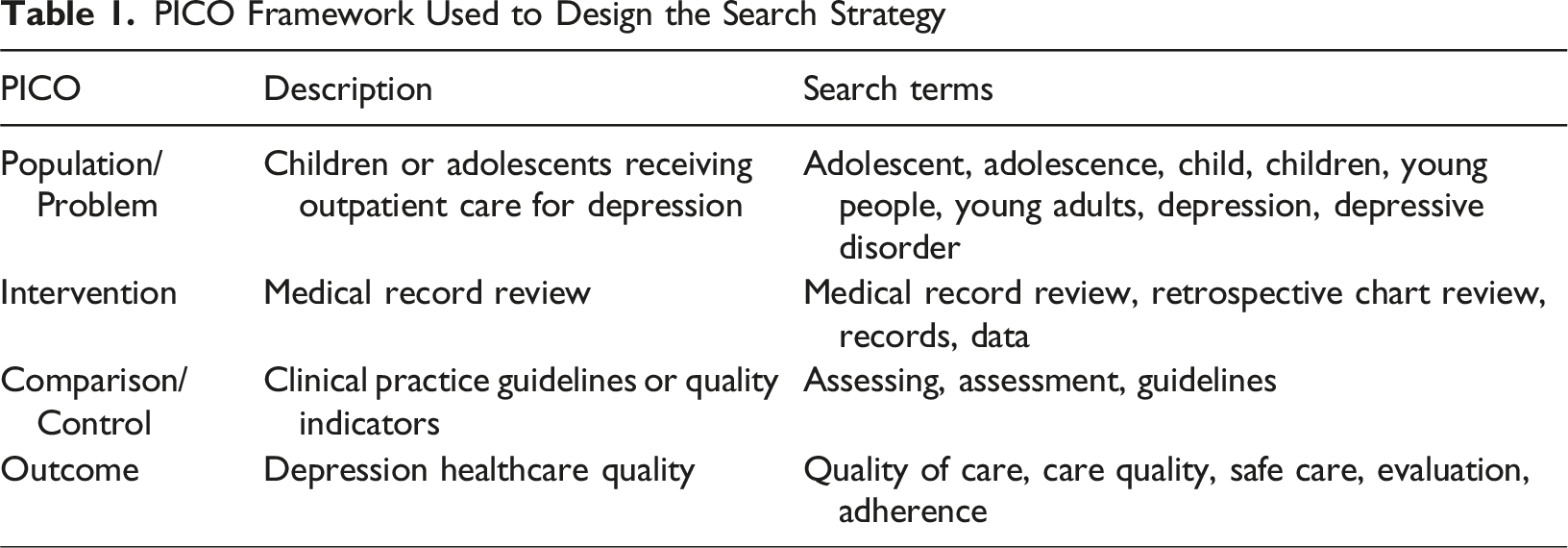

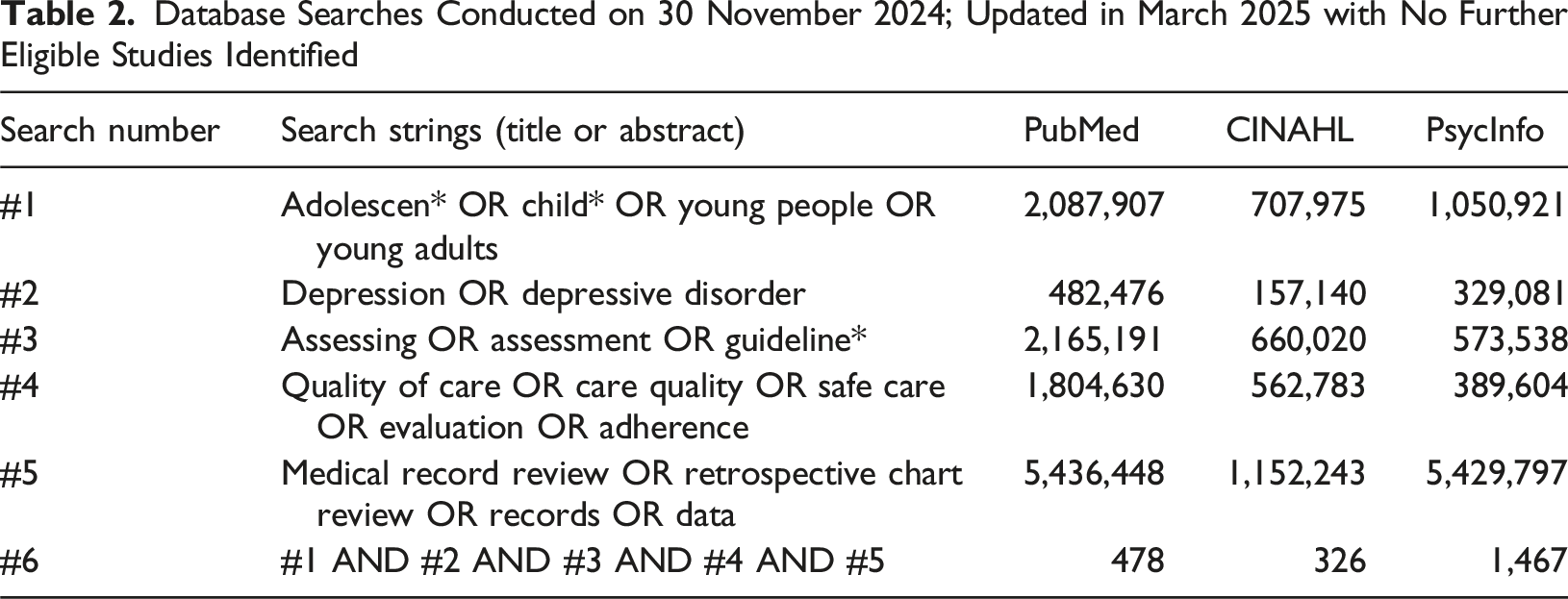

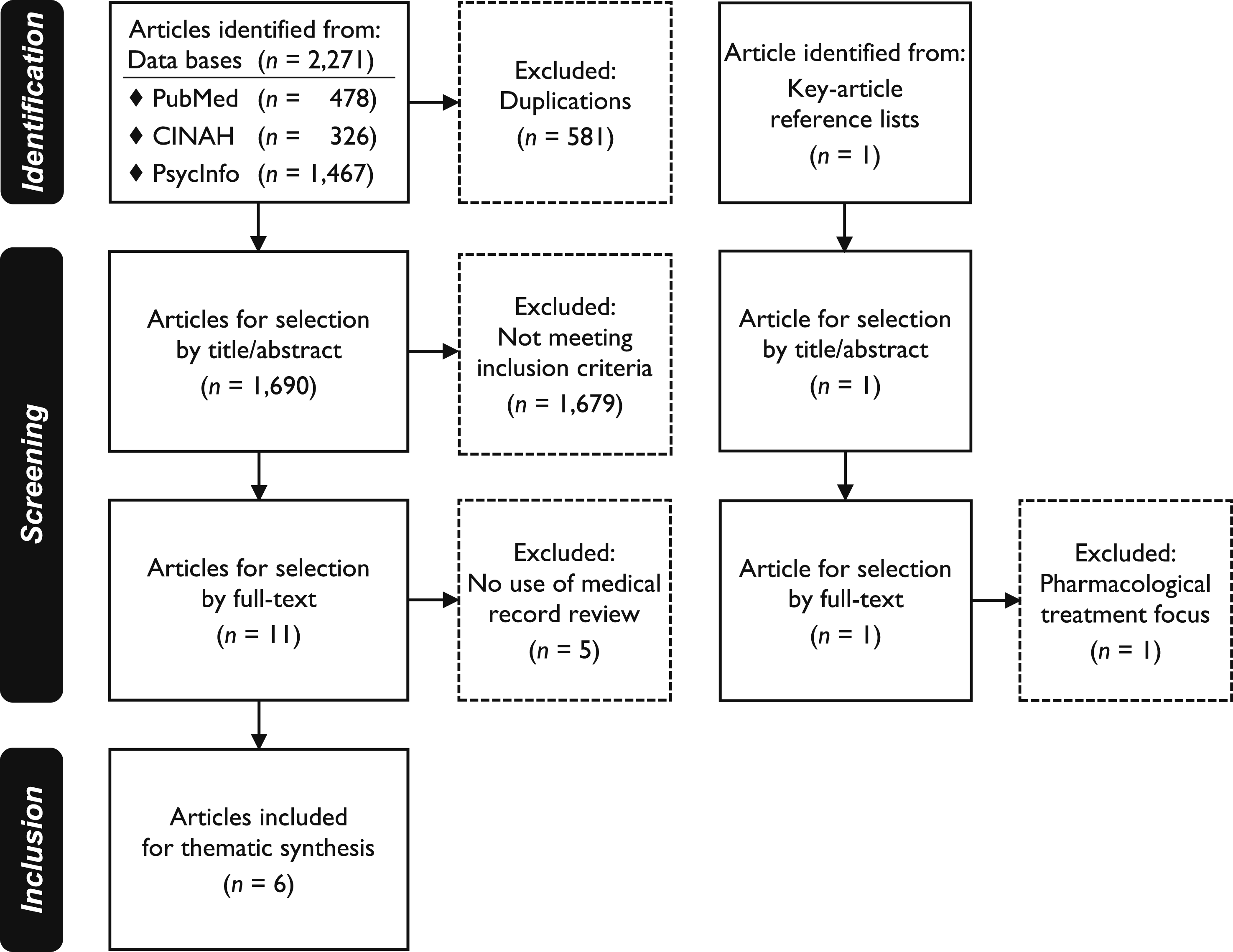

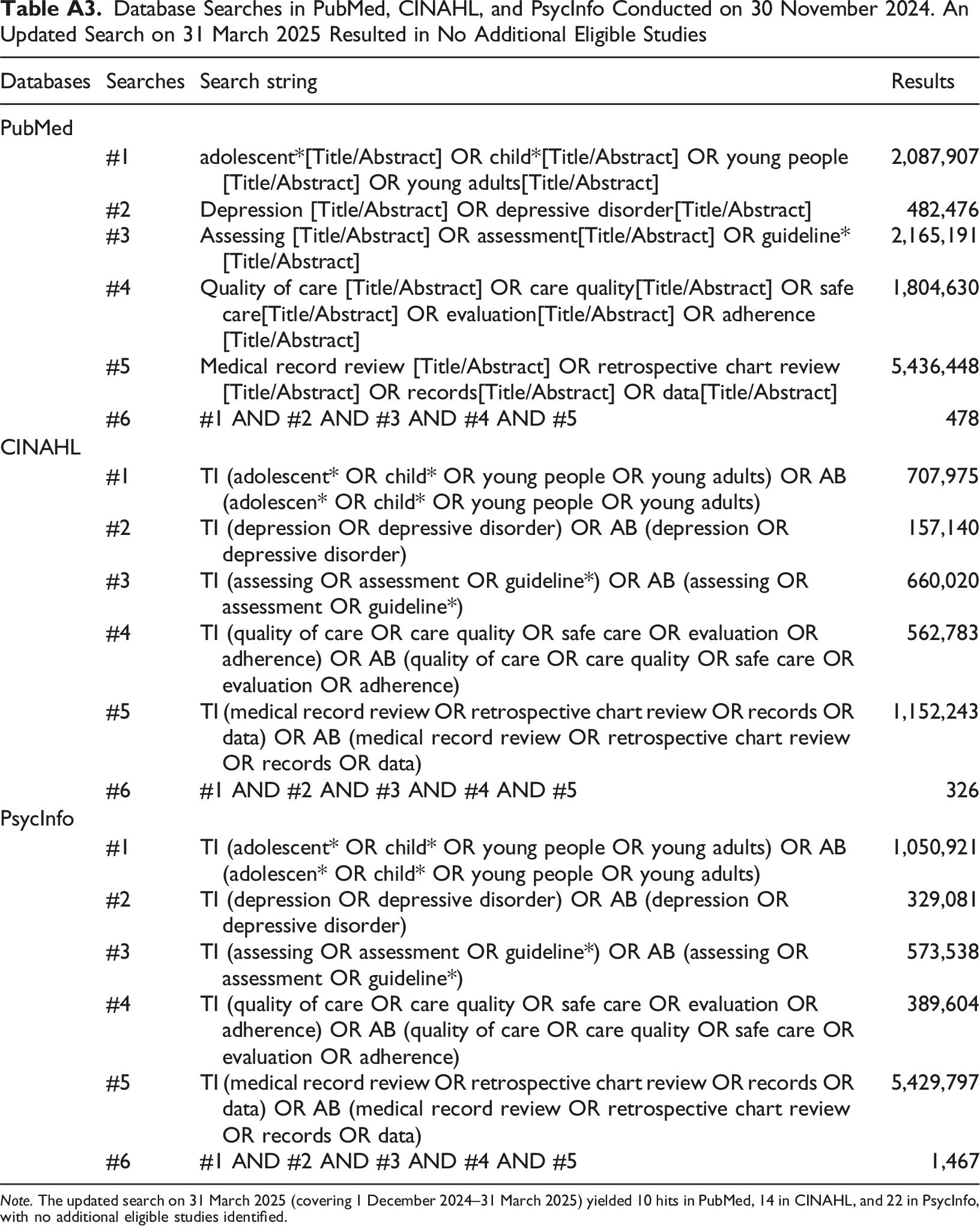

A structured search in PubMed, CINAHL, and PsycInfo following PRISMA guidelines identified 1,690 unique articles. Studies using MRR to evaluate outpatient depression healthcare quality for patients ≤17 years were included. Thematic synthesis was applied, focusing on methods, indicator themes, and quality framework alignment.

Results

Six studies published in 2005–2022 were included. These used 3–32 indicators covering risk assessments, diagnostic assessment, treatment, and monitoring, but indicators and operationalisation methods varied widely. Two studies reported using consensus methods. None incorporated the Institute of Medicine or World Health Organization quality frameworks. Binary opportunity indicator assessments were standard, but methods for deriving composite measures differed.

Conclusions

Despite shared themes, heterogeneity and lack of framework alignment limit comparability. Robust operationalisation methods ensuring indicator reliability and validity would strengthen future measurement of depression healthcare quality.

Plain Language Summary

Why This Study Was Done

Depression in young people is a major cause of long-term health problems and suicide, making access to high-quality care especially important. Researchers often use medical records to understand how well care is delivered, but this approach appears to be rarely used to assess the quality of depression care for children and adolescents. There is also no clear agreement on how to define or measure quality in this context.

What Was Done

Researchers searched three major databases (PubMed, CINAHL, and PsycInfo) for studies that used medical records to assess the quality of outpatient depression care for young people aged 17 and under. After screening 1,690 articles, six studies published between 2005 and 2022 met the inclusion criteria. The researchers examined how each study defined and measured quality, which areas of care they evaluated, and whether established quality frameworks were used to guide the assessments.

What Was Found

The six included studies used 3–32 indicators each to assess areas such as risk evaluation, diagnosis, treatment, and monitoring. However, the indicators themselves and how they were defined varied widely. Only two studies described using consensus-based methods to develop their indicators, and none used quality frameworks from the Institute of Medicine or the World Health Organization. All studies used simple yes/no checklists to determine whether specific actions had been documented, but the ways they grouped or summarised indicators differed.

What This Means

Although the studies addressed similar areas of care, the lack of standardisation in how quality was defined and measured makes the findings difficult to compare. To support better care for young people with depression, future research would benefit from clearer and more reliable ways to define and measure healthcare quality.

Introduction

Depression is a major cause of disability-adjusted life years among adolescents, significantly contributing to the global burden of disease (Ferrari, 2022; WHO, 2021). Since the turn of the millennium, the prevalence of depressive disorders and symptoms among young people has nearly doubled (Shorey et al., 2021). Early-onset depression increases the risk of future somatic, psychosocial, and psychiatric complications (Thapar et al., 2022). It also heightens the risk of suicide, a leading cause of death in adolescents (Moitra et al., 2021). Despite this, many children and adolescents with depression do not receive adequate care. Mental healthcare for young people suffers from insufficient and unevenly distributed resources and often fails to adhere to best practices (Kruk et al., 2018; Moitra et al., 2022; Mora Ringle et al., 2019; Wickersham et al., 2024). These inadequacies impose substantial societal costs (Bodden et al., 2018), underscoring the need for high-quality outpatient depression care for young people. However, knowledge of care processes within child and adolescent mental health services (CAMHS) remains limited, and quality assessment lacks consensus on conceptualisation, measurement, and use of established global healthcare quality frameworks (Kilbourne et al., 2018; Leslie et al., 2018; Quinlan-Davidson et al., 2021; Skokauskas et al., 2019; Williams & Beidas, 2019).

Quality Conceptualisation and Operationalisation

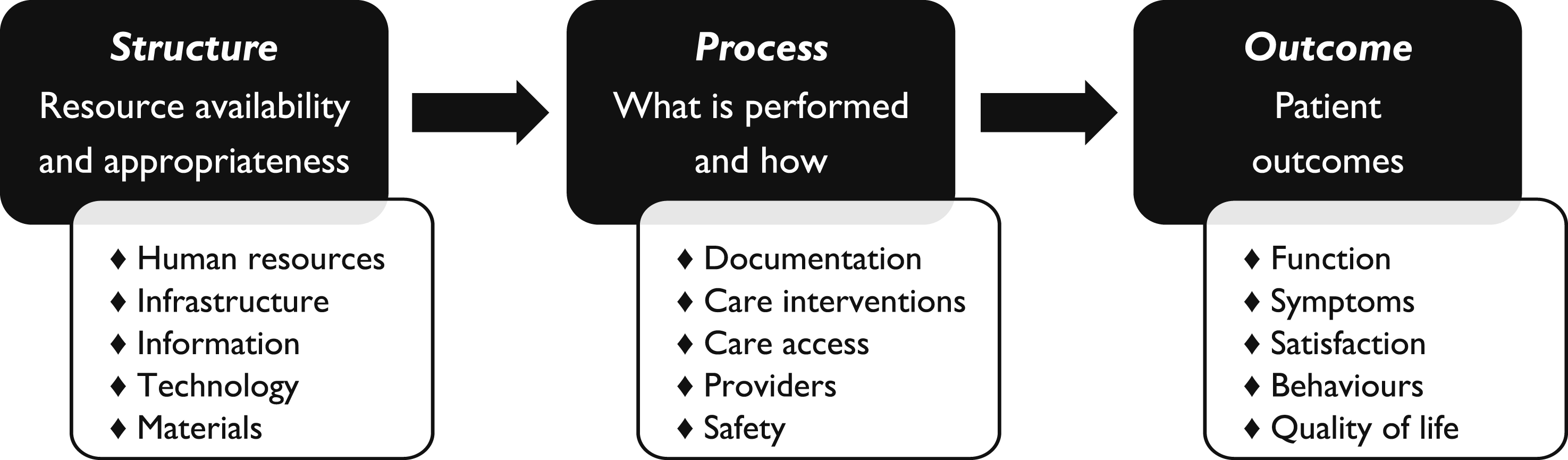

Healthcare quality is an abstract concept with definitions varying by context and perspective (Wensing et al., 2020). It is therefore essential to define, conceptualise, and operationalise quality clearly within its specific context (Vassar & Holzmann, 2013; Wensing et al., 2020). The Donabedian model (Donabedian, 2005) has long shaped healthcare quality research by categorising quality into three elements: Structure – resources, environments, or organisational conditions; Process – healthcare activities and system performance; and Outcome – results and effects following interventions, as illustrated in Figure 1. The model posits that structures influence processes, which in turn affect outcomes. While patient outcomes are the ultimate quality measure, they often take time to manifest and are influenced by factors beyond healthcare processes (Lewandowski et al., 2013). Consequently, process measures play a critical role in quality assessment.

The Donabedian model for quality improvement, with suggested indicators (Donabedian, 2005)

Process measures can be based on empirical standards (how processes are) or normative standards (how processes should be). When valid and reliable, these measures can reveal associations between changes in structure or processes and specific patient outcomes (Kilbourne et al., 2018; Wensing et al., 2020). The Institute of Medicine (IOM, 2001) outlined six quality aims – healthcare should be: Safe, Effective, Efficient, Patient-centered, Equitable, and Timely. These aims are the outcome measures within the Implementation Outcomes Framework (Proctor et al., 2011), aligning with the World Health Organization (WHO, 2006) quality dimensions. However, their implementation has been hindered by limited evidence on quality measures in CAMHS that align with these frameworks, highlighting a global evidence gap (Quinlan-Davidson et al., 2021).

Quality Measurement

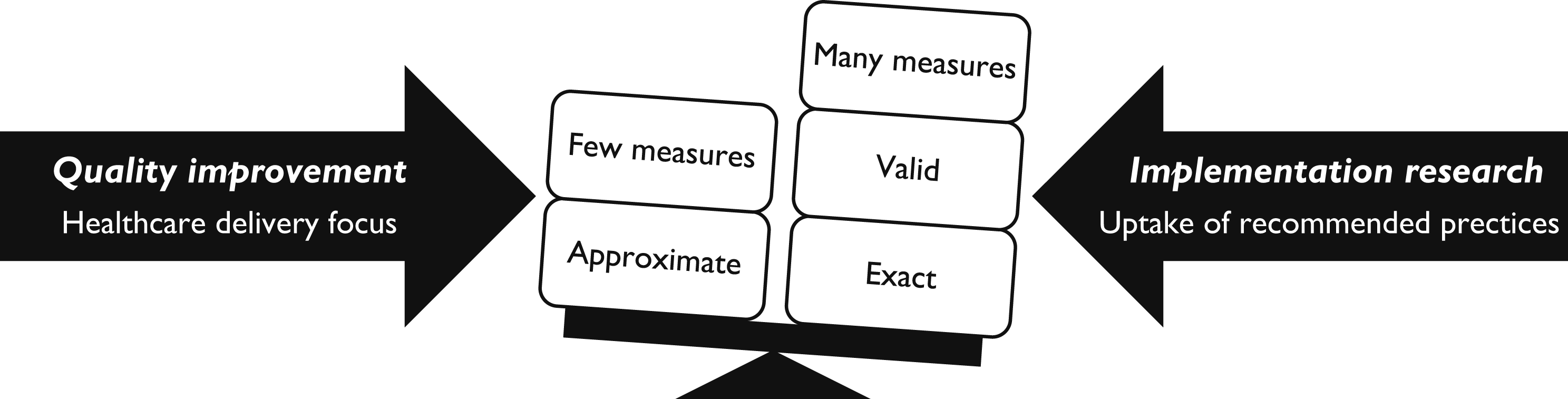

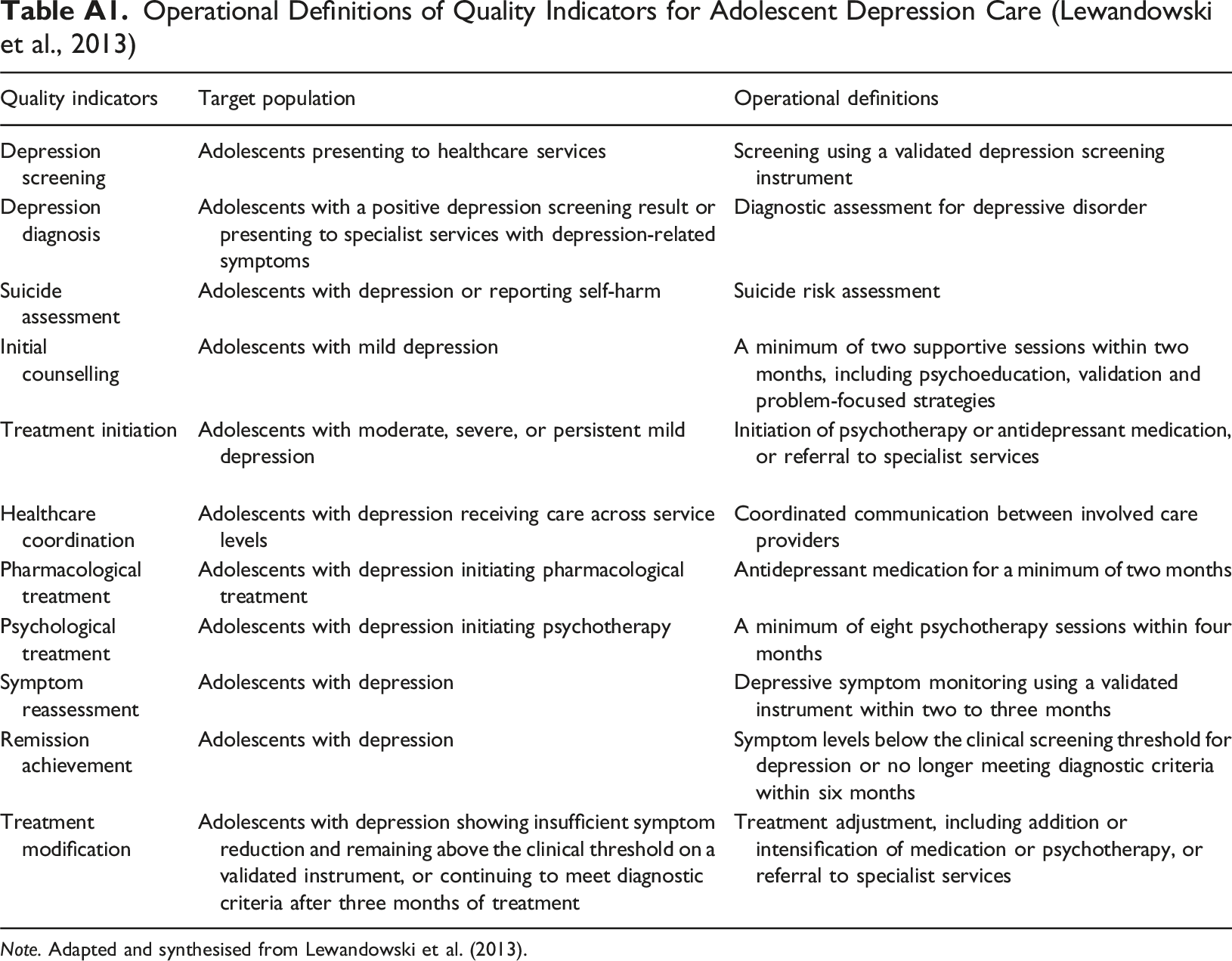

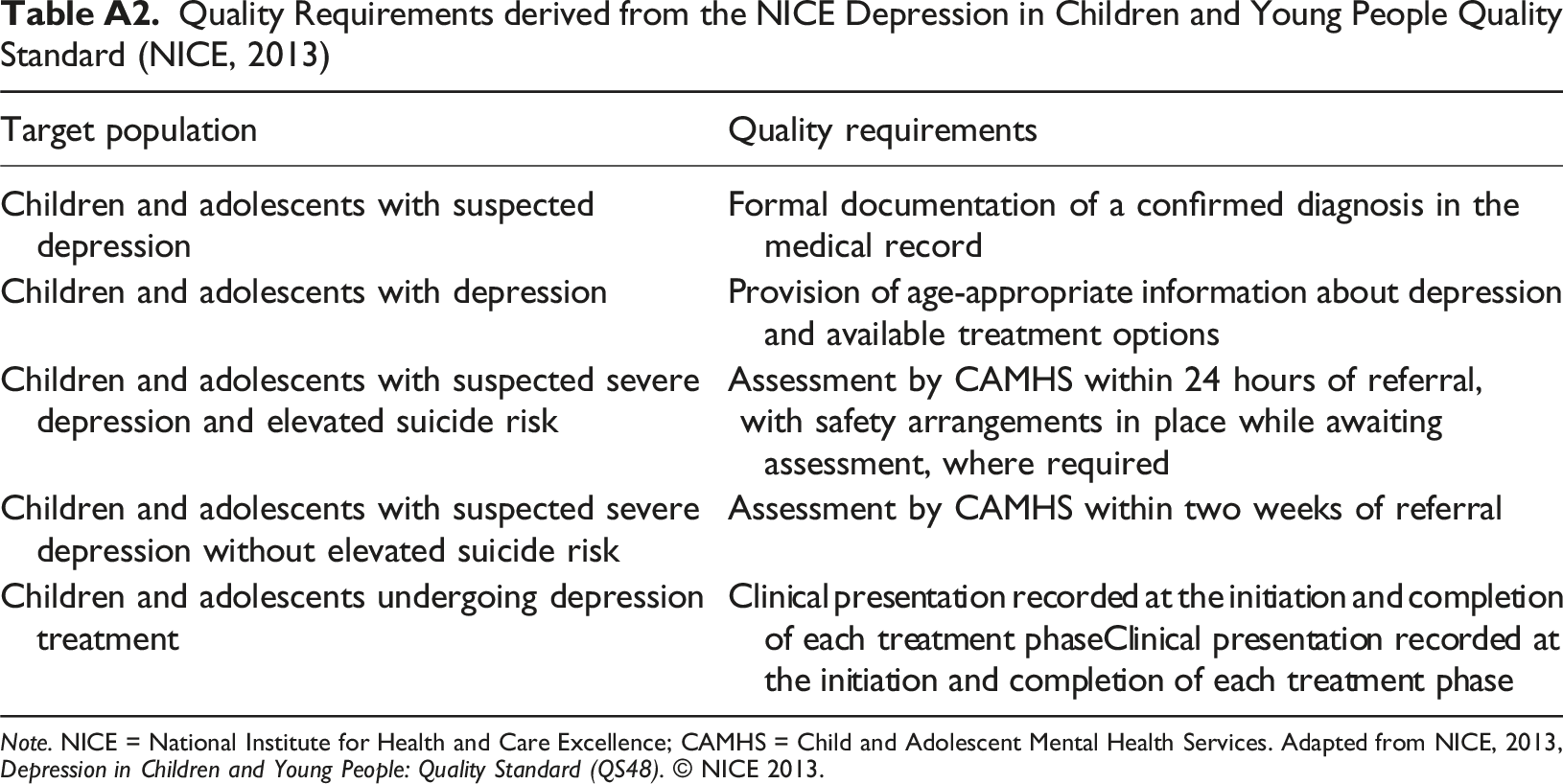

Medical record review (MRR) is a well-established method for evaluating healthcare quality, allowing for retrospective analysis of care processes (Gearing et al., 2006; Vassar & Holzmann, 2013; Worster & Haines, 2004). Direct observation is an alternative but risks influencing care delivery (Donabedian, 2005). While administrative data enables long-term process measurement for large populations, it provides limited insights into detailed care processes (Kilbourne et al., 2018). Operationalising healthcare quality measures, particularly for depression care, is challenging due to variability in conceptualisation and measurement. The aim of quality measurement is expected to guide indicator development (Wensing et al., 2020), as illustrated in Figure 2. Yet, efforts to establish universal psychiatric quality indicators have yielded few measures suitable for outpatient CAMHS (Fisher et al., 2013; Iyer et al., 2016). Quality indicators for adolescent depression care, presented in Appendix Table A1, have been applied to electronic health records (Lewandowski et al., 2013). However, further research is needed to validate these measures, which do not align with other standards, as outlined in Appendix Table A2 (NICE, 2013).

Quality indicator characteristics based on aim: quality improvement or implementation research (Wensing et al., 2020)

The validity and reliability of MRR depend on the use of comparable measures and consistent analytical methods, making a two-step operationalisation essential (Vassar & Holzmann, 2013). The first step involves defining indicators that are relevant, evidence-based, applicable, and feasible (McGlynn, 2010; Vassar & Holzmann, 2013; Wensing et al., 2020). Consensus methods (Bourrée et al., 2008), such as the RAND Appropriateness Method (RAM), Delphi method, consensus development conferences, or nominal groups, can strengthen indicator validity (Wensing et al., 2020), with RAM demonstrating evidence of validity (McGlynn, 2010). The second step aims to enhance validity and reliability by reviewing the literature to identify how comparable studies have operationalised similar quality concepts and indicators (Vassar & Holzmann, 2013). Despite its utility, MRR for outpatient CAMHS depression care appears underexplored, with existing tools and studies primarily addressing inpatient safety or pharmacological aspects (Hetrick et al., 2012; Nilsson et al., 2020; Socialstyrelsen, 2021). Specific, agreed-upon, and contextualised indicators aligned with quality frameworks have been called for (Kilbourne et al., 2018; Leslie et al., 2018; Quinlan-Davidson et al., 2021; Skokauskas et al., 2019; Williams & Beidas, 2019). Without such standardisation, the use of varied instruments can produce inconsistent assessments with low comparability and further increase the diversity of measures.

Objectives

The primary objective was to systematically review how quality has been operationalised in primary studies using MRR to assess the quality of outpatient depression healthcare for children and adolescents – focusing on indicator development methods, thematic areas, analytical approaches, and alignment with quality frameworks – while also evaluating consistency and proposing strategies to strengthen the validity and reliability of future quality improvement research.

Method

This review was based on primary studies published in peer-reviewed journals. The systematic literature search, review, and thematic synthesis followed the PRISMA (Preferred Reporting Items for Systematic reviews and Meta-Analyses) 2020 statement (Page et al., 2021; Rethlefsen et al., 2021) and the SWiM (Synthesis Without Meta-Analysis) reporting guideline (Campbell et al., 2020).

Search Strategy and Eligibility Criteria

PICO Framework Used to Design the Search Strategy

Articles were eligible if they focused on children or adolescents aged up to 17 years diagnosed with depression, evaluated the quality of outpatient depression healthcare using MRR, and included quantitative quality indicators. Primary care studies were included if the healthcare processes were comparable to those in outpatient CAMHS. Studies focusing exclusively on primary care screening, pharmacological treatment (not typically recommended as first-line treatment for this age group), or subgroups with depression associated with specific somatic conditions or traumatic events were excluded.

Selection, Data Abstraction, and Analysis

Searches were conducted on 30 November 2024. The first author performed a stepwise manual selection process, starting with titles and abstracts, followed by full-text assessment for eligibility. Reference lists of key articles (Ellis et al., 2019; Hermens et al., 2015; Pile et al., 2020) were also hand-searched by two authors (SR and HJ) to identify any additional eligible studies. An updated search was conducted by the first author on 31 March 2025, covering the period from 1 December 2024 to 31 March 2025. As no further eligible studies were identified, the PRISMA flow diagram and all associated tables reflect the original search results. Because this review focused exclusively on methodological approaches to quality operationalisation, no formal evidence grading was conducted. Data extraction was performed by the first author, with the included studies and extracted data independently reviewed and verified by another author (BH). Consensus was confirmed through author discussions.

Extracted data included basic study characteristics (authorship, publication year, study country, setting, patient age, and sample size), quality indicator development methods (quality standards and consensus methods), quality indicators used in MRR (individual indicators and composite measures/domains with occurrences), and analytical approaches (how indicators were assessed and reported, relevant complementary measures or analyses, and use of IOM or WHO frameworks). To ensure clarity, “indicators” in this review refer to individual quality measures, and “domains” to composite measures, regardless of primary study terminology. Supplementary materials of the included articles were also reviewed where available. A thematic synthesis, conducted by the first author, and verified by another author (BH), identified common indicator themes and methodological variations.

Results

Database Searches Conducted on 30 November 2024; Updated in March 2025 with No Further Eligible Studies Identified

Exclusions

The majority of articles met none or only one of the inclusion criteria: children or adolescents, depressive disorder, or MRR methodology. Several of these studies focused on adult patients, pharmacological treatment alone, mental health conditions other than depression, and/or depression associated with specific somatic conditions – neurological, oncological, endocrine, and cardiac diseases – or traumatic events, where interventions primarily targeted the somatic condition or trauma. The selection process is outlined in Figure 3. Among the 12 full-text articles assessed, which met more than one inclusion criterion, five were excluded for not using MRR, while one focused on pharmacological treatment (Hetrick et al., 2012).

Identification and selection of peer-reviewed articles for systematic review. Initial searches conducted on 30 November 2024

Search Outcomes

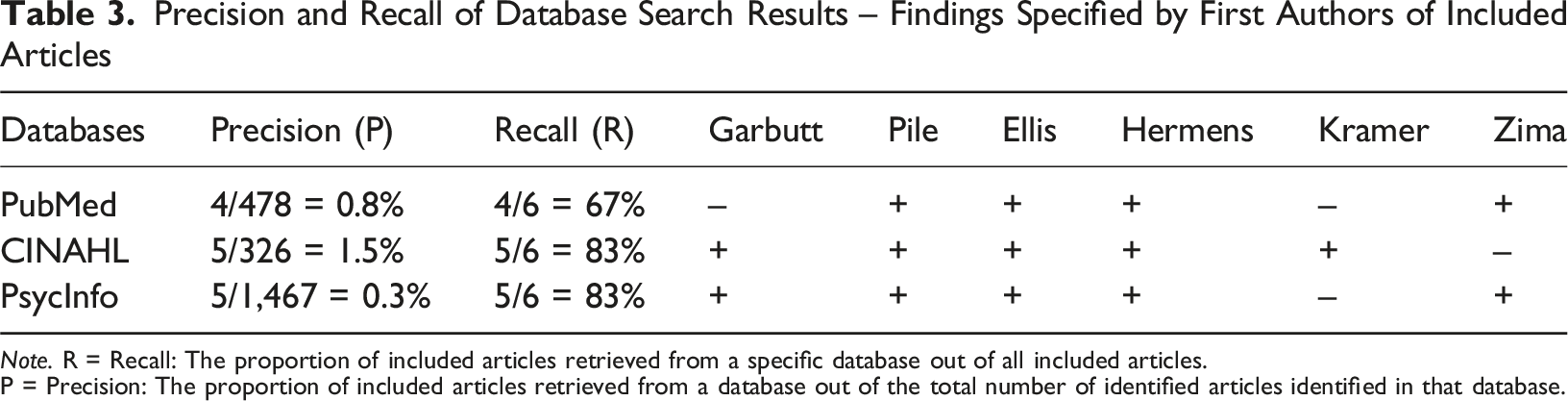

Precision and Recall of Database Search Results – Findings Specified by First Authors of Included Articles

Note. R = Recall: The proportion of included articles retrieved from a specific database out of all included articles.

P = Precision: The proportion of included articles retrieved from a database out of the total number of articles identified in that database.
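The two definitions in the note can be expressed as a minimal Python sketch. The function names and the counts in the example are hypothetical illustrations only and are not figures from this review.

```python
def recall(included_from_db: int, total_included: int) -> float:
    """R: included articles retrieved from one database / all included articles."""
    if total_included <= 0:
        raise ValueError("total_included must be positive")
    return included_from_db / total_included


def precision(included_from_db: int, identified_in_db: int) -> float:
    """P: included articles retrieved from one database / all articles identified there."""
    if identified_in_db <= 0:
        raise ValueError("identified_in_db must be positive")
    return included_from_db / identified_in_db


# Hypothetical example: a database returns 500 records, of which 5 are
# among 6 articles finally included in a review.
example_recall = recall(included_from_db=5, total_included=6)
example_precision = precision(included_from_db=5, identified_in_db=500)
print(f"R = {example_recall:.2f}, P = {example_precision:.3f}")
```

A database can therefore show high recall and low precision at the same time, which is typical of sensitive search strategies.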

Study Characteristics

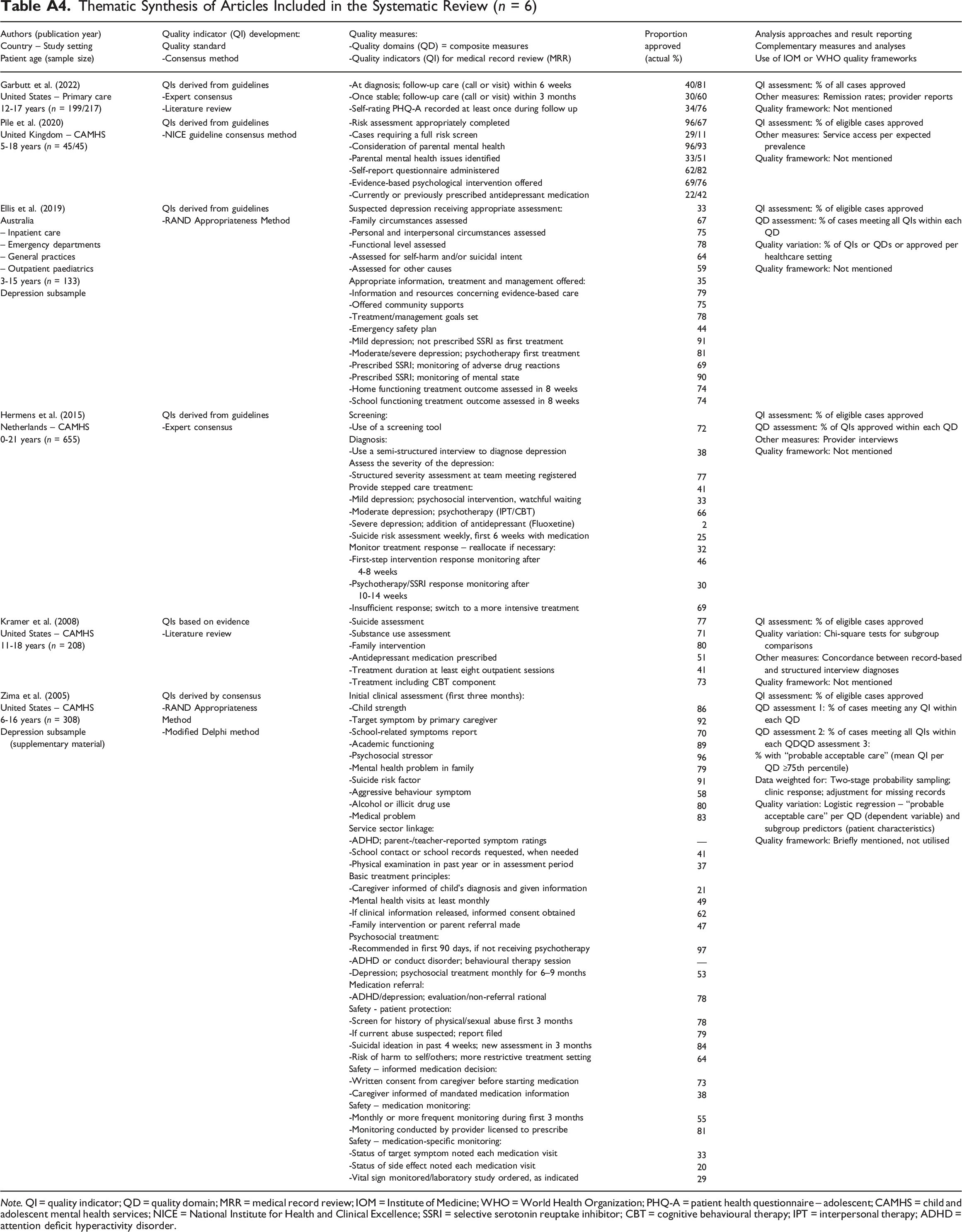

The six included studies were conducted in the United Kingdom (Pile et al., 2020), Australia (Ellis et al., 2019), the Netherlands (Hermens et al., 2015), and the United States (Garbutt et al., 2022; Kramer et al., 2008; Zima et al., 2005). Patient ages ranged from 0 to 21 years, with sample sizes varying between 45 and 655 medical records. The articles were published between 2005 and 2022. Detailed characteristics for each study are provided in Appendix Table A4.

Indicator Development and Operationalisation

Four studies developed their indicators based on clinical guidelines (Ellis et al., 2019; Garbutt et al., 2022; Hermens et al., 2015; Pile et al., 2020). The other two used a literature review (Kramer et al., 2008) and consensus methods, including RAM and a modified Delphi process (Zima et al., 2005). Two studies explicitly detailed their use of established consensus methodologies (Ellis et al., 2019; Zima et al., 2005). These studies also evaluated healthcare quality across multiple diagnoses, presenting depression-specific results separately in the main text or supplementary materials. Two studies reported both pre- and post-intervention proportions of approved indicators (Garbutt et al., 2022; Pile et al., 2020).

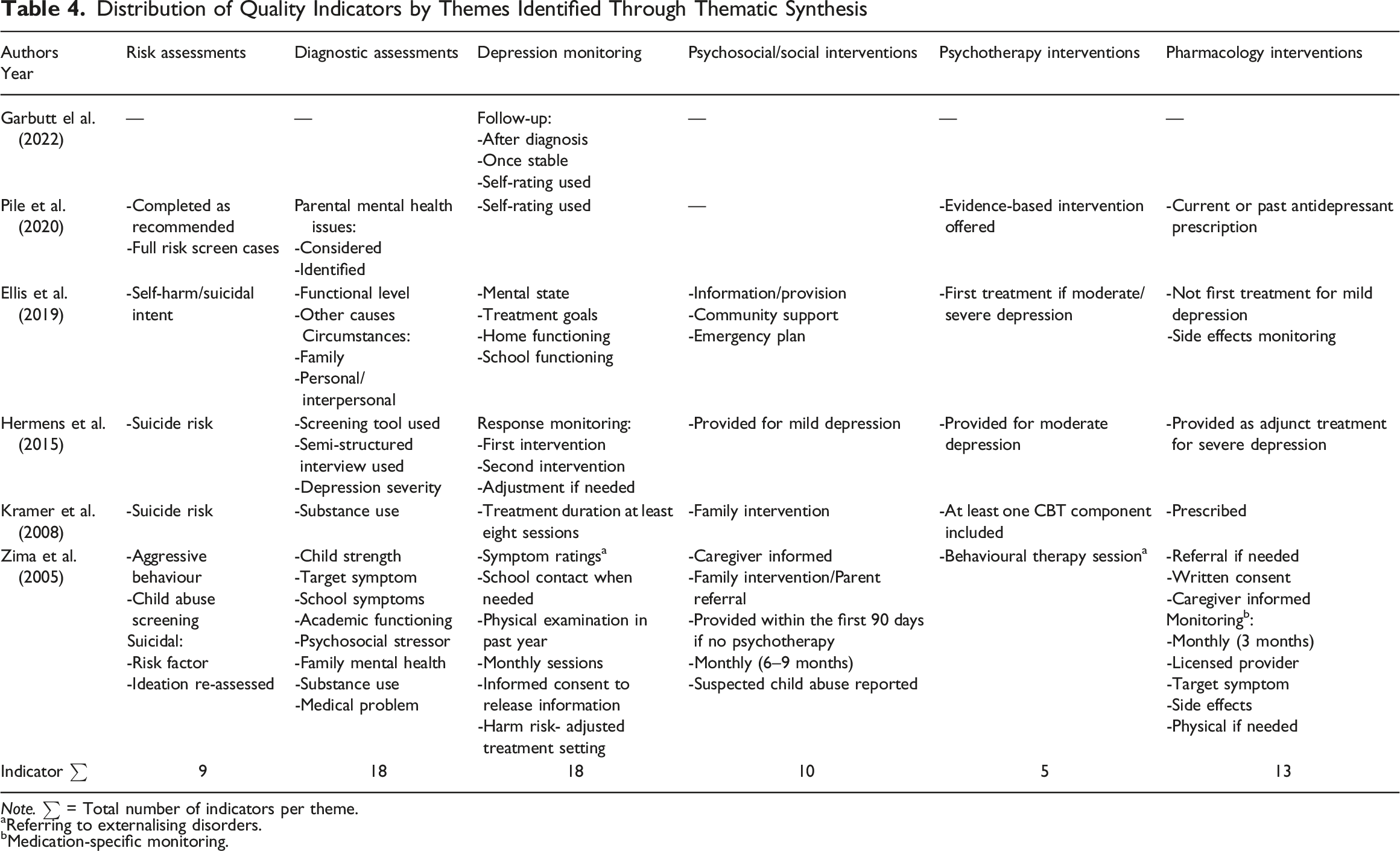

The studies reported a total of 73 indicators, ranging from 3 to 32 per study, with a median of 8.5. Indicators covered the themes of risk assessment, diagnostic assessment, treatment, and monitoring/follow-up. Binary opportunity indicator assessment (approved/not approved) was universally applied. Hence, continuous scales were not used. The operationalisation methodologies were inconsistently described, and none of the included studies linked their quality concepts or indicators to the IOM or WHO frameworks (IOM, 2001; WHO, 2006). Full data abstraction is available in Appendix Table A4.

Quality Indicators

Distribution of Quality Indicators by Themes Identified Through Thematic Synthesis

Note. ∑ = Total number of indicators per theme.

a Referring to externalising disorders.

b Medication-specific monitoring.

Quality Domains

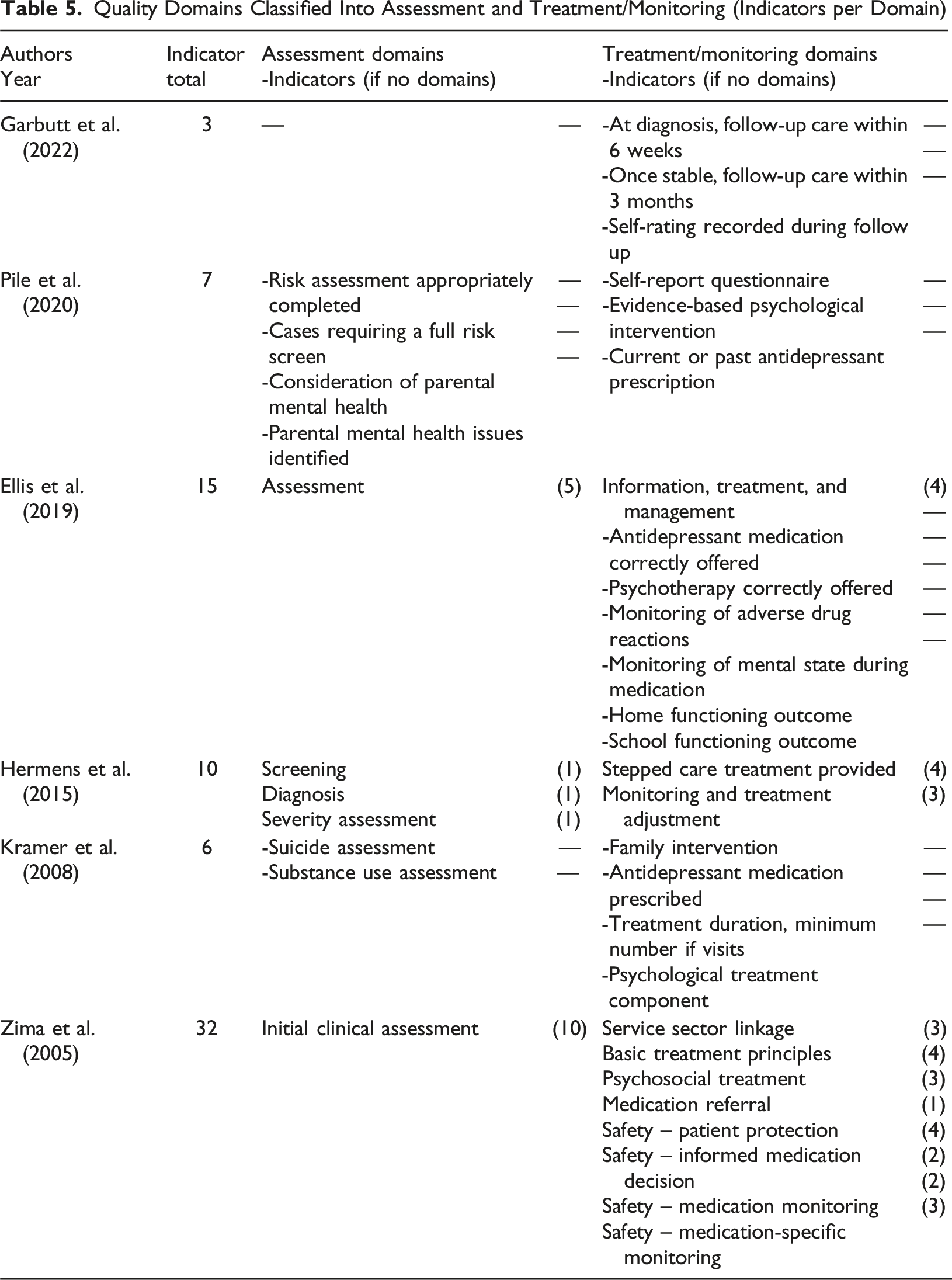

Three of the six studies grouped their quality indicators into domains (Ellis et al., 2019; Hermens et al., 2015; Zima et al., 2005), each comprising between one and 10 indicators. The number of domains in these studies ranged from two to nine, covering two main areas: assessment and treatment/monitoring. One study also reported six indicators without domain affiliation (Ellis et al., 2019), although these were all related to treatment/monitoring. This grouping highlights variations in how studies categorised their indicators.

Quality Domains Classified Into Assessment and Treatment/Monitoring (Indicators per Domain)

Analytical Approaches

All studies utilised binary opportunity scoring for quality indicators. Since some indicators applied only to subsets of records, adherence rates were calculated based on the relevant medical records for each indicator. In particular, this approach was used for indicators related to pharmacological treatment. Two studies further examined variations in quality between subgroups defined by patient or clinical characteristics using statistical tests, such as Chi-square (Kramer et al., 2008) and logistic regression (Zima et al., 2005).
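Binary opportunity scoring, as described above, can be illustrated with a minimal Python sketch. It assumes that each record is coded as approved (True), not approved (False), or not applicable (None) for a given indicator. The function name, the coding scheme, and the example data are hypothetical and are not taken from the included studies.

```python
from typing import Optional, Sequence


def adherence_rate(assessments: Sequence[Optional[bool]]) -> Optional[float]:
    """Proportion of approved assessments among records where the indicator applied.

    None marks a record with no opportunity for the indicator (for example,
    a medication indicator for a patient who was not prescribed medication).
    """
    eligible = [a for a in assessments if a is not None]
    if not eligible:
        return None  # the indicator applied to no record
    return sum(eligible) / len(eligible)


# Hypothetical indicator assessed in five records, applicable to three of them.
medication_monitoring = [True, None, False, None, True]
print(adherence_rate(medication_monitoring))
```

The denominator is the number of eligible records rather than the full sample, so adherence rates for different indicators within one study may rest on different numbers of records.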

Studies presenting quality domains adopted different approaches to analysing these composite measures (Ellis et al., 2019; Hermens et al., 2015; Zima et al., 2005), none of which were empirically derived. One study calculated domain adherence as the average proportion of approved indicators within the domain (Hermens et al., 2015), while the other two defined thresholds for approved quality (Ellis et al., 2019; Zima et al., 2005). Of these, one required all indicators within a domain to be approved to meet the quality threshold (Ellis et al., 2019). The other presented three measures: the proportion with any domain indicator approved, the proportion with all domain indicators approved, and the proportion with a domain indicator mean at or above the 75th percentile, which was defined as the threshold for “probable acceptable care” (Zima et al., 2005).
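The composite measures described above can be compared in a minimal Python sketch. It assumes that, for one domain, each medical record has a list of binary results for its applicable indicators. The function names and example data are hypothetical. The threshold for the last measure is passed in as a parameter, because how the 75th percentile was derived in the primary study is not specified here. The mean proportion is computed per record and then averaged, which is one possible reading of the first approach.

```python
from statistics import mean
from typing import Dict, List


def domain_summaries(records: List[List[bool]]) -> Dict[str, float]:
    """Summarise one domain across records with at least one applicable indicator."""
    scored = [r for r in records if r]  # drop records with no applicable indicator
    if not scored:
        raise ValueError("no record has an applicable indicator in this domain")
    n = len(scored)
    return {
        # average proportion of approved indicators within the domain
        "mean_proportion": mean(sum(r) / len(r) for r in scored),
        # proportion of records with any domain indicator approved
        "any_approved": sum(any(r) for r in scored) / n,
        # proportion of records with all domain indicators approved
        "all_approved": sum(all(r) for r in scored) / n,
    }


def share_at_or_above(records: List[List[bool]], threshold: float) -> float:
    """Proportion of records whose domain mean is at or above a given threshold."""
    scored = [r for r in records if r]
    if not scored:
        raise ValueError("no record has an applicable indicator in this domain")
    return sum(sum(r) / len(r) >= threshold for r in scored) / len(scored)


# Hypothetical domain with two indicators assessed in four records.
example = [[True, True], [True, False], [False, False], [True, True]]
print(domain_summaries(example))
print(share_at_or_above(example, threshold=0.75))
```

With these hypothetical data the same records give a mean proportion of 0.625, an any-approved proportion of 0.75, and an all-approved proportion of 0.5, which shows how strongly the chosen composite measure shapes the reported level of adherence.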

Two studies incorporated mixed methods, adding complementary qualitative data from healthcare providers (Garbutt et al., 2022; Hermens et al., 2015). Another study analysed service access in relation to expected prevalence (Pile et al., 2020), while one examined concordance between MRR diagnoses and those derived from structured diagnostic interviews (Kramer et al., 2008). The level of methodological detail varied across studies, complicating comparisons of analytical approaches. Further details are provided in Appendix Table A4, which also presents the approved proportions for each quality indicator.

Discussion

This systematic review, using no date restrictions, identified only six primary studies assessing outpatient depression healthcare quality for children and adolescents using quality indicators in medical records. All were conducted in Western countries with well-developed psychiatric services for this population, as reflected in clinical practice guidelines (Hickie et al., 2019; NICE, 2019; Walter et al., 2023). However, quality measures were inconsistent, and quality conceptualisation was evidently insufficient. The operationalisation of healthcare quality was sparsely described in some studies, and methodologies for quality indicator development varied. All studies reported some form of content validity, and four based their indicators on clinical practice guidelines. This approach can be challenging, as high-quality interventions in complex clinical settings may not always align with guideline recommendations.

Two studies explicitly mentioned using established consensus methods (RAM and modified Delphi), potentially enhancing indicator validity (Ellis et al., 2019; Zima et al., 2005). These studies also provided more detailed methodological descriptions. Three studies reported very few quality indicators (Garbutt et al., 2022; Kramer et al., 2008; Pile et al., 2020), whereas the others included more detailed and numerous indicators – in line with characteristics linked to research aims, as illustrated in Figure 2 – and encompassed broader aspects of healthcare quality (Ellis et al., 2019; Hermens et al., 2015; Zima et al., 2005). These latter studies summarised quality indicators into domains, which may facilitate linkage to global quality frameworks. All studies employed opportunity scoring, allowing for contextual appropriateness – for example, recommending pharmacological treatment for severe depression but not for mild depression. However, they all relied on binary indicator assessment, limiting granularity in evaluating healthcare quality.

Quality Indicators and Domains

Common themes among quality indicators included risk assessments, diagnostic assessments, monitoring/follow-up, and various treatment interventions. Risk assessment indicators were the most homogeneous – perhaps reflecting the influence of existing tools focusing on patient safety. In contrast, diagnostic assessment and monitoring/follow-up indicators showed the greatest variability. Complex clinical conditions remain challenging to operationalise through indicators, which may explain the heterogeneity among indicators. Overall, indicators were rarely described consistently or aligned with the nationally advocated quality indicators presented in Appendix Tables A1 and A2 (Lewandowski et al., 2013; NICE, 2013). This lack of consensus confirms findings from previous research (Kilbourne et al., 2018; Leslie et al., 2018; Lewandowski et al., 2013; Quinlan-Davidson et al., 2021; Skokauskas et al., 2019; Williams & Beidas, 2019).

Three studies utilised quality domains related to assessment or treatment/monitoring (Ellis et al., 2019; Hermens et al., 2015; Zima et al., 2005). None employed empirical methodologies for summarising indicators, and domain adherence was reported differently across studies. Two studies defined thresholds for domain approval, but their approaches varied: one required all indicators within a domain to be met (Ellis et al., 2019), while the other empirically defined a threshold for “probable acceptable care” (Zima et al., 2005). Although empirical methodology is appealing, the latter approach introduces variability in standards, as it depends on measurement rather than predefined criteria. Conversely, high normative standards may lead to overly stringent assessments, which a continuous quality scale could mitigate. However, different approaches can lead to incomparability between studies. Thus, providing multiple adherence measures may offer a practical solution.

Global Quality Frameworks

None of the studies linked their quality concepts to the IOM or WHO quality frameworks (IOM, 2001; WHO, 2006), confirming their infrequent use and highlighting the lack of standardised frameworks in operationalisation. Retrospective application of such frameworks is impractical given the nature of existing indicators. Determining how best to represent the IOM aims (Safe, Effective, Efficient, Patient-centered, Equitable, and Timely) remains challenging, as domains and indicators often overlap across multiple aims. Nonetheless, applying global frameworks in future research may be essential for enabling cross-contextual comparisons of healthcare quality across diagnoses, treatments, settings, and demographics.

Complementary Measurements

Four studies employed additional methods alongside MRR (Garbutt et al., 2022; Hermens et al., 2015; Kramer et al., 2008; Pile et al., 2020), consistent with the Donabedian model’s three elements: Structure, Process, and Outcome (Donabedian, 2005), as outlined in Figure 1. For instance, one study examined the concordance between medical record diagnoses and those from structured diagnostic interviews, linking process and outcome measures (Kramer et al., 2008). Another combined provider reports, MRR follow-up indicators, and remission rates based on patient self-ratings, addressing all three elements (Garbutt et al., 2022). This approach aligns with the Implementation Outcomes Framework (Proctor et al., 2011), where complementary measures provide a comprehensive understanding of intervention outcomes.

MRR findings may be skewed by insufficient documentation, which could inaccurately suggest poor healthcare quality. However, high-quality documentation is itself a key component of healthcare quality. Patient and provider assessments of perceived healthcare quality are valuable complements to process indicators but require sufficiently validated methods (Wensing et al., 2020). For instance, dissatisfaction with ineffective treatment may not necessarily indicate poor quality, but addressing such experiences remains an essential aspect of healthcare quality. Understanding the purpose, interpretation, and interrelation of different measures is crucial for accurate quality assessments (Kilbourne et al., 2018; Wensing et al., 2020).

Implications

This systematic review highlights substantial heterogeneity in how MRR quality indicators are operationalised across studies. Despite some common themes, the lack of standardisation and alignment with global quality frameworks limits comparability. Without such standardisation, there is a risk of generating multiple concepts and frameworks, leading to inconsistent assessments with limited comparability. Quality indicators based on guidelines and scientific literature have been proposed, as detailed in Appendix Table A1, yet they appear to have had little influence on quality measurement within this field and provide limited insight into the quality of care processes. This underscores the need for adopting more rigorous methodologies for operationalising quality, especially in research settings (Wensing et al., 2020). Strengthening the evidence base for healthcare quality measurement is essential, and the recommended two-step operationalisation of MRR indicators is crucial in achieving consensus. However, addressing processes tailored to complex clinical conditions remains a challenge.

Although identifying systemic barriers to the use of MRR in CAMHS was beyond the scope of this review, several remarks and reflections can nonetheless be made. The two-step operationalisation of quality indicators, which enhances the validity and reliability of MRR (Vassar & Holzmann, 2013), places substantial demands on knowledge, skills, and time. Another complicating factor is the variable quality and consistency of CAMHS medical records concerning depression – particularly regarding therapeutic interventions, which are often inconsistently documented in free-text clinical notes (Wickersham et al., 2024). In addition, a thorough understanding of both what information is recorded and how is crucial when conducting MRR. Based on the authors’ own experience, CAMHS medical records relating to depression care present considerable interpretative challenges. Greater standardisation of record systems across different providers has been recommended (Wickersham et al., 2024). Taken together, these observations suggest that resource limitations may be an important factor explaining the infrequent use of MRR in outpatient depression care, as well as discrepancies between indicators. This interpretation aligns with previous research showing that CAMHS generally suffer from insufficient and unevenly distributed resources (Moitra et al., 2022), and that non-adherence to clinical guidelines is associated with such resource constraints (Mora Ringle et al., 2019).

Using clinical guidelines as a foundation for quality measurement offers the advantage of clearly defined intervention descriptions, provided that the guidelines themselves are sufficiently detailed (Wensing et al., 2020). However, existing tools and studies have primarily focused on patient safety and pharmacological aspects (Hetrick et al., 2012; Nilsson et al., 2020; Socialstyrelsen, 2021), likely reflecting that such indicators are easier to operationalise – an observation supported by the homogeneity of the risk assessment indicators identified in this review. Since patient outcomes depend on multiple factors, many of which are unrelated to care processes, process indicators remain essential for quality measurement (Lewandowski et al., 2013). Quality indicators should be clearly aligned with their intended aims and areas of study, ensuring valid and reliable links between frameworks, indicators, and patient outcomes. However, this is complicated by the limited evidence on CAMHS quality measures that align with established quality frameworks (Quinlan-Davidson et al., 2021). Yet, such frameworks are fundamental not only for conceptualising quality but also for integrating rigour into operationalisation and enabling cross-study comparisons (IOM, 2001; WHO, 2006). Incorporating these frameworks would enhance validity and expand knowledge of the frameworks themselves. Nevertheless, linking quality indicators to quality frameworks can be challenging, as tensions and overlaps exist among the dimensions – Safe, Effective, Efficient, Patient-centered, Equitable, and Timely – and there is no established consensus on how these frameworks should be applied (Quinlan-Davidson et al., 2021).

The inherent difficulties in operationalising healthcare quality indicators may underscore the importance of linking these indicators to established global quality frameworks, particularly in implementation research utilising the Implementation Outcomes Framework (Proctor et al., 2011; Wensing et al., 2020). Incorporating global quality frameworks early in the operationalisation process lays the groundwork for developing appropriate quality indicators and ensures that all critical aspects are addressed. However, certain dimensions of these frameworks may be more effectively and efficiently measured through methods other than medical record review, highlighting the need for complementary approaches.

Quality assessments serve diverse purposes and rely on data sources with varying levels of availability. Ideally, indicators operationalised in research contexts could provide a foundation from which quality improvement initiatives focused on clinical care delivery may select fewer, approximate, yet still valid measures. Identifying links between specific process indicators, quality frameworks, qualitative aspects, and health outcomes could streamline future operationalisation and measurement of healthcare quality, while maintaining validity. Conversely, reliance on low-validity indicators risks displacement effects, including resource misallocation and potential adverse clinical consequences. Emerging techniques, including artificial intelligence, offer opportunities for more detailed and nuanced measurements but require robust, evidence-based quality indicators to achieve their full potential.

Strengths and Limitations

The sensitivity (recall) and precision of the searches suggest that the thorough process likely captured most available studies in this field. However, only six heterogeneous studies met the eligibility criteria, highlighting the rarity of outpatient MRR studies on depression healthcare quality for children and adolescents, likely due to the method’s resource-intensive nature. The limited number of included studies and the heterogeneity of indicators constrain the generalisability of findings. Additionally, the homogeneity of healthcare settings in these studies further restricts broader applicability. Not including studies addressing non-depressive psychiatric conditions may have limited the scope of insights, although indicators for other diagnoses would likely not align with depression-specific care. As this review focused on the operationalisation of healthcare quality in MRR, it excluded studies using electronic health records, as well as methods for assessing patient outcomes and experiences or provider perspectives on care processes. These methods, however, are important complements.

One article was initially considered eligible for inclusion but was ultimately excluded due to its primary focus on pharmacological treatment, following author discussion (Hetrick et al., 2012). The study was conducted in Australia and included a sample of 150 participants aged 15–25 years. Four quality indicators, derived from a clinical guideline, were used. However, inclusion of this article would not have affected the overall results or conclusions of the present systematic review.

Having a single investigator (SR) responsible for study selection posed a potential risk of bias and omission of relevant studies. This risk could not be fully mitigated, although another author (HJ) hand-screened the key articles to identify any additional eligible studies. The included studies and extracted data were then independently reviewed and verified by another author (BH) to minimise errors in data extraction. Due to practical constraints, detailed reasons for exclusion were not documented for studies excluded during the title and abstract screening stage.

Conclusions

This systematic review reveals substantial variation in how quality is conceptualised and measured, highlighting the need for more rigorous and standardised approaches to operationalising healthcare quality indicators for depression care in CAMHS. Aligning these efforts with established global healthcare quality frameworks can enhance the validity, reliability, and comparability of future studies. Strengthening the evidence base for quality indicators is essential not only to advance research, but also to support clinical quality improvement efforts and improve patient outcomes in this vulnerable population. The use of MRR and validated quality indicators enables the identification of specific deficiencies in healthcare delivery. As tailored approaches are often required, this can enhance the effectiveness of quality improvement initiatives aimed at optimising recommended, evidence-based care, ultimately leading to improved clinical outcomes and measurable resource efficiency. Beyond the benefits for patients and healthcare services, preventing suicide and other severe consequences contributes to wider, sustainable societal development.

Footnotes

Acknowledgement

This research was supported by Linus Brorsson, research librarian at Lund University, Lund, Sweden, whose expertise was invaluable.

Consent to Participate

No procedures involving human participants were performed.

Author Contributions

SR and HJ conceptualised the research questions, selected the key articles, defined the eligibility criteria and themes for data extraction, and hand-searched the key articles for additional eligible studies. SR and BH specified the methodology and discussed the article that was initially considered eligible for inclusion but ultimately excluded. SR conducted the systematic search, screening, review, and synthesis, and drafted the manuscript. BH independently reviewed the included studies and verified the extracted data. All authors (SR, BH, and HJ) revised the manuscript, provided critical commentary, and approved the final version.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support was provided by Region Skåne and the Stiftelsen Lindhaga (2024-12-04).

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Following the updated search on 31 March 2025, a medical record review by the same research team, “Quality of depression assessments in child and adolescent psychiatry: Findings from a nationwide Swedish medical record review”, was published in June 2025 (after submission of this review). Although it met the inclusion criteria, it was not included to avoid self-citation bias. This systematic review also formed part of the two-step operationalisation of quality indicators underpinning that study.

Data Availability Statement

Author Biographies

Appendix

Operational Definitions of Quality Indicators for Adolescent Depression Care (Lewandowski et al., 2013)

| Quality indicators | Target population | Operational definitions |
|---|---|---|
| Depression screening | Adolescents presenting to healthcare services | Screening using a validated depression screening instrument |
| Depression diagnosis | Adolescents with a positive depression screening result or presenting to specialist services with depression-related symptoms | Diagnostic assessment for depressive disorder |
| Suicide assessment | Adolescents with depression or reporting self-harm | Suicide risk assessment |
| Initial counselling | Adolescents with mild depression | A minimum of two supportive sessions within two months, including psychoeducation, validation and problem-focused strategies |
| Treatment initiation | Adolescents with moderate, severe, or persistent mild depression | Initiation of psychotherapy or antidepressant medication, or referral to specialist services |
| Healthcare coordination | Adolescents with depression receiving care across service levels | Coordinated communication between involved care providers |
| Pharmacological treatment | Adolescents with depression initiating pharmacological treatment | Antidepressant medication for a minimum of two months |
| Psychological treatment | Adolescents with depression initiating psychotherapy | A minimum of eight psychotherapy sessions within four months |
| Symptom reassessment | Adolescents with depression | Depressive symptom monitoring using a validated instrument within two to three months |
| Remission achievement | Adolescents with depression | Symptom levels below the clinical screening threshold for depression or no longer meeting diagnostic criteria within six months |
| Treatment modification | Adolescents with depression showing insufficient symptom reduction and remaining above the clinical threshold on a validated instrument, or continuing to meet diagnostic criteria after three months of treatment | Treatment adjustment, including addition or intensification of medication or psychotherapy, or referral to specialist services |

Note. Adapted and synthesised from Lewandowski et al. (2013).

Quality Requirements derived from the NICE Depression in Children and Young People Quality Standard (NICE, 2013)

| Target population | Quality requirements |
|---|---|
| Children and adolescents with suspected depression | Formal documentation of a confirmed diagnosis in the medical record |
| Children and adolescents with depression | Provision of age-appropriate information about depression and available treatment options |
| Children and adolescents with suspected severe depression and elevated suicide risk | Assessment by CAMHS within 24 hours of referral, with safety arrangements in place while awaiting assessment, where required |
| Children and adolescents with suspected severe depression without elevated suicide risk | Assessment by CAMHS within two weeks of referral |
| Children and adolescents undergoing depression treatment | Clinical presentation recorded at the initiation and completion of each treatment phase |

Note. NICE = National Institute for Health and Care Excellence; CAMHS = Child and Adolescent Mental Health Services. Adapted from NICE, 2013, Depression in Children and Young People: Quality Standard (QS48). © NICE 2013.

Database Searches in PubMed, CINAHL, and PsycInfo Conducted on 30 November 2024. An Updated Search on 31 March 2025 Resulted in No Additional Eligible Studies

| Databases | Searches | Search string | Results |
|---|---|---|---|
| PubMed | #1 | adolescent*[Title/Abstract] OR child*[Title/Abstract] OR young people[Title/Abstract] OR young adults[Title/Abstract] | 2,087,907 |
| PubMed | #2 | Depression [Title/Abstract] OR depressive disorder[Title/Abstract] | 482,476 |
| PubMed | #3 | Assessing [Title/Abstract] OR assessment[Title/Abstract] OR guideline*[Title/Abstract] | 2,165,191 |
| PubMed | #4 | Quality of care [Title/Abstract] OR care quality[Title/Abstract] OR safe care[Title/Abstract] OR evaluation[Title/Abstract] OR adherence[Title/Abstract] | 1,804,630 |
| PubMed | #5 | Medical record review [Title/Abstract] OR retrospective chart review[Title/Abstract] OR records[Title/Abstract] OR data[Title/Abstract] | 5,436,448 |
| PubMed | #6 | #1 AND #2 AND #3 AND #4 AND #5 | 478 |
| CINAHL | #1 | TI (adolescent* OR child* OR young people OR young adults) OR AB (adolescen* OR child* OR young people OR young adults) | 707,975 |
| CINAHL | #2 | TI (depression OR depressive disorder) OR AB (depression OR depressive disorder) | 157,140 |
| CINAHL | #3 | TI (assessing OR assessment OR guideline*) OR AB (assessing OR assessment OR guideline*) | 660,020 |
| CINAHL | #4 | TI (quality of care OR care quality OR safe care OR evaluation OR adherence) OR AB (quality of care OR care quality OR safe care OR evaluation OR adherence) | 562,783 |
| CINAHL | #5 | TI (medical record review OR retrospective chart review OR records OR data) OR AB (medical record review OR retrospective chart review OR records OR data) | 1,152,243 |
| CINAHL | #6 | #1 AND #2 AND #3 AND #4 AND #5 | 326 |
| PsycInfo | #1 | TI (adolescent* OR child* OR young people OR young adults) OR AB (adolescen* OR child* OR young people OR young adults) | 1,050,921 |
| PsycInfo | #2 | TI (depression OR depressive disorder) OR AB (depression OR depressive disorder) | 329,081 |
| PsycInfo | #3 | TI (assessing OR assessment OR guideline*) OR AB (assessing OR assessment OR guideline*) | 573,538 |
| PsycInfo | #4 | TI (quality of care OR care quality OR safe care OR evaluation OR adherence) OR AB (quality of care OR care quality OR safe care OR evaluation OR adherence) | 389,604 |
| PsycInfo | #5 | TI (medical record review OR retrospective chart review OR records OR data) OR AB (medical record review OR retrospective chart review OR records OR data) | 5,429,797 |
| PsycInfo | #6 | #1 AND #2 AND #3 AND #4 AND #5 | 1,467 |

Note. The updated search on 31 March 2025 (covering 1 December 2024–31 March 2025) yielded 10 hits in PubMed, 14 in CINAHL, and 22 in PsycInfo, with no additional eligible studies identified.
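The searches above share one structure: synonyms are joined with OR inside each concept block (#1 to #5), and the blocks are then joined with AND (#6). The following minimal sketch rebuilds the PubMed string in that way and sums the final hit counts. The block labels, function names, and lowercase spelling of the terms are illustrative assumptions (PubMed searching is case-insensitive); the terms and hit counts are taken from the table.

```python
# Concept blocks as listed in the PubMed rows (#1 to #5) of the search table.
# The dictionary keys are hypothetical labels added for readability.
CONCEPT_BLOCKS = {
    "population": ["adolescent*", "child*", "young people", "young adults"],
    "condition": ["depression", "depressive disorder"],
    "assessment": ["assessing", "assessment", "guideline*"],
    "quality": ["quality of care", "care quality", "safe care",
                "evaluation", "adherence"],
    "data_source": ["medical record review", "retrospective chart review",
                    "records", "data"],
}

# Hits for the combined search (#6) in each database on 30 November 2024.
FINAL_HITS = {"PubMed": 478, "CINAHL": 326, "PsycInfo": 1467}


def or_block(terms, field_tag="[Title/Abstract]"):
    """Join the synonyms of one concept with OR, tagging each term."""
    return "(" + " OR ".join(f"{term}{field_tag}" for term in terms) + ")"


def and_query(blocks):
    """Join all concept blocks with AND, mirroring search #6."""
    return " AND ".join(or_block(terms) for terms in blocks.values())


print(and_query(CONCEPT_BLOCKS))
print("Combined hits before deduplication:", sum(FINAL_HITS.values()))
```

The three databases returned 2,271 hits in total before deduplication; the abstract reports 1,690 unique articles.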

Thematic Synthesis of Articles Included in the Systematic Review (n = 6)

Note. QI = quality indicator; QD = quality domain; MRR = medical record review; IOM = Institute of Medicine; WHO = World Health Organization; PHQ-A = patient health questionnaire – adolescent; CAMHS = child and adolescent mental health services; NICE = National Institute for Health and Care Excellence; SSRI = selective serotonin reuptake inhibitor; CBT = cognitive behavioural therapy; IPT = interpersonal therapy; ADHD = attention deficit hyperactivity disorder.

Authors (publication year) Country – Study setting Patient age (sample size)

Quality indicator (QI) development: Quality standard -Consensus method

Quality measures: -Quality domains (QD) = composite measures -Quality indicators (QI) for medical record review (MRR)

Proportion approved (actual %)

Analysis approaches and result reporting Complementary measures and analyses Use of IOM or WHO quality frameworks

Garbutt et al. (2022)

United States – Primary care

12-17 years (n = 199/217)

QIs derived from guidelines

-Expert consensus

-Literature review

-At diagnosis; follow-up care (call or visit) within 6 weeks

-Once stable; follow-up care (call or visit) within 3 months

-Self-rating PHQ-A recorded at least once during follow up

40/81

30/60

34/76

QI assessment: % of all cases approved

Other measures: Remission rates; provider reports

Quality framework: Not mentioned

Pile et al. (2020)

United Kingdom – CAMHS

5-18 years (n = 45/45)

QIs derived from guidelines

-NICE guideline consensus method

-Risk assessment appropriately completed

-Cases requiring a full risk screen

-Consideration of parental mental health

-Parental mental health issues identified

-Self-report questionnaire administered

-Evidence-based psychological intervention offered

-Currently or previously prescribed antidepressant medication

96/67

29/11

96/93

33/51

62/82

69/76

22/42

QI assessment: % of eligible cases approved

Other measures: Service access per expected prevalence

Quality framework: Not mentioned

Ellis et al. (2019)

Australia

– Inpatient care

– Emergency departments

– General practices

– Outpatient paediatrics

3-15 years (n = 133)

Depression subsample

QIs derived from guidelines

-RAND Appropriateness Method

Suspected depression receiving appropriate assessment:

-Family circumstances assessed

-Personal and interpersonal circumstances assessed

-Functional level assessed

-Assessed for self-harm and/or suicidal intent

-Assessed for other causes

Appropriate information, treatment and management offered:

-Information and resources concerning evidence-based care

-Offered community supports

-Treatment/management goals set

-Emergency safety plan

-Mild depression; not prescribed SSRI as first treatment

-Moderate/severe depression; psychotherapy first treatment

-Prescribed SSRI; monitoring of adverse drug reactions

-Prescribed SSRI; monitoring of mental state

-Home functioning treatment outcome assessed in 8 weeks

-School functioning treatment outcome assessed in 8 weeks

33

67

75

78

64

59

35

79

75

78

44

91

81

69

90

74

74

QI assessment: % of eligible cases approved

QD assessment: % of cases meeting all QIs within each QD

Quality variation: % of QIs or QDs approved per healthcare setting

Quality framework: Not mentioned

Hermens et al. (2015)

Netherlands – CAMHS

0-21 years (n = 655)

QIs derived from guidelines

-Expert consensus

Screening:

-Use of a screening tool

Diagnosis:

-Use a semi-structured interview to diagnose depression

Assess the severity of the depression:

-Structured severity assessment at team meeting registered

Provide stepped care treatment:

-Mild depression; psychosocial intervention, watchful waiting

-Moderate depression; psychotherapy (IPT/CBT)

-Severe depression; addition of antidepressant (Fluoxetine)

-Suicide risk assessment weekly, first 6 weeks with medication

Monitor treatment response – reallocate if necessary:

-First-step intervention response monitoring after 4-8 weeks

-Psychotherapy/SSRI response monitoring after 10-14 weeks

-Insufficient response; switch to a more intensive treatment

72

38

77

41

33

66

2

25

32

46

30

69

QI assessment: % of eligible cases approved

QD assessment: % of QIs approved within each QD

Other measures: Provider interviews

Quality framework: Not mentioned

Kramer et al. (2008)

United States – CAMHS

11-18 years (n = 208)

QIs based on evidence

-Literature review

-Suicide assessment

-Substance use assessment

-Family intervention

-Antidepressant medication prescribed

-Treatment duration at least eight outpatient sessions

-Treatment including CBT component

77

71

80

51

41

73

QI assessment: % of eligible cases approved

Quality variation: Chi-square tests for subgroup comparisons

Other measures: Concordance between record-based and structured interview diagnoses

Quality framework: Not mentioned

Zima et al. (2005)

United States – CAMHS

6-16 years (n = 308)

Depression subsample (supplementary material)

QIs derived by consensus

-RAND Appropriateness Method

-Modified Delphi method

Initial clinical assessment (first three months):

-Child strength

-Target symptom by primary caregiver

-School-related symptoms report

-Academic functioning

-Psychosocial stressor

-Mental health problem in family

-Suicide risk factor

-Aggressive behaviour symptom

-Alcohol or illicit drug use

-Medical problem

Service sector linkage:

-ADHD; parent-/teacher-reported symptom ratings

-School contact or school records requested, when needed

-Physical examination in past year or in assessment period

Basic treatment principles:

-Caregiver informed of child’s diagnosis and given information

-Mental health visits at least monthly

-If clinical information released, informed consent obtained

-Family intervention or parent referral made

Psychosocial treatment:

-Recommended in first 90 days, if not receiving psychotherapy

-ADHD or conduct disorder; behavioural therapy session

-Depression; psychosocial treatment monthly for 6–9 months

Medication referral:

-ADHD/depression; evaluation/non-referral rationale

Safety - patient protection:

-Screen for history of physical/sexual abuse first 3 months

-If current abuse suspected; report filed

-Suicidal ideation in past 4 weeks; new assessment in 3 months

-Risk of harm to self/others; more restrictive treatment setting

Safety – informed medication decision:

-Written consent from caregiver before starting medication

-Caregiver informed of mandated medication information

Safety – medication monitoring:

-Monthly or more frequent monitoring during first 3 months

-Monitoring conducted by provider licensed to prescribe

Safety – medication-specific monitoring:

-Status of target symptom noted each medication visit

-Status of side effect noted each medication visit

-Vital sign monitored/laboratory study ordered, as indicated

86

92

70

89

96

79

91

58

80

83

—

41

37

21

49

62

47

97

—

53

78

78

79

84

64

73

38

55

81

33

20

29

QI assessment: % of eligible cases approved

QD assessment 1: % of cases meeting any QI within each QD

QD assessment 2: % of cases meeting all QIs within each QD

QD assessment 3: % with “probable acceptable care” (mean QI per QD ≥75th percentile)

Data weighted for: Two-stage probability sampling; clinic response; adjustment for missing records

Quality variation: Logistic regression – “probable acceptable care” per QD (dependent variable) and subgroup predictors (patient characteristics)

Quality framework: Briefly mentioned, not utilised
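The included studies scored indicators in binary form but derived composite measures differently, which can be illustrated with a minimal sketch. It computes four of the measures named in the table: the percentage of eligible cases approved per QI, the percentage of cases meeting all or any QIs within a QD, and the percentage of QIs approved within a QD. All data are hypothetical, the indicator labels are borrowed from the table only as names, and the handling of non-eligible cases (skipped rather than counted as failures) is an assumption. The percentile-based "probable acceptable care" measure is not covered.

```python
from math import nan
from typing import Optional

# One dict per medical record: True = approved, False = not approved,
# None = not eligible for that indicator (no opportunity to score).
Case = dict[str, Optional[bool]]


def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to one decimal; NaN when nothing was eligible."""
    return round(100 * numerator / denominator, 1) if denominator else nan


def scored(case: Case, qis: list[str]) -> list[bool]:
    """Scores for the indicators this case was eligible for."""
    return [case[qi] for qi in qis if case.get(qi) is not None]


def qi_rate(cases: list[Case], qi: str) -> float:
    """% of eligible cases approved on a single indicator."""
    scores = [s for case in cases for s in scored(case, [qi])]
    return pct(sum(scores), len(scores))


def qd_rate(cases: list[Case], qis: list[str], rule=all) -> float:
    """% of cases meeting all (rule=all) or any (rule=any) QIs in a domain."""
    per_case = [rule(s) for case in cases if (s := scored(case, qis))]
    return pct(sum(per_case), len(per_case))


def qd_opportunity_rate(cases: list[Case], qis: list[str]) -> float:
    """% of all scored QI opportunities approved within a domain."""
    scores = [s for case in cases for s in scored(case, qis)]
    return pct(sum(scores), len(scores))


# Hypothetical records and a hypothetical two-indicator domain.
CASES: list[Case] = [
    {"suicide_assessment": True, "substance_use_assessment": True},
    {"suicide_assessment": True, "substance_use_assessment": False},
    {"suicide_assessment": False, "substance_use_assessment": None},
    {"suicide_assessment": True, "substance_use_assessment": True},
]
DOMAIN = ["suicide_assessment", "substance_use_assessment"]

print("QI, suicide assessment:", qi_rate(CASES, "suicide_assessment"))
print("QD, all QIs met:", qd_rate(CASES, DOMAIN, all))
print("QD, any QI met:", qd_rate(CASES, DOMAIN, any))
print("QD, share of QIs approved:", qd_opportunity_rate(CASES, DOMAIN))
```

On the same records the three domain-level measures give different values, which is one reason composite results are difficult to compare across studies.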