Abstract

Existing challenges in surgical education (“See one, do one, teach one”) and the COVID-19 pandemic make it necessary to develop new approaches to surgical training. This work therefore describes the implementation of a scalable remote solution called “TeleSTAR”, which uses immersive, interactive, and augmented reality elements to enhance surgical training in the operating room. The system uses a fully digital surgical microscope in the context of ear–nose–throat (ENT) surgery. The microscope is equipped with a modular augmented reality software interface consisting of an interactive annotation mode for marking anatomical landmarks using a touch device and an experimental intraoperative image-based stereo-spectral algorithm unit for measuring anatomical details and highlighting tissue characteristics. The new educational tool was evaluated during the broadcast of three live XR-based three-dimensional cochlear implant surgeries. The system scaled to five remote locations in parallel with low latency and additionally offered a separate two-dimensional YouTube stream with higher latency. In total, more than 150 people were trained, including healthcare professionals, biomedical engineers, and medical students.

Keywords

Introduction

In 2020, the COVID-19 pandemic dramatically changed medical care in many ways. It has been a driver for digitization in medicine;1 however, there have been severe cutbacks in surgical training due to contact restrictions and emergency services in hospitals.2 Nonetheless, digital treatment and consultation methods are being implemented quickly.3 More and more patients and physicians are using video consultations or other digital applications in their daily routine. In 2017, only

This positive development contrasts with the existing limitations of surgical training following the classical teaching paradigm “See one, do one, teach one.”6–8 These limitations become even more critical in pandemic scenarios, when physicians cannot be trained to the usual extent because of contact restrictions in the operating room (OR) or canceled routine interventions. To address these limitations, simulation-based training has been proposed as a method of medical education.9–11 However, surgical training requires extensive knowledge of all surgical steps and a minimum number of operations performed under supervision. Even during the COVID-19 pandemic, students reported that they prefer “real cases” over ready-made cases for training.12 Additionally, there is a lack of training measures and of pedagogical and technical education to reduce the digital illiteracy of physicians and prepare them for the “digital OR” of the coming decade.13–16

The use of eXtended reality (XR), such as augmented reality (AR), virtual reality (VR), and mixed reality (MR), for training has been surveyed in recent years17–19 and shows potential in many ways.20–23 It can improve surgical knowledge24,25 and help surgeons extend the limited field-of-view during endoscopic surgeries.26–28 However, the use of live streaming technologies for surgical training is quite new, with only a few concepts having emerged in the last eight years.29 These concepts focused only on streaming the surgical situation using an external camera, giving the trainee an insight into the OR, but they already showed a positive impact on the learning outcome29 without increasing patient risk.30

In microscopic surgery, continuous medical education (CME) faces an additional challenge: trainees need a view identical to the surgeon’s. Currently, CME is structured as one-sided training in groups of up to 10 persons.31 However, because of resource constraints (time, limited space) and hygienic restrictions in the OR, the direct “surgical view” through the microscope cannot be delivered continuously to all trainees. This can lead to inconsistent training levels among trainees.32 A fundamental missing feature is the three-dimensional (3D) visualization of microscopic procedures: trainees typically follow the operation via a camera attached to the microscope and connected to a 2D/3D display, which results in a different field-of-view.29 The surgeon’s view is therefore conveyed to others only to a limited extent. In this case, the depth impression and the spatial relationships of individual anatomical structures are lost, and the learning effect is significantly reduced. Additionally, it is a great challenge for surgeons in training to correctly differentiate similar-looking tissue structures, for example, risk structures such as the facial nerve or pathological structures such as proliferating cholesteatoma tissue.33,34

The overall objective of this work is the implementation of a scalable, real-time capable audio and video processing chain using an AR-based stereo-spectral algorithm unit for on-site and remote surgical training and education. The remainder of this article is organized as follows: the next section describes the overall AR system design, including the stereo-spectral algorithm unit that generates important anatomical information. In the section “Results,” the three ENT courses conducted are evaluated with respect to lessons learned and user feedback. The last section gives an outlook on the future of surgical training and on how TeleSTAR’s features can be used for intraoperative decision-making.

Materials and methods

Scalable remote system design

The overall system is designed as a low-latency, bidirectional audio and XR-based 3D video pipeline supporting full surgical transparency in remote scenarios:

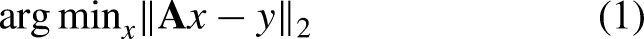

Scalability to other remote locations is achieved by connecting additional lecture rooms using the outlined hardware and network infrastructure (see Figure 1). However, the described system concept relies on a well-functioning, low-latency internet infrastructure offering high bandwidth and low round-trip times (

Scalable AR-based 3D system design for remote surgical training and education. AR: Augmented Reality; 3D: three-dimensional.

AR-based 3D surgical video pipeline

The overall system design is depicted in Figure 1. It consists of four core components using dedicated hardware and software interfaces.

(1) A fully digital surgical microscope i (DSM) with an extensible software XR interface 21 (Figure 1, items A to E).

(2) An external 2D overview camera showing the OR setup and peripheral activities (Figure 1F).

(3) A multichannel audio/video mixer, ii which fuses all streams (Figure 1G).

(4) A real-time H.264 video encoder in the OR (Figure 1H) and H.264 video decoders iii at remote locations (Figure 1I) receiving the AR-based video stream with audio.

All remote locations are connected to the OR over a local area network (LAN) or a wide area network (WAN). Owing to the system’s scalability, trainees can watch the video on 3D displays/projectors or XR-based head-mounted displays (HMDs), which can be connected to the HDMI/SDI interfaces of the video decoders. The number of remote locations, that is, video decoders, is not restricted by the system design and affects neither the video quality nor the representation of the AR features. Thus, the number of remote sites is, in principle, unlimited, provided the network infrastructure offers sufficient bandwidth.
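Whether the number of remote sites is truly unconstrained depends on how the encoded stream reaches the decoders. The following back-of-the-envelope sketch assumes, hypothetically, that the reverse proxy forwards a separate unicast copy of the H.264 stream to every remote decoder. Under that assumption, per-site video quality is unaffected, but the OR uplink load grows linearly with the number of sites. The function name, the bitrate, and the headroom factor are illustrative assumptions, not measured values from this work.

```python
def required_uplink_mbps(num_sites: int, stream_mbps: float, headroom: float = 1.0) -> float:
    """Estimate OR uplink load in Mbit/s for unicast fan-out (hypothetical model).

    num_sites   -- number of remote video decoders receiving the stream
    stream_mbps -- bitrate of one encoded 3D stream (assumed value)
    headroom    -- safety factor for protocol overhead and bitrate peaks (assumed, >= 1)
    """
    if num_sites < 0:
        raise ValueError("num_sites must be non-negative")
    if stream_mbps <= 0:
        raise ValueError("stream_mbps must be positive")
    if headroom < 1.0:
        raise ValueError("headroom must be at least 1.0")
    # Each site receives its own copy, so the load scales linearly with num_sites.
    return num_sites * stream_mbps * headroom


if __name__ == "__main__":
    # Five remote sites (the maximum reached here) with a hypothetical 20 Mbit/s stream.
    print(required_uplink_mbps(5, 20.0))
```

With multicast or a cascading relay, the linear term would move away from the OR uplink; which distribution scheme the reverse proxy actually uses is not specified here.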

Bi-directional audio communication

Besides the AR-based 3D video pipeline, the system has a bidirectional audio pipeline for real-time communication between trainers and trainees. To avoid disturbing echo and latency effects, the system follows a dedicated audio commentary concept.

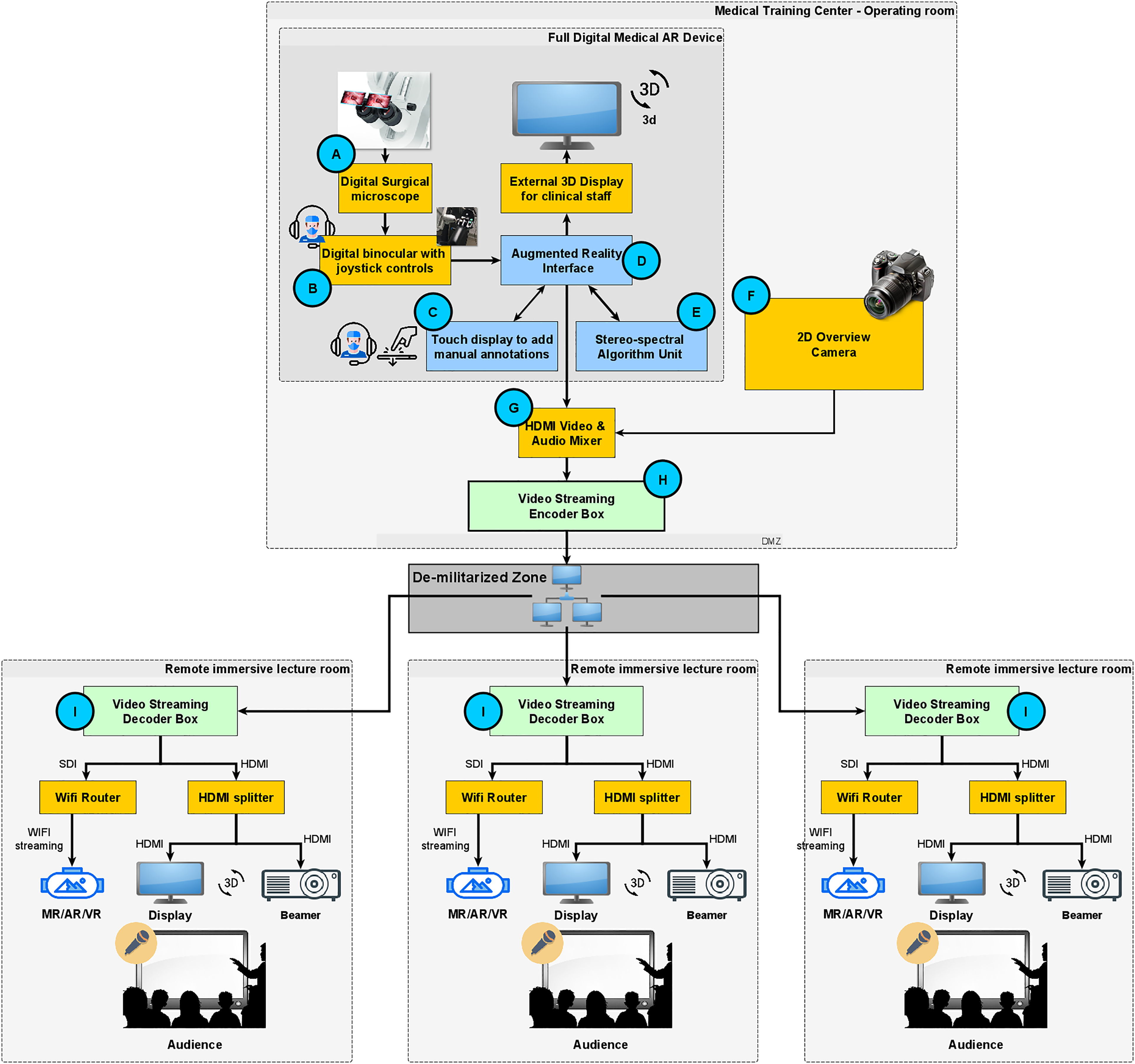

In the OR, we set up a forward audio channel consisting of two audio inputs. The operating surgeon’s audio track is directly connected to the video pipeline of the microscope, synchronized, and embedded in the underlying HDMI stream (Figure 1B). The moderating surgeon uses a Bluetooth headset and a wireless microphone (Figure 1C). Both audio streams are fed into the audio/video mixer pipeline, as depicted in Figure 1G. The mixer allows switching between the two audio channels and muting them if needed. The audio back channel for remote questions is implemented using a conferencing tool to which the moderating surgeon’s headset is connected. This surgeon moderates and answers questions or forwards them to the operating surgeon when possible. The operating surgeon uses only the microphone, to minimize disturbing background noise, and receives feedback from the moderating surgeon (Figure 2(a) and (b)).
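The switching and muting behavior of the forward channel can be illustrated with a minimal sketch. It assumes, hypothetically, that both inputs arrive as equally long frames of floating-point samples in [-1, 1]; the class and its attributes are illustrative and do not describe the interface of the hardware mixer used in the OR.

```python
from dataclasses import dataclass


@dataclass
class ForwardAudioMixer:
    """Hypothetical two-input mixer for the forward audio channel."""
    surgeon_on: bool = True    # operating surgeon's audio embedded in the HDMI stream
    moderator_on: bool = True  # moderating surgeon's wireless microphone

    def mix(self, surgeon: list[float], moderator: list[float]) -> list[float]:
        """Mix one frame of samples in [-1, 1]; a muted input contributes silence."""
        if len(surgeon) != len(moderator):
            raise ValueError("frames must have equal length")
        out = []
        for s, m in zip(surgeon, moderator):
            value = (s if self.surgeon_on else 0.0) + (m if self.moderator_on else 0.0)
            out.append(max(-1.0, min(1.0, value)))  # hard clip to keep the sum in range
        return out


if __name__ == "__main__":
    mixer = ForwardAudioMixer()
    print(mixer.mix([0.5, 0.8], [0.25, 0.5]))
    mixer.moderator_on = False  # "switching" to the surgeon only = muting the other input
    print(mixer.mix([0.5, 0.8], [0.25, 0.5]))
```

The hard clip is the simplest way to keep the summed signal in range; a real mixer would typically use gain staging or a limiter instead.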

Audio system design for bi-lateral communication in a remote surgical training environment. (a) Operating surgeon wearing a Bluetooth headset explaining the intervention. (b) Moderating surgeon using a Bluetooth headset and a wireless microphone receiving questions from the remote audience.

Network configuration

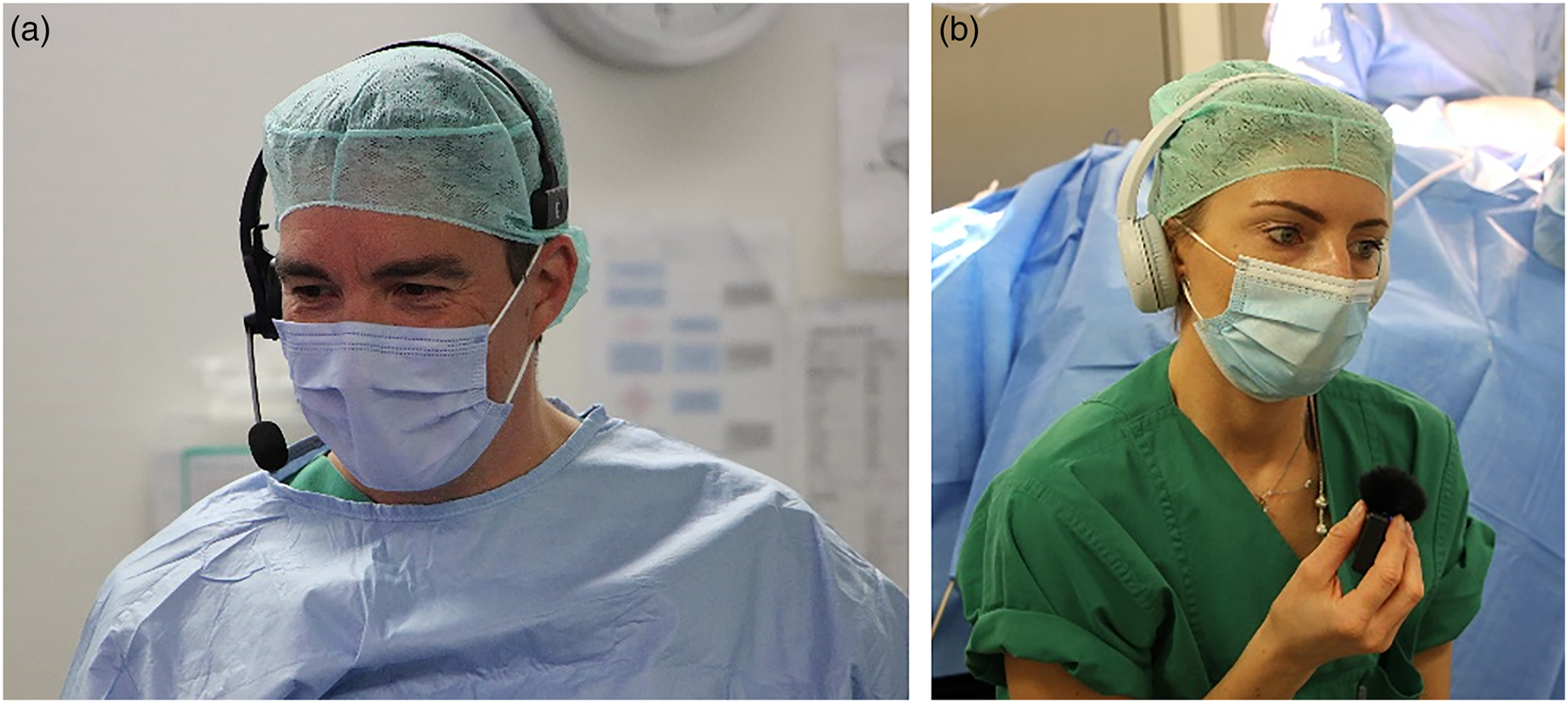

Secure, low-latency data transmission is ensured by a demilitarized zone (DMZ). The DMZ firewall allows only connections from known external IP addresses (Figure 3). The DMZ is implemented as a virtual server including a reverse proxy, which is administered and monitored through secure VPN connections. The reverse proxy provides a secure connection between the internal streaming server inside the LAN (Figure 1H) and the external remote WAN clients (Figure 1I), which handle the 3D video decoding.
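The allowlist rule of the DMZ firewall can be expressed in a few lines with Python’s standard `ipaddress` module. This is a sketch of the filtering logic only, not of the actual firewall configuration; the address ranges below are documentation-only examples (RFC 5737) standing in for the known remote sites.

```python
import ipaddress

# Hypothetical allowlist: RFC 5737 documentation ranges, not the real remote sites.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.17/32"),
]


def is_allowed(source_ip: str) -> bool:
    """Return True if the connecting address lies inside an allowlisted network."""
    try:
        addr = ipaddress.ip_address(source_ip)
    except ValueError:
        return False  # malformed addresses are rejected
    # Membership tests across IPv4/IPv6 simply return False.
    return any(addr in net for net in ALLOWED_NETWORKS)


if __name__ == "__main__":
    for ip in ("192.0.2.55", "198.51.100.18", "2001:db8::1"):
        print(ip, is_allowed(ip))
```

Default-deny, where anything not explicitly listed is dropped, mirrors the behavior described for the DMZ firewall.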

Network configuration: firewall and demilitarized zone.

Intraoperative tools

TeleSTAR’s intraoperative toolchain has three parts: (1) an annotation tool, (2) a 3D reconstruction pipeline allowing image-based measurements,35 and (3) a multispectral analysis module allowing tissue differentiation.36 All XR features are beneficial for intraoperative assistance and surgical training.

Annotation tool

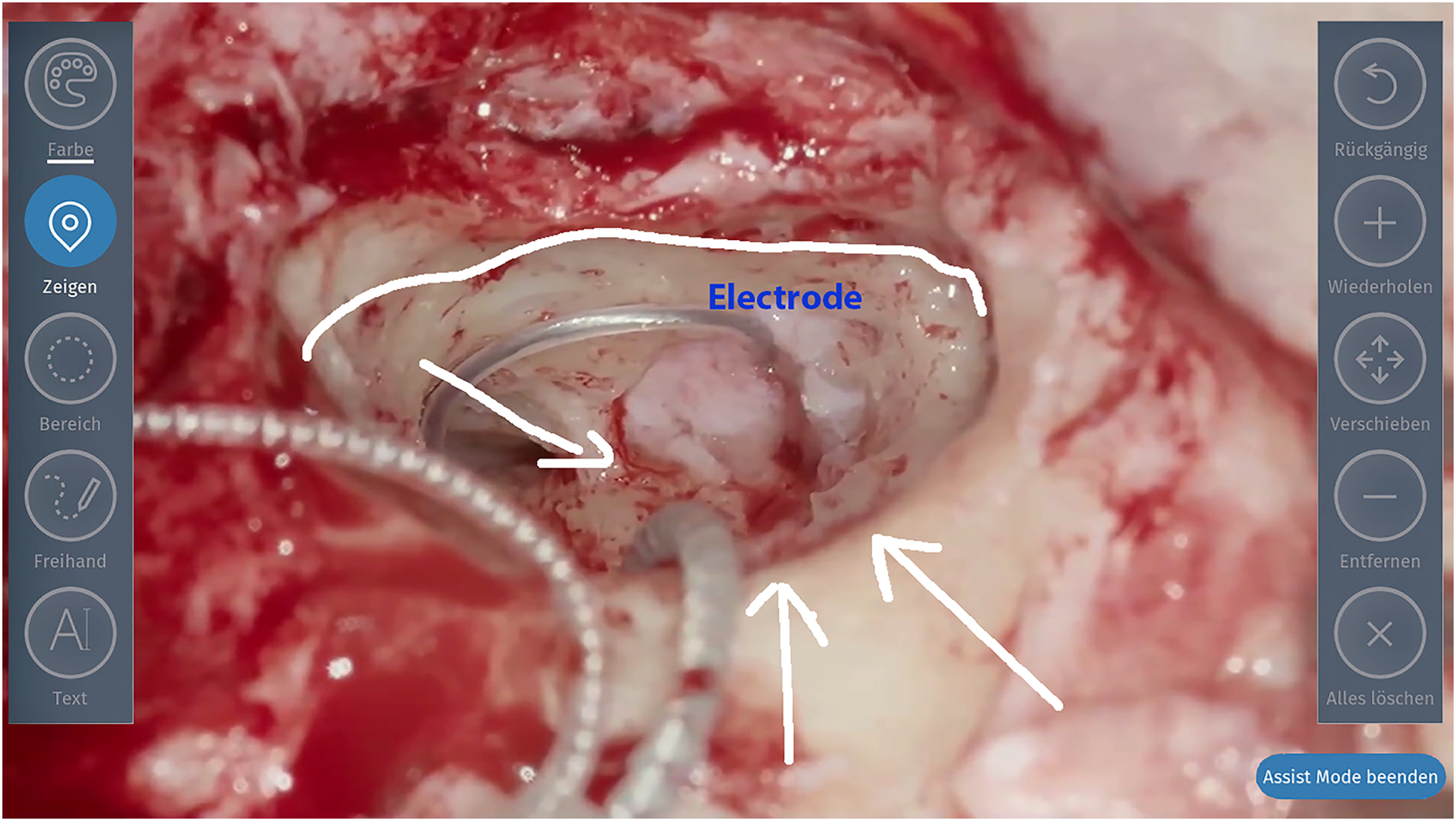

The DSM’s touch-screen user interface (UI) allows direct and intuitive annotation of the surgical scene. The annotations are fused into the image shown in the binocular. Because rendering virtual objects into the correct depth layers is complex, annotation is performed in 2D only (left view). During annotation, the microscope head is static with the brakes locked. Any release of the brakes or change of magnification or focus deletes the annotations, so that earlier annotations never point to structures that have since moved in the image. The annotation tool (Figure 4) has six modes: (1) cross marker, (2) circle, (3) box, (4) directional arrow, (5) free-hand, and (6) text. All annotations can be colored, edited, or deleted.
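The invalidation rule described above can be sketched as a small state holder: annotations are accepted only while the brakes are locked and are cleared as soon as the brakes are released or the magnification or focus changes. The class and field names (`OpticsState`, `focus_mm`, and so on) are hypothetical and do not represent the DSM’s software interface.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Mode(Enum):
    """The six annotation modes of the touch UI."""
    CROSS_MARKER = auto()
    CIRCLE = auto()
    BOX = auto()
    ARROW = auto()
    FREE_HAND = auto()
    TEXT = auto()


@dataclass(frozen=True)
class OpticsState:
    """Microscope state relevant to annotation validity (hypothetical fields)."""
    brakes_locked: bool
    magnification: float
    focus_mm: float


@dataclass
class Annotation:
    mode: Mode
    points: list[tuple[int, int]]  # 2D pixel coordinates in the left view
    color: str = "yellow"
    text: str = ""


class AnnotationLayer:
    """Holds 2D annotations and discards them whenever the optics change."""

    def __init__(self, optics: OpticsState) -> None:
        self._optics = optics
        self.annotations: list[Annotation] = []

    def add(self, annotation: Annotation) -> None:
        # Annotating is only allowed while the microscope head is braked.
        if not self._optics.brakes_locked:
            raise RuntimeError("brakes released: lock the brakes before annotating")
        self.annotations.append(annotation)

    def update_optics(self, new: OpticsState) -> None:
        # Brake release or a zoom/focus change would misalign existing marks.
        changed = (
            not new.brakes_locked
            or new.magnification != self._optics.magnification
            or new.focus_mm != self._optics.focus_mm
        )
        if changed:
            self.annotations.clear()
        self._optics = new


if __name__ == "__main__":
    layer = AnnotationLayer(OpticsState(True, 4.0, 250.0))
    layer.add(Annotation(Mode.ARROW, [(120, 80), (160, 110)], color="green"))
    layer.update_optics(OpticsState(True, 6.0, 250.0))  # zoom change
    print(len(layer.annotations))
```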

Annotation mode of live image. The blue border around the image indicates that the augmentation mode is activated.

Multispectral analysis

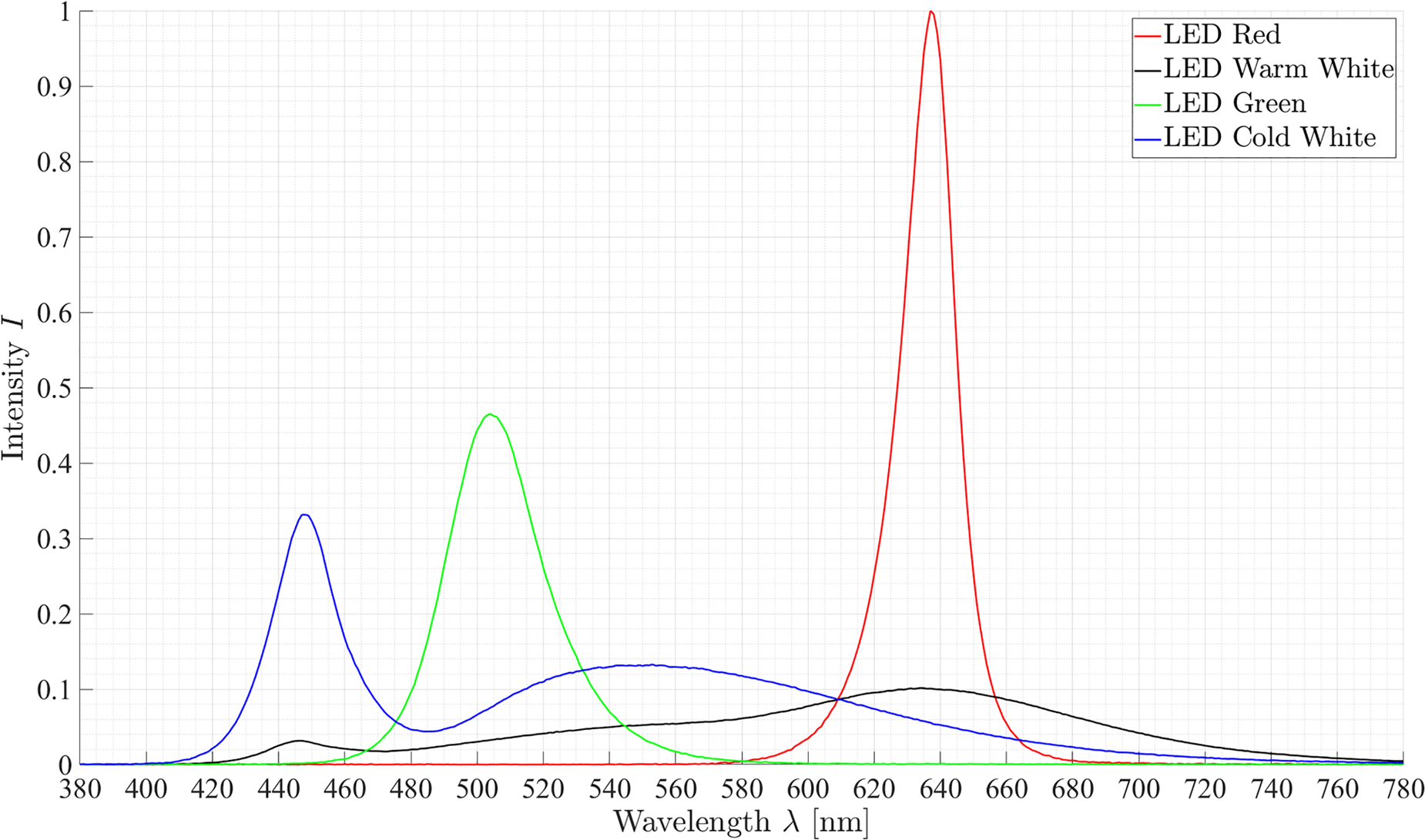

The DSM has an RGB sensor and an LED light source. The multispectral analysis unit uses the integrated LEDs, which are synchronized to the RGB sensor frequency, allowing it to capture a sequence of

Illumination spectra of the four LEDs.

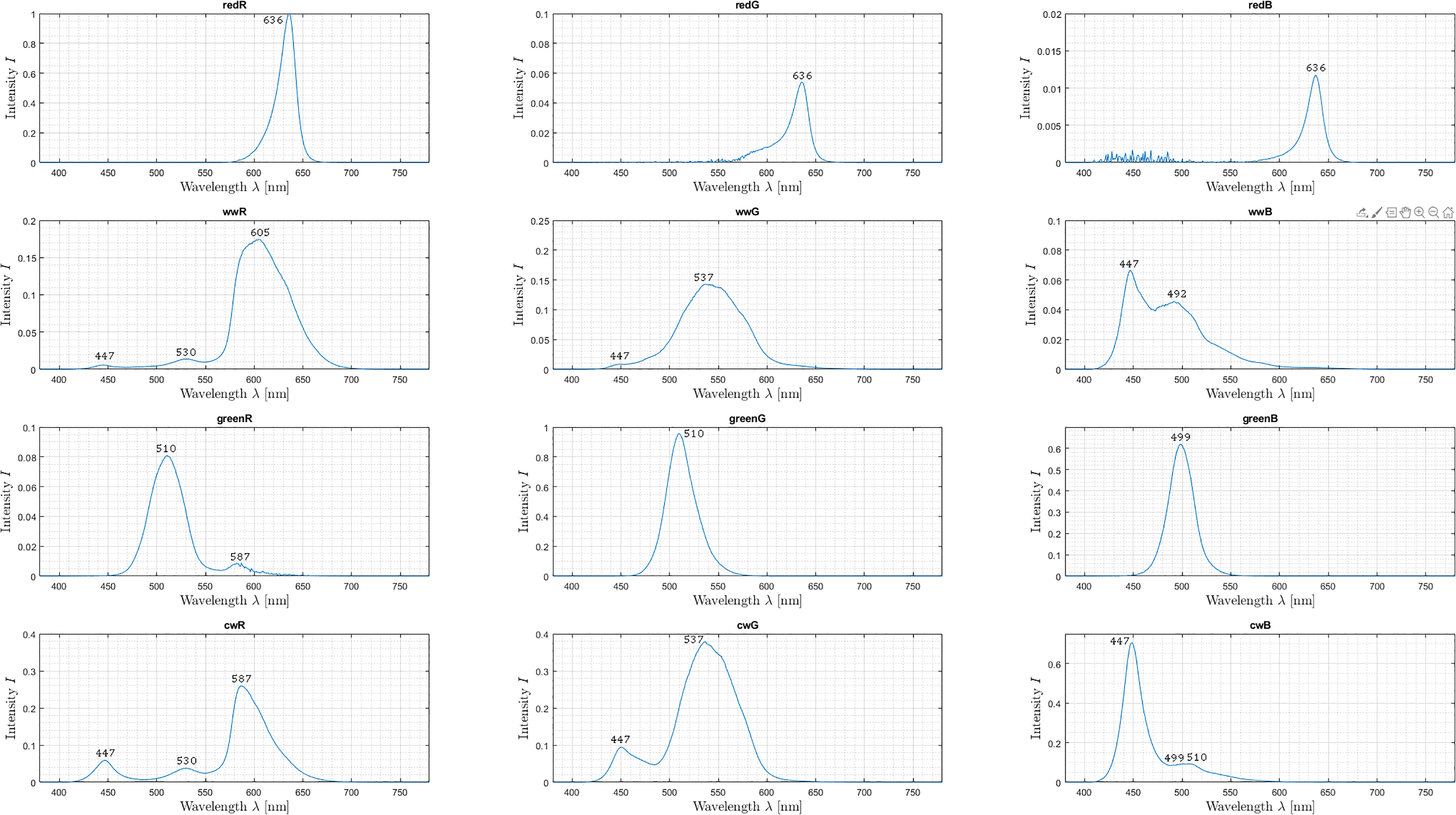

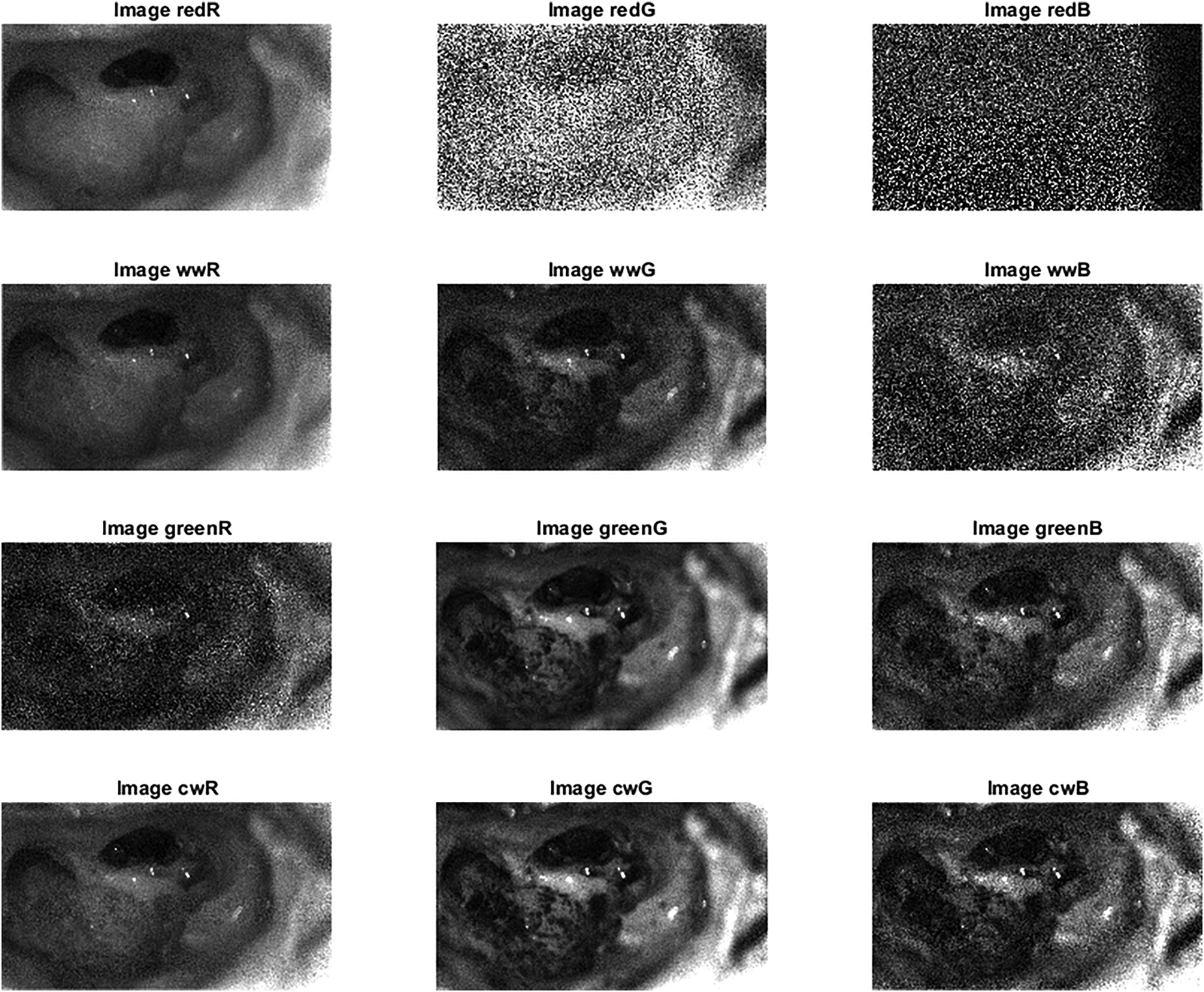

Hence, 12 spectral images (four LEDs × three RGB channels) can be recorded by combining the LED sequence with the RGB channels. Each spectral image captures specific spectral characteristics, which appear differently in the different spectra (see Figure 6).
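The step from four LED-synchronized RGB frames to 12 spectral images, and on to the spectral data cube mentioned in this section, amounts to stacking the channels. The NumPy sketch below assumes that the four frames are already co-registered (no motion between exposures) and stored as H × W × 3 arrays; the channel ordering is an illustrative choice, and any spectral unmixing into reconstructed wavelength images is deliberately omitted.

```python
import numpy as np


def build_spectral_cube(led_frames: list[np.ndarray]) -> np.ndarray:
    """Stack one RGB frame per LED into an H x W x (3 * n_leds) float cube.

    Channel order (assumed): LED 1 (R, G, B), LED 2 (R, G, B), ...
    so that cube[y, x] is the spectral vector of pixel (y, x).
    """
    if not led_frames:
        raise ValueError("need at least one frame")
    h, w = led_frames[0].shape[:2]
    for frame in led_frames:
        if frame.shape != (h, w, 3):
            raise ValueError("all frames must be H x W x 3 RGB images of equal size")
    return np.concatenate([f.astype(np.float32) for f in led_frames], axis=2)


if __name__ == "__main__":
    # Four synthetic 2 x 3 frames standing in for the four LED exposures.
    frames = [np.full((2, 3, 3), led, dtype=np.uint8) for led in range(4)]
    cube = build_spectral_cube(frames)
    print(cube.shape)  # (2, 3, 12): one 12-element vector per pixel
    print(cube[0, 0])
```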

A spectral imaging sequence showing the 12 captured spectral images. Relevant peaks in each spectrum are labeled with the corresponding wavelength.

To split up these characteristic peaks into

Thus, a sequence can be combined into a spectral data cube, where each spatial pixel is represented as a vector with a size of (

3D reconstruction

3D reconstruction is a crucial process for the next generation of intraoperative applications in microscopy and other surgical disciplines (e.g. visceral surgery). In the context of image-guided surgery and remote surgical education, it creates a true-to-scale 3D surface representation of the patient’s anatomy, which improves understanding and allows image-based measurement of anatomical landmarks. Our 3D reconstruction pipeline has been successfully evaluated and compared with other approaches.41
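The link between a stereo image pair and true-to-scale measurements can be illustrated with textbook triangulation for a rectified stereo pair, where depth follows from disparity as Z = f·B/d. This is a generic sketch, not the reconstruction pipeline evaluated in this work; it assumes rectified images, a focal length `f_px` in pixels, a baseline in millimeters, and a principal point `(cx, cy)`, all of which would come from the stereo calibration.

```python
import math


def triangulate(u: float, v: float, disparity: float,
                f_px: float, baseline_mm: float,
                cx: float, cy: float) -> tuple[float, float, float]:
    """Back-project left-image pixel (u, v) with disparity d into camera coordinates (mm)."""
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = f_px * baseline_mm / disparity  # depth from disparity
    x = (u - cx) * z / f_px
    y = (v - cy) * z / f_px
    return (x, y, z)


def landmark_distance_mm(p: tuple[float, float, float],
                         q: tuple[float, float, float]) -> float:
    """Euclidean distance between two reconstructed landmarks."""
    return math.dist(p, q)


if __name__ == "__main__":
    # Hypothetical intrinsics: f = 1000 px, baseline = 20 mm, principal point (640, 360).
    a = triangulate(640, 360, 100, 1000, 20, 640, 360)
    b = triangulate(690, 360, 100, 1000, 20, 640, 360)
    print(a, b, landmark_distance_mm(a, b))
```

Because Z is inversely proportional to d, a one-pixel disparity error matters more for small disparities, so accurate calibration of f and B directly affects measurement accuracy.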

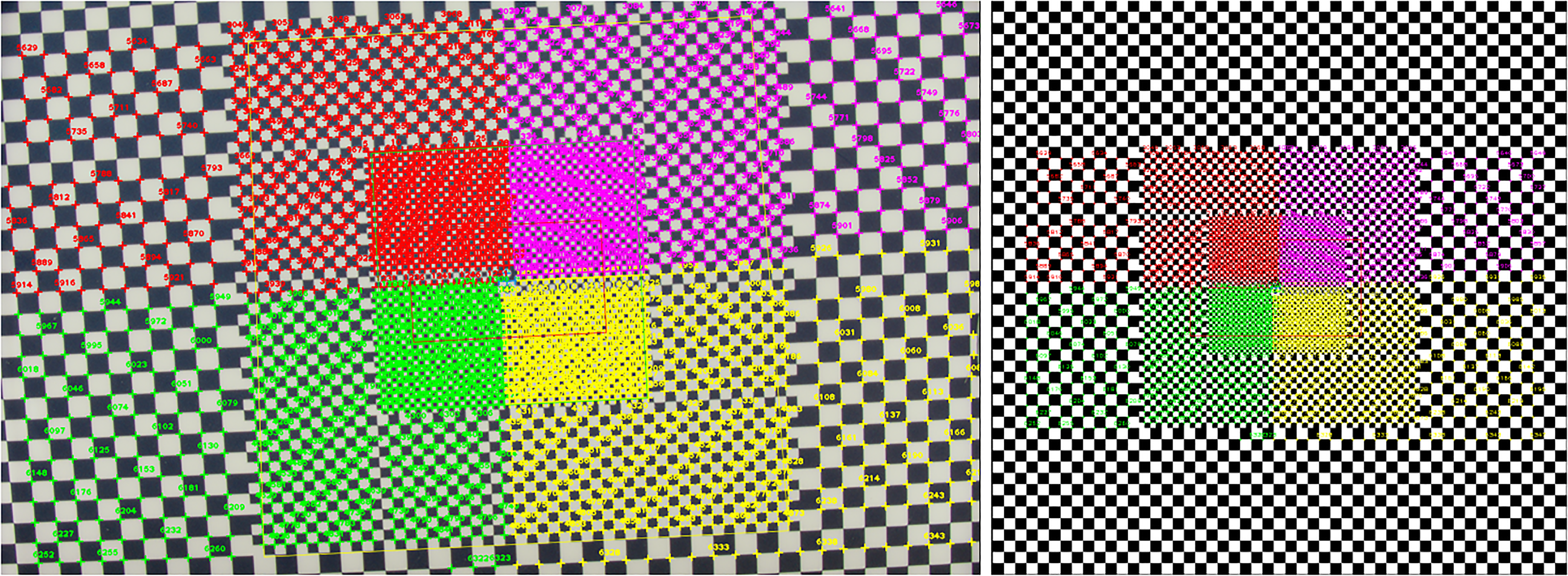

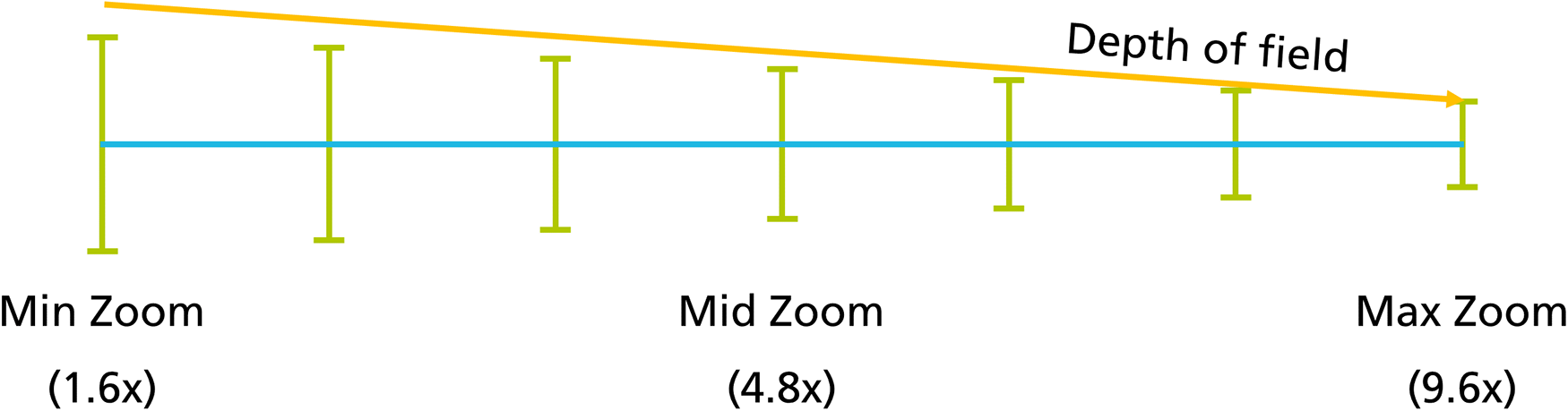

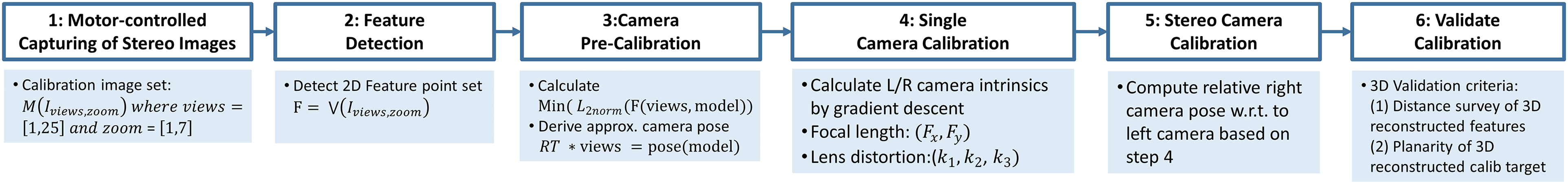

Before 3D reconstruction can be applied, the stereoscopic system must be calibrated to estimate the DSM’s optical lens parameters.42 The automated calibration principle for multiple zoom levels using a zoom-independent calibration chart (Figure 7) is depicted in Figure 8. Figure 9 shows the overall calibration process, which has six main steps.
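Since calibration is performed at discrete zoom levels, parameters for intermediate zoom settings must be obtained somehow at runtime. One simple option, sketched here as an assumption rather than the method used in this work, is linear interpolation of the focal length between neighboring calibrated levels. In practice, all intrinsic and extrinsic parameters would need similar treatment, and the values in the example are hypothetical.

```python
import numpy as np


def focal_length_at(zoom: float, calib_zooms, calib_focals_px) -> float:
    """Interpolate a focal length (pixels) between calibrated zoom levels."""
    zooms = np.asarray(calib_zooms, dtype=float)
    focals = np.asarray(calib_focals_px, dtype=float)
    if zooms.ndim != 1 or zooms.shape != focals.shape or zooms.size < 2:
        raise ValueError("need matching 1D arrays with at least two calibrated levels")
    if np.any(np.diff(zooms) <= 0):
        raise ValueError("calibrated zoom levels must be strictly increasing")
    # Refuse to extrapolate: outside the calibrated range, recalibrate instead.
    if not zooms[0] <= zoom <= zooms[-1]:
        raise ValueError("zoom setting outside the calibrated range")
    return float(np.interp(zoom, zooms, focals))


if __name__ == "__main__":
    # Seven hypothetical calibrated zoom levels with made-up focal lengths.
    levels = [1, 2, 3, 4, 5, 6, 7]
    focals = [1000, 1500, 2000, 2500, 3000, 3500, 4000]
    print(focal_length_at(2.5, levels, focals))
```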

Intermediate calibration showing the color-encoded 2D to 3D correspondence mapping of detected features and 3D model features. Left: Detected features in four different quadrants. Right: Reference 3D model features of rendered model in canonical view. Each feature has a unique ID for a detailed 2D/3D evaluation. 2D: two-dimensional; 3D: three-dimensional.

Calibration strategy: motor-controlled capturing of different zoom levels.

Calibration pipeline: motor-controlled capturing of different zoom levels with changing depth of field.

Surgical course

Surgical training today involves trainees looking directly at the situs or watching a 2D monitor. During non-critical situations in microscopic surgery, they may look into the binocular to perceive the surgical scene in 3D. However, this is time-consuming and can delay the surgery significantly. Hence, in critical situations teaching continues only to a limited extent, although such situations are important for building up valuable surgical knowledge.

Courses for CME typically have

Additional problems arose from the COVID-19 pandemic in 2020. Many courses were canceled, leading to a significant delay in the training of clinical staff.43 Therefore, we designed a hybrid course using XR under the highest hygienic standards, with the approval of the pandemic staff of Charité – Universitätsmedizin Berlin and accompanied by the Medical Association of Berlin.

Different lecturers gave lectures in a conventional format, each followed by a question-and-answer session. All contents were streamed in 3D video and enriched with intraoperative information using the described tools. The XR streams were sent to different remote lecture halls, and a 2D stream was sent to video platforms. During surgery, remote participants in the lecture halls were able to interact with the moderating surgeon, for example, by asking questions or sending images, whereas participants on the 2D video platform could only send questions via chat.

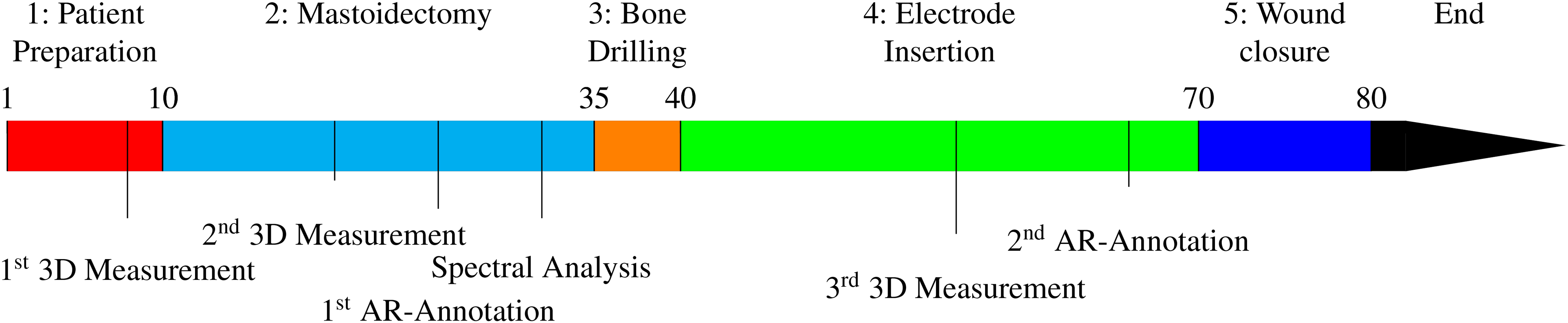

Cochlear implant (CI)

CI surgery was chosen for this study for four reasons. (1) Complexity: as surgery at the lateral skull base, it is difficult to learn, and only a small number of experts exist. Moreover, its worldwide relevance is increasing.44 (2) Highly standardized procedure: predictable intervention with duration of

Timeline in minutes for a cochlear implant surgery: timestamps of intraoperative AR features and annotation tools for remote surgical education. All procedure steps are described in more detail in the Appendix.

Results

Technical results

The individual results of the three intraoperative tools are presented, followed by the system performance.

The annotation tool allows important tissue structures to be annotated in different colors to highlight them for remote participants. In addition, the annotations can be augmented with text. The annotation tool was validated independently in an in vivo study with participants of different training levels, which showed an improvement in learning outcome as well as in communication between trainee and trainer.47 All augmentations are visualized instantly, without perceptible latency.

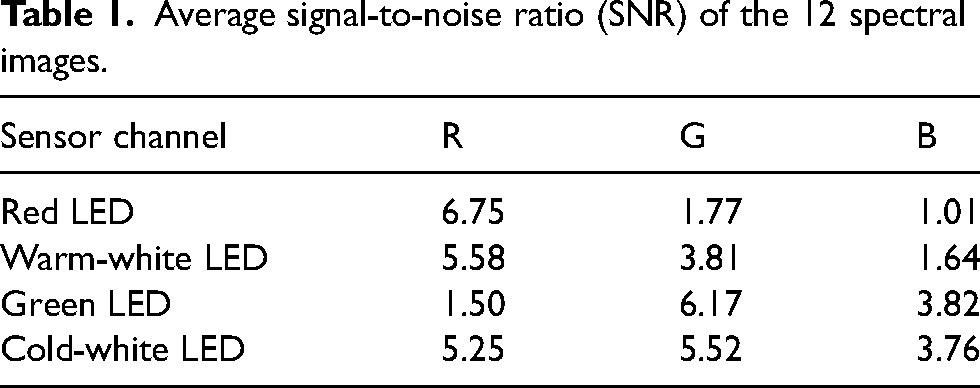

The multispectral unit analyzes the tissue in the situs, allowing different tissue types to be differentiated. Because only one LED is switched on at a time, the illumination intensity of the single-LED images is reduced by a factor of

Average signal-to-noise ratio (SNR) of the 12 spectral images.
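As a rough illustration of how per-image SNR values like those summarized above can be obtained, the sketch below computes the SNR of a homogeneous region of interest as 20·log10(μ/σ) in decibels for each channel of a spectral cube. This definition, the function names, and the choice of region are assumptions; the exact SNR definition used for the figure is not stated in this section.

```python
import numpy as np


def snr_db(region: np.ndarray) -> float:
    """SNR of a homogeneous region as 20*log10(mean/std) in dB (assumed definition)."""
    values = np.asarray(region, dtype=np.float64)
    mean = values.mean()
    sigma = values.std()
    if mean <= 0:
        raise ValueError("region must have a positive mean intensity")
    if sigma == 0:
        return float("inf")  # noise-free region
    return float(20.0 * np.log10(mean / sigma))


def snr_per_channel(cube: np.ndarray, roi: tuple[int, int, int, int]) -> list[float]:
    """Return the SNR of each spectral channel inside roi = (y0, y1, x0, x1)."""
    y0, y1, x0, x1 = roi
    patch = cube[y0:y1, x0:x1, :]
    return [snr_db(patch[:, :, k]) for k in range(cube.shape[2])]
```

Since a single active LED delivers only part of the total illumination, a lower mean signal relative to sensor noise translates directly into lower SNR values for the single-LED images.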

Figure 11 gives an overview of all 12 spectral images, showing that sensor channel B holds no information when the scene is illuminated with the red LED. This is expected behavior, as the spectrum of the red LED has a peak at

The 12 acquired spectral images of the third patient.

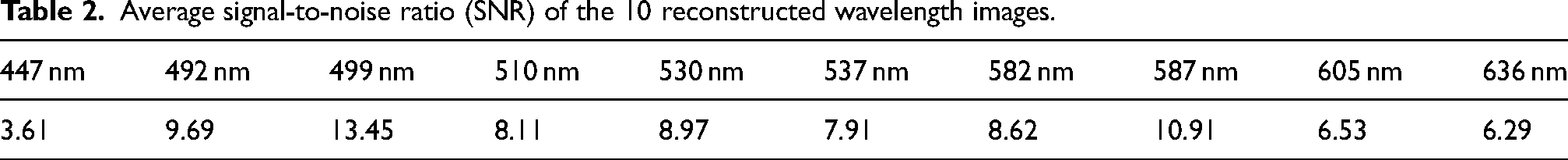

Average signal-to-noise ratio (SNR) of the 10 reconstructed wavelength images.

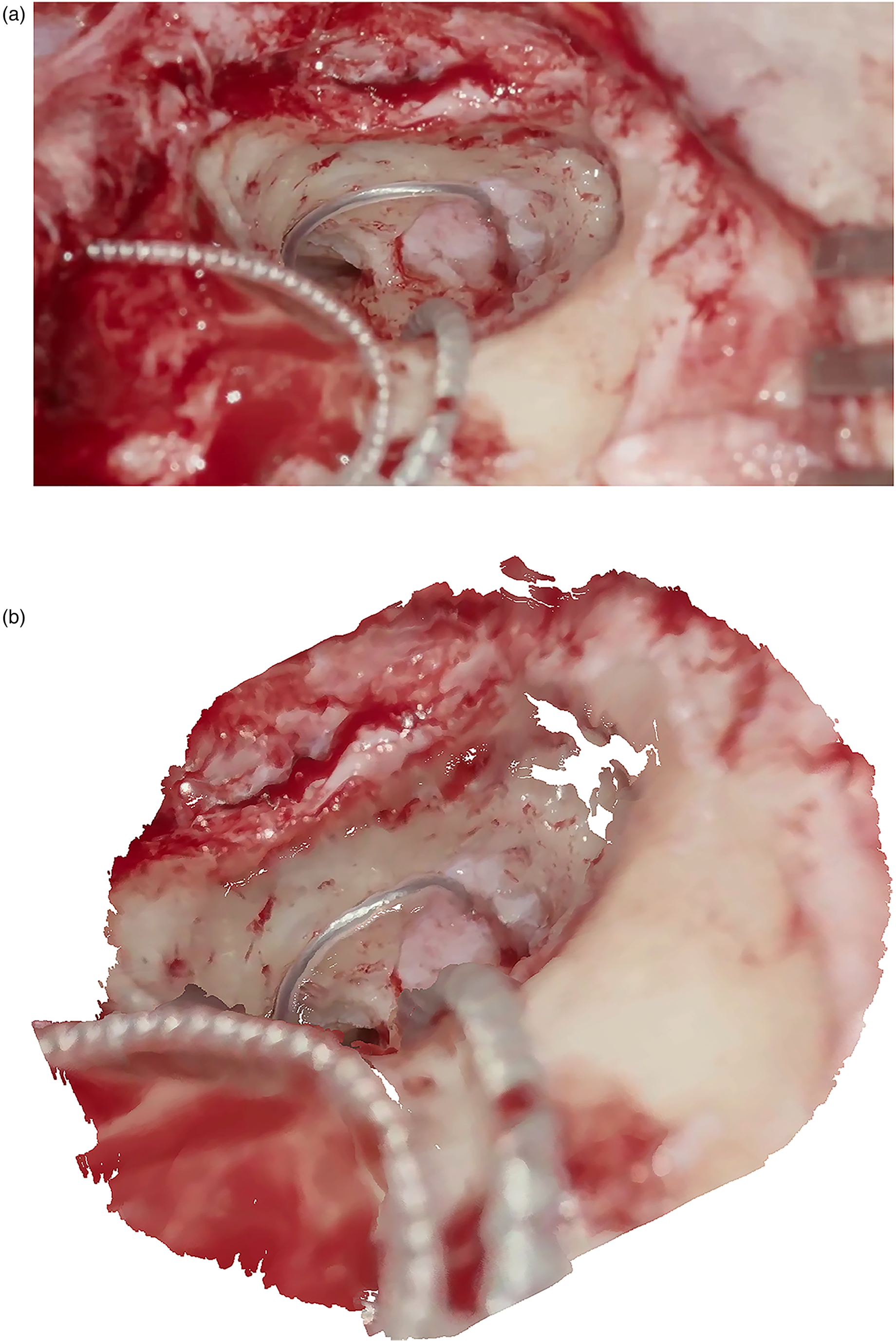

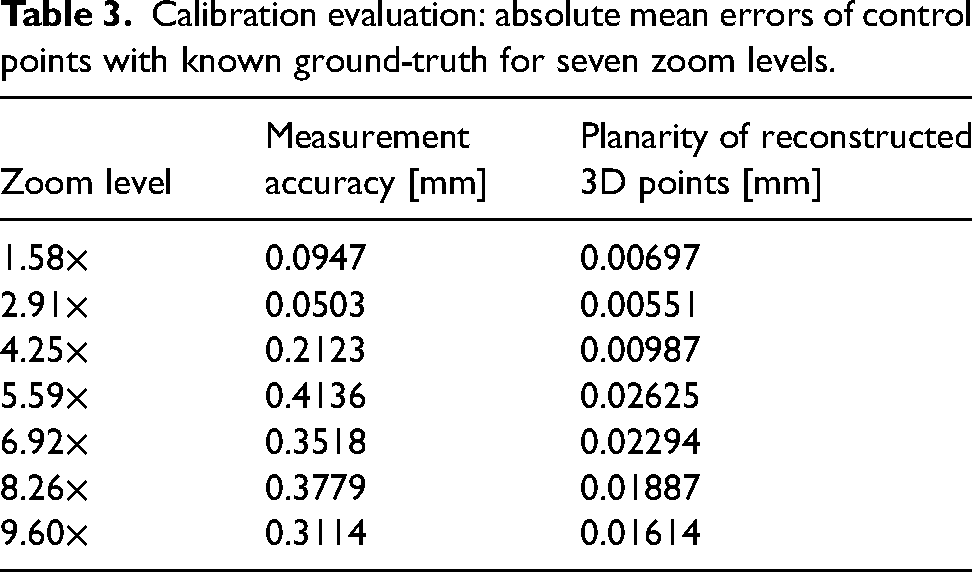

The stereo system was calibrated for seven zoom levels. Calibration results are listed in Table 3. The accuracy at each zoom level is in the sub-millimeter range and is best at minimum zoom (

Comparison of 2D image and corresponding 3D reconstruction. (a) Left view of stereoscopic image pair used for 3D reconstruction. (b) Dense reconstructed point cloud of the surgical scene during a CI insertion. CI: cochlear implant; 2D: two-dimensional; 3D: three-dimensional.

Calibration evaluation: absolute mean errors of control points with known ground-truth for seven zoom levels.
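The error metric in the caption above can be computed from reconstructed and ground-truth control-point coordinates. The sketch assumes that the error of each control point is its Euclidean distance to the known ground-truth position and that these distances are averaged per zoom level; whether the reported errors are Euclidean or per-axis is an assumption here.

```python
import numpy as np


def mean_abs_error_mm(measured, ground_truth) -> float:
    """Mean Euclidean error (mm) of N reconstructed control points vs. ground truth."""
    m = np.asarray(measured, dtype=float)
    g = np.asarray(ground_truth, dtype=float)
    if m.shape != g.shape or m.ndim != 2 or m.shape[1] != 3:
        raise ValueError("expected two N x 3 arrays of 3D points")
    return float(np.mean(np.linalg.norm(m - g, axis=1)))


def errors_by_zoom(points_by_zoom: dict) -> dict:
    """Map each zoom level to its mean error; values are (measured, ground_truth) pairs."""
    return {zoom: mean_abs_error_mm(m, g) for zoom, (m, g) in points_by_zoom.items()}
```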

Runtime performance

The pipeline has three parts that affect the overall runtime performance: (1) the main digital video processing and transcoding pipeline, (2) the multispectral analysis module, which adds

Trainee feedback and didactic results

We broadcast three AR-based 3D videos of CI surgeries in January, September, and November 2020. The system scaled up to five remote locations in parallel in two countries: the Netherlands (TU Delft, Rotterdam/Erasmus MC) and Germany (Fraunhofer HHI Berlin, Ludwig Maximilian University of Munich, Charité – Universitätsmedizin Berlin). The didactic component was taught by surgeons (

All participants were able to view additional data as XR representations, such as patient vital signs, preoperative imaging (e.g. CT and MRI), intraoperative distance and functional measurements (e.g. electrocochleography), and tissue analysis. This additional information helped to explain surgical steps and methods. Basic comprehension questions, for example about size ratios and tissue types, could be answered easily. The ability to annotate anatomical regions is a key feature for remote mentoring and led to more detailed questions about the procedure and its challenges. Furthermore, all participants were able to fully adopt the surgeon’s view, even in surgically demanding situations, and to ask the moderating surgeon relevant questions immediately. This gives deeper insight into the actions of an experienced surgeon, so that essential aspects can be taught faster and more transparently.

To evaluate the training course, we prepared a questionnaire of 19 questions for the participants, based on Weiss et al.49 The six questions taken from that work were extended by 10 further questions to specifically address the training effect of AR, possible future features, and the technical knowledge of the participants. In total, 62 of 82 questionnaires were returned; the mean age of the respondents was (38.3 ± 4.5) years. Questionnaires were returned only from the sites where a 3D live transmission was provided. The 68 participants who took part via the 2D YouTube livestream did not return any questionnaires; because these participants were anonymous, it was not possible to actively request questionnaires from them.

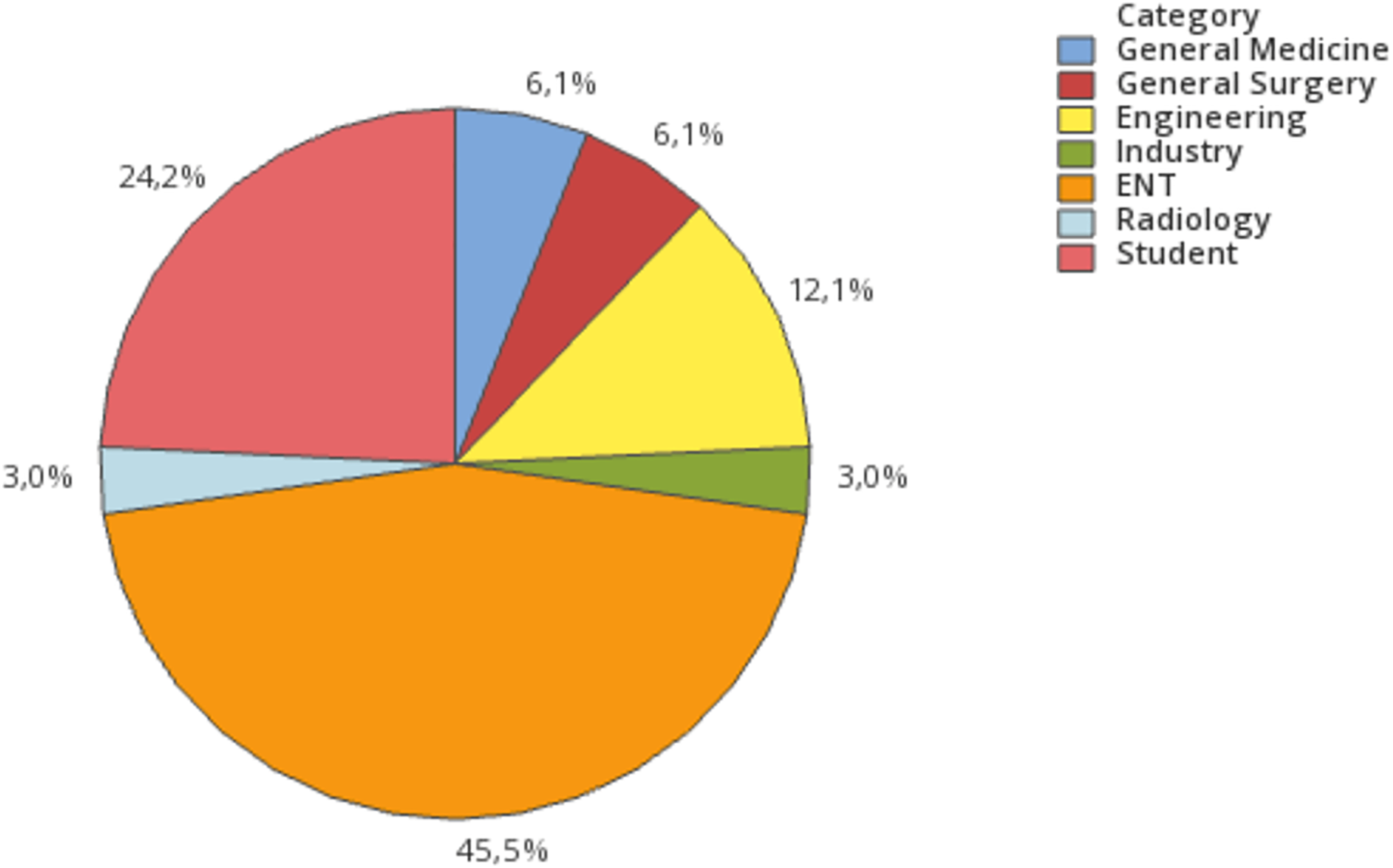

Participants in the live 3D-sessions were from different professional groups: ENT doctors (

Professional groups of the registered participants.

The first course, which was mainly dedicated to testing the technical setup with a limited number of participants, was attended by 10 doctors in specialist training for ENT in a seminar room with the described, virtually latency-free 3D setup. The second course was attended by a larger group of 33 participants at TU Delft, the Netherlands. The third course had 39 participants at the live transmission sites with 3D setups in Berlin, Rotterdam, and Munich and was also streamed in 2D via YouTube. Due to the COVID-19 pandemic, the number of participants was restricted at all live transmission sites.
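The participant numbers reported in this section can be cross-checked with a few lines of arithmetic: the three live courses add up to the 82 participants from whom questionnaires were requested, the 68 YouTube participants bring the total to 150, and 62 returned questionnaires correspond to a response rate of about 75.6%.

```python
# Participant counts as reported for the three courses and the YouTube stream.
live_participants = {"course 1": 10, "course 2": 33, "course 3": 39}
youtube_participants = 68
questionnaires_returned = 62

live_total = sum(live_participants.values())
overall_total = live_total + youtube_participants
response_rate = questionnaires_returned / live_total

print(live_total, overall_total, f"{response_rate:.1%}")  # prints: 82 150 75.6%
```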

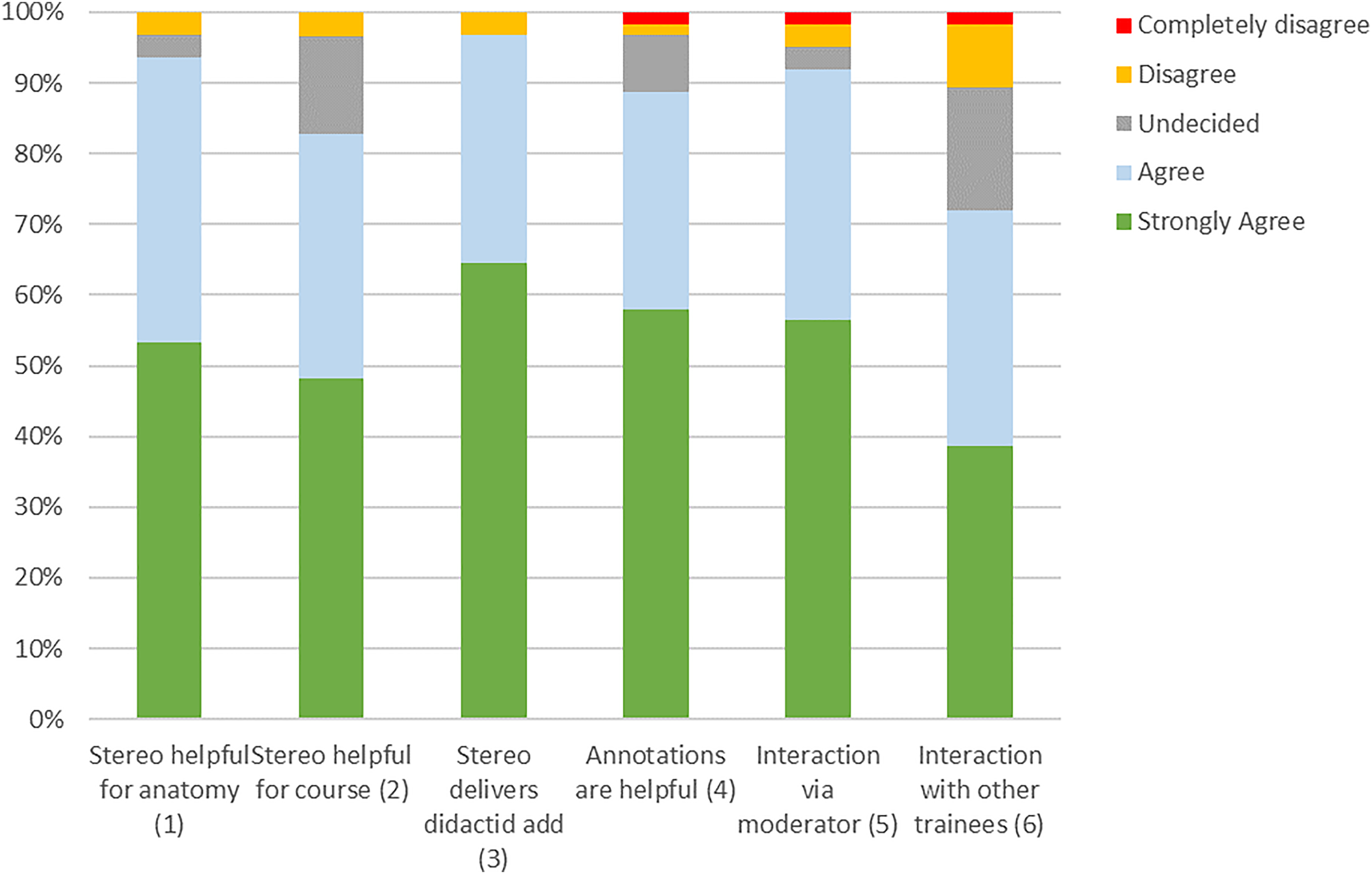

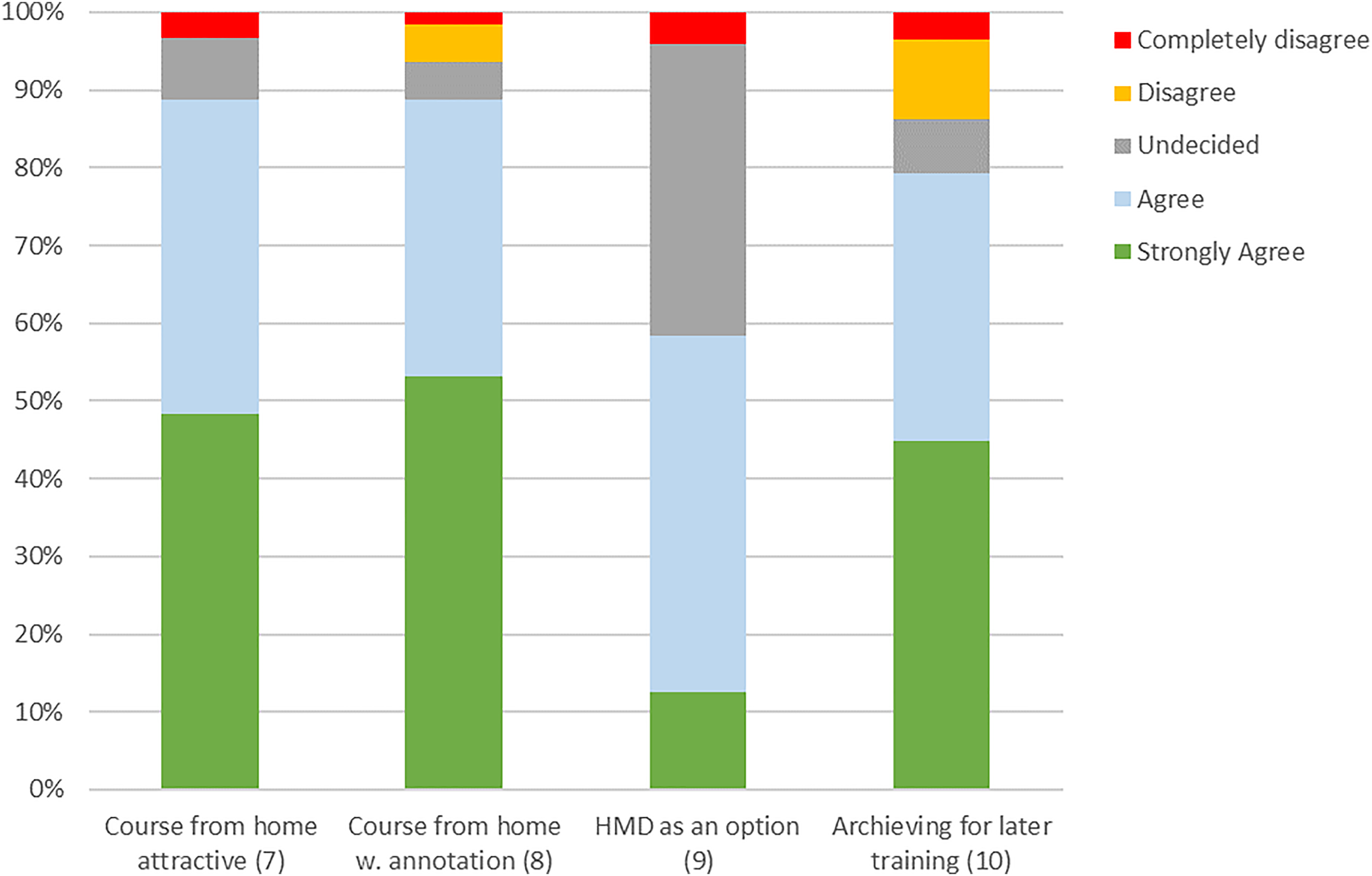

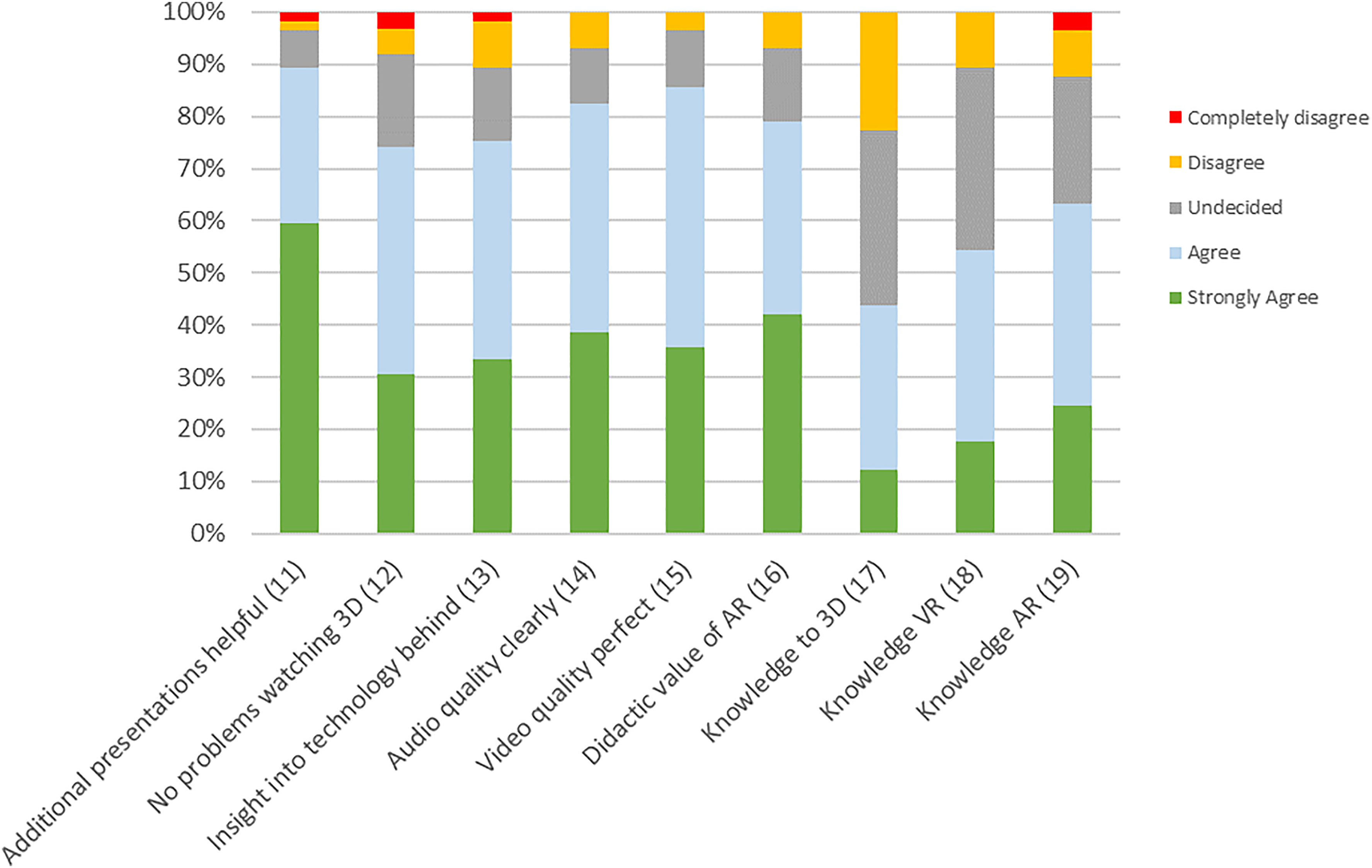

The questionnaire (see the Appendix) was divided into questions about the training effect, possible future features, and the technical knowledge of the participants. Each question could be answered with one of five ratings (“strongly agree”, “agree”, “unsure”, “disagree”, “strongly disagree”) (Figures 14 to 16).
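Agreement rates such as those reported in this section can be derived from the raw ratings by counting “agree” and “strongly agree” answers. The sketch uses the five-point scale listed above. Because the split between “agree” and “strongly agree” is not reported for the stereo-anatomy question, the example lumps those 58 answers together (which does not change the share) and maps “undecided” to the scale’s “unsure”.

```python
from collections import Counter

SCALE = ("strongly agree", "agree", "unsure", "disagree", "strongly disagree")


def agreement_share(responses: list[str]) -> float:
    """Fraction of responses that are 'agree' or 'strongly agree'."""
    counts = Counter(responses)
    unknown = set(counts) - set(SCALE)
    if unknown:
        raise ValueError(f"ratings not on the five-point scale: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no responses")
    return (counts["strongly agree"] + counts["agree"]) / total


if __name__ == "__main__":
    # Stereo-anatomy question: 58 (strongly) agree, 2 unsure, 2 disagree.
    answers = ["agree"] * 58 + ["unsure"] * 2 + ["disagree"] * 2
    print(f"{agreement_share(answers):.1%}")
```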

Resulting answers of the questions about features used during the training session (n = xx).

Results of the questionnaire part about additional possibilities for future training courses (n = xx).

Results of the questionnaire part about the technology and knowledge of technical solutions.

When asked whether stereo visualization helps to perceive anatomy better than a two-dimensional view, 58 participants answered “agree” or “strongly agree”, two were “undecided”, and two “disagreed”. Similarly, in the question whether stereo visualization is helpful to understand the course of preparation,

In the part of the questionnaire assessing additional possibilities for future training courses (Figure 15), most participants found the possibility of attending an online course from home attractive. Only

The questionnaire part about technical details (Figure 16) showed that additional presentations describing the background of the technology used are of interest in

It could be shown that AR has a strong didactic value (agreed in

Conclusion

TeleSTAR provides a highly scalable solution for surgical education and training that addresses problems and limitations of CME. The survey showed results comparable to those of other reported surgical live streaming technologies.29 In our expanded setup, the TeleSTAR concept specifically focuses on the surgical field-of-view and addresses the lack of image-based annotation and audio-visual commentary options. Trainees in different remote locations can follow a surgery as a 3D live stream on displays or HMDs and are provided with important additional information through the XR tools, leading to increased surgical transparency and direct interaction with the surgeons. This is achieved by an adaptive combination of modular software and hardware components, which enables seamless audio-visual communication between experts and trainees. The system is scalable and allows easy transfer to other surgical domains. In addition, TeleSTAR can strengthen international collaboration in surgical education.

The interactive course design promotes direct knowledge transfer between inexperienced and experienced participants. New surgical ideas and concepts for intraoperative assistance can develop much faster through large group discussions on surgical workflows. The results indicate that our XR-based setup is a valuable tool during the COVID-19 pandemic and also shows great potential for surgical education in daily routine, as it can positively affect the learning curve of trainees.

In the future, we plan to build a larger training platform that combines scalable, adaptive, and interactive online and offline courses with integrated 3D/AR streaming. The key feature of simulation-based medical education is direct feedback to trainees based on their performance during a learning experience.48 The modularity of our system allows easy integration and assignment of different training tasks. Feasible tasks could be, for example, parallel estimation of tumor size during the procedure using knowledge of slice imaging, or identification of predefined surgical landmarks. Trainees’ virtual answers could be captured and compared with the results of the performing surgeon.33
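To make the proposed feedback tasks concrete, the sketch below scores two hypothetical trainee answers against the performing surgeon’s reference: a landmark marked in the image (a hit if it lies within a pixel tolerance) and a tumor-size estimate (relative error). The function names, the 25-pixel tolerance, and the scoring scheme are illustrative assumptions for a future feature, not part of the existing system.

```python
import math


def score_landmark(trainee_px: tuple[float, float],
                   reference_px: tuple[float, float],
                   tolerance_px: float = 25.0) -> tuple[float, bool]:
    """Return (distance in pixels, hit) for a trainee's landmark vs. the surgeon's mark."""
    distance = math.dist(trainee_px, reference_px)
    return distance, distance <= tolerance_px


def size_estimate_error(estimate_mm: float, reference_mm: float) -> float:
    """Relative error of a trainee's size estimate with respect to the surgeon's value."""
    if reference_mm <= 0:
        raise ValueError("reference size must be positive")
    return abs(estimate_mm - reference_mm) / reference_mm


if __name__ == "__main__":
    print(score_landmark((100, 100), (103, 104)))  # (5.0, True)
    print(size_estimate_error(12.0, 10.0))
```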

Finally, the whole concept offers a new approach for clinical decision making and remote surgical education by its easy integration into an interactive processing chain. It supports intraoperative assistance from remote or on-site experts allowing discussion of complicated procedures while guaranteeing the same surgical view and consistent surgical data.

In conclusion, the proposed TeleSTAR platform is a training platform with high potential and an efficient tool for visualizing intraoperative results from medical examinations and clinical notes, as well as for sharing relevant information between remote experts.

Footnotes

Acknowledgements

Informed consent was obtained from all individuals included in this work. The research related to human use complies with all relevant national regulations and institutional policies, was performed in accordance with the tenets of the Helsinki Declaration, and has been approved by the Ethics Committee of Charité – Universitätsmedizin Berlin, Germany.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by EIT-Health, Campus under Grant No. 20467; EIT-Health is supported by the EIT, a body of the European Union. Further, it was partially funded by the German Federal Ministry of Education and Research (BMBF) under Grant No. 16SV8061 (MultiARC) and No. 16SV8018 (COMPASS).

Notes

Appendix

Timestamp 1: 1–10 min

After disinfection, infiltration of Xylonest with adrenaline. Retroauricular skin incision. Preparation of the musculoperiosteal flap and harvesting of fascia for later transplantation.

Timestamp 2: 10–35 min

Exposure of the planum mastoideum and careful control of bleeding (hemostasis). Mastoidectomy with exposure of the short process of the incus and the semicircular canal. Creation of Wullstein’s window, displaying the two nerves (facial nerve and chorda tympani) and the chorda–facial angle, with complete opening until the stapedial tendon and the middle ear structures are clearly visible. Exposure of the round window.

Timestamp 3: 35–40 min

Drilling of the implant site in the bony skull to place the CI.

Timestamp 4: 40–70 min

Complete electrode insertion. Careful sealing of the electrode entry point with pieces of fascia. Regulation-compliant CI device testing (impedance measurements), shown as picture-in-picture. Verification of the acoustic (stapedius) reflex. Telemetric recording of the potentials. Re-implantation of fascia and, if needed, muscle grafts to fixate the electrode cable.

Timestamp 5: 70–80 min

Re-implantation of intraoperatively collected bone dust onto the electrode array and the bony canal leading to the implant. Surgical wound closure. End of procedure.

Feedback Questionnaire

To improve surgical training in the future and further upcoming TeleSTAR courses, we would like to ask you to answer some questions. Do you agree with the following statements?

Thanks to the 3D/stereo representation of the surgical field, I can perceive the anatomical topography and structures better than with a conventional 2D representation.

I can follow the course of the preparation better with the 3D/stereo representation of the surgical field than with a 2D representation.

The possibility to see the surgical field as a co-observer in 3D/stereo provides a didactic added value for surgical courses.

The possibility of integrating graphical annotations into the video of the surgical field adds didactic value for surgical courses.

The possibility to see the operation live via 3D/stereo video transmission from home would make an online distance-learning course very attractive.

The interaction with the surgeon via a moderator and the annotation mode (bi-directional?) would provide additional didactic value!

The interaction with other trainees using a lecture room-based annotation mode would provide additional didactic value!

The possibility to see the operation with annotation live via 3D/stereo video transmission from home would make an online distance-learning course even more attractive!

What do you think of head-mounted displays (AR/VR glasses) for watching a surgery in 3D?

The 3D/stereo video data of a surgery should be archived for self-study and made available online for registered users.

Additional medical lectures/presentations accompanying the live surgery are helpful for better understanding what is done during the surgery and how it is performed.

I have no problems watching 3D/stereo movies and videos (e.g. discomfort, dizziness, headaches).

It is interesting to gain insight into how image capturing and video transmission with the digital surgical microscope are realized.

The audio quality of the transmission allowed me to understand everything clearly.

The video quality of the transmission was clear and without disturbing artefacts.

How do you rate the didactic value of the presented AR features (annotation mode and depth visualization)?

What previous knowledge do you have regarding 3D/VR/AR—(1: no knowledge–5: Expert)?

Which 3D/AR visualization features could be also useful for surgical training?

Do you have any more comments/suggestions?