Abstract

Management response processes following evaluations foster accountability, evidence-informed decision-making and organisational learning. This article examines trends, good practices and remaining challenges in management response systems across multilateral organisations within the United Nations system or in close collaboration with it. It draws on a rapid evidence assessment – combining interviews with key informants from 14 multilateral organisations and a review of over 60 documents (evaluation policies, guidelines and annual reports) from these organisations – complemented by insights from a dissemination workshop with 26 United Nations Evaluation Group member organisations. Findings indicate substantial variation in management response practices, explained by structural and operational differences such as reporting lines and levels of decentralisation. Persistent gaps include diffuse ownership, limited validation and infrequent public reporting. This article highlights effective and transferable practices, such as integrating management response actions into corporate planning, linking implementation to key performance indicators and using dashboards to track progress.


Introduction

The multilateral development system brings together international organisations – including United Nations (UN) agencies, as well as other organisations such as Gavi, the Vaccine Alliance (Gavi), the World Bank and the Global Fund to Fight AIDS, Tuberculosis and Malaria (Global Fund) – through which countries cooperate to address global challenges (OECD, 2026; UN, 2026). Within organisations across the multilateral system, systematic evaluations fulfil a triple mandate: (1) to track progress towards agreed objectives; (2) to supply evidence for adaptive, mid-course corrections that enhance programme effectiveness and efficiency, and to foster organisational learning that informs future policies, strategies and programmes; and (3) to offer credible assurance to both donors and partner-country stakeholders about the value, quality and sustainability of investments. Differences in organisational mandates, governance arrangements, size and decentralisation shape how evaluations are structured and conducted across the multilateral system, with evaluation functions ranging from a single mid-level officer embedded in a planning unit to fully independent evaluation offices with dedicated staff.

The United Nations Evaluation Group (UNEG) provides system-wide guidance to evaluation units of the UN system – which includes UN departments, specialised agencies, funds and programmes, and affiliated organisations – through its UNEG Norms and Standards for Evaluation, which set out basic principles and best practices for managing, conducting and using evaluations (UNEG, 2016a, 2026). While intended for UN entities, this guidance also informs the evaluation practices of several non-UN multilateral organisations, such as Gavi, the Global Fund and the World Bank. Moreover, the Organisation for Economic Co-operation and Development (OECD) Development Assistance Committee (DAC) Quality Standards for Development Evaluation (OECD, 2010) provide a complementary reference for multilateral organisations involved in development assistance. Both documents stipulate that every evaluation must be accompanied by a formally documented Management Response (MR) that indicates whether recommendations are accepted, outlines concrete actions to be taken and assigns responsibility for follow-up (OECD, 2010; UNEG, 2016a). In addition, organisations should put in place systematic mechanisms for recording, monitoring and reporting on the follow-through of agreed actions, to ensure that findings, conclusions and recommendations emerging from evaluations make relevant and timely contributions to organisational learning, informed decision-making and accountability for results (OECD, 2010; UNEG, 2016a).

The importance of fostering the use of evaluation has been emphasised since the earliest issues of Evaluation. Finne et al. (1995) and Owen and Lambert (1995) both highlighted the evaluator’s role in fostering learning and use, either through participatory, co-generative approaches that support programme stakeholders and inform managerial practice, or through the production of evaluation evidence that meets organisational learning needs for decision-making.

As part of broader and ongoing efforts to understand how to enhance the use of evaluation evidence, several studies have documented how MR processes are applied across the UN system and within the wider development assistance community. In 2014, EuropeAid conducted a study on how evaluation evidence is used by OECD DAC member agencies. Key challenges included the lack of formal processes to track MR implementation, the lack of clarity on who is responsible for this follow-up and concerns that the process could become a “tick-box” exercise (Bossuyt et al., 2014). Two reviews by the UNEG Evaluation Use Working Group, published in 2016 and 2020, examined trends across the UN system (UNEG, 2016b, 2020). Both reviews highlighted persistent gaps in the production of MRs to evaluations, along with important variations in how organisations follow up on and report on implementation, including their engagement with senior management and governing bodies (UNEG, 2016b, 2020). No further reviews of MR processes have been identified since the 2020 UNEG study.

When the Multilateral Organisation Performance Assessment Network (MOPAN) – a network of 22 donor countries that assesses the performance of multilateral organisations – conducts its assessments, it expects these organisations to demonstrate evidence-based planning and programming. This includes the existence of a clear accountability system to ensure timely responses, follow-up and use of evaluation recommendations, as required under Key Performance Indicator 8 (KPI 8) (MOPAN, 2020). Its 2015–2016 assessment of Gavi, the Vaccine Alliance – a public-private partnership dedicated to expanding equitable and sustainable vaccine access in low- and middle-income countries – identified MR processes as an area needing improvement. More specifically, the MOPAN assessment highlighted the need for a more robust accountability mechanism to ensure both the preparation of MRs and the systematic follow-up of their implementation (MOPAN, 2016). In response, Gavi’s Evaluation and Learning Unit (EvLU) undertook several measures to strengthen its MR processes. These included a rapid evidence assessment to document how other multilateral organisations ensure the production, tracking and reporting of MRs (Gavi, 2023). The findings and recommendations from this assessment informed the institutionalisation of a follow-up process for the implementation of MR actions, including the creation of a KPI and systematic reporting to Gavi’s Senior Leadership when MR implementation faces delays, thereby contributing to an improved score on KPI 8 in the 2024 Gavi MOPAN assessment (MOPAN, 2024).

This study sought not only to inform Gavi’s efforts to improve its own MR processes, but also to serve as a resource for the broader evaluation community, including UNEG members and the OECD DAC Network on Development Evaluation (EvalNet). The findings were subsequently shared and discussed during a workshop at the 2024 UNEG Evaluation Practice Exchange (EPE) conference. This article provides a synthesis of insights from both the rapid evidence assessment and the dissemination workshop, offering an overview of policies, processes and incentives currently used to develop, track and report on MRs across multilateral organisations, and highlights key emerging lessons.

Methods

Findings reported in this article draw on a two-step process: (1) a rapid evidence assessment conducted between February and March 2023 and combining a desk review and key informant interviews and (2) documentation of additional insights gathered during a dissemination workshop at the January 2024 UNEG EPE conference in Málaga (Spain).

Rapid evidence assessment (February–March 2023)

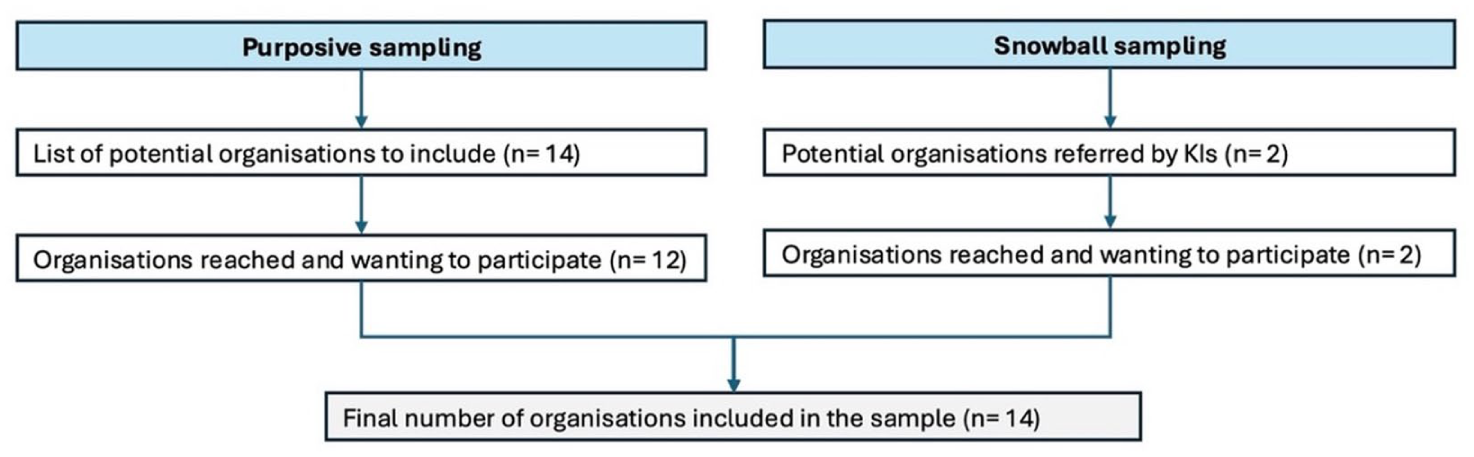

First, we conducted a rapid evidence assessment, combining interviews with key informants and targeted grey literature searches, to document MR practices across multilateral organisations comparable to Gavi, and to provide timely recommendations to Gavi decision-makers. To select organisations, we used purposive and snowball sampling, recruiting participants from evaluation offices and, where relevant, other departments involved in MR processes. Our initial list comprised multilateral organisations and the OECD DAC Secretariat, drawing mainly on established relationships. Of the organisations contacted, all but two responded, and all those who responded agreed to participate. In total, 14 organisations were represented in the final sample, two of which were recommended by organisations contacted (Figure 1). This sampling strategy was not intended to produce a comprehensive review representative of all UN agencies and comparable multilateral organisations, but rather to generate sufficiently rich insights from a feasible and diverse subset of organisations to inform the strengthening of Gavi’s own MR processes. The final sample included: (1) ten (n = 10) organisations within the UN system: Food and Agriculture Organization (FAO), International Labour Organization (ILO), International Organization for Migration (IOM), Joint UN Programme on HIV and AIDS (UNAIDS), UN High Commissioner for Refugees (UNHCR), UN Population Fund (UNFPA), UN Children’s Fund (UNICEF), World Food Programme (WFP), World Health Organization (WHO), World Intellectual Property Organization (WIPO); (2) three (n = 3) organisations outside of the UN system but closely collaborating with it: Gavi, the Vaccine Alliance (Gavi), The Global Fund to Fight AIDS, Tuberculosis and Malaria (Global Fund) and the World Bank Group; as well as (3) the OECD DAC Secretariat.

Selection of organisations to include in the sample – Rapid Evidence Assessment (February–March 2023).

We conducted 14 semi-structured interviews via Microsoft Teams between February and March 2023, with one or two Key Informants (KIs) per session (19 KIs in total), and obtained consent to record the sessions and enable automatic transcription. The discussion guide (Supplementary Material) was based on questions from the two previous UNEG reviews (UNEG, 2016b, 2020). A complementary desk review of 67 key documents (such as evaluation policies, guidelines and annual reports) from the organisations in the sample was also conducted. Most documents were reviewed prior to meeting with KIs to gain a preliminary understanding of MR processes, and KIs suggested additional relevant documents during the consultation. The desk review focused exclusively on grey literature. Interview transcripts were analysed thematically using the qualitative and mixed methods data analysis software QDA Miner©, applying a coding framework developed through an iterative process (Supplementary Figure S1). The resulting findings were then triangulated with data from the desk review. The complete list of documents consulted for the desk review is included in the rapid evidence assessment report, published on Gavi’s Evaluation Studies webpage (Gavi, 2023).

Dissemination workshop (January 2024)

Second, we presented our findings to representatives from 26 organisations during an interactive workshop we were invited to deliver at the 2024 UNEG EPE conference, which provided an opportunity to capture additional insights on MR practices. Workshop attendees included representatives from UNICEF, WHO, ILO, WFP, FAO and UNHCR (included in the initial rapid evidence assessment sample), as well as from: UN Sustainable Development Group (UNSDG); UN System-Wide Evaluation Office (SWEO); UN Educational, Scientific and Cultural Organization (UNESCO); UN Office on Drugs and Crime (UNODC); UN Department for Safety and Security (UNDSS); UN-Habitat; UN Volunteers (UNV); International Fund for Agricultural Development (IFAD); UN Industrial Development Organization (UNIDO); UN Office for the Coordination of Humanitarian Affairs (OCHA); International Trade Centre (ITC); International Federation of Red Cross and Red Crescent Societies (IFRC); UN Economic Commission for Europe (UNECE); Organization for Security and Co-operation in Europe (OSCE); Office of Internal Oversight Services (OIOS); Pan American Health Organization (PAHO); UN Institute for Training and Research (UNITAR); International Criminal Court (ICC) and Office of the UN High Commissioner for Human Rights (OHCHR).

The workshop consisted of two parts: (1) a presentation of the findings; and (2) an interactive session in which participants moved between three thematic stations (MR production, MR implementation follow-up and MR implementation reporting). At each station, relevant findings were displayed, and participants were invited to discuss and document additional practices and strategies on flip charts. Attendees were informed that additional information generated during the session would be captured and integrated with findings from the rapid evidence assessment, with the intent to produce this article.

Findings

The rapid evidence assessment (Gavi, 2023) and dissemination workshop highlighted practices related to the development of management responses (MRs) to evaluation recommendations, follow-up on the implementation of MR actions and associated reporting. The workshop also helped identify areas requiring further exploration. Of the 14 organisations included in the rapid evidence assessment, 12 provided details on their respective MR practices, while the OECD DAC and the Global Fund shared broader insights, given the OECD DAC’s normative role and the ongoing development of the Global Fund’s newly established evaluation and learning function. Accordingly, the findings presented on specific MR practices draw on the 12 organisations that provided detailed information, while broader recommendations for strengthening MR systems incorporate insights from all 14 organisations. For the workshop, detailed organisation-specific information on MR practices was not systematically collected, as the session was designed to generate higher-level, cross-cutting insights applicable across organisations.

Management response development

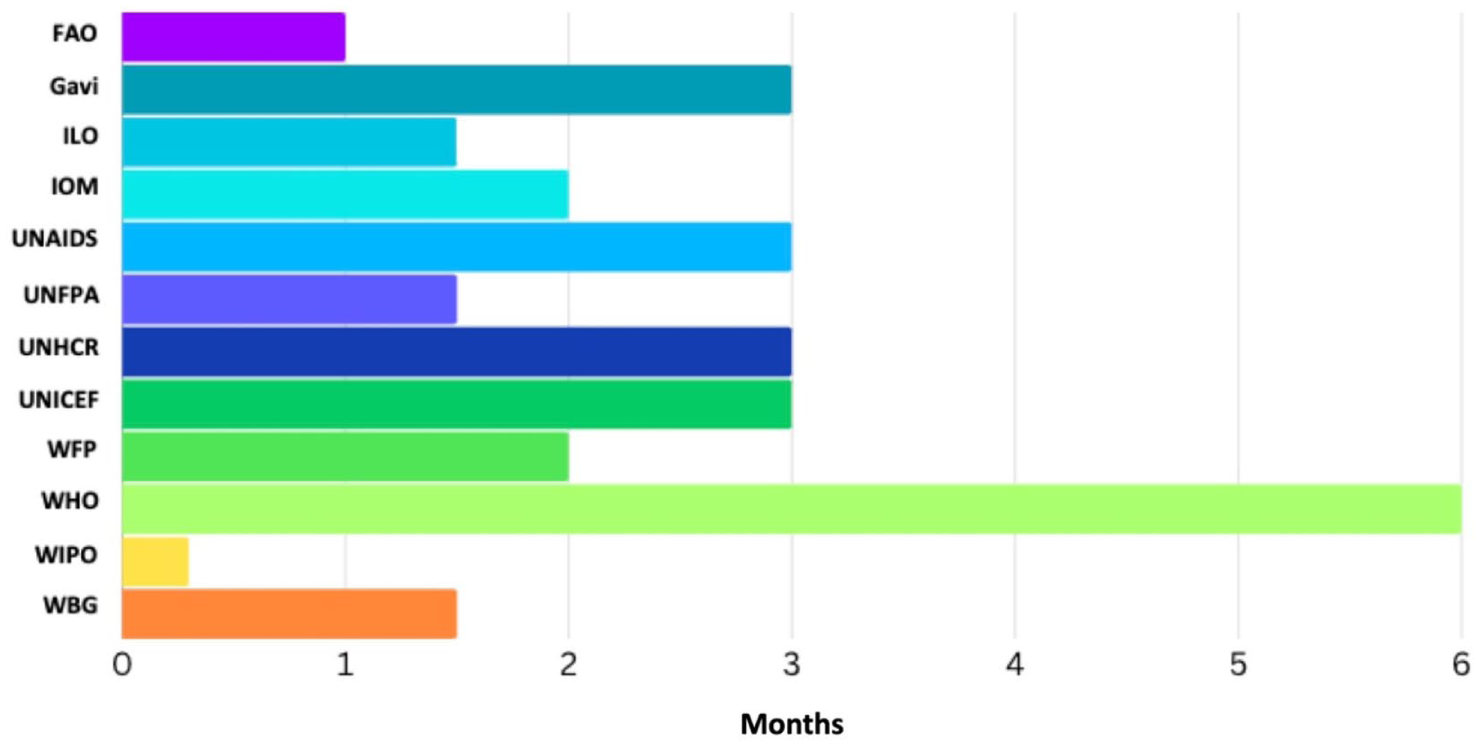

The rapid evidence assessment identified important variations across organisations in how MRs are developed following an evaluation. UNEG and OECD DAC norms and standards do not prescribe specific timeframes for completing MRs. Most organisations (n = 11) require MRs to be completed within 1–3 months after the end of an evaluation (Figure 2). Although UNEG and OECD DAC require an MR to be produced for each evaluation, only eight organisations (n = 8) apply this systematically, while four (n = 4) currently request it only for certain evaluation types, such as corporate and strategic evaluations.

Maximum time allotted to complete the MR – Rapid Evidence Assessment (February–March 2023).

Two noteworthy practices related to MR development are WIPO’s criterion for closing recommendations and the World Bank Group’s (WBG) shift towards results-based reporting. In addition to the essential components outlined in UNEG Standard 1.4 (management agreement, actions, responsibility and deadlines) (UNEG, 2016a), WIPO requires a closing criterion to be defined when the MR is produced; this criterion is subsequently used to determine when a recommendation can be considered implemented or closed (14). Since its Management Action Record (MAR) reform, the WBG has shifted from an action-focused to a results-oriented approach. Instead of an action plan, the WBG Independent Evaluation Group (IEG) now supports management in developing an Outcome Framework that defines the expected results chain, against which management is expected to provide evidence of progress as part of follow-up reporting (WBGIEG, 2020).
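To make these components concrete, the following is a minimal Python sketch of a single MR entry, assuming a simple in-memory record. The class, field and method names (`ManagementResponse`, `closing_criterion`, `can_close`, `is_overdue`) are hypothetical: they mirror the UNEG Standard 1.4 components plus a WIPO-style closing criterion, and they do not describe the data model of WIPO or any other organisation. The treatment of rejected recommendations is also an assumption.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Agreement(Enum):
    """Management's position on an evaluation recommendation."""
    ACCEPTED = "accepted"
    PARTIALLY_ACCEPTED = "partially accepted"
    REJECTED = "rejected"


@dataclass
class ManagementResponse:
    """Hypothetical MR entry: UNEG Standard 1.4 components plus a closing criterion."""
    recommendation: str          # evaluation recommendation being responded to
    agreement: Agreement         # management agreement
    actions: list[str]           # concrete actions to be taken
    responsible_unit: str        # unit responsible for follow-up
    deadline: date               # target completion date
    closing_criterion: str       # condition for considering the recommendation closed
    criterion_met: bool = False  # updated during follow-up

    def is_complete(self) -> bool:
        """Check that the components needed for sign-off are filled in."""
        if not (self.recommendation and self.responsible_unit):
            return False
        if self.agreement is Agreement.REJECTED:
            # Assumption: a rejected recommendation needs no actions or closing criterion
            return True
        return bool(self.actions) and bool(self.closing_criterion)

    def can_close(self) -> bool:
        """A recommendation can be closed only once its closing criterion is met."""
        return self.is_complete() and self.criterion_met

    def is_overdue(self, today: date) -> bool:
        """Flag recommendations still open after their deadline (e.g. for escalation)."""
        return not self.criterion_met and today > self.deadline


# Hypothetical example entry, not real data
mr = ManagementResponse(
    recommendation="Hypothetical recommendation",
    agreement=Agreement.ACCEPTED,
    actions=["Hypothetical action"],
    responsible_unit="Unit A",
    deadline=date(2024, 12, 31),
    closing_criterion="Hypothetical closing criterion",
)
print(mr.is_complete(), mr.can_close())  # True False
```

Defining the closing criterion at sign-off means that the later decision to close a recommendation is checked against a condition agreed in advance rather than against the completion of actions alone.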

Following up on the implementation of management responses

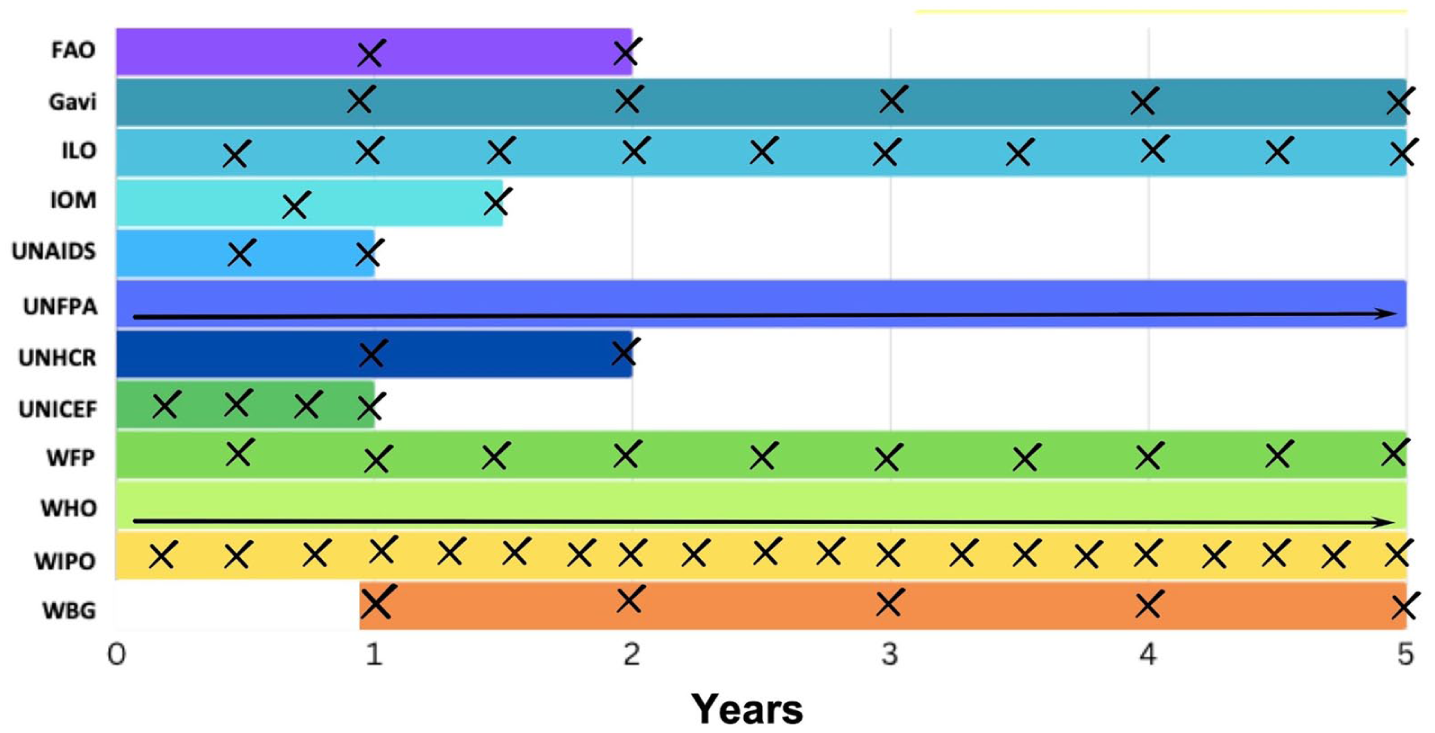

The rapid evidence assessment also identified important variations across organisations in how MR implementation is tracked, including differences in timeframes, tools, integration with internal systems, roles and responsibilities, and validation practices. As with MR completion, UNEG and OECD DAC norms and standards do not prescribe specific timeframes for following up on MR implementation. Among the organisations included in the rapid evidence assessment (n = 12), MR implementation is typically tracked quarterly, semi-annually or annually, with the overall tracking duration ranging from 1 to 5 years (Figure 3). Where tracking is led by the evaluation unit rather than the implementing unit, a key reason cited for adopting shorter follow-up periods was the view that responsibility for tracking the implementation of MR actions should lie with the relevant management units. In contrast, longer tracking periods were justified by the need to accommodate implementation delays due to contextual factors. Overall, normative flexibility lets agencies design MR follow-up routines that fit their own planning cycles, risk profiles and resource constraints. The resulting diversity (from quarterly to annual reviews and from 1- to 5-year tracking horizons) reflects conscious trade-offs between ownership and assurance, effort and rigour, and speed and completeness. Workshop participants also recommended adjusting the frequency and duration of follow-up to the importance of the MR, for instance by tracking critical MRs quarterly and others annually.

Frequency and duration of the MR follow-up – Rapid Evidence Assessment (February–March 2023).

Several organisations in the rapid evidence assessment sample use digital platforms to track MRs, often with automated email reminders, replacing processes based on Microsoft Word or Excel. Examples include UNICEF’s Global Evaluation Management Response Tracking System (UNICEF, 2018), ILO’s Automated Management Response System (AMRS) (ILO, 2020), WHO’s new Consolidated Platform and IOM’s PRIMA system (IOM, 2022). These platforms are often linked to dashboards that visualise implementation status (such as the percentage of actions completed, partially completed and not started), with breakdowns by division or department, region or theme. While these dashboards are usually internal, WHO has an external dashboard on its public Member States Portal (WHO, 2025).
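The dashboard views described above rest on a simple aggregation. A minimal Python sketch, assuming MR actions can be exported from a tracker as records carrying a status and one or more grouping fields, might look as follows. The status labels, field names and the `status_summary` function are hypothetical and do not reflect the data model of UNICEF’s, ILO’s, WHO’s or IOM’s platforms; the records are illustrative, not real data.

```python
from collections import Counter, defaultdict

# Hypothetical status categories mirroring those shown on MR dashboards
STATUSES = ("completed", "partially completed", "not started")


def status_summary(actions: list[dict], group_by: str) -> dict[str, dict[str, float]]:
    """Percentage of MR actions in each status, broken down by `group_by`.

    Each action is a dict with a "status" key and the grouping key
    (e.g. "division", "region" or "theme").
    """
    counts = defaultdict(Counter)
    for action in actions:
        status = action["status"]
        if status not in STATUSES:
            raise ValueError(f"unknown status: {status!r}")
        counts[action[group_by]][status] += 1

    summary = {}
    for group, counter in counts.items():
        total = sum(counter.values())
        # Counter returns 0 for statuses that never occur in this group
        summary[group] = {s: round(100 * counter[s] / total, 1) for s in STATUSES}
    return summary


# Hypothetical tracker records, not real data
actions = [
    {"division": "A", "region": "X", "status": "completed"},
    {"division": "A", "region": "Y", "status": "completed"},
    {"division": "A", "region": "X", "status": "not started"},
    {"division": "B", "region": "Y", "status": "partially completed"},
]
print(status_summary(actions, "division"))
print(status_summary(actions, "region"))
```

Because the grouping key is a parameter, the same records can feed several dashboard views (by division, region or theme) without maintaining separate tracking spreadsheets.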

The rapid evidence assessment also underscored the importance of integrating MR tracking into broader planning and implementation systems, as well as into platforms used to monitor other recommendations. For instance, UNHCR, WHO and WIPO consolidate evaluation recommendations with those from other sources, such as internal and external audits, investigations and donor reviews. This helps to streamline the follow-up process and to highlight cross-cutting and recurring issues.

While evaluation units are often closely involved in supporting implementing stakeholders to complete the MR and ensure alignment with recommendations, their level of engagement after MR completion varies across organisations. As per UNEG Standard 1.4, responsibility for following up on the implementation of actions included in MRs lies with management (UNEG, 2016a). In some cases, once the MR is finalised, responsibility for MR follow-up is transferred from the evaluation office to other departments, often those responsible for strategic planning and programming, which then provide implementation updates to the evaluation office upon request, for instance for inclusion in reports to governing bodies or in annual evaluation reports. In WBG and WIPO, follow-up meetings are held between evaluation units and implementing stakeholders to discuss implementation progress, while at WHO, a senior advisor in organisational learning provides ongoing coaching and guidance to management units to support the transition from evaluation to implementation. This support is not intended to diminish the ownership of the management units responsible for implementing actions in response to evaluation recommendations, but rather to strengthen that ownership by facilitating a smooth transition and ensuring that follow-up actions are effectively integrated into broader planning processes.

Finally, validation of the evidence provided by management is not yet a common practice, although several key informants and workshop participants expressed interest in integrating it into their MR practices. For instance, WBG IEG validates the evidence provided by management in annual self-assessment reports, while FAO, WFP and UNICEF review strategic planning and programming to confirm implementation of MR actions.

Management response reporting processes

Of the organisations included in the rapid evidence assessment, most (n = 11) already included or were planning to include MR implementation in their annual evaluation reports, while six (n = 6) had already established or were developing a Key Performance Indicator (KPI) to track implementation. Reporting typically covers the timeliness of MR completion and implementation status, with breakdowns by division or department, region, priority, time frame, and resource implications. A narrative is usually included to contextualise the quantitative data and to illustrate concrete examples of evaluation use.

Reporting on MR implementation to governing bodies, senior management and the public was among the strategies key informants most frequently mentioned for optimising MR implementation. As per UNEG Norms and Standards (UNEG, 2016a), a periodic report on the status of MR implementation should be presented to governing bodies and/or the head of the organisation. This practice was already in place or planned in all organisations in the rapid evidence assessment (n = 12). Incentives for MR completion included requiring implementing stakeholders to present their MRs and progress updates to governing bodies and/or senior management, thereby enhancing accountability. In addition, WBG and WIPO have procedures in place to escalate cases where information on implementation is lacking or where implementation is significantly delayed. All organisations in the rapid evidence assessment (n = 12) publish their evaluation reports and MRs on their public website, in line with UNEG Norms and Standards, which call for key evaluation products to be publicly accessible (UNEG, 2016a). However, only five organisations (n = 5) also publish updates on the implementation of individual MRs. UNEG Norms and Standards do not provide specific guidance on public reporting of MR implementation.

Areas requiring further exploration

Workshop attendees identified several topics of interest for future studies on MR practices. Many expressed interest in the World Bank’s shift towards results-focused follow-up, highlighting the importance of assessing whether implemented actions effectively address the underlying issues that evaluation recommendations aim to resolve, rather than simply verifying that planned actions have been completed. Participants also wanted to know whether other organisations had adopted similar outcome-oriented approaches and raised the need for more concrete examples of how such approaches can be operationalised. Another area of interest was how to make MR processes more fit-for-purpose, potentially through approaches differentiated by evaluation type (centralised, decentralised, joint evaluations), scope (strategic, programmatic) and purpose (learning- or accountability-driven). Participants also emphasised the need to continue documenting and sharing effective incentives for MR completion and follow-up, including strategies to increase stakeholder ownership and accountability, particularly where implementation is off-track or past due. Finally, questions were raised about what should be systematically prioritised in MR implementation reporting, such as in annual evaluation reports or updates to governing bodies and senior management.

Discussion

This article highlights trends and good practices among multilateral organisations to support systematic follow-up on evaluation recommendations. These findings complement UNEG’s documentation of practices prior to 2020 (UNEG, 2016b, 2020). We emphasise three key findings.

First, there are important variations in MR practices across organisations, which can be explained by structural and operational differences, such as the position of the evaluation office within the organisational structure and its reporting lines, and whether the evaluation function is decentralised at regional or country levels. However, many strategies are transferable, and the exchange of good practices across the multilateral system can foster continuous improvement and greater coherence. Most key informants and several workshop participants requested a copy of the rapid evidence assessment report to inform internal discussions aimed at improving their MR practices. At least five organisations, namely, the Global Fund, FAO, UNHCR, IOM and World Bank, subsequently reported using it for this purpose.

Second, strategies identified by key informants and workshop participants focused primarily on strengthening accountability to governing bodies and senior management, as well as on streamlining processes. Accountability-related strategies included providing updates on MR implementation during governance or leadership meetings, incorporating MR implementation in KPIs and using dashboards to enhance visibility. Streamlining strategies involved consolidating evaluation recommendations with those from other sources, integrating evaluation actions into existing corporate planning and programming systems and assigning MR follow-up to the department responsible for strategic planning and performance. These practices were reported to enhance the use of evaluation evidence in decision-making and to foster a stronger evaluation culture, ultimately helping organisations become more results-oriented and strengthen their practice of results-based management (Marschall, 2018). Consolidating evaluation recommendations with those emerging from other sources – an approach already implemented by UNHCR, WHO and WIPO, and currently under consideration at Gavi – was identified as a promising practice by several stakeholders consulted. Such consolidation not only facilitates the identification of trends, patterns, inter-relationships and recurring cross-cutting issues but also streamlines follow-up for implementing stakeholders by establishing a unified process for all recommendations.

Third, key informants and workshop participants identified several areas where further evidence is needed. Topics of interest for future studies on MR practices and for evaluation practice exchange platforms, such as the UNEG EPE conference, included: concrete examples of how to assess whether implemented actions effectively address the underlying issues; fit-for-purpose MR processes adapted to evaluation type, scope and purpose; incentives to ensure MR completion and follow-up, including strategies to foster stakeholder ownership; and guidance on which elements to prioritise in MR reporting. Addressing these evidence gaps would require gathering the perspectives of primary users of evaluation evidence, such as implementing stakeholders, governing bodies and senior management, to better understand what they consider most important, what could improve MR practices and how to align these practices more closely with their needs and implementation contexts, in keeping with a utilisation-focused approach (Patton, 2013).

Beyond these three specific findings, this article highlights broader implications for organisational learning. Many of the MR practices identified, such as integrating MR actions into planning cycles, improving visibility through dashboards or reporting progress to senior leadership, focus primarily on whether actions have been implemented. As underscored by Bossuyt et al. (2014) in the EuropeAid study on the use of evaluation evidence within OECD DAC member agencies, measures to ensure that the process does not become a mere “tick-box” exercise remain critically important. The recent shift of the WBG Independent Evaluation Group (IEG) from action-focused to results-oriented follow-up, including its use of an outcome framework through which management reports whether follow-up shows evidence of change in the direction of expected outcomes, is a promising practice worth exploring further. Moving beyond a primarily compliance-oriented approach and ensuring the effective use of evaluation evidence requires enabling conditions, such as systematic fora to inform decision-making and mechanisms that encourage feedback loops and sense-making.

Although the samples differ, our findings broadly suggest that, compared with the 2016 and 2020 UNEG reviews, MR practices have progressed while persistent shortcomings remain relative to UNEG/OECD DAC standards. Advances since those reviews include the introduction of standard MR templates with closing criteria, digital trackers, integration of MR actions into corporate planning, risk-based monitoring and, in some cases, public dashboards or KPIs. Yet gaps remain: MRs are not always systematically produced for all evaluations, ownership often becomes diffuse after sign-off, validation of self-reported progress remains limited, “review fatigue” persists and public reporting continues to be infrequent. Addressing these barriers is essential for strengthening the credibility, usefulness and overall effectiveness of MR systems and, ultimately, for maximising the return on investments in evaluations by ensuring that decision-makers use their recommendations to improve future policies, strategies and programmes.

Limitations

There are five key limitations to the findings presented in this article. First, as previously noted, structural and operational differences between organisations mean that some practices may not be relevant or transferable across contexts. Second, most key informants and workshop participants were from evaluation units, resulting in limited representation from other stakeholders, including primary users of evaluation (implementing stakeholders). Third, data collection and analysis for the rapid evidence assessment were conducted by a single person, introducing potential interpretation bias, including confirmation bias. To mitigate this, the draft report was shared with key informants for validation, and participants from 11 of the 12 organisations for which practices were documented reviewed it and provided valuable feedback. Fourth, the short duration of the UNEG EPE workshop (1.5 hours) limited the depth of insights and the opportunity to validate inputs. Finally, findings reflect practices at the time of data collection (2023–2024) and may not capture more recent developments. Further research would help update the evidence base on MR practices.

Conclusion

This article highlights key strategies to improve MR practices across multilateral organisations within the UN system or in close collaboration with it. Most strategies focus on strengthening accountability to governing bodies and senior management, as well as on streamlining processes. The article also emphasises the value of sharing practices across the multilateral system and identifies key evidence gaps related to MR processes. Future studies on MR practices should include organisations with strong results-based management systems, which could provide valuable insights regarding the integration of evaluation recommendations and associated actions into existing corporate planning and programming. Improving MR practices is particularly important in the current context of reduced official development assistance. Enhancing the use of evaluation evidence facilitates organisational learning and enables multilateral organisations to course-correct, thereby enhancing effectiveness, ensuring value for money and accelerating progress towards strategic goals.

Supplemental Material

sj-pdf-1-evi-10.1177_13563890261431252 – Supplemental material for From evaluation recommendations to action: Management response practices across multilateral organisations

Supplemental material, sj-pdf-1-evi-10.1177_13563890261431252 for From evaluation recommendations to action: Management response practices across multilateral organisations by Audrey Beaulieu, Anderson Uchenna Amaechi, Esther Saville and Mira Johri in Evaluation

Supplemental Material

sj-tif-2-evi-10.1177_13563890261431252 – Supplemental material for From evaluation recommendations to action: Management response practices across multilateral organisations

Supplemental material, sj-tif-2-evi-10.1177_13563890261431252 for From evaluation recommendations to action: Management response practices across multilateral organisations by Audrey Beaulieu, Anderson Uchenna Amaechi, Esther Saville and Mira Johri in Evaluation

Footnotes

Ethical considerations

This synthesis relied on organisational documents and interviews with institutional representatives discussing professional practices. As such, it did not involve human subjects or sensitive personal data requiring formal ethics review.

Consent to participate

All interview participants provided informed consent prior to their participation. Participants were informed about the purpose of the study and the confidentiality measures in place. Individual verbatim transcripts were shared with participants after each interview to allow validation and additional comments. Participation was voluntary, and participants could withdraw at any time.

Consent for publication

All participants provided informed consent for the use of their contributions in research dissemination and publication.

Funding

The authors received no specific financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: ES and AA are employees of Gavi, the Vaccine Alliance; AB conducted part of the work while she interned with Gavi’s Evaluation and Learning Unit (EvLU) under the supervision of ES and MJ, and part of the work while she served as a consultant within the same team; Gavi was involved in the interpretation of findings and the decision to publish. MJ reports no other competing interests. Her contribution was supported by the Université de Montréal.

Data availability

The report presenting findings from the rapid evidence assessment (February–March 2023) – Management Response Follow-up to Evaluations: Overview of Practices Across UN Agencies and Other Multilateral Organisations to Follow Up on Evaluation Recommendations – is available on Gavi’s public website (Gavi, 2023). The slide deck presented at the January 2024 United Nations Evaluation Group (UNEG) Evaluation Week, supporting materials and discussion notes are available upon request.

