Abstract

The study of unintended effects of policies is a key debate among evaluation scholars. Through complexity theory, we argue that unintended effects of (international) public actions are inevitable and question the reliability of evaluations in providing a correct and complete picture of public policy. We use a machine-learning-assisted text-mining case study approach, examining 254 programme evaluations of German international development cooperation as a ‘least likely case’. While German evaluations focus more on unintended effects than Dutch, Norwegian and American evaluations, their treatment is not always correct or complete. There is an overidentification of unintended effects and a bias towards positive ones, with certain types of unintended effects overlooked. We explore explanations for the observed weaknesses, including an overreliance on linear thinking and insufficient guidance for the evaluators on identifying unintended effects. We conclude with concrete suggestions to improve implementation of the Organization for Economic Co-operation and Development guidelines that are essential to make public administration more effective and trusted.

Introduction

Unintended consequences of public policies are as old as public policies themselves, as examples from the Greek times show (Perri, 2014). When the government of Athens built a wall around their city and harbour to protect them against the Spartans (which worked), they did not realise that by being more confined, they also created a breeding ground for a new pathogen, contributing to a new plague in the city (Christensen, 2022).

The lack of focus on unintended consequences of public action in evaluations has been well documented (Bamberger et al., 2016; Morell, 2010) and problematised (Davidson et al., 2022; Oliver et al., 2020). What happens when a government agency aims to systematically ensure that its evaluations deal with unintended consequences has not yet been researched. This article hence contributes to the academic evaluation literature by providing an in-depth analysis of sustained efforts by a government agency to take the unintended effects of its interventions more seriously.

Studying how unintended consequences are represented in evaluations is of particular importance when trust in government and its policies is declining across Organization for Economic Co-operation and Development (OECD) nations (Brezzi et al., 2021). One of the key factors contributing to a reduction in trust is incompetent and ineffective government policies. Distrust of national leaders and public institutions can emerge in the wake of scandals, especially if they are unchecked and not tackled head-on (ibid. page 39). If potential unintended effects of government policies had been monitored and evaluated right from the start, there would have been fewer failed policies and a higher trust in government.

The omnipresence of unintended effects in international development has been well-documented by both academic studies and investigative journalists. Sexual exploitation and abuse by aid workers in various countries have created suffering for many victims. The unintentional spread of cholera by Nepalese blue helmets has caused more than 10,000 deaths in Haiti. In-kind food aid distribution (with surplus agricultural production from the United States, Canada and Europe) has flooded markets in certain African countries, hurting local farmers. All these unintended side effects have had negative consequences for the people the international development was supposed to serve (US Government Accountability Office, 2011). Detecting them earlier is pivotal not only for the effectiveness of aid, but also to maintain public trust in it.

In this study, we have decided to focus on the international development programmes of OECD countries. International development programmes are of particular importance as the budget for them has been steadily rising over the decades. Foreign aid from OECD member countries rose to an all-time high of USD 211 billion in 2022 (OECD, 2023). Also, the number of governments that are providing international aid has been rising over the last decades, with ‘new’ donors such as Turkey, Poland and the United Arab Emirates increasing their spending overseas. At the same time, public support for international aid and its effectiveness is declining over time (Norris, 2017). While many factors explain this decline in public opinion support, such as economic precarity at home (Kobayashi et al., 2021), perceived ineffectiveness also plays a role (Hurst et al., 2017).

For our case study, we have decided to focus on Germany, and in particular the main implementation agency for technical cooperation (TC) of its international development cooperation: the Deutsche Gesellschaft für Internationale Zusammenarbeit (GIZ). Germany was selected as a least likely case study: to our knowledge, it is the only donor whose Evaluation Unit (which was formed in 2017) has made it mandatory for the evaluators it contracts to focus on unintended consequences of the programmes. Evaluations are only approved if they address unintended effects of the programmes. While the OECD has required since 1991 that all evaluations of OECD donors focus on unintended effects (OECD, 1991) and re-emphasised this in 2021 (OECD, 2021), its members do not live up to this requirement. In the official evaluation documents of the Dutch and American aid programmes, the percentage of those that focused on unintended effects ranged from 15 per cent for the Dutch (Koch et al., 2021) to 28 per cent for the Americans (de Alteriis, 2020).

Our German case study looks specifically at the completeness and correctness of its treatment of unintended effects in its evaluations. Since the GIZ evaluation unit is – to our knowledge – the only development evaluation service that has made reporting on unintended effects obligatory since its formation in 2017, researching this case can provide unique insight into the progress and pitfalls of attempting to become more serious about unintended effects measurement. By doing so this article can inform other international aid donors – and even other government departments – how to strengthen their operations and evaluations when it comes to unintended effects.

Theoretical underpinnings and analytical starting points

We employ a simple definition of unintended effects: the consequence of an action which differs from the consequence that was aimed for when starting it (Baert, 1991). This definition means that many problems in public policy execution are excluded. If a programme simply fails to meet its objectives, this is not necessarily an unintended effect. Unexpected extraneous events are also excluded from this definition.

Complexity thinking to explain unintended effects

Complexity thinking can be a particularly useful approach to understanding the unintended effects of international cooperation efforts by providing an ‘understanding of the mechanisms through which unpredictable, unknowable, and emergent change happens’ (Ramalingam et al., 2008: ix). Outputs, outcomes and effects are linked in many ways, leading to multiple layers of unintended effects. Since international development programmes are affected by a wide range of sociocultural, economic, political, legal, administrative and often ecological factors – all of which interact in complex and unpredictable ways – international development is a messy business. This makes the evaluation of international aid programmes a challenging endeavour. A useful approach for making sense of this messiness in international development and its evaluation is complexity thinking, according to World Bank methodological specialists (Bamberger et al., 2015).

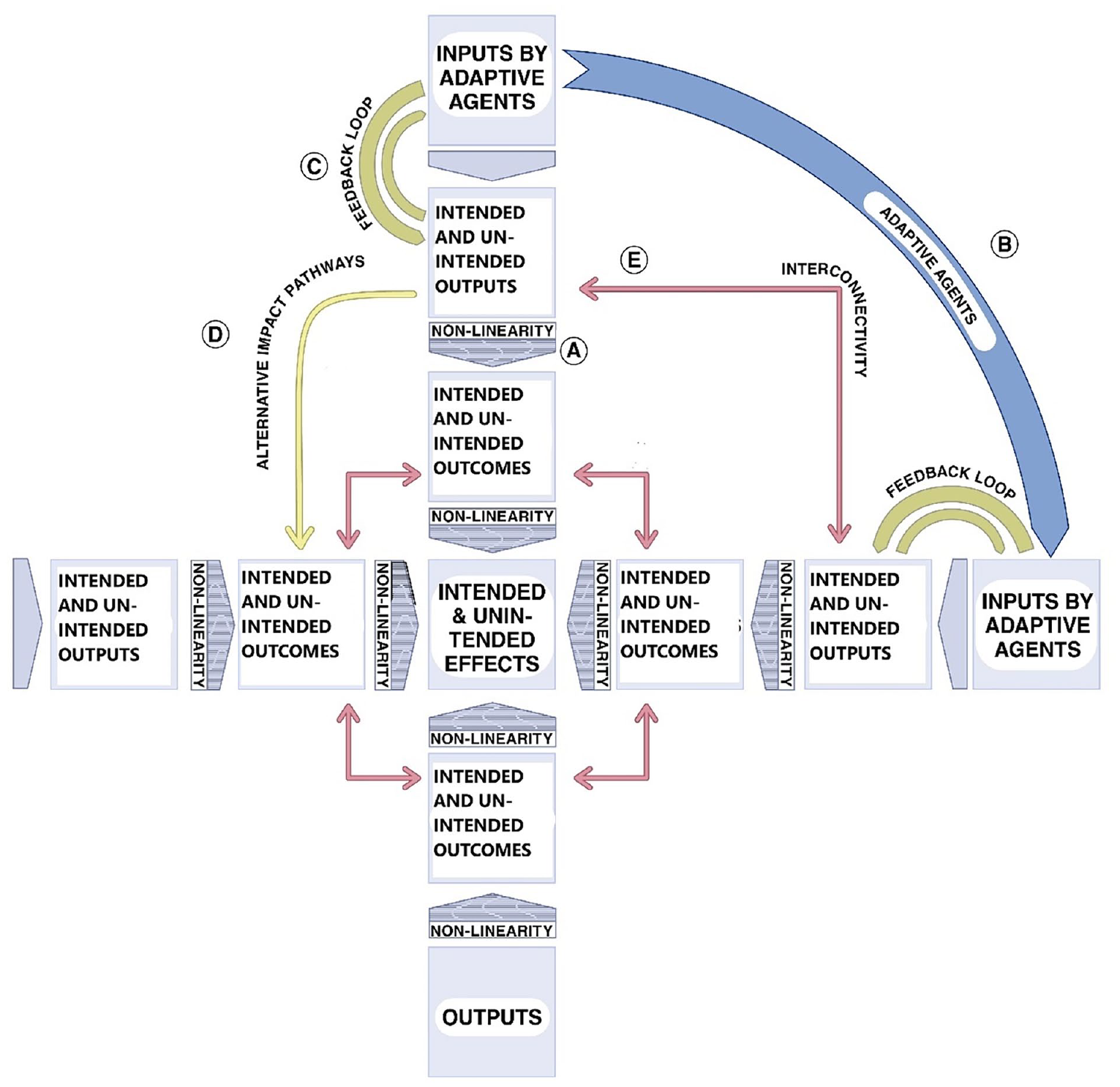

Complexity thinkers in international development generally make use of ten key concepts, five of which are central to this research because they help to explain the emergence of unintended effects. These five concepts are (1) feedback loops, (2) interconnections, (3) non-linearities and (4) alternative impact pathways, in a system composed of (5) adaptive agents that lead to both intended and unintended effects (Koch, 2024: 17).

Figure 1. Complexity-thinking-inspired effects model.

These five concepts, graphically depicted in Figure 1, explain that inputs lead not only to intended outputs, outcomes and effects, but just as much to unintended outputs, outcomes and effects. Figure 1 demonstrates how these five key terms from complexity thinking contribute to the occurrence of unintended effects.

To deal with complexity and the messiness of reality, practitioners ought to be reflexive. This means that practitioners must realise that ‘their interventions produce unintended changes, which give the situation new meanings. The situation talks back, the practitioner listens, and as he appreciates what he hears, he reframes the situation once again’ (Schön, 2017: 131–132).

Methodological constraints (and innovations) to capture unintended effects in evaluations

Many traditional evaluation methods are based on linear thinking (Figure 2). This creates a natural tendency to ‘evaluate against design’, which boils down to checking whether intended effects are achieved (Jabeen, 2016). Once evaluators have established whether intended effects have been achieved, the search for unintended effects is quite superficial and comes as an afterthought (Jabeen, 2018; Koch and Schulpen, 2018). Methods which rely on statistical hypothesis testing, such as randomised controlled trials, often incorporate only a limited number of predefined outcomes at the individual level and hence are likely to miss many unintended effects, while retrospective data-mining approaches may be too diffuse to offer real insight (Oliver et al., 2020: 68). While linear thinking in evaluations can capture

Figure 2. Linear model.

A review of unintended effects as reported in evaluations of American aid programmes found that evaluations that used a wider variety of data-gathering methods found more unintended effects. The odds of reporting unintended side effects increased significantly and sizably, by a factor of more than four, if all or almost all of the seven data collection methods were employed (de Alteriis, 2020: 61). Adding a ‘realist’ touch to evaluations, to determine on the basis of theory how different participants and stakeholders respond to programmes, might also help to find unintended effects (Oliver et al., 2020: 70).

Assessing the correctness of unintended effects measurement

Assessing the correctness of the depiction of unintended effects is a hazardous undertaking: it is usually not feasible to redo the fieldwork that is involved in the evaluations. However, it is possible to assess correctness by reverting to standard academic quality criteria of validity. For this research, we focus on construct validity and internal validity.

Construct validity defines how well a test or experiment measures up to its claims (Reichardt, 2005). It refers to whether the operational definition of a variable reflects the true theoretical meaning of a concept. We assess construct validity by testing whether those elements that are reported as unintended effects can indeed be labelled as unintended effects according to the official definition, or whether they are something different (e.g. an unexpected external event).

Internal validity examines whether the study design, conduct and analysis answer the research questions without bias (Patino and Ferreira, 2018). In this research, we assess whether or not there is a positivity or negativity bias in the unintended effects that are encountered. Unintended effects can be both positive and negative. Taking complexity thinking as our starting point, there is no a priori reason to assume that there are more positive than negative unintended effects, or vice versa. A skew towards more positive unintended effects could indicate that the policy makers and planners have been diligent in pre-identifying potential unintended effects and have mitigated them successfully. The likelihood of this explanation for a positive skew is low because in that case it could be expected that the evaluations would mention this, for instance in the section on risk management. Hence, if there is a significantly skewed distribution, this study suggests that there is a bias, hence that the internal validity can be questioned.

Assessing the completeness of unintended effects measurement

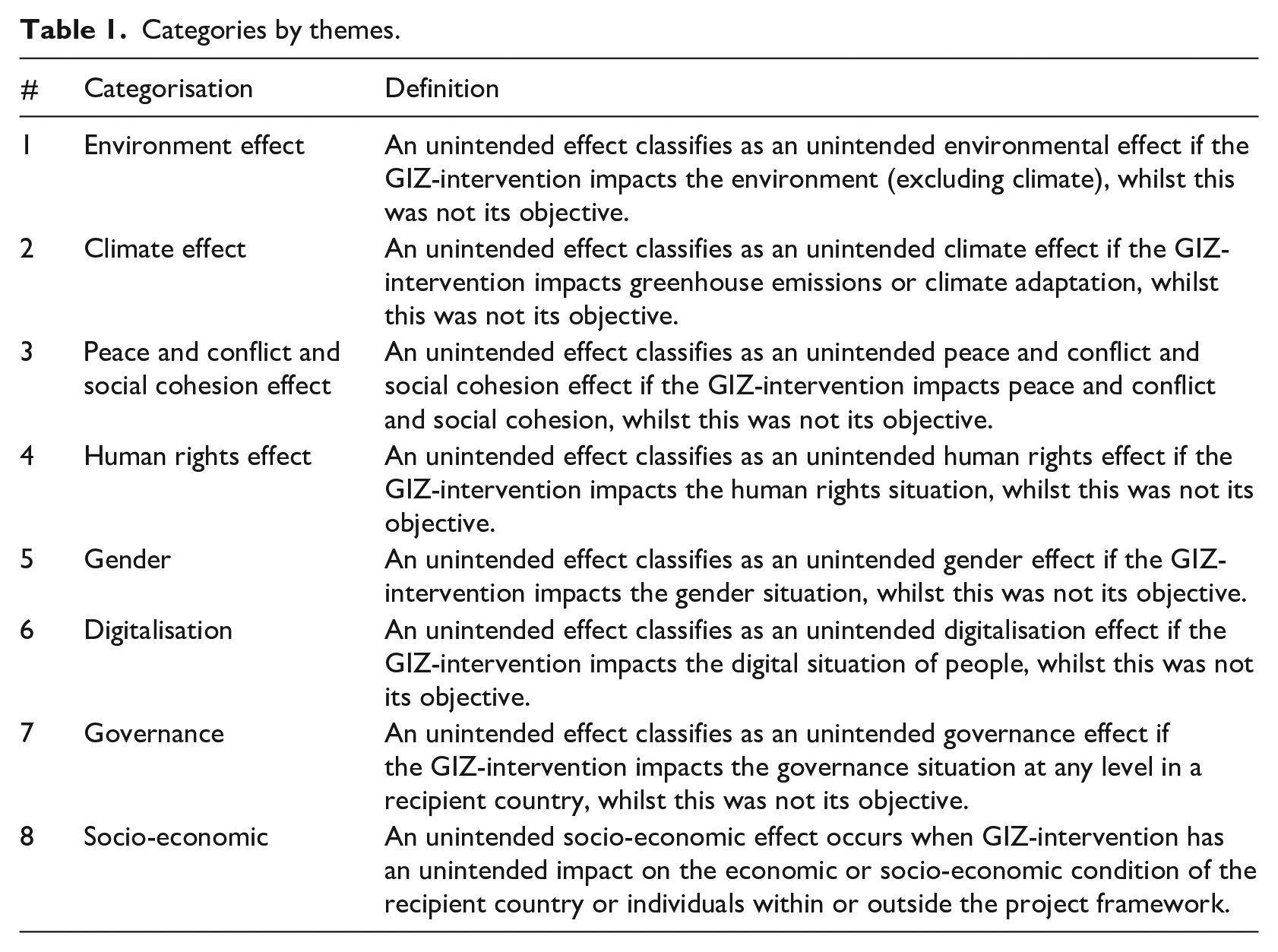

There has been substantial criticism of how incomplete and superficial the treatment of unintended effects in international cooperation evaluations has been (e.g. Wiig and Holm, 2014). Davidson et al. (2022: 1) therefore conclude that programme guidelines ‘would benefit from the development and deployment of more rigorous evaluation methods and the codifying of unintended consequences terminology’. For this study, we have developed, in close collaboration with the GIZ Evaluation Unit and in an iterative fashion, two systematic axes along which unintended effects can be categorised: a thematic one and a process one. By separating these two ways of categorising the unintended effects of foreign aid, this study refines what has until now been the most comprehensive analytical framework for unintended effects, which lumped both together (Koch, 2024).

The basis of this categorisation is multiple literature searches, as described in Koch et al. (2021). Something was considered as a category of unintended effect by applying a 3*3*3*3 rule, meaning that a type of unintended effect was included in our typology if it matched the following criteria: appeared in at least three different articles; occurred in at least three different domains of international cooperation; covered at least three different geographic areas; and was written by at least three different (groups of) authors.
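The 3*3*3*3 rule described above can be read as a simple inclusion test. The following is a minimal sketch of that reading, not the authors' own procedure: the `Source` record, its field names and the example entries are hypothetical and serve only to illustrate the four thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    """One publication reporting a candidate type of unintended effect (hypothetical record)."""
    article_id: str
    domain: str        # domain of international cooperation
    region: str        # geographic area covered
    author_group: str  # author or group of authors


def meets_inclusion_rule(sources, minimum=3):
    """True if the sources span at least `minimum` distinct articles,
    domains, regions and author groups (the 3*3*3*3 rule)."""
    fields = ("article_id", "domain", "region", "author_group")
    return all(
        len({getattr(source, field) for source in sources}) >= minimum
        for field in fields
    )


# Hypothetical evidence for two candidate categories.
broad_evidence = [
    Source("a1", "health", "East Africa", "group A"),
    Source("a2", "education", "South Asia", "group B"),
    Source("a3", "agriculture", "Latin America", "group C"),
]
narrow_evidence = [
    Source("a1", "health", "East Africa", "group A"),
    Source("a2", "health", "South Asia", "group B"),
    Source("a3", "health", "Latin America", "group C"),
]

print(meets_inclusion_rule(broad_evidence))   # True
print(meets_inclusion_rule(narrow_evidence))  # False: only one domain
```

Under this reading, a candidate category fails as soon as any one of the four dimensions has fewer than three distinct values, even if the other three are well covered.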

The thematic axis can help to determine in which domain the unintended effects materialise. The unintended effects can, for instance, have an impact on climate or conflicts. Table 1 provides the main thematic categories, whereas the full typology can be found in Supplemental Material 1.

Table 1. Categories by themes.

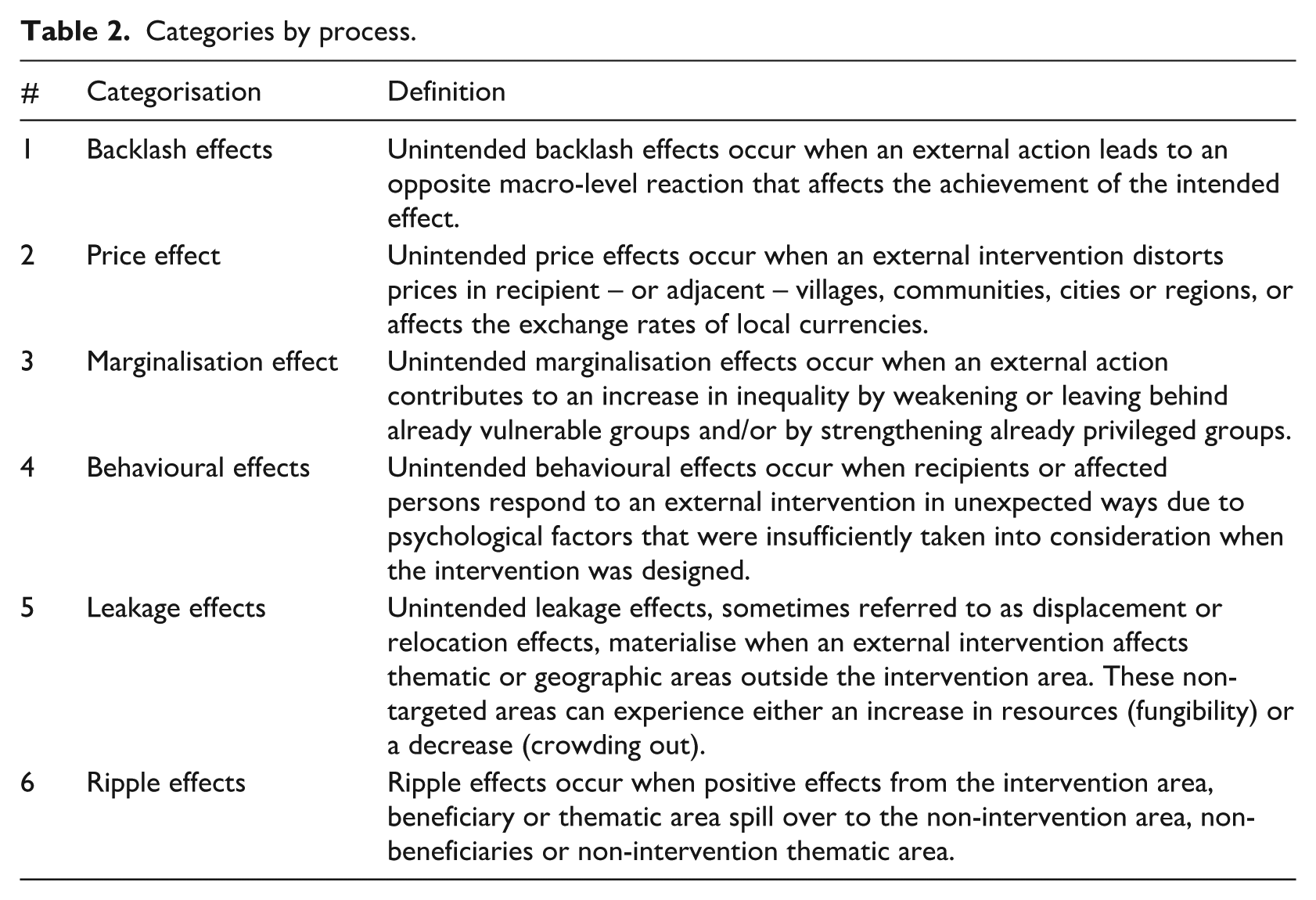

The process-categorisation provides insight into the underlying mechanisms that explain the emergence of the unintended effect. Table 2 provides the generic categorisation: more detailed subcategorisation can be found in the Supplemental Material and the substantiation can be found again in Koch et al. (2021). Neither the thematic, nor the process, categorisation claims to be exhaustive, but they are the most complete to date.

Table 2. Categories by process.

We assume that the GIZ evaluations provide a complete picture of unintended effects if none of the categories mentioned in Tables 1 and 2 are overlooked. We do not assume an equal spread across them, but since according to the academic literature these unintended effects are part and parcel of international development cooperation programmes, it would be an omission if they were encountered only to a limited degree in the corpus of GIZ evaluations.

Methodology

We used text mining as the main methodology for data collection and a combination of basic statistics and qualitative content analysis for analysis of the collected data. In this section, we explain the various steps that were taken to ensure high quality data collection and analysis.

Text-mining approach

To determine the presence of unintended effects in the GIZ evaluations, we opted for a text-mining approach using Python software. The first step for text mining was to determine the keywords or ‘search terms’ to look for in the GIZ evaluations. For example, unintended effects could be mentioned in the text in many forms including unintended consequences, accidental results, indirect results etc. It was essential for us to come up with a comprehensive list of all possible combinations of words that could be used by evaluators to refer to unintended effects. For this, we developed a thesaurus of all relevant words for ‘unintended effects’ in English using basic artificial intelligence tools like Algolia AI and GPT-3. Similar to the approach of Koch et al. (2021), we used a list of nouns (a total of 18) and adjectives (41 in total) and used various combinations of them for our list of search terms. The complete list can be found in Annex 2. To make our list of keywords as comprehensive as possible, we also included 10 other search terms, in consultation with the GIZ Evaluation Unit, that flowed logically from the thematic and process axis. Altogether, 748 search terms were used for the text-mining exercise.
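The reported totals are consistent with a full cross-product of the two word lists plus the additional terms, since 41 × 18 + 10 = 748, although the article does not state that every combination was used. The sketch below shows how such a list could be generated under that assumption. The three adjectives and three nouns are taken from the examples in the text; the function name and the extra term are hypothetical placeholders, not entries from the actual thesaurus.

```python
from itertools import product


def build_search_terms(adjectives, nouns, extra_terms=()):
    """Combine every adjective with every noun, append extra terms,
    and drop case-insensitive duplicates while keeping the order."""
    combinations = [f"{adjective} {noun}" for adjective, noun in product(adjectives, nouns)]
    unique = dict.fromkeys(term.lower() for term in [*combinations, *extra_terms])
    return list(unique)


# Illustrative subset only; the study used 41 adjectives and 18 nouns.
adjectives = ["unintended", "accidental", "indirect"]
nouns = ["effects", "consequences", "results"]
extra_terms = ["rebound effect"]  # hypothetical stand-in for the 10 additional terms

terms = build_search_terms(adjectives, nouns, extra_terms)
print(len(terms))   # 3 * 3 + 1 = 10
print(terms[:3])

# Size of the full list if every combination is used, as assumed here.
print(41 * 18 + 10)  # 748
```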

After the search terms were finalised, we wrote a Python script that loaded each GIZ evaluation report and searched it for the complete list of keywords, returning the number of times each keyword appeared in the document. Once all the evaluations with positive results (i.e. reports in which at least one keyword was recognised) had been identified, a second Python script extracted three things: (1) the sentence in which the keyword appeared, (2) the sentence before it and (3) the sentence after it. This was done to get more context about how the keyword was used in the text. We then used a third Python script to flag false-positive results, that is, results in which a keyword appeared in a different context (e.g. text might have been flagged as containing ‘unintended effects’ when it was talking about ‘unintended pregnancies’).
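The three scripts described above can be sketched roughly as follows. This is an illustrative reconstruction rather than the code used in the study: the function names, the case-insensitive substring matching, the naive regular-expression sentence splitter and the exclusion list are all assumptions. The bare adjective `unintended` is included as a search term here purely so that the ‘unintended pregnancies’ example from the text produces a hit.

```python
import re
from collections import Counter

# Naive splitter: breaks after ., ! or ? followed by whitespace (assumption;
# abbreviations such as "e.g." would be split incorrectly).
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


def count_keywords(text, keywords):
    """Step 1: count case-insensitive occurrences of each keyword in a report."""
    lowered = text.lower()
    counts = {keyword: lowered.count(keyword.lower()) for keyword in keywords}
    return Counter({keyword: n for keyword, n in counts.items() if n > 0})


def extract_context(text, keywords):
    """Step 2: return each matching sentence with the sentence before and after it."""
    sentences = SENTENCE_SPLIT.split(text.strip())
    hits = []
    for index, sentence in enumerate(sentences):
        matched = [k for k in keywords if k.lower() in sentence.lower()]
        if matched:
            hits.append({
                "keywords": matched,
                "before": sentences[index - 1] if index > 0 else "",
                "sentence": sentence,
                "after": sentences[index + 1] if index + 1 < len(sentences) else "",
            })
    return hits


def is_false_positive(hit, exclusion_phrases):
    """Step 3: flag hits whose sentence contains a known out-of-context phrase."""
    sentence = hit["sentence"].lower()
    return any(phrase.lower() in sentence for phrase in exclusion_phrases)


# Invented sample text, for illustration only.
report = (
    "The training reached 400 farmers. "
    "It also had unintended consequences for local seed prices. "
    "A separate health component aimed to reduce unintended pregnancies. "
    "Uptake was high."
)
keywords = ["unintended consequences", "unintended"]
exclusions = ["unintended pregnancies"]

print(count_keywords(report, keywords))
hits = extract_context(report, keywords)
for hit in hits:
    print(is_false_positive(hit, exclusions), "|", hit["sentence"])
```

Keeping the three steps as separate functions mirrors the three scripts in the description and makes it easy to send only the unflagged hits on to manual checking.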

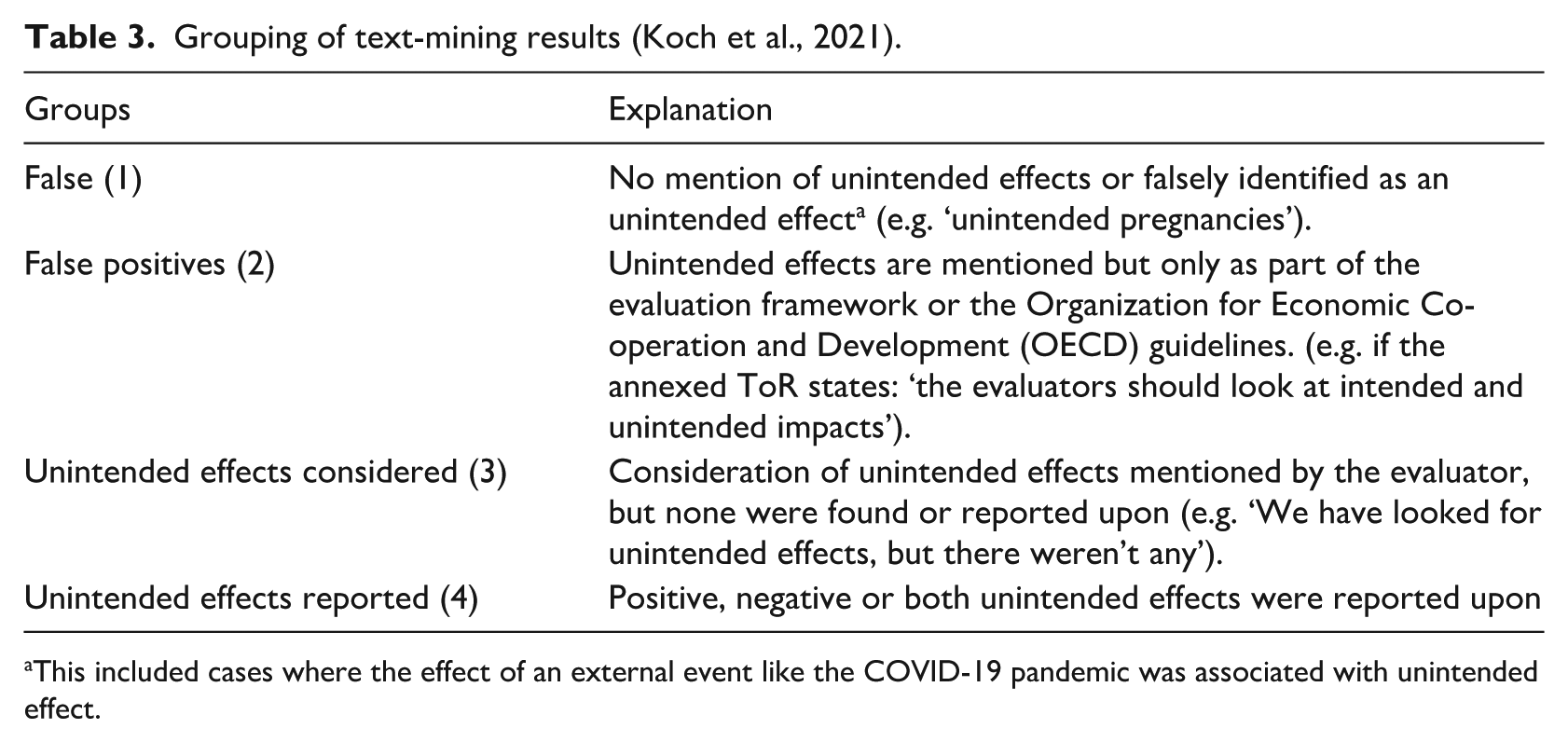

While the false-positive check in Python helped us refine our results, we also opted for a manual check of randomly selected evaluation reports to ensure that there was no omission bias or missing keyword that needed to be included in the list of search terms. After this first round of manual checking, we did not find any new search terms that needed to be included in our list. So we ran the code on all the evaluations provided by GIZ and opted for a second round of manual checks. In this second round, we classified the results, based on the passages extracted via text mining, into four groups that had been defined by Koch et al. (2021) in their work. Table 3 explains the groups.

Table 3. Grouping of text-mining results (Koch et al., 2021).

This included cases where the effect of an external event, such as the COVID-19 pandemic, was presented as an unintended effect.

The results that fell under group 4, ‘unintended effects reported’, were carried forward to be further classified into the categorisations mentioned in Tables 1 and 2.

Background on case study

Germany is the second-largest provider of development cooperation funds among Development Assistance Committee (DAC) members, allocating approximately 37.3 billion USD in 2023 (0.83% of its GNI) (OECD, 2024). Around 46 per cent of Germany’s development cooperation budget in 2024 was disbursed bilaterally (BMZ). GIZ, the German government’s implementing body for technical cooperation, operates in over 120 countries, managing more than 1400 projects worth 23.5 billion USD (21 billion euros) (GIZ, 2024). Funded primarily by BMZ and the Ministry of Finance, GIZ also collaborates with multilateral institutions like the EU and UN on development interventions.

GIZ has extensive processes and standards for conducting a variety of standard analyses for its development projects; these include a gender analysis, an integrated peace and conflict analysis, an environmental and climate analysis and context- and conflict-sensitive monitoring (GIZ, 2023). Through these analyses, the organisation aims to monitor the intended impact of its interventions and to prevent or mitigate any unintended effects. At the inception and project planning phase (phase I) of the project, these analyses are done by various departments within GIZ. Following project implementation, the documentation and guidelines for each of these analyses are shared with the evaluators to provide them with all relevant context and background information. In this way, GIZ tries to internalise both risks and unintended effects and also tries to provide some guidance to evaluators in finding possible unintended effects. To introduce non-linear thinking in its evaluation planning and inception phase, GIZ also has an obligatory procedure to update the results model of each project based on the realities on the ground at the inception phase of the evaluation (interview GIZ Evaluation Unit, 22.08.2024).

During and after the evaluation process, the GIZ evaluation unit serves as a quality assurance body, thoroughly reviewing the evaluation report to ensure it meets established standards, including the assessment of unintended effects, before approving it for publication.

For our study, we considered 254 evaluations of GIZ development projects that were evaluated between 2018 and 2023. All the evaluation reports that we considered are publicly available via, among other channels, the GIZ website. Each of these development projects had an estimated budget of 2.2 million USD (2 million euros) or above (the project with the highest budget was around 95.2 million USD/85 million euros). Since the projects were conducted in different countries around the world and with varied numbers of evaluators, not all the evaluation reports were in English. Among the 254 evaluations, 190 were in English, 40 in French, 9 in Spanish and 15 in German. However, the GIZ Evaluation Unit team provided us with translated text for all non-English evaluations, so we did not have to identify relevant search terms in other languages.

In terms of methodological approach, almost all of the 254 evaluations used a combination of document analysis, observations, contribution analysis/outcome harvesting and interviews as their main methodology. This basic methodological approach indicates that the evaluations are mostly inspired by linear rather than complexity thinking, as discussed in the Theoretical underpinnings and analytical starting points section. Quite a large number of evaluations (around 139) were conducted remotely or semi-remotely (probably because many of them took place during the COVID-19 pandemic, although not every evaluation clearly stated this as a reason for a remote/semi-remote approach). The evaluation process consisted of an inception phase and an evaluation mission (field visit/remote mission), which typically took 14–15 days altogether. The total duration of the entire evaluation process was about one year.

In addition to the methods employed in the evaluations, it is also relevant to look at the evaluators themselves (and their selection process) to determine whether there are indications that this might influence if and how unintended effects are captured. The composition of evaluators was diverse, ranging from consulting firms to individuals, many of whom were based in the Global North. Evaluators based in the Global North may sometimes lack the local context necessary to identify potential unintended effects. According to the GIZ Evaluation Unit, their standard evaluation team consists of an international evaluation expert who is generally based in the Global North and a local evaluator (from the Global South). The international evaluators are selected from 12 thematic pools that GIZ has developed, to which potential evaluators submit their resume and experience, which are then assessed by GIZ as well as a team of independent inspectors. The assessment of the resumes and experience is done on the basis of predefined criteria, and the potential evaluators are given a ranking and placed in the overall pools. When needed, the potential evaluators are contacted for their services based on this ranking. Each evaluator signs a framework agreement of three to five years with GIZ; this is done to ensure that their independence is not compromised, that is, if their evaluation of the project is very critical, GIZ cannot fire them (interview with GIZ Evaluation Unit, 28.06.2024).

To ensure the relevance and validity of our results, we conducted several rounds of data cleaning. We discuss our results and data-cleaning steps in more detail in the next section.

Results – Part 1: The correctness of findings

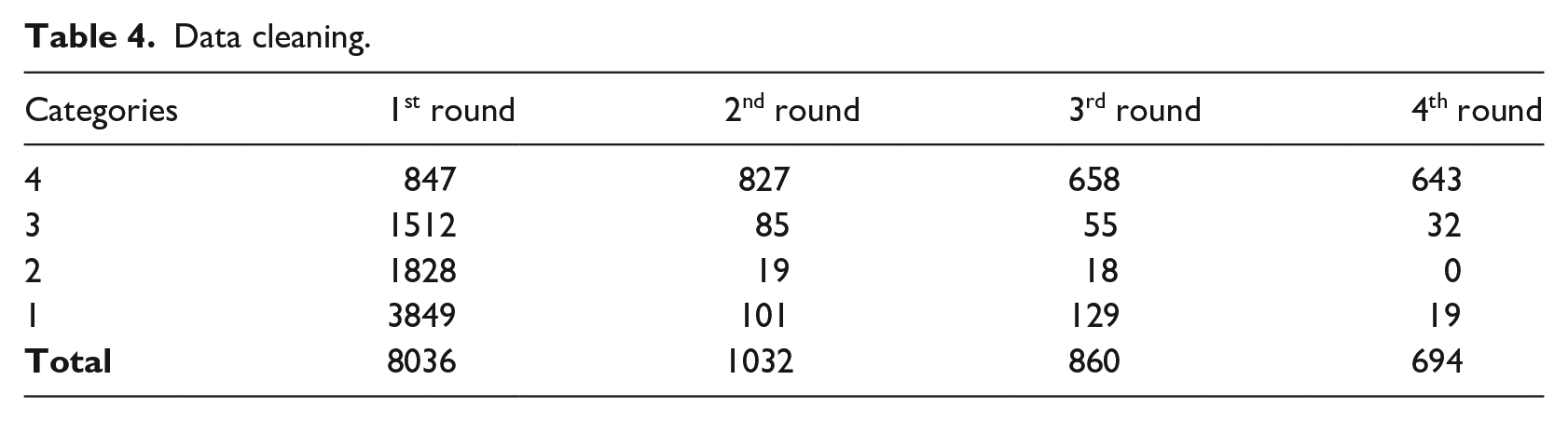

Altogether, 8063 hits were collected as a result of the text-mining exercise, which were then classified into the four groups mentioned in Table 3.

After the four rounds of data cleaning and classification, we had 643 hits that could be identified as unintended effects (see Table 4). We carried these hits forward to categorise them according to the theme and process axes that we defined in the Theoretical underpinnings and analytical starting points section. We explain our results in the following section.

Table 4. Data cleaning.

Overidentification of unintended effects

While cleaning and classifying the data, we observed that in many cases, the evaluators were not clear about what constituted an unintended effect; as a result, we found clear overidentification of these effects. In some cases, it seemed as if the evaluators declared unintended effects only because they were required by GIZ to report on them. After the four rounds of data cleaning, we recognised a clear pattern in three areas where overidentification was happening:

Unintended effects identified under all three categories mentioned above were omitted from the final dataset to avoid overidentification in the data.

Positive bias of unintended effects

Unintended effects were found in 178 out of 254 evaluation reports, that is, 70 per cent of all evaluations mentioned at least one unintended effect. This percentage is in stark contrast to findings for other development organisations where such a study has been conducted. For example, Koch et al. (2021), while examining unintended effects in the Dutch context (using a similar methodology), found that only 14 per cent of all evaluation documents considered unintended effects. Similarly, Hageboeck et al. (2013) and Wiig and Holm (2014) found negligible mention and consideration of unintended effects at USAID and Norad, respectively, even though their research method was less comprehensive. In comparison to its peers, GIZ’s emphasis on unintended effects in its evaluations between 2018 and 2023 appears to have resulted in the evaluators focusing increasingly on the unintended consequences of the interventions.

Interestingly, though, the number of positive unintended effects identified was considerably higher than the number of negative ones. In absolute terms, out of the 643 unintended effects, 501 were positive (78% of all unintended effects) and only 142 were negative. While there may be more positive than negative unintended effects in the GIZ interventions, such a large difference indicates a positive bias, that is, the evaluators seemed to focus more on positive unintended results than on negative ones. The trend remained similar when dividing the data across different categories of the theme and process axes or across different geographical regions. We explore the reasons behind the positive bias in more detail in the Discussion section of the article.
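To give a sense of how far this split lies from a balanced one, the sketch below computes the positive share and a normal-approximation z-score against a 50 per cent baseline. This is an illustration only: the article does not report a formal test, the 50 per cent baseline is our reading of the statement that there is no a priori reason to expect more positive than negative effects, and treating the 643 effects as independent observations is a simplifying assumption. The function name is hypothetical.

```python
import math


def positive_share_z(positive, negative, baseline=0.5):
    """Return the observed positive share and its z-score against `baseline`,
    using the normal approximation to the binomial distribution."""
    total = positive + negative
    share = positive / total
    standard_error = math.sqrt(baseline * (1 - baseline) / total)
    return share, (share - baseline) / standard_error


# Counts reported in the article: 501 positive and 142 negative effects.
share, z_score = positive_share_z(501, 142)
print(f"positive share: {share:.1%}")  # 77.9%
print(f"z-score vs. 50% baseline: {z_score:.1f}")
```

A z-score of this size means the skew cannot plausibly be attributed to chance under the stated assumptions, but it cannot by itself distinguish between reporting bias and genuinely successful risk mitigation.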

Results – Part 2: The completeness of findings

Unintended effects by theme axis

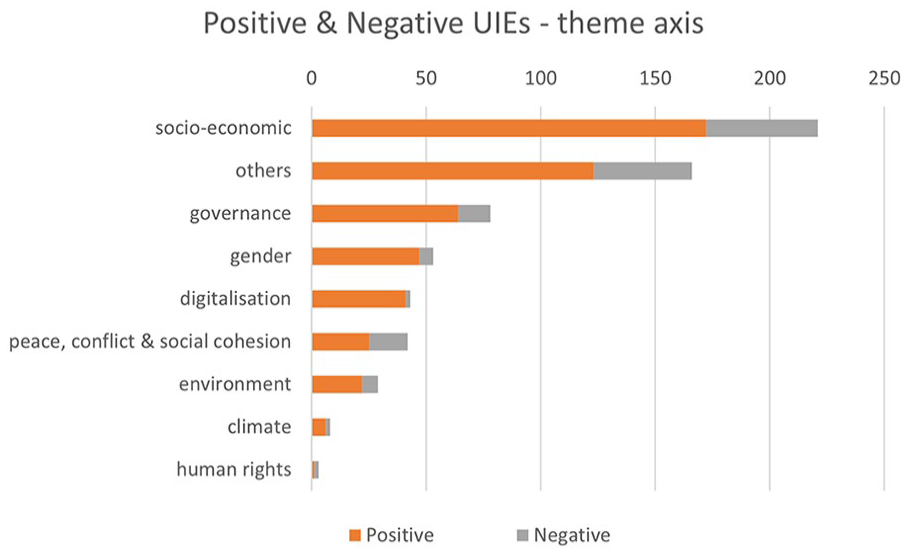

Under the theme axis, we identified 8 categories of unintended effects as explained in the Theoretical underpinnings and analytical starting points section. Each of these categories had further sub-categories that have been included in Annex 1.

The category with the most unintended effects (both positive and negative) was the socio-economic category (221) while the lowest number of unintended effects were found for human rights effects (3). Similarly, the number of unintended climate effects (8) and environmental effects (29) was also relatively low and positively skewed.

Peace, conflict and social cohesion, gender, digitalisation and governance effects combined made up around the same number of unintended effects as the socio-economic effects. This indicates that unintended effects are especially encountered in the socio-economic realm. This could suggest, for example, either that potential socio-economic unintended effects are not sufficiently considered in the design phase or that the evaluators have a blind spot for other categories of unintended effects. Alternatively, it could be that the socio-economic category is still quite broad and might need to be split up. In addition, subcategorisations might need to be added; 163 unintended effects could not be categorised in any of the categories by theme, indicating that while the list of categorisations is quite comprehensive, it is still not exhaustive. Figure 3 and Table 7 in Annex 2 summarise the results according to the theme axis, and Table 9 in Annex 3 shows the results across the sub-categories.

Figure 3. Unintended effects – theme axis.

Van der Harst et al. (2023) highlighted that unintended effects related to human rights are easily visible in micro-credit or entrepreneurial programmes, especially if they are focused on women only, since these programmes empower women to be economically independent, which could lead to increased cases of domestic violence. To investigate the low number of unintended human rights effects, we checked several evaluation reports for projects focused on micro-credit programmes (e.g. GIZ Central Project Evaluation Report, 2020b) and found that the evaluators had neither mentioned any checks for human rights violations when looking for unintended effects nor referred to the design phase where these risks could have been preemptively mitigated.

Analysing the sub-categorisation of the thematic effects provides a similar picture of neglect of certain (sub)types of unintended effects. One of the sub-categories of the unintended digitalisation effect is the ‘digital infringement effect’ (see Table 5 in Annex 1). This effect materialises when the intervention unintentionally puts data privacy and ownership at risk, especially regarding sensitive data. An in-depth case study of digitalisation projects of an international NGO found that while this unintended effect was not frequent, it was impactful (Cordaid, 2022). It is hence striking that across a total of 254 programme evaluations, a fair number of which focused on digitalisation projects, this unintended effect was not found once, which suggests that it has been overlooked.

Similarly, unintended climate effects tend to be prominent in projects related to infrastructure development, for example deforestation resulting from Brazil’s Trans-Amazonian highway that also received international development finance (Jabeen, 2016). To explore the low number of climate-related unintended effects in GIZ evaluation, we went back to the evaluation reports once more. We found several projects that focused on infrastructure and mobility (e.g. GIZ Central Project Evaluation Report, 2020a) in which the climate dimension was not reported on as a possible unintended consequence, indicating a blind spot in the evaluation process. Neither did the reports mention that these potential unintended climate effects had already been taken care of in the design phase.

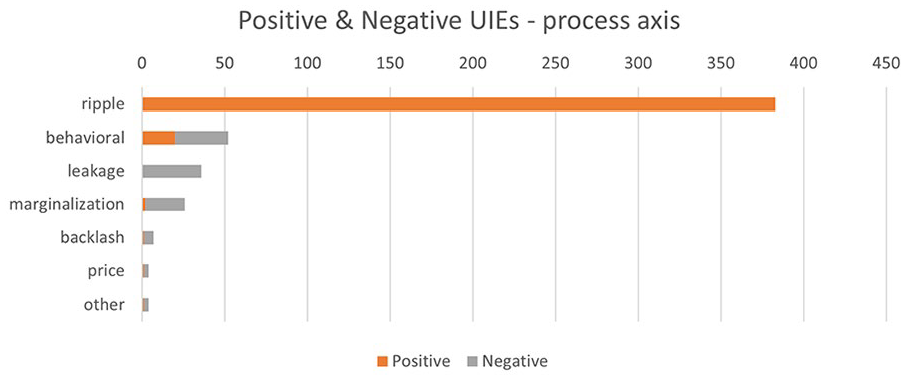

Unintended effects by process axis

There were 8 categories defined under the process axis, each of them with further sub-categories as explained in the Theoretical underpinnings and analytical starting points section. While the thematic axis highlights the domains where unintended effects arise, the process axis identifies the mechanisms through which these unintended effects occur. This is crucial information to generate more crosscutting insights into drivers of unintended effects, which a purely thematic analysis cannot provide. Some 513 unintended effects could be categorised according to the process axis; the remaining 130 effects did not fit under any category of the process axis and were therefore excluded from the calculations for this axis.

The highest number of unintended effects was found for ripple effects (384), while the lowest numbers were found for price effects (4) and backlash effects (7). The distribution of positive and negative unintended effects across the process axis differs from the distribution across the themes. Excluding leakage and ripple effects, which can only be negative and positive respectively, we observe that the number of negative unintended effects is considerably higher than the number of positive ones for most categories. For example, among the 26 marginalisation effects, only 2 were positive while 24 were negative. A similar pattern can be seen for behavioural, price and backlash effects.

The large number of positive ripple effects, however, skews the distribution of results in favour of positive unintended effects. Figure 4 and Table 8 in Annex 2 show the summary of results across all process categories and Table 10 in Annex 3 shows the results across the sub-categories.
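The skew described above can be made concrete with the counts reported in this section. The following is a minimal illustrative sketch in Python and is not part of the original analysis; the variable and function names are hypothetical, and only the figures stated in the text (513 categorised effects, 384 ripple effects, 26 marginalisation effects of which 24 negative) are used.

```python
# Illustrative arithmetic only: all counts are taken from the text above,
# all names are hypothetical and not part of the study's own tooling.
CATEGORISED = 513            # effects that could be placed on the process axis
RIPPLE = 384                 # ripple effects, positive by definition
MARGINALISATION = {"positive": 2, "negative": 24}


def share(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal."""
    return round(100 * part / whole, 1)


# Share of all categorised process effects that are (positive) ripple effects
ripple_share = share(RIPPLE, CATEGORISED)

# Effects left once ripple effects are set aside
non_ripple = CATEGORISED - RIPPLE

# Share of marginalisation effects that are negative
marginalisation_negative_share = share(
    MARGINALISATION["negative"], sum(MARGINALISATION.values())
)

print(f"Ripple effects: {ripple_share}% of categorised process effects")
print(f"Non-ripple effects: {non_ripple}")
print(f"Negative marginalisation effects: {marginalisation_negative_share}%")
```

Under these figures, ripple effects make up roughly three quarters of the categorised process effects, which is why the overall balance tilts positive even though negative effects dominate within categories such as marginalisation.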

Figure 4. Unintended effects – process axis.

Filmer et al. (2018) found that unintended price effects can be observed in development cooperation projects involving cash transfers, which can lead to an increase in food prices, and in projects involving transfers in kind (e.g. food aid), which can exert a downward price effect on the local economy. In the case of the GIZ evaluations, several projects focusing on sustainable economic development used cash transfers to support laid-off workers or the reskilling of the labour force. In some project evaluations, a cash-for-work scheme was also mentioned (e.g. GIZ Central Project Evaluation Report, 2021b). However, in none of these projects were unintended price effects observed or investigated by the evaluators. Similarly, unintended backlash effects tend to become visible in macro-level evaluations or meta-evaluations of a specific region and are therefore missed in individual project evaluations, since they are difficult to observe at the micro-level. We explore these blind spots in project evaluations in more detail in the next section.

Discussion

The positive bias of unintended effects in GIZ evaluations explained

Positive biases in evaluations have been documented for policy evaluations in general (LSE GV314 Group, 2014), and for development programme evaluations in particular (Bamberger, 2009; Levelt and Pouw, 2022). For instance, in 2022, IOB commissioned a meta-evaluation of 32 project evaluations that were financed through the ministry’s policy framework on Strengthening Civil Society (Global Development Network, 2022). The report highlighted several shortcomings in the evaluation methods used in these evaluations. It found that these methods were not adequately equipped to capture null or negative outcomes, thereby leading to a bias towards positive results (p. 4). It explained this bias by looking at, among other things, the biases of evaluators and of informants. The biases of evaluators showed in their failure to check properly for alternative impact pathways, while the biases of informants arose because they provided only positive responses in the hope that a programme would continue. In sum, evaluators are often found not to want to ‘rock the boat too much’ and to face institutional and financial pressures to produce overly optimistic appraisals (Bamberger, 2009: 43).

The bias towards positive unintended effects in GIZ evaluations (78%) could hypothetically be explained by similar patterns of institutional pressure. While GIZ has a contracting system of three to five years in place as a safety net for the evaluators (as explained in the Background on case study section), the evaluators might nonetheless think that they depend on the appreciation of GIZ-HQ staff to obtain additional evaluation assignments. GIZ staff explain that this is not the case (interview with GIZ Evaluation Unit, 28.06.2024), but the perception alone could mean that the evaluators face the dilemma described by Levelt and Pouw (2022: 386): being critical enough to have added value as an evaluator, but not so critical as to spoil the relationship with the contractor. We have not been able to interview the evaluators working for GIZ about this dilemma, so this remains a hypothesis. At any rate, it would be too simplistic to attribute the positive bias exclusively to these institutional and financial pressures, as other factors also play a role. Davidson et al. (2022) highlight that evaluators, often because of budget constraints, are not asked to interview non-beneficiaries. In the GIZ evaluations, too, we found few cases of non-beneficiaries being interviewed, even though they are more likely to have experienced unintended side effects (such as price effects). If the evaluation methodologies that GIZ employs were more inspired by complexity- and systems-thinking, interviewing non-beneficiaries would become more standard practice, as this line of thinking requires tracking contextual developments which may – or may not – have been produced by the intervention (Koch, 2024: 204).

A lack of completeness in the treatment of unintended effects explained

To capture unintended effects in a comprehensive fashion, Koch (2024) has argued that evaluators and their contractors need to ‘(1) look broader, (2) look back longer; (3) look differently and let others look’ (p. 204). It appears that the lack of completeness of the GIZ evaluations can at least partially be explained by the fact that these evaluations do not heed this advice. The project evaluations are methodologically uniform and short-term: they take place during or right after the implementation phase of a project, and the field visits last approximately 15 days. In these evaluations, Western lead evaluators, assisted by local researchers, check linearly how one particular programme works out in practice. In contrast, evaluations that aim to capture unintended effects need to be methodologically diverse, financially independent, long-term, locally driven research programmes. These evaluations would focus on local developments and on how international development cooperation actors impact them.

To gauge unintended effects, it is advised to ‘look broader’: to measure impact beyond the direct target group and to look beyond the thematic silo of the intervention, as unintended effects often occur in a different thematic area (‘interconnectivities’ and ‘alternative impact pathways’). It is also important to include those who have left the intervention area and new arrivals (‘adaptive agents’). This was rarely done in the GIZ evaluations.

In addition, from a complexity-thinking perspective, it is important to ‘look back longer’, as it might take time for feedback loops to materialise. This means that it is important to measure the impact several years after the end of a programme. This was also rarely done in the GIZ programme evaluations, which are typically organised within one year after the end of the programme.

Finally, to capture unintended effects systematically, it is deemed important to ‘look differently’ and ‘let others look’, which means that an independent local lens needs to be applied. Concretely, this means having long-term contracts with local research hubs and employing local researchers as standard practice. These researchers should not take the predefined results of development cooperation programmes as their starting point, but local developments, and then examine how (the ensemble of) development cooperation programmes have impacted those developments. This type of research would better capture unintended effects that are now largely overlooked by GIZ evaluations, such as backlash and price effects.

Current methods employed in GIZ evaluations are capable of detecting unintended effects: 692 unintended effects were identified. However, by looking broader, looking back longer and looking differently (and letting others look), a more complete and correct coverage of unintended effects can emerge. The OECD asks evaluators to ‘apply evaluation criteria thoughtfully’ (OECD, 2021), and while they are no panacea, these three rules of thumb can help in that direction.

Taking the evaluation of unintended effects to the next level

The challenge of ‘looking broader, looking back longer, looking differently and letting others look’ is that it is easier said than done because it is costly. Currently, GIZ Central Project Evaluations cover about 40 per cent of all GIZ projects (GIZ, 2022: 8). Since resources are always a constraint, it is suggested to reduce the coverage rate and exchange quantity for quality: by having fewer project evaluations, resources can be freed up for more comprehensive evaluations (for instance cross-sectional evaluations) that take the mantra (look broader, look back longer, look differently, and let others look) as a starting point. In these more comprehensive evaluations, key concepts of complexity thinking can be included more systematically, which will enhance the likelihood that unintended effects are identified (see the Complexity thinking to explain unintended effects section and Figure 1). Complexity-thinking-inspired evaluations could, for instance, facilitate the identification of the interconnectivities and feedback loops that create unintended effects. More broadly, it is advisable to connect with other adaptive agents operating in the development system and to opt for joint evaluations. For example, if NORAD and GIZ are both active in a specific region in South Sudan, joint evaluations of their projects can identify possible unintended effects at a reduced cost and can help in recognising alternative impact pathways. This sort of joint learning can help government agencies zoom out of their own bubble and can lead to learning across the sector.

Less radically, alternative ways to achieve more correct and more complete assessments of unintended effects are possible. One of the obstacles to a more accurate picture is that many evaluators lack a clear understanding of what an unintended effect is (and is not) and where to look for such effects. The evaluators must be trained on (1) the definition of unintended effects (including the differentiation between unintended effects, risks, implementation failure and external events leading to a different outcome), (2) methodological approaches for finding these effects (including expanding the scope of the evaluations as explained above) and (3) which unintended effects to check for (e.g. using the categorisation used in this article). On the side of the commissioning organisation (e.g. GIZ), it is imperative to provide clearer guidelines to the evaluators for checking unintended effects, something that GIZ is currently working on.

Limitations and representativeness of the study

Despite a variety of quality controls and checks, this study has limitations. One of the major limitations is the quality of the translation of evaluation reports written in languages other than English. The translation was done by a third party using automated software and was not always accurate, which might have led to some missing data points. In addition, there is always a chance of human error and human bias when manually coding the data, which can be minimised through quality controls but cannot be eliminated entirely. Another major limitation was the lack of access to additional documentation accompanying the evaluation reports (e.g. information on the pre-analysis or on the inception phase of the evaluation). Such documentation can be extremely helpful in contextualising the information available in the main reports. Finally, the findings of this study relied mostly on the evaluation reports and on the information given to us by the GIZ central evaluation unit. We did not have the opportunity to interview other GIZ staff to gain insights into the process of evaluation commissioning, data collection, analysis and other quality checks put in place by GIZ.

In terms of the representativeness of the study, we are aware that GIZ is a least likely case; however, the findings can still have broader relevance. For example, the positive bias visible in the case of GIZ is also evident in the evaluation reports of other organisations, as we explained in the ‘The positive bias of unintended effects in GIZ evaluations explained’ section. Similarly, the lack of completeness is likely to be an even greater issue in other cases, since GIZ tries to provide some guidance to the evaluators on unintended effects through a series of pre-analyses of the project before implementation, as we discussed in the Background on case study section. On the other hand, the overidentification of unintended effects is likely to be less of a problem in other cases, as many organisations have not made it compulsory to report on unintended effects. Despite the limitations of this study, we consider the recommendations to be largely relevant for other actors and organisations in the field.

Conclusion: Progress and pitfalls in unintended effects measurement

Progress

In our least likely case study, we have analysed the efforts of GIZ to become more intentional about side effects in their evaluations. And with great results: in over 70 per cent of the evaluations, attention is paid to them, which is much higher than for other development cooperation agencies. This high percentage is good news and shows that if an agency takes up the challenge of increasing focus on unintended effects, it pays off. Unfortunately, GIZ is one of the few agencies which have paid so much attention to unintended effects.

Pitfalls

However, our study also showed that despite these efforts, the representation of unintended effects is not always unbiased or complete. Our findings have wider ramifications for evaluation scholars and practitioners. They underline the importance of dedicating sufficient resources to evaluations, so as to allow for complexity-thinking-inspired evaluations, and of providing evaluators with more guidance on how to measure unintended effects (something that GIZ is currently trying to implement by developing supporting guidelines). The categorisation developed for this research can be useful in arriving at a more comprehensive measurement of unintended effects. Even though GIZ has systems in place that grant independence to evaluators, the evaluators still tend to report more on positive unintended effects. This reinforces the call to grant sufficient independence to evaluators and to ensure they experience it as such, so as to reduce any pressure they might feel to focus disproportionately on positive (unintended) effects.

It remains pertinent to strengthen the measurement of unintended effects in evaluations, not just in the field of international development but also more broadly. Too many public policies cause too much collateral damage, which is discovered too late. Mapping side effects better is just a first step, however: policymakers will in turn need to become more receptive to evaluations and, instead of seeing them as a potential threat, should see them as a way to strengthen their performance and regain the trust of citizens.

Supplemental Material

sj-pdf-1-evi-10.1177_13563890251347266 – Supplemental material for Progress and pitfalls when evaluating the unintended effects of public policy: The case of German international development cooperation

Supplemental material, sj-pdf-1-evi-10.1177_13563890251347266 for Progress and pitfalls when evaluating the unintended effects of public policy: The case of German international development cooperation by Zunera Rana, Dirk-Jan Koch and Carla Diem in Evaluation

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the German Development Cooperation Agency (GIZ) as part of a project on the unintended effects of international development. The findings presented in this article are based on the study conducted under the project. The article was written independently, and GIZ had no influence on its content or conclusions.

Supplemental material

Supplemental material for this article is available online.
