Abstract

This study explores the organisational determinants of evaluation use. It proposes a framework distinguishing between the initial and advanced phases of evaluation practice and predicting what factors determine the type of evaluation use at each phase. Our framework was empirically tested using a survey of 1123 public and non-governmental organisations across five European Union (EU) countries: Czechia, Denmark, Italy, the Netherlands and Poland. Our findings suggest that the adoption mode of evaluation practice influences the dominant type of evaluation use, but this impact is limited to the first few years after the evaluation practice was introduced in an organisation. In the advanced phase, we identified significant relationships between dimensions of legitimacy and types of evaluation use. This suggests that organisational legitimacy remains a promising predictor of evaluation use; however, the specific dimensions and their relationships with different types of evaluation use require further investigation.

Introduction

In recent decades, organisational theory has emerged as a valuable perspective shaping scholars’ understanding and explanation of evaluation use. Central to this perspective is the recognition that evaluations are inherently situated within an organisational context. Institutionalising the evaluation function in organisations shifts the focus from a single study to a stream of studies (Rist and Stame, 2006) or towards evaluation systems that require conceptualisation, organisation, funding and staffing to produce evidence (Leeuw and Furubo, 2008; Stockmann et al., 2020; Stufflebeam, 2003).

Scholars examining evaluation through the lens of organisational theories often describe their approach as drawing on agency theory, organisational institutionalism 1 and resource dependence theory (Ahonen, 2015; Behn, 2003; Carden, 2013; Dahler-Larsen, 2012; Weaver, 2007). Carman (2011) specifically observed that in more than three-quarters of the cases she analysed, these three theoretical perspectives most effectively explain why organisations engage in evaluation and how they utilise its findings. Along these lines, Albæk (1996) argued earlier that organisations, as political and cultural systems, may use evaluation in ways that go beyond its instrumental purpose, aligning it instead with broader organisational goals.

This perspective may therefore be seen as both a complement and an alternative to most previous contributions on evaluation use, which are widely regarded as adhering to an inherently realist and rational logic that assumes evaluation is conducted to maximise intervention effectiveness and contribute to social betterment (Dahler-Larsen, 2012; Mark and Henry, 2004). It emphasises determinants related to the organisation’s role within its environment and the external pressures it faces when adopting evaluation practices, paying less attention to factors relating to a particular evaluation study, evaluators that conduct it, or potential users, each of which is prevalent in the current evaluation literature. For the proponents of the organisational perspective, the latter factors are, to a large extent, shaped by the underlying organisational context, meaning that these are micro-level factors that carry less importance (Højlund, 2014).

Based on insights from organisational theories, scholars such as Eckerd and Moulton (2011), Højlund (2014) and Raimondo (2018) have proposed frameworks aimed at linking dominant types of evaluation use with specific characteristics of an organisation and the context in which it operates. While promising, their contributions demonstrate some shortcomings. As noted in a recent review (Kupiec et al., 2023), these (usually theoretical) propositions were relatively frequently at odds with empirical accounts. Most importantly, the frameworks are static (Martinaitis et al., 2019) and suggest that initial conditions determine how the organisation will use evaluation in the future, disregarding possible changes in evaluation practice.

In this article, we aim to deepen the understanding of the determinants of evaluation use from an organisational theory perspective. Following the suggestions on prospective research avenues in Kupiec et al. (2023), we propose a framework for the organisational factors of evaluation use. It recognises different stages of development of evaluation practice and includes legitimacy as a determinant of evaluation use. Hypotheses derived from the framework were tested on data collected by a survey of diverse public and non-governmental organisations (NGOs) from five European Union (EU) countries with different evaluation traditions: Czechia, Denmark, Italy, the Netherlands and Poland.

Our study’s theoretical contribution lies in further shifting the focus on evaluation use from ‘immediate’ factors (Højlund, 2014) to more fundamental determinants embedded within the organisational context. In practical terms, our findings – particularly regarding the role of motivation – may support efforts to better understand and foster evaluation use in organisations with different characteristics and operating in diverse contexts.

Refined framework of organisational determinants of evaluation use

A recent review by Kupiec et al. (2023) identified five frameworks that propose specific organisational determinants of evaluation use (in studies by Carman, 2005; Eckerd and Moulton, 2011; Højlund, 2014; Lall, 2015; Raimondo, 2018). Although the details of the frameworks differ slightly, they share key characteristics due to their links to the same organisational theories. More specifically, all five frameworks recognise the importance of external pressure. Some recognise it explicitly (Eckerd and Moulton, 2011; Højlund, 2014), while others regard it as a consequence of interaction with funders (Carman, 2005; Lall, 2015) or an element of a larger set of external cultural factors (Raimondo, 2018).

The most comprehensive approach, encompassing and organising various types of pressures identified in other frameworks and arising from different possible modes of interaction with the external environment, is proposed by Højlund (2014). Building on the mechanisms of institutional isomorphism conceptualised by DiMaggio and Powell (1983), he introduced the concept of the adoption mode of evaluation practice, which includes four possible options: 2

coercive – evaluation is triggered by formal or informal pressure exerted by other organisations (e.g. donors and resource providers), regulations, or cultural expectations;

mimetic – evaluation is conducted to imitate other organisations, usually one considered successful or a leader in the field, to increase the chances of success;

normative – evaluation is suggested as a desired practice by consultants, new management or staff who learned this practice in a previous workplace; and

voluntary – evaluation is conducted because of a belief that it helps to learn and improve.

While the term adoption mode is specific to Højlund, a similar logic based on institutional isomorphism can be found in the work of Eckerd and Moulton (2011) and Carman (2005), the latter of whom uses the term motivations to evaluate. In our framework, adoption modes are likewise incorporated as an independent variable.

All five frameworks reviewed by Kupiec et al. (2023) assume that external pressure influences how evaluations are utilised. This dependent variable is conceptualised in various ways: in one instance, it is framed in terms of evaluation functions – learning and accountability; in another, as orientations – funder-centric or otherwise. The remaining three frameworks offer typologies of evaluation use, typically including instrumental, conceptual, legitimising and symbolic uses.

This classification is relatively simple and traditional; indeed, such types of use were identified early in the evaluation literature (Pelz, 1978; Rich, 1977). It does not fully reflect more recent developments, such as the addition of tactical, ritualistic and constitutive uses, or the recognition that these can pertain to either the evaluation products or the evaluation process itself (Vedung, 2021). Moreover, by focusing solely on the notion of evaluation use, these frameworks do not encompass broader conceptualisations such as evaluation influence, which include distinctions between conscious and unconscious influence, individual and collective influence, as well as immediate and long-term effects (see Kirkhart, 2000; Mark and Henry, 2004).

Despite the availability of these expanded conceptual frameworks, we have opted to adopt a simple typology aligned with that used by other authors studying factors of evaluation use. This decision is driven by pragmatic considerations: the typology adequately fulfils our primary aim, which is to capture the influence of organisational context on evaluation use through regression models that require a manageable number of variables. We therefore apply the following categories in our analysis:

instrumental – evaluation findings directly impact decisions and change the evaluand;

conceptual – evaluation findings change the awareness and attitudes of decision-makers, helping to gain new knowledge;

substantiating – evaluation findings are used to legitimise or advocate for the organisation, evaluated intervention, or particular decisions; 3

symbolic – the mere process of evaluation is used to legitimise the organisation or the policy/programme that is evaluated.

The first significant modification introduced in our proposed framework, in comparison to the earlier contributions discussed in this section, responds to the observation made by Kupiec et al. (2023) that much of the discrepancy between empirical findings and existing frameworks can be explained by accounting for time and dynamism. Existing frameworks may be characterised as static (Martinaitis et al., 2019), as they imply that initial conditions largely determine how organisations will use evaluation in the future.

As there are no existing studies that conceptualise the stages in the evolution of evaluation use, our proposal draws inspiration from the literature on evaluation capacity building. In the work of Gibbs et al. (2002) and Preskill and Boyle (2008), three and four stages, respectively, are identified through which evaluation capacity building typically progresses. In both cases, the process begins with an initial stage in which evaluation is conducted primarily to comply with external demands, and culminates in an advanced stage where organisations recognise the value of evaluation, invest in it voluntarily and implement it regularly.

Recognising that the boundaries between these stages are not always clear-cut – and that organisations may not necessarily pass through all of them – we propose a simplified distinction between two stages in the development of evaluation practice:

Initial phase: Evaluation practice is adopted, usually in response to external pressure – either directly from other organisations or indirectly due to perceived uncertainty in the field.

Advanced phase: Evaluation practice has been in place long enough to give the organisation an opportunity to build capacity and reflect on its potential role.

While Gibbs et al. (2002) and Preskill and Boyle (2008) suggest that evaluation practice is typically initiated in response to external pressure, our framework acknowledges that this is not always the case, as reflected in the inclusion of the voluntary adoption mode. Similarly, although these authors assume that, at an advanced stage, organisations come to recognise the value of evaluation, we maintain that this is not necessarily so.

We expect that in the initial phase, the type of pressure 4 that leads an organisation to introduce an evaluation practice influences the type of evaluation use. Therefore, our first hypothesis concerns the relationship between the adoption mode of evaluation practice and the type of evaluation use:

H1: The adoption mode is a significant determinant of the primary type of evaluation use in the initial phase.

We derived several more detailed hypotheses in this vein from Højlund’s (2014) model and in line with our expectations:

H1.1: The frequency of instrumental use is higher in organisations that adopted an evaluation practice voluntarily or mimetically. 5

H1.2: The frequency of conceptual use is higher in organisations that adopted an evaluation practice voluntarily or normatively.

H1.3: The frequency of substantiating use is higher in organisations that adopted an evaluation practice mimetically.

H1.4: The frequency of symbolic use is higher in organisations that adopted an evaluation practice by coercion.

In contrast to previous contributions, we expect that when evaluation practice matures, the influence of the adoption mode visibly decreases. Therefore, we hypothesise the following:

H2: The adoption mode is not a significant determinant of the primary type of evaluation use in the advanced phase.

To address the gap in the advanced phase by identifying a relevant factor, we turn to the second suggestion from Kupiec et al. (2023): organisational legitimacy. A concise definition by Deephouse et al. (2017), following a review of recent conceptual developments, describes organisational legitimacy as the perceived appropriateness of an organisation within a social system, based on its adherence to rules, values, norms and definitions. Legitimacy justifies an organisation’s existence (Woodward et al., 1996) and can therefore be considered a broader concept than its role within the environment, making it more suitable for a framework encompassing diverse types of organisations.

Furthermore, legitimacy is best understood as a dynamic, continuously evolving process rather than a static attribute (Drori and Honig, 2013; Haack et al., 2020), which aligns with the dynamism we aim to capture in our framework. It has also been observed that the specific source of an organisation’s legitimacy influences its organisational routines (Johansson and Sell, 2004). Accordingly, we hypothesise the following:

H3: The perceived source of legitimacy impacts the type of evaluation use for organisations in the advanced phase.

Although legitimacy is an implicit or underlying element in all evaluation use frameworks discussed in the previous section, it has not been explicitly treated as a determinant of evaluation use. This omission may be due to the complexity of the term, which scholars have approached from multiple perspectives, including its sources, criteria, dimensions, states, types and bases (Deephouse et al., 2017; Bitektine et al., 2020; Díez-de-Castro et al., 2018), as well as cognitive situations and evaluation modes (DiMaggio, 1997; Tost, 2011), to name a few.

Three typologies from the early 1990s shaped most of the subsequent work on the types of legitimacy. Aldrich and Fiol (1994) distinguished between cognitive legitimacy – the perception of an organisation as necessary or inevitable – and sociopolitical legitimacy, based on conformity to societal norms. Scott (1995) further differentiated sociopolitical legitimacy into two components: regulative legitimacy (compliance with laws and rules) and normative legitimacy (alignment with social values). Suchman’s (1995) typology, closely related to both, also includes cognitive legitimacy, while his notion of moral legitimacy parallels Scott’s normative dimension. His third category, pragmatic legitimacy, overlaps with the regulative aspect but expands it to include all forms of legitimacy based on stakeholders’ self-interested calculations.

Legitimacy typologies have continued to proliferate over time. By 2011, Bitektine had identified 18 distinct types of legitimacy, and by 2018, Díez-de-Castro et al. reported as many as 37. These authors referred to the resulting profusion of terms as a ‘jungle of terminology’, while Czarniawska (2008) described legitimacy theory as a constantly mutating virus.

For the purposes of our framework, we drew on the typology proposed by Díez-de-Castro et al. (2018). In contrast to earlier classifications, which typically identified three or four types of legitimacy, their typology presents a broader spectrum by disaggregating general categories into more specific ones. From this more detailed classification, we selected those elements that we found relevant to different evaluation use types:

Pragmatic: This form of legitimacy (which is also called instrumental legitimacy) relies on stakeholders’ beliefs that they can achieve their goals through the actions of the organisation. Organisations seeking pragmatic legitimacy are expected to use evaluation instrumentally to improve the effectiveness and utility of their actions.

Managerial: This type of legitimacy relates to an organisation’s ability to fulfil its mission and vision in alignment with broader societal interests. Organisations legitimised based on their managerial potential must demonstrate effectiveness, similar to pragmatic legitimacy, thereby increasing the probability of instrumental use. However, to be justified, such organisations must also understand the general interest and align their mission accordingly, which makes their conceptual use of evaluation more likely.

Ethical: Ethical legitimacy arises from the audience’s belief that an organisation upholds positive values and is motivated to specific behaviours because they are morally correct (i.e. ‘the right things to do’). Organisations that rely on this form of legitimacy must learn what is important for their audience and convince it that they pursue the same values and principles. The substantiating and conceptual uses of evaluation are both expected to be frequent in this case.

Technical: Technical legitimacy, also known as procedural legitimacy, stems from the perception that an organisation operates efficiently through sound procedures and quality assurance. Evaluations in this context serve to signal adherence to professional standards and established practices, increasing the likelihood of symbolic use. At the same time, the emphasis on demonstrating procedural competence creates opportunities for substantiating use, as organisations may draw on evaluation findings to support claims of operational excellence.

Regulatory: This form of legitimacy arises from adherence to regulations and standards (e.g. rules, norms, laws and sanctions) set by authority. In such a context, evaluation is often coerced, with organisations obliged to conduct it. Since simple compliance with an obligation to conduct the evaluation is enough to avoid sanctions, one would expect that such imposed evaluations are more likely to be used symbolically.

Based on the above assumptions, we expect to observe several detailed relationships between legitimacy dimensions and the types of evaluation use:

H3.1: The frequency of instrumental use is higher in organisations seeking pragmatic and managerial legitimacy.

H3.2: The frequency of conceptual use is higher in organisations seeking managerial and ethical legitimacy.

H3.3: The frequency of substantiating use is higher in organisations seeking ethical and technical legitimacy.

H3.4: The frequency of symbolic use is higher in organisations seeking regulatory legitimacy.

Research design

Sample

Our sample covered public organisations and NGOs 6 from five EU countries: Czechia, Denmark, Italy, the Netherlands and Poland. This choice of countries was dictated by our desire to include in this study the different contexts (i.e. the different evaluation traditions and cultures) in which organisations may operate.

Denmark and the Netherlands are among the countries where institutionalised evaluation emerged as early as the 1970s and was domestically driven (Dahler-Larsen and Hansen, 2020; Leeuw, 2009). In the other three countries, evaluation emerged in response to external pressures related to policy requirements – at first, those of EU regional policy, and later, those of cohesion policy. In Italy, this occurred at the end of the 1980s due to the Single European Act’s establishment of structural funds. After joining the EU in 2004, Poland and Czechia initiated evaluation practices in this field.

Whereas evaluation cultures in Denmark and the Netherlands are generally considered to have a high degree of maturity, some studies regard those in Italy as having a medium degree of maturity (Jacob et al., 2015). 7 According to Stockmann et al. (2020), the institutionalisation of evaluation is low in Poland and Italy, moderate in Czechia and Denmark, and high in the Netherlands. However, their findings regarding Czechia are inconsistent with those of Kupiec et al. (2020), who argue that most evaluation practices in Central and East European Countries, including Czechia and Poland, are still merely acts of compliance with EU requirements that have not been disseminated to other policies. We thus assume that our sample consists of two countries with mature evaluation cultures (Denmark and the Netherlands) and three with immature cultures and differing histories (i.e. a longer history in Italy and shorter histories in Poland and Czechia).

To select particular organisations, we adopted a broad approach. We sent invitations to all possible NGOs that could be contacted by an email address available on the Internet and to public institutions that were identified as those that most probably use evaluation: 8 local and regional governments of all levels, ministries, central and regional government agencies, and higher education institutions.

We introduced a distinction between large and small organisations, assuming that in a large organisation, the unit of analysis would be the department (questionnaires would be distributed separately to each department), while in a small organisation, it would be the entire organisation. 9 This decision stemmed from the observation that evaluation practices, including the motivations for establishing evaluation practice and types of evaluation use, may vary between departments (Kupiec and Wrońska, 2024). 10 We asked that the survey be completed by a person in a managerial position with knowledge of the origins of the organisation’s evaluation practice and its use of evaluation – a department director in a large organisation or an organisation manager in a small organisation.

Data collection

Data for our study was collected through an anonymous, online, self-administered questionnaire. This technique is the most widely used in studies on evaluation use (see the reviews from Cousins and Leithwood, 1986; Johnson et al., 2009). A key limitation of the survey approach is the inability to control how respondents interpret the questions and whether they are able to answer them. This issue relates to the challenge of operationalising and measuring complex constructs within a closed-format questionnaire, which inherently simplifies them (Price and Murnan, 2004). However, previous studies demonstrate that surveys can effectively identify and categorise types of evaluation use to a sufficient extent through closed-ended questions (e.g. Altschuld et al., 1993; Carman, 2005; Eckerd and Moulton, 2011).

The key part of the questionnaire comprised three Likert-type-scale questions designed to identify (1) the types of evaluation use in an organisation (instrumental, conceptual, substantiating and symbolic), (2) the modes in which an evaluation practice was originally adopted by an organisation (coercive, mimetic, normative and voluntary) and (3) the dimensions from which an organisation derives its legitimacy (regulatory, technical, managerial, ethical and pragmatic). Each of these elements was described by several statements (Likert-type items). Two covered types of use, four covered adoption modes, and three covered legitimacy dimensions. Items in the three Likert-type-scale questions were rotated.

Participants were asked to rate the extent to which they agree or disagree with each statement on a five-point Likert-type scale. The statements were formulated indirectly, referring to organisational and professional practices known to respondents from their everyday work. Several considerations supported this choice. First, the results of our pilot study indicated that the analysed concepts, when directly expressed, are perceived by respondents as rather abstract and difficult to relate to the practices known from their organisation. Second, indirect questions in self-administered questionnaires help mitigate social desirability bias – the tendency of respondents to provide answers they perceive as socially acceptable rather than entirely honest ones (Fisher, 1993; Grimm, 2010). Third, this approach allowed respondents to indicate several types of use and use factors with varying degrees of intensity, which aligns with previous observations of the phenomenon (e.g. Eckerd and Moulton, 2011; Milzow et al., 2019). Apart from the three questions mentioned above, the respondents were also asked to provide the main information about their organisation (e.g. the type of organisation, its years of experience in evaluation, number of evaluation studies conducted).

The survey was distributed online between December 2022 and December 2023 in five language versions (translated by the team members from the English original). The full content of the questionnaire is available in the Supplemental Material, available online.

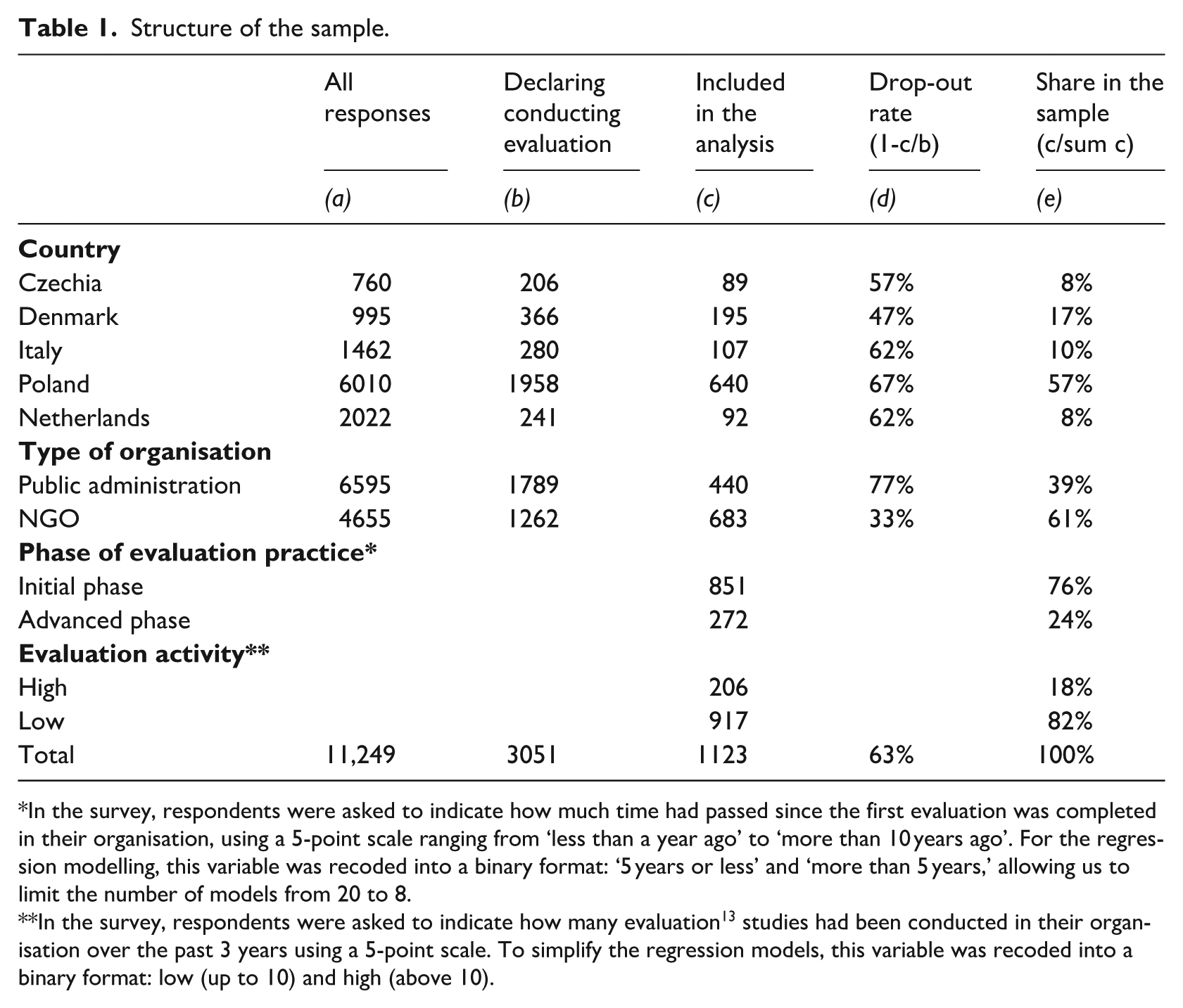

Data processing

A total of 11,249 organisations responded to our survey, of which 3049 declared that they conducted evaluation(s). Out of that number, 1369 questionnaires were fully completed. 11 Incomplete questionnaires were excluded, and questionnaires from respondents who provided the same score (e.g. ‘Strongly agree’) to all items in at least one Likert-type-scale question were also excluded, as we assumed these responses were unreliable. 12 Consequently, 1123 responses were included in the analysis. The structure of our sample is presented below in Table 1.
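The two exclusion rules described above can be sketched in a few lines. This is only an illustration under stated assumptions: the data layout (one dictionary per questionnaire, keyed by hypothetical block names `use`, `adoption` and `legitimacy`, with `None` for an unanswered item) and all function names are ours and are not taken from the study's actual processing scripts.

```python
from typing import Dict, List, Optional

# Hypothetical layout: each response maps a Likert-type question block to its
# item scores (1-5), with None standing for an unanswered item.
Response = Dict[str, List[Optional[int]]]


def is_complete(response: Response) -> bool:
    """True when every item in every block has a score."""
    return all(score is not None for items in response.values() for score in items)


def is_straight_lined(response: Response) -> bool:
    """True when at least one block received the same score on all of its items."""
    return any(len(set(items)) == 1 for items in response.values())


def keep_for_analysis(responses: List[Response]) -> List[Response]:
    """Drop incomplete questionnaires first, then straight-lined ones."""
    return [r for r in responses if is_complete(r) and not is_straight_lined(r)]


# Illustrative (made-up) questionnaires: one valid, one straight-lined, one incomplete.
sample = [
    {"use": [4, 5, 3, 2], "adoption": [1, 2, 4, 4], "legitimacy": [5, 3, 4, 2]},
    {"use": [5, 5, 5, 5], "adoption": [1, 2, 4, 4], "legitimacy": [5, 3, 4, 2]},
    {"use": [4, None, 3, 2], "adoption": [1, 2, 4, 4], "legitimacy": [5, 3, 4, 2]},
]
kept = keep_for_analysis(sample)
print(len(kept))  # 1
```

The completeness check runs before the straight-lining check, so a partially answered block is never mistaken for a uniform one.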

Table 1. Structure of the sample.

In the survey, respondents were asked to indicate how much time had passed since the first evaluation was completed in their organisation, using a 5-point scale ranging from ‘less than a year ago’ to ‘more than 10 years ago’. For the regression modelling, this variable was recoded into a binary format: ‘5 years or less’ and ‘more than 5 years,’ allowing us to limit the number of models from 20 to 8.
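The reduction from 20 to 8 models follows from estimating one model per use type and time category. The sketch below makes this arithmetic explicit. Only the two endpoint labels of the five-point item are quoted in the text; the three middle labels, and therefore the exact position of the 5-year cut among them, are assumptions made purely for illustration.

```python
USE_TYPES = ["instrumental", "conceptual", "substantiating", "symbolic"]

# Only the first and last labels are quoted in the text; the three middle
# labels are assumed here so that the 5-year cut falls on a category boundary.
TIME_SINCE_FIRST_EVALUATION = {
    "less than a year ago": "5 years or less",
    "1-2 years ago": "5 years or less",       # assumed label
    "3-5 years ago": "5 years or less",       # assumed label
    "6-10 years ago": "more than 5 years",    # assumed label
    "more than 10 years ago": "more than 5 years",
}


def recode_time(answer: str) -> str:
    """Map a five-point answer onto the binary variable used in the models."""
    return TIME_SINCE_FIRST_EVALUATION[answer]


# One model per (use type, time category) pair.
models_before = len(USE_TYPES) * len(TIME_SINCE_FIRST_EVALUATION)
models_after = len(USE_TYPES) * len(set(TIME_SINCE_FIRST_EVALUATION.values()))
print(models_before, models_after)  # 20 8
```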

In the survey, respondents were asked to indicate how many evaluation 13 studies had been conducted in their organisation over the past 3 years using a 5-point scale. To simplify the regression models, this variable was recoded into a binary format: low (up to 10) and high (above 10).

Response rates differed between the countries, and we cannot account for the organisations that did not submit a complete questionnaire. We therefore do not claim that our sample is representative or that it allows us to draw conclusions about the prevalence of evaluation practice or the frequency of evaluation use types in the population. However, it fits our research objective: to identify the relationship between organisational factors and the types of evaluation use.

Since each use type, adoption mode, and legitimacy source was described by several Likert-type items, the values of the variables used in our analysis have been calculated as rescaled means of the scores of all related items.
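One plausible reading of a rescaled mean can be sketched as follows. The rescaling shown here is an assumption: it maps the mean of the five-point item scores linearly onto the interval from 0 to 1. The function name and the target range are ours, and the study's own formula may differ.

```python
from statistics import mean
from typing import Sequence


def rescaled_mean(scores: Sequence[int], low: int = 1, high: int = 5) -> float:
    """Mean of Likert-type item scores, mapped linearly from [low, high] to [0, 1].

    The [0, 1] target range is an assumption made for this sketch.
    """
    if not scores:
        raise ValueError("at least one item score is required")
    if any(not low <= s <= high for s in scores):
        raise ValueError("item scores must lie on the Likert-type scale")
    return (mean(scores) - low) / (high - low)


# Two items scored 5 and 4 give a mean of 4.5, which rescales to 0.875.
print(rescaled_mean([5, 4]))  # 0.875
```

Under this reading, a variable equals 0 when all related items were rated 'Strongly disagree' and 1 when all were rated 'Strongly agree', which keeps variables built from different numbers of items on a common scale.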

Analysis

We conducted data analysis using linear regression to examine the relationships between organisations’ adoption modes and legitimacy dimensions (independent variables) and their evaluation use types (dependent variables). Inverse frequency weighting was applied to address sample imbalances, particularly the large number of responses from Poland. All independent and dependent variables were treated as continuous. Two binary control variables were also included: type of organisation (public or NGO) and evaluation activity (high for organisations with more than 10 evaluations in the past 3 years and low for the rest of the organisations). Country-fixed effects were also incorporated into the regression models to control for any stable source of baseline differences between organisations related to the country of origin. In addition, organisations were classified according to the phase of development of their evaluation practices (initial phase – an organisation’s evaluation practice was initiated 5 years ago or less; advanced phase – more than 5 years ago). Separate regression models were estimated for each type of evaluation use in the initial and advanced phases. The linear regression analysis was performed in R using the plm package (Croissant and Millo, 2008).
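To illustrate the weighting step only, the sketch below computes inverse frequency weights by country. It is written in Python rather than R and does not reproduce the plm-based estimation. The normalisation used (weights sum to the sample size, and every country receives the same total weight) is an assumption, as are the function name and the toy data.

```python
from collections import Counter
from typing import List, Sequence


def inverse_frequency_weights(groups: Sequence[str]) -> List[float]:
    """Weight each observation by n / (k * n_g).

    n is the sample size, k the number of groups and n_g the size of the
    observation's own group. This normalisation is an assumption of the sketch.
    """
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]


# Toy sample dominated by one country, mirroring the imbalance described above.
countries = ["PL", "PL", "PL", "PL", "DK", "IT"]
weights = inverse_frequency_weights(countries)
print(weights)  # [0.5, 0.5, 0.5, 0.5, 2.0, 2.0]
```

With these weights, each country contributes the same total weight to the fit regardless of how many responses it supplied, so an over-represented country does not dominate the estimated coefficients.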

Results

Descriptive statistics

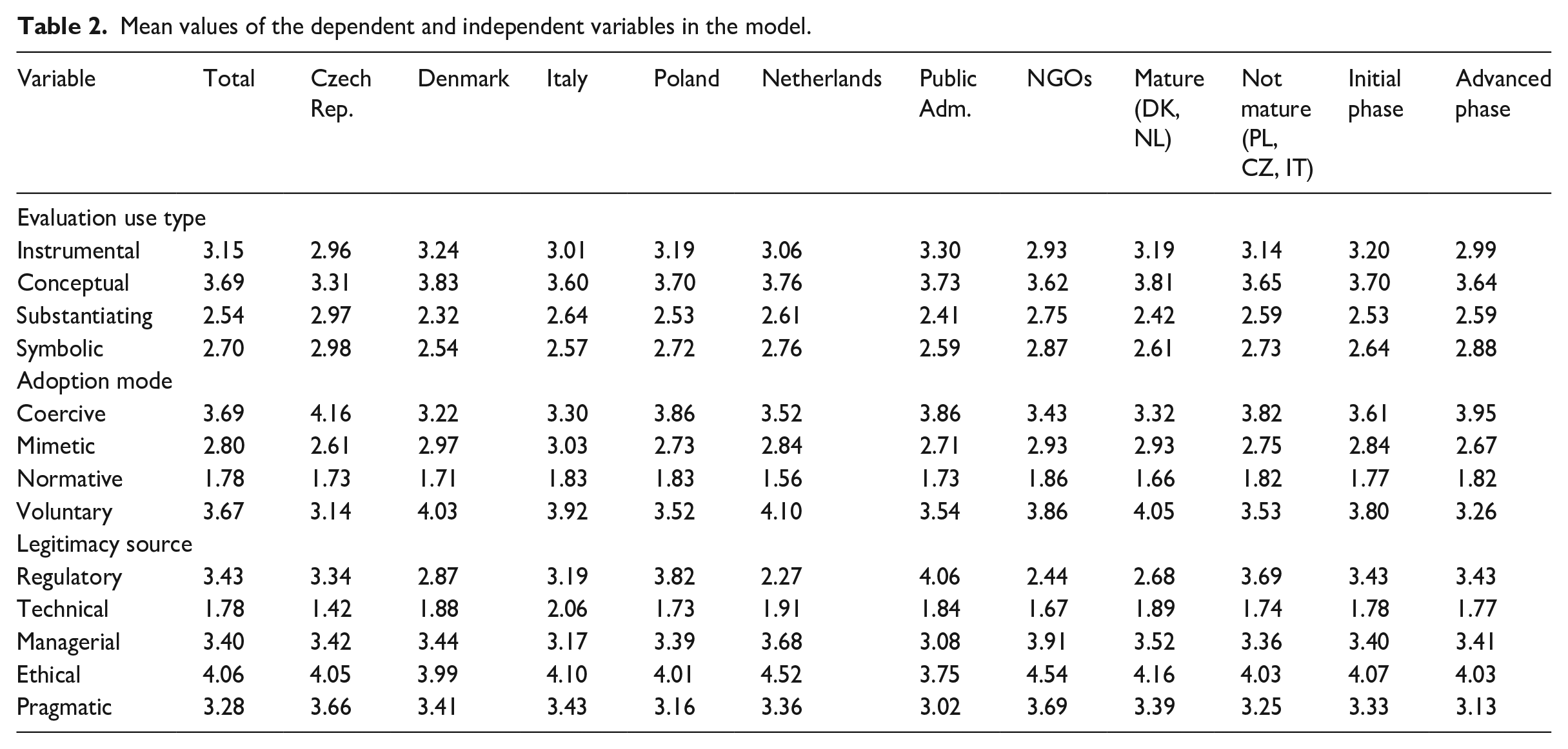

In the sample, the most frequently indicated type of evaluation use was conceptual; instrumental was the second-most common, and substantiating and symbolic uses were much less frequent (Table 2). A similar distribution was visible in public administration and NGOs, mature and non-mature evaluation cultures, and organisations in the initial and advanced phases, as well as across almost all countries except Czechia, where mean values for types of evaluation use were much more even.

Table 2. Mean values of the dependent and independent variables in the model.

Among the respondents, coercive and voluntary adoption modes were most frequently indicated, whereas the normative mode was the least popular. Again, this general pattern was similar throughout all dimensions (presented in Table 2) and all countries. However, a slight difference is identifiable between countries with mature evaluation cultures, where the voluntary mode of evaluation was the most frequent, and those with immature cultures, where the coercive mode was the most prevalent.

Across the sample, ethical legitimacy was the most frequently indicated source of legitimacy; the regulatory, managerial and pragmatic dimensions followed and were similarly popular. The least frequently indicated source of legitimacy was technical. In terms of legitimacy sources, the country that stands out the most is the Netherlands, which has relatively (in comparison to other countries) higher scores for the ethical and managerial dimensions of legitimacy and a lower score for the regulatory dimension. In addition, public administration organisations did not follow this general pattern and reported regulatory legitimacy as the most popular. NGOs and organisations from countries with mature evaluation cultures reported regulatory legitimacy significantly less often than managerial and pragmatic legitimacy.

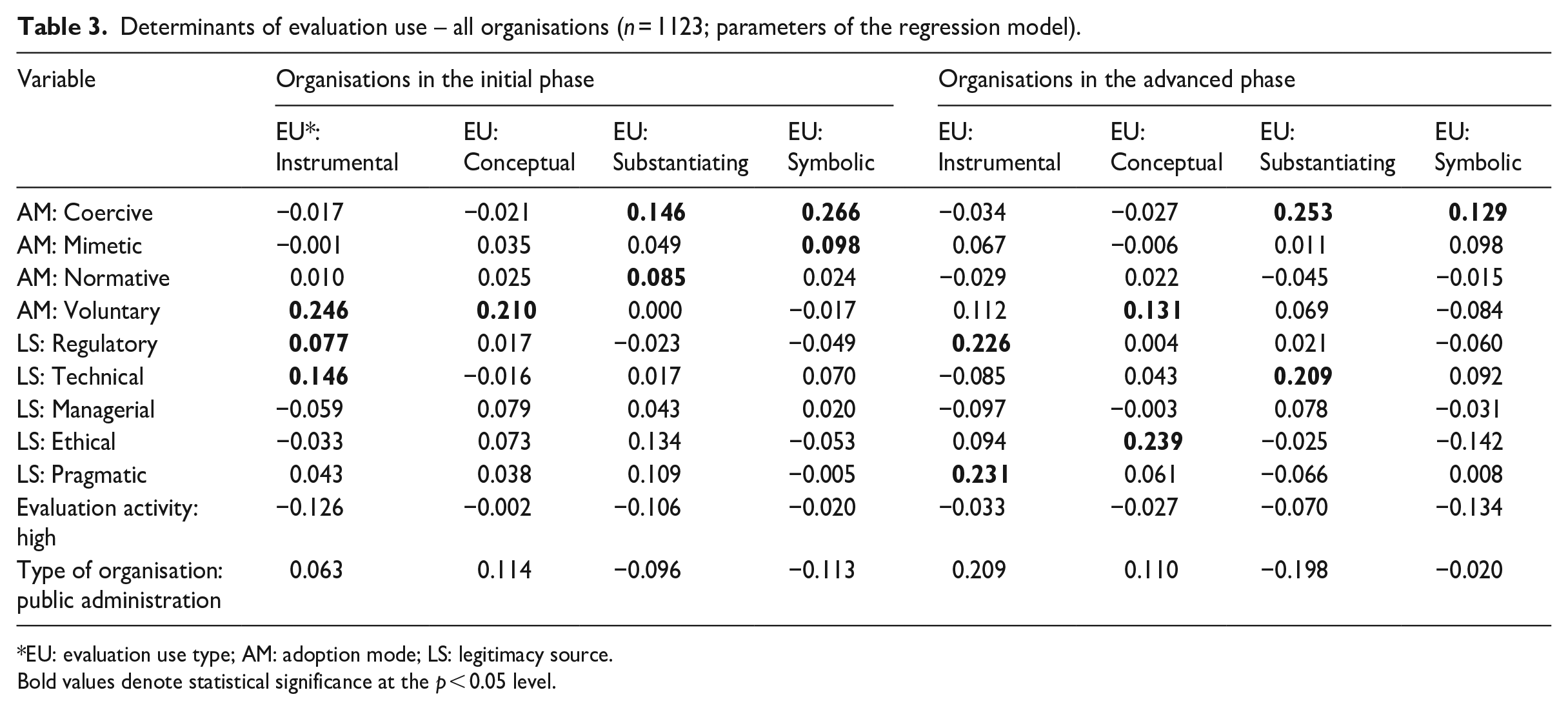

Determinants of use

The analysis revealed statistically significant determinants for all evaluation use types in both phases of evaluation practice. The mode in which an evaluation practice was adopted has a significant influence on evaluation use in organisations that are still in the initial phase (H1), and this influence diminishes in the advanced phase (H2) (see Table 3). Six significant relationships between adoption modes and use types were found in the initial phase, while three were found in the advanced phase. In addition, with one exception, the coefficient values for the adoption modes are substantially higher in the initial phase.

Table 3. Determinants of evaluation use – all organisations (n = 1123; parameters of the regression model).

EU: evaluation use type; AM: adoption mode; LS: legitimacy source.

Bold values denote statistical significance at the p < 0.05 level.

Regarding the specific predictions derived from Højlund’s (2014) model, H1.1 and H1.2 are partially confirmed: when an evaluation practice is adopted voluntarily, the instrumental and conceptual uses are more frequent. 14 However, no relationship exists between instrumental use and mimetic adoption, nor does one exist between conceptual use and normative adoption. The substantiating use of evaluations is unrelated to mimetic adoption (H1.3), but it is related to the coercive and normative adoption modes. In line with our model, the symbolic use is more frequent when evaluation is introduced due to coercion (H1.4); it also appears related to mimetic adoption.

Regarding the influence of legitimacy, our results are generally consistent with our hypothesis. In the advanced phase (H3), the evaluation use type is influenced by the legitimacy source more often (four significant relationships versus two in the initial phase) and to a greater extent (as higher coefficient values indicate). However, the specific relationships we identified are somewhat puzzling. Although the positive impact of pragmatic legitimacy on instrumental use is fully in line with our expectations (H3.1), the almost equal impact of regulatory legitimacy clearly contradicts them. We have also not observed the expected impact of regulatory legitimacy on symbolic use (H3.4). The advanced-phase model with symbolic use as the dependent variable is the only one with a p-value above 0.05, meaning that no relationship between the dependent and independent variables was identified. The relationship between ethical legitimacy and conceptual use is understandable, but no impact of managerial legitimacy was observed (H3.2). Managerial legitimacy is the only legitimacy source unrelated to any type of use in organisations with advanced evaluation practices. Finally, although the substantiating use appeared more frequent in organisations seeking technical legitimacy (H3.3), as expected, no relationship between it and ethical legitimacy was found.

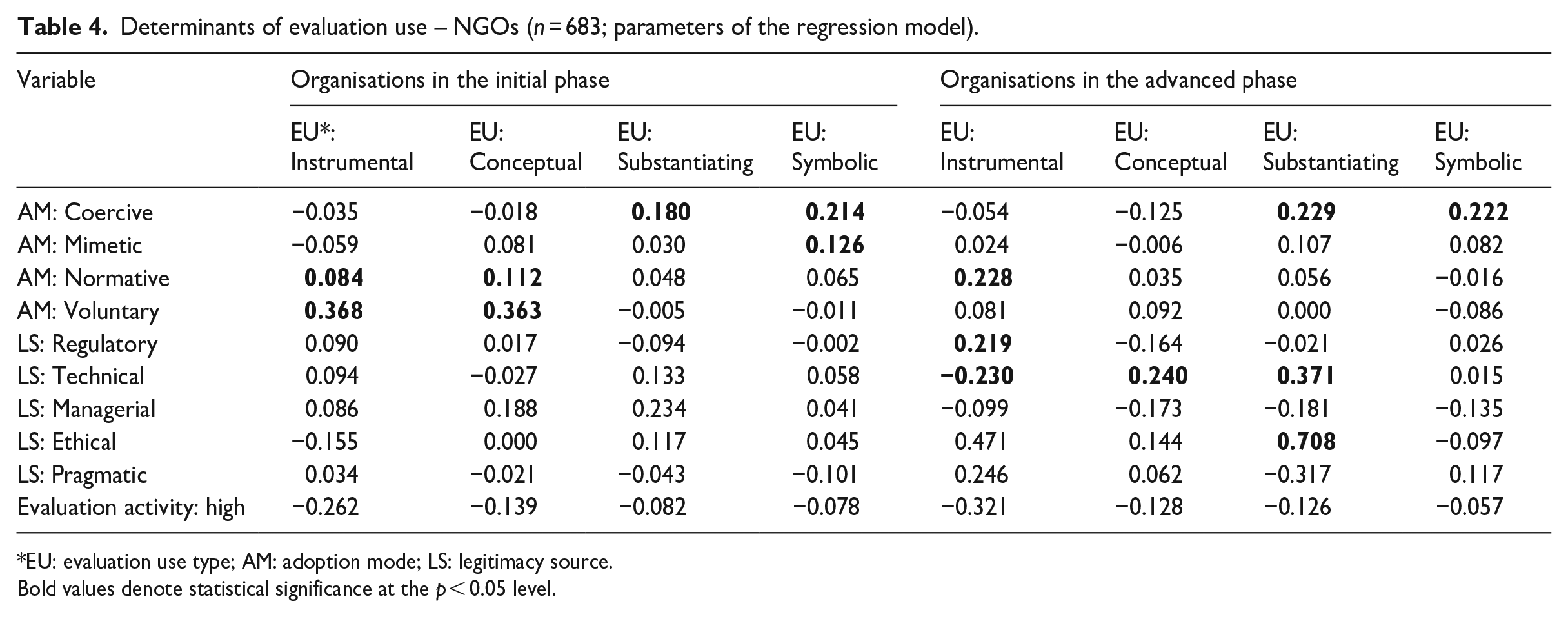

The positive influence of regulatory and technical forms of legitimacy on instrumental use in the initial phase is contrary to our expectations. Interestingly, this observation holds for the regression model fitted for all organisations (Table 3) but not for the model fitted for NGOs only (Table 4). In models fitted on NGO data, there are no significant relationships between legitimacy dimensions and evaluation use types in the initial phase, while coefficient values for the significant relationships between adoption modes and use types are relatively higher, which signifies a better fit.

Table 4. Determinants of evaluation use – NGOs (n = 683; parameters of the regression model).

EU: evaluation use type; AM: adoption mode; LS: legitimacy source.

Bold values denote statistical significance at the p < 0.05 level.

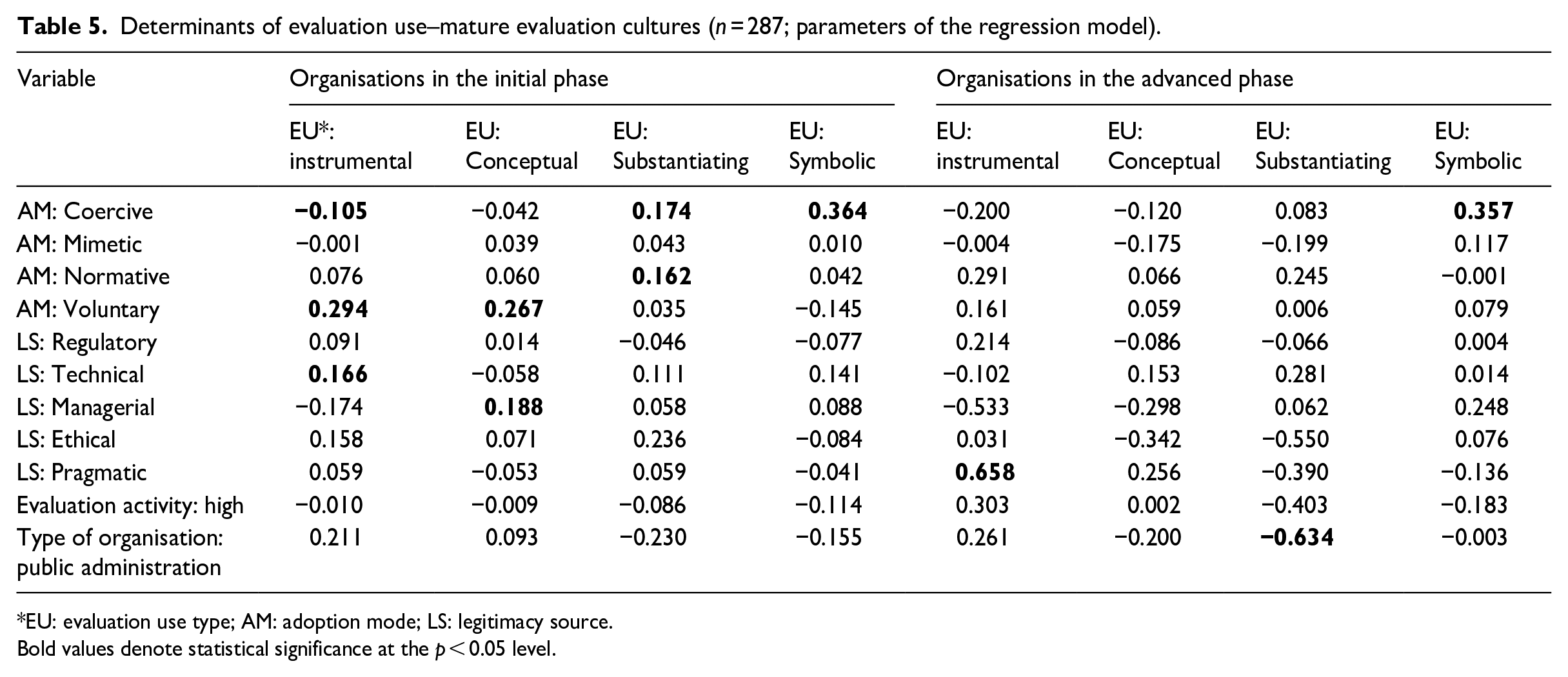

In addition, the models fitted on data restricted to countries with higher levels of evaluation maturity – Denmark and the Netherlands – also reveal interesting findings (Table 5). Here, the frequency of instrumental use is related to voluntary adoption in the initial phase and pragmatic legitimacy in the advanced phase, offering results that most closely align with our expectations. Possibly due to the limited number of observations, no significant relationships between legitimacy and other types of use were found in these models.

Table 5. Determinants of evaluation use – mature evaluation cultures (n = 287; parameters of the regression model).

EU: evaluation use type; AM: adoption mode; LS: legitimacy source.

Bold values denote statistical significance at the p < 0.05 level.

In addition, for all three sets of models (all organisations, NGOs, high maturity countries) the coefficient values of significant relationships are relatively low, with most cases below 0.3 and two exceptions below 0.5. Since the variables for adoption modes, legitimacy dimensions, and evaluation use types use a similar 5-point scale, we find that the impact of adoption modes and legitimacy dimensions on the types of evaluation use tends to be low.
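To make the scale argument concrete, the short sketch below computes the largest change in a predicted use score that a given coefficient could produce when the predictor moves across the whole 5-point scale. It assumes an unstandardised linear coefficient and scales running from 1 to 5; the function name and the example values are illustrative and are not taken from the tables.

```python
def max_predicted_shift(coefficient, scale_min=1, scale_max=5):
    """Largest change in the predicted outcome when the predictor moves
    from one end of its scale to the other (linear model assumed)."""
    return coefficient * (scale_max - scale_min)


# Illustrative coefficients at the two bounds mentioned in the text.
for b in (0.3, 0.5):
    shift = max_predicted_shift(b)
    print(f"coefficient {b}: at most {shift:.1f} points on a 1-5 outcome scale")
```

Under these assumptions, a coefficient of 0.3 corresponds to a shift of at most 1.2 points on the outcome scale, even when the predictor moves from its lowest to its highest value.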

Discussion and conclusion

Summary of the findings

This article aimed to propose and test a framework of organisational factors of evaluation use that would address the shortcomings of the previous models – especially their inability to explain empirical observations of organisations that operate under seemingly stable external pressure even as their use of evaluation evolves (Kupiec et al., 2023). The main distinguishing features of this framework are that it recognises phases in the development of evaluation practice and assumes that each phase is characterised by different use factors: in the initial phase, adoption modes are these factors, whereas those in the advanced phase are legitimacy dimensions.

The framework was tested on a sample of 1123 public organisations and NGOs from five EU countries with differing evaluation traditions: Czechia, Denmark, Italy, the Netherlands, and Poland. Based on the findings presented in the previous section, several observations can be made regarding the relevance of our framework and the organisational factors influencing evaluation use more broadly.

As suggested by Højlund (2014), the responses to our survey indicate that the motivation driving an organisation to introduce an evaluation practice (its ‘adoption mode’) influences the predominant type of evaluation use. Organisations that adopt evaluation practices voluntarily tend to employ them more frequently in instrumental or conceptual ways, whereas coercive adoption more often results in symbolic or substantiating uses. However, consistent with the predictions of Kupiec et al. (2023), the influence of adoption mode appears to be limited to the initial years following the introduction of evaluation practices – the ‘initial phase’.

In this study, we also tested the potential of dimensions of organisational legitimacy as determinants of evaluation use in the ‘advanced phase’ of evaluation practice development. Our findings regarding this aspect are mixed. Significant relationships between legitimacy dimensions and evaluation use types were identified; most concerned advanced-phase organisations, which is in line with our expectations. Some specific relationships are also consistent with our hypotheses, yet others clearly contradict them. These contrary findings include the positive impact of regulatory legitimacy on instrumental use in both the initial and advanced phases of evaluation practice development.

Interpretation of the findings

Observations concerning the impact of legitimacy on evaluation use give rise to at least four possible explanations. First, while the dimensions of legitimacy may indeed be a useful determinant of evaluation use, the specific typology adopted in this study may not have been adequate. The typology developed by Díez-de-Castro et al. (2018) appeared to be a suitable choice, as it was relatively recent, synthesised insights from several earlier contributions, and was among the most comprehensive available. It also enabled the selection of dimensions that intuitively aligned with different types of evaluation use. However, this conceptualisation is not without limitations. The definitions of individual legitimacy dimensions remain somewhat vague, often expressed as broad and general descriptions. As a result, it proved challenging to operationalise the concepts and translate them into Likert-type-scale items that respondents could readily understand and distinguish from each other. A promising direction for future research would be to test or develop alternative typologies of legitimacy dimensions with clearer definitions, more precise boundaries, and greater relevance to the study of evaluation use.

One possible direction for future research would be to simplify the typology, perhaps by testing one of the classical frameworks, such as the regulative, normative and cultural-cognitive dimensions proposed by Scott (1995). This could allow for the removal of the most problematic element in our framework – technical legitimacy – which is difficult to distinguish from managerial legitimacy and challenging to operationalise. This is further supported by the fact that it was by far the least frequently selected by respondents. On the other hand, Scott’s typology does not include any dimension of legitimacy that would clearly be expected to increase the frequency of instrumental or conceptual use. For this reason, the alternative explanations that follow appear more plausible.

Second, it is possible that legitimacy is a significant factor, but the dimensions of legitimacy may not be the most appropriate lens through which to examine its relationship with the type of evaluation use. Other promising characteristics that could be explored in future research include legitimacy states – such as accepted, proper, debated and illegitimate (Deephouse et al., 2017) – or the challenges of legitimacy management: gaining, maintaining and repairing (Suchman, 1995). Their advantage lies in the fact that, unlike legitimacy dimensions, it is usually possible to identify a single state or challenge that is most relevant to the organisation at a given moment. In addition, states and challenges align more closely with the understanding of legitimacy as a dynamic and continuously evolving process (Drori and Honig, 2013; Haack et al., 2020), which was a key reason for selecting legitimacy as a promising element through which to introduce a dynamic dimension into our framework. Some potential relationships appear intuitive: organisations seeking to repair their legitimacy, or whose legitimacy is contested, may be more likely to engage in substantiating use of evaluation. Conversely, those whose legitimacy is proper, or maintained, may be more inclined towards symbolic use. However, the connections between other types of evaluation use and legitimacy states or management challenges are not always straightforward, which discouraged us from incorporating these features into our framework.

Third, while a significant and universal organisational factor influencing evaluation use may well exist in the advanced phase, it is not necessarily legitimacy. This is because, in most cases, organisations are taken for granted and their legitimacy is not actively assessed by stakeholders. As such, legitimacy cannot meaningfully function as a predictor of evaluation use.

An additional observation from our study that sheds further light on this issue is that the type of legitimacy appeared to have greater relevance for evaluation use in NGOs than in public sector organisations. It is reasonable to assume that legitimacy may influence evaluation use only when organisations regard it as important. Scholars argue that legitimacy matters primarily because it affects organisational performance and survival (Pollock and Rindova, 2003), and that stakeholders are more likely to engage with organisations they perceive as legitimate (Deephouse et al., 2017). It is possible, however, that not all organisations – particularly in the public sector – experience frequent and sufficient concern for their own survival for legitimacy to significantly shape their use of evaluation. In light of this observation, our prior expectation that legitimacy might serve as a general determinant of evaluation use across diverse types of organisations was not confirmed. This suggests that separate frameworks are needed for NGOs and public sector organisations to more accurately capture the dynamics at play, as legitimacy appears to be a promising factor of evaluation use only within the NGO context.

Fourth, we cannot rule out the possibility that organisational context does not exert a significant influence on evaluation use in the advanced phase. In fact, its influence appears limited even in the initial phase. In the literature, numerous factors influencing evaluation use have been identified over time. These can be categorised into characteristics of evaluation implementation and policy settings (Cousins and Leithwood, 1986) or into aspects related to users, evaluators, the evaluation process and the evaluation product (Alkin and King, 2017). Højlund (2014) argued that such micro-level factors are shaped by the underlying organisational context. However, our study provides empirical evidence that contradicts this claim. While relationships between organisational factors and evaluation use types were identified, our findings indicate that organisational determinants, as presented in this article, are insufficient to explain why organisations use evaluation in specific ways in particular situations. The perceived impact of adoption modes and legitimacy dimensions on evaluation use types is generally low (as evidenced by low coefficient values), and our regression models account for only a portion of the variation in our dependent variables, especially in the advanced phase. This suggests that additional factors, possibly the micro-level factors – to use Højlund’s nomenclature – related to specific evaluation studies, their users and their contractors, must complement the organisational perspective as they appear to shape evaluation use in ways that cannot be fully explained by an organisation’s role or external pressures. Related considerations can be found in the field of expert knowledge use, where Rimkutė (2015) suggested that formal and informal external pressure should be combined with an organisation’s capacity to produce scientific outputs. Similarly, Schrefler (2010) proposed that external explanatory factors should be complemented by an agency’s capacity, considered as a control variable, which may influence the use of knowledge.

Limitations of the study

In addition to the above considerations, it is important to highlight several limitations of the study which may have influenced the results and should be taken into account when interpreting them. First, although empirical studies on types of evaluation use and their determinants based on survey questionnaires are well established in the evaluation literature and frequently employed (e.g. Altschuld et al., 1993; Carman, 2005; Eckerd and Moulton, 2011), they are subject to certain limitations. One such limitation is the lack of control over who completes the questionnaire. While we explicitly requested that the questionnaire be completed by an individual in a managerial position – department director or organisation manager with knowledge of the use of evaluation results within their organisation – it was ultimately the respondents themselves who determined whether they were the appropriate person, which may result in some responses being of limited reliability.

As in all self-administered surveys, another potential issue is the understanding of key concepts, including, in our case, evaluation. In the questionnaire, we provided a relatively broad definition of evaluation (see Research Design section). Still, it is possible that some respondents who declared that their organisation conducts evaluation may have meant something beyond the scope of our definition.

The variables in our regression models – types of evaluation use, adoption modes and legitimacy dimensions – were measured using Likert-type scales, each consisting of several 5-point Likert-type items, which allowed us to account for the diverse contexts in which our respondents’ organisations may have operated. This approach follows the example of earlier studies and does not raise any concerns on our part.
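As an illustration of how several 5-point items can be combined into one scale value, the sketch below averages the item responses of a single respondent. Averaging is an assumption made here for illustration (summing is an equally common choice), and the function name and example responses are hypothetical rather than taken from the questionnaire.

```python
from statistics import mean

VALID_RESPONSES = {1, 2, 3, 4, 5}  # points of a 5-point Likert-type item


def scale_score(item_responses):
    """Combine one respondent's answers to several 5-point items into a
    single scale score by averaging (an assumed aggregation rule)."""
    if not item_responses:
        raise ValueError("at least one item response is required")
    if any(r not in VALID_RESPONSES for r in item_responses):
        raise ValueError("responses must be whole numbers from 1 to 5")
    return mean(item_responses)


# Hypothetical answers to three items measuring one construct.
print(scale_score([4, 5, 3]))
```

Because the score stays on the original 1 to 5 range, scales built from different numbers of items remain directly comparable.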

What could be considered problematic is another key element of our framework – the transition from the initial phase into the advanced phase – which is difficult to capture and operationalise. We defined a threshold of five years of evaluation practice to separate the two phases in this study. This was necessary to create four pairs of regression models (one for each type of use) and to compare them. However, in reality, this is most likely a gradual process consisting of more phases, not a one-off change at one point in time. Moreover, this process likely occurs at a different pace in different organisations that operate in different contexts. The pace may depend on, for example, the nature and structure of the tasks the organisation oversees or the intervention or funding cycles in which it operates (Devine, 2002). This necessary simplification in the analysis meant that some organisations with an advanced evaluation practice were included in the initial phase and vice versa.
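The phase split and the resulting pairs of models can be sketched as follows. This is a deliberately simplified illustration: it uses a single predictor and a closed-form least-squares slope, whereas the reported models include several adoption modes and legitimacy sources. Treating organisations with exactly five years of practice as advanced is an assumption, and the field names and toy records are hypothetical.

```python
PHASE_THRESHOLD_YEARS = 5  # threshold separating the two phases in the study
USE_TYPES = ("instrumental", "conceptual", "substantiating", "symbolic")


def phase(years_of_practice):
    # Assumption: exactly five years of practice counts as advanced.
    return "initial" if years_of_practice < PHASE_THRESHOLD_YEARS else "advanced"


def ols_slope(x, y):
    """Least-squares slope of y on a single predictor x."""
    x_mean = sum(x) / len(x)
    y_mean = sum(y) / len(y)
    sxy = sum((a - x_mean) * (b - y_mean) for a, b in zip(x, y))
    sxx = sum((a - x_mean) ** 2 for a in x)
    return sxy / sxx


def fit_model_pairs(records, predictor):
    """Return one slope per (use type, phase): four pairs of models."""
    slopes = {}
    for use_type in USE_TYPES:
        for ph in ("initial", "advanced"):
            rows = [r for r in records if phase(r["years"]) == ph]
            slopes[(use_type, ph)] = ols_slope(
                [r[predictor] for r in rows], [r[use_type] for r in rows]
            )
    return slopes


def toy_record(years, voluntary, instrumental):
    # Hypothetical organisation; the other use types are held constant.
    return {"years": years, "voluntary": voluntary, "instrumental": instrumental,
            "conceptual": 3.0, "substantiating": 3.0, "symbolic": 3.0}


records = [toy_record(y, v, 2.0 + 0.5 * v) for y, v in [(1, 1), (2, 2), (3, 3), (4, 4)]]
records += [toy_record(y, v, 3.0) for y, v in [(5, 1), (8, 2), (10, 3), (12, 4)]]

slopes = fit_model_pairs(records, "voluntary")
print(slopes[("instrumental", "initial")], slopes[("instrumental", "advanced")])
```

In the toy data the predictor matters only in the initial phase, mirroring the pattern the framework predicts; the numbers themselves carry no empirical meaning. Moving the threshold constant reassigns organisations between phases, which is one way to probe how sensitive such comparisons are to the chosen cut-off.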

In relation to this, it is worth considering the slightly different pattern observed – one that aligns more closely with our hypotheses regarding the impact of legitimacy dimensions – among organisations in countries with higher levels of evaluation practice maturity. In our conceptual framework, we assume that in the advanced phase of evaluation practice, an organisation may, but does not necessarily, build evaluation capacity and reflect on the potential role of evaluation. As observed by Kupiec et al. (2020), the perception of evaluation as a compliance requirement may be particularly common in countries with a less developed evaluation culture in our sample. This could result in organisations in such contexts either entering the advanced phase at a slower pace or – when in that phase – engaging less frequently in reflection on the potential roles of evaluation. Both of these factors may limit the impact of legitimacy on the type of evaluation use.

An additional aspect of the phase of evaluation practice variable is that it reflects the potential for organisations to change how they use evaluation over time, even in the absence of external changes in their environment or role. One of the central points of our argument is that, as organisations move from the initial to the advanced phase, the factors influencing evaluation use may also shift, leading to changes in how evaluation is used. In this study, however, we did not directly observe such transitions; our analysis is based on comparisons between organisations currently situated in different phases. As a result, we cannot determine whether, or in what ways, organisations in the advanced phase have altered their type of evaluation use.

To overcome this constraint in the current research design, future studies could employ panel data and follow-up surveys to enable more precise tracking of this progression, thereby allowing researchers to identify both the timing and direction of change. However, fully capturing the process by which a particular organisation moves from an initial to an advanced phase of evaluation practice would require the complementary use of qualitative approaches, particularly case-based methods. Of particular relevance may be narrative process tracing – a within-case analytical technique used to identify causal mechanisms that lead to specific outcomes (George and Bennett, 2005) – which has already been applied in evaluation research, for instance in studies of evaluation capacity building (Rohanna, 2022).

Case-based methods could also help to address another issue, referred to by some authors as ‘evaluation capture’ (House, 2013; Picciotto, 2017), which may have influenced our findings and should be borne in mind when interpreting them. The fact that evaluation is, in most cases, not independent of its sponsors entails the risk of it being appropriated by vested interests. This risk is heightened by the fact that a large proportion of our respondents are civil servants operating in a context where the most prevalent model of evaluation governance conflates the roles of decision-maker and evaluation commissioner (Picciotto, 2016). Applied directly to the context of our results, what we have framed as substantiating use may in some instances reflect an advocacy of the evaluation users’ perspectives, involving even the strategic misuse of evaluation to overstate benefits and downplay negative effects. In addition, as we were unable to uncover respondents’ underlying agendas using questionnaire data alone, certain instances of evaluation use that we classified as instrumental or conceptual may, in fact, more closely resemble substantiating or symbolic use, and be another manifestation of evaluation capture – for example, through the deliberate choice of evaluation subject. This may generate discrepancies between the declared and actual frequency of instrumental and conceptual use. In addition to the four explanations offered above concerning legitimacy, this could also help to explain why the observed relationships between the dimensions of legitimacy and types of evaluation use did not entirely align with our expectations. However, verifying these suppositions would require uncovering the actual organisational agendas related to evaluation through in-depth case studies.

Another assumption in this study, which may have influenced the results, is the distinction between large and small organisations, with the unit of analysis in the former being the department. This approach has been previously tested in a study based on in-depth individual interviews (Kupiec and Wrońska, 2024), which confirmed that evaluation practices, including the understanding of motivations and the use of evaluation, vary at the departmental level. Although justified, this approach may have led, from the perspective of Thompson’s (1967) three levels of responsibility, to an overrepresentation of instrumental and conceptual use (more likely at the departmental level, which corresponds to the managerial level in Thompson’s framework) and an underestimation of persuasive use (more likely to be observed at the organisational leadership level – the institutional level).

Two further limitations can be identified, which are not specific to surveys but apply to any study based on respondent accounts. First, our work is subject to social desirability bias (Ruggeri, 2018), as are all studies of evaluation use based on interviews or surveys. It is reasonable to assume that, in certain situations, respondents may have been inclined to select the response they perceived as ‘appropriate’ rather than the one that accurately reflected their situation. In our study, this temptation might have led to the overestimation of the frequency of voluntary adoption and instrumental use. Although it is difficult to assess the scale of this effect, we tried to reduce it through the design of our questionnaire (i.e. using the Likert-type scale with several indirect statements for each evaluation use type, adoption mode and legitimacy source).

Second, while we limited the question about the use of evaluation in organisations to the preceding three years, responses may have still been affected by recency bias. The recollections of recent cases of evaluation use may have influenced the perception of the dominant use type. In addition, it has been observed in other studies that respondents are most likely to remember examples of intended use at the end of an evaluation cycle (Kupiec and Wrońska, 2024).

Finally, it should be noted that in addition to our study’s theoretical implications, our findings and conclusions may also inform the ongoing discussion about the future of evaluation practice. The recognition that adoption mode shapes evaluation practice provides a useful starting point for designing and refining approaches to strengthen evaluation practice and build evaluation capacity within organisations operating in diverse contexts. A better understanding of organisational factors may help identify those organisations most at risk of symbolic use and, consequently, support the development of measures to counteract this threat. Furthermore, insights into how the motivation to conduct evaluations influences their use are essential for designing policies aimed at expanding evaluation practice into new sectors, organisational types, and contexts – particularly in countries with underdeveloped evaluation cultures.

Supplemental Material

Supplemental material, sj-pdf-1-evi-10.1177_13563890251346778 for Organisational factors in evaluation use: results from a survey in five EU countries by Tomasz Kupiec, Dorota Celinska-Janowicz, Niklas Andreas Andersen, Rasmus Lind Ravn, Stijn van Voorst, Eva Bláhová, Federico Cuomo, Erica Melloni, Oto Potluka and Giancarlo Vecchi in Evaluation

Footnotes

Acknowledgements

Weronika Stefaniak and Szymon Szaleniec, who were at the time studying Regional and Local Development Design (University of Warsaw, EUROREG), helped the authors gather the address database for the survey.

Data availability

The data that support the findings of this study are available from the corresponding author (T.K.) upon reasonable request.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science Centre, Poland, grant number 2019/33/B/HS5/01336.

Ethical approval and informed consent statements

This study was approved by the Research Ethics Committee ‘Komisja ds. Etyki Badań Centrum Europejskich Studiów Regionalnych i Lokalnych EUROREG’ (approval no KEB-EUROREG-22-2) on 15 November 2022.
