Abstract

Context is a well-discussed but still elusive concept in evaluation. The authors of this article acknowledge there is considerable literature on context in an evaluation setting, with multiple frameworks, theories and methodologies guided by context. However, the authors believe there is little practical and pragmatic guidance for evaluators regarding how to locate and determine what is contextually relevant. To address this gap, the authors have mapped tools to Rog’s ‘bringing the background to the foreground’ framework. A further contribution to the literature is the addition of the ‘action context’ to Rog’s framework. This describes the period following evaluation when there can be problem redefinition and intervention redesign. This article provides guidance to evaluators on how to locate context through the application of well-established tools, primarily borrowed from business disciplines.

Keywords

Introduction

Evaluation textbooks and journals continue to be filled with examples of novel tools and methodologies with which to conduct evaluations, often accompanied by the theoretical justification behind them. However, in the experience of the authors, the evaluator’s toolkit needs greater breadth than evaluation tools alone. The authors firmly believe that each evaluation is inherently different and should be driven by the context in which the evaluand resides, as well as the context of the evaluation itself. In a traditional approach to evaluation design, the evaluator or evaluation team would identify the problem and consider the intervention (usually delivered in the form of a programme) to inform the design of the evaluation. However, in adopting this more linear or reductionist approach, the evaluator may overlook other contextual aspects that directly or indirectly influence the outcomes of the evaluation itself.

The authors are internal evaluators employed within an Australian regional university. In Australia, most universities are public entities and operate as non-profit organisations. This means that funding is always tight, and internal evaluators must be creative and resourceful. Moreover, because the evaluation activities carried out in the university context relate to ongoing projects, there is a need to understand evaluation as forming part of a cycle. Ideally, this cycle should result in continuous improvement, leading the university to become a learning organisation (Alderman, 2014, 2022). The tools described in this article suit these constraints. Depending on the tool, application can require no more than physical (or virtual) space, a few hours of time for data collection and the genuine engagement of participants. Other tools do require a more significant time investment but are still remarkably efficient relative to alternatives. Therefore, this article will especially appeal to internal or external evaluators who work alongside non-profit organisations, guiding them in their evaluation practice.

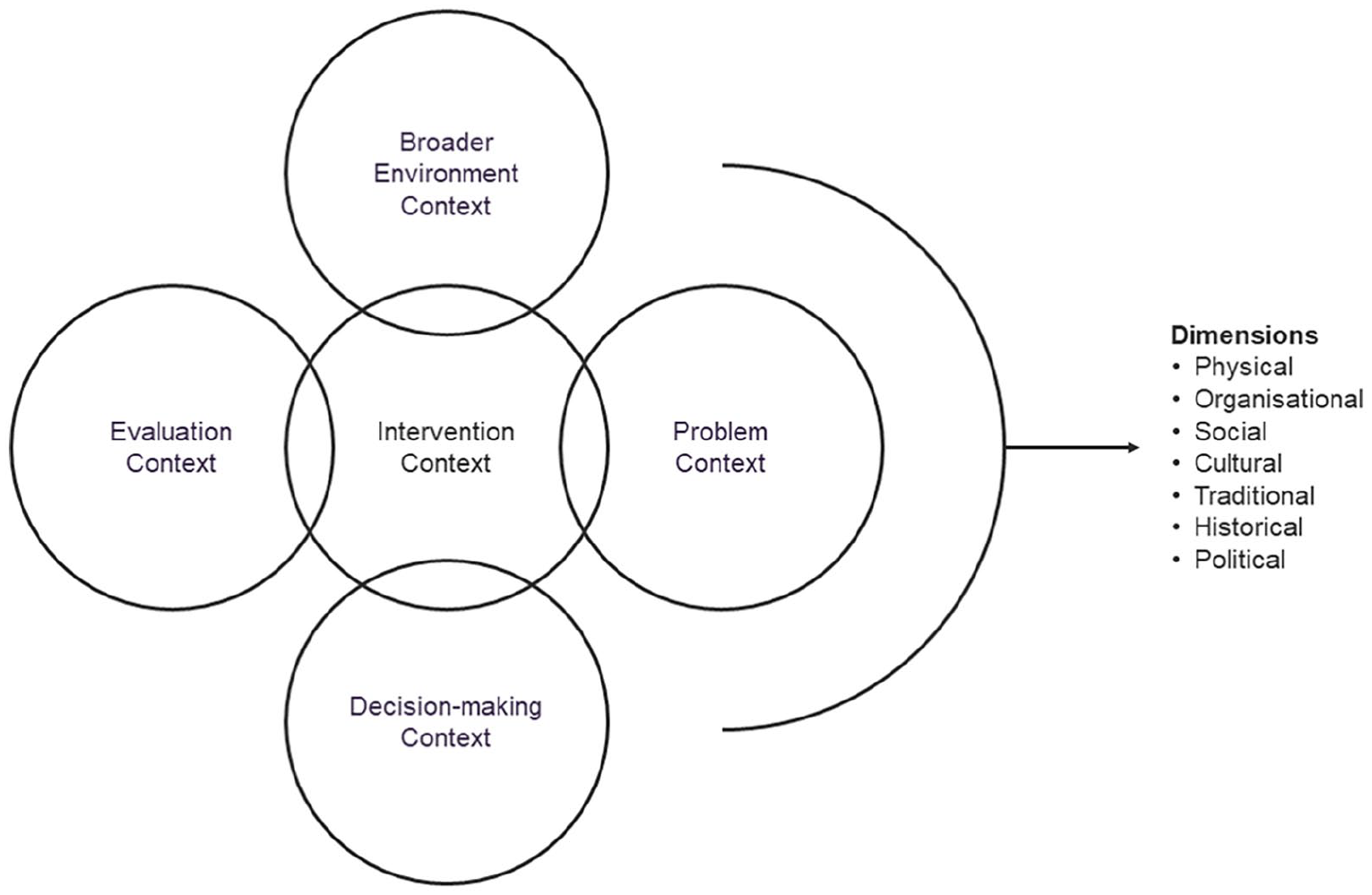

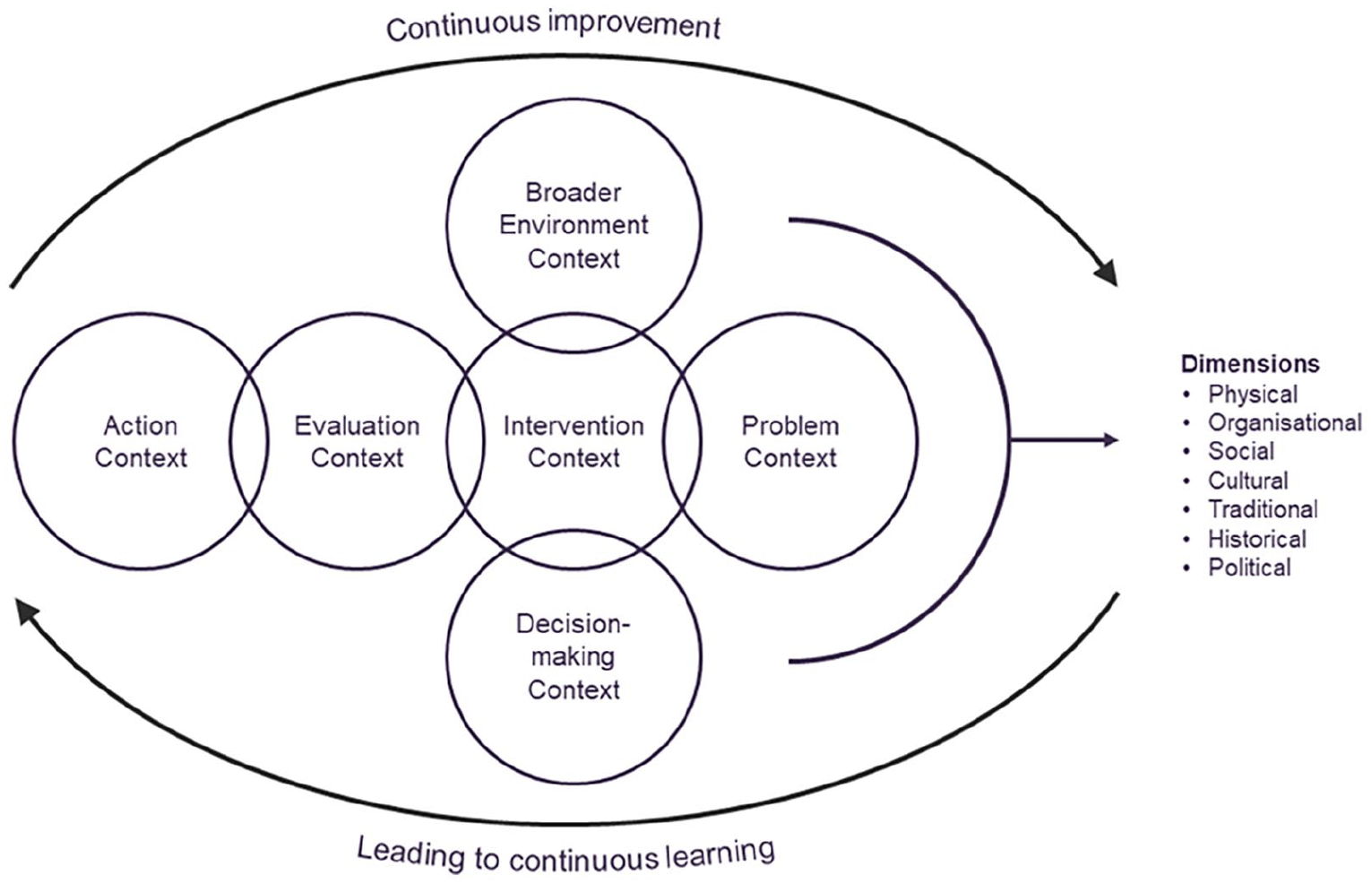

Guidance on the typology of context to be considered is provided by Rog (2012), who explores the additional and often forgotten contexts of evaluation. These are the ‘problem context’, ‘intervention context’, ‘decision-making context’ and ‘broader environment context’ (see Figure 1). These should naturally lead to and ideally inform the evaluation itself, and understanding these contexts is important, as it supports evaluation approaches and findings. However, the authors of this article have also found through their practice that they have been thrust into these contexts in their original form. For example, the authors have been asked to assist in problem identification and articulation and in intervention design and redesign, to help key decision makers make evidence-informed decisions, and to help them understand the broader environment in which an evaluation takes place. This article introduces evaluators to tools that may be new to them or may already sit in their toolboxes, and applies those tools to these different contexts, which the authors believe more evaluators are likely to encounter every day.

Figure 1. Rog’s (2012) bringing context into the foreground.

Bringing context to the foreground in evaluation design

Context is often used as a buzzword within evaluation and social science research more broadly. Its use is hardly revolutionary, and many researchers would argue that it is essential to produce quality research that understands its impact. Schmidt and Graversen (2020) suggest context has often been in a black hole when it comes to evaluation. However, a deep understanding of context allows evaluators to point out factors important in intervention design and the consequential evaluation to themselves, each other and their commissioners.

Context is often difficult for evaluators to establish, for a couple of reasons. First, while most of us have some sense of what context is, ironically, it is nearly impossible to define. Greenhalgh and Manzano (2022) state ‘universal definitions of context can be considered oxymoronic self-contradictions that aim to generalise unique phenomena, which could only ever be context-dependent since they are inherently unique’. Ideally, one would begin an evaluation with prior or background knowledge of the particular social phenomenon or discipline being explored, thus already being armed with the requisite context. However, the reality is evaluation is a multidisciplinary field where individuals often work across an enormous range of societal issues and problems. One can of course often utilise others who are experts in certain fields, whether this be through consultancy or the benefits of a larger evaluation team to explicate context. However, this can be problematic as experts in a field can have a preconceived notion or become overly invested in a particular outcome, ultimately impacting the objectivity and independence one expects from quality evaluation (Rog, 2015). To address this issue in practice, a strong evaluation team would consist of members who represent different disciplines and backgrounds, as it would enhance the evaluation team’s ability to conduct multidisciplinary evaluations. However, while multidisciplinary evaluation teams go some way to addressing the issue of discipline context, there is still an advantage to adopting tools that unpack the contextual parameters specific to the evaluation at hand.

Rog’s contextual framework

Rog’s (2012) article should be considered as one of the seminal works regarding context pertaining to evaluation. It challenges evaluators to bring context to the foreground of evaluation, as Rog (2012) contends that context is ‘more of an afterthought when the work does not go as planned or findings emerge that are difficult to interpret’ (p. 26). Building primarily on the work of Greene (2005) and since supported by Taplin et al. (2014), Rog (2012: 26) argues a ‘context-first approach to evaluation is more appropriate’ than a ‘methods-first approach’. Rog (2012: 26) suggests evaluators are inclined to take a myopic view of the evaluation at hand and choose methods and tools most likely to accurately describe a causative relationship between the intervention and outcomes. While logical, it is strongly suggested that understanding the context the programme being evaluated resides in should come first and inform the choice of methods, as this may reveal variables requiring control, inclusion or exclusion.

Rog’s model incorporates five domains of context that evaluators should consider when undertaking evaluation (refer to Figure 1). The horizontal axis of the figure shows what many will understand evaluation to be at its core. It is assumed evaluators will be very familiar with the ‘evaluation context’, as this is where their day-to-day work is conducted. However, the authors of this article believe that while context has been well defined and heavily discussed in the evaluation literature, there is relatively scant discussion of the tools evaluators could use to inform their understanding of the context in which each new evaluation is situated, particularly in the other four domains (as articulated by Rog). Moreover, evaluation is nearly always applied to an intervention, initially installed with the hope of solving or improving a problem. Therefore, the ‘evaluation context’, ‘intervention context’ and ‘problem context’ should come as relatively little surprise to most evaluators. The addition of the vertical axis, which includes the ‘broader environment context’ and ‘decision-making context’, may not immediately resonate with evaluators but should quickly become logical with further explanation.

Contemporary understandings of context in evaluation

At present, context, when spoken about in evaluation circles, often gets immediately conflated with realist evaluation. Realist evaluation emerged from the broader epistemological branch of critical realism, which challenges traditional empiricism. Essentially, realists believe non-tangibles are observable phenomena which can help explain and determine how and if programmes are effective. This might include things like culture, history, class and economics (Mercer and Lacey, 2021). Pawson and Tilley (1997) developed the context-mechanism-outcome approach, which is now synonymous with realist evaluation. The three act as a chain; as context changes, so can the mechanism. That mechanism results in an outcome. A contextual change does not automatically result in a different mechanism and/or outcome, but a realist approach would explore the possibility. While the tools discussed throughout this article and their application to Rog’s (2012) framework could be useful for realist evaluation, the authors of this article wish to address context at a broader evaluation level. Therefore, while some of the discussion following may be pertinent to realist evaluation, specific consideration is out of scope for the rest of this article.

Discussion about the use of context as a key component for conducting evaluation predates Rog (2012). Stufflebeam (1968) brought greater attention to the goals of management and commissioners when evaluation is conducted, essentially arguing that while commissioners have no role in influencing the outcomes of an evaluation, understanding their intentions and goals should play a role in method selection. Patton (1976) and Owen and Lambert (1998) also alluded to a role for managers and commissioners. Fitzpatrick (2012a) suggests these were early iterations of Rog’s ‘decision-making context’.

Horne (2017) speaks to the importance of understanding the context of the organisation running the intervention, mentioning specific aspects such as the organisation’s age, size, history or experience with similar programmes. While not specifically called out, this appears to echo Rog’s ‘intervention context’ and perhaps some overlap with the ‘broader environment context’.

Newton-Levinson et al. (2020) provide perhaps the simplest and most elegant explanation, stating context needs to be included ‘in making decisions about the overall approach, in evaluation design, and most recently as a key component in standard for reporting evaluation results’ (p. 1). Making decisions about the overall approach essentially encapsulates all of Rog’s contexts minus the evaluation context. The focus on improved and more consistent reporting standards for complex evaluations with significant contextual parameters appears more closely connected to standards than to context (Alderman, 2018; Hutchinson, 2017; Montrosse-Moorhead and Griffith, 2017; Newton-Levinson et al., 2020). However, the context in which evaluation reports are constructed, and how findings are communicated and advocated for, warrants further research and consideration.

One obvious extension of Rog (2012) comes from Coldwell and Moore (2024), who have created the ‘context-informed explanatory framework’. This framework, building on Coldwell’s (2019) previous work, provides a more chronological approach to context, providing both ‘contextual phases’ (backdrop, design, operation and interpretation) and ‘contextual factors’ which are subsets of the phases. The factors include Rog’s five contexts and add ‘causal theory’, ‘teaching’ and ‘consequential’. Causal theory encompasses the test of theory of change, which as Coldwell and Moore (2024) explain is often implied in evaluations. However, its application to interventions which do not have formal theories of change or where inductive approaches to evaluation would encourage the theory of change to be set aside for the purpose of evaluation is limited. ‘Teaching’ appears to be related to the specific case study discussed in the article and is presumably interchangeable from evaluation to evaluation. Interpretation has some similarities to our own action context, but this distinction will be further explained later in this article.

However, while this body of literature has helped evaluators understand the key role context plays in evaluation, and some authors have called for more thought and research to further that understanding, there is little guidance on how to determine the unique contexts of each evaluation. Conner et al. (2012) have elaborated on Rog’s framework, in the same special issue, by providing a series of ‘guiding questions’ to help undertake a ‘context assessment’. These questions are too plentiful to list here, but despite all the scholarship that has followed the publication of Rog’s framework, they remain the only guidance on how to locate and determine what is contextually relevant with respect to Rog’s framework. The next section of this article will provide a practical example of how the second author has used Rog’s framework in practice before outlining the tools, borrowed from business disciplines, which are used to locate the unique contextual components of Rog’s contexts.

Rog’s framework in Australian Government practice

While the significant work on context by Rog’s contemporaries outlined above should not be underestimated, the authors find Rog’s (2012) framework a simple and elegant illustration which is easily translatable. The second author has personally adopted Rog’s framework across a variety of situations. The second author discovered Rog’s framework in 2013 while completing a book review for a New Directions for Evaluation special issue – Context: A Framework for its Influence on Evaluation Practice. Within this issue was a chapter by Rog (2012) outlining her framework. From this initial book review, the second author adopted Rog’s framework for a doctoral thesis and then as the basis of a capacity-building workshop. These are outlined below. The second author found Rog’s framework to be critical in establishing the multiple contexts affecting an evaluation of an Australian Government reform of higher education. Within this research study, there were three national learning and teaching initiatives in a higher education reform package. These initiatives, which were evaluated and found to be successful, were all closed at the end of their first cycle. Through unpacking the different contexts in Rog’s framework, the second author was able to make sense of this somewhat contradictory evidence. In the Australian political decision-making context in higher education, changes in national leadership have triggered reviews of the higher education sector over the last three decades (Department of Education, Science and Training, 2003). Therefore, the closure of the national initiatives was driven by political decision making that had nothing to do with the success or quality of the individual initiatives. The new leadership had simply moved the focus of the Australian higher education sector in a different direction.

In 2017, the second author was appointed as the inaugural Chief Evaluator [of an Australian Government Department]. At the same time, the Department was tasked with establishing a Community Grants Hub to provide a shared-services arrangement to administer grant services on behalf of the government to all client agencies in support of policy outcomes. The Chief Evaluator was tasked with building evaluation capacity across all client agencies (e.g. Defence, Aged Care, Disabilities and Social Services) and to secure evaluation funding through the application of a standardised evaluation costing model. A second critical task was to provide capacity building for all teams across all client agencies who were to commission external independent evaluations.

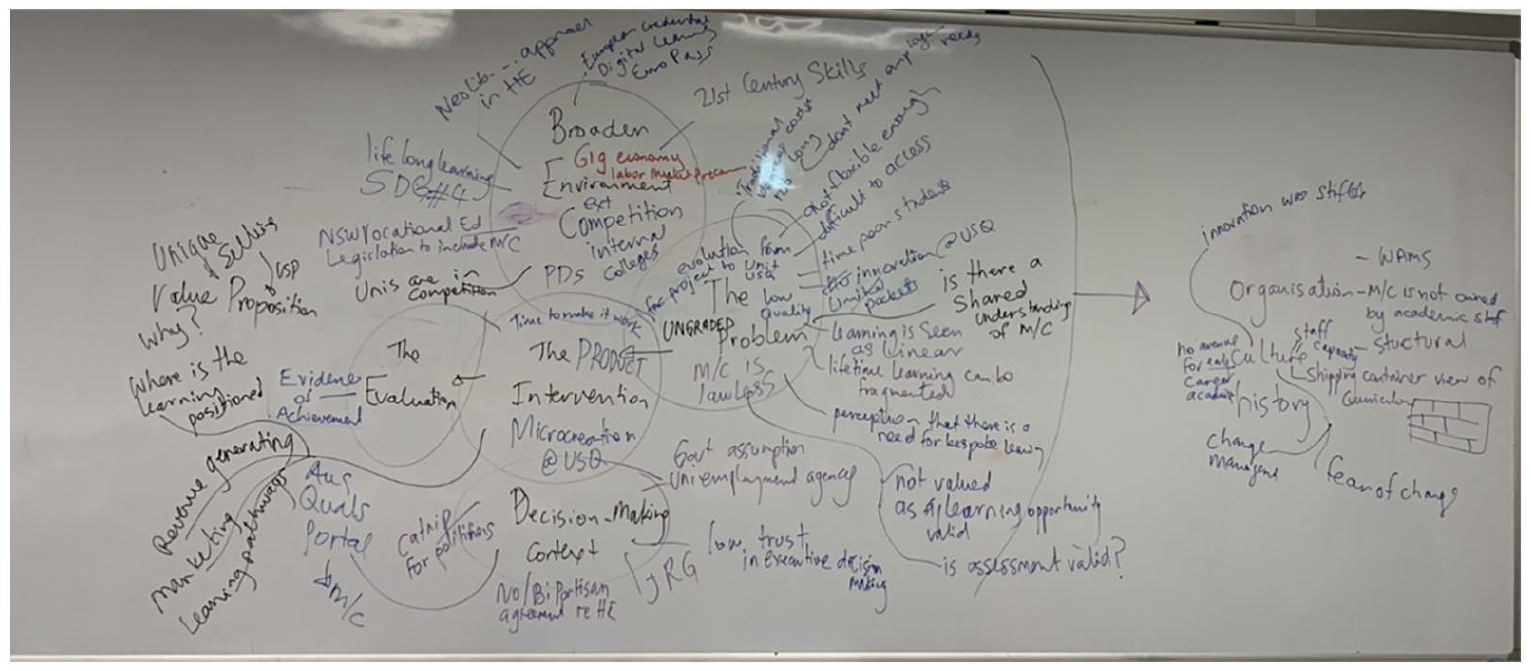

When working across different client agencies and programme teams, it quickly became apparent that the current employment culture within government moved staff across teams on a regular basis. Many teams who deliver intervention programmes to their clients may not be cognisant of the original design of the intervention programme nor the problem these programmes were expected to address. Therefore, the Evaluation Unit designed a suite of professional development workshops called ‘Evaluation Readiness’ to assist. Rog’s framework quickly became the most commonly used workshop tool, which was run in 2-hour sessions across hundreds of programme teams. It was a visual stimulus which allowed teams to reorient themselves to the problem, intervention (if one had taken place at the time), their decision makers and the broader environment. This practice has continued in the university context and remains a commonly used and powerful tool for capacity building and shared understanding. Figure 2 shows the typical results from a 2-hour session. While this was clearly not the intended use of Rog’s framework and in many senses a gross simplification, it makes the complexity of context approachable for a very wide audience of participants. The first and second authors have continually observed programme teams reaching a shared understanding of their programme, leading to greater clarity when commissioning external evaluations or redesigning their programmes.

Figure 2. Rog’s framework in practice – example from university microcredentials intervention.

However, there needs to be a balance between the application of Rog’s framework to a higher degree research study and the facilitation of a 2-hour workshop where a programme team unpacks all of the multiple contexts at play for an intended intervention or programme. There is also a middle ground whereby Rog’s framework can be adopted by service providers who are keen to unpack the multiple contexts at play to ensure that they are keenly aware of other policies, programmes or products that offer greater efficacy.

The next section of the article will review the following elements of Rog’s model with descriptions of the associated tools:

Intervention Context

• Tool #1 Business Case

Broader Environment Context

• Tool #2 Environmental Scan

Decision-Making Context

• Tool #3 Porter’s Five Forces

Problem Context

• Tool #4 SWOT Analysis

Finally, the authors believe there is an additional context that has been completely overlooked. There is little discussion on what happens following the completion and delivery of an evaluation. In instances where the programme is discontinued, this is very straightforward. However, many programmes will consist of multiple cycles, and how findings are incorporated into programme redesign is not well documented. As internal evaluators, the authors have applied the following tool, ‘Stop Start Continue Change’, to assist teams in refining the future cycle of programme delivery. The authors have titled this the ‘action context’.

Action Context

• Tool #5 Stop Start Continue Change

Intervention context

Intervention context tools are often overlooked, not necessarily by evaluators but certainly by their commissioners. For example, over the passage of time and with changes in personnel, there may be a loss of corporate knowledge as to the original design of the intervention. In the authors’ experience, most evaluators will agree that evaluation is easier and more powerful if it is designed at the same time as the intervention. This helps to ensure data collection for the consequential evaluation is built into the programme design. Conner et al. (2012) suggest considering the programme in relation to its natural life cycle, the structure, the beneficiaries of the programme and the characteristics of those beneficiaries. While this is a helpful starting point, it would be more practical for evaluators to have a tool to inform the intervention design or at the very least a tool to understand the context in which the intervention was designed. Through their evaluation practice, the authors have identified the ‘Business Case’ as a useful tool in this context.

Tool #1 Business Case

The origins of the business case are not clear, nor are they easy to trace. Literature referring to the history of the ‘Business Case’ tends to refer to a case study style of teaching in the business disciplines first used by Harvard Business School (Berger et al., 2012; Servant-Miklos, 2019). The business case being referred to here has no obvious origin. It is most frequently referred to in the academic literature in relation to corporate social responsibility and more recently corporate sustainability (Berniak-Wozny et al., 2023; Castilla-Polo and Guerrero-Baena, 2023). Despite the vagueness regarding its origins, the business case has become a popular tool used to justify programmes at various government levels and individual businesses (Australian Government: Department of Finance, 2021; NSW Government: Treasury Department, 2023; Victorian Government: Treasury and Finance, 2024). Interestingly, it has been applied in various health contexts in recent times, potentially demonstrating a desire for qualitative narrative to support interventions often based on quantitative assessments (Eisman et al., 2020; Weaver and Sorrells-Jones, 2007).

The contents of a business case can, do and should vary from context to context. For those unfamiliar with the business case as a tool, Maes et al. (2014: 50) provide a useful guide of potential headings: (1) description, (2) objectives, (3) requirements, (4) impact, (5) risks, (6) assumptions, (7) constraints and (8) governance. A comparison between different options is also considered important (Weaver and Sorrells-Jones, 2007). Interestingly, many of these headings are also integral pieces of evaluation’s ‘programme logic’, which is best thought of as an ‘intervention context’ tool in its own right. To be clear, this refers to the creation of the programme logic rather than its use as a benchmark for behaviour change and impact. Approved programmes that were based on a business case should naturally use the business case as a source document when designing the evaluation and programme logic. Some might even consider the business case to be a longer and wordier version of the one-page programme logic; however, that is misguided. The programme logic should be designed after the intervention has been approved. While shorter, it provides more comprehensive anticipated outcomes, linking activities to outcomes. Short- and medium-term outcomes can play an important role in understanding the steps it may take to achieve long-term outcomes. The business case is inextricably linked to long-term outcomes, as these are related to the overall impact and ultimately what makes a programme attractive for funding. Short- and medium-term outcomes relate to increases in knowledge and changes in behaviour ultimately leading to impact. These outcomes are imperative for the programme team because they are required for long-term impact; impact cannot be ascertained without knowledge or behaviour change. However, they are unlikely to be of interest to funders, who will see them as standard operational activities.

Doty (2008) explains that the Victorian Government requires all government programmes with an expenditure greater than AUD$5 million to be presented with a formal business case. In essence, these business cases set out the rationale for an ‘intervention’, presumably to solve or improve a societal problem. The business case typically includes a summary of research, potentially gathered through an environmental scan (discussed elsewhere in this article), demonstrating the merit of the intervention. Doty (2008) further notes that it is not uncommon for needs assessment and benchmarking to make their way into a comprehensive business case. Furthermore, business cases built in collaboration with evaluators could include a comprehensive rationale for how monitoring and evaluation data will be collected and funded throughout the intervention. This should make the task of determining contribution between intervention and outcome easier for the evaluator, thus delivering a more accurate evaluation that benefits the client, programme, commissioner and society at large.

The overall benefit for future evaluators is the clarity of purpose and objectives of the original intervention design leading to a more informed evaluation design.

Broader environment context

The problem and intervention contexts do not exist in isolation, as there are other parameters at play that need to be brought from the background into the foreground (Rog, 2012), and these include the broader environment context. Where a linear view of evaluation is adopted and the evaluation is designed based on the problem and intervention contexts, the outcome will be more closed or reductionist. In this instance, a question such as ‘did the intervention deliver on its intended objectives’ is narrow and only provides scope for investigation relating to the intended objectives. However, a greater emphasis on the broader environment might lead to broader questioning, such as ‘what happened during the intervention phase’ and ‘what factors outside of the intervention and objectives played a role in outcomes’, and this will allow evaluators to report more holistic findings. Interestingly, the context assessment provided by Conner et al. (2012) seems to suggest the broader environment context is closely tied to the intervention context and suggests guiding questions for the ‘broad environment around the intervention’. However, the broader environment is equally connected to the problem and decision-making contexts. For example, under the dimensions element of Rog’s framework, understanding the history and cross-cultural or cross-jurisdictional nature of the problem may demonstrate the ‘problem’ has been solved elsewhere, or in specific contexts. Designing interventions without an understanding of current and projected societal interest in, and knowledge of, the problem is wasteful and arguably negligent. The tool introduced below – environmental scanning – is ideally placed to unpack the broader environment context in which the problem is situated.

Tool #2 Environmental Scan

It is believed the term ‘environmental scan’ was first defined by Aguilar (1967), who was a professor at the Harvard Business School at the time he published his seminal text, Scanning the Business Environment. The environmental scan is primarily a qualitative process where the investigator attempts to identify trends and changes in the broader environment with the potential to impact a business or organisation or identify opportunities to gain a competitive advantage over others (Graham et al., 2008).

Aguilar (1967) posits that data located during the scanning could be themed into four broad categories using the ETPS (economic, technical, political and social) method. This slice of the environmental scan concept has retained popularity in business textbooks and business schools, and some readers may know it as the PEST analysis. More recently, this has been extended to PESTLE analysis – adding legal and environmental aspects (Rastogi and Trivedi, 2016). The authors believe this approach has been taken to shorten and simplify the environmental scanning process, which may be appropriate in some circumstances but will often risk the process becoming too myopic and short to add value. While the PESTLE themes serve as a useful starting point, investigators should be prepared to look beyond them.

The environmental scan could be confused with the traditional literature review. However, while they might share some source materials, they have vastly different objectives. The traditional academic literature review is designed to show a gap in existing literature and knowledge and how the study at hand will fill the said gap. Furthermore, it often describes the historical development of the field under investigation and may even speak to future directions for research or long-term probabilities. The environmental scan is not looking for gaps in knowledge, but rather to describe and explain the current state and provide evidence-based direction for future short-term outlooks. For these reasons, environmental scanning gives significantly greater attention to grey literature such as government reporting, news articles and industry reports.

Environmental scanning is designed to provide a holistic view of potential opportunities and threats both at the current time and in the short- and medium-term future. While there are overlaps with some of the other tools described in this article, it goes several steps further by investigating external source material to understand the complete environment and not just the internal context. This could even extend to interviews, observations and focus groups of other organisations; however, this may not be feasible or preferable in a highly competitive environment. While environmental scans bring attention to the key drivers linked to opportunities and threats, the literature also describes a desire to capture and report the ‘weak signals’. Harris and Zeisner (2002) describe these as small events that have the potential to become game changers, which should be deliberately sought out as part of the scanning process. Not doing so could leave some programmes not only without a competitive edge over others but potentially well behind their competitors. The ability to identify something in its infancy is what makes environmental scanning such a valuable business tool, and one that can be applied to evaluation for similar impact. The likelihood of these signals being significant can be qualified based on the strength of the signal and the level of saturation in the data.

Aguilar (1967) does not provide a set formula for how to conduct an environmental scan. His contemporaries have not settled on an agreed method, although some do provide clear steps for application. One rather obvious point of agreement is to scope the extent of the scan before undertaking any research. However, there is more contention regarding the use of research questions. Jones (2022) speaks specifically of the need for replicable research design and questions. Wong et al. (2014) are less specific, suggesting scope should be determined by the needs of the organisation. The authors encourage evaluators to think as broadly as possible during environmental scanning. Ideally, a non-subject matter expert should be appointed to conduct the scan, reducing the risk of researcher bias where individuals may have preconceived notions of findings. Furthermore, it is suggested that investigators take an inductive approach, avoiding hypotheses and guiding questions. While this may appear speculative and lacking in evidence, it is standard inductive reasoning. As Strauss (1987, p. 29) details, ‘an experienced analyst learns to play the game of believing everything and believing nothing’ during the data collection phase. The authors have often found the best question for framing environmental scans is ‘what is happening in ____’.

This technique has been applied by the authors in their roles as internal evaluators at a regional Australian university. To add a further layer to basic benchmarking and market analysis of competitors in certain academic disciplines, the authors compile environmental scans of specific academic disciplines of interest for the university executive. This adds nuance to the trends seen in offerings and enrolments, ultimately helping guide strategic decision-making regarding the commencement, continuation or discontinuation of certain academic products. For example, the disciplines of astronomy and financial planning had notable findings during the environmental scan phase that contradicted the market analysis, thus demonstrating its utility (Harris, 2021a, 2021b). In the case of astronomy, enrolments at the authors’ home institution and broadly across the Australian university sector were low and decreasing (Harris, 2021a). However, environmental scanning showed an increased investment from the Australian Government in space engineering and the high likelihood that NASA would continue or increase its usage of telescopes and other infrastructure owned by Australian universities, in which the authors’ home institution has a significant interest (Harris, 2021a). These are examples of weak signals emerging in qualitative sources that likely would have been missed with simple market analysis. Surfacing them has likely put the authors’ institution at a competitive advantage.

Decision-making context

According to Rog (2012), the decision-making context is imperative to understanding who makes the decisions, under what circumstances decisions are made, and what levels of rigour and evidence are required to support those decisions. When evaluating the implementation of national initiatives in the Australian higher education sector during the period 2002 to 2008, Alderman (2015) found the decision-making was dependent upon which political party was in the leadership role at the time. Regardless of the successful implementation of the national initiatives and subsequent positive evaluations, these initiatives were discontinued when there was a change in leadership. This example is a reminder to all evaluators that understanding the decision-making context of the non-profit organisation, in other words, who is commissioning the evaluation, is critical to the successful outcomes of the evaluation and the implementation of the recommendations.

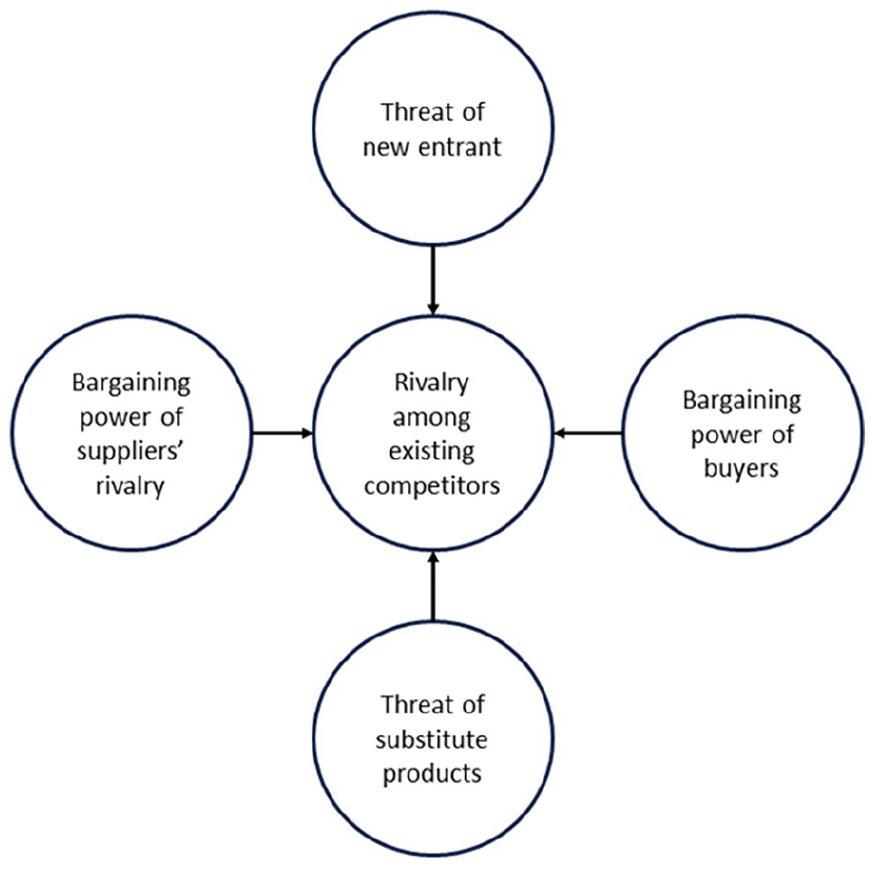

Tool #3 Porter’s Five Forces

The origin story of Porter’s Five Forces is easy to trace in comparison to many of the other tools listed here. Named after its creator, Michael Porter of Harvard Business School, the Five Forces model was first published in the Harvard Business Review in 1979 before becoming a major focus of his 1980 book Competitive Strategy (Porter, 1979, 1980).

The Five Forces (see Figure 3) considers competitive rivalry in the business setting. Competitive rivalry is considered one of the Five Forces, but it is centred in the diagram, suggesting the other four (supplier power, buyer power, threat of substitution and threat of new entry) impact and influence this competitive rivalry. This work has gained considerable traction in business academia and textbooks, with Porter’s work receiving hundreds of thousands of citations. However, Grundy (2006) argues it has become largely an academic business school model rather than a tool actively used by business executives and managers. Admittedly, Grundy (2006) suggests this is to the detriment of business practitioners. He seems to believe the tool’s complexity and abstraction make it difficult to translate into practice, so he provides several adaptations and illustrations that offer different and simpler means of using the forces in practice. Specifically, Grundy (2006) recommends rotating all five forces in and out of the centre position. So, rather than focusing only on how the forces impact rivalry, he suggests considering how rivalry impacts each of the other forces, treating all five as being of equal value. Interestingly, he also advocates for the use of PEST analysis, discussed above, alongside the five forces.

Figure 3. Porter’s Five Forces.

Bruijl (2018) also suggests that Porter’s model could be combined with three additional strategic models. First, the resource-based view focuses on the tangible and intangible resources businesses have at their disposal to capture competitive advantage. Second, the delta model places customers at the centre and encourages businesses to retain customers by offering the best product, providing a complete customer solution and creating a system ‘lock-in’ of sorts. Microsoft and Apple serve as useful examples. Both offer excellent products, their suite of products creates a complete solution for most businesses, and once adopted, it is difficult and time-consuming to switch to a competitor. Finally, blue ocean strategy is a concept with innovation at its core and the idea of being first to market. However, despite all this commentary, Dälken (2014) states there is a consensus among contemporaries, albeit academic ones, that Porter’s Five Forces remains ‘a powerful management tool for analysing the current industry profitability and attractiveness by using the outside-in perspective’ (p. 1). Furthermore, regardless of this discourse, the model is still commonly used in recently published articles, with many applying it directly to businesses (Budiharso et al., 2022; Chitamba et al., 2024; Peng and Xu, 2024; Ukonaho, 2024; Waworuntu et al., 2024).

Evaluators may struggle with how to adapt this for their practice at first glance; however, the tool provides a competitive lens to view the climate most of their commissioners operate within. While perhaps regrettable, most programmes undergoing evaluation receive funding from government, private companies or donors. The reality is that funding opportunities are not infinite, and programmes are subject to rivalry and competition from similar programmes (competitors). In this sense, Porter’s Five Forces can be reimagined for the evaluation context, which can be important for evaluators and their commissioners alike in understanding how to pitch each evaluand to funders.

The following paragraphs describe how each force can be reimagined in the evaluation context. Non-profit organisations provide an excellent example of how the threat of new entry exists in almost exactly parallel ways to the business world. There are thousands of not-for-profit cancer organisations in first-world nations; some cover cancer generically and others can be incredibly niche in terms of service offerings or specificity. These all compete for funding and/or donations, at times within the same geographic location. Understanding the level of threat and the likelihood of a new entry should be a consideration when evaluating these non-profit organisations and their programmes. If either of these is high, it is worth devoting some of the evaluation documentation to demonstrating stability in an existing entity and explicating the uniqueness of offering that makes it distinct from potential new entrants. Alternatively, the evaluation can point out the more appropriate, effective and sustainable nature of a programme against its competitors (both current and potential).

Buyer power is an interesting concept in the evaluation context. Rarely in programmes will items be purchased, so the closest equivalent is participant power. A programme requires participants in order to demonstrate its value. Low participant numbers may not necessarily mean the programme has no value; nonetheless, they do make it difficult or impossible to demonstrate value. In this sense, programmes are competing not only with like-for-like programmes but also with those that have completely unrelated objectives. There will be opportunity costs associated with programme participation, as even without economic costs, participation imposes on participants’ time. It is unlikely for participants to be enrolled in multiple programmes; therefore, these programmes will compete against each other for the same participant pool. This is problematic because the number of participants is finite, and it becomes more challenging the more niche the programme and intervention are. This is why evaluations need to provide findings relating to programme attractiveness to participants and explain why the programme represents a well-founded opportunity cost decision from the funder’s perspective.

Supplier power is best conceptualised as funding power. Funders, like suppliers, provide the necessary materials (funding) to allow the activity to take place. Without funding, it is not possible for the activity to run, or at least not at the same scale or quality. Funders, like suppliers, can be fickle. During the evaluation phase, it is worth considering the current direction of the funder. Is there more or less money available for this type of programme? Are there signals to suggest new priorities? Is a recent or upcoming change in leadership likely to have an impact? These are questions evaluators and their commissioners should consider in depth while designing and compiling the evaluation. In this sense, funders do hold significant power when it comes to programmes. While evaluation findings should never be written to appease funders, they should be written to consider their notions of success and impact.

An example from practice is provided to reinforce the notion of Porter’s Five Forces. A large Australian children’s non-profit organisation commissioned an evaluation to determine the merit of a one-to-one service provided by a specialist working with a pre-school child with a particular need. In this case, the overarching recommendation arising from the evaluation was to continue to provide this service, as it had successful outcomes for children and guardians. However, in post-evaluation discussions, the commissioner (i.e. the supplier power) questioned why alternatives such as childcare had not been considered. Childcare would be more cost-effective (per child) and may mean the programme has more reach and greater impact. Childcare may also offer additional benefits such as social interaction with children of a similar age and/or better preparedness for schooling. This was a significant ‘threat of substitution’ for the programme, as it would radically redesign what existed, but it was a relevant and pertinent question nonetheless. Ironically, this could have emerged during the intervention design or the evaluation itself had Rog’s ‘broader environment’ context been considered and an environmental scan been undertaken.

Therefore, unpacking the decision-making context through the adoption of Porter’s Five Forces with particular emphasis on the ‘threat of substitution’ is useful to inform strategy. However, in this situation, the threat of substitution is an extremely positive alternative to the intervention under evaluation.

Problem context

All social programmes should be designed to address a problem with specific objectives leading to behaviour change for participants. For example, from one author’s experience in the Australian Department of Social Services, an intervention was designed to remove barriers to employment for young parents so they could enter the workforce. This was done by providing access to childcare, access to formal structured learning and/or supporting participants to gain a driver’s licence. In this situation, a problem exists, an intervention in the form of a policy or programme is designed and implemented, and eventually, it is evaluated to understand the consequences (whether intended or unintended).

Rog (2012) explains that it is important to understand the problem by investigating specific examples from the same or similar fields in order to inform intervention design and embed data collection methods for eventual evaluation into the evaluand. The authors wholeheartedly endorse this approach; however, they also believe a gap exists. What can evaluators offer their commissioners when there is blurriness or complete unknowns regarding the nature and extent of the problem? The authors have experienced firsthand commissioners who are not entirely sure what problem their programme is attempting to solve or what they want to be evaluated. For example, where a programme is an assemblage of activities with no common objectives, no single evaluation is going to be successful because of the disconnect between the activities. While such commissioners initially believe they require evaluation services, in actual fact, they need assistance in determining the problem and clarifying the purpose and objectives of the intervention. The following section provides evaluators with a tool for use in the problem context.

Tool #4 SWOT analysis

SWOT (strengths, weaknesses, opportunities and threats) analysis ‘is one of the oldest and most widely adopted strategy tools worldwide’ (Puyt et al., 2023: 1). Despite its fame, its origins have remained unclear and contested within business literature for most of its history, but Puyt et al. (2023) conducted historical, archival research in the hope of uncovering the origin story. They found that SWOT, or in its original form SOFT (strengths, opportunities, fixes and threats), was born out of the rise of strategic decision-making tools for businesses. During the 1960s, corporations in the United States were coming to grips with the antitrust legislation introduced by the US Government following World War II (Puyt et al., 2023). As monopolisation became difficult or in some instances illegal, corporations were forced into direct competition with multiple competitors. Prior to this, problems were relatively inconsequential, as having a monopoly meant consumers had little choice, even if problems existed. However, corporations now needed tools to help them evaluate and understand their problems in a competitive environment and through multiple lenses, including internal, external, past, current and future states.

More recently, Popelier (2018) used SWOT analysis to demonstrate the potential of social network analysis as an evaluation methodology. Sturges and Howley (2017) suggest using a SWOT analysis as the final phase in the annual cycle of ‘response meta evaluation’, where a ‘meta evaluator’ collects feedback and evidence on potential changes to the evaluation data collection and monitoring and evaluation plans. As will be discussed later in this article, the authors believe there is a better tool for this activity.

Dichter (2008) suggests SWOT could be used in uncovering broader contexts to understand which variables may determine causation and which cannot be controlled. Similarly, McIntyre (2002) argues a SWOT should be undertaken to understand the ‘social, cultural, political, economic and environmental conditions’ leading to the commissioning of an evaluation. Fitzpatrick (2012) used it to evaluate legislation, recommending that it be used at the concluding phase of the evaluation (p. 497) to essentially elucidate the problem that is being solved.

Finally, Harris and Alderman (2022) believe that SWOT analysis should be applied to the specific evaluation activity of reviewing and reaccrediting academic programmes in colleges and universities. Thiele et al. (2007) describe a similar usage of SWOT to what the authors of this article articulate, albeit very briefly. They used SWOT in ‘early phases of strategic planning’ with programme stakeholders, again presumably to help uncover the problem.

The problem can emerge from all four domains of SWOT analysis. While it might seem more intuitive for problems to emerge from weaknesses and threats, problems can also emerge from strengths and opportunities. For example, opportunities may exist, but owing to external or internal circumstances, they cannot be capitalised upon. This is inherently a problem, as the inability to capture an opportunity may hurt the future prospects of a programme or policy. For instance, if the problem is insufficient funding for the correct intervention, perhaps funding should be increased or directed entirely elsewhere. Moreover, strengths and weaknesses (and opportunities and threats) can easily become inversions of one another. Consider an instance where a key programme strength is the presence of experienced and skilled staff. In the event these staff leave, this immediately becomes a weakness. Programmes or processes reliant on a single source for functionality are problematic. Tools such as SWOT analysis help bring these issues to the forefront so interventions can be designed to overcome these shortcomings. Similarly, if an opportunity arises for a new funding stream, this could immediately become a threat if other programmes choose to compete for it. SWOT analysis is not unheard of as an evaluation tool. In the spirit of meta-evaluation, which many evaluators will appreciate, SWOT has been used to analyse the evaluation method(s) used in, and for, individual evaluations.

SWOTs are low-investment and low-resource activities to carry out. Other than the time of a facilitator, a room (either physical or virtual) and a document for data collection, there are no costs. Skilled facilitators will determine a data collection strategy designed to ensure differing personality types get equal and ample opportunity for their data to be collected. In the physical setting, this might include large-group or small-group discussions leading to the formation of written notes that will be collected for formal data reporting. This allows for endless discussion to take place for those willing to engage but only formally collects written data, thus giving greater agency to introverted and more passive participants.

Action context

When the commissioner receives the final evaluation report together with its recommendations and/or findings, what happens next? As alluded to earlier, Coldwell and Moore (2024) provide some guidance in this area, suggesting an additional ‘consequential’ context. First, this insists on correctly ‘interpreting’ the results of the study and, specifically, whether the intervention was successful. Determining what qualifies as success is closely connected to and shaped by the decision-making and evaluation contexts and needs to be established in order for meaningful results to be communicated. More significantly, Coldwell and Moore (2024) argue impact evaluations have utility well beyond the individual evaluation itself. These evaluations go on to form some of the problem and broader environment contexts in future evaluations and inform future theory and policy (Burnett and Coldwell, 2021). Recognising that evaluations have ongoing consequences is important when considering what happens following evaluation reports.

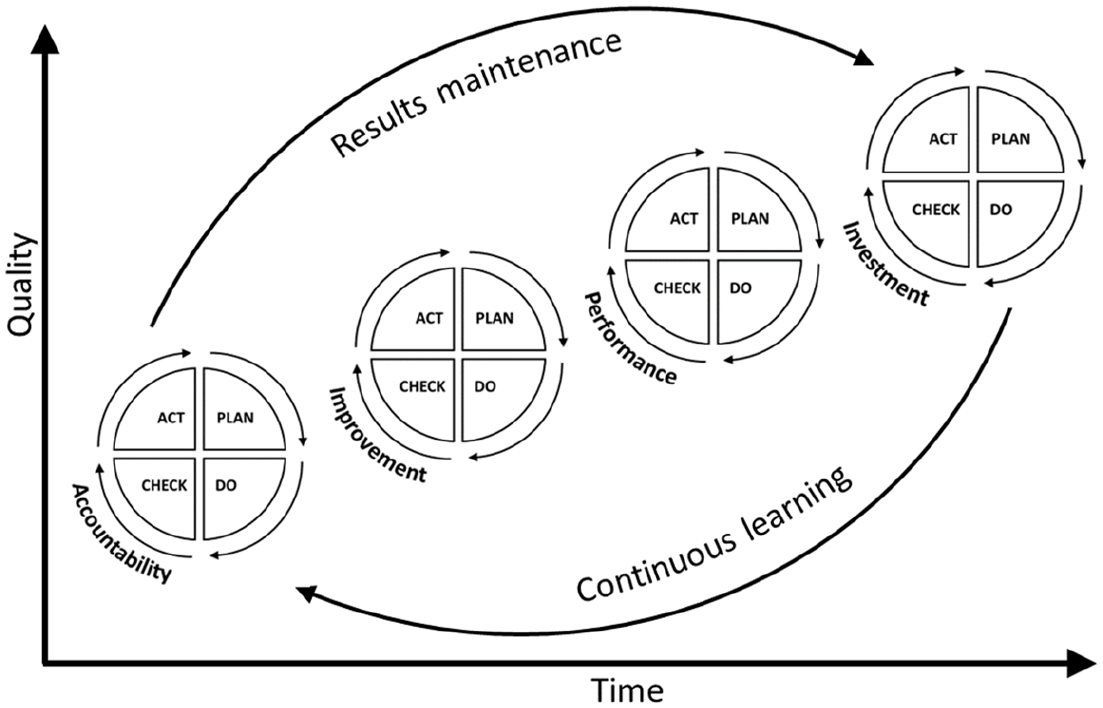

However, in some sense, this does not consider the context that immediately follows the publication of an evaluation. As internal evaluators, the authors apply the continuous learning framework (Alderman, 2014, 2022). Built on the work of Deming (1986) in the automobile industry, this framework (Figure 4) consists of four elements of quality: accountability, improvement, performance and investment. Within each of the four elements, there is Deming’s ‘plan, do, check, act’ cycle of quality improvement. As Deming theorised, the pursuit of continuous quality improvement leading to an entity becoming a continuous learning organisation takes time and needs to be traced across time.

Figure 4. Alderman’s (2022) continuous learning framework.

While policies, programmes and other initiatives undergoing evaluation always have an initial starting point, evaluators are always mindful that they form part of cycles, as the delivery of a programme is repeated. This is missing from Rog’s (2012) representation, but essentially, with each evaluation comes another set of problems to solve when redesigning interventions. In this sense, the problem context tools discussed above can be utilised in the initial problem inception phase or in the post-evaluation and redesign setting.

Alderman’s (2022) framework was designed to be a foundational element of evaluation design, as while organisations often focus on accountability and performance, there is often little to no improvement unless there is an associated investment of time, funding, human resources or a combination of these three things. The authors suggest evaluators are often excellent at ‘checking’, that is, evaluating, and often provide useful contributions in the ‘plan’ phase (if invited to participate). However, rightly or wrongly, evaluators are often not invited to participate in the ‘act’ phase. This should be the phase in which findings are acted on for results maintenance and continual improvement.

Building upon the utility of the continuous learning framework, the authors argue that there is one additional context worthy of consideration: the ‘action context’. The action context is the response to the evaluation context. In Figure 5, the authors have adapted and built upon Rog’s (2012) ‘bringing the background to the foreground’ framework (see Figure 1) to create the evaluation context cycle.

Figure 5. The evaluation context cycle.

This is often an awkward period for the commissioner and those associated with the programme evaluated. There may be unexpected findings and the recommendations may require radical change for the next phase of delivery. The tool outlined below is called Stop Start Continue Change and offers commissioners and programme teams a structured way to consider the evaluation recommendations and decide what changes need to be made using a collaborative, team-based approach to change.

Tool #5 Stop Start Continue Change

The Stop Start Continue Change tool has very limited coverage in academic literature and yet the authors have used this tool in practice for over 20 years. Traditionally known as ‘stop, start, continue’, it appears under multiple names including ‘stop, keep, start’ (DeLong, 2011) and ‘stop, start, continue, change’ (Carter et al., 2017). The authors believe the inclusion of change is important, as while it might be considered a sub-component of continue, there is considerable nuance. It remains unclear to the authors (and the rest of the academic community) where the tool originated. Academic literature is exceedingly sparse on this tool, particularly when compared to the others described in this article.

It is a tool used for performance evaluation in teams and organisations, as it provides simple action points for what they should stop doing, keep doing and start doing (DeLong, 2011). While the literature searches have revealed the tool is most frequently used in the education setting, particularly tertiary education, Stop Start Continue Change most likely emerged, like the rest of the tools in this article, from the business discipline.

As mentioned, there is a concentration of Stop Start Continue Change literature in the education setting, with Hoon et al. (2014) as one of the most cited articles in this literature. It outlines a study on university students and their feedback on coursework surveys. Three groups were established, one of which was given a general free-text response option, the second a Likert-type scale on course satisfaction and other course issues, and finally, a third with four structured free-text questions centred around Stop Start Continue Change. The results showed the Stop Start Continue Change group gave significantly more profound and constructive feedback than the other two. While the study itself arguably has more impact in the Rog (2012) ‘evaluation context’, as it was used as a form of student evaluation, the action of academic staff determining which of these to follow through on is a form of using the tool in the ‘action context’. In most cases, courses are offered and reoffered, and therefore should be subject to continuous improvement.

Other student evaluation settings have been considered as well. Romney and Pound (2021) discuss the utility of Stop Start Continue Change in mid-study period student surveys, as students see the added benefits of problems solved and good practice continuing, rather than having to wait on the feedback from the next cohort. Keane (2015) reports on an academic peer review of teaching practice using a Stop Start Continue Change framework. Cunningham and White (2022) suggest using this tool in combination with sentiment analysis conducted by a natural language model. As discussed, Stop Start Continue Change appears less frequently in the formal business literature, but it is mentioned in relation to managerial planning, problem solving and the identification of necessary issues (Connors and Smith, 2000; Kelley, 2016).

While Stop Start Continue Change is clearly a valid evaluation context tool, as demonstrated by the literature cited above, the authors suggest the tool has even greater application in the action context phase following evaluation, prior to intervention redesign. The outcomes of most evaluation reports come in the form of findings and recommendations; whether they are implemented is a consideration those closely connected to the programme need to determine, in line with their current realities. Evaluation reports serve as a useful tool for elucidating the problems hindering greater programme success and justifying intervention redesigns. Consider the following scenario. A programme is found to be highly successful and has positive social impacts, yet due to broader political and financial imperatives, funding is reduced. This forces a programme redesign where some activities will need to ‘stop’ or ‘change’ to accommodate a smaller budget. The Stop Start Continue Change model provides a structure for a common scenario such as this.

Conclusion

The exploration of context in evaluation is widely accepted as being essential; however, there is little exploration into the different types of contexts that exist and the best ways to gain an understanding of each. This article aims to address that gap by mapping effective evaluation tools to each of the types of contexts that exist in the continual cycle of evaluation. In summary, the Business Case tool is effective for the intervention context because it brings the circumstances and objectives the initial project was aiming to address back into focus; an environmental scan is best for looking at the broader environmental context, as it provides a holistic view of the potential opportunities and threats to the intervention both in the present and short- and medium-term future; Porter’s Five Forces is useful for exploring the decision-making context because it provides an insight into the climate the commissioners are operating in so that the evaluation can be in line with the restrictions of this framework; a SWOT analysis is used to investigate the problem context, as it uncovers the problem the intervention is aiming to address; and finally, the Stop Start Continue Change tool is useful in the action context, as it offers commissioners and programme teams a structured way to consider the evaluation recommendations and decide what changes need to be made. Using the right tool for the right context is essential for gaining a comprehensive understanding of the environmental factors influencing the intervention; therefore, it is beneficial for evaluators to be purposeful in choosing which tool to implement.

Finally, this article provides insights for non-profit organisations on how to step into evaluation in a structured, albeit low-cost, manner.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.