Abstract

There is still widespread debate about the role that an evaluator could, should or even must play. Evaluations are often complex and require a broad array of interlinked roles and areas of expertise, making it difficult to generate a singular definition of an evaluator. Furthermore, an evaluator may be expected to change their responsibilities and actions throughout the stages of an evaluation, changing the role they play. As practitioners, we believe there is value in contributing to this discussion, providing our perspectives based on personal experience and data collected during the 2022 European Evaluation Society conference. In this article we seek to describe six evaluator traits which we believe to be the most influential to the roles which we play, and six evaluator roles which best illustrate the impact of changing these traits.

Introduction

While there is still widespread debate about the role that an evaluator could, should or even must play during an evaluation, or the many hats which they must wear, little has been written on the topic. Take for example one definition of evaluation as ‘the process of gathering information about the merit or worth of a programme for the purpose of making decisions about its effectiveness or improvement’ (Owston, 2008 [2007]: 606). This, like most attempts to define evaluation, is broad. There is a lack of standardisation or shared expectation of what an evaluator is, how an evaluator conducts their work and what is included in an evaluation. This is unsurprising: evaluations are often complex and regularly require a broad array of interlinked roles and areas of expertise, making it difficult to generate a singular definition of an evaluator. For complex evaluations, an evaluator may be expected to change their responsibilities and actions throughout the stages of an evaluation, changing the role they play.

To ensure an evaluation meets the needs of its commissioner and other relevant stakeholders, it is important to have an awareness and understanding of the different roles an evaluator can play, given desired outcomes. An evaluation commissioner may be seeking independent value judgements but define a role which unwittingly restricts or prohibits such independence due to an emphasis on cooperation with an evaluand. As practitioners with a combined 20 years of experience, we felt that there is value in contributing to this discussion by providing our perspectives. These are based on personal experiences working with public and private evaluation commissioners, together with experiences we collected as part of ‘live’ data collection during the 2022 European Evaluation Society (EES) conference. In this article we seek to describe six evaluator traits, which we believe to be the most influential to the roles which we play, and six evaluator roles which best illustrate the impact of changing these traits.

What the literature tells us

Although much has been written about the evaluation discipline, the bulk of the literature focuses on evaluation methods and how they are applied, with evaluation theorists describing roles based on specific methods (Skolits et al., 2009). While a useful starting point, this does not provide a realistic and useful conceptualisation of evaluator roles to help practitioners and wider stakeholders understand the practical implications of different roles.

According to Skolits et al. (2009), evaluator roles can fit four main categories: evaluation methods (qualitative or quantitative), evaluation models (formative or summative), relationship with stakeholders and situational response. Throughout the limited literature, various roles such as the ‘judge’, ‘educator’, ‘advocate’, ‘critical friend’, ‘methodologist’ and ‘problem solver’ have been identified and put forward by multiple authors including Scriven (1991), Preskill and Torres (1999), Morabito (2002) and Weiss (1978), among others. For example, the ‘Judge’ is an evaluator who investigates and identifies the value of an evaluand, and determines the criteria of merit (Scriven, 1996). The ‘Educator’, by contrast, focuses on generating information through the evaluation that can be used for decision making for project inputs and the long-term goals of the programme (Weiss, 1978). However, while several of these labels have been put forward, they are often vaguely described and provide insufficient description of how an evaluator playing each role will respond to evaluation activities and manage relationships (Skolits et al., 2009).

Evaluator roles may also be categorised based on the expectations of the stakeholders (Skolits et al., 2009) or the likelihood of an influential evaluation process (Morabito, 2002). Depending on the role of other stakeholders, the evaluator can adopt a complementary role. For example, when the stakeholder is a policymaker, the evaluator may adopt an auditing role (Patton, 2008a). There have been active discussions in the evaluation community about the important role that evaluators can play in addressing key issues facing society, such as being advocates for democracy or environmental sustainability. By raising questions and providing evidence on potentially challenging topics, the evaluator can play a ‘provocateur role’ (Mertens, 2008), unsettling or challenging traditional realities. These are just some examples of the common categorisation of evaluation roles and how they are described by the literature.

Most of the categorisations discussed above require an evaluator to hold one single role, but evaluators can perform multiple roles during an evaluation. A range of factors will influence the role an evaluator plays, including the stage of the evaluation, the maturity of the evaluand and, perhaps most critically, the changing priorities of evaluation commissioners.

One factor that significantly influences the role an evaluator plays is the stage of the evaluation. For example, it is often implicitly expected that an evaluator will play one role in the initial design phase, another during the data collection and analysis, and yet another in the lesson learning stage. Where an evaluator is present throughout this life cycle, they will likely switch from being a Theorist in the design phase to a Methodologist during the data collection phase and an Educator during the learning and dissemination phase (Luo, 2010).

Given the lack of standardisation of what a good evaluation or evaluator is, several professional evaluation societies worldwide have developed competency and capability frameworks for evaluators, including CES (2008), AEA (2018) and UKES (Simons, 2012). The purposes and content of these frameworks differ, but broadly they seek to enhance the professionalism of the evaluation field by creating consensus on the capabilities required to conduct a quality evaluation, particularly important in the absence of formal evaluator qualifications. These competencies provide a framework for evaluation as a social, ethical and political practice and are synergistic with the traits that we propose as instrumental to an evaluator.

We seek to contribute to and further this discourse on roles by first exploring six key traits or skills which evaluators are expected to demonstrate to one degree or another: Curiosity, Perspective, Flexibility, Independence, Collaboration and Communication. These traits exist on a scale, and moving the slider for just one of them can have a significant impact on the role the evaluator plays and, perhaps more importantly, is perceived to be playing. We have gathered additional perspectives on these traits through our presentations at conferences and events, where evaluation practitioners and commissioners responded to survey questions on the traits and their importance within evaluation through real-time data collection tools; the results are discussed below. Using these traits, we will set out six evaluator roles for use in today’s context – Judge, Advocate, Critical Friend, Educator, Methodologist and Theorist – and use these to better understand the question of what role evaluators should play.

Evaluator traits

There is no limit to the number of evaluation traits that could be identified. As with any analytical role, a complex blend of skills is required to varying degrees. Numerous articles seek to explore different traits or characteristics which evaluators must or should have, or could have if the occasion is right.

As such, we start this discussion by recognising that the six traits highlighted below do not represent an exhaustive list. Nor do they necessarily reflect the most important traits for an evaluator to possess. Instead, we see these six characteristics as critical to defining the role an evaluator plays. Each of the six represents a sliding scale or dial: they can be turned up in one context or muted for another to reflect the evaluative task appropriately, and in doing so can redefine the evaluator’s role. The six traits we will explore are curiosity, perspective, flexibility, independence, collaboration and communication.

These traits can broadly be split into two categories: those which influence the scope of the evaluator’s role (curiosity, perspective and flexibility, informed by the evaluation method and model (Skolits et al., 2009)); and those which influence integration of the evaluator with the evaluand (independence, collaboration and communication, informed by stakeholder relationships (Skolits et al., 2009)).

Curiosity

Curiosity could also be described as inquisitiveness and is a measure of the evaluator’s ability to investigate beyond a given scope. It is perhaps best expressed as a question of limitations in terms of what the evaluator may or may not consider in their analysis. The primary function of the evaluator is to answer analytical questions which may require a great degree of investigative work, exploring new avenues as they are identified, to generate a satisfactory answer, or it may require the review and assessment of a fixed dataset without room for further exploration. In many cases, evaluation questions are defined by the commissioner, setting clear boundaries and restricting the possibilities for curiosity. As a trait, curiosity becomes arguably more salient when the evaluator is able to set their own evaluation questions and determine routes of inquiry when developing such questions. In this sense, curiosity will be related to commissioner oversight and flexibility.

Curiosity raises questions of evaluation bias. If a commissioner seeks only to explore questions which they know will emphasise positive results, then the evaluation may be impacted by positivity bias. Curiosity, and the lengths to which an evaluator can explore negative results in addition to the positive, is key to mitigating such bias. As such, curiosity as a trait can also require courage. Evaluators are often confronted with examples of intervention failure, leading to evaluation results likely to spark disagreement. A reticence to engage with negative results restricts curiosity and changes the role of the evaluator. Limiting evaluator curiosity may lead to key contextual factors being overlooked or under-analysed, perhaps even factors which may more clearly situate negative results or inform intervention replication. In these cases, turning the curiosity dial down can reduce opportunities for learning.

The formulation of evaluation questions themselves adds another layer of complexity: is the evaluator seeking to understand simply what has happened, or are they able to explore the how and the why as well? These open-ended questions require deeper analysis and data collection, and the evaluator must have sufficient curiosity to identify, analyse and compare different contextual factors influencing an intervention. In the context of our typology, the Critical Friend must have high curiosity and freedom to explore issues which they deem will best generate useful, responsive learning to support the evaluand’s development. The Methodologist, on the contrary, limits their own curiosity at the outset to maintain methodological rigour and replicability of evaluation results.

While one might argue that inquisitiveness, freedom and courage are all necessary characteristics for an evaluator, curiosity has practical limitations and is never completely unconstrained. The first limitation is simply time. An evaluator cannot be expected to explore each emerging inquiry in a complex evaluation, nor should they. Many emerging issues turn out not to be pertinent to the evaluation but robust exploration of them can stretch out the evaluation process significantly. A second limitation is resourcing. Practicing evaluators will be familiar with the trade-offs between exploring an issue to the fullest extent and having the resources to do so. Finally, there can be a trade-off in the depth of findings if the evaluator is not sufficiently focused and spends too much time ‘down the rabbit hole’. This can result in low satisfaction for both commissioner and evaluand, reducing the usefulness of the evaluation.

Perspective

The perspective trait does not sit on a spectrum of high to low, but rather one which moves from the big picture to the granular, and defines the level of detail which an evaluator may be expected to explore. In evaluation terms, this is a question of generalisation or abstraction, and it is a critical characteristic in defining the role played by the evaluator. At the big picture end, an evaluator is likely to be responsible for assessing overall performance of an intervention or suite of interventions. This may push the evaluator into a more strategic role, thinking less about the intricacies of the context and outcomes of the intervention, and more about how an intervention fits within the wider environment, focusing on issues of coherence and relevance. This big picture thinking may move an evaluator towards the Advocate role, seeking to establish where an intervention fits and influences a wider ecosystem.

At the more granular end, the evaluator’s role would be one of detailed investigation for a specific line of inquiry. This relates back to curiosity; the lower the perspective, the more restrictive the scope as highly granular assessments of specific interventions offer less opportunity for generalisable learning. Again, we see a commonality between the Judge and the Methodologist when perspective is dialled down. Balancing these perspectives are the Critical Friend and Theorist, roles which must sufficiently understand the granular detail and situate the evaluand within wider contexts.

Perspective can be heavily influenced by the scope set by a commissioner. Where the interest is learning for future interventions or wider information sharing for communities of practice or replication, the evaluator’s perspective should be higher to allow development of lessons and recommendations with broader application. The potential trade-off here is that with a big picture perspective role, the recommendations provided by the evaluator may be less operational and less well suited to immediate application and course correction by the evaluand.

Flexibility

Flexibility encompasses the extent to which the evaluator can change their approach over time, adapting to changing priorities, contexts and challenges. This potentially involves adjusting the sliders on the other five traits, to respond to emerging needs. Flexibility is also a question of creativity. Evaluators are ultimately problem solvers, using different analytical tactics to interpret evidence and present a coherent narrative in answering their evaluation questions. A wealth of evaluation methodologies have been developed over the years. There is no one method that fits all requirements equally, and so an evaluator may need to adapt existing methods or even design new ones to effectively deliver what is required. Creativity is also critical in the interpretation of evidence, with inductive and deductive reasoning requiring different levels of extrapolation from available evidence to generate robust findings.

At the low end of the scale, evaluators may take a rigid position on methodology, sticking strictly to processes laid out in a methodology design or approach paper. These approaches accept the limitations of a methodology without seeking to challenge them, acknowledging their role in the completeness of evaluation findings. Moving up the scale, creative flexibility becomes a tool to adapt a given methodology to the evaluation context, potentially reducing rigour but allowing limitations to be mitigated. At the top of the scale, evaluators may not have a fixed or defined methodology at all, responding to emerging information and adapting on a continuous basis. This has its own limitations in terms of replicability and arguably reliability of results, but may enable quicker evaluation responses for time-sensitive interventions. It is also critical for design roles, like the Theorist, where greater creativity is required.

Independence

Thinking back to Skolits et al. (2009), independence is a key trait in describing the evaluator’s relationship with stakeholders. Above, we noted that curiosity was one tool to combat evaluation biases, but the traditional trait for this task is independence. The common perception is that an independent evaluator is critical to removing bias and generating credible assessments which fairly reflect the realities of an intervention. At the high end of the scale, an evaluator may have little to no direct contact with the evaluand to preserve this independence. Typically, the Judge might be expected to be the role that best reflects this trait, seeking to provide an unbiased assessment of the evidence. In qualitative evaluations, which rely on subjective analysis of subjective data, a range of strict, semi-quantitative methodologies in the Bayesian school exist to mitigate biases and encourage objective analysis. Where the independence dial maxes out is the realm of the Methodologist, particularly when applying scientific or quantitative methodologies where engagement with the evaluand may not be prioritised.

However, independent assessment is not the only function evaluators can provide. As facilitators of learning and knowledge, evaluators are well positioned to advocate for, collaborate with and support an evaluand; the Advocate, Educator and Theorist roles, for example, require much less independence. In the development space, where the implementing party may be an institution with limited capacity operating in a developing context, the support of an experienced evaluator to integrate learning and advocate for programmatic change can be valuable. Building a closer relationship with an evaluand may lower perceived independence, but it can facilitate greater access to information. This can lead to tailored learning and recommendations to which the evaluand may be more receptive.

Collaboration

The trait of collaboration builds on the idea of independence. Specifically, we define collaboration as the extent to which the evaluator continues collaboration with either party after the conclusion of the evaluation, to facilitate the implementation or operationalisation of recommendations. At the lower end of the scale, an evaluator would be expected to make recommendations based on the available evidence without any direct follow-up. In this scenario, the evaluator’s role is to evaluate, not to design or implement, closer to the role of a Judge.

However, the knowledge and understanding developed during an evaluation offers a unique perspective which can benefit an intervention beyond the scope of the evaluation itself. Where the collaboration dial is increased, an evaluator might continue to work with the evaluand or commissioner to further tailor recommendations and develop action plans for their implementation. This could, for example, include facilitating forums to enable the evaluand to use evaluative evidence to inform decision-making. In some cases, an evaluator could even take on direct responsibility for the implementation of recommendations, embedding their understanding within the intervention to strengthen it further.

Communication

All evaluator roles require communication skills to effectively engage stakeholders and generate evaluation evidence. For the purposes of this discussion, we define communication in terms of the extent to which the evaluator takes responsibility for knowledge sharing. In this context, the trait may be mainly one of dissemination or influence.

At the low end of the communication spectrum, an evaluator may only be required to communicate findings with the evaluand and commissioner. The evaluation outputs can include shorthand or intervention language as the audiences are familiar with the intervention. At the high end, the evaluator may be more of a knowledge broker, proactively seeking new audiences to share information with and facilitating engagement. At this end of the spectrum, the evaluator may be expected to produce multiple outputs for different audiences, tailoring them for different recipients in terms of language, content and complexity. This again raises the issue of evaluator bias: can an evaluator effectively fulfil high communication requirements while avoiding the perception of advocating for a given intervention? As with the previous traits, the communication requirement for a given evaluation is in part informed by the commissioner, but if an evaluator is responsible for knowledge dissemination to wider audiences, then they may draw on their external knowledge and networks for further dissemination.

Beyond knowledge sharing, the communication trait also speaks to knowledge management and retention. Evaluators can usefully provide long-term services to an intervention or evaluand, becoming a source of institutional knowledge, particularly of learning and intervention development over time, for example, capturing knowledge of what contextual factors influenced past decision-making. In such cases, the line between independent evaluator and intervention support staff can become harder to define as evaluators may be more closely involved in decision-making processes, or at least may have some control over the information which informs decision-making.

Practitioner perspectives

As noted above, these traits were tested with a sample of EES conference participants to generate additional perspectives and opinions. The sample was small (15 participants) and so quantitative inferences are limited, but the results offer insight into the thinking of evaluation practitioners and commissioners (in a ratio of approximately 3:1).

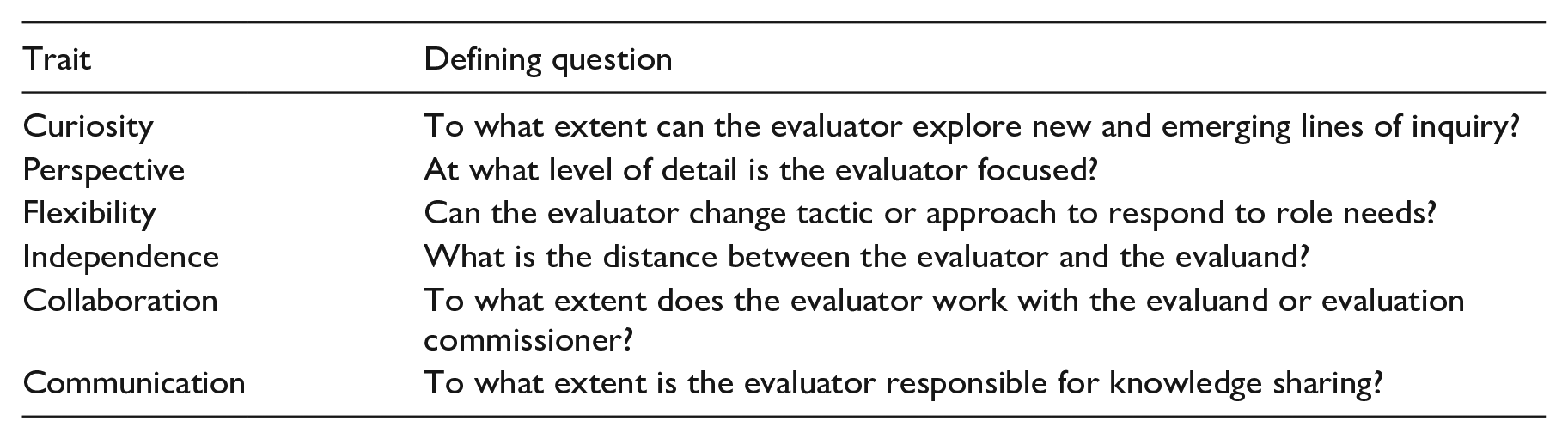

Participants were asked to rank the six traits in order of importance for evaluators, regardless of role. As shown in Figure 1, collaboration was ranked the highest by far, with curiosity, communication and flexibility grouped tightly together. Languishing behind were perspective and, interestingly, independence.

Figure 1. Ranking of evaluator traits by importance.

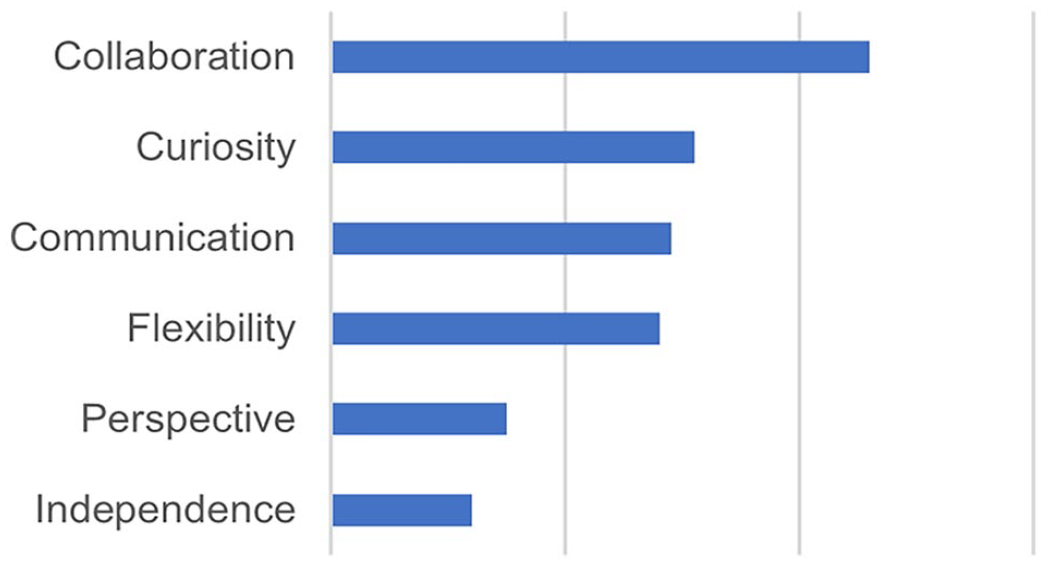

Collaboration being ranked first is indicative of the role evaluators are increasingly expected to play, working with implementing bodies to inform learning-based adaptation and advance transformation. As shown in Figure 2, participants felt that collaboration with the evaluand was essential, and a large majority felt that collaboration with the commissioner and evaluand partners was also critical. The results were more evenly divided in terms of collaboration with evaluation audiences and wider interested stakeholders. This is to be expected, with these groups further removed from the evaluation and less likely to have a vested interest in collaboration, although both were positively identified by about half of participants.

Figure 2. Who should evaluators collaborate with?

A majority of participants were evaluation practitioners and academics, and so it is not surprising that, in relation to curiosity, a majority of respondents felt that evaluators should always pursue emerging lines of inquiry, regardless of their role. A small number of participants, however, felt that this should be constrained by the scope set by the commissioner and that an evaluator’s curiosity should have limits. This may speak to the practical limitations noted above, or the implications of commissioner oversight. Communication was ranked third by participants, narrowly behind curiosity. This is consistent with the results on collaboration, indicating a perception that evaluators should play a more active role in change processes through support functions. This could be co-design of recommendation implementation plans (collaboration) or direct communication of intervention results to influence other actors in the sector and motivate further change. Flexibility was also rated highly by participants, demonstrating that current practitioners value the ability to adapt and change direction as an evaluation develops. This may indicate that our participant base included more qualitative evaluators and fewer quantitative or scientific evaluators, who would be expected to perceive flexibility differently and typically see themselves as having a more objective testing role.

Big picture perspective sat at the low end of the rankings, indicating that participants felt more granular perspectives are important for evaluator roles. In our experience, big picture generalisable thinking is often promoted by evaluation commissioners. This is a way to elevate evaluation outputs and ensure they maximise the potential benefit across the commissioning institution beyond individual interventions, which may be short-term and not repeated, thereby generating wider learning. However, our practitioner-majority participants felt that getting into the detail and understanding intervention context was more important.

Despite its unanimous low ranking, the live results regarding independence were somewhat divisive, with a 60/40 split in favour of evaluators not being independent. This may represent a growing sense of urgency among practitioners, for whom agile adaptive action to maximise results is more highly prized than highly credible independent evaluation findings. This is reflected in methods such as Utilisation-Focused Evaluation and Developmental Evaluation, which focus less on strict independence and more on evaluation as a collaborative process to enhance delivery.

Proposed evaluator roles

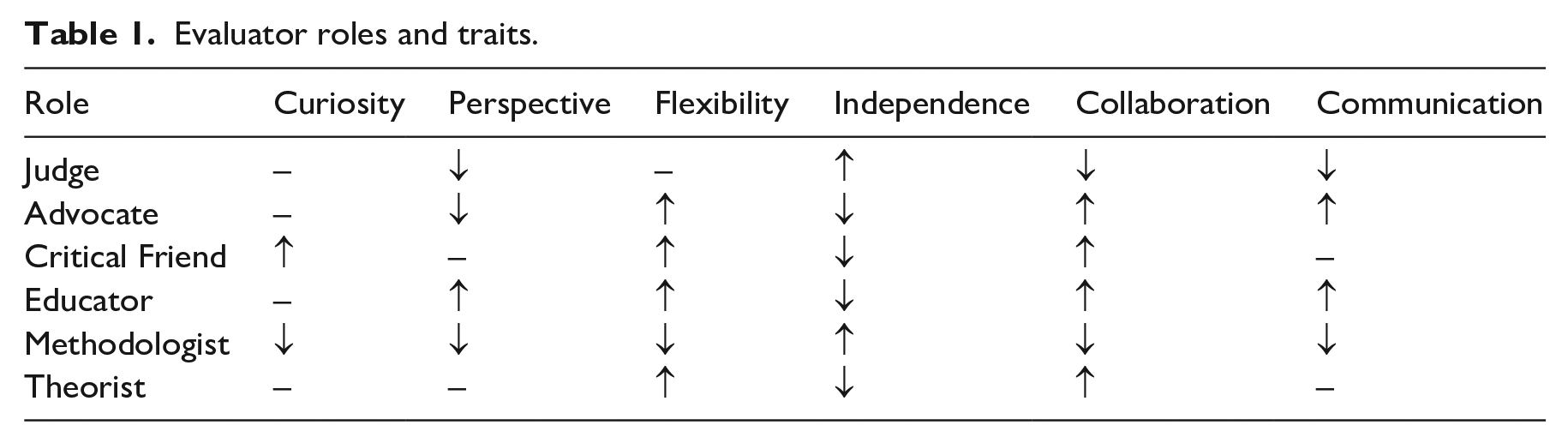

Drawing on the literature, ongoing discourse in the evaluation community and our own personal experience, we felt that practical guidance on different evaluation roles was limited. While the six roles we present below originate in the literature, they are often only presented as general descriptions, with limited guidance on how and when these roles could be deployed and what traits would be more significant to different roles. We also found that there is often a gulf between what evaluation theorists propose and what is applied in practice. The experience of many practitioners is not frequently captured by the literature, particularly on challenging and introspective topics such as what role we should play to be effective evaluators. While much of the discussion and many of the proposed roles below will be familiar to practitioners, we hope that by sketching out a lexicon, we can contribute to this discussion, facilitating consensus-building between evaluator, evaluand and commissioner to support effective evaluation practice. The roles described below are our definitions and may not reflect the reader’s own experiences; as the purpose of this article is to further discussion, we encourage the reader to reflect on their own definitions and contribute to this discussion. For reference, the level for each of the six traits described above is provided for each role in Table 1 using a simplified high (↑), medium (–) and low (↓) grading.

Table 1. Evaluator roles and traits.

Judge

The role of Judge, inspired by Scriven (1967), establishes the vital role of the evaluator to combine empirical evidence with probative reasoning to determine the merit, worth or value of a process. It is a role that can be perceived as the original type of evaluator as the Judge investigates, collecting empirical data and assessing it against a set of standards or values.

In this role an evaluator is seeking to make a summative evaluative judgement, and must place value on data, often by comparing results from other interventions. The role is similar to that of an auditor as they both make a systematic assessment of evidence against a predetermined framework, with the difference being what they assess and their field of origin. Auditors have emerged from the financial sector while evaluators have mostly emerged from social sciences. This role is best performed by external evaluators as it requires qualitative, non-biased judgement to make an assessment of value. The Judge role is methods neutral, and can be played in either a theory-based evaluation or a conventional impact assessment. It is most appropriate at the implementation stage of an evaluation, likely a final or outcome-focused evaluation, and during the data collection and analysis.

Given the requirements of the Judge role, we expect such evaluators to have high independence and fewer collaborative traits - such an evaluator must be unbiased and not open to influence by the evaluand or commissioner. The communication trait is also likely to be low, as they will not be involved in tailoring and communicating evidence for different stakeholders nor supporting wider learning. In terms of the traits of curiosity and flexibility, they are likely to be in the middle, though guided by the commissioner to set where and how the evaluation generates evidence and assesses value. Perspective is more complicated, as it will depend on both the evaluand and commissioner, but generally requires a perspective beyond the intervention to contextualise outcomes and fully establish the value of the evaluand in generating these outcomes.

Advocate

An evaluator playing this role advocates for the primary users of the evaluation and for the evaluation to be used and useful (Patton, 2008b). Advocates play an important role in ensuring that evaluation outcomes and the evaluation process itself are influential, through supporting evaluative inquiry and fostering learning in ways that enable evaluands and commissioners, as well as wider audiences, to learn, share and adapt practices. In this way, Advocates may be seen as change agents, advocating for and influencing positive change from evaluation evidence. Evaluators can also be considered change agents as they have time to capture what can be seen as unbiased evidence and have access to forums to share this evidence with decision-makers. The Advocate role is also methods neutral - it is primarily about how an evaluator communicates and engages with an evaluand.

Evaluators can play an Advocate role when focusing on a specific issue, for example, when collecting evidence on how aspects of gender are considered or establishing evidence and recommendations to ensure the evaluand benefits the most vulnerable groups. In this way, Advocates aim to shed light on key issues or groups and seek to enhance their outcomes.

There are opportunities for both internal and external evaluators to effectively be Advocates. Internal evaluators will have a deep understanding of internal processes, are able to draw on wider organisational learning and are often better placed to craft implementable recommendations. External evaluators, while less familiar with internal processes, can often advocate more successfully as they are valued for their external expertise and viewpoints. Evaluators can play an Advocate role during all stages of an evaluation process, but are most likely to be effective during lesson learning, when they can advocate for change based on evaluative evidence. Advocates could also advocate during evaluation design to ensure focus on key topics and an evaluation process that leads to change.

For an Advocate role, the collaboration trait is dialled up, as a successful Advocate would need to be highly collaborative with both evaluand and commissioner. They would likely be deeply involved in processes that help evaluation evidence influence changes to practices. In contrast, independence would be low, given their focus on influencing change. Other traits that would be high include communication, as evaluators playing this role would likely be involved in supporting evaluation uptake, and flexibility, to ensure that evaluations could adapt based on emerging evidence and pivot focus to outcomes that support changing practices. While still important, the trait of curiosity would be shaped by which evidence and evaluand processes would lead to the largest change, or by existing priorities (in this example, those that concern gender). Perspective is likely to be more granular (or low) as an Advocate is likely to focus on the question of ‘how’ to improve an intervention directly.

Critical Friend

This role emerged in the 1970s in an educational setting as a trusted individual supporting another’s learning through asking critical questions, presenting data and providing an outsider perspective (Storey and Wang, 2017). This role has since become increasingly integrated into other settings. Costa and Kallick (1993) provide a seminal definition of this role as ‘a trusted person who asks provocative questions, provides data to be examined through another lens and offers critiques of a person’s work as a friend’ (p. 50). The Critical Friend argues for the success of the intervention, taking the time to fully understand the context of the work and the expected outcomes. The Critical Friend has the best interests of the evaluand at heart, and the project staff trust their advice. This means that the evaluator can equally be a critic and a trusted advisor, supporting the evaluand to achieve objectives and outcomes effectively while maintaining independence.

A Critical Friend observes the intervention as a whole and uses methods that reflect complex realities to help stakeholders form their own opinions and judgements. A Critical Friend creates opportunities for the evaluand to reflect on evaluation evidence and understand what it means to them. This role is one of external trust. Similar to the Advocate, the Critical Friend is methods neutral and is more about the ways in which an evaluator engages and supports an evaluand. A Critical Friend role can be played throughout an evaluation, but may be most appropriate during the evaluation lesson learning stage, providing trusted and critical advice to support change. While this role could be played by an internal evaluation team within an organisation, it is likely to be more effectively played by an external team that brings wider insights to support learning from other interventions.

The trait of collaboration would be high as Critical Friends need to be able to work collaboratively with the evaluand and commissioner, and provide trusted, actionable feedback and support. Conversely, we believe the trait of independence would be low because, while they are external, they need to be able to have a collaborative working relationship. The trait of curiosity would be high as evaluators need to be open to new lines of inquiry and explore issues that may not have been identified at the outset. Similarly, the trait of flexibility would be high to enable responsiveness to changing priorities and to where evidence would have the most impact. The trait of communication would likely be medium as they are unlikely to be involved in knowledge sharing, but may provide advice and support to share knowledge effectively. The trait of perspective is also likely to be medium, as Critical Friends would need to look at both granular issues and some big-picture aspects in order to generate specific recommendations as well as make more generalisable evidence from other sources meaningful.

Educator

The role of Educator is well captured by Patton (2002), with the understanding that every interaction in an evaluation can be considered a teaching moment. An evaluator who plays the Educator role capitalises on various moments, not just formal reporting, to educate and support evaluand staff in a range of ways. The evaluator could also be considered a co-learner or knowledge broker, someone who conveys information to facilitate understanding and creates a favourable environment for sharing and learning.

An evaluator in an Educator role may share learning on the evaluation process, because an organisation’s decision to have an evaluation does not mean there is a practical understanding of what an evaluation is and what it can deliver. Whilst playing such a role, evaluators may seek to build evaluation capacity or develop an evaluative learning culture within an organisation. The other way an evaluator can act as an Educator is by supporting an evaluand to learn from evaluative evidence and facilitating lesson learning to support decision-making directly related to the intervention. An Educator will likely play an important role during results dissemination, communicating lessons learned in a way that can support changes to practices. An Educator role is well suited to all the stages of the evaluation life cycle, from design and scoping through data collection and reporting to lesson learning.

We believe that the trait of communication would be turned up for an Educator, given their support for facilitating and sharing learning. Collaboration would also need to be high, working with the evaluand and commissioner to support effective lesson learning. The flexibility dial would probably also be turned up as the Educator may have to change tactics to share the most effective lessons or focus on different messages. A broader perspective would be needed as the evaluator has to focus on the bigger picture to facilitate generalisable learning. On the other hand, independence may be lower as an Educator may need to be closer to the evaluand to understand contextual factors about what evaluation insights may be most useful.

It could be argued that an Educator plays a similar role to an Advocate, but a key difference is that an Educator will not only think about how to influence the intervention and outcomes directly, but also about what can be learned more widely. Another key difference is that an Educator takes a neutral perspective towards sharing knowledge, whereas an Advocate may be more selective in information sharing to further outcome achievement and influence. For example, an Educator may consider what is useful to the wider community and share lessons more broadly, or focus on how to integrate evaluative thinking and tools in organisational culture. Educators may also play a larger role than an Advocate in communicating and facilitating lesson learning by evaluand personnel to support wider use of evaluation insights.

Methodologist

This role draws on the work of Campbell (1991), where evaluators are encouraged to design research that will eliminate biases and focus solely on identifying causal links between an evaluand and potential outcomes. This role underlines the roots of the evaluation field in the social sciences. The Methodologist focuses on developing a strong research design to give confidence in the results generated and to ensure they can be replicated. The Methodologist is distanced from evaluand stakeholders to minimise bias, ensuring high-quality research design and evaluation results based on the evidence provided. The Methodologist does not focus on applying value judgements to the evaluand (like the Judge), on facilitating learning, or on selecting the evidence that would be most useful for uptake (like the Advocate, Critical Friend or Educator), as this may weaken the credibility of the findings. While this role is methods neutral, a Methodologist would probably not be well suited to a participatory or collaborative design process.

The Methodologist role is also best deployed when focusing on the outcomes of an intervention, rather than exploring other lines of inquiry, such as how an intervention has been implemented or how it could be adapted to new contexts. This means the Methodologist role is most appropriate at the evaluation design, data collection and analysis stages of an outcome- or impact-focused evaluation. This role is probably best played by an external evaluator who will not be influenced (or biased) by existing knowledge.

We identified the trait of independence as high for a Methodologist, emphasising the distance needed to reduce bias. The traits of flexibility and curiosity would likely be low given their strict adherence to a methodology once it has been agreed and the limited scope to explore emerging lines of inquiry. Similarly, the traits of collaboration and communication would be low given the focus on causal inference and generation of empirical evidence. The trait of perspective would also be more granular (or turned down) given their focus on identifying the causal link between perceived outcomes and the intervention.

Theorist

The last role we propose is that of the Theorist: an evaluator who seeks to understand how the results of an intervention depend on contextual, organisational and cultural factors. This role is useful to help explain what factors are contributing to the achievement of specific outcomes and to enable replication of an intervention in other contexts or to address other challenges (McClintock, 1990). This role is often inherent in the way in which theory-based evaluators conduct their work and includes identifying the intermediate causal processes that may produce specific negative or positive outcomes, including contributing factors. In this way, the Theorist is different from the Methodologist as they will look beyond just causal relationships between evaluand and outcomes, for example by identifying other factors that may have contributed to change.

The Theorist role can be played by both internal and external evaluators, is perhaps most useful during the design stage of an evaluation and can underpin support during intervention design to help the evaluand articulate intervention theories.

Theorists need to be highly collaborative with the evaluand, working in partnership to identify theories of change and potential contributing factors. This means that Theorists are likely to be less independent. Their flexibility trait would be turned up, given the need to articulate new and emerging lines of inquiry and identify new factors that could influence outcomes. The communication and curiosity traits would likely be influenced by the evaluand and commissioner and could be either turned up or turned down. Finally, the perspective trait is likely to focus on the bigger picture given the importance of integrating external factors, but may be set at a granular level for specific intervention theories.

Practitioner perspectives

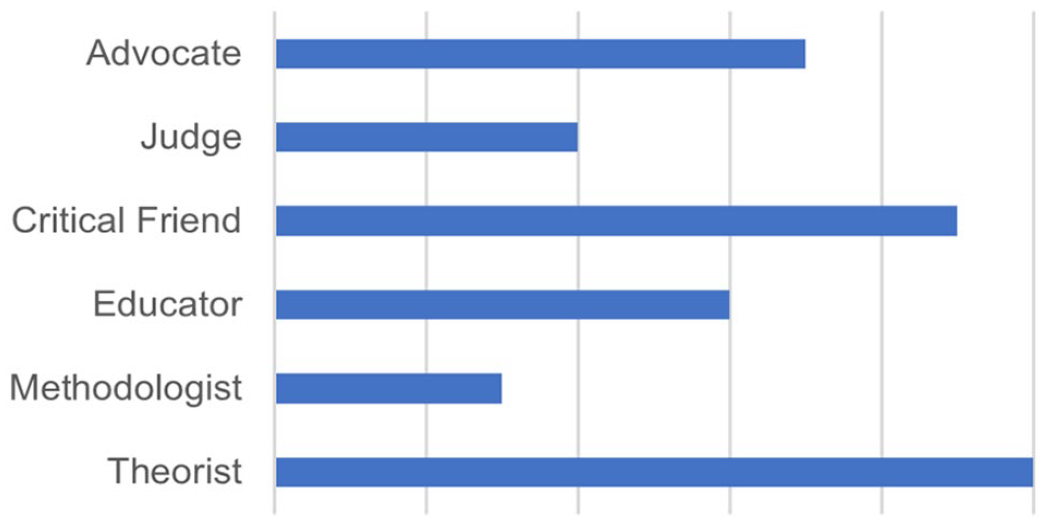

Among our live data participants, the Theorist was the role most commonly played, closely followed by the Critical Friend. In contrast, participants felt they had rarely played the roles of Judge or Methodologist, as shown in Figure 3.

Figure 3. Evaluator roles played by survey participants.

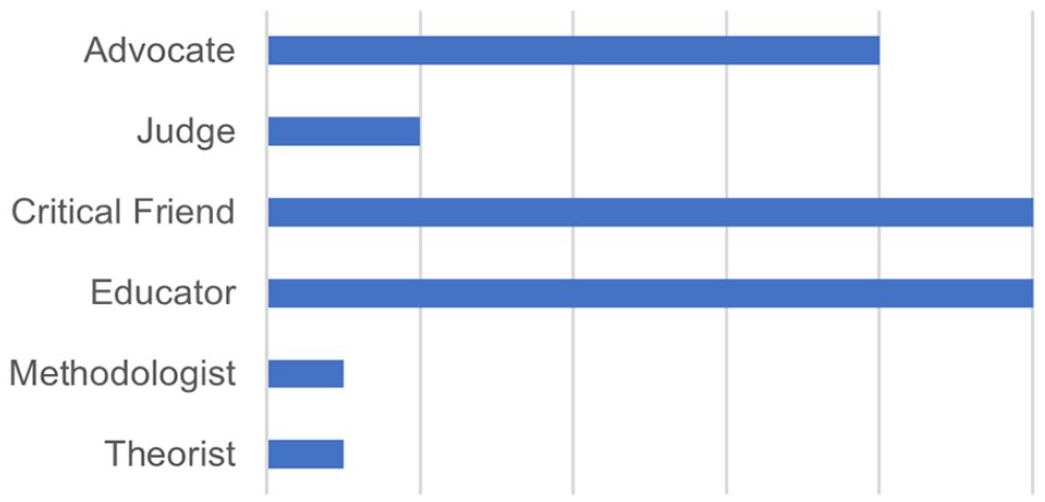

With one exception, participants also agreed that an evaluator’s role should change depending on the subject matter. Alongside these results, participants identified the Critical Friend and Educator as being 10 times more important than the Methodologist or Theorist when considering the role of the evaluator in today’s context. Closely behind these roles was the Advocate, with the Judge falling far short, as indicated in Figure 4. While our participants were few and mainly made up of practitioners, these results highlight an important perception that evaluators have a responsibility beyond results measurement and value judgements, and that evaluators can and should be a driving force in influencing change.

Figure 4. Ranking of evaluator roles by importance.

Conclusion

It is clear from both the available literature and wider practitioner experience that there is no one evaluation role that is ‘right’, but rather there are a series of potential roles based on context, the individual experiences of the evaluator and the requirements of each evaluation. We believe it could be useful for key evaluation stakeholders to consider the types of evaluation role best suited to individual assignments and phases and be explicit about the most effective role. To do so, a common language and consistent understanding of the roles and related traits is necessary and could enhance the overall effectiveness of evaluations. The above conceptualisation of evaluation roles is just one way to envision the roles an evaluator might play. Our intention in describing them is to contribute to the codifying of these roles and provide the beginnings of a lexicon for evaluators, evaluands and commissioners. We do not propose that this list of roles is in itself complete, but rather offer it as an initial set of definitions based on our own experience, complemented by practical guidance about when and how each role is best suited.

Further to this, providing the traits which define the role played allows all evaluation parties to better understand the scope of the evaluator’s work and their integration within an intervention. As outlined above, there are trade-offs when changing the level of any of these traits. However, describing them in common language can facilitate greater understanding between the parties as to how different requests of an evaluator may influence the role they play, or the role they are capable of playing. It is our hope that establishing this shared language will increase engagement and productivity, while reducing potential tensions or misalignment of expectations.

As roles will change throughout an evaluation, this conceptualisation could also help in creating consensus across and between practitioners and clients about necessary adaptations and how they might influence evaluation results. Expanding the scope of a Judge by dialling up the curiosity trait, for example, may push the evaluator into more of a Critical Friend role and have a corresponding impact on the evaluator’s independence or perspective. Such a shared language could be helpful in ensuring an evaluand or commissioner understands when and how an evaluator may be able to be more collaborative versus when they may need to be more independent, and how that may be influenced by their own expectations of the evaluator.

The evaluation field continues to evolve, with the appearance of new methods, approaches and tools. Since the evaluation field emerged, there has been no single consensus on what an evaluation is, nor should there be, as evaluations are inherently pluralistic and diverse, and must meet different needs. We as evaluators should welcome this complexity and continue to develop the profession, but it can be challenging for non-evaluators to engage effectively. Given the focus in the past decade on formalising evaluation competencies, there is an opportunity for the evaluation community to continue to transform and professionalise the evaluation field. As we have spent more time as a community identifying what is needed to be a good evaluator, perhaps now it is time to continue to be transformational and formalise the different roles, or ‘hats’, an evaluator must wear, thereby furthering our own community of practice.

From our perspective, the answer to the proposed question, about what role evaluators should play to be effective evaluators, is twofold. First, as described above, we believe evaluators must be able to play multiple roles across and within evaluations to respond to different or changing requirements. The image of the evaluator’s many hats is somewhat clichéd, but it remains accurate regardless of the context. The Theorist, Judge and Advocate all have different parts to play in supporting transformational change, and it is ultimately up to the evaluation parties to agree on the role that best suits their objectives. We believe in the value of evaluation teams composed of individual evaluators who can be deployed to play different roles and draw on different traits at different times, as it is unlikely that a single individual can play all roles effectively or wear all the hats at the same time. Teams of evaluators playing different roles can be an effective way to combine and deliver multiple benefits and mitigate trade-offs when defining evaluation requirements.

The second answer is that all evaluators should play the role of Educators. Evaluators should seek to maximise the benefits of each individual evaluation, and the Educator role is often one way to do so. We can also further the practice of evaluation by developing, supporting and delivering wider reflections, lessons and awareness about what evaluations can deliver and the range of roles evaluators can play. To transform our own field and contribute to transformational change, we must share our understanding and learning more widely, informing the development of effective and even transformative evaluator roles that continue to contribute to addressing modern challenges.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.