Abstract

The evidence generated and used in development cooperation has changed remarkably over the last decades. When it comes to the field of democracy support, these developments have been less significant. Routinised, evidence-based programming is far from a reality here. Compared to other fields, the goals of the interventions and assumed theories of change remain underspecified. Under these circumstances, evaluating and learning is difficult, and as a result, evidence gaps remain large and the translation of evidence into action often unsuccessful. This is particularly dramatic at a time when this field is regaining attention amid global autocratisation trends. In this article, we analyse the specific barriers and challenges democracy support faces to generate and use evidence. Furthermore, we identify evidence gaps and propose impact-oriented accompanying research as an evaluation approach that can make a significant contribution towards advancing the evidence agenda in this field.

Keywords

Introduction

Evaluation practice in development cooperation has changed rapidly over the last decades (White, 2019). Today, both the body of existing evidence and the variety of methods used to generate it have grown and improved remarkably (Manning et al., 2023; Reinertsen et al., 2022). Yet significant knowledge gaps remain, and comparable, generalisable knowledge about what works in development policy is still lacking. Most strikingly, where evidence is available, it is often underused, and development actors too rarely live up to their proclaimed goal of being evidence-based. As a result, millions of dollars are spent every year without solid proof of impact, even though anyone claiming impact can reasonably be expected to provide a solid empirical basis for that claim. In international support to democracy, or what many call ‘democracy aid’, both the lack of evidence and the underuse of existing evidence are particularly acute. 1

Democracy support represents a substantial share of the development cooperation budget. According to Creditor Reporting System (CRS) data, more than 6.9 billion US dollars were disbursed as democracy aid in 2020, around 4 per cent of all Official Development Assistance (ODA) flows that year. However, this aggregate masks considerable heterogeneity among individual donors. For the World Bank, for instance, the share is well below 1 per cent, whereas for Sweden it reaches 20 per cent; for major donors such as Norway and the United States, it exceeds 10 per cent. 2 Moreover, the ‘real’ volume of democracy aid disbursed is likely to be higher because the field has a substantial grey zone. For example, sectoral programmes often include elements of democracy support, such as fostering political participation, but these are neither labelled nor reported as such (Carothers, 2009; Freyburg et al., 2011).

Given the relevance of democracy aid in development budgets and its high political salience, it is surprising how little is systematically known about the effectiveness of the measures being taken, and it is irresponsible not to find out (Gisselquist et al., 2021). Broadly speaking, the limited existing evidence suggests that democracy aid ‘supports rather than hinders democracy building around the world, (. . .) and that democracy aid is more associated with positive impact on democracy than developmental aid’ (Gisselquist et al., 2021: 14). However, many questions remain open about the causal pathways through which democracy aid works, as well as about the circumstances and types of intervention that are particularly effective within the field. To make matters more difficult, this evidence is not only scarce but also partly contradictory (Dodsworth and Cheeseman, 2018a).

Two complementary observations make it even more urgent to take a closer look at the evaluation of democracy support. First, societies around the world are experiencing a strong and sustained autocratisation trend for the first time since the global spread of democracy that followed the fall of the Soviet Union (Hellmeier et al., 2021; Wiebrecht et al., 2023). As a consequence, donor awareness and demands for more adequate international support to democratisation, as well as for protection against autocratisation, have recently been increasing (Carothers, 2020; Godfrey and Youngs, 2021; Leininger, 2022). 3 If democracy promotion is again receiving more attention, it is all the more imperative to find out what works and what does not. Second, improving our knowledge about what is effective in supporting and protecting democracy is urgent because democracy matters, not only intrinsically but also practically as an enabler of development outcomes (Dodsworth and Ramshaw, 2021). Empirical evidence indicates that democratic regimes are conducive to, and supportive of, improvements in most valued development outcomes (Gerring et al., 2022; Knutsen, 2021). These include uncontroversial performance outcomes, for instance in the health, education and climate sectors, but also outcomes we consider desirable on normative grounds, such as respect for human rights and transparency (Harding, 2019; Le Quéré et al., 2019; Pieters et al., 2016).

One fundamental reason why the evidence base for democracy support is scarce is that organisations in this field rarely commission ‘rigorous, independent evaluations of their work’ (Dodsworth and Cheeseman, 2018a: 307). Most democracy evaluations do not combine rigorous impact evaluation methods, such as (semi-)experimental designs, with qualitative methods. 4 For example, the majority of the approximately 1,190 sources on democracy in the OECD’s DAC Evaluation Resource Centre (DEReC) are qualitative evaluations or literature reviews. 5 Moreover, according to the database of the International Initiative for Impact Evaluation (3ie), the number of impact evaluations in public administration (834, a minority of which focus on democracy) contrasts sharply with that in other sectors such as health (5,359), agriculture (1,764) and social protection (1,491). 6

In this article, we argue that the paucity of rigorous evaluations of democracy aid stems from a more profound issue: there is deep scepticism about the appropriateness of applying mainstream impact evaluation methods to study the impact of democracy aid (Dodsworth and Cheeseman, 2018b; Funk et al., 2018; Gertler et al., 2016; Kumar, 2013: 2). Practitioners and policy-makers argue that because democracy-promotion programmes are linked to political power dynamics, they differ from other development programmes and are therefore less amenable to conventional evaluation (Kumar, 2013: 3). This perception of the ‘singularity of democracy support’ affects not only the amount of evidence that is generated but also attitudes towards the use of this evidence.

The problem with assertions about the singularity and ‘unevaluability’ of democracy support interventions is that they claim universality across the field. Of course, the rigorous evaluation of some democracy support programmes poses specific methodological challenges. For example, when there are only very few units of analysis, experimental approaches are not feasible. The question is to what extent such complexities apply to all interventions designed to foster democracy. We see no singularity that would make rigorous evaluation impossible (e.g. Finkel and Lim, 2021; Hyde et al., 2023). Where such complexities do apply, qualitative methods, or a mix of rigorous impact evaluation and qualitative approaches, may be the better option. Some interventions, then, will not be amenable to evaluation with rigorous methods. Others, however, might be, particularly for testing the microfoundations of grand theories about democracy support (Hyde, 2010). From this perspective, the situation is in fact very similar to that in other fields of development cooperation.

Against this background, this article answers three questions: (i) What are the main community barriers and evaluation challenges to increased evidence generation and evidence use in democracy support? (ii) What are the major evidence gaps in the democracy support field? (iii) What should evaluations look like to overcome these barriers and challenges and help close the gaps in evidence generation and evidence use in this field?

The remainder of this article is organised as follows. In the next section (‘Barriers’), we discuss the particular perceptual and cultural barriers within the community that the evaluation of democracy support faces. 7 These barriers reinforce evaluation challenges, which we address in the section ‘Evidence: What do we (not) know about the effects of democracy support?’. There, we take stock of existing evidence on democracy aid, identify evaluation challenges and highlight specific knowledge gaps that are particularly problematic for informing development cooperation planning. The section on impact-oriented accompanying research then introduces this approach, which resonates with existing approaches such as developmental evaluation (e.g. Goertzen et al., 2021) or trailing research (e.g. Finne et al., 1995). The approach is characterised by a strong commitment to mixed methods, combining quantitative and qualitative methods of impact assessment, and by deep and sustained cooperation between the evaluators and the implementing agent. We discuss why these characteristics are essential to address and reconcile some of the barriers and challenges identified earlier. We conclude with an outlook on the implications for the political economy of democracy support evaluation and for the increasingly hostile environment towards democracy in times of autocratisation.

Barriers: What is different about the evaluation of democracy support?

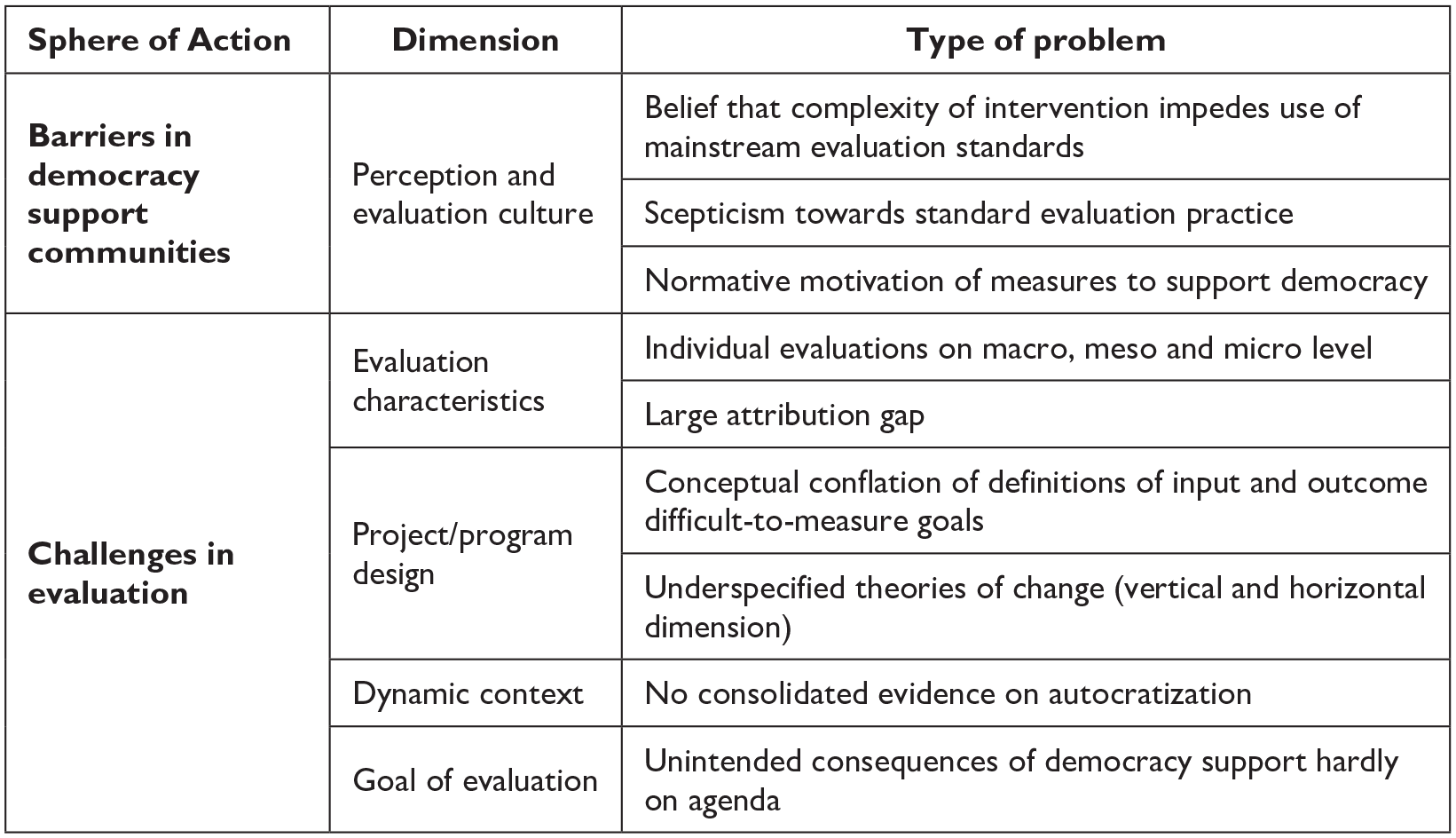

A common debate among practitioners, policy-makers and evaluators is whether there is something distinct about the evaluation of democracy support when compared to other development sectors (e.g. Kumar, 2013). We have identified three main barriers to the use of rigorous impact evaluation that originate from the democracy support community (see also the upper part of Figure 1).

Figure 1. Main barriers and challenges to impact evaluation in democracy support.

Strong beliefs in the inappropriateness of mainstream evaluation standards

Those who hold that democracy support is particularly difficult to evaluate rigorously point to the inherently political nature of its goals, the volatility of the political context with and through which it operates, and the unreliable cause-and-effect relationships in this field (e.g. Oakley, 2022). They often describe democracy support interventions as ‘complex’, which implicitly suggests that interventions in other sectors are ‘simpler’. 8 There is certainly some truth to these claims. Democracy support is a particularly demanding field for various reasons (e.g. Kumar, 2013: Chapter 1). But this should not be used as an excuse to bypass standards or, in extreme cases, to claim that evaluation is impossible. In particular, it is highly misleading to assume that interventions in any given field are, by definition, either complex or simple. As in other fields, some of the interventions labelled ‘democracy support’ will be simple, and others will be complex. It is therefore wrong to subject democracy aid as a whole to an evaluation standard based on a blanket claim of complexity.

Scepticism towards standard evaluation practice

Standard evaluation practice (especially impact assessment) has come to be seen as unhelpful in democracy support. There is a perception that common evaluation practices cannot respond to the complexity and adaptive implementation that this field often requires, and that common methods are unsuited to measuring the impact of democracy support (see the Discussion section in Moehler, 2010; on adaptive approaches, see Roll, 2021). These arguments and perceptions were shared and systematically recorded during two workshops on the impact measurement of governance programmes in Bonn (2018) and Berlin (2019), co-organised by the German Development Institute. 9, 10 Furthermore, a systematic collection of reasons for the reluctance to use impact evaluation in German development cooperation revealed, among other things, that demand for Rigorous Impact Evaluation (RIE) is low and methodological challenges are high. 11 There are exceptions, such as the United States Agency for International Development (USAID) Impact Evaluation Retrospective (USAID, 2021) and the Evidence in Governance and Politics (EGAP) network. 12 However, these initiatives have not had a comprehensive, structural impact on the agenda of democracy supporters (particularly outside the United States), much less changed the evaluation culture and mindsets in this field.

Normative motivation of measures to support democracy

Another factor shaping the evaluation culture in this field is that, in many cases, the measures applied are based on, and defended by, strong normative or even ideological motivations (Oakley, 2022). When policy-makers and organisations act out of conviction, this naturally undermines the perceived need to rely on evidence. This tendency to defend measures on normative rather than empirical grounds often leads to less self-criticism when designing interventions and less openness to learning whether something has worked.

In sum, there are significant barriers within the community of people working in democracy support that magnify the known challenges faced by evaluations in this field. These barriers make it difficult not only to generate evidence in this area but also to use whatever evidence is generated. This appears to have set off a vicious cycle. The cultural barriers to evaluation result in evidence that is less appealing and less useful for learning, programming and accountability purposes. It is easy to see how this has fostered a culture that is sceptical of, and resistant to, rigorous evaluation. As a result, where evaluation is carried out, it is made more difficult (and, to some extent, more confrontational).

Evidence: What do we (not) know about the effects of democracy support?

In what follows, we illustrate the specific challenges in a number of evaluation areas and summarise the key results of impact evaluations of democracy support (see also the lower part of Figure 1). The persistence of these challenges is due in part to the barriers described earlier. The discussion is based on an illustrative review of the main findings on the effects and impact of democracy support. The review covers academic analyses and evaluations published either in scientific outlets or by donor organisations themselves. For an excellent synthesis of findings from quantitative analyses, we refer readers to the recent Expert Group for Aid Studies (EBA) study on democracy aid (Niño-Zarazúa et al., 2020). Overall, this review illustrates where the field stands and where reforms in evaluation practice are needed.

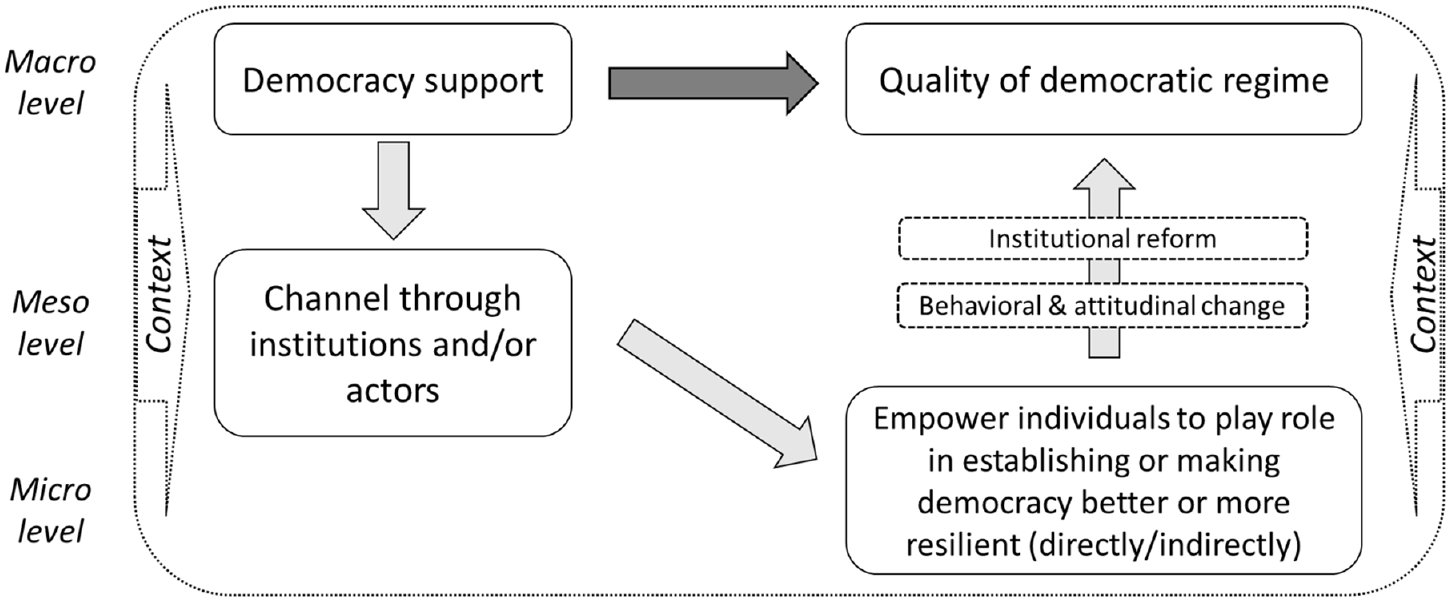

Evaluation characteristics I: Impact evaluations on different levels

Broadly, the evidence about what works and what does not in international democracy support can be divided into analyses and evaluations that focus on different levels of a democratic regime: macro, meso and micro. More specifically, they tend to focus exclusively on one of these levels. This can be explained by the underlying causal assumptions of an intervention and the level that the intervention most explicitly targets (see Figure 2; Lust and Waldner, 2015). At the macro level, the main interest is whether international democracy aid has an impact on the overall democratic quality of a regime. Here, goals range from supporting the democratisation of autocratic regimes to fostering the deepening of democracy. At the meso level, direct democracy support interventions focus on institutional change and agency. Accordingly, democracy support is targeted at, and channelled through, institutions such as ministries, parliaments and non-governmental organisations. At the micro level, democratic change is expected to come about through the empowerment of individuals. These interventions aim either to strengthen individuals as leaders who support the establishment or reform of democratic institutions, or to promote behavioural and attitudinal changes that help improve democratic processes and foster a democratic political culture. More recently, in the face of autocratisation, support for individual human rights defenders and activists has become common (Hyde, 2020). Finally, indirect democracy support focusses on structural factors that influence the quality of democracy, such as economic development, the welfare system and/or urbanisation (see Conroy-Krutz and Frantz, 2017; Niño-Zarazúa et al., 2020, Figure S1).

Figure 2. Generic representation of levels and causal assumptions in democracy support.

Evaluation characteristics II: Large attribution gap despite positive impact at the macro level

At the macro level, a large number of cross-national analyses examine the effects of democracy support. Their generalisable finding is that democracy support makes a significant contribution to the overall quality of democracy (e.g. Bosin, 2012; Dietrich and Wright, 2015; Finkel et al., 2007; Gafuri, 2022; Gisselquist et al., 2021). By contrast, most studies on the relationship between general aid and democracy find either no effect or a positive effect only when foreign aid is conditional (Carnegie and Marinov, 2017). Some studies even show that general ODA can have the opposite effect and harm democracy (Bräutigam and Knack, 2004; Djankov et al., 2008; Knack, 2004; Licht, 2010). These studies are usually regression analyses based on aggregate data. They often discuss plausible causal mechanisms to derive their hypotheses, but given the limitations of their research design they cannot make strong causal claims about the impact of aid and, by design, cannot say anything about the impact of individual interventions.

In any case, the overall positive correlations create high expectations, yet the quality of democracy has declined worldwide despite democracy promotion. This may reinforce the idea that democracy support is too complex to be rigorously evaluated. Moreover, these opposing developments can only be understood and explained if impact studies better link the different levels of analysis.

The demonstrated effects of democracy support on the quality of democracy at the macro level point to a particular problem with how evaluation is conducted in this field: the attribution gap is particularly large for many projects (Green and Kohl, 2007: 157). Democracy support faces a specific problem of scale and systemic interaction. Support is commonly given to specific elements of democracy on the assumption that they will contribute to a more democratic regime as a whole (FCG Sweden et al., 2021). For example, supporting free and fair elections may improve the electoral process and its outcomes (sketched generically in Figure 2), but it does not necessarily improve the democratic system as a whole. This may create a tendency to be vague about mechanisms in order to hide the uncertainties. In addition, there is often pressure in this field to overcommit and to be highly ambitious (and potentially unrealistic) about what can be achieved (Dodsworth and Cheeseman, 2018a). Such ambition has long been at odds with the ability to attribute impact, and it has been a major cause of the field’s defensive culture towards evaluation.

Project design I: Conceptual conflation leading to difficult-to-measure goals

A major challenge – and a consequence of the different levels of democracy support – is the use of diverse concepts to describe the measures and outcomes of interventions. First, it is often difficult to measure interventions consistently because many of the concepts used in the field are poorly defined. On the input side, the concepts used to label democracy support vary across donors, programmes and contexts. For example, the distinction between democracy support and good governance is unclear. The OECD’s reporting system dilutes the meaning of democracy aid: measures for financial good governance, for example, are sometimes counted as ‘democracy aid’, and good governance sometimes implicitly stands for ‘democratic governance’ and sometimes does not. As a result, there is little standardisation of the terms and measures associated with democracy aid.

On the outcome or impact side, donors have different understandings of democracy and democratisation. These range from more technical notions of good or inclusive governance to democracy, democratic governance or individual elements of a democratic political regime (FCG Sweden et al., 2021). There are several reasons why definitions remain inadequate. The main reason is that the principle of ownership with regard to the recipients of democracy support, together with notions about the contextual nature of political systems, has fostered the idea that no blueprints are available and that democracy must be defined by those who build or defend it (Leininger and Richter, in press). However, measurable goals and outcomes could be defined together with partners and then used to inform project designs. In any case, if goals remain ill-defined, and thus difficult to measure, impact evaluation becomes challenging.

Project design II: Underspecified theories of change persist while causal inference gains ground

Causal inference at the micro level based on experimental designs has recently become more popular in research and evaluation practice. Such studies show positive effects on intended outcomes, namely institutional reforms as well as behavioural and attitudinal changes (e.g. Fishkin et al., 2021; USAID, 2021; Zeeuw, 2005). For example, civic education in the Democratic Republic of the Congo had negative effects on satisfaction with democracy but positive effects on non-electoral participation and on democratic orientations such as knowledge, efficacy and political tolerance (Finkel and Lim, 2021). In particular, it had a positive effect on external efficacy, that is, the experience that an individual’s action often makes a difference to a democratic process or outcome; this was also true for a project in Kenya (John and Sjoberg, 2020). However, the effects were greater among voters who had voted for the winning party. In sum, the results from Kenya and the Democratic Republic of the Congo are positive. However, they differ in nuances that stem not only from different interventions and designs but also from different country contexts. Beyond demonstrating the need to link the theory of change to the broader context of an intervention in a regime, this also shows the need to improve theories of change by linking the results of impact evaluations in similar areas.

All studies below the macro level focus on outcomes that refer to specific elements of democracy, particularly electoral institutions (e.g. Aker et al., 2017; Brancati, 2014). What is assumed – but is neither an element of most theories of change nor analysed – is how these changes contribute to the overall quality of a democratic regime. Thus, theories of change do little to address the attribution gap between the overall impact of democracy promotion on the quality of democracy at the macro level and how individual interventions contribute to this change at the micro and meso levels (FCG Sweden et al., 2021; Hyde et al., 2023). The results of a disaggregated analysis of election assistance support the argument that there is a missing link in the theories of change: Steele et al. (2021) find that micro measures have no effect on individual indicators concerning the quality of elections, whereas total democracy aid has a positive effect on the overall quality of democracy.

To specify theories of change further, more evidence is needed about the effects of specific types of democracy support. In other words, which measures lead to the intended outcomes? Knowledge about this input side of democracy aid interventions is still fragmented because individual interventions are restricted to a specific set of approaches and tools (Lührmann et al., 2017). For example, diplomatic measures such as condemning elite behaviour or freezing diplomatic relations have different effects from development policies such as election-related training. Similarly, within development cooperation, different measures are evaluated separately, without considering their potential interactions. For instance, conditional budget support aimed at governance reforms (Faust et al., 2012; Molenaers et al., 2015) is likely to reinforce the training of officials or citizens. Initial studies also show that a combination of coercive measures, such as freezing aid, and cooperative measures, such as strengthening civil society organisations, has been effective in countering attempts to extend presidential term limits in African and Latin American countries (Leininger and Nowack, 2022; Nowack and Leininger, 2021). However, these insights have not yet reached approaches to impact evaluation, which should work towards assessing the impact of combinations of different measures.

Overall, theories of change – and in particular, the mechanisms that help close the attribution gaps in democracy support – are thus still fragmented, difficult to generalise and do not capture the systemic nature of democracy support. This resonates with Hyde et al., who observe that ‘[s]cholars and practitioners still possess a relatively limited understanding of the mechanisms through which democracy promotion is expected to operate in varying contexts and under circumstances frequently defined by conflicting foreign objectives’ (Hyde et al., 2023: 5). In addition, theories that focus on attitudes assume that more democratic attitudes contribute to more democracy in a regime, but that is not a given. Change can take place only when democratic attitudes are manifested in democratic actions. This is another missing – and often overlooked – link that should be considered in the causal chains of democracy support interventions. Vertical (at least micro-meso level) and horizontal connections need to be made to improve theories of change further.

Finally, a further challenge is how theories of change can do justice to the role that democracy supporters themselves play in political processes, a role that has consequences for democratic change. The theories of change underlying many democracy-promotion measures are based on conventional democratisation theories, which link certain factors or conditions to a democratic outcome (FCG Sweden et al., 2021; Steele et al., 2021). But even if these theoretical foundations are valid and plausible, they do not take into account that democracy support is always mediated through an intermediary channel (e.g. the relationship between donor and partner government). This is, of course, also true of all other sectors in development policy. However, it is particularly important here because democracy supporters are intervening in a political process in which they themselves take part (Leininger, 2010).

Dynamic context: Different types of context matter but open-ended regime change remains a challenge

Context is used in two ways for impact measurement and the evaluation of democracy support – either by identifying general types of context (e.g. level of democracy or economic development) or as specific domestic conditions, often in the specific reform processes of a country (Hyde et al., 2023). Overall, democracy support has a positive amplification effect in existing democratic regimes but a negative impact on democratic outcomes within autocracies (see, e.g. Dutta et al., 2013; Yuichi Kono and Montinola, 2009). Small-N analyses and evaluations explain that strong(er) democratic institutions are needed to amplify changes at the individual level (Berman, 2009).

Another strand of analyses and evaluations focuses on actors and actor constellations. For instance, support for civil society can contribute to democratisation if political settlements are inclusive (Jamal, 2012). However, political elites and settlements have also served as gatekeepers that impede democratic reforms or help maintain democratic facades. How external democracy supporters and local elites interact is thus an important factor shaping the effectiveness of democracy support (Groß and Grimm, 2014).

Social developments also matter, particularly violent conflict. Democracy support has been used as an approach to peacebuilding in post-conflict societies, and many evaluations and studies describe the difficulties that state fragility and post-conflict settlements create in such contexts. Despite this focus on state fragility, there has been no systematic analysis of post-conflict settings and how they influence the impact of democracy promotion (Fiedler et al., 2020; Jarstad and Belloni, 2012; Mross, 2019). It is also important to note that using democracy promotion as a peacebuilding tool makes the identification of causal mechanisms even more complex, since the primary goal is peace and democracy is only an intermediate step. In sum, to the best of our knowledge, neither typical actor constellations nor typical contexts have yet been systematically incorporated into the discussion on theories of change for specific interventions. Instead, interventions depend on country-specific decisions and on the legacies of the relationship between ‘external and internal’ actors.

Notwithstanding such classifications of typical contexts, democracy support and its evaluation must be viewed within a highly dynamic context. This also has implications for defining the goals, likely outcomes and theories of change of democracy support. The process-oriented nature of regime change is especially challenging in two ways. First, the current dynamics of political regimes across the globe point to a clear turn towards greater and more substantial autocratisation (Wiebrecht et al., 2023). Although a growing body of analysis is shedding light on autocratisation, a consolidated picture of the sequences, patterns and idiosyncrasies of autocratisation processes is still lacking. Relatedly, democracy supporters have not yet developed an established repertoire of practices to protect and defend democracy against autocratisation (Leininger, 2022). Second, autocratisation processes are open-ended and unfold in various areas and at various levels of democracy, sometimes in parallel with democratisation in other areas of the same system, with both affecting different aspects of democracy. Overall, these contextual aspects compound the problems of ill-defined goals and underspecified theories of change in democracy aid, which in turn make evaluation more difficult. Moreover, whether democracy aid has made democracies more resilient and helped counter autocratisation remains a major evidence gap (Leininger and Richter, in press).

Goal of evaluation: Unintended effects of democracy support

The majority of evaluations and studies tend to treat democracy support as normatively right and good. As a result, they often ignore unintended counterproductive effects. By now, a substantial number of studies have identified how democracy support can prevent (further) democratisation (Brownlee, 2012). In fact, some studies indicate that democracy support interventions have contributed to undemocratic politics (e.g. corruption) (Richter and Wunsch, 2019) and have even reinforced authoritarianism (Schuetze, 2019). Democracy support can thus also be a potential cause of de-democratisation. In addition, the impact measurement of individual projects may reveal the occasional unintended consequence, but there is no comparable, systematic evidence of such unintended effects. Evaluation designs and approaches for measuring the impact of democracy support need to be flexible and open enough to identify such effects.

In sum, there is minimal evidence about the impact of democracy support, compared to other fields in international cooperation. In order to overcome the barriers and challenges to impact evaluation, more appropriate evaluation approaches need to be applied.

Impact-oriented accompanying research: A format to address the main barriers to evidence generation and use in democracy support

The discussion in the previous sections shows that there are considerable difficulties in proving the impact of democracy support, identifying what works and what does not, and aggregating that knowledge. As discussed earlier, this is due to the limited generation and use of evidence, which can partly be explained by the characteristics of this field but is also connected to a particularly strong culture of scepticism towards impact evaluation (see Figure 1). In order to fill the evidence gap and foster more evidence-based programming in democracy support, three aspects are essential.

First, there is a need to change the sceptical perceptions that many practitioners and policy-makers in this field hold towards evaluation, both in terms of the transferability of evidence and the degree to which evaluation methods can address their interests. Practitioners in general do not always perceive evaluation positively, yet resistance to evaluation practice is particularly strong in the democracy support field. This is probably largely connected to the fact that the bar to be reached is ambiguous precisely because of unclear goals and causal pathways. As such, the uncertainty about what one is being evaluated against and the perceived low added value of evaluations do not help in using evaluations to learn and to engage critically with one’s own work. Instead, the dynamic is one of fiercely defending one’s own past decisions.

Second, regarding programming, more clarity in the definition of goals is required. Too often, democracy support interventions lack a clear definition of the goals being aimed for. Such a definition is essential for the proper programming of the intervention itself and for thinking about the theory of change. For example, interventions that aim to counter autocratisation can be assumed to have different rationales, targets and goals compared to those that aim to improve the quality of democracy or foster democratisation. Furthermore, a lack of clear goals complicates not only programming and evaluation at the intervention level but also the proper exchange and aggregation of knowledge beyond individual evaluations. In essence, it is difficult to learn if there is no clarity about what the interventions are actually supposed to achieve.

Third, partly as a result of the lack of clarity concerning goals, theories of change are poorly defined. It is therefore fundamental to demand and foster better-defined theories of change, both to force more self-reflection on the causal pathways assumed for the interventions and to enable a more granular analysis of them. This is a necessary condition for solving, for instance, the problem of evidence being generated at different levels of analysis. This evidence is complementary, a point that is often missed: the measures are commonly not geared towards different overarching goals but rather measure the impact at different steps of the same causal chain.

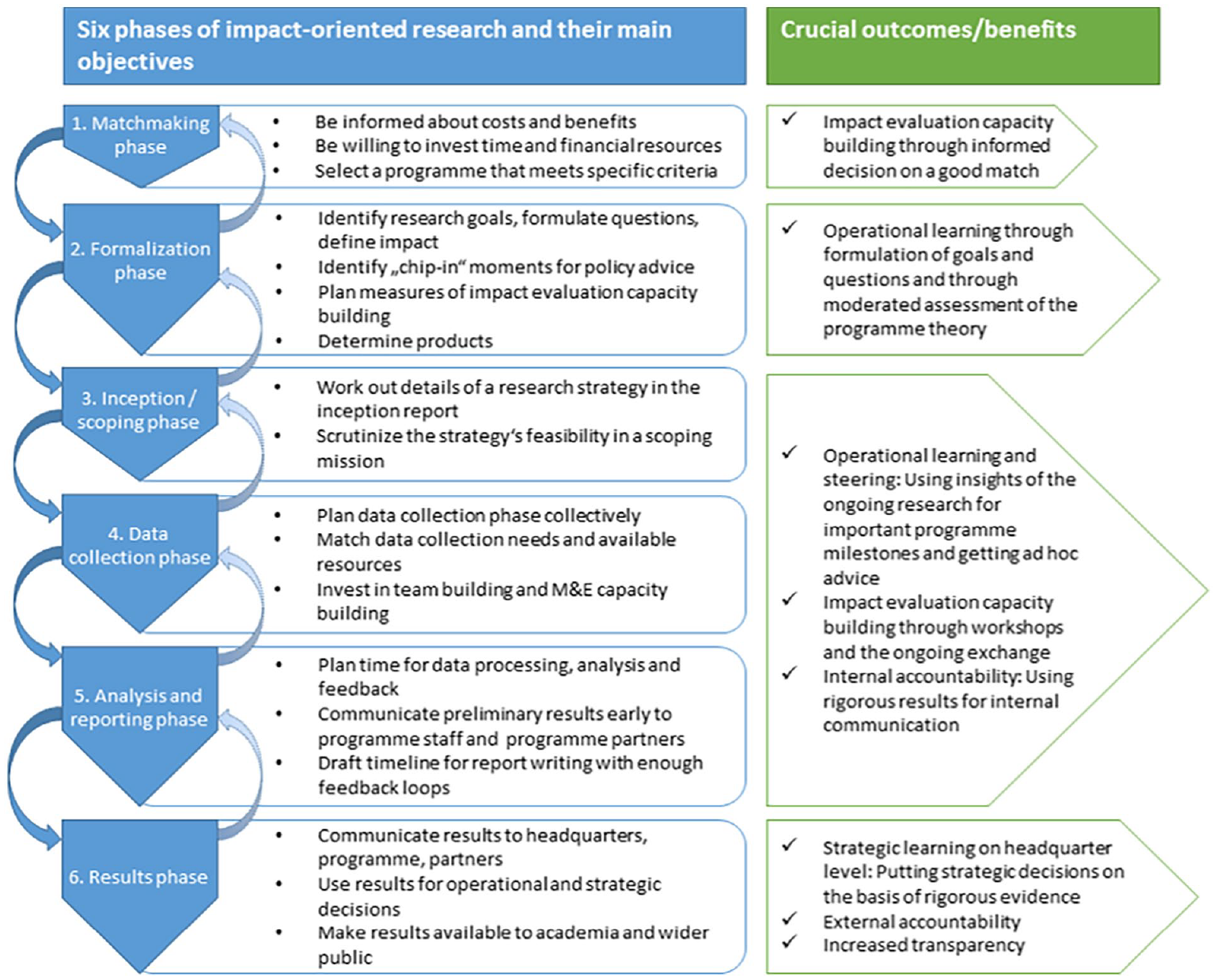

We argue that a format we call ‘impact-oriented accompanying research’ (Funk et al., 2018) is particularly attractive for breaking the vicious cycle between available evidence and the perceived usefulness of evidence, as described in the ‘Evidence: What do we (not) know about the effects of democracy support?’ section. Accompanying research can broadly be defined as the continuous scientific backstopping of any type of change process in the real world (see also Figure 3). It is a common approach in other applied sciences, but because it can be applied in so many different contexts, there is, to our knowledge, no standard methodological description of it. In particular, though not exclusively, impact-oriented accompanying research would help increase the learning value of evaluations (Reinertsen et al., 2022).

Figure 3. Six phases of impact-oriented accompanying research.

Concretely, impact-oriented accompanying research has two specific characteristics, joint knowledge creation and a mixed-methods approach, that are crucial for addressing the challenges to generating better evidence and making better use of evidence in democracy support. In particular, they help to address problems with existing evaluation characteristics, programme design and the dynamic context.

Joint knowledge creation: Building relationships

Accompanying research is defined by a close collaboration between the evaluators and the development organisation, with the goal of creating joint knowledge. Specifically, the model implies a commitment from both sides to collaborate over a medium- to long-term period (ideally, a whole programme cycle) and to engage in co-designing and co-implementing the evaluation. This iterative process serves to create joint knowledge.

An essential element is clarifying the assumed goals and causal mechanisms. The time invested in the design phase of the evaluation not only enables the evaluators to gain a better understanding of the measures and the context but also forces the programme implementers to explain, reflect on and, if need be, update their theories of change, and to be explicit about why they do what they do and why they do it the way they do. As a result, the programme implementers also develop a sense of what they will be evaluated against and what they will be able to learn, both of which are essential for avoiding resistance.

Furthermore, it is not just the length of the relationship that matters, but also its quality. Because those involved interact with each other over time and discuss, design and implement the evaluation together, significantly more opportunities are created to build a relationship of trust than in conventional evaluations. The format also provides a clear and explicit space for discussing needs and reasons for contextual adaptation, including political economy considerations. Many of these ideas echo earlier suggestions and discussions, such as those of Patton (1994) and Finne et al. (1995), about improving utilisation by rethinking how to involve evaluators and how to make results better, more usable and more meaningful. In this case, the joint implementation of the evaluation also plays a role. In addition to creating ownership within the implementing team, it has capacity-building effects, as practitioners develop stronger skills in the responsible use of evidence. For instance, they learn the basic intuition behind the applied designs as well as the most relevant assumptions on which these are based. Furthermore, they directly experience the challenges involved in implementing these designs properly. Overall, the format is structurally designed to address core issues for improving interactions between evaluators and practitioners (Abbarno and Bonoff, 2018), treating them not as nice-to-haves but as fundamental elements. We believe that this in-depth interaction does not necessarily increase the risk of co-option and loss of independence of the evaluators. Certain elements help to further minimise this risk formally (see Dodsworth and Cheeseman, 2018b). On a more fundamental level, the mutual understanding of expectations, fears and needs helps strengthen the image of evaluators as valuable (and independent) ‘critical friends’ (see Dodsworth and Cheeseman, 2018b).

Mixed-methods approach

The second core element of the format is of a methodological nature. Impact-oriented accompanying research relies on a strong commitment to mixed-methods approaches. The goal of combining methodological approaches is to make it possible for the team doing the assessment to make reliable statements about:

(a) whether the intervention had a quantifiable, statistically significant impact (if-question);

(b) the causal link between the intervention and the outcome (how-question); and

(c) the enabling factors or conditions of an impact (why-question).

To answer (a), we expect the evaluation design to include an impact assessment component employing experimental or quasi-experimental methods. To answer (b) and (c), more qualitatively oriented methodologies are applied. 13

The combination of these methodological approaches provides a comprehensive understanding of the impact that the intervention might have had and of its enabling, hindering or moderating factors. This enables learning not only for the context in which the evaluation is being implemented but also about the reasons why interventions fail, beyond simply identifying that they had no effect (e.g. see Rao et al., 2017). Furthermore, the combination of methods yields different types of insights that practitioners can use for different goals and over different time horizons. In this sense, the more quantitative and the more qualitative insights can be exploited differently when development practitioners make strategic or operational decisions (in the short and long term) and also for reporting and accountability purposes towards donors and the broader public.

Impact-oriented accompanying research consists of six phases that enable building impact-evaluation capacity as well as operational, steering and strategic learning (Figure 3). In our experience, the matchmaking phase is key because it demonstrates the willingness of both sides to invest time as well as human and financial resources.

It is important to underline that we do not claim all evaluation efforts should be channelled through this approach. For methodological reasons, some questions are not best addressed (and some cannot be addressed at all) with this approach. 14 But beyond the fact that impact-oriented accompanying research cannot be implemented to evaluate every intervention, we argue that, for efficiency reasons, it should not be implemented everywhere it could be. This approach represents a heavy investment of economic and human resources that needs to be strategically grounded. We therefore call upon development organisations to employ this approach wisely to analyse questions they consider particularly relevant. Along these lines, it is important to develop, both within each organisation and collectively in this field, a research agenda composed of pressing questions that require empirical scrutiny. Furthermore, it is crucial to set up financing schemes that enable projects to finance impact-oriented accompanying research without having to rely solely on their own, often already underfinanced, monitoring and evaluation budget line. The costs of impact-oriented accompanying research might overburden smaller democracy support projects in particular. However, the benefits of implementing this evaluation approach go beyond the project itself, and if well used, the results and insights can benefit many other projects. In this sense, large development organisations in particular should consider co-financing mechanisms that allow projects to implement this approach. Directly involving headquarters in the financing further creates an incentive to use the learning beyond the individual intervention evaluated and to foster peer learning and the dissemination of results.

Conclusion

The ‘evidence revolution’ in development cooperation did not get as far in the democracy support sector as it did in other sectors. Throughout this article, we have acknowledged that there are good reasons for this. Certainly, the complexity of the interventions in this field is likely to be higher than in others. However, it would be too simplistic to assume that this is always the case. Most importantly, we contend that the challenges at hand are being amplified by perceptions and beliefs within the field itself. These perceptions foster the use of weakly specified causal chains and concepts, and they explain the comparatively poor definition of goals and of what constitutes success. Unfortunately, relatively widespread resistance and scepticism towards evaluation practice (particularly impact evaluation) have made rigorous evaluation efforts more difficult. They have also contributed to a vicious cycle: poor specification leads to evaluability problems, which reinforce resistance and perceptions of poor usability.

The knowledge gaps in this field are larger than in other sectors, particularly when it comes to the aggregation of knowledge and the interaction between elements. We lack not only evidence about what works but also theories of how it should work.

We believe that more consistent and systematic use of impact-oriented accompanying research (Funk et al., 2018) can help break this cycle. This evaluation format is particularly well suited for this purpose. It allows for a strong methodological focus on context and causal mechanisms as well as systemic thinking. Furthermore, it recognises the need to adapt projects to sudden changes (Roll, 2021). Probably even more important is the fact that impact-oriented accompanying research relies on sustained and continuous cooperation between the evaluators and the evaluated, which is the key to overcoming resistance to evaluation and ensuring its usability and relevance. As such, the format is a generator of evidence, but it is also an ambassador for how practitioners can benefit from rigorous evaluation practices.

To respond to current autocratisation trends, there is a desperate need to learn more about what works in democracy support and under which circumstances. There is much bad news: although democracy promoters have decades of experience behind them and the topic has remained on the agenda, they have collectively missed the opportunity to create joint knowledge on what works and what does not work in supporting democratisation. The situation is even worse when it comes to the impact of democracy protection. The need to protect democracies from autocratisation in the Global North and South through international cooperation has only recently become a more pressing issue. The patterns and causes of autocratisation still need to be explored, which makes it even more difficult to build on established theories of change (Leininger, 2022). The sooner concerted efforts are made to learn about what works to protect democracy, the better. Despite the re-emergence of democracy support on international agendas, evaluation as such is not receiving more attention than before. As the Summit for Democracy 2023 in Washington demonstrated, most actors are focussed on the impacts and dividends of democracy and on new approaches to democracy support, and less on how such approaches should be evaluated. 15

However, there is also good news. Some of the challenges can be solved by the practitioner community itself, namely those who programme and implement these types of interventions. As democracy promotion regains its place in international politics, more and more forums are bringing together democracy promoters to discuss a common agenda. The Summit for Democracy to be hosted by South Korea in 2024, for example, is one such forum and a likely entry point for taking the evaluation agenda a step further and beginning to share knowledge on evaluation practices in democracy support. We also know of formats that can help overcome the resistance to evaluation. The learning agenda ahead is big, and many questions remain, both substantive and procedural, about how to promote and use more and better evidence in democracy promotion. But we do know where to start, and that is no small thing.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.