Abstract

This article is part of a larger project examining who calls themselves an evaluator and why, as well as how evaluators differ from non-evaluators. For the present article, 40 professionals doing applied work (e.g. evaluators, researchers) participated in hour-long semi-structured interviews covering their journey into the field, their applied practice, and their professional identity. The research questions were: What does the journey into the field look like for evaluators and similar professionals, and how do they describe the similarities and differences between evaluators and other similar professionals? Results showed that evaluators and non-evaluators have distinct journeys into the field. Furthermore, evaluators and other similar professionals describe the similarities and differences between them in comparable ways, although similar professionals also hold some misconceptions about evaluators and evaluation. This article contributes to the larger conversation on the professionalization of evaluation by helping to clarify the jurisdictional boundaries between evaluation and related fields.

Evaluation struggles to define its professional identity (Jacob and Boisvert, 2010), including what evaluation is (Wanzer, 2021) and who evaluators are. “There is no uniform definition of who is an ‘evaluator’” (Schwandt, 2015: 124), and little research has examined this question (Christie et al., 2014), which makes it difficult to communicate about evaluation both within and outside our profession (Levin-Rozalis and Shochot-Reich, 2008; Mason and Hunt, 2019; Montrosse-Moorhead et al., 2017). It also complicates efforts to differentiate ourselves from competing professions (Jacob and Boisvert, 2010; Picciotto, 2011), to the point that “more often than not [evaluators] bear another professional designation (economist, sociologist, psychologist, teacher, adviser, consultant, etc.)” (Picciotto, 2011: 171).

Patton (1988) argued that one indicator of successfully promoting evaluation as a profession is “an increase in the number and proportion of our membership who considers themselves first and foremost to be evaluators” (p. 88). The percentage of American Evaluation Association (AEA) members who consider themselves “evaluators” has risen from 31 percent in 1986 (Shadish and Epstein, 1987) to 71.7 percent in 2018 (AEA Member Survey Committee, 2018). Although most AEA members self-identified that way, Schwandt (2018) argues that evaluators still need to communicate who they are “as a way of continuing to be an evaluator” (p. 81). In other words, what does being an evaluator mean? Is it someone who self-refers as an evaluator, or simply anyone who evaluates (Levin-Rozalis and Shochot-Reich, 2008)?

This lack of clarity is the main impetus for the Evaluator Identity Project. Specifically, we seek to determine who self-refers as an evaluator and why, how evaluators and non-evaluators differ, and what characteristics define “evaluator identity.” This article, part of the larger Evaluator Identity Project, examines how evaluators and non-evaluators enter the field and how they view the similarities and differences between the two identities. We explored these questions by interviewing evaluators and non-evaluators, who provided critical information about their journeys into the field and their perceptions of the similarities and differences between various applied professions.

Professional identity in evaluation

The conceptual framework that shaped our study from start to finish was social identity theory. Social identity theory posits that a person’s sense of who they are depends on the groups to which they belong (Tajfel, 1982; Tajfel and Turner, 1986; Turner et al., 1987). Identifying with a group can therefore be a meaningful process that holds emotional significance and value for members. Applied to our study, social identity theory suggests that whether a person chooses to identify professionally as an evaluator will depend on several factors. Self-categorization theory, within the social identity tradition, suggests that individuals first define themselves as members of a group and then learn and adopt the group’s norms and behaviors, thereby becoming prototypical members of the group. Over time, group membership can provide emotional significance and become a source of self-esteem for individuals. Two further factors can influence whether individuals decide to identify as part of a group: entitativity (the perception of a group as having unity, coherence, and organization) and social comparisons with other salient referent groups.

One important social identity is professional identity, which is a multidimensional, context-bound concept (Hotho, 2008; Kirpal, 2004). A key component of a socially constructed profession is jurisdiction over its professional boundaries (Liu, 2018). In this article, “jurisdiction” refers to the domain of work over which a profession claims control because it brings unique expert knowledge to its practice (Abbott, 1988). Two aspects of boundary work are boundary-making (distinguishing one profession from another and claiming jurisdictional areas) and the permeability of boundaries.

For evaluation, this means clearly defining how it differs from similar activities (e.g. research; see Wanzer, 2021) and what it means to be an evaluator as opposed to a member of a similar occupation (e.g. researcher, auditor). It includes the process of becoming an evaluator and becoming part of the field of evaluation, as well as internalizing what it means to be an evaluator. Preliminary research has begun examining boundary-making in evaluation practice, particularly with a focus on the professionalization of the field (Jacob and Boisvert, 2010; Worthen, 1999). However, we contend that before professionalization can be discussed, it is important to first understand the profession of evaluation and the professional identity of evaluators.

Much of the literature on evaluators has focused on roles rather than identity. In the concluding chapter of Exploring Evaluator Role and Identity (Ryan and Schwandt, 2002), Schwandt (2002) says that “notions of role, identity, and self are entangled in the everyday language,” but “they are distinguishable for analytic purposes” (p. 193). He argues that role, identity, and self are relational. Volkov (2011) argues that evaluator roles “combine to form and affect the composite identity of the modern internal evaluator” (p. 37).

Several studies have explored evaluator identity specifically. Drawing on identity theory, Sturges (2014) interviewed 24 external evaluators about their identities and found that none had planned on becoming evaluators, but that evaluation was a “perfect fit,” albeit in different ways. Sturges (2014) identified four groups in the study: evaluators, postacademics working in evaluation, academic entrepreneurs who still called themselves evaluators, and layover evaluators who thought their evaluation work would be temporary. Furthermore, participants’ socialization into the field made them feel that protecting evaluation was important. Rogers et al. (2019) examined the identity of three internal evaluators working in the NGO sector. The three varied in how they came into the field and developed their evaluation skills and competence, and all invested time and anxiety in assuming an evaluator identity. Skousen (2017) similarly looked at how evaluators enter the field, finding that most “fall into” evaluation because they started working in or going to school for a tangential field, or took a job that required evaluation. Reid et al. (2020) examined the identity of evaluators of color and how identity intersected with their evaluation roles and practice. They found that a variety of factors (e.g. education, training, race/ethnicity, sector, years of experience) influenced their identities as evaluators and presented challenges to their evaluation practice. Taylor-Schiro—Biidabinikwe and Cram (2021) similarly investigated how the identities of evaluators working in advocacy and policy change both shaped and were shaped by their evaluation work. This aligns with Hopson (2002), who stated that “the identity of the evaluator shapes (and is shaped by) the evaluation” (p. 42).

These studies and others suggest that a variety of factors shape evaluator identity. Scriven (1996) suggested that “in order for people to call themselves evaluators . . . the one essential is their ability to do, and their practice of doing professionally demanding evaluation as their primary job responsibility” (p. 159). By this reasoning, how evaluators do evaluation work necessarily affects how they identify as evaluators. This includes how evaluators incorporate evaluation theory (Shadish, 1998, 2006; Shadish et al., 1991) and evaluation-specific methods such as the logic of evaluation (Gullickson, 2020); the roles they take in evaluation (Skolits et al., 2009; Volkov, 2011); how they think evaluatively (Fierro et al., 2018; Rogers et al., 2019; Vo et al., 2018); evaluator values (Gullickson and Hannum, 2019); and other aspects of how they practice and think about evaluation.

Professional associations can also socialize evaluators into the larger profession by providing the community with “a sense of stability, belonging, and values” (Hotho, 2008: 279). In Europe, the European Evaluation Society (EES) Evaluation Capabilities Framework (n.d.) and Statement on Methodological Diversity (2007) serve this socializing function. Statements by national Voluntary Organizations for Professional Evaluation (VOPEs) complement these EES documents and likewise socialize evaluators; examples include the UK Evaluation Society Guidelines for Good Practice in Evaluation (2013), the Evaluation Standards of the Swiss Evaluation Society (2001), and the Ukrainian Evaluation Association Standards (2016).

These socializing policy statements are common across VOPEs worldwide. In the United States, for example, the AEA socializes practitioners through guiding documents that include the AEA Guiding Principles for Evaluators (AEA, 2018b), the AEA Evaluator Competencies (AEA, 2018a), and the AEA Public Statement on Cultural Competence in Evaluation (AEA, 2011). Although not formally adopted by AEA, the Joint Committee on Standards for Educational Evaluation (JCSEE) Program Evaluation Standards (Yarbrough et al., 2011) serve as another socializing document of the profession.

In his article on evaluation science, Patton (2018) states that “how we [evaluators] identify ourselves, both individually and collectively, affects how we present ourselves to others and how they see us. How others view us has social, cultural, economic, academic, and political implications” (p. 197). Patton (2018) argues that evaluators should “assert our identity as evaluation scientists engaged in evaluation science” (p. 197), thereby

[enhancing] our credibility, responsibility, capability, utility, and effectiveness while communicating our role more clearly and credibly to those who value science but haven’t thought of evaluation as a scientific activity. Positioning evaluation as science may also have consequences for how evaluators are viewed, treated, perceived, and located in academic institutions, government agencies, and by funders and users of evaluation (p. 197).

Thus, it is important to understand how evaluators self-identify and how they communicate and assert that identity.

This article

To better understand evaluator identity, we interviewed 40 professionals doing applied work (e.g. evaluators, researchers). We asked about their professional identity and whether and how it differed from that of similar professionals. As part of the Evaluator Identity Project, this article focuses on two research questions: What does the journey into the field look like for evaluators and non-evaluators, and how do evaluators and non-evaluators describe the similarities and differences between them?

Two important assumptions guide this study. First, the jurisdictions or boundaries of evaluation and research overlap in some places and not in others. For example, research and evaluation often, but not always, use similar methodologies (Mathison, 2008). At the same time, some aspects of evaluation are unique and not found in research; the central role and use of values in evaluation is one obvious example (see Schwandt and Gates, 2021, for a history and contemporary account). Second, we hold that evaluators should have a monopoly over evaluation. We do not mean that evaluators should have a monopoly over areas that overlap with other fields (e.g. research methodology); rather, evaluators should have a monopoly over the field of work known as, and falling under, evaluation. Evaluation is more than methodology; it is a distinct form of inquiry that answers evaluative questions and requires judgment regarding those questions (Gullickson, 2020). The process by which evaluators engage in this judging, the standards and ethical guidelines they bring to bear, and the competencies they require are unique to the professional work that evaluators do. For this reason, it is critically important for evaluators to maintain jurisdictional boundaries around evaluation.

Methods

Due to the exploratory nature of the study, we employed thematic analysis, a process for identifying, analyzing, and interpreting observations, including both patterns and unique cases, across a data set (Braun and Clarke, 2022; Neuendorf, 2019). Our analysis was semantic: we sought to explore meaning while remaining as faithful as possible to our participants’ language. Our qualitative framework was rooted in a constructivist philosophy of science. As Schwandt (2015) notes, constructivism is an elusive term whose meaning is shaped by those who use it. We use the term here to denote a philosophical stance that underpins the inquiry and holds that “all knowledge claims . . . take place within a conceptual framework through which the world is described” (Schwandt, 2015: 36). Our interest lay in capturing and exploring our participants’ perspectives on and understanding of their chosen applied careers. We used social identity theory to help us design and execute this study. The preprint, supplemental data on participant demographics, materials (i.e. consent form, demographic survey, interview protocol, Institutional Review Board (IRB) application), and further details on our methodology are available on the Open Science Framework (OSF) at https://osf.io/ye67k/.

Participants

Because we were interested in understanding differences in professional identity between evaluators and non-evaluators, we intentionally recruited through both social media and American Educational Research Association (AERA) newsletters. Recruitment occurred during January–February 2021. Inclusion criteria for the study were that participants were (1) applied practitioners, (2) with at least 5 years of work experience, and (3) residing in the United States.

Each country has unique aspects of evaluation that play into its larger professionalization. Several factors make the United States a compelling case for examining the professional identity of evaluators and other applied professionals. First, formal evaluation practice has existed there since the early 1940s, much longer than in other parts of the world (Thomas and Campbell, 2021). Second, compared to other countries, the United States has a much larger and more robust evaluator education system, producing a steady supply of young and emerging evaluators (YEE; Beywl and Harich, 2007; Galport and Azzam, 2017). The United States also has a robust market for evaluation (Nielsen et al., 2018). Moreover, federal and state policies and legislation, such as the Government Performance and Results Act (1993) and the Foundations for Evidence-Based Policymaking Act (2019), govern the conduct of evaluation. At the same time, the AEA Evaluator Competencies (2018), recently endorsed by the AEA board, include an entire domain devoted to “what makes evaluators distinct as practicing professionals,” and the impetus for developing the competencies was tied to “develop[ing] a common language and criteria for defining evaluation practice, in other words, what makes evaluation practice unique” (Tucker et al., 2020: 34). Finally, values unique to the United States context, specifically those rooted in the idea of free markets and capitalism, extend to evaluation. Although federal and state policies and AEA policy documents for socializing evaluators into the profession exist, evaluation is still largely unregulated, and evaluators compete heavily for contracts (Nielsen et al., 2018). In addition, despite the wide diversity of people, practices, and perspectives among evaluators in the United States, the factors above point to commonalities among evaluators that warrant exploration. Collectively, the United States provides interesting and fertile ground for exploring the boundaries between evaluators and similar professionals.

Recruited professionals completed a Qualtrics-based demographic survey. The survey allowed us to (1) ensure inclusion criteria were met, (2) collect demographic data, and (3) apply maximum variation sampling procedures to capture a diversity of perspectives (Patton, 2014). Of the 59 eligible applied professionals who took the survey, we selected 40 at random to participate in an interview, aiming for approximately half who primarily identified as “evaluators” and half who identified as something other than an evaluator. Participants self-identified their professional identity in the demographic survey and again at the start of each interview.

Our participants were predominantly women (77.5%) and White (75%), which reflects the AEA membership (62% women, with 15% missing gender data; 53% White, with 22% missing race/ethnicity data; Coryn et al., 2020). Most participants held a doctoral degree (65%); they had an average age of 45 years (median (Mdn) = 42.5, SD = 11.3) and an average of 16.5 years of experience (Mdn = 15, SD = 8.4). All but one evaluator were members of AEA (17 of 18), whereas most non-evaluators were members of AERA (16 of 19) and about half were also members of AEA (11 of 19). Among evaluators, 77.8 percent were external evaluators and 22.2 percent were internal evaluators. Among non-evaluators (those who do or have done evaluation work), 73.7 percent were external to the organizations with which they worked and 26.3 percent were internal to their organization. More detailed information on participant demographics, split by the three groups of participants, is available on OSF.

Study procedures

Semi-structured interviews with the 40 participants took place in March–April 2021 via Zoom. The interview team included the article authors, a graduate student in evaluation, and an undergraduate student interested in social science research, all trained during the pilot by the research team member with qualitative-methods expertise (Montrosse-Moorhead). All interviews lasted approximately one hour and were audio-recorded and transcribed verbatim by a transcription company (Scribie). The research team checked the transcripts for accuracy, summarized them and sent the summaries to participants for member checking, and then coded the transcripts for analysis.

Analytic approach

All authors coded the data using multiple rounds of first- and second-cycle coding. Provisional and structural coding occurred during the first cycle. Provisional coding uses a priori, researcher-generated codes. Our literature review was the basis for a provisional coding scheme reflecting “what preparatory investigation suggest(ed) might appear in the data before they [were] collected and analyzed” (Miles, Huberman and Saldaña, 2019: 77). We also used structural coding, in which a question of interest (e.g. a research question or one of its aspects) frames the coding (Miles et al., 2019). For this article, our questions of interest were how evaluators and non-evaluators entered their respective fields and the similarities and differences between them.

During the second cycle, researchers used pattern coding to move from codes to themes in the data (Miles et al., 2019). During this process, unpattern coding captured observations that ran counter to emerging themes, helping us reason through whether a suggested theme needed revision or whether a new theme should be developed. For example, when we tried to describe similarities and differences between evaluators and non-evaluators, it became clear that this dichotomy did not fit the data well because the non-evaluator group had important internal differences. Specifically, many did not identify as evaluators yet did evaluation work, whereas some neither identified as evaluators nor did any evaluation work. Thus, to represent the data, codes, and themes more accurately, the team’s analytic lens shifted from a dichotomy to a trichotomy: (1) evaluators, (2) non-evaluators (other applied professionals who had done or currently did evaluation work but did not primarily self-identify as evaluators), and (3) other professionals (other applied professionals who had never done evaluation work).

Throughout both coding cycles, researchers wrote analytical memos and met to discuss their observations and the codes and themes they generated. These memos centered on code definitions, patterns and unpatterns, themes, implications, and connections to extant literature.

Attending to values and study quality

The study was guided by several codes of ethical conduct with embedded values. First, it received IRB approval. Second, the researchers followed the guiding principles for evaluators (AEA, 2018b). Although this study is research, we decided to adopt AEA’s guiding principles because of the disciplinary training of most of the research team. Specifically, the research team aimed to privilege systematic inquiry, competence, integrity, respect for people, and common good and equity.

We used several techniques proposed by Guba and Lincoln and elaborated on by Miles et al. (2019) to attend to study quality. To enhance confirmability, we made study methods and procedures explicit, provided researcher positionality statements (see detailed methods on OSF), and employed pattern and unpattern coding. To enhance dependability, we ensured a strong connection between research questions, planned methods, and enacted procedures. To enhance credibility, we used table-of-specifications mapping, participant checks, analytic memos, and pattern and unpattern coding. To enhance transferability, we fully reported participant characteristics, clearly described recruitment and sampling procedures, and connected findings to extant literature. To enhance application (Miles et al., 2019), we tried to use language accessible to many audiences and included implications of our findings for audiences beyond academia.

Results

Journey into respective fields

In this section, we discuss inflection points (the moments when professionals realized that their chosen applied career was the one they wanted) as a way to explore how their current professional identities developed. These inflection points varied among evaluators, non-evaluators, and other applied professionals. Among those we interviewed (n = 40), the vast majority did or had done evaluation work (n = 37). Moreover, and perhaps somewhat surprisingly, despite having engaged in evaluation work, only about half of our sample identified as evaluators (n = 18). In what follows, we first describe inflection points for other applied professionals and then those for evaluators and non-evaluators.

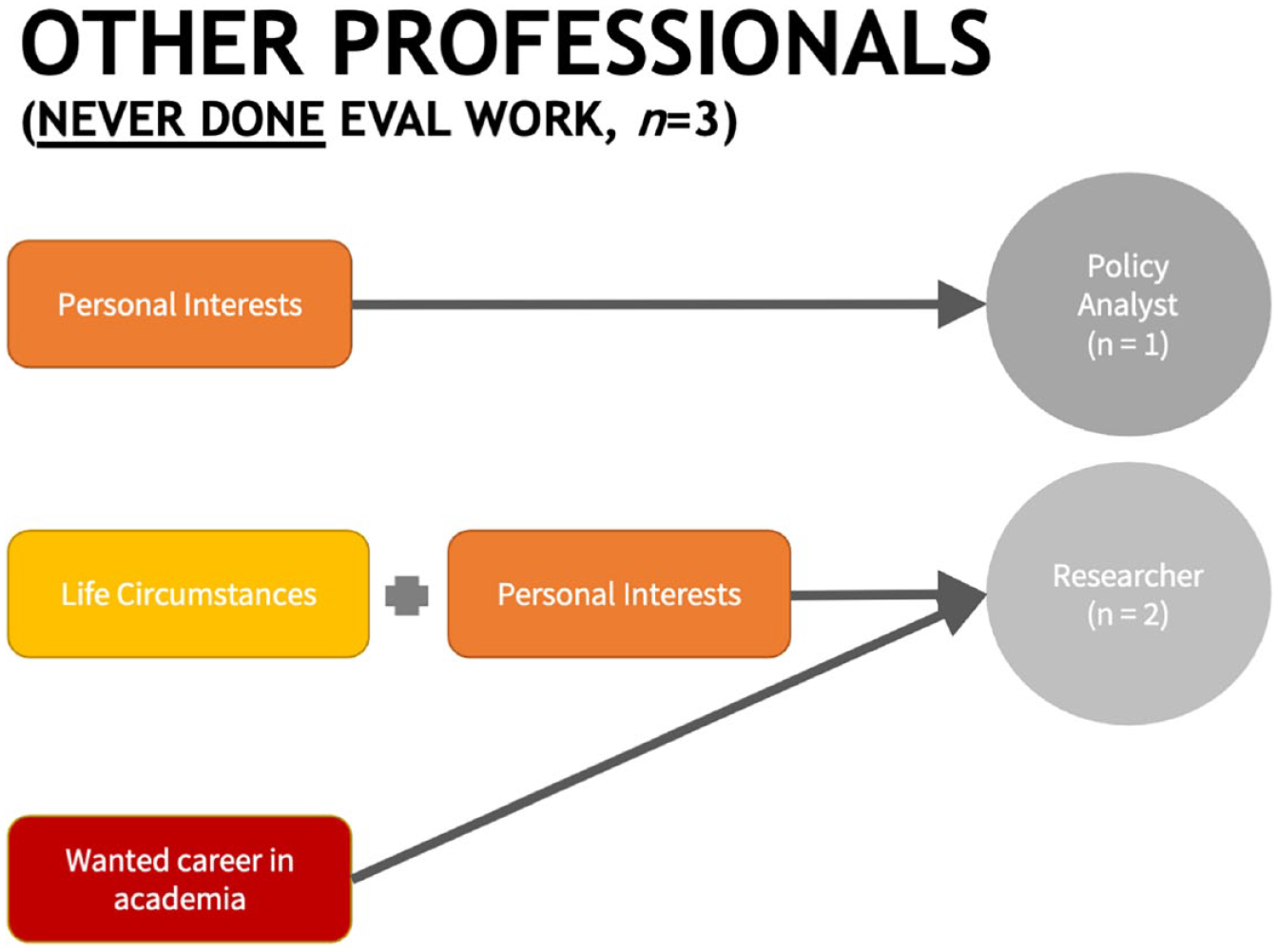

Life circumstances, personal interests, and wanting an academic career are the primary events that led applied professionals who had never done any evaluation work into their professions (n = 3; see Figure 1). For example, one self-described as a “late bloomer,” due to life circumstances (17), having decided to pursue a Demography doctoral degree after the participant’s child was in university. The participant eventually took a position at “an educational technology company,” and became able to work on meaningful projects that have “an immediate impact on people’s lives in a good way.” The other two described how personal interests led to their applied work. For example, one said,

Even from high school, I was interested in how government and policy affect . . . people’s lives, both just in general, but then also how can [they] help improve people’s lives. And so, my undergraduate degree is in sociology . . . I have two master’s degrees, one in Sociology and one in Public Administration and Public Policy . . . So, that’s how I ended up in the field. It was because of this interest in impact of policy and wanting to make a difference. (17)

Inflection points for other professionals who have never done evaluation work appear on the left-hand side of the figure, and move right showing their move toward how they professionally self-identify.

A third participant described wanting an academic career (21). She said,

When I entered my Ph.D. program in special education, I transitioned in, already having a Master’s degree plus about 13 years of teaching under my belt . . . it was one of my long-time mentors who explained to me the idea of getting a Ph.D., entering higher education as an assistant professor, and that a university job would entail not just course work but research.

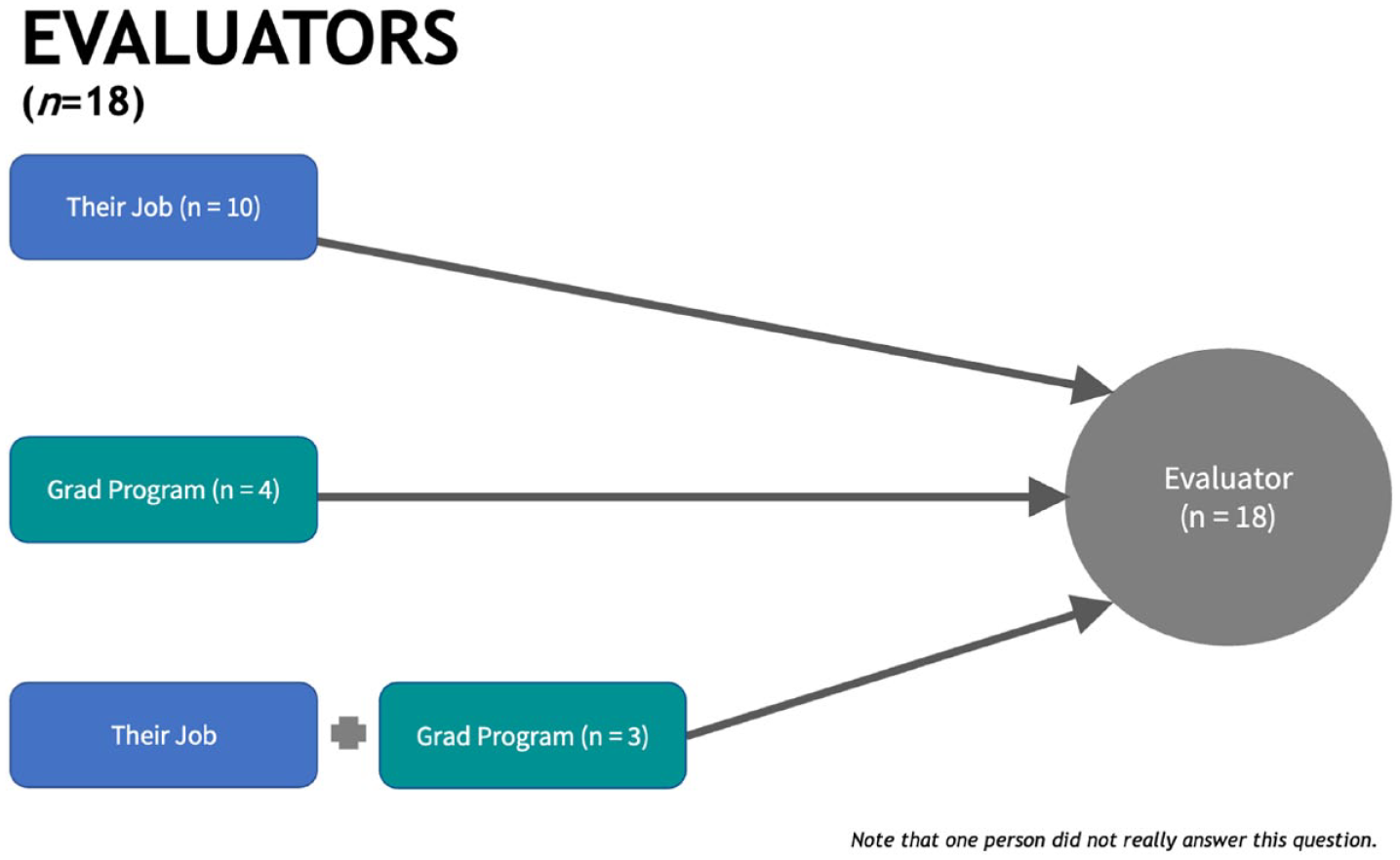

Among those engaged in evaluation work, those who identified as an evaluator (n = 18) showed less variability in their inflection points (see Figure 2). Most had experiences in the context of their professional work that led them to evaluation. To illustrate, one interviewee shared,

I graduated with my master’s in Political Science. And after graduation, I started working full-time for a survey research company that did international research . . . my company was hired to do this survey piece for a USAID impact evaluation . . . I was just doing one piece of it, but it was the first time I understood not just how to collect and analyze and interpret the data, but how it was going to be used. And for me, that was a huge turning point. Like a big ‘aha’ moment for me. . . . I decided to get my Ph.D. with a specialization in Evaluation. (1)

Inflection points for evaluators appear on the left-hand side of the figure, and move right toward their professional self-identity. Note that one person did not answer this question and are not included in figure participant counts.

Another shared,

[During a planned gap year], I had gotten a job at an office of educational assessment at a university. And at the time, I didn’t really know anything about evaluation. . . . At the end of their advising system. . . . And so, [by the planned gap year, I realized] I’ve got this great job at the office of educational assessment. I really like doing the evaluation work. . . . And so I’m gonna stick with that. (24)

A little over half of our interviewees who identified as evaluators shared similar stories.

Yet, not everyone had experiences in their full-time jobs that led them to evaluation. For about a quarter of those who identified as evaluators, it was work done in the context of their graduate programs. For example, one interviewee shared,

I got my master’s in marriage and family therapy, thinking that I was [going to] become a therapist. I didn’t want to do that after a couple of years of internship and practicing. But I loved doing my master’s thesis in collecting data and figuring things out. And so I decided to go on with my Ph.D. in research. Through that, I started working with a cooperative center between the university and the local school district. And so, that got me more interested in doing education research and evaluation. (31)

Relatedly, a few respondents decided to pursue a career in evaluation as a result of graduate coursework in evaluation alone. These individuals shared similar stories; for example:

It all started . . . when I was taking Research Methods as well as the Evaluation Theories course in graduate school. And I’ve always loved research, always really enjoyed doing hands-on research. And I had been thinking about it in an organizational way before with psychology. And then, . . . being able to see and learn about how I could apply it beyond just a more corporate setting . . . So that was learning to education, to nonprofits, to health. So, that was . . . the first thing that really sparked my interest . . . seeing how I could make a difference in those fields that I really care about without just being . . . in that field itself. (39)

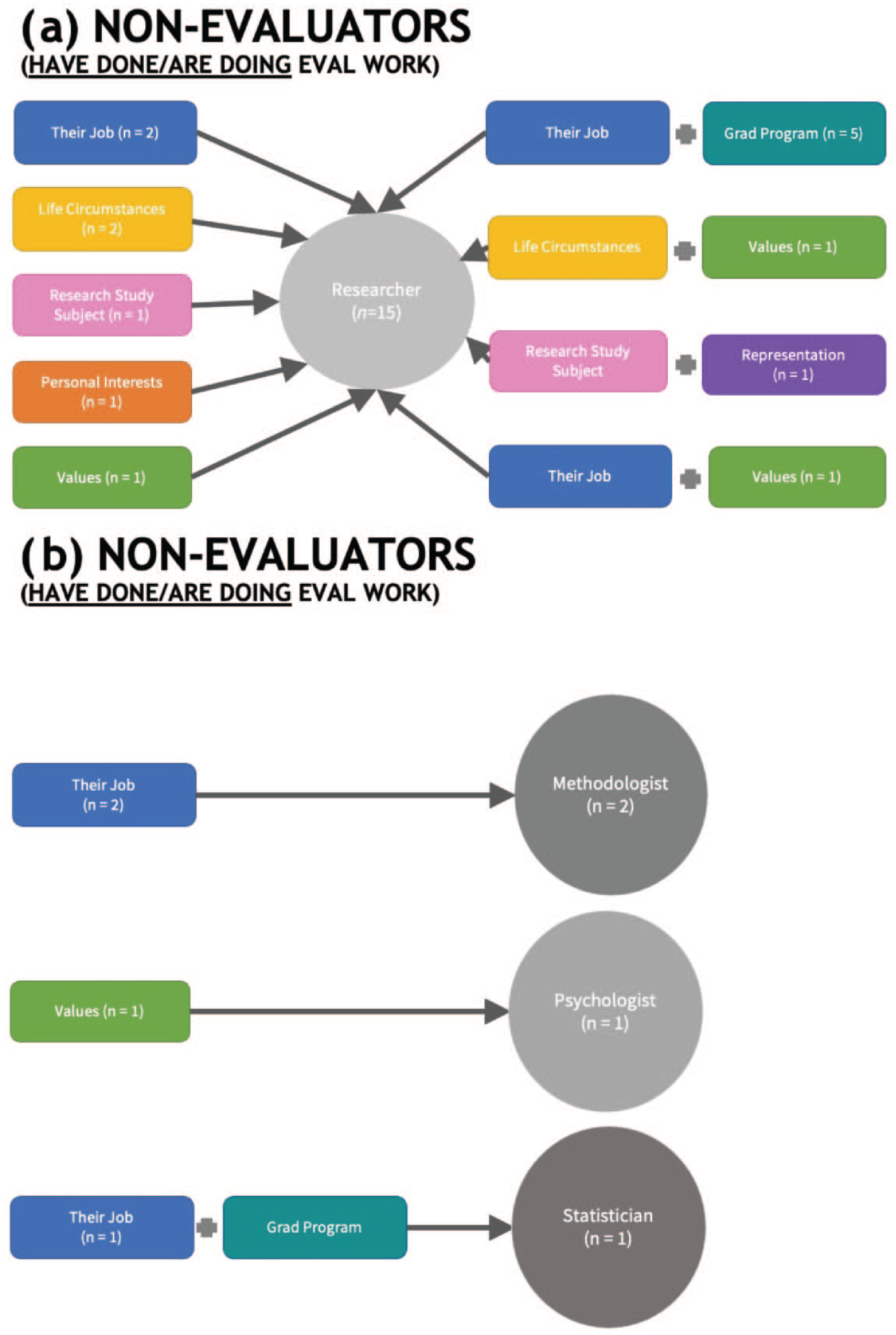

Those who did not identify as evaluators (non-evaluators) but had engaged in evaluation work (n = 19) showed the most variability in how they identified and their inflection points (see Figure 3). Those who identified as methodologists (n = 2) and statisticians (n = 1) had inflection points similar to evaluators, with their job and graduate school leading them to their professional identities. One interviewee shared making decisions in a previous field that had short-term and long-term health consequences for people, leading to questioning the data, methods, and benchmarks used to make these decisions:

I started asking many “why” questions [about how I was making decisions that had potentially life-altering health consequences] and I realized that’s what methodologists do. . . . And so I found a Ph.D. program that . . . focused on Measurement Methodology . . . I realized that this wasn’t just about using the measures but . . . also about how we use the measures, our organizational policies, and our programs for training . . . Like, how we even set up the environment for these managers and everything? And so, it just became this bigger thing, and I realized evaluation was really the culmination of everything that I was interested in . . . My Ph.D. is in research and evaluation methods, and I hope when I say “methodologist,” it encompasses [measures, organizational policies, programs for training and things like that]. It took me a long time to settle on one word. I feel at home now. (9)

Inflection points for non-evaluators who do/have done evaluations appear on the left- and right-hand side of the figures, and move right or inward toward how they professionally self-identify. (a) Those who identify as researchers and (b) those who identify as a methodologist, psychologist, or statistician.

Others shared similar stories of entering their applied professions because of the full-time work they were doing at the time.

One person, who identified as a psychologist, named values as the source of their choice of profession.

I was interested in environmental issues growing up. Just being interested in learning how to promote environmental action and how do we get people to not litter, to save paper, use less fuel resources, things like that. So, that was always part of who I was growing up. . . . And after I finished my master’s in psychology, I was, like, okay, what’s next? I realized I wanted to do research, but research on figuring out how to promote responsible environmental behavior. . . . I just looked into the research literature, and I found this entire field called Conservation Psychology . . . it provided me a vision for what I could do with my degree, with my skills, to actually apply the research to promote action . . . that I wanted to promote. So, . . . So that’s how I got to where I am, but I will also qualify it by saying that my official degree is Applied Social Psychology. Conservation Psychologist, as I call myself, is my self-identity. (14)

Most who did evaluation work but did not identify as evaluators identified instead as researchers (n = 15). Compared with those of evaluators and other applied professionals, their inflection points were much more varied. About half had inflection points similar to those of evaluators, statisticians, and methodologists, with jobs or graduate programs leading them to their professional researcher identity. A few other non-evaluators had inflection points similar to those of the other professionals, with life circumstances, values, and personal interests leading them to their researcher identity. They shared stories similar to those of the evaluators and other professionals.

Two additional inflection points were present only in this group. One was prior experience as a participant in research studies. One interviewee shared,

I was a teacher, and then I participated in a research study. And the researchers who were conducting the study asked if I [had] ever been interested in getting a Ph.D. And I hadn’t, but I said yes. And we started talking, and I applied and got in. And then, I really enjoyed the research [and statistical] side of things, especially the statistical side . . . the math and finding out . . . I don’t want to say finding the truth, but like explaining the world as it exists and finding out what works for whom and why things work and things like that. (05)

Another inflection point for one of our interviewees was seeing someone of similar social identity in a research leadership role.

While [my mom and I were at an event], we were lucky enough to run into a Latina [Principal Investigator] for a [National Institutes of Health] longitudinally-funded study on the normative development of Puerto Rican adolescents in the northeast. We were asked to be part of the pilot. . . . So I had this really wonderful experience of seeing these diverse women doing research that actually mattered to me, and . . . they were wanting to give back to the community in a way, and positively engage the community. And I thought, ‘Oh my gosh, this is so cool, I can do that.’ And so, that’s kind of how I got into this . . . I was so lucky to have these examples of women, and again, diverse women, different background, including Latinas who were doing this work. (37)

Why those who do evaluation work and have similar inflection points differ in how they self-identify

Narrowing our analytical lens, we noticed that the inflection points of evaluators (n = 18) resembled those of professionals who identified as methodologists (n = 2), statisticians (n = 1), and a subset of researchers (n = 7). We were curious about whether we could identify possible reasons for their similar professional inflection points but different self-identification as a way to explore what it means to “be” an evaluator. We particularly wondered why some who do evaluation would not identify as evaluators. We focused exclusively on those who identified as methodologists, statisticians, and the subset of researchers whose inflection points related to jobs and/or graduate school. Our analysis revealed three reasons why they did not professionally identify as evaluators; many mentioned more than one reason.

One reason was the markets in which they worked. One interviewee who identified as an educational researcher shared,

Lots of times, the work that I do is determined by the funding model that we have . . . A larger National Science Foundation project require[d] an educational research team and an evaluation team. Then we have other funders like the United States Department of Agriculture, where [they] intentionally merged. (10)

Another who identified as a statistician told us,

Clients will hire me to be their evaluator, but I wouldn’t necessarily call myself that. But I feel like I do the work of it, though. . . . Most statisticians I know don’t take on a classic program evaluation project. (20)

In short, commissioners and funders have considerable sway in what professionals call themselves. Many who do not professionally self-identify as an evaluator but who do evaluation work are in positions where their livelihood depends on securing external funding, so they seek this funding from a variety of marketplaces, not solely in the evaluation marketplace.

A second reason is that their disciplinary training continues to strongly shape how they identify. An interviewee who identified as a researcher commented, “More naturally, I think of myself as a researcher, and I would say my training is as a researcher . . . I honestly don’t even really do all of the things well that an evaluator does” (26). Another who identified as a methodologist shared, “I was a methodologist first, and I found evaluation after my methodological training” (09). Many of those who did evaluation work but did not primarily identify as an evaluator clearly felt a stronger affinity to the professional group with which they identified while they were learning to do social scientific research.

A third reason is the preconceptions that professionals bring with them about the similarities and differences between research and evaluation. Sometimes, this is explicit. One interviewee who identified as a researcher shared, “In my mind, evaluation is a subset of research, which is why I went with that educational researcher identity and not evaluator” (06). Another who identified as a methodologist said,

I think [methodologist] supersedes the evaluator title in my mind because I do research as well. So, I feel it’s not fair to call myself just an evaluator. I just feel like methodologist is the most comprehensive term for all that I do. (09)

However, preconceptions about research and evaluation were implicit in others’ responses. One interviewee who identified as a researcher said, “In my researcher role, I help to shape the politics of it. In evaluation, you don’t necessarily get a chance to do that. You don’t influence the politics of it” (25). As we looked through the data and continued to code it, we began to see a common theme centered on preconceptions about the similarities and differences between evaluators and non-evaluators, which the next section describes.

Similarities and differences between evaluators and non-evaluators

As a way to further explore the meaning of group membership and probe group boundaries, we asked questions about similarities and differences between evaluators and non-evaluators. Notably, despite these questions focusing on evaluators and non-evaluators, many participants also mentioned similarities and differences between evaluation and non-evaluation (e.g. research). Although we attempted to probe identity directly, we also recognized that the work we do is inherently tied to the identities we hold while doing it. What evaluation or similar applied work means to us will affect how we practice and, therefore, our identities as evaluators or otherwise. The following results compare what evaluators and non-evaluators called similarities and differences in both identity and practice. Comments by the three “other professionals” appear at the end of this section.

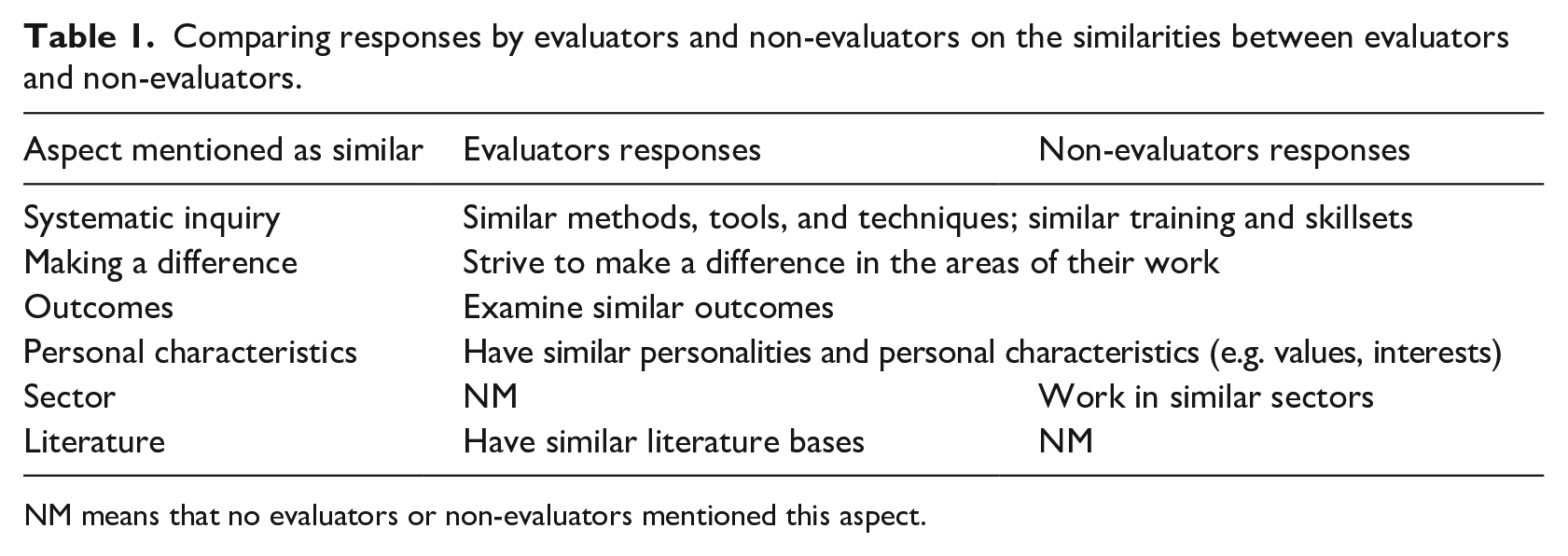

Table 1 shows the similarities between evaluators and other applied professionals that both evaluators and non-evaluators mentioned. Both mentioned that they approach the work using systematic inquiry, strive to make a difference in the areas of their work, examine similar outcomes, and have similar personalities and personal characteristics. Non-evaluators also mentioned working in similar sectors; one evaluator mentioned similar literature bases.

Comparing responses by evaluators and non-evaluators on the similarities between evaluators and non-evaluators.

NM means that no evaluators or non-evaluators mentioned this aspect.

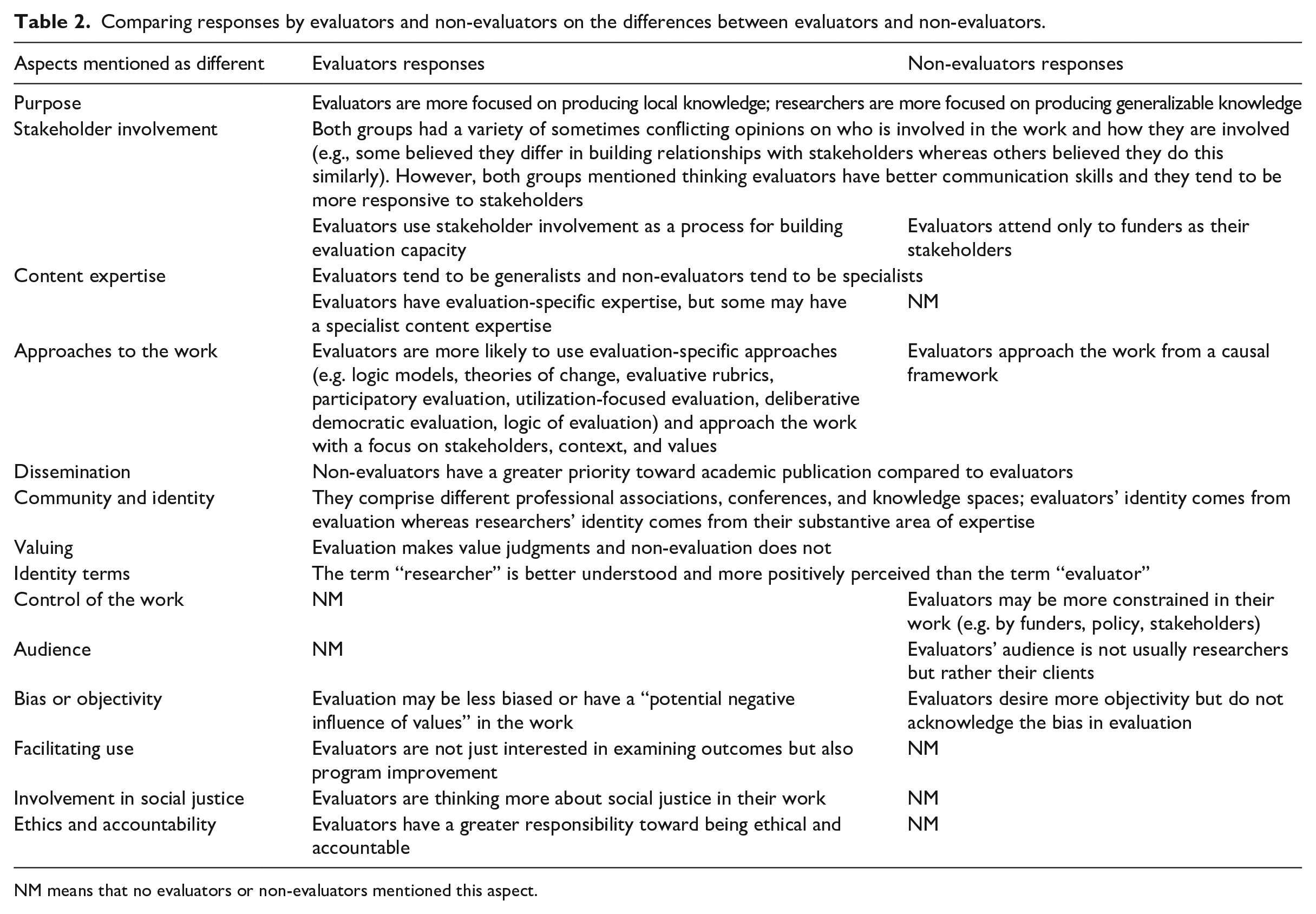

Table 2 shows the differences between evaluators and other applied professionals that both evaluators and non-evaluators mentioned. Notably, they mentioned more aspects as differences than similarities. One commonly mentioned difference was their purposes. Evaluators focus more on producing local knowledge for a particular program or organization; non-evaluators strive to “contribute more generalizable knowledge to the field” (12).

Comparing responses by evaluators and non-evaluators on the differences between evaluators and non-evaluators.

NM means that no evaluators or non-evaluators mentioned this aspect.

Evaluators and non-evaluators differed in how they discussed stakeholder involvement. Some thought there were similarities in who is involved and how; others saw differences between evaluators and non-evaluators. A few evaluators explicitly mentioned involving stakeholders to build evaluation capacity, whereas one non-evaluator thought “evaluators make the assumption that the funder is the one that is dictating and wants the work done and is paying you, so, therefore, you need to be responsive only to that stakeholder group” (25).

Non-evaluators and evaluators agreed that researchers tended more toward being “specialists,” but they differed on the extent to which evaluators were “generalists.” Non-evaluators tended to think of evaluators as generalists, but some evaluators asserted that evaluators do have specific expertise, including methodological or evaluation expertise, or that some evaluators are more specialized, with narrow content expertise.

Evaluators and non-evaluators also differed in how they described approaching their applied work. Evaluators were more likely to mention evaluation-specific approaches they would tend to use. Evaluators described approaching the work with a greater focus on stakeholders, being more “iterative and back and forth with the client” (20) and “training the people I work with” (40) on things like logic models. One evaluator said that evaluators were more likely to be aware of “context . . . values . . . different world views . . . and understanding how their metrics are political and cultural” (34), whereas researchers “tend to do it free of context, understanding of purpose, understanding values” (34). In contrast, one non-evaluator believed evaluators approach the work within a causal framework that typically “reduces it down [to] a question of effect size and significance . . . which I find a little bit lacking” (35).

Both groups agreed on differences in dissemination, community, identity, and valuing, that is, “evaluation is that step beyond applied research . . . in making a value judgment” (08). Both groups also mentioned bias or objectivity. Evaluators stated that “evaluation is not an unbiased science the same way that some basic research is” (01) or mentioned a “potential negative influence of values in evaluation versus research” (23). One non-evaluator mentioned bias, suggesting that evaluators “want to stay a little bit more objective, or at least [be] perceived as more objective” (25) but “won’t acknowledge” (25) the bias built into the work. Non-evaluators were more likely to mention differences in how people understand or perceive identity terms, with clients, funders, and other interested parties better understanding and more positively receiving the term “researcher” than the term “evaluator.” This, in turn, led evaluators and non-evaluators alike to choose selectively which label they use, depending on what they “would like to be able to convey” (14) to their audience, or to “get more respect” (15).

Only non-evaluators mentioned differences in the control of the work—that is, evaluators may be constrained whereas researchers have “more freedom or flexibility” (19). Similarly, only non-evaluators mentioned differences in audience, that is, researchers “are not the audience [for evaluation work] 99% of the time” (11), and “evaluators do it for the clients who hire them, whoever they might be” (22). However, another non-evaluator mentioned that “program evaluation can bring things to educational research” (10), indicating that researchers could potentially be the audience.

Only evaluators mentioned differences in facilitating use, that is, evaluators may be more interested in not just “identifying what’s wrong” but also “trying to tell the story with an eye to program improvement” (39), and differences in social justice involvement, that is, whether evaluators “wrestle with these questions of social justice more, about what their role is and what it should be” (08). One evaluator also mentioned differences in ethics and accountability, that is, evaluators may have greater responsibility for doing things “in the right way for the right reasons” (34).

Some evaluators also mentioned factors within evaluation that may lead to within-group differences, including whether evaluators have a master’s or a doctorate, are internal or external evaluators, work inside or outside academia, and are based in the United States or in another country.

Three other professionals remained, one of whom had never heard of evaluation (13). Another (17) said that she “might have called myself an evaluator because I went through a policy program” but instead self-identified as a policy analyst. She saw many parallels between evaluation and policy analysis work, including both being “cross-disciplinary” and using a “general social science orientation,” though evaluation focuses on implementation and impact rather than on the diagnostics a policy analyst would emphasize. Interestingly, she believed that “evaluators would be a little stronger on the qual[itative]” (17). The third “other professional” believed that “the difference between people who do program evaluation and me is that I am really bad at statistics” (21). This participant also saw similarities between evaluators and her profession of applied behavior analysis, stating that both focus on “improving individuals’ life outcomes” (21).

Discussion

In this section, we discuss key findings, situating them in extant scholarship informed by social identity theory. The article concludes with study limitations, implications, and future directions.

The development of an evaluator identity

Research using social identity theory has explored the development of identity. Extant work suggests that choosing to go into a career depends on factors such as understanding that the career exists, identifying with others pursuing that career, and believing the career will align with one’s social and personal worth compared to other careers. As a way to begin to explore how an evaluator identity develops, we examined how applied professionals entered their careers. This decision was informed by prior work showing that evaluators come into the profession in a variety of ways (Rogers et al., 2019; Skousen, 2017; Sturges, 2014). Relatedly, there are currently no undergraduate programs in evaluation (LaVelle et al., 2019) like there are in economics, sociology, public health, and the like. Thus, unlike in these other applied fields, how evaluators enter the field becomes an important site for better understanding the development of evaluator identity.

Among those in the sample, and focusing on evaluators and on non-evaluators who do or have done evaluations, journeys into the field varied. These varied pathways lead back to differing inflection points (i.e. the realization that their chosen applied career was what they wanted). For those with an evaluator identity, the inflection point occurred at their jobs or in graduate school. For non-evaluators who do or have done evaluations, two separate patterns emerged. In the first, individuals who self-identified as methodologists or statisticians could trace their journeys back to their graduate programs or jobs. This pattern mimics that of evaluators and is consistent with Skousen’s (2017) finding that many evaluators “fall into” evaluation because of a job that required it or a graduate program that led them to it.

In the other pattern, professionals who self-identified as researchers described a variety of pathways. About half said their path was their job or graduate program, consistent with Skousen (2017). Others identified additional factors, including values, personal interests, being a research participant, significant life events, and seeing someone in a research leadership role who shared their racial and ethnic identity. Although 70 percent of Skousen’s (2017) participants identified as evaluators, that study did not disaggregate findings for evaluators and non-evaluators. Our findings add nuance for non-evaluators who do or have done evaluations.

Development of identity is an understudied area of social identity theory (Huddy, 2001), and this study suggests that it is a fruitful area for future work within evaluation. Despite work on salient differences between evaluation and research (Mathison, 2008; Wanzer, 2021), a long history of distinguishing the logic of evaluation from the logic of research (Scriven, 1991), and recent work on the logic of evaluation professionalism (Picciotto, 2011), why do those doing evaluation vary in group membership? The answer matters if a strong evaluator identity motivates group actions, beliefs, and dispositions to and in evaluation work. Based on the sample included in this study, we believe there is reason to think it does, a point we explore further in the sections that follow.

Exploring the meaning of evaluator identity

There is emerging work on what it means to take on an identity, motivated by the recognition that the meaning ascribed to an identity, not the mere existence of an identity label, influences actions, beliefs, and dispositions (Huddy, 2001). This study sought to understand what it means to “be” an evaluator by asking evaluators and non-evaluators their views on a variety of issues and analytically exploring patterns of similarity and difference.

Overall, this study found some similarities among evaluators and non-evaluators. Regardless of professional identity, participants believed that evaluators and non-evaluators use systematic inquiry, strive to make a difference, and have similar personalities or personal characteristics. Moreover, regardless of professional identity, evaluators and non-evaluators agreed that several aspects uniquely distinguished evaluators. Compared to non-evaluators, evaluators were described as pursuing different purposes, using stakeholder involvement approaches more often, being content-agnostic generalists, engaging in different dissemination practices, targeting different audiences, and approaching the work differently. Furthermore, participants mentioned that evaluators and non-evaluators belong to different professional associations, conferences, and knowledge spaces, which affects their group identity. These ideas about what it means to be an evaluator are consistent with prior work (Wanzer, 2021).

Exploring what it means to be an evaluator also surfaced contested aspects of evaluator identity. Non-evaluators, for example, held varying ideas about who evaluators are. Some contended that evaluators use qualitative methods, suggesting one cannot be an evaluator if one uses quantitative methods. Others held the exact opposite view: one cannot be an evaluator if one uses qualitative methods, because evaluators use quantitative methods. Some suggested that evaluators, compared to non-evaluators, favor causal research designs to the exclusion of other research designs. Some also suggested that evaluators consider and privilege only paying clients as the main audience for the work, to the exclusion of other stakeholders. Extant evaluation scholarship shows these are misconceptions.

Social identity theory suggests that the meaning ascribed to an identity is important to explore because of its consequences. To illustrate, one way that individuals form and maintain professional identities is through professional memberships. Although we had a small sample, all evaluators who shared their professional association membership information were members of AEA, whereas only 11 of the 19 non-evaluators belonged to AEA, with others belonging instead to associations such as AERA or the National Council on Measurement in Education. Professional associations guide members in upholding professional norms, standards, and competencies. One potential consequence of not identifying as an evaluator when doing evaluation work concerns whether professionals follow evaluation standards and norms or those of other associations. While this study did not explore consequences, this example illustrates one direction in which future research might be useful.

Exploring ascribed meaning from those who hold strong and weak conceptions of an identity, as well as those who do not hold the identity (outsiders), is also important for better understanding two related but distinct concepts: the meaning of group membership and group boundaries. This study begins to shed light on what it means to claim an evaluator identity, but more work is needed. Findings also raise questions about the permeability of the boundary of evaluator identity, which we explore next.

The permeability of the evaluator identity boundary

Social identity theory suggests that identity development is hindered for professional groups that are viewed negatively, especially when identity boundaries are permeable. Applied to evaluation, choosing to identify as an evaluator depends on whether one self-categorizes into the “evaluator” group, perceives the evaluator group as important, and, especially, perceives evaluators as more important than other relevant groups, such as researchers. Our results suggest, however, that not everyone who does evaluation work holds the identity of professional evaluator. Among those included in the study, this appears to be driven by a negative perception of evaluation. This echoes Donaldson’s (2001) observation, based on his career, that those outside of evaluation view the profession negatively. This study extends Donaldson’s assertion by highlighting that some who do evaluation share this negative view.

Moreover, social identity theory posits that when boundaries are permeable, members of low-status groups often eschew affiliation with the group and instead identify with a different, higher-status group (Tajfel and Turner, 1986). This is exactly what some non-evaluators reported doing in our study. Non-evaluators, for example, mentioned that the term “researcher” is better understood and more positively perceived by outsiders than “evaluator.” Other participants mentioned selectively choosing the label they use depending on the situation. This too is consistent with social identity research, which shows that group members are willing to discard their membership in a group perceived as low-status within a specific social context (Jackson et al., 1996).

Taken as a whole, this study contributes to the growing literature on evaluator identity and professionalization, including boundaries (Castro et al., 2016; Liu, 2018; Montrosse-Moorhead et al., 2017). Evaluation’s boundaries are permeable (Jacob and Boisvert, 2010; Worthen, 1999), and evaluation’s negative reputation both within and outside of the group “has the potential to undercut serious evaluation efforts and discourage future generations from entering the profession” (Donaldson, 2001: 360). The extent to which our findings hold across varying contexts is important to explore in future work, because this negative reputation has the potential to hinder the professionalization of evaluation.

Limitations

Our study is not without limitations. Our study and much of the literature from which we draw are situated within the perspective of the United States. Moreover, the professionalization discussion in the United States summarized in the article differs from that of continental Europe (see, for example, Evetts, 2003, 2011; Noordegraaf, 2007). We believe one reason for this difference is the valued position that the free market system and capitalism hold in the United States, which influences the frames scholars bring to the professionalization debates. While we have detailed why we believe the United States is a compelling case, we also recognize that results should not be transferred beyond this context. Our sample is also predominantly white and mostly women, and we did not purposefully collect data on sexual orientation. While this mirrors AEA social identity demographics (Coryn et al., 2020), it may not reflect the views of all AEA members, especially those who identify as Black, Indigenous, Asian, Latino, and other evaluators of color or as lesbian, gay, bisexual, trans, or queer.

Implications and future directions

This study presents a glimpse of who evaluators are and how they differ from non-evaluators (e.g. researchers and psychologists), both in terms of their identities and their journeys into the field. One area of future work is communicating a clearer, more salient understanding of who evaluators are and what they do (Mason and Hunt, 2019). In our study, one participant was unaware of evaluation, whereas other participants revealed misconceptions about the profession and mentioned that evaluators are less understood and less positively perceived than researchers. Evaluators pointed to the unique aspects of the field of evaluation but also recognized the ample variability within it (e.g. between academic and non-academic evaluators, master’s and PhD training, internal and external evaluators, and bases inside and outside the United States). Making the evaluation field better known and overcoming our negative reputation as a field requires more work (Donaldson, 2001).

Another area of future work is related to helping the field of evaluation better recruit and retain evaluators. For example, the findings suggest that professional evaluation work and graduate school serve as major gateways into evaluation. These existing pathways can be strengthened (e.g. through paid internships, graduate assistantships, post-doctoral opportunities) to better support young, emerging evaluators in consciously attending to their professional identity (Bennani et al., 2021). In addition, more pathways into evaluation can be created, such as greater involvement in VOPEs and YEE networks (Bennani et al., 2021). Furthermore, there are currently no undergraduate programs in evaluation like there are in economics, sociology, public health, and the like (LaVelle et al., 2019). Evaluator education programs can help produce the expert knowledge that distinguishes evaluation (Gullickson, 2020), as well as help promote formal mechanisms for jurisdictional boundaries in evaluation. Non-evaluators and other professionals had clear pathways into their respective fields, many of which they began to pursue as undergraduates; evaluators did not. This study suggests that exploring the development of undergraduate programs in evaluation could be fruitful.

Another area of future work is related to professional identity. There have been few empirical studies of how evaluators become evaluators or of evaluator identity. We believe this study’s findings confirm many existing beliefs or hypotheses about evaluators’ journeys into the field, such as that they “fall into” the field. It also raises important questions. For example, future work exploring the meaning of evaluator identity could examine where the majority of those who belong to VOPEs fall on the continuum from weak to strong evaluator identity, and what differentiates someone with a weak evaluator identity from someone who does not and never will identify as an evaluator. Future work exploring the development of evaluator identity could examine whether it is possible for someone with a weak or nonexistent evaluator identity to develop a strong one, and the processes by which that happens. Future work on evaluator identity boundaries could explore the connections between group membership and its consequences for practice.

Future empirical work would also be enhanced by considering the ways in which individual professional identity shapes and is shaped by organizational, institutional, policy-making, and other factors. Work outside of evaluation has explored tensions between professional identity and constraints imposed by social structures (Jenkins, 2014). There is also emerging work on how political or organizational factors may impact professional identity (Azzam et al., 2021; Eckhard and Jankauskas, 2019).

Social context plays a role in professional identity development, so more work on how professional identity develops in other evaluation settings is another potential area. For example, how does the professional identity of evaluators develop within and across European countries, each with its own evaluation culture (Toulemonde, 2000), and how does that compare with the US context represented in this study?

Our next steps in this larger research project are to continue examining the differences in applied work between evaluators and non-evaluators, as a systematic exploration of how evaluation makes and maintains its boundaries. For example, we also asked participants to describe the purpose of their work; the roles they hold; how they advocate for use, social justice, and social change; and the role that values and value judgments play in their work. We anticipate expanding this work at a later date through a larger survey of applied professionals, to examine the extent to which these findings hold across a more representative sample of evaluators and non-evaluators, as well as among subgroups within evaluation (e.g. academics versus non-academics; internal versus external evaluators; comparing White, Black, Indigenous, Asian, Latino, and other evaluators of color). Our ultimate aim in this line of research is to better clarify what being an evaluator means, how this identity develops, and where the boundaries lie between evaluators and other similar professionals.

Acknowledgements

We thank Laura Freise and Victoria Tran for their support in early stages of this research project.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Maybelle Ranney Price Professorship by the University of Wisconsin-Stout.