Abstract

While evaluations are critical for non-governmental organizations to strengthen their advocacy strategies, evaluators and advocates encounter many difficulties evaluating such efforts. This article discusses the contribution of the participatory process evaluation methodology to advocacy evaluation, using a Dutch global health advocacy program as a case study. As participatory process evaluation is a novel methodology in the field of advocacy, the article’s primary focus concerns the application and utility of the methodology. Findings suggest that participatory process evaluation in an advocacy context can provide insights into the implementation of advocacy tools and activities, encouraging reflection and leading to ideas and practical tools to strengthen advocacy efforts. While participatory process evaluation can help overcome some of the often-experienced barriers in advocacy evaluation, further research is needed to consolidate advocacy evaluation theory and practice.

Introduction

Policymaking is a crucial political process that shapes the field of global health. It is influenced by institutional structures, circumscribed political environments, and, mostly, the actions of global health actors (Bruen and Brugha, 2014). Increasingly, non-governmental organizations (NGOs) have turned their attention to those who determine the strategic direction for population health investments at the global level and to those who can influence it (Bruen and Brugha, 2014; Coates and David, 2002; Edwards, 1993; Ford, 2013; McDougall, 2016). Advocacy involves a range of activities, including educating and mobilizing the public, researching the problem at stake, agenda setting, suggesting solutions to those in power, and undertaking policy monitoring and feedback (Boris and Mosher-Williams, 1998; Fagen et al., 2009; Reid, 2000). Evaluation of these activities is crucial to strengthen strategies, extract lessons learned, and determine the next steps (Forti, 2012; Fyall and McGuire, 2015; Jackson, 2014; Mulholland, 2010). Moreover, by evaluating their advocacy work, NGOs may better understand and demonstrate their impact within an accountability frame to donors (Guthrie et al., 2005; Hammer et al., 2010). Nevertheless, evaluations of NGOs’ advocacy activities are currently very limited (Almog-Bar and Schmid, 2014; Glass, 2017).

Based on existing literature, three main reasons for the low level of advocacy evaluation by NGOs can be identified. First, NGOs have limited human and financial resources devoted to evaluation (Coffman, 2009; Jones, 2011). Second, advocacy organizations are hesitant to conduct and share evaluations for fear of revealing strategies, which could benefit competing organizations or those to be influenced (Patton, 2008). Finally, and perhaps most pressing, advocacy evaluations are difficult to conduct, causing reluctance among advocates and evaluators (Glass, 2017; Reisman et al., 2007). Advocacy evaluations face methodological challenges similar to those of other program evaluations, including determining causality, collecting data, and defining the effectiveness of individual strategies (Payne and McGee-Brown, 1994). But as advocacy is contested and power-charged (Coe and Schlangen, 2011), it is “generally impossible to attribute changes in systems, public opinion, or policies to just one organization’s actions” (Glass, 2017: 50). It is also difficult to account for the constant change of advocates’ strategies in relation to their context (Coe and Schlangen, 2011).

Aiming to overcome (some of) these challenges, this article presents a novel way to evaluate advocacy programs, inspired by the learning-oriented approach (LOA): participatory process evaluation (PPE). PPE is an approach that emphasizes stakeholder engagement and collaboration in the evaluation process, with a focus on understanding the process of program implementation and the perspectives and experiences of those involved in the program (Hearn and Nilsson, 2017). By combining process evaluations with participatory methods, PPE may provide a comprehensive understanding of the advocacy program, its mechanisms of change, and the perspectives of stakeholders involved in the program. To test whether PPE can be operationalized in a real-life advocacy context, a case study was conducted, which also aimed to identify the conditions required to conduct a PPE study in the advocacy field. Finally, this article presents and discusses what PPE adds to advocacy programs and evaluation methodology.

Conceptual orientation

Two groups of approaches in advocacy evaluation can be distinguished: outcome-oriented (or goal-focused) approaches, and learning-oriented and goal-free approaches. Outcome-oriented evaluation aims to measure the main outputs and outcomes of advocacy activities against predefined log frames (Andrews and Edwards, 2004), checklists (Barkhorn et al., 2013), or blueprints that underpin the program (Gen and Wright, 2013). However, most outcome-oriented methods face similar critiques: most frameworks are not equipped for long-term (multiple goal) advocacy programs, lack the ability to showcase the process and story behind the outputs, and do not necessarily lead to improved future performance (Edgett, 2002; Forti, 2012; Gill and Freedman, 2014; Glass, 2017). The Theory of Change (ToC; Klugman, 2011) and outcome-harvesting (Arensman, 2020) methodologies have attempted to overcome these critiques by creating a more processual approach to outcome-oriented evaluation and engaging more flexibly with changing conditions during program implementation (Glass, 2017). Yet scholars increasingly argue that evaluation should provide reflection on the complex process of change, personal decision-making mechanisms, and capacity development trajectories during the program life span, which may, for example, have led to additional outcomes not captured by the log frame or ToC of a program (Aantjes et al., 2022; Arensman et al., 2018; Gasper, 2000; Harada, 2020).

Opening up to multiple possible pathways to assess and explain program effectiveness has also been core to the so-called goal-free evaluations, which emerged as a counter approach to evaluating programs solely by goal achievement (Birckmayer and Weiss, 2000; Youker et al., 2014). The quest to capture a more comprehensive understanding of achievements, including unintended effects and broader contextual influences on the process of the program, appears more congruous with the complex advocacy context, which involves numerous actors and (divergent) advocacy strategies interacting with one another in a highly dynamic environment. By adding an LOA to the evaluation design, the assessment can close in on questions as to how, why, and by what factors the work of advocates was influenced (Glass, 2017), with a view not only to feeding this information into an evaluation report but also to applying the learning in subsequent phases of the program (Preskill, 2008). LOA can be adopted into an evaluation in many ways as long as the evaluation method allows for a focus on generating insights, lessons, and knowledge from the evaluation process, with an emphasis on improving future actions and decision-making (Abboud and Claussen, 2016). Ringsing and Leeuwis (2008) previously found that LOA is of benefit in the monitoring of advocacy programs, helping to obtain insights into their progress, account for contextual dynamics, and make interpretative differences explicit. Moreover, as advocacy takes place within rapidly changing contexts, an analysis of the agility, purpose, and intentions of advocates in response to these changes is important (Devlin-Foltz et al., 2012). In this article, we argue that the same holds for advocacy evaluation, as evaluation methodologies that encourage a deeper enquiry into advocacy processes will help identify opportunities for organizational learning to improve advocacy strategies (Ebrahim, 2005; Fowler, 1995).
While in theory LOA holds potential, little research has been conducted on the use of LOA in advocacy evaluations (Ringsing and Leeuwis, 2008).

Operationalizing an LOA

Process evaluations lend themselves well to operationalizing an LOA (Carless, 2015). Process evaluations describe the program and activities, identify the barriers and facilitators for successful implementation of tools and activities within the program, and assess lessons learned (Getachew-Smith et al., 2022). They assess longer-term processes and outcomes, contextualize and explain these outcomes, and aim to improve future performance (Freimuth et al., 2011; Nutbeam, 1998; Saunders et al., 2005). Moreover, this method is built on the acknowledgment that perceptions of “progress” and “success” vary between stakeholders and over time (Cornwall, 2014).

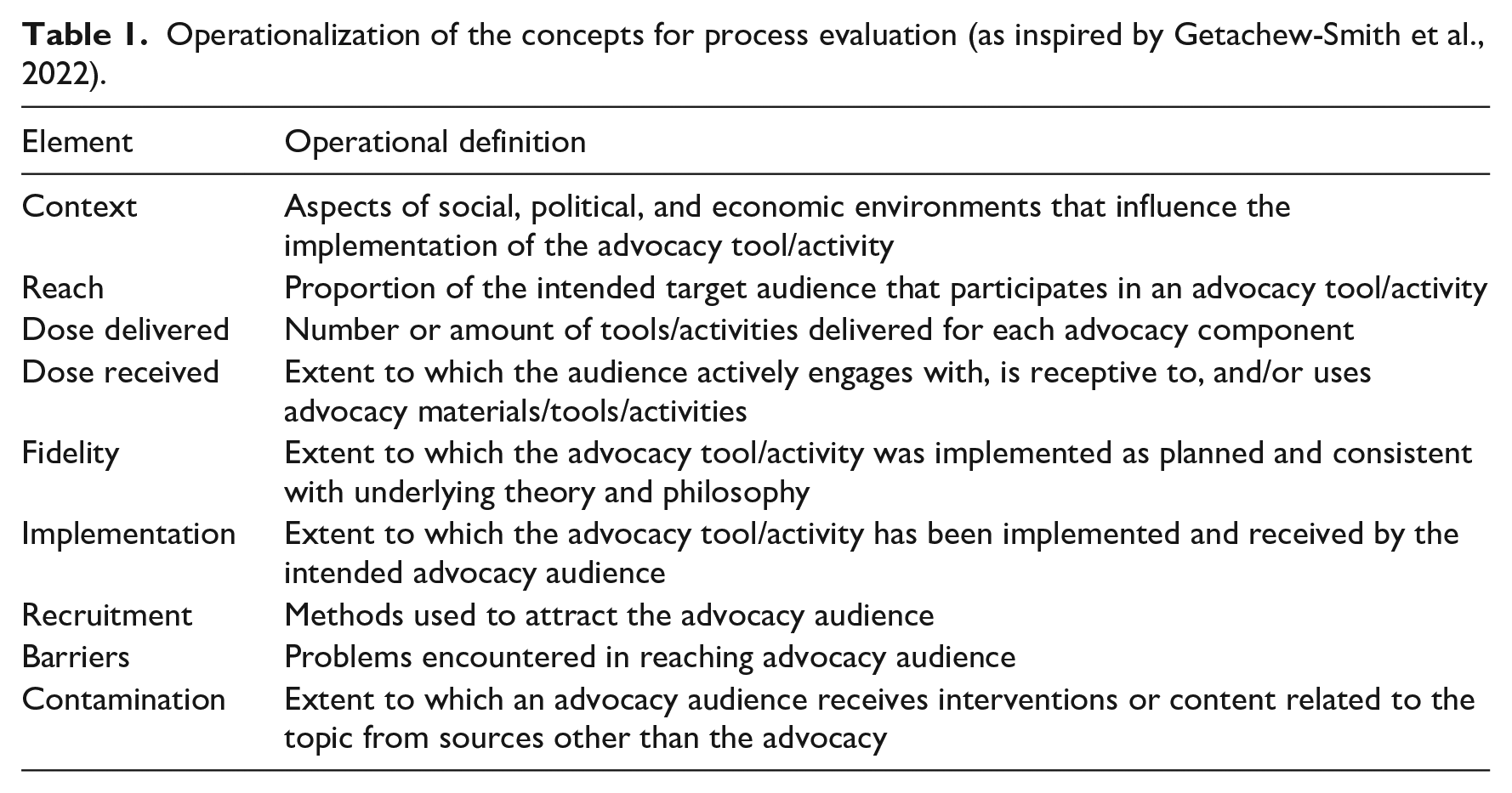

While many evaluators have their own approach to conducting process evaluations, a recent systematic literature review found an increasingly accepted core set of elements, brought together in what this article calls the Process Evaluation Framework (Getachew-Smith et al., 2022). This framework includes an assessment of the (program) context, reach, output, fidelity, implementation, recruitment, barriers, and contamination (Getachew-Smith et al., 2022). These concepts are operationalized for advocacy contexts in Table 1. Process evaluation methodology has successfully been applied to advocacy-related fields like health and communication campaigns (Linnan and Steckler, 2002; Saunders et al., 2005). Yet, to our knowledge, no process evaluation of an advocacy program has been published in academic literature.

Table 1. Operationalization of the concepts for process evaluation (as inspired by Getachew-Smith et al., 2022).

Incorporating personal reflections and participatory methods

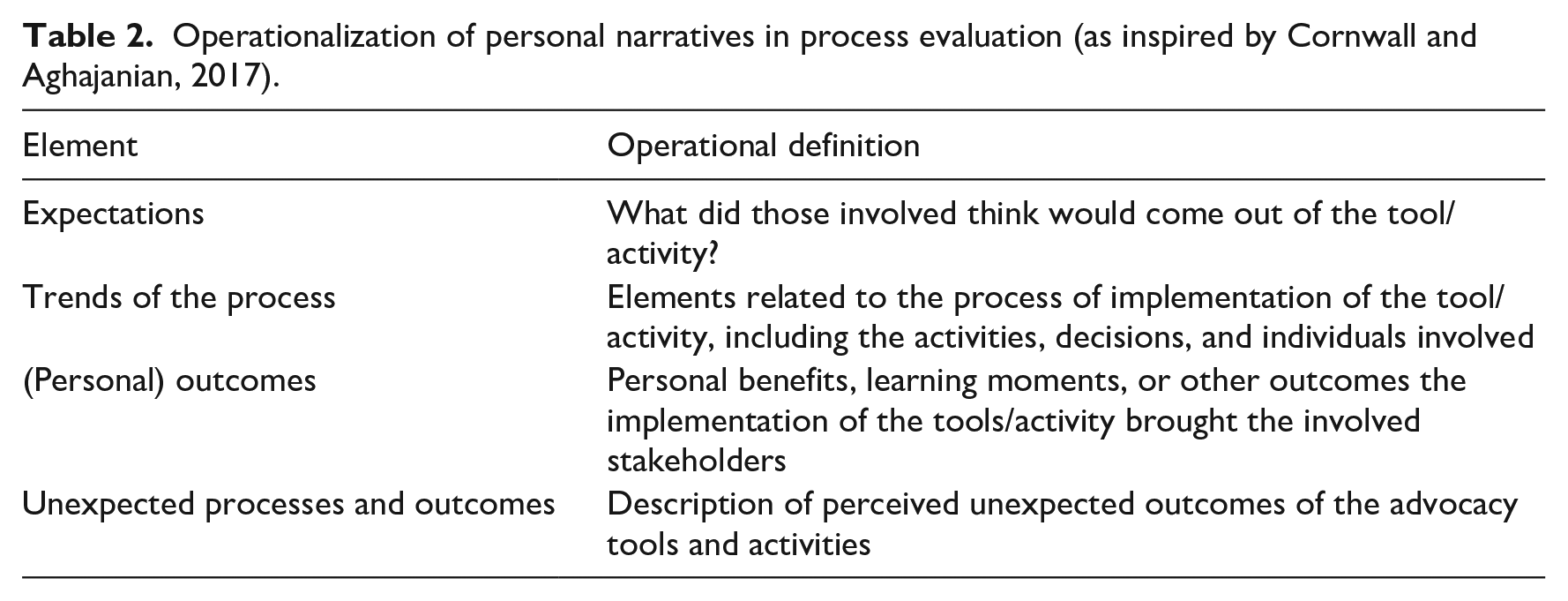

Advocacy cannot exist without advocates, who undertake skillful strategic actions within the context of their (organizations’) belief systems to bring about change (Luxon, 2019). Cornwall and Aghajanian (2017) describe questions regarding personal narratives in process evaluations that bring out the expectations, outcomes, and reflections of those involved. These concepts are operationalized for advocacy contexts in Table 2.

Table 2. Operationalization of personal narratives in process evaluation (as inspired by Cornwall and Aghajanian, 2017).

Participatory research methods can be deployed “to get to grips with the life of an intervention as it is lived, perceived, and experienced by different kinds of people, including program or project personnel” (Cornwall and Aghajanian, 2017: 174). In participatory studies, the end users of the products, in this case advocates, are part of the research process, for example, by defining research questions or disseminating findings (Israel et al., 2001; Mergler, 1987; Smith, 2007). These studies highlight the importance of reflection and learning from the research process and the findings, endorsing an LOA. This may also help to adjust evaluation methods and processes from an NGO’s perspective on applicability and feasibility. The NGO perspective is currently missing in academic literature, even though NGOs’ insights and experiences could add to the development of evaluation methods (Whelan, 2009).

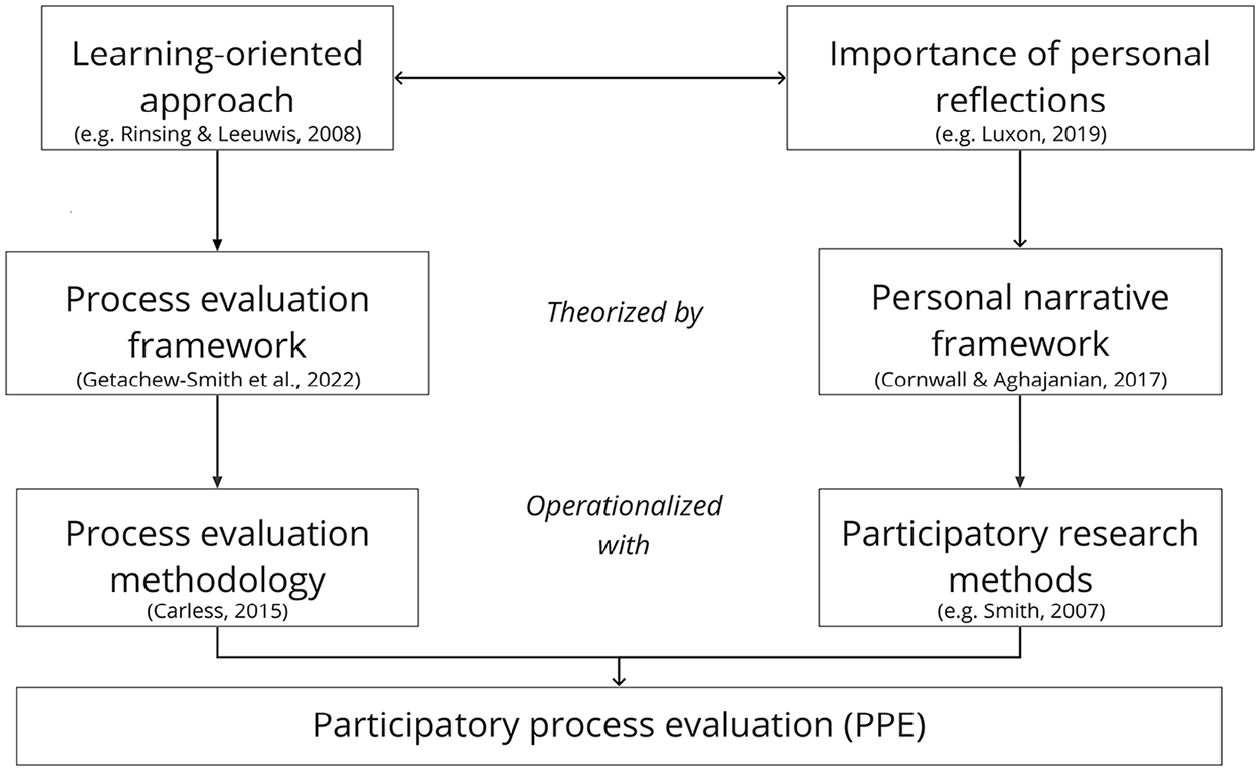

Participatory process evaluation

Combining process evaluation as a methodology to enhance LOA with the reflections of the advocates, this article proposes that PPE can be a useful method in advocacy evaluation (see Figure 1). PPE can provide valuable insights into the effectiveness of programs, particularly in complex and dynamic contexts where traditional evaluation approaches may be limited in their ability to capture the nuances of program implementation and impact. PPE emphasizes stakeholder engagement and collaboration in the evaluation process, with a focus on understanding the process of strategy implementation and the perspectives and experiences of those involved in the program. While several scholars have emphasized a focus on (internal) processes in advocacy evaluation (Arensman et al., 2015; Christoffel, 2000), no PPE in an advocacy context has been found in the published literature. While this conceptual orientation highlights the potential usefulness of PPE, it needs to be applied to a real-life context to bridge theory and practice.

Figure 1. Conceptualization of PPE methodology.

Methods

In this article, a case study of a global health advocacy program was used to further construct the PPE methodology as well as assess its applicability for advocacy strategies. Case studies allow for a holistic, in-depth investigation to understand a real-life phenomenon while taking contextual conditions into account (Smith, 2018; Tellis, 1997).

Cordaid is one of the Netherlands’ biggest international value-based emergency relief and development organizations. The Global Health Global Access (GHGA) program aims to increase Dutch support for official development assistance and, in particular, for global health (Cordaid, 2022a). With over 4 years of advocacy efforts, a range of activities and tools have been developed and implemented, including debates, policy briefs, and advocacy networks. In 2022, the GHGA team commissioned an evaluation to get further insights into strengths and weaknesses of their advocacy strategy (Cordaid, 2022b). This provided a window of opportunity to assess the utility of PPE methodology in an advocacy context and establish if the approach could be recommended for wider use in the NGO community, while contributing to GHGA’s goal to learn and improve. This PPE was divided into five phases, containing a logic and structure that suited the needs of the GHGA team.

Phase I: Preparation (February 2022)

The aim of the first phase was to obtain a general overview of the evaluation context and the responsible advocacy team. The evaluator got acquainted with the GHGA program by joining day-to-day team activities and conducting an initial document review. The team was divided into two groups. The Evaluation Coordinating Group (ECG), consisting of the program manager, the monitoring and evaluation expert, and the evaluator, ensured the overall coordination of the evaluation from beginning to end. The Evaluation Team (ET) consisted of 10 GHGA team members who were involved through interactive workshops and updated weekly.

Phase II: Prioritizing (March 2022)

As advocacy programs are usually extensive in scope, the success of evaluations relies on choosing priority areas (Glass, 2017). By selecting priority areas, evaluators obtain a deeper understanding of the program’s impact and outcomes, while managing time and resources effectively (Aubel, 1999). The ET was involved in the prioritization to ensure ownership and responsiveness of the evaluation to the needs of the team and to ensure that the priority areas were illustrative of the advocacy strategy of the program (Aubel, 1999). An online interactive workshop was organized to introduce PPE and discuss the purposes of the evaluation and expectations of the GHGA team. The workshop was analyzed using note-based analysis, meaning that outputs, a debriefing exercise, and summary comments made during the workshop were scrutinized (Onwuegbuzie et al., 2009). The workshop was attended by nine ET members, who created a list of eight tools and activities used in the program, which through prioritization was reduced to three: (1) three explanatory stop-motion videos on key concepts in global health; (2) a set of webinars on the impact of COVID-19 on the global health field; and (3) a desk review on multilateral funding organizations. All three had been created and organized by GHGA to increase support for global health among the Dutch public and policymakers. They were selected based on three criteria: they were representative of Cordaid’s advocacy work, were illustrative examples for learning, and carried significance for future strategy planning.

Developing Cordaid’s PPE framework

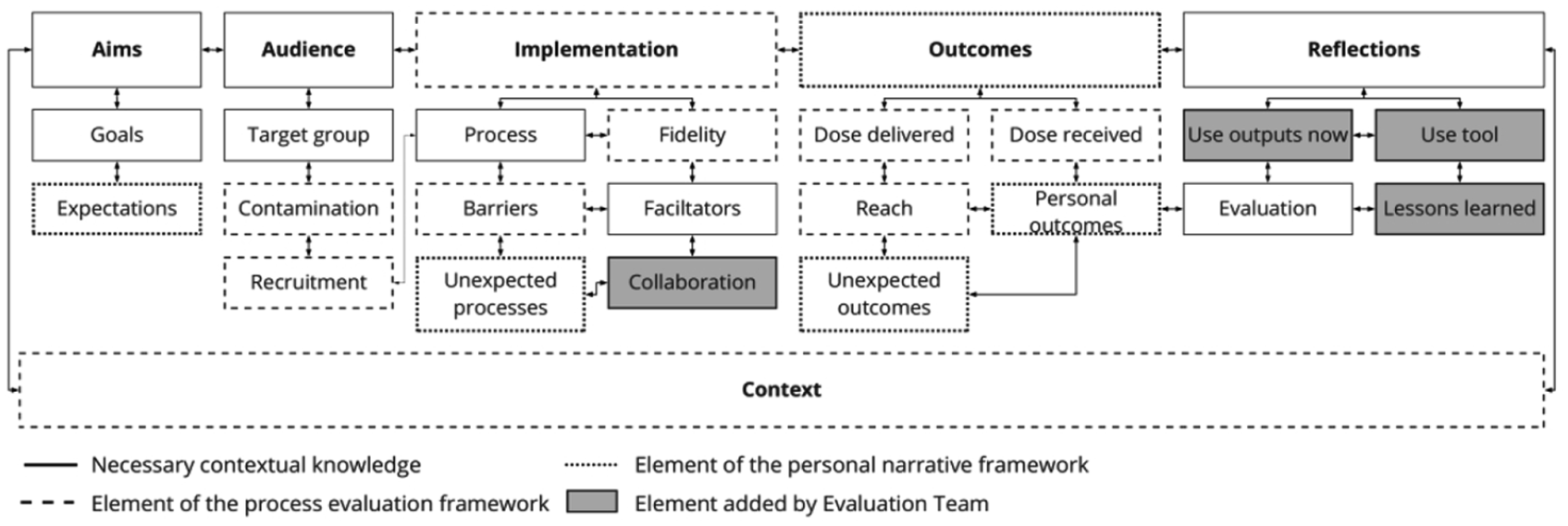

In addition, the workshop gathered input on a framework to guide the PPE. The evaluator created a “base framework” using the process evaluation framework (described by Getachew-Smith et al., 2022) and the personal narratives framework (described by Cornwall and Aghajanian, 2017). This framework consists of five key concepts from process evaluation theory. Aims focus on the goals and strategy of the advocacy set before the implementation of the tool or activity and on the expectations of those involved. Audience refers to the target group, the methods used to attract them, and the extent to which they are influenced by other advocacy groups. Implementation concerns the extent to which the tool or activity has been implemented and received by the intended advocacy audience. Outcomes focus on what, according to those involved, were the results at the output, individual, and strategy level. Finally, Reflections refer to how those involved personally and collaboratively evaluated the implementation and results. The five concepts are further broken down into sub-elements operationalized for the advocacy context, which can be found in Supplemental Table S1. The ET added another three elements: collaboration with other advocacy organizations, use of the selected tools today, and use of the type of tool or activity in the future. The framework is visualized in Figure 2.

Figure 2. Cordaid’s participatory process evaluation framework.

Phase III: Data collection and analysis (April–May 2022)

A document review was conducted to obtain more information on the selected tools and activities and to identify those involved in order to form a participant sample, using purposive and snowball sampling (Singh, 2018). In total, 21 semi-structured interviews were conducted, using an interview guide based on the PPE framework, with team members involved in the development, coordination, and execution of the tool or activity under study. Most had been part of the GHGA team over the last 4 years in the capacity of manager, advocate, communication officer, program officer, or health expert. Five members were interviewed twice due to their engagement in more than one tool or activity. Two participants from collaborating partners, who had developed or implemented a selected tool or activity with GHGA, were also interviewed.

All interviews were transcribed verbatim and anonymized in ATLAS.ti Version 22.0.2. First, themes were identified in the transcripts (Braun and Clarke, 2006), after which transcripts were analyzed for themes mentioned in the PPE framework. In addition, inductive analysis was performed through open, axial, and selective coding, in that order (Saldaña, 2014).

Participant observation and reflection

The evaluator embraced the skills and customs of the team and got a better understanding of the context by working at the Cordaid office, attending weekly meetings, and contributing to activities. Participant observation helped to acknowledge role(s), power relations, and the social dynamics woven into the research process (Pezalla et al., 2012). During this phase, she noticed that ET members who were not interviewed, because they had not been involved with the selected tools and activities, felt excluded. Therefore, informal meetings were scheduled to discuss their experiences with the program. This added to understanding what was going on, with whom, when and where, in what way, and for what reasons (Johnson et al., 2006).

The evaluator also reflected on her own positionality in the process. As a Dutch, young, white, highly educated woman about to complete her education in global health, she had prejudices, biases, and ideas. Using reflection questions, she attempted to map her positionality and address and communicate her motivations, ideas, and doubts (Bourke, 2014). Moreover, she kept a reflection journal to facilitate an LOA and increase transparency (Ortlipp, 2008).

Analysis of observational data was based on notes on what was observed in (in)formal conversations and activities (Kawulich, 2005). Dewalt et al. (1998) describe these notes as both data and analysis, as the notes are the product of the observation process during and directly after interaction with the research team.

Phase IV: Developing lessons learned (May 2022)

The fourth phase was designed to validate and discuss key findings of the PPE, guided by the PPE framework, and to collaboratively draw lessons learned. During data collection and analysis, the evaluator suspected that several findings could be taken personally by some ET members. For example, as many team members were personally involved and felt ownership of the tool or activity, it was uncertain how critique would be received, given that it came from direct colleagues. Therefore, additional measures were taken to maintain a positive learning atmosphere before, during, and after the workshop. First, the purpose of the workshop was discussed in several meetings to ensure team members shared an understanding of the workshop’s goal. In addition, a brief overview of the findings was shared in the last meeting before the workshop to allow participants time to formulate questions. Second, the workshop was in-person only, and drinks were scheduled afterward, creating a comfortable environment. Finally, because some participants seemed hesitant to share critical reflections, the workshop was not recorded, to lower barriers to voicing opinions.

Seven ET members attended the workshop, and all perceived the meeting as “nice,” “fun,” and “useful.” First, the main findings of the PPE were shared for each selected advocacy tool or activity, including barriers and facilitators during the implementation process, outcomes, and use of the outputs today and in the future. Participants were asked to validate these findings by answering questions based on four key criteria for trustworthiness of qualitative research (Shenton, 2004). While the credibility and transferability (the extent to which findings per tool or activity could be generalized to the program) of the findings were found to be high, the workshop supported a more elaborate contextualization of the findings. This was necessary as some tools and activities were implemented 3 years prior to the evaluation, and since then the onset of the global COVID-19 pandemic had led to a completely new advocacy context in which global health and pandemic preparedness became central topics within government policy. Overall, the confirmability of the findings (the extent to which participants agreed on the findings as a group) was high.

Based on the findings of the PPE so far, participants were asked to collaboratively identify overarching barriers and facilitators in the program and develop lessons learned that could guide their future strategy and practice. The workshop ended by deciding on what was needed to implement the lessons learned. The workshop was analyzed using note-based analysis, and the outcomes are further discussed in the “Results” section.

Phase V: Reflection and evaluation (June 2022)

The last phase consisted of a third interactive workshop with seven ET members to (1) evaluate and reflect on this PPE and (2) assess the potential usefulness of PPE for future advocacy strategies. The workshop was recorded and analyzed similarly to the other workshops. To finalize the case study, an evaluation report was created for Cordaid. Moreover, ET members were invited to contribute to the current article, which changed their role from a group role to an individual role in the writing process: listener, co-thinker, advisor, partner, or decision-maker, as suggested by the Involvement Matrix (Smits et al., 2020).

Results

This section presents and discusses core issues to consider when applying PPE, what PPE brings advocacy teams, and whether PPE can help to overcome advocacy evaluation barriers.

Applying PPE

All ET members indicated that they enjoyed the PPE process, committed to using the method again, and would recommend the transparent, interactive, inclusive, and cooperative process to other advocacy teams. From the viewpoint of the ECG, no changes were made to the planning and no major obstructions were encountered in the application of PPE in the GHGA team. The PPE framework was found to be a useful instrument to guide the evaluation and reflection process, promote structure, and discuss the main evaluation topics that led to lessons learned. However, five conditions were found to be crucial to consider, discuss, and arrange in an advocacy team when conducting a PPE, which are discussed below.

Trust

First, it was found that this type of study requires trust among team members as well as trust in the evaluator. This is because the PPE aimed, among other things, to reflect on elements that hindered the process, requiring participants to be critical of themselves and the team’s work. This proved difficult in some instances in the case study. During data collection, some interviewees were hesitant to share their more critical insights. For example, a participant mentioned: “I remember some things now, but I don’t know if I should tell them...” Moreover, in interviews many referred to other team members, stating that they were curious how those colleagues would reflect on the topic or arguing that they probably knew better. As the interviewed partners from collaborating organizations seemed to have fewer issues with providing critical insights, this hesitancy may relate to GHGA members being afraid to hurt each other’s feelings, which would harm not only the research process but also the working relationship and, thereby, the outcomes of the program. For this reason, the evaluator aimed to build trust within the team by contributing to the activities of the team and stressing confidentiality. When participants were asked what contributed to having trust in the team, they argued that they shared the same goals: improving the program and learning from each other.

Self-reflection

Several participants argued that the group’s capability and willingness for self-reflection facilitated this study. The way this PPE was constructed may have contributed to this: all participants were first interviewed individually, before discussing the findings as a group. Moreover, participants were always prompted positively. Finally, while the evaluator analyzed the data for the main findings per tool and activity, it was the team who analyzed these findings to identify the overarching barriers and facilitators for the program. Being able to reflect on an individual as well as a group level, while also contributing to the design of the evaluation itself, was found to set PPE apart from other advocacy evaluation methods. Moreover, it was argued that the fact that this was an internal evaluation, suggested by the team, helped participants to be more self-critical than they would have been if the evaluation had been done solely for donor-accountability purposes.

Nevertheless, this does not mean that self-reflection is easy. Many participants argued that interview questions were hard to answer due to the level of detail. Another reason was that participants felt ownership of the tools and activities under evaluation and felt a little embarrassed if they could not answer what they regarded as fundamental questions (e.g. Who was the target group?). Yet, this also helped participants to reflect on what they did not know (anymore) or what was not documented, as one noted that “it also sharpens me in some way.”

Setting

According to all participants, it was crucial to be aware of the setting in which PPE was implemented. Participants found that sharing the same geographical location and context facilitated the process. Moreover, participants experienced a non-hierarchical environment, which was found helpful. The fact that this evaluation was situated in the Netherlands was found to be a possible confounder in this. A participant from Latin America argued that in her hometown, being critical of the work of someone in a higher position is “not done.” Another factor that may be of influence is that the vast majority of GHGA team members identified as women. One participant argued: “It would be quite different for me as a young woman to speak up in a room of older men.” This also applies to the evaluator, who shared the same age range and gender.

However, aside from structural contextual factors, participants argued that the setting was also influenced by the setup of the PPE. Participants felt the safe space during the workshops was reinforced by, for example, using the Chatham House Rule to encourage openness, continuously repeating that the evaluation was about learning, framing the findings in a non-personal manner, and providing snacks to differentiate it from a formal meeting. Moreover, as the evaluator was part of the team, she was aware of what was (not) “normal” in the team and could step into the discussion more easily.

Understanding the purpose of PPE

An important reason for advocacy teams to conduct an evaluation is to assess the outcome and impact of their advocacy activities. PPE, however, starts from an LOA, examining how the program’s activities and tools have been implemented and how they have contributed to achieving the program’s goals. By focusing on the process of strategy implementation and stakeholder perspectives, the PPE methodology was found to provide a comprehensive understanding of the advocacy program and its gaps and opportunities for improvement, but not necessarily of the effectiveness and impact of the program. The ET argued that this evaluation created clear insights into the most important (and especially unexpected) outcomes of the tools and activities. With some tools and activities, they felt they could easily pinpoint their effect based on the PPE findings. One example was when, during one of the webinars, a Dutch Minister endorsed the value of Health System Strengthening, a topic GHGA had stressed for years. In other cases, however, the GHGA team found that PPE may not have provided them with enough information on impact. In an interactive workshop, the topic of knowledge on the effectiveness, outcomes, and impact of the tools and activities, and thereby of the program, was further discussed. “I think one of the problems with the term ‘effectiveness,’ is that we did not define it beforehand. How, then, are we going to measure effectiveness?” said one participant. Yet, even if effectiveness had been further defined throughout the advocacy program, another participant pointed out that “it’s our own internal perception of to what extent our tools have been effective or not.” The participants concluded that PPE can provide insight into the impact of an advocacy program, but that it does so indirectly by focusing on the team’s ways of working that underlie the program’s impact.
The PPE methodology was found to help advocates identify whether their strategies and tools have been implemented as intended, whether they have been effective in achieving their goals, and how they have been perceived by stakeholders. This information can then be used to refine and improve the program’s strategy, increase effectiveness, and improve outcomes and impact. In conclusion, while PPE may not provide definitive evidence of impact on its own, it can help teams (1) to learn more about the implementation processes and the team, and (2) to become motivated to change ways of working.

In her reflection journal, the evaluator concluded that in future PPEs, further attention needs to be paid to explaining the purpose and possible outcomes of PPEs in advocacy programs. When the ET was asked how to operationalize this, participants argued it would be helpful if there was a manual they could follow. Due to the pioneering nature of this study, no such manual existed. Therefore, the ET endorsed the current article and opted to co-write it, as it could also serve as a manual for other advocacy teams conducting PPE.

Resources

PPE requires a considerable time investment from participants, and some experienced this as a barrier. While many argued that it was worth it, it was hard to schedule enough time for these efforts. During the evaluative workshop, it was discussed whether and how the PPE could be shortened, but all participants agreed that this would be counterproductive.

In addition, as this research was part of a graduate study, costs were low, but if an external evaluator were contracted for the same time investment, PPE could become expensive for NGOs. A participant argued that less intensive involvement of the evaluator in the team could help reduce costs, but also stressed that the approach in this study was beneficial.

What does PPE offer to advocates?

To assess what PPE can offer advocacy programs, this section sheds light on the outcomes and outputs of the PPE study in the GHGA team.

Insights into barriers and facilitators

For the GHGA team, PPE provided insights into the implementation of advocacy tools and activities, and, thereby, outcomes. Facilitators included being adaptive and flexible in seizing (political) opportunities; having a strong, productive, and close team; having a flexible donor; having good links and partnerships with politicians and other NGOs; and being able to reflect critically on processes and outcomes. Barriers included a lack of strategic long-term planning, lack of follow-up after an activity or publication of a tool, lack of evaluation, and not having activities, tools, and communication aligned. The assessment of specific tools and activities employed in the advocacy process facilitated the identification of valuable lessons that can be applied in general strategy planning. In the case of GHGA, 12 lessons learned were developed by the team to strengthen the program.

Reflection on ways of working

Overall, all tools and activities under evaluation were found to contribute to the goals and progress of the GHGA program. Yet, for all tools and activities, participants argued that they could have been more effective if adjustments had been made in the implementation process. For example, in creating the explanatory videos on global health, more research on the target group might have improved engagement. When discussing the reasons behind this observation, the ET found that the program was mainly focused on “completing” the task of organizing an advocacy activity or creating a tool, as was planned in the proposal for the donor.

PPE was found helpful in rethinking the way an advocacy team collaborates; one participant argued that the PPE helped her to reflect on opportunities to improve. In addition, the ET members pointed out that the reflection, openness, and trust helped to determine not only the strengths of the individual team members, but also how they could complement each other.

Tools to improve

Finally, it was argued that PPE leads to concrete and practical outputs, which can be used to improve the day-to-day work of an advocacy team. One of the lessons learned was that it is crucial to write down advocacy targets and goals before starting implementation and to continue with (self-)reflection on the produced activities and tools. Therefore, a short format for planning and evaluating the strategy for the tool or activity was co-developed by two ET members and the evaluator. The format was discussed with the ET and is now regularly used by Cordaid.

Another lesson learned was that the team should take more time to assess the progress of the program. Therefore, it was decided to schedule a “midterm review” every 6 months to reflect on the original planning and executed advocacy activities and their effects, while looking at the coming period. The outline for this review was co-created by two ET members and the evaluator, and tested in June 2022. At the end of the PPE, all members argued that they (will) convert the insights and outputs of this study into practice. One participant concluded that PPE not only led to clear outputs, such as formats and outlines, but also created “new ways of thinking about solutions.”

PPE and barriers to advocacy evaluation

Finally, this study aimed to assess whether PPE can help to overcome some of the earlier mentioned challenges to advocacy evaluation. First, the challenge of limited resources for evaluation cannot be overcome by PPE, because the methodology is time-intensive. Yet, the ET argued that the outcomes of PPE, namely a stronger team and outputs to improve, may also be cost-saving and lead to better outcomes in the long term.

Second, the often-experienced hesitancy of NGOs to evaluate advocacy was not observed in this PPE. Due to the participatory methods, the focus on internal processes, and the experienced sense of ownership, the ET was motivated to co-conduct this evaluation. However, some hesitancy to share the findings of the evaluation in this article did occur.

Third, looking at the methodological challenges, no problems with data collection were found, as participants were motivated to contribute. Regarding the difficulty of determining the relationship between intervention and outcome, one could state that PPE allows making statements about the relation between the implementation and the outcome of a tool or activity. However, PPE cannot independently prove the impact of advocacy efforts and therefore cannot overcome the methodological challenge of attributing change to one organization’s actions. Yet, some participants argued that focusing on the process “gives us a different approach to how we look at an evaluation for lobby and advocacy.” Turning to the constantly shifting context that is often hard to incorporate in evaluation, PPE was found not only to incorporate context through data collection and reflection, but also to allow a flexible approach to evaluation. However, as participants had difficulties remembering all details, it is possible that not all contextual factors were optimally incorporated. In future PPEs, this can be countered by focusing on more recent tools and activities.

Discussion

Given the many challenges advocacy evaluations bring, this study aimed to present, test, and reflect on whether PPE can overcome some of these challenges. In the case study of Cordaid’s global health advocacy program, PPE was found to help advocates to better understand the barriers and facilitators of their implementation processes and adjust their strategies accordingly. These findings are in line with process evaluations in other contexts (Getachew-Smith et al., 2022), with PPE being adaptable to the unique needs of an advocacy team. This was enhanced by the stakeholder involvement in the study, which motivated advocates to reflect on their role, work, and knowledge as individuals and as a team; according to Law et al. (2016), such reflection is crucial for health advocates to grow in their role. Moreover, the LOA helped GHGA to develop lessons learned, create clear outputs to implement these, and encourage advocates to change their way of working. On a more academic level, this study added to the existing knowledge by theorizing and applying a novel approach in the challenging field of advocacy evaluation. Moreover, this article aimed to present the often-missing NGO perspective on advocacy evaluation.

While this article shows that PPE is applicable in an advocacy context and that PPE can help overcome some of the issues advocacy evaluations face, it is important to note that the described case study was not without challenges and that the advocacy evaluation field still faces obstacles. Some of the suggestions on how to overcome these difficulties are already discussed in the “Results” section as they were proposed by the ET, but the remainder of the “Discussion” section presents further interpretations, insights, and conclusions.

Learning how to conduct a PPE

While PPE incorporates learning and reflection throughout the evaluation, it is important to note that conducting this PPE is a learning process in itself. Throughout the study, the evaluator reflected, doubted, struggled, adjusted the study, and learned. It took time to understand what, for example, a safe space looked like for the GHGA team, and how the team communicated, shared emotions or feelings, and was activated to be involved.

Moreover, it made the evaluator question to what extent she should adapt herself to the ways of the team and to what extent she should adhere to her own safe space and manners. Literature on grassroots advocacy emphasizes the need for evaluators to respect the culture of the organization they are assessing (Foster and Louie, 2010), but this study argues that it is about more than respect; evaluators need to understand that culture. This may be even more important in advocacy teams, where the context and priorities, and thereby the motivation for and focus on the PPE, constantly change. The positionality of evaluators is an important topic, yet little is published on positionality within an advocacy evaluation context. Therefore, evaluators are encouraged to share more insights into their personal experiences and development in these processes to enhance mutual learning.

Toward an advocacy evaluation toolbox

PPE can help overcome challenges in advocacy evaluation related to the hesitancy of NGOs to conduct an evaluation, data collection problems, and the incorporation of the context in evaluation (design). Yet, it cannot independently determine the effectiveness of advocacy tools, nor the impact of the program. Including the perspectives of advocacy audiences or external stakeholders may create a better insight into the impact of the program, but can also be challenging to add to the PPE due to concerns about oversharing strategies. Studies using an outcome-oriented approach are found to shed more light on the impact of programs (Jones, 2011; Klugman, 2011), but also, as explained before, have their own limitations. To address all of the barriers mentioned above, one could consider combining elements of an outcome-oriented approach and an LOA. Hypothetically, this is a unique and powerful combination of approaches. For example, PPE can be criticized for having too little focus on how the implementation of tools and activities may lead to impact of the program. Using PPE in conjunction with the more outcome-oriented ToC, for example by explicitly formulating the ToC with PPE participants during one of the workshops, may help explain the logical steps toward outcomes of an advocacy initiative, the relationships between these steps, and the underlying assumptions and contextual factors (Ghate, 2018). The combination of PPE and the ToC may help overcome the critique that ToC focuses too little on the process (Arensman et al., 2018) and can potentially provide insights into often neglected negative outcomes (Harada, 2020). Yet, further research is needed to assess the applicability of a combination of approaches in different advocacy settings.

Continuing on adapting evaluation methods, it is important to note that advocacy is constantly evolving, and the methods used to evaluate advocacy programs should do the same—including during the evaluation (Glass, 2017). In the case of PPE, the design allows flexibility and adaptation to the goals, strategies, and focus of an advocacy team responding to the context it is situated in. Moreover, PPE is moldable to the team’s needs and ways of working. Customization is needed to ensure relevance, as “it is unlikely that useful prospective tools will ever be ‘off the shelf’ materials” (Gienapp, 2011: 15). So, while PPE worked for this team and this field, it should be applied to more cases in different advocacy contexts to further explore applicability and usefulness of this method.

Thus, moving beyond either an LOA or an outcome-oriented approach to advocacy evaluation and considering that evaluations should adapt to the advocacy context and team, this article suggests thinking about advocacy evaluation methodology as a toolbox. This toolbox presents a variety of methods, each with its own strengths and flaws. This study aimed to contribute to this toolbox by introducing this novel evaluation approach and demonstrating how it can be applied in practice. Advocacy evaluators are encouraged to use, combine, and develop methods in this toolbox to ensure continuous learning.

Conclusion

This study tested a PPE for evaluating advocacy programs to overcome challenges encountered in advocacy evaluation. PPE was found to be a successful approach for Cordaid’s global health advocacy program, helping to address challenges such as data collection and context incorporation. However, challenges in determining tool effectiveness and program impact, as well as resource constraints, remain. Further research is needed to explore PPE’s application in other contexts and improve evaluation methods for advocacy.

Supplemental Material

sj-docx-1-evi-10.1177_13563890231200057 – Supplemental material for Understanding the what, how, and why in advocacy: Assessing the applicability of participatory process evaluation methodology in an advocacy context

Supplemental material, sj-docx-1-evi-10.1177_13563890231200057 for Understanding the what, how, and why in advocacy: Assessing the applicability of participatory process evaluation methodology in an advocacy context by Bente van Oort, Hilda van ‘t Riet, Adriana Parejo Pagador, Rosana Lescrauwaet Noboa and Carolien Aantjes in Evaluation

Supplemental Material

sj-docx-2-evi-10.1177_13563890231200057 – Supplemental material for Understanding the what, how, and why in advocacy: Assessing the applicability of participatory process evaluation methodology in an advocacy context

Supplemental material, sj-docx-2-evi-10.1177_13563890231200057 for Understanding the what, how, and why in advocacy: Assessing the applicability of participatory process evaluation methodology in an advocacy context by Bente van Oort, Hilda van ‘t Riet, Adriana Parejo Pagador, Rosana Lescrauwaet Noboa and Carolien Aantjes in Evaluation

Footnotes

Acknowledgements

The authors thank all participants and Evaluation Team members, in particular Fenneke Hulshoff Pol and Paul van den Berg, for their contributions to the project.

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: At the time of this study, BvO was an intern at Cordaid as part of her training in research master in Global Health, Vrije Universiteit Amsterdam. This study was part of her master thesis.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
