Abstract

The use of multi-agency partnerships, including research-practice partnerships, to facilitate the development, implementation and evaluation of public health interventions has expanded in recent years. However, gaps remain in the understanding of influences on partnership working, and their capacity to facilitate and use evaluation, as well as the characteristics which lead to partnership effectiveness. We applied qualitative methods to explore experiences of stakeholders who were involved in partnerships to deliver and evaluate a national physical activity programme. We combined thematic and network analysis, and drew on concepts of evaluation use, knowledge exchange and organisational systems to interpret our findings and develop a conceptual model of the relationships between partnership characteristics and processes. Our model identifies key partnership characteristics such as high levels of engagement, regular communication and continuity. Furthermore, it highlights the importance of implementing organisational structures and systems to support effective partnership working, knowledge exchange and capacity building.

Background

As our understanding of the wider determinants of health behaviours has grown, there has been increasing appreciation of the need for multi-agency and multi-component approaches to address complex public health challenges such as increasing population levels of physical activity (Rutter et al., 2019). Examples include interventions that aim to address multiple influences on behaviour through adopting a range of modes of delivery and intervention functions, such as environmental restructuring alongside education (Michie et al., 2011). As a result, there has been an expansion of cross-sector and inter-organisational partnerships to facilitate intervention development, implementation and evaluation. These include partnerships between physical activity providers and health organisations (Cavill et al., 2020; Daniels et al., 2018; Mansfield, 2016). In parallel, demands for evidence-informed interventions have driven increasing interest in research-practice partnerships, which bring researchers together with practitioners responsible for programme delivery. These partnerships provide opportunities for collaborative approaches to address complex health behaviours and to understand the implementation and effectiveness of complex interventions (Estabrooks et al., 2019; Harden et al., 2017). While this study takes a national physical activity intervention as a case study, the findings are likely to be applicable to interventions in other domains that operate in similar multi-agency contexts.

Evidence-informed policy and practice relies on evaluation, dissemination and ‘evaluation use’ (Bowen and Zwi, 2005; Brownson et al., 2014). Definitions of ‘evaluation’ typically highlight the assessment or appraisal of an activity, project or programme to provide accountability and facilitate learning for future practice, while also recognising the importance of evaluation as a process and of understanding its purpose and users (Weiss, 1998; World Health Organization, 2013). Dissemination is the process of communicating findings in ways that will facilitate their use in practice (Wilson et al., 2010), and knowledge exchange, or the transfer of knowledge into action, is central to this. Following Alkin and King’s conceptual model (Alkin and King, 2016, 2017), the term ‘evaluation use’ includes both the use of evidence generated (findings use) and the effects of being involved in evaluation (process use). Their typology of evaluation use provides a framework to differentiate between the source and stimulus for use (findings or process) and how the evaluation has been used: to inform direct actions (instrumental use), to improve knowledge or change attitudes (conceptual use), or to justify decisions and actions (symbolic use) (Alkin and King, 2016). Alkin and King also note that a broad definition of evaluation use incorporates the influence of an evaluation on wider systems (Alkin and King, 2017). For consistency with the evaluation literature, we have applied these terms in our descriptions of evaluation use.

Research-practice partnerships (referred to as ‘partnerships’, hereafter) have been advocated as an approach to facilitate evidence-based practices (Brug et al., 2011; Harden et al., 2017; Mansfield, 2016; Nyström et al., 2018). Engagement of practitioners and policymakers in an evaluation can improve understanding among researchers of what evidence is relevant and valued for decision-making in a real-world context, while engagement of research partners can bring knowledge and expertise to help identify and implement appropriate and innovative evaluation methods, and improve the rigour of evaluation (Brug et al., 2011; Page-Reeves and Regino, 2018). Furthermore, dissemination and evaluation use can be improved by research partners’ understanding of the appropriateness of evidence for academic publication; this has the potential to increase the likelihood that evidence is taken up and used to inform policy and practice decisions (Harden et al., 2017). Yet, despite this potential, gaps between evaluation, knowledge exchange, and evidence use in organisations responsible for the design and delivery of health interventions continue to limit institutional learning and lead to unnecessary cycles of programme re-invention (Brownson et al., 2018). A key challenge is that we do not understand well how partnerships can be shaped and implemented to improve practice.

Studies that have explored partnership working within physical activity and health promotion interventions have identified several benefits and challenges (Brug et al., 2011; Habicht et al., 1999; Harden et al., 2017; Mansfield, 2016; Nyström et al., 2018; Page-Reeves and Regino, 2018; Schwarzman et al., 2018). Benefits include the generation of practice-relevant evidence, capacity building, improved implementation of evidence-based practices and access to additional funding and resources (Harden et al., 2017). Challenges include differing evaluation priorities and objectives, time scales, and organisational systems and cultures (Bowen and Zwi, 2005; Schneider et al., 2016). Different stakeholders’ demands for evaluation, the value they place on different forms of evidence, and how partners interact to implement appropriate evaluation methods within certain contexts influence the capacity to conduct and use evaluation (Alkin and King, 2017; Cousins et al., 2014). Indeed, models of evaluation and evaluation use have focused on capacity building at the organisational level (Amo and Cousins, 2007; Cousins et al., 2014; Preskill and Boyle, 2008). Labin et al.’s integrative evaluation capacity model (Labin et al., 2012) highlights the importance of collaborative processes.

Collaboration and partnerships are defined variably but are often presented as a continuum from networking (described as a more distal loose relationship), through co-ordination and co-operation, to collaboration, where collaboration is framed as true, reciprocal partnerships, in which all stakeholders influence activities, share resources and experience mutual benefits (Carnwell and Carson, 2005; Saltiel, 1998). We have used the term ‘partnership’ to encompass the range of relationship types embedded within that continuum. We have applied the term ‘partnership working’ to describe any context in which two or more actors interact for the purposes of their work (in this case, project design, delivery and/or evaluation). The term ‘network’ has been used to describe the set of relationships (or links) between actors (Hanson et al., 2008; Kothari et al., 2014).

Previous studies (Schneider et al., 2016; Schwarzman et al., 2018), including our own (Fynn et al., 2021), have highlighted the complex interconnections between influences on partnership working and evaluation practices. These studies identified limitations in the empirical evidence and gaps in our understanding of organisational structures and processes within multi-agency partnerships (Page-Reeves and Regino, 2018; Schwarzman et al., 2018). Questions remain regarding influences on partnership working and partnership effectiveness, the value of being involved in partnerships to different stakeholders, and how partnerships may influence the capacity to conduct and use evaluation (Cousins et al., 2014; King and Alkin, 2019; Mansfield, 2016). Similarly, studies that have described models of evaluation use (Alkin and King, 2017; Cousins et al., 2014; Labin et al., 2012) and knowledge exchange (Mitton et al., 2007; Ward et al., 2009) have highlighted the lack of an evidence base for understanding these processes. If organisations are to initiate and implement collaborative practices that are effective and sustainable, research that takes an inter-disciplinary approach is needed to better understand evaluation practices and information flow between partners (Amo and Cousins, 2007; Cousins et al., 2014; Preskill and Boyle, 2008; Schwarzman et al., 2018).

To address these gaps, we explored the experiences and perceptions of stakeholders who were involved in partnerships to develop, implement and/or evaluate a national physical activity programme. The Get Healthy Get Active (GHGA) programme (Cavill et al., 2016) was designed and funded by Sport England, the agency in England with primary responsibility for developing grassroots sports and getting more people active (Sport England, 2014, 2021). Through the GHGA programme, Sport England funded a portfolio of 33 projects: 31 within two funding rounds and two invited projects. Projects were designed, implemented and evaluated through various multi-agency partnerships (Fynn et al., 2021) and were delivered in communities across England between 2013 and 2018 (Cavill et al., 2016; Sport England, 2014).

All projects funded through the GHGA programme aimed to increase physical activity in the most inactive adults and to generate evidence of the role of sport in improving physical activity and health. Projects differed in their target populations, secondary objectives and approaches to partnership working and project implementation. The programme was chosen for this study, first, as it exemplifies the multi-agency and partnership approach increasingly prevalent in public health interventions, and second, because all lead organisations of funded projects were required by Sport England to engage an independent evaluation partner. We explored specific themes related to partnership working and evaluation practices using data generated through stakeholder interviews to advance understanding of how partnership working can best be implemented to improve public health programme evaluation practice.

Objectives

1. To identify the partners involved in the evaluation of a multi-agency intervention, and the roles of these partners.

2. To explore how different stakeholders perceived and described the partnerships and their influence on evaluation.

3. To explore how different stakeholders involved in evaluation partnerships described the use made of the evaluation by themselves, their organisations or partners.

4. To apply the findings from objectives 1 to 3, to develop a conceptual model of how characteristics of partnerships may be associated with knowledge exchange and the capacity to conduct and use evaluation.

Method

This study used data collected for a broader case study that we reported in detail elsewhere (Fynn et al., 2021). We conducted thematic content analysis (Hsieh and Shannon, 2005) of data from semi-structured interviews to identify partnerships, and themes related to stakeholders’ experiences and perceptions of those partnerships, the evaluation process and evaluation use. We combined this with network analysis (Borgatti et al., 2020) to describe the links between partners and the ‘whole network’ (Kothari et al., 2014), and to produce a visual representation of connections in the form of a network map. We then adopted an inter-disciplinary approach to draw upon concepts of evaluation use and organisational systems (Alkin and King, 2016, 2017; Amo and Cousins, 2007; Cousins et al., 2014) to help interpret our findings and to better understand the types of relationships within the network and their influences on evaluation and evaluation use. Ethical approval was obtained from the University of East Anglia Faculty of Medicine and Health Sciences Research Ethics Committee (REF: 201718-133). Permission to conduct the research was received from Sport England.

Study sample

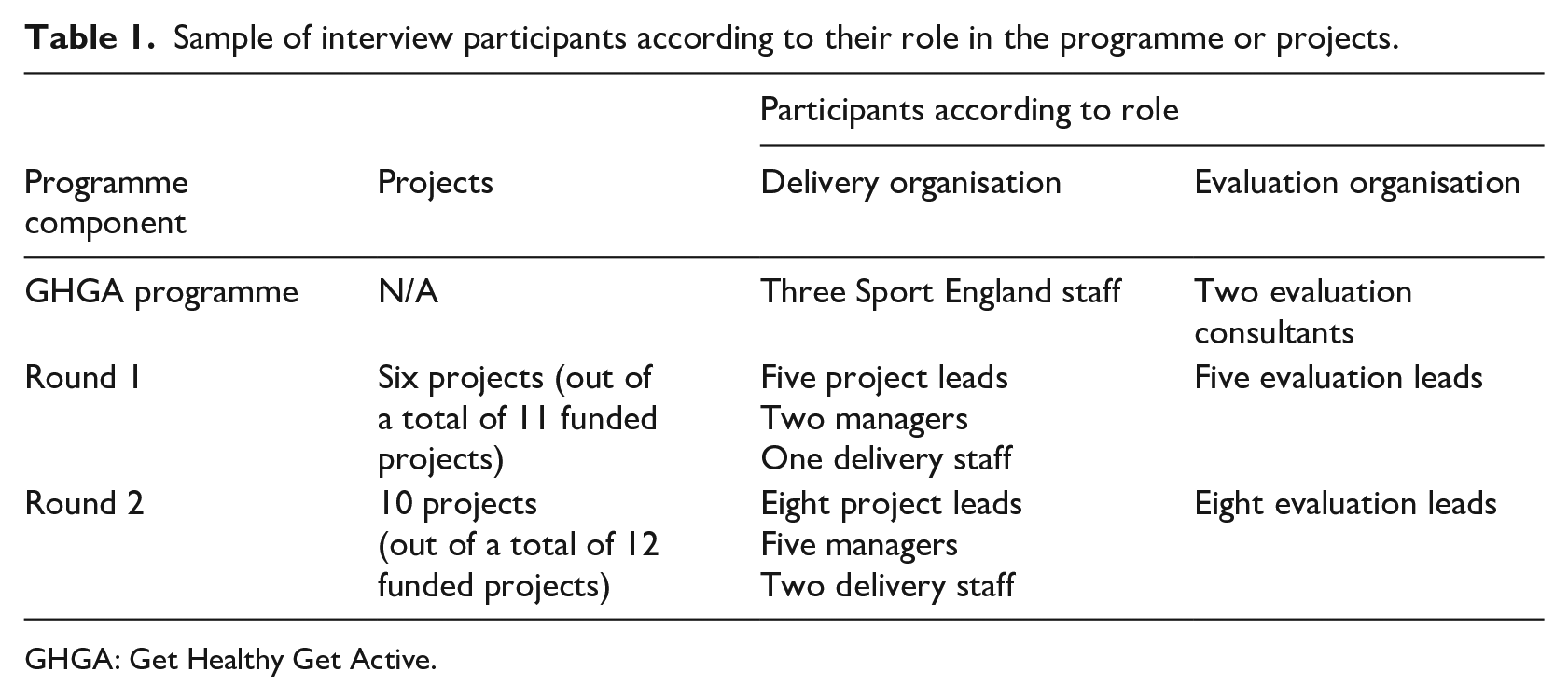

We combined purposive and snowball sampling to identify stakeholders involved in the design and/or evaluation of projects or the overall programme. Organisations and stakeholders named as either the project lead or evaluation lead were identified from evaluation reports and documentation that had been shared with us. We contacted stakeholders directly through email or telephone to invite them to participate in an interview. Participants were asked during the interview to suggest other partners whom they felt it would be useful for us to interview. We continued sampling until we had a sample that was representative of projects across the two funding rounds of the programme, different organisation types and stakeholder roles. Some stakeholders had multiple roles within the projects and programme; for example, some evaluators were involved in evaluating multiple projects, and two were involved at both project and programme levels. Table 1 shows the final sample, which included a total of 35 stakeholders; 31 had a role in design, delivery and/or evaluation of one or more local projects representing 16 of the 31 funded projects. Five had played a role in either the design, funding and/or evaluation of the GHGA programme.

Table 1. Sample of interview participants according to their role in the programme or projects.

GHGA: Get Healthy Get Active.

Data collection

Thirty-five interviews were conducted and audio recorded by the lead author (JF) between May and December 2019. Interviews lasted an average of 46 minutes (range: 25–86 minutes). The topic guide was sent to participants in advance to facilitate stakeholder reflection prior to the interview. This included questions that asked them to reflect on their experiences of partnership working and its influence on the evaluation, and their perceptions about how the evaluation had been used by themselves or their organisation(s) (see Supplemental Table S1). Interviews took place over Skype, by telephone or face to face, and one participant responded through email. Interviews were transcribed verbatim, and each transcript was given a unique identifier to de-identify stakeholders and then uploaded into the NVivo12Pro software for analysis.

Data analysis

Transcripts were read multiple times to allow familiarisation. To identify partners involved in the project and programme evaluation (objective 1), we applied principles of network analysis. First, we coded each interview transcript and each project as a separate ‘case’ within NVivo12Pro. Second, we coded any named individuals, groups or organisations that were mentioned in the content of the transcripts as being involved in the programme or project evaluation as additional ‘cases’. To de-identify individuals and organisations, each of these was also given a unique participant number.

Details of the projects, individuals and organisations were then exported into an Excel spreadsheet for further analysis. Individuals were grouped at the organisational level to minimise the risk of identification. These were coded as organisational types to describe the key attributes of each partner; for ease of interpretation, these were then grouped into broader sector-based categories (Health, Sport, University and Other). ‘Other’ included public, private and third-sector organisations. Each ‘case’ was also coded by role (Funder, Lead Organisation, Evaluator, Delivery Partner, External Partner). The code ‘delivery partner’ included any partner engaged in project recruitment, implementation or evaluation who was identified as playing a role in the evaluation; ‘external partner’ included those identified as being connected to, but not directly involved in, the project or programme evaluation.

We created a Microsoft Excel spreadsheet from these coded cases to list the different partners (cases) along with their coded sector and role; this information was imported into UCINET (Borgatti et al., 2020) to generate the nodes. In a separate Excel spreadsheet, we identified the connections between partners from the thematic analysis of the reported descriptions of the projects and the interview data; this was imported into UCINET to generate the links between nodes to produce a visual representation depicting the network of partners included in our sample.
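The two spreadsheets described above (a node table of partners with their coded sector and role, and a link table of connections) can be illustrated with a minimal sketch. This is not the UCINET workflow used in the study: it uses plain Python, and every partner identifier, sector, role and link below is hypothetical and invented purely for illustration. The function names are likewise assumptions. The sketch builds the network from the two tables, computes a simple degree centrality, and flags partners of a given role that are tied to more than one lead organisation.

```python
# Hypothetical node table: partner id -> (sector, role). Not study data.
NODES = {
    "P01": ("Sport", "Funder"),
    "P02": ("Sport", "Lead Organisation"),
    "P03": ("University", "Evaluator"),
    "P04": ("Health", "Delivery Partner"),
    "P05": ("Other", "Lead Organisation"),
    "P06": ("Other", "Delivery Partner"),
}

# Hypothetical link table: undirected ties between partners. Not study data.
LINKS = [
    ("P01", "P02"),
    ("P01", "P05"),
    ("P02", "P03"),
    ("P02", "P04"),
    ("P05", "P03"),
    ("P05", "P06"),
]


def build_adjacency(nodes, links):
    """Return {partner: set of directly connected partners}."""
    adjacency = {node: set() for node in nodes}
    for a, b in links:
        # Reject links that mention a partner missing from the node table.
        if a not in nodes or b not in nodes:
            raise KeyError(f"link ({a}, {b}) refers to an unknown partner")
        adjacency[a].add(b)
        adjacency[b].add(a)
    return adjacency


def degree_centrality(adjacency):
    """Share of the other partners that each partner is directly tied to."""
    n = len(adjacency)
    if n < 2:
        return {node: 0.0 for node in adjacency}
    return {node: len(ties) / (n - 1) for node, ties in adjacency.items()}


def shared_partners(adjacency, nodes, role):
    """Partners with the given role tied to more than one lead organisation."""
    shared = []
    for node, ties in adjacency.items():
        if nodes[node][1] != role:
            continue
        leads = [t for t in ties if nodes[t][1] == "Lead Organisation"]
        if len(leads) > 1:
            shared.append(node)
    return sorted(shared)
```

With this toy data, the two lead organisations have the highest degree centrality, and `shared_partners(build_adjacency(NODES, LINKS), NODES, "Evaluator")` picks out the one evaluator that links two projects. A dedicated network package would normally be used for visualisation.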

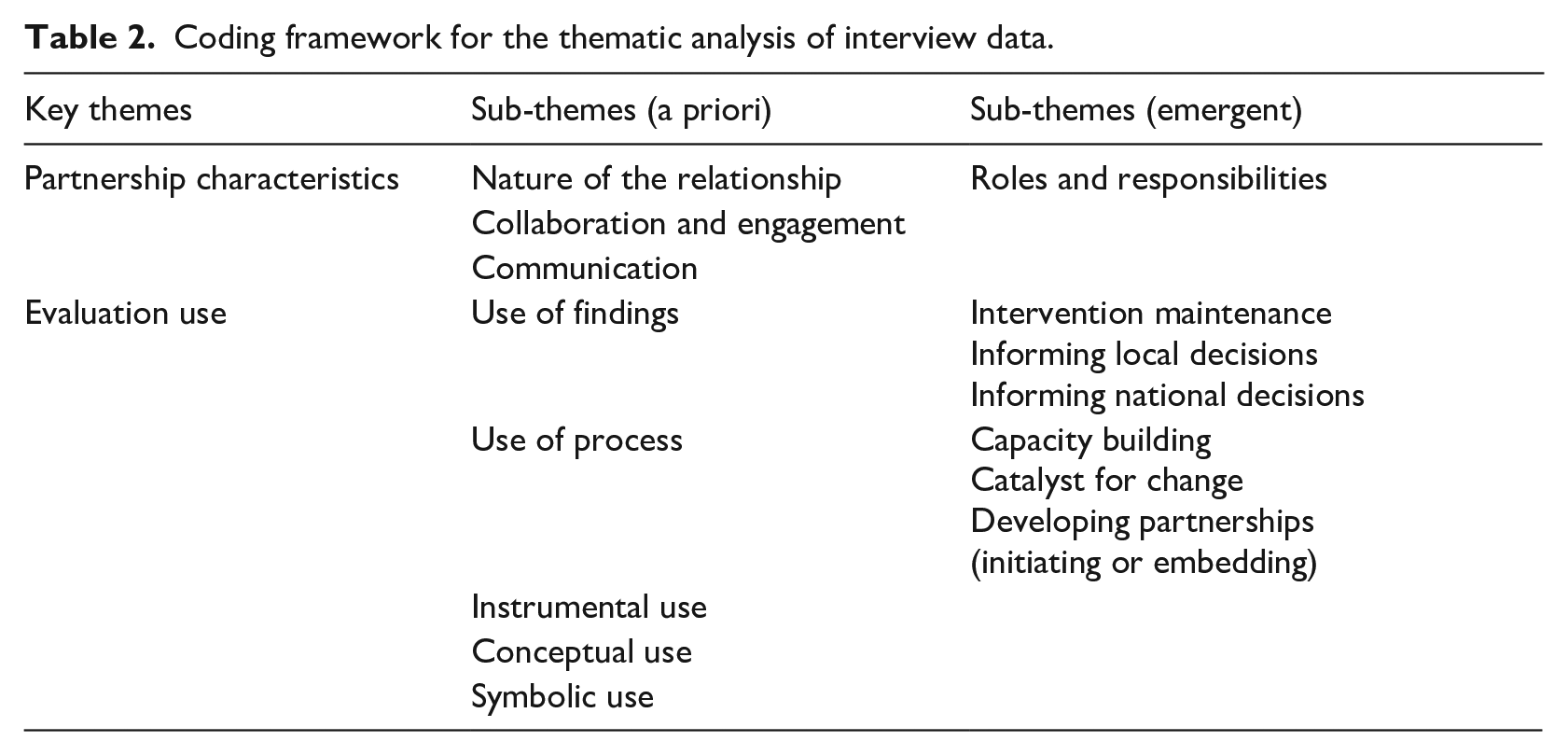

To explore how different stakeholders described their experiences of partnership working, the nature of those partnerships, and their influence on evaluation and evaluation use (objectives 2 and 3), we applied thematic coding to the interview data. Informed by the use of content analysis and framework analysis in a case study approach (Crowe et al., 2011; Hsieh and Shannon, 2005), initial codes were identified a priori, with the key themes informed by our research objectives and sub-themes informed by conceptual models of evaluation use (Alkin and King, 2016, 2017; Cousins et al., 2014) and the literature on partnerships (Harden et al., 2017; Mansfield, 2016; Nyström et al., 2018). Emergent themes were identified iteratively through the processes of repeated familiarisation, coding and recoding. Codes were reviewed and organised into categories (by JF) to develop the draft coding framework, which was then discussed and agreed by all authors (Table 2). Sub-themes were not identified or applied as mutually exclusive. Framework analysis was used to compare across and between stakeholder types and projects.

Table 2. Coding framework for the thematic analysis of interview data.
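The comparison across stakeholder types in a framework analysis can be pictured as a theme-by-role matrix. The following is a minimal sketch under stated assumptions, not the NVivo procedure used in the study: the coded segments, participant identifiers and function names are hypothetical, and only the four theme labels are borrowed from the paper. Because sub-themes were not applied as mutually exclusive, each segment may carry several codes, and each participant is counted once per theme.

```python
from collections import defaultdict

# Hypothetical coded segments: (participant id, stakeholder role, codes applied).
# A segment can carry more than one code. Not study data.
SEGMENTS = [
    ("S1", "Project lead", {"Communication", "Relationships"}),
    ("S1", "Project lead", {"Communication"}),
    ("S2", "Evaluator", {"Collaboration"}),
    ("S3", "Evaluator", {"Communication", "Collaboration"}),
    ("S4", "Funder", {"Relationships"}),
]


def framework_matrix(segments):
    """Return {theme: {role: set of participants coded to that theme}}."""
    matrix = defaultdict(lambda: defaultdict(set))
    for participant, role, codes in segments:
        for code in codes:
            matrix[code][role].add(participant)
    return matrix


def participant_counts(matrix):
    """Collapse the matrix to the number of distinct participants per cell."""
    return {
        theme: {role: len(people) for role, people in by_role.items()}
        for theme, by_role in matrix.items()
    }
```

Counting distinct participants rather than coded segments avoids over-weighting a single talkative interviewee; whether that is the right unit depends on the question being asked of the data.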

To explore how the characteristics of partnerships may be associated with knowledge exchange and the capacity to undertake and use evaluation (objective 4), we drew on concepts of evaluation use and organisational systems to help interpret our findings from the network and thematic analysis, and to develop a conceptual model. This was drafted by JF and refined and agreed through regular in-person meetings with all authors to discuss iterations of the model.

Results

The results are presented within the following four sections, reflecting the four objectives of the research.

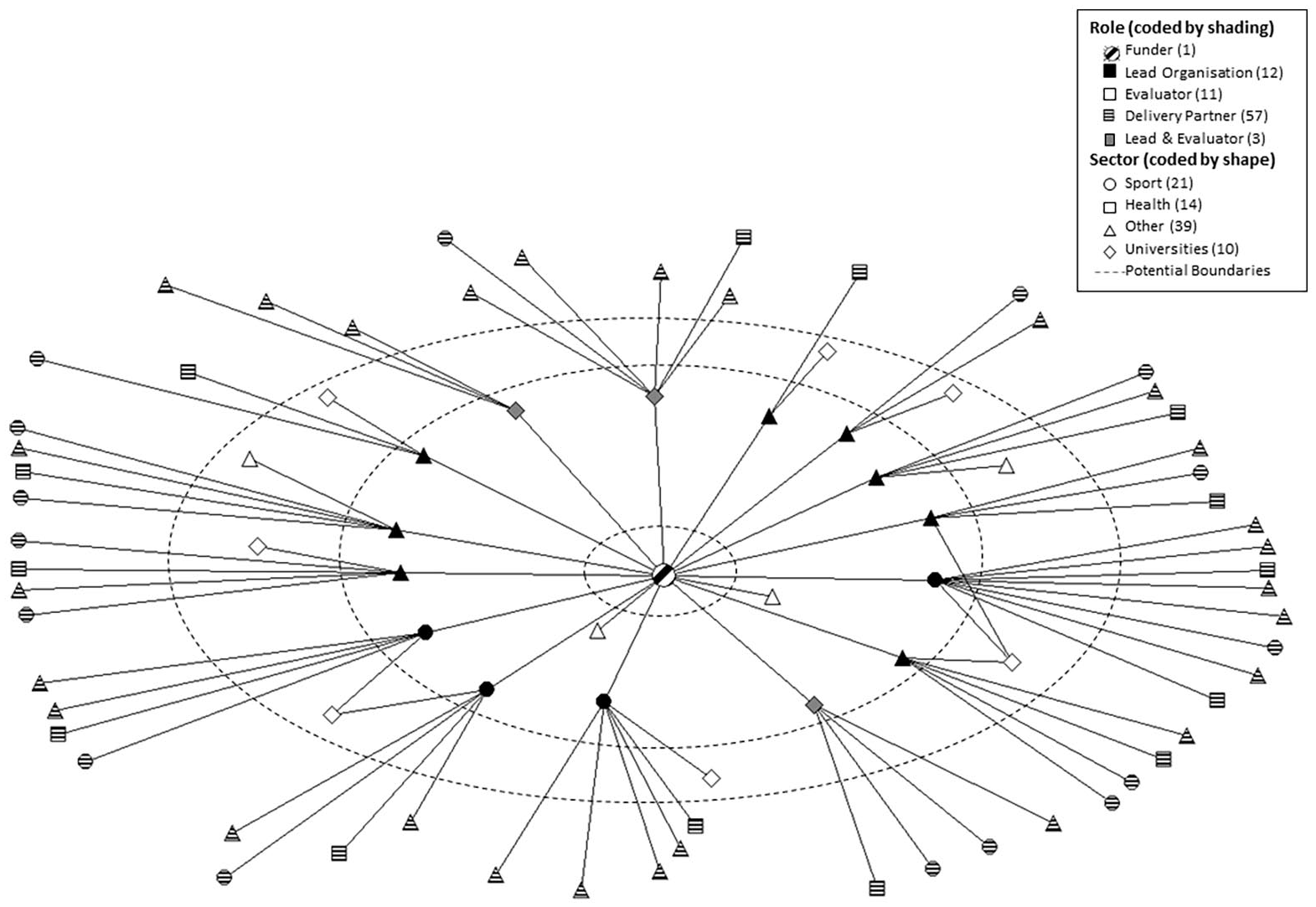

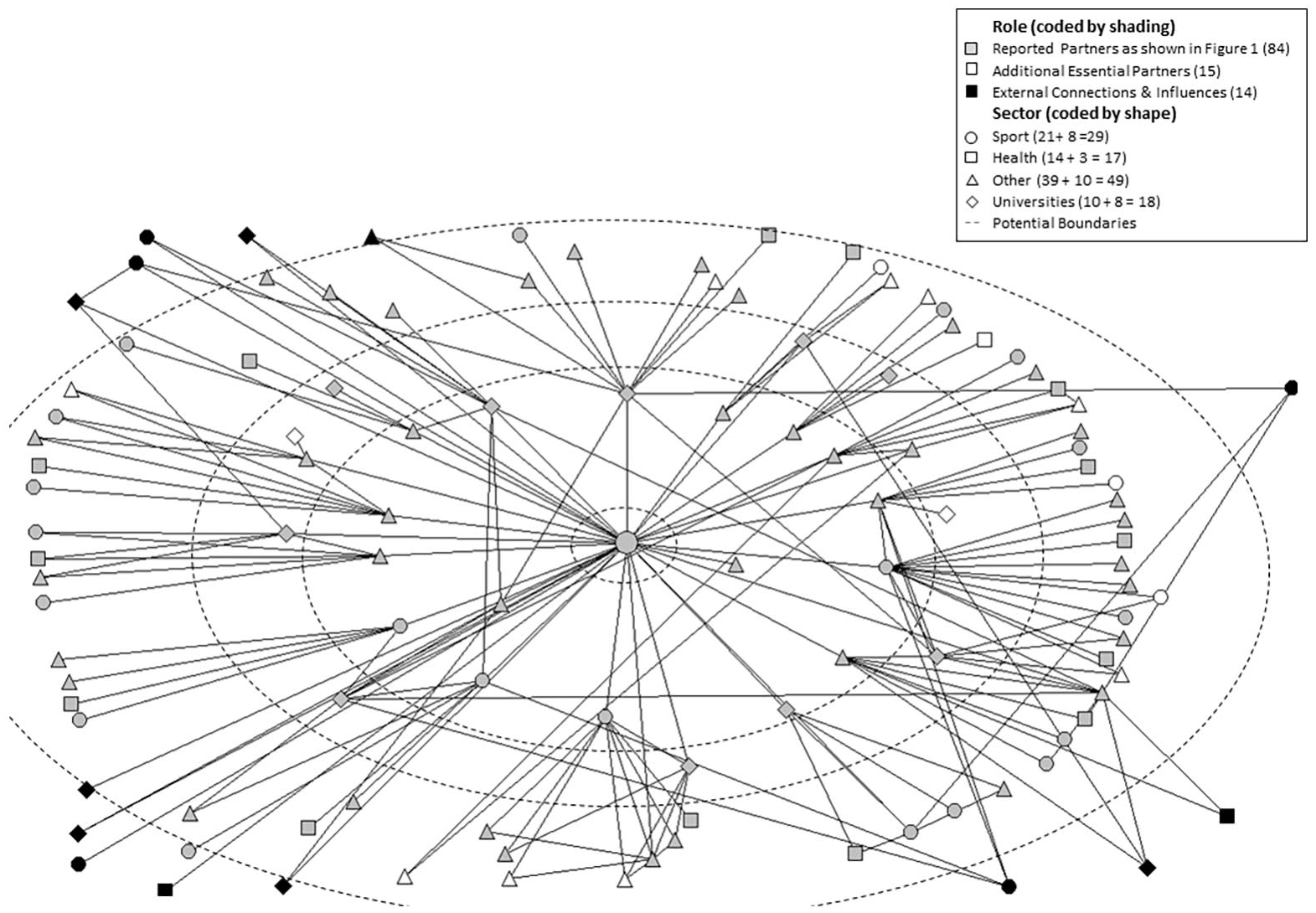

The partners involved in the project and/or programme evaluation

Figures 1 and 2 show the partners involved in programme and project evaluation. Partners are grouped and colour-coded by the categories used to describe their main role within the partnership (funder, lead organisation, evaluation partner and delivery partners). The sectors used to group the organisational types (sport, health, university and other) are shown by symbol shape. The numbers of partners depicted representing each sector and role are provided in the key for each figure. The purpose of these maps is to illustrate the complexity of the network, rather than to examine this complexity in detail. They serve as a descriptive tool on which to base the exploration of the characteristics of the partnerships and discussion of influences on partnership working and their effectiveness.

Figure 1. The network of reported partners (n = 84).

Figure 2. The network, showing reported partners (n = 84) and additional partners and relationships identified from the interviews (n = 29).

Figure 1 shows the formal partners reported to have been involved in the delivery and evaluation of the 16 projects and the programme. Projects brought together a range of private, public and voluntary organisations and individuals from different sectors to facilitate recruitment and implementation. Most involved partnerships between (1) sport and physical activity providers such as County Sports Partnerships, leisure centres, National Governing Bodies, and community-based clubs and individuals; (2) partners from the health sector such as public health teams and primary care; and (3) Local Authorities. Eleven of the projects engaged a university evaluation partner and two engaged evaluation consultants. Three projects were university led, and each of these universities also led the project evaluation (shown as Lead and Evaluator in Figure 1). Notably, this deviates from the funding requirement for each project to have an independent evaluator. Sport England commissioned two different consultancies to conduct summative evaluations of the overall programme, including an interim report at the end of the first funding round and a final report after round two, which had not been completed when we conducted this research. Figure 1 shows that within each project-based group of partners, the project lead organisation is the central link between partners. It also shows two cases where there are connections between projects through a common evaluation partner. These connections represent flows of information. The dashed lines represent where boundaries exist between the key partner types and show how these intersect the connecting lines and potentially interrupt flows between partners.

Figure 2 shows the wider network of both formal partners and additional connections between individuals and organisations identified from the interview data. This reveals a more complex set of relationships, with connections between individuals and groups that transcend project and organisational boundaries within the network and appear as additional networks nested within the overall programme network. The additional partners include charities, local services and community-based groups, mentioned by stakeholders as additional essential partners in project evaluation. Stakeholders described the vital role that these partners played in recruitment, undertaking baseline and follow-up data collection, and building relationships with participants, which in turn enhanced response rates:

The delivery team were quite involved in terms of getting the data, and then we had an administrator who was doing a lot of the inputting. (Participant 5 – Project lead)

There were lots of partners around the table who were contributing ideas [. . .] the other thing I forgot to mention actually, we worked with a department within the council, it was a relationship I had with them anyway, I had worked with them in the past. (Participant 26 – Project lead)

That partnership working was essential [. . .] allowed us to create partnerships with people who we maybe hadn’t had a relationship with, like Primary Care and the health sector. (Participant 5 – Project lead)

Stakeholders from two projects also mentioned links to additional universities that supported, but did not lead, the project evaluation. For example, one evaluator explained the relationship with a university outside the official partnership:

We have worked with them on a number of projects, and I think we refer to him as our methodological advisor. (Participant 31 – Evaluator)

Figure 2 also shows (in black) external individuals or organisations that were not directly involved in the project or programme evaluation but that were mentioned as influencing either the evaluation methods adopted, dissemination or evaluation use. This included individuals and organisations that informed programme-level decisions about project evaluation design, organisations connected by movement of staff between them, and organisations involved in dissemination activities. The complexity of connections depicted in Figure 2 represents potential for multi-directional flows of information that may influence evaluation practices and for wider dissemination of evidence.

Partnership characteristics and their influence on evaluation

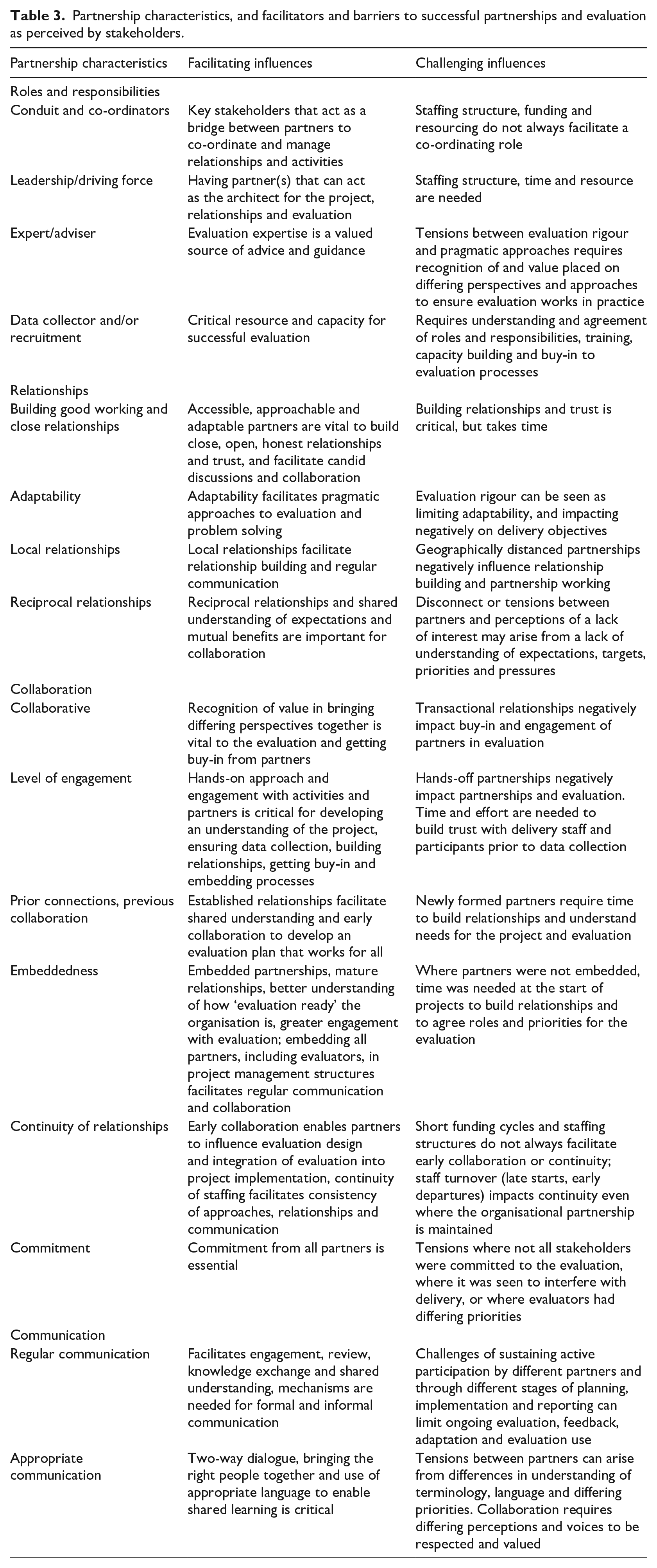

Partnerships were described by their roles and responsibilities (as applied in our categorisation in Figures 1 and 2) but were more fully described by the nature of the relationships, collaboration and communication. Table 3 summarises and explores in more detail how stakeholders described each of these four themes, and how these were perceived as facilitators or barriers to partnership working and evaluation.

Table 3. Partnership characteristics, and facilitators and barriers to successful partnerships and evaluation as perceived by stakeholders.

Roles and responsibilities

Stakeholders reflected on the importance of understanding, agreeing and valuing the differing roles and responsibilities of partners, as well as the benefits from partners bringing different skills and expertise to facilitate evaluation:

You need to be able to draw on a number of different skills. I think the beauty of having the University involved in this project is that you can draw on expertise quite quickly. (Participant 10 – Project lead and Evaluator)

In other projects, stakeholders reflected on the challenges and tensions between partners, and the need for a shared understanding of expectations and the value placed on differing perspectives and approaches to ensure evaluation works in practice:

The biggest challenge at the time, was the interest from the evaluation partner, and not understanding the bigger picture [. . .] and how we wanted to show that we were having a big impact. It was too much of a facts and figures focus. (Participant 12 – Project lead)

There is a disconnect between them [evaluators and practitioners], and there still remains to be a disconnect but I think it’s just trying to appreciate as best you can each other’s roles really, especially for the first year of this project that really didn’t happen. (Participant 29 – Evaluator)

Others reflected on the importance of key partners acting as a conduit or bridge to facilitate partnership working, and to co-ordinate and manage relationships and activities:

I do think that academia has different outputs and objectives to policy and practice. Having an understanding and being able to be a bit of a bridge between the two was important. (Participant 5 – Project lead)

Everyone is driving towards the same thing, but they have to do it in different ways because they are either contractually bound, or they are limited by their resources, and so that partnership network was essential. That community of sport and physical activity network was a central way in which we could have debates and discussions but crucial in that partnership was the role I took. You need an architect really to pull that together. (Participant 4 – Project lead and Evaluator)

Relationships

Relationships in which partners found each other to be accessible, approachable and adaptable were described as essential to facilitating open and honest conversations, and to enabling capacity building and collaborative approaches. Stakeholders recognised that building relationships, trust and capacity required time and investment:

The partnerships that were really key were myself with the project lead, project coordinator and program manager, we had really good working relationship [. . .] having the key relationships with them was useful. Also, we had to have really good relationships with those who were actually delivering the intervention or the programme and exercise, having good relationships with them was absolutely essential for enabling data to be captured. (Participant 33 – Evaluator)

I think the partnership comes down to an investment of time into building it and a mutual benefit in doing it. We put a lot of time and energy into the development of the relationship, and we even now do try to touch base regularly. Collaboration is very different to working in partnership [. . .] it really takes time to embed if you think about building trust, respect, honesty and I think we have built on a lot of those. So it is a very open, honest, transparent relationship. (Participant 6 – Project lead)

Relationships with the funding partner were described variably across projects and between different partners within projects. Experiences of the relationship with the funder were also felt to have changed over the course of the programme’s life cycle. Nine participants (representing delivery partners, lead organisations and evaluators) commented on the supportive relationship between themselves and the funding organisation. Stakeholders also referred to the important role that Sport England played in bringing projects and partners together through knowledge exchange events to facilitate capacity building and shared learning. Nevertheless, nine participants (representing delivery staff and evaluators) described the relationship as transactional and commented on limited opportunities for communication or engagement in knowledge exchange and feedback.

Collaboration

Collaboration was thought to be facilitated by early and ongoing engagement of evaluators in the project implementation and of delivery staff in the evaluation. This was described as mutually beneficial: evaluators developed a better understanding of the project and needs of stakeholders, while delivery staff were more likely to buy in to the evaluation processes when time was taken to train staff and explain the purpose, methods and importance of evaluation:

You can’t just tack it on, you need to be there from the start and to be involved. When everyone gets their opportunity to give their thoughts and ideas everyone is engaged, and that makes a big difference. People can see what they’re going to get out of the evaluation, it makes a better experience for everyone, and then measures get completed. Without which you don’t have an evaluation. When everyone’s bought into the process, that’s when it works. (Participant 31 – Evaluator)

Where there had been a prior connection or working relationship, participants reflected on this facilitating a closer partnership. Local partnerships were thought to enable closer relationships, more regular communication and engagement with project activities, and better understanding of local needs and priorities. The findings also highlighted the influence of organisational structures and processes, such as funding and staffing, on continuity. Stakeholders described late project starts and early staff departures within project teams as a challenge to building relationships, and to planning, agreeing and implementing evaluation practices:

That consistency, which is always difficult, people do leave, continuity really helps if you can get it, in terms of relationship. (Participant 6 – Project lead)

There were changes in the clinical team, changes in the council team, changes from the delivery teams, and changes in the evaluation team, that’s really hard if you’ve not got the good relationships there. [. . .] Since the evaluation got published there’s been a ton of changes in staffing again, I do wonder if it was still the same leads from the beginning whether that would have been more broadly disseminated. (Participant 33 – Evaluator)

Communication

Communication was described as a key process to facilitate knowledge exchange, and in turn, to build capacity to both do and use evaluation. Communication that was regular, timely and appropriate was seen as critical to effective partnership working, whether between funders, delivery staff, project leads or evaluators. For example, evaluators described clear communication as vital to ensuring partners understood each stakeholder’s roles and responsibilities:

Making sure that we had really good and clear communication with the instructors [. . .] making sure the instructors were really clear on what they were doing and that I was available to answer any questions [. . .] having that communication was really vital. (Participant 33 – Evaluator)

Participants also acknowledged the wider value of bringing people together and initiating conversations: There have been more conversations happening at the local level. (Participant 1 – Funding organisation) We did learn a lot from working with them [. . .] they understand that way of working, so it has been quite easy to move on to the next project. (Participant 19 – Project lead)

Limitations in communication and feedback in the later stages of the programme, and particularly following final reporting, were identified as barriers to knowledge exchange and evaluation use: It would have been useful to have a little bit of communication when the report was submitted. (Participant 33 – Evaluator)

These four themes are interlinked, and highlight the relationships between processes, such as communication and building capacity, and characteristics that influence these processes, such as the approachability, accessibility and adaptability of partners.

Evaluation use

We identified the following themes related to evaluation use: findings or process use, instrumental use (direct action), conceptual use, capacity building, being a catalyst for change, and initiating and embedding partnerships. There was consistency in the way stakeholders with differing roles within and across projects described their experiences and perceptions of evaluation use. We found nothing in participants’ descriptions that we could identify and code as symbolic use.

Stakeholders described their experiences holistically. For example, they did not always differentiate between findings use or process use, or between engagement in partnership working or the evaluation itself. Project and programme stakeholders described how the evaluation as a whole had been used to enable the project or elements of the project to continue, and to inform approaches used in subsequent projects or future commissioning activities: We have massively used it as a way of trying to develop better tools that will measure and do what we want [. . .] which has certainly built on the experiences not just of this programme but across the whole organisation and how we support other organisations. (Participant 1 – Funding organisation) It made the biggest difference to how we tackle and move towards tackling inactivity locally, and so that is not necessarily about the evaluation process but it is the impact and outcome of that whole learning from the evaluation. [. . .] The legacy of the project has carried on, it has had a massive impact on the physical activity strategy. (Participant 10 – Project lead and Evaluator) We have secured further funding and this was probably a part of it, but that was halfway through, not the end evaluation report. (Participant 19 – Project lead) Through that we’ve got a three-year contract to deliver activities as part of a different project [. . .] we wouldn’t have got that without the GHGA project and the evaluation, the evidence that we had from that. (Participant 30 – Project lead)

These observations illustrate instrumental use, and in some cases, conceptual use of evaluation, and highlight the value of concurrent evaluation and intermediate feedback, rather than purely summative evaluation and evidence generation.

Some stakeholders commented on their own limited understanding of how the evaluation had been used at the programme level: I don’t know how useful the evaluation has been, in terms of the report which we submitted. (Participant 34 – Evaluator)

Capacity building was more explicitly linked to process use, and was identified as increasing knowledge, skills and attitudes. Stakeholders described their learning from the experiences of being involved in evaluation processes and of being exposed to different evaluation approaches and methods. Where formal training was mentioned, this related to training for programme delivery or data collection methods. Developing a better understanding of the purpose and importance of evaluation, and gaining buy-in from all partners, were seen as critical for successful evaluation, and to bring about changes to evaluation practices during and after the project. Stakeholders described their learning as a catalyst for changing practices, and, in five projects, for changing staffing structures, with the creation of insight and evaluation officer roles being embedded into organisations: The learning has transferred across to other projects, the importance of capturing really good quality evaluation. We have developed evaluation resources and run training sessions for organizations locally to share our learning with the sector. I would say this project was the catalyst. (Participant 22 – Project lead) It has been huge; it shapes much of what I do on a day to day basis and probably the same for the other people here. Embedding that evaluation, that partnership working across everything we do, I think that’s crucial. (Participant 8 – Delivery partner)

Stakeholders at both the local project and national programme levels also reflected on the value of initiating cross-sector partnerships, opening doors for conversations, and developing networks: One of the big things that came out of the project was the steering group that was set up at the start, that has led to more and more partners coming round the table and that is because people were hearing about it and wanted to be involved in the project and they were bringing their own projects and their own ideas to the table as well, so certainly evidence from my point of view that that was leading to more partnership working locally. (Participant 26 – Evaluator) I think it has been quite significant but isn’t necessarily that easy to quantify or that tangible. One of the effects of GHGA has been this much closer partnership between sporting and some of the health partners, and Public Health England nationally. I think having the evaluation arrangements, for all their imperfections, were probably more rigorous than we would have had historically and has been helpful in getting some of that buy in and engagement with health and wellness [. . .] through evidence, but also through relationships and wider political changes, a shift has happened [. . .] and I think the evaluation has been relevant to winning some of that support or some of that shift. (Participant 2 – Funding organisation)

One stakeholder reflected on the value of relationships with the wider network evident in Figure 2: From my own personal relationships, I still have those networks [. . .] that is how I get most of my information, and find out the best things to be doing. (Participant 20 – Funding organisation)

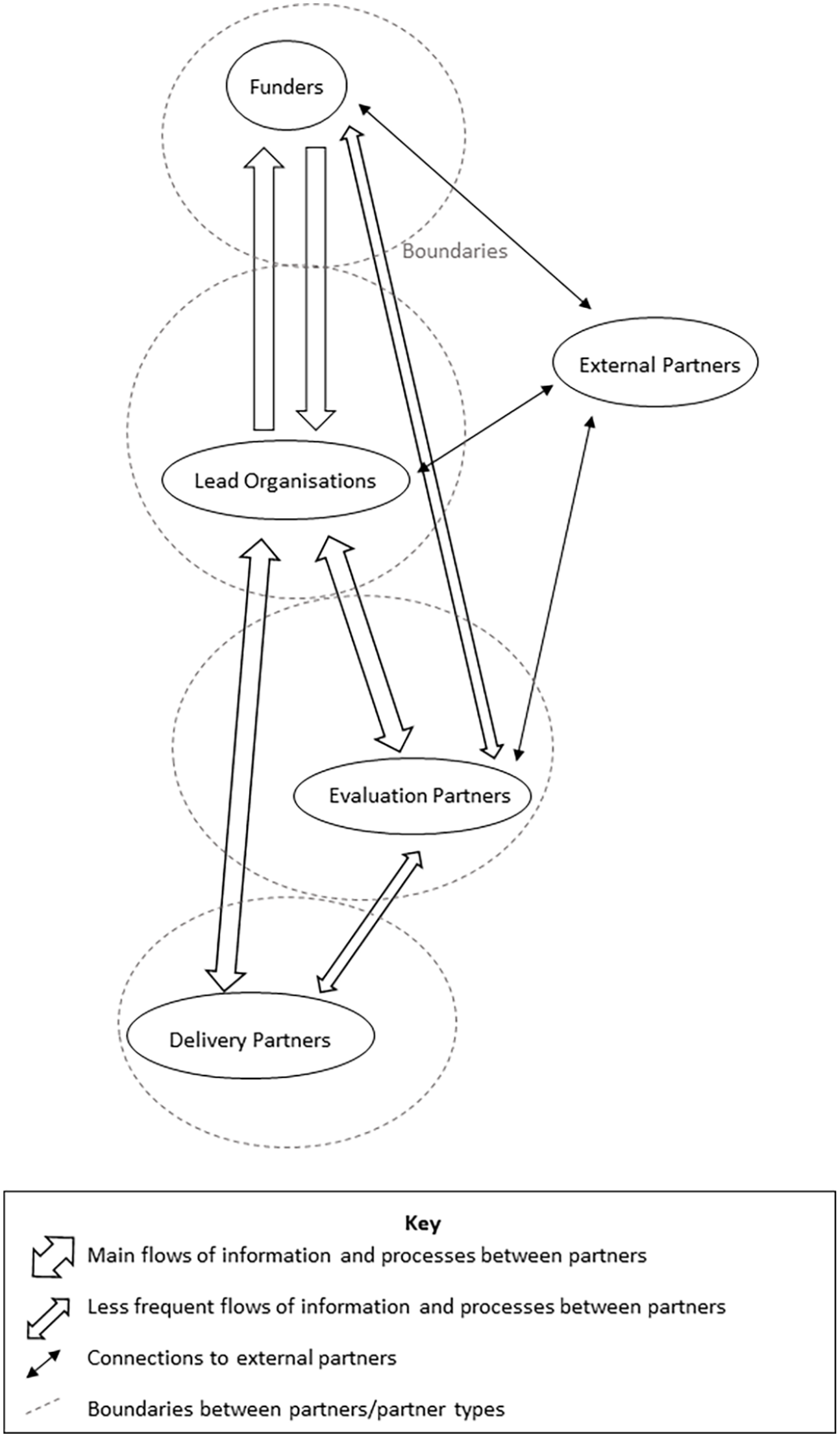

A conceptual model of the relationships between partnerships, processes and partnership characteristics that facilitate evaluation, dissemination and evaluation use

To address the final objective, we applied the findings from the previous objectives to develop our conceptual model of how partnerships may be associated with knowledge exchange and the capacity to do and use evaluation. First, informed by the network maps (Figures 1 and 2), Figure 3 illustrates the partners with differing roles within the projects, the programme and external to the programme, connected by arrows. The network map shown in Figure 2 revealed groups of connections and partners which transcended project and programme boundaries, through having differing roles, connections to external partners, or staff mobility. These groups can be viewed as smaller networks nested within the overall network. Their connections represent important opportunities for information flow between partners and across the network, but they are also the points where alignment of processes along connecting lines is required to facilitate effective partnership working.

Information flow and boundaries between partners and networks.

As in Figures 1 and 2, the network illustrated in Figure 3 shows partners grouped by their roles and separated by boundaries (dashed lines), which represent potential interruptions to flows of information and barriers to alignment of processes. For example, the thematic analysis showed that differences in priorities, organisational structures and a lack of a common language between evaluators and practitioners can act as barriers to communication, collaboration and building capacity. Differences in organisational structures and cultures can influence time-lags in engaging staff, agreeing evaluation processes and in communicating and providing feedback. The arrows illustrate that flows of information were bi-directional, and that the main flow of information was through the lead organisations (often acting as a conduit), with direct flows between evaluators and delivery partners or between funders and evaluation partners being less frequent.

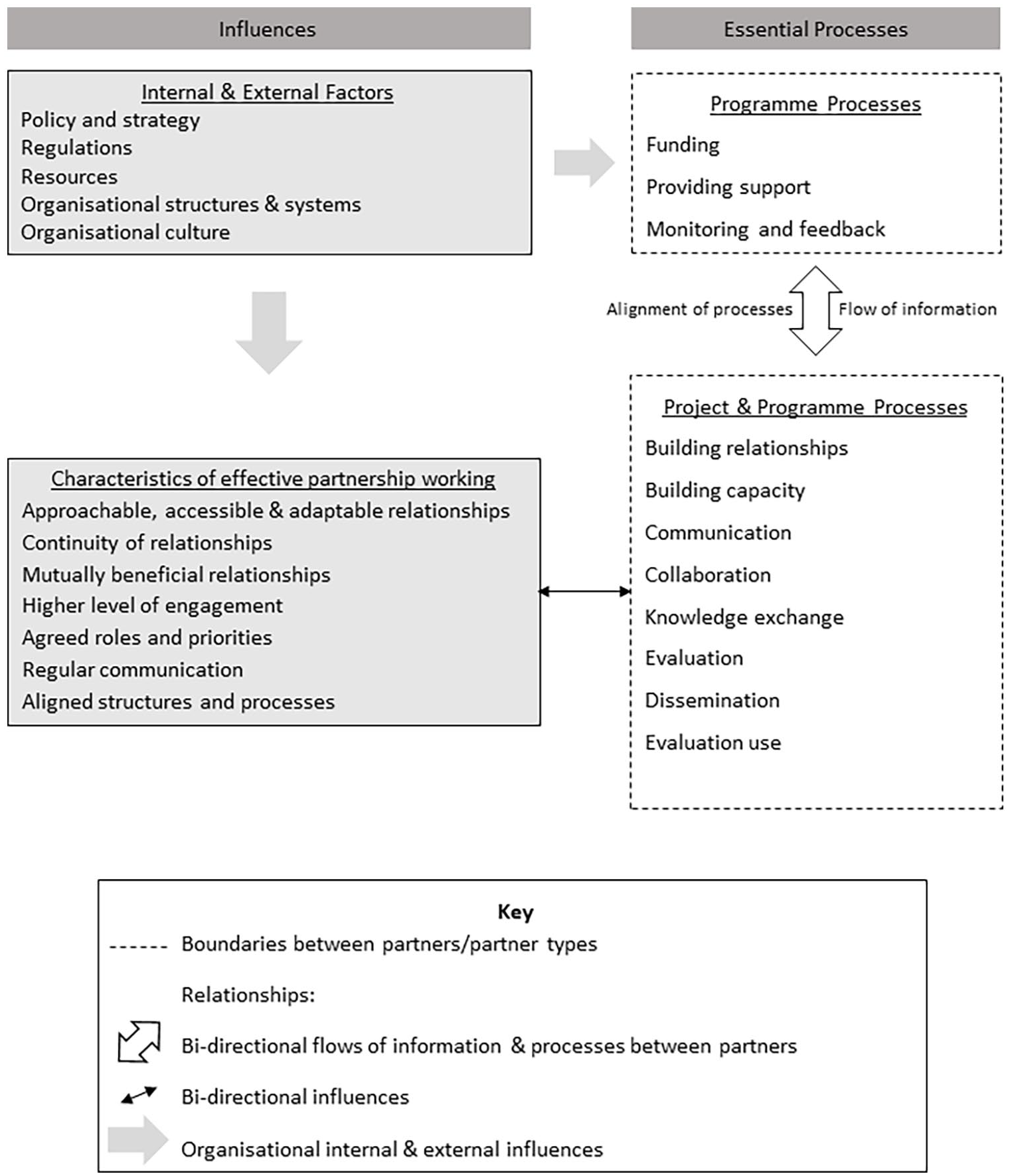

The thematic analysis highlighted key partnership characteristics and processes that are interlinked and influence the effectiveness of partnership working. Drawing on these, we developed our conceptual model to show how partnership characteristics can negatively or positively influence partnership processes, and in turn influence evaluation, dissemination and evaluation use (Figure 4). Processes such as communication, building relationships and knowledge exchange, which were identified as essential to effective partnership working, are shown on the right of the model under ‘essential processes’. Partnership characteristics identified as important influences on these processes, and in turn, on the success of the partnerships and the evaluation, are shown within the box on the left of our model. The arrows highlight the potential for relationships (flows of information, process alignment and influences) to be bi-directional. Informed by the boundaries identified in the network mapping and simplified as groups of partner types in Figure 3, our conceptual model (Figure 4) illustrates how boundaries may act as potential barriers and effective relationships as facilitators. Furthermore, the model highlights the importance of shaping systems and organisational structures to support partnership working, align processes and facilitate information flows. The model can be used to understand, and implement, approaches to support partnership working.

A conceptual model of the relationships between processes and partnership characteristics to support effective partnership working for evaluation, dissemination and evaluation use.

Discussion

We identified a complex network of partners that were involved in or influenced programme and project evaluation. By combining network analysis with framework analysis, we have shown how partnership characteristics can influence the flow of information and alignment of processes between partners, and how this in turn influences evaluation, dissemination and evaluation use. We have developed a conceptual model to help visualise this (Figure 4). Our model builds on concepts within previous models of evaluation and evaluation use which focused on capacity building for evaluation at an organisational level (Amo and Cousins, 2007; Cousins et al., 2014; Labin et al., 2012; Preskill and Boyle, 2008), and on concepts of partnership working (Harden et al., 2017; Mansfield, 2016; Nyström et al., 2018). Through the model, we have highlighted important elements of partnerships and networks, and how these are essential for collaborative evaluation activities, quality evaluation, knowledge exchange, shared learning and evaluation use.

Compared to previous models, our study offers a deeper understanding of the roles of different partners within multi-agency interventions in evaluation, and how characteristics of partnerships can be shaped to positively influence evaluation processes and practices. Several authors of previous conceptual models have highlighted the lack of empirical work and evidence to support them (Cousins et al., 2014; Labin et al., 2012; Mitchell et al., 2009; Mitton et al., 2007). By grounding our model in the evidence generated from discussions with stakeholders involved in partnerships to evaluate projects, we have addressed some of these limitations. For example, our findings provide supporting evidence of internal and external influences, such as organisational structures and culture, on evaluation, capacity building and evaluation use, as highlighted by earlier models of evaluation use (Amo and Cousins, 2007; Labin et al., 2012) and of dynamic multi-directional flows of information as described by models of knowledge exchange (Mitchell et al., 2009; Ward et al., 2009).

Network analysis revealed a complex set of formal and informal relationships, and groups of more, or less, connected partners within wider programme and external networks. These connections are essential for knowledge exchange; they provide the potential for building capacity and professional development to improve evaluation practice for individual and organisational partners. Communication to support multi-directional flows of information between partners is crucial. Our findings showed that communication and knowledge exchange were critical to evaluation, and to the use of both evaluation findings and process in multi-agency interventions. Yet we also showed that knowledge exchange is often reliant on key stakeholders who act as a conduit or link between others.

Through the thematic analysis, we identified important benefits of research-practice partnerships, such as access to expertise, improved evaluation rigour, generation of practice-relevant evidence and capacity building, which support findings from previous studies (Harden et al., 2017; Mansfield, 2016; Schwarzman et al., 2018). We also identified key processes and partnership characteristics that were critical to successful partnership working and evaluation. For example, appropriate and regular communication, and early mutual engagement were essential to facilitate effective collaboration, communication and capacity building. Close relationships in which stakeholders were, and were seen to be, approachable, accessible and adaptable were important. Continuity of partnerships facilitated these processes.

Our findings align with previous recommendations to improve evidence-based practice, such as Powell et al.’s Expert Recommendations for Implementing Change (ERIC), which includes strategies such as promoting network weaving, academic partnerships, shared local knowledge and collaborative learning (Powell et al., 2015). Our findings also provide evidence of the reciprocity within partnership working described by Mansfield (2016). Furthermore, we provide evidence of the potential for bi-directional influences between partnership working and evaluation, with the integration of evaluation into programme decision-making influencing the nature and development of partnerships, which aligns with benefits of research-practice partnerships found in various fields (Harden et al., 2017; Huberman, 1999; School of Public Health Research, 2018). Taking a grounded approach to gather evidence from stakeholders of their experiences of being engaged in partnership working, our study addresses the lack of empirical evidence to support previous conceptual models that has been highlighted by these authors.

By identifying the relationships between partnership characteristics and essential processes in our model of effective partnership working to support evaluation, we highlight their importance so that practitioners, funders and evaluators can take steps to address these when engaging in partnership-based evaluation (Figure 4). Funders and commissioners play a crucial role through their requirements for evaluation and the information and support they provide for project evaluation, knowledge exchange and feedback. They need to implement organisational structures and processes that support (1) initiation and continuity of relationships and practices, (2) alignment of processes to minimise barriers to information flows across boundaries between partners, and (3) development of systems to support knowledge exchange and capacity building.

In line with previous studies, our findings highlighted the context-specific and changeable nature of partnerships (Mansfield, 2016), and the complex interconnections between influences on partnerships and practices within multi-agency public health interventions (Schneider et al., 2016; Schwarzman et al., 2018; Tremblay et al., 2013). While our study is based on a national physical activity programme, we believe our findings are applicable to other public health and multi-agency programmes, although further research would be required to fully understand the role of context in these alternative settings.

Using network mapping principles allowed us to identify where boundaries may exist within networks, where they may limit information flow, where time-lags may occur and where knowledge may be lost or gained. Our model helps explain the relationships between partnership working and processes fundamental to evidence-based practice. To facilitate information flow across boundaries there needs to be alignment of organisational structures and systems, time scales and communication approaches. Staff movement represents the potential for both loss and gain of learning and capacity from organisations. The net effect depends on the role and position of the staff member within the network, but funding and organisational structures that minimise staff loss are vital.

Knowledge exchange through informal or personal connections for stakeholders in the wider network was also important. We suggest there may be added value in realising the intrinsic value of these ‘hidden communities of practice’ by developing organisational structures and processes that systemise networking and embed knowledge sharing practices. Communities of practice in health settings offer opportunities for capacity building and knowledge exchange to support professional development (McKellar et al., 2014). To realise these benefits of networking, and to make these accessible to all stakeholders at any stage in the evidence-based practice cycle, there is a need for sustainable networks to bring researchers, policy makers and practitioners together and act as a conduit for knowledge exchange, advice and professional development. Both the research community and those with responsibility for strategy, policy and practice decisions have a role to play in facilitating this at the local, national or international level.

In a similar vein to previous conceptual models of evaluation and evaluation use (Cousins et al., 2014; Labin et al., 2012), we offer our model as a contribution to what we see as an ongoing enquiry and conversation to improve evaluation and evidence-based practices in multi-agency public health interventions. We have drawn on concepts from organisational learning and systems to help interpret our findings, rather than applying specific theories. For example, we have described clusters of connected partners at the project level, nested in wider networks operating at the programme level and with partners external to the programme, and have identified boundaries as potential barriers to the flow of information and alignment of processes, much like those described in systems theories. We have not, however, delved more deeply into systems thinking or communities of practice, and we highlight these as areas where further research would add value.

Strengths and limitations

An important strength of this study was the support we received from Sport England to conduct the study, and the access to and participation from stakeholders at all levels of the GHGA programme. The close working relationship between ourselves and the organisations involved in GHGA provided rich insights, yet the study sat outside of the programme and projects with no funding from Sport England and so we were able to maintain independence and appraise the programme objectively. Another strength is our use of empirical evidence and inter-disciplinary approaches to inform our analysis and development of the conceptual model. This has enabled us to develop a novel view that builds on and integrates current understanding of partnerships, networks and knowledge exchange with an understanding of evaluation and evaluation use. There are limitations in our approach. The full extent of formal, and especially informal, networks is likely under-represented, due to the use of snowball sampling, the retrospective nature of the data collection process and the grouping at organisational and sector level which was essential for anonymity. We also acknowledge potential limitations resulting from adopting a pragmatic approach to coding and analysis with just one full coder. In future studies, a more systematic, prospective method of data collection to enable a fuller network analysis would be beneficial.

Conclusion

Partnerships and networks represent a complex set of informal and formal relationships that have the potential to positively influence evaluation and evidence-based practice. This research has identified key processes and influences as critical components of effective partnership working and knowledge exchange, such as effective relationships, regular communication, collaboration and continuity. Our conceptual model highlights the relationships between processes and characteristics of partnership working that facilitate evaluation, dissemination and evaluation use. The model also shows how connections may facilitate the flow of information between partners and the network, and where there are potential barriers between partners, highlighting the importance of alignment and continuity of relationships, practices and processes to minimise barriers to information flows across boundaries between partners. The model can be used by funders, practitioners and evaluators engaged in multi-agency interventions and research-practice partnerships to identify and understand the relationships between partnership characteristics, processes and the capacity to conduct and use evaluation. If partners are to realise the benefits of partnerships and networks, it is essential that they understand these relationships, and invest time, resources and effort to implement appropriate organisational structures and systems to support partnerships and knowledge exchange.

Supplemental Material

sj-docx-1-evi-10.1177_13563890221096178 – Supplemental material for A model for effective partnership working to support programme evaluation

Supplemental material, sj-docx-1-evi-10.1177_13563890221096178 for A model for effective partnership working to support programme evaluation by Judith F. Fynn, Karen Milton, Wendy Hardeman and Andy P. Jones in Evaluation

Supplemental Material

sj-docx-2-evi-10.1177_13563890221096178 – Supplemental material for A model for effective partnership working to support programme evaluation

Supplemental material, sj-docx-2-evi-10.1177_13563890221096178 for A model for effective partnership working to support programme evaluation by Judith F. Fynn, Karen Milton, Wendy Hardeman and Andy P. Jones in Evaluation

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work was undertaken by the Centre for Diet and Activity Research (CEDAR), a UKCRC Public Health Research Centre of Excellence. Funding from the British Heart Foundation, Cancer Research UK, Economic and Social Research Council, Medical Research Council, the National Institute for Health Research, and the Wellcome Trust, under the auspices of the UK Clinical Research Collaboration, is gratefully acknowledged. The funders had no role in any element of this research.

Supplemental material

Supplemental material for this article is available online.

References
