Abstract

While “systemic thinking” is popular in the context of capacity development and evaluation, there is currently a lack of understanding about the benefits of employing systems theory in evaluation capacity development. Systems theory provides a useful orientation to the work involved in complex systems (e.g. national evaluation systems). This article illustrates how evaluation capacity development practitioners can use systems theory as a conceptual tool to gain a better understanding of the functional aspects and interrelationships present within a given evaluation system. Specifically, the systems theory perspective can help elucidate the reasons for the success or failure of a given evaluation capacity development program or activity. With the goal of motivating evaluation capacity development practitioners to use systems theory in their work, this article presents a systems theory framework for evaluation capacity development and offers practical examples of how it can be adopted.

Keywords

Introduction

Governments and other organizations are increasingly expressing interest in enhancing the effectiveness of their development interventions (Davies and Pickering, 2015; Global Partnership for Effective Development Co-operation, 2020; Prizzon, 2016). Evaluations are important tools for strengthening transparency, accountability, and learning, all of which are important aspects of good governance. Alarmingly, the Bertelsmann Transformation Index (Hartmann, 2020) now shows that many governments are engaging in a growing number of authoritarian actions and there has been a concomitant weakening of democratic structures throughout the world. These issues have further been exacerbated by the ongoing COVID-19 pandemic. Such conditions emphasize the need for evaluations, which are highly relevant tools that can be used to hold these entities accountable for their actions and spending practices, thereby instilling trust that they are wisely using resources during the current global crisis. However, not all countries and organizations possess the necessary skills or knowledge to perform high-quality evaluations, which makes it critically important to develop evaluation capacities where they are lacking.

Evaluation capacity development is the formal process of establishing both the human capital (skills and knowledge) and organizational structures needed to perform high-quality evaluations (Boyle and Lemaire, 1999; Preskill and Boyle, 2008). International debates on the importance of ownership and effectiveness in the context of development cooperation (e.g. Paris Declaration 2005 and Accra Agenda for Action 2008) have increasingly focused on the importance of using national systems to accomplish monitoring and evaluation. This is also evident in the United Nations 2030 Agenda for Sustainable Development (hereafter, 2030 Agenda), which stresses the importance of systematically monitoring and evaluating the implementation of the 17 sustainable development goals (SDGs) and emphasizes the role of country-led evaluations, which entails the need to strengthen national evaluation capacities. The OECD Development Assistance Committee (DAC) EvalNet Task Team for evaluation capacity development envisions that evaluation will be recognized as a pillar of democratic governance and increasingly used to measure the progress toward achieving the SDGs.

Given that programs aimed at developing national evaluation capacities must often operate within highly complex socioeconomic and political environments, systems theory offers a useful orientation to account for the multidimensionality of relevant organizations and institutions while clarifying the dynamic nature of their interactions and connections, both internally and externally (Midgley, 2006; Ortiz Aragón and Macedo, 2010). More specifically, systems theory focuses on processes, patterns, perspectives, boundaries, and interrelationships (Williams and Van’t Hof, 2016), which are important components of evaluation capacity development practice.

As a practical example, this article draws on evaluation capacity development work conducted at the German Institute for Development Evaluation (DEval), which is mandated by the German Federal Ministry for Economic Cooperation and Development (BMZ) to evaluate BMZ-funded development cooperation. DEval has been given three core tasks: (1) conduct independent and strategically relevant evaluations of German development cooperation, (2) enhance evaluation methods and standards, and (3) foster evaluation capacity development in selected partner countries. Evaluation capacity development has been undertaken at DEval for nearly 8 years. External evaluations and data from DEval’s monitoring and evaluation system show that its evaluation capacity development projects are highly successful. This success can partially be attributed to the implicit use of systems theory, which informs most evaluation capacity development applications at DEval. Systems theory has successfully and more formally been used in the fields of capacity development (e.g. Fisher, 2010; Morgan, 2005) and evaluation (e.g. Hummelbrunner et al., 2015; Reynolds et al., 2016; Williams and Imam, 2006). Moreover, a variety of studies have acknowledged its potential usefulness in the evaluation capacity development context (Krapp and Geuder-Jilg, 2018; Ortiz Aragón and Macedo, 2010; Stockmann, 2018). However, the literature does not currently contain any descriptions of how to systematically embed evaluation capacity development work in systems theory. This is a critical omission, as such information could help evaluation capacity development practitioners ground their work in theoretical considerations, with many added benefits. This article targets this gap by providing the first theoretical account of how evaluation capacity development activities and program components can be anchored in systems theory, illustrated by practical examples from work at DEval.

Systems theory and national evaluation systems

Systems theory emerged during the 1940s providing an alternative to

Systems theory acknowledges the vast complexity of the world. It is focused on interrelations, and addresses emergent processes, patterns, and relationships. Importantly, it acknowledges the importance of these concepts without trying to “make them fit” by accepting the significance of uniqueness and constant development. Here, systems theory does not try to simplify complexity or change, but embraces both. In fact, it is based on the demonstrable existence of system effects in the natural world (Morgan, 2005).

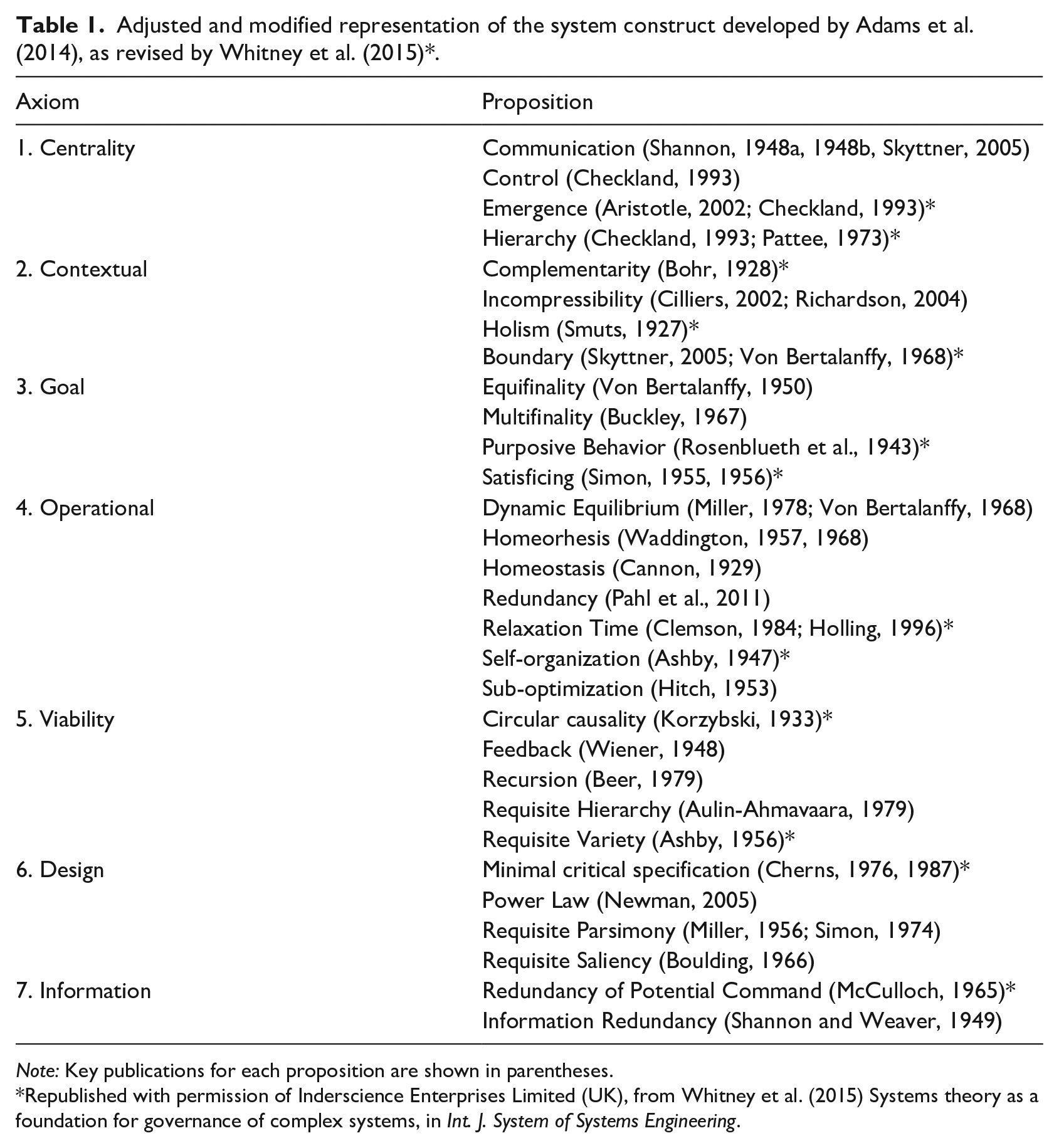

Despite the above, there is currently no consensus on what constitutes systems theory. Different versions have been popularized in various academic disciplines, including sociology (Luhmann, 1995), psychology (Von Bertalanffy, 1967), biology (Pattee, 1978), and management sciences (Kast and Rosenzweig, 1972). Yet, in recent years, systems theory has been employed to explain a wide range of phenomena, including childhood obesity (Allender et al., 2019), cybersecurity (Tarafdar and Bose, 2019), and sustainable development (Eustachio et al., 2019). While there is heterogeneity in the applications and foci, a concise set of concepts and principles has become associated with systems theory. Although a categorically accepted definition of systems theory has not yet emerged, the literature provides substantial evidence on its isomorphic concepts, laws, principles, and theorems, which are applicable to different systems (Adams et al., 2014; Clemson, 1984; Katina, 2016; Mobus and Kalton, 2015; Whitney et al., 2015). In this article, we refer to the formal definition offered by Adams et al. (2014) and define systems theory as “a unified group of specific propositions which are brought together to aid in understanding systems, thereby invoking improved explanatory power and interpretation with major implications for systems practitioners” (p. 113). This definition is drawn from 6 major sectors and 42 individual fields of science, organizing systems theory into a set of 7 axioms and 30 propositions. These elements are inclusive of the laws, principles, and theorems that collectively define systems theory. Here, axioms provide an abstract definition of various important parts of a system, while propositions describe tangible rules for how a system operates (Adams et al., 2014). Table 1 shows an adjusted and modified version of the system construct developed by Adams et al. (2014), which was supplemented and revised by Whitney et al. (2015), including the seven axioms and their propositions. In Table 1, asterisks indicate characteristics that were found to be particularly relevant when applying the systems construct in the context of evaluation capacity development work conducted at DEval.

Table 1. Adjusted and modified representation of the system construct developed by Adams et al. (2014), as revised by Whitney et al. (2015).*

Republished with permission of Inderscience Enterprises Limited (UK), from Whitney et al. (2015) Systems theory as a foundation for governance of complex systems, in

Researchers in the evaluation capacity development field frequently draw from theories on adult learning or organizational change (Bourgeois and Cousins, 2013; Ortiz Aragón and Macedo, 2010; Preskill and Boyle, 2008), while systems theory usage remains very limited. Nevertheless, a previous review of the published evaluation capacity development literature shows that a variety of researchers have acknowledged the potential usefulness of a systemic perspective (Bourgeois, 2016; Danseco, 2013; Haarich and del Castillo Hermosa, 2004; Preskill and Boyle, 2008; Stockmann, 2018). Indeed, systems theory is noted as a valuable framework for dealing with the complexities of evaluation capacity development (Danseco, 2013), and has even been used as an orientation tool for better understanding evaluation capacity development efforts, both within general evaluation systems (Haarich and del Castillo Hermosa, 2004) and educational networks (Grack Nelson et al., 2019). Previous studies have invoked systems language, and referenced important systems concepts such as levels, interactions, feedback loops (Haarich and del Castillo Hermosa, 2004), and control (Grack Nelson et al., 2019). However, the literature lacks a rigorous and comprehensive application of systems theory to evaluation capacity development. This is unfortunate, given the many benefits associated with grounding evaluation capacity development in systems theory as follows:

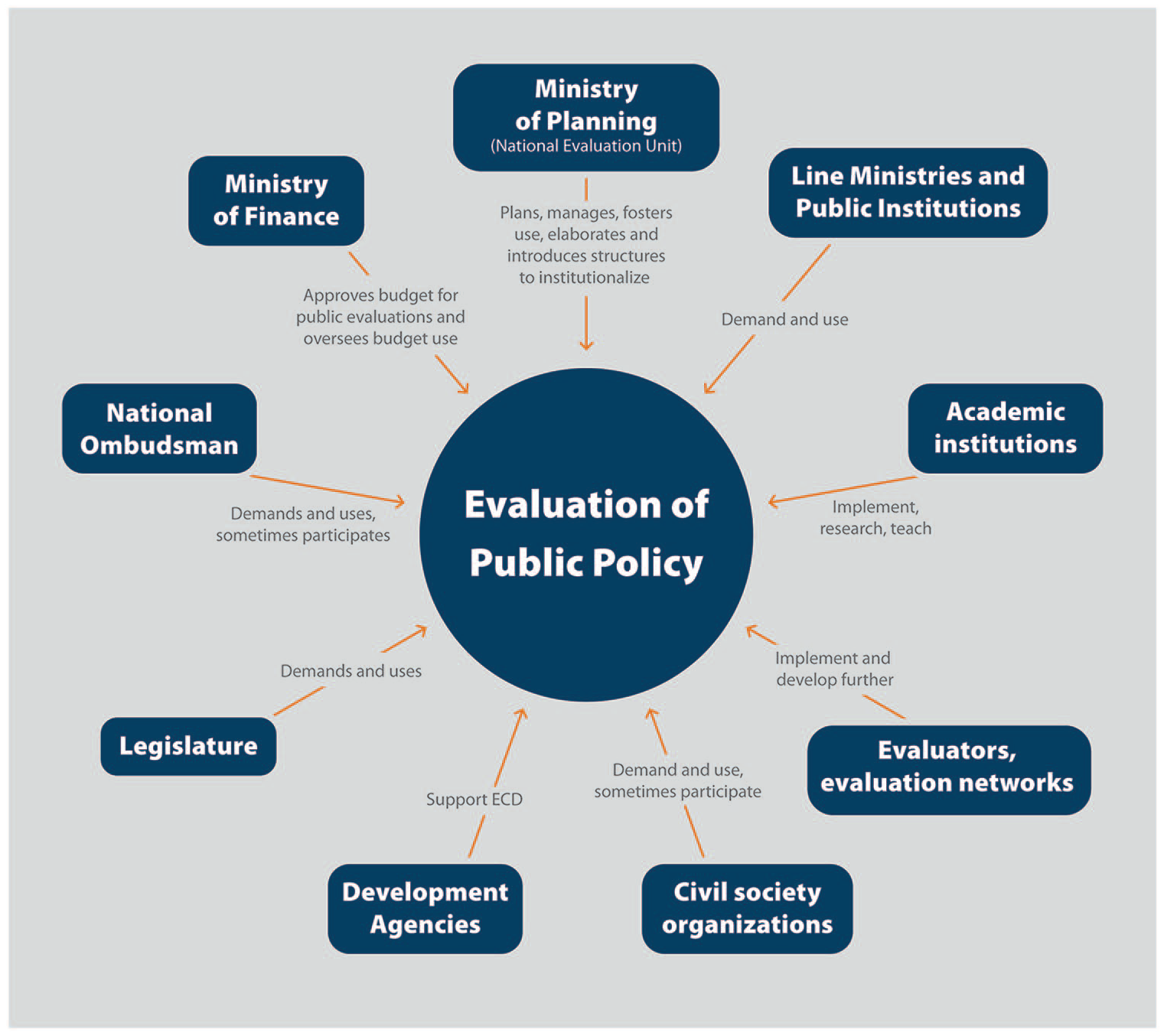

Given these multiple benefits, the following section discusses how the tenets of systems theory apply to practical evaluation capacity development work. At DEval, two large-scale evaluation capacity development projects were jointly implemented with the Costa Rican Ministry of National Planning and Economic Policy (Mideplan). DEval’s expertise lies in supporting national evaluation systems (NESs). While most of the existing literature on evaluation systems is situated at the organizational or institutionalized levels (Andersen, 2020; Briceño, 2012; Leeuw and Furubo, 2008; Martinaitis et al., 2019; Raimondo, 2018), the systemic perspective adopted in this article considers all actors, processes, and structures as national evaluation system components that allow for the planning, implementation, and use of evaluations to assess national public policies. As we explain in later sections, it is important to note that the composition of a national evaluation system gradually evolves and changes (Pérez Yarahuán and Maldonado Trujillo, 2015). The examples described in this article refer mostly to the Costa Rican national evaluation system illustrated in Figure 1.

Figure 1. Depiction of the Costa Rican national evaluation system.

The Costa Rican national evaluation system consists of diverse actors (Serrano, 2020). According to Cunill-Grau and Ospina (2008), as cited by Pérez Yarahuán and Maldonado Trujillo (2015), the national evaluation system is under the authority of the executive branch; in this case, Mideplan, through its evaluation unit. Mideplan is in fact the head of the national evaluation system, and therefore plans, manages, and fosters the employment of evaluation in addition to elaborating and introducing structures to institutionalize evaluation. The ministries provide the primary demand for evaluations, formalized in the National Evaluation Agenda (NEA; Serrano, 2020). External evaluators (some of whom are organized within the Costa Rican evaluation network) are contracted to perform evaluations, which are managed by Mideplan. An advisory board composed of the most relevant stakeholders is tasked with guiding the evaluation process. Academic institutions also help perform evaluations, and even offer relevant postgraduate programs. Thus, evaluators can develop the necessary capacities to implement high-quality evaluations.

Civil society has recently begun to demand and use evaluations, and now takes part in selected participatory evaluations managed by Mideplan. By contrast, development agencies play minor roles, as they do not demand evaluations in the Costa Rican national evaluation system context, but do support the development of evaluation capacities. In turn, the legislature (especially the technical services office) demands and uses the results of evaluations to adjust policies and laws. The national ombudsman represents civil society in the national evaluation platform (NEP), and may request and use evaluations on public interventions. Finally, the Ministry of Finance provides a budget for public evaluation and oversight of the financial resources available for conducting evaluations. These actors form the Costa Rican national evaluation system and participate jointly in semiannual meetings hosted by Mideplan.

Embedding DEval’s evaluation capacity development work in systems theory

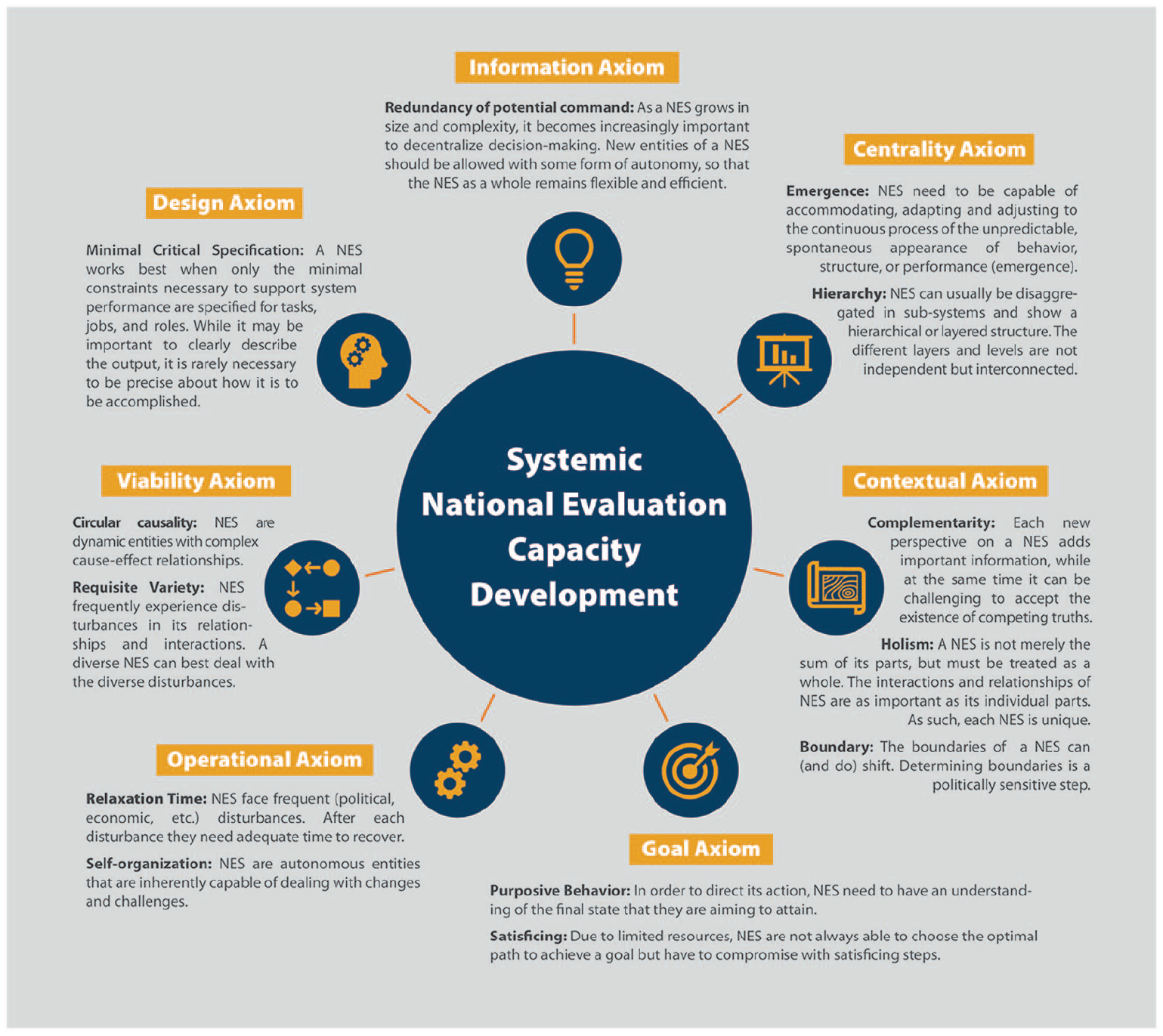

This section describes the utility of the system construct that Adams et al. (2014) developed, as supplemented by Whitney et al. (2015), for evaluation capacity development practice. We chose to focus on a subset of the propositions that were most relevant to DEval’s evaluation capacity development practice (see Figure 2) since a comprehensive examination of all propositions is beyond the scope of this article. Relevance was determined based on the expert judgment of DEval evaluation capacity development practitioners in light of the propositions’ usefulness and impact on evaluation capacity development project outcomes. Next, we explain and discuss the selected propositions, and describe how they can be applied in practical evaluation capacity development work, using examples from DEval’s evaluation capacity development practice. As an overview, we provide a short description of the full set of axioms and their propositions in Supplemental Table S1. Figure 2 provides a visual representation of the systems construct applied to national evaluation systems.

Figure 2. Depiction of the systems construct applied to national evaluation systems.

Centrality axiom

The centrality axiom states that all systems are driven by two pairs of propositions that are central to their existence: first

Emergence

The concept of emergence can be traced to famous philosophers and poets, including Aristotle and Goethe (Blitz, 1992). Specifically, the concept implies that a system can only be distinguished as an entity given the existence of certain properties that are not found by merely looking at its individual parts (Ortiz Aragón and Macedo, 2010; Wan, 2011). Rather, these properties emerge from interactions and interrelationships between components (Morgan, 2005), which thus make the whole system greater than the sum of its parts (Checkland, 1999). In other words, emergence describes the continuous process of the unpredictable and spontaneous appearance of behavior, structure, or performance. Systems must be capable of accommodating wide variabilities in emergence (robustness). They must also effectively adapt and adjust to emergent conditions (resilience), even though these cannot be known beforehand (Keating, 2009).

Accordingly, evaluation capacity can be seen as an emergent property of an evaluation system (Land et al., 2009) as well as the ultimate goal of evaluation capacity development projects. As an example from DEval’s evaluation capacity development work, the unpredictable emergence of evaluation capacity in the Costa Rican national evaluation system is evident through the formation of an EvalYouth (EY) country chapter (see EY global initiative) in 2017. EY is an organization composed of young and emerging evaluators (YEEs), all of whom are professionally trained, but have fewer than 5 years of evaluation experience (Brenes-Alfaro and Montero Corrales, 2020; EvalPartners, 2016). Members of the Costa Rican EY chapter founded a new Voluntary Organization for Professional Evaluation (VOPE), titled the Evaluation and Monitoring Network of Costa Rica (RedEvalCR, by the Spanish acronym), which quickly gained a positive reputation and influence. Due to the importance of recognizing the emergence of new evaluation capacity within the Costa Rican national evaluation system, both the EY chapter and VOPE were incorporated into evaluation capacity development projects. Specifically, institutions were encouraged and financially supported to include EY members in their evaluations, which had the dual benefit of providing organizations with highly motivated young staff and allowing young evaluators to gain valuable practical work experience. Thus, the emerging evaluation capacity could be channeled and strengthened within the Costa Rican national evaluation system. Moreover, due to the quick and strong emergence of the EY chapter, Mideplan included YEEs in developing its national evaluation policy.

Hierarchy

Systems can usually be disaggregated into subsystems, thus showing a hierarchical (Wilby, 1994) or layered (Checkland, 1999) structure. Between the different layers or levels of the system, “surfaces and filters” (Wilby, 1994: 662) may influence information flow. In an evaluation system, individuals perform evaluations (individual level), while institutions strategically plan and finance evaluations (organizational level), and the environment influences the evaluation through culture, politics, and resources (enabling environment or contextual level) (Heider, 2011). Alternatively, evaluation systems can be divided into layers of demand, intermediation, and supply, which reflect the usage, management, and implementation of the evaluation (Feinstein, 2011). However, the different layers and levels are not independent; rather, they are interconnected. For example, removing skilled labor (sometimes referred to as a brain drain) at the individual level decreases capacity at the organizational level (Fisher, 2010). In the context of evaluation capacity development work, it is essential to strategically analyze all layers and levels of the national evaluation system in addition to determining where the work is most relevant and/or how to generate the best results. It is particularly useful to focus on the flow of information between layers and identify obstacles that may obstruct those flows. For example, after developing a set of guidelines on how to manage evaluations and publishing the National Evaluation Policy, Mideplan realized that specialized training should be offered to national institutions with an associated role in the NEA. With support from DEval, training programs were developed to build capacities at various organizational levels, including those pertaining to office staff, managers, and institutional leaders (individual level). In conjunction with organizational guidelines, the practice of building capacity at the individual level within different parts of the organizational hierarchy ensured support throughout the organization (organizational level). Finally, the National Evaluation Policy and Agenda assured the sustainable use of evaluation practices (enabling environment or contextual level).

Contextual axiom

The contextual axiom draws attention to the importance of both the environment and the circumstances of a given system that enable or constrain its functions (Adams et al., 2014). The propositions of

Complementarity

This proposition originally stems from quantum mechanics, and suggests that two methodologically different observations may produce different results, but at the same time may belong together and complement one another (Bohr, 1928). Each new perspective on a system adds important information. However, it can be challenging to accept the existence of different and potentially conflicting “truths.” Within evaluation systems, there are different realities. For example, when facing an evaluation task, academic actors frequently see only the methodological approach, while government actors see deadlines and policy implications. However, both views are important for the national evaluation system. When considering a problem from various perspectives, product quality also dramatically increases. Consistent with the notion of

Holism

A system is not merely the sum of its parts, but must be treated as a whole (Smuts, 1927). For a system to exist, the

Boundary

According to Von Bertalanffy (1968), as an entity, any system has boundaries, whether they are spatial or dynamic. However, the perimeter that outlines the system is often indistinct, making it difficult to identify what belongs to a complex system (Von Bertalanffy, 1972). In national evaluation systems, determining boundaries and clarifying what is positioned inside and outside is a politically sensitive step (Williams and Van’t Hof, 2016). In Costa Rica, practicalities were the main reason why actors from the private sector only played marginal roles in the national evaluation system. Unlike civil society actors, the private sector was not the focus of either the evaluated policy programs or the evaluations themselves. In addition, the contacted actors showed little interest in the topic of evaluation. Consequently, the private sector has little awareness of the state’s evaluation efforts, which is why the evaluation function of accountability to the private sector is less pronounced. The boundaries of the national evaluation system can (and do) shift and are accordingly adjusted on a regular basis.

Goal axiom

The goal axiom states that systems reach certain goals through purposeful behaviors via pathways and means (Adams et al., 2014). In DEval’s evaluation capacity development work, the most relevant propositions of this axiom were

Purposive behavior

Purposive behavior refers to an act or behavior that is intentionally directed toward goal attainment (Rosenblueth et al., 1943). To direct its actions, the system must therefore understand the final intended state (Buckley, 1967). An important first step in DEval’s evaluation capacity development projects was to define the goals (desired final state) of the Costa Rican national evaluation system. The actors from the NEP wanted to improve the transparency of public policy via the participation of civil society in evaluation. Thus, actions were set to integrate the ombudsman and a representative of nongovernmental organizations (NGOs) working toward the SDGs into the NEP. Furthermore, a handbook on participation in evaluations within public institutions was developed for the public sector. In this context, goal-oriented clarity resulted in actions that ultimately fostered public policy transparency in Costa Rica.

Satisficing

From the perspective of ethics and rational choice theory, Honderich (2005) describes “satisficing” as choices and actions that achieve sufficient satisfaction, but which are not optimal given particular situational constraints. There are usually multiple ways to achieve a goal. Due to limited resources, the system cannot discover the “optimal” path, and must “settle” on a path that provides a reasonable degree of satisfaction (Simon, 1956). The notion of

Operational axiom

The operational axiom describes the mechanics and internal functions of the system, which determine its unique behaviors and performance.

Relaxation time

Systems do not usually remain in a state of equilibrium for long periods. Rather, they are frequently disturbed, and will attempt to return to a state of equilibrium over time. However, systems require adequate time to recover from disturbances in an orderly fashion (Clemson, 1984; Holling, 1996). Although disturbances are normal, systems that experience too many disturbances in short succession may become unstable. This makes it important to allow sufficient

Self-organization

To ensure survival, a functioning system can adapt and reorganize its structure to cope with change (Cilliers, 2002). Based on this proposition, we assume that a national evaluation system is inherently capable of dealing with changes and challenges. As such, we are able to recognize the agency of the national evaluation system. Rather than being actively directed, the national evaluation system should be empowered and supported to self-organize autonomously to the greatest degree possible. Rather than enforcing practices from powerful Western donor agencies, evaluation capacity development actors should work with national and local methods to foster the culturally sensitive development of national evaluation systems. This approach may reduce efficiency (outputs) in the short term, but is certainly more sustainable in the long run, especially given improvements in ownership and agency. One example from DEval’s work is the development of the Costa Rican NEP. Here, NEP stakeholders invited other stakeholders to regular meetings, networked with them, and initiated joint activities to strengthen evaluation capacity. The evaluation capacity development project was then able to support individual collaborations that formed independently (self-organized). For example, several NEP members (including the National Ombudsman and Mideplan, as well as evaluators) agreed to conduct a participatory evaluation. DEval supported this evaluation project with technical expertise as well as financial and personnel resources. As such, DEval’s evaluation capacity development work merely supported the self-organizing forces emerging from within the NEP rather than directing or leading the process.

Viability axiom

The viability axiom addresses key parameters within a system that must be controlled to ensure its continued existence. To date,

Circular causality

This proposition suggests that there is no simple cause–effect relationship. Rather, effects result from multiple causes that often interact, amplify, or cancel each other out. Causes themselves can be influenced by the effects they produce, which results in circular relationships and nonlinear feedback loops. Moreover, causes and effects can operate across levels; indeed, “micro effects can have macro causes and vice versa” (Morgan, 2005: 4). A system is a dynamic entity, such that complex cause–effect relationships may change over time (Morgan, 2005). To promote the use of evaluations (effect), DEval sensitized policymakers to the many benefits and applications of evaluation results (Cause 1). Meanwhile, evaluators were trained (Cause 2) and the development of evaluation standards was promoted (Cause 3) based on the assumption that better evaluation quality would increase use. DEval was also conscious of both nonlinearity and circular causality in its evaluation capacity development program-planning efforts. For DEval’s evaluation capacity development projects, theories of change always consider internal and external factors as well as interactions and feedback loops. They are developed in close collaboration with partners and adapted when necessary.

Requisite variety

A system frequently experiences disturbances in its relationships and interactions. The

Design axiom

The design axiom provides information on how a system is planned, instantiated, and developed (Adams et al., 2014; Whitney et al., 2015).

Minimal critical specification

The proposition of minimal critical specification assumes that a system works best when only the minimal constraints necessary to support system performance are specified for tasks, jobs, and roles. This situation requires that we first know what is truly essential (Cherns, 1976). While it may be important to describe the output clearly, it is rarely necessary to be precise about how it should be accomplished. Furthermore, rules and regulations can inhibit adaptation and effective action (Cherns, 1976, 1987). The DEval evaluation capacity development projects defined their objectives in conjunction with the evaluation platform, and generally discussed the activities that can lead to the objectives. Here, time and money were the minimal critical specifications, but projects usually permitted substantial flexibility in how objectives were reached. A practical example of DEval’s evaluation capacity development work is the generation of an index to measure national evaluation capacities (INCE, by the Spanish acronym). This idea originated at a regional evaluation platform meeting. A working group of 30 actors was formed, with minimal critical specifications including time, financial costs, and human resources. These collaborative efforts were ultimately successful; after 4 years of work, the index has been measured in nine Latin American countries. We attribute the accomplishment of fruitful cooperation between many diverse actors to the abovementioned

Information axiom

The information axiom states that systems possess, create, modify, and transfer information. We found

Redundancy of potential command

The proposition of

Conclusion

This article’s novel contribution lies in its focus on systems theory. More specifically, using practical examples from DEval, we illustrate how evaluation capacity development practitioners can apply the system construct developed by Adams et al. (2014) and later enhanced by Whitney et al. (2015) to theoretically inform their evaluation capacity development activities. Systems-informed evaluation capacity development practitioners provide system actors with technical expertise, but do not direct the system. This requires trustful cooperation. Systemic evaluation capacity development can only be applied if evaluation capacity development practitioners are able to adapt their own agenda to the (constantly evolving) agenda of the evaluation system.

Limitations

This article constitutes a novel contribution due to its focus on systems theory in the context of evaluation capacity development practices. While most examples describe DEval’s work with the Costa Rican national evaluation system, first experiences on a regional scale have shown that a systemic approach can also be applied in other countries and other evaluation systems (e.g. Ecuador). This article only described propositions that had the greatest effect on DEval’s evaluation capacity development practice and for which particularly illustrative practical examples were found. Future research should apply all propositions to evaluation capacity development practice examples. Thus far, the evaluation capacity development work conducted by DEval has been rooted in a systemic

Yet, evaluation capacity development practitioners need to be aware of a number of challenges: first, while various systems theory propositions (e.g.

Benefits

While keeping the various challenges in mind, this work highlighted many benefits of applying systemic approaches to evaluation capacity development. First, systemic evaluation capacity development practitioners are part of the system with which they work. This entails both accepting that evaluation capacity development practitioners represent only one perspective among many and relying on many other perspectives to enhance their work. Systemic evaluation capacity development requires that all actors have space for participation and expression of their expertise. As such, practitioners contribute through their own evaluation expertise while simultaneously benefiting and learning from the evaluation system as a whole. Second, a systemic evaluation capacity development approach accepts that ownership is held by different actors of the national evaluation system. This supports system operations rather than focusing on goals and results, thus allowing for flexibility and self-determination. By applying a systemic evaluation capacity development approach, the complete evaluation system is strengthened, and not only a single actor. This contributes to a high degree of sustainability and good governance. Third, systems theory provides an analytical lens with which to identify the most relevant leverage effects of an evaluation system and to avoid major political disruptions. It also permits an improved understanding of the reasons for the success or failure of an evaluation capacity development program or component in addition to having implications for program design.

Yet, the employment of systems theory in evaluation capacity development is still nascent. This article offers an orientation for evaluation capacity development practitioners when theoretically grounding their work in systems theory. Governments and organizations are complex entities, and systems theory provides a useful tool to better understand and improve the operation of evaluation capacity development projects and programs therein.

Supplemental Material

sj-docx-1-evi-10.1177_13563890221088871 – Supplemental material for Grounding evaluation capacity development in systems theory

Supplemental material, sj-docx-1-evi-10.1177_13563890221088871 for Grounding evaluation capacity development in systems theory by Sarah D. Klier, Raphael J. Nawrotzki, Nataly Salas-Rodríguez, Sven Harten, Charles B. Keating and Polinpapilinho F. Katina in Evaluation

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was performed as part of the regular assignments of the Focelac project at the German Institute for Development Evaluation (DEval), funded by the German Federal Ministry for Economic Cooperation and Development (BMZ).

Supplemental material

Supplemental material for this article is available online.

