Abstract

Evaluation has different uses and impacts. This article aims to describe and analyse the constitutive effects – how evaluation forms and shapes different domains in an evaluation context – of evaluation related to evidence-based policy and practice, by investigating how the evaluation of social investment fund initiatives in three Swedish municipalities is organized and implemented. Drawing on interviews and evaluation reports, the findings show how this way of evaluating may contribute to constitutive effects by defining worldviews, content, timeframes and evaluation roles. The article discusses how social investment fund evaluation contributes to a linear knowledge transfer model, promotes a relation between costs and evidence and concentrates the power over evaluation at the top of organizations.

Introduction

How social interventions are evaluated impacts and influences individuals and organizations. According to the vast literature on evaluation use, evaluation may contribute to decision-making and accountability, legitimacy of actions taken, knowledge of the quality and results of public services, transparency, democratic dialogue, empowerment and organizational learning. More questionable uses of evaluation have come to include ritual use, in which conducting evaluation shows others that you take action and work in a modern organization (Krogstrup and Dahler-Larsen, 2000). Evaluation systems and performance measurement have also been said to have unintended consequences such as hindering innovation, suboptimization and goal displacement (e.g. Rijcke et al., 2016; Thiel and Leeuw, 2002).

In order to move beyond the intended and unintended use and consequences of evaluation, Dahler-Larsen (2012a, 2014) has proposed the term

An example of a methodological programme aimed at increasing the utilization of evaluation for policy-making and practice is evidence-based policy and practice (EBPP) (and more recently, evidence

Although there has been an abundance of research on the use of evaluation within EBPP (cf. Boaz and Nutley, 2019), less is known about how evaluation aligned with EBPP – which principally aspires to be objective and separate from the evaluand – forms and shapes different domains in an evaluation context. It is important to explore such consequences because of the continuous emphasis on evidence and outcome evaluation in the welfare sector, globally (cf. Mosley and Smith, 2018). It may improve the understanding of how evaluation in EBPP shapes aspects of an evaluation context beyond the intentions of the intended instrumental knowledge use. Also, in welfare organizations that have to deal with social and wicked problems, in which goals may be vague and contradictory, and it is difficult to achieve consensus on how to operationalize and measure the success of solutions (Hasenfeld, 2010), consequences of evaluation may have implications for how to define the success and long-term goals of welfare interventions and social policy at large.

The empirical case is social investment fund evaluation in Swedish local welfare government. Social investment funds (SI funds) are a materialization of the social investment perspective, which rests on policies that both invest in human capital development and help make efficient use of human capital, while fostering greater social inclusion. From this perspective, social policies are a productive factor, essential to economic development and employment growth (Morel et al., 2012). In Sweden, SI funds are an answer to a lack of innovation and cooperation between local governments’ departments and a way to work preventively and long term despite short-term budgetary practices (cf. Jonsson and Johansson, 2018). SI funds finance temporary collaborative projects in order to achieve social outcomes and lower future spending. Monitoring, outcome evaluation and cost calculations are three evaluation types intended to provide evidence for what kind of interventions work and support decision-making.

The aim of this article is to describe and analyse the constitutive effects of evaluation related to EBPP by investigating how evaluation in social investment funds is organized and conducted in three Swedish municipalities. The research questions are as follows:

What are the constitutive effects of social investment fund evaluation and in what domains can they be found?

What is the significance of the identified constitutive effects for evaluation in EBPP?

The paper is structured in six parts. After this introduction, the literature on evaluation use relevant to EBPP is discussed, followed by the concept of constitutive effects. The case of SI fund evaluation and the qualitative methods used are then described. Finally, the results are presented, followed by a discussion and conclusion.

Evaluation use in EBPP

The study of the formative effects of evaluation can be derived from research on evaluation use, one of the most explored areas of evaluation research. It was often thought that evaluation was used instrumentally and that decision-makers implemented the evaluation results, although it was more often used conceptually, for enlightenment or for legitimacy (Weiss, 1977, 1979). Instead, ideology, interests and feasibility are more often prioritized (Shadish et al., 1991) and knowledge often makes discrete and incremental entries into the policy process (Lindblom, 1979; Weiss, 1980). Tactical, symbolic, ritual, conceptual, process and anticipating use have since described different outcomes of an evaluation process or product (e.g. Vedung, 2015). An often-debated question concerns the role of the evaluator in the promotion of evaluation use (Alkin et al., 1990; Donaldson et al., 2010). On the one hand, this is not the role of the evaluator since an evaluation should provide external, impartial and objective knowledge among other information in policymaking. On the other hand, evaluators can greatly increase the use by identifying the intended users and working with them.

Furthermore, evaluation

Against this background, constitutive effects related to evidence-based evaluation may be a relevant area of investigation due to the focus on instrumental use. Proponents of the ‘evidence wave’ have argued that government activities should be based on ‘what works’, that is, evidence (Vedung, 2010). Although this wave currently encompasses a variety of approaches and methods, there has been an emphasis on experimental and quantitative outcome evaluation as the ‘golden standard’. That certain methods and approaches bring more evidence to decision-makers than others has spurred an intense debate on what counts as credible evidence (Donaldson et al., 2009, 2014). The demand for rigour and experimental evaluation relates the ‘evidence wave’ to a science-driven perspective on evaluation (Vedung, 2010). An aim is to enhance the role of evaluation and social science and contribute to the avoidance of decisions based on ideology and prejudice (Stame, 2019).

With regard to knowledge use, it is possible to discern different generations of EBPP. The first generation relied on a linear model of knowledge use in which evaluation and research generated evidence as a product, disseminated to decision-makers for immediate and instrumental use (Best and Holmes, 2010, cf. Boaz and Nutley, 2019). The model is appealing from a top-down perspective in which powerful governing bodies can support the organizing and synthesizing of evidence in reviews, diffusing recommendations for standardized practice that can be monitored and evaluated. However, the model has met with increased criticism when faced with the realities of politics and professional practice (Martin and Williams, 2019). As Cairney (2016) argues, evidence becomes relevant to decision-makers only when beliefs and values are used to make sense of that information in relation to policy problems and solutions. Thus, the linear top-down model builds on idealized views of policy cycles and comprehensive rationality that attempt to identify the right time and place to infuse policy with evidence. The separation between evaluation and decision-makers also raises the question of whether or not decisions will still be political, or de-politicized, de-democratized and technocratic (Hansen and Rieper, 2010). For professionals, the model may contribute to a narrow, mechanistic, unreflective practice without room for professional adaptation (Martin and Williams, 2019). Part of the criticism has been the insufficient theoretical underpinnings of EBPP implementation in public administration, which has often ignored the rich literature on policy implementation and, consequently, questions of power and street-level behaviour (Johansson, 2010). Still, EBPP has also come to operate within bottom-up approaches based on relational and system-wide models of knowledge use, which are more inclusive of different forms of evidence and how such evidence is produced (Best and Holmes, 2010). A relational model emphasizes exchanges between actors in research, policy and practice in a wide political context, while a system-wide approach draws on complexity and adaptation in evidence ‘eco-systems’. These kinds of approaches have gained traction but struggle with questions of power asymmetries and symbolic participation and may therefore engender a distrust of evaluation (Martin and Williams, 2019).

In light of the influence of the evidence wave and the criticism of top-down EBPP, this study could contribute to exploring how such evaluation shapes and constitutes certain domains of the evaluation context in which it is conducted. In the next section, we turn to these domains of constitutive effects.

Constitutive effects as an analytical concept

Dahler-Larsen’s concept of constitutive effects is used analytically in this article to explore the formative consequences of SI fund evaluation. Measurement, quantification and standardization are not objective, evident and simply valid representations of reality, but are constructed through procedures in a social and organizational context. Measurement has a constitutive relation to the reality it seeks to describe and claims to measure and can only acquire meaning through contexts of use (Dahler-Larsen, 2014). This is in contrast to indicator ‘misuse’ or ‘unintended effects’, which imply that there is a ‘correct’ way of using indicators and that a deviation constitutes perversion (Rijcke et al., 2016).

Indicators are only one part of the evaluation of SI funds. In this article, the concept of constitutive effects is used broadly to cover three interrelated evaluation practices (monitoring, outcome evaluation and cost-benefit analysis) within SI funds under the term

Two factors that shape constitutive effects are

In this article, the four domains are used as analytical themes to identify and discuss the effects of SI fund evaluation. The term

Case and methods

In order to describe and analyse the constitutive effects of evaluation in EBPP, the implementation of SI fund evaluation was studied in three Swedish municipalities.

The SI perspective and SI funds

Internationally, the SI perspective has materialized in practices where private capital and entrepreneurs contribute to and profit from solving complex societal challenges by meeting predetermined targets. For example, through social impact bonds (SIBs), private actors finance welfare interventions and take the risk but also have the possibility to make a profit if goals are met in a logic of ‘payment by results’ (Berndt and Wirth, 2018; Jonsson and Johansson, 2018). Consequently, the SI debate in countries with SIBs such as the United Kingdom has revolved around how to structure legislation, taxes and financing in order to facilitate investments (Balkfors et al., 2019).

In Sweden, the SI perspective has been less about how to finance welfare through the private sector and more a municipal answer to a lack of innovation and to public sector silos that complicate complex problem solving that demands the participation of several sectors and levels. Also, the SI perspective seeks to draw attention to rigid short-term budgetary practices in municipalities that hinder preventive and long-term welfare work that is more cost effective (cf. Hultkrantz, 2015; Jonsson and Johansson, 2018). Instead, the SI perspective in Sweden emphasizes coordination of different local bodies and a focus on preventive interventions – act today to lower the costs tomorrow – and thus combines elements of control and efficiency with coordination and trust (Balkfors et al., 2019). It thus relates to the recent Swedish debate on trust-based governance where professional discretion is emphasized to handle the effects of new public management (e.g. SOU, 2019).

More specifically, SI in Sweden is defined by the Swedish Association of Local Authorities and Regions (SALAR), an interest group that has been instrumental to the development of SI in Sweden, as ‘a limited investment which, in relation to the usual working methods, is expected to give a better outcome for the target group and at the same time lead to reduced socio-economic costs in the long run’ (Uph, 2017: 6). This definition includes different SI initiatives, such as SI funds, that is, financial resources that are set aside for certain projects. They exist primarily on a municipal level and are characterized as being (1) implemented through temporary projects, (2) separate from day-to-day public administration, (3) a collaborative effort, (4) evidence- or knowledge-based and (5) possible to monitor and assess from a societal as well as a financial perspective (Uph, 2015). SI funds are mainly carried out on a local level where half of Sweden’s 290 municipalities say they work with social investments in some way (Balkfors et al., 2019).

In this context, SI fund evaluation is given the role of determining whether evidence- or knowledge-based interventions are successful or not and connecting them to different cost scenarios. Evaluation then works as a basis for decisions for managers or politicians in two respects: identifying a choice between specific measures and identifying these measures’ impact (including costs) on citizens’ wellbeing (cf. Hultkrantz, 2015). However, evaluation is regularly referred to as an area in which municipalities need support structures (e.g. Balkfors et al., 2019; Hultkrantz, 2015). A recent governmentally funded report on the state of SI in Sweden recommends supporting a ‘culture of outcomes’ in public administration and the establishment of a national competence centre to deal with the lack of evaluation skills and evidence-based methods (Balkfors et al., 2019).

Against this background, SI fund evaluation is seen as a critical case of constitutive effects in EBPP, where constitutive effects are likely to occur (cf. Flyvbjerg, 2011). One reason is that decision-making in human services depends as much on morals and ideology as on evaluation and research, since it is often difficult to reach consensus on how to operationalize and measure the success of solutions, making human service organizations dependent on societal and political legitimacy (cf. Hasenfeld, 2010). As SI fund evaluation has formally been assigned a decisive role in decision-making in local government, the built-in conflicts make it a case in which constitutive effects could arise. Furthermore, SI fund evaluation appears to be formative as it seeks to capture the future based on calculations of future costs and benefits for the individual and society.

SI fund evaluation in three municipalities

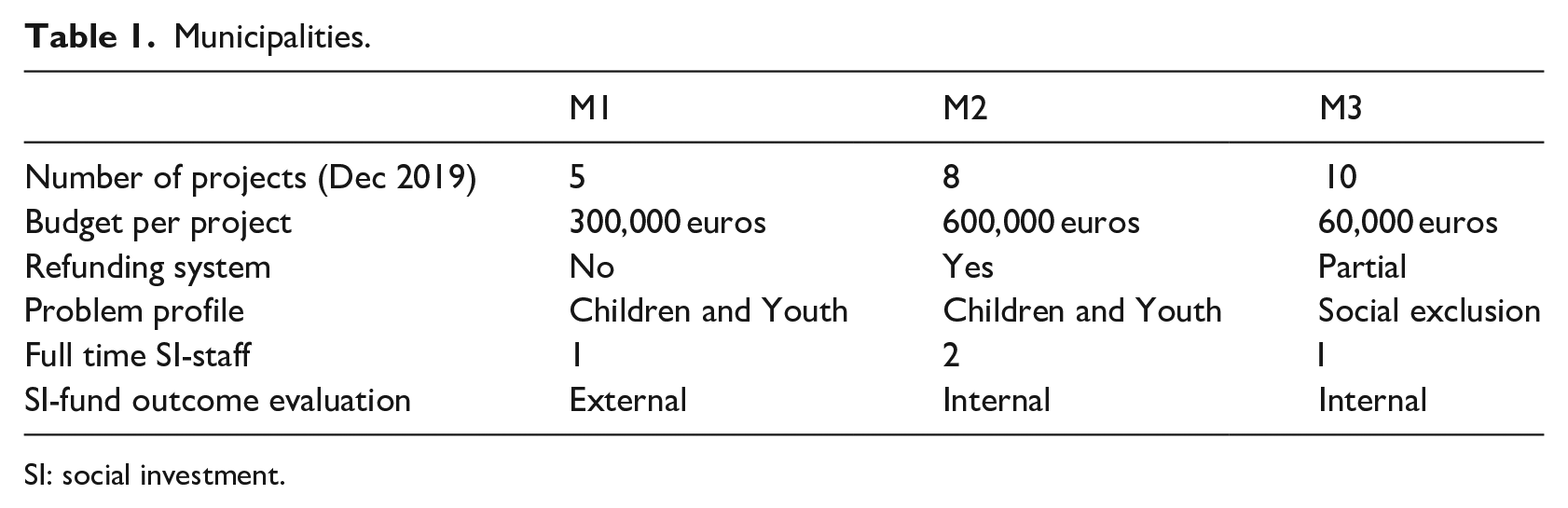

Three Swedish municipalities (pop. 70,000–150,000) were investigated in a multi-site case study (see Table 1). They had several completed SI fund projects and evaluations and thus had evaluations publicly available and interviewees who could reflect on the evaluation processes. They were also described as being at the forefront of SI funds in Sweden but differed in size and had different refunding systems. In particular, M2 stands out as the largest SI fund organization with the largest projects and consequently more people to interview. Another difference is that M1 had external evaluators and thus a smaller organization, while M2 and M3 performed evaluations internally. Otherwise, the municipalities’ SI funds were similarly organized. They set aside financial resources in a fund that finances projects related to one or more specialized departments (e.g. social services). SI staff handle the SI project applications that have been submitted by these departments. An application should describe the social problem to be dealt with, the available evidence or research about the problem and the proposed intervention, the target groups, the intervention itself, the evaluation exercises, the costs and anticipated refunds, and the future implementation. The elected politicians in the municipality ultimately decide on what projects to initiate. A feature of the SI funds is the refund system, in which the projects refund parts of what they initially received from the fund. In M2, projects refund an amount corresponding to the calculated cost reduction; in M3, refunds only apply if there are evident cost reductions such as a reduced workforce due to new ways of working. In M1, no refund system is in place.

Table 1. Municipalities.

SI: social investment.

Interventions mainly range from

SI evaluation directs attention towards the outcome side of policy evaluations (Jenson, 2009), although a variety of descriptive quantitative and qualitative data and analyses are common in practice (Vo et al., 2016). In the three municipalities, SI fund evaluation comprises three interrelated evaluation activities that are carried out regardless of intervention. A

Data collection

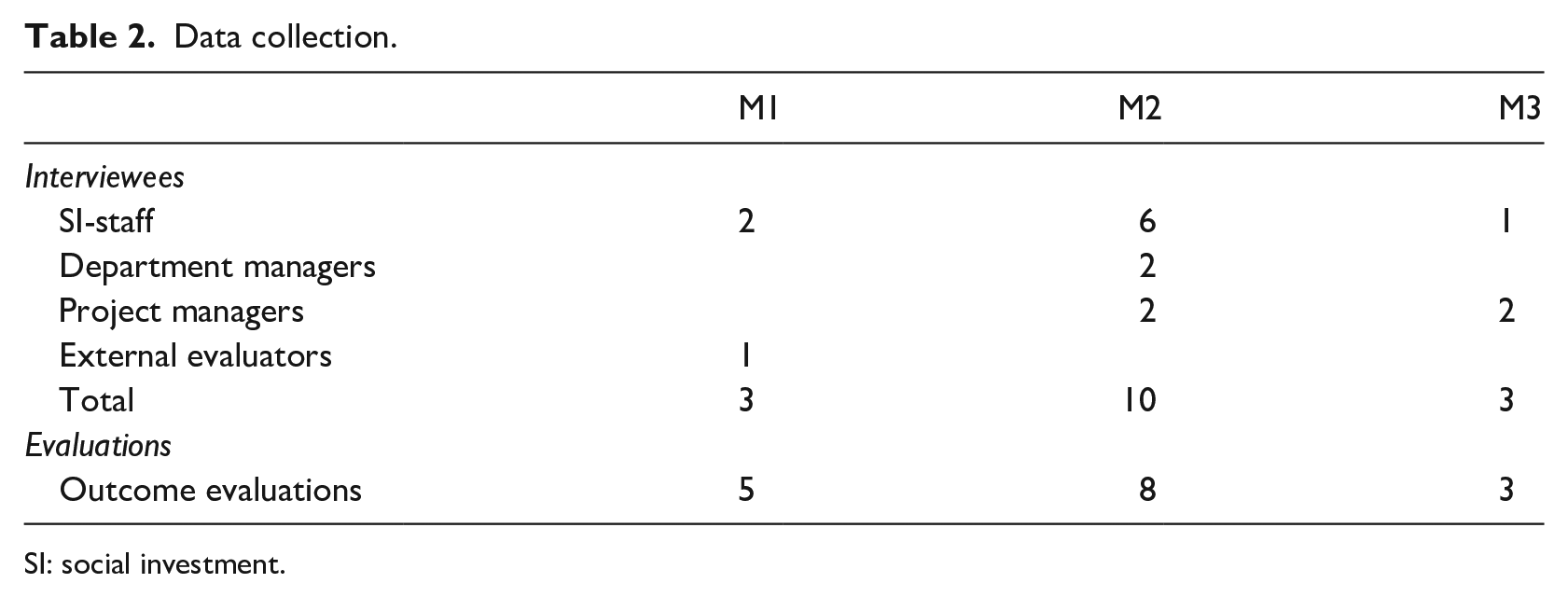

Qualitative data from 16 interviewees and 16 evaluations were collected (see Table 2). Interviews were considered relevant for capturing reflections on how evaluation could affect domains outside of formal intentions. Interviewees were contacted in a combined network and strategic sample. Municipalities were approached through a national network of SI coordinators, after which SI staff, project leaders and department managers were contacted and asked to suggest other interviewees.

Table 2. Data collection.

SI: social investment.

The interviews were semi-structured in two themes:

Data analysis

An abductive approach was used in a reflective dialogue between the researcher and the previous research, theory, and the empirical material (cf. Alvesson and Kärreman, 2007). The transcribed empirical data directed attention towards the role of evidence and evaluation in decision-making and how they may shape and determine the worldviews of staff and the content of social interventions. Research on EBPP, evaluation use and constitutive effects was consulted in order to make sense of the data (e.g. Boaz and Davies, 2019; Dahler-Larsen, 2014; Vedung, 2010). In a next step, the four domains of constitutive effects provided an analytical lens. For example, the interviewees’ reflections on the significance of evaluation to aspects of time were categorized in the domain of

The constitutive effects of SI fund evaluation

The results are presented in four sections corresponding to the four domains of constitutive effects: frame and worldview, content, timeframes and social relations.

SI fund evaluation as a frame and worldview

SI fund evaluation shapes an interpretive frame of how to understand the success of social interventions which is presented in terms of

The solution is framed as an evaluation approach that delivers data, which makes it possible to single out which preventive interventions are effective and cost reducing, and which preventive interventions are not. The language of SI fund evaluation supports this solution.

This solution provides normative rationales related to austerity and the scientific rationality of EBPP. Preventive interventions are framed as investments in order to innovate the public sector and solve fiscal challenges. The interviewees describe similar arguments when asked about the framings of SI fund evaluation. Regarding whether or not social workers are comfortable with the economic approach of SI funds, an evaluator in M1 argues that, in recent decades, social workers have become more open to analysing costs:

Social workers work with societal change that provides economic outcomes . . . you must be able to argue for your own role . . . you create benefits in society, dammit, you help people feel better, it’s extremely useful . . . and we live in a capitalistic society, and you have got to learn the rules of the game . . . there is too much ignorance about this.

Thus, the overall frame and worldview appear to resonate broadly with the interviewees, in terms of both how credible and consistent it is perceived to be, and how relevant it is to their everyday experience (Benford and Snow, 2000).

SI fund evaluation defines the content and what to strive for

Evaluation defines what is central in social interventions. A first subtheme concerns how SI fund projects are decided on, and a second subtheme concerns how lower future expenditure is what to strive for.

First, SI fund projects are ideally thought of as bottom-up organizations in which departments can apply for funding based on both evidence and professional experience. In practice, the SI staff in all municipalities are involved in probing and developing suitable ideas from the existing evaluated interventions. For example, in M2,

[Departments] see the problems or challenges, or what to call them, and identify the target group . . . and we (SI staff) say that from an evidence perspective, this [intervention] should work. (SI staff, M2)

SI projects may also be decided on top-down with minimal participation from the professionals in all municipalities, potentially becoming a burden:

There is much political will in these social investments. /. . ./ Social investments have sometimes been the result of political orders and have been more difficult to implement . . . here is an idea from above that someone wants to try. It is not as successful as when the idea comes from below. And we may have had too many political orders. (Department manager, M2)

Nevertheless, finding evidence-based interventions has proven to be difficult since ‘there are few evidence-based interventions in social work for our problems’, says the SI staff in M3. ‘Instead, we have to experiment, and that’s why evaluation is so important, so we get an understanding of what works and what doesn’t work’.

Second, the SI-funded projects that are initialized strive to achieve lower future costs. Project goals and outcomes are defined in the project application and are related to a cost calculation that entitles the project to funding. Evaluation reports in all municipalities reach conclusions on cost-effectiveness in their executive summaries: ‘The cost of social exclusion on a societal level has decreased by [EUR 9,500] per participant per year, which is above average in [the evaluator’s] evaluations of similar projects’ (Evaluation M1, 2017a: 4). In this case, it is conducted through the monetarization of the project and its clients (i.e. service users) through standardized costs available through public databases, which are compared before and after the intervention. As costs depend on the social problem and intervention, they differ between projects. However, costs for social assistance are analysed in 9/16 evaluations, partly because they are relatively easy to calculate and partly because they are among the municipalities’ largest expenditures. Furthermore, costs require quantification. The objectivity and trustworthiness of quantitative measurements are emphasized in general. For example, a project manager in M3 argues that ‘If you can’t present real results, you can’t say what works. Not teachers’ assessments such as grades, but standardized tests that cannot be questioned’. However, it is not unusual for evaluations to suffer from a lack of data, short time spans and attribution issues. Evaluations in M2 need ‘more data and a longer period of measurement to confirm a relationship’ (2019: 2), ‘sufficient data is not yet available’ (2018: 2) or data ‘have been hard to interpret or shown results that cannot be directly related to the project’s work’ (2017: 2). Evaluations may also lack the possibility for control groups ‘as the target group has not been able to be identified and documented in the social assistance system systematically’ (2017: 13). 
In M3, ‘objective points of measurement have been hard to find’ (2019b: 3) and, in M1, some projects were not aware of the monitoring that was needed for outcome evaluation. These problems can make cost calculations difficult: ‘[It is] difficult to demonstrate that the intervention has generated the economic effects that surpass the costs’ (Evaluation M2, 2019: 2). Nevertheless, the interviewees in M2 argue that there is little discussion among decision-makers on methods or results,

In all, a constitutive effect in this domain is how SI fund evaluation defines how social interventions should strive for what works and how to lower future expenditure. These aspirations tend to be initialized and organized top-down and are challenged by problems of data accessibility and the lack of evidence-based interventions. However, they may still bring legitimacy for what works.

SI fund evaluation defines timeframes

SI fund evaluation contains both long-term and short-term perspectives. On the one hand, the SI perspective rests on the long-term perspective that investing financial resources in preventive welfare work today leads to lower costs, fewer social problems and a decrease in social exclusion among citizens in the future. There is an element of prediction in the evaluations in which the economic outcomes in the form of savings and expenses can be formalized as prognoses on a different year basis related to actors on local, regional and national levels (Evaluation M1, 2017a, 2017b). On the other hand, the outcomes cannot be measured immediately but generally only after several years, and attribution becomes more difficult as the years pass. Also, SI fund evaluation must be tailored to a municipal decision-making context in which the evaluation results are in demand at the end of a 1-year budget or a 3-year project:

[The evaluation] should be conducted as quickly as possible for economic reasons. Otherwise, we could have had a longer perspective. And we must also think about when to conduct the evaluation, preferably ten years after the investment. However, that’s difficult for us to decide. So, we have to find indicators of long-term outcomes, for example, school grades for future unemployment. Also, the scaling up must be decided on, you can’t postpone it. (SI staff, M2)

Organizing SI funds in temporary projects also adds to the short-term perspective. This is relevant since the evaluand – the project – is closely related to the evaluation practice (Shadish et al., 1991). Projects are generally characterized by temporality, assignments and transition, in contrast to the permanency, goals and continuous development of bureaucracy (cf. Fred, 2015). Since they should ideally be structured as evidence-based interventions, SI staff and external evaluators generally emphasize the importance of rigid projects, baselines and monitoring systems in order to conduct various forms of outcome evaluation and cost analyses. However, they also entail innovation and experimentation, and projects in all the municipalities have tended to differ from the initial plans, challenging the projects’ rigidity and predictability. The SI staff in M2 argue that although projects must adapt at an early implementation stage, rigidity is more important since the outcome evaluation will otherwise suffer. Similarly, an evaluator in M1 argues that the proposed rigidity of the required monitoring and evaluation led to irritation and scepticism towards evaluators who were seen as interfering with social work (evaluator, M1). Finally, the short-term perspective poses an ethical challenge. A project manager in M2 states that a municipality ‘goes all in and give society’s most vulnerable children something that you won’t continue with’.

In all, a constitutive effect is how SI fund evaluation tends to be conducted from a short-term perspective, giving rise to tensions such as deciding on long-term interventions based on short-term measurements and keeping temporary and innovative projects rigid due to the requirements of outcome evaluation.

SI fund evaluation defines social relations

SI fund evaluation defines social relations and identities for the evaluator and for actors in the evaluation context. The extensive tasks of the SI staff in M2 and M3 make them both internal evaluators and a support function for the project, and sometimes even the ‘informal project owner’ (SI staff, M2). Their involvement is appreciated by the department and project managers who receive various kinds of support. The internal evaluation role of the SI staff in M2 and M3 was meant to keep costs down and evaluation skills within the municipalities. However, providing decision-makers with evaluations while also contributing to organizational development gives the SI staff a potentially conflicting role between control and support:

You can become partial and biased, but it’s also the way we learn. There shouldn’t be a strong distinction [between evaluation and projects]. Then we’d just sit here and evaluate and say this is bad and this is good. It should be a process. (SI staff, M2)

Other actors defined by SI fund evaluation are politicians on the municipal board who are constituted as decision-makers. In cases in which SI projects are also proposed and decided on by politicians without participation from professionals, such a combination represents a top-down version of EBPP. Relatedly, department managers are also constituted as decision-makers since they become SI project owners. As SI projects are relatively small in monetary terms, department managers are involved in decisions about what would otherwise be delegated to other roles. Thus, SI projects receive a relatively large amount of attention. However, the better the results of an SI project, the larger the refund from the department to the SI fund (in M2). For this reason, the refund model has been criticized by department managers in M2 for being a burden and a loan. On top of refunding, there is also the cost of implementation and upscaling of successful interventions. A department manager (M2) thinks that the refunding mechanism is counterproductive. This raises the question of whether SI contributes to incentives to present data in an advantageous way for financial reasons, to which the manager responds that the department’s primary concern has been to implement new SI projects: ‘We’ve been so economically stressed out . . . we’ll pay anyway’. Also, the evaluations’ recommendations are subject to ‘lengthy discussions’ (Department manager, M2) before finalization where they can be negotiated and carefully formulated. SI fund evaluation therefore creates certain incentives and tensions among different decision-makers.

Finally, actors on other levels are present in prognoses in some evaluations as they are generally responsible for health care (regional level) and/or employment (national level). The levels become sectors to which municipal costs can be transferred when interventions succeed (Evaluation M1, 2017a).

Thus, a constitutive effect of SI fund evaluation is the concentration and upwards movement of the power over the formulation and use of evaluation at the top of the municipality, and the lack of professional and client participation.

Discussion and conclusion

In summary, SI fund evaluation contributes to constitutive effects in four different domains. It promotes a frame in which it solves the inability to measure the costs of preventive welfare work within scientific rationality. SI fund evaluation also defines the content as top-down evidence-based interventions aimed at gaining knowledge on what works and reducing costs. This creates implementation challenges because of the lack of evidence-based interventions and data accessibility. Furthermore, SI fund evaluation is conceptualized as a long-term commitment but performed in the short term, creating tensions between, for example, the flexibility and rigidity of projects. Finally, the power over evaluation and how to define success is located at the top of the municipal administration. In this closing section, the limitations of the study, the potential significance of the constitutive effects of evaluation in EBPP and their implications for research and practice are discussed.

A limitation of the study concerns the use of domains as analytical concepts. Dahler-Larsen (2014: 977) describes them as conceptual domains and uses them exploratively to ascertain whether they are ‘good heuristic hooks upon which to hang empirical detail’. Indeed, they have been useful for both exploration and description, although they are broad and have been complemented and operationalized with the help of other concepts in the analysis (e.g. frames). Another limitation concerns data. Project managers are in the minority, and no professionals, politicians or clients were interviewed. This has directed attention towards civil servants and managers, not least since decision-making was seen early on as an essential part of SI fund evaluation. However, even if project managers state that not much is different in an SI fund project compared to other projects (apart from increased administration), professionals might hold different views. Politicians could also provide valuable insights into how evaluations are used. Hence, even if the study provides an account of the production of constitutive effects in an administrative practice, future research could also study the constitutive effects in a

A first topic of discussion is how SI fund evaluation promotes an idea of evaluation use built on a linear knowledge transfer model, that is, a one-way process from researchers’ or evaluators’ production of evidence to end-users who incorporate such evidence into policy and practice (Boaz and Nutley, 2019). It is closely related to a top-down implementation model requiring compliance with knowledge of the best available interventions and assessments based on systematic literature reviews of published research (Bergmark et al., 2011). Experimental designs are supposed to produce instrumentally usable knowledge for decision-makers about ‘what works’ (Vedung, 2010). It represents a science-driven perspective based on the rationalistic assumption that it is possible to separate goals (politics and decision-making) and means (social science and evaluation) (cf. Furubo, 2019).

At the same time, there are data problems and a lack of evidence-based interventions that make it difficult to perform SI fund evaluation in ways that make it rigorous for decision-making. Nonetheless, the organizational procedures for performing evaluation are upheld and said to be valuable to show externally. Thus, there are signs of loose coupling, that is, a deliberate separation between organizational structures that enhance legitimacy and the organizational practices within an organization that are believed to be technically efficient (Boxenbaum and Jonsson, 2017: 90). Evaluation may therefore become legitimizing since it is not the substance of results that is used, but what they indicate in terms of their credibility (Boswell, 2012). This is not an unusual phenomenon in human service organizations, which are dependent on legitimacy because of the difficulty of measuring outcomes or even agreeing on the desired outcome (Hasenfeld, 2010). Through evaluation, senior managers and politicians can show that they are able to take action, which makes the organization legitimate in the eyes of voters.

Finally, although the idea of a linear knowledge transfer model dominates the SI fund evaluation frame, there are signs in the SI evaluation practice of a relational model of knowledge use in which a variety of actors is included in the production and use of evidence. For example, the requirement for evidence-based interventions is downplayed in the municipalities and may be exchanged for ‘proven experience’. Also, data problems and the lack of evidence-based interventions challenge the linear model, which is partly complemented by professional knowledge. In light of the different pitfalls and criticisms of top-down and linear knowledge use, an implication for evaluation practice in EBPP is that evaluators are challenged to problematize and go beyond a linear knowledge transfer model, or otherwise be confronted by several challenges when disseminating evaluation results to decision-makers.

A second topic is how SI fund evaluation as a frame and language promotes a link between evidence and costs. Porter (2003) states that cost calculations such as cost-benefit analysis were an attempt to create decision theory by establishing a rigorous, quantitative basis for public decisions. It was a way of demonstrating honesty and fair-mindedness in the face of corruption, politics and distrust. Quantitative criteria are also authoritative. They are regarded as accurate or valid representations of something else and perceived as useful for solving problems. They also link actors with relations to the numbers and are associated with rationality and objectivity (Espeland and Stevens, 2008). SI fund evaluation ties such quantitative cost calculations to EBPP. The combination of scientific measurements and decisions based on fair calculations could explain the resonance of SI fund evaluation among decision-makers. It provides them with apparently objective data in order to make decisions about costly welfare interventions in the face of austerity.

The link between evidence and costs has at least two implications for evaluation in EBPP. First, it turns the attention away from qualitative methods, the processes of professional practice and the experiences of clients. Evaluation is not for professional improvement or client use, but for instrumental decision-making. It aims to settle rather than inform EBPP. A narrow focus on demonstrable results may mean that important information on implementation processes is missed, and it may discourage innovative thinking (Gray et al., 2015; Lehmann, 2015).

Second, the link reveals the costs of social problems and interventions. This is a core argument of the SI perspective in which the financial benefits of early interventions are emphasized, urging decision-makers to act preventively on social problems (cf. Hemerijck, 2017). Instead of looking back, SI thus contributes a focus on the future by trying to identify preventive interventions that may save resources and decrease human suffering. Hence, the slogan ‘What works?’ may be altered to ‘What lowers future costs?’. However, the link between evidence and costs also raises the concern that citizens, often children, matter instrumentally to economic ends rather than existentially (cf. Lister, 2004). Thus, expenditure might only be justifiable when there is a demonstrable pay-off, not when it merely contributes to well-being or enjoyment. Future research could explore how SI fund evaluation shapes future local social policies and interventions.

A third topic is how SI evaluation follows a pattern in which the power over evaluation has moved from a professional sphere to a managerial sphere. A professional sphere ideally emphasizes knowledge for improvement, based on experience, to be used conceptually and interactively. However, the responsibility for SI fund evaluation in two of the municipalities has been allocated to internal evaluators on a top management level who have economic rather than professional backgrounds. This is a managerial sphere that typically emphasizes knowledge manifested in standards and used instrumentally to investigate accountability (Evetts, 2009). Thus, the results of this study follow the development in welfare work in recent decades in which the power over evaluation in public sector organizations has moved upwards (e.g. Krogstrup, 2017). Consequently, although professionals’ views are lacking in this study, it is questionable whether trust in professionals, as promoted by trust-based governance, is supported by SI fund evaluation. Neither professionals nor clients may be represented when discussing criteria for success and how acceptable welfare should be assessed. As research suggests that policymakers have also lost the power over evaluation to administration (e.g. Rosser and Horber-Papazian, 2018), future research could investigate the strategies and justifications for the evaluation jurisdiction of civil servants. Also, economic and statistical competency may increase in demand. However, bearing in mind that the sample is small and that research has pointed to a diverse evaluation practice within social investment initiatives (Vo et al., 2016), this implication needs to be further investigated.

This discussion on the power over evaluation raises the question of whether politics can be separated and removed from evaluation. If politics in evaluation refers to actors’ involvement and negotiations on the distribution of societal and organizational resources, it seems difficult. An evaluator ultimately chooses values and criteria against which to value and frame the evaluand, through choices of methods and research questions, with implications for said resources (cf. Karlsson Vestman and Conner, 2006). In this perspective, SI evaluation relocates not only the power over evaluation to a managerial sphere, but also the politics.

In conclusion, the constitutive effects of SI fund evaluation have significance for evaluation in EBPP in various ways. SI fund evaluation promotes a linear knowledge transfer model and an evaluation frame and language that link evidence with costs. SI fund evaluation relates to a managerial sphere rather than to a professional sphere and gives legitimacy to welfare organizations.

Footnotes

Acknowledgements

The author would like to thank the two anonymous reviewers for their constructive comments. Mats Fred, Patrik Hall, Dalia Mukhtar-Landgren and Verner Denvall all gave valuable comments on early drafts.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Swedish Research Council for Health, Working Life and Welfare under Grant 2017-02151.