Abstract

This article examines what the co-existence of different evaluation imaginaries – understandings of what environmental policy evaluation ‘is’ and ‘should do’ – means for everyday evaluation practice. We present a case study in which we show how these different understandings influence the evaluation process as they are mobilized interchangeably. Though co-existing evaluation imaginaries broaden the repertoire and potential for innovation, practitioners also experience tensions as innovative ambitions conflict with institutionalized practices. We hypothesize that practitioners deal with these inconsistencies by decoupling approaches, intentions and outcomes from each other. In this way, innovation occurs in parts of the evaluation process while other parts follow a more traditional approach. For evaluation theory we argue the need to further explore how decoupling enables practitioners to deal with co-existing imaginaries. For evaluation practice we stress that articulation of societal expectations is indispensable to ensure the legitimacy of policy evaluation.

Keywords

Introduction

In recent decades, increasing awareness of the multi-actor, multi-perspective and polycentric character of many policy processes has led to the development of a variety of different perspectives on the styles and roles of policy evaluation, and to new analytical tools and approaches – for example, argumentation approaches and participative policy analysis. However, ‘traditional’ policy analysis approaches, characterized by a focus on system modelling, are still dominant in evaluation practice, even if methodological plurality is widely accepted (Højlund, 2014; Sanderson, 2000; Thissen, 2013). In the field of environmental policy evaluation, the setting of this article, these traditional approaches are strongly represented, while new approaches to evaluating multi-governance settings are gaining influence. We see environmental policy evaluation as a systematic investigation and assessment of the implementation, effects and/or side effects of an environmental policy activity, in order to inform policy decisions or actions concerning this activity. Further to policy analysis, evaluation research contains an element of assessment or appraisal alongside a set of criteria or principles (Crabbé and Leroy, 2008).

Several authors reject and problematize the common distinction made in the field of environmental policy evaluation between the views, schools and styles in policy analysis, such as ‘technical’ and ‘deliberative’ models (Owens et al., 2004), ‘rationalistic’ and ‘constructivist’ approaches (Huitema et al., 2011) or positivist and post-positivist traditions (Adelle and Weiland, 2012; Turnpenny et al., 2009). In practice, none of these approaches seems to be applied in a pure form and such distinctions have therefore been criticized as simplistic (Adelle and Weiland, 2012; Mayer et al., 2004; Owens et al., 2004). Instead, there is a need for sensitive selection and combination of approaches. For example, it is suggested to better link policy performance assessments and assessments of policy processes (e.g. on learning and politics of policy-making) (Adelle and Weiland, 2012); to differentiate by type of policy supporting activity (Mayer et al., 2004); and to tailor approaches to the object (the kinds of questions being asked) and objective (the end to which the evaluation is being conducted) of appraisal in particular contexts (Owens et al., 2004). These authors are aware that epistemic cultures and policy structures affect the selection and combination of approaches in policy evaluation. Yet, they largely seem to ignore the influence of ‘wider’ societal expectations upon evaluation processes and practice. Evaluation practitioners and evaluating organizations secure their legitimacy by acting according to (diverse and potentially conflicting) societal expectations. Recursive relations exist between (micro-level) evaluation processes and practices and (macro-level) societal views on evaluation.

The claim in this article is that the organizational context is more important, in terms of explaining the selection and combination of evaluation approaches in practice, than the literature so far has acknowledged. We therefore aim to empirically explore how different evaluation approaches are selected and combined under the influence of co-existing, but contradictory societal views on what environmental policy evaluation ‘is’ and ‘should do’. How in their everyday work do evaluation practitioners address the multitude of evaluation approaches, given diverse societal expectations of evaluation?

To address this question we introduce the concept of ‘evaluation imaginaries’: evaluation imaginaries are social constructions that ‘define the purpose and meaning … of particular forms of evaluation in light of the society in which they unfold’ (Dahler-Larsen, 2012: 99). A case study of evaluators involved in a prominent Dutch environmental policy evaluation study at the PBL Netherlands Environmental Assessment Agency illustrates how practitioners select and combine different approaches by drawing on two different evaluation imaginaries, which, as we demonstrate, creates both tensions and opportunities. This case reflects a more general tendency in environmental policy evaluation to draw on a tradition of technical-rational approaches to assess policy performance, while calls for novel approaches to assess complex policy processes are slowly gaining ground (Adelle and Weiland, 2012). Both the PBL organization and the specific assessment study are, therefore, important in that they reflect the state of the art in environmental policy assessment.

The next section introduces a sociological and historical analysis of evaluation theory (Dahler-Larsen, 2012) to apprehend the influence of evaluation imaginaries underpinning environmental policy evaluation. Subsequently, we explore how co-existing evaluation imaginaries and evaluation processes and practices are mutually constructed, using the notion of co-production. The fourth section reports the findings of a case study in the PBL organization, and aims to bring empirical evidence to the discussion on simultaneous use of different approaches. The final section concludes the analysis and points to theoretical and practical implications.

The role of evaluation imaginaries in environmental policy evaluation

The starting point is that evaluation approaches are ultimately linked to different societal ideas, norms and values on what evaluation ‘is’ and ‘should do’. This is what we call evaluation imaginaries. 1 The modernist evaluation imaginary embodies modern beliefs in rationality and control and emerged as an attempt to replace tradition, prejudice and religion with a technological mode of thinking that was assumed to ‘linearly’ advance wealth and progress (Dahler-Larsen, 2012). The reflexive evaluation imaginary 2 embodies ideals of continuous learning among policy actors and emphasizes the participation of different actors in the evaluation process, acknowledging that the outcome of evaluation will always be contingent upon the different viewpoints of these actors (Dahler-Larsen, 2012).

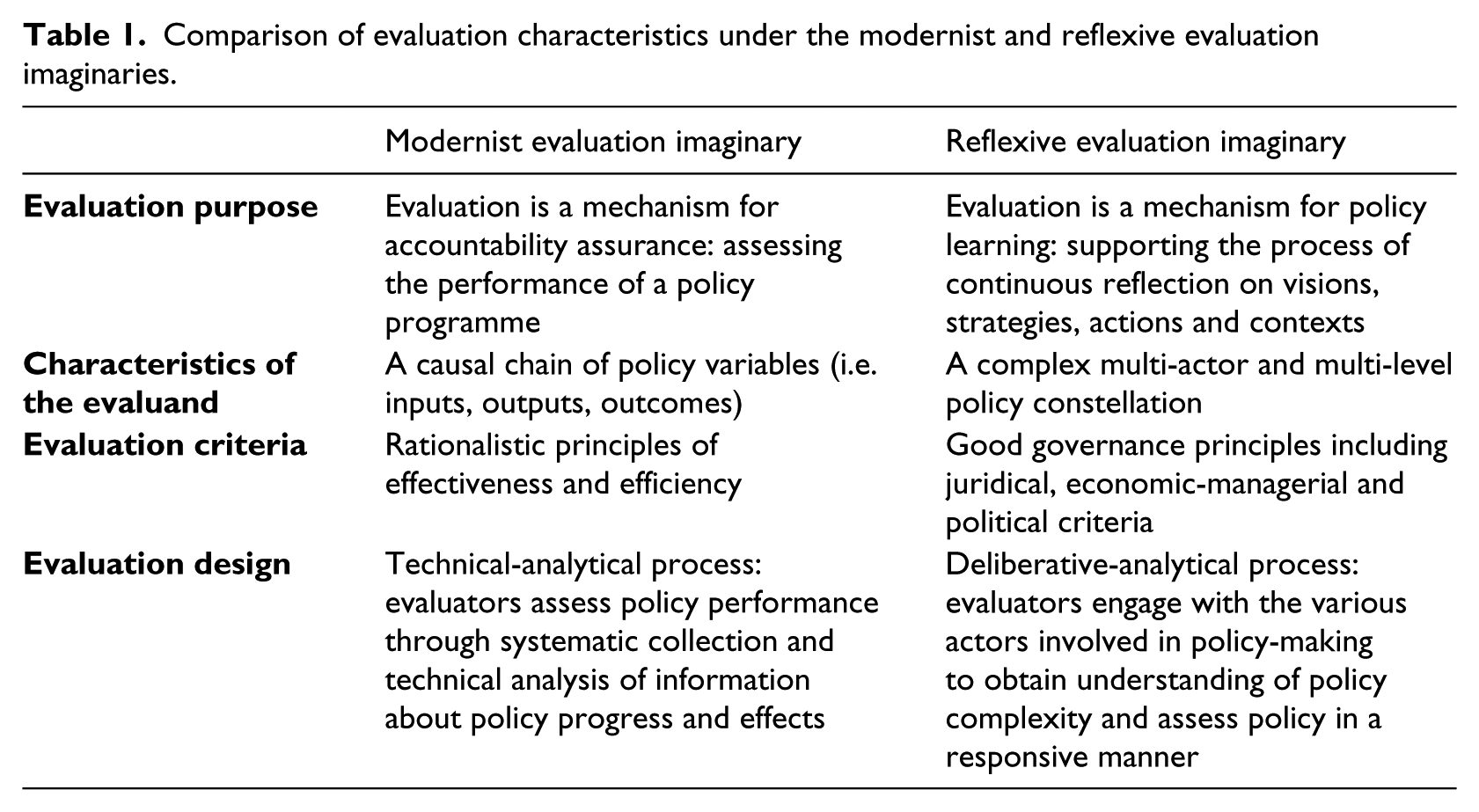

Table 1 illustrates how societal views on what evaluation ‘is’ and ‘should do’ embodied by the modernist and reflexive evaluation imaginaries differ in their understanding of the evaluation purpose, the characteristics of the evaluand, the evaluation criteria and the evaluation design.

Table 1. Comparison of evaluation characteristics under the modernist and reflexive evaluation imaginaries.

Environmental policy evaluation under evaluation imaginaries

The recognition that political interventions intended to produce progress (i.e. welfare) may fail led to the demand for policy evaluation. In this sense, the modernist evaluation imaginary has facilitated the emergence of the policy evaluation field. In a similar vein, the term ‘environmental policy evaluation’ was first coined for modern ideals of rationality and control to ensure accountability of governmental policy activities for the reduction or prevention of environmental problems in cost-effective ways (Adelle and Weiland, 2012; Davies et al., 2006; Owens et al., 2004). The ‘textbook’ concept and everyday practices of various types of environmental policy assessment – including, for example, regulatory impact assessment (RIA) and sustainability impact assessment (SIA) – are still often based on modernist ideals of rationality, procedures, oversight and predictability (Adelle and Weiland, 2012; Durning, 1999; Owens et al., 2004; Turnhout, 2010). Environmental policy evaluators aim to facilitate better decision-making through the provision of technical information about policy performance, presented in ‘distance-to-target’ or cost-benefit comparisons. Technical information is often produced with system modelling techniques, and processed in indicator-based assessments (Crabbé and Leroy, 2008). Indicators are perceived as ‘objective’ scientific tools that proceduralize the ‘objectivity’ of assessment processes (Rozema et al., 2012). The political determination of policy goals is presumed to be relatively uncontroversial and stable before, during and after the evaluation period (Dahler-Larsen, 2012). The evaluator adopts the policy goal as officially and formally set. In doing so, the evaluator, in fact, adopts the complexity reduction of social reality as defined by policy-makers (Crabbé and Leroy, 2008).

Under the heading of reflexive modernization (Beck et al., 1994) environmental policy evaluation gained influence as a permanent process of continuing reflection upon the (side effects of) environmental risks in areas of daily life (Dahler-Larsen, 2012). The learning orientation encouraged evaluators to break with the conventional logic of accountability and to embrace the uncertainty and complexity of potential policy impacts in multi-actor and multi-level constellations of environmental policy planning and implementation. This shift has been accompanied by alternative models of the policy process, e.g. incremental and chaotic views on decision-making processes (Radaelli, 1995) and studies revealing the social construction of knowledge and expertise (Knorr-Cetina, 1981; Latour, 1987). While the myth of objective and generalizable scientific methods as well as the assumption of a linear transmission of knowledge to its users was questioned, more responsive and participatory forms of evaluation were introduced. A broader range of stakeholders with diverse interests on environmental policy are seen as legitimate players in evaluation. They may, for example, develop relevant evaluation criteria (Albaek, 1998), and supplant rationalistic principles of effectiveness and efficiency with good governance principles, such as participation, transparency and fairness (Crabbé and Leroy, 2008). Evaluators become sensitive to the unique conditions existing in the context in which a policy programme unfolds (Abma, 2006; Stake, 2004). They seek to capture the many perspectives of local stakeholders on a particular policy programme using participatory procedures in various forms of environmental impact assessments (Owens et al., 2004; Salter et al., 2010; Turnpenny et al., 2009; Van Asselt and Rijkens-Klomp, 2002).

Implications of the co-existence of evaluation imaginaries for environmental policy evaluation practice

The field of environmental policy evaluation has developed an abundance of approaches that focus on different questions using different methods (Adelle and Weiland, 2012), which, as we point out, is a direct result of the co-existence of the modernist and reflexive evaluation imaginaries. This section elaborates on the implications of the co-existence of evaluation imaginaries for evaluation practice. We draw on insights from science and technology studies (STS) to explain how the co-existence of the modernist and reflexive evaluation imaginaries informs processes of selecting and combining evaluation approaches in organizational contexts. STS scholars (Irwin, 2008; Pallett and Chilvers, 2014) suggest a way of viewing organizations as objects constantly in the process of becoming – dynamic, multiple, performative and open-ended – resulting from networks of different local practices of organizing and knowing. The notion of ‘co-production’ as developed by Jasanoff (2004) has played a highly significant role within this body of work, elaborating how micro-worlds of scientific practice and the macro-categories of political and social thought are mutually constructed. In the context of our study, co-production is used as an interpretive device to explore the recursive relations between (micro-level) evaluation processes and practices and (macro-level) societal views on what evaluation is and should do.

In order to capture the significance of this device, we first explore why, drawing on an institutional perspective 3 , the modernist evaluation imaginary has been so influential in the environmental policy evaluation field. In this perspective, the (level of) institutionalization of evaluation imaginaries largely and often unconsciously defines the appropriate evaluation approach (Dahler-Larsen, 2012). Institutions are socially constructed historical patterns of values, beliefs and rules that guide evaluation practice and give meaning to concepts, practices, principles, norms, ethics, values and artefacts associated with evaluation (Højlund, 2014). As a consequence, evaluation processes and practices are largely informed by routines. Routines are informed by a shared understanding of legitimate action. In other words, institutions ensure that methods, skills, norms and processes align with and perpetuate the tradition of the modernist evaluation imaginary (Dahler-Larsen, 2012), which is maintained and enforced by the historical and cultural characteristics of European environmental policy 4 (i.e. strong legal roots, sector-focused). Turnpenny et al. (2008) illustrated how formal rules ‘instruct’ the scope and priorities of national-level policy assessments, including, for example, specific guidelines for how evaluative data should be collected and used in organizational and policy contexts. Informal rules, such as close cooperation between ministries and research agencies in the environmental field, enforce the continuance of established practices – ensuring that evaluation practices do not deviate too much from their functional scope (Turnpenny et al., 2008). As a consequence, evaluation practitioners are captured in a ‘competency trap’ – a self-reinforcing process of capacity-building for technical, indicator-based assessment approaches (Nykvist and Nilsson, 2009).
Modernist institutions are enmeshed in three pillars of organizational life: in the values guiding evaluation research (normative pillar), in evaluation–policy arrangements (regulatory pillar) and in evaluation approaches (cognitive pillar). It is through the mutual reinforcement of regulatory, normative and cognitive pillars that the hegemony of the modernist evaluation imaginary in environmental policy evaluation can be explained. From an institutional perspective, the reflexive evaluation imaginary may gain influence in environmental policy evaluation practice via diffusion of standard rules and structures. Some signs in this direction are formal policy integration efforts led by the sustainability discourse (Turnpenny et al., 2008) and the proliferation in the evaluation field of guidance and tools to engage with multiple actors (European Commission, 2001, 2009). Nevertheless, when it comes to institutionalization, the reflexive ideals seem to largely remain at the level of rhetoric. Familiar modernist concepts, goals and instruments that for decades have dominated policy evaluation in environmental areas such as energy, transport, agriculture and housing persist (Nilsson et al., 2008; Van der Knaap, 1995; Voss et al., 2006). An institutional perspective leads us to conclude that modernist institutions have shaped practices in the field of environmental policy evaluation that are proving resilient to change.

A practical view on organizational life, using co-production as an interpretive device, allows us to unravel the assumed stability of modernist institutions, and explore how institutions, identities and imaginaries are mutually constructed in local practices. Local practices are characterized by bounded instability, i.e. novelty does emerge, but with a sense of continuity with earlier institutional innovations (Pelling et al., 2008). Alternative codes of meaning are continuously being shaped, interpreted and created. In this way, diversity is created in practice, leading to contestation over which practices are appropriate. Such processes largely occur locally, without a demand for, or intention of, total, systemic change. Actors in and around the organization may begin to challenge promises and values in their local process that are inconsistent with statements, norms and values in the organization or in society at large. On the one hand, these inconsistencies may be experienced as tensions that result from the need to select or justify relevant approaches to work, while evaluations are often performed in an intuitive, unreflective and routinized way (Dahler-Larsen, 2012). On the other hand, the inconsistencies resulting from the co-existence of evaluation imaginaries in the evaluation process may also create more freedom for practitioners, who can pick and choose among different approaches and methods. In this process, elements of alternative evaluation imaginaries may be mobilized and novel evaluation approaches may be created, while traditional ones are contested even though still in place. This means, for instance, that indicators to assess policy performance (characteristic of the modernist evaluation imaginary, see Table 1) may trigger learning when a policy deficit or progress, once revealed, is put in the broader systemic perspective of the societal dilemmas the policy aims to address. This, of course, is a characteristic of the reflexive evaluation imaginary.
In such a case, a technical evaluation tool meets a learning-oriented evaluation purpose, normally associated with the more deliberative approaches (Owens et al., 2004). The opposite is also true. Participatory policy analysis does not always result in mutual learning, for instance when participation is instrumentally used by experts to improve impact or public support rather than as a tool for opening up the evaluation process to alternative views and knowledges, a phenomenon described as ‘technocracy of participation’ (Chilvers, 2008).

Inspired by the notion of co-production we hypothesize, therefore, that the co-existence of modernist and reflexive evaluation imaginaries in today’s societies has brought inconsistencies in evaluation praxis. Inconsistencies are revealed when elements of the modernist and reflexive evaluation imaginaries are mobilized simultaneously. As a consequence, distinctions between intentions, approaches, and outcomes of the evaluation dissolve. In our empirical analysis, we examine how these inconsistencies are perceived and acted upon by evaluation practitioners.

The case of the Assessment of the Human Environment study and research design

On 24 September 2012 the PBL Netherlands Environmental Assessment Agency (Planbureau voor de Leefomgeving – PBL) published its Assessment of the Human Environment 2012 report (PBL Netherlands Environmental Assessment Agency, 2012). It covers the policy domains of spatial planning, physical environment and nature along six themes: climate and energy, sustainable food, landscape and nature, water, mobility, and environmental law and urban planning. An Assessment of the Human Environment report has been produced biennially since 2010 and, before that, 15 times on an annual basis in the environment and nature domain, following statutory regulations. Its original objective is to offer the Dutch government and parliament support for policy prioritization and budget allocation based on insights about anticipated policy performance. Over the years, the assessment study has faced requests to generate more actionable, reflective and solution-oriented assessment knowledge (Maas et al., 2012). The changes within the context of this particular study relate to organizational changes at PBL level. PBL is one of the government-funded Dutch planning bureaus. 5 The PBL advises the Dutch government in policy areas of nature, spatial planning and the environment, producing independent 6 policy assessment studies. Triggered by a credibility scandal in 1999, the PBL unwittingly embarked on a transition from a technocratic mode to a more reflexive mode of advising; yet in so doing it found itself confronted with a paradoxical situation. The PBL has been attempting to innovate its practices to become more reflexive and interactive, yet it cannot ‘escape’ the modernist assumptions that underpin its practices: given the institutionalized role of the Netherlands Environmental Assessment Agency at the Dutch science/policy interface and regular reorganizations (the latest due to a merger), the modest progress made in the direction of a PNS [post-normal science 7 ] strategy should be considered a substantial result. It is not clear how much further the agency could go even, without losing some of its credibility in the policy domain (based on the image of ‘normal science’). (Petersen et al., 2011)

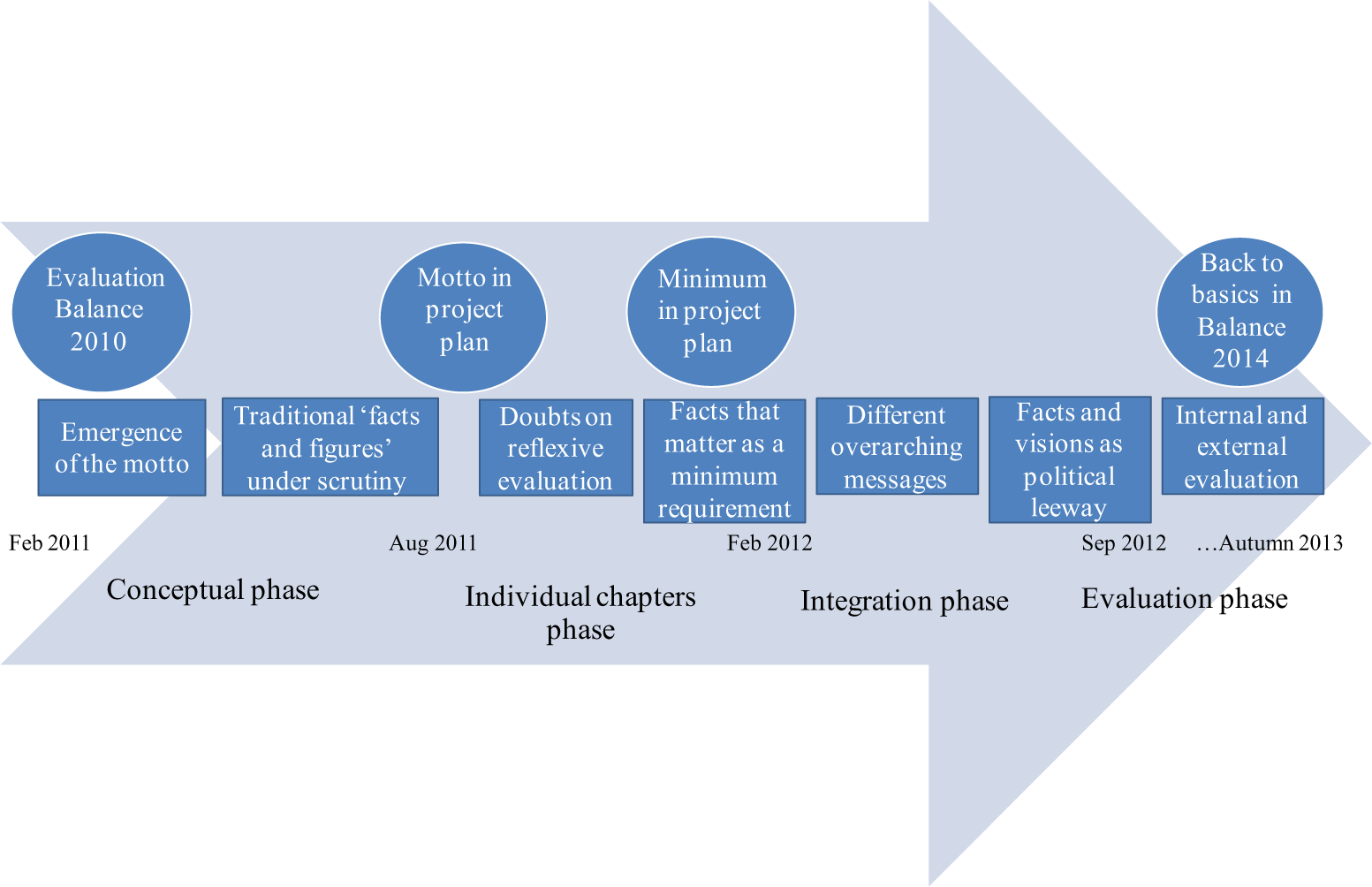

This article zooms in on the evaluation process of the 17th Assessment of the Human Environment study. We use this as a paradigmatic case (Flyvbjerg, 2006) in the hope of learning how inconsistencies are perceived and acted upon by evaluation practitioners who operate under co-existing evaluation imaginaries. Our analytical perspective is informed by interpretive and naturalistic inquiry (Lincoln and Guba, 1985). The basis of our study is the varied and multiple meanings attributed by practitioners and their peers 8 to what evaluation is and should do, as well as related interactions that occur (Creswell, 2003). We examine crucial episodes and decisive moments during the evaluation process in the period from February 2011 to October 2013. These highlight what the evaluation practitioners, in interaction among themselves and with their peers, think needs to be done to secure the legitimacy of the evaluation process and its outcomes. We conducted qualitative content analysis of email exchanges, meeting notes and discussion memos produced by the project team during this period. This enabled us to identify (see Table 1) how elements of the modernist and reflexive evaluation imaginaries were mobilized (first-order analysis) and how inconsistencies emerged and were experienced as tensions or opportunities (second-order analysis). In addition, we draw upon participatory observation conducted by the first author of this article (EK). EK observed the process while participating as an embedded researcher and as a full member of the project team responsible for methodology support. Experience and proximity to the studied reality are at the very heart of case study research (Flyvbjerg, 2006) and offer insight into the contingent and partial processes of organizational change and innovation (Pallett and Chilvers, 2014). Embedded research blurs the distinction between analysis, practice and experience.
Intersubjectivity is therefore an important quality of interpretive research since people’s actions and events are likely to be viewed differently and will have different connotations depending on point of reference (Creswell, 2003). We ensured the intersubjectivity of our interpretations in dialogue among ourselves and with project members during the reconstruction of the evaluation process. The reconstruction of the ‘Assessment of the Human Environment 2012’ process described in the next sections is split into four phases that highlight key moments and episodes in the project (see Figure 1):

the conceptual phase (February–August 2011): the project team set the ambition for the study;

the ‘individual chapters’ phase (August 2011–February 2012): the project team conducted thematic policy assessments;

the integration phase (February 2012–September 2012): the project team formulated ‘overall’ policy messages;

the evaluation phase (September 2012–October 2013): the project team and internal peers evaluated the project.

Figure 1. The ‘Assessment of the Human Environment 2012’ process.

How evaluation practitioners deal with co-existing evaluation imaginaries

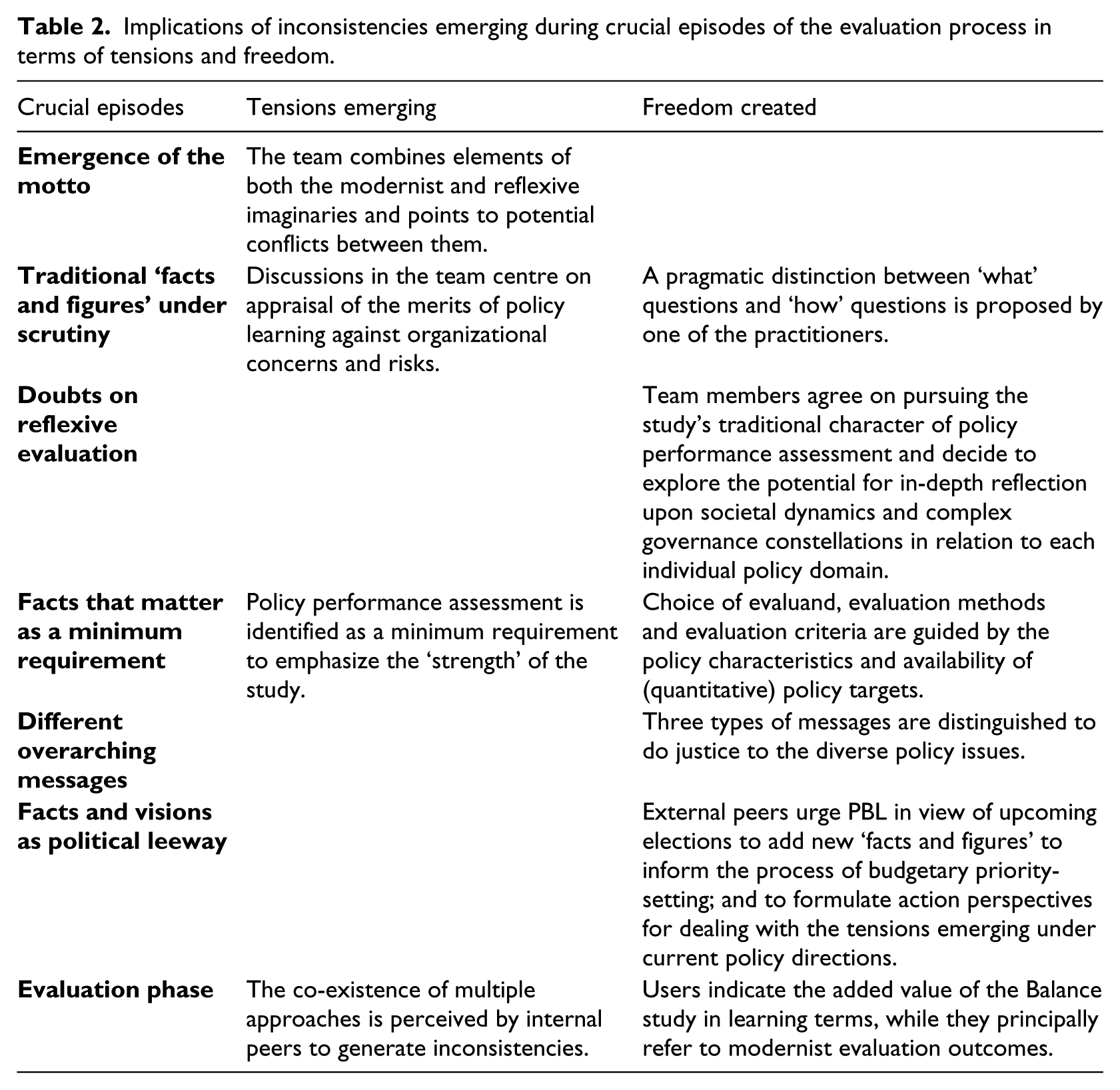

Figure 1 presents the timeline of the four phases of the project, together with crucial episodes (in squares) and decisive moments (in circles) in the evaluation process mentioned in the analysis below. Table 2 summarizes our analysis of inconsistencies emerging in terms of tensions or freedoms experienced.

Table 2. Implications of inconsistencies emerging during crucial episodes of the evaluation process in terms of tensions and freedom.

Conceptual phase

In February 2011 the PBL management team assigned a team of five employees the task of developing a strategy for an innovative design of the Assessment of the Human Environment 2012 study. This task was motivated by an internal evaluation study addressing the policy relevance and quality of the 2010 study: How useful is this ‘Balance’ 9 for policy-makers? The environmental balance, the nature balance and the spatial balance (i.e. the monitor spatial planning) are integrated into one product. How recognisable are these domains for the policy-makers in the diverse government departments? Are the conclusions and messages in the ‘Balance’ due to its high integration level not too general or abstractly formulated? Which policy-maker recognises him/herself in one of the messages? (PBL Netherlands Environmental Assessment Agency, 2010)

The emergence of the motto ‘moving from policy performance to policy learning’

With the aim of improving the study’s policy relevance and quality, the team reflected on the ambitions and purpose of the 2012 assessment study. After intense deliberations, they made three suggestions to the PBL management board. Their first reflects on the evaluation purpose: ‘To make more out of the Balance study than “traffic lights” 10 conclusions, we have to obtain insight into the systemic attributes of policy issues, which would allow us to identify new and realistic action perspectives.’ The second suggestion concerns the evaluation design, pointing to the need for increased interaction with target groups in order: ‘to obtain insight into the questions and needs of our target groups and to use their knowledge during the evaluation process’. The third suggestion addresses the nature of the evaluand, acknowledging the complexity of policy issues, ‘using policy evaluation methods for the analysis of networked and multi-actor governance settings’ (excerpts from internal memo to PBL management board meeting, March 2011).

While the team characterized evaluation purpose, evaluand and design following the reflexive evaluation imaginary (see Table 1), they also mobilized elements of the modernist evaluation imaginary when discussing organizational risks and barriers. They pointed to a potential conflict between deliberation about policy complexities and PBL’s assessment mandate of providing factual information about policy performance. Another risk was identified with respect to PBL’s independent position in interaction with policy-makers, stressing the need for clear roles and responsibilities to safeguard independence. Lack of capacity and skills on how to conduct governance analysis and participatory evaluation was also identified as an issue of concern. These articulated risks indicate how PBL’s evaluation logic is firmly grounded in regulative, normative and cognitive institutions, supporting the persistence of a modernist evaluation imaginary.

Yet, to improve the usability and quality of the study, innovative ambitions were articulated in a motto for the study: ‘moving from policy performance to policy learning’. Communicating this motto to strategic level policy-makers and internal peers facilitated external and internal commitment to a reflexive agenda. In particular, the phrase going beyond ‘facts and figures’ served to legitimate the newly defined reflexive orientation of the evaluation study.

Traditional ‘facts and figures’ under scrutiny

In order to enact the motto: ‘moving from policy performance to policy learning’, the team started developing an analytical and methodological framework. Suitable methods were obtained from literature reviews and expert consultations with policy scientists. They intended to design the evaluation in a reflexive manner in order to improve interaction with policy clients and to enable analysis of complex multi-governance constellations. The need for participatory design and governance analysis was captured within the motto ‘moving from policy performance to policy learning’. At the same time, concerns and risks were conveyed illustrating how underlying assumptions and conditions were still largely grounded in presumptions of rationality, control and predictability, reflecting values underpinning the modernist evaluation imaginary. Discussions in the team centred for example on appraisal of the merits of policy learning against concerns and risks concerning objectivity: what is the validity of ‘policy insights’ as a source of knowledge? Are actors’ perspectives qualified? What is the risk of losing control when collaborating with policy-makers?

A pragmatic distinction between ‘what’ questions (e.g. What is the quality of the living environment? What is the attributed policy contribution to quality improvement? What is the distance-to-target? What are the trade-offs across policy domains?) and ‘how’ questions (e.g. How are policies implemented? How do multi-actor dynamics affect policy performance? How can the performance of policy be improved? How are trade-offs justified?) was proposed by one of the practitioners. This pragmatic approach was later referred to as the systems approach. This approach builds on the PBL assessment tradition of systems modelling in the physical and ecological domains, but ‘adds’ a social systems perspective to it. The systems approach was well-received by the other team members, since it offered a tailored guidance to accommodate different types of evaluands, and to sustain both the study’s statutory role of assessing policy performance (addressing ‘what’ questions) and the policy learning ambition of explaining policy performance in terms of multi-actor dynamics and path-dependency in policy-making (addressing ‘how’ questions).

In sum, motivated by the ambition to ensure the study’s legitimacy in view of usability and quality concerns about the previous study, the team formulated the motto ‘moving from policy performance to policy learning’, and accordingly mobilized the reflexive evaluation imaginary. This turned out to be inconsistent with dominant organizational beliefs in rationality and control reflecting the high level of institutionalization of the modernist evaluation imaginary in the PBL setting. The tension between both logics became explicit in discussions over the statutory role and independent (i.e. distanced, objective) position of PBL. A systems approach served to accommodate the inconsistency between modernist and reflexive logics, allowing more freedom for researchers to choose the evaluand (what or how question) they considered appropriate.

Individual chapters phase

In August 2011 a new research team was put together to integrate the ideas developed in the conceptual phase into thematic policy assessment studies. Four out of five team members from the conceptual phase (including the first author of this article, EK) and five new members started to interpret and process the innovative ambitions of the assessment study. Doubts were displayed: There is a certain danger related to ‘we have conceptually elaborated the new approach, so implementation has to take place accordingly’. While nobody really seems to believe in it or to realize what it is actually about, we all act as if we believe it would work. (Excerpt from email exchange within the project team, September 2011)

Doubts on reflexive evaluation

While the reflexive aspirations had proven rhetorically powerful, conversations about the need for methodology support to implement reflexive approaches (i.e. participatory design, network governance analysis) highlighted many doubts, e.g. about the need for a participatory design, but also about the move towards a learning-oriented evaluation, in view of PBL’s mandate. Team members agreed to pursue the study’s traditional character of policy performance assessment and to explore the potential for in-depth reflection upon societal dynamics and complex governance constellations in relation to each individual policy domain.

When the motto ‘moving from policy performance to policy learning’ was discussed with policy-makers in the Ministry of Infrastructure and the Environment – the principal ‘client’ of the evaluation study – similar doubts were raised about the legitimacy of this move. The reflexive evaluation ambition was considered useful in an abstract sense. Who would disagree with improved usability and more realistic action perspectives? At the same time, the ‘change of direction’ was questioned in view of PBL’s evaluation mandate. The policy-makers emphasized PBL’s capacities and strong position in providing ‘hard’ facts and figures. Moreover, they pointed to the danger of weakening the division of responsibility between PBL and their own work. They considered themselves to be in charge of policy interpretations and the formulation of action perspectives, while PBL’s role – in their opinion – was to remain ‘neutral’ towards policy developments. This response reflects the institutionalization of linear knowledge–policy arrangements under a modernist evaluation imaginary.

‘Facts that matter’ as a minimum requirement

Halfway through the project, the traditional policy performance assessment approach was explicitly identified as a minimum requirement, and reformulated as a ‘facts that matter’ approach:

Information on policy progress put in the perspective of persistent dilemmas in the broader policy system and political context allows for reflection on complex policy arrangements (final project plan, December 2011).

The reflexive aspiration of policy learning could be attended to in this way, while the participatory aspirations were reduced to a set of workshops with policy-makers, instead of the initially envisaged joint fact-finding trajectory with diverse social actors. This tendency to downplay participatory aspirations was guided by the argument that a ‘facts that matter’ orientation did not require full public participation, other than in framing and aligning the choices made in the assessment process with policy needs in review and consultation meetings.

Although every team member responsible for a chapter initially set out to express policy performance in ‘facts that matter’, assessment approaches soon started to deviate in each chapter. Choice of evaluand, evaluation methods and evaluation criteria were principally guided by the characteristics of the policy field such as the level of consensus on policy goals and availability of (quantitative) policy targets, but also by disciplinary preferences, sectorial interests, policy needs, personal motivations and capacities and practical considerations such as the availability of data. While several chapters principally adhered to a technical-causal model for the assessment of target achievement (addressing ‘what’ questions), other chapters – completely or partially – conducted governance analysis to explore policy dilemmas, addressing the multiple social relations across policy actors and identifying tensions and windows of opportunity (addressing ‘how’ questions). Moreover, reflexive evaluation approaches were considered more appropriate for ‘unstructured’ policy topics in agenda-setting stages than for ‘structured’ policy topics in their implementation stages. While the sustainable food chapter and the mobility chapter explicitly reflected on current policy framings to raise awareness and influence agenda setting, the climate and energy and water chapter hardly questioned policy frames, and focused on impact assessments to identify trade-offs emerging during policy implementation.

In summary, this phase reflects how various combinations of evaluation approaches appeared in individual chapters. The combinations were shaped by the characteristics of the policy field and by individual aspirations and capacities, while all team members strove to surpass ‘traditional’ performance assessments with ‘facts that matter’, accommodating a systemic approach to the policy field.

Integration phase

From February 2012 onwards the team worked intensively on overarching policy messages and recommendations. As the overall outcome of the assessment study, the findings from the various chapters had to be integrated.

Different overarching messages

Attempts to formulate policy messages were initially oriented towards harmonizing and structuring the content of individual chapters. These attempts turned out to leave limited room for the characteristics of the diverse policy issues. Instead of using a generic framework, three types of messages were subsequently distinguished:

In case of concrete, measurable targets and a considerable level of acceptance of the target and unambiguous knowledge of the system, it is possible and relevant to assess effectiveness (to what extent does policy contribute to target achievement?) and efficiency (against which efforts/costs?).

When targets (policy ambitions) are not defined as ‘SMART’ it is relevant to evaluate the policy direction/strategy, in terms of its potential trade-offs and opportunities.

In addition, the participatory, responsive and transparent character of policy can be assessed if relevant for explaining the performance of policy with respect to the role of policy actors such as local governments. (Excerpt from internal meeting notes, February 2012)

Facts and visions as political leeway

On 23 April 2012 the Dutch Cabinet resigned due to a political conflict over budgetary changes. The implications for the assessment team were considerable: the visionary and strategic policy documents that had served as reference and had inspired the formulation of policy messages turned out to be no longer politically relevant. At the same time, strategic level policy-makers, acting as external peers in a review meeting, suggested that PBL, in view of upcoming elections, add new ‘facts and figures’ to inform the process of budgetary priority setting (e.g. with respect to CO2 emission targets achievement by 2020). PBL was also encouraged to formulate action perspectives for dealing with the tensions emerging in current policy directions (an example of such an action perspective is: ‘the challenge for the upcoming period is to rearrange the systems of production and consumption in such way as to meet both societal and economic needs with a more efficient approach to natural resources and a minimization of harmful substances. In some instances policy reinforcement may suffice; in other cases a more fundamental change strategy is necessary’ (PBL Netherlands Environmental Assessment Agency, 2012)):

Tensions are of interest and can be addressed more explicitly. Tensions can play a role in political debate. There is no need for ready-made policy solutions, as this is the responsibility of policy-makers. Anyhow, better action perspectives are needed. What are the right choices to make? Not solely at the level of facts and figures, but in terms of: in this or that way you can handle these tensions. (Excerpt from meeting notes, external supervisory meeting, 24 May 2012)

In sum, at this stage, the project team identified three types of overarching messages to do justice to the various types of policy messages that emerged from the individual chapters’ assessments. We see how political dynamics offer room for reflexivity in the integration phase, as policy-makers point out the need for reconsidering policy objectives and strategies. Simultaneously they indicate the need for traditional facts and figures that provide them with ‘hard’ evidence, so they can position themselves and (re)gain political control.

Evaluation phase

On 24 September 2012 the Assessment of the Human Environment report was presented to the Minister of Infrastructure and the Environment at a dissemination event with policy-makers, PBL researchers and news journalists.

Although different categories of policy messages had been distinguished to facilitate readability, the report was perceived to contain complex policy messages:

While one section discusses the effectiveness of current policy, building on performance assessment outcomes, the next section starts questioning the objectives underpinning these policies by reflecting upon these policies from a multi-actor perspective. (Excerpt from internal memo, May 2012)

On the other hand, the multiplicity of messages was also appreciated by user groups – including politicians. In their view, evaluative knowledge based on facts and figures, supplemented with policy analysis of networked governance, offered a more complete picture of the system under study. They appreciated the action perspectives for offering rich suggestions, addressing various actors and issues within the system. The external evaluation with user groups reflected three types of functions: a knowledge function, a communication function and a political function, which are illustrated with three fragments from the evaluation report of the 2012 study (final evaluation report, September 2013):

Knowledge: ‘In policy the numbers are often forgotten; this [study] allows us to demonstrate how policy is performing (policy-maker)’. For example, the Balance study points to overall national-level improvement of air quality, while it simultaneously decreases in local urban settings.

Communication: The Balance study allows for shared understanding of the numbers, for example about emissions, as it discusses their meaning and how to interpret them.

Political: A member of the Dutch Parliament makes use of the Balance study in budgetary negotiations to convey political pressure: ‘The Balance study identifies how nature develops in our country and whether this development runs into the right direction.’ (Italics added)

Users tend to indicate the added value of the Balance study in learning terms, while they principally refer to modernist evaluation outcomes. In the first excerpt, a policy trade-off is addressed. In the second excerpt, the user refers to the added value of the meaning of numbers. In the last excerpt, the study enables reflection on the direction of nature policy. These findings provide empirical support for the suggestion made by previous scholars that modernist evaluation approaches can also facilitate learning and reflexivity (Owens et al., 2004).

The evaluation report mentioned how the role of the study seemed to be increasingly shifting towards an agenda-setting function (see also Maas et al., 2012) and it suggested further developing reflexive evaluation approaches.

As for the next project – the ‘Assessment of the Human Environment 2014’ (PBL Netherlands Environmental Assessment Agency, 2014) – it was decided that the learning aspirations had to remain intact, using the systems approach as guidance for the thematic assessments. At the same time, the legitimate role of the Assessment of the Human Environment was acknowledged to be grounded in its ‘facts and figures’ and accordingly the PBL management board decided to stress the traditional assessment function with a ‘back-to-basics’ motto, conforming to the modernist evaluation imaginary.

In summary, this case illustrated the interplay between policy and political dynamics, innovative aspirations and legitimacy concerns in view of the formal mandate and position of the study. Nonetheless, the team managed to develop an additional role in line with a more agenda-setting orientation, represented by the ‘facts that matter’ and the action perspectives. Thus, both imaginaries were mobilized interchangeably.

Discussion and conclusions

In this article we explored how evaluation practitioners attempted to innovate a prominent Dutch evaluation study, while they also attempted to remain faithful to traditional attributes of preceding studies. We now return to our research question: How in their everyday work do evaluation practitioners address the multitude of evaluation approaches, given diverse societal expectations of evaluation?

Our case analysis revealed that, at times, practitioners and their internal and external peers alike mobilized the modernist and the reflexive evaluation imaginaries interchangeably when justifying their work. We identified the following interplay between institutions and local dynamics in our empirical section:

institutionalized rules (regulatory pillar), beliefs (normative pillar) and practices (cognitive pillar) ensure connectivity to the modernist evaluation traditions;

innovative aspirations to improve the usability and quality of the study bring reflexive ideals (normative change) into the evaluation process; and

the characteristics of policy issues and the political situation trigger modernist or reflexive activities depending on the case.

In the first phase, due to dominant institutionalized views and practices, the initially explored reflexive approaches were partly discarded, as users and practitioners questioned the need for change. Yet, in the individual chapters’ phase, reflexive evaluation approaches were pragmatically aligned with characteristics of the policy field, disciplinary preferences, sectorial interests, policy needs, personal motivations and capacities and practical considerations such as the availability of data. In the integration phase we showed how the resignation of the Dutch government and the temporary political coalition triggered policy-makers, involved as external peer-reviewers, to demand more explicit acknowledgement of both facts and visions in environmental policy evaluation, thus combining elements of both modernist and reflexive thought. The evaluation phase triggered discussions over the legitimate role of the study, revealing the search for ways of combining modernist (back-to-basics) and reflexive (governance analysis; action perspectives) elements.

The reconstruction of this local practice reveals inconsistencies. Tensions emerged from the co-existence of modernist and reflexive imaginaries, articulated as different perceptions about the study’s mandate, internal aspirations and capacities for innovation in view of external conditions and user expectations. The innovative ambitions conflicted with the ritual of evaluation in an institutionalized setting. This is consistent with the observation that ‘evaluation processes in organizations are sometimes inconsistent, disconnected, ritualistic, and hypocritical’ (Dahler-Larsen, 2012: 226). Institutionalized expectations and appeals for innovation were aligned with particular policy characteristics and with the political situation, creating space for different evaluation approaches to be used interchangeably. We found no single, coherent ‘evaluation approach’; instead, a multitude of approaches and practices became apparent in the different subprojects. We illustrated how innovation in assessment approaches was triggered by practitioners themselves. As a consequence, practitioners experienced more freedom to tailor evaluation approaches to particular policy questions.

The ad hoc and patchwork evaluation style of our case illustrates how inconsistencies experienced due to the co-existence of the evaluation imaginaries were accommodated: a decoupling among intentions, approaches and outcomes allowed innovation to occur locally, while at the same time conforming to traditional values. Two examples from our case illustrate this: the emergence of the ‘facts that matter’ approach, and the inclination of users to indicate the added value of the ‘Balance study’ in learning terms while principally referring to modernist evaluation outcomes.

What does this example of co-production in evaluation praxis have to offer policy evaluation practitioners? We showed how practitioners and their peers, consciously or unconsciously, draw upon diverse societal views on what evaluation ‘is’ and ‘should be’. Such views may not be coherent, consistent, or even articulated. Awareness and articulation of societal expectations are indispensable, considering the complex, multi-actor character of present-day governance processes, which policy evaluations have to accommodate and to which they have to contribute. Evaluation practitioners need to attend to organizational aspects of their work by addressing internal perspectives on evaluation, institutionalized interests and political and cultural values in the environment (Stirling, 2006), and consider how they mutually affect evaluations.

Finally, in terms of the theoretical debate in policy evaluation, we have suggested that the current co-existence of evaluation imaginaries has contributed to inconsistencies in the evaluation process, creating by turns tensions and more freedom for evaluation practice. There is a need for further insight into how to accommodate the potential inconsistencies that result from the hybridization of methods and approaches. Our suggestion for evaluation theory is, therefore, to further explore how decoupling of approaches, methods, intentions and outcomes enables practitioners to deal with experienced inconsistencies. A focus on the political and strategic act that decoupling involves seems a fruitful way to explore how modernist and reflexive understandings of ‘what evaluation is’ and ‘what evaluation should do’ can be combined.

Acknowledgements

I dedicate this article to Eleftheria Vasileiadou who sadly passed away earlier this year. The writing of this article has been made possible by the Open Assessment research programme of the PBL Netherlands Environmental Assessment Agency. Thanks are due to the research group Science and Values in Environmental Governance organized by the VU University Amsterdam and the Eindhoven University of Technology and to Willemijn Tuinstra, Ton Dassen, Guus de Hollander and Ed Dammers for challenging comments and exchange of ideas on an earlier version of this article.

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors. EK received time to work on this research while being employed by the PBL Netherlands Environmental Assessment Agency.