Abstract

This study aimed to compare the effects of game-based approaches (GBAs) and traditional skill approaches on decision-making, knowledge and motor skill in physical education students and athletes. A systematic review and meta-analysis of experimental studies available before October 2023 was conducted. The initial search yielded 8431 articles, with 28 articles (n = 1600) meeting the inclusion criteria. Studies were analyzed using three-level random-effects models with robust variance estimation. Outcomes were computed as raw mean differences and Hedges’s g effect sizes. Results indicate that GBAs have a positive but heterogeneous effect on decision-making in game situations (ES = 11.41%; 95% CI [4.39, 18.43]) and motor skill in skill tests (g = 0.36; 95% CI [0.14, 0.57]). GBAs did not have a statistically significant effect on knowledge (g = 0.37; 95% CI [−0.12, 0.86]) or motor skill in game situations (ES = 1.13%; 95% CI [−2.43, 4.68]). Meta-regression analyses revealed that the experience of the interventionist, the quality of the studies, and the comparison condition significantly influence the impact of GBAs on motor skill tests. More detailed and transparent reporting of trials would benefit the field.

Background

A key question in physical education (PE) and sports coaching centers on identifying the most effective instructional methods to achieve desired learning outcomes (Metzler, 2011). While curricular standards can provide broad goals for learning (Society for Health and Physical Educators, n.d.; Department for Education, 2013), teachers and coaches need clear strategies to maximize student and athlete development. A growing body of experimental research compares the effect of different teaching and coaching approaches on student and club athlete learning (e.g. Batez et al., 2021; Liu et al., 2020). However, individual studies can yield mixed results. Therefore, it is necessary to synthesize this research and provide clearer insights into the relative effectiveness of different instructional methodologies through a systematic review and a meta-analysis.

In this article, we focus on two extensively studied instructional approaches, the traditional skill approach (TSA) and the game-based approach (GBA). The theoretical assumptions, pedagogical practices, and desired outcomes vary between the two approaches. The TSA, also commonly referred to as the direct instruction model (Engelmann, 1980; Rosenshine, 1976), is based on behaviorist learning theory which assumes student behaviors can be modified according to environmental stimuli, such as positive and negative reinforcement (Groom et al., 2016). In this approach, students/athletes spend a large portion of the lesson/session practicing technical motor skills (throwing, kicking) outside of the game context (individually or in pairs). The teacher breaks a motor skill down into small learning steps and structured tasks (Metzler, 2011), and uses modeling and feedback to clarify techniques and reinforce or inhibit student/athlete behaviors. As a practical example, to ensure proper serving technique in volleyball, the teacher can break the motor skill of the serve into a series of small steps (such as tossing the ball up) that students/athletes must master before pursuing the full skill in the context of a game.

The TSA prioritizes the psychomotor domain first (motor skill) and the cognitive domain second (decision-making, knowledge). Proponents of a TSA argue that learners should ideally experience a high number of repetitions and master the proper technique (psychomotor domain) before moving into a game-like context that contains added stressors, such as the opponent (Blomqvist et al., 2001; Cope and Cushion, 2020; Oslin and Mitchell, 2006). Critics of a TSA claim the approach removes important features inherent to games, such as tactical decision-making and problem-solving, and thus results in poor transfer of motor skills and overall performance between different games and less than optimal outcomes for young people (Harvey and Jarrett, 2014; Robles et al., 2020; Tan et al., 2012).

An alternative to the TSA is the GBA. A GBA refers to “the learner-centered teaching and coaching practice in which the modified games set the base and framework for developing thoughtful, creative, intelligent, and skillful players” (Teaching Games for Understanding AIESEP Special Interest Group, 2021). A GBA is known by different titles, including Teaching Games for Understanding (TGfU; Bunker and Thorpe, 1982), Tactical Games Model (Griffin et al., 1997), Game Sense (Light, 2006), and Invasion Games Competence Model (Musch et al., 2002). Despite variations in terminology, GBAs are grounded in constructivist principles that emphasize continuous learning through individual experiences and environmental interactions (Light, 2008; Cushion, 2013). Importantly, a key aspect of GBAs is the use of structured lessons delivered by trained teachers/coaches, which serve to complement experiential learning. Moreover, in contrast to a TSA, a GBA maintains essential elements of games (e.g. opponents and rules) and positions the student, rather than the instructor, at the core of the learning experience (Giblin et al., 2014; Harvey and Jarrett, 2014).

In a GBA, students spend the majority of the lesson competing in modified games or sports. The teacher/coach supports student learning through guided inquiry that aims to foster tactical awareness and decision-making (Harvey et al., 2018; Robles et al., 2020). That said, this approach does not neglect the teaching of motor skills and technique, but rather teaches technique within tactical game scenarios. For example, a student competing in a two versus two soccer game may elect to pass the ball with the outside of their foot given the positioning of defenders and their place on the field. A GBA prioritizes the cognitive domain first (decision-making, knowledge), and the psychomotor domain second (motor skill). In other words, GBAs assume that game-like situations require students to identify and understand tactical problems (cognitive), and then find solutions to solve those problems (psychomotor; Metzler, 2011).

Scholars have examined the outcomes of GBAs in various individual studies, which have been summarized primarily through narrative reviews (e.g. Barba-Martín et al., 2020; Harvey and Jarrett, 2014; Kinnerk et al., 2018; Miller, 2015). These reviews have arrived at a few broad conclusions: (a) GBA research focuses mostly on motor and cognitive outcomes (Barba-Martín et al., 2020; Kinnerk et al., 2018); (b) GBAs are effective in developing decision-making in sport contexts, but not necessarily in PE contexts (Kinnerk et al., 2018; Miller, 2015); (c) GBAs are generally not effective for motor skill development (Kinnerk et al., 2018; Miller, 2015). In addition to narrative reviews, one meta-analysis has been published on GBAs. Robles and colleagues (2020) concluded that GBAs resulted in significant improvements in decision-making (Cohen's d = 0.89, 95% confidence interval [CI] [0.12, 1.65]) but not motor skill execution when compared to TSAs. While this is the only meta-analysis on the subject, it should be interpreted with caution for the following reasons. First, the initial (widely inclusive) search yielded just 51 studies, a number which is typically considerably larger (Siddaway et al., 2019). Second, of the 51 studies identified, only seven were included in the decision-making outcomes analysis, and just six in motor skill performance outcomes. These small sample sizes raise questions about the search strategy and consequently the validity of the results.

Consequently, given the large number of narrative reviews but lack of quantitative syntheses, there remains a need for a meta-analysis of GBAs that includes a comprehensive search strategy, transparent reporting, and robust statistical analyses. Moreover, on a broader level, there is a desire to understand whether GBAs in fact produce the prioritized student/athlete outcomes, given the widespread use of GBAs in PE and sport. The purpose of this study was therefore to perform a meta-analysis on the effect of GBAs on decision-making, knowledge, and motor skill in comparison to TSAs. Specifically, this study sought to address four research questions:

What is the effect of GBAs on decision-making in comparison to TSAs?

What is the effect of GBAs on knowledge in comparison to TSAs?

What is the effect of GBAs on motor skill performance in comparison to TSAs?

Are there any variables that moderate the effect of GBAs on the three listed outcomes?

Methods

Search strategy and study selection

This systematic review and multilevel random-effects meta-analysis was performed according to the Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA) statement guidelines (Supplemental file; Moher et al., 2009). A systematic literature search was conducted with no time restriction by three independent investigators (first, second, and third author) between June and August 2020. A supplementary search was performed in October 2023, just before the first completion of the manuscript, and covered only studies published after the last date of the first search. The following online databases were used to identify relevant articles: Web of Science, PsycINFO, PsycARTICLES, ERIC, SPORTDiscus, ProQuest Dissertations and Theses database, and Google Scholar. For Google Scholar, the first 500 articles retrieved were reviewed to keep to the suggested strategies (Haddaway et al., 2015).

The following keywords were used: teaching games for understanding, tactical games model, game sense, game, instruction, play practice, teaching, model, approach, centered, physical education, sport, school, experimental, trial, comparison, effect, decision-making, knowledge, skill, technique. The precise Boolean search strings are listed in the Supplemental file.

The inclusion criteria required the studies to: (a) be written in English or Spanish; (b) be a peer-reviewed research article, unpublished manuscript, conference publication, book chapter, or a doctoral dissertation; (c) have been published before October 2023; (d) have been carried out in a PE or youth sport setting using game sports (e.g. not gymnastics); (e) include a control and experimental group with pre- and post-measures; (f) include a GBA as at least one condition; (g) compare a GBA to an instructional approach aligned with TSA; (h) report sufficient data on one or more of the following outcomes: objective motor skill, objective knowledge, objective decision-making (measures transformable to a probability of making an appropriate decision). Alternatively, the required data could be supplied by the study authors or estimated based on information gleaned from other studies. Additional search strategies employed beyond the initial database search are detailed in the Supplemental file.

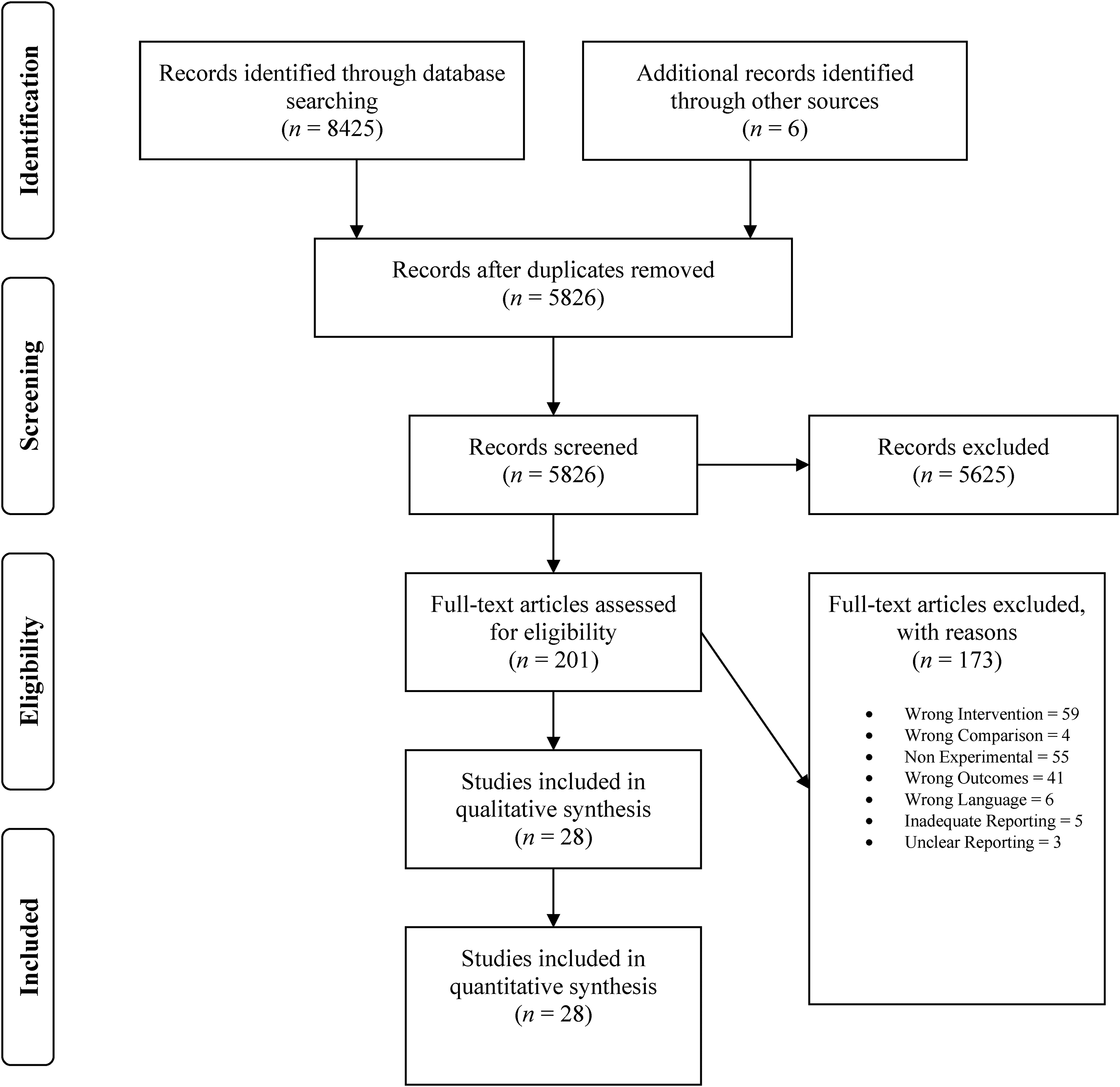

The search yielded 5826 records which were screened for eligibility. The screening identified 201 articles that could potentially meet the inclusion criteria. Three authors (first, second, and third author) read the full text of the 201 articles and independently applied the inclusion criteria to each one. The initial inter-rater agreement in applying the inclusion criteria (Cohen's unweighted kappa from R [version 4.2.2]; R Core Team, 2020) was 0.95 [0.88, 1.00], z = 13.2, p < .001 with a rough percentage agreement of 99.1%. Full agreement was reached through discussion, and 28 articles met the inclusion criteria. The 28 studies included in the current study provided 119 effect sizes and a total of 1600 participants. The process of article identification, screening, and eligibility is displayed in Figure 1.

Figure 1. Flowchart of the study selection.

Data synthesis and effect size calculation

Hedges’s g, a standardized mean change difference, was used as the effect size measure for knowledge and motor skill measured in motor skill tests (Hedges and Olkin, 1985). For decision-making and motor skill performance measured in game situations, we used raw mean change differences as they were measured with the same procedures (Bond et al., 2003). The effect sizes were calculated by subtracting the mean change in the control condition from the mean change in the intervention condition and dividing the difference by the pooled standard deviation of the pre- and post-test scores of both conditions (Hedges and Olkin, 1985; Lipsey and Wilson, 2001). The required pre-post correlations were derived from the study by Turner and Martinek (1999), which was the only study reporting them. The analyses with an alternative correlation of 0.7 did not have a substantial influence on the results (Follmann et al., 1992).
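As a rough illustration of the two effect size measures described above, the following Python sketch computes a raw mean change difference (with a sampling variance that depends on the pre-post correlation) and a standardized mean change difference with a small-sample correction. The dictionary layout, the function names, the n − 1 weighting of the pooled standard deviation, and the degrees of freedom used in the correction factor are assumptions made for illustration; the formulas actually applied in the analyses may differ in detail.

```python
import math


def change_variance(sd_pre, sd_post, r):
    """Variance of a pre-post change score, given the pre-post correlation r."""
    return sd_pre**2 + sd_post**2 - 2 * r * sd_pre * sd_post


def mean_change_difference(gba, tsa):
    """Mean change in the GBA group minus mean change in the TSA group."""
    return (gba["m_post"] - gba["m_pre"]) - (tsa["m_post"] - tsa["m_pre"])


def raw_mean_change_difference(gba, tsa, r):
    """Raw difference in mean change and its sampling variance (independent groups)."""
    var = (change_variance(gba["sd_pre"], gba["sd_post"], r) / gba["n"]
           + change_variance(tsa["sd_pre"], tsa["sd_post"], r) / tsa["n"])
    return mean_change_difference(gba, tsa), var


def standardized_mean_change_difference(gba, tsa):
    """Difference in mean change divided by a pooled SD, with a small-sample correction."""
    # Pooled SD over the pre- and post-test SDs of both groups
    # (n - 1 weights are an assumption of this sketch).
    numerator = ((gba["n"] - 1) * (gba["sd_pre"]**2 + gba["sd_post"]**2)
                 + (tsa["n"] - 1) * (tsa["sd_pre"]**2 + tsa["sd_post"]**2))
    sd_pooled = math.sqrt(numerator / (2 * (gba["n"] + tsa["n"] - 2)))
    d = mean_change_difference(gba, tsa) / sd_pooled
    # Correction factor J = 1 - 3 / (4 * df - 1); the choice of df is an assumption.
    df = gba["n"] + tsa["n"] - 2
    return (1 - 3 / (4 * df - 1)) * d


# Hypothetical summary statistics, not taken from any included study.
gba = {"n": 20, "m_pre": 50, "m_post": 66, "sd_pre": 10, "sd_post": 10}
tsa = {"n": 20, "m_pre": 50, "m_post": 55, "sd_pre": 10, "sd_post": 10}

diff, var = raw_mean_change_difference(gba, tsa, r=0.7)
g = standardized_mean_change_difference(gba, tsa)
```

Changing `r` in the call leaves the raw difference untouched and only alters its variance, which is why an alternative correlation can be used as a sensitivity check on the weighting of studies rather than on the individual raw estimates.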

For decision-making, results reported using a probability score of making an appropriate decision were included using similar approaches by Oslin and colleagues (1998; game performance assessment instrument; GPAI), French and Thomas (1987; game performance evaluation tool; GPET) or Blomqvist and colleagues (2005). Some of the studies utilizing this approach reported the odds of making a good decision (appropriate decisions divided by inappropriate decisions). These scores were transformed to probabilities before computing the effect sizes. Lastly, one study (Gray and Sproule, 2011) reported the number of appropriate and inappropriate decisions. These statistics were transformed to probabilities by dividing the number of appropriate decisions by the total number of decisions made before effect size computations.
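The two transformations described above are simple enough to sketch in a few lines of Python. The function names and the example values are hypothetical and serve only to illustrate the conversions.

```python
def odds_to_probability(odds):
    """Convert odds (appropriate / inappropriate decisions) into a probability."""
    return odds / (1.0 + odds)


def counts_to_probability(appropriate, inappropriate):
    """Convert counts of appropriate and inappropriate decisions into a probability."""
    total = appropriate + inappropriate
    if total == 0:
        raise ValueError("at least one decision is required")
    return appropriate / total


# Hypothetical values: three appropriate decisions per inappropriate one,
# and 30 appropriate versus 10 inappropriate decisions.
p_from_odds = odds_to_probability(3.0)
p_from_counts = counts_to_probability(30, 10)
```

Both calls describe the same underlying performance, so they return the same probability.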

Motor skill performance outcomes were analyzed within two distinct subgroups. The first subgroup includes scores for technical execution sourced from Blomqvist and colleagues (2005) or employing the GPAI and GPET instruments. These instruments measure the probability of correct motor skill execution during game situations. The second subgroup contains continuous scores that represent time to complete a motor skill task or successful repetitions of a motor skill (e.g. basketball dribbling). These tests focused on motor skill performance independent of gameplay. Similarly, the knowledge scores included in our meta-analysis were also continuous. These scores were obtained through paper-and-pen-type assessments, assessing various dimensions of the targeted sport and its gameplay.

Most of the studies included in the meta-analysis reported participant numbers and group means for pre- and post-scores, and their standard deviations for an experimental and a control group. However, some studies did not report this data. After unsuccessful efforts to contact the authors of the studies in question, imputation methods were used. In two studies, only the total number of participants was reported. In these cases, an even split of participants was assumed between the different conditions. A total of eight effects out of all the 119 effects analyzed were missing standard deviations (Dorak et al., 2018; Harrison et al., 2004). In the main analyses, these missing data were replaced by using standard deviations from the most similar study (Furukawa et al., 2006). Furthermore, two alternative approaches were tested in sensitivity analyses: (1) the average standard deviation from all the other effects was imputed; (2) all the effects with missing standard deviations were removed (Supplemental file Table S6). None of the applied sensitivity analysis procedures changed the magnitude of the effects in a statistically significant way for any of the outcomes. Effect sizes were adjusted as necessary to ensure that positive estimates aligned with improvements in decision-making, knowledge, and motor skill. Moreover, a positive effect size indicates that GBAs produced superior results compared to TSAs.
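The even split of participants and the two alternative treatments of missing standard deviations can be sketched as follows. This is a minimal illustration with hypothetical function names and data; the handling of odd totals is an assumption, and the main-analysis approach of borrowing a standard deviation from the most similar study is not shown because it depends on a judgment of similarity.

```python
from statistics import mean


def even_split(total_n, n_groups=2):
    """Split a reported total sample evenly across conditions.

    Any remainder goes to the first groups (an assumption of this sketch).
    """
    base, extra = divmod(total_n, n_groups)
    return [base + (1 if i < extra else 0) for i in range(n_groups)]


def impute_average_sd(sds):
    """Alternative (1): replace missing SDs (None) with the average observed SD."""
    fill = mean(s for s in sds if s is not None)
    return [fill if s is None else s for s in sds]


def drop_missing_sd(effects):
    """Alternative (2): keep only effects whose SD was reported."""
    return [e for e in effects if e["sd"] is not None]


# Hypothetical data for illustration only.
group_sizes = even_split(41)
imputed = impute_average_sd([10.0, None, 14.0])
kept = drop_missing_sd([{"id": "a", "sd": 10.0}, {"id": "b", "sd": None}])
```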

The reliability of the extracted data relating to effect size calculation was assessed with a “single rater unit” mixed effect model for intraclass correlation coefficient (ICC) (means, standard deviations, and number of participants in each group). The initial ICC was 0.96 (0.95, 0.97), p < .001. Before final effect size computation, a complete inter-rater agreement was achieved by locating and resolving dissimilarities. Processes relating to data extraction, moderator selection, study quality assessment, and statistical modeling are presented in the Supplemental file.

Results

Decision-making

For decision-making, 29 effects from 17 studies were analyzed. The observed mean change differences ranged from −17% to 59%, with 79% (23 effect sizes) of the effects being positive. The estimated mean change of game-based models on decision-making was 11.41% [4.39%, 18.43%], t = 3.45, p = .003. A 95% prediction interval for the true outcomes was −14.9% to 37.72%, and hence, although the mean effect is estimated to be positive, the true effect for some decision-making outcomes may be negative. According to the Q-test, the true outcomes appear to be heterogeneous (Q(28) = 76.27, p < .001, τ² = 5.11 (between-study heterogeneity) and τ² = 137.92 (within-study heterogeneity)) with a total I² of 59.8% (2.13% and 57.67% for between- and within-study heterogeneity, respectively). The observed effects and the mean estimate based on the multilevel random-effects model for all outcomes are shown in the Supplemental file (Figures S1 and S2).
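To show how a prediction interval relates to the reported mean, confidence interval, and variance components, the sketch below recovers the standard error from the confidence interval and then widens it by both heterogeneity components. The function name is hypothetical, and the critical value of 2.12 assumes a t-distribution with 16 degrees of freedom (17 studies minus one); the degrees of freedom for this outcome are not stated in the text, so this is an assumption.

```python
import math


def prediction_interval(mean, ci_low, ci_high, tau2_between, tau2_within, t_crit):
    """Approximate 95% prediction interval for a three-level random-effects model."""
    # Recover the standard error of the mean from the reported confidence interval.
    se = (ci_high - ci_low) / (2 * t_crit)
    # Add the uncertainty of the mean to both heterogeneity components.
    half_width = t_crit * math.sqrt(se**2 + tau2_between + tau2_within)
    return mean - half_width, mean + half_width


# Reported decision-making estimates; T_CRIT_DF16 is an assumed critical value.
T_CRIT_DF16 = 2.12
low, high = prediction_interval(11.41, 4.39, 18.43, 5.11, 137.92, T_CRIT_DF16)
```

Under these assumptions the result is close to the reported interval of −14.9% to 37.72%, with the width driven almost entirely by the within-study variance component.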

An analysis of the studentized residuals and Cook's distances indicated that one study (Dorak et al., 2018) could be considered an outlier and overly influential. The consequent sensitivity analysis indicated that by excluding this study, the mean effect was still significant, although it was reduced to 9.13% [3.89, 14.36], t = 3.72, p = .002. Neither the visual inspection of the plot nor the regression test indicated any funnel plot asymmetry (p = .431). The fail-safe analysis using the Rosenberg method (Rosenberg, 2005) indicated that 243 studies averaging null results would need to be added to the analyzed set of studies for the overall effect to be zero, p < .05.

Due to the relatively large heterogeneity and significant Q-test, the effect of the categorical moderators was analyzed with meta-regression and orthogonal contrast between the different moderator levels. For decision-making, no statistically significant differences between the moderators emerged. The results of the moderator analyses for all outcomes are displayed in the Supplemental file (Table S3).

Knowledge

Eleven effect sizes from nine studies were analyzed for knowledge. The individual standardized mean change differences ranged from −0.46 to 1.32 with 64% of the effects being positive. The estimated mean effect size was 0.37 [−0.12, 0.86] with a prediction interval of −0.90 to 1.63. Consequently, the mean effect was not significant, t(8) = 1.73, p = .122. According to the Q-test, the true outcomes seem to be heterogeneous (Q(10) = 26.47, p = .003, τ² = 0.114 (between-study heterogeneity) and τ² = 0.142 (within-study heterogeneity)) with a total I² of 61.85% (27.55% and 34.30% for between- and within-study heterogeneity, respectively).
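The split of the total I² into between- and within-study parts can be illustrated with a short sketch. It assumes that, in a three-level model, each level's share of I² is proportional to its variance component; the function name is hypothetical.

```python
def split_i2(i2_total, tau2_between, tau2_within):
    """Split a total I² (in percent) in proportion to the two variance components."""
    tau2_total = tau2_between + tau2_within
    if tau2_total == 0:
        return 0.0, 0.0
    between = i2_total * tau2_between / tau2_total
    return between, i2_total - between


# Reported knowledge estimates: total I² and the two variance components.
i2_between, i2_within = split_i2(61.85, 0.114, 0.142)
```

Under this assumption the two shares come out close to the reported 27.55% and 34.30%, with small differences attributable to rounding of the variance components.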

An analysis of the studentized residuals and Cook's distances indicated no effect or study to be overly influential or an outlier. Neither the visual inspection of the plot nor the regression test indicated any funnel plot asymmetry (p = .816) for knowledge.

Despite a small number of total effects, the effect of the categorical moderators was analyzed with meta-regression and orthogonal contrast between the different moderator levels due to the relatively large heterogeneity and significant Q-test. Only one significant difference in moderator levels was identified for knowledge, with studies employing a skill/technique control group demonstrating lower effects in comparison to those utilizing a different (“other”) control group (F(1, 7) = 15.40, p = .006).

Motor skill performance

The effects on motor skill were analyzed in two subgroups: effects on motor skill performance observed in game situations (probability of executing motor skill appropriately) and effects on motor skill performance in independent motor skill tests (continuous variables).

Motor skill performance in game situations

For the subgroup motor skill performance in game situations, 34 effect sizes from 16 studies were analyzed. For these outcomes, the observed mean change differences ranged from −31% to 53%, with 53% of the effects being positive. The estimated mean effect of game-based models on motor skill performance measured by skill observation during game situations did not differ significantly from zero with an estimated mean effect of 1.13% [−2.43, 4.68], t = 0.676, p = .51 with a 95% prediction interval of −32.13% to 34.39%.

According to the Q-test, the true outcomes appeared to be heterogeneous (Q(33) = 184.39, p < .001, τ² = 0.00 (between-study heterogeneity) and τ² = 240.69 (within-study heterogeneity)) with a total I² of 77.17% (0% and 77.17% for between- and within-study heterogeneity, respectively).

An analysis of the studentized residuals identified one study (Dorak et al., 2018) as an outlier at the study level. The consequent sensitivity analysis indicated that by excluding this study, the mean change was 0.63% [−3.73, 4.98], t(13) = 0.31, p = .76. Neither the visual inspection of the plot nor the regression test indicated any funnel plot asymmetry (p = .25).

Despite a relatively large heterogeneity and significant Q-test, the effect of the categorical moderators was not analyzed for motor skill during game situations as the I² indicated that practically all heterogeneity in the effects arose within studies.

Motor skill performance in motor skill tests

For the subgroup motor skill performance in motor skill tests, 45 effect sizes from 16 studies were analyzed. For these outcomes, the observed standardized mean change differences ranged from −1.90 to 1.72, with 73% of the effects being positive. The estimated mean effect of game-based models on motor skill performance in motor skill tests was 0.36 [0.14, 0.57], t(14) = 3.52, p = .003. A 95% prediction interval for the true outcomes was −0.40 to 1.11, which indicates that the true effect in some studies for some motor skill outcomes may be negative. According to the Q-test, the true outcomes appeared to be heterogeneous (Q(44) = 114.49, p < .001, τ² = 0.054 (between-study heterogeneity) and τ² = 0.063 (within-study heterogeneity)) with a total I² of 58.1% (27% and 31.13% for between- and within-study heterogeneity, respectively).

An analysis of the studentized residuals and Cook's distances indicated that no study was deemed overly influential or an outlier. However, one individual effect from one study (Miller et al., 2016) could be considered overly influential. The consequent sensitivity analysis indicated that by excluding the influential effect the mean effect was still significant and changed only slightly to 0.33 [0.12, 0.54], t(15) = 3.34, p = .005. All the sensitivity analyses are displayed in the Supplemental file (Table S6).

Neither the visual inspection of the plot nor the regression test indicated any funnel plot asymmetry (p = .392). The fail-safe analysis using the Rosenberg method (Rosenberg, 2005) indicated that 805 effects averaging null results would need to be added to the analyzed set of studies for the overall effect to be zero, with a target significance level of p < .05.

Due to the relatively large heterogeneity and significant Q-test, the effect of the categorical moderators was analyzed with meta-regression and orthogonal contrast between the different moderator levels. For motor skill tests, three significant differences between the moderator levels emerged. First, studies using experienced and trained teachers/coaches to carry out the game-based study module had weaker effects compared to studies using inexperienced teachers/coaches (F(1, 14) = 7.24, p = .018). Second, studies using a comparison group other than skill or technique approach had a larger effect on motor skill outcomes (F(1, 14) = 11.14, p = .005). Lastly, studies that were of the lowest quality had smaller effects compared to the average quality studies (F(1, 13) = 11.77, p = .005) and highest quality studies (F(1, 13) = 12.3, p = .004).

Discussion

This systematic review and meta-analysis summarizes the existing evidence on the effect of GBAs on student/athlete outcomes of decision-making, knowledge, and motor skill performance in comparison to TSAs. Our results show that GBAs improve participants’ ability to make appropriate decisions in games, and advance their motor skill performance tested in motor skill tests. However, the wide prediction intervals of the mean effects indicated that in some cases the GBAs can indeed have negative effects on decision-making and motor skill compared to TSAs. Our analysis also identified study design characteristics that appear to moderate the effect of GBAs on motor skill outcomes. These characteristics provide a more nuanced understanding of the benefits of GBAs in terms of motor skill performance of students/athletes.

Our study revealed that participants exposed to GBAs improved decision-making ability to a greater extent (+11% difference) than those instructed with TSAs. While both approaches enhanced decision-making, GBA participants exhibited a more substantial improvement (around 16% when compared to TSA participants (5%)). This positive effect was also found by Robles et al. (2020), who indicated that GBAs’ effect over technical approaches on decision-making is 0.89 (Cohen's d). However, because the two effect sizes were computed differently, their magnitudes cannot be compared directly. Conversely, our findings conflict with conclusions from Miller's (2015) narrative review, which suggested that GBAs produce no significant improvements in decision-making (procedural knowledge). While the results between these studies are mixed, there are several features of GBAs that aim to enhance decision-making and thus support and help explain the finding that GBAs have a positive effect on decision-making ability. First, GBA participants accrue playing experience, including ample decision-making opportunities, that mirror the decision-making outcome measurement instruments (e.g. GPAI and GPET). Furthermore, the instructional focus and aims (e.g. via questioning and planned progressions) in GBAs also prioritize cognitive understanding, recognition, and tactical awareness during gameplay. This type of instruction may contribute to improved decision-making throughout games. Therefore, GBAs possibly facilitated enhanced decision-making through a combination of context-specific motor skill development and explicit instructional strategies targeting cognitive processes.

The analysis showed that the effects on decision-making were strongly heterogeneous, especially within studies. Previous research has implied that improvements in decision-making because of GBAs are contingent on the form of questioning used (Lopez et al., 2016), the focus of practice, and the length of the learning period (Turner and Martinek, 1999). One potential explanation for the variability in the outcome of decision-making is the specific focus of the practice task/activity. Specifically, the current study included examinations which utilized mainly GPAI and GPET to assess various components of student/athlete decision-making in both defensive and offensive playing scenarios (e.g. Dorak et al., 2018), which could have been prioritized differently in the lessons/sessions. If the provided instruction, questioning techniques, drills, and games are not sufficiently aligned with the measured components, variable effects would not come as a surprise.

A closer examination of the instruments used to assess decision-making raises questions about their utility. Perhaps it would be more beneficial to assess the decisions deemed most impactful from the perspective of instruction and game performance as opposed to general components of decision-making (offense and defense). For example, in basketball, it is widely recognized that the game result is highly contingent on the four distinct factors of shooting, turnovers, rebounding, and free-throws (Oliver, 2004). Consequently, decision-making in these four categories of the game (both on offense and defense) is the most essential for performance. Besides providing more meaningful data, this type of specialized and relevant assessment criteria would arguably make the students/athletes more successful in basketball or in other sports adopting a similar strategy. Furthermore, a binary categorization of decision-making (either appropriate or inappropriate) in the context of gameplay is an oversimplification, and as such is unable to detect the nuances inherent to in-game decisions. Continuous measures, such as those suggested by Memmert and Harvey (2008), would provide a clearer picture, and it is recommended that future studies consider such an approach.

As the second outcome, we analyzed GBAs’ effect on improving participants’ knowledge about games. The analyses indicated that GBAs are no more effective than TSAs in improving knowledge. However, an exploratory analysis indicated that GBAs improve participants’ knowledge significantly—more than 1.5 standard deviations. Thus, based on the current evidence, GBAs do improve students’/athletes’ knowledge but are not more effective at doing so when compared to the other instructional models. Previous findings from narrative reviews supported these results (Miller, 2015). The characteristics of the included studies suggest that GBAs and TSAs yield similar effects within knowledge acquisition, likely due to the inclusion of knowledge components (e.g. explanations of rules and tactics) in both instructional approaches. In addition, neither the GBAs nor the TSAs aim to enhance students’ ability to accumulate and recall facts through pencil-and-paper tests. If knowledge on these types of tests was the main learning outcome, lecturing, questioning on facts, homework, and reading would arguably be the most effective learning and instructional strategies—not game or motor skill instruction.

Motor skills, the third outcome analyzed, were categorized according to measurement methods. The first subgroup included binary measures of motor skill execution during gameplay situations, while the second subgroup included continuous scores derived from independent and isolated motor skill tests. No effect of GBAs on appropriate motor skill performance in game situations in relation to the comparison models was observed. This finding corroborates previous work by Kinnerk and colleagues (2018), who found only two studies supported GBAs’ positive effect on motor skills during gameplay. It is important to note, however, that exploratory analysis in the current study indicated that GBAs improve participants’ motor skill performance significantly in game situations, on average by 10.9%. As stated above, the non-significant difference is due to the fact that TSAs also improved students’ motor skill execution, on average by 10.8%. In other words, GBAs improved motor skill execution during gameplay, but not significantly more so than TSAs. This result is supported by the recent meta-analysis (Robles et al., 2020) which also found GBAs to have no effect on motor skill in comparison to technical instruction.

The second motor skill subgroup analysis showed that GBAs improve participants’ motor skill performance in motor skill tests more than TSAs, with a small effect size. Once again, a further breakdown indicates that both models increase motor skill performance in motor skill tests, but that GBAs do so to a greater extent (pre-post SMDs: GBA 0.66, 95% CI [0.24, 1.08], p < .002; TSA 0.35, 95% CI [0.02, 0.68], p = .039). This finding is not supported by Robles et al. (2020) and is in some ways surprising. Specifically, as GBAs focus on the cognitive aspects of gameplay, it would be logical for them to improve students’ motor skill performance less than models that, at least on the surface, devote more time to technical motor skill instruction. However, it may be that modified gameplay commonly used in GBAs is quite effective in improving motor skill development. One reason behind this might be that the modified games allow for a lot of repetitions or opportunities to respond that are contextualized and varied (Chua et al., 2019). Opportunities to respond have been noted as a critical element for the enhancement of motor skills (Menzies et al., 2017). Furthermore, these modified games, when compared to prototypical technical instruction, may spark greater learner competitiveness, potentially enhancing motivation and consequently impacting the focus and intensity of students’/athletes’ engagement in motor skill practice (Cagiltay et al., 2015).

Lastly, this study examined potential moderators of the effects of GBAs on decision-making, knowledge, and motor skill. The only relevant moderator effects were found for performance in motor skill tests; for the knowledge outcomes, the smaller moderator group contained at most two studies. First, studies with experienced and/or trained GBA interventionists (teachers or coaches) produced smaller effects than studies that did not report this information or that used inexperienced interventionists. This comes as a surprise, as skilled and knowledgeable teachers and coaches should be more likely to deliver higher quality instructional interventions. Because this feature was reported inconsistently across studies, this result should be interpreted with caution. Second, studies categorized as higher in quality had larger effects than lower quality studies. Typically, lower quality studies produce larger effects in meta-analyses (Sterne et al., 2001), so the explanation of this finding is left to speculation. Third, the effect of GBAs on motor skill was significantly smaller when the comparison model emphasized motor skill or technique than when it was traditional instruction. Logically, it makes sense that a model emphasizing motor skills would develop them. However, it is interesting that GBAs produced similar results. Traditional instruction, on the other hand, seems to be less effective than GBAs at developing motor skill. The reasons for this are open to speculation, but perhaps these traditional comparison models were simply poorly planned relative to the GBAs. The clear structure, logic, and novelty of GBAs are among the reasons that GBAs may be superior cultivators of motor skill when compared to traditional instructional models (González-Cutre et al., 2016; Herman et al., 2020).

Recommendations

In general, this systematic review and meta-analysis provides evidence substantiating the benefits of GBAs over the comparison models. However, for motor skill performance assessed via motor skill tests (as opposed to during gameplay), some study characteristics were found to moderate this effect. Based on these findings and their purported rationales, we make the following recommendations for practitioners and researchers:

When aiming to cultivate decision-making in games, the evidence clearly indicates that GBAs are superior to the examined comparison models and should be implemented.

Altogether, the evidence indicates that GBAs are comparably effective at improving motor skill, even when compared to models prioritizing motor skill and technique learning, and should be employed. Avoiding GBAs in PE and sport settings on the grounds of poor student motor skill development is not justified by the evidence.

The lack of transparent and detailed reporting of intervention and comparison group procedures, as well as the lack of fidelity assessments, somewhat undermines the replicability and feasibility of the analyzed studies. Therefore, we strongly advise clear disclosure of study protocols.

Lastly, it is commonplace to assess decision-making with a binary categorization that designates a decision as proper or improper. While this strategy may be simple, and sufficient for lower levels of play, it may be overly simplistic and reduce the ability to accurately differentiate appropriate from inappropriate decisions in players possessing more motor skill and creativity. Thus, we recommend adopting assessment practices that provide a wider range of appropriateness.
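To illustrate why a two-category coding can lose information among more skilled players, the sketch below scores the same set of decisions under a binary scheme and under a graded scheme. The 0 to 3 rubric, the cutoff, the function names, and the ratings are all hypothetical and made up for illustration; they are not taken from any of the reviewed studies or from a validated instrument.

```python
from statistics import mean

# Hypothetical rubric (an assumption for illustration only):
# 0 = inappropriate, 1 = partly appropriate, 2 = appropriate, 3 = optimal.
MAX_RATING = 3


def binary_percent(ratings, cutoff=2):
    """Percent of decisions coded 'appropriate' under a two-category scheme."""
    return 100 * mean(1 if r >= cutoff else 0 for r in ratings)


def graded_percent(ratings, max_rating=MAX_RATING):
    """Mean rating expressed as a percent of the maximum possible rating."""
    return 100 * mean(ratings) / max_rating


# Made-up ratings for two skilled players observed over four decisions.
player_a = [3, 3, 2, 3]
player_b = [2, 2, 2, 2]

for name, ratings in [("Player A", player_a), ("Player B", player_b)]:
    print(name, binary_percent(ratings), round(graded_percent(ratings), 1))
```

In this made-up case both players reach the ceiling of the binary measure, whereas the graded measure still separates them, which is the kind of differentiation the recommendation is aimed at.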

Limitations

This meta-analysis and systematic review contributes uniquely to the growing evidence base on the effectiveness of GBAs in PE and youth sport. However, despite its methodological strengths, the review has limitations. First, comparing interventions and educational approaches requires a detailed description of the intervention being examined; within the examined studies, this information was often inconsistent or missing. These reporting omissions made it difficult to draw confident conclusions from the results. Second, decision-making and motor skill execution in games were assessed with a binary tool, which might not differentiate decision-making in a meaningful way. Third, measures of fidelity were either poorly reported or not reported at all. Without a measure of fidelity, it is difficult to ascertain whether, and how well, the compared instructional approaches were followed. This concern has been discussed before as a general limitation of instructional model research in PE (Fernandez-Rio and Iglesias, 2024). Lastly, the included studies represented a relatively low number of total participants (n = 1600). The relatively low number of studies limited statistical power and precluded the detection of moderator effects for knowledge and decision-making. Future studies could enhance our understanding by examining similar outcome variables across different age groups, settings, sports, and experience levels.

Conclusion

The results of this analysis indicate that GBAs generally outperform TSAs in improving student and athlete decision-making and performance in motor skill tests. Furthermore, studies comparing GBAs to “traditional” approaches displayed stronger effects on motor skill improvement than studies using “skill”- or “technique”-based models as comparators. TSAs also improve decision-making, knowledge, and motor skill, but are perhaps not as effective as GBAs for decision-making and for performance in motor skill tests. Lastly, the results and discussion highlight specific areas that need to be addressed if the quality of experimental GBA research is to improve.

Supplemental Material

Supplemental material, sj-docx-1-epe-10.1177_1356336X241245305 for The effect of game-based approaches on decision-making, knowledge, and motor skill: A systematic review and a multilevel meta-analysis by Mika Manninen, Eric Magrum, Sara Campbell and Sarahjane Belton in European Physical Education Review

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
