Abstract

This study enacted and supported a scaffolding process to improve preservice teachers’ (PSTs’) assessment literacy as they experienced school placement. It is crucial to create opportunities that enhance PSTs’ understanding of assessment literacy, helping them to reconsider conceptions previously developed as school students (socialisation experiences) and to gain an appreciation for the benefits assessment affords students in their learning. Assessment literate teachers can enact appropriate assessment practices that can improve students’ learning and the teaching-learning process while providing opportunities for students to regulate their learning. Eight physical education PSTs working with the same university supervisor took part in the study. Data were collected through individual and focus group interviews, post-seminar reflections and testimonial surveys, researcher’s field notes, and PSTs’ school placement reports. This study highlighted that supportive, practical and critical participatory approaches are crucial to encourage PSTs to question and change their assessment conceptions, and to improve their assessment literacy. Results also showed that, despite struggling to avoid practising what they experienced as school students (i.e. socialisation experiences), PSTs can alter their assessment understanding and practices to incorporate assessment for learning principles. Teacher educators are encouraged to consider how they can best acknowledge and address the pre-conceived assessment conceptions PSTs bring into these programmes.

Introduction

Education literature has shifted over the years from a focus on teachers to students and from a focus on teaching to learning (Hay et al., 2015). The discussion around teacher- and learner-centred paradigms encourages us to rethink the purpose and process of assessment, leading to an increase in research on assessment as a support for learning (AIESEP, 2020). The intention of this body of research has been to understand assessment literacy in relation to (a) teachers’ implementation of assessment practices, (b) the impact of those practices on students’ learning, (c) the inclusion of students as active regulators of their learning and (d) the consideration of assessment as a situated practice, socioculturally constructed and highly influenced by teachers’ identity (Looney et al., 2018; Pastore and Andrade, 2019).

Despite the efforts of teacher education programmes to change the ingrained conceptions preservice teachers (PSTs) bring to these programmes, research consistently shows that PSTs hold on to, or revert to, the conceptions they formed as school students (Richards et al., 2014). Some advocate for changes in the teaching of assessment if the goal is to increase PSTs’ assessment literacy (Brevik et al., 2017). Research shows that if teachers are not exposed to alternative assessment practices, they maintain a traditional orientation to assessment (DeLuca et al., 2018), and that PSTs rely on summative assessment because this is what they were exposed to as school students (Mjåtveit and Giske, 2020).

Considering PSTs’ low assessment literacy levels and struggle to not replicate what they experienced as school students, it is necessary to establish appropriate and explicit opportunities that encourage PSTs to interrogate their conceptions about, and practices of, assessment and learning. In response to this, this study enacted and supported a scaffolding process to improve assessment literacy aligned with PSTs’ school placement.

Assessment literacy

Key aspects that are regularly aligned with a teacher being assessment literate include (a) being able to select assessment methods that effectively demonstrate students’ learning, (b) reflecting on the validity, quality, purpose and use of assessment to effectively embed it within curriculum and pedagogy and (c) making assessment (criteria and results) available to students, allowing them to participate actively in their learning process (Pastore and Andrade, 2019). Hay and Penney (2013) proposed that, to be assessment literate, teachers have to master four interconnected components: (a) assessment comprehension, focused on knowing and understanding ‘what’, ‘why’, ‘when’ and ‘how’ to assess better; (b) assessment application, focused on knowing how to plan and enact appropriate, meaningful and relevant assessment successfully, involving students actively in their learning and assessment process; (c) assessment interpretation, focused on the analysis and use of data gathered from assessment practices, and considering the negotiation of social relations of assessment; and (d) critical engagement with assessment, focused on being conscious about the impact or consequences of assessment, challenging the ‘naturalness’ of practices, performances and outcomes of assessment.

As a consequence of the discussion around learning paradigms (Pastore and Andrade, 2019), ‘assessment literacy’ continues to be constructed in multiple ways. While there is some consensus around the key aspects necessary to consider a teacher as being assessment literate, it is important to acknowledge that the different emphasis given to the term ‘assessment’ also impacts the analysis of teachers’ assessment literacy. For example, while the concepts of validity and quality are commonly used with respect to assessment, their intended meaning and associated comprehension differ depending on the specific purpose of assessment. Assessment application reflects an understanding of the teaching-learning process and learning theories (Allal, 2020). Assessment literacy is no longer only about implementing assessment practices but also about how to interpret and analyse the impact of assessment practices on students’ learning and engage students in their own assessment (DinanThompson and Penney, 2015).

Assessment literate teachers are essential to effective and quality assessment. Using assessment for learning (AfL) requires a teacher to be assessment literate. AfL implies more than changing assessment techniques (Moura et al., 2021), i.e. embedding assessment within curriculum and pedagogy, using assessment to improve teachers’ decisions and students’ learning and including students in assessment. The intention in AfL is for students to be well informed about the learning process and able to regulate their learning.

Increasing the use of AfL (in physical education) requires investment in (preservice) teachers’ assessment literacy and is also a necessity for teachers entering classrooms (AIESEP, 2020). Pastore and Andrade (2019) report that teachers are not ready to successfully embed assessment in their teaching due to (a) teacher education ineffectiveness in changing their thinking as PSTs, (b) difficulties in transforming assessment practices acquired in teacher education to specific school contexts and (c) a limited amount of research identifying successful approaches to enhance teachers’ assessment literacy. PSTs tend to enter teacher education programmes relying solely on summative assessment because of their prior education (DeLuca et al., 2018). Improving PSTs’ assessment literacy in this scenario is therefore challenging for Physical Education Teacher Education (PETE) programmes, given that PSTs may find it difficult to consider, understand and enact assessment that promotes students’ learning (Starck et al., 2018; Tolgfors et al., 2021).

DeLuca et al. (2018) advocate for research exploring how teachers perceive assessment and how they enact it, acknowledging the lack of research on the latter. The same author has also identified the scarcity of empirical research and the limited impact of teacher education on PSTs’ assessment literacy (DeLuca and Klinger, 2010). Macken et al. (2020) identified the absence of empirical studies of PSTs enacting AfL in primary physical education.

Although assessment literacy as a concept has become common, some researchers have expanded the concept to ‘assessment identity’ to capture the experiences and personal, social, and contextual aspects that affect teachers’ beliefs (Looney et al., 2018). Teachers’ enactment of assessment not only demonstrates what teachers do, but also who teachers are and what they have experienced (socialisation of teachers). Teachers’ use of assessment is affected by the context they work in and their previous exposure to assessment. Opportunities to obtain a deeper understanding of teachers’ assessment literacy are crucial to appreciate the realities of possessing, and enacting, assessment literacy for the benefit of students. At the same time, teachers’ identity changes throughout their career and is influenced by the contexts in which they work (Looney et al., 2018). This occurrence, as well as considering assessment a situated and social practice (Hay and Penney, 2013) reliant on teachers’ experiences, suggests that it may be possible to improve (preservice) teachers’ assessment literacy and reinforces the need to consider teachers’ socialisation.

Occupational socialisation theory

Teachers’ and PSTs’ disposition towards assessment and, in turn, assessment literacy is affected by their prior experiences and is captured through occupational socialisation theory (OST) (Richards et al., 2014). OST, defined as the influences of the environment on the socialisation of teachers, is represented by a three-phase process: acculturation, professional socialisation, and organisational socialisation (Lawson, 1986). The fluidity of the impact of each phase on an individual's socialisation is commonly accepted (Starck et al., 2018).

Acculturation covers the period from birth to entering a teacher education programme. PSTs develop conceptions of education throughout these years, in a process described as the ‘apprenticeship of observation’ (Lortie, 1975). The influence of this phase is significant given the well-documented difficulties faced by teacher education programmes in changing PSTs’ conceptions (Starck et al., 2018).

Professional socialisation aligns with the period spent in a teacher education programme. PSTs enter teacher education programmes with a preference for summative assessment due to their previous exposure to it as school students (Mjåtveit and Giske, 2020). For this reason, and considering that socialisation is a dialogical process, teacher education programmes struggle to successfully change PSTs’ long-term subjective theories.

Organisational socialisation aligns with teachers entering the teaching profession. Transition to school can be challenging for beginner teachers, especially when graduating from teacher education programmes with innovative practices and then teaching in schools that favour traditional approaches (Richards et al., 2014).

OST aids our understanding of why PSTs find it difficult to consider assessment as anything other than what they experienced as school students and, in turn, how best to interpret and engage critically with assessment data to promote students’ learning (DinanThompson and Penney, 2015). Often, PSTs’ teaching replicates the practices of their previous schoolteachers rather than what they have been exposed to, and encouraged to practise, in teacher education programmes (Richards et al., 2014).

Studies that have examined teachers’ conceptions of assessment also show that conceptions and experiences cannot be dissociated, with many teachers associating assessment with summative grading purposes (Darmody et al., 2020). Indeed, research suggests that previous experiences in assessment dominate (preservice) teachers’ thinking in comparison with anything they had been taught about assessment (Looney et al., 2018). One such example is reported by Mjåtveit and Giske (2020) who acknowledged that PSTs reverted to, and relied on, traditional assessment approaches rather than using AfL practices as advocated in the teacher education programme. Pastore (2020) also reported no differences in understanding and practical aspects of assessment (literacy) between PSTs who participated in an assessment course in their teacher education programme and those who did not.

OST alerts us to the importance of teacher education programmes considering PSTs’ previous conceptions of assessment to better develop PSTs’ assessment literacy (DeLuca et al., 2018). Given PSTs’ low levels of assessment literacy, it is expected that PSTs will continue to struggle to transfer what they learned in PETE programmes to embedding assessment in their practice as schoolteachers (Moura et al., 2021). This is likely to result in PSTs abandoning the assessment-related pedagogical principles learned throughout their teacher education programmes and replicating assessment practices they experienced as school students (Starck et al., 2018).

Teacher education programmes and assessment

Assessment can be challenging for (physical education) teachers, and teacher education programmes have been struggling to change PSTs’ previous conceptions of assessment and, subsequently, improve PSTs’ assessment literacy (Looney et al., 2018). Summative assessment practices (as a means of assessing PSTs) also dominate teacher education programmes (Starck et al., 2018), reinforcing assessment preconceptions brought by PSTs to these programmes. Assessment courses, when they take place, appear disconnected from practical aspects of the classroom (Brevik et al., 2017) and provide few experiences to learn about educational assessment concepts and practices (Pastore and Andrade, 2019). Macken et al. (2020) mention the uncertainties about the most relevant knowledge to include in assessment courses and how best PSTs learn to assess. Brevik et al. (2017) suggest that the formal school placement experience as part of a teacher education programme provides an opportunity for PSTs to experience assessment practices in a ‘real context’. Relying solely on cooperating teachers to educate PSTs in the use of assessment practices could be problematic given that cooperating teachers tend to possess low levels of assessment literacy (DeLuca and Klinger, 2010).

To strive towards shared understandings among all involved in school placement, there is a need for an intensive, and closer, relationship between universities and schools (MacPhail and Lawson, 2020). That would promote engagement with assessment and develop both cooperating teachers’ and PSTs’ assessment literacy (DinanThompson and Penney, 2015). Macken et al. (2020) found that, with the appropriate support, PSTs can gain more from the school placement by developing their knowledge about assessment and, in turn, becoming assessment literate teachers.

Improving PSTs’ assessment literacy will most likely require the reconfiguration of PETE programmes. Loughran (2014) advocates for a ‘pedagogy of teacher education’ and considers teacher education programmes as fulfilling two main roles, teaching content and teaching PSTs how to teach (i.e. how to put content into practice). Regarding the latter, explicit assessment courses should be framed around practice-based teaching (Brevik et al., 2017) with the goal of improving PSTs’ assessment literacy. Preferably, practical approaches to assessment should address the four components of assessment literacy defined by Hay and Penney (2013). Practical approaches that challenge PSTs’ knowledge, beliefs and experiences are necessary. This provides PSTs with opportunities to develop skills and an ability to reflect and examine enacting assessment practices (Starck et al., 2018; Tolgfors et al., 2021). This may contribute to improving PSTs’ assessment literacy. Providing PSTs with opportunities to work with school students as part of their PETE programme (e.g. experiencing student peer assessment) may also enhance their assessment literacy.

Exposing PSTs to ‘real’ assessment practices is essential to engage PSTs in reflections about their understandings and the challenges of enacting assessment (Macken et al., 2020). The same authors argue that these opportunities are needed to counterbalance the negative impact of the ‘apprenticeship of observation’ while helping PSTs actively reconstruct their understandings and practices as teachers (and assessors). Assessment conceptions are complex, have a dynamic relationship between theoretical and practical knowledge and are sociocultural in structure (Looney et al., 2018). This reinforces the importance of PSTs having the opportunity to enact assessment practices and reflect on the impact of such practices on students’ learning.

It is essential that teacher education programmes provide PSTs with the appropriate support to demonstrate assessment literacy successfully in practice (Pastore and Andrade, 2019); however, there appears to be a lack of studies exploring collaborative practice approaches to improving PSTs’ assessment literacy. Based on what has been discussed above, this study enacted and supported a scaffolding process to improve PSTs’ assessment literacy as they experienced school placement. This study addresses the following research questions: (a) How does creating explicit opportunities that support PSTs interrogating their own conceptions about, and practices of, assessment and learning, help PSTs in their assessment literacy?; (b) In what way do PSTs’ previous experiences as school students influence their assessment literacy and their capacity to learn different approaches to assessment?; and (c) How are PSTs challenged to change their understanding of assessment and to not revert to assessment practices they experienced as students?

Methodology

This study used critical participatory action research (Kemmis et al., 2014), where participants were co-constructors of their knowledge, actively reflecting on their understanding of assessment and related practices. Critical participatory action research aims to address the theory and practice gap by encouraging practitioners to act as theorists and researchers of their own practices (Kemmis et al., 2014). It is not intended to have participants implementing researchers’ theories. Knowledge is co-constructed by all involved with participants researching, and enacting, what they consider appropriate to their practices. The methodology considers practitioners as the most important resource to change practices, encouraging them to reflect on their practices individually (with themselves) and collectively (with others). One of the challenges/limitations of critical participatory action research is its heightened dependency on participants’ contributions. The ‘key element’ of our critical participatory action research was to encourage participants to interrogate, analyse and reflect on what they learned and taught. To transform current practices, participants must investigate and question their practices, identifying what can be improved and how to improve it.

Participants and context

Eight PSTs from a two-year physical education master's programme from a public Portuguese university took part in the study. The first year of the programme takes place at the university. Assessment is taught in the curricular unit ‘General Sports Didactics’ as theoretical content (mentioning different types of assessment but without opportunities for practical examples or implementation). The focus is on the content to be assessed and on assessing students’ performance. In this course, assessment is taught as a component of the teaching-learning process as are, for example, teaching models and feedback. Little is done during this unit in providing knowledge and skills, and supporting PSTs, to improve their assessment literacy and enact AfL in their school placement. In relation to another curricular unit, ‘Specific Didactics of Sport’, the focus is on teaching how to assess technical-tactical content. During the second year of the programme, PSTs work with their university supervisors on Mondays at the university and with their cooperating teachers, from Tuesday to Friday, in schools (school placement) for the entire academic year (September to June). After successfully completing this master's degree, PSTs become qualified to teach physical education in primary and secondary schools.

To be eligible for this study, PSTs had to be (a) supervised by the university supervisor who acted as facilitator for this study and (b) undertaking school placement in schools where cooperating teachers were interested in being involved in the study and had already been collaborating with the university for more than 10 years. From the 15 PSTs who met these criteria, eight were purposively selected (Patton, 2002) with respect to their predisposition to accept challenges, availability for undertaking study-related requests, interest in joining the pedagogical study, and commitment to the study. Each PST signed an informed consent form to participate in the study. The study’s purpose and design were explained to each PST, and it was made clear that they could opt to leave the study at any time without any consequences. The study was granted ethical approval by the university in which the research was conducted. PSTs received a pseudonym to protect their identity.

The eight PSTs (five women and three men) had no professional experience as teachers and were aged between 22 and 27 years. Six of the PSTs had completed their undergraduate programme in physical education and sport at the same university (north of the country) in which they were undertaking the master's programme and this study.

Study design

The study had three action research cycles over a six-month period and on every Monday PSTs attended the university campus to work with their university supervisor.

Each seminar took place at the university with the researcher, the PSTs’ supervisor, and the eight PSTs in attendance. In an attempt to improve PSTs’ assessment literacy, the researcher and the PSTs’ supervisor facilitated the seminars and encouraged PSTs to interrogate their own beliefs, understandings and practices of assessment. Aware that each teaching context is different, the facilitators encouraged PSTs to consider their own ways of enacting assessment throughout seminars and school placement. The facilitators sought to create a positive environment where PSTs would feel comfortable and safe to share their assessment perspectives and thoughts and act as co-constructors of their learning about assessment. Learning goals for each seminar were shared with PSTs at the start of each session.

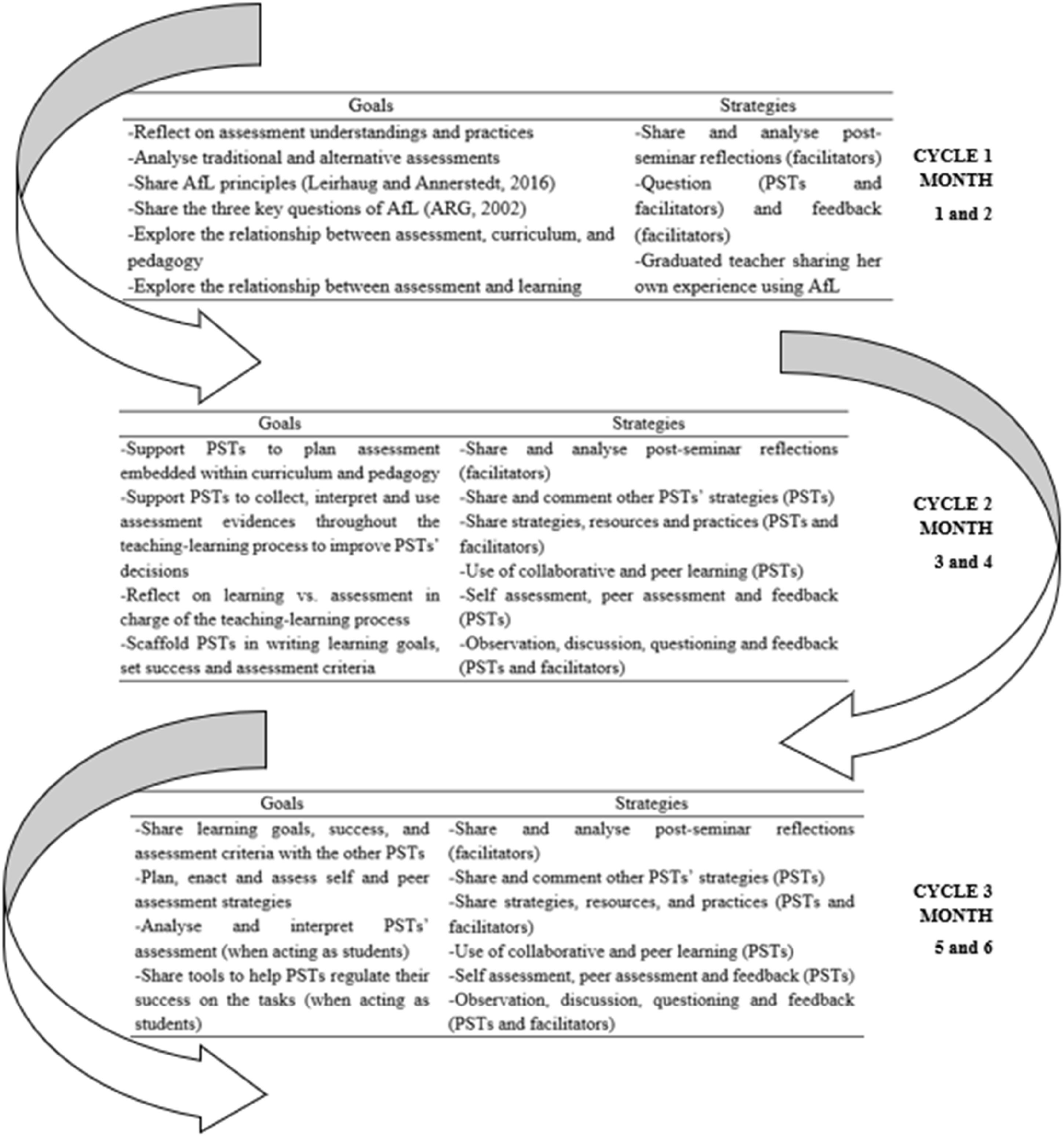

During the seminars, the PSTs’ supervisor was primarily focused on engaging PSTs in conversation about the relationship between assessment and learning (Moura et al., 2021), while the researcher focused on scaffolding activities for PSTs during seminar tasks (e.g. planning). The purposes and strategies of the seminars are detailed in Figure 1. The first two months were primarily focused on directing PSTs to reflect on their current conceptions of assessment and the relationship between teaching, learning and assessment (Hay et al., 2015). During months three and four, facilitators encouraged PSTs to reflect on how they plan for, and integrate, assessment in the teaching-learning process, and why and when they use assessment effectively (AIESEP, 2020). During months five and six PSTs were encouraged to reflect on how, why and when to involve students in the teaching, learning and assessment process (Tolgfors, 2018).

Figure 1. Seminar sessions.

Data collection

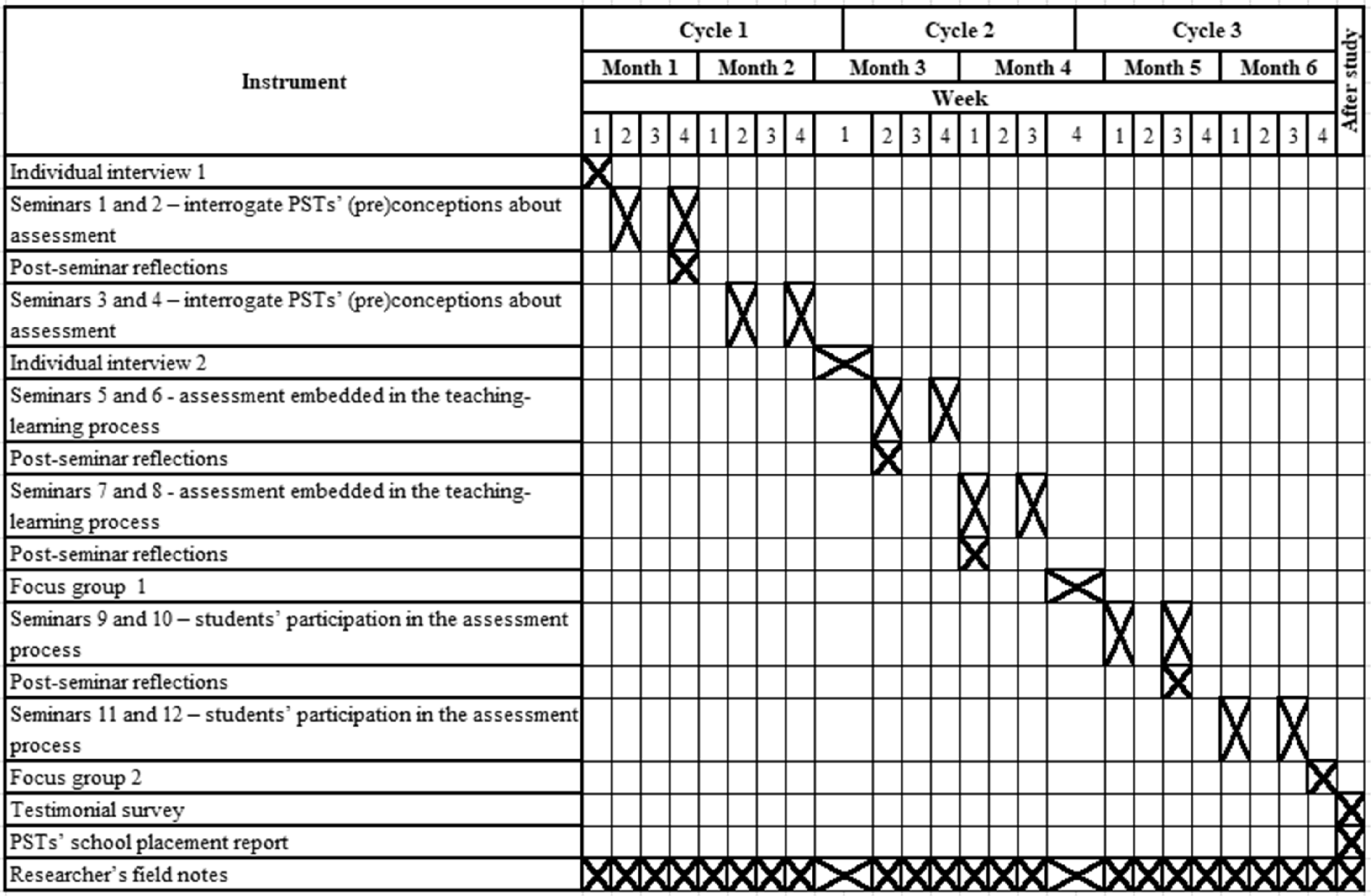

Figure 2 shows the three cycles and the instruments used to capture and analyse PSTs’ developments in assessment literacy. Individual and focus group interviews, testimonial surveys, post-seminar reflections and school placement reports provided complementary data on PSTs’ (previous and ongoing) assessment understandings and practices, and on the value they attributed to their involvement in the seminars and the study. In analysing the school placement reports, the researcher identified unprompted references related to ‘assessment’, ‘learning’ and ‘students’ involvement’. Researcher field notes captured the differences between what PSTs reported they did and what they were observed doing. Triangulating data across the different data sources improved trustworthiness (Patton, 2002).

Figure 2. Study design.

Individual interviews

Two semi-structured individual interviews with each PST took place in a quiet room at the university. Each interview lasted on average 20 minutes and was intended to capture PSTs’ perceptions and understanding of assessment before the beginning of the study and after the first cycle. Examples of questions posed in the first interview included “What are your thoughts on assessment and learning?” and “What do you think is important when planning assessment? And when implementing it?”. The second interview included questions such as “How do you articulate the relationship between assessment and learning?” and “In what way can assessment support learning?”.

Focus group interviews

The researcher and the PSTs’ supervisor facilitated the two focus groups with all eight PSTs. These occurred during the university seminars, and each lasted on average 70 minutes. The intention was to explore (a) how collaboration in the seminars supported PSTs to deal with problems and dilemmas while learning and planning for assessment and (b) the meaning and value attributed by PSTs to the seminars. Examples of questions posed included “What concerns do you have to help students progress in their learning?”, “Considering the aspects discussed in seminars, what was the most relevant/the one you would like to highlight?” and “What did you try (or would like to try) to incorporate into your practices?”.

Individual and focus group interviews were audio recorded and transcribed verbatim before being returned to PSTs to read and approve final transcripts.

Post-seminar reflections

At the end of each seminar, facilitators invited PSTs to formally write responses to two or three questions. The questions focused on the most relevant aspect learned at the session and the associated perceived challenges in enacting such aspects in practice. PSTs’ responses to the questions each week were revisited at the next seminar meeting to encourage discussion and share perspectives. While the questions were prepared by the facilitators prior to each seminar, they would change depending on the nature of the conversation that ensued throughout the seminar. PSTs were also afforded the opportunity to discuss any related aspects that they considered important and that had not been prompted by the facilitators.

Researcher's field notes

The researcher maintained written reflections (memo writing) (Patton, 2002) throughout the three cycles of the study to capture developments in PSTs’ assessment knowledge and thinking, to note instances where PSTs faced challenges and difficulties they could not solve on their own and to improve the quality of future seminars. Nine reflections in total (each approximately 700 words) were written throughout the process.

Testimonial survey

After completing the seminars, each PST received a survey by email. While the open questions were intended to prompt PSTs’ reflection, PSTs could choose to reflect without directly answering each of the eight questions. Formal questions focused on how PSTs planned and enacted assessment, how they provided feedback to students to move their learning forward and how they included students in the process. PSTs were also asked for feedback on the effectiveness of being involved in weekly seminars in contributing to their learning about assessment and suggestions on how to improve the effectiveness of the seminars. All PSTs completed the survey, with six choosing to answer the posed questions and two choosing open reflection. Regardless of which option was chosen, each reflection was approximately five pages long.

School placement report

PSTs complete a school placement report at the end of their programme to capture their teaching experience. There is a specific section in the report that encourages PSTs to describe the experience of planning and enacting the teaching-learning and assessment process. Given that the report required PSTs to note their most relevant and valued school placement experiences, the report provided additional information on PSTs’ understanding of assessment to that collected during university seminars. Each report included examples of annual planning, teaching units, lesson plans, reflections, log diary, characterisation of school placement, school context and PSTs’ students, and instances of practitioner study throughout school placement.

All data collection and analysis took place in Portuguese. Data were translated to English for dissemination purposes.

Analysis procedures

A deductive-inductive analysis procedure was used across all data, moving back and forth between the aim of the study (enact and support a scaffolding process to improve assessment literacy aligned with PSTs’ school placement), the data, assessment literacy framework and OST. Data analysis used a three-component flow process: data condensation, data display and conclusion drawing/verification (Miles et al., 2014), with the researcher oscillating between the three components.

Data analysis began after the PSTs’ initial interviews and informed the researcher on the design of the first seminars in cycle one. Data were constantly triangulated and informed the other methods. For example, the second individual interview, focus group one, post-seminar reflections and researcher’s field notes supported the design of the following seminars and respective cycles. Seminars provided complementary data to individual and focus group interviews, post-seminar reflections and field notes. These different sources offered valuable data to one another. Analysis of the testimonial survey and school placement reports took place at the end of the study. All data were analysed as soon as possible after collection.

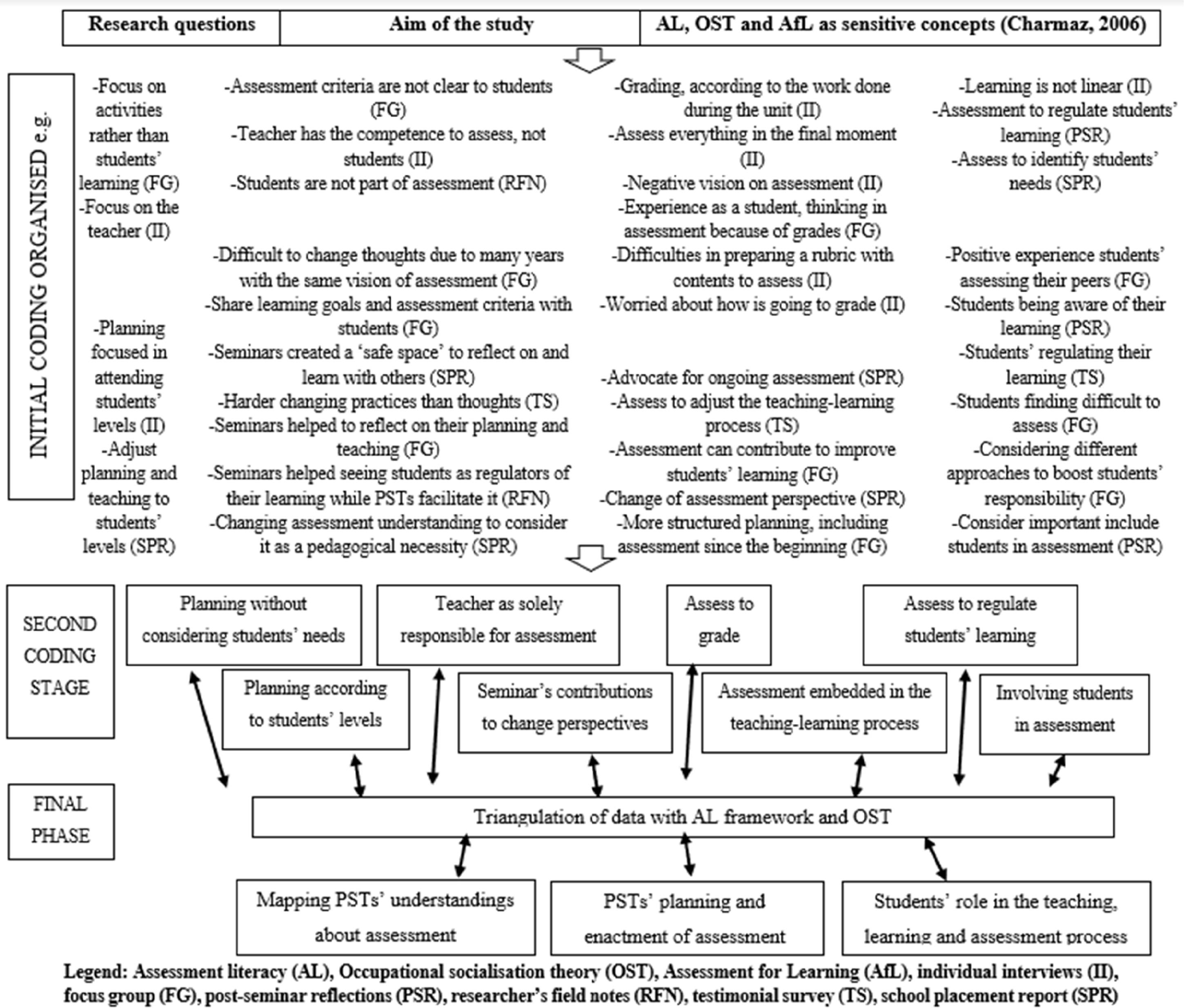

Data collected from the different methods were first read and examined incident by incident, highlighting meaningful extracts in the text. The codification process (data condensation) started with two of the authors re-reading these data using assessment literacy, OST and AfL as sensitising concepts (Charmaz, 2006). Initial codes derived from the theoretical frameworks or from the data were refined in a second coding stage through a constant comparison process, which included aggregating codes by proximity (Figure 3). For example, the codes ‘focus on activities and not on students’ learning’ and ‘focus on the teacher’ were aggregated into a broader code of ‘planning without considering students’ needs’. The data condensation process by coding led to the creation of charts capturing the most relevant themes (data display).

Figure 3. Data analysis.

The final phase of analysis involved data triangulation across the different methods, taking into consideration the data, assessment literacy and OST. Triangulation allowed the identification of the main ideas within and across the codes at the second coding stage. For example, ideas aggregated under the code ‘seminars’ contributions to change perspectives’ indicate the contribution of seminars to PSTs’ understandings, planning and enactment of assessment. The analysis resulted in the themes presented in Figure 3.

Results

The Results section is organised around the three themes identified in the data analysis: (a) mapping PSTs’ understandings about assessment; (b) PSTs’ planning and enactment of assessment; and (c) students’ role in the teaching, learning and assessment process.

Mapping PSTs’ understandings about assessment

At the beginning of the study, PSTs considered assessment to be a means of grading students’ performance and a teacher’s exclusive responsibility. This highlights the preconceptions PSTs brought to this study with respect to their experience of summative assessment and specifically assessing to finalise a grade:

When I heard the word ‘assessment’, several words came to my mind such as pressure, comparison, classification, grades. (Ricardo, Post-Seminar Reflections cycle 1)

While it was evident that PSTs’ previous experiences and limited appreciation of assessment were challenged throughout the seminars, there were indications that six of the eight PSTs reverted (especially during the first action research cycle) to a reliance on assessment they had experienced as school students: For years and years, all our life as [school] students, assessment was a moment, mainly the final moment. I am already thinking different [about assessment], but unconsciously my thinking reverts to my experience as a [school] student. (Tatiana, Individual Interview 2)

Conscious of PSTs’ limited assessment understandings, seminar discussions explored alternative assessments and supported PSTs in interrogating their conceptions. Experiences shared through seminars allowed PSTs to consider how embedding assessment within the teaching-learning process can enhance learning: The discussions we had in seminars made me question many things I had taken for granted, as for example, assessment is [only] the moment to evaluate/grade. Now I can see that assessment can contribute to learning if brought into the learning process. (João, School Placement Report referring to cycle 1)

While the remaining two PSTs started to move away from reproducing assessment practices they had experienced as school students, it remained difficult for them to explain how assessment could be embedded in the teaching-learning process. As the study continued, PSTs’ assessment literacy improved. This was evident in the PSTs’ understanding that embedding assessment in the teaching-learning process would improve their teaching and students’ learning: Now, I feel I am seeing assessment with ‘different eyes’ [referring to seminar 6]. I consider assessment as a continuous and systematic process of collecting evidence and a good principle to decision-making as it allows you to adjust the teaching and planning strategies to match students’ needs. Assessment gives answers about the success of teachers’ teaching. If students are not learning, we must change something or understand why they are not learning. (Francisca, Focus Group 1)

At the end of their school placement, PSTs reported that they started to consider that embedding assessment into the learning process implied selecting learning activities aligned with learning goals, outcomes and assessment criteria. This represents a significant shift when compared to the beginning of the study: Now I see that I changed from a linear to an embedded and aligned perspective of assessment with curriculum and pedagogy. When planning a learning activity, we have to consider the learning goal(s). These learning goals are relevant, related to the outcomes and assessment criteria. The activities that we select need to support students to achieve the goals and allow them to know if they are achieving them. (Maria, School Placement Report)

PSTs’ planning and enactment of assessment

At the start of the study, PSTs’ thoughts about planning for assessment were reliant on assessing to grade, being solely concerned with creating a rubric that captured all the content that had to be assessed: I think it is important to have a grid [rubric] to grade students. This would be our guide, with the content and criteria defined by teachers that need to be observed, to guarantee that all students are assessed in the same way. (Joana, Individual Interview 1)

PSTs considered assessment as an ‘add-on’ to the teaching-learning process. Consequently, viewing teaching, learning and assessment as separate entities resulted in misalignment between the different lessons of the teaching unit and uncertainty about what they expected their students to learn: PSTs are incapable of answering [after our first planning attempts in seminars] when asked about what they want their students to achieve at the end of the unit. This highlights their difficulties to create a ‘big picture’ of what they want their students to learn [learning goal/s] and what is necessary to do to help them achieve it. Most of the PSTs (five) tend to create learning situations and then define a goal to that task, but all of them find it difficult to establish progressions that connect the different lessons of the unit. (Researcher's Field Notes)

PSTs reported that discussions during university seminars helped them define learning goals and how best to embed assessment in the teaching-learning process. During cycle 2 of the study, PSTs experienced more structured planning after understanding the importance of determining what they wanted their students to achieve: My planning changed a lot since the beginning [of the study]. Things are now much more structured. We have a better and precise guide of what we want students to achieve. In this second phase, assessment was part of the process from the beginning while in the first phase we talked only about assessment one week before the specific class. (Ricardo, Focus Group 1)

All PSTs acknowledged that the seminars had encouraged them to become more literate about assessment, enhanced by the creation of a safe space where they could share experiences, learn from each other and reflect on what they were sharing and hearing: I did not consider seminars as lessons, but as moments to share knowledge, experiences, learning, reflections, challenges, happiness, outbursts and support on tough days. Sharing strategies and tools was crucial to understand that we did things differently, but that does not mean that was wrong. It was just different ways to plan and enact the learning process. This helped me to reflect and be critical. (Maria, School Placement Report)

The acknowledgement that students do not always learn what the teacher intends them to learn, helped PSTs understand the importance of embedding assessment into the teaching-learning process and involving students in the analysis of their learning: One of the most important things I learned from assessment in relation to students’ learning is that students do not always learn what is taught. This showed me the importance of enacting assessment through the process, because we have to check continuously if students are learning what they are supposed to. (Filipa, Testimonial survey)

Even with PSTs acknowledging the importance of involving students in the analysis of their learning, some students did not achieve the learning goals, reflecting the differences between students. During the third cycle, the planning of six of the eight PSTs allowed for more differentiation with a view to addressing the different needs of students: I changed how I plan and conduct lessons. In the first phase, I was only focused on what I wanted students to do. Appropriate or not, I had everyone doing the same. Now [second phase] I consider students’ different levels. For example, in gymnastics I have students divided by levels. Some are doing ‘forward rolls’ on a mat, others are doing it with the aid of a ‘slope’ [to make it easier] and others have help from a peer. During the activity I check if all students are engaged at a challenging and achievable level. (Manuel, Focus Group 2)

Students’ role in the teaching, learning and assessment process

At the beginning of the study, all PSTs reported that, as students at school, they had never been involved in discussions around assessment practices and decisions. When asked about their thoughts on students’ role in assessment, PSTs referred back to the importance of teachers having responsibility for assessment: I never considered the students’ role [in assessment]. During the master's, teacher educators talked about that, but I did not know how and why I should include students in assessment. For me, assessment is the teacher's responsibility. (Manuel, Individual Interview 1)

However, the seminars made a valuable contribution to changing perspectives. All PSTs began reflecting in the seminars on the extent to which the sharing of learning goals and assessment criteria with students could enhance the focus on learning: Seminars made me reflect on the use of assessment. Now, I consider that when students understand what they are supposed to learn and how to have success, they can work towards it and know if they are achieving it. In an ideal situation, they can understand their level, what they need to improve, what they improved on and how far they are from their goal. (Maria, Focus Group 1)

PSTs admitted that their perspective on assessment changed throughout the study and specifically in terms of students’ involvement, beginning to support peer- and self-assessment as an important aspect of students’ learning: My perspective about assessment changed a lot. At the beginning of the year, my perspective about assessment was totally focused on giving students a final grade. Then, I started considering assessment as much more than just grading students. It is about involving students in the process, so they can be aware of their difficulties, potentialities, and progress. That is why it was important to share learning goals and assessment criteria. Now, I consider it more about having students participating in the process. Having students regulating their own learning, assessing peers and trying to help them take initiative. (João, Testimonial survey)

In summary, PSTs’ thinking was highly influenced by their previous experiences as school students. This led PSTs to initially understand and enact assessment solely for grading purposes. PSTs’ low levels of assessment literacy limited their perspectives on the potential of assessment, which raised several challenges when trying to change their understandings and, subsequently, their practices. Despite the difficulties, PSTs were able to gradually improve their assessment literacy, as evidenced by their persistence in embedding assessment in the teaching-learning process and, predominantly through the support provided by the seminars, by their inclusion of students in the assessment process.

Discussion

At the beginning of the study, PSTs conveyed a low level of assessment literacy. This was not surprising given that their assessment experiences as students align with Lortie's (1975) notion of the ‘apprenticeship of observation’. These PSTs conveyed similar dispositions to other PSTs who enter teacher education programmes with a strong reliance on understanding assessment as being solely summative (Mjåtveit and Giske, 2020), an add-on to instruction (Hay et al., 2015) and a teacher's exclusive responsibility (Tolgfors, 2018).

Previous experiences as passive students when they were at school led PSTs to develop an idea of teaching, learning and assessment as the sole responsibility of the teacher, which proved difficult to change. Being active learners during seminars helped PSTs to question, discuss and reflect on the usefulness of teacher- vs student-centred approaches and to improve their assessment comprehension, specifically in terms of ‘why’ and ‘when’ to assess. Enacting (their thoughts on) assessment in seminars was crucial to PSTs exploring assessment and developing their assessment literacy. Understanding that not everything taught is learned requires teachers to change assessment application and the way they comprehend teaching (Allal, 2020). That is, it requires moving the focus from teaching to learning and from teachers to students, a shift that became evident as the seminars continued throughout the study. This is a salient aspect of teachers’ improvement of their assessment literacy, especially in relation to the assessment comprehension component (Hay and Penney, 2013), and a first step to considering enacting AfL in their practices.

Appreciating that PSTs tend to resist new ideas when these differ from those experienced during the acculturation phase (Richards et al., 2014; Starck et al., 2018), there were indications that the seminars had created a safe space for PSTs to consider alternative assessment practices that they would not previously have considered enacting. However, this is not to say that PSTs did not struggle with developing their assessment literacy and particularly with respect to assessment literacy components: application, interpretation and critical engagement with assessment.

PSTs’ previous conceptions of assessment (from acculturation and professional socialisation phases) continued to affect their assessment application and interpretation even after there were indications of an initial improvement in their assessment comprehension. On some occasions, PSTs believed that they had improved their assessment comprehension, but these changes were not evident in practice. This represents a superficial understanding of assessment (DinanThompson and Penney, 2015).

We suggest that the cyclical nature of the seminars and school placement resulted in PSTs being continually pushed not to revert to their previous socialisation experiences and to embrace the opportunity to discuss and interrogate alternative assessment practices. This provided them with opportunities to apply and reflect on the effectiveness of such practices before going into the school placement. There was clear evidence that PSTs’ assessment comprehension and application had improved with respect to the use of assessment as a support for learning and the involvement of students in the assessment process. Another major change was the consideration of the impact of assessment on their students’ learning. In the first phase of changes, the focus was solely on being able to use assessment throughout the teaching-learning process. This represents a shift from a superficial understanding of assessment (DinanThompson and Penney, 2015) towards a consideration of interpretation and critical engagement with assessment (Hay and Penney, 2013).

Working with PSTs throughout their school placement provided an opportunity for PSTs to develop their assessment literacy in ‘real’ contexts, challenging the negative impact of their ‘apprenticeship of observation’. This also helped PSTs actively reconstruct their understanding, planning and enactment of assessment (for learning) practices. Similar to the work of Macken et al. (2020), results show that PSTs can better develop their assessment literacy during the school placement when supported to do so. The cyclical nature of seminars and school placement supported the positive impact of practical approaches to teaching (Brevik et al., 2017), encouraging PSTs to put what they were discussing in seminars into practice (Allal, 2020; Loughran, 2014). The cyclical nature of the study allowed PSTs the opportunity to engage with different purposes and to reflect on assessment practices (Starck et al., 2018).

There is a need for further studies to explore similar meaningful infrastructures that support PSTs’ improvement of assessment literacy. Such studies should consider the longevity of assessment practices that are developed in a bid to establish the extent to which such assessment practices become embedded in day-to-day practice without the need for formal support structures.

Conclusion

PSTs’ experiences as students have a significant impact on their understanding of assessment. Previous experiences result in low levels of assessment literacy and challenge PSTs’ learning of alternative assessment practices. Considering the difficulties PSTs face in improving their assessment literacy (and in not replicating what they experienced as students), this study reinforces the crucial role of engaging, practical, supportive and co-constructed scaffolding approaches that help PSTs interrogate and change assessment conceptions developed during the acculturation phase. The environment created during seminars helped PSTs to feel safe to share, question, debate, discuss and reflect on their assessment thoughts and practices. PSTs developed a more critical stance about why and when to assess, through an increasing emphasis on learning and students.

While it is acknowledged that changing conceptions does not necessarily result in changed practices (Brevik et al., 2017), this study has conveyed the importance of exposing PSTs to practical experiences in real contexts, capturing and addressing PSTs’ continuous engagement with assessment in an attempt to support and develop their assessment literacy. Exploring and considering PSTs’ assessment understandings on entering teacher education programmes is a key starting point if we are to support PSTs in challenging, and ultimately changing, them (Pastore, 2020; Starck et al., 2018).

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Fundação para a Ciência e a Tecnologia (grant number SFRH/BD/137848/2018).