Abstract

This article introduces Videogame Spatial Cinematics (VSC), an interdisciplinary framework for analysing how spatial design, gameplay, and virtual cinematography converge in videogame environments. While existing scholarship has examined these elements in isolation, VSC integrates spatial organisation, cinematic mediation, and interactive constraint into a unified analytical approach. The framework comprises three analytical toolboxes: Spatial Analysis, Cinematic Narrative Analysis, and Frame Dissection. Each draws on methods including architectural diagramming, cinematic shot analysis, and gameplay sequencing to investigate the spatial-cinematic apparatus of videogames. Developed through a multi-phase methodology combining reflective gameplay, autoethnographic game analysis, structured logging and coding, and cross-disciplinary visual analysis, the framework identifies 11 recurring parameters that shape the player’s spatial experience, such as camera optics, environmental storytelling, framing, and spatial organisation. The toolboxes are applied to three selected action-adventure titles – The Last of Us Part I (2022), God of War (2018), and Star Wars Jedi: Fallen Order (2019) – to demonstrate how virtual cameras choreograph space and narrative through a layered cinematic logic. We argue that the compositional techniques, camera systems, and level architectures work interdependently to guide interaction, frame emotion, and construct narrative rhythm. By making the cinematic mediation of game space analytically accessible, VSC attempts to offer a transferable diagnostic framework for researchers and designers. It is positioned as an interpretive tool rather than a prescriptive design method, with practice-based validation proposed as future work.

Introduction

The architecture of contemporary videogames has evolved into complex and multifaceted systems. Videogame spaces perform at several layers; they both mirror and diverge from reality (Aarseth, 2007: 45), translate abstract game mechanics into intuitive spatial cues (Götz, 2018), weave narrative elements into the fabric of the game’s environment (Domsch, 2019; Jenkins, 2004; Nitsche, 2008), and orchestrate the players’ gaze (Wolf, 2002; Thon, 2009: 281). The multiplicity of the affordances produces an intricate, multilayered spatial experience that is unique to videogames.

Traditionally, scholarship on videogame space has tended to privilege one of three facets: the systemic logics of play, the narrative forms through which games organise storytelling, or spatial configurations (Hunicke et al., 2004; Jenkins, 2004; Licht, 2003; Murray, 1997; Tekinbaş and Zimmerman, 2003; Wolf, 2002). While contemporary research rarely treats these orientations as mutually exclusive, their legacies continue to shape how videogame space is conceptualised and analysed. Studies may foreground navigability and spatial legibility, focus on narrative progression and storytelling devices, or treat mechanics as the primary organiser of experience. Yet under conditions of intensified media convergence (Green, 2017; Jenkins, 2006; Sell, 2021), and in response to fundamental shifts in how videogames are produced and consumed, research attempts that prioritise only one dimension of game space are increasingly insufficient for accounting for its complexity. This gap becomes more critical in a period in which many contemporary titles complicate the spatial–ludic–narrative relation by aligning spatial design, narrative cues, and interaction through an increasingly dominant cinematic regime.

Over the past two decades, extensive interdisciplinary research has emerged on the ontology and epistemology of videogame architecture and space design. The disciplines of architecture (Günzel, 2014; Nitsche, 2008; Pearson, 2020; Walz, 2010), game studies (Juul, 2011; Perron, 2023), film (Burelli, 2015; Gottwald, 2022; Logas and Muller, 2005), media studies (Manovich, 2002; Wei et al., 2010), and even philosophy (Klevjer, 2022; Vella, 2019) broached the topic of videogame spaces. This emerging interdisciplinary field has addressed a diverse set of topics, such as interactive design, spatial narrative techniques, architectural worldmaking, and the visual composition of game environments.

The body of existing research on videogame spaces has established essential foundations. Notwithstanding, it rarely considers how game spaces and the design processes that underpin them operate within the radically cinematic ecosystem of the videogame. The virtual camera, central to spatial experience, draws on the grammar of cinematography and editing, as Lev Manovich (2002: 91) notes with the ‘kino-eye’. Although one ought to acknowledge that designing three-dimensional videogame spaces is, by nature, an act of spatial design, it is just as crucial to understand that videogame spaces are ‘mediated spaces’ captured through a ‘hypothetical camera’ (Adams, 2014: 38) and presented through a ‘cinematic form of presentation’ (Nitsche, 2008: 16) that unfolds as moving images on screen, akin to film (Bonner, 2014). As Rockstar North’s Miriam Bellard (2020: 232) observes, game spaces must ultimately ‘perform’ on a 2D cinematic screen. Thus, the cinematic presentation, enabled through the virtual camera, is as critical as spatial design and gameplay.

The role of virtual cameras and ‘film form’ 1 – or, as referred to here, cinematics – has been acknowledged in existing scholarship, yet a critical methodological gap remains in how cinematic organisation is traced in relation to spatial design and interactive systems in contemporary games. Cinematics is still often treated as an aesthetic overlay rather than a structuring mechanism of spatial experience, which limits analytic precision by obscuring how camera organisation couples with spatial layout, narrative staging, and gameplay constraints. This omission risks misattributing experiential effects to ‘level design’ or ‘story’ alone and, in turn, weakens cross-disciplinary exchange: spatial decisions, camera systems, and interaction design are frequently developed in parallel, yet without a shared method for explaining how they co-produce real-time spatial experience. This gap underscores the need for an interdisciplinary framework that integrates spatial organisation, cinematic mediation, and interactive constraint into a single analytic procedure.

Aims

This article develops Videogame Spatial Cinematics (VSC) as an interpretive and diagnostic framework for analysing how spatial organisation, cinematic mediation, and interactive constraints co-produce the player’s spatial experience. Specifically, it aims to:
• introduce and conceptualise Videogame Spatial Cinematics (VSC) as an interdisciplinary framework that integrates principles from architecture, film, and game studies and recognises the virtual camera’s dual role as a cinematic mediator and spatial storytelling tool;
• develop VSC as an analytical system composed of three interlinked toolboxes that may uncover subtle interdependencies among cinematics, play, and space and suggest fresh readings of gameplay sequences;
• apply the VSC analytical system to a set of selected case studies to examine its key spatial and cinematic parameters.

Foundations

Videogame environments are hybrid constructs: architectural in navigational organisation, cinematic in virtual-camera mediation, and ludic in procedural constraint and interactive agency. This hybridity distinguishes game space from both the ‘built’ and the ‘mediated’: it is camera-mediated yet ‘ergodic’ (Aarseth, 1997), a space that must be worked through to be realised. Videogame space therefore forms a playable, camera-mediated spatiotemporal system.

Principles of architectural and spatial design provide VSC’s spatial analytic scaffolding by treating game space as an organiser of orientation and movement. In architecture theorist Bernard Tschumi’s conceptualisation, architecture is understood as the interplay of ‘vectors and envelopes’ (2003: 64). Similarly, the virtual spaces of videogames can be approached as dynamic spatial systems of trajectories (vectors) and limits (envelopes) that channel possible movement and action (Aroni, 2022; Barney, 2020; Pearce, 1997). The established repertoire of architectural strategies and representational methods provides a rigorous means of externalising spatial relations and making them systematically navigable across gameplay sequences (Pearson, 2020; Rogers, 2014). As Totten (2019) notes, level design translates ludic intent into structured spatial form; architectural diagramming therefore offers a basis for articulating how layout, access, and spatial ordering shape navigability and encounter. Architectural and spatial analysis thus enables systematic comparison across gameplay sequences: rather than describing traversal impressionistically (e.g., ‘the level felt open’), diagrammatic tools render movement as traceable paths and measurable spatial relationships, supporting more rigorous cross-case analysis.

Film theory provides VSC’s second foundation by clarifying how the architectural built-ness of game space becomes operative as an ‘interactive, cinematic machine’ (Froehlich, 2023: ix). Videogame space operates within a ‘cinematic lineage’ in which 3D environments and virtual camera regimes are inextricably linked, reinforcing the moving-image condition, or ‘video-ness’, of gameplay (Gottwald and Turner-Rahman, 2019: 2; Laurier and Reeves, 2014: 181). This camera regime takes Renaissance linear perspective and the grammar of cinematic camera work (shot scale, camera angle, movement, and lens choice) as its blueprint, crystallising them into a computational ‘perspective machine’ (Friedberg, 2009: 42). Yet, it diverges through interactivity: the player navigates an ‘arbitrary’, controllable viewpoint that approximates human vision (Schwingeler, 2019: 43), a framed and partial spatial field that is continuously translated into 2D on-screen projections (Domsch, 2019; Wolf, 2015). Film theory thus supplies a conceptual vocabulary for describing how game environments are produced and interpreted as camera-mediated, screen-based moving images, including the ways framing, composition, and optical conventions organise attention and inference. In this sense, level design can be understood as ‘spatial cinematography’ (Bellard, 2019), where game designers shape not only spatial form but also the conditions of mediated visibility through which space becomes experientially intelligible.

Videogame studies constitute the final pillar by positioning gameplay as the organising ‘core’ of virtual space (Mäyrä, 2008: 17). Under conditions of ‘real-time interactivity’ (Whistance-Smith, 2022: 39), game space is enacted as a procedural and goal-oriented system shaped by rules, constraints, feedback, and player agency, and is therefore not reducible to either cinematic staging or real-world spatial logics. As Aarseth (2007: 47) proposes, playable environments often require deliberate ‘deviations from reality’ in order to support play-oriented navigation, challenge, and progression. Consequently, spatial form is continuously tuned to ludic purpose, as play unfolds through structured tasks, constraints, and sequenced encounters that organise attention and direct movement over time (Anthropy and Clark, 2014; Salmond, 2016). Rather than operating as a superordinate layer, rules are intrinsically braided with spatial layouts to regulate pacing and sustain navigational legibility (Götz, 2018). Videogame studies thus provides a foundation for analysing space as a performative field of action, where architectural form and cinematic mediation are mobilised to organise interaction through traversal, constraint, and feedback.

Architecture, film theory, and game studies together form a shared analytical field for examining hybrid videogame space, informing VSC as an integrated procedure for tracing the coupled operation of space, cinematics, and play.

Research design

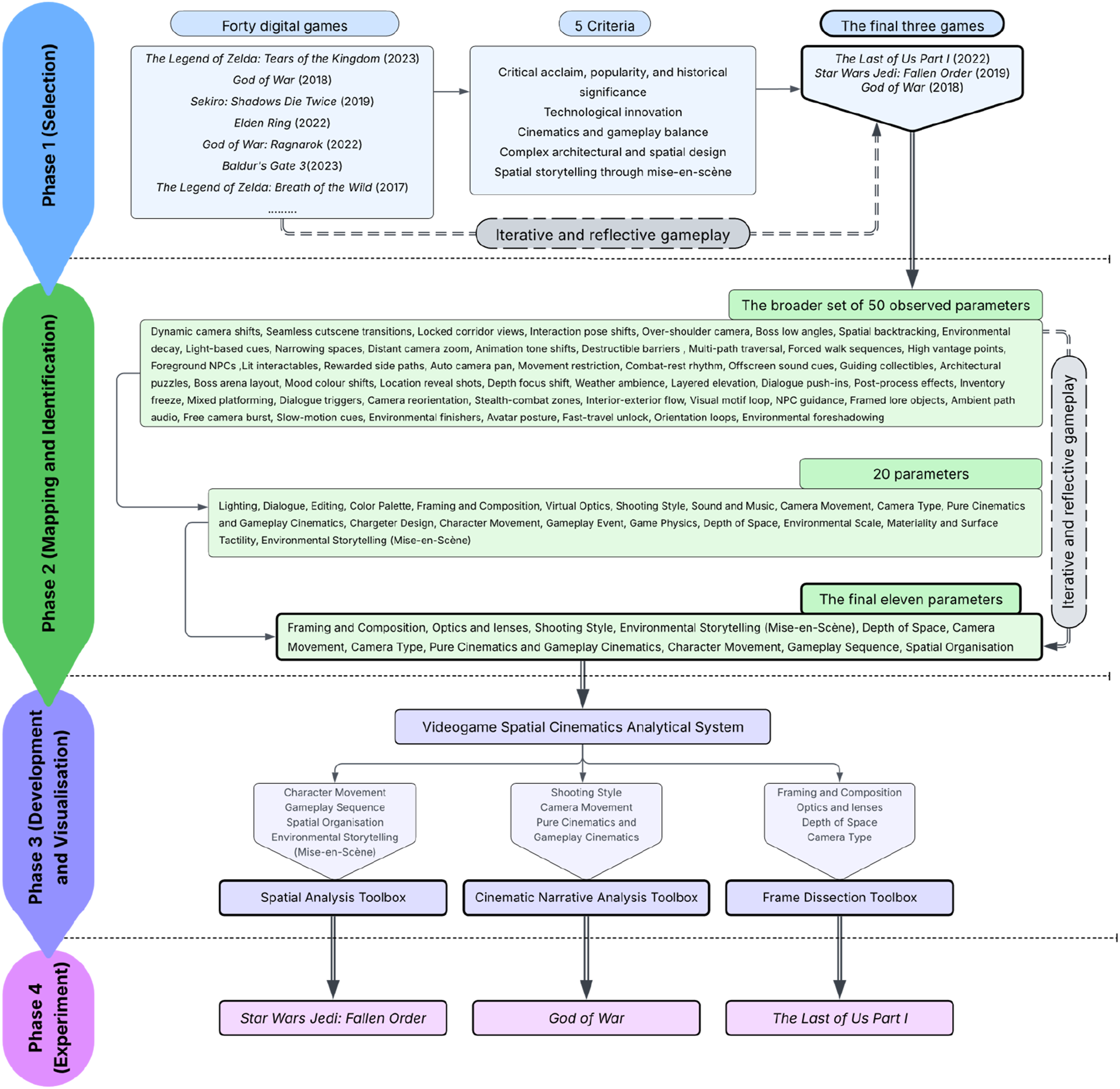

Building on the foundations established above, we followed a multi-phase research process with an iterative and reflective gameplay (‘deep play’) approach (Figure 1), grounded in qualitative game-studies traditions of analysing games through sustained interaction. The method combines Reflective Game Design (Macklin and Sharp, 2016) with Autoethnographic Game Analysis (Kamp, 2021; Väkevä, 2022) to support repeated engagement, reflexive interpretation, and the systematic extraction of spatial–cinematic features. While drawing on ludonarrative analysis, we shifted our emphasis away from ‘dissonance’ (Hocking, 2009) towards examining how gameplay, cinematics, and spatial design cohere within a broader cinematic and architectural context.

Figure 1. Research design: From research gap to experimental application of the Videogame Spatial Cinematics (VSC) analytical system.

To mitigate the subjectivity of reflective gameplay, we adopted a structured logging and coding protocol across repeated playthroughs, following Lincoln and Guba’s (1985: 290) criteria for ‘trustworthiness’ in qualitative inquiry. Sessions were documented through a standardised coding sheet capturing gameplay sequences, spatial configuration, camera behaviour, interaction rules, and visual evidence (frame captures and diagrammatic reconstructions). Candidate parameters were consolidated in a parameter log to enable recurrence checks across titles and iterative refinement through cross-checking and re-coding between authors – procedures supporting dependability and confirmability. Parameters were retained only when they recurred across the corpus and could be operationalised through toolbox outputs (plans/sections, storyboards, frame dissection). Appendix A provides templates constituting an explicit ‘audit trail’ (Lincoln and Guba, 1985: 319; Tracy, 2010: 842), supporting replicability.
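The retention rule described above can be illustrated with a short Python sketch. It is not the authors' instrument (their templates are provided in Appendix A): the `CandidateParameter` record, the `retain` function, and the log entries below are hypothetical and invented purely for illustration. The sketch keeps a candidate only if it was coded in every title of the corpus and has at least one associated toolbox output.

```python
from dataclasses import dataclass, field

# The three case-study titles named in the article.
CORPUS = {
    "The Last of Us Part I",
    "God of War",
    "Star Wars Jedi: Fallen Order",
}


@dataclass
class CandidateParameter:
    """One row of a hypothetical parameter log (structure assumed, not the authors')."""
    name: str
    observed_in: set[str] = field(default_factory=set)      # titles where the feature was coded
    toolbox_outputs: set[str] = field(default_factory=set)  # e.g. "plan/section", "storyboard"


def retain(candidates, corpus=CORPUS):
    """Keep candidates that recur across the whole corpus AND can be operationalised."""
    return [
        c.name
        for c in candidates
        if corpus <= c.observed_in and c.toolbox_outputs
    ]


# Invented example entries, for illustration only.
log = [
    CandidateParameter("Depth of space", set(CORPUS), {"frame dissection"}),
    CandidateParameter("Hypothetical one-off feature", {"God of War"}, {"storyboard"}),
    CandidateParameter("Hypothetical unmappable feature", set(CORPUS), set()),
]

print(retain(log))
```

Only the first entry survives both checks; the other two fail on recurrence and on operationalisability respectively.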

The development of the VSC framework followed four phases. Phase 1 (Selection) evaluated 40 games and selected three case studies. Phase 2 (Mapping and Identification) involved 40 h of reflective gameplay per title, repeated iteratively, to extract and refine 11 key spatial and cinematic parameters. Phase 3 (Development and Visualisation) organised, tested, and visualised these parameters within three analytical toolboxes: Spatial Analysis, Cinematic Narrative Analysis, and Frame Dissection. Phase 4 (Experiment) applied each toolbox to scenes from the case studies to assess the framework’s analytical capacity.

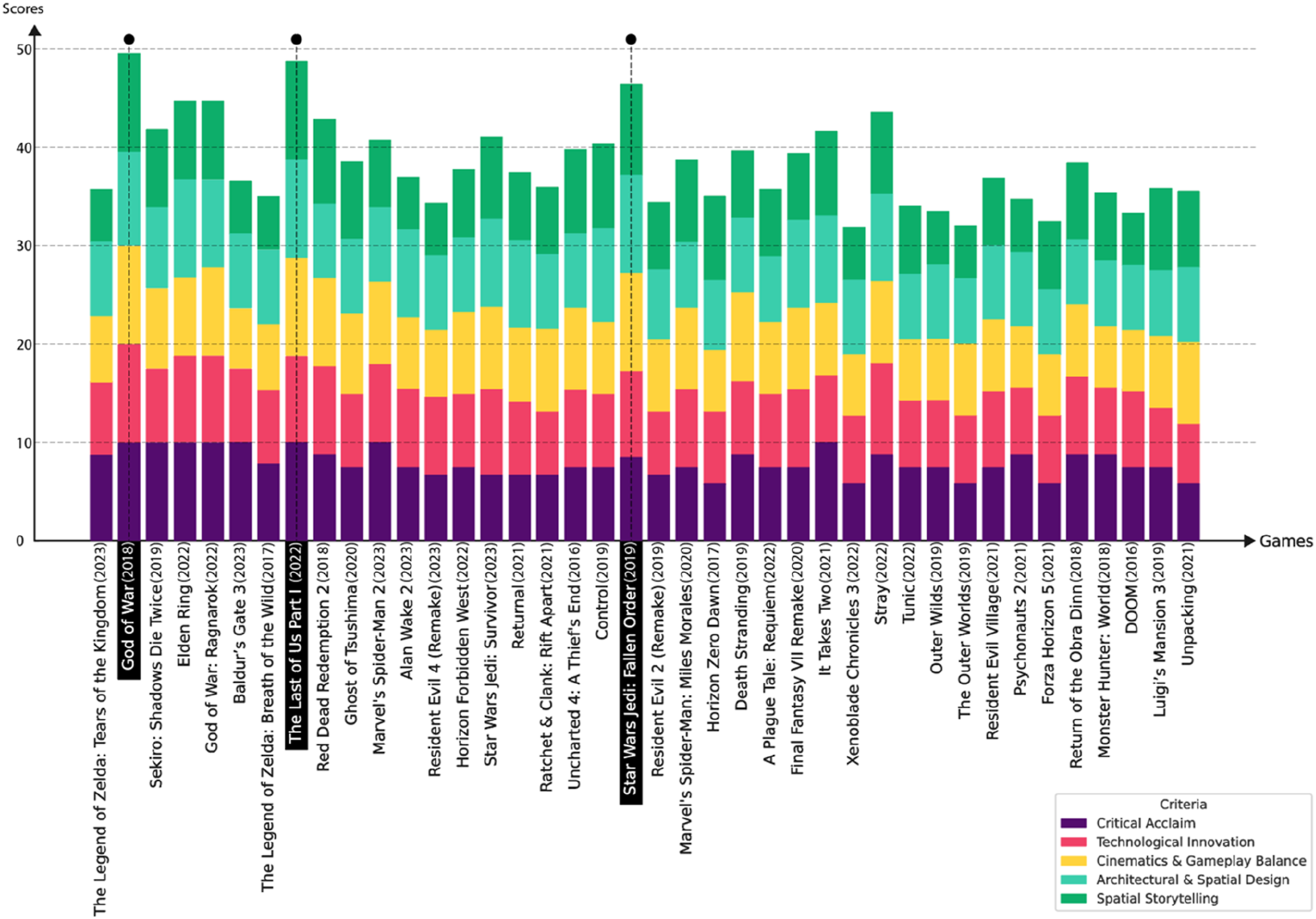

In Phase 1, we examined 40 prominent digital games released between 2016 and 2023 and evaluated them against five selection criteria:
• Criterion 1: Critical acclaim, popularity, and historical significance: the selection process prioritised games with high Metacritic scores and recognition from two leading awards, namely The Game Awards and the BAFTA Games Awards.
• Criterion 2: Technological innovation: the use of technological innovations such as innovative game cinematography, advanced motion capture, and procedural or generative space design techniques was considered.
• Criterion 3: Cinematics and gameplay balance: titles were prioritised where cinematics and gameplay are balanced and mutually reinforcing, such that cinematic sequences enhance traversal and interaction rather than interrupting them, maintaining a seamless experience and sustained immersion. Games dominated by minimal-play ‘interactive fiction’, where spatial engagement is largely subordinated to linear cinematic narration, were excluded (Montfort, 2011: 26).
• Criterion 4: Complex architectural and spatial design: games with complex architectural, interior, and urban spaces were prioritised.
• Criterion 5: Spatial storytelling through mise-en-scène: spatial narration originating in theme park design, where environments embed narrative cues through staging and designed detail as a ‘meeting point for gameplay and stories’ (Fernández-Vara, 2011: 2; Jenkins, 2004; Nitsche, 2008), aligning with film-studies notions of mise-en-scène (Chang and Hsieh, 2018: 6542; Girina, 2013; Logas and Muller, 2005).

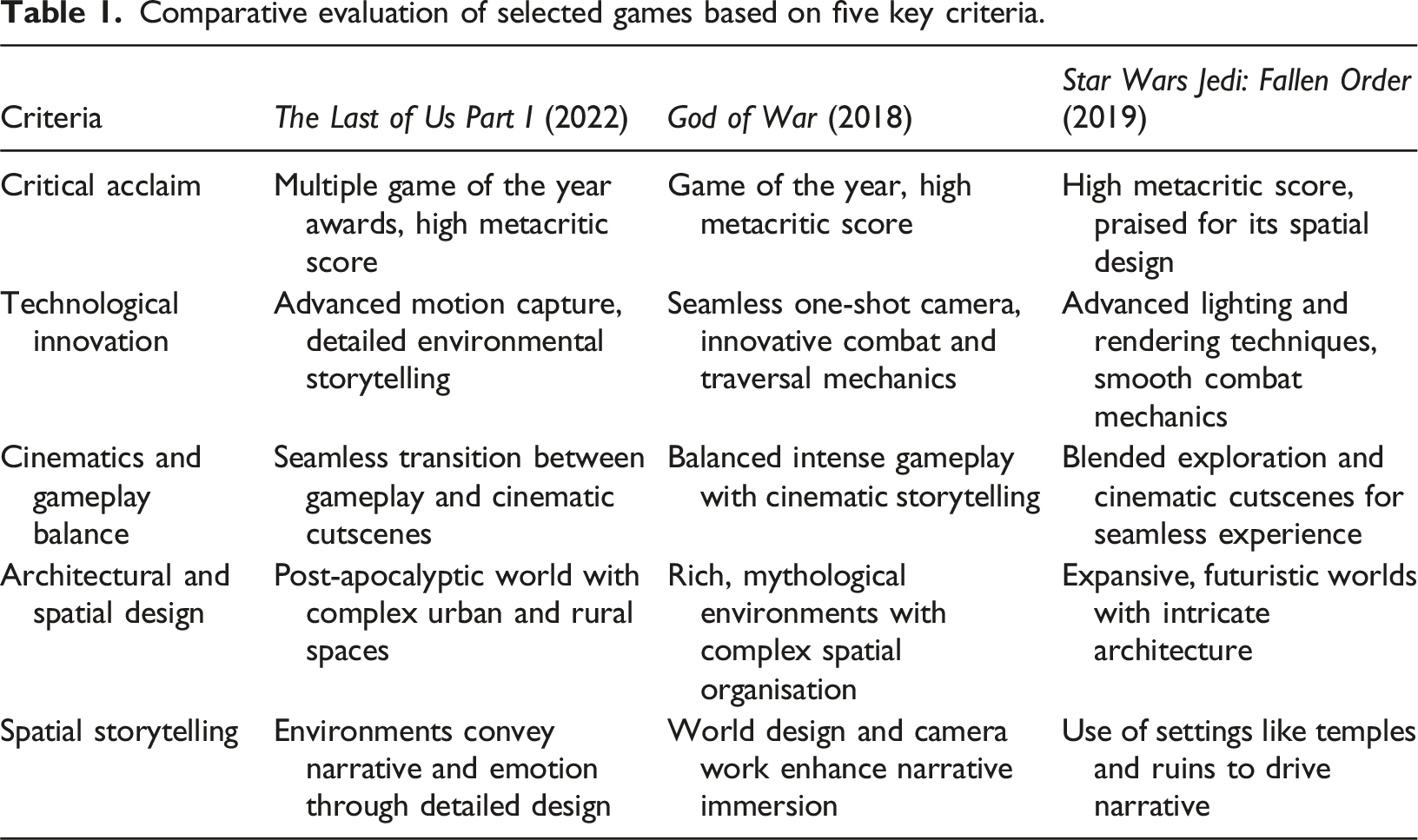

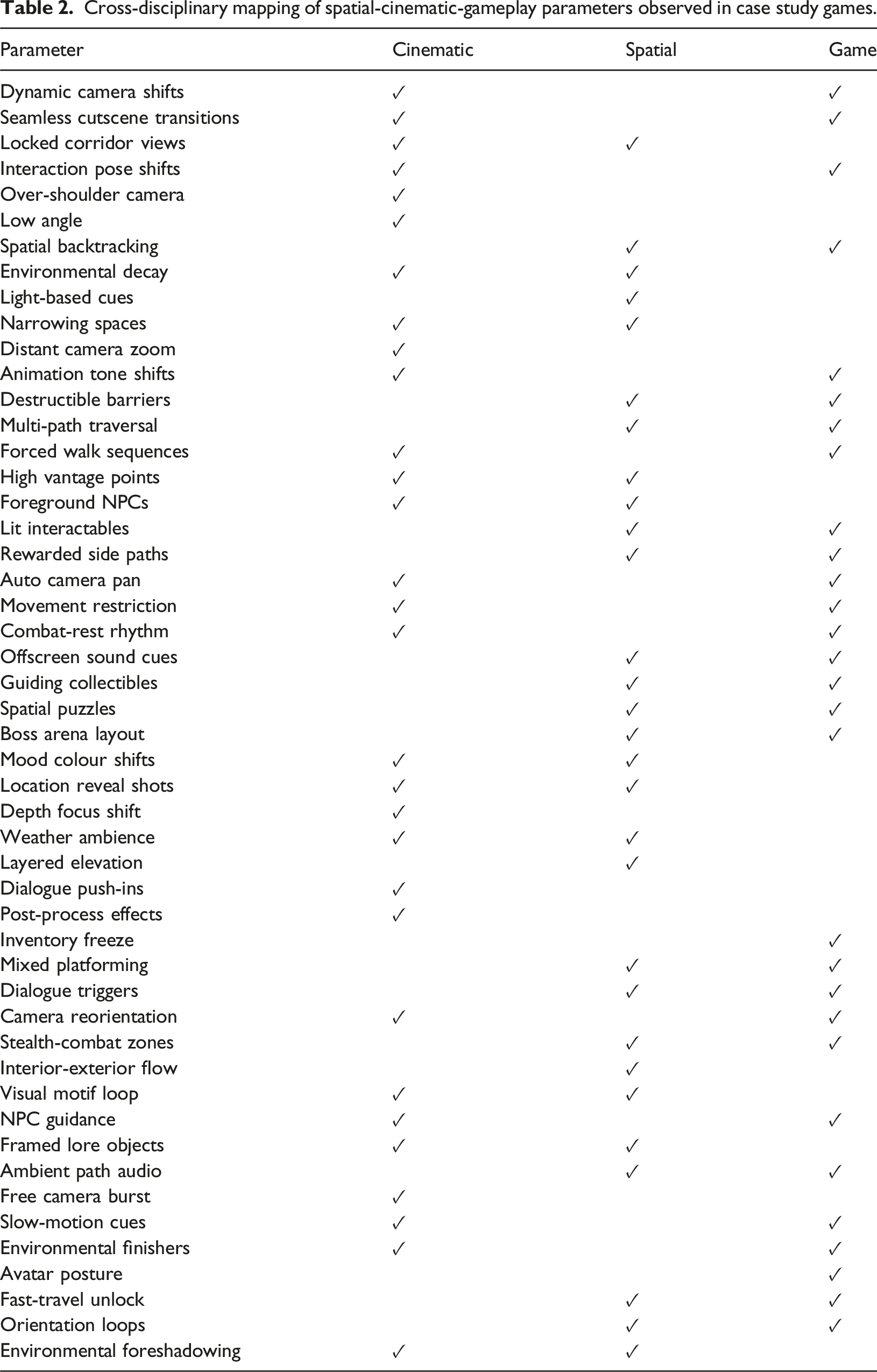

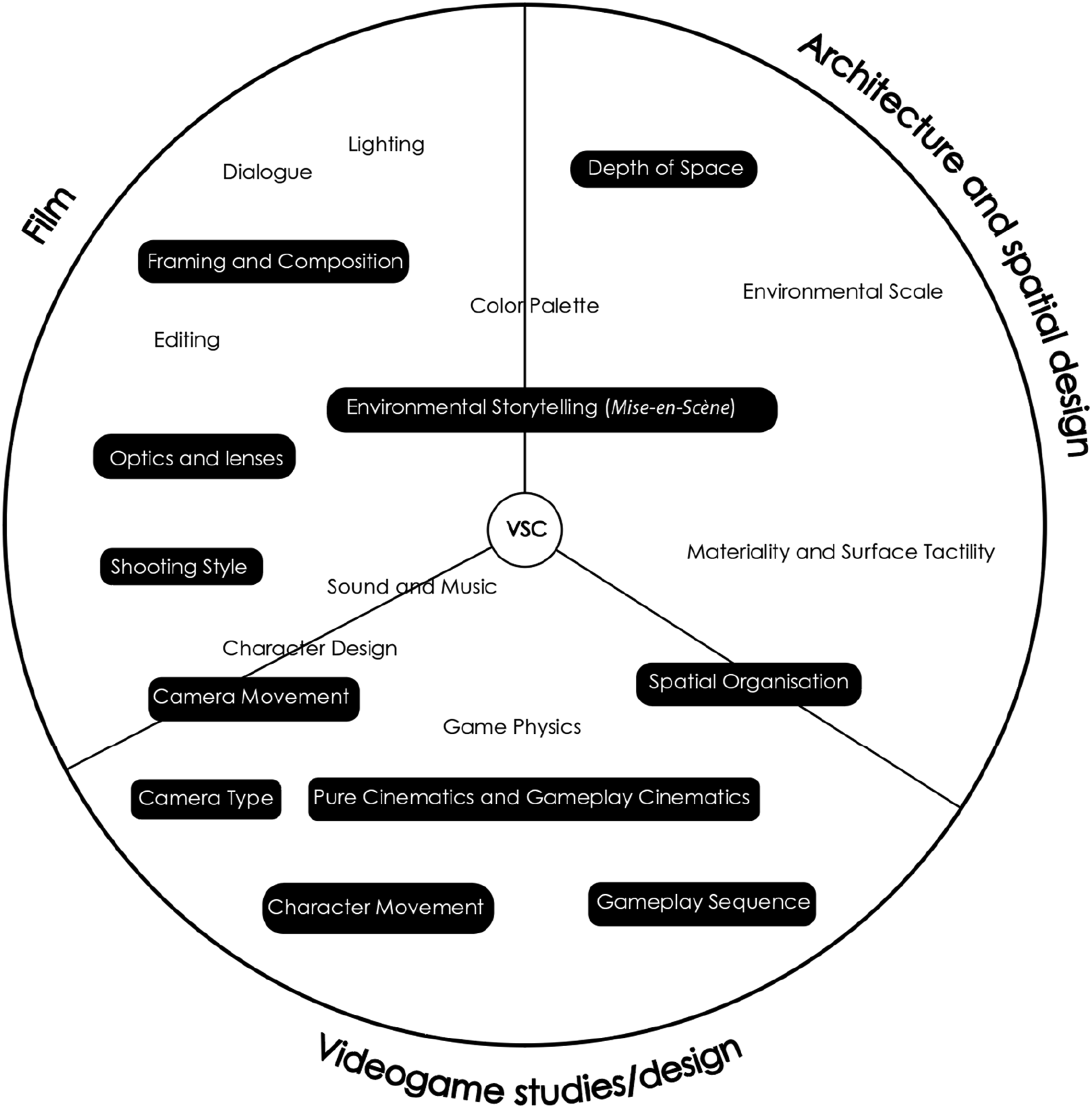

Each game was scored against these criteria, with a maximum of 10 points (Figure 2). This resulted in the selection of three action-adventure titles: The Last of Us Part I, God of War, and Star Wars Jedi: Fallen Order (Table 1). While genre diversity was considered, action-adventure games were retained as a controlled corpus because they consistently foreground authored spatial traversal, hybrid camera regimes, and tightly scripted interaction structures, enabling systematic parameter extraction. In Phase 2, 40 h of iterative reflective gameplay per title extracted an initial set of 50 candidate spatial–cinematic features (Table 2), which were documented through structured logging and coding and verified via cross-author re-coding and cross-title recurrence checks. This process distilled the dataset into 11 recurring parameters, retained for their recurrence across all three games and their theoretical coherence across film, architecture, and game studies (Figure 3).

Figure 2. Comparative stacked bar chart of game scores based on five key criteria (2016–2023).

Table 1. Comparative evaluation of selected games based on five key criteria.

Figure 3. Cross-disciplinary mapping of spatial-cinematic-gameplay parameters observed in case study games. This diagram shows the distribution of these parameters across the three disciplines, which emphasises their overlapping nature and interdisciplinary relevance.

This process yielded 11 recurrent parameters that structure the VSC framework (highlighted in Figure 3). While some parameters (e.g., environmental storytelling/mise-en-scène) span multiple disciplines, others (e.g., virtual optics) are more domain-specific. Parameters are treated as analytical dimensions rather than discrete techniques, allowing stylistic variation across titles while maintaining analytic consistency. The final 11 parameters are as follows:
(1) Pure versus gameplay cinematics: Cutscenes are defined as pure (non-interactive) cinematics, while cinematic techniques embedded in interactive play are termed gameplay cinematics. Their ratio, pacing, and transitions shape spatial experience.
(2) Shooting style: Gameplay camera work often emulates film styles (e.g., handheld, Steadicam, tripod, and drone), modulating tension (handheld) or spectacle/scale (crane/drone).
(3) Camera movement: Camera motion typically aligns with avatar movement within system-, player-, or hybrid-controlled regimes (Haigh-Hutchinson, 2009), enabling actions such as pan/tilt/arc.
(4) Framing and composition: Composition positions the avatar and key spatial cues within the frame to guide navigation and attention.
(5) Spatial organisation: Spatial elements (paths, thresholds, platforms, frames, open fields) are orchestrated as navigational schemes that structure progression and encounter flow.
(6) Camera type: First-person, third-person, or fluid perspectives shape immersion and the sense of direct engagement with the game world (Cicchirillo, 2020: 2; Denisova and Cairns, 2015).
(7) Depth of space: Depth is constructed via foreground–middleground–background layering to render 3D space legible on a 2D screen.
(8) Environmental storytelling (mise-en-scène): Narrative and play are communicated through object placement, NPC positioning, and architectural cues that guide navigation and affect.
(9) Character movement: Avatar locomotion and abilities (e.g., climb, stealth, and glide) structure traversal and condition spatial access.
(10) Gameplay sequence: Objective chains organise play into coherent progressions; sequencing clarifies cinematic narrative rhythm, often in ‘do X to reach Y’ structures (Frasca, 2004: 230).
(11) Optics and lenses: Virtual camera traits (focal length, lens effects, and focus pull) mimicking real optics.


Building on the parameters derived in Phase 2, Phase 3 consolidates the VSC framework by organising the 11 defining parameters (Figure 3) into three interlinked toolboxes – Spatial Analysis, Cinematic Narrative Analysis, and Frame Dissection – each targeting a distinct layer of the spatial-cinematic apparatus. The following sections explain the development, structure, and significance of each toolbox.

Videogame spatial cinematics

This section uses a scene from Star Wars Jedi: Fallen Order to demonstrate how the analytical system works in practice. The following sections will ground the relevance and utility of the three toolboxes in understanding the game spaces.

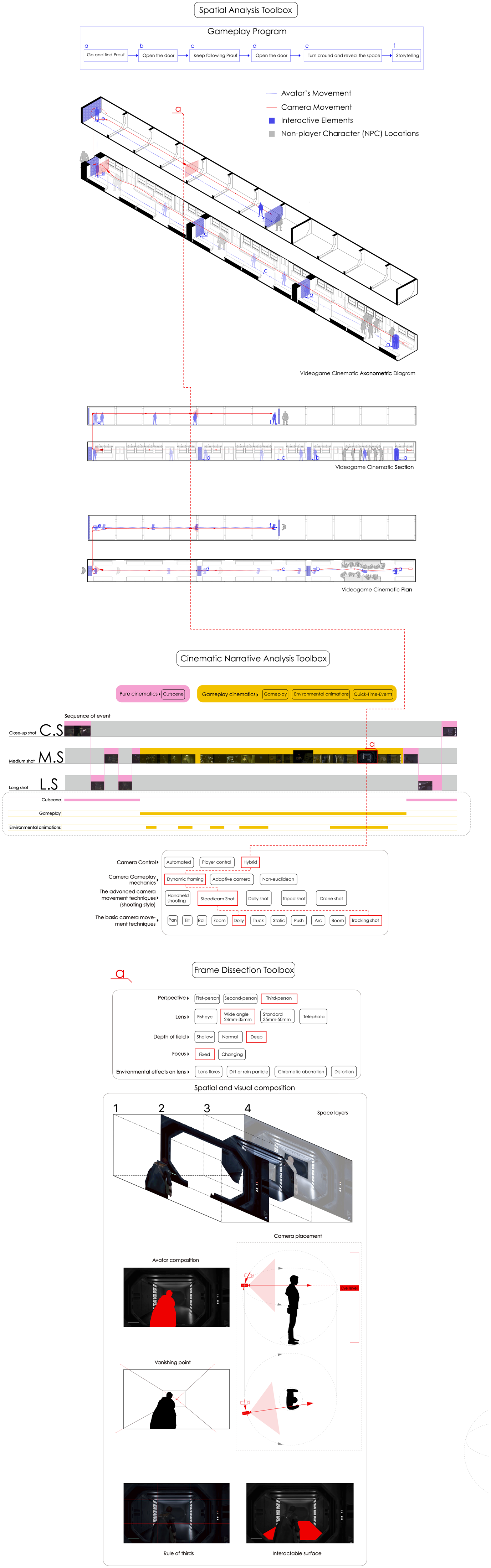

Spatial Analysis Toolbox

Game spaces are ‘spatial constructs’ that are inherently ‘the product of architectural design processes’ (Adams, 2003: 1; Khalili and Ma, 2024; McGregor, 2007: 537; Totten, 2019). The Spatial Analysis toolbox was developed to examine how game cinematics responds to the spatial organisation of game environments. The toolbox includes a range of conventional architectural tools, such as floor plans, axonometric drawings, and sectional diagrams. In architecture and other spatial design disciplines, these techniques are essential for ‘revealing the essence of spatial ideas and the underlying order of architectural composition’ (Ching, 2014: 27). Similarly, as some videogame theorists have noted, these analytical and representational techniques are vital to designing and analysing complex spaces within videogames, where ‘spatiality’ plays a key role (Aarseth, 2007: 44; Jenkins, 2004: 121; Flynn, 2004: 52).

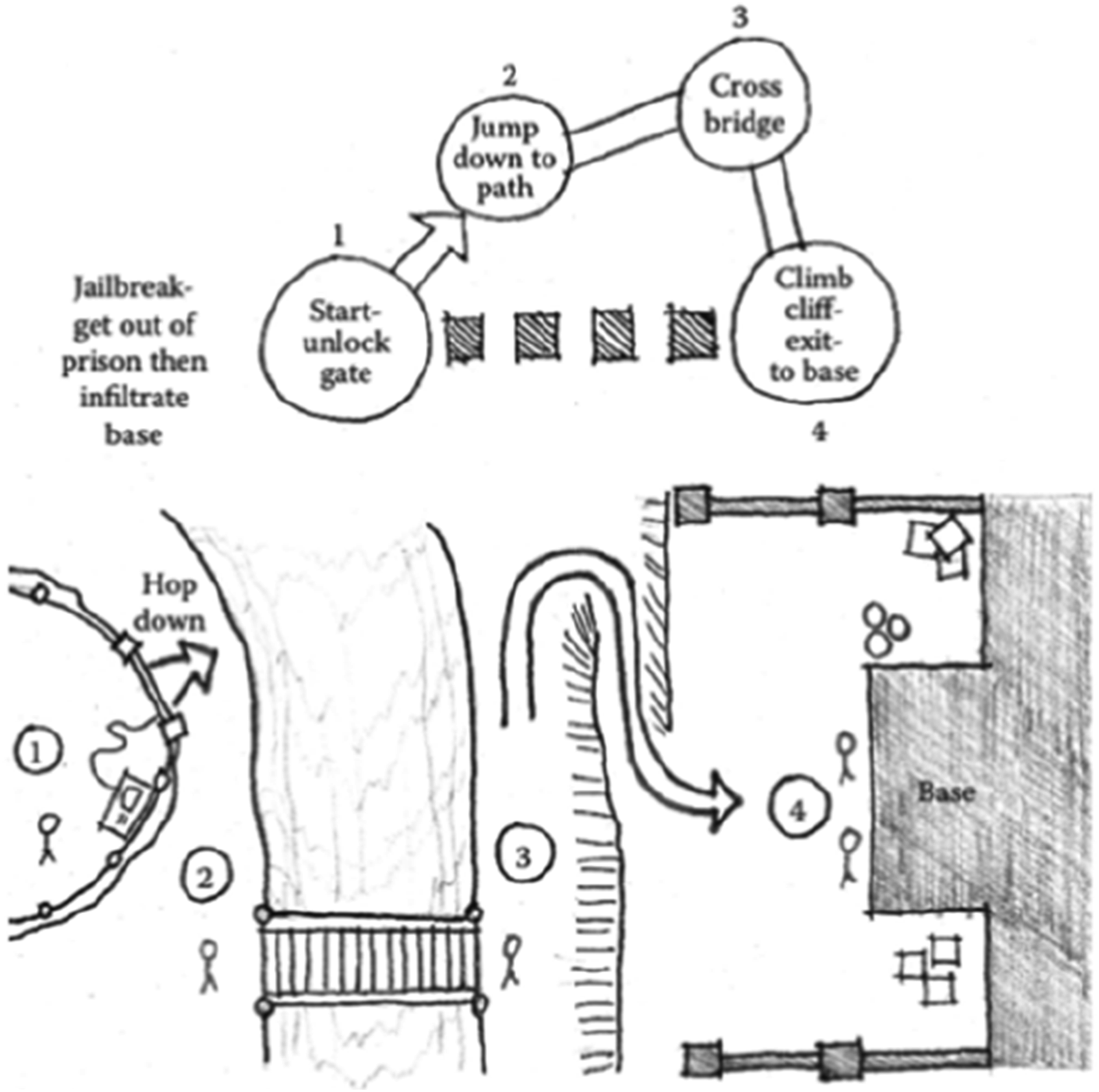

The significance of architectural diagramming techniques in the process of game design is often overlooked by those outside the game design and level design practice. According to videogame scholar Christopher Totten, game designers draw at least a diagrammatic floor plan to organise game spaces (Totten, 2019: 66). Floorplan diagrams in videogame design processes do more than outline the environment’s layout. Sinem Cukurlu (2019: 237) explains that plan-like diagrams help game designers chart the player’s spatial progression, ‘actions and possible strategies’. For example, Figure 4 demonstrates how movement paths, spatial interactions, and key spatial elements such as entry/exit points are designed through a plan-like diagram by game designers.

Figure 4. Game space plan sketch. © 2019 Christopher Totten. Source: Christopher Totten, Architectural Approach to Level Design, Florida: CRC Press, figure 4.27.

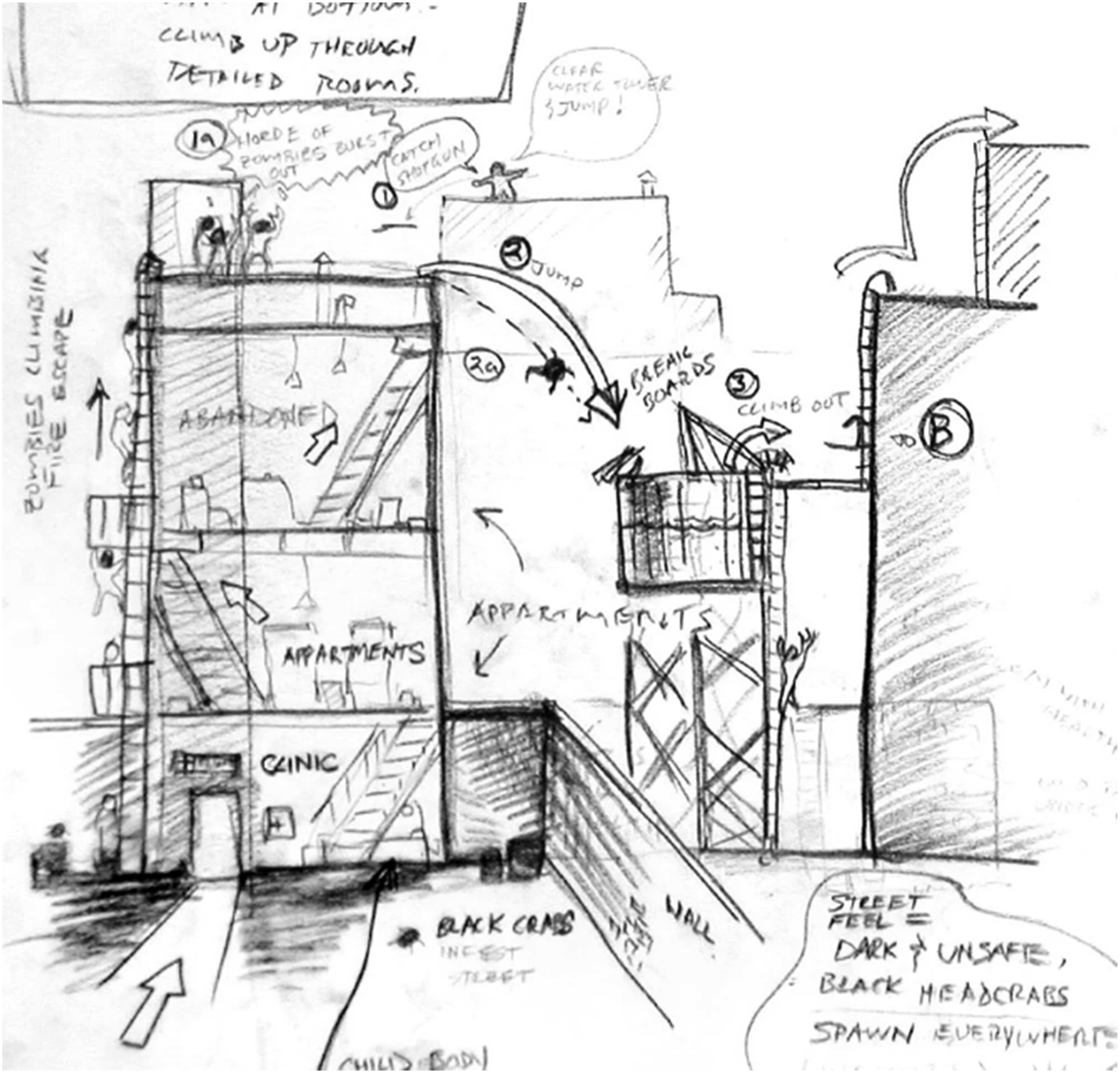

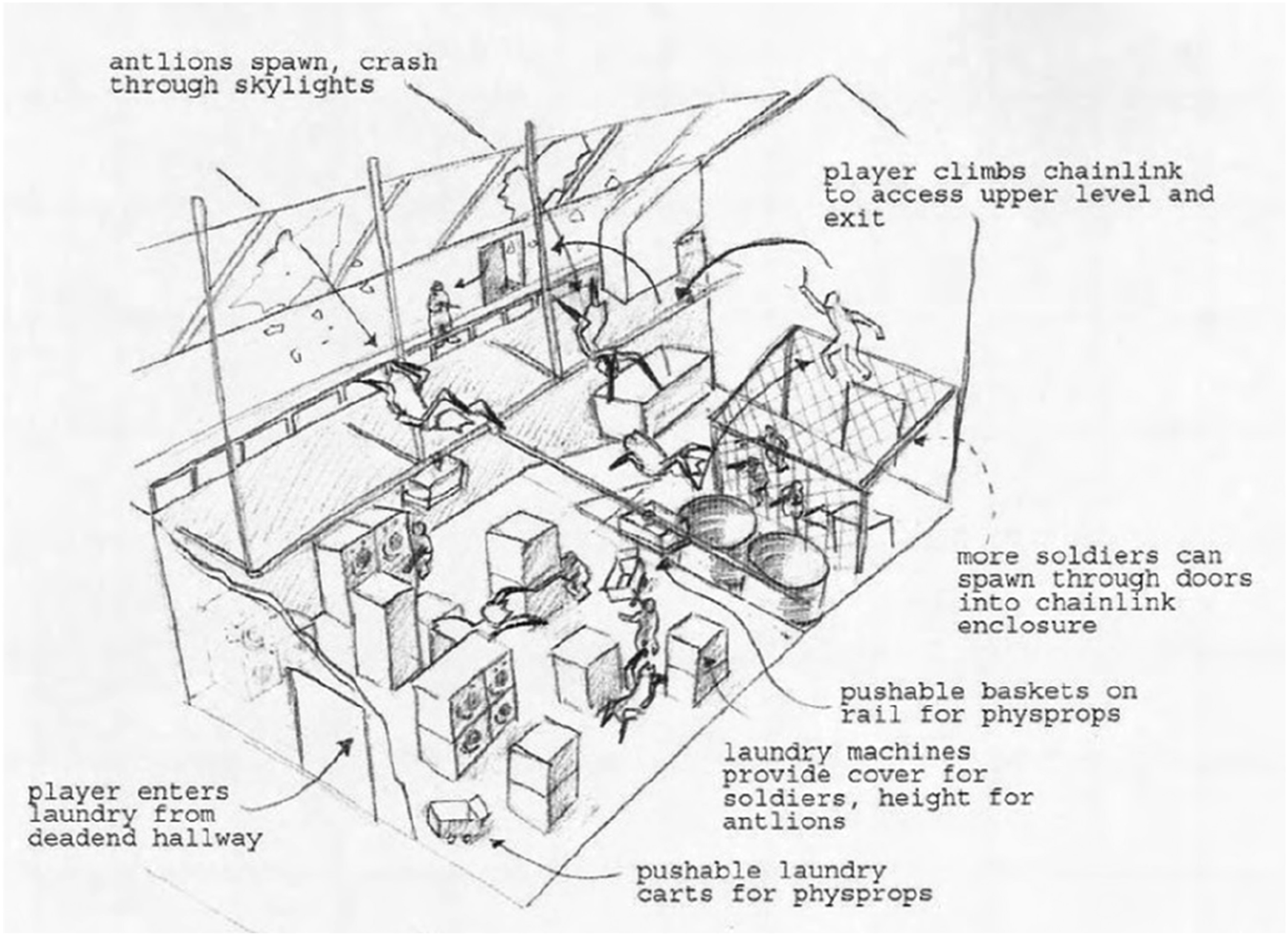

Sections, in contrast, reveal the vertical relationships in multilayered game spaces (Figure 5). In key game design sources, videogame designers are advised to draw section and elevation diagrams to design for ‘height-based spatial transitions’ and move ‘back and forth between plan and section’ to create sophisticated vertical and horizontal relationships (Frederick, 2007: 68; Salmond, 2016: 159; Totten, 2019: 68). Within the realm of videogame design, axonometric drawings (e.g. Figure 6) are also seen as ‘vital design’ tools that are helpful to showcase the ‘three-dimensionality’ of game spaces (Salmond, 2016: 177; Totten, 2019). The key difference here is that the spatial diagramming tools are used to map gameplay action, camera behaviour and cinematics in addition to spaces.

Figure 5. Section sketch © Eric Simon Kirchmer. Source: Eric Simon Kirchmer, ‘The Half-Life & Portal Encyclopedia’, accessed October 14, 2024, https://half-life.fandom.com/wiki/Eric_Kirchmer.

Figure 6. Game axonometric drawing © Eric Simon Kirchmer. Source: Eric Simon Kirchmer, ‘The Half-Life & Portal Encyclopedia’, accessed October 14, 2024, https://half-life.fandom.com/wiki/Eric_Kirchmer.

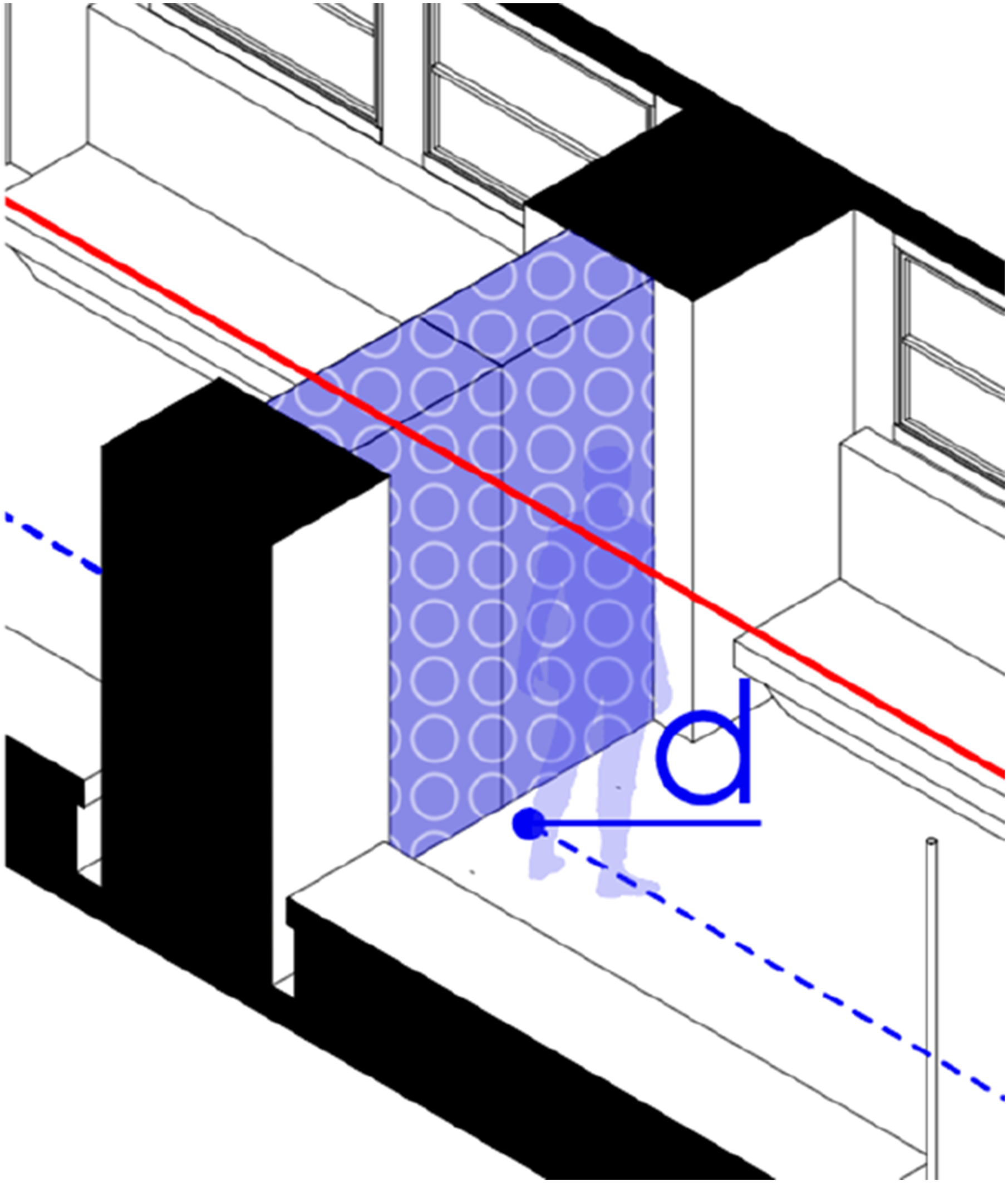

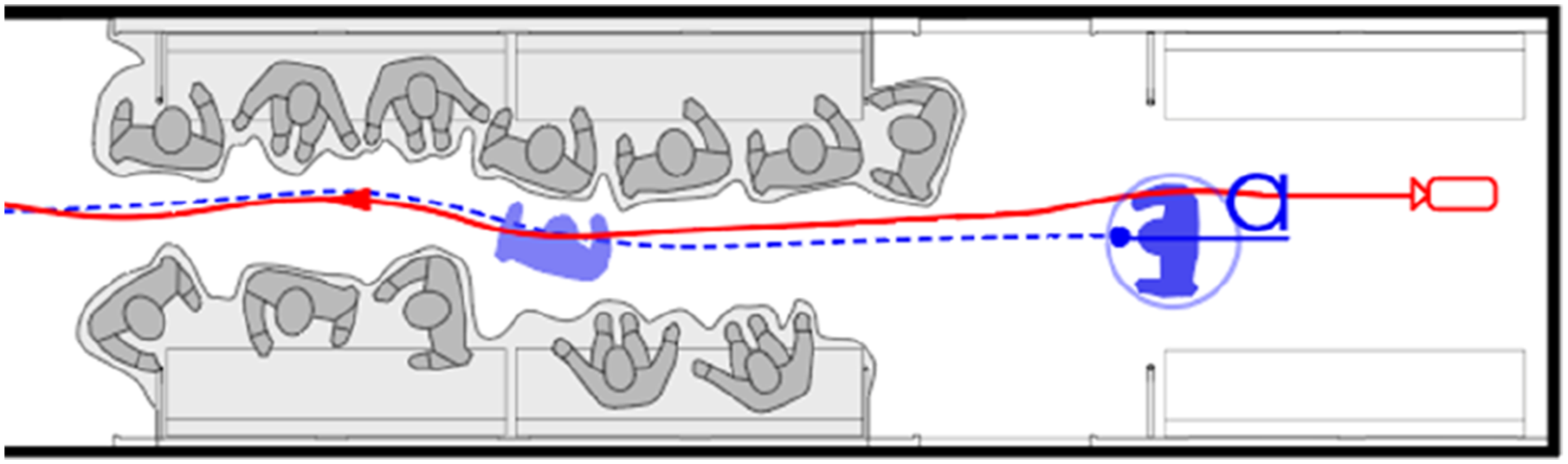

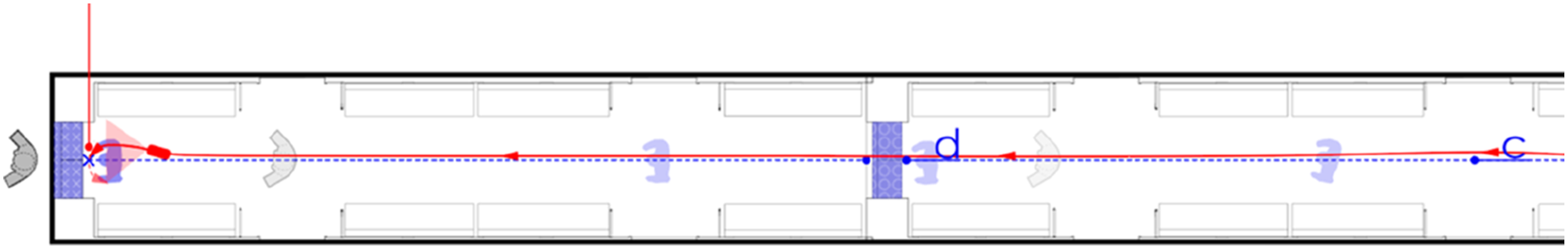

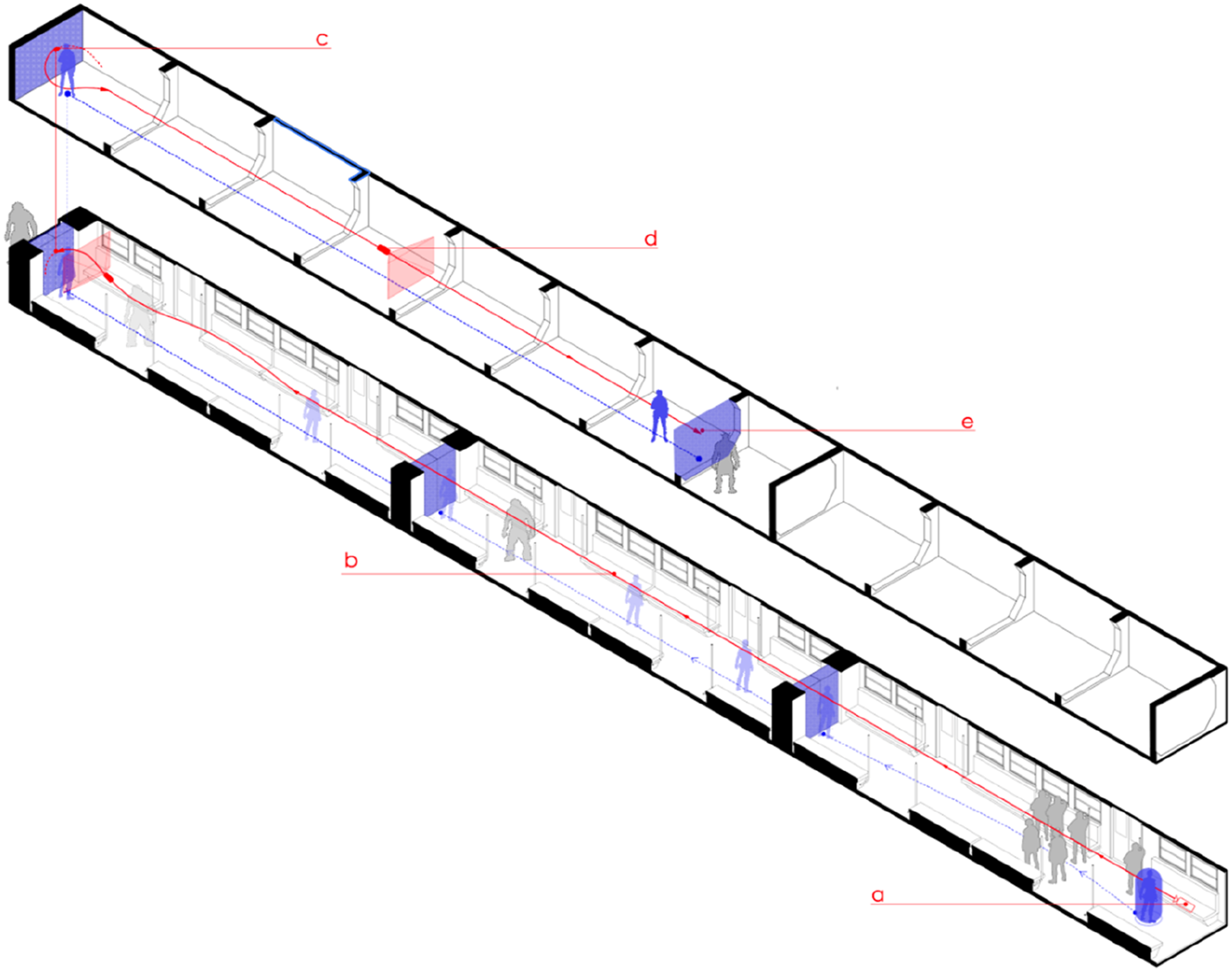

We used conventional spatial analysis tools as part of the Spatial Analysis Toolbox to deconstruct a scene from Star Wars Jedi: Fallen Order (Figure 7). The analysis focused on three parameters – character movement, camera movement, and spatial layout – represented through plan, section, and axonometric drawings. This showed that conventional architectural diagrams, when adapted as analytical tools, can complement one another and clarify spatial relationships. For example, in moment (a), the plan depicts the avatar’s approach to a crowded area; the section reveals vertical organisation; and the axonometric view provides a three-dimensional understanding of the avatar’s navigation through both environment and non-player characters.

Figure 7. Spatial analysis toolbox applied to a case study, illustrating gameplay programme, avatar movement, camera movement, interactive elements, and NPC locations through axonometric, section, and plan diagrams.

The avatar’s movement (blue dashed line) corresponds directly to the gameplay programme, functioning as a ‘space exploration resource’ that activates game-space mechanics (Ouriques et al., 2019: 37). In Figure 7, the player first moves left in (a), interacts with a door in (b), and continues toward Prauf in (c). Through this navigation, the gameplay programme’s structure becomes progressively defined. The interplay of actionable and non-actionable objects creates a spatial rhythm: actionable elements establish predictable beats, while non-actionable ones intentionally disrupt them. These disruptions challenge player expectations, prompting strategic adjustment and encouraging exploration through alternative routes. As shown in Figures 8 and 9, actionable doors (circles) set the rhythm, while non-actionable doors (crosses) interrupt it, generating new patterns of spatial engagement.

Figure 8. An interactive element with a blue-shaded area and circles indicating actionable zones.

Figure 9. A non-actionable element, represented by a blue-shaded area with crosses.

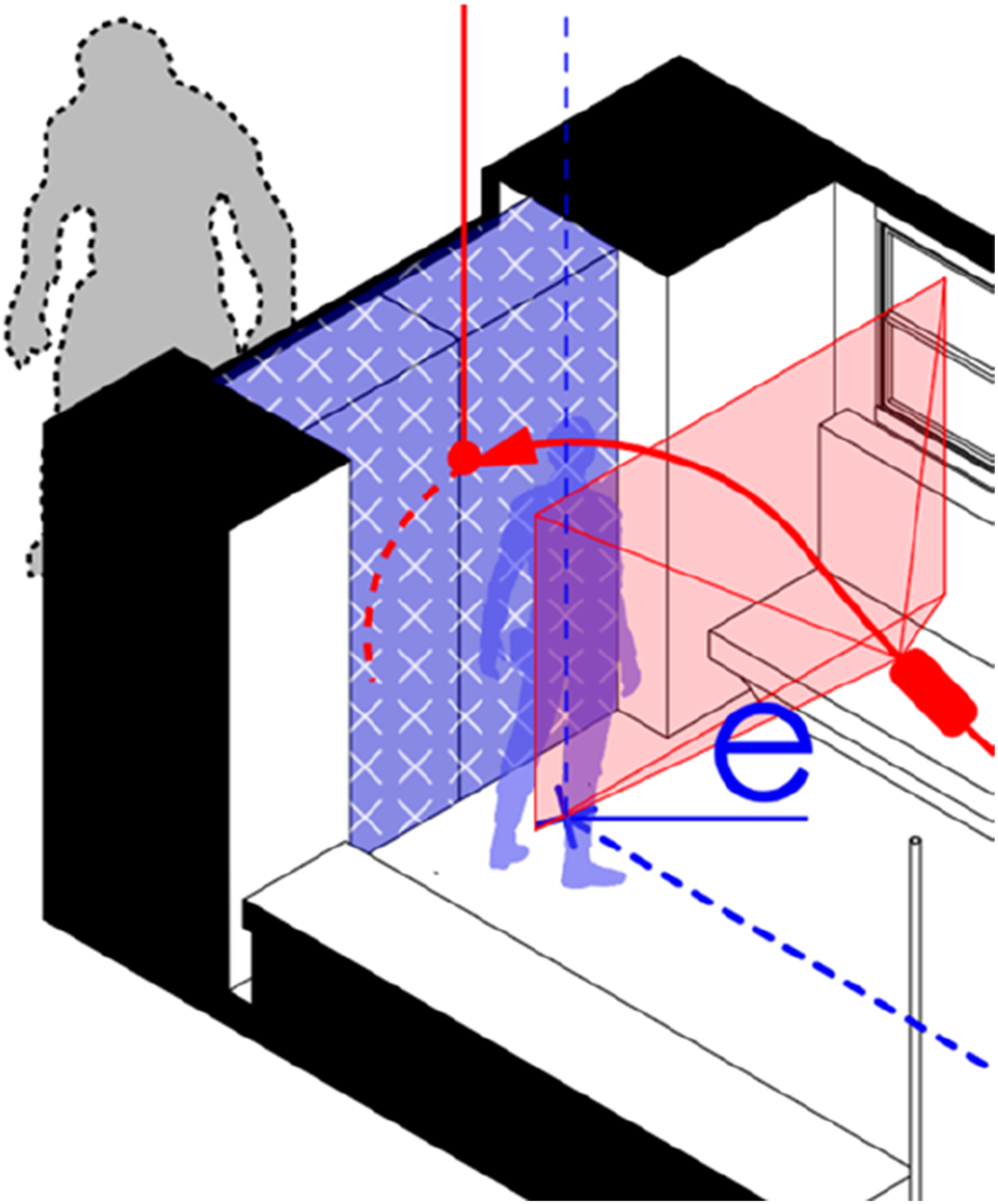

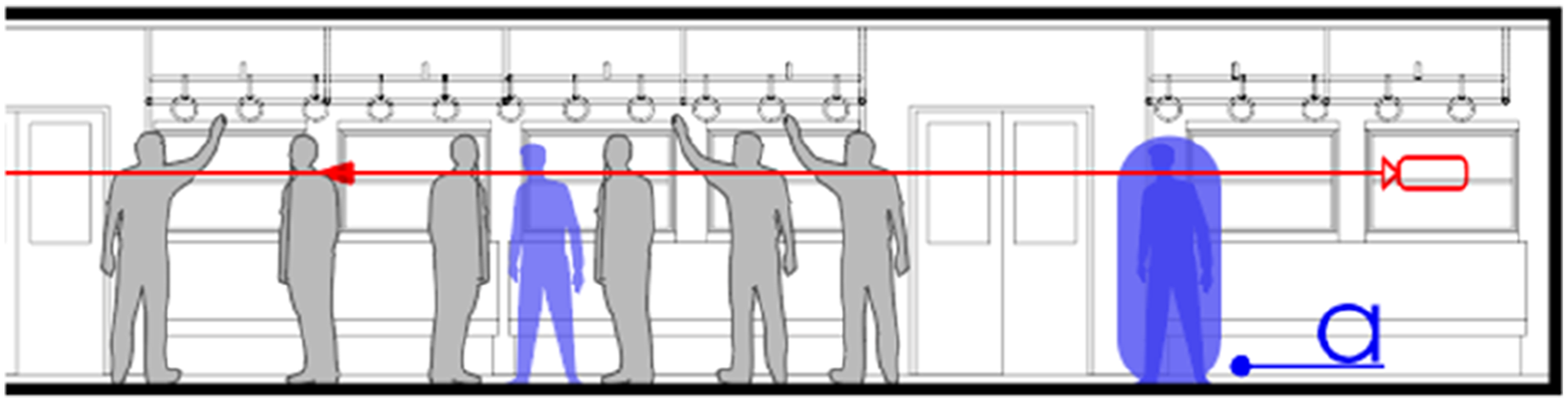

Through the Spatial Analysis Toolbox, we mapped both architectural features and ‘softer’ elements such as NPCs, which significantly shape spatial experience. As shown in Figure 10 (section) and Figure 11 (plan), NPCs (grey areas) in the train scene create obstacles and guide navigation. Their placement enhances atmosphere: static figures provide milieu and texture, while moving ones act as ‘event triggers’ that prompt decisions (Domsch, 2019: 116), producing ‘spatial noise’ in crowded scenes or solitude in empty ones, and keeping gameplay closely tied to story (Salmond, 2016: 146).

Figure 10. NPC locations in the section view, depicted in grey.

Figure 11. NPC locations in the plan view, depicted in grey.

In Figure 12, for example, the NPC Prauf moves leftward, his trajectory aligning with the player’s path and anchoring exploration. Tracing camera paths further revealed how positioning structures engagement. Figure 13 illustrates this: the camera begins behind the avatar (a), frames the NPC through horizontal and vertical alignment (b), and rotates clockwise to reveal the wider environment (c). Camera behaviour here proves as critical as spatial layout or interactable objects, actively structuring perception and flow.

In conclusion, the Spatial Analysis Toolbox is a multilayered set of tools that uses conventional architectural methods to facilitate a detailed examination of not just spaces but also game design-driven aspects like avatar movement, interactive elements, camera movements, and NPC placements, and their combined influence on the player’s spatial experience.

Figure 12. The plan view shows the NPC Prauf, depicted in grey, moving from right to left. The varying transparency levels illustrate his progression across the space.

Figure 13. An axonometric view of the camera’s movement (red line) from point (a) to point (e).

Cinematic Narrative Analysis Toolbox

The Cinematic Narrative Analysis Toolbox is designed to analyse how spatial elements intersect with cinematic narrative features such as events, sequences, and camera work. It integrates established tools from film and game analysis – storyboards (screenshots of key scenes), shot scale diagrams, and camera movement mappings – all crucial for deconstructing spatial cinematics in games.

Storyboarding, in particular, is one of the cross-disciplinary methods in film, architecture, and game design, used to visualise evolving spatial narratives and their sequential flow (Cullen, 2012; Heussner et al., 2015; Price and Pallant, 2015). Similar to film, in game design, storyboards are defined as ‘a sequence of images’ that convey evolving action and narrative through ‘seriality’ (Price and Pallant, 2015: 14). They serve as tools for designing ‘narrative development, editing, camera angles, lens choice, and camera movement’ (Tan, 2019: 235). In architecture and urbanism, well-established approaches such as Cullen’s ‘serial vision’ and Appleyard and Lynch’s ‘mobile viewing’ notations used storyboard-like analysis to map the ‘coherent drama’ of spatial experience (Appleyard et al., 1964; Cullen, 2012: 9).

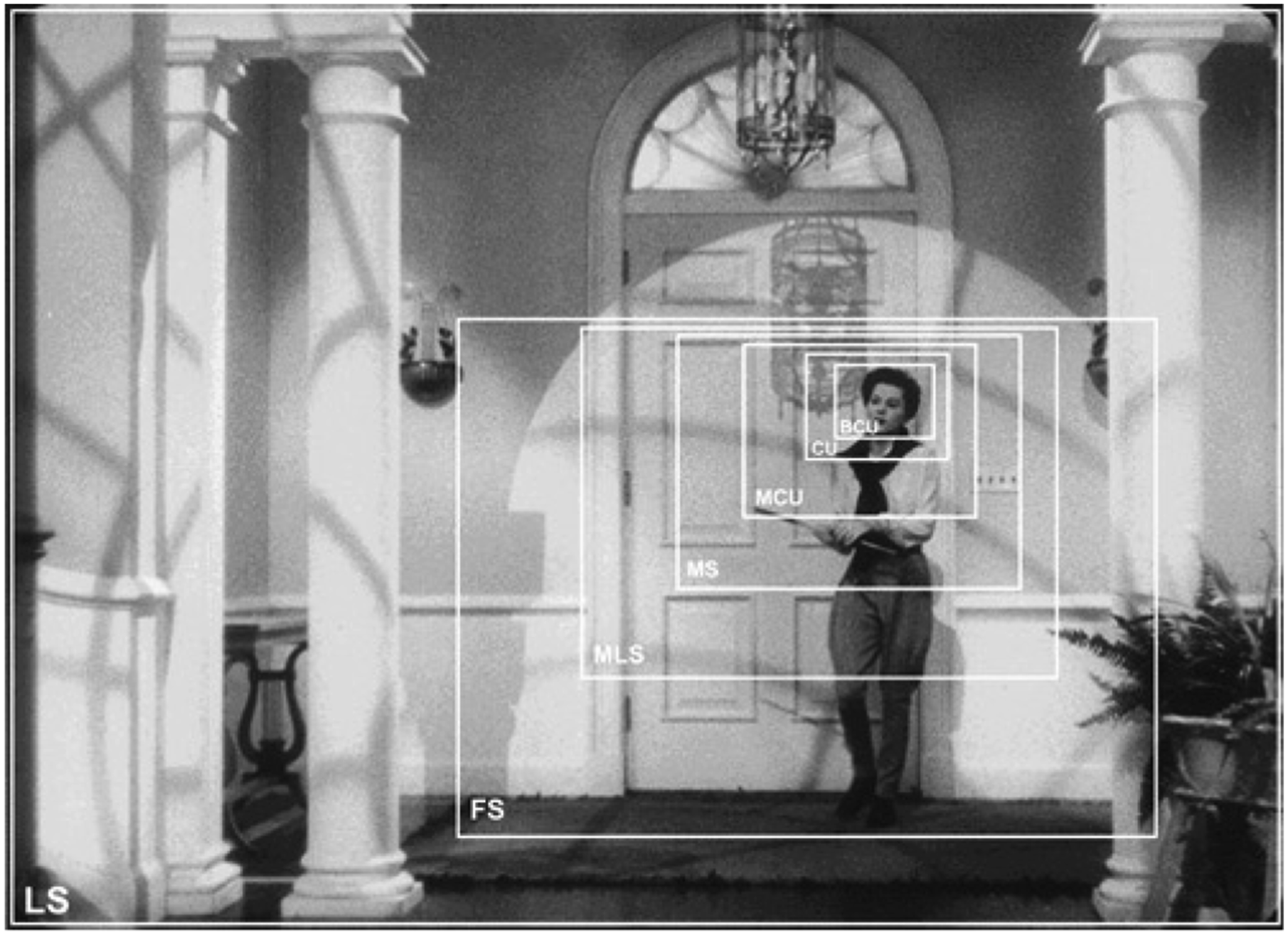

Shot scale (close-up, medium shot, or long shot), as a key element of camera work, shapes how viewers and players perceive space and narrative in moving images. In film theory, shot scale is defined as ‘the relative size of a character within the frame’ (Cutting, 2016: 1720). According to film theorist Barry Salt (1983: 155), a close-up frames the ‘head and shoulders’, a medium shot captures ‘from below the hip to above the head’, and a long shot shows ‘the full height of the body’ (Figure 14). Each shot scale shapes the spatial perception of the viewer by creating different levels of emotional impact within the space (Khalili, 2023).

Figure 14. Shot scales diagram. © 1983 Barry Salt. Source: Barry Salt, Film Style and Technology: History and Analysis, London: Starword.

In game spaces, different shot scales serve specific spatial functions and influence gameplay. A close-up (CU) highlights detailed interactions, enhancing emotional engagement and narrative focus. A medium shot (MS) or a long shot (LS) offers a broader view, which helps players assess their surroundings, spot danger, and plan actions. In particular, long shots provide the necessary spatial context for strategic decision-making, such as choosing optimal movement paths or planning combat approaches.

Camera work in videogames similarly borrows from real-world equipment. Virtual equivalents of the dolly, crane, Steadicam, or tripod shape in-game cinematography, each with strengths and limits that designers adapt for player experience. For instance, the Steadicam’s ability to ‘smooth out imbalances and jerks, creating a floating effect’ supports fluid tracking during exploration sequences (Bordwell et al., 2010: 196). Similarly, static shots created with tripod-like framing convey stability and focus on quieter narrative beats, while drone-style movements reveal expansive environments and emphasise scale. These techniques mirror how real cameras achieve dynamic cinematics within technical limits (Totten, 2019).

The combination of shooting styles with fundamental camera movements ensures cinematic coherence. Fundamental camera movements, described as ‘both spatial and a spatializing entity’, are the basic apparatuses ‘dramatising events and the spaces in which actions unfold’ within gameplay (Nitsche, 2008: 83). Movements such as pan, tilt, and tracking shots position the player within the game world and guide attention to key elements. Tracking shots, for example, follow the avatar while sustaining immersion, ‘correlating with player perceptions of space in games’ (Totten, 2019: 198).

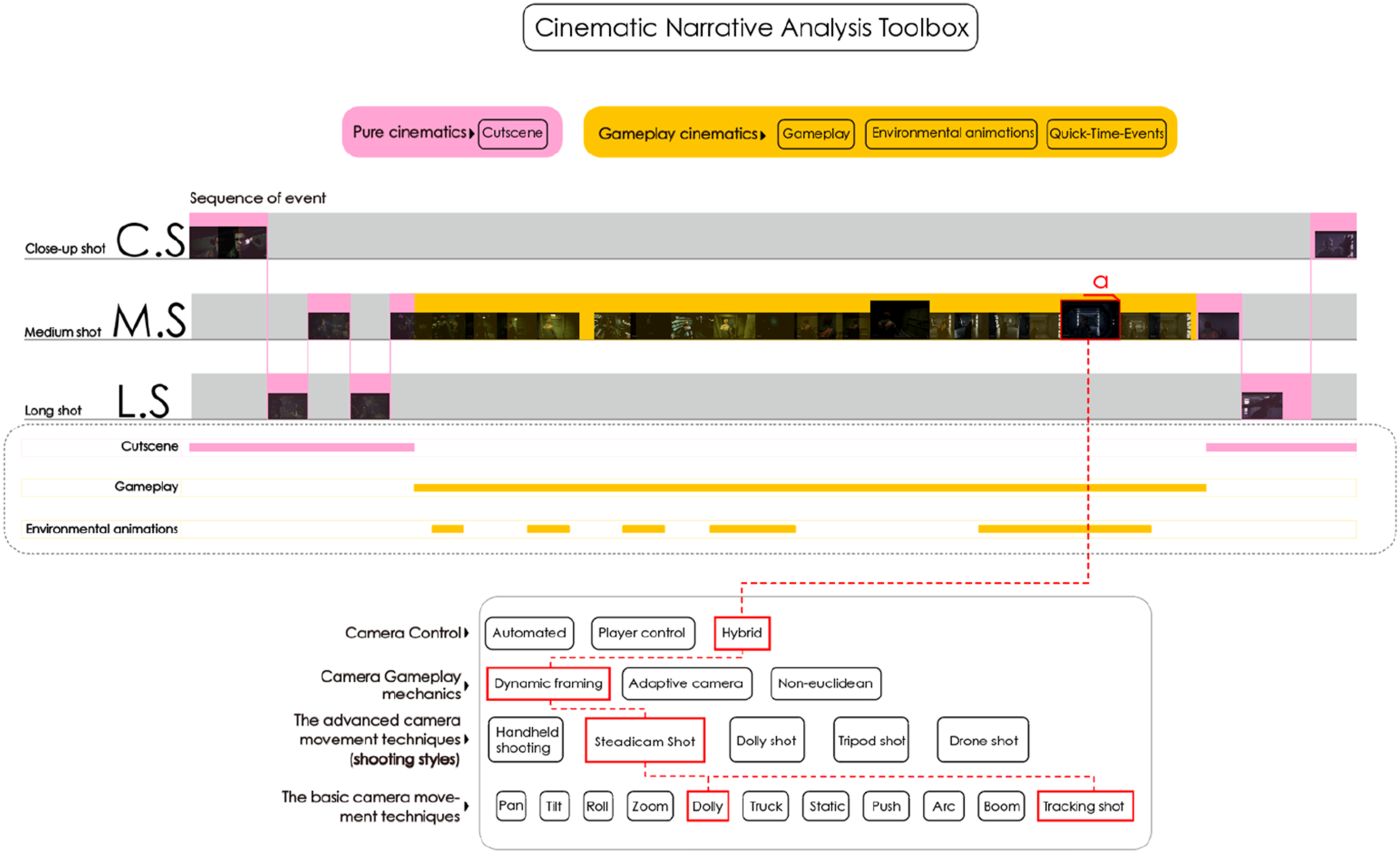

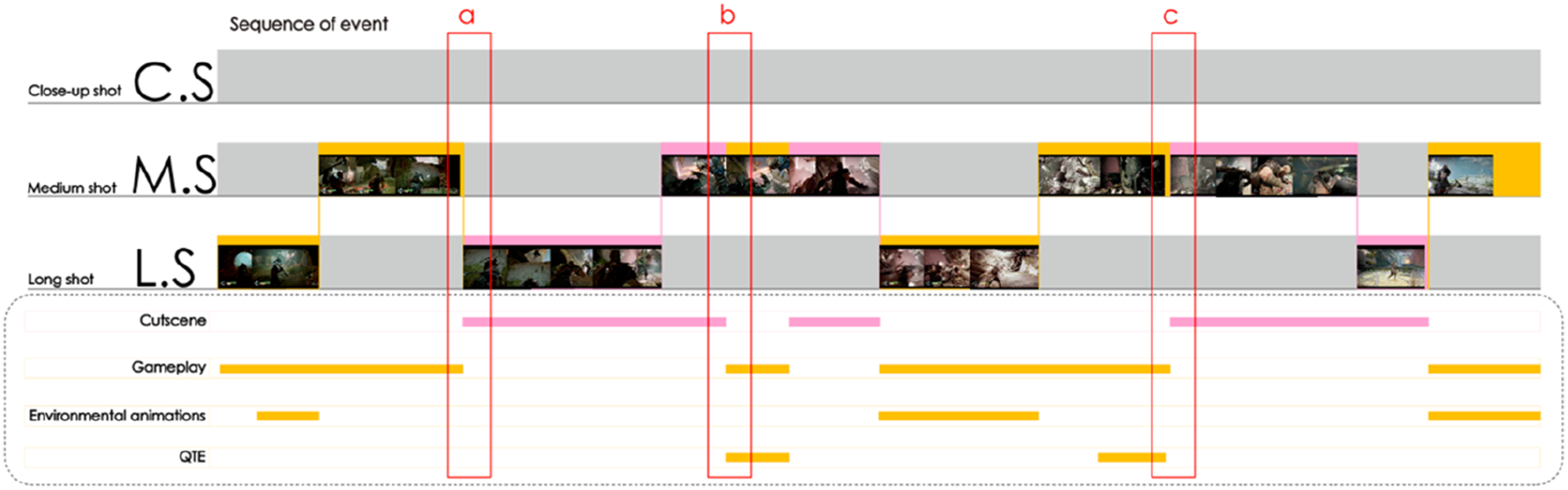

The analysis of the Star Wars Jedi: Fallen Order scene with the Cinematic Narrative Analysis Toolbox shows how cinematic styles and event attributes structure narrative. As depicted in Figure 15, the shot scale diagram (CU, MS, LS) shows how different scales advance the plot, while the event attribute timeline maps out pure cinematics and gameplay cinematics. Pure cinematics (cutscenes) appear at the beginning and end of the segment and focus on the story while allowing a pause from active play. Gameplay cinematics, framed in between, maintain player immersion and ensure narrative construction. During moment (a) of gameplay (Figure 15), hybrid camera control enables players to manipulate the camera within predefined conditions. The camera system dynamically frames key animations and spatial elements with a fluid, Steadicam-like shooting style, while players control basic actions like dolly and tracking movements.

Figure 15. Application of the Cinematic Narrative Analysis Toolbox to the case study: shot scale diagram and camera work checklist.

The Cinematic Narrative Analysis Toolbox aims to provide a basis to study how cinematic techniques shape gameplay and narrative in videogames. It combines tools such as storyboards, shot scales, and camera movement to analyse spatial narratives. Storyboards highlight the balance between narrative and interaction across sequences. Shot scales – close-ups, medium shots, and long shots – convey spatial relations and emotional tone. Camera work, especially hybrid control systems, mirrors real-world equipment, with movements like tracking shots guiding player attention to key narrative elements.

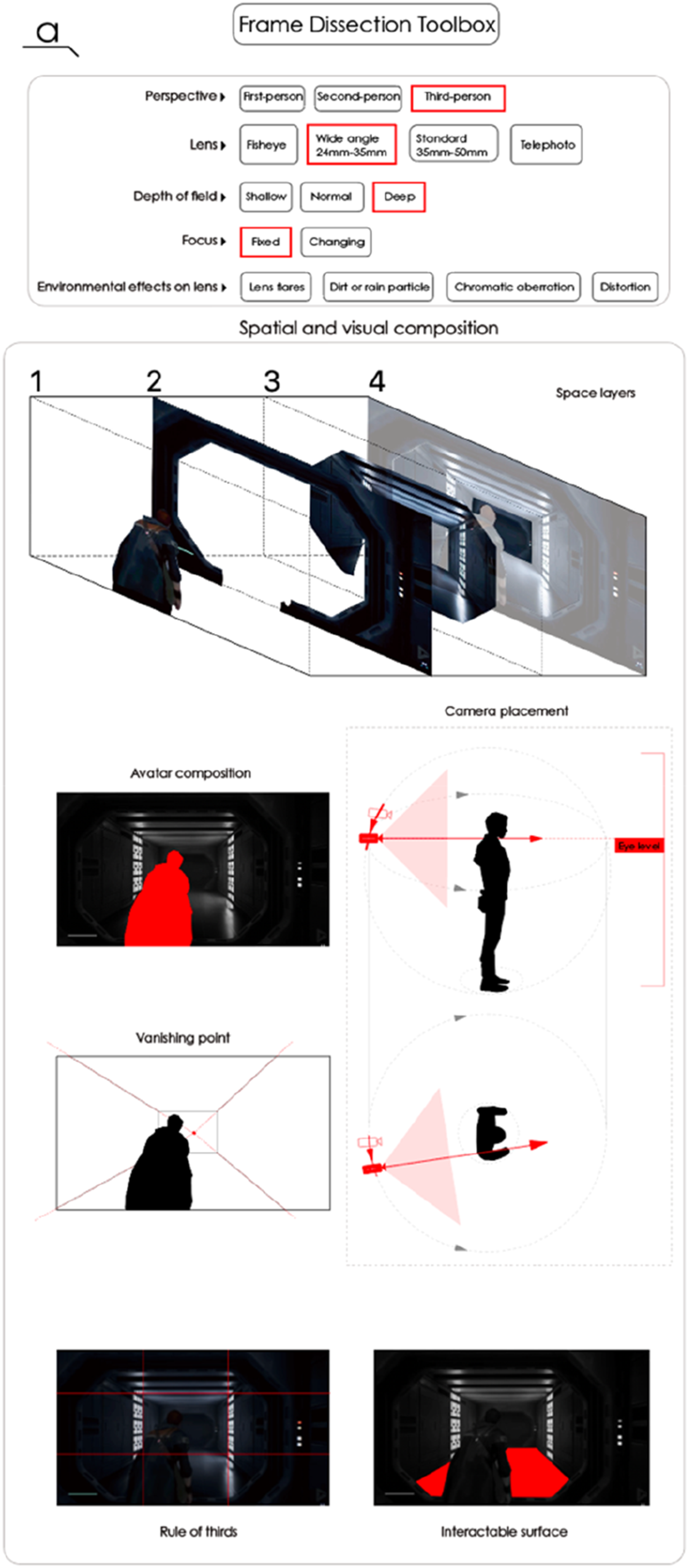

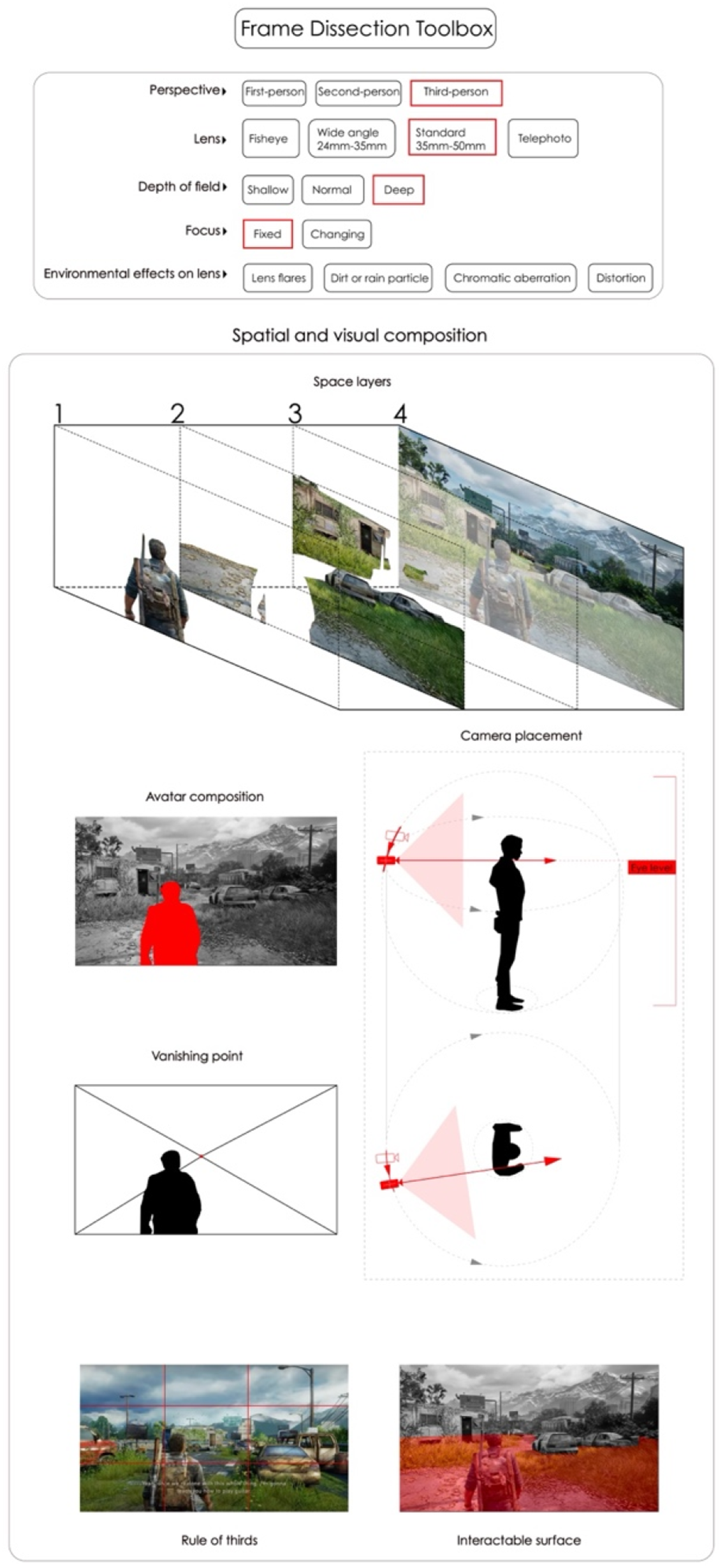

Frame Dissection Toolbox

The Frame Dissection Toolbox focuses on compositional elements within key scene moments. It includes a checklist for perspective and lens analysis, an exploded diagram of spatial depth, a camera–avatar relationship diagram, and two-dimensional composition diagrams. Screen-based videogames, like films, face the challenge of conveying depth through two-dimensional images. As noted in film and design practice, depth is articulated by ‘purposefully orchestrating foreground, middle ground, and background’, a spatial approach adopted by architects, filmmakers, and game designers alike (Khalili and Brennan, 2023; Penz, 2012; Venturi, 1977: 61).

In the Frame Dissection Toolbox, an exploded diagram of spatial layers explains the hierarchy of depth (see Figure 16). The frame is divided into four strata: the avatar in the foreground (1), vertical elements such as door frames as viewfinders (2), the midground action (3), and the back wall (4). This layered breakdown highlights both spatial depth and the design strategies shaping the scene. Lens type, depth of field, focus, and environmental effects further enhance spatial storytelling, with each lens imparting its own ‘flavour and inflection’ to the image (Brown, 2016: 6; Mercado, 2019; Präkel, 2009).

Figure 16. Frame Dissection Toolbox applied to the case study.

Short focal length lenses (wide-angle, fisheye) expand the view and deepen the depth of field, enhancing openness. Long focal length lenses (telephoto) narrow perspective and create shallow focus, producing spatial intimacy (Präkel, 2009). Environmental effects – lens flares, fog, rain, and dust – add realism and atmosphere by mimicking real camera capture (Mercado, 2019).
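The relation between focal length and width of view noted above can be made concrete with the standard angle-of-view formula, FOV = 2·arctan(w / 2f). The sketch below assumes a 36 mm wide full-frame reference sensor, which is an assumption of this example and not a value stated in the article. The function name `horizontal_fov_deg` is hypothetical, and game engines may define field of view differently (for example, vertically).

```python
import math

FULL_FRAME_WIDTH_MM = 36.0  # assumption: 35 mm full-frame reference sensor


def horizontal_fov_deg(focal_length_mm, sensor_width_mm=FULL_FRAME_WIDTH_MM):
    """Horizontal angle of view in degrees for a given focal length."""
    if focal_length_mm <= 0:
        raise ValueError("focal length must be positive")
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


# Shorter focal lengths yield wider views; longer ones narrow the view.
for f in (24, 35, 50, 85):
    print(f"{f} mm -> {horizontal_fov_deg(f):.1f} degrees")
```

Under this assumption, a 24 mm lens covers roughly 74 degrees horizontally, and the angle shrinks steadily as focal length increases, which is the geometric basis for the openness of wide-angle framing and the narrowed perspective of telephoto framing.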

At moment (a) (Figure 16), the game adopts a third-person perspective, allowing players to grasp the avatar’s relation to its surroundings. A wide-angle lens (24–35 mm) deepens depth of field and exaggerates spatial distance, while fixed focus directs attention to the distant wall, setting up subsequent storytelling. The checklist enables systematic evaluation of how lens choices shape narrative delivery, spatial awareness, and immersion. Camera height, position, and angle relative to the avatar further modulate spatial experience by controlling the amount of information revealed to the player (Khalili and Ma, 2024: 14; Naftis et al., 2021). Camera placement shapes key visual features such as the vanishing point, which conveys depth, directs focus, and provides spatial orientation. The avatar’s framing, distance from the lens, and spatial occupancy further modulate expression. Together, these 3D camera–avatar relationships construct the 2D visual composition of the scene.

In the Frame Dissection Toolbox, the camera–avatar spatial relationships diagram works with the visual composition diagrams to unpack spatial relationships from plan and elevation views (Figure 16). At moment (a), from the elevation view, the camera is positioned at the avatar’s eye level, at a distance that frames the upper body to emphasise actions and create intimacy. From the plan view, the camera is behind and slightly to the right, with the vanishing point guiding focus to the important doorway ahead. Additionally, the camera’s angle exposes interactable (walkable) surface area, which encourages and guides players to explore further. The spatial and visual composition diagrams help clarify spatial intention and narrative precision at specific moments: analysing the camera’s three-dimensional information through the diagrams shows how camera work conveys key spatial narrative and cinematic expression to players.

The objective of VSC is to vertically integrate three toolboxes into a unified system for examining spatial, cinematic, and gameplay relations in videogame environments (Figure 17). The Spatial Analysis Toolbox addresses spatial principles and interactions. The Cinematic Narrative Analysis Toolbox focuses on narrative structures and camera techniques. The Frame Dissection Toolbox homes in on specific moments of spatial composition and visual depth. Combined, they form a multilayered framework for studying Videogame Spatial Cinematics.

Figure 17. The overall system applied to the case study.

Experiments

In Phase 4 (Experiments), we tested the Spatial Analysis Toolbox, the Cinematic Narrative Analysis Toolbox, and the Frame Dissection Toolbox on different scenes from the three selected case studies. We used the Spatial Analysis Toolbox to dissect the spatial design of Star Wars Jedi: Fallen Order. We applied the Cinematic Narrative Analysis Toolbox to investigate the cinematic narrative complexity in God of War. We also employed the Frame Dissection Toolbox to analyse key cinematic moments of The Last of Us Part I. The following sections illustrate the results of the experiments with each toolbox.

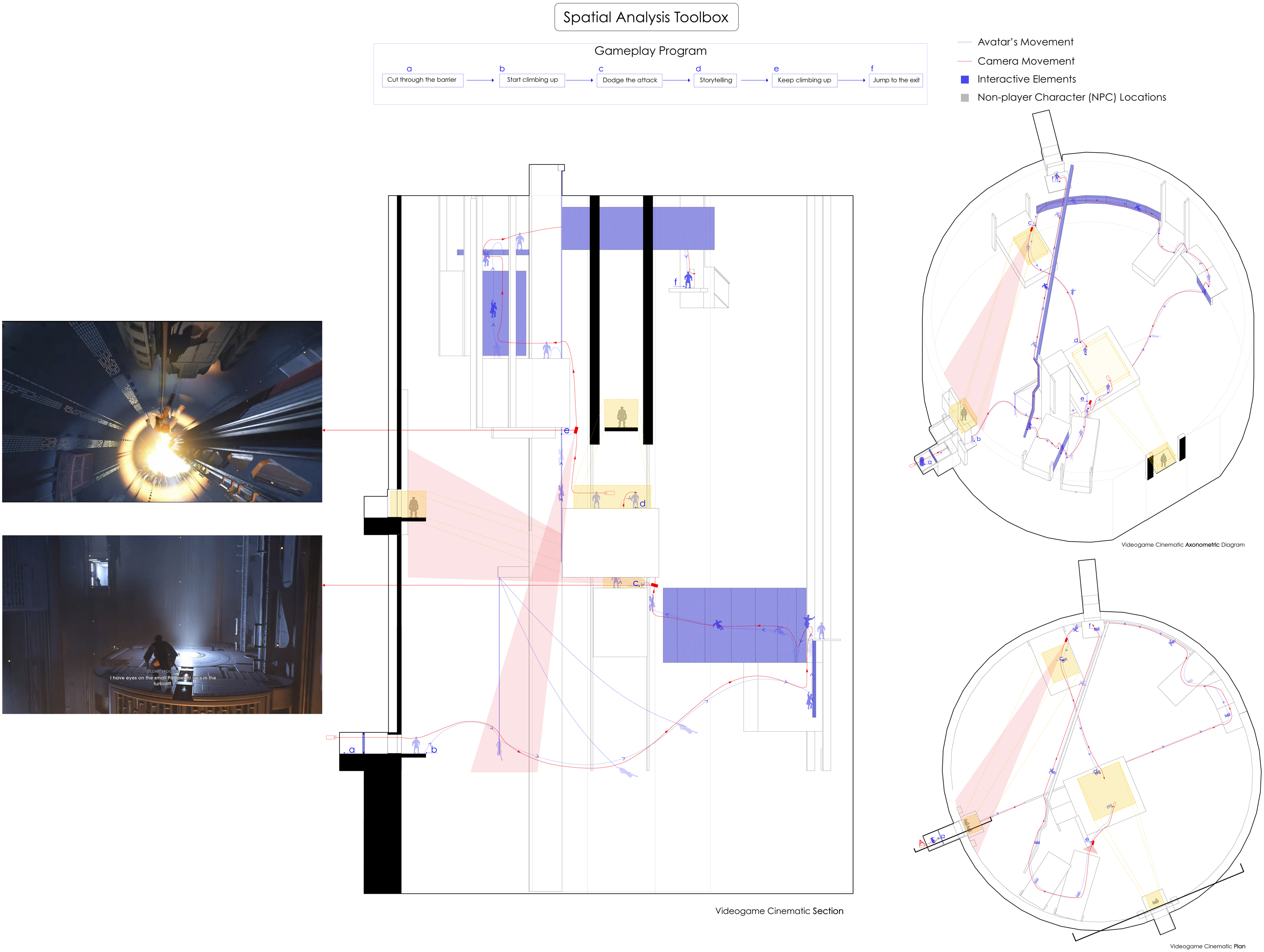

Experiment with Spatial Analysis Toolbox

Applying the Spatial Analysis Toolbox to a Star Wars Jedi: Fallen Order sequence (Figure 18) reveals how traversal rhythm is constructed through recurring spatial affordances. The toolbox traces movement from points (a) to (f) through climbable walls, ropes, and ledges positioned at key nodes where ‘ascending–descending patterns’ operate as embedded narrative devices, marking progress as clearly as dialogue or cutscenes (Khalili and Ma, 2024: 21). These repeated cues form a recognisable level ‘grammar’ – familiar elements that train player navigation and establish spatial legibility (Totten, 2019: 482). Such spatial grammar reduces cognitive load and sustains flow (Calleja, 2011), yet over-reliance risks collapsing exploration into ‘narrative railroading’, where spatial discovery is replaced by predictable beats (Domsch, 2013; Jenkins, 2004). By rendering character and camera movement in plan and section, the toolbox uncovers how these cues facilitate movement while also questioning whether the design innovates or recycles a proven formula.

Figure 18. Spatial Analysis Toolbox applied to the selected segment in Star Wars Jedi: Fallen Order.

The toolbox also shows how NPCs function as pacing mechanisms. At point (c), a scripted enemy encounter intentionally slows movement, allowing tactical reassessment or narrative emphasis. At point (d), a cutscene trigger interrupts progression while refocusing attention toward the summit goal (f). NPCs here serve as both environmental texture and narrative punctuation – spatial beacons that guide movement and concentrate focus. In cinematic terms, this resembles the insertion of figures within a composition to modulate pacing, a technique familiar from action cinema where antagonist placement builds tension before climax (Branigan, 1984: 42). By diagramming these interactions, the toolbox makes visible how rhythm is materially embedded in architectural arrangements rather than applied as an abstract narrative overlay.

Camera path annotations elucidate how framing supports spatial comprehension. At point (c), the lens frames both the climbable wall and elevated enemy, retaining spatial cues and anticipated threats in the same field of view. The ascent toward (e) uses tilt and dolly-like tracking to generate a sense of physical involvement (Brown, 2016: 302). As Paolo Burelli notes, although players directly influence camera movement, the camera must perceive the player’s ‘current state’ and establish an ‘indirect relationship’ to shape experience, controlling how depth, obstacles, and objectives are presented (2016: 183). The toolbox renders these tensions legible, depicting where cinematic authorship sharpens spatial comprehension and where it may reduce exploratory play.

One-way climbs, narrow corridors, and single-exit ledges channel players through specific beats – constraint-driven agency within tightly authored limits. From an architectural standpoint, such spaces function as bottlenecks that slow movement and create compression before release (Ching, 2014; Hillier, 1996). Although such structures enhance dramatic effect, excessive use can lead to ‘replay value’ issues, since player agency is reduced to overdetermined routes (Totten, 2019: 435). By diagramming movement flow and enforced slowdowns, the Spatial Analysis Toolbox makes visible where constraints enrich pacing and where they verge on over-control.

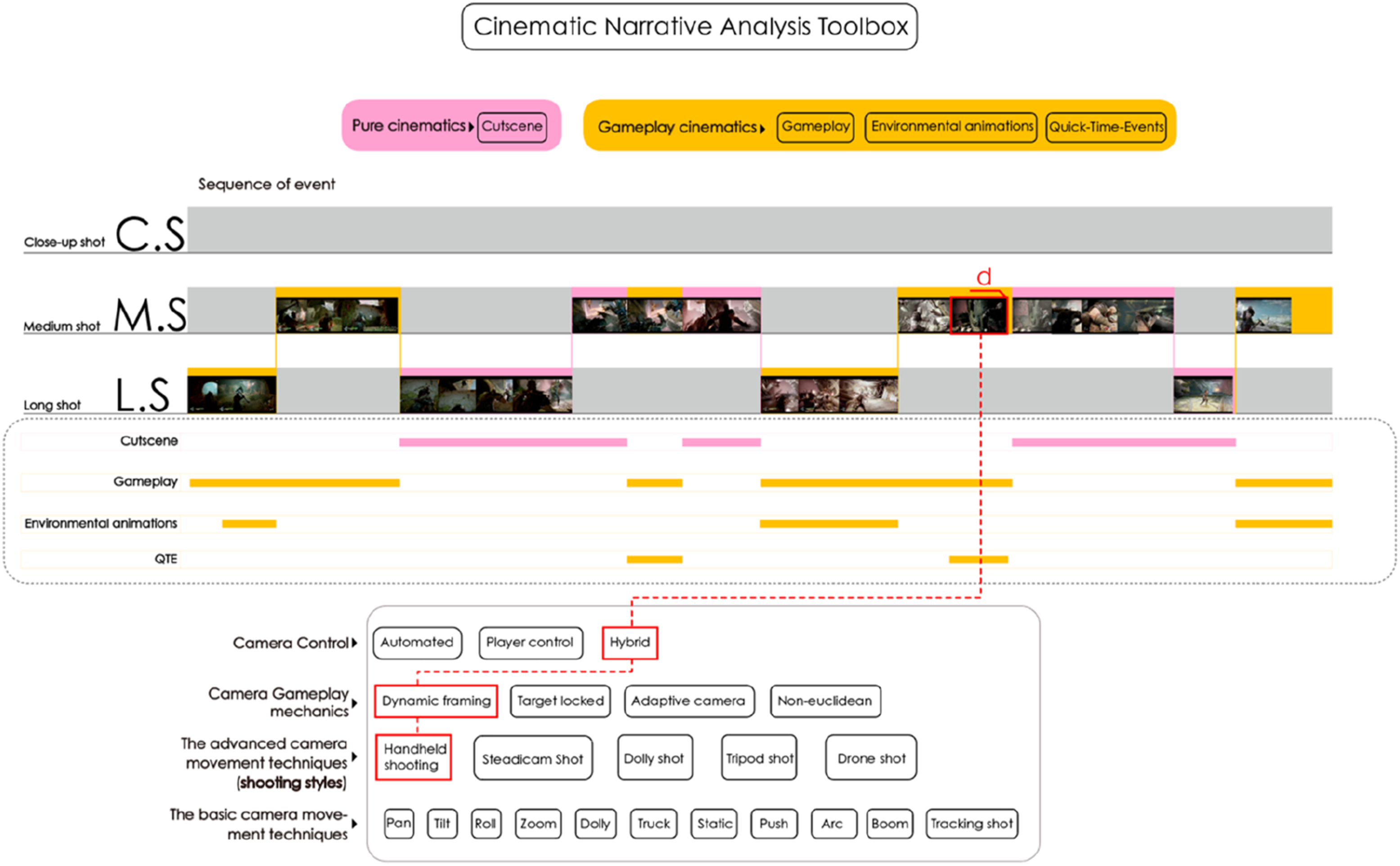

Experiment with the Cinematic Narrative Analysis Toolbox

A scene from God of War was examined using the Cinematic Narrative Analysis Toolbox (Figure 19), tracing how pure cinematics and gameplay cinematics interact to create a continuous cinematic sequence (Figure 20). In segment (a), regular gameplay triggers a short cutscene. Segment (b) introduces a Quick Time Event (QTE), granting limited control within a non-interactive sequence. Segment (c) features another QTE at the hinge between gameplay and cutscene, smoothing the handover between systems. Segment (d) (Figure 20) illustrates how the hybrid-controlled camera automatically frames the avatar alongside distant NPCs, blending system automation with player input.

Figure 19. Cinematic Narrative Analysis Toolbox applied to the selected segment in God of War.

Figure 20. The three important transitions in the sequence of events.

Game scholar Paul Cheng describes how cutscenes shift players into a mode of ‘waiting for something to happen’, creating an agency vacuum (2007: 22). Yet, as Rune Klevjer observes, cutscenes can reframe agency within a different authored register rather than excluding it (2014: 307–08). QTEs epitomise this reframing by requiring inputs at dramatic junctures. Another game scholar, Ben Browning, shows that QTEs offer ‘representational agency for players to access additional information’ rather than reducing them to spectators (2016: 75). What emerges is a sense of continuous authorship: the cinematic maintains filmic pacing, but the player’s kinaesthetic loop is never fully severed. Agency persists at reduced bandwidth while maintaining the player’s participatory role in shaping the story.

Segment (c) deploys the QTE at the threshold between gameplay and cutscene, so that the player’s last input leads into a purely cinematic moment. Gordon Calleja defines such player actions as ‘alterbiography’ – an ‘active construction of an ongoing story that develops through interaction with the game world’s topography, inhabitants, objects, game rules and coded physics’ (2009: 5). Jonas Linderoth further explains that although ‘challenges, story elements, cutscenes, puzzles, and quick time events’ differ in nature, careful design can align them to ‘enhance the same emotion’ and strengthen the player’s sense of embedded authorship (2015: 293). These insights explain why precise hinge-QTEs matter: such transitions allow authored moments to unfold without producing an agency cliff, sustaining the game’s one-take illusion while incorporating the player’s input as narrative catalyst.

The coordination between QTE mechanics and camera work is especially legible in moment (d), where the camera adopts a handheld long-take aesthetic that masks the attenuation of player control. Burelli observes that, in cases similar to this scene, the game camera must ‘dynamically react to unexpected events… and take into consideration player actions’ (2016: 181). In God of War, fluid long-take handheld cinematography across gameplay, QTEs, and cutscenes sustains experiential continuity, binding cinematic authorship and player agency into a single aesthetic flow.

Experiment with Frame Dissection Toolbox

The Frame Dissection Toolbox charts a moment in The Last of Us Part I (Figure 21) framed through a third-person camera with a focal length of 35–50 mm – a range that cinematographer Blain Brown describes as portraying depth relationships ‘fairly close to human vision’ (2016: 31). In cinema, this ‘normal’ focal length is valued for neutrality and lack of distortion; in games, however, neutrality is functional, maintaining recognisable scale relationships while supporting accurate judgement of traversal distances and object sizes.

Figure 21. Frame Dissection Toolbox applied to the selected segment in The Last of Us Part I.

The choice of a normal lens, long depth of field, and fixed focal anchor sustains what game theorist Steve Swink calls ‘continuity of response’ – the uninterrupted feedback loop between control input and on-screen change (2009: 44). By keeping all spatial layers in simultaneous focus, the player can scan, aim, and route-plan without shifting into a different mode, a design affordance with no equivalent in pre-recorded film.

The exploded spatial layering diagram breaks the frame into three functional strata: Layer (1) – the avatar as kinaesthetic anchor; Layer (2) – a midground obstruction (abandoned car); Layer (3) – a distant attractor closing the depth axis. As Henry Jenkins (2004) argues, environmental features can retard or accelerate plot trajectory. Here, Layer (2) slows immediate progress, subtly prompting a directional shift, while Layer (3) establishes a visual goal. This is indexical storytelling in Fernández-Vara’s sense – environmental arrangements that ‘speak’ narrative clues players must interpret to navigate (2011: 6). Unlike in film, where midground objects serve compositional balance, in games they simultaneously change available movement vectors and script potential behaviour through spatial structure.

The camera–avatar spatial relationship diagram shows the lens at eye level, slightly behind and offset right of the avatar, who is positioned along the left vertical of the rule-of-thirds grid. The over-the-shoulder composition in the scene, as game scholars Naftis et al. (2021) demonstrate, reduces unnecessary adjustment and cognitive load during low-intensity exploration. This framing frees the central and right portions for depth vectors and environmental affordances. Visual theorist Bruce Block notes that in the case of a compositional imbalance, similar to this scene, the frame can direct the viewer’s gaze by engendering visual direction (2020), channelling visual pull toward the vanishing point.
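The link between the plan-view camera offset and the avatar's position on the rule-of-thirds grid can be sketched with a simple pinhole projection. This is an illustrative model under stated assumptions, not a reconstruction of the game's camera: the camera is assumed to look straight ahead, parallel to the avatar's facing direction, and the function names, distances, and field of view used here are hypothetical.

```python
import math


def screen_x(lateral_offset_m, distance_m, hfov_deg):
    """Normalised horizontal screen position of the avatar.

    0.0 is the left edge of the frame, 0.5 the centre, 1.0 the right edge.
    The camera sits distance_m behind the avatar and lateral_offset_m to
    its right, looking straight ahead (assumption of this sketch).
    """
    # Half of the visible width at the avatar's depth.
    half_width = distance_m * math.tan(math.radians(hfov_deg) / 2)
    # A camera to the right of the avatar pushes the avatar left of centre.
    return 0.5 - lateral_offset_m / (2 * half_width)


def offset_for_screen_x(target_x, distance_m, hfov_deg):
    """Lateral camera offset that places the avatar at target_x."""
    half_width = distance_m * math.tan(math.radians(hfov_deg) / 2)
    return (0.5 - target_x) * 2 * half_width


# Hypothetical values: camera 2 m behind the avatar, 90 degree horizontal view.
LEFT_THIRD = 1 / 3
offset = offset_for_screen_x(LEFT_THIRD, distance_m=2.0, hfov_deg=90.0)
```

With these placeholder values the camera would need to sit roughly 0.67 m to the avatar's right to place it on the left third. Narrower fields of view or shorter camera distances require a smaller offset for the same composition, which shows how lens choice and camera placement jointly determine the two-dimensional frame.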

The normal lens, deep focus, and thirds placement should be understood as embedded cinematic mechanics rather than borrowed film language. Unlike in cinema, where mise-en-scène is read passively, here composition directly influences input decisions: the player moves, scans, or aims differently depending on what the camera–avatar relationship reveals or conceals. The Frame Dissection Toolbox makes these functions analytically visible, showing that shot composition is not ornamental but an active navigational scaffold integrating cinematic structure with gameplay rhythm.

Discussion and findings

The application of the Videogame Spatial Cinematics (VSC) framework demonstrates that videogame environments can be productively decoded as outcomes of a tightly coordinated system of spatial, cinematic, and ludic design. Rather than asserting a singular way to approach the medium, VSC offers a synthetic lens to capture the operative mechanisms that manifest when architectural logic and cinematic vision collide within interactive contexts. Insights across the toolboxes suggest that concepts traditionally confined to distinct disciplines – such as sectional analysis in architecture or mise-en-scène in film – can function catalytically in shaping the player’s spatial experience when they are re-articulated through gameplay. This epistemological overlap confirms that contemporary videogames are not adequately captured by approaches that isolate rules, story, visualisation, sound, gameplay, or environment. Rather, they demand a framework that reveals how these principles are re-articulated as operative mechanisms in virtual cinematic interactive spatial contexts.

Michael Nitsche (2008: 17) remarked years ago that videogames are layered structures composed of rules, gameplay, narrative, moving images, and sound, and that ‘none of these layers alone is enough to support a rich game world’. VSC advances this observation by making layered hybridity methodologically examinable. Much existing work, including valuable ludic, narratological, spatial, and cinematic studies, foregrounds one dimension at a time. The consequence is not a lack of interdisciplinarity but a persistent difficulty in describing, with analytic precision, how layers operate together when players move, act, and perceive in the course of play. VSC aims to address this difficulty through a cross-referenced structure in which spatial, cinematic, and interactive variables can be aligned and compared rather than inferred as parallel but separable explanations. Additional layers such as sound or dialogue could also be incorporated in future to extend this structure further.

The experimental analyses conducted here do not suggest that the success of these titles results from ‘following’ VSC; rather, they indicate that large-scale game design often involves an intuitive coordination of overlapping spatial and cinematic principles. The VSC framework serves as an explanatory tool to reverse-map these ‘design instincts’, making the latent structures of navigation, rhythm, and composition visible and analytically traceable. The Spatial Analysis Toolbox, for example, shows how verticality, repeated affordances, and NPC placement do more than enable traversal: they regulate pacing and directional learning, producing rhythmic patterns of movement that resonate with cinematic editing. The Cinematic Narrative Toolbox reveals how hybrid authorship emerges in transitional moments – continuous takes, interactive cutscenes, or QTEs – that blur distinctions between authored spectacle and player agency. The Frame Dissection Toolbox demonstrates that composition (rule-of-thirds, vanishing points, depth cues) functions less as decorative flourish and more as a navigational interface, embedding spatial literacy into the environment itself. Taken together, these findings support a central claim of the article: the virtual camera is not a neutral viewing device but an active organiser of spatial experience, coordinating legibility, rhythm, and narrative comprehension alongside play.

VSC’s methodological contribution also lies in its attempt to create a collective, cross-referencing system of thinking and analysis. Prior studies of game space or game cinematics have made important advances, yet their methods often remain discipline-bound. Even within game studies, close readings of rules or narrative do not always extend to the compositional and spatial mechanics of camera work, and analyses of camera systems do not always specify how framing becomes navigationally operative. VSC seeks to re-contextualise established concepts into a systematic procedure that makes overlaps operational rather than incidental. By translating diverse disciplinary concepts into shared visual diagrams and terminology, the framework enables comparative analysis across cinematic, spatial, and interactive elements in unison, supporting more precise dialogue in hybrid fields such as interactive media studies and design pedagogy.

Crucially, VSC operates at the level of formal design decision analysis rather than immediate player perception. It does not claim to measure what players feel or notice directly. Instead, it dissects the surface of gameplay presentation to identify organising structures – patterns of camera tracking, shot scale, traversal rhythm, spatial sequencing, and compositional depth – that together constitute a spatial–cinematic apparatus. What often appears in disciplinary silos as discrete phenomena becomes readable, through VSC, as an integrated system. The framework therefore shifts analysis from describing visible features to explaining how specific configurations of space, camera, and interaction choreograph experience.

The current study focused on screen-based 3D action-adventure titles because they provide a robust primary corpus for theory-building: they foreground authored traversal sequences, third-person camera systems, and tightly choreographed transitions between gameplay and cinematics. This focused scope was a deliberate methodological choice to establish VSC’s foundational parameters with internal consistency before extending to other formats. At the same time, we acknowledge that VSC, as an analytical framework, requires adaptation for different formats. While some principles remain the same, 2D games, VR/XR environments, and strategy/management titles operate under fundamentally different camera-space regimes than the screen-based 3D action-adventure corpus examined here. We plan to address this transferability through dedicated theoretical and empirical study in future work.

We anticipate that foundational principles – such as mise-en-scène, environmental storytelling, NPC-based attention guidance, and spatial sequencing – will remain operative across formats, though their specific implementation may vary significantly. In VR, for instance, camera movement translates into head-coupled viewpoint control, and framing becomes a function of spatial positioning and gaze direction rather than screen composition. Parameter stability and transferability thus depend on how the coupling between camera, space, and interaction is configured in each format. The following examples illustrate how VSC’s parameters would need adaptation across different game contexts:

• In screen-based 3D games employing planar, orthographic, or axonometric camera systems, such as strategy and management games, spatial cinematics is organised less around continuous embodied traversal and more around topological relations, simultaneity, and strategic overview. Here, parameters such as framing, depth of space, and camera movement would require redefinition in relation to abstraction, scale, and multi-unit coordination rather than character-centric navigation. Empirical application to titles such as Civilization VI (2016) or Cities: Skylines (2015) would be necessary to validate and refine these adaptations.
• In 2D games, spatial cinematics operates under different representational conditions: depth is often simulated through parallax, occlusion, and layering (Totten, 2019: 206), while camera behaviour may privilege lateral framing, controlled reveals, and planar composition over volumetric depth cues. Games such as Ori and the Blind Forest (2015) or Hollow Knight (2017) would serve as appropriate test cases, but such analysis lies beyond the current study’s scope.
• VR introduces the most substantial shift because it transforms the camera regime itself: viewpoint becomes bodily and head-coupled, framing is no longer primarily screen-based, and attention guidance must operate through spatialised cues, distance relations, and environmental affordances rather than traditional shot composition alone. Validating VSC in VR contexts such as Half-Life: Alyx (2020) would require not only parameter redefinition but potentially new analytical instruments responsive to embodied, stereoscopic spatial experience.

In these contexts, VSC can function as a comparative baseline, but parameters such as camera type, depth of space, framing, and attention guidance would require adaptation and partial redefinition. Extending the framework across formats therefore entails dedicated re-parameterisation through cross-format case studies alongside practice-based and user-centred validation. The absence of empirical validation across these formats represents a clear limitation of the present study and defines concrete trajectories for future research.

Conclusion

The significance of Videogame Spatial Cinematics (VSC) lies in its capacity to connect domains that are often discussed separately – spatial design, gameplay, and cinematics – and to treat them as interdependent components of a single operative system. By foregrounding ‘camera logic’ as a computational apparatus with profoundly cinematic heritage, the framework clarifies how spatial experience in videogames is not only constructed architecturally but also captured, framed, and rhythmically organised through virtual cinematography under interactive constraint. VSC therefore provides a concrete analytic mode for scholars and designers to examine how these elements coalesce into coherent virtual environments, addressing a methodological gap in how cinematic mediation becomes operative in real-time play.

At the same time, the framework advanced here functions primarily as an explanatory and interpretive theory. While the experiments demonstrate how VSC can uncover ‘latent structures’ in existing videogames, they do not establish causal claims about design success, nor do they validate VSC as a guiding theory for creation. A key next step is therefore design-based validation, for example, through design-led research, comparative prototyping, or collaborative studio projects in which VSC is deployed within an original creative process. To enhance the objectivity of these analytical findings, future research will also incorporate complementary data sources, such as eye-tracking to measure spatial attention and interviews with game designers to see if these VSC principles are intuitively or intentionally considered in the design process.

Finally, while this study establishes VSC through screen-based 3D action-adventure games, the framework’s analytical vocabulary may extend to adjacent domains where spatial experience is camera-mediated and interactively structured. Beyond videogames, VSC may inform emerging practices of virtual production, where real-time game engines fuse physical and virtual spatial data into cinematic environments, offering potential insights for virtual set design, cinematic staging, and camera choreography in hybrid environments. Within gaming itself, formats such as VR, 2D platformers, and strategy titles operate under fundamentally different camera-space regimes and would require dedicated re-parameterisation and empirical validation before VSC’s transferability can be demonstrated rather than theoretically proposed. As the boundaries between architecture, cinematography, and interactive media continue to blur, recognising the need for interdisciplinary approaches and shared analytical frameworks becomes essential for sustaining dialogue and exchange between these converging fields.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Parts of the ongoing research were directly and indirectly supported by a number of funding sources, and aspects of the concepts and preliminary findings were presented by the authors at the Martin Centre keynote, University of Cambridge, and at the DiGRA conference. The authors acknowledge the Leverhulme Small Research Grant, the PGR Travel Expenses Grant (ESALA), University of Edinburgh, support from the Martin Centre at the University of Cambridge, and Manchester Metropolitan University’s A3RO / [FP]lab travel and fieldwork grant, all of which contributed to the development of the research presented in this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The diagrams generated for this study are available from the corresponding author upon reasonable request and selected figures are included in the article.