Abstract

The concept of transmediation might be one of the most influential intellectual tools for studying, discussing and fostering innovative phenomena across fields of study. One could argue that the term itself is self-explanatory. Etymologically, it suggests a transition (to go across) between different media but the concept of transmediation has been shaped both by the somewhat historically lax definition of media and the disciplines that have adopted the term. And while the looseness of media gives transmediation a potential for interdisciplinarity, the siloed nature of academic disciplines could have, at the same time, hindered such potential. In this paper, we introduce a framework for transmediation based on an analysis of the use and evolution of the concept and our own explorations over the last few years. This framework aims to provide a common language and a set of conceptual prompts to explore the notion of transmediation further.

Five decades of transmediation

The first record of transmediation in the available literature dates back to 1970. The book Films on the Campus (Fensch, 1970) credits the coinage of the term to Rod Whitaker, a film professor at the University of Texas at the time. According to Fensch: ‘“Transmediation” is a word that Whitaker coined, meaning a translation and restructuring of a stage play into a screen drama’ (1970, 146). Similar definitions can be found in other documents of the same time (e.g., Marder, 1970). A few years later, a quote found in Solzhenitsyn in America (Nedela, 1976) suggests that transmediation can also occur from words in one cultural context to images in a different one, adding the broad notion of translation between places to the idea of transmediation: ‘Ivan Denisovich is a “quite honest film” but “it lacks Russian colour.” So much for the transmediation of words from the Russian underground into images created by the Western film industry’ (30 [emphasis added]). For most of the 1970s, the concept of transmediation remained almost exclusively associated with film adaptations of other works (e.g., Muller, 1977), although early mentions of the concept defining it simply as ‘a transfer from the original media to another media form’ started to appear within the field of Educational Psychology at the end of that decade (Tennyson, 1977).

In 1981, Suhor’s doctoral dissertation entitled A Study of Media in Relation to English (Suhor, 1981) was published. This work, ‘an examination of the nature and range of the English teaching profession's concern with media’ (iv) outlines the principles that defined Suhor’s 1984 article Towards a Semiotics-based Curriculum (Suhor, 1984). The latter is, to this day, a reference for scholars engaging with the concept of transmediation within education. In Suhor’s 1981 work, transmediation is defined as the ‘translation of ideas from one medium (...) to another’ (v [emphasis added]). This definition is amended for the 1984 work to ‘translation of content from one sign system into another’ (Suhor, 1984, 250 [emphasis added]). Another important principle found in Suhor’s work is the definition of media itself. For the purpose of Suhor’s 1981 work, the definition of media includes the human body, as suggested by Olson (1974). In Suhor’s 1984 work, the definition of media shifts to align with Broudy’s (1977), a definition that seems to equate media to material, in alignment with definitions customary in art education. Suhor states: ‘The spoken word is a medium; so is an oil painting, a symphony, a television production, and a computer’ (1984, 250).

The same year Towards a Semiotics-based Curriculum (Suhor, 1984) was published, Siegel’s (1984) doctoral dissertation Reading as Signification was published. In this work, Siegel credits Eco (1976) for the idea (if not the concept) of transmediation, although Eco never used the term transmediation explicitly. The relative connection between transmediation and Eco’s concept of segmentation, drawn by Siegel, would require more discussion. One could argue that although transmediation could be a byproduct of segmentation, these two concepts do not refer to the same phenomenon. Siegel offers a definition: Transmediation is ‘[a] process of rotating the content and expression planes of two different sign systems such that the content plane of the original sign is projected onto the expression plane of the new sign system’ (Siegel, 1984, 383). Siegel’s definition of transmediation attempts not only to explain the phenomenon but also how it fits within Eco and Jakobson’s semiotic models (Sebeok, 1960). The work of Siegel around transmediation, along with the work of other students from Indiana University, was acknowledged early by Harste (1984), who would later serve as Director of Busch’s (1986) doctoral thesis: The Transmediation of Signs: Pictures, Cognition and Texts. In this work, also out of Indiana University, Busch defines transmediation as ‘a cognitive activity in which we utilize multiple sign systems operating within our environment to make sense of our world’ (18). Besides Siegel’s referential material around transmediation, Busch added Cole and Griffin’s (1983) concept of remediation as a potential correlate, which was further explored years later. However, the concept of transmediation was associated almost exclusively with Siegel during the rest of the 1980s within the realms of Education and more specifically, Literacy Education (e.g., Harste et al., 1988; Sampson, 1986; Steele and Threadgold, 1987).

The early 1990s saw a resurgence of Fensch’s (1970) original understanding of transmediation, revised, deepened and further discussed to this day as transmedia. Although it could be argued that the term transmedia branches out from transmediation (the object of this analysis), its impact certainly warrants its inclusion in this review. The concept of transmedia as a cross-mediatic phenomenon of co-creation (rather than adaptation, as entertained by Fensch) is often credited to Jenkins (2003). Nevertheless, in an article published a decade after this pivotal work, Scolari and Ibrus (2014) explicitly recognize the use of transmedia in the work of Kinder (1993), which preceded Jenkins’ by a decade. In their book Playing with power in movies, television, and video games, Kinder discusses cross-mediatic practices enabled by the deregulation of the United States Federal Communications Commission, coining the term transmedia intertextuality in the process. In a later work titled Transmedia Frictions, Kinder and McPherson (2014) compare and contrast notions of medium and nation as boundaries and use an earlier work of Manovich (2014, originally published in 2001) to advance their discussion. The very next year, in what seemed to be a response to a provocation by Stefan Sagmeister (Daniel, 2014), Ryan (2015) reiterates and reinforces the conditions for transmedia storytelling, as understood by Jenkins (2003).

In Education, the 1990s started without a strong presence of transmediation in literature besides very few casual and sometimes uncredited mentions (e.g., Weaver, 1994). The concept was brought back to light by a conference paper by Betts et al. (1995). Interestingly, this conference paper references Harste but not Siegel and it brings back Suhor (1991), not in the capacity of a stakeholder in the mobilization of transmediation but as an advocate for semiotics in the English Arts classroom. In 1995, Siegel published More than Words: The Generative Power of Transmediation for Learning, an influential article in which the author credits Suhor (1984) for the term's coinage. This article also aligns with Suhor’s push to adopt Peircean semiotics as the best framework to deal with transmediation. From this moment, Siegel and Suhor would often appear together in association with the transmediation concept and Peircean semiotics (e.g., Klein, 2003; Rowe, 1998; Semali, 2002; Sipe, 1998), while Siegel was cited more often in works related to implementation in the classroom (e.g., Short et al., 2000).

Eventually, Siegel (2006) would explicitly associate transmediation with other emerging concepts intended to adopt and represent the semiotic turn in literacy education, such as the concepts of multiliteracies and multimodality (The New London Group, 1996). In the next few years, these concepts would gain huge prominence. Siegel’s work would often be mentioned by prominent scholars as an early reference to the need to expand the semiotic repertoires of students, sometimes barely acknowledging the concept of transmediation or its strong semiotic underpinnings, and sometimes not at all (Early and Marshall, 2008; Tierney et al., 2006; Wohlwend, 2009). Recent work from Siegel seems to suggest that the concept of transmediation has been somehow absorbed by multimodality in the collective consciousness of literacy educators (Siegel, 2012). This notion is certainly observed in recent works by the late Lars Elleström, former lead of the Linnaeus University Center for Intermedial and Multimodal Studies (e.g., Elleström, 2021; Elleström and Bruhn, 2010; Salmose and Elleström, 2019).

Around the time More than Words (Siegel, 1995) was published, a new iteration of transmediation started to appear in literature outside the field of education. In the context of trade and cultural industries, Jones (1996) defines the concept (this time spelled transmediation) as: ‘the placement of artist, music, merchandise, and so forth across a wide variety of media’ (345). A relatively similar definition can be found in Cheong and Lundry’s (2012) work about prosumerism and transmedia. In this article, the authors define transmediation as an activity that ‘involves additive and iterative forms of consumption and integration of multiple media forms when audiences or fans engage with media and with each other to create new texts on varied media platforms’. As seen before, the definition of transmediation advanced by these scholars and others concerned with the topic of prosumerism (e.g., Berrocal-Gonzalo et al., 2014; Ritzer et al., 2012) seems to emerge as a reference to remediation, as defined by Bolter and Grusin (1999): ‘the representation of one medium in another’ (43).

The polysemic nature of transmediation

Although transmediation could be assumed to have a reasonably inferable definition, a brief review of the evolution of the term shows that it depends on the concept of media, whose ambiguous semantic potential makes the definition of transmediation somewhat vague. However, our intention is not to sanction the correctness with which the concept has been used over time but simply to acknowledge and account for the multiple understandings that it has elicited. We therefore find the exercise of reviewing the concept of media not just potentially impossible but also unrewarding for the purpose of clarifying how transmediation can be taken up in contemporary education. In this sense, we share the view that Horn (2007) expresses in the editor’s introduction to the special edition of Grey Room on New German Media Theory: ‘Rather than defining the “essence” of media as technology, “extensions of man,” communication devices, system of codes, and so forth, or describing their social, aesthetic, communicational, ideological, or other functions, (...)[we] channel our attention toward the “technological-medial a prioris” of culture; that is, toward the function and functioning of media over and against any interrogation of their “nature.”’ (7)

Whatever the nature of media, transmediation intends to account for the transference of content between different forms of it. For as long as the term has been in use, the nature of such content has been anything but stable: ideas, works, media itself, semiotic systems, etc. One could argue, of course, that all of this content can be understood and discussed as semiotic systems. And we would agree, for the most part. However, we would also argue that advocating for transmediation as an exclusively semiotic phenomenon not only hinders its potential as a cognitive tool but can also lead to confusion.

A case for this assertion can be found with regard to the concept of multimodality, a concept championed by The New London Group (1996) and intended to encapsulate meaning-making (i.e., semiosis) across different modes. The definition of mode, just like media, is loose enough to be wide-encompassing. In their manifesto, the New London Group also proposes six different forms of meaning-making, reminiscent of Suhor’s (1984) semiotics-based curriculum: ‘Linguistic Meaning, Visual Meaning, Audio Meaning, Gestural Meaning, Spatial Meaning, and the Multimodal patterns’ (The New London Group, 1996, 65). Here, the New London Group fails to recognize that the only way linguistic meaning-making would not also be multimodal is if the definition of mode did not include the Aristotelian concept of sensory modes. This is unlikely given the fact that visual and audio are part of the listed modes. Suhor (1981, 1984) solves this very issue by proposing language as an overarching layer of meaning, as opposed to at the same level as the other meaning-making modes. This conundrum raises the following questions: Is trans-sensory content inherently multimodal from a semiotic perspective? Is trans-semiotic content inherently multimodal from a sensory perspective? Where does linguistic meaning belong, then? These questions might well have an answer, but there seems to be no clear framework with which to tackle them. Moreover, because the concept of transmediation has been discussed almost exclusively in the humanities for the last decade and a half, an inclusive framework could aid the potential for interdisciplinary dialogue within the academic field.
To illustrate this point, a simple search of publications containing the term ‘transmediation’ across disciplinary categories within the Web of Science (https://www.webofscience.com/) yields the following results: Education Educational Research 31.081%, Communication 20.270%, Language Linguistics 13.514%, Linguistics 9.459%, Film Radio Television 8.108%, Cultural Studies 6.757%, Humanities Multidisciplinary 6.757%, Literature 5.405%, Art 4.054%, and Computer Science Interdisciplinary Applications 2.703%.

Kinds of transmediation

Although it is difficult to pinpoint an exact moment for what is known as the semiotic turn in Education, there are events that could be identified as framing this phenomenon between the late 1970s and early 1980s, coinciding with, among other things, the early development and popularization of the Graphical User Interface. Chang (1984) suggests the publication of The time of the sign (MacCannell and MacCannell, 1982) as a representative of that then-new ‘examination for language and meaning’ (Chang, 1984, 317). MacCannell and MacCannell’s book’s preface opens with these lines: ‘This book is about the recent rapid development of semiotics as a body of ideas and techniques for the social sciences and humanities. It is equally a response to some changes which are happening in the “real world.” We think the two are related’. (1982, xi)

The authors see semiotics as a post-disciplinary approach that is not opposed but rather complementary to language: ‘Semiotics honors and mirrors language, restoring its centrality in culture. But it also transcends language, going beyond it as the final cause of meaningful existence. By the continuous and always subversive intrusion of the question of the sign, in which subject, object, and interpretation are fused, semiotics can liberate meaning from language, and vice versa, relieving language of some of its overheavy cultural burdens’. (1982, 16)

The prominence of semiotic-informed approaches in education increased during the 1990s with the need to address the ‘real world’ changes that the internet brought to the field of education and it has not diminished since. The introduction and popularity of the concepts of multiliteracies and multimodality during the mid-1990s attest to this (The New London Group, 1996). The changes, of course, have not stopped either. In the last few years, several notable technological trends have rapidly emerged. Some of these trends have already had, or will inevitably have in the near future, an impact on several aspects of education. The commercialization of virtual reality technology, the reemergence of the concept of the metaverse outside of gaming, the popularization of blockchain technology and non-fungible tokens and the increasing interest in the concept of glitch in various disciplinary areas (e.g., Peña and James, 2016; Russell, 2020) are all phenomena that could be studied exclusively from a semiotic perspective, but given their inherently liminal nature, would benefit from other comprehensive cognitive tools besides sign systems. We argue that transmediation, a concept that has always been supported by semiotics, could be one of those tools. However, in order to account for the liminality of the above-mentioned phenomena, we propose to expand or, rather, explicitly account for all the ways in which transmediation has been historically used until the present day. To achieve this, we propose three categories of non-exclusionary transmediation: sensory, semiotic, and signal transmediation.

Sensory transmediation

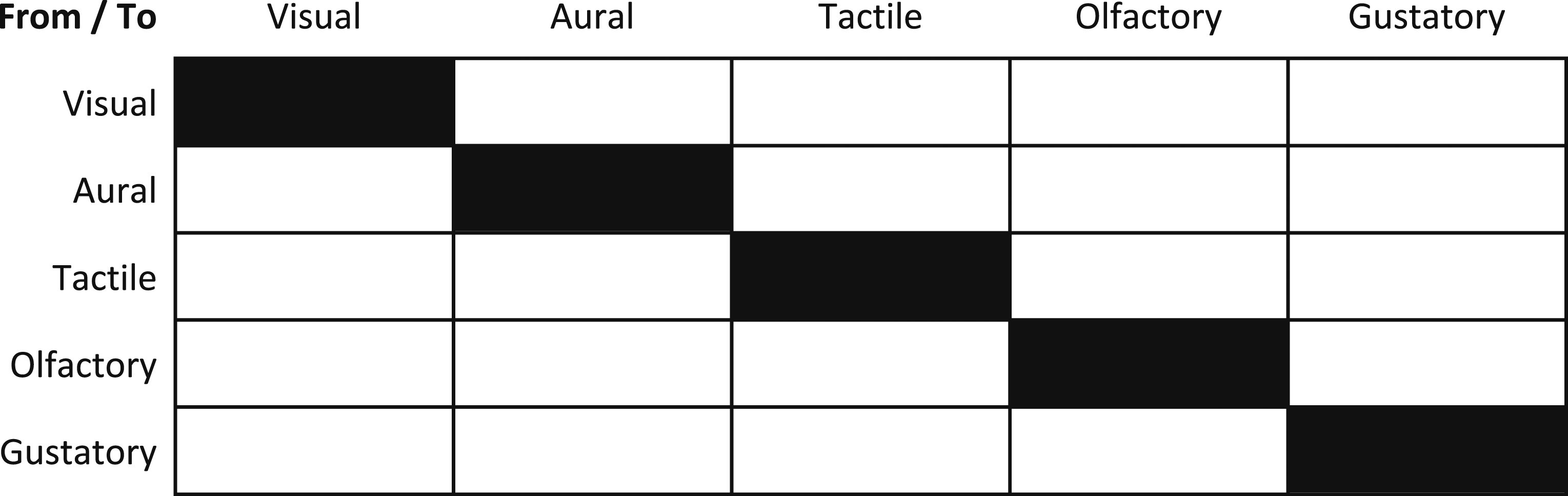

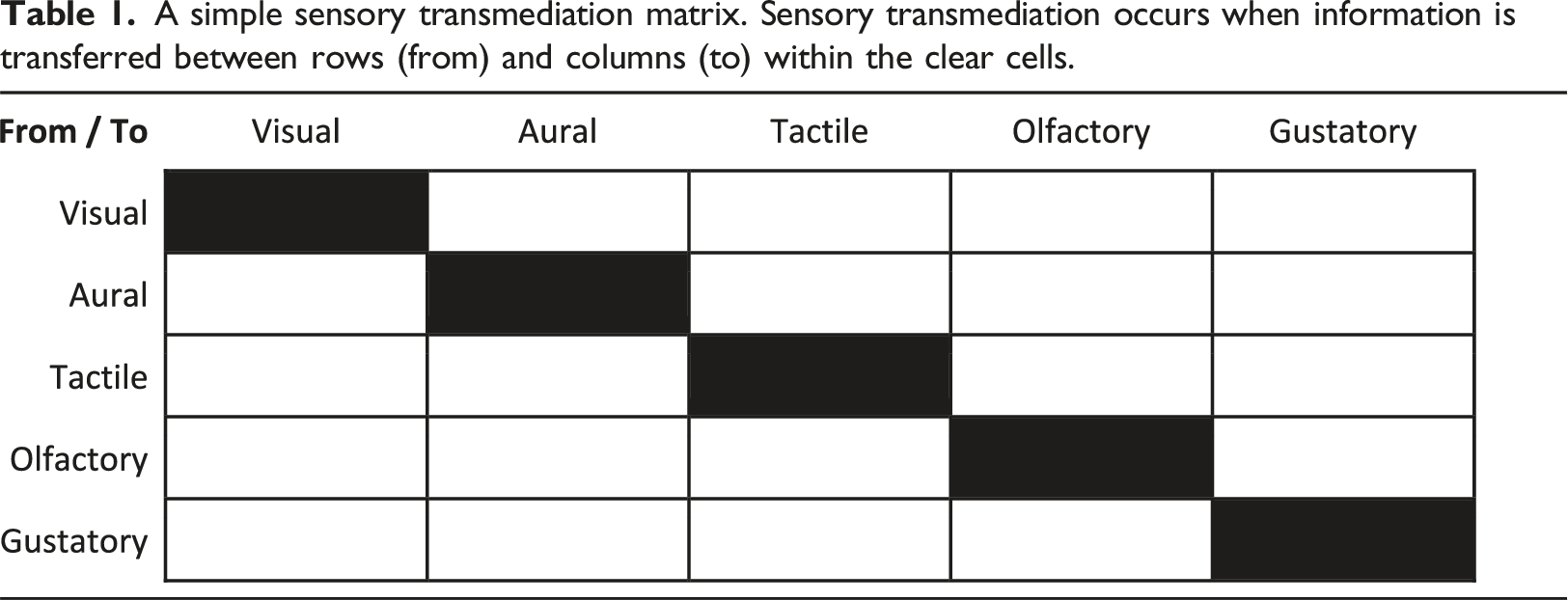

A simple sensory transmediation matrix. Sensory transmediation occurs when information is transferred between rows (from) and columns (to) within the clear cells.

Semiotic transmediation

This form of transmediation results from understanding semiotic systems as media. As mentioned above, these different forms of transmediation are not mutually exclusive: Sensory and semiotic transmediation can both occur within one single phenomenon. Following one of the examples mentioned above, whether reading out loud from a written text is semiotic transmediation or not would depend on whether phonemes and graphemes are considered independent and unique semiotic systems. Alternatively, ekphrasis would involve semiotic transmediation but not necessarily sensory transmediation (visual to visual), unless an ekphrastic (written) poem is created in response to a musical piece, for example (aural to visual). Understandably, this form of transmediation has been the most commonly discussed in the field of education since the semiotic turn. In contrast with sensory transmediation, semiotic transmediation does not have a discrete number of variables, so there is no simple way to assess whether semiotic transmediation occurs or not. This is why using sensory channels to categorize semiotic events has proven to be an imperfect model.

Signal transmediation

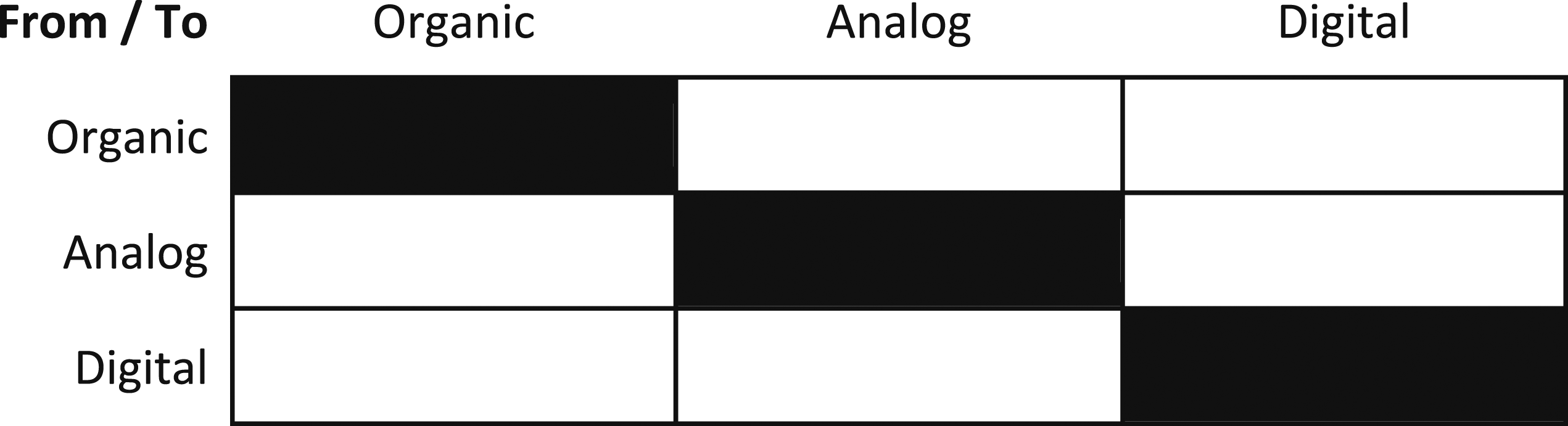

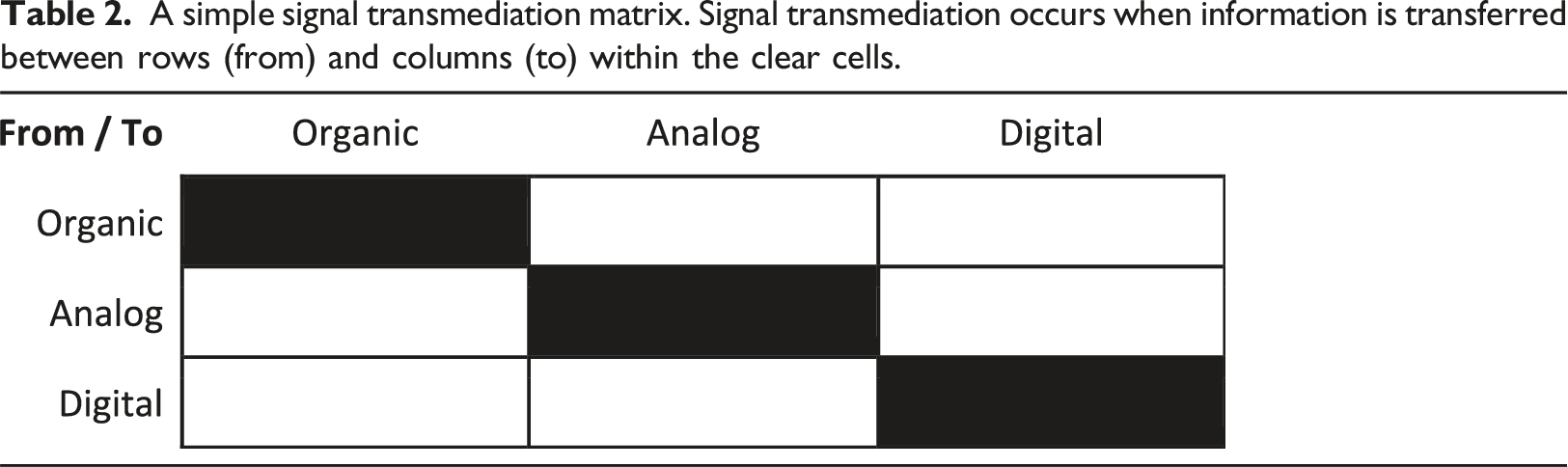

A simple signal transmediation matrix. Signal transmediation occurs when information is transferred between rows (from) and columns (to) within the clear cells.
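
The non-exclusionary character of the three categories can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `State` record, its three fields, and the labels used for senses, semiotic systems, and signals are hypothetical names chosen for the sketch, and the rule that a kind of transmediation occurs whenever source and target differ along that dimension is our simplified reading of the framework.

```python
from dataclasses import dataclass

# Hypothetical record: the field names and labels are assumptions for this sketch.
@dataclass(frozen=True)
class State:
    sense: str      # e.g. "visual", "aural"
    semiotic: str   # e.g. "image", "text", "music"
    signal: str     # e.g. "analog", "digital"

def kinds(source: State, target: State) -> set:
    """Return every kind of transmediation between two states (possibly none, possibly all)."""
    found = set()
    if source.sense != target.sense:
        found.add("sensory")
    if source.semiotic != target.semiotic:
        found.add("semiotic")
    if source.signal != target.signal:
        found.add("signal")
    return found

# Opening a digital image as text: same sense, same signal, different sign system.
image_as_text = kinds(State("visual", "image", "digital"),
                      State("visual", "text", "digital"))

# Written text played back as music through a speaker: all three dimensions change.
text_as_sound = kinds(State("visual", "text", "digital"),
                      State("aural", "music", "analog"))
```

Because the function returns a set rather than a single label, one event can belong to several categories at once, which is the point of treating the categories as non-exclusionary.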

Although potentially useful and interesting, providing a nomenclature for transmediatic phenomena is not the goal of this framework but merely a necessary step towards its two-fold purpose: On the one hand, we want to acknowledge recent and ongoing technological developments that might require new cognitive tools for their discussion, such as this framework. On the other hand, more than identifying when transmediatic phenomena occur, we think it is imperative to engage in discussions about the outcomes of transmediatic phenomena and their implications for education and other disciplines. The idea would be to articulate what is gained, lost, replaced, or transposed through transmediatic events (a sort of quadripartita ratio). Below, we introduce a series of examples of how our own explorations have led us to use transmediation to unearth phenomena and elicit discussions.

Raw data transmediation: A medley

Databending is a glitch art practice that can be traced back to the mid-1990s, when early practitioners discovered that simply changing the extension of a file name from a natively graphic one (e.g., *.bmp) to one intended exclusively for text (e.g., *.txt), gave them direct access to the encoded pixel information. Having access to the data in this format allowed artists to engage in one of the earliest (if not the earliest) manifestations of inherently digital (software) aesthetics (Fuller, 2008), where the aesthetic outcomes are not simulacra of traditional art but rather unique to digital platforms. Although this form of databending (image to text) was exclusively a semiotic transmediation within the visual channel and digital signal, databending can also involve sensory transmediation when the process is done through audio editing software (e.g., Ahuja and Lu, 2014) and even signal transmediation (more on this later). Recognizing the potential of glitch to provide insights into the functionality of systems otherwise ‘concealed inside the gray/white/beige box that covers the cards, slots, motherboard, and wires’ (Fuller, 2008, 123), by subjecting them to unexpected inputs through diverse forms of transmediation, we started an exploration process that has continued until now. Some of these early explorations were compiled and published under the umbrella of Glitch Pedagogy (Peña and James, 2016).
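
The image-to-text form of databending described above can be approximated with a minimal Python sketch. It is not a reconstruction of any practitioner's tool: the function names and the tiny headerless 'image' are assumptions made for illustration, and real image files carry headers that an edit would normally need to leave untouched.

```python
# Hypothetical sketch: names and sample values are illustrative assumptions.

def pixels_to_text(pixels: bytes) -> str:
    """Reinterpret raw pixel bytes as text, one character per byte."""
    # latin-1 maps every byte value 0-255 to exactly one character.
    return pixels.decode("latin-1")

def text_to_pixels(text: str) -> bytes:
    """Reinterpret the (possibly edited) text as pixel bytes again."""
    return text.encode("latin-1")

# A tiny 4x2 grayscale "image": one byte per pixel, no file header.
width, height = 4, 2
image = bytes([65, 66, 67, 68,
               97, 98, 99, 100])

as_text = pixels_to_text(image)          # the pixels, read as characters
bent_text = as_text.replace("B", "z")    # an ordinary text edit
bent_image = text_to_pixels(bent_text)   # the edit, read back as pixels
```

The text edit changes exactly one pixel value while the image keeps its size. The data never leave the visual channel or the digital signal; only the sign system through which they are read changes.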

Visual music

In more recent explorations, we have seized the inherently transmediatic nature of digital data to uncover common patterns between the aural (i.e., samples) and visual (i.e., pixels, text) expressions of such data (Peña et al., 2020). These exercises in sensory and semiotic transmediation within digital signals have led to the possibility of creating musical pieces from raster editing software (e.g., Photoshop, GIMP) and creating visual pieces from aural inputs using open access software (e.g., Audacity). Although the resulting pieces can be aesthetically interesting, we think the process of interrogating sensory outcomes from a non-native sensory channel through transmediation is more valuable. This approach has allowed us to interrogate notions of canonical music by enabling the creation of tonal relationships based solely on arithmetic correlations. These correlations have proven to be easier to instill via two-dimensional graphics. Additionally, by creating a semiotic bridge between visual and aural media, we invite conversations about mixed literacies that have not been afforded simply because there has been no context for them. Moreover, focusing on sensory transmediatic events and methods independently from semiotic events also represents a pushback against potentially ableist notions that all forms of meaning-making are somehow attached to specific sensory channels.
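
One way to picture a tonal relationship based solely on an arithmetic correlation is the following Python sketch, which uses only the standard library. It is an assumption-laden illustration rather than the workflow used in the explorations above: the sample rate, the striped test pattern, and the function names are all hypothetical. A row of grayscale pixels is wrapped, byte for byte, as 8-bit audio, so a stripe pattern that repeats every `period` pixels sounds as a tone of `SAMPLE_RATE / period` cycles per second.

```python
import io
import wave

SAMPLE_RATE = 8000  # samples per second; an arbitrary choice for this sketch

def stripes(period: int, length: int) -> bytes:
    """One row of grayscale pixels: white/black stripes repeating every `period` pixels."""
    half = period // 2
    return bytes(255 if (i % period) < half else 0 for i in range(length))

def pixels_to_wav(pixels: bytes) -> bytes:
    """Wrap pixel bytes, unchanged, as 8-bit unsigned mono PCM audio."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(1)           # 1 byte per sample = 8-bit unsigned PCM
        wav.setframerate(SAMPLE_RATE)
        wav.writeframes(pixels)
    return buffer.getvalue()

def pitch_hz(period: int) -> float:
    """Fundamental frequency of a pattern that repeats every `period` samples."""
    return SAMPLE_RATE / period

low = stripes(period=40, length=SAMPLE_RATE)    # one second at 200 Hz
high = stripes(period=20, length=SAMPLE_RATE)   # one second at 400 Hz
low_wav = pixels_to_wav(low)
high_wav = pixels_to_wav(high)
```

Halving the stripe width doubles the frequency, so the second pattern sounds an octave above the first. The interval is produced by a visual proportion rather than by musical notation.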

Data visualization/auralization

The same processes and methods that have allowed us to engage in the production of transmediatic pieces (both visual and aural) have also allowed us to visualize the incommensurable sonic qualities of objects and surfaces. In Transmedia: an improvisualization (James et al., 2020), the authors describe the process of transferring a sonic artifact created from an image (sensory transmediation: visual to aural) between two computers using a transducer speaker placed on surfaces with different material properties. The sonic artifact is recorded by the environmental microphone of the second computer, redigitizing the signal (signal transmediation: digital to analog, analog to digital). The sonic artifact then gets reconstructed as an image (sensory transmediation: aural to visual) and compared to the original visual artifact. The differences between the two artifacts would represent the sonic qualities that the transducer speaker incorporated from the surface where it was placed and the environmental sound of the room. By using transmediation to create similar conditions between events of a different nature, this experiment allowed the authors to objectively compare qualities in sensory stimuli that have not been explored in this capacity. Examples of semiotic transmediatic approaches to comparative visualization can also be found in previous work (Peña et al., 2017).
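
The comparison step of such a round trip can be sketched in Python. The sketch does not reproduce the experiment in James et al. (2020): the physical path through speaker, surface, room, and microphone is replaced by a hypothetical `simulate_surface` function that simply damps every sample toward silence, and all names and values are assumptions made for illustration.

```python
# Hypothetical stand-in for the speaker/surface/microphone path.
SILENCE = 128  # midpoint of 8-bit unsigned audio

def simulate_surface(samples: bytes, damping: float) -> bytes:
    """Scale each sample toward silence, as a crude model of an absorbing surface."""
    return bytes(round(SILENCE + (s - SILENCE) * damping) for s in samples)

def difference(original: bytes, returned: bytes) -> list:
    """Per-sample change introduced between the original and the returned artifact."""
    return [r - o for o, r in zip(original, returned)]

# The original artifact: pixel values that were played as audio samples.
original = bytes([0, 64, 128, 192, 254])

# What "comes back" after the simulated trip, read as pixel values again.
returned = simulate_surface(original, damping=0.5)

# The residue attributable to the (simulated) surface.
residue = difference(original, returned)
```

In this simulated case the residue is largest at the extremes and zero at the midpoint, which would appear as reduced contrast if both byte strings were displayed as images. With a real recording, the residue would also contain the environmental sound of the room.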

Provotyping and new materialism

This approach has also allowed us to create transmediatic provocative types or provotypes. Provotypes ‘expose and embody tensions that surround a field of interest to support collaborative analysis and collaborative design explorations across stakeholders’ (Boer and Donovan, 2012, 388). These tools are often used as resources for collective creation and discussion by directing the attention of the participants towards an artifact. Provotypes are common in the toolkit of speculative design, which focuses on the solution to future problems that might fall within a certain scope of possibility (Dunne and Raby, 2013). By digitizing a currency bill, transmediating it into an audio file and making it available for streaming (sensory, semiotic, and signal transmediation), we generated a provotype that invited an open conversation aligned with current discussions on new materialism (e.g., Gamble et al., 2019). The provotype raised questions such as whether the conventional value of the currency bill is attached to a particular sense, semiotic system or signal; whether the production of the provotype itself is legal or not; and what the implications are of exposing others to a file that could represent a violation of the law even if its original form is completely lost (Takeda and Peña, 2019). When we tried to post the reconstituted image to Bandcamp, along with the sonification as a song, the image was rejected as an unlawful image, but the ‘song’ was not. Despite the extensive security measures used in currency to prevent forgery of material bills, even digital images of currency are seen as unlawful. The same data, represented sonically through signal/sensory transmediation, are not.

Another example, with a specific art/pedagogical/research intention, is Singling, software developed at the Digital Literacy Center (University of British Columbia). This software takes literal text and converts it into MIDI (Musical Instrument Digital Interface) code based on user choices for a wide range of semantic and syntactic transformations into musical parameters, ranging from instruments to note durations, envelopes, tempos, keys, and so on (James et al., 2021). It can function at multiple scales of transmediation, sonifying individual letters, words and meanings, phrases, or whole sentences, thus elucidating the textual dynamics of either short statements or large textual corpora. Drawing on Stanford’s Natural Language Processing and Princeton’s WordNet, with the companion Sentinet (for sentiment analysis) algorithms, it enables listening to structures and general meanings of linguistic utterances from a visceral, musical perspective, engaging listeners in the aesthetics of aural perception and revealing patterns in their own or others’ writing without dialogical distraction. As such, Singling facilitates all three forms of transmediation: sensory (visual to aural), semiotic (linguistic to musical), and signal (.txt to .mid file and, on playback, digital to analogue). The incorporation of speech-to-text algorithms means that signal transmediation can take place twice within the processors, from organic to digital and from digital to analogue. In this way, the user is made explicitly aware of dynamics and structures within language that are otherwise unconscious and implicit within language use.
Hence it can benefit language learners, those who are blind or visually impaired, performing writers and sound artists, or researchers working with qualitative data, without restricting them to a formulaic output or experience, as the user not only learns about language, but also about music and sound, discovering their own preferences through creating preferred sonification presets (which can be saved for later re-use).
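
To make the letter-level scale of this kind of sonification concrete, the following Python sketch maps letters to MIDI note numbers. It does not reproduce Singling's mappings, presets, or code: the choice of a C major scale, the alphabetical ordering, and the function names are assumptions made purely for illustration. The only external convention relied upon is that MIDI note number 60 is middle C and that note numbers range from 0 to 127.

```python
# Hypothetical letter-to-pitch mapping; not Singling's actual algorithm.
C_MAJOR = [0, 2, 4, 5, 7, 9, 11]  # semitone offsets of the major scale
MIDDLE_C = 60                      # MIDI note number for middle C

def letter_to_note(letter: str) -> int:
    """Map a..z onto successive degrees of the C major scale, starting at middle C."""
    index = ord(letter.lower()) - ord("a")          # a=0 ... z=25
    octave, degree = divmod(index, len(C_MAJOR))    # every 7 letters climb one octave
    return MIDDLE_C + 12 * octave + C_MAJOR[degree]

def sonify(text: str) -> list:
    """Turn the ASCII letters of a text into MIDI note numbers; other characters are skipped."""
    return [letter_to_note(ch) for ch in text if ch.isascii() and ch.isalpha()]

melody = sonify("Abc")     # three adjacent letters become three adjacent scale degrees
octave_up = sonify("ah")   # 'h' is seven letters after 'a', hence one octave higher
```

Even a mapping this simple makes a textual pattern audible: repeated letters become repeated pitches, and alphabetical distance becomes melodic distance. A different preset (another scale, or durations derived from word length) would foreground other structures of the same text.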

The examples presented here might seem sophisticated but are not intended to be exemplars of transmediation as a pedagogical approach. With this framework, we attempt to rescue the concept of transmediation as focused on the liminality between two different conditions (either sense, semiotic system, or signal) and the processes involved in the transference of knowledge, opening the concept to a wide range of possible pedagogical uses. Transmediation should invite learners to reflect on and discuss what happens in the boundaries between meaning-making systems and because of this, a seemingly banal activity such as digitizing a document (signal transmediation) should be sufficient to elicit inquiry. In previous work on glitch pedagogy, the authors provide strategies such as glot swapping, stitch skipping, and voice vaguing to demonstrate how seemingly banal activities, when combined with open access algorithms, allow even young children to explore the digital underbelly of the systems in which they are immersed and through which they acquire understanding. They do so through creative play that gives incommensurate input and thus exposes the normative and normalizing constraints that automated (digital) environments place on most contemporary learners.

Transmediation in a pedagogical context is commonplace, yet seldom is it identified as such, nor is it the subject of explicit teaching; often, however, it is a part of a devised curriculum that accommodates differentiated learning and learning styles. Offering students the opportunity to shift from linguistic modes of response to drawing, for example, is frequently employed by teachers especially with struggling or reluctant writers. Even within the Process Writing movement of the 1970s and 80s, a range of activities were subsumed under the stage in the process typically referred to as ‘pre-writing’. These include but are not limited to mindmaps, graphic organizers, sketching, peer discussions, and can go farther into embodied expression through tableau, physical enactment or dramatization, mapping and diagramming, and so on. At this stage in expression, the goal is to have students both plumb their own knowledge and also to not over-commit to initial ideas. As Sharples writes in An Account of Writing as Creative Design: ‘As a writer’s thoughts become externalised in sketches, notes, drafts and annotations, so these designs become source material for the iterative process of interpretation, contemplation and re-drafting. Writing is not an isolated mental activity, but is closely linked to other creative design tasks such as drawing and music composition’. (1996, 2)

These different approaches to traditional linguistic tasks were intended to make learners more aware of the repertoire of possible routes to meaning-making. The advent of digital technologies has greatly expanded the range of possible transmediation activities available to teachers and learners. And yet, at the same time, digital technologies facilitate transmediated expression at the expense of users’ awareness of underlying decisions being made for them rather than by them, creating a black box of operations not understood by the user, and often creating formulaic products that are branded, even in certain cases having the logo of the software company permanently associated with the output.

By extending the transmediatic activities used in learning through techniques of glitch pedagogy, there is an added dimension of metacognitive learning that takes place. As Sharples (1996) noted, ‘tools become apparent to their users when there is a breakdown in normal activity’ (11). ‘Some breakdowns display layers of embedded systems, down to the level of the computer operating system. Dealing with breakdowns of all kinds is an integral part of the learning process and one aim of recent research in writing has been to look beyond the untroubled flow of words…Writing as design shows the writer as a user of tools. These support the writing process, by providing a means to express plans and ideas as they occur, but the tools themselves may be contexts for other types of cognition and action, from displacement activities to breakdowns in which the tool rather than the writing becomes the focus of attention’. (Sharples, 1996, 12).

As ‘writing’ becomes increasingly a transmodal activity, students need to be aware of how different media and modes ‘blend, shape, and reshape each other in different ways, and consequentially transform the “shape” of an emergent interactional product’ and its related meanings (Murphy, 1969, 2012).

We propose that the full potential of this kind of learning can best be realized when a structured approach to such activities is used, one in which the learner is aware not only that multimodal (additive) or transmodal (interpolated) forms accrue and occur in the process of meaning-making with beneficial results, but that transmediative activities interrogate specific sense, sign, or signal systems and afford insight into how they correlate with larger systems (e.g., legal) and disperse intention and authorship (e.g., collaboration with other persons or algorithms), enhancing critical interpretive abilities (McCormick, 2011). These appear to be important understandings going forward, particularly in light of the increasing use of artificial intelligence and bots across domains of interaction with digital media. One recent example of social consternation over AI transmediation algorithms concerns the use of AI to produce visual art. Indeed, many galleries and online artist venues now forbid the use of AI-generated art, as the artist’s role has become one of providing textual instructions to the generative algorithm, which creates a graphic representation of those words based on its database of human-generated artworks. This revises our organic notion of craft and talent, the work of the artist (i.e., artwork), and takes us further into the post-human transmediatic moment than many are willing to venture. But it is only the latest of these technological interventions of artificial creativity: the live band replaced by the guy with a laptop sequencer, and so on. At risk is the idea of human uniqueness. Hence, we might find our aesthetic needs are artificial if our means are.
There are many instances of transmediative AI serving aesthetic needs that have been adopted by youth culture, perhaps the most common being the use of music streaming sites (e.g., Spotify), which base song selection on user-entered generic preferences and clicked ‘likes’ (visual-linguistic actions), which are then cross-referenced with other users’ data to generate aesthetic profiles of individual listening preferences. Some listeners will have colorful audio-reactive visualizers occupy the screens of their devices when they are only using the device to listen to streaming audio, given that no interaction is required when streaming is in progress.

As above, in all these instances of transmediation, the user serves an increasingly facile role, one which can provocatively be investigated using glitch pedagogy. Although the attention economy that capitalizes and drives these technological innovations relies on its user-base, the users require no specialized knowledge or training. In most cases, that knowledge is proprietary. Even when it is not (for example, when a user wants to know something about a particular song they hear while streaming audio), acquiring the knowledge requires conscious effort, and the information search they engage in will likewise be an interaction with capitalized AI search engines. They may, for example, use transmediative software like Shazam to identify the song and artist. They might search for music videos by the artist on YouTube, and so on. Each stage of their search relies on (and contributes to) a pre-existing database, which itself relies on the conformity of the information it stores (file formats, consistent categories, all coded endpoints returning prescribed types of data), all of which is hidden from the user except for the surface-level presentation of fully transmediated and conforming types of information.

We are not opposed to such technologies: by analogy, the old-school calculator helps people do complex equations quickly. But it helps to know something about math, even when the calculator does the hard work. Glitching calculators was a useful lesson for students, especially when they assumed that whatever answer the calculator gave them was automatically correct. To do so, the teacher could take two different calculators and take progressive square roots of the same number. Depending on how many decimal places the calculator’s processor used, the two resultant answers would quickly start to diverge. Ten processes later, the answers would be quite different as the rounding ‘errors’ accrued. The same pedagogical approach is rewarding for teachers who wish to help students understand any of the three categories of transmediation explored in this paper. Giving strange or poetic suggestions to an AI artwork generator, purposefully misspelling words in a search engine or misidentifying captcha images, and so on, can lead students on a path of discovery about both the language/signs they are using and the bots they are interacting with. Close analysis of the results can also be revealing, as has been the case with looking at human hands as rendered by AI visual art generators (Foley, 2022). Idiosyncrasies of interpretation in the process of transmediation are still prevalent and can help us appreciate the dialogical exchange we engage in daily with artificial intelligence. We become aware that error is a critical component of almost any system, and through such errors systems evolve and reveal themselves.
The human mind is capable of forging important connections and associations when faced with disparate outputs, and on a metacognitive level this is crucial to understanding our worlds and how they are constructed, reducing the assumed correctness of any system to a series of prescribed choices that are not necessarily always correct, regardless of whether the system is social, biological, or technical in origin. The over-facilitated user is given a glimpse of their own agency within an invisible system, and thus, their own responsibility in the normalizing of the products of those systems, which, in certain cases, are the users themselves.
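
For readers who wish to try the calculator exercise without two physical devices, the following is a minimal sketch in Python rather than a record of the classroom activity itself. It assumes two hypothetical calculators that keep 8 and 12 significant digits respectively, and a starting value of 2; the function name `glitch_calculator` is our own. Because repeated square roots on their own tend to leave only a small gap in the trailing digits, the sketch also squares the result back the same number of times, a step we add as an assumption because it makes the accumulated rounding easier to see.

```python
from decimal import Context, Decimal


def glitch_calculator(start: str, digits: int, steps: int = 10) -> tuple[Decimal, Decimal]:
    """Simulate a calculator that keeps `digits` significant digits.

    Returns (value after `steps` square roots, value after squaring back).
    The precision and step count are illustrative assumptions.
    """
    ctx = Context(prec=digits)        # hypothetical calculator precision
    x = ctx.create_decimal(start)
    for _ in range(steps):
        x = ctx.sqrt(x)               # each root is rounded to `digits`
    rooted = x
    for _ in range(steps):
        x = ctx.multiply(x, x)        # squaring back magnifies the rounding
    return rooted, x


if __name__ == "__main__":
    # Compare two simulated calculators on the same starting number.
    for digits in (8, 12):
        rooted, restored = glitch_calculator("2", digits)
        print(f"{digits:>2} digits: rooted={rooted} restored={restored}")
```

Students can compare the two printed rows, then vary the starting number, the precisions, or the number of steps, and discuss which of the simulated calculators, if either, should be treated as ‘correct’.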

Transmediation has become a dominant semiotic process in today’s world, one which is often taken for granted. This framework of sensory, semiotic, and signal transmediation provides a structured way of understanding an otherwise complex and often invisible but ubiquitous function of meaning-making in contemporary society. Understanding these functions as something more than additive layers of meaning, something which opens up an interpretative repertoire of sense, sign, and code comprehensions to cross-reference them, means helping students to restructure their thinking and understandings within obdurate and impenetrable systems that may not serve the best interests of peoples and the planet as a whole. Thus, transmediation within a pedagogical framework has the potential to contribute to critical thinking and to produce a more literate and metacognitively astute citizenry.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.