Abstract

There are at least two perspectives on the role of digital and connected media systems in relation to individual agency. One suggests that individuals gain agency by using digital devices and services, while the other holds that users become dependent on tech companies that use data flows to analyse and manipulate user behaviour. In this paper, we examine empirically how those two descriptions of agency work out and relate to each other. We use conversation analysis of face-to-interface interactions with smart speakers and intelligent personal assistants to examine the agency in front of the device, and we rely on interviews with smart-speaker users to understand the users’ strategies to curtail the potentially unwanted effects of smart and connected devices and services. With reference to concepts of ‘co-operative work’ and ‘cooperation without consensus’ and a discussion of media and data practices, this paper elucidates how the two agencies of users and of device and service providers are intertwined and distributed.

Keywords

Introduction

Smart speakers equipped with intelligent personal assistants (IPAs) – such as Amazon Echo with Alexa as the most popular version – are being used in an increasing number of households. In 2021, 35% of US households were equipped with smart speakers (NPM, 2022: 5), with similar numbers in Germany (Statista, 2022). They work by permanently listening for their activation or wake word. Once the device is activated, the recorded audio is relayed to servers, where it is converted to text and the text is automatically analysed through Natural Language Processing. In this regard, the permanent listening is, on the one hand, taken for granted by users – the smart speaker would not work as comfortably without it. On the other hand, it is also controversial because it is not clear how and for what purposes the recordings are processed by service providers and third parties and how this might affect the everyday life of users. Thus, it seems that there are two forms of the distribution of agency between users and devices. At home, humans give ‘voice commands’ to ‘digital assistants’. This suggests that, here, human users remain in control. However, once the command is translated into digital signals and out the door, the narrative changes. It seems that, on the macro level, the control is with digital platforms, tech companies, and impersonal algorithms.

These two perspectives on digital media and agency – one rather optimistic focusing on local accomplishments of user agency and another rather critical examining the exploitation of user activities through companies – are related to two different methodological and theoretical research approaches which are rarely combined. Our contribution, however, seeks to jointly discuss these two approaches based on empirical research and on a discussion of concepts of cooperation as developed by Susan Leigh Star (1993), Charles Goodwin (2018) and others. We argue that the practical cooperation of users invested in talking to the device and of companies invested in using the data for economic advantage is based on a combination of media and data practices in cooperation without consensus. Users and companies cooperate in producing data although users have little knowledge or say about the use of said data on the companies’ side. Users relate to smart speakers as media, through media practices, while companies relate to these devices as data-producing, via data practices. In this way, users are usually not aware of the concrete ways in which data are used to help produce media practices.

In elucidating the practical entanglements of users’ and companies’ activities through data, the paper relates to a scholarly debate on users’ agency vis-à-vis corporate power (cf. e.g. Silverstone and Hirsch, 1992), both more generally and in relation to processes of datafication (Van Dijck, 2014; Burkhardt et al., 2022) and algorithmisation (Willson, 2017).

After a discussion of the concepts of agency and cooperation in light of digital media infrastructures, we analyse smart-speaker use with two sets of interrelated empirical data, that is, audio- and video-recorded interactions with smart speakers and interviews with smart-speaker users. The first data set will show that the face-to-interface interaction in question is less unidirectional and straightforward than the term ‘voice command’ suggests. This holds especially in use cases where more than one person is using the smart speaker. Agency has to be established not only between the user and the smart speaker, but also between multiple users. The second part of our analysis details how users understand the companies’ strategies and how they try to prevent giving up too much of their agency. Finally, we conclude by discussing the empirical findings with reference to concepts of agency and cooperation as well as media and data practices.

Agency in interaction with smart speakers

In this paper, we understand agency as a practical achievement which has to be accomplished locally and situationally. It is not a trait or a quality of a given subject or object, but it is produced in the interaction and in a situation. In this vein, the affordances (Hutchby, 2001) of a technical or media object, its potential to afford agency, also have to be produced or evoked locally to be relevant. Here, smart speakers can be seen as ‘boundary objects’ (Star, 1993) which mediate between the local interaction and the entangled corporate data practices.

Agency is a problem central to the humanities. In a very basic way, with reference to Giddens (1984: 9), who in turn quotes the Oxford English Dictionary, one could say that one of the central dimensions of agency relates to questions of ‘power’; agency is based on the ‘capability of doing […] things’. A perennial discussion in social theory debates how this capability has to be defined and what its objects and counterparts are. Usually, agency is thought to reside in the human subject which in turn is constrained in its action by social structures. Many schools of thought in social theory have stressed the interlocking of agency and structure (cf. Bourdieu, 1984; Giddens, 1984). While this debate discusses agency in relation to social norms, conventions and institutions, there is a similar debate in media studies and the sociology of technology which looks at the nature of agency in relation to technical objects and media. Agency, here, seems to be endangered by complicated machinery that seems to acquire a life of its own, especially in times of the immersion of smart devices and artificial intelligence in everyday life (cf. Wessels, 2013). Rammert (2008) notes that agency becomes ‘distributed’ when algorithms make using devices more closely resemble human interaction; digital devices and services react in a different way to human input than, say, a hammer or a bicycle does. Smart speakers do this in a more fundamental way than other forms of media. As Natale and Guzman (2022) argue, digital interactive media like smart speakers and chat bots ‘go beyond transmitting and influencing the form of human messages – they generate messages’. In this way they ‘expand the role of technology within communication beyond that of a mediator to that of a communicator’ (Natale and Guzman, 2022: 630). Smart speakers, as well as other ‘communicative robots’ (Hepp, 2020a) thus pose even greater theoretical and practical problems to agency than older forms of media and technology. 
We do not want to jump to conclusions based on the particular interactive quality of smart speakers. Instead, we want to follow Natale and Guzman’s suggestion to study how the media’s ‘affordance’ (Hutchby, 2001) or encumbrance of agency ‘emerges in interaction and relationship with humans and their cultures’ (Natale and Guzman, 2022: 628). In doing so, we want to apply a praxeological and interactional approach to agency. In line with social theories invested in pragmatism, interactionism and ethnomethodology, we suggest that agency is a practical problem (Krummheuer, 2015). It has to be enacted in concrete situations by using means at hand – be it material goods, knowledge, or frames of reference and evaluation (cf. Goodwin, 2018). From this point of view, agency and affordances are not seen as structural potentials and qualities inherent in individuals or objects, but as potentials and qualities that relate to specific encounters of individuals and objects (Pentzold and Bischof, 2019; Nagy and Neff, 2015). We understand agency to be negotiated because this highlights the processual and collective aspects of agency. That is, how smart speakers relate to the agencies of actors involved remains a practical question which can be observed empirically. Practical media use is dynamic and has a material, mediatised and affective dimension. While users develop routines in dealing with the devices and therefore know which interactions succeed and which mostly fail, one could say that it has to be decided anew in every situation whether agency is retained by the individuals or ceded to the machine. In this sense, agency is negotiated between user and machine, as well as between different users in front of the machine. This view of the ‘micro-social order’ is grounded in ‘methodological situationalism’ (Knorr Cetina, 1988) and informs the first part of our empirical analysis.

While users and smart speakers can be seen to co-produce agency, solely focussing on the interaction in the living room misses out on important aspects of digitally networked media use. It seems that the classic dichotomy between micro and macro approaches and, accordingly, between individual agency and structural limits to it reappears when dealing with digital media and smart speakers in particular. In front of the smart speaker, it is the user who initiates an interaction by voicing a command which in turn is executed by the machine. From this perspective, users are in command because they give commands. However, when considering the embeddedness of smart-speaker use in everyday life and concrete situations, it is not only the user and the device which are relevant, but other potential users, other objects (and connected devices), items and ideas that have a say in what happens.

In addition to the entanglements of users and smart speakers in the situation of use, IPAs are connected to data centres which analyse most of the voice commands and allow the system to respond. Thus, data that can be analysed for usage patterns, preferences, voice profiles etc. is shared with device and service providers and potential third parties (which, e.g., provide applications used with smart speakers or smart-home devices). As the data, and the analysis it is based on, can be easily copied, stored and shared due to its digital nature, users give up this information and lose control over it. The second part of our empirical analysis will detail how users deal with this potential for data and agency loss.

In this view, companies that sell smart speakers and provide the infrastructure for their continued use – through servers, AI software, integration of third-party services etc. – can be seen as ‘corporative actors’ (Hepp, 2020b: 10) with an agency of their own. As collective agents, the companies behind the IPAs use the data collected during smart-speaker use to enhance the capabilities of the IPAs, but also to train speech-based AI that is used and rented out to other clients (cf. Crawford and Joler, 2018), e.g., in call centres (cf. Volmar, 2019). Whether this commercial strategy is criticised as extractivism (Sadowski, 2019) or digital colonialism (Couldry and Mejias, 2019), this theoretical debate focuses less on users as subjects with their own agency than on users as unpaid labourers who provide data while using paid or free services and who are manipulated through nudging, ads and interface design; IPAs are seen as the latest frontier in capital’s ongoing data harvesting (Turow, 2021). From this point of view, it seems that the companies behind the services and devices are in control. Their agency is enhanced in a monetary way, by profiting from the sale of data or the placement of personalised advertisements on their platforms; it is also enhanced by targeting the users of smart speakers through data analysis, as suggested by Zuboff’s (2020) term ‘remote control’. It is hard to ascertain how this actually takes place, as companies are secretive about their technically complex data use. It is also hard to ascertain on a financial level, as all smart-speaker providers also make profits from other avenues. As such, we suggest that the overall economic growth and returns of companies like Amazon, Apple, and Google can be seen as an index of agency and the power for ‘doing […] things’ (Giddens, 1984: 9) through profiting from their data practices.
While taking earnings or revenues as an index for agency contrasts with the micro-sociological approach we are taking in this article, analysing these corporate practices on the side of companies is beyond the scope of this article. We, however, call for studying the data use of said companies on a micro level to also gauge how sense, meaning and economic value are produced situatively. Ultimately, research should come up with a trans-situative analysis (Scheffer, 2013) of the entanglement of human and automatic sense and value making in smart-speaker use.

The theories on digitalisation mentioned above are not only part of the scholarly debate on digital media, but have a certain salience in public discourse, too (cf. Zuboff, 2020). It is commonly understood that, after a time of hope for positive change through digital and connected media, the last few years have given rise to a rather wary and gloomy outlook (cf. Lovink, 2019) and to legal efforts to curb the power of online platform and online service companies. Studies that compare smart-speaker users to individuals who prefer not to use smart speakers show that the latter are concerned about data breaches and a loss of privacy (Lau et al., 2018). As such, users have to reconcile their own use of smart speakers with the potential loss of control over their data and its consequences.

In the following, we suggest not taking sides in the theoretical debate on whose agency is more important or ultimately trumps the other. Instead, we suggest studying the accomplishment (Garfinkel, 1967: 32) of agency as an empirical problem for users. They have to come to terms with the device in its everyday use as well as the data practices of the companies. Thus, we will look at how agency is realised in the practical use of devices, and how users understand and try to maintain their agency vis-à-vis the data infrastructure and data practices of IPA providers and third parties. Ultimately, this suggests that agency is not a zero-sum game; instead, both sides can have more agency through the cooperative use of smart speakers, even though we agree that companies, in the long run, have potentially bigger gains in agency.

Smart speakers, data practices, and cooperation without consensus

Research on IPA use has followed the dichotomy mentioned above by studying either the interaction in front of the device or the background operation of the smart-speaker infrastructure and its evaluation by users. That is, research has dealt either with users’ agency vis-à-vis the device or with the companies’ agency and its curtailment by users. On the one hand, concerning the interaction in front of the device, studies have shown what topics and commands users actually use (Ammari et al., 2019). They have also highlighted how smart speakers are integrated into everyday conversations in the household (Porcheron et al., 2018). On the other hand, research into the actual workings of the IPA infrastructure has examined if there is evidence for actual eavesdropping when the device is not (accidentally) activated (cf. Ford and Palmer, 2019). Also, more and more literature deals with users’ understandings and perceptions of privacy and data security (cf. Mols et al., 2022; Lutz et al., 2020; Lau et al., 2018).

Still, hardly any research combines these two fields. Thus, there is limited insight into how those two sides of the device use are connected. If ‘methodological situationalism’ (Knorr Cetina, 1988) is taken seriously, we have to look at all sides of the interconnected situation. As established above, we are interested in how users and companies interact with each other and how this affects their respective agencies. They interact without necessarily sharing the same goals and without knowing much about the participants on the other side of the voice-user interface (VUI). However, they still work with material that has been provided or that has been reworked by the other side. We propose to study these phenomena using the term cooperation. This might seem counter-intuitive as cooperation is usually understood to imply shared goals of the participants – as in the ‘cooperative’ as an economic organisation. Theorisations that have their roots in ethnomethodology (Goodwin, 2018) and the Chicago School approach to ‘social worlds’ (Strauss, 1978) have emphasised that consensus is not necessary for cooperation. On a micro-analytical level, Goodwin’s notion of ‘co-operative action’ uses the now obsolete spelling to signify that cooperation is necessary even in conflict and that individuals use and reuse linguistic and other material provided by other parties. Goodwin’s linguistic example is one of an exchange between two children where ‘Tony’ asks ‘Chopper’: ‘Why don’t you get out of my yard?’ And ‘Chopper’ replies: ‘Why don’t you make me get out of the yard’ (Goodwin, 2018: 3). Chopper here re-uses parts of the original phrase to counter a taunt. That is, he subverts the original meaning of the phrase by recomposing its elements into something new. Tony and Chopper are at odds with each other, but they cooperate in establishing and potentially resolving this opposition in some way.
This linguistic perspective interests us particularly as smart-speaker use is based on spoken words.

However, the example does not fit our case well as we suggest that there are two parties who potentially gain in agency; Tony and Chopper might not be able to resolve their conflict in that way. Also, in the example given by Goodwin, the complexities of interaction are fairly low as we can assume that the point of contention is known to both parties. Susan Leigh Star, in turn, has examined numerous cases of ‘cooperation without consensus’ (Star, 1993) in more complex organisational settings. Here, the consensus is lacking because shared knowledge is missing or because there are differing interpretations of what a situation is about because participants are members of different social worlds. Star argues that cooperation in this case is possible because the interaction is facilitated by ‘boundary objects’, which can be understood and used in all participating social worlds because they ‘are objects that are both plastic enough to adapt to the local needs and constraints of the several parties employing them, yet robust enough to maintain a common identity across sites’ (Star, 1993: 103). One could argue that media in general – infrastructural media such as public transit and public media such as television (cf. Schüttpelz, 2016) – are such boundary objects. Types of boundary objects mentioned by Star include standardised (paper) ‘forms and labels’, ‘repositories’ such as libraries, ‘terrain with coincident boundaries’ and ‘ideal type[s]’ (Star, 1993: 104–105). In this vein, smart speakers can be seen as boundary objects because they operate in the home as well as part of the company’s networked systems. They make sense in two social worlds and they can only operate if they do so.

In addition, the smart speaker is not only a boundary object like a paper form, but it is reactive and is addressed as a device that is able to speak and to give answers. That is, to a limited extent, regardless of a cooperation without consensus – with regard to shared goals, norms etc. – there has to be a consensus, a shared reference to the situation of the interaction, to the fundamentals, or ‘infrastructure’ of interaction (Schegloff, 2006). As Lucy Suchman argues in Human-Machine Configurations, interaction relies on ‘the detection and repair of mis- or different understandings. And the latter in particular […] requires a kind of presence to the unfolding situation of interaction not available to the machine’ (Suchman, 2007: 12). In cases where machines are not able to register that their activities are in contrast to the users’ wishes, users break out of the communication with the device and use other means to silence or interrupt the device. One of the examples from our study detailed later indicates how users try to negotiate interaction when the device is not responding in a way that competent members of a situation would. Here, users do refrain from interaction as communication and use other means for ‘doing […] things’ (Giddens, 1984: 9) and thereby achieve certain results. On this level, agency boils down to the control of devices outside of communicative control.

The accomplishment of consensus in cooperation can become problematic on another level, too. As digitally networked boundary objects, smart speakers allow for the cooperation of the company and smart-home inhabitants because they allow users to access online services as well as connected domestic appliances through media practices; simultaneously, they turn the spoken commands into data which allows companies to analyse them via data practices. Media and data practices are interrelated as one can argue that data can only be dealt with through a medium – be it a paper strip or a digital interface. However, recent developments in digital devices – such as the smart speaker – promise to free users from the hassle of dealing with data directly. Smartphones, for example, make it possible to access photos through an app without the need to look for the exact location of the photo files. While earlier command-line interfaces on Unix and DOS systems forced users to remember commands as well as their proper syntax, and while users of graphical user interfaces (GUIs) often need to remember where they stored relevant data, advertisements for smart speakers suggest a hassle-free use of IPAs with everyday language. Using a digital calendar via a smart speaker is supposed to work without typing in or looking up dates and information.

That is, while data practices become less obvious to users, they are central to the economic activities behind the provision of smart speakers. This level of cooperation without consensus becomes partially visible when users are confronted with end user agreements. Users have to agree to terms of service in order to use the devices while these terms obscure the extent and purposes of data analysis of the providing companies. Thus, while users formally consent, they do not necessarily agree to or know about the treatment of their data. In this sense, users cooperate without a consensus with the providers on a more profound level than in more egalitarian situations described by Star (cf. Star, 2010). It is specific to this form of cooperation that users are left in the dark intentionally. While it would probably be impossible to be completely transparent about their data practices, the obfuscation of the way data is treated and analysed inside the company is an economic strategy employed by online companies, as Zuboff (2019) makes clear in her genealogy of surveillance capitalism.

Methodology

The data analysed in the following were acquired in an ongoing research project that combines the analysis of face-to-interface interaction with the device with interviews with smart-speaker users. In this way, it employs methods and research from empirical linguistics and media sociology. This two-pronged approach is part of an interdisciplinary research project at the Collaborative Research Center Media of Cooperation at the University of Siegen. The main part of our study comprises eight German households. Our sample includes users of the three popular devices Amazon Echo, Google Home, and Apple HomePod living in various forms of household compositions, that is, older and younger couples, shared flats, families with children, partners living apart together etc. Household members recorded and interviewed ranged from students in their early twenties to pensioners in their sixties.

The analysis of the actual use of the device is based on the audio and video recordings of the installation of the device (106 minutes video material) and other use situations (12 hours audio material), both of which were transcribed according to the GAT2 standard for transcribing talk-in-interaction (Selting et al., 2011) and the conventions for multimodal transcription (Mondada, 2016). For practical and data protection reasons, a continuous audio recording of the actions in the household over several weeks was impossible. We recorded the interactions with IPAs via a device called Conditional Voice Recorder (CVR). The CVR was installed at the beginning of smart-speaker use and reintroduced a few months later in order to capture an exploratory as well as a routine usage of the device. We also relied on data that users were able to provide as protocol data. These are audio files of the voice user commands which are accessible via the ‘voice history’ stored in the Alexa smartphone app or the user’s Google account; Apple does not offer access to these data (for a more detailed description of this data type and its potential benefit to research see Habscheid et al., 2021). The analysis of these several data sets is carried out by means of conversation analysis and interactional linguistics (Couper-Kuhlen and Selting, 2017) as well as multimodal interaction analysis (Goodwin, 2000; Mondada, 2013) on the one hand but also with a praxeological basis on the other (cf. Goodwin, 2018; see above). Thus, we applied sequence analysis (Schegloff, 2007; Mondada, 2019) as a central procedure as it has the potential to link the interests in linguistic forms and communicative functions to the interest in media practices that are carried out in the recorded situations. The sequence-by-sequence approach analytically unravels the intertwined dynamics of a situation and hence allows us to have a closer look also at the role of technology within those.
Within this framework, the linguistic details also provide insights into the practical accomplishment and negotiation of agency.

In addition to the analysis of interactions, we conducted qualitative interviews with the same research participants employing the ‘problem-centred’ approach (Witzel and Reiter, 2012). This approach allows us to discuss a perceived problem with interviewees in more depth by pointing to contradictions in the interviewee’s statements. We also added interviews to enrich our data set with types of households not present in the main branch of our study. Interviews were conducted at the beginning of the smart-speaker use and after a few months of use. Due to the COVID-19 pandemic, we were forced to conduct the interviews online. The interviews were transcribed and analysed following a case-centred approach in qualitative content analysis (cf. Kuckartz, 2018).

Users’ agency in front of the smart speaker

In the following, we will examine the use of the smart speaker in the situation. We take a look at two dialogue constellations in this context: (a) the exchange between the conversational user interface and the users and (b) interactions between co-present users which happen to take place in situations which are dominated by the use of an IPA. During the first installation of the smart speaker in the home, users on the one hand address the smart speaker, but also talk to each other about the smart speaker (Habscheid et al., 2023). The following excerpts are taken from video recordings of such installations and shall demonstrate the negotiation and organisation of agency in situ. We chose to focus on such situations because they showcase conflicts about agency, that is, the control over the set-up process. This process is more volatile than the everyday usage because the technology’s placement into the home is not settled and users have to experiment with how to integrate the technology into their routines.

Before we turn to these data in more detail, we want to present a standard case of a voice user command. The following excerpt is taken from the abovementioned corpus of protocol data. These standard cases consist of a unidirectional command-answer-sequence without any further negotiation and with no repair or follow-up dialogue documented, such as in the following example:

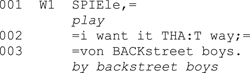

Example (1): ‘Backstreet boys’

The smart speaker does not respond with spoken words but does as told. It seems that this is also the standard distribution of agency at least on the level of conversation and dialogue. Most of the entries in the protocol data follow this pattern. However, as Habscheid et al. (2021: 44–45) point out, this data type cannot show the embedding of the voice commands and the use of the smart speaker in the social situation. As we want to refer to agency as ‘managed accomplishment’ (Garfinkel, 1967: 32) from a praxeological perspective, it is necessary to take data types into account which can deal with the social situations as ‘dynamic, emergent, intertwined totalities’ (Schüttpelz and Meyer, 2018: 174). This is a condition for understanding media as a component of and as embedded in social practice (cf. Goodwin, 2018) and hence for an empirical view on agency distribution and negotiation in situ.

The above-mentioned video recordings fit with these needs and illustrate that especially in the situation of the first installation the distribution of agency is disputed. First, an example will be shown in which the negotiation is carried out between the user and the conversational interface. The participants of the following conversation – in addition to ‘Alexa’ – are two residents of a shared apartment, Alex and Lukas, students from Germany in their mid-20s. The following excerpt is taken from the setup of the smart speaker in their shared living room.

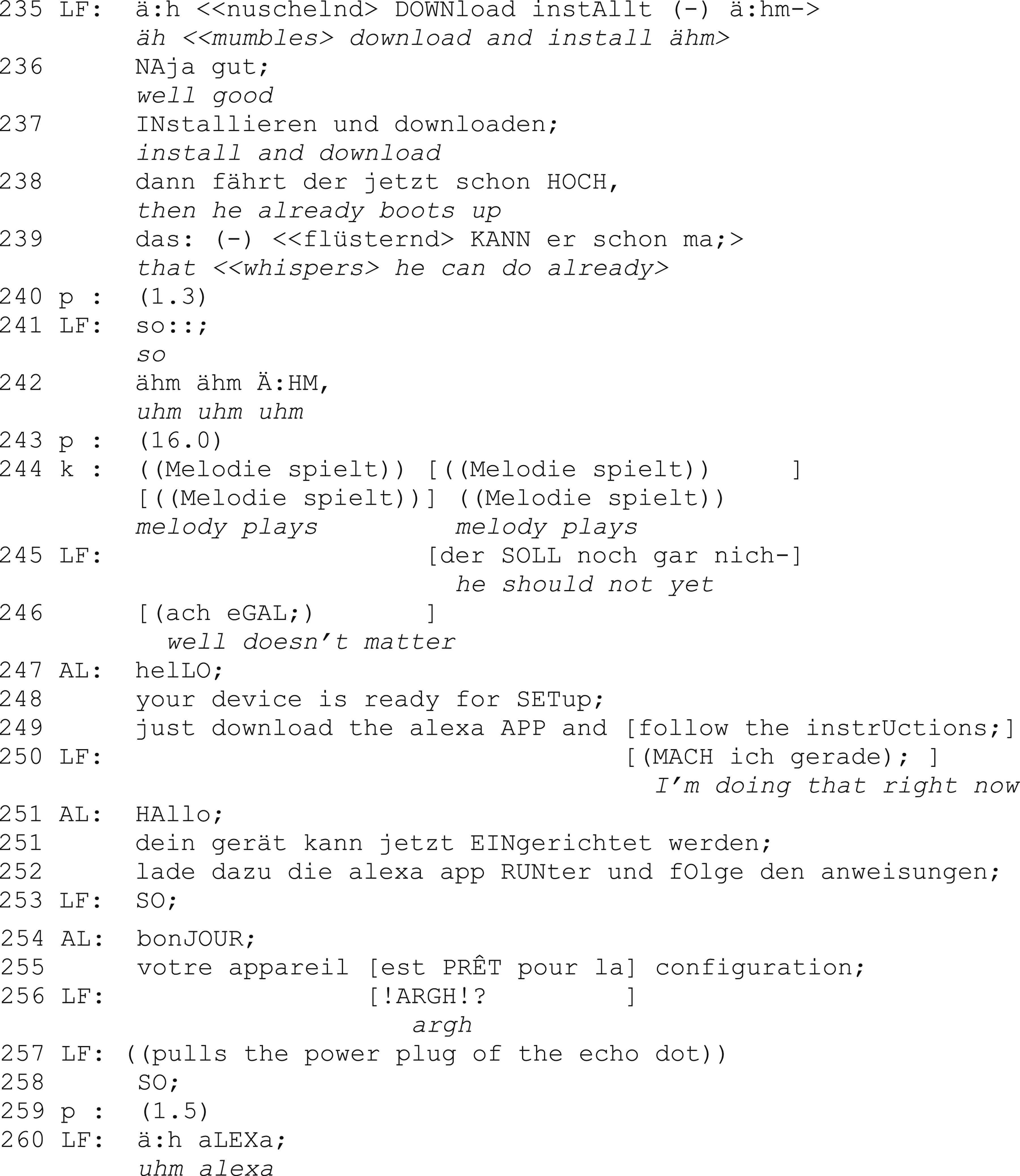

Example (2): ‘He should not yet’

In this example, the smart speaker starts the installation program, although this is not intended by the user as we can see in line 245. As Lukas’ attempt to stop the smart speaker’s installation fails (l. 246), the installation program continues and starts to produce verbal signs, but Lukas is not ready to react. The smart speaker then continues to produce the same request (to download the Alexa app) in different languages and as it starts the third one (in French), Lukas pulls the plug (l. 257) and thereby stops the program. What is noticeable here are the strategies to gain control over the acoustic signals and the conversational floor ‘defined as the acknowledged what’s-going-on within a psychological time/space’ (Edelsky, 1981: 405) as well as over the procedure to install the smart speaker and the temporal order of the installation. Lukas regains this sovereignty, and to do so he chooses to ‘solve’ the communicative problem not on the level of the program, but externally, via the power plug. We argue that strategies like these – a break-out of the action logics of the programme and a transition to situationally emerging action potentials – can be part of the negotiated agency.

But, as we can see in the next example, turns that follow the conversational interface’s flow can also lead to a negotiation of sovereignty and control over the whole situation. Damaris and Jan-Ole, a student couple in their early 20s living together, install the smart speaker for the first time. The following sequence is taken from the phase in which the IPA suggests that they ‘get to know each other’.

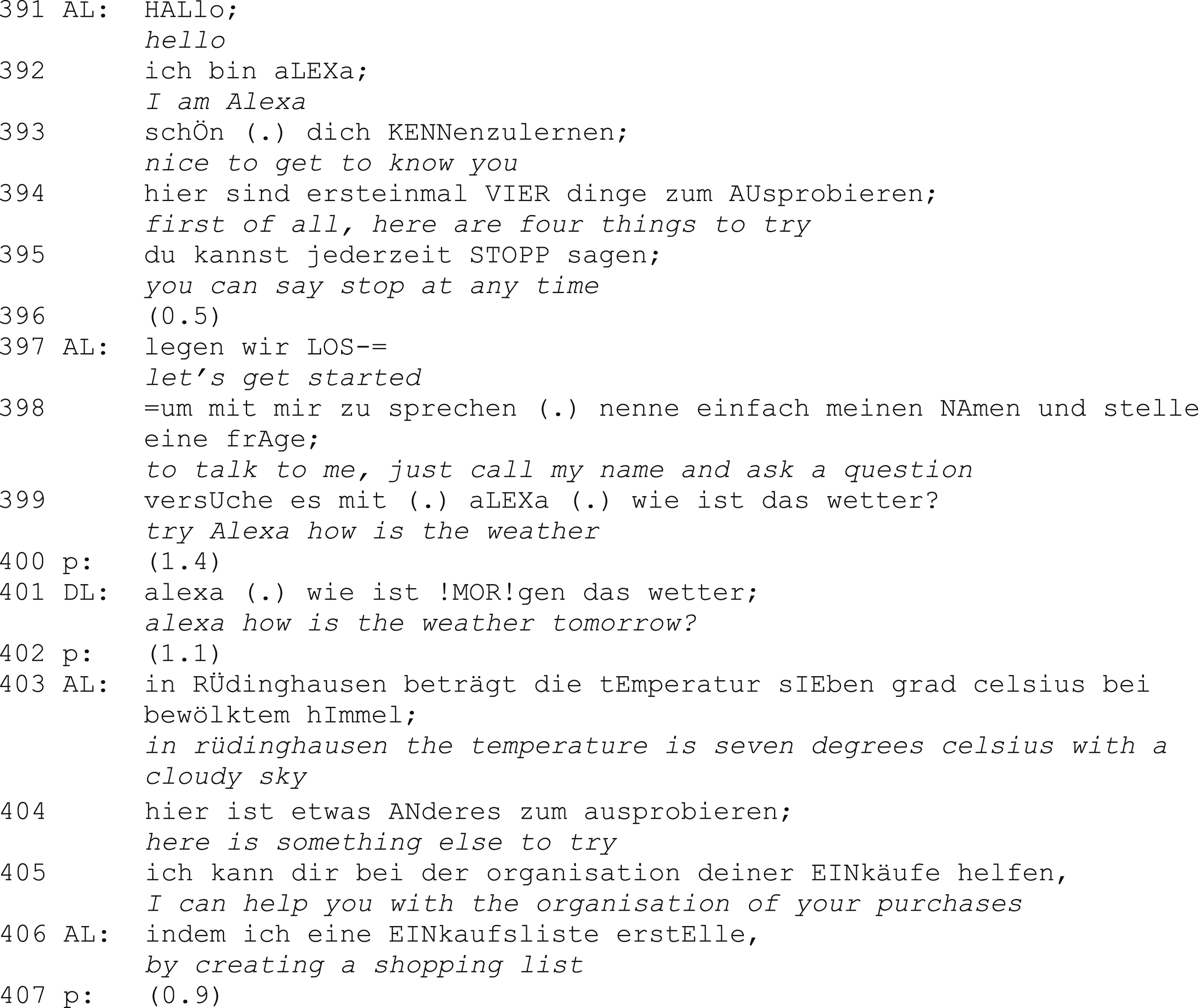

Example (3): ‘How is the weather tomorrow’

The suggested request (l. 399) is taken up by Damaris but transformed into a slightly different request by adding one word (‘morgen’ – ‘tomorrow’). In doing so, she changes the meaning of the question and performs a new action by operating on the linguistic material of the smart speaker. She is, in the sense of Goodwin (2018: 2–6), co-operating with the smart speaker, which does not mean that they act in consensus or even perform the same action. On the contrary, by just adding one word and transforming the suggested request into another one, Damaris demonstrates that she can leave the paths of the programme and that she is not bound by the suggestions of the device. Also, the gestures and the gaze to her partner – which are apparent in the video recording of the interaction – underline that she is about to do something unexpected. The strong emphasis on the first syllable of ‘!MOR!gen’ (l. 401) within the turn-constructional unit indicates that this is neither a mistake by Damaris nor a sign that she is interested in the weather forecast for the following day, but that she is explicitly challenging the system (cf. Selting, 2008: 231–232). The answer by the smart speaker is (expectedly) not coherent with the request: it produces not the weather forecast for tomorrow but a description of the current weather in their hometown. The device was not able to react to Damaris’ modification of the suggested phrase. While Damaris did not get the answer she asked for, she nonetheless displays her independence and agency vis-à-vis the device to the other person present. It is demonstrated, to the co-participant Jan-Ole, that Damaris as a human actor has the power to not follow the instructions; she also positions herself as intellectually superior to the device by ‘doing being’ (Sacks, 1984) in control.
The interaction with the device is simultaneously an interaction with the other human user in front of the device and thus a form of negotiation of ‘how relations of sameness or difference between [machines and humans] are enacted on particular occasions’ (Suchman, 2007: 2). Here, human agency becomes relevant as the ‘capability of doing […] things’ (Giddens, 1984: 9) differently than suggested by a machine.

One last example in which agency occurs as negotiated is taken from the shared flat of Lukas and Alex. In this excerpt, agency is negotiated not between the smart speaker and the user but between the two users:

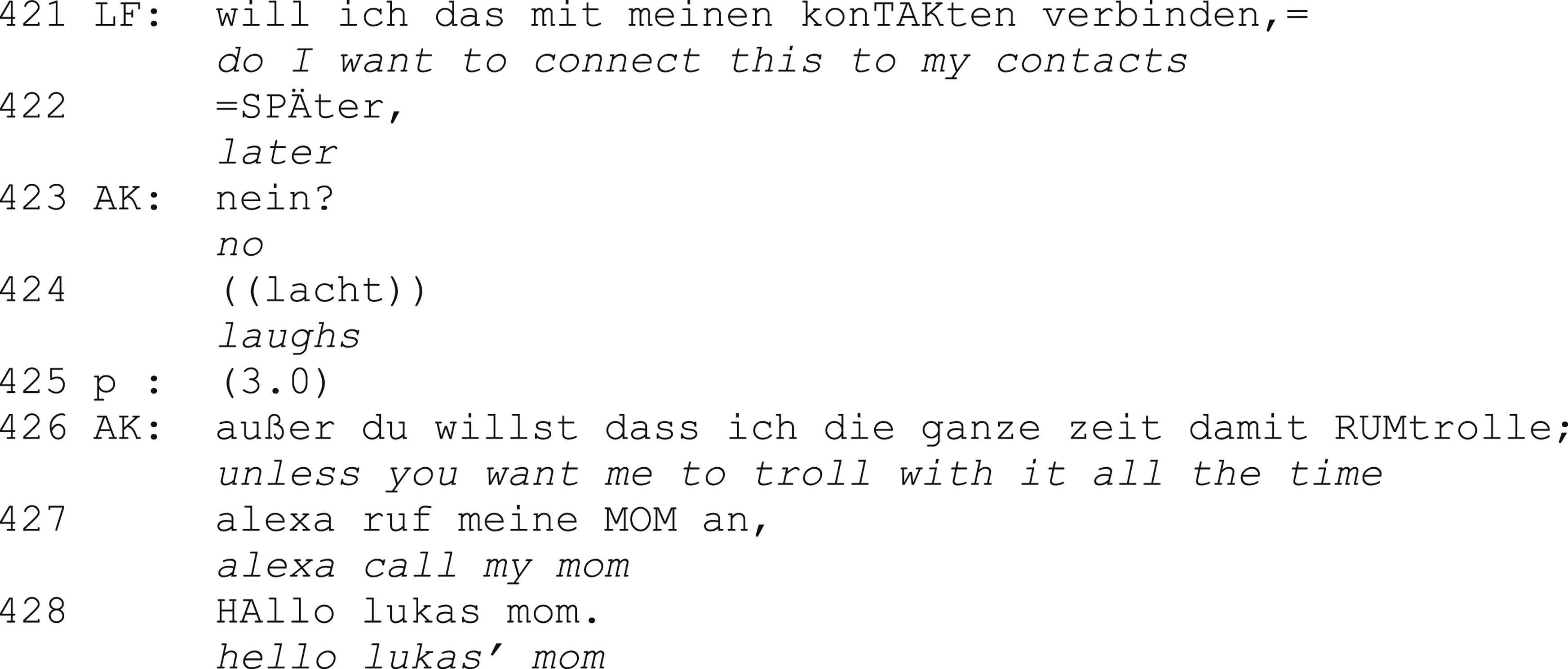

Example (4): ‘Alexa, call my Mom’

Lukas reflects on whether he should connect the smart speaker to the contact list on his mobile phone. His flatmate Alex, who is entering the room, immediately participates in this interaction by answering Lukas’ question with a ‘no’ (l. 423). Both Alex’s laughing and Lukas’ smiling (as documented in the video) as well as Alex’s comment ‘unless you want me to troll with it all the time’ (l. 426) frame the interaction in front of the device as a humorous one, in which Alex also teases Lukas. At the same time, they – also in a humorous way – negotiate the possibility of misusing the smart speaker. As it is placed in the shared living room of the flat, both can address the device equally, and the utterance by Alex (l. 426) refers to the possibility of tricking the other user by having access to his private data through the device. In doing so, they tie the negotiation of who has access to the device (and possibly to the personal data associated with it) back to the actual practical situation of use. The following utterances by Alex (l. 427–428) demonstrate this prospective action by using fictional reported speech. Taking this into account, the potential ‘trolling’ (l. 436) can be seen as framed as a ‘nonserious action’ (cf. Clark and Gerrig, 1990: 802; Ehmer, 2011: 75), and an ironic distance is interactionally created by Alex (cf. Hartung, 2002: 99–102). Despite this distancing, Alex negotiates a form of agency between himself and Lukas, in the sense that he describes and demonstrates a potential scenario in which structures of access and power can be very relevant. As data collected and analysed by Porcheron et al. (2018) illustrate, these questions do play an important role in the usage of VUIs in everyday life.

Users’ agency and the containment of corporate data practices

As we have seen, agency is part of the user’s face-to-face as well as face-to-interface interaction, and it is achieved with different strategies in situ. Here, IPAs are most commonly addressed as potential participants in interaction and not as devices that enable data practices. However, users do reflect on these data practices, as critical perspectives on IPAs, smart speakers, and digital technology in general are part of public discourse. Thus, users have to acquire a certain understanding of how their agency is related to and re-composed through smart-speaker use. We want to analyse these views in the following.

We will return to the two households mentioned above. Although Alex and Lukas have a somewhat more elaborate view of the data processing involved in the smart-speaker operation, they come to conclusions similar to those of the young couple, Damaris and Jan-Ole, who have less to say concerning the data issue. Alex and Lukas bought two Amazon Echo devices while moving into their new apartment. They set them up in the living room and the bathroom respectively. In the interviews we conducted, they suggest that they did not talk much about the potential analysis of data and data protection issues before and during the acquisition and use of the device. However, Lukas has a sense that they are being ‘surveilled’:

‘Um, you are being surveilled to a certain extent. I do see that too. However, it’s the question what the data is used for.’ (Lukas, Interview 2: 158)

Alex also is suspicious of Amazon’s data practices, as they cannot be controlled easily. Both suspect that the data provided will be analysed. As they do not know how and to what effect, they assume that it is of little relevance to their everyday lives. However, they chose to not set up a device in their bedrooms. Eventually, Alex comes to the conclusion that the device’s convenience outweighs potential risks:

‘Well I think that most people know about it in the back of their mind somehow. And yes, it’s problematic, potentially. But I don’t care. Because the thing does what it’s supposed to do. I can listen to music; I can ask it to tell me the time and I can access my calendar. And that’s good enough.’ (Alex, Interview 2: 118)

Damaris and Jan-Ole, however, both suggest that they would be fine with placing an Echo in their bedroom. Jan-Ole argues that he has to give away data all the time anyway when he uses digital services. He suggests that he actively ignores thinking about the issue:

‘I did not think about it, because I think that, well, I don’t even want to know how much everyone knows about me already – all the things that I don’t think they know.’ (Jan-Ole, Interview 1: 29)

None of them used the tools that Amazon offers to control privacy intrusions – e.g., the button that deactivates the microphone or the review function in the Alexa App, which also allows users to delete individual or all recorded voice commands. Lukas and Damaris, however, state that they would never use the Echo for commercial or banking activities. That is, they would neither shop via the Echo, nor would they share their bank account information with the device to access third-party services. Here, they see the potential for a misuse of data that has an immediate – financial – effect, unlike the data analytics whose effects they cannot be sure of.

Our interviews mirror the results from other interview studies with smart-speaker users (Pridmore and Mols, 2020; Lau et al., 2018) and are comparable to users’ perspectives on digital data practices more generally. These sentiments have been framed either as ‘privacy calculus’ or, more negatively, as ‘online apathy’ (Hargittai and Marwick, 2016) or as ‘privacy cynicism’ (Lutz et al., 2020). However, the financial aspects are of particular interest here, as they show that there is no pure apathy involved. Instead, they suggest that some users find their financial data more sensitive than other data about their everyday activities. As Lukas puts it:

‘Because that’s something that affects me directly, I think. It’s something that harms me on a financial level. All the other things are like: I don’t know of it even if it could harm me somehow or if it could work against me somehow.’ (Lukas, Interview 1: 142)

This shows that users are not enabling all kinds of data practices. They also avoid or counter overt forms of manipulation by announcing that they would discontinue using the device once it starts spouting advertisements. As users are confronted with advertisements constantly elsewhere, one could argue that using a smart speaker currently offers fewer possibilities for manipulation than, e.g., using social media platforms. However, users seem not to be aware that they are performing unpaid labour by providing training data for the AI behind the smart-speaker system; they also seem unaware of how the data can be used by corporations who try to influence them (Turow, 2021). That is, they seem to care more about their personal data than about their data practices, which are profitable beyond providing information about personal preferences and routines.

In conclusion, users are willing to use devices although the data practices of the device providers are questionable to them. As these practices do not seem to affect them directly, users are willing to continue using smart speakers as long as they are beneficial to their domestic interaction. Eventually, however, Damaris stopped using their smart speaker for anything but turning on the radio for the dog when she is at work because she finds the interaction with it ineffective, time-consuming, and cumbersome, as the IPA does not understand what she wants it to do. Here, the promised convenience is not achieved and agency is not increased. Again, for these users, it is the evaluation of the face-to-interface interaction that seems more relevant for the actual use than the debate about data practices.

Two agencies in cooperation without consensus

As illustrated above, it is relatively easy to pin down agency within the household where the IPA is used. As we have shown through sequence analysis of use-case-situations in the first installation, users are able to retain control or a sense of superiority even in complicated situations. Ad-hoc strategies emerge to regain and demonstrate situational power and also to negotiate potential control-relevant situations in the future, using the (linguistic and physical) material at hand.

However, it becomes more complicated when looking at the picture beyond the living room. Here, it is difficult to ascertain if and how individual agency is diminished and what exact gain companies have in each act of smart-speaker usage. This is true for us as researchers as well as for users. While we agree with critical research’s insight into the capitalistic base of the IPA infrastructure, we also have to acknowledge that the capitalisation as datafication of everyday life through smart-speaker use seems mostly irrelevant to users in said everyday life. While it would be naive to suggest it is not relevant at all, it is not relevant when voice commands are used, even though the data collected could be used in the immediate or near future to influence the users. In Garfinkel’s words, the back-end of the IPA infrastructure often is not and does not have to be included in an ‘account’ (Garfinkel, 1967: 32) of the situation at hand.

In focusing on situations of use in cooperation, we highlighted that these situations are formative for the several forms of agency at play. That is, as cooperation connects individuals through things in situations, there are always several agencies that are produced in said cooperation. Contrary to views that focus on the agency of individuals, agency is not a finite resource that can only be held by one party involved. We want to emphasise that participants enhance their agency through cooperating.

To us, it seems important that both sides can act in new ways and increase their potential ‘to exercise some sort of power’ (Giddens, 1984: 14). Users can find ways to automate routines in their household and to do things hands-free; companies offering smart-speaker services see an increase in revenue or, at least, free AI-training data. While every act of using a smart speaker adds only little comfort and only small specks of data, both add up in the long run. Because companies source the data of several thousands of households, the power they derive from smart-speaker usage is, however, significantly greater.

The analytical distinction between media and data practices allows us to show that users in our study try to avoid overt data practices. This might also explain why users do not use the data management options offered by Google and Amazon devices. We would argue that the users’ avoidance of thinking through the consequences of the data practices associated with device use is one way of increasing their agency. When comfort and convenience are conceived as the avoidance of data practices (e.g. setting a timer on a phone manually rather than through a voice command), it makes little sense to reflect on device-specific data practices, especially when they seem not to have any direct or indirect consequences. It makes even less sense to actively manage them through an app or through voice commands. Thus, we suggest that the differentiation of data and media practices is a useful heuristic for the study of digital phenomena.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Deutsche Forschungsgemeinschaft (262513311 – SFB 1187).