Abstract

Background:

Computerized neuropsychological assessment devices (CNADs) for screening and monitoring cognitive impairment are proliferating rapidly. Previous reviews of computerized tests for multiple sclerosis (MS) were primarily qualitative and did not rigorously compare CNADs on psychometric properties.

Objective:

We aimed to systematically review the literature on the use of CNADs in MS and identify test batteries and single tests with good evidence for reliability and validity.

Method:

A search of four major online databases was conducted for publications related to computerized testing and MS. Test–retest reliability and validity coefficients and effect sizes were recorded for each CNAD test, along with administration characteristics.

Results:

We identified 11 batteries and 33 individual tests from 120 peer-reviewed articles meeting the inclusion criteria. CNADs with the strongest psychometric support include the CogState Brief Battery, Cognitive Drug Research Battery, NeuroTrax, CNS-Vital Signs, and computer-based administrations of the Symbol Digit Modalities Test.

Conclusion:

We identified several CNADs that are valid to screen for MS-related cognitive impairment, or to supplement full, conventional neuropsychological assessment. The necessity of testing with a technician, and in a controlled clinic/laboratory environment, remains uncertain.

Introduction

Roughly 50%–60% of patients with multiple sclerosis (MS) suffer from cognitive impairment (CI).1–3 Currently, widespread screening for MS-related CI is limited by the considerable time and staff training required to administer traditional neuropsychological (NP) tests. Computerized neuropsychological assessment devices (CNADs) require fewer staff resources and less time to administer and score and may represent a viable alternative. This perspective hinges on both CNADs and conventional NP tests meeting adequate psychometric standards prior to clinical implementation. 4 Furthermore, confounding factors that may influence test validity should be accounted for, such as the degree of test automation and technician involvement/supervision. Previous reviews concluded that, despite some promising results, the scope of impairment targeted by CNADs is limited, motor confounders are not accounted for, and psychometric data are lacking.5,6 While informative, the reviews did not cover psychometric properties in depth and omitted several individual tests not part of a larger battery.

Our objective was to assess the status of psychometric research on CNADs in MS and identify the most promising approaches for future research and clinical application. We endeavored to examine systematically the literature focusing specifically on test–retest reliability (consistency over repeated trials), ecological or predictive validity (relationships with real-world outcomes and general clinical measures), discriminant/known groups validity, and concurrent validity (correlations with established NP tests). Our overall aim was to help MS researchers and clinicians make informed decisions to apply select measures in MS research and clinical care.

Methods

Search protocol and inclusion

As per the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines, 7 we utilized PubMed, MEDLINE, PsycINFO, and Embase databases. The search terms and Boolean operators applied for each database were as follows: multiple sclerosis AND (neuropsychological test OR cognition OR cognitive function OR cognitive impairment OR memory OR processing speed OR executive function OR language OR attention OR visuospatial) AND (computer OR internet OR iPad OR tablet OR CNAD). Test names known to the authors were also employed: Automated Neuropsychological Assessment Metrics (ANAM), Central Nervous System-Vital Signs (CNSVS), Cognitive Drug Research (CDR) Battery, NeuroTrax, Cognitive Stability Index (CSI), Neurobehavioral Evaluation System (NES), Amsterdam Neuropsychological Test (ANT), Cambridge Neuropsychological Test Automated Battery (CANTAB), CogState Brief Battery (CBB), Cognivue, Cognistat, NeuroCog BAC App, CogniFit, BrainCheck, Standardized Touchscreen Assessment of Cognition (STAC), and the National Institutes of Health (NIH) Toolbox.
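The Boolean structure of the search can be made explicit in a short sketch. The term groups below are copied from the search description above; the helper names `or_group` and `build_query` are hypothetical and do not correspond to any database's interface, and each database may require its own field tags or syntax.

```python
# Term groups copied from the search description; helper names are
# hypothetical and not part of any database API.
CONDITION = ["multiple sclerosis"]
COGNITION = [
    "neuropsychological test", "cognition", "cognitive function",
    "cognitive impairment", "memory", "processing speed",
    "executive function", "language", "attention", "visuospatial",
]
TECHNOLOGY = ["computer", "internet", "iPad", "tablet", "CNAD"]


def or_group(terms):
    """Join alternatives with OR, parenthesizing groups of more than one term."""
    joined = " OR ".join(terms)
    return f"({joined})" if len(terms) > 1 else joined


def build_query(*groups):
    """Combine the OR-groups with AND, mirroring the search string in the text."""
    return " AND ".join(or_group(group) for group in groups)


QUERY = build_query(CONDITION, COGNITION, TECHNOLOGY)
print(QUERY)
```

Keeping the three concept groups separate makes it straightforward to add a further group (for example, the named test batteries) without rewriting the whole string.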

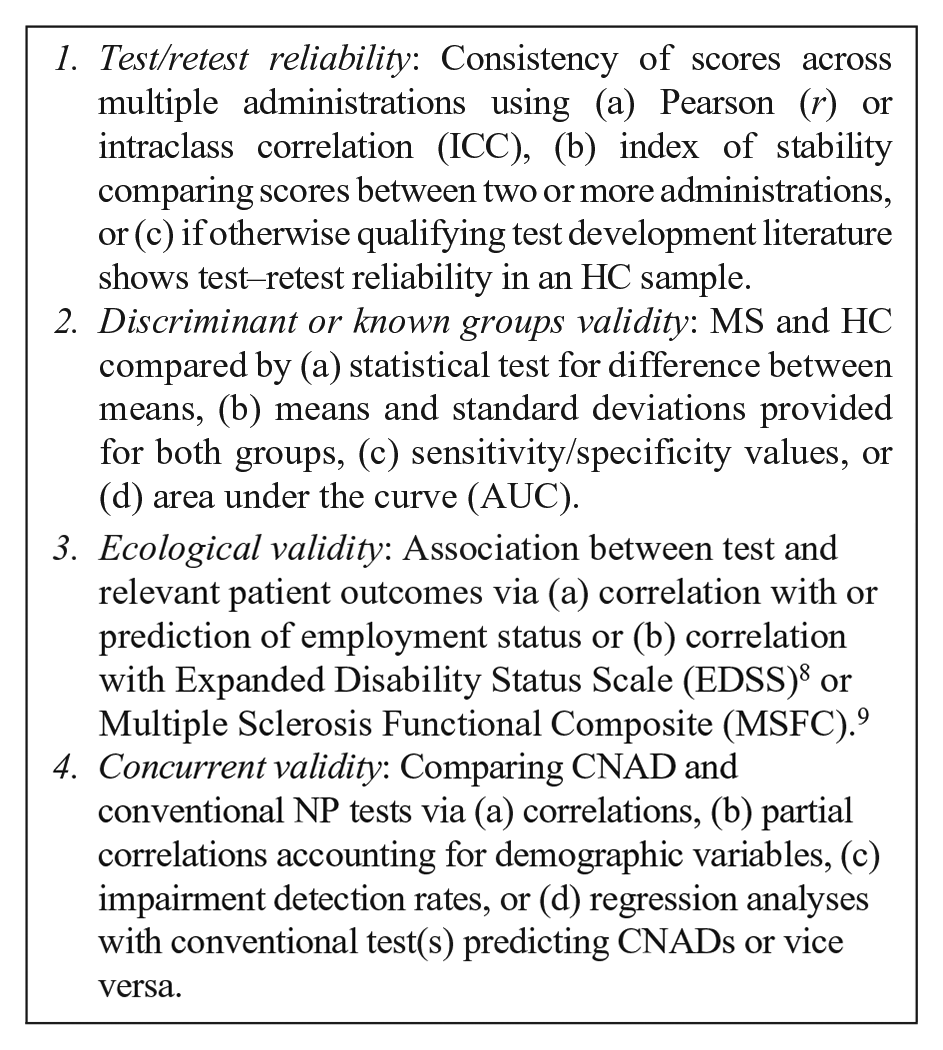

Inclusion criteria for the selected articles were as follows: (a) included an MS and healthy control (HC) sample, (b) included at least one measure with computerized stimuli and computerized response collection, (c) published in a peer-reviewed journal after 1990, and (d) included sufficient data to evaluate at minimum one of the following psychometric properties: test–retest reliability, ecological or predictive validity, discriminant/known groups validity, or concurrent validity.

The only exclusion criterion was a failure to meet one or more of the inclusion criteria. To obtain additional information regarding test characteristics (e.g. administration mode, hardware, etc.), information from CNAD websites was reviewed or provided by the test owner upon inquiry by the authors.
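As a rough illustration, the inclusion criteria can be expressed as a single screening predicate. This is a minimal sketch rather than the procedure the review team actually used: the `Article` record and `meets_inclusion` function are hypothetical, the property labels are taken from the review's stated objectives, and "after 1990" is interpreted here as a publication year greater than 1990.

```python
from dataclasses import dataclass, field

# Psychometric properties named in the review's objectives (labels are ours).
PROPERTIES = {
    "test-retest reliability",
    "ecological validity",
    "discriminant validity",
    "concurrent validity",
}


@dataclass
class Article:
    """Hypothetical screening record for one candidate publication."""
    has_ms_sample: bool
    has_hc_sample: bool
    computerized_stimuli: bool
    computerized_response: bool
    peer_reviewed: bool
    year: int
    properties_reported: set = field(default_factory=set)


def meets_inclusion(a: Article) -> bool:
    """Return True only if all four inclusion criteria are satisfied."""
    return (
        a.has_ms_sample and a.has_hc_sample                      # criterion (a)
        and a.computerized_stimuli and a.computerized_response   # criterion (b)
        and a.peer_reviewed and a.year > 1990                    # criterion (c), assumed strict
        and bool(a.properties_reported & PROPERTIES)             # criterion (d)
    )
```

Because the only exclusion criterion is failure of an inclusion criterion, no separate exclusion function is needed in this sketch.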

Effect size calculation

We calculated effect sizes (Cohen’s d) comparing MS patients and HCs using available data or by converting another reported metric (e.g. Hedges’ g). The average discriminant effect size for CNADs was calculated for each cognitive domain.
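For readers who wish to reproduce this kind of calculation, the following is a minimal sketch using the standard pooled-standard-deviation form of Cohen's d and the common small-sample correction factor that relates Hedges' g to d. The review does not state which formulas were applied, so both are assumptions, as are the function names and the sign convention (positive values mean HCs scored higher than MS patients).

```python
import math


def cohens_d(mean_hc, sd_hc, n_hc, mean_ms, sd_ms, n_ms):
    """Cohen's d from group summary statistics, using the pooled SD.

    Assumed convention: positive d means the HC group scored higher.
    For time-based outcomes, where lower is better, the sign flips.
    """
    pooled_var = ((n_hc - 1) * sd_hc**2 + (n_ms - 1) * sd_ms**2) / (n_hc + n_ms - 2)
    return (mean_hc - mean_ms) / math.sqrt(pooled_var)


def hedges_correction(n_hc, n_ms):
    """Common approximation of the small-sample correction J, where g = J * d."""
    df = n_hc + n_ms - 2
    return 1 - 3 / (4 * df - 1)


def d_from_hedges_g(g, n_hc, n_ms):
    """Convert a reported Hedges' g back to Cohen's d by undoing the correction."""
    return g / hedges_correction(n_hc, n_ms)


# Illustrative numbers only (not taken from any reviewed study):
# two groups of 50, means 60 vs 50, SD 10 in each group.
EXAMPLE_D = cohens_d(60, 10, 50, 50, 10, 50)
```

With samples of the size typical in this literature, the correction factor is close to 1, so d and g differ only slightly.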

Domain identification

Battery subtests and individual tests were classified as measuring specific cognitive domains based largely on author descriptions, and tests used in multiple studies with varying domain attribution (e.g. N-Back—both processing speed and working memory) were classified as measuring multiple domains. Simple reaction time tests were classified as measuring cognitive processing speed.
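The sketch below illustrates one way to combine multi-domain classification with per-domain averaging of effect sizes. The N-Back and simple reaction time domain assignments follow the text; the third test, all d values, and the function name are placeholders for illustration, and the use of the sample standard deviation is an assumption because the review does not specify it.

```python
from collections import defaultdict
from statistics import mean, stdev

# Domain assignments: the first two follow the text, the third is hypothetical.
DOMAINS = {
    "N-Back": ["processing speed", "working memory"],
    "Simple Reaction Time": ["processing speed"],
    "Hypothetical Span Task": ["working memory"],
}

# Placeholder effect sizes, NOT values from the reviewed studies.
EFFECTS = {
    "N-Back": 0.60,
    "Simple Reaction Time": 1.00,
    "Hypothetical Span Task": 0.80,
}


def domain_summary(domains, effects):
    """Return {domain: (mean d, sample SD of d)}.

    A test classified under several domains contributes to each of them.
    """
    by_domain = defaultdict(list)
    for test, d in effects.items():
        for domain in domains[test]:
            by_domain[domain].append(d)
    return {
        domain: (mean(values), stdev(values) if len(values) > 1 else 0.0)
        for domain, values in by_domain.items()
    }


SUMMARY = domain_summary(DOMAINS, EFFECTS)
```

One consequence of this design is that a multi-domain test such as the N-Back influences more than one domain average, which is worth keeping in mind when comparing domains.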

Results

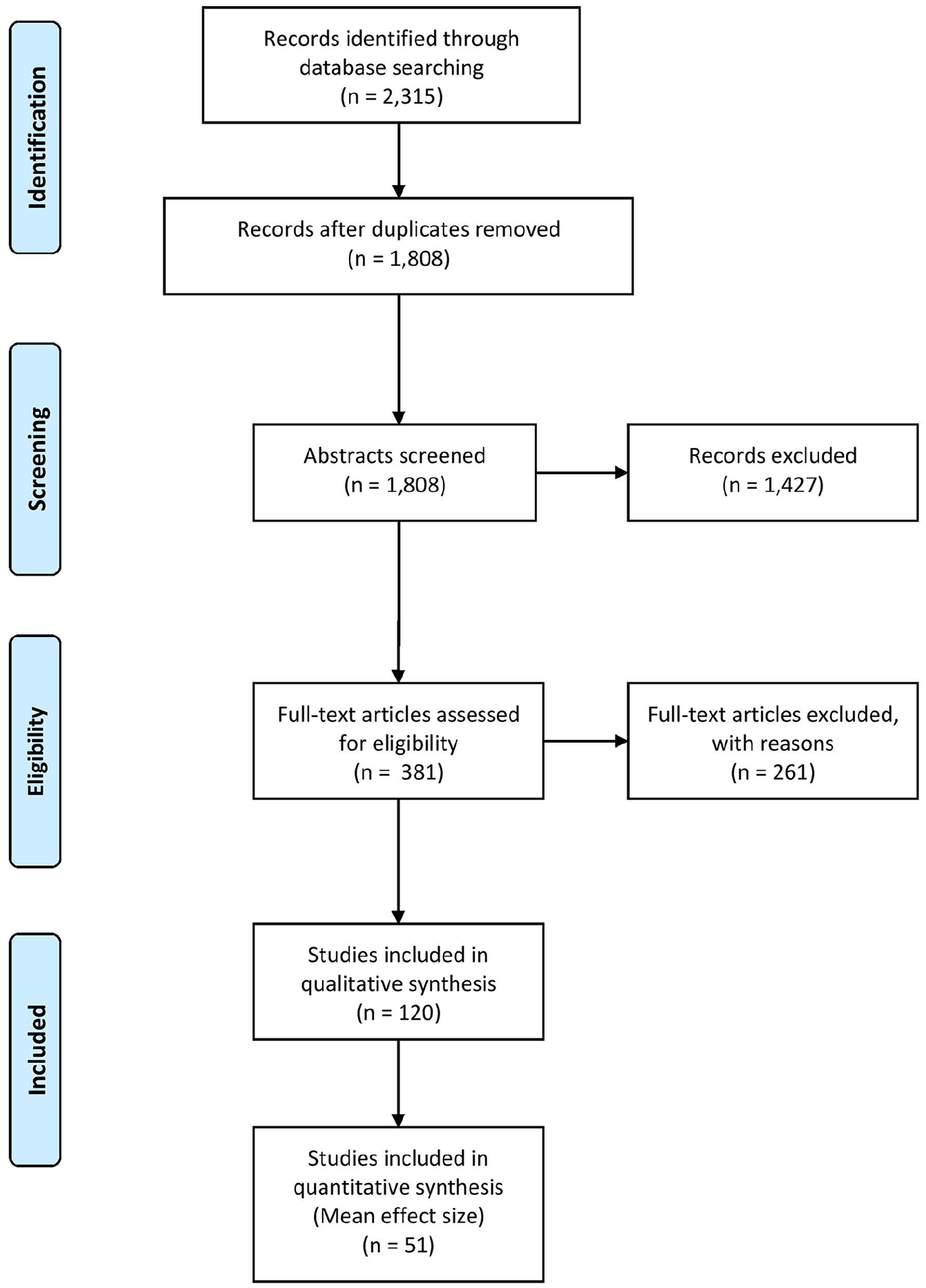

The PRISMA search found 120 articles (Figure 1), covering 11 batteries (i.e. CNADs assessing multiple distinct cognitive functions) and 33 individual tests. CNADs with multiple indices within a single domain were classified as individual tests. For example, the Test of Attentional Performance (TAP) has multiple subtests, but each measures attention, so it was not classified as a “battery.” Certain subtests (e.g. several from the CANTAB, ANAM, and TAP) were not included in the review, as they were never used in a published study with an MS sample.
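The battery versus individual test rule described above can be sketched as a simple function of the domains covered by a CNAD's subtests. The function name and the subtest labels are hypothetical placeholders; only the rule itself (more than one distinct cognitive domain makes a battery) comes from the text.

```python
def classify_cnad(subtest_domains):
    """Classify a CNAD from a mapping of subtest name -> cognitive domain.

    More than one distinct domain -> "battery"; otherwise "individual test".
    """
    distinct_domains = set(subtest_domains.values())
    return "battery" if len(distinct_domains) > 1 else "individual test"


# TAP-like case: several subtests, all measuring attention (labels are placeholders).
tap_like = {"subtest A": "attention", "subtest B": "attention"}

# Battery-like case: subtests spanning distinct domains (labels are placeholders).
battery_like = {"subtest A": "attention", "subtest B": "episodic memory"}

print(classify_cnad(tap_like))      # individual test
print(classify_cnad(battery_like))  # battery
```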

Figure 1. PRISMA systematic review flow diagram.

CNAD administration characteristics

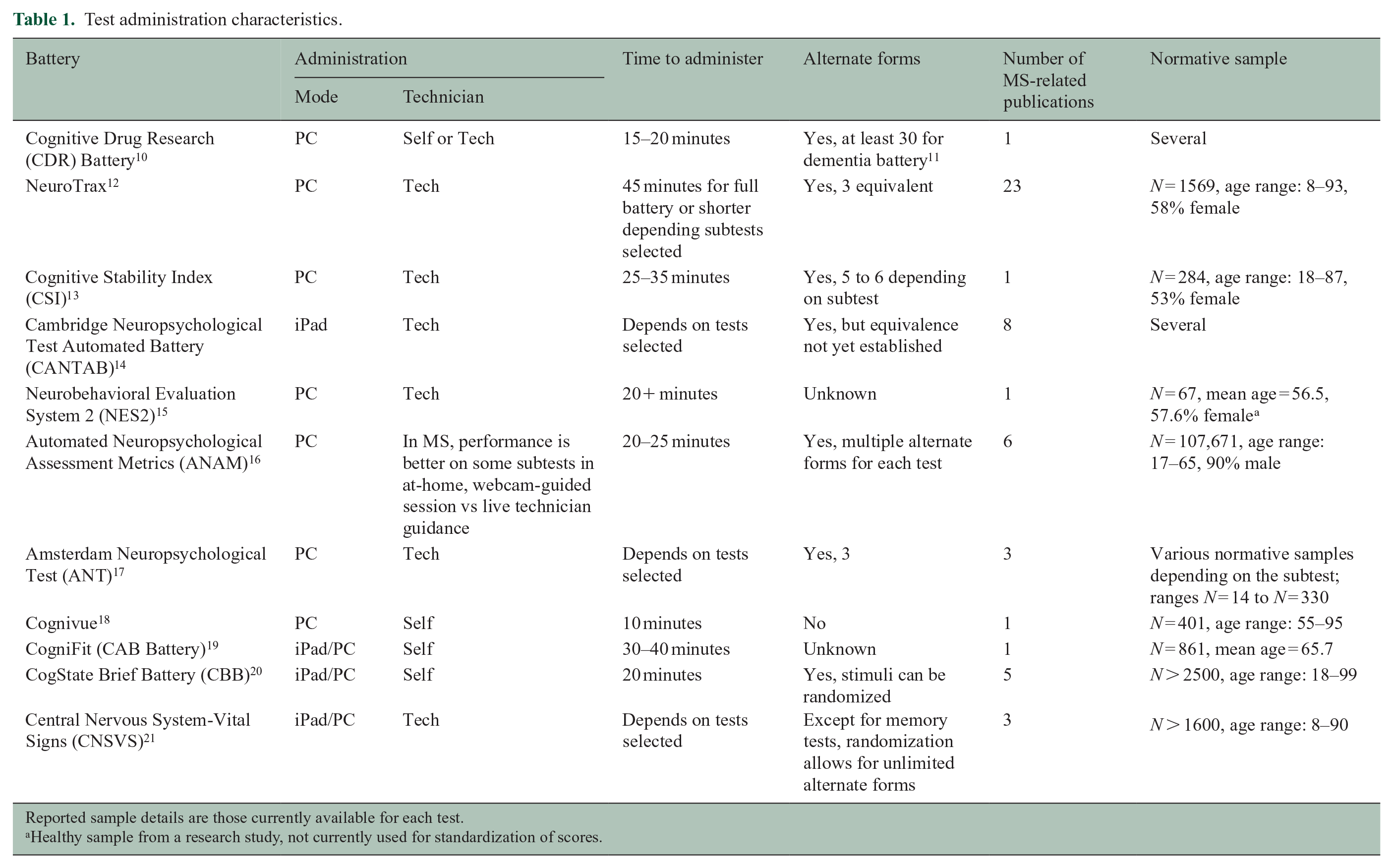

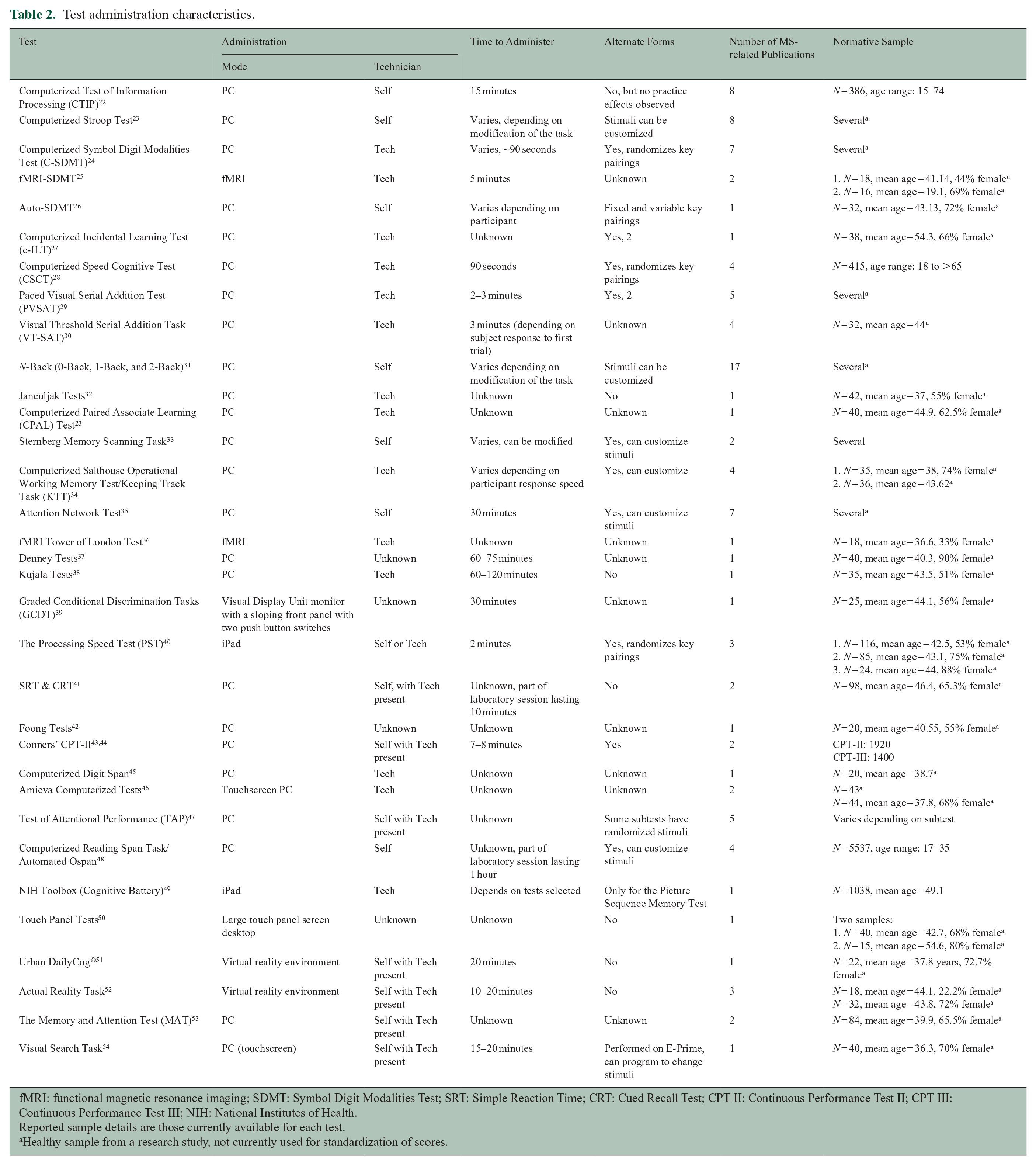

Tables 1 and 2 show that most CNADs are personal computer (PC) based. Several are described by their authors as compatible with either PC or iPad®/tablet administration, but only the CBB has empirically established the equivalence of PC and iPad administrations. 55 Most batteries were presented as requiring a technician for administration, as in NeuroTrax, CSI, NES2, CANTAB, ANAM, ANT, and CNSVS. The influence of technician supervision was investigated in only a few studies, with results supporting the idea that human-supervised administration yields data equivalent to self-administration,40,56,57 although this conclusion is preliminary and likely to vary by test. Of the 11 CNAD batteries, 9 included a large normative database from which scores could be standardized. Few individual tests not part of a battery had large normative databases for standardized scores, but most were administered in at least one study that included HCs.

Table 1. Test administration characteristics.

Reported sample details are those currently available for each test.

Healthy sample from a research study, not currently used for standardization of scores.

Table 2. Test administration characteristics.

fMRI: functional magnetic resonance imaging; SDMT: Symbol Digit Modalities Test; SRT: Simple Reaction Time; CRT: Cued Recall Test; CPT II: Continuous Performance Test II; CPT III: Continuous Performance Test III; NIH: National Institutes of Health.

Psychometric findings by cognitive domain

Table 3 lists CNADs by functional domain (standalone or part of a larger battery) and shows results for the four psychometric properties. Supplementary Tables A and B provide more details regarding samples, effect sizes, and validity coefficients from each study.

Table 3. Psychometric characteristics of computerized tests.

SSRT: Semantic Search Reaction Time; WMS: Wechsler Memory Scales; WAIS: Wechsler Adult Intelligence Scale; CVLT: California Verbal Learning Test; PAL: Paired Associate Learning; WAIS III: Wechsler Adult Intelligence Scale–Third Edition; SRT: Simple Reaction Time; PALT: Paired Associate Learning Test; CPALT: Computerized Paired Associate Learning Test; RAVLT: Rey’s Auditory Verbal Learning Test; BTA: Brief Test of Attention; TMT: Trail Making Test; WCST: Wisconsin Card Sorting Test; JLO: Judgment of Line Orientation; DSDT: Driving Simulator Dual Task; COWAT: Controlled Oral Word Association Test; NAB: Neuropsychological Assessment Battery; DSST: Digit Symbol Substitution Test; CRT: Complex Reaction Time; c-ILT: Computerized Incidental Learning Test; AOspan: Automated Operation Span Task; DKEFS CW: Delis Kaplan Executive Function System Color Word Interference Test; C-SDMT: Computerized Symbol Digit Modalities Test; CSCT: Computerized Speed Cognitive Test; IPS: Information Processing Speed; PASAT: Paced Auditory Serial Addition Task; CDR: Cognitive Drug Research; PST: Processing Speed Test; CNSVS: Central Nervous System-Vital Signs; CTIP: Computerized Test of Information Processing; CBB: CogState Brief Battery; ANAM: Automated Neuropsychological Assessment Metrics; NES: Neurobehavioral Evaluation System; GCDT: Graded Conditional Discrimination Tasks; CANTAB: Cambridge Neuropsychological Test Automated Battery; CSI: Cognitive Stability Index; PVSAT: Paced Visual Serial Addition Test; VT-SAT: Visual Threshold Serial Addition Task; MAT: The Memory and Attention Test; BVMTR: Brief Visuospatial Memory Test-Revised; CPT: Continuous Performance Test; TAP: Test of Attentional Performance; NIH: National Institutes of Health; n.s.: non-significant; Mixed: mixed results from one or more studies showing both significance and non-significance or both poor and adequate reliability; Poor: test–retest reliability below r = 0.60.

The symbol ‘✓’ indicates significant results for the respective psychometric measure (p < 0.05). Blank boxes indicate that the respective psychometric measure is missing or was not investigated.

Concurrent validity was with a comparator test of the same domain/cognitive function.
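The coding scheme described in the Table 3 notes can be approximated with two small functions. This is a sketch under stated assumptions rather than the authors' actual coding procedure: the function names are hypothetical, and treating a reliability coefficient at or above 0.60 as deserving a ‘✓’ is our reading of the notes. The example coefficients (0.50 and 0.82 for CDR Quality of Episodic Memory, 0.75 for PVSAT-2) are those reported in this review.

```python
def code_reliability(coefficients, threshold=0.60):
    """Summarize the test-retest coefficients reported for one test.

    "" (blank) if none were reported, "Poor" if all fall below the threshold,
    "Mixed" if only some do, and "✓" otherwise (the last is an assumption).
    """
    if not coefficients:
        return ""
    below = [r < threshold for r in coefficients]
    if all(below):
        return "Poor"
    if any(below):
        return "Mixed"
    return "✓"


def code_validity(p_values, alpha=0.05):
    """Summarize significance of the validity results reported for one test."""
    if not p_values:
        return ""
    significant = [p < alpha for p in p_values]
    if all(significant):
        return "✓"
    if any(significant):
        return "Mixed"
    return "n.s."


print(code_reliability([0.50, 0.82]))  # Mixed
print(code_reliability([0.75]))        # ✓
print(code_validity([0.046]))          # ✓
```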

Cognitive processing speed

Of the 44 processing speed tests reviewed, all four psychometric properties were examined for the Computerized Symbol Digit Modalities Test (C-SDMT), 24 Computerized Speed Cognitive Test (CSCT), 28 and NeuroTrax Information Processing Speed (IPS) test. 12

For test–retest reliability, the C-SDMT demonstrated the highest test–retest coefficient among processing speed tests in MS patients (ICC = 0.97) over a mean interval of 103 days. 24 By comparison, the test–retest reliability for the Processing Speed Test (PST) was 0.88 in MS patients. 40 Almost every cognitive processing speed test evaluated in an MS sample showed acceptable reliability (see Table 3 and also Supplementary Tables A and B).

For discriminant validity (MS vs HCs), the average effect size for processing speed tests was d = 1.03 (SD = 0.42). The C-SDMT showed medium (d = 0.76) 24 to large (d = 1.48) 58 effect sizes comparable to the conventional SDMT. 59 The CSCT had similar effect sizes comparing HCs with primary progressive MS (d = 1.8, p < 0.001) and relapsing remitting MS (d = 0.80, p < 0.01). 60 Other tests of cognitive processing speed discriminated equally well, including the Computerized Test of Information Processing (CTIP; mean d = 0.82, SD = 0.37),61–65 the PST (d = 0.75, p < 0.001), 40 and to a lesser extent the Auto-SDMT (d = 0.68, p < 0.01) 26 and CNSVS Processing Speed subtest (d = 0.52, p = 0.046). 66

For ecological validity, the Expanded Disability Status Scale (EDSS) correlated with C-SDMT (r = 0.35), 24 NeuroTrax IPS (r = 0.20), 67 CNSVS Processing Speed (r = 0.31), 66 CTIP (range: r = 0.39–0.52), 62 and CBB Detection (r = 0.46). 68 EDSS did not correlate with CANTAB Reaction Time 69 or Auto-SDMT. 26 As with the conventional SDMT,70,71 impairment on the CSCT significantly predicted unemployment among MS patients. 28 No other CNAD cognitive processing speed test was studied in this manner.

For concurrent validity, computerized tests based on SDMT correlated with the conventional version: CSCT (r = 0.88), 28 C-SDMT (r = 0.78), 72 Auto-SDMT (r = 0.81), 26 and PST (r = 0.80 and r = 0.75).40,73 SDMT also correlated with CTIP (range: r = 0.29–0.40), 62 CBB Detection (r = 0.40), 74 and CSI Processing Speed Composite (r = 0.58), 13 while the following processing speed tests each correlated significantly with their non-SDMT comparators: CDR Speed of Memory, 10 CSI Number Sequencing, 75 CNSVS Processing Speed, 21 and NeuroTrax IPS. 12

Working memory

Of the 22 working memory CNAD tests, we found published results on three of the psychometric properties for CANTAB Spatial Working Memory and Paced Visual Serial Addition Test 2 (PVSAT-2).

For test–retest reliability, the PVSAT-2 (ICC = 0.75) 41 had the best consistency in MS patients over a mean of 71.7 days.

Overall, working memory CNADs had moderate discriminant validity with an average effect size of d = 0.70 (SD = 0.30). The PVSAT showed smaller effects (range: d = 0.27–0.61)29,41 depending on the rate of stimulus presentation. Except for CANTAB Spatial Span (mean d = 1.11) 76 and Automated Ospan Test (d = 0.90), 77 other CNAD working memory tests generally demonstrated lower effect sizes. For example, the effects derived from the CDR Quality of Working Memory (d = 0.20) 10 and some of the Memory and Attention (MAT) Working Memory subtests (range: d = 0.05–0.32) 78 were not statistically significant.

For ecological validity, EDSS was significantly correlated with CDR Quality of Working Memory (r = 0.48), 10 CANTAB Spatial Working Memory (r = 0.43), 69 CBB One-Back (r = 0.44), 68 and Sternberg Memory Scanning Task. 32 However, the Sternberg task did not correlate significantly with EDSS in two other studies.79,80 EDSS correlations with PVSAT 29 and the Computerized Salthouse Operational Working Memory Test were also non-significant. 79 Ecological validity was unexplored for the remaining working memory tests (listed in Table 3).

With regard to construct validity, validity coefficients with either Paced Auditory Serial Addition Task (PASAT) or Digit Span were as follows: Computerized Salthouse Operational Working Memory Test (r = 0.51, r = 0.57), 79 CBB One-Back (r = 0.41, r = 0.50), 68 PVSAT (r = 0.74), 29 and the Two-Back level (r = 0.59). 81

Episodic memory

Of the 21 episodic memory tests, all four psychometric properties were established for NeuroTrax Memory and CNSVS Composite Memory.

CNSVS Verbal and Visual Memory demonstrated mixed results for test–retest reliability in HCs. 82 ANAM Coding Substitution Delayed Recall had very high consistency (ICC = 0.88) over 30 days. 83 The NeuroTrax Memory Composite 84 also showed very good reliability with an r value of 0.84. There was a wide range of reliability coefficients for the CDR Quality of Episodic Memory in MS patients, from r = 0.50 to r = 0.82, depending on the test–retest interval. 10

For discriminant/known groups validity, the mean effect size for episodic memory tests was d = 0.70 (SD = 0.30), and the largest effect size noted was for the CNSVS Composite Memory (d = 1.34). 85

For ecological validity, EDSS correlated modestly but significantly with several CNAD memory indices, including CNSVS Composite Memory (r = 0.29), 86 CBB Continuous Paired Associate Learning (CPAL) Task (r = 0.32), 68 Touch Panel Tests Flipping Cards Game (r = 0.45), 50 CDR Quality of Episodic Memory (r = 0.33), 10 CANTAB Delayed Matching to Sample (r = 0.40), 69 and MAT Episodic Short-Term Memory (r = 0.21). 78 The CANTAB Paired Associates Learning Test showed non-significant correlations with EDSS69,87 and all other episodic memory tests were uninvestigated for this standard.

Four episodic memory tests showed good or excellent concurrent validity: CBB One Card Learning correlated with the Brief Visuospatial Memory Test—Revised (BVMTR) 88 (r = 0.83), 89 CNSVS Verbal Memory correlated with Rey Auditory Verbal Learning Test 90 (r = 0.54, r = 0.52), 21 NeuroTrax Memory Composite correlated with the Selective Reminding Test 91 (range: r = 0.61–0.65), 12 and CSI Memory Cabinet 75 correlated with Family Pictures subtest from the Wechsler Memory Scales 92 (r = 0.65, r = 0.61). 75

Attention

Of the 23 attention tests reviewed, all four psychometric properties were explored for CDR Power and Continuity of Attention and NeuroTrax Attention.

The test–retest reliability was examined in most CNAD attention tests, but for the most part only in HCs, seldom in MS. The CDR Power of Attention subtest had the strongest test–retest consistency in an MS sample (range: r = 0.86–0.94). 10

For discriminating MS patients and HCs, the average effect size for attention was d = 0.81 (SD = 0.27) with the CANTAB Rapid Visual Processing showing the largest effect size (d = 1.12). 69

Ecological validity data were scant, as correlation with EDSS or other validators was tested in only a few CNADs. For CDR, Power of Attention correlated with EDSS at r = 0.62 and the correlation with Continuity of Attention was r = 0.43. 10 Other EDSS correlations were as follows: NeuroTrax Attention r = 0.26 67 and Attention Network Test Overall score r = 0.48. 93 EDSS did not correlate with CANTAB Rapid Visual Processing, 69 Attention Network Test-Alerting, 94 or the Amieva Go-No-Go Test. 95

Concurrent validity data were similarly sparse. Broadening the construct to include tests such as PASAT, Digit Span, or Trail Making Test, 96 we find that NeuroTrax Attention, 12 CBB Identification,68,89 CSI Attention Composite, 13 and ANAM Running Memory CPT (Continuous Performance Test) throughput 97 correlate with each of their comparators.

Executive function

A total of 14 executive function CNAD tests were reviewed, with NeuroTrax Executive Function being the only one to be evaluated on all four psychometric properties.

NeuroTrax Executive Function showed good test–retest reliability over 3 weeks to several months (r = 0.80). 84 The average discriminant effect size of executive function measures was d = 0.99 (SD = 0.40) with CNSVS cognitive flexibility showing the largest (d = 1.67). 85

Ecological validity was demonstrated by significant EDSS correlations with NeuroTrax Executive Function (r = 0.28), 67 the Computerized Stroop Test (range: r = 0.32–0.40), 98 and CBB Groton Maze Learning (r = 0.32). 68 EDSS did not correlate with the Amieva Computerized Stroop, 95 while the remaining executive function measures did not examine ecological validity. The NeuroTrax Global Cognitive Score and Executive Function subtest were studied in relation to employment status, both of which discriminated working and non-working MS patients (p < 0.05). 99

A few computerized executive function measures showed moderate associations with similar conventional tests. For instance, the CBB Groton Maze Learning correlated with a maze completion task (r = 0.56) 100 and the CNSVS measures showed moderate associations with both the Stroop Test and a test of mental set shifting. 21

Other domains

Briefly, there were several other tests which do not clearly fall within one of the above cognitive domains, most discriminating MS from HCs, but with limited reporting of reliability or ecological validity (Table 3 and Supplementary Tables A and B).

Discussion

In this review, we set a high bar for designating CNADs as ready for use in MS. We apply the usual standards of psychometric reliability and validity59,101 and expect that CNAD authors and vendors will publish research focused on MS samples. We recognize that this may present an economic hardship as the CNAD market is highly competitive and vendors are marketing to the wider neurology community. Nevertheless, we maintain that these psychometric standards are important for optimal quality of care, and that research findings should be publicly available, as in conventional NP validation.102–104

We find that tests from the CDR, the CBB, NeuroTrax, and CNSVS show acceptable psychometrics. The CSCT, PST, and C-SDMT may be the best single tests for relatively quick screens in busy clinics unable to provide a full NP assessment or carry out longer screening procedures. These tests fulfill the four psychometric criteria (i.e. test–retest reliability, discriminant/known groups validity, ecological validity, and concurrent validity) and are sensitive to MS-related impairment. While the PST lacks a published normative database, we know by personal communication that these data will soon be submitted for peer review. The CSCT and the C-SDMT were used in large healthy samples for comparison. The tests could be made more clinically relevant if age-, sex-, and/or education-specific norms are published to facilitate interpretation of individual results.

There are also notable differences in administration and outcome measures between these versions of the SDMT. The PST uses a manual response on a touchscreen keyboard, while the CSCT, C-SDMT, and traditional SDMT use oral responses recorded by an examiner. Furthermore, the time limits for each of the tests are not identical, as the PST has a 120-second limit, while the CSCT and traditional SDMT both last 90 seconds. The C-SDMT has no time limit as its primary outcome is completion time. These differences prevent valid comparison of raw scores.

Our committee debated the use of the term computerized neuropsychological assessment device (CNAD) as published by the American Academy of Clinical Neuropsychology. 4 Likewise, the term paper-and-pencil testing fails to accurately characterize conventional tests. It makes some sense to draw a distinction between tests that present stimuli on a computer screen as opposed to a person speaking to a patient (as in reading a word list or asking a patient to orally list words conforming with a category) or showing them a visual stimulus (as in presenting the Rey figure or interlocking pentagons with the instruction to copy it). Yet there are many shades of gray. The PASAT stimuli are often presented via audio files played on a computer device. Shall we refer to the PASAT as a computerized test? Clearly, the terms computerized and automated refer to a spectrum of technology, ever growing in our effort to improve test accuracy and ease of access.

Relatedly, very few tests have published data on the importance of technician oversight. Supervision could influence performance on several levels. First, it may improve patient motivation to perform well. Second, a technician could provide guidance or clarify instructions for patients with relatively little computer experience or having difficulty understanding a given task. Third, a technician may assist with any technical issues that may arise during test-taking, including malfunctioning hardware or confusion over interacting with a software interface. Recent findings on the CBB and PST suggest that a technician is not necessary in an MS sample.40,56 However, future research considering other CNADs, particularly those containing more complex tasks, may yield different results, especially for at-home self-administrations. This latter application would necessitate a basic capacity to manage digital platforms such as iPads and the like, as the home-use technology continues to evolve.

For each battery and test, a distinction should be made between the frequency of use in MS research/clinical trials, the amount of available psychometric information, and the quality of available psychometric information. As shown in Tables 1 and 2, some CNADs were included in numerous publications with MS patients, but as evident in Supplementary Tables A and B, few of these publications aimed specifically to validate the test. In addition, while some tests may have data for reliability and/or validity, the reported coefficients and effect sizes may be low or non-significant. In selecting a CNAD for routine NP assessment or clinical trials in MS, the degree to which a test meets these psychometric categories must be considered.

That said, we acknowledge that some psychometric standards are more important than others. EDSS is not a crucial ecological validity standard as it is notoriously insensitive to CI and physical and mental MS symptoms are generally weakly correlated. Concurrent validity, a process of construct validation, is not as relevant as predicting quality of life and some CNADs assess novel domains/functions for which there are no existing conventional tests. As is evident in Table 3, several CNAD outcomes lack a good comparator and are simply missed by conventional tests. For example, conventional tests seldom measure reaction time, and when they do it is not to the millisecond per stimulus. Another example is the Information Sampling Task (IST) from CANTAB that requires visual/spatial processing and also decision-making based on perceived probabilities of gain. While not yet validated against an established measure, it may prove valuable within a very narrow sub-area of executive function. Furthermore, construct validation depends on an established metric for the cognitive domain studied and the issue has not been fully examined even for conventional NP tests in MS.

Yet novel CNADs should still possess adequate test–retest reliability and sensitivity before routine clinical application or inclusion in a clinical trial. An interesting issue for sensitivity is the technological limitation preventing CNAD memory tests from evaluating recall, as opposed to recognition memory, the former notably more sensitive in MS.105,106 Someday CNADs may employ voice recognition or other methods to record and score recall responses, but in this review all of the memory tests use a recognition format. On the other hand, CNADs offer metrics absent from person-administered testing. Many CNADs are automated, which allows for easy administration and often forgoes the need for trained professionals to give instructions, present stimuli, record responses, and score results. In this way, automation can avoid possible bias or error introduced by a psychometrician. Stimuli can also be more readily changed or randomized in CNADs, providing many alternate test forms that may reduce practice effects with repeat testing. Similarly, some CNADs are less subject to ceiling and floor effects because they have the ability to vary the difficulty or presentation of items based on examinee performance.

Computerized tests might particularly benefit the detection of MS-related CI through their improved sensitivity in measuring reaction time and response speed. Declines in cognitive processing speed are the hallmark CI seen in MS. 107 Computerized tests may facilitate the identification of prodromal deficits by capturing minute differences in response time not identified by traditional tests. In contrast to a few raw score indices derived from a conventional SDMT protocol, most CNADs also generate measures of change in accuracy over the course of a task, change in reaction time, and measures of vigilance decrements. In sum, we opine that CNADs are quite good at measuring cognitive processing speed in MS, and their sensitivity and validity in other domains merit further investigation.

Finally, the practicality of CNADs and their cost-effectiveness are frequently cited as reasons to utilize this approach. All cognitive performance tests, be they person- or computer-administered, require certain physical or sensory capacities of patients (e.g. adequate manual dexterity and visual acuity). Conventional, person-administered tests require skilled, trained examiners, are time-consuming, and may be expensive in some cases. Setting aside the potential advantages of a technician (ensuring motivation to perform well, understanding of instructions), we would like to point out that the SDMT costs roughly US$2 per administration and in its oral-response format requires 5 minutes or less. While a human examiner is needed, there is no cost for computer devices, high-speed internet, or paying a vendor for the service (in our experience vendors typically charge US$20 per test). It is interesting that, in a recent investigation of the conventional and computer forms of SDMT,26 patients reported a preference for the self-administered computer version. If replicated, this perspective may add value to CNADs over conventional tests, much as the PASAT was largely abandoned because patients found it stressful.59,101 The newly published CMS (Centers for Medicare & Medicaid Services) guidelines for reimbursement add another layer to the discussion. The new CPT code for automated computerized testing reimburses less than US$5 in the United States. Reimbursement rises when a professional becomes involved in the testing process. Thus, the relative value of completely self-administered CNADs is not yet fully recognized by payers, at least in the United States.

Conclusion

Several computerized tests of cognition are available and applied in MS research. As they currently stand, most CNAD batteries and individual tests do not yet demonstrate adequate reliability and validity to supplant well-established conventional NP procedures such as the MS Cognitive Endpoints battery (MS-COG), BICAMS (Brief International Cognitive Assessment for MS), or MACFIMS (Minimal Assessment of Cognitive Function in MS). However, some tests (e.g. certain subtests of the CDR, CBB, NeuroTrax, CNSVS, C-SDMT, PST, and CSCT) possess psychometric qualities that approach or may even exceed those of conventional, person-administered tests and can serve as useful screening tools or supplements to full assessments. Further investigations of these CNADs, especially as they relate to ecological measures and patient-relevant outcomes, are needed before widespread implementation with an MS population.

Supplemental Material

MSJ879094_supplemental_table_a – Supplemental material for Computerized neuropsychological assessment devices in multiple sclerosis: A systematic review

Supplemental material, MSJ879094_supplemental_table_a for Computerized neuropsychological assessment devices in multiple sclerosis: A systematic review by Curtis M Wojcik, Meghan Beier, Kathleen Costello, John DeLuca, Anthony Feinstein, Yael Goverover, Mark Gudesblatt, Michael Jaworski, Rosalind Kalb, Lori Kostich, Nicholas G LaRocca, Jonathan D Rodgers and Ralph HB Benedict in Multiple Sclerosis Journal

MSJ879094_supplemental_table_b – Supplemental material for Computerized neuropsychological assessment devices in multiple sclerosis: A systematic review

Supplemental material, MSJ879094_supplemental_table_b for Computerized neuropsychological assessment devices in multiple sclerosis: A systematic review by Curtis M Wojcik, Meghan Beier, Kathleen Costello, John DeLuca, Anthony Feinstein, Yael Goverover, Mark Gudesblatt, Michael Jaworski, Rosalind Kalb, Lori Kostich, Nicholas G LaRocca, Jonathan D Rodgers and Ralph HB Benedict in Multiple Sclerosis Journal

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
