Abstract

Great technological leaps in computational capacity and machine autonomy have raised the business community’s expectations of simulators. Joining the conversation on simulators’ ability to reproduce the reality of actual, possible, past, and future worlds, this paper draws on the literature in analytical philosophy on counterfactuals. It identifies three functions of simulations (training, advising, and forecasting) and examines their ontological and epistemological assumptions to show how these assumptions limit the quest for realism in higher-performance simulators in each of the three areas. The argument is meant not only to help adjust scholars’ and practitioners’ expectations and uses of simulations but also to call for a more in-depth and critical study of the social implications of relying on them.

Introduction

Since the early days of computing, there have been high expectations that technological developments would improve the performance of business simulators (Meinhart, 1966; Orr, 1963), with simulation broadly understood as the imitation of a process or system over time by another 1 (Banks et al., 2010: 21; Guala, 2002; Hartmann, 1996: 83). Since the 1960s, decision support systems (Arnott and Pervan, 2005) have worked ‘on the compression of time. Years of operations can be simulated in minutes or seconds of computer time’ (Liang et al., 2008: 234). The ongoing surges in computational capacity (Beer, 2017), both in the volume and speed of data management through quantum computers (Gibney, 2019) and in the creation of autonomous AI-endowed machines (Akerkar, 2019: 70; Maity, 2019: 654), nourish the hope that simulations can be made more realistic. Matching this trend, investment in robotics is rapidly multiplying the supply of simulator applications across business areas. This enthusiasm is usually greater when simulators are designed to model natural objects rather than social phenomena, such as business practices and organizations (Miller, 2015). Critical realist scholars, in particular, criticize the lack of realism of computer models meant to reproduce individual and collective behavior (Al-Amoudi and Morgan, 2018; Fleetwood, 2005), a lack that fatally biases and limits the knowledge we can obtain from them (Mingers, 2004). Moreover, it has been pointed out that greater realism in simulations can lead to harmful consequences (Bailey et al., 2012) and does not necessarily improve their function. Bonini’s (1963) paradox is taken to mean that ‘as a model grows more realistic, it also becomes just as difficult to understand as the real-world processes it represents’ (Starbuck, 2004: 1237).

In joining the conversations on what business practitioners should expect of new technologies for simulations, this paper sets aside the ongoing scientific debates about the capacity of new types of computers to reproduce reality effectively (Zhou et al., 2020) and the controversy over whether simulators should aim to reproduce reality at all or should rather focus on designing meaningful abstractions (Törnberg, 2019). Instead of taking a side in these specific debates, it opens a new angle of discussion by inquiring into the ontological status of the simulated worlds, with the aim of highlighting the conceptual challenges that simulators (no matter how sophisticated) have to confront. To identify these challenges, the paper draws on the literature in analytical philosophy on counterfactuals (Chisholm, 1946; Goodman, 1947): conditionals containing an if-clause that is contrary to fact. Despite numerous references to counterfactuals in organization studies, and despite various philosophical inquiries into the practice of simulations, ‘counterfactuals’ and ‘simulations’ are not yet connected, and this paper proposes to fill this gap. The longstanding debates over the ontological status of possible worlds are expected to offer an advantageous perspective from which to address the critical issues in modeling the reality of simulated worlds.

Although simulations encompass a wide variety of techniques (Thavikulwat, 2004) and applications (Greco et al., 2013), this discussion about the realism of simulated worlds will remain confined to managerial practice. To answer the call of Raffnsøe et al. (2014: 291) to explore organizational practice further as the hinge of ‘the established social order of the factual’ and ‘the utopian state of the counterfactual, an imagined and anticipated contrast’, the focus will be on the use of simulations as a managerial tool (Leonardi, 2012). Thus, this paper sets aside non-managerial applications of simulations and simulations designed for theoretical and methodological purposes (Harrison et al., 2007), for instance, to test a research hypothesis (Dooley, 2017). However, compared with existing studies on the practice of simulation in business (Jahangirian et al., 2010), this argument proposes a broader conceptual interpretation from the standpoint of counterfactual analysis.

Beyond its relevance to theoretical conversations on simulations’ realism, the argument developed in this paper is also meant to contribute to a growing field of organization studies addressing the managerial use of digital tools (Nell et al., 2020) and, more specifically, of simulations (Bailey et al., 2012). More generally, this analysis substantiates a growing body of literature that critically addresses the faith that managers tend to place in technology (Bader and Kaiser, 2019; Lanzara, 2016; Manley and Williams, 2019; Suchman, 2005). While this article is not itself written as a piece of critical organizational scholarship, it nonetheless provides philosophical clarification for critical studies of management, with the aim of tempering today’s high expectations of computing technologies.

The argument unfolds as follows. The first section connects simulations and counterfactuals and distinguishes three critical functions of simulations in managerial practice – training, advising, and forecasting. These are discussed in turn in the following three sections, with the aim of identifying their ontological and epistemological assumptions and pointing out their limits. The conclusion indicates directions for future research.

Connecting simulations and counterfactuals

The use of counterfactual conditionals has a long history (Copeland, 2002), yet it was only during the 20th century that possible worlds received serious attention in philosophy (Baudrillard, 1983, 1994), and especially in analytical philosophy and logic, where they generated significant debate (Lewis, 2013). More recently, these philosophical controversies have been transposed to various areas of social sciences – mostly history (Ferguson, 2008; Murphy, 1969), but also economics (Aligica and Evans, 2009; Hülsmann, 2001, 2003), political science (Fearon, 1991; Tetlock and Belkin, 1996), sociology (Griffin, 1993), psychology (Mandel et al., 2007), and law (Strassfeld, 1991).

Even though references to counterfactuals are not always explicit in organization studies, they are nonetheless used to understand and explain specific features of managerial practice. Most counterfactual analysis concerns causal relations between events in the history of business (Booth, 2003; Henik and Tetlock, 2007; Holt, 2016; Maielli, 2007; Scranton and Fridenson, 2013): ‘what would have happened had the top managers or other actors not acted the way they did’ (Vaara and Lamberg, 2015: 642). Occasionally, management and organization scholars refer to counterfactuals to assess organization studies (Cornelissen, 2019), organizational change (Werner and Cornelissen, 2014), diversity of business strategies (Durand and Vaara, 2009; Grandy and Mills, 2004) and opportunities (Denrell et al., 2019), entrepreneurial mindsets (Baron, 2000; Gaglio, 2004) and imagination (Cornelissen, 2013; Cornelissen and Clarke, 2010), career aspirations (Obodaru, 2012), and regrets (Verbruggen and De Vos, 2019).

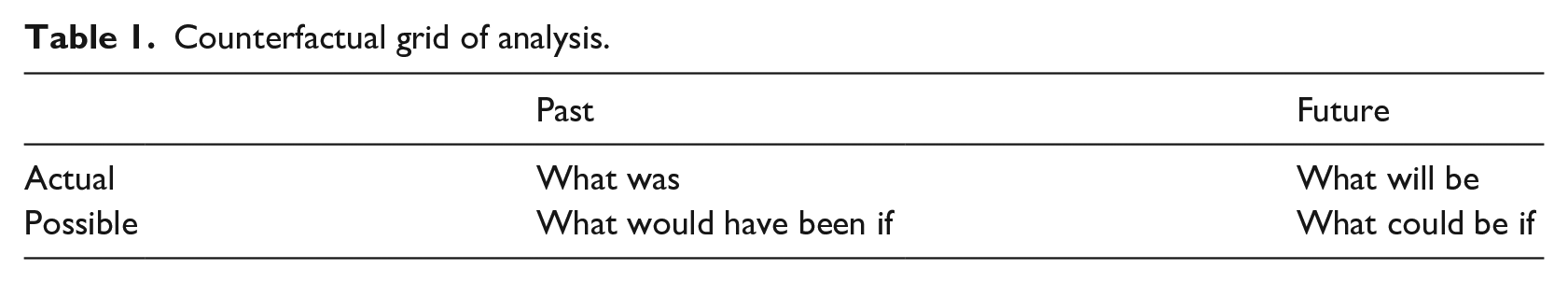

The question ‘but what if we’re wrong?’ (Klosterman, 2017) relentlessly torments anyone and, a fortiori, a manager whose decisions typically involve significant resources and potentially affect numerous stakeholders. A manager can assess alternative courses of action in a variety of ways: using thought experiments (Rescher, 2018; Tittle, 2016), contrast explanation (Van Fraassen, 1980), imagination (March, 1995; Werhane, 1999), fiction (Holt and Zundel, 2018; Tirado et al., 1999), or empathy (Mencl and May, 2009; Moulin de Souza and Parker, 2020; Waytz, 2016). Compared with these somewhat subjective exercises of thinking about possible worlds, simulations are developed with the promise (encouraged by the advent of new technologies) of offering a more objective representation of the things we cannot see in our present world. By applying a counterfactual grid of analysis (obtained by distinguishing actual vs possible and past vs future), we can identify four areas in which simulations can operate (see Table 1).

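Table 1. Counterfactual grid of analysis.

| | Past | Future |
|---|---|---|
| Actual | Quadrant 1: actual past | Quadrant 3: actual future |
| Possible | Quadrant 2: possible (non-actualized) past | Quadrant 4: possible futures |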

Quadrant 1 denotes situations that have already occurred. A typical use of simulation in this case is the reconstruction of past societies of which we do not have an accurate depiction (Gilbert and Doran, 2018). Reproducing the actual past not only has intrinsic historical value but can also allow those who did not live through it to immerse themselves in it. This is an essential function of business simulations designed to train managers by exposing them ex post to experiences they have not had. Quadrant 2 refers to situations that might have happened but remain non-actualized possibilities. The simulation of possible past worlds (along with the actual past) is a source of valuable knowledge that artificially nourishes managers’ experience in the present (Simmons, 2017; Swain, 2017). Quadrant 3 encloses the future state of affairs that will actually come about and thus become the actual past. In this case, simulations are expected to offer managers a preview (at least in part) of what the future will hold; they typically sell ex ante knowledge about, for example, the demand for a given commodity or a stock price. Finally, Quadrant 4 includes all future possibilities that could potentially be actualized. Simulations of this kind are typically used in strategy to detail the possible outcomes of various decisions.

The first function is training. Although their scenarios are often inspired by past situations, training simulators are inherently atemporal. In Baudrillard’s (1983, 1994) terminology, a good training simulation would include ‘third-order simulacra’, where the possible worlds claim to be copies of the real world although there is no original. Training simulators are set to enhance managers’ experience artificially by exposing them to problems they have not experienced in the past (but could have) or that they might encounter in the future. The key specificity of this type of simulation is the creation of a microworld wherein trainees ‘can better understand the interactive effects of the environment, competitors, and employees’ (Xu and Yang, 2010: 223). This artificially created microworld is finite, which means, on the one hand, that the choices made inside the game have no direct impact on the actual world and, on the other, that the game will end at some point.

Rather than being atemporal, business simulators can also be oriented toward the future, as they are designed to fill gaps in managers’ knowledge. Following the distinction between Quadrants 3 and 4, we can identify two additional functions: forecasting and advising. Advising (Quadrant 4) provides managers with a tool for assessing different future options and understanding their outcomes. However, it does not prejudge which option will be actualized: this will eventually depend on the concrete decision the manager makes. Forecasting (Quadrant 3) is meant to offer a preview of the actual future by anticipating the choices market actors will make.

Within this counterfactual perspective, simulations can be seen as vehicles allowing managers to travel back and forth between the present and the future to explore possible and actual worlds to which they would not otherwise have access. Each of the three functions highlighted here comes with strong ontological and epistemological assumptions, which are further discussed in the following three sections of this paper.

Training simulations

Simulations developed as an educational tool aim to increase students’ (Fox et al., 2018) and executives’ (Tiwari et al., 2014) awareness of possible issues they might encounter in the real world (Nissley et al., 2004: 825). This type of simulation artificially increases managers’ experience before they are confronted with similar realities, with the aim of producing, all being well, wiser decisions.

Toward more realistic simulations

‘Since the American Management Association pioneered its top management policy game, in 1957’ (Marting, 1957; Wolfe, 1976: 47), business games have evolved toward building more realistic fictitious worlds. The efforts made to enhance the realism of INTOP (initially released in 1963) allegedly cemented its status as one of the world’s most popular teaching tools in management during the following decades (Thorelli et al., 1995). More recently, computers’ capacity to enhance the reality of possible worlds has evolved from augmented, virtual, and mixed reality to augmented and pure virtuality (Farshid et al., 2018). The realism of training simulators has become a criterion for assessing their educational value and, implicitly, their quality (Farrenkopf et al., 2016: 1.3). The reason is that when trainees interact with a virtual environment, they should obtain ‘results which would be real in nature if the actions simulated were to be implemented in practice’ (Maity, 2019: 654).

The general argument that computer simulations do not need to, and should not aim to, become more realistic (Lawson, 1998: 70; Törnberg, 2019) does not apply to training simulations. The idea that ‘a map often is a better representation of the territory than a picture’ (Ziman, 1978: 68) might hold for theoretical knowledge acquisition (knowledge-that), but it carries less weight where the objective is to acquire practical knowledge (knowledge-how). For a simulation to attain its educational purpose (for instance, to demonstrate the potential blind spots or unforeseen outcomes of managerial decisions), the trainees must experience the reality of their decisions with all their senses (Hancock et al., 2008). The realism of business simulation is not, of course, limited to high visual fidelity; it also includes physical and cognitive fidelity (Liu et al., 2008: 64–66) and, especially, functional fidelity (Davidovitch et al., 2009). Within this perspective, technological developments in simulators are expected to augment participants’ affective-sensory experience and create an ‘atmosphere’ (Jørgensen and Holt, 2019), so that trainees can experience the simulated world as if it were the actual world.

Two critical barriers to training simulation realism

To attain this ideal, simulation technology must overcome two critical obstacles: the trainees’ expectations of future interactions and their empathy with the fictitious characters they are meant to embody. The former is built into the very definition of training simulation. In current simulations, trainees have a time horizon, and they typically know that – and often when – the game will end. Unlike the actual world, where all possibilities are open, the simulation is conceived as a closed world, and the trainees do not expect to meet the simulation characters once the game is over. Because a game cannot adequately simulate trainees’ expectations of future interactions, it remains ontologically different from the actual world, and this difference biases trainees’ behavior (Van Knippenberg and Steensma, 2003). Hence, to induce a feeling of reality, simulators would need to bridge this barrier.

The second barrier is the trainees’ empathy with their characters. To the extent that trainees are aware of merely playing a role in the game, it can always be said that they do not ‘really know’ what it is like to be the characters they are meant to embody. Consider, for instance, the case of Ford Motor Company, which had its newly hired engineers go through an ‘empathy belly’ simulation, wearing a device that ‘makes a wearer feel like an expectant mother – including extra pounds, back pain, and bladder pressure’ (Lublin, 2016). The idea behind this (technologically unsophisticated) simulation is to put car-design engineers in the ‘mental shoes’ (Goldman, 2006) of a pregnant woman, in the hope that this exercise will lead them to design more comfortable and safer cars for pregnant women. While in this case it is scarcely possible that the trainees lost sight of the actual world (since they were not in fact pregnant), there are high hopes that more sophisticated technology will enhance the degree of identification with the character (Bachen et al., 2016). Recent advances in neuroscience focus on mirror neurons to understand how humans activate mental processes to reproduce behaviors they observe or expect (Praszkier, 2016).

The paradox of transworld identity

Suppose, for the sake of argument, that technology eventually bridges the gap between actual and possible realities (so that trainees feel the world made possible by simulation to be as real as the actual world). This might improve the educational function of simulations, yet it also involves a strong modal realist assumption. The critical question is whether the trainee who makes decisions in the possible world created by the simulator is identical to the manager who lives in the actual world or is a mere counterpart (i.e. a different person with a different life and different experiences). Classical transworld identity (TWI) theory, following Kripke’s (1963, 2001) modal semantics, takes the first alternative as given. In reaction to this position, Lewis (1986) developed counterpart theory (CPT) to favor the second alternative. In this perspective, the ‘counterpart’ becomes the ‘other’. The ‘self’ and the ‘counterpart’ are two ends of a continuum on which a higher degree of identification of the player with the character drives the cursor toward the ‘self’ pole, and a lesser degree of empathy pushes it toward the ‘counterpart’ pole. What is at stake is the extent to which the player can put on the ‘mental shoes’ of the character (Goldman, 2009).

It is noteworthy that in the case of training simulators, managers and their trainee counterparts live in parallel worlds not simultaneously but successively, as they are expected to travel back and forth between possible and actual worlds (Forbes, 1985: 130–158). So, besides facing the classic TWI problem of whether a person and her counterpart are the same or different (Chisholm, 1967), the realism of training simulators also leads to a ‘TWI paradox’. For the trainee to carry back to the actual world the experience lived in the simulated possible world, she must be the same person. Yet, if she is the same person, she cannot fully experience the reality of the possible world created by the simulation as a different person, and thus cannot embody the managerial character she was meant to play. 2

This paradox can be embedded in a more general paradox of the quest for reality in training simulations. On the one hand, these simulations need realism to attain their pedagogical objectives. On the other hand, if they attain it, they have to rely on a strong modal realist ontological assumption (that managers can travel back and forth between the actual and possible worlds), with its attendant TWI paradox. To escape the paradox, the alternative would be to accept a form of antirealist modal standpoint that tolerates various degrees of ‘ontological egoism’ (meaning that the actual world is more real than any other world that can possibly be created through a simulation), at the cost of a lower pedagogical value of training simulators. This cost would be particularly high in management ethics, where empathy plays a critical role. If trainees cannot put on the ‘mental shoes’ of their managerial characters, they may downgrade the importance of ethical issues during a simulation (or even misperceive them), as the prospect of harming others may appear less urgent or serious than in a real-life experience (Teherani et al., 2008).

That said, a way out of this Scylla and Charybdis of realist training simulations would be to reorient their development toward building real-life experiments, wherein the trainees unknowingly become the subjects of a pedagogical experiment. Consider the famous Darley and Batson (1973) experiment in social psychology, where seminary students going between two buildings to prepare a lecture on the parable of the Good Samaritan encountered a ‘shabbily dressed man slumped by the side of the road’. Those who ignored him might eventually have learned from this experience about their blind spots in practicing virtue and integrated the lesson into their own personality and future decision process. The pedagogical aim of training simulators (making trainees think that the simulated environment is real) can be attained not only by building a realistic possible world but also by embedding simulation games in the actual world. The teaching value of simulators can be preserved insofar as trainees are unaware that they are being trained. Arguably, it can even be enhanced, given that trainees are more likely to mistake a game for reality when the game is surreptitiously inserted into their daily life than when they consciously join an artificial environment with the promise that it will look so real they will forget it is a game.

Advising simulations

The best alternative of all possible worlds

Managerial simulations are set not only to build purely imaginary worlds for training purposes but also to advise managers on making real-world decisions by helping them to identify possible concrete trajectories of the actual world following various choices they might consider making. This type of simulation, offered by companies such as Triskell and Prevedere, typically sets up a cost-benefit analysis – that is, a diagnosis (after reproducing the consequences of alternative courses of action) to provide managers with the required information to assess different possible results and eventually make a better decision (given a specified objective) (Chesney and Locke, 1991). The fundamental question that this type of simulation is used to answer is: in which possible-future world would we be better off? (Vergne and Durand, 2010: 749).
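The cost-benefit logic described above can be sketched as a small Monte Carlo comparison. This is a minimal illustration under loudly stated assumptions, not the method of Triskell, Prevedere, or any other vendor: the three alternatives, their payoff formulas, and the normal demand model are all hypothetical. Each alternative is scored against the same sample of simulated future worlds, which keeps the unit of measurement constant across alternatives.

```python
import random
from statistics import mean


# Hypothetical alternatives: each maps one sampled level of demand
# to a net payoff. Formulas and numbers are illustrative assumptions.
def launch_product(demand: float) -> float:
    # High fixed cost, high margin: pays off only if demand is strong.
    return 40.0 * demand - 300.0


def license_product(demand: float) -> float:
    # Low fixed cost, low margin: a smaller but safer payoff.
    return 10.0 * demand - 20.0


def keep_status_quo(demand: float) -> float:
    # Doing nothing yields no change whatever the demand.
    return 0.0


ALTERNATIVES = {
    "launch": launch_product,
    "license": license_product,
    "status quo": keep_status_quo,
}


def compare_alternatives(n_worlds: int = 10_000, seed: int = 42) -> dict[str, float]:
    """Estimate each alternative's expected payoff over sampled future worlds.

    Every alternative is scored against the same sampled demand values
    (common random numbers), so differences between alternatives reflect
    the decision rather than sampling noise.
    """
    rng = random.Random(seed)
    # Assumed demand model: normal with mean 10 and s.d. 4, floored at zero.
    worlds = [max(0.0, rng.gauss(10.0, 4.0)) for _ in range(n_worlds)]
    return {name: mean(payoff(d) for d in worlds)
            for name, payoff in ALTERNATIVES.items()}


if __name__ == "__main__":
    results = compare_alternatives()
    for name, value in results.items():
        print(f"{name:>10}: {value:8.2f}")
    print("best alternative:", max(results, key=results.get))
```

Under these assumptions the launch option comes out ahead, but lowering the assumed mean demand (say, to 5) reverses the ranking in favor of licensing, so the demand model deserves as much scrutiny as the code.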

Based on this description, this type of simulation seems designed for strategizing, since ‘a strategist can overcome learning barriers more effectively than rivals by conducting counterfactual experiments, acquiring superior data in moderately complex environments, and focusing on unintuitive phenomena’ (Denrell et al., 2019: 3). Beyond strategy, however, this type of simulation also covers a broad spectrum of organizational issues, ranging from marketing (Sterne, 2017; Syam and Sharma, 2018) to human resources management (Buzko et al., 2016). Discrete-event modeling is often used ‘to evaluate possible system alternatives to address a problem or to make decisions such as capacity planning, purchasing decisions, strategic planning, training, and technology planning. It allows for “what-if” questions to be analyzed when designing new systems or improving existing ones’ (Opacic and Sowlati, 2017: 220).
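The ‘what-if’ use of discrete-event modeling quoted above can be illustrated with a capacity-planning question. The sketch is hypothetical: it assumes a single first-come, first-served service desk with exponentially distributed interarrival and service times (the rates are invented), replays the same stream of simulated customers with one, two, and three servers, and reports the mean waiting time under each alternative.

```python
import heapq
import random


def mean_wait(n_servers: int, n_customers: int = 20_000,
              arrival_rate: float = 1.8, service_rate: float = 1.0,
              seed: int = 7) -> float:
    """Simulate a first-come, first-served queue; return the mean wait.

    Events are customer arrivals and service completions. A min-heap
    holds the time at which each server next becomes free.
    """
    rng = random.Random(seed)
    free_at = [0.0] * n_servers          # every server is idle at time 0
    heapq.heapify(free_at)
    clock = 0.0
    total_wait = 0.0
    for _ in range(n_customers):
        clock += rng.expovariate(arrival_rate)   # next arrival event
        earliest = heapq.heappop(free_at)        # server that frees up first
        start = max(clock, earliest)             # wait if all servers are busy
        total_wait += start - clock
        # Schedule this customer's service-completion event.
        heapq.heappush(free_at, start + rng.expovariate(service_rate))
    return total_wait / n_customers


if __name__ == "__main__":
    # What-if analysis: how does the mean wait change with capacity?
    for servers in (1, 2, 3):
        print(f"{servers} server(s): mean wait {mean_wait(servers):.2f}")
```

Because the random draws occur in the same order in every run, each alternative faces identical arrivals and service times, so differences in waiting time come from the capacity decision alone. With one server the arrival rate exceeds the service rate, so the queue grows without bound and the reported mean wait keeps rising with the number of simulated customers.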

Before a decision is made and one scenario becomes reality, all the possible-future worlds must be conceived as equally real (regardless of their probability). Otherwise, the simulators could not claim to be accurate, and they would be no different from a manager merely formulating a wish or an expectation about the future. For instance, prices of assets can be calculated insofar as simulators interpret the possible-future worlds as actual-past worlds, and operate as if the transactions on the assets were already finalized and the market actors had already demonstrated their preferences by buying or refraining from buying. To complete such economic calculations in advance, advising simulators must rely on a modal realist ontology, as outlined in the previous section.

Modal realism

To provide meaningful comparisons between possible-future outcomes of a decision, simulators need to use the same unit of measurement and refer to the same manager across possible worlds. This raises TWI issues similar to those pointed out in the previous section: for instance, faced with an ethical dilemma, to what extent can we say that the manager who made a moral choice is the same person as the one who chose a harmful alternative? However, unlike trainee managers, who move successively back and forth in time between the actual and possible worlds, managers in advising simulations live in simultaneously existing possible worlds. Thus, advising simulations can avoid the TWI issues that training simulations have to address by relying on the ontology laid out in Lewis’ (1986) CPT, according to which people living in possible worlds are mere counterparts of (not identical to) those living in the actual world. Our counterparts are people identical to us, except that at some point they made a different choice and had a different experience. When we think about them, we can see ourselves in a counterfactual mirror that shows what we could have been if we had followed another path in the past.

In Lewis’s (1986: 2) words, modal realism ‘holds that our world is but one world among many. There are countless other worlds. . . . They are isolated: there are no spatiotemporal relations at all between things that belong to different worlds. Nor does anything that happens at one world cause anything to happen at another. . . . there are so many other worlds, in fact, that absolutely every way that a world could possibly be is a way that some world is’.

It is noteworthy that the possible worlds evolve separately within Lewis’ CPT, and the frontier between them is meant to remain permanently and fully sealed. Note also that since the advising simulators make claims about the unknown future, they also carry a strong epistemological assumption, in addition to modal realism. Comparing the future outcomes of different choices made by a manager and determining how their counterpart would act requires not only the simultaneous existence of possible-future worlds but also an objective and perfect knowledge of all the worlds simultaneously.

The ‘God’s eye view’ problem

This epistemological assumption implies that a simulator comparing possible-future worlds should be placed outside them and endowed with a sort of ‘God’s eye view’, allowing the impartial spectator to see across them (Putnam, 1981), or should act as a director, treating the organization as a spectacle (Flyverbom and Reinecke, 2017). To uphold a God’s eye view, we need a ‘simulation theory’ akin to the hypothesis examined by Bostrom (2003), according to which we may merely be fictional characters in a simulation game. If this were the case, then the programmer or operator of the simulation, or the AI (assuming it is emancipated from the programmer and gains full autonomy), could indeed have access to various strategies and compare them accurately. 3 However, if we persist in discussing the ontological assumptions of simulators within the actual world (rather than outside the possible worlds), we should readily agree that those living in the actual world (who might suppose, or even firmly believe, that there is a God’s eye view) would be conceited if they claimed to possess such a tool, allowing them to see across possible worlds.

In fact, the CPT itself cannot uphold the God’s eye view epistemological assumption, as it does not allow the individuals living in specific worlds to see what truly happens in the other worlds populated by their counterparts. Persons are entirely confined to one particular world and limited by its frontiers. No one can travel to a counterpart world to really compare the outcomes of their different choices. No matter how sophisticated they might be, simulators are bound to the actual world, just like the software writers programming them and the managers who expect advice. Parenthetically, this is also the case for the theories about possible worlds: the CPT and even Bostrom’s ontology are bound to this actual world where they are conceived. Quantum and AI computers help entrepreneurs to imagine a previously unconceived strategy and to compare outcomes only to the extent that they are foreseeable in the present: like art, entrepreneurship cannot be prefabricated (Beyes, 2015: 445).

An essential feature of the CPT is that possible worlds are not imagined ex nihilo but are intimately linked to the world where they are formulated and, more specifically, to the subjective experiences and expectations of those who conceive them (Garland et al., 2013). They are ultimately produced under the meta-constraint of power relations, which are ‘ubiquitous and fundamentally pervade all social practice’ (Kroeger, 2011: 756). This observation does not change radically even when we take into account deep-learning AI machines, which are known to enjoy greater autonomy from their initial program than devices that merely execute a command, as they can learn by themselves while performing their tasks (Goodfellow et al., 2016; LeCun et al., 2015). The nuance that can be added in this case is that even if the programmer cannot anticipate the machine’s learning pattern, and even if the device is emancipated from its originator, it does not follow that it can be emancipated from the actual world and adopt a God’s eye view. The content of its learning remains anchored in the world where it is imagined. Even if such machines become autonomous, they nonetheless remain biased by and limited to the experiences they can accumulate, just like any human manager or adviser.

Forecasting simulations

Mirror, mirror, tell me what the demand will be next year

Simulations are used not only to advise managers but also to try to foresee the results of decisions made by various market actors (Evans, 2002). Unlike other types of simulation, where the goal is predetermined (either built into the game or aligned with a given standard of success in the organization), this type aims to anticipate future events. Therefore, the accuracy of forecasting simulations can be assessed by determining to what extent future events match the initial prediction. While this type of simulation is relevant both for business practice and theory (Edwards and Berry, 2010), it is mainly applied in insurance, finance, and economics (Hu et al., 2018; Moghaddam et al., 2016). Predicting the future of the stock exchange and anticipating the price of a commodity or the GDP of a country are at the core of standard econometric models (Cepni et al., 2020).
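Because a forecast can only be scored once the predicted period has become the actual past, this accuracy assessment is necessarily ex post. One common measure among several is the mean absolute percentage error (MAPE), the average of the absolute gaps between realized and forecast values, each expressed as a share of the realized value. The sketch below computes it for hypothetical figures; it is not drawn from any of the cited studies.

```python
from typing import Sequence


def mean_absolute_percentage_error(actual: Sequence[float],
                                   forecast: Sequence[float]) -> float:
    """Return the MAPE, in percent, of forecasts against realized values.

    It can only be computed ex post, once the forecast period has become
    the actual past.
    """
    if len(actual) != len(forecast) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when a realized value is zero")
    errors = [abs(a - f) / abs(a) for a, f in zip(actual, forecast)]
    return 100.0 * sum(errors) / len(errors)


if __name__ == "__main__":
    # Hypothetical demand: forecasts made in advance vs. realized values.
    forecast = [110.0, 180.0, 400.0]
    realized = [100.0, 200.0, 400.0]
    # prints: MAPE: 6.67%
    print(f"MAPE: {mean_absolute_percentage_error(realized, forecast):.2f}%")
```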

Numerous companies have sprung up in recent years with the promise of helping entrepreneurs predict future market events. Companies like HighRadius, a pioneer in cash automation technology, CognitiveScale, and YayPay specialize in using AI to automate accounts receivable and treasury processes. They typically offer real-time information about the likelihood of customers not paying their bills. The software processes and analyzes the relevant data influencing customers’ profitability, including not only the company’s history but also its current profile (credit rating, debt burden, hiring and firing rates, etc.). It also looks into exogenous events expected to affect the specific industry and geographic location and to jeopardize client profitability (Gow, 2020). Another area of prediction is demand forecasting. PredictHQ is a leading company in this market; it provides information about future variations in demand to ride-sharing apps like Uber and companies offering delivery services. To do so, the software is fed with information from data platforms (such as Databricks or Kubeflow) about planned public events such as holidays, sports, concerts, expected social and political unrest, and weather forecasts (Campbell, 2020).

That said, at this stage of technological development, it is unlikely that anyone would mistake a forecasting model for a foolproof recipe for success: most scholars (and even practitioners) readily admit the patent limitations of current prediction models (Rescher, 1998). They diverge, however, in the faith they place in technological progress. While some scholars believe that advances such as neural networks can improve the methodology's soundness (Hu et al., 2018), others think that forecasting models inevitably remain ‘fantasy stories’ (Von Mises, 1962: 62–69). This disagreement can be traced to the two sides' differing ontological and epistemological assumptions about the actual-future world.

Open versus closed actual future

As mentioned in the previous section, simulators anticipating the future (the actual-future or possible-future worlds) require radical ontological and epistemological assumptions about its reality and discoverability. In particular, forecasting simulators must assume that the future is already settled, at least to some extent, so its discovery becomes a technical matter. Packard and Clark (2020a) recently initiated a conversation about the status of risk and uncertainty in entrepreneurship and the capacity of entrepreneurs to predict the future (Arend, 2020; Packard and Clark, 2020b) by distinguishing between ‘epistemic’ and ‘aleatory’ predictability. The former refers to our technical capacity to anticipate and calculate an event, assuming that we know its causal parameters. ‘For example, a coin flip would become perfectly predictable if all the variables by which it is flipped (angle, velocity, spin rate, air friction, angle and hardness of the landing surface, etc.) could be fully accounted for and their effects fully understood’ (Packard and Clark, 2020a: 8).
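The notion of epistemic predictability can be illustrated with a deliberately crude sketch. The physics below is an illustrative assumption, not Packard and Clark's model: the coin rotates about a horizontal axis at a constant angular velocity, is caught at the height from which it was tossed, and never bounces, so the face showing depends only on the total angle of rotation. The function name and its parameters are hypothetical.

```python
import math


def coin_lands_heads(omega: float, flight_time: float, starts_heads: bool = True) -> bool:
    """Toy deterministic coin toss (illustrative assumption: no bounce, no air drag).

    omega: angular velocity in radians per second about a horizontal axis.
    flight_time: seconds between toss and catch.
    The starting face is up at the catch when the total rotation angle has a
    positive cosine; otherwise the opposite face is up.
    """
    angle = omega * flight_time
    starting_face_up = math.cos(angle) > 0
    return starting_face_up if starts_heads else not starting_face_up


# With every parameter known, the outcome is fully determined.
spin = 2 * math.pi * 20  # 20 revolutions per second
print(coin_lands_heads(spin, 0.5))    # 10 full turns: True (heads)
print(coin_lands_heads(spin, 0.525))  # 10.5 turns: False (tails)
```

Once every parameter is fixed, the outcome follows mechanically. The function nonetheless takes for granted that someone tosses the coin: that choice is not among its inputs.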

Yet even if we knew all the variables involved in flipping a coin, a simulator anticipating when and on which side the coin will land would also have to predict a person's choice to flip it in the first place. To achieve this predictive capacity, forecasting simulators must rely on the assumption that the actual-future world is entirely closed, that is, that all choices have already been made. PredictHQ might communicate to Uber the estimated turnout of a public event, yet it cannot tell who precisely, if anyone, will choose to use the Uber app. To refine aggregate models and improve their capacity to anticipate customer choices, simulators must assume that the future is already written and only remains to be discovered. This ontological assumption (of a ‘closed world’) currently ‘allows probabilistic models to be applied to literally any choice situation and lends the appearance of scientific rigor’ (Packard and Clark, 2020b: 704). Yet the same assumption also invites skepticism about whether simulators can ever be upgraded to the point of predicting customer choices. If a simulator could discover customers' future choices in advance, it would indeed offer a recipe for success capable of tearing down competition and profits.

This closed-future ontology is typically contrasted with an open future, in which choices are yet to be made (Kodaj, 2014: 419). Notably, the CPT typically upholds an ontological asymmetry between the settled past and the open future. As Lewis (1979: 459) notes, ‘We tend to regard the future as a multitude of alternative possibilities, a “garden of forking paths” in Borges’ phrase, whereas we regard the past as a unique, settled, immutable actuality. These descriptions scarcely wear their meaning on their sleeves, yet they do seem to capture some genuine and important difference between past and future’. Indeed, if the future is already written, the distinction between actual-future and possible-future worlds loses most of its meaning.

Within this open-future ontology, it is not the dataset that makes a prediction valid; in fact, the reverse is true. Only once a prediction has been validated (i.e. once relevant future events have corroborated it) can the dataset and the intuition that informed the design of the predictive simulation be declared accurate. AI simulators, like the one used by PredictHQ, merely aggregate existing market signposts in an attempt to anticipate a future value, such as the price of an asset on a specific date. The accuracy of such a simulation can only be verified if that asset is actually sold on the market. Otherwise, the information it provides remains a mere interpretation of market indicators within the knowledge limits of its time and cannot be verified.

The quality of the data a simulator employs, its computational capacity, and the personal experience of the professional who designed it may increase the odds of a correct prediction, as may the developer's use of a wide range of methodological precautions for cleaning the database. Still, only ex post (after the prediction has been validated) can the dataset and the corresponding model be considered accurate. To think otherwise would rest on the presumptuous claim that future choices are already written and merely await discovery. What we call ‘data errors to be fixed’ are not, strictly speaking, data errors (since genuine errors can be revealed only ex post) but merely what would count as data errors within a given methodological framework, if that framework were adopted. In other words, ex ante, the error is measured not against the reality of the future (which we do not yet know) but against the (scientific) expectations of the moment. Within the open-future ontology, there is no ex ante way of distinguishing accurate from inaccurate datasets, intuitions, and so on.
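The claim that ex ante fit cannot separate accurate from inaccurate models can be made concrete with a minimal sketch. The demand figures and both models below are invented for illustration: two hypothetical forecasting models reproduce the observed history equally well, yet disagree about the next period, and neither forecast can be scored until that period has actually occurred.

```python
from typing import Callable, Optional

# Hypothetical demand observed in periods 0, 1 and 2 (invented figures).
history = [10.0, 12.0, 14.0]


def model_growth(t: int) -> float:
    """Hypothetical model A: demand keeps growing by 2 units per period."""
    return 10.0 + 2.0 * t


def model_plateau(t: int) -> float:
    """Hypothetical model B: identical to A up to period 2, flat afterwards."""
    return 10.0 + 2.0 * min(t, 2)


def in_sample_error(model: Callable[[int], float]) -> float:
    """Total absolute error on the periods already observed."""
    return sum(abs(model(t) - y) for t, y in enumerate(history))


def ex_post_error(model: Callable[[int], float], t: int,
                  observed: Optional[float]) -> Optional[float]:
    """Forecast error for period t; undefined (None) until t has been observed."""
    if observed is None:
        return None
    return abs(model(t) - observed)


# Ex ante: both models fit the past perfectly but diverge about period 3.
print(in_sample_error(model_growth), in_sample_error(model_plateau))  # 0.0 0.0
print(model_growth(3), model_plateau(3))                              # 16.0 14.0
print(ex_post_error(model_growth, 3, None))                           # None
```

Only once period 3 is observed (say, at 14.0) does `ex_post_error` single out model B; before that, nothing in the data distinguishes the two.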

Counterfactual forecasting simulations

While the open-future ontology cannot support accurate predictions of events (exact prices, precise percentages of demand, the day of bankruptcy, and so on), it nonetheless allows forecasts about what would otherwise have been (Goodman, 1983: 108). A counterfactual prediction anticipates degrees, proportions, and tendencies relative to other possible worlds: higher prices than otherwise, a lower market share, a trajectory toward bankruptcy, and so on. Counterfactual predictions are often produced as the output of a statistical model: what would the outcomes of a given strategy be if it were applied in a different industry? The predictive capacity of simulations therefore relies on the transferability of the object of prediction to other possible situations and, especially, on the pertinence of the theoretical scaffold supporting this transfer.

Notably, counterfactual prediction depends not on technological advances but on two critical biases. The first is the ceteris paribus assumption (Boumans and Morgan, 2001): the counterfactual prediction holds only as long as we assume that all other things are equal. As in a laboratory experiment in which exogenous factors can be isolated, the counterfactual simulation assumes that some factors that might influence the prediction remain unchanged (Morgan, 2005). While designers might prefer to hold some things equal for the sake of the simulation, not all items can be set aside (and assumed constant) with equal ease. In the words of Faulhaber and Baumol (1988), ‘ceteris are never paribus’. For instance, it is easier to decide that the production of an item will remain stable (as long as the agents in the simulation can easily control this feature) than to set aside the possibility of a financial crisis that might shrink the order book for that particular item. In any case, the choice of which factors remain unchanged in the simulation can by itself change the results the simulator provides. Moreover, the line between the variables included in the simulation and those set aside from it remains an arbitrary decision, inevitably so (Eabrasu, 2015).

The other significant bias of counterfactual prediction is the aliter quam fuisset (‘than would otherwise have been’) proviso. This proviso makes counterfactual prediction utterly dependent on theory, despite noteworthy attempts to build theory through simulations (Davis et al., 2007). If, for instance, we assume the validity of the law of supply and demand, then we can predict that when the supply of a commodity diminishes (while its demand remains constant), its price will be higher than it would otherwise have been. This counterfactual prediction cannot be invalidated even if we observe that the actual price is lower than before: we can still say that, had the supply not diminished, the price would have been even lower.
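The logic of the aliter quam fuisset proviso can be illustrated with a minimal worked sketch, assuming a textbook linear market in which quantity demanded is a − bP and quantity supplied is c + dP, so that the equilibrium price is (a − c)/(b + d). All parameter values are invented, and the drop in demand stands for a hypothetical factor left outside the ceteris paribus clause. A lower supply intercept c means less is supplied at every price, i.e. a supply cut.

```python
def equilibrium_price(a: float, c: float, b: float = 1.0, d: float = 1.0) -> float:
    """Price equating demand Qd = a - b*P with supply Qs = c + d*P."""
    return (a - c) / (b + d)


before = equilibrium_price(a=100, c=20)     # initial market: 40.0
actual = equilibrium_price(a=70, c=10)      # demand fell AND supply was cut: 30.0
otherwise = equilibrium_price(a=70, c=20)   # same demand fall, no supply cut: 25.0

print(actual < before)      # True: the observed price is lower than before
print(actual > otherwise)   # True: yet higher than it would otherwise have been
```

Note that `otherwise` is never observed: it is computed from the model itself, which is why the counterfactual claim stands or falls with the theory rather than with the data.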

In light of this analysis, we can note that what shapes counterfactual predictions is not technological advances in the computational capacities of simulators but the theoretical assumptions made in the actual world. This observation illustrates not only the crucial role that theory plays in counterfactual prediction but also theory's apparent infallibility when explaining counterintuitive facts. It reminds us that organization and management theories describe and shape not only reality but also possible worlds. Therefore, we should lower our expectations of the capacity of simulations to anticipate a particular future state of affairs (even as computers multiply their computational capacities) and focus on the theory underlying counterfactual prediction. The most ambitious expectations we can have of simulations are qualitative and depend primarily on a pre-existing theoretical scaffold, which means that they can be neither verified nor invalidated by experience.

Conclusion

This article set out to fine-tune expectations of business simulation technology through the lens of philosophical counterfactual analysis. It identified three distinct functions concerning managerial decisions (training, advising, and forecasting) and confined the discussion to them. It found that specific ontological and epistemological assumptions heavily constrain the quest for higher-performance technological simulators in each of these three areas. Fully realistic training simulators would require overcoming the TWI paradox; simulators that accurately compare the outcomes of one decision across possible worlds would require a God's eye view; and accurate predictions of the actual-future world would require assuming that the future is closed.

The paper contributes to these conversations about simulations' realism by substantiating the claim that to enhance the training, advising, and predicting functions of simulators, we do not necessarily need more technology. Rather than attempting to build a realistic simulator, we can embed simulation games in the actual world: a better understanding of our biases and prejudices within the actual world will also help us better imagine and compare the alternative outcomes of managerial decisions. A sound theory can make qualitative counterfactual predictions more accurate.

Ironically, it is precisely because no simulator can predict the actual-future world that we cannot know whether simulators will become mainstream in management training, advising, or forecasting. Nor do we have a God's eye view from which to compare all possible worlds and say whether we are better off with or without technologically enhanced simulators. We can nonetheless observe that high expectations of new technologies go hand in hand with increasingly blind trust in machines' capacity to recreate or discover actual-future and possible-future worlds, potentially leading to self-fulfilling prophecies (Merton, 1968). While this observation is increasingly made about various consequences of AI, ranging from culture (Taplin, 2017) to job displacement (Morgan, 2019: 378), further research should inquire into simulators' self-fulfilling prophecies about actual-future and possible-future worlds, not only at the societal level (Turkle, 2009) but also, more specifically, at the organizational level. Perhaps computer simulations cannot really discover future market prices. Still, insofar as we trust them to do so, we guide our actions by future market prices fixed by automated repricing algorithms, like children who think their parents can do magic simply because they credit them with magical powers. Moreover, focusing on the power that algorithms hold might distract us from ‘thinking about how power might operate through them or be complicit in how those algorithms are designed, function and lead to outcomes’ (Beer, 2017: 11).

This observation also opens a second avenue for further inquiry: the self-inflicted (and self-financed) immaturity that results from trusting simulators' expertise when delegating managerial training, advising, or forecasting to autonomous machines. Dependence on AI expertise is already recognized as a significant socio-political issue of our times (Morgan, 2019), and its multiple effects on power imbalances have also been pointed out (Zuboff, 2019: 100). However, additional research could focus more specifically on how managers can genuinely emancipate themselves from dependence on simulators and retain control over their decisions, at least as a last resort, while delegating expertise to simulators. This research path on self-inflicted immaturity is also strewn with a myriad of related ethical challenges that need specific attention. The question of how to participate in unmaking futures that are already ingrained, with its multiple environmental, social, and economic facets, should be considered (Morgan, 2020), as should the assignment of responsibility when managerial decisions based on simulated forecasts lead to erroneous or harmful outcomes in the actual world (Eabrasu, 2018: 79–88). Finally, the impact of managerial decisions based on faulty simulations on the worse-off in an organization also deserves discussion.

Footnotes

Funding

The author received no financial support for the research, authorship, and/or publication of this article.