Abstract

It is widely established that social media afford social movement (SM) organizations new ways of organizing. Critical studies point out, however, that social media use may also trigger negative repercussions due to the commercial interests that are designed into these technologies. Yet empirical evidence about these matters is scarce. In this article, we investigate how social media algorithms influence activists’ actualization of collective affordances. Empirically, we build on an ethnographic study of two SM organizations based in Tunisia. The contributions of this paper are twofold. Firstly, we provide a theoretical framework that specifies how algorithms condition the actualization of three collective affordances (interlinking, assembling, augmenting). Specifically, we show how these affordances are supported by algorithmic facilitation, that is, operations pertaining to the sorting of interactions and actors, the filtering of information, and the ranking and aggregation of content. Secondly, we extend the understanding of how social media platforms’ profit-orientation undermines collective action. Namely, we identify how algorithms introduce constraints for organizing processes, manifested as algorithmic distortion, that is, information overload, opacity, and disinformation. We conclude by discussing the detrimental implications of social media algorithms for organizing and civic engagement, as activists are often unaware of the interests of social media-owning corporations.

Introduction

Over recent years, there has been increasing interest within organizational research in how social media affect collective action (i.e., coordinated activities based on similar patterns of technology use performed by a collective in the pursuit of a common goal; George and Leidner, 2019). In social movement (SM) studies, social media have been found to be essential for many SM organizations (i.e., organizations that take the collective pursuit of social change as a primary goal). These organizations range from community-driven initiatives, such as print shop collectives and community radio stations, and protest camps, such as Occupy (Ganesh and Stohl, 2013), to more formalized non-profit organizations (Uldam and Kaun, 2019). In these contexts, social media are largely considered to provide activists with possibilities for collective action (e.g., Dobusch and Schoeneborn, 2015) by enabling them to work from the bottom up and to self-organize (Segerberg and Bennett, 2011).

Celebratory accounts of the Arab Spring uprisings, and the Occupy and Indignados movements, often focus on affordances, that is, “the action possibilities and opportunities that emerge from actors engaging with [social media] technologies” (Faraj and Azad, 2012: 238). While affordances, such as instantaneous and reciprocal communication, are important to the emancipatory and organizing potential of social media (Khazraee and Novak, 2018), they are merely one part of a much bigger picture (Uldam and Kaun, 2019). Indeed, SM scholars have recently argued that the commercial orientation of the corporations that own social media might not always accommodate activists and may even work against their intended goals (Coretti and Pica, 2018; Poell and Van Dijck, 2015). Hence, there is a need to look beyond these opportunities and also consider how commercially-oriented social media technologies may compromise activists’ actualization of collective affordances. In doing so, we can move beyond celebratory accounts of social media and better understand both their possibilities and their constraints for collective action.

In this vein, critical organizational research has started to focus on the negative aspects of new information and communication technologies for various forms of organizing. Studies highlight the dark sides of algorithms that enable new forms of control (Kellogg et al., 2019) and facilitate hidden algorithmic management based on the commercial exploitation of user data (Beverungen et al., 2015). Such consequences are rooted in platforms’ profit-orientation (Fuchs, 2014) and have become ever more important as algorithms increasingly govern a variety of organizing processes (Beverungen et al., 2019), from individual performance evaluations (Manley and Williams, 2019) to interactions on social media (Alaimo and Kallinikos, 2017). In SM studies, conceptual work by Milan (2015a: 5, 2015b) suggests that the “algorithmic environment” of social media may support the symbolic work of activists in the production of meaning and narratives. At the same time, Milan (2015a) cautions that algorithms may have detrimental outcomes for collective action. Accordingly, we aim to identify how the underlying algorithms of profit-oriented social media both support and constrain the actualization of collective affordances, and thereby affect SM organizations’ ability to engage in collective action.

Theoretically, we draw on two streams of literature: affordance research and SM research. Firstly, an affordance lens allows us to place equal weight on analyzing the interplay between social practices and the material features of technologies (Leonardi et al., 2013), and this approach has been deemed useful for exploring the organizational use of technologies (e.g., Leonardi and Vaast, 2017). We thus avoid technological determinism, which may give too much credit to technology and understate the agency of human actors. Building on this lens, we show how interdependent activists actualize imagined affordances (Nagy and Neff, 2015) as they anticipate how the collective use of social media features will play out. Secondly, our framework builds upon SM research, which has suggested that the commercially-oriented algorithmic operations of social media may compromise activists’ goals (e.g., Van Dijck and Poell, 2013). Combining these streams of literature allows us to investigate the little-understood influence of algorithmic operations designed by profit-driven social media corporations on collective action.

Empirically, we study two cases of SM organizations in Tunisia that use social media as central resources in organizing to promote freedom and access to information (Lee and Chan, 2016), which makes these organizations relevant sites for our investigation. Our analysis, based on ethnographic methods, uncovers how commercially-oriented algorithms impact collective action by moderating the relationship between technological features (e.g., hashtags, news feeds, etc.) and their contextual use by activists. The contributions of this study are twofold: firstly, we show how algorithms support SM organizations in actualizing three collective affordances, but simultaneously introduce constraints for collective action in social media. In so doing, we advance literature on affordances by providing a model for the algorithmic conditioning of collective affordance actualization. Secondly, our study contributes to SM literature by showing how the commercial interests ingrained into the algorithmic design of social media not only support but also work against activists’ intended goals.

The paper proceeds as follows: we start by outlining the theoretical background for the interplay between social media affordances, commercially-oriented algorithms, and the collective action of SM organizations. The methods section outlines the ethnographic methods employed. In our analysis, we then identify three collective affordances and describe how individuals’ expectations and misconceptions, at times, interact with algorithmic decision-making, creating complex relationships that result in support and constraints for collective action. We subsequently discuss our analytical model that shows how activists are left in the dark about the concrete working of social media algorithms, which follow commercial interests that stand in conflict with the expectations and goals of activists. The paper concludes with a brief note for future research based on the limitations of this study.

Social media affordances and collective action

The concept of affordances dates back to Gibson’s (1982) work on ecological psychology, in which affordances are defined as what the environment can offer its users to achieve their goals. Today, affordances are often understood as relational, referring to the relationship between technology users, defined by their specific intentions within a context, and the material features of a technology that enable individuals to reach organizational goals (Leonardi et al., 2013). Scholars have identified various social media affordances (e.g., visibility, replicability, editability, association, and searchability) that can help activists in their individual tasks and transform their civic engagement (e.g., boyd, 2011). Importantly, these affordances have been found not to dictate activists’ behavior but to configure the environment in a way that shapes participants’ efforts for collective action (Segerberg and Bennett, 2011). Following these leads, organizational scholars have started to look beyond individual use and have also paid attention to social media affordances at the collective level (Vaast et al., 2017).

As such, organizational studies typically investigate how affordances enable collective and collaborative behaviors that were difficult or impossible to achieve in combination before the emergence of these new technologies (Treem and Leonardi, 2012). Similarly, SM research has identified collective affordances through which activists and SM organizations symbolically construct a collective identity (Khazraee and Novak, 2018) and exchange organizational roles while depending on each other’s contributions (Vaast et al., 2017). Overall, recent research in both SM and organization studies, examining both individual and collective levels, has identified various social media affordances that support key aspects of collective action (see Sæbø et al., 2020 for a review). Nevertheless, gaps remain regarding the role of algorithms (Milan, 2015a) that underlie many social media features and “reconfigure the relation between users and their tools” (Lange et al., 2019: 605). As discussed in the next section, critical SM research has highlighted that the algorithms underlying social media features mainly follow corporations’ commercial goals (Ovide, 2018; Poell and Van Dijck, 2015).

Social media algorithms and collective action

In principle, social media algorithms are designed to “reduce complexity brought about by information and interaction overload in social media” (Coretti and Pica, 2018: 73). They do so by performing “sorting, filtering, and ranking functions” (Neumayer and Rossi, 2016: 4) that provide users with personalized content to increase their interactions and engagement (Van Dijck and Poell, 2013). Algorithms recommend which content and actors users should pay attention to, either by simply making information and possible connections visible or through explicit recommendations (Leonardi and Vaast, 2017). Recently, scholars have highlighted the perils of such algorithmic functions, arguing, for example, that these operations create opinion echo chambers that can result in radicalization (Just and Latzer, 2017).

Indeed, critical scholars have argued that the profit-driven interests that shape the design and functionalities of social media are responsible for a multitude of negative consequences rooted in hidden algorithmic operations (Fuchs, 2014). Building on this critique, SM scholars have recently argued that the profit-orientation of platforms introduces limitations for collective action, because commercial goals stand in contrast with activists’ intentions and ways of organizing (Coretti and Pica, 2018; Poell and Van Dijck, 2015). The main argument is that commercial interests shape how information is distributed and how interactions are steered through a “techno-commercial” process (Poell and Van Dijck, 2015: 529). For example, Facebook follows an advertising business model that motivates users to pay to distribute their content on the platform rather than facilitating the organic growth that might benefit activists. Furthermore, to increase revenue, social media algorithms constantly connect new users with each other and accelerate information streams (Van Dijck and Poell, 2013), with the goal of collecting and selling user data to third-party marketers and advertisers (Andrejevic, 2013). Overall, scholars have argued that these hidden algorithmic operations “could entail negative consequences for social movements” (Coretti and Pica, 2018: 74), yet there is limited empirical evidence to support this claim.

It is important to note that while the algorithmic operations of social media are typically hidden, recent research suggests that social media users are not completely at the mercy of seemingly powerful and manipulative algorithms (Coretti and Pica, 2018). Rather, users are increasingly adapting their technology use by anticipating how algorithms work (Bader and Kaiser, 2019; Bucher, 2017). Nevertheless, as we argue next, the decision-making of algorithms tends to be difficult to grasp, and individual users can only imagine the details of how they work (Nagy and Neff, 2015).

Imagined affordances and collective action

Regardless of whether users are aware of them, the social media algorithms underlying technological features afford their users certain actions (Ettlinger, 2018). For example, by actively recommending a given conversation under a hashtag, the hashtag algorithm enables users to access information, which might help them to organize as a collective (Albu and Etter, 2016; Albu, 2019). These algorithmic operations remain largely hidden from activists, which makes the relationship between intended technology use and assumed technological features more ambiguous. In fact, scholars have argued that “affordances as a field of possibilities are considerably more complex in algorithmic life than in a Gibsonian environment–actor relation in which there is only one actor” (Ettlinger, 2018: 8).

These developments have resulted in calls for an approach to affordances that does not ignore the agency of algorithms (Nagy and Neff, 2015). Rather than looking only at the relationship between technological features and users, such a conceptualization needs to account for the decisions made by algorithms. Accordingly, Nagy and Neff (2015: 5) propose the concept of imagined affordances, which emerge between user perceptions, attitudes, and expectations; between the materiality and functionality of technologies; and between the intentions and perceptions of designers. As designers’ intentions and the operations of algorithms are unknown to users, affordances become less clear and can thus only be imagined. However, as users start to imagine how social media features work, they might form incorrect perceptions and misinterpretations (Nagy and Neff, 2015).

In sum, social media provide collective affordances that are governed by inconspicuous, commercially-oriented algorithms. Yet these technologies leave users in the dark about their actual workings. As a consequence, members of SM organizations can only imagine affordances for collective action and use social media based on particular expectations, which commercially-oriented algorithms may or may not support. In the next section, we describe the methods applied to answer the following research question:

How do social media algorithmic operations impact the actualization of imagined affordances for SM collective action?

Methods

Data collection context

This study is based on semi-structured interviews and participant observation. We follow a “scraping” approach (Rieder et al., 2018), which amounts to observing what a social media algorithm does, not only to move closer to understanding how it works but also to investigate the broader forms of agency involved. Specifically, we draw on agential realism, which allows us to investigate social and technological agency as inseparable. As Barad (2007) notes, this epistemological-ontological-ethical lens provides an understanding of the role of human and nonhuman, material and discursive, and natural and cultural factors in scientific and other social-material practices, thereby moving such considerations beyond the well-worn debates that pit constructivism against realism, agency against structure, and idealism against materialism. From this standpoint, we outline the operations of social media algorithms through their implications and qualitatively identify the ways in which algorithms shape collective action. As shown next, this research design allows us to map the shifting relations between activists’ perceptions, the actualized technological features of social media, and the underlying adapting, changing, and mediating algorithmic operations (Lange et al., 2019).

Data collection rationale

Interviews and observations

Data was initially obtained using qualitative semi-structured interviews. However, interpreting algorithms as having agency means that the investigation can neither be limited to interviewing potential users about their perceptions nor amount to developing knowledge by learning how to conceive, code, and use such algorithms, as these are not available to researchers (Lange et al., 2019). Not even the social media company employees interviewed in past research projects were fully aware of the operations of the algorithms they had programmed themselves (Gillespie, 2014). In this respect, activists’ narratives about what algorithms can or cannot do cannot be treated as transparent accounts of how strategies were executed, or even as reliable accounts of how algorithms operate (MacKenzie, 2014). Scholars explain how algorithms work “by drawing indiscriminately from knowledge obtained through personal interviews with workers, coders, and/or data released from regulatory authorities” (Seyfert, 2016: 256). Accordingly, we also included observations of activists’ behavior in relation to algorithmic operations in both virtual and physical sites to analyze the outcomes of algorithms for collective action. Hence, our analysis does not provide exact knowledge of how algorithms operate. Instead, we show how algorithms affect collective action; for example, we observed, and learned from interviewees, how algorithms sort, filter, and rank information. We thereby gained insights into how hidden algorithmic operations manifest. The observations of activist work conducted during the fieldwork period (10 months between 2015 and 2016 in Tunis, Tunisia) were helpful for developing field notes that showed the ways in which social media use shapes SM organizing activities.
While our interviews provide information about actions and the justifications of those actions, observing social media data revealed actual patterns of communication and behavior over time, so that we could explain how activists relied on and anticipated social media algorithmic operations to accomplish their tasks.

Data collection steps

Tunisian SM organizations were chosen as paradigmatic cases, that is, carefully selected examples extracted from a larger phenomenon (Tracy, 2013). With respect to digital activism specifically, Tunisia was chosen as a geographical site of investigation because it is representative of a country context in which social media have a high penetration rate (Rane and Salem, 2012). Social media use was central to SM work in setting in motion the Tunisian “Jasmine” or “Internet” revolution, which took place in 2011 against crony privatization and the dictatorial practices of the authoritarian leader Zine el-Abidine Ben Ali. Studies show that both social media and traditional face-to-face coordination afforded the capacity to mobilize street protests long before the mass mobilization that was crucial to Tunisia’s successful revolution (Lowrance, 2016).

We selected a purposeful sample of two paradigmatic cases, Kappa and Omega (pseudonyms), which have comparable modi operandi. One is an SM organization with more experience of using social media for collective action (Kappa, 3 years), and the other is at the initial stage of using such technologies (Omega, 9 months). This enabled us to obtain a diversity of parameters for the purpose of comparison (Tracy, 2013). Omega is an SM organization that works to promote open government and democracy in Tunisian institutions. Omega members use social media for daily work (to create advocacy strategies, attract supporters for anti-corruption campaigns, recruit volunteers, etc.). Kappa is an advocacy SM organization that works to defend the fundamental right of freedom of access to information by offering citizens the means to stay updated about the actions of their elected representatives. It thus repositions citizens at the core of political action. In doing so, Kappa members rely on social media for some organizational tasks (e.g., disclosing to the public the activities of Tunisia’s National Constituent Assembly).

The data corpus consists of three elements. The first comprises field notes based on observations from participating in protests held in public places in Tunis, and in meetings and press conferences organized by Omega and Kappa members at their headquarters and at designated locations in Tunis where permanent staff and volunteers met. The field notes were transcribed and amounted to 114 single-spaced pages. The second element consists of 13 semi-structured interviews with Omega members, lasting between 40 and 60 minutes, and seven semi-structured interviews with Kappa members. The interviews were conducted in different locations (headquarters, before or after protests, etc.) and in English with informants who spoke English. In the seven cases where informants spoke only French or Arabic, the interviews were conducted together with a research assistant who provided real-time translation from Arabic or French into English. The interviews with Kappa and Omega members were designed to elicit in-depth responses addressing the different perceptions and influential factors shaping how members used specific technologies; the various practices and strategies of using multiple technologies to accomplish specific tasks; and whether social media algorithms had expected or unexpected outcomes for the intended tasks.

The third element of the data corpus comprises the social media interactions of Kappa and Omega members. The collection of these data was based on identifying critical events (events singled out through field observations and indicated by informants during interviews), for which we analyzed the interactions that affected members’ ability to organize collectively around particular activities (i.e., campaigns, protests, conferences). In the case of Kappa, members used only one official Twitter account and one official Facebook page, both created 3 years prior to the investigation. We collected all tweets disseminated by members of the organization, including the official accounts of the two organizations, as well as tweets by other Twitter users that directly addressed the organization (tweets with an @ sign directed to the organization) and/or used a hashtag related to the organization (e.g., “@Kappa we are back for the plenary this evening. Our mission, we are leading to the end #TnArp”). To collect the data, we used the ExportTweet and Snapbird APIs, through which we gained complete access to the Twitter data of the respective accounts (tweets, retweets, and favorites).
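The inclusion rule described above (tweets by members or official accounts, plus tweets by other users that either @-mention the organization or carry an organization-related hashtag) can be stated compactly as a filter. The Python sketch below is purely illustrative and is not the tooling used in this study: the `Tweet` record, the `include_tweet` function, the account names, and the case-insensitive matching of handles (stored without “@”) and hashtags (stored without “#”) are all hypothetical assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Tweet:
    """Hypothetical minimal tweet record; handles and hashtags stored without '@'/'#'."""
    author: str
    text: str
    mentions: set[str] = field(default_factory=set)
    hashtags: set[str] = field(default_factory=set)


def include_tweet(
    tweet: Tweet,
    member_accounts: set[str],
    org_handle: str,
    org_hashtags: set[str],
) -> bool:
    """Return True if a tweet falls within the (assumed) collection scope."""
    # Assumption: matching is case-insensitive, so normalize everything to lowercase.
    members = {a.lower() for a in member_accounts}
    mentions = {m.lower() for m in tweet.mentions}
    hashtags = {h.lower() for h in tweet.hashtags}
    org_tags = {h.lower() for h in org_hashtags}

    by_member = tweet.author.lower() in members           # members, incl. official account
    addresses_org = org_handle.lower() in mentions        # "@Kappa ..." mentions and replies
    uses_org_hashtag = not hashtags.isdisjoint(org_tags)  # e.g. #TnArp
    # The "and/or" in the criterion is a logical OR over the three conditions.
    return by_member or addresses_org or uses_org_hashtag


# Usage with hypothetical accounts (Kappa is the paper's pseudonym).
members = {"Kappa", "kappa_volunteer1"}
corpus = [
    Tweet("citizen42", "@Kappa we are back for the plenary this evening. #TnArp",
          mentions={"Kappa"}, hashtags={"TnArp"}),
    Tweet("Kappa", "The session opens now"),
    Tweet("someone_else", "Nice weather in Tunis", hashtags={"Tunis"}),
]
selected = [t for t in corpus if include_tweet(t, members, "Kappa", {"TnArp"})]
print(len(selected))  # 2
```

Because the three conditions are joined by a logical OR, a tweet by a non-member that only uses #TnArp would also be retained, which mirrors the “and/or” in the selection criterion; the subsequent narrowing to critical events remained a qualitative judgment.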

Given the limited scope of this paper, we identified critical events based on our informant interviews and selected a total of 875 tweets pertaining to the two critical events in question (i.e., organizing a press conference and live streaming a voting session). Events were singled out when they met the following four criteria: (1) they involved actors interacting through social media use; (2) they were characterized by an identifiable common cause or theme; (3) they unfolded over time; and (4) they involved specific and intended organizing tasks, taking place in physical and/or virtual locations.

In the case of Facebook, we collected all interactions disseminated from Kappa’s official Facebook page (176,997 followers at the time of investigation), as well as posts that used hashtags related to the organizational events in question. Again, based on a qualitative reading of the online conversations and on insights from our interviewees, we selected the Facebook interactions pertaining to those incidents, which amounted to 998 posts. In the case of Meerkat, we were provided with all the streams of the Kappa members, comprising 19 video broadcasts of approximately 5–7 minutes each. In the case of Omega, we followed the same data collection procedures.

We subsequently examined all social media interactions of the 11 permanent Omega employees and selected those relating to critical incidents, which comprised 21,497 tweets, 31,423 Facebook interactions, and nine Meerkat video broadcasts of 3–8 minutes each. To validate our sampling, we searched the respective profiles for interactions that would contradict the emerging concepts and categories derived from the critical incidents identified by our informants (Tracy, 2013). This search yielded no significant contradictory evidence, which supports the validity of our findings.

Coding and data analysis

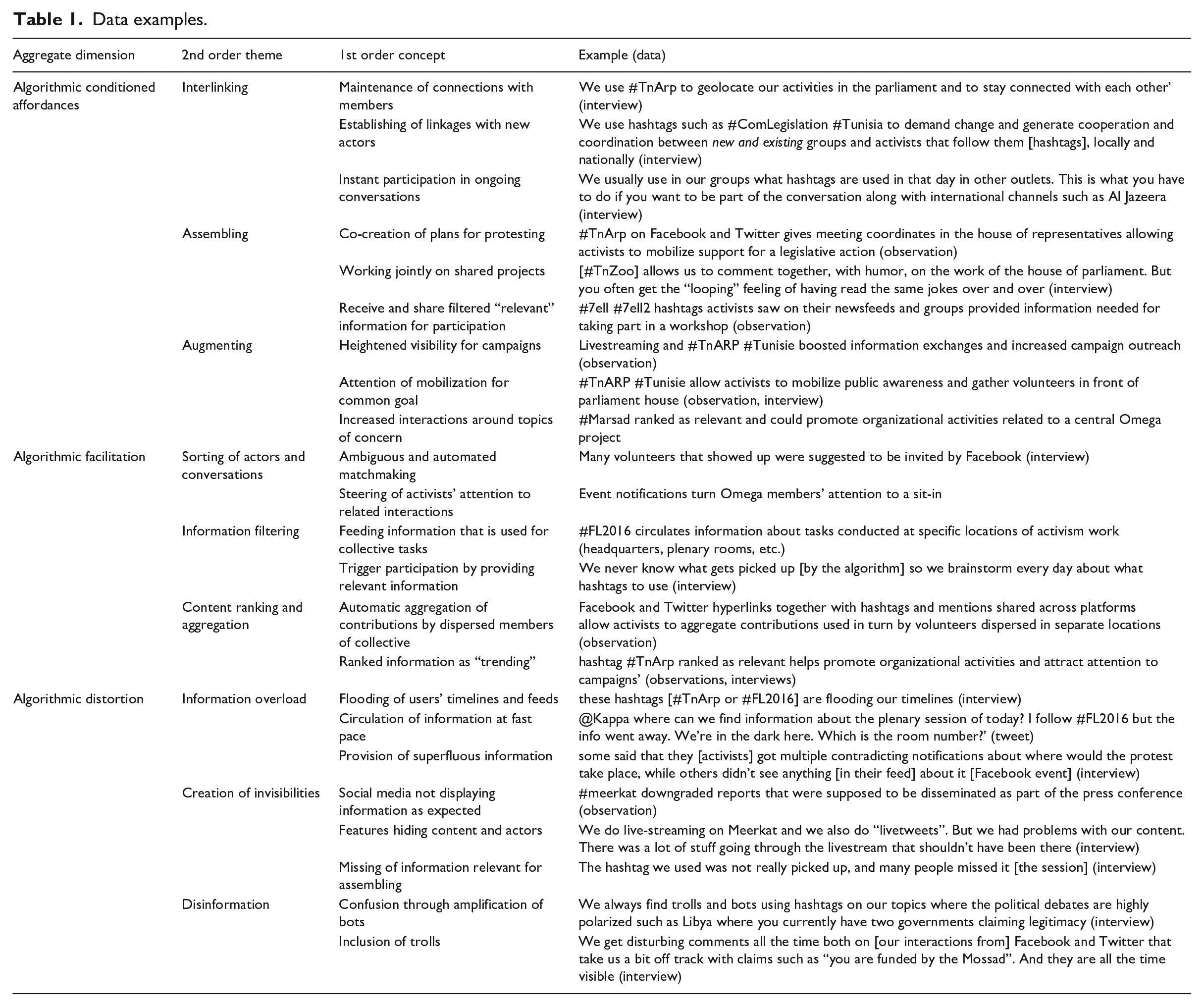

All collected data was analyzed inductively using thematic analysis, a technique commonly employed in grounded theory (Lincoln and Guba, 1985). For the social media data, the unit of analysis was a single post; for the interviews and field notes, each code was applied to one sentence. The first step, open coding, consisted of line-by-line coding to identify initial concepts in the data, which were then grouped into categories. This involved comparing and contrasting data sources while repeatedly reading through transcripts, online interactions, and fieldwork journal entries to identify common codes and recurrences. The emerging codes were indicative of perceptions, actions, and imperatives that frame collective action: for instance, the interview statements “[w]e use #TnArp to geolocate our activities in the parliament” and “to stay connected with each other” were coded as maintenance of connections with members (see Table 1 for more examples).

Data examples.

The open codes served as a basis for sketching situational maps (Clarke, 2005). Situational mapping is an analytic tool suitable for analyzing the materiality of affordances because it takes the “situation” as the unit of analysis. The mapping was guided by questions such as: Who or what is participating, and how is collective action emerging? Which nonhuman elements (algorithm-driven hashtags, direct messages, favorites, etc.) are implicated? In line with the affordances literature, the method explores an organizational situation of an SM by analyzing all of the analytically pertinent human and nonhuman/technological elements of that situation as framed by those in it and by the analyst (Clarke, 2005). To this end, we compared and contrasted situations to show how important elements (e.g., users, hashtags, timelines, etc.) were present in some situations and absent in others.

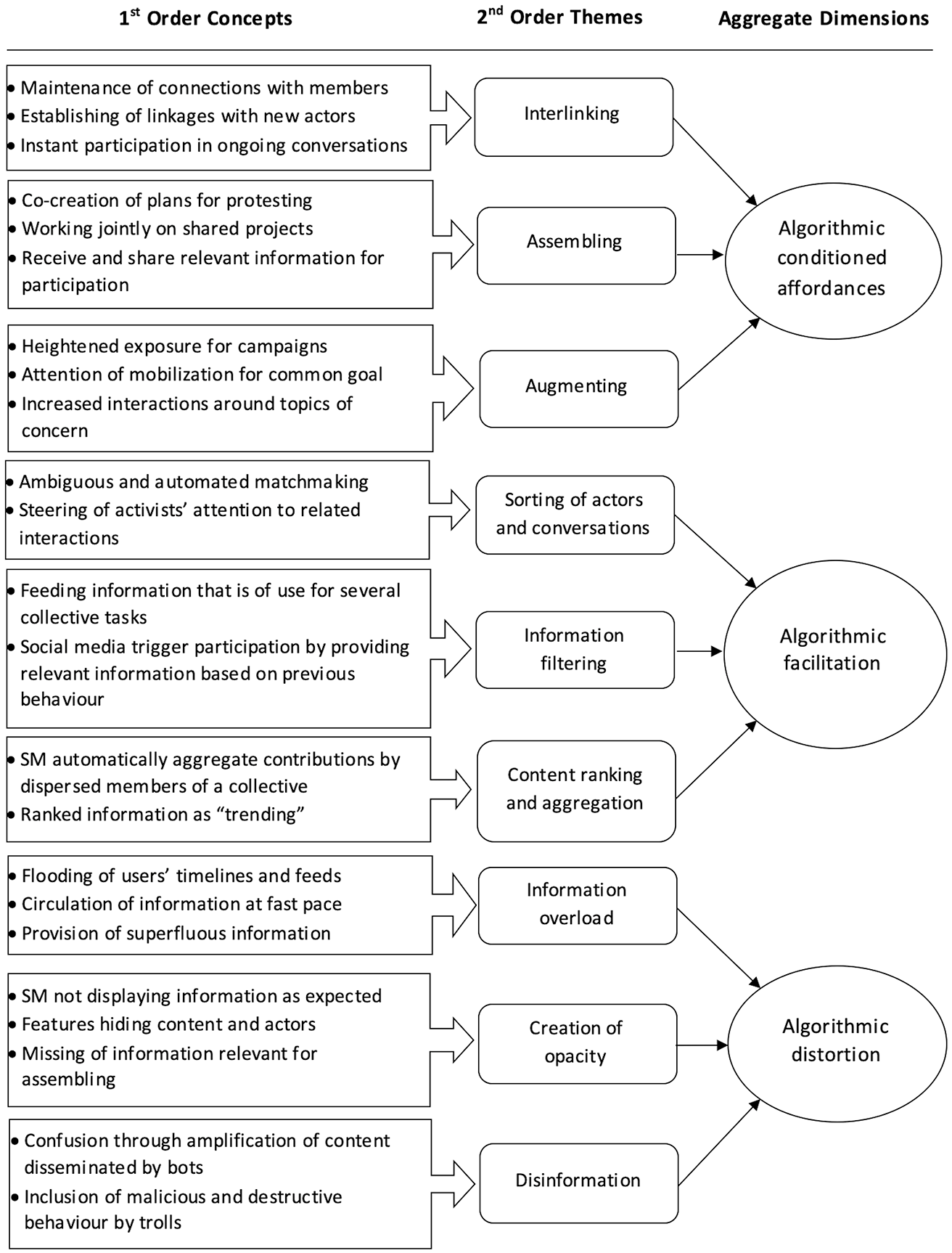

Following the grounded theory approach, in the second round of coding we applied axial and theoretical coding (Tracy, 2013). This involved using preliminary findings at different stages of the research process to cluster first-order concepts into conceptually coherent second-order themes, based on the similarities and differences between codes and the actors or technologies present in those situations (e.g., maintenance of connections with members was assigned to the theme of interlinking). Finally, through theoretical coding, the nine themes were grouped into three aggregate dimensions: algorithmically conditioned affordances, algorithmic facilitation, and algorithmic distortion (see Table 1). This two-step coding process provided a measure of triangulation, as most of the data was evaluated against at least one other data source. A negative case search was conducted to systematically look for evidence contradicting the emerging themes, which increased the internal validity of our findings. Figure 1 provides an overview of our data structure.
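The resulting data structure can be read as a two-level mapping: from first-order concepts to second-order themes (axial coding), and from themes to aggregate dimensions (theoretical coding). The Python sketch below encodes it under a stated assumption: that the nine themes are the nine elements named in the abstract (three affordances, three facilitating operations, three forms of distortion). Only one first-order assignment (maintenance of connections with members, assigned to interlinking) is taken from the text; the dictionary and function names are hypothetical.

```python
# Hypothetical encoding of the data structure; theme labels follow the abstract.
DATA_STRUCTURE: dict[str, list[str]] = {
    "algorithmically conditioned affordances": [
        "interlinking",
        "assembling",
        "augmenting",
    ],
    "algorithmic facilitation": [
        "sorting of interactions and actors",
        "filtering of information",
        "ranking and aggregation of content",
    ],
    "algorithmic distortion": [
        "information overload",
        "opacity",
        "disinformation",
    ],
}

# Axial coding: first-order concept -> second-order theme.
# Only this entry is stated in the text; the remaining entries would come from Table 1.
FIRST_ORDER_TO_THEME: dict[str, str] = {
    "maintenance of connections with members": "interlinking",
}


def aggregate_dimension(theme: str) -> str:
    """Return the aggregate dimension (theoretical coding) that contains a theme."""
    for dimension, themes in DATA_STRUCTURE.items():
        if theme in themes:
            return dimension
    raise KeyError(f"unknown theme: {theme!r}")


def classify(first_order_concept: str) -> tuple[str, str]:
    """Trace a first-order concept up to its theme and aggregate dimension."""
    theme = FIRST_ORDER_TO_THEME[first_order_concept]
    return theme, aggregate_dimension(theme)


print(classify("maintenance of connections with members"))
# ('interlinking', 'algorithmically conditioned affordances')
```

Keeping the two levels as separate mappings reflects the two coding rounds: axial coding fills `FIRST_ORDER_TO_THEME`, while theoretical coding fixes `DATA_STRUCTURE`.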

Data structure.

Findings

Our analysis identifies the implications of commercially-oriented algorithmic operations for collective action. On the one hand, these automated operations manifest as algorithmic facilitation, which pertains to the sorting of interactions and actors, the filtering of information, and the ranking and aggregation of content. As we explain next, algorithms thereby support the actualization of three collective affordances (interlinking, assembling, and augmenting). On the other hand, we show how hidden algorithms manifest as algorithmic distortion (through information overload, the creation of opacity, and disinformation) that constrains the actualization of collective affordances. For analytical clarity, we present our findings by outlining the opportunities and constraints introduced by profit-driven algorithmic operations for each affordance. This neat separation is, however, a simplification; we discuss the complex interrelations after the analysis, as we develop a theory of the algorithmic conditioning of affordance actualization.

Interlinking

Interlinking is a collective affordance through which geographically dispersed activists establish and maintain linkages with each other and engage in collective action related to recruitment and mobilization. Algorithmic operations play an important role here: linkages are not only established by the mutual intentions and actions of activists but are also strongly supported, and occasionally constrained, by algorithms. In the following, we elaborate on how these hidden operations manifest as algorithmic facilitation that supports the collective affordance of interlinking by sorting actors and interactions into groups and conversations. We then analyze how hidden algorithms manifest as algorithmic distortion that constrains the actualization of this affordance through information overload.

Algorithmic facilitation of interlinking

In our analysis, we found that Kappa and Omega activists understood the capacity of algorithms to facilitate the interlinking that allowed them to organize. Our observations also confirmed that establishing and maintaining linkages is supported by algorithms that sort online interactions into particular conversations and assign actors to certain groups, decisions that are made without activists’ clear understanding. In our interviews, various informants described how social media features bring together interactions and actors through hidden and ambiguous matchmaking. At times, activists actively sought out this process; at other times, it happened unexpectedly. For example, social media features such as Facebook events autonomously introduced and suggested new possible collaborators to Omega members (“I recall that at the sit-in [peaceful demonstration] I created [on Facebook] for the two journalists Sofiane Chourabi and Nadhir Guetari that disappeared in Libya, many volunteers that showed up were suggested to be invited by Facebook”, interview, Omega activist). The established linkages, then, enable SM actors not only to mobilize and grow but also to organize as a group dispersed across different locales (assembly rooms, protests, headquarters, etc.). In particular, because social media features maintain linkages by steering activists’ attention to certain interactions and actors, this affordance is key to maintaining SM collectives, which are typically fluid and fleeting.

In the case of Kappa, interlinking was actualized as members used hashtags (e.g., #Tunisia) with the expectation that underlying algorithms would connect them with audiences (e.g., constituents, volunteers, etc.) “on the spot” (activist, interview) for further engagement. Moreover, by being “always online” (ibid.), activists expected to establish connections with other activists through social media features, such as hashtags, timelines, and notifications, which eventually would interlink them to new and established collaborators, and consequently result in ad-hoc coordination, recruitment, and campaigning:

It [social media] has changed how we work. We have a major increase in volunteers who are always online, and our support has spiked since we do a lot of work and reach out on Facebook and Twitter. We use hashtags such as #ComLegislation #Tunisia to demand change and generate cooperation and coordination between new and existing groups and activists that follow them [hashtags], locally and nationally (interview, Kappa manager, emphasis added).

Activists often anticipated the working of algorithms to establish new linkages, and accordingly worked toward being better recognized and picked up by algorithms (curating images, tagging users, cross-sharing posts across different platforms in order to increase likes and retweets, etc.). However, informants were often not entirely sure about the inner workings of these mechanisms. Kappa activists relied on “guess[ing]”, “brainstorming”, and “trial and error” knowledge rather than well-defined organizational processes, as the manager indicates:

We use #TnArp to [geo]locate our activities in the House of Parliament. Like this we give those interested in the accountability of elected representatives the chance to be in real-time control of their votes in the assembly. We basically tweet and post everything the MPs [Members of Parliament] say, pictures, videos, you name it, everything from the moment the parliament session is opening in the morning until the evening when the doors close. . .uhm. . .like showing misbehavior or so on. . . . Still, by doing this [tweeting and posting] every day, we are able to make recommendations on the spot for the assembly that is preparing the bill that day. But it’s a lot of randomness and luck invested. We never know what gets picked up [by the algorithm] so we brainstorm every day about what hashtags to use (interview, Kappa manager, emphasis added).

Similarly, as observed on multiple occasions in Omega’s case, the same #TnArp hashtag provided activists with the ability to interlink with, and subsequently coordinate, members from other communities. In this way, activists imagined and relied on algorithms as relational entities involved in their work, capable of supporting the mobilization of other actors across communities by sorting interactions and actors into groups and conversations. The hashtag and its underlying algorithm brought together different actors, including volunteers, in organizational conversations:

Once #Tunisia and #TnArp started trending that day on Twitter and Facebook, we had to use them everywhere in our [awareness] campaigns although they weren’t “our” hashtags. We usually use in our groups what hashtags are used in that day in other outlets. This is what you have to do if you want to be part of the conversation along with international channels such as Al Jazeera (interview, Omega manager, emphasis added).

Algorithmic distortion of interlinking

Activists often experienced challenges due to operations of algorithmic distortion that generated information overload. Specifically, the sorting of hashtags across newsfeeds and timelines is based on ambiguous and aleatory criteria, which constrain interlinking and thereby undermine collective action. In the case of timelines, the recommendations Facebook algorithms made regarding new connections and ongoing conversations were superfluous. These distorting operations manifested as informational clutter that diluted the effort and commitment the campaign needed: “we are of course thankful to everyone who supports us, even from a distance. But in a lot of cases the new ones [activist connections randomly suggested by Facebook] are just there for ‘likes’. We don’t see the same results” (interview, Omega volunteer).

In the case of hashtags, we observed how activists’ communication was compromised by the overload of information fed to them through hashtags and timelines. As the Omega manager indicates, their expectations were not met because the hashtags did not work the “right way”, which broke down ongoing connections between dispersed actors who rely on each other: “[w]e work hard to get the message out the right way but we often see that it’s not the case. We get feedback from our interns and volunteers such as ‘these hashtags [#TnArp or #FL2016] are flooding our timelines’” (interview, Omega manager). Omega members experienced these constraints, for instance, during the coordination of a sit-in in front of the Tunisian National Theater against law proposal 108 (i.e., a proposal to impose restrictions on women’s clothing in relation to a hijab, which is a scarf covering the face). As the Omega activist explains, Facebook’s automatic data sorting operations undermined her attempt to attract resources by creating new links with activists:

I shared the event publicly a day before with #wearetogether_withthewomen and tagged 21 activists in the post so that they can reach out to their network. But only [a] few activists showed up the second day. I called many of those that were supposed to come but some said that they got multiple contradicting notifications about where the protest would take place, while others didn’t see anything [in their feed] about it [the event] (interview, Omega activist).

Likewise, in the case of Kappa and their community of followers, algorithms sorted “trending” interactions and pushed them to users at a speed that activists experienced as overwhelming. Repeated observations confirmed that sorting data through the trending #TnArp hashtag was disruptive, as linkages between activists broke down. Although they received information, both Kappa and Omega activists were unable to keep up with the useful information flooding their timelines. In sum, our observations and interviews revealed that this automated flooding of timelines ultimately undermined activists’ ability and motivation to take to the streets.

Assembling

Assembling is an affordance through which members collectively, and often virtually, bring together information for various forms of co-creation, ranging from spontaneous protest planning to collaborative work on documents (mission reports, donor reports, etc.) and the coordination of core activities (press releases, debriefing meetings, etc.). Social media features such as hashtags supported assembling by filtering information relevant to collaborators, for instance through trending topics. When five activists contributing to a workshop were asked where they got their event information, they answered that they obtained it from the #7ell and #7ell2 hashtags they saw in their newsfeeds and groups. It is thus the assembling affordance that provides activists with the ability to spread and receive filtered information, and therefore to engage and participate in collective action tasks (e.g., partaking in press events, pursuing funding options, etc.). Next, our analysis shows how assembling relied on equivocal algorithmic operations, even as algorithms also constrained the actualization of this affordance.

Algorithmic facilitation of assembling

Assembling is actualized through features such as tweets, favorites, and live streams that expose activists to content to which they eventually contribute or which prompts them to initiate collective action. Algorithms play an important role in assembling, as they filter what users see in their timeline or newsfeed based on the predicted likelihood that each user wants to see a given item. Our analysis reveals that members of collectives anticipated the filtering operations of these algorithms and actualized assembling in order to act collectively. For instance, Omega activists shared the hashtag #TnZoo on Twitter and Facebook in order to create acts of political satire collectively (“even stronger > ‘According to Selma Elloumi, 11 million French can come to Tunisia this summer’ facebook.com/15973733071611. . #LOL #TnZoo” tweet, Omega manager). While well aware of the data selection happening in the background (the “looping” feeling), the manager pointed to the ability that assembling provides to engage in tasks collectively:

We use #TnZoo to show the confusions, aberrations, unusual events relating to the Assembly, but also to the ruling and political class in general. This hashtag allows us to comment together, with humor, on the work of the House of Parliament. But you often get the “looping” feeling of having read the same jokes over and over [chuckles]. But because it [the hashtag] is popular, it [#TnZoo] also got in touch with a [Facebook] community of volunteers that were following it [#TnZoo] and they [volunteers] showed up at many sit-ins (interview, Omega manager).

In the case of Kappa, activists mobilized support and engagement for a legislative action through the hashtag #TnArp on Facebook and Twitter. When three Kappa activists were interviewed about the implications of social media for their work, they reported how algorithmic operations of the timelines and newsfeeds continuously filter content based on their past actions: “they [timelines and newsfeeds] do help us in terms of knowing who is doing what. But we are in our own ‘bubble’, of course. The more I like Kappa’s posts, the more I see Kappa all over my timeline” (interview, Kappa volunteer). Algorithmic operations thus enable the accomplishment of collective tasks by filtering pieces of information (e.g., certain outcomes of voting bills, selected funding opportunities, etc.).

In another instance, representative of similar situations observed at Omega, algorithms that filtered and corrected the information displayed on different timelines enabled volunteers to mobilize collectively at ad-hoc events. Our observations showed that hashtags such as #FL2016, #GenLeg, and #TnArp allowed activists’ inputs into these conversations to become outputs for other activists, facilitating collective action. Because of such filtering operations, however, activists contributed only to those activities associated with a trending hashtag, as the manager indicates:

We use #FL2016 #GenLeg and #TnArp on both Facebook and Twitter to “signpost” what project we are working on now, so #FL2016 and #GenLeg shows that we are working on the Fiscal Law 2016 that is part of the general legislative package. We also use these hashtags because people [volunteers, citizens, etc.] use them to search, find information and be engaged in the democratic process with their elected representatives. But this is possible only on the few topics we share [. . .]. It is often the case that we get it [the hashtag] wrong and achieve way less support than we really need. That happened with our tweet on the law project 22 which made no impressions (interview, Omega manager).

Algorithmic distortion of assembling

While activists expected to contribute to and participate in activities through social media use, algorithmic filtering operations introduced constraints in the form of opacity. In Kappa’s case, algorithms removed important but non-trending information from activists’ newsfeeds. These operations did not match activists’ expectations of sharing unencumbered flows of information, as the manager indicates:

Yesterday something interesting happened. I usually start tweeting when the chair [of the House of Representatives] declares the session open. But some MPs were having a debate there before the meeting started and they came to me and asked me to tweet what they were saying. They said they want to have it on the record, on Twitter, so that the people see what their position is. But the hashtag we used was not really picked up, and many people missed it [the session] because it was gone (interview, Kappa manager).

In this situation, as in many others, assembling was constrained because filtering operations made information invisible, causing activists to “miss it”. Thus, algorithms sabotaged collaborative efforts that rely on contributions by various members, such as observing voting procedures that required activists to be present in different plenary rooms simultaneously: “@Kappa where can we find information about the plenary session of today? I follow #FL2016 but the info went away. We’re in the dark here. Which is the room number?” (tweet, Kappa manager). In the case of Omega, activists experienced confusion when they attempted to participate and contribute from different physical and virtual locations through the live broadcast of a press conference: “@Omega ‘|LIVE NOW| Press Conference Now: Our symposium of civil society #meerkat Watch Omega |LIVE NOW| from Tunis mrk.tv’” (Omega Facebook/Meerkat³ post). However, the distorting operations of #meerkat downgraded reports that were supposed to be disseminated as part of the press conference while prioritizing advertising content by other users. Due to the resulting opacity, Omega managers could not communicate and collaborate with volunteers based on a consistent stream of information as initially envisaged, as the manager indicates:

We managed to gather more than 20 national and more than five international organizations for our symposium, where we gave a preliminary report of the problems encountered in our fight for human rights vis-à-vis the authorities’ fight against terrorism. In order to get more activists to show up we write a reply to our tweets where we tag their usernames like cc @user and a hashtag. This goes across all our [social] media accounts as we’ve put [the accounts] all together. We do live streaming on Meerkat and we also do “livetweets”. But we had problems with our content (interview, Omega manager, emphasis added).

Augmenting

Augmenting is an affordance through which collectives gain attention for a campaign, protest, or crowdfunding by amplifying its outreach and exposure. Augmenting is equally supported by algorithms that automatically rank and aggregate content as it is constrained by distorting operations that intensify disinformation.

Algorithmic facilitation of augmenting

Augmenting is activated by features such as hashtags, comments, the @ mention function, and likes/favorites. The extended outreach given by algorithms to posts is reinforced by metadata such as views, favorites, likes, and comment counts: the more users like, favorite, view, or comment on certain content, the more algorithms share that piece of content. In this respect, augmenting is supported by hidden operations through which content is algorithmically ranked (i.e., some content receives more prominence than other content) and aggregated (i.e., content is added to other prominent content around a similar topic). As observed across different occasions, Kappa members actualized this affordance by tweeting and posting collectively using the hashtag #TnArp. The hashtag was visible in other users’ newsfeeds because it was “ranked up”, and it could thus promote organizational activities and attract attention to campaigns in the House of Representatives. In Omega’s case, the same #TnArp along with other hashtags provided activists with the ability to inform community members about organizational tasks. For instance, through posting and (re)tweeting “Livestreaming de la #ReformAdmin bws.la/htiH4Vy #TnARP #MarsadMajles #LiveMajles #Tunisie”, activists were able to boost the information exchanges about their campaign and increase their outreach (obtaining 2,055 views). Observations showed that, based on the ranking operations of the hashtags involved, Omega members together with other actors in their communities (six volunteers) were, at that moment, able to act collectively and gather at the parliament house.

Algorithmic distortion of augmenting

The actualization of augmenting was not always supported by algorithms. On the contrary, distorting operations left activists describing social media as a broken system. This is particularly the case because algorithms also ranked and aggregated the content disseminated by engineered bots (short for software robots), which are not entirely identifiable until they engage in deceptive behavior (spreading misinformation, misguiding activists about meeting locations, promoting fake information in reports, etc.). Such malicious actors create confusion by inciting antagonistic and deceitful behavior. As observed, algorithms amplify these exchanges through trending hashtags, thereby sabotaging the work of activists. Such behavior (colloquially known as “trolling”) is typically understood to be deceptive, destructive, or disruptive (Ackerman, 2011). In the case of Kappa, bots used hashtags to sidetrack activists’ original intent of geolocating their work. As a result of trolling, hashtags could “stand for something else” and “work differently” (Kappa manager), allowing other actors to alter them and use them in their interactions:

We can’t really predict how hashtags work. . . sometimes they are of big help and sometimes not and everyone gets confused. Now and then, the hashtags stand for something else and work differently across platforms than what you’d expect. But you can’t buy or control a hashtag because social media is not a media. It’s a world and it makes you as much as you make it (interview, Kappa manager).

Kappa activists used features such as events and live streaming to mobilize volunteers and broadcast a press conference, where the augmenting of the event depended on other activists sharing it: “@Kappa |LIVE NOW| #meerkat #Décentralisation. The questions/remarks will follow here at the end of our press conf - live: https://mrk.tv/” (Kappa, Twitter/Meerkat mashup message). This practice acted as an automated action alert that enabled other activists to participate and share. Constraints emerged because the algorithmic operations of the hashtag ranked and aggregated content from intervening trolls, i.e., users whose interactions were obscure and nonsensical. Trolls were able to inject content that was routinely bizarre and ran contrary to the visible flow of exchanges; as algorithms amplified this content, it altered the conversations surrounding the hashtags. Hence, disruption occurred because conversations were taken “off track”, as the manager indicates:

We get disturbing comments all the time both on [our interactions from] Facebook and Twitter that take us a bit off track with claims such as “you are funded by the Mossad”. And they are all the time visible, I mean everyone can see the comments. And it’s getting so absurd that no matter what I answer they say the same, so I should reply “yes the Mossad funds me” [laughs] (interview, Kappa manager, emphasis added).

Similarly, in the case of Omega, activists’ efforts to generate collective action through the expected outreach were disrupted by the polarized conversations of trolls, as the Omega manager suggests:

We always find trolls and bots using hashtags on our topics where the political debates are highly polarized, such as Libya where you currently have two governments claiming legitimacy. Twitter as a platform makes visible all sorts of conversations and trolls are part of what it means to have open conversations (interview, Omega manager).

The algorithmic conditioning of collective affordance actualization

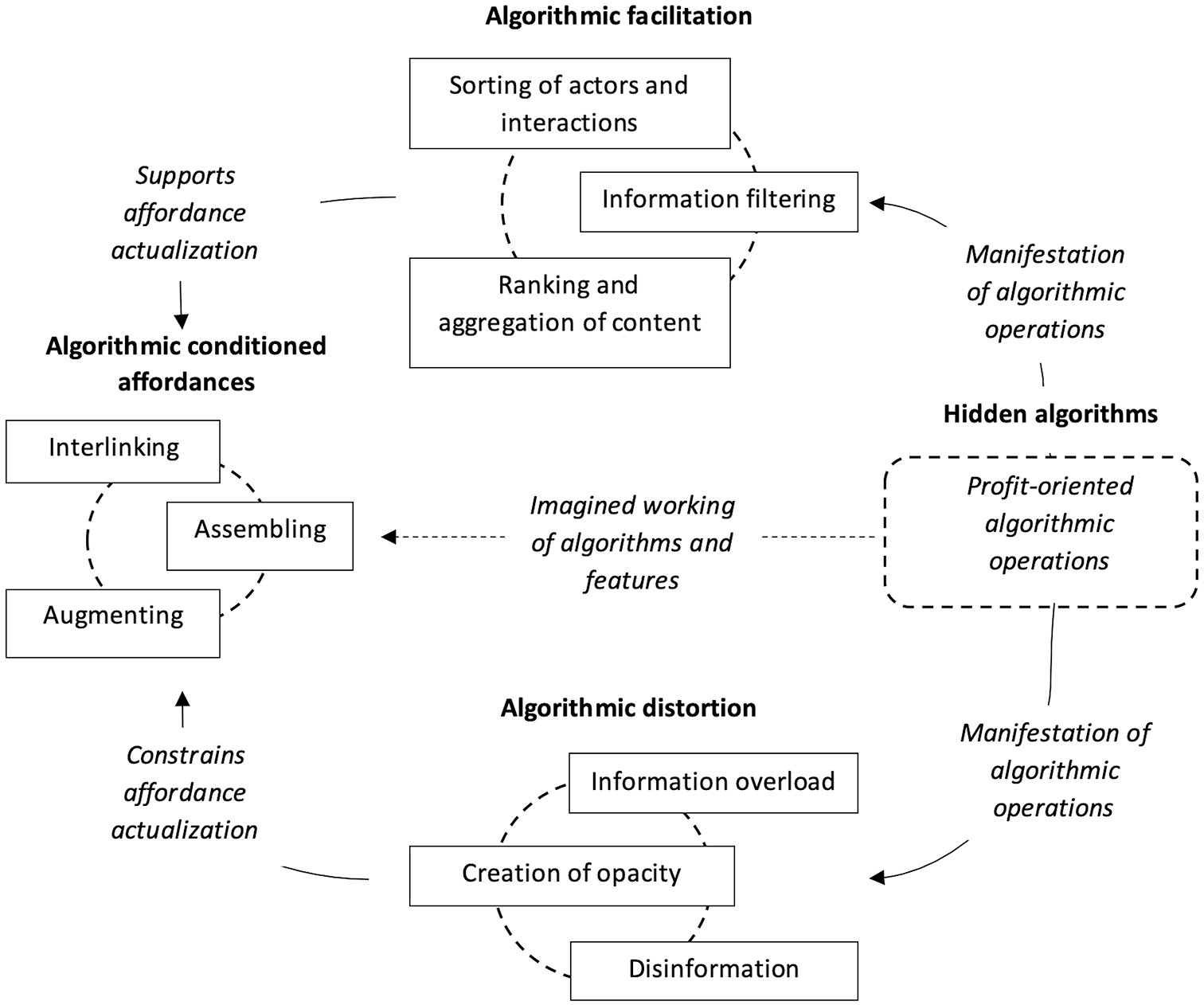

The actualization of the social media affordances identified in this study relies as much on the expectations and technology use of interdependent actors and the materiality of technological features as it does on hidden algorithmic operations. Designed with the aim of maximizing profit, such hidden algorithms manifest in various ways that support or constrain the actualization of affordances. Based on our analysis, we present a model for the algorithmic conditioning of affordance actualization. Accordingly, we define these collective affordances as algorithmically conditioned affordances: that is, collective action possibilities that emerge from the interplay between users, technological features, and hidden algorithmic operations.

On the one hand, algorithms support the actualization of interlinking, assembling, and augmenting. This happens as algorithms sort data and orchestrate relationships that support activists’ use of a feature, for example a timeline, in order to establish and maintain linkages to other actors. Similarly, through information filtering that is based on previously defined relevance, algorithms provide activists with curated information on their newsfeed and timelines, which enables them to assemble information collectively and engage in various forms of co-creation. Finally, by ranking and aggregating content through underlying social media features such as hashtags, algorithms support the augmenting of actors’ interaction for campaigning and task completion. We subsume these manifestations of profit-oriented algorithmic operations under the term algorithmic facilitation, which encompasses the support of affordance actualization through hidden algorithms. Importantly, algorithmic facilitation does not describe the actual algorithmic operations, which remain unnoticeable to users. Rather, algorithmic facilitation is concerned with the manifestation of these hidden operations and is an important mechanism supporting the actualization of collective affordances.

On the other hand, our findings provide evidence of how hidden algorithms introduce constraints on collective action by operating in ways other than those anticipated by actors. These constraints illustrate that algorithms, rather than supporting the goals of SM organizations, can work against actors’ intentions and goals. We subsume these constraining manifestations under the term algorithmic distortion, which encompasses the constraints of affordance actualization through hidden algorithms. As commercially-oriented algorithms are often geared toward heightened engagement, they interfere with actors’ affordance actualization by correcting what is considered relevant and thereby flooding timelines and newsfeeds with high volumes of information at increased speed. These operations can break down connections between actors or hinder the identification of potential new collaborators. Furthermore, algorithms filter competing data exchanges, boosting polarized information, hiding relevant content, and thereby constraining the ability of actors to pursue a collective goal. Algorithms also support the emergence of trolls and automated bots by ranking and aggregating their content, consequently facilitating disinformation.

Figure 2 depicts how hidden algorithms support and constrain the actualization of imagined collective affordances.

Figure 2. Algorithmic conditioning of collective affordance actualization.

As mentioned before, the analytical distinction made in this model is a simplification of more complex relationships and entanglements. Further, it has already become evident in our analysis that the three identified affordances are interrelated. The collective affordance of assembling strongly builds on the affordance of interlinking, as it is through establishing linkages that activists can engage in meaningful co-creation. Similarly, the augmenting of campaigns is related to interlinking, as it is through the augmenting of conversations, for example a trending hashtag, that actors notice other activists and eventually establish linkages for further engagement.

In the same vein, the manifestations of algorithmic operations are interrelated: the sorting of actors and interactions relates to the filtering of information, as relevant information for participation and co-creation is often shared among algorithmically selected groups. Similarly, the ranking and aggregation of content goes hand in hand with the sorting of interactions, as both are actualized, for example, through the use of the hashtag feature. Finally, information overload, creation of opacity, and disinformation via trolls are manifestations of algorithmic distortion that can reinforce and build on each other. For example, as disinformation and content by trolls are included in algorithmic operations, and as timelines are flooded with information, these manifestations create opacity for relevant information.

With these interrelationships in mind, it is important to acknowledge that algorithms work in conjunction with human actors, who approach social media features with certain expectations. As our analysis has shown, actors’ expectations may rest on misinterpretations and misconceptions of how algorithms support the actualization of affordances (illustrated by the center arrow in Figure 2). Interestingly, in certain instances algorithmically conditioned affordances may be actualized as expected, yet in others they may not be. Algorithms and their opaque rules of sorting, filtering, and ranking play an important role in either outcome, as does the contextual use of social media features by interdependent actors.

Indeed, the use of technology by interdependent SM actors is an important factor that may either compromise or support the actualization of an imagined affordance. For example, if not enough actors use a hashtag in similar ways with the expectation of augmenting a campaign, the hashtag may never gain traction and trend. Such downgrading happens because the underlying algorithm, following a commercial logic focused on content discovery for advertising and data collection purposes, does not recognize the hashtag as important enough. As a result, the algorithm does not provide the necessary traction for the hashtag, which in turn lessens the chance of other actors recognizing and subsequently contributing to the campaign. Thus, in a constant feedback loop, activists, the contextual use of social media features, and profit-oriented algorithms act and react to each other.

Concluding discussion

This paper investigated how social media algorithms, designed for commercial purposes, impact the ability of SM organizations to engage in collective action. Without accessing the exact operations of hidden algorithms, we focused on their manifestations as experienced by our informants and as observed through an ethnographic study. Our analysis shows how algorithmic operations support activists in the actualization of affordances through algorithmic facilitation, yet also introduce constraints through algorithmic distortion. The theoretical implications of these findings are twofold. Firstly, we further develop the conceptual vehicle of affordances by introducing algorithmically conditioned affordances that rely substantially on algorithms. Furthermore, we provide an analytical model that specifies how algorithms facilitate the actualization of these affordances and introduce complexity into the relationship between actors’ expectations and practices and the materiality of technological features. Secondly, we contribute to SM studies by providing empirical evidence and an extended understanding of how profit-oriented algorithms constrain the collective action of SM organizations.

With regard to the literature on affordances, we advance this body of literature by identifying three affordances at a collective level (i.e., interlinking, assembling, and augmenting). We show how their actualization relies as much on the expectations and practices of interdependent actors and the materiality of technological features as it does on hidden algorithmic operations moderating this relationship. In contrast to scholarship on affordances that typically assumes a rational and informed user (e.g., Treem and Leonardi, 2012), we account for how algorithmic operations leave users in the dark about their actual working. Accordingly, our analytical model is sensitive to the agency of algorithms and the limitations that these algorithms introduce. Our analysis has shown how misconceptions about the actual workings of algorithms constrain the actualization of collective affordances. This actualization, however, is not only at the mercy of presumably powerful algorithms, but also depends on the concerted social media use of interdependent actors (Vaast et al., 2017). Agency can, thus, be understood as distributed among members of collectives and technological features, as well as hidden algorithms. By referring to the agency of algorithms we are not suggesting that algorithms should be represented as living entities, but rather are acknowledging that algorithms reconfigure the relationship between users and their tools (Borch and Lange, 2016).

This “dance of agency” (Pickering, 2008: 6), which became evident throughout our analysis, is in line with previous literature, such as on algorithmic trading (e.g., Lange et al., 2019), arguing for an understanding of a rather symmetric relationship between human actors and algorithms, in which these actors influence and shape each other. Indeed, as our findings show, algorithms adapt to collective actions and may support, for example, the strength of a campaign, if interdependent actors make similar use of a hashtag. Hence, as algorithms become increasingly relevant for the process of collective action in social media (Milan, 2015b), our findings show that the dark side of the relationship between algorithms, social media features, and organizing lies not only in their opaqueness, but also in the commercial orientation of algorithms encoded by their designers (see Fuchs, 2014).

In this regard, we contribute to the social movement literature by extending the critique of the pervasive, celebratory approach to social media and technological affordances (Sæbø et al., 2020). Our article joins existing studies that account for the role of social media platforms’ commercial interests, which are ingrained into social media technologies (e.g., Fuchs, 2014). Our analysis confirms recent claims of SM scholars, who have argued that the commercial interests of social media corporations often stand at odds with SM goals and organizing (e.g., Coretti and Pica, 2018; Uldam and Kaun, 2019). Indeed, our study deepens previous observations by Van Dijck and Poell (2013), who have argued that algorithmic acceleration and personalization of data streams hinder processes of organizing in favor of ephemeral immediacy as opposed to continuity. In this vein, our study provides new evidence of how the profit-orientation of social media corporations enforces commercial objectives against the interests of their users, a conflict which can also be found in other industries and forms of organizations (e.g., Just and Latzer, 2017; Manley and Williams, 2019). Nevertheless, our study provides a balanced picture, as we find that the profit-orientation of algorithmic operations can also work in favor of SM organizing (see also Poell and Van Dijck, 2015).

Finally, we found that imagining how algorithmic technology works also has practical implications for activism, as it encourages participatory subjectivity (see Bucher, 2012), whereby activists recognize that gestures such as commenting on a friend’s photo are key criteria for promoting content. SM organizing is thereby transformed, as activists are increasingly driven by a framing imagery of choosing “shareable” and “clickable” campaigns in order to attract international attention (Moore-Gilbert, 2017). This behavior often contrasts with a bona fide ideal of activism: choosing to advocate for the cause that is needed the most. We therefore argue that social media might create a distorted view of the realities of activism, since hidden algorithms are responsible for the circulation of content that forms the basis of collective action. These world views are taken for granted by communities of activists, who are often unaware of exploitation by social media-owning corporations and their profit maximization interests (what has been termed surveillance capitalism, Zuboff, 2015). The outcomes of these algorithmic operations show that social media have become a byproduct of technology that promotes misconceptions of reality (Carr, 2014). Social media use can thus promote forms of false consciousness (see also van Zoonen, 2017), as members of SM organizations appropriate information from social media and depend on these curated pieces of data to organize.²

This study is limited by its focus on two SM organizations and the short time span of data collection. These limitations act as a springboard for future research: theoretically, the identified affordances may provide a way to think through the matter of technology and may encourage future scholarship to address the agency of algorithms and its impact on organizing. For prospective research across disciplines, our study offers a framework for studying the gap between users’ experience of social media and the features or qualities of the technology, and it helps to identify the potential “dark” sides of these technologies. Ethnographic work involving algorithms is of course challenging (Lange et al., 2019). When studying the operations and outcomes of algorithms, researchers may not only have to give careful consideration to the micro level of collective action but also employ methods, such as infrastructure ethnography, to uncover the socio-technical components of social media-driven collective action. As such, our study can be seen as initial empirical work that may be complemented with further studies.

Footnotes

Authors’ note

Michael Etter is now also affiliated with Copenhagen Business School, Denmark.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was kindly funded by Carlsberg grant no. CF14-0082.