Abstract

Artificial intelligence can provide organizations with prescriptive options for decision-making. Based on the notions of algorithmic decision-making and user involvement, we assess the role of artificial intelligence in workplace decisions. Using a case study on the implementation and use of cognitive software in a telecommunications company, we address how actors can become distanced from or remain involved in decision-making. Our results show that humans are increasingly detached from decision-making spatially as well as temporally and in terms of rational distancing and cognitive displacement. At the same time, they remain attached to decision-making because of accidental and infrastructural proximity, imposed engagement, and affective adhesion. When human and algorithmic intelligence become unbalanced in regard to humans’ attachment to decision-making, three performative effects result: deferred decisions, workarounds, and (data) manipulations. We conceptualize the user interface that presents decisions to humans as a mediator between human detachment and attachment and, thus, between algorithmic and human decisions. These findings contrast with the traditional view of automated media as diminishing user involvement and have useful implications for research on artificial intelligence and algorithmic decision-making in organizations.

Introduction

Algorithmic decision-making refers to the automation of decisions and is considered a form of remote control and standardization of routinized workplace decisions (Möhlmann and Zalmanson, 2017). Algorithmically managed workers become interpreters of condensed results of complex algorithmic analyses presented via simplistic user interfaces so that they can make good decisions (Constantiou and Kallinikos, 2015; LaValle et al., 2011; Sharma et al., 2014). Algorithms have gained importance in decision-making (Clark et al., 2007), although decision-making is rooted in the assumption that decisions rely on human competencies like knowledge and human experience (Newell and Marabelli, 2015; Shollo and Galliers, 2016). The function of algorithms in decision-making has moved from descriptive to predictive modes of data analytics and to the prescription of best options for actions in operational and strategic domains (Van der Vlist, 2016). In this context, learning algorithms, often referred to as artificial intelligence (AI) or ‘cognitive systems’ (Helbing, 2019), are finding their way into workplace decisions. Researchers often consider AI in workplace decisions as an ‘automation of data analysis’ (Helbing et al., 2019: 74), where algorithms based on machine learning improve decision-making processes over time without human intervention, resulting in humans losing control of their performance (Günther et al., 2017).

When users are confronted with opaque algorithmic decisions, the boundary between human and algorithmic intelligence blurs (e.g. Günther et al., 2017), leading to a debate about which of the two should have control over decision-making and which should have power over the other (Cramer and Fuller, 2008). The separate view of humans and AI in these considerations is based on the idea that AI is a reflection of human intelligence (Goffey, 2008). Scholars in domains like information systems and organization studies (e.g. Shaikh and Vaast, 2016) as well as media studies (Gillespie, 2014) have reviewed this separated perspective to integrate human intelligence and algorithmic intelligence into work practices (e.g. Günther et al., 2017; Lichtenthaler, 2018). From this perspective, humans and algorithms form an assemblage in which the components of their differing origins and natures are put together and relationships between them are established (DeLanda, 2016). In this context, scholars have asked for research on the ‘active capacity of [algorithms] to shape or manipulate the things or people with which they come into contact’ (e.g. Fuller and Goffey, 2012: 5). While this understanding suggests that algorithms are taking over decisions in organizations, the actual role of AI in workplace decisions and in the ongoing involvement of humans in decision-making lacks clarity. Therefore, our study examines how users deal with algorithmic decision-making, how the user interface influences their ongoing involvement in decision-making, and how AI affects their decisions.

Building on extant work in media studies, our empirical case study of the implementation of cognitive software in a large telecommunications provider’s call center contributes to research on AI and algorithmic decision-making in organizations. Using a media theoretical notion of user involvement in algorithmic decisions, we go beyond the opposing views of attachment and detachment (Latour, 1999) to show that AI has a dual role in workplace decisions when users interact with it (Orlikowski, 2007) and must master both their detachment from and their attachment to decisions. Thus, the interface that presents algorithmic decisions to humans mediates both low and high levels of human involvement in decision-making. More concretely, our study sheds light on how human interaction with AI functionalities detaches them from decision-making in terms of spatial and temporal separation, rational distancing as well as cognitive displacement of humans from decisions. Despite algorithmic influence, humans remain attached to decision-making because of accidental and infrastructural proximity, imposed engagement that is due to their unique access to context information, and their affections and emotions. A media theoretical approach helps to explain the performance effects of algorithmic work through our empirical data that suggest negative consequences when humans’ attachment to decisions in the context of algorithmic decision-making is too strong. These negative consequences manifest in deferred decisions, workarounds, and (data) manipulations.

The remainder of this article is structured as follows. First, we describe the study’s research frame, which is determined by the current knowledge in algorithmic decision-making and the notion of user involvement in media theory. Then we present our research design, outlining the case study’s empirical setting and our methodological approach to data collection and analysis. Next, we illustrate the findings of our empirical case study on the implementation of cognitive software in a call center. Based on these findings, we present our framework for the role of AI in workplace decisions and discuss our contribution to the literatures of digital media and organization studies. Finally, we describe how our framework can inform future research.

Algorithmic decision-making and human involvement

The basic conceptualization of a decision is that there is an ‘individual decision maker facing a choice involving uncertainty about outcomes’ (Peterson, 2017: 9). Putting the individual decision-maker at the center of decision-making is the most intuitive approach to studying algorithmic decision-making in organizations (e.g. Davenport, 2013), where the individual is the recipient of automated decisions or recommendations. For instance, operative decision-makers who provide IT-enabled services (Chae, 2014), like bank assistants who decide on loan approvals or call center agents who decide what sales offers to make, are confronted with predetermined decision options via simplified dashboards and user interfaces. In the background, self-learning algorithms work on large data sets and ‘generate responses, classifications, or dynamic predictions that resemble those of a knowledge worker’ (Faraj et al., 2018: 62) based on statistical measures, computations, and machine learning for routine decisions.

In general, the core function of business intelligence software is the algorithmic-based monitoring, measurement, and management of business performance (Clark et al., 2007). Algorithmic decision-making refers to the application of computational algorithms to solve a well-defined problem a priori. However, with the application of AI and learning algorithms and with the decision-making procedures advancing and changing automatically over time, the algorithmic output’s accountability is questionable (Günther et al., 2017; Van der Vlist, 2016). In addition, predictive models that use both historical and real-time data to forecast the likelihood of specific outcomes have increasingly replaced the historical data that provided comprehensive descriptive information as a basis for human decision-making. In fact, algorithmic decision-making is advancing toward prescriptive data analytics by providing options for the best decisions (Van der Vlist, 2016).

In light of these technological advancements, algorithmic decision-making is increasingly viewed critically in contemporary organization research (Davenport, 2013; Introna, 2016; Newell and Marabelli, 2015; Zarsky, 2016). Research has suggested that humans who are confronted with routine decisions are distanced from decision-making when algorithms and AI are incorporated into the decision-making process, since they lose track of the data sources, collection methods, data analysis, and information processing that serve as the immediate basis for knowledge and decision-making (Shollo and Kautz, 2010). In contrast to human decision-making, which is usually based on experience, intuition, and context (Klein, 2017), algorithmic decisions are based on statistical models, so they can present options for decisions faster, more objectively, and more accurately (Chen et al., 2018; Jung et al., 2018). Despite the involvement of system designers and programmers, as Gillespie (2014: 170, referring to Winner, 1977) highlighted, the core principle of algorithms is that they ‘are designed to be–and prized for being–functionally automatic, to act when triggered without any regular human intervention or oversight’.

Zarsky (2016) saw this automation and opacity as central properties of algorithms, which distance humans from the decision. Although automation is positively connoted in terms of efficiency, the resulting simplicity and reduced uncertainty in decision-making (Orlikowski and Scott, 2014) may also have devastating effects when algorithms focus on narrow problems without taking contextual factors into account (Marabelli et al., 2018). Among these potentially negative consequences are reduced information because of oversimplification (Orlikowski and Scott, 2014), inaccuracy (McFarland and McFarland, 2015), loss of information privacy (Belanger et al., 2002), a loss of fairness (Zarsky, 2016), increasing control and surveillance (Anteby and Chan, 2018), and other ethical issues (e.g. Ananny, 2016).

Given these issues, current research in algorithmic decision-making has seen the characteristics of algorithms and their negative consequences for individual decision-making as problematic. As Introna (2016) stated, we lose track of the active capacity of algorithms since their work is ‘subsumed in daily practices’ (p. 17). For this reason, a growing number of studies have considered AI-supported work practices as assemblages of human and algorithmic intelligence that either synthesize and combine their unique competencies and enhance performance through a division of labor, or pit human intelligence against algorithmic intelligence, where one rules out the other during the decision-making process (e.g. Günther et al., 2017; Lichtenthaler, 2018).

In such an assemblage of human and algorithmic intelligence, the user takes on an active part in what the medium becomes (Brunton and Coleman, 2014; Gitelman, 2006; Oudshoorn and Pinch, 2003). User involvement has been referred to primarily as the intensity with which the user is cognitively and emotionally included in producing the medium’s content (Greenwood, 2008; Krugman, 1971; McLuhan, 1994). In this context, Borch and Lange (2017) analyzed users’ management of their emotional ‘attachment’ when the decision-maker in high-frequency trading changed from human traders to algorithms. Attachment refers to ‘what we hold to and what holds us’ (Hennion, 2017b: 71) and, thus, ‘our ways of both making and being made by the relationships and the objects that hold us together’ (Hennion, 2017a: 118). The notion of attachment is frequently contrasted with its opposite, detachment. Whether one is attached to or detached from an object depends on whether one is bound to or free from it in terms of one’s ability to act (Latour, 1999). Similarly, Seaver (2017: 310 f.), referring to Hennion (2015) and to Callon’s (1984) idea of interessement, or how ‘various entities become tied up in each other’ (Seaver, 2017: 310), emphasized ‘the relatedness of attachment and mediation’.

If algorithmic decision-making is an assemblage of human and algorithmic intelligence, the user interface serves as a mediator of attachments and detachments, as it presents the algorithmic decision to the human. Different types of media and their user interfaces require more or less user involvement (Cramer and Fuller, 2008). While interfaces are all boundaries that ‘link software and hardware to each other and to their human users or other sources of data’ (Cramer and Fuller, 2008: 149), the central interface that is involved on the work-practice level is the user interface between the software and the final user, presented in the shape of symbols and buttons on the computer screen. Central to this conceptualization of the user interface as mediator is that it requires human competencies and cognition to make sense of the content (Sharma et al., 2014). Thus, the user interface, shaped by programmers (Downey, 2014), provides and presents ‘automated decisions’, thereby mediating how actors are attached to or detached from decisions.

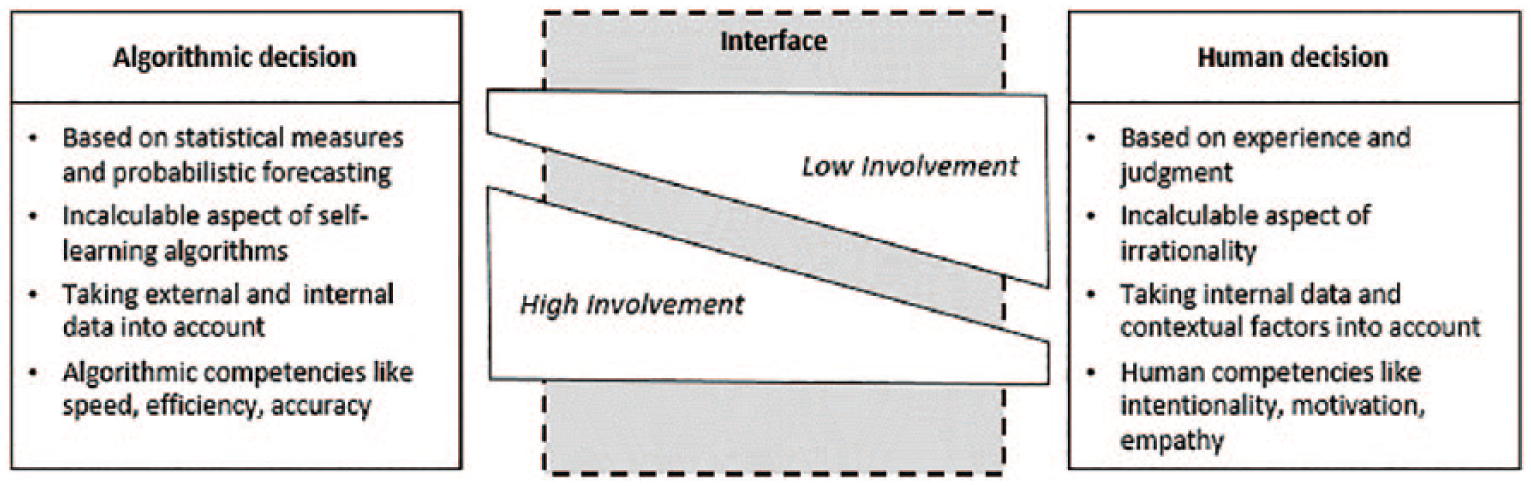

However, Livingstone (2014: 241) emphasized the necessity of considering ‘the activities of users in context’ such that the roles of the surrounding media, including organizational factors like hierarchies, goals, and power relationships, are considered in the decision process (Mackenzie, 2006, 2013; Weich and Othmer, 2016). This extended view of users and their work in context also resonates in Hennion’s (2015) description of how attachments are supported by networks of humans and objects (Seaver, 2017). Therefore, and similar to the notion of distributed decision systems (Schneeweiss, 2012), we refer to algorithmic decision-making as a form of joint problem-solving and an assemblage of humans and algorithms that the user interface mediates. Based on this, we argue that, to assess the role of AI in workplace decisions, one must see algorithmic decision-making as an assemblage of human actors and algorithms mediated by the user interface (Figure 1). This mediation evokes the balance or imbalance between low user involvement, which means human detachment from the decision, and high user involvement, which means human attachment to the decision.

Figure 1. The interface as mediator of human involvement in algorithmic decision-making.

Methods

Empirical setting: introduction of cognitive software in a call center

Studying human involvement in algorithmic decision-making on a practice level (Günther et al., 2017) requires in-depth qualitative data that allow the exploration of ‘technologies-in-use’ (e.g. Gherardi, 2012: 79). We assess the introduction of software and affective user interactions (Bracha and Brown, 2012) using a case-study research design that can help to explain complex scenarios (Eisenhardt, 1989; Yin, 2013). Fieldwork is commonly used as a qualitative approach to assessing technologies-in-use and material arrangements (Gherardi, 2012) and to observing the use of software ‘in [a] natural setting’ (Eisenhardt and Bourgeois, 1988).

This article presents research that was part of a broader study on algorithms’ capacity to act. Part of that study investigated the use of decision-support software in the call center of a large cable operator that has about 1500 employees and about 1.3 million customers. In 2005, the firm was acquired by an internationally operating group and, during the course of several group-wide software standardization processes, a new cognitive system, IBM Interact, was launched in the call center. Call centers provide a relevant empirical context, as the agents’ traditional tasks have considerable potential for automation, so they are among the first empirical settings in which the implementation of AI and actors’ reactions to it can be analyzed. IBM Interact is a cognitive system that operates in a prescriptive way (Van der Vlist, 2016) by analyzing historical and real-time customer data over time and providing its users, the call center agents in this case, with predetermined and increasingly well-suited sales options for the customer who is on the line. During the phone conversation with the customer, the call center agent has to react and make decisions on what offer is the best for the company. Our analysis focuses on the company’s side of the sales negotiation when the call center agents’ decisions were newly supported by AI.

Before IBM Interact’s implementation, call center agents assessed a customer’s needs using a ‘manual demand analysis’ that was conducted by asking the customer for information or manually gathering the information from various internal databases. The customer demand analysis was usually geared to selling a product, and the procedure followed a strict protocol on which the agents were well trained during their onboarding phase and afterward. While the customer demand analysis and customer interactions were scripted to make as many sales as possible, IBM Interact was based on predictive modeling that sought to make not only the highest-priced but also the most suitable offer to the customer. This predictive modeling considered internal and external data like the customer’s purchasing and surfing behavior, age, and residency and the marketing and sales departments’ requirements to predict the likelihood of customer churn and promote customized service and individualized offers. Thus, with the implementation of this software, the quantity-focused manual demand analysis was succeeded by a quality-focused, algorithm-based demand analysis. With the demand analysis automated, the decision about what to offer the customer was presented to the agent via the user interface of IBM Interact, a simple display that gave the agent little information about how the decision was made, as the agent was not involved in the data collection and analysis.

Our analysis considered the interplay between human and algorithmic intelligence and how human involvement in decisions played out when the agents’ decisions were succeeded by choices presented via IBM Interact’s user interface.

Data collection and analysis

Assessing attachment and detachment requires ‘social inquiries made on sensitive matters and things that count for people’ (Hennion, 2017a: 118). To get a sense of the call center workers’ attachment to decisions, we applied the commonly used qualitative methods of conducting interviews, making observations, and doing documentary research. We conducted 28 semi-structured interviews with employees from the company (managers, team leaders, supervisors, trainers, and agents), 15 of which focused exclusively on users’ interactions with IBM Interact in the call center division. The interview partners also included members of the IT, marketing intelligence, sales, customer care, and training departments who were involved in the design and implementation of the software. In particular, we asked questions about how the software afforded their individual goals; how their roles, practices, and decisions changed; and how they simultaneously used other technologies. In addition to the interview material, we collected observational data on technologies-in-use (Gherardi, 2012) in the form of written memos while listening to live calls in the call center and observing how agents interacted with the IBM Interact software. We also collected supplementary data such as intranet entries, emails on IBM Interact, and visual data in the call center, which we found necessary to understand the roles of the media and the material surroundings.

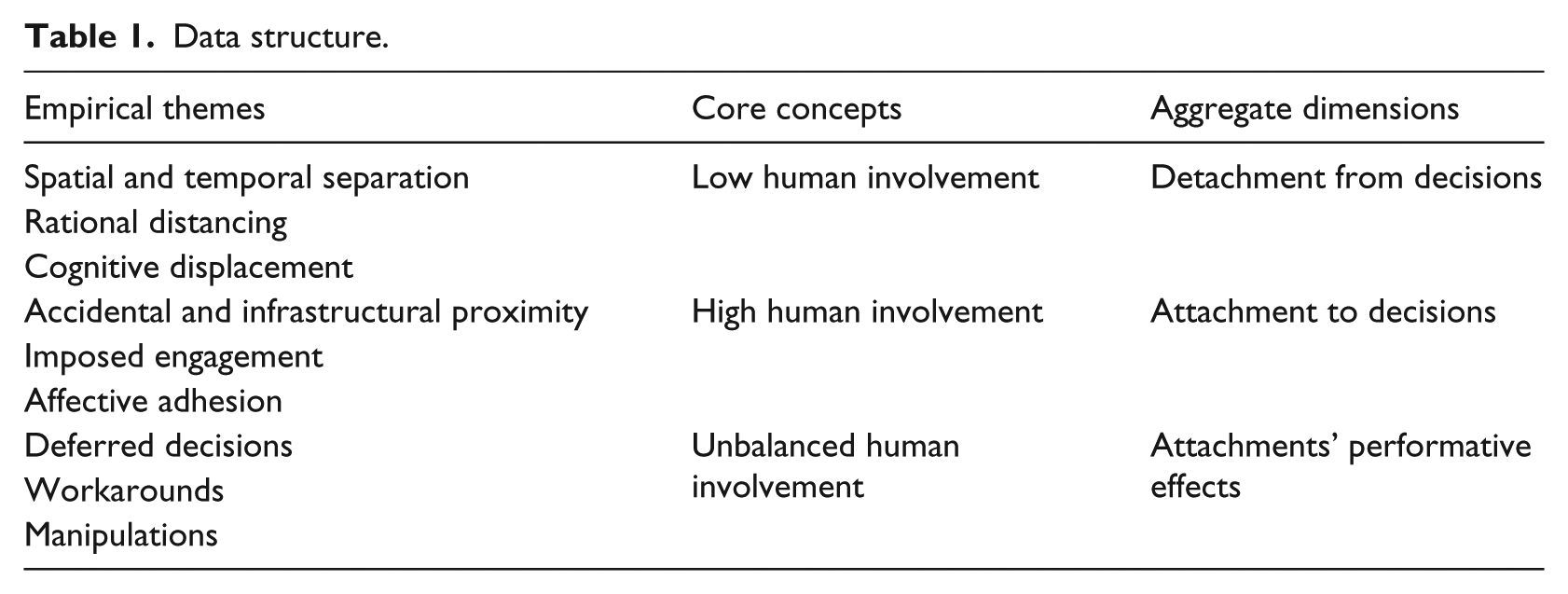

We analyzed the interview, observation, and documentary data we collected using NVivo software and a theory-elaboration approach, which is in line with case study research (Eisenhardt, 1989). We derived our theory from the data and introduced findings from the literature iteratively during the research process (Eisenhardt, 1989). After the first few interviews, as concepts emerged, we refined our qualitative coding system, modified a few questions in the interview guide, added others (e.g. questions on the workplace atmosphere), and focused on systems the interviewees mentioned to broaden and deepen our conceptualizations. In particular, we elaborated our coding scheme as we noticed that the agents switched between (1) conducting the manual demand analysis, where they remained highly involved with the decision, and (2) adhering to the algorithmic decision, where they withdrew their involvement with the decision. In doing so, we included the subtle constituents of detachment and low user involvement as well as attachment and high user involvement. We also refined our codes on the actual effects of the new assemblage on decision-making. Table 1 presents an overview of our data structure.

Table 1. Data structure.

Findings

This section assesses the involvement of human actors in algorithmic decision-making and discusses the decision situations the call center agents faced.

Detachment of the call center agent from the offer decision

In the call center, algorithmic decisions are presented to the agents via IBM Interact’s interface. The interface’s mediation between the algorithm and the agent in the form of the ‘best’ decision leads one to ask whether the decision is already made before the agent participates. We address this question in the next section, where we describe how the agents coped with the decisions presented. However, first, we elaborate on the agents’ detachment from the decisions, that is, how the agents became committed to the algorithmic decisions. This detachment from decision-making found expression in spatial, temporal, rational, and cognitive dimensions.

Spatial and temporal separation

IBM Interact accesses external customer data and performs predictive modeling. External actors feed data into this database in various ways and at various times. For instance, the marketing department wanted to drive high-priced products; a customer started to play video games intensely and his or her real-time clicks on the webpage were fed back as data from the customer’s home to IBM Interact (Head of Marketing Intelligence Department, Interview 21); internal databases included the customers’ historical purchasing behavior. Thus, call center agents were confronted with prescriptive algorithmic decisions made from data they would not otherwise have had access to. These functionalities meshed with the fast-response environment in the inbound call center, which takes customers’ calls about administrative or technical problems, so agents do not have time to prepare but must serve the customer’s concern while interacting with him or her to sell a product. Thus, with the software’s decision based on internal and historical data and external and foresight data to which the agents do not have access, agents are spatially and temporally separated from the basis of the decision:

At that moment, you need to be able to identify what is now the next best action that we can offer this customer, given everything we know about this customer and his past purchase behavior. […] Before [IBM Interact], the agents were drilled to try to make a sale […] in every single call, never mind what the customer’s situation is, so there are customers who ordered a product last Thursday and they are calling in today asking, ‘Hey, where is my stuff?’ [and], of course, there is no way that you have the chance to sell something to this customer, because he is still waiting […] for his first product. Now, with [IBM Interact], we are able to filter those customer groups out. […] Then, based on that segmentation and all the customer insights we have of this customer, we can offer the agents what we call the next best action. (Head of Marketing Intelligence Department, Interview 21)

Rational distancing

The agent has at least two roles that are expressed in the human-material arrangement of the selling situation: the agent’s interaction with IBM Interact’s user interface, which presents the best offers for the next customer in line, and the interaction with the customer through the phone headset that links the agent to the customer, who is elsewhere. The elements of this triangle arrangement among IBM Interact’s interface, the agent, and the customer jointly build the situation in which decisions concerning what the agent offers and what the customer buys are made based on specific goals and rationalities. Therefore, the interface is only the surface of the rational-instrumental goals (i.e. performance targets) of management and the marketing and sales department. IBM Interact is configured to allow the organization to make a shift toward customer service and to change the agents’ focus to ‘quality versus quantity’ and ‘more value than volume’ (Manager, Interview 25), but the agents were still focused on the number of sales as the most important performance indicator, a view that a manager described as ‘conditioned’ (Manager, Interview 25). The third perspective was that of the customer, who had his or her own reasons to act in the situation. In this arrangement of different and even conflicting rationalities, the agent became rationally distanced, not least because the data that built the basis for the algorithmic decision was unavailable to the agent. Hence, we refer to rational distancing, where the human decision differs from what the interface presents and the opaque algorithmic decision instruction and the human’s decision logic diverge.

Cognitive displacement

Agents adhered to the algorithmic decisions if they perceived a special cognitive sophistication or if IBM Interact provided better service in terms of efficiency, accuracy, and speed. A manager highlighted the premade decisions as a help because ‘all those linked logics that are saved in [IBM Interact] are an enormous relief for the employee’ (Interview 25). The benefit of this sophistication especially applied to low performers among the agents, who could improve their skills with the selling arguments and information on the IBM Interact interface that appeared next to every offer. One of these agents praised the cognitive advantage gained with the help of IBM Interact ‘because it is much more spontaneous for me to approach the customer when I see [its interface] because you already have specific information’ (Interview 23).

The marketing and sales departments also integrated incentives into the system for high performers. For instance, IBM Interact incorporates special loyalty offers with high selling potential that agents could not make otherwise. As the Sales and Customer Operations Release Manager explained, ‘If a seller is clever, he will at least open it and look to see “can I give the customer this offer?”’ (Interview 27). In this case, cognitive displacement was a form of detachment that led to the need for the unique algorithmic competencies that allowed the system to make these special offers.

In sum, our findings show three dimensions of human detachment from algorithmic decisions: First, IBM Interact had exclusive access to external and forecast data and to the constant stream of data produced by customer decisions that were translated back to the system through customers’ clicks. Lacking these competencies, the agent became spatially and temporally separated from the decision. Second, the interface sometimes presented simplistic results of complex decisions made in a black box that did not coincide with the agent’s own decision logic. These conflicting rationalities led to agents’ rational distancing since they could not track the algorithmic decision logic they had to accept. Third, the user interface prescribed the ‘next best action’ to the agents, vesting them with artificial competencies like efficiency and accuracy that are badly needed in the context of a call center but that cognitively displaced the agents from decisions.

Attachments of the call center agent to the offer decision

While call center agents were increasingly distanced from decision-making, they also simultaneously became highly attached to the decisions. The next sections describe these attachments and how agents intervened to refuse the algorithmic decision. This attachment sometimes happened by accident, was determined by the technical infrastructure, or materialized in the form of supervisors’ commands or as affections and emotions.

Accidental and infrastructural proximity

When the agents were first introduced to IBM Interact, they had no use for it. The user interface’s design did not support the work routines that had been determined by their individual procedures when they used manual demand analysis, so they often (unintentionally) failed to feed data, or even fed incorrect data, into the system. A manager who worked at the intersection between the IT department and sales and customer relations described the outcome of the poorly recorded agent–customer interactions that initially biased the database on which IBM Interact based its decisions: ‘[The interaction] is only recorded when the agent presses “save.” Well, if you forget that, of course, nothing is recorded’ (Sales and Customer Operations Release Manager, Interview 27). Agents were also attached to their own decision-making because of infrastructural and material conditions, the most obvious of which was the agent’s role as a user of multiple media. For example, the agent filtered and checked IBM Interact’s user interface when he or she was talking to the customer, as biases in the database resulted in IBM Interact’s proposing offers that the agent understood as not applicable when he or she engaged more fully with the customer and received more information on which to base a decision. IBM Interact’s biases might be based on no data (e.g. when the customer gets service from another telecommunications provider) or flawed data (e.g. when customers make accidental clicks on the webpage or someone else uses the hardware), in which case the interface might present five Internet offers even though the customer said at the beginning of the conversation that he had an Internet contract with another company (Interview 21). In such cases, the agent filtered out all Internet-related offers from IBM Interact. Therefore, accidental and infrastructural proximity (e.g. produced through the telephone) was another reason for agents to intervene in algorithmic decisions when the interface’s design did not support their routine work habits or when other surrounding media stimulated sensual and cognitive engagement.

Imposed engagement

In contrast to attachment situations, where agents intervened in the decision process on their own, in some situations agents were commanded to be attached to decisions. While managers and developers of IBM Interact urged the agents to adhere strictly to the software’s proposals (Head of Marketing Intelligence, Interview 21; Manager, Interview 25), team leaders allowed or even instructed the agents to ignore IBM Interact when they felt that their own analysis was better. In one case, a team leader met his team in a private cubicle, where he instructed them to ignore IBM Interact entirely so that the team could meet its sales goals (memo from an informal conversation with a Call Center Agent). We refer to this form of forced attachment that is due to organizational conditions as imposed engagement.

Affective adhesion

Agents were also attached to decisions by their emotions. Agents had been constantly informed of their individual sales numbers and key performance indicators (KPIs) via emails, monitors, rankings, and tournaments (Team Leader, Interview 7) and had been ‘conditioned over years’ (Manager, Interview 25) to focus on their sales in drill sessions and private briefings (Call Center Agents, Interviews 14, 15, 17). In contrast to this omnipresent selling focus, the aim of IBM Interact was to provide the agent with the best solution for the customer on the line, including the option not to make an offer at all. Especially when IBM Interact advised the agent not to make an offer, such as when it would have been disadvantageous to their individual or team goals, the agent’s emotions sometimes came into play. As a supervisor described it:

In the beginning, we said you must not make an offer if [IBM Interact] tells you not to make an offer, but the problem is that selling is a goal for them. They must sell. Well, that means, if they can record a sale in […], our selling-tool, […] then it is a sale for them. The employees won’t let a tool ruin their sales. I understand that. It is really hard. They are getting pushed. […] They’re under pressure to make sales, and if a tool says you must not sell, but you could make a sale, then the employee, of course, makes the sale […] because he wants to reach his goals. He wants to have his commission. He just wants to be good. (Interview 15)

Affective attachment is also closely linked to a managerial narrative about IBM Interact as a tool that will take over the agents’ work. Using the same narrative, agents refused to use IBM Interact and switched to the manual customer-demand analysis because they wanted to do the selling themselves as a matter of ‘professional ethos’ (Manager, Interview 25). These empirical examples show that both the need and the wish to use unique human competencies were forms of affective adhesion where agents held on to their decisions.

Attachments’ performative effects

Our findings suggest an unbalanced involvement of the agents in decisions, which, conscious or not, led to negative impacts on their performance. One consequence was that agents simply could not make decisions or deferred them, but agents also worked around IBM Interact and manipulated how it functioned.

Deferred decisions

In some cases, the conflicting rationalities among the goals of IBM Interact, customers, managers and team leaders, and agents brought the agents into conflicting situations that they could not resolve themselves, so they either refused to take calls or deferred decisions during the phone conversation instead of asking the team leader what to do. One supervisor, who was a key user of IBM Interact, reported that the agents could ‘wait a bit longer to accept the call’ or ‘switch to AUX’ (Supervisor, Interview 15), a management control system that counts the time agents are logged off the coordination system during, for instance, meetings or coaching sessions.

Workarounds

When agents had access to the tools they had used previously for the manual demand-analysis process, they often switched to these tools. For instance, one agent complied with IBM Interact if its decision coincided with the agent’s personal goal of making three sales per day. If she had already sold three items and IBM Interact still demanded a sale, the agent delayed accepting the next call or used an older system that was still accessible. However, when she decided to work around IBM Interact, she still documented her decision by clicking the ‘save’ button, thus feeding information about the customer interaction to IBM Interact (Call Center Agent, Interview 22).

Manipulation

Although the rigidity of the work environment was frequently compared to the military, the tools that were available to the agents gave them opportunities to cheat and manipulate the system: ‘These are all ways you can cheat as an employee. But apart from that, the numbers are right’ (Supervisor, Interview 15).

One agent described her own sales goals as conflicting with IBM Interact’s instruction not to sell a product:

Those from the project feel hoaxed because they work and work and sweat because IBM Interact doesn’t work how it should work. I manipulate the numbers because I say, ‘Yes, okay, but what [IBM Interact] says doesn’t interest me’. I manipulate the numbers, but if it is obvious to me that this customer will call in the next two or three months because their sixteen-year-old daughter says she wants to see this program, I would miss my sale otherwise. (Interview 16)

Clearly, individual call center agents were involved in and committed to decision-making in spite of the system’s algorithmic propositions. We found their attachment to decisions took three forms: accidental and infrastructural proximity, a form of materiality-driven attachment; imposed engagement, where agents were forced to make decisions in reaction to organizational conditions; and affective adhesion, which was driven primarily by emotions. Our findings also reveal the performative effects of a lack of balance between human and algorithmic involvement, where human attachment resulted in deferred decisions, workarounds, and manipulation.

Discussion

Organization and media studies’ research on the work of algorithms has captured algorithmic decision-making from a critical perspective as an approach to automatic management that dictates decisions to humans (Beer, 2017; Gillespie, 2012, 2014; Introna, 2016; Newell and Marabelli, 2015). However, questions concerning how workers deal with algorithmic decision-making, how the user interface influences their ongoing human involvement, and how workplace decisions are affected by AI, have remained unanswered. Our study addresses these questions by examining the ongoing human involvement in AI-supported workplace decisions. Taking a media-theoretical perspective, we analyze human decision-makers’ confrontations with the essence of the algorithmic decision via the user interface and show that AI has a dual role in workplace decisions by creating both human attachment to and detachment from decisions, which result from both high and low levels of human involvement in interactions with IBM Interact’s user interface. On one hand, the user interface evokes low human involvement, increasingly detaching the human decision-maker from decision-making in the form of spatial and temporal separation, rational distancing, and cognitive displacement. On the other hand, the decisions presented via the interface sometimes also lead to a high degree of human involvement. Therefore, our findings suggest a simultaneous attachment to and detachment from the decisions brought about by the functionalities of AI. We identified accidental and infrastructural proximity to decisions as a materiality-driven form of attachment and revealed the significance of contextual factors that only humans can take into account. Imposed engagement, the second form, is attachment forced by organizational conditions. The third form of human attachment to decisions, affective adhesion, is emotion-driven. Finally, our findings suggest that a lack of balanced involvement of humans in decisions has negative performative effects because of deferred decisions, workarounds, and manipulations.

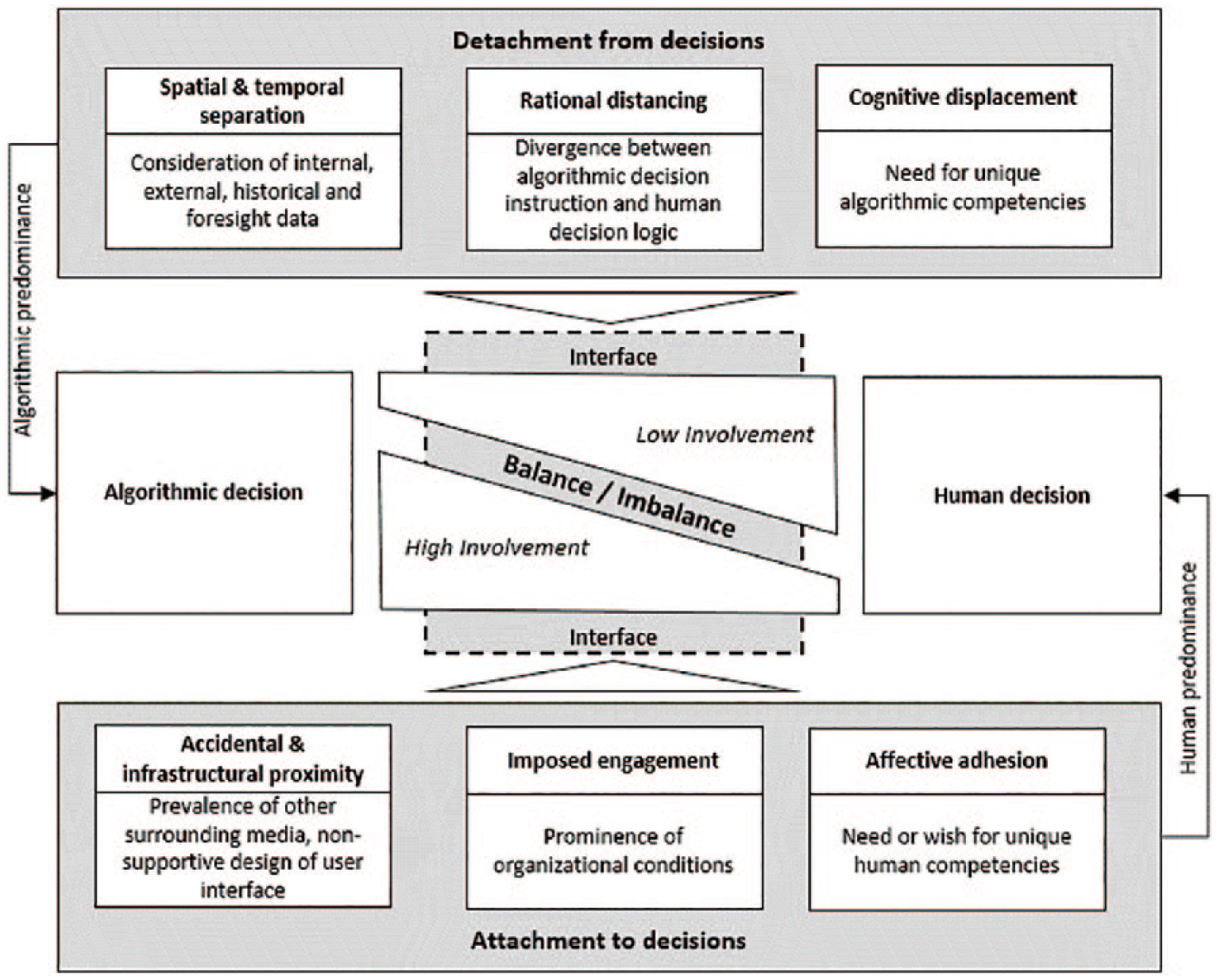

Figure 2 presents a framework that summarizes this dual role of AI in workplace decisions, a role that simultaneously evokes humans’ detachment from and attachment to decisions, which the users master situationally.

Figure 2. The role of the user interface in algorithmic-intelligence-supported workplace decisions.

The framework contributes to the human-intelligence versus algorithmic-intelligence debate on the workplace level (Günther et al., 2017). Discourse on algorithmic decision-making frequently points to superior software characteristics like machine learning and to problems like algorithmic opacity (e.g. Zarsky, 2016). However, we argue that, depending on the user interface, AI detaches humans from decisions while at the same time encouraging their attachment.

The framework speaks for a dual view of the superior side and the ‘dark side’ (e.g. Marabelli et al., 2018) of AI functionalities, which are black boxes for most users. First, our findings suggest that, with the application of algorithmic decision-making on the worker level, the software’s functionalities, such as its access to external data and predictive modeling, can generate spatial and temporal separation, rational distancing, and cognitive displacement as forms of humans’ detachment from its decisions, increasing humans’ reliance on algorithmic decisions (e.g. Fuller and Goffey, 2012). This argument is supported in previous research that has suggested that algorithms gain control over humans’ decisions and actions (Barocas et al., 2013), albeit on a theoretical level (e.g. Abbasi et al., 2016). The extant research, then, gains empirical grounding with our study. Second, our analysis shows that the software’s advanced functionalities and its opaque decision logic can also lead to users’ strong engagement with the decision, whether because of infrastructural proximity, imposed engagement, or affective adhesion. Our findings suggest that, although the software lacks the ability to consider contextual factors, its superior functionalities, such as access to external data and predictive modeling, attach humans to decisions. Thus, humans decide differently than algorithms do since humans cannot reconstruct the algorithms’ decision logic. We find that this unbalanced human involvement results in negative outcomes like deferred decisions, workarounds, and manipulations. Thus, we go beyond studies on algorithmic decision-making that treat the neglect of contextual factors as a major hazard (e.g. Marabelli et al., 2018) and reveal the ambivalent character of algorithms that is determined by both human autonomy and human dependency.

Research has often pointed to the potential of automated data analysis and decisions (Helbing, 2019), treating algorithmic decisions as disclosed units (e.g. Dewett and Jones, 2001) that facilitate remote managerial control (Bailey et al., 2012). In contrast, we conceptualize algorithmic decision-making as an assemblage (DeLanda, 2016) of algorithms and humans (Lichtenthaler, 2018). In doing so, we first refine the frequent classification of users as either being free (detached) or bound (attached) to specific technologies (Latour, 1999) and show that a user interface that presents algorithmic decisions provokes human detachments as well as attachments. In contrast to previous analyses of the subject in algorithmic decision-making (Borche and Lange, 2017) and attachments, we set the interface as a mediator in users’ involvement.

Therefore, we address Latour’s (1999) call for researchers to look beyond the mere opposition of attachment and detachment by finding more subtle distinctions in their components. We respond to this call by identifying the constitutive elements of attachments and detachments. The sophisticated software functionalities result in low user involvement in terms of spatial and temporal separation, rational distancing, and cognitive displacement. At the same time, the interface’s simplicity evokes individuals’ active involvement and scrutiny when it activates human senses and discernment related to decisions, especially if other media (e.g. the telephone) in the environment or prevalent organizational conditions provoke human attachment to themselves. In addition, the findings that relate to other media in the environment suggest that, in response to the introduction of a new technology to be used by employees (e.g. Orlikowski, 2000), the extant media can determine the extent to which the new technology plays a role in decisions.

Our findings that are based on the idea of assemblage also extend existing scholarly work on managerial control. Research in this field has analyzed unintended uses or ‘drift’ of new management systems (e.g. Ciborra and Hanseth, 2000), although it neglects the role of technologies as carriers of rationality (Bader and Kaiser, 2017; Cabantous and Gond, 2011). In pursuing our notion of rational distancing, we examine how the user interface builds a site on which decision-makers with different and sometimes opposing decision rationalities meet. Hence, similar to existing work that has debated the various knowledge groups involved in using analytics (Pachidi et al., 2014), we add to the drift debate information regarding how unintended uses unfold if decision logics do not coincide and/or are not transparent to the human decision-maker.

Our third contribution addresses the literature on the relationship of algorithms to human practices, those practices’ reaction to the algorithms (Gillespie, 2014), and the algorithms’ relevance to social outcomes (Beer, 2017; Newell and Marabelli, 2015). The results of our empirical investigation emphasize how learning algorithms depend on humans, so our study joins those of researchers (e.g. Suchman, 2014) who have highlighted the simultaneous making and using of data and have agreed that the human-algorithmic interaction is more important in understanding the implications of algorithms at work than are algorithms on their own (Couldry, 2012; Lowrie, 2017; Orlikowski, 2007; Wegner, 1997). We enlarge this perspective through our empirical data and shed light on how human-algorithmic interactions in the workplace affect organizational processes (Yoo et al., 2012). Specifically, we find that users face the challenge of situationally mastering their detachment and attachment, as an unbalanced involvement of humans in algorithmic decision-making results in deferred decisions, workarounds, and manipulations. Earlier work on human manipulations as a response to algorithms has highlighted how actors overly engage with algorithms by orienting their actions to their suppositions about the algorithms’ computations to make themselves more recognizable (e.g. Gillespie, 2017). In contrast to this over-engagement and identification with algorithms, we find that, if humans are under-engaged with algorithms—that is, if they are disproportionally detached from the algorithmic decision—flawed data may be fed back to the database, causing negative outcomes since the algorithms were working with biased data (e.g. Cunha and Carugati, 2018).

Conclusion

Our framework informs future research on algorithmic decision-making and the use of AI in automated data analysis in organizations. These findings are based on the notion of user involvement, which media theory has traditionally connoted as having to do with psychology (e.g. Krugman, 1971). Our framework on the role of AI is built primarily on the constitutive elements of humans’ detachment from and attachments to decision-making that users face in mastering their involvement in decision-making. In doing so, we answer the question concerning the distance between humans and their decision authority.

However, we also raise the issue of the ontological distance between or convergence of humans and AI. In this context, our framework works as a theoretical starting point for researchers who seek to address the ontological categorization of human versus algorithmic intelligence (Westerhoff, 2005). Similarly, our findings on rational distancing, which are grounded in the divergence between algorithmic decisions and human decision logic, suggest that future research in organization studies consider in more detail the role of epistemologies in algorithmic decision-making (Abbasi et al., 2016; Pachidi et al., 2014). On the workplace level, considering the user interface as the site of clashing rationalities could be a fruitful approach to explaining decision-making in organizations (Bader and Kaiser, 2017).

Our findings are based on a single case study on the implementation of a cognitive system in a call center, an empirical setting that is at the forefront of the development of algorithmic decisions since workplace decisions in this setting are usually structured and can be easily automated. Future research may use the framework as theoretical guidance not only in contexts in which human-algorithmic decision assemblages are part of operational, routine, and daily workplace decisions, such as high-frequency trading (Borche and Lange, 2017) and loan processing (Chae, 2014), but also in more complex and unstructured decision-making domains, such as people analytics (Boudreau and Cascio, 2017; Loebbecke and Picot, 2015; Markus, 2017).

Finally, our study shows the potential of drawing on the rich corpus of media studies in analyzing the empirical phenomenon of digitization in organizations. Envisioning user interfaces that prescribe decision options as mediators that involve humans more or less in decisions allowed us to delve into the organizational context of the use of digital media and to elaborate our framework on the dual detaching and attaching roles of AI’s functionalities in workplace decisions. This approach points to the role media studies can play in explaining organizational phenomena during the process of digitization and beyond. Without taking into account the interfaces that present algorithmic decisions as mediators of specific forms of humans’ detachments and attachments, and without emphasizing this dual role of AI in workplace decisions, future research might neglect the ongoing human involvement and go too far in the debate about algorithms as autonomous elements.