Abstract

Citations tell us something about the patterns of knowledge exchange around a particular journal. To examine this network, one can use Thomson Reuters’ Journal Citation Reports database and derive three basic citation relationships: the number of articles citing (and thus influenced by) a journal, the number of articles cited by (and thus influencing) a journal, and the self-citation rate of the journal. In this article we examine these patterns for 27 selected journals in organization and management. We propose an influence metric consisting of a cited and citing pair, and apply it to develop a taxonomy which classifies journals into one of four types of influence network. We then comment on the way in which this sort of citation data locates Organization clearly on the margins in a number of important ways, and on whether this marginalization is an effect of interdisciplinarity and of political and methodological heterodoxy.

Judgements concerning the ‘quality’ of research publication are increasingly important to tenure and promotions for academics around the world. 1 Scholars are encouraged to publish highly-cited articles in particular journals, but this is only part of a wider process of ranking which implicates academics, publishers, professional institutions and universities (Parker and Thomas, 2011). An interest in global institutional ranking began in 2003 with the publication of Shanghai Jiao Tong University’s annual Academic Ranking of World Universities (Shanghai Ranking Consultancy, 2012), which uses six criteria, including the number of alumni winning Nobel Prizes and Fields Medals; the number of staff winning Nobel Prizes and Fields Medals; the number of articles published in Nature and Science; the number of articles indexed in Science Citation Index-Expanded (SCIE) and Social Science Citation Index (SSCI) (Thomson Reuters, 2012); and the number of highly cited researchers in 21 broad subject categories. Whilst a highly-cited article is, by definition, difficult to guarantee, having an article published in an indexed journal is nowadays relatively easy, because more and more journals are publishing more and more articles: 144 journals are listed under the heading ‘Management’ alone in the Thomson Reuters index. One way of making distinctions between the tens of thousands of articles published every year is to use some sort of list, such as those provided by the UK Association of Business Schools (ABS, 2012), or the even more restrictive FT 45 (Financial Times, 2012). The number of articles published in these journals affects the research rank of a business school in the Global MBA and EMBA rankings. For this reason, some schools now promote or tenure their faculty members only if they publish articles in these journals.
Whilst there is plenty of debate about the merits of such rankings (Rowlinson et al., 2011; Willmott, 2011), this article is more concerned to show what sort of influence patterns they reflect. In other words, what can we discover about the centrality and marginality of particular journals to a particular field?

In order to approach this question, we consider the impact factor (IF) of journals, which turns citations into a key currency for authors and editors. As the IF (Garfield, 2012) becomes more important in these calculations, some editors of indexed journals are starting to respond with ‘gaming’ strategies. They often demand, implicitly in editorial statements or explicitly in revise-and-resubmit letters, that their authors cite the journal in order to inflate its impact factor. However, this practice can be detected fairly easily by looking at the self-citation patterns of any given journal in Thomson Reuters’ Journal Citation Reports (JCR) database (Thomson Reuters, 2012). From this database we can also derive two other citation relationships: the number of articles cited by a journal and the number of citations from other journals. This latter measure is particularly important because it tells us something about the breadth of the research communities which a journal reaches. That is, the wider the network of citations, the greater the influence (in a citation sense) of a journal.

In this article, we attempt to understand the citation patterns of SSCI journals in the broad area of organization and management (OM). Specifically, we address the following questions. First, what are the impact-factor patterns of OM journals? This means understanding how often these OM journals are cited by other journals as well as how often OM journals cite other journals. It also means looking at, and to some extent discounting, the self-citation patterns of these OM journals. Secondly, we will investigate which OM journals are most influenced by work which happens outside OM, on the basis of their spread of citations, as well as which OM journals have the most influence on other areas of enquiry. For the purposes of this article, we will use Organization as an illustration of the data, and hence the issues which the article is raising. That is to say, what position does this journal have in the citation networks of OM journals?

That being said, there are obviously limitations to this sort of study. For example, the Journal Citation Reports database does not contain information on citations to and from books, edited collections and grey literature. This means that tracing patterns of influence to and from areas of the social sciences and humanities which are less dominated by journal output becomes problematic. It is also worth remembering that when we use words like ‘influence’, we are using them primarily in a statistical sense, and any inferences about social influence more broadly need to be treated with caution. As is fairly obvious, citing happens for complex reasons, not simply because an author has read something and has had their thinking altered in some way. In any case, as Baum (2011) and Li (2009) argue, there are many reasons to be suspicious of the IF as a measure of research quality. Finally, the selection of journals in this article does not include many that readers of Organization might consider important, such as Gender, Work and Organization; Culture and Organization; and Work, Employment and Society. This is not to suggest that these journals are irrelevant to the argument made here, but our selection reflects the US dominance of journals in the organization and management area (Grey, 2010). The inclusion of Harvard Business Review, for example, might seem controversial to readers of Organization, but for those outside the narrow subfields that journals such as this one represent, it is simply the best-known management and organization publication. Unsurprisingly, we will conclude that Organization barely counts at all when compared to HBR, but also that there are several other ways in which a journal like this one can be seen not to count. But then, if it did, we might ask just how well it was doing at reflecting its espoused editorial values. Marginalization is, we will argue, both a condition and a consequence for a journal such as Organization.
If the journal did not show a particular pattern of influence, we could easily be suspicious of its claims to heterodoxy and interdisciplinarity. At the same time, however, this means it is unlikely to have much influence, in a narrow sense, on the scholarship of other more central journals.

Methods and data

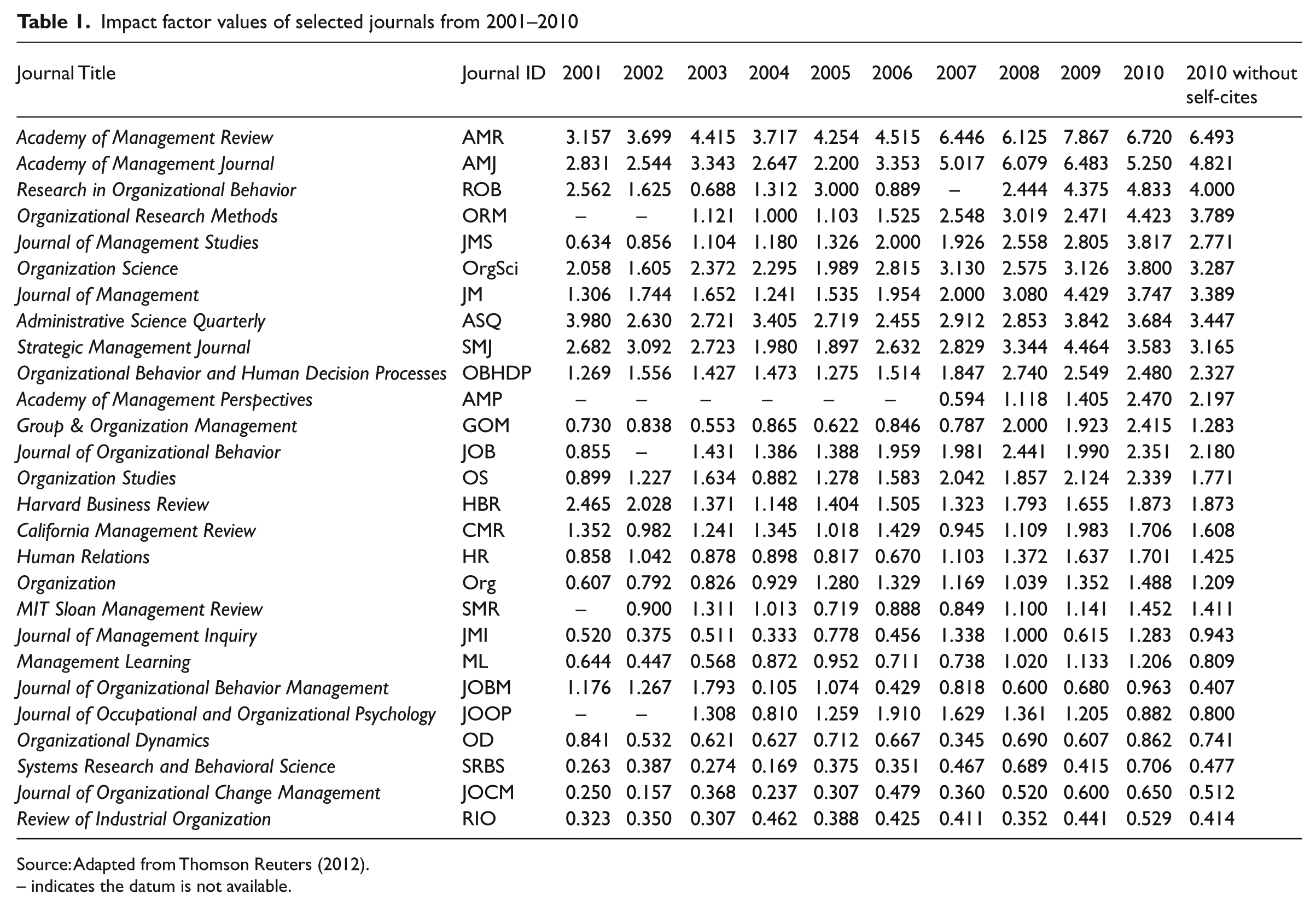

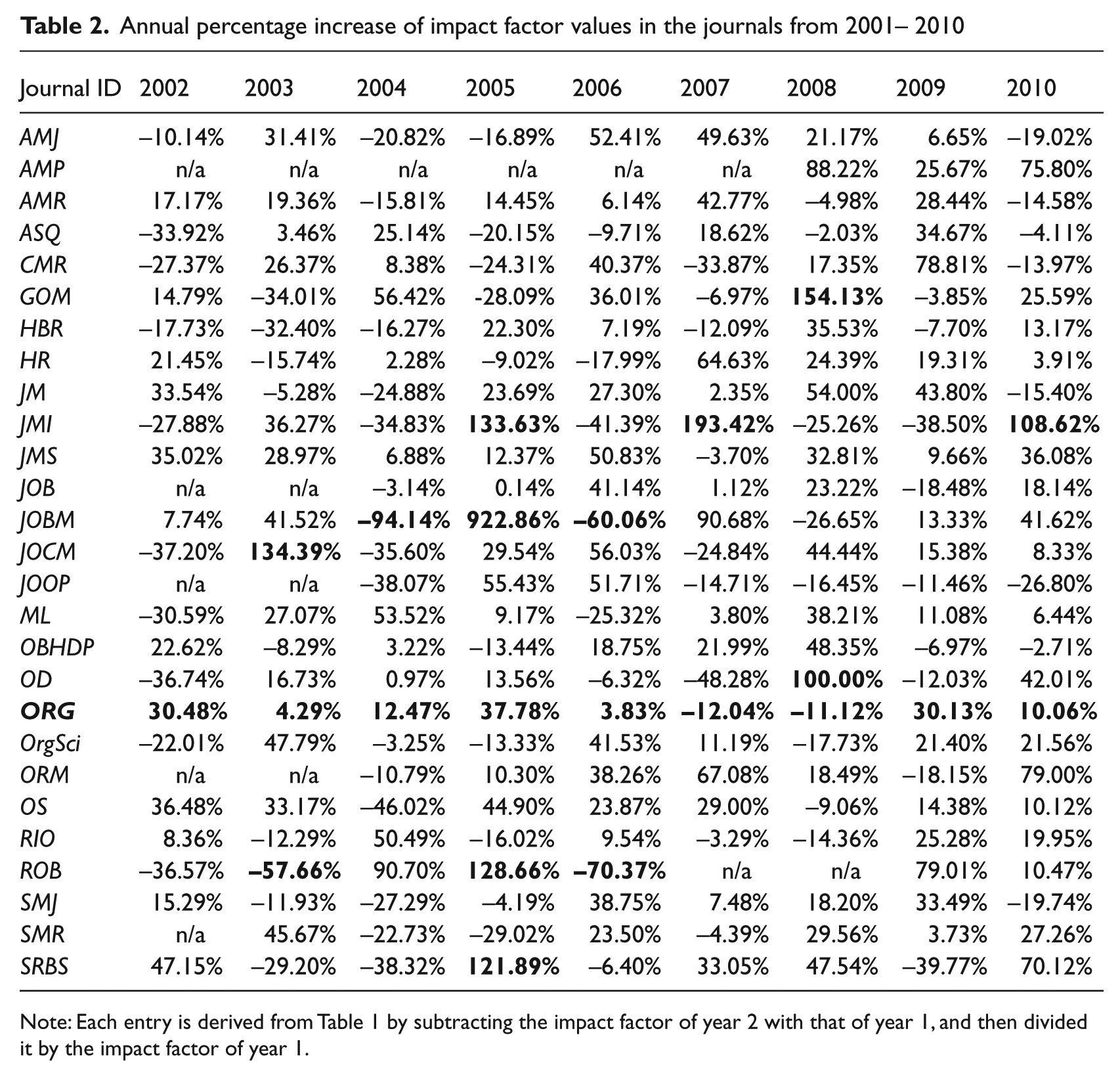

To collect the research data, we searched the 2010 Journal Citation Reports online database (Thomson Reuters, 2011) under the subject category of ‘Management’. From that category we selected 27 journals for this study; the other journals either do not publish OM-related articles or were only recently included in the 2010 index, leaving insufficient data. Table 1 shows the 27 journals sorted by their 2010 IF values. The rightmost column gives the IF value of each journal after removing the self-citation count. In order to analyse the impact-factor pattern, Table 2 also shows the annual percentage increase (or decrease) in the IF value.
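The two IF columns of Table 1 can be related with a short calculation. The sketch below is an illustration only: it assumes the standard two-year JCR definition of the IF (citations received in year Y to items published in years Y-1 and Y-2, divided by the number of citable items published in those two years), and the counts in the first example are hypothetical rather than taken from Table 1. Because both versions of the IF share the same denominator, the relative drop when self-citations are removed equals the self-citation share of the IF-window citations; applied to GOM's two 2010 values, this gives the "almost half" noted in the Results section.

```python
def impact_factor(cites: int, citable_items: int) -> float:
    """Two-year IF: citations received in year Y to items published in
    years Y-1 and Y-2, divided by the citable items published in Y-1 and Y-2."""
    if citable_items <= 0:
        raise ValueError("citable_items must be positive")
    return cites / citable_items


def impact_factor_without_self(cites: int, self_cites: int, citable_items: int) -> float:
    """The same ratio with journal self-citations removed from the numerator."""
    if not 0 <= self_cites <= cites:
        raise ValueError("self_cites must lie between 0 and cites")
    return impact_factor(cites - self_cites, citable_items)


def self_share_from_ifs(if_total: float, if_without_self: float) -> float:
    """Share of IF-window citations that are self-citations, recovered from the
    two published IF values (valid because both share one denominator)."""
    return (if_total - if_without_self) / if_total


# Hypothetical counts, for illustration only (not taken from Table 1).
print(impact_factor(150, 100))                   # 1.5
print(impact_factor_without_self(150, 30, 100))  # 1.2
# GOM's 2010 values quoted in the Results section: 2.415 and 1.283.
print(round(self_share_from_ifs(2.415, 1.283), 3))  # 0.469
```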

Impact factor values of selected journals from 2001–2010

Source: Adapted from Thomson Reuters (2012).

– indicates the datum is not available.

Annual percentage increase of impact factor values in the journals from 2001–2010

Note: Each entry is derived from Table 1 by subtracting the impact factor of the previous year from that of the current year, and then dividing the difference by the impact factor of the previous year.
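A minimal sketch of the Table 2 calculation follows. The function names are hypothetical; missing years (shown as '–' in the tables) are represented as None and produce a missing change. As an illustration it uses the JOBM impact factors for 2003–2007 quoted in the Results section, which show how a very low IF in one year turns the next year's rebound into a change of several hundred per cent.

```python
from typing import List, Optional


def annual_change_pct(if_previous: float, if_current: float) -> float:
    """(IF in current year - IF in previous year) / IF in previous year, in percent."""
    if if_previous == 0:
        raise ValueError("previous IF must be non-zero")
    return (if_current - if_previous) / if_previous * 100


def series_changes(values: List[Optional[float]]) -> List[Optional[float]]:
    """Year-on-year percentage changes for a chronological list of IF values.
    None marks a missing datum; any change involving it is also None."""
    changes: List[Optional[float]] = []
    for prev, curr in zip(values, values[1:]):
        if prev is None or curr is None:
            changes.append(None)
        else:
            changes.append(round(annual_change_pct(prev, curr), 2))
    return changes


# JOBM impact factors, 2003-2007, as quoted in the Results section.
jobm = [1.793, 0.105, 1.074, 0.429, 0.818]
print(series_changes(jobm))  # [-94.14, 922.86, -60.06, 90.68]
```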

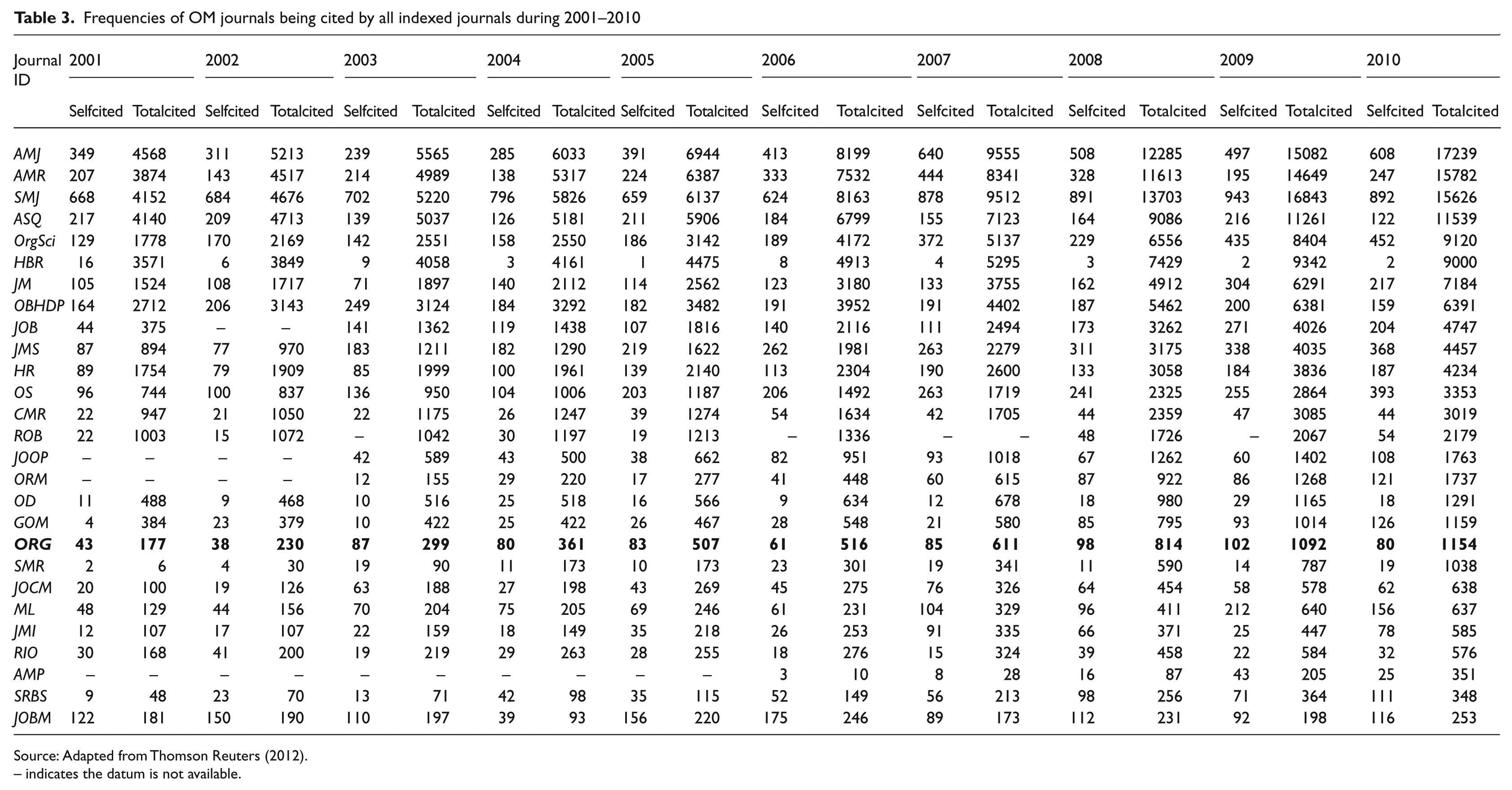

In order to find out how often these OM journals are cited by other journals, we examined the cited tables in the JCR database. Table 3 gives the values of total cited counts from 2001–2010. The total cited count is the number of times all articles published in all SCIE/SSCI journals in a given year cited articles published in a target OM journal. For example, the total cited count for Organization in 2010 is the number of times (1154) that articles published in 2010 cited articles published in Organization since its first issue in 1994. The numbers tell us something about the level of citation influence a particular journal has. As Table 3 shows, the most cited journal (AMJ, with 17239 citations) has almost 15 times as many citations as Organization.

Frequencies of OM journals being cited by all indexed journals during 2001–2010

Source: Adapted from Thomson Reuters (2012).

– indicates the datum is not available.

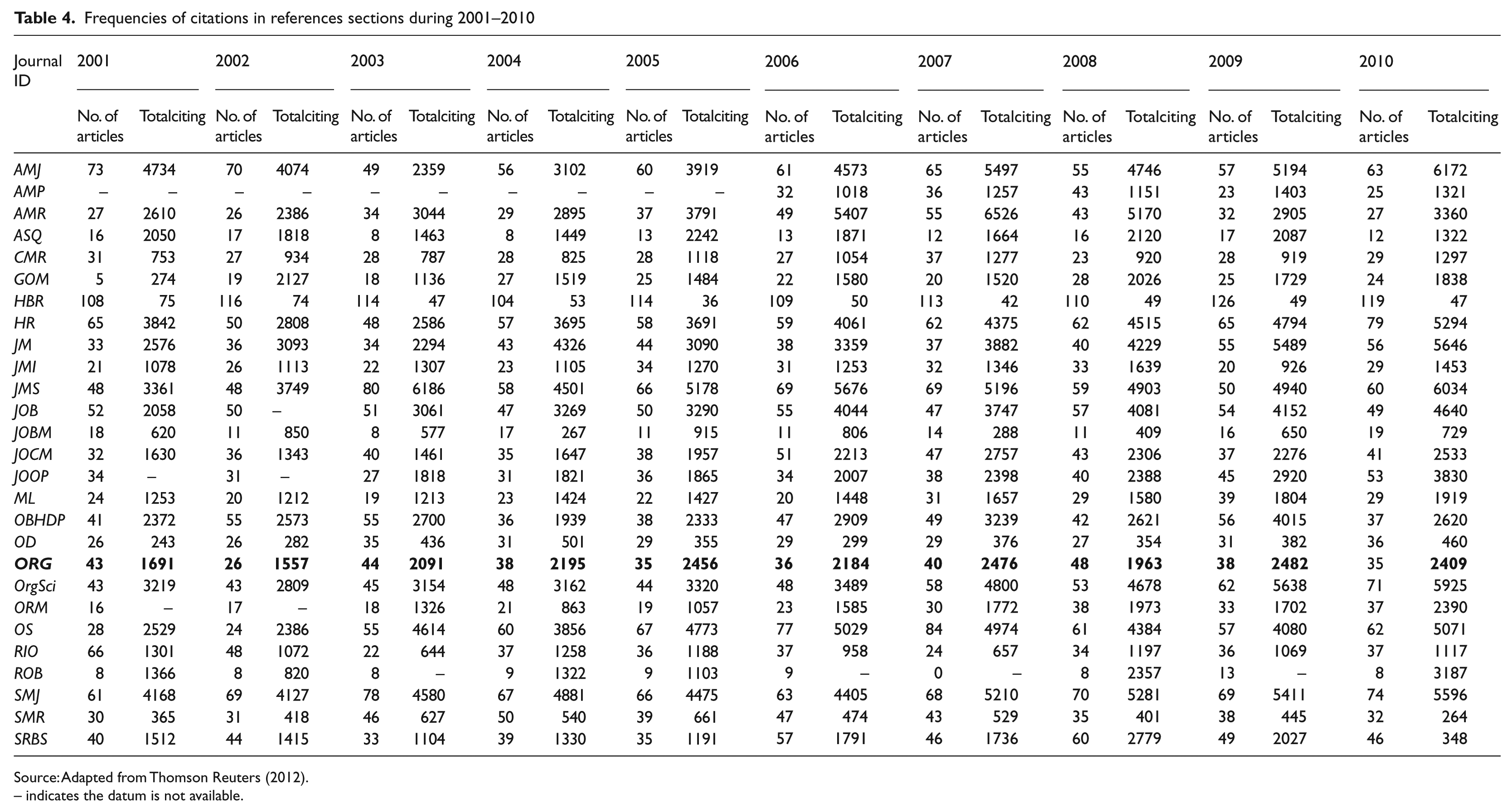

To investigate how often the OM journals in this study cite other journals, we reviewed the citing tables in the JCR. Table 4 gives the numbers of articles and the total citing counts in the references sections of all articles published each year by the selected OM journals from 2001 to 2010. For example in 2010, Organization published 35 articles which made a total of 2409 citations. By looking at what these citations were, we can say something about the sorts of journals which influence the authors who get published in Organization.

Frequencies of citations in references sections during 2001–2010

Source: Adapted from Thomson Reuters (2012).

– indicates the datum is not available.

The self-citation patterns of the selected OM journals are presented in Table 3. For example, the self-cited count in the table indicates that in 2010, 80 of the 1154 citations to Organization occurred in Organization’s own articles; that is to say, other journals cited Organization 1074 times. We will call this latter figure the ‘other-cited count’. Likewise, of the citing counts for Organization in Table 4, 80 citations refer to Organization itself and 2329 (i.e. 2409 minus 80) cite other sources. The latter is hereafter called the ‘other-citing count’.
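Because a self-citation is at once a citation to the journal and a citation made by it, the same figure is subtracted from both totals. The small sketch below reproduces the Organization figures for 2010; the class and field names are illustrative and are not JCR terminology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CitationCounts:
    total_cited: int     # times the journal is cited by all indexed journals (Table 3)
    total_citing: int    # citations in the journal's own references sections (Table 4)
    self_citations: int  # citations from the journal to itself (counted in both totals)

    @property
    def other_cited(self) -> int:
        """Citations received from other journals."""
        return self.total_cited - self.self_citations

    @property
    def other_citing(self) -> int:
        """Citations made to other sources."""
        return self.total_citing - self.self_citations


org_2010 = CitationCounts(total_cited=1154, total_citing=2409, self_citations=80)
print(org_2010.other_cited, org_2010.other_citing)  # 1074 2329
```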

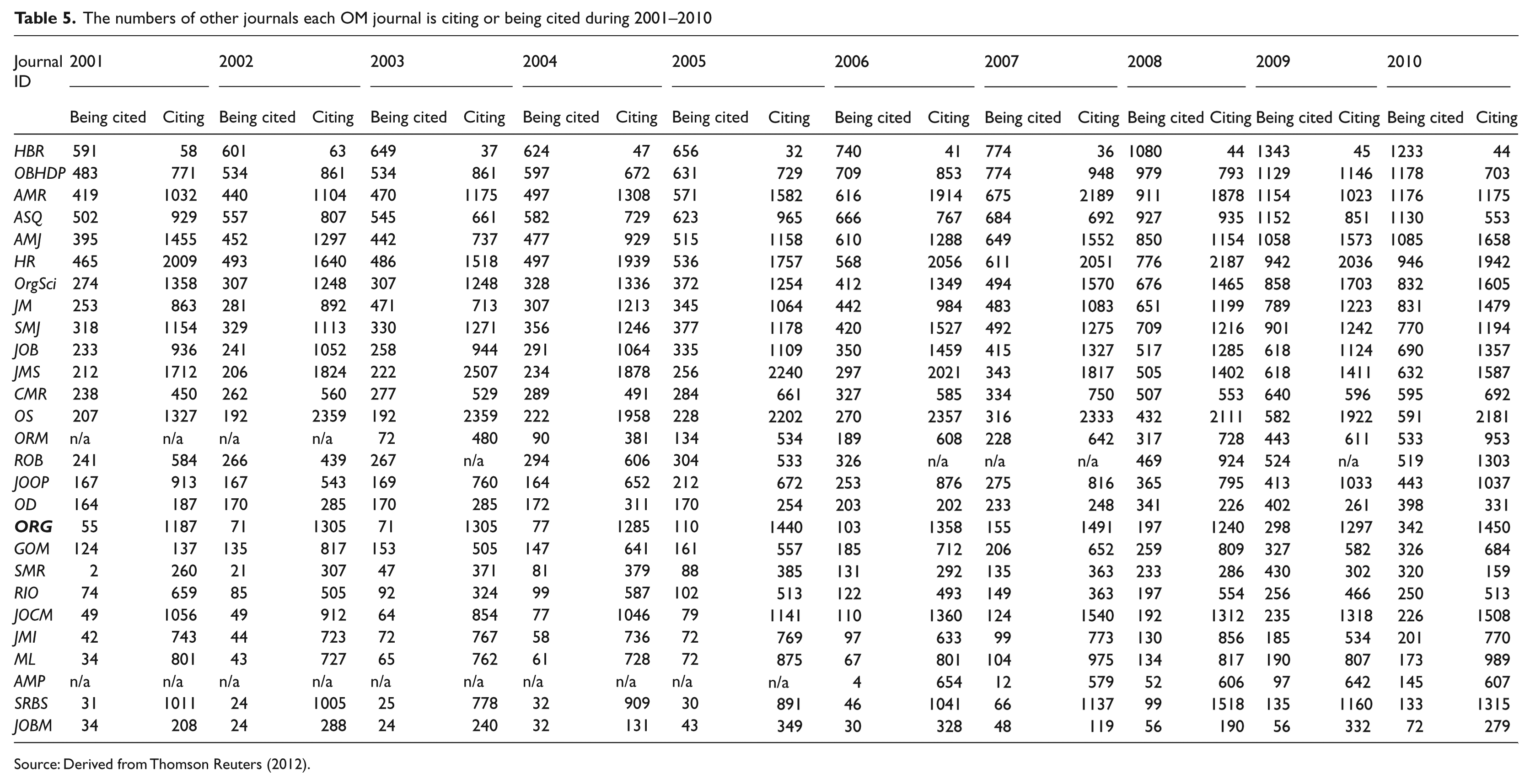

To understand which OM journals are most influenced by other academic fields and which OM journals have the most influence outside organization and management, we examined the journal counts in the cited and citing tables of each OM journal. In these tables, Thomson Reuters breaks down the citations by journal: each table lists the journals (rows) and the number of citations to or from each of them in each year. This information allows us to derive the citing and cited journal counts of each OM journal. Table 5 presents the numbers of other journals citing and being cited by each OM journal from 2001–2010. For example, in 2010, 342 other journals cited Organization, whilst Organization cited a total of 1450 other journals. Compare this to AMR and you have a very different pattern, with 1176 journals citing it, against 1175 being cited. These numbers indicate the extent to which Organization’s authors in 2010 influence or are influenced by other journals, as well as providing some indication of the insularity or permeability of the influence network associated with a particular journal.
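Deriving a journal count from one of these tables amounts to counting the distinct journals listed, leaving out the journal itself. The sketch below assumes the table has already been read into a mapping from journal title to citation count for one year; the function name, titles and counts are invented for illustration, and we do not model the JCR export format.

```python
from typing import Mapping


def journal_count(rows: Mapping[str, int], own_title: str) -> int:
    """Number of distinct other journals with at least one citation in a
    cited or citing table; the journal's own row (self-citations) is excluded."""
    return sum(1 for title, n in rows.items() if title != own_title and n > 0)


# Hypothetical rows of a cited table for a journal called "Organization":
# journal title -> number of citations in the year of interest.
cited_rows = {"Organization": 80, "Journal A": 12, "Journal B": 3, "Journal C": 0}
print(journal_count(cited_rows, "Organization"))  # 2
```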

The numbers of other journals each OM journal is citing or being cited during 2001–2010

Source: Derived from Thomson Reuters (2012).

Results and discussion

Impact factors and self-citation

In 2010, the top five journals in terms of impact factor were: AMR (IF = 6.72), AMJ (IF = 5.25), ROB (IF = 4.833), ORM (IF = 4.423) and JMS (IF = 3.817). If we take the impact factor without the self-cited count, the rightmost column of Table 1 reveals that among the 27 journals, GOM has the largest drop in IF value (1.132), from 2.415 to 1.283. That is to say, almost half of the citations counted in its IF come from within the journal. Journals whose IF values drop by more than 0.5 include JMS (1.046), ROB (0.833), ORM (0.634), OS (0.568), JOBM (0.556) and OrgSci (0.513). Organization drops 0.279 (from 1.488 to 1.209). High levels of self-citation could well tell us something about the small size or tight network associated with a journal; however, for general management and organization journals this would seem to be a difficult claim to sustain. Indeed, you might expect that, on this measure, a journal like Organization would have a higher self-citation count, since it would reflect the density of the ‘invisible college’ associated with the journal (Jones et al., 2006). The data do not bear this out, however, so we are left either claiming that journals with high self-citation rates publish particularly citable articles, or that the gaming of editors and authors is visible in these numbers.

The variation of the impact factors within each journal seems to follow no specific pattern. Almost all of them go up and down during the 10-year period, except for AMP, whose IF value has quadrupled from 0.594 to 2.47 since it was first included in the SSCI in 2007. According to Table 2, some journals have doubled their IF values from one year to the next (an increase of 100% or more), while others have experienced a drop of more than half of their IF values (-50% or worse). For example, JOBM exhibits substantial ups and downs in IF values: between 2003 and 2007, its IF fell from 1.793 to 0.105, went back up to 1.074, dropped again to 0.429, then rose to 0.818 in 2007. Likewise, ROB experienced a large drop from 1.625 to 0.688 in 2003, jumped to 1.312, jumped again to 3.0, then dropped to 0.889 in 2006. Such fluctuation might call for observing both journals for a few more years to assess the stability of their quality, or it might suggest that the IF is not a particularly reliable measure, since it can oscillate wildly.

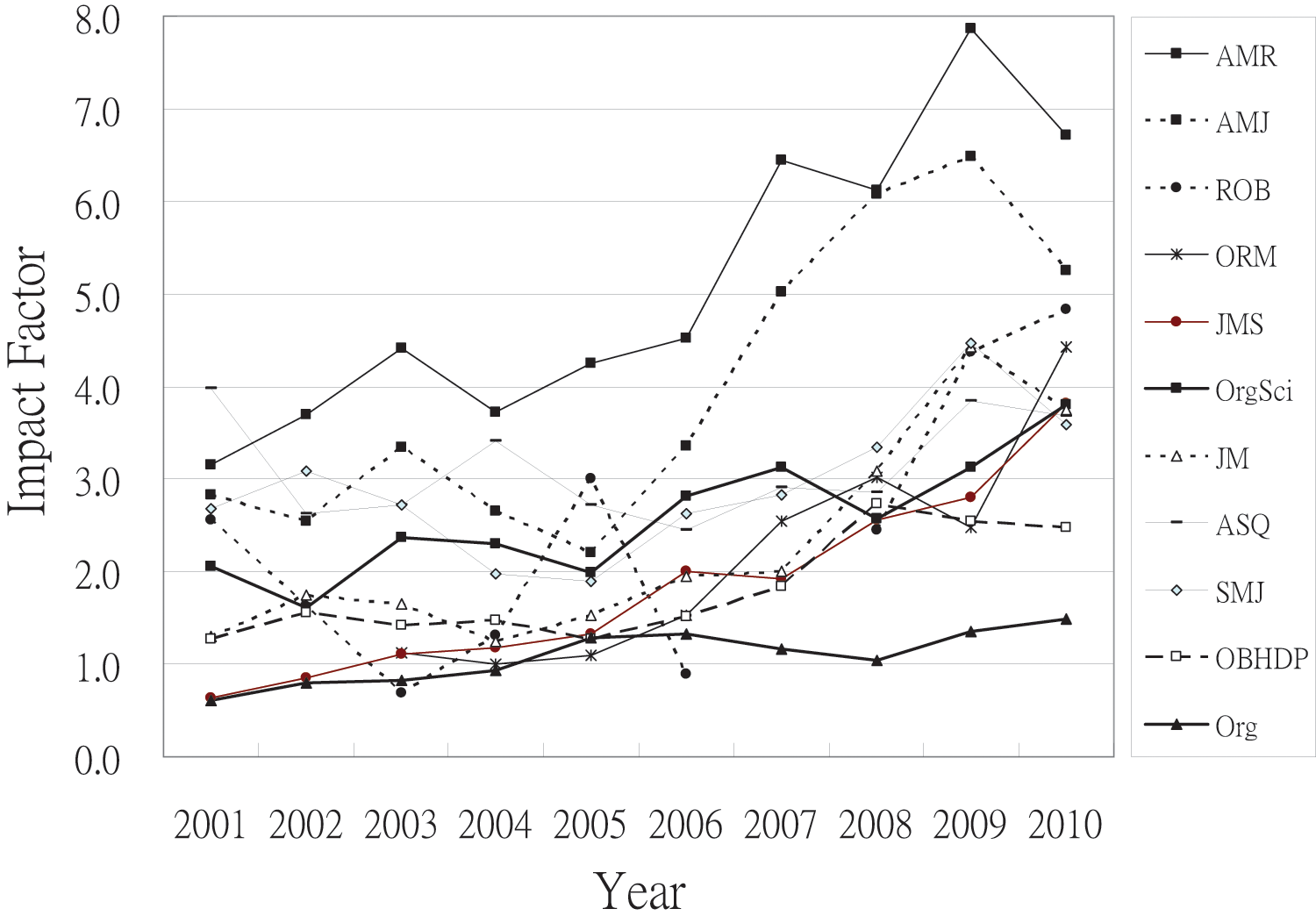

Five journals at least doubled their impact factor values in some years: GOM jumped 154.13% in 2008, JOCM increased 134.39% in 2003, OD was up 100% in 2008, SRBS jumped 121.89% in 2005, and, surprisingly, JMI more than doubled its IF three times (by 133.63% in 2005, by 193.42% in 2008 and by 108.62% in 2010). As shown in Figure 1, the ten leading OM journals all experienced fluctuations in IF values over the ten-year period, with AMR in the leading position during the last nine years and AMJ right behind it, ranked above the other journals, during the last five years. Organization has been in last place in this race during the last five years, but it is also unaffected by any large fluctuations. There have been no sharp rises or falls in its citation rates, only a gradual rise, which seems to suggest that it is a journal relatively uninfluenced by either gaming strategies or general citation inflation. It might also be the case that it simply publishes articles of lower quality or less interest, and hence receives fewer citations for that reason.

Impact factor values of Organization and the top ten OM journals

Based on the cited counts in Table 3, AMJ (17239), AMR (15782) and SMJ (15626) are the three most cited journals in 2010, far ahead of ASQ (11539), OrgSci (9120), HBR (9000), JM (7184) and OBHDP (6391) which are in the fourth to eighth places. Once again, Organization is significantly behind these journals in cited counts with a figure of 1154. In part, this difference might be explained by the fact that Organization is a relatively new journal, commencing publication in 1994. The youngest leading journal is OrgSci which began publishing in 1990, while SMJ (1980), JM (1975), AMR (1976), OBHDP (1966), AMJ (1958), ASQ (1956) and HBR (1923) are much older. In addition, some journals publish more issues and articles than others, hence providing more citable material. In 2010 OrgSci published twice as many articles (71 versus 35) as Organization, suggesting that it will continue to pull ahead on raw citation counts.

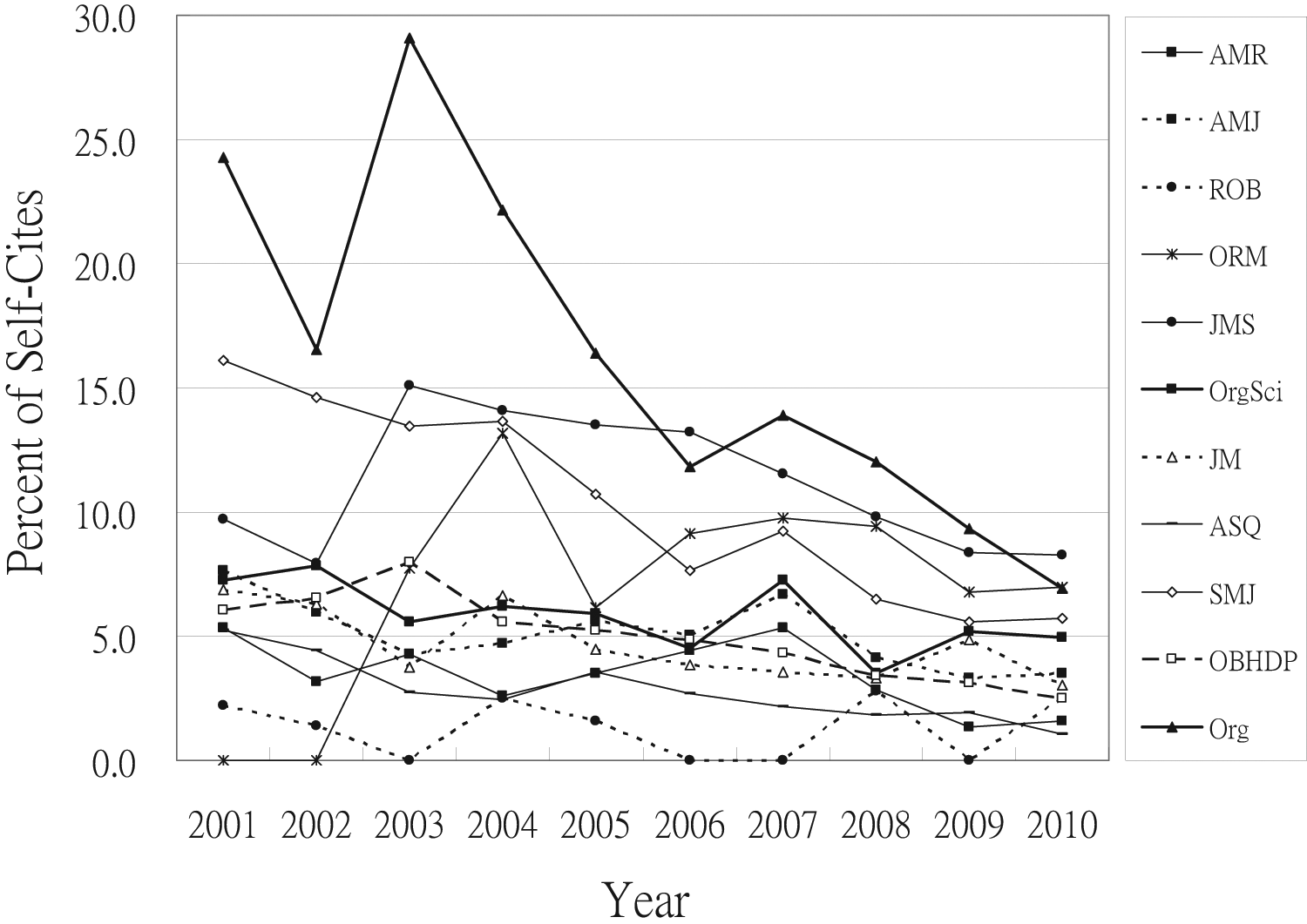

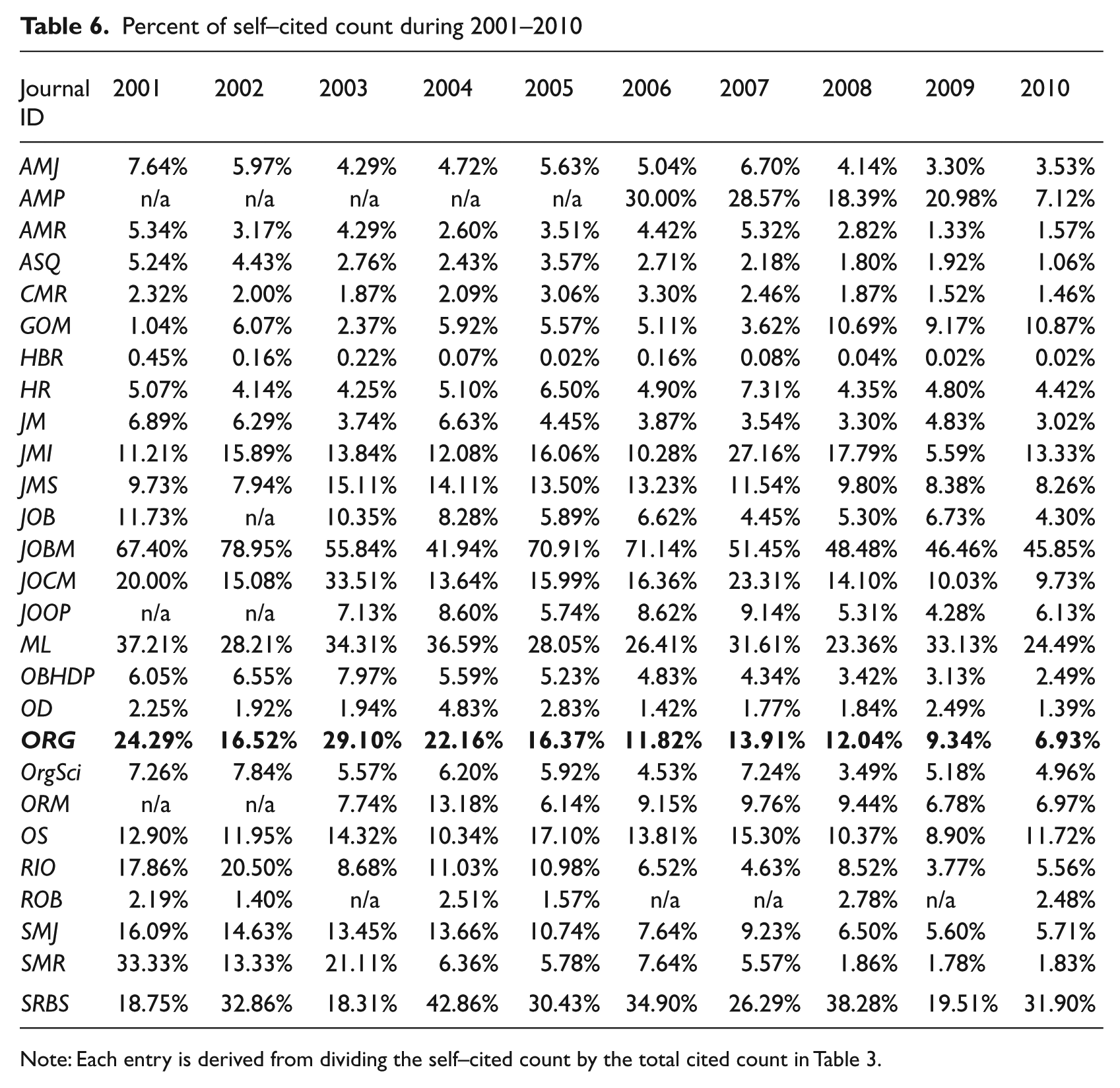

As for self-cited counts, Figure 2 reveals that the self-citation percentages of all the top ten journals have been below 15% since 2004 and dropped further to 10% or lower since 2008. Organization followed suit in 2006 and 2009, after going through relatively large fluctuations and high self-citation percentages during 2001–2005, which is the period that Jones et al. (2006) were commenting on in terms of a predictable network of people and institutions. In 2010 ASQ (1.06%), CMR (1.46%), OD (1.39%), AMR (1.57%) and SMR (1.83%) had self-citation percentages below 2%. At the opposite end (see Table 6), the journal with the highest self-citation percentage in 2010 is JOBM (45.85%). Throughout the 10-year period, this journal has had self-citation percentages ranging from 41.94% to 78.95%. Its impact factors have been affected significantly by these self-citation percentages, as evidenced by the drop of its 2010 impact factor from 0.963 to 0.407 when self-citations are removed. Two other journals with relatively high self-citation percentages are SRBS and ML: SRBS ranges between 18.31% and 42.86%, and ML from 23.36% to 37.21%. Without the self-citations, the 2010 impact factor of SRBS decreases from 0.706 to 0.477, while that of ML decreases from 1.206 to 0.809. Again, this could be interpreted in a variety of different ways, since ML does fall into the category of a fairly specific (not generalist) journal. Overall, the self-citation percentages of most journals stay below 15%, meaning that at least 85% of the citations they receive come from other journals.

Percent of self-citations of Organization and the top ten OM journals

Percent of self-cited count during 2001–2010

Note: Each entry is derived by dividing the self-cited count by the total cited count in Table 3.
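The note describes a simple ratio, sketched below with a hypothetical helper name. Dividing the same self-citation count by the total citing count in Table 4 instead gives the self-citing percentage discussed later for Table 8; for Organization in 2010 that value (about 3.32%) falls inside the 2.44% to 4.99% range reported there.

```python
def self_percentage(self_count: int, total_count: int) -> float:
    """Self-citations as a percentage of a total (cited or citing) count."""
    if total_count <= 0:
        raise ValueError("total_count must be positive")
    return 100 * self_count / total_count


# Organization, 2010: 80 self-citations out of 1154 citations received (Table 3)
# and out of 2409 citations made (Table 4).
print(round(self_percentage(80, 1154), 2))  # 6.93  (self-cited percentage)
print(round(self_percentage(80, 2409), 2))  # 3.32  (self-citing percentage)
```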

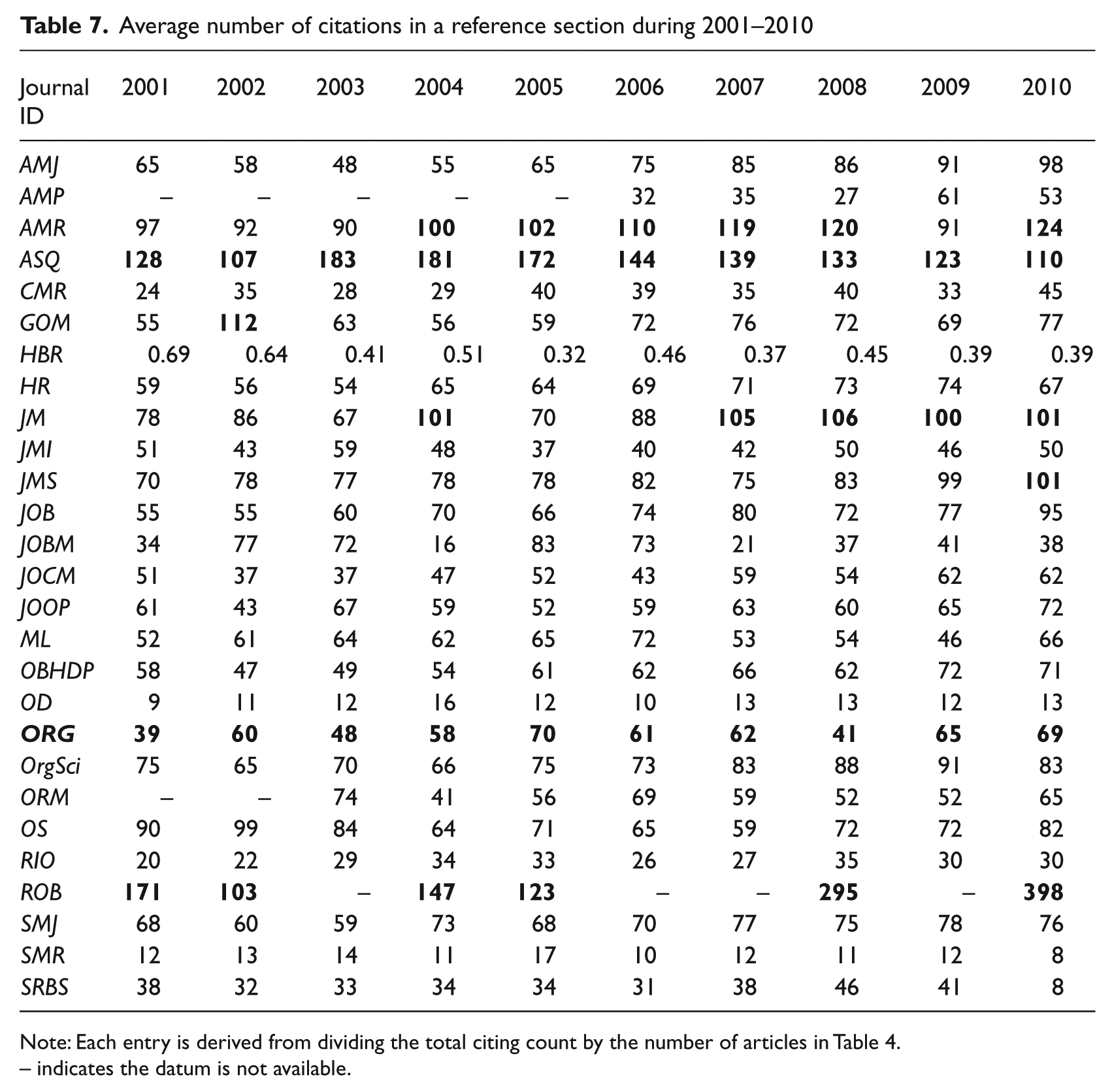

The citing counts provided in the JCR database are the numbers of citations in the references sections of the articles published each year by an SSCI journal. Table 4 shows that seven OM journals, namely AMJ (6172), HR (5294), JM (5646), JMS (6034), OrgSci (5925), OS (5071) and SMJ (5596), had over 5000 citations in their references sections in 2010. However, because these journals publish different numbers of articles each year, we divided the total count by the number of articles to obtain the average number of citations in a references section for each journal, as shown in Table 7. The table shows that the heaviest citers are AMR, ASQ, JM and ROB, all of which average more than 100 citations per article. In particular, ROB is an outlier, with an average of 398 citations per article in 2010. At the other end, the lightest citers include HBR, OD and SMR, all of which average fewer than 20 citations per article. As expected, HBR is an outlier, with a mean citation count of 0.39; that is, roughly one citation for every two and a half articles. Excluding the two outliers (ROB and HBR), the average citing count is 61 citations per references section. Organization is just below this number, with citing counts ranging from 39 to 70 and a mean value of 57. It is unclear exactly what high numbers of references signify, because they could mean a variety of different things, but it seems fairly clear that most journals are seeing a gradual rise in the length of the references section. Perhaps this tells us something general about the rise in the perceived importance of citations, either for legitimating articles or journals, but there are also variations between journals which are likely to be significant in themselves.

Average number of citations in a reference section during 2001–2010

Note: Each entry is derived from dividing the total citing count by the number of articles in Table 4.

– indicates the datum is not available.
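The two steps behind Table 7 and the outlier-adjusted mean can be sketched as follows. The helper names are hypothetical; the Organization figures (2409 citations across 35 articles in 2010) come from Table 4, but the per-journal averages in the usage example are invented simply to show the exclusion step.

```python
from statistics import mean
from typing import Iterable, Mapping


def mean_references(total_citing: int, articles: int) -> float:
    """Average number of citations per references section (total citing / articles)."""
    if articles <= 0:
        raise ValueError("articles must be positive")
    return total_citing / articles


def mean_excluding(per_journal: Mapping[str, float], excluded: Iterable[str]) -> float:
    """Mean of per-journal averages, leaving out the named outliers."""
    skip = set(excluded)
    return mean(v for k, v in per_journal.items() if k not in skip)


# Organization, 2010: 2409 citations across 35 articles (Table 4).
print(round(mean_references(2409, 35), 1))  # 68.8

# Hypothetical per-journal averages; ROB and HBR are dropped as outliers.
averages = {"A": 50.0, "B": 70.0, "ROB": 398.0, "HBR": 0.39}
print(mean_excluding(averages, ["ROB", "HBR"]))  # 60.0
```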

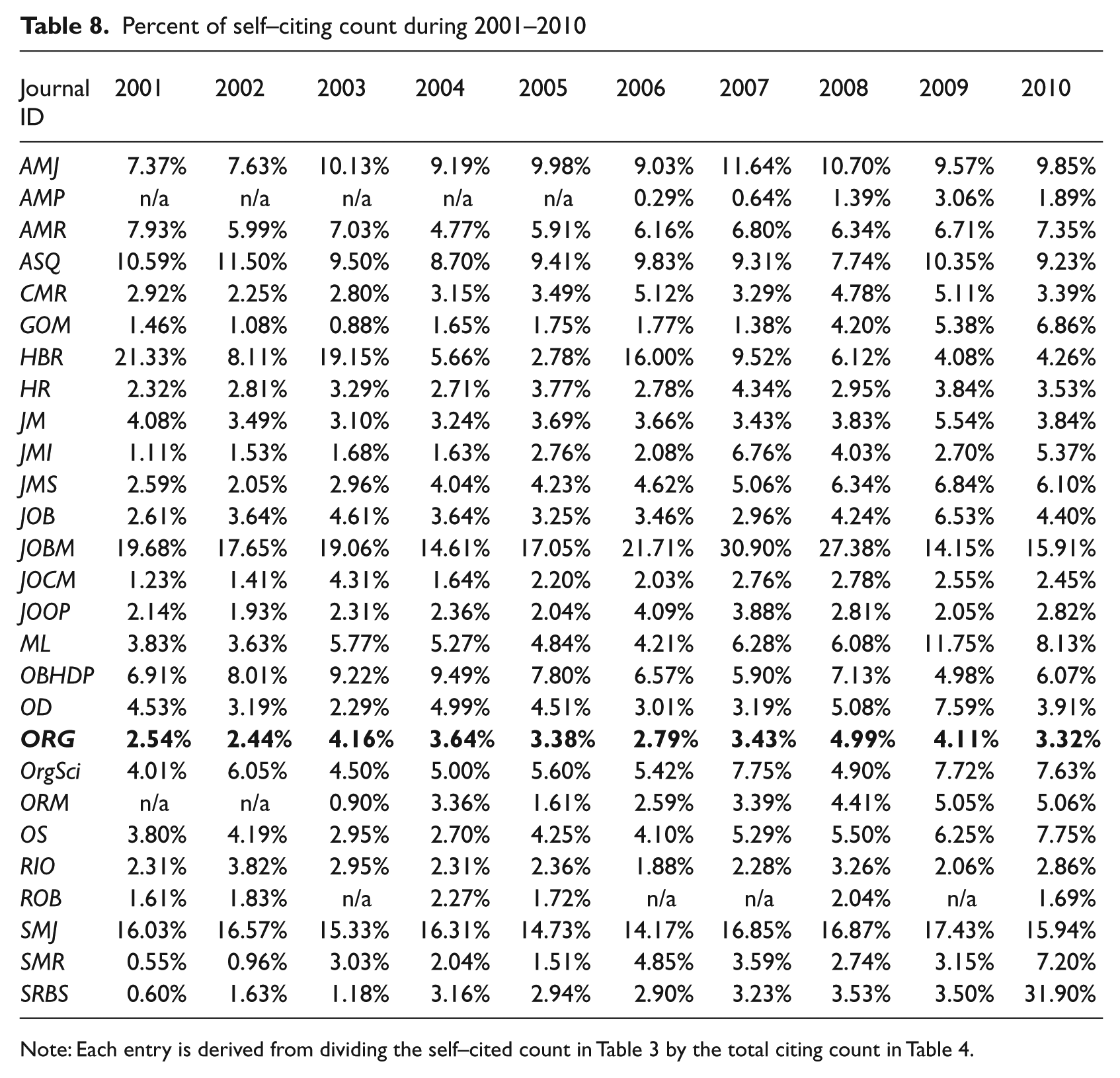

Examining the percentages of self-citing counts allows us to see which journals have unusual patterns of citations in their references sections. Table 8 shows that most journals have self-citing percentages ranging from 3% to 8%, with a mean value of 5.72%. A scrutiny of the table reveals two unusual increases which might affect impact factor values: JOBM in 2007 (30.90%) and SRBS in 2010 (31.90%). The self-citing percentages of Organization lie between 2.44% and 4.99%, below the mean value, indicating that its sources of knowledge are mostly other publications.

Percent of self-citing count during 2001–2010

Influence networks

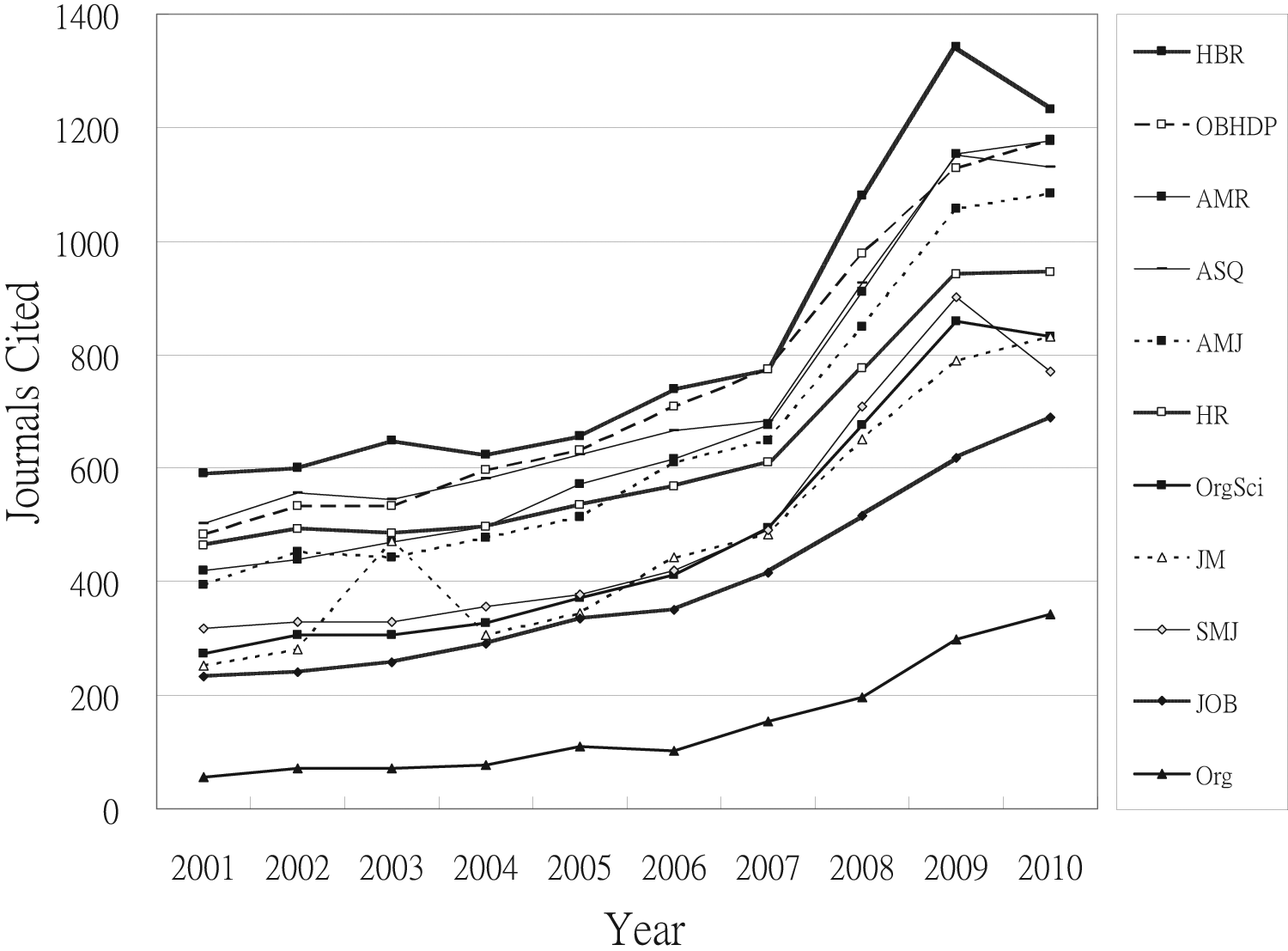

The number of non-OM journals being cited by an OM journal is an indicator of the sources of knowledge which influence that particular journal. Assuming that each OM journal usually cites and is cited by the other 26 OM journals, we can subtract 26 from each entry in Table 5 to get the number of non-OM journals citing or being cited by any particular OM journal. As the patterns of influence are relative in nature, using the original numbers in Table 5 does not affect the pattern. Close inspection of the table reveals that most OM journals cite more journals than they are cited by, supporting the suggestion of Oswick and colleagues (2011) that organization theory is a discipline which borrows its concepts from elsewhere. Sure enough, the mean number of other journals cited is 961, while the mean number of other journals citing is 356. Looking at the opposite flow, Figure 3 shows the influence patterns of the top ten cited OM journals. HBR has the most influence on journals in non-OM areas, being cited by over 1000 journals in each of the last three years, followed by OBHDP, AMR, ASQ and AMJ. Most of these top journals exhibit steady growth during the past 10 years. Organization exhibits the same growth pattern, though it is cited by far fewer journals.

Numbers of journals citing the top 10 cited journals and Organization

In a previous study into the citation patterns of information systems journals, Li (2009) suggested scrutinizing the ratio between the number of journals that cite a given journal and the number of journals cited by that journal. In the same vein, we propose a similar comparison at the article level as well as the journal level, using the ratio between the cited count and the citing count. Together these tell us something about the influence patterns of each journal at two different levels: journal and article. We exclude self-citations from the citation network and consider only the other-cited count and the other-citing count. This reduced network is hereafter called the ‘influence network’. We use a directed graph, or digraph (Bang-Jensen and Gutin, 2009; Bondy and Murty, 1976), to represent an influence network. Each journal is a point, with the other-cited count as its indegree and the other-citing count as its outdegree. In a digraph, each point can be labelled with an indegree-outdegree pair in parentheses, such as (1085, 1658) for AMJ in 2010 at the journal level. Following this convention, we can define the influence metrics as follows:

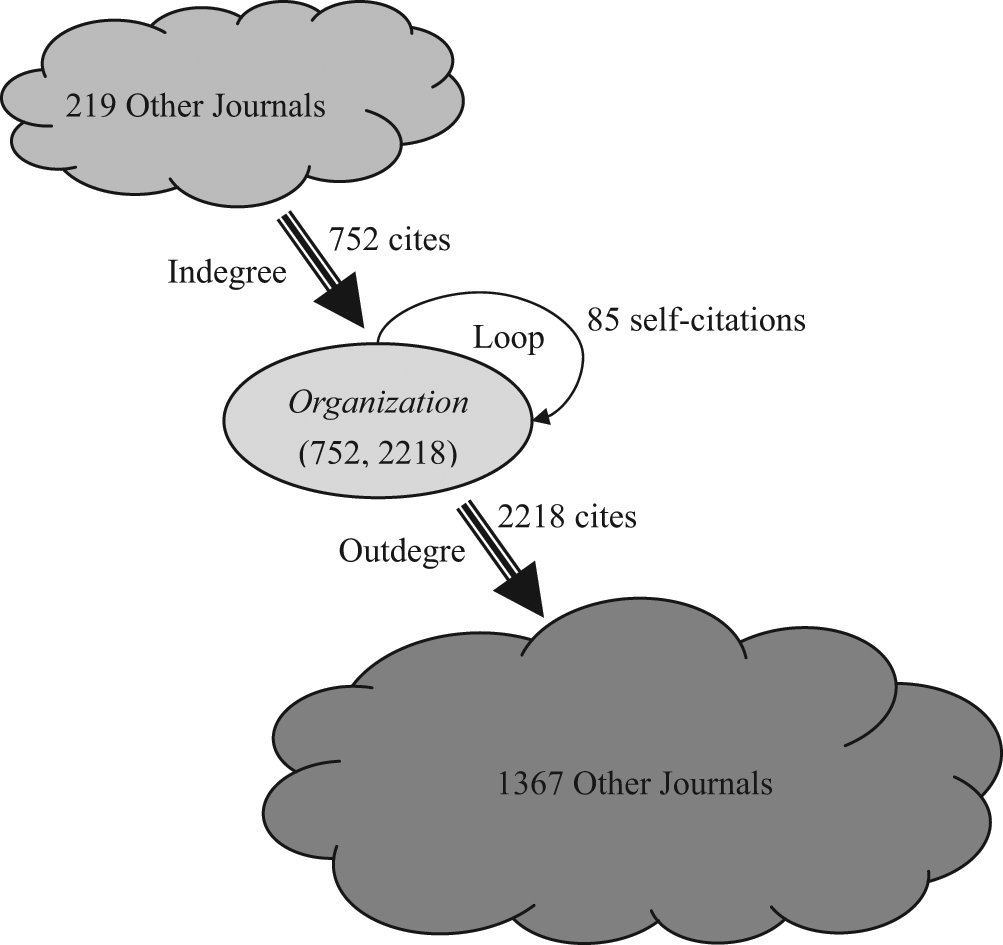

Using this method, we can say that Organization has the metric IMj (Org) with a value pair of (342, 1450) in 2010. That is to say, 342 journals cite Organization, while Organization articles cite 1450 different journals. Likewise, the metric IMa (Org) is (1074, 2329), after subtracting the self-citation count of 80 from the total citation counts of 1154 in Table 3 and 2409 in Table 4. This means that Organization articles were cited 1074 times by articles in other journals, whilst Organization articles made 2329 citations to other sources. Although such metrics can be derived for each journal year by year, the fluctuation of citation and journal counts prevents a meaningful comparison between journals. We therefore take the five-year average of IM values as the basis of comparison. The choice of a five-year average is consistent with the five-year impact factor reported in the JCR database.
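The definitions can be made concrete with a small digraph computation. The sketch below is an illustration under stated assumptions, not the procedure used to build Table 9: citations for a single year are held in a hypothetical mapping from (citing journal, cited journal) to a count, and the three journals form a toy network rather than JCR data. IMj counts distinct neighbouring journals while IMa sums citation counts; self-loops are dropped in both, which yields the influence network. A separate helper averages yearly pairs element by element, which is how we read the five-year average.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Edge = Tuple[str, str]           # (citing journal, cited journal)
IntPair = Tuple[int, int]        # (indegree, outdegree)
FloatPair = Tuple[float, float]  # averaged (indegree, outdegree)


def influence_metrics(citations: Dict[Edge, int]) -> Dict[str, Tuple[IntPair, IntPair]]:
    """Return {journal: (IMj, IMa)} for one year of a citation digraph.

    IMj = (number of other journals citing it, number of other journals it cites)
    IMa = (other-cited count, other-citing count)
    """
    cited_by = defaultdict(set)    # journal -> journals that cite it
    cites = defaultdict(set)       # journal -> journals it cites
    in_weight = defaultdict(int)   # journal -> citations received from others
    out_weight = defaultdict(int)  # journal -> citations made to others
    for (src, dst), n in citations.items():
        if src == dst or n <= 0:
            continue  # drop self-citations (and empty edges)
        cites[src].add(dst)
        cited_by[dst].add(src)
        out_weight[src] += n
        in_weight[dst] += n
    journals = set(cites) | set(cited_by)
    return {
        j: ((len(cited_by[j]), len(cites[j])), (in_weight[j], out_weight[j]))
        for j in journals
    }


def five_year_average(pairs: List[IntPair]) -> FloatPair:
    """Element-wise mean of yearly (indegree, outdegree) pairs."""
    if not pairs:
        raise ValueError("need at least one year")
    k = len(pairs)
    return (sum(p[0] for p in pairs) / k, sum(p[1] for p in pairs) / k)


# Toy network: A cites B five times, itself four times and C once, and so on.
toy = {("A", "B"): 5, ("A", "A"): 4, ("A", "C"): 1, ("B", "A"): 2, ("C", "A"): 3}
print(influence_metrics(toy)["A"])  # ((2, 2), (5, 6))
```

Note that A's four self-citations appear in neither pair, mirroring the subtraction of Organization's 80 self-citations above.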

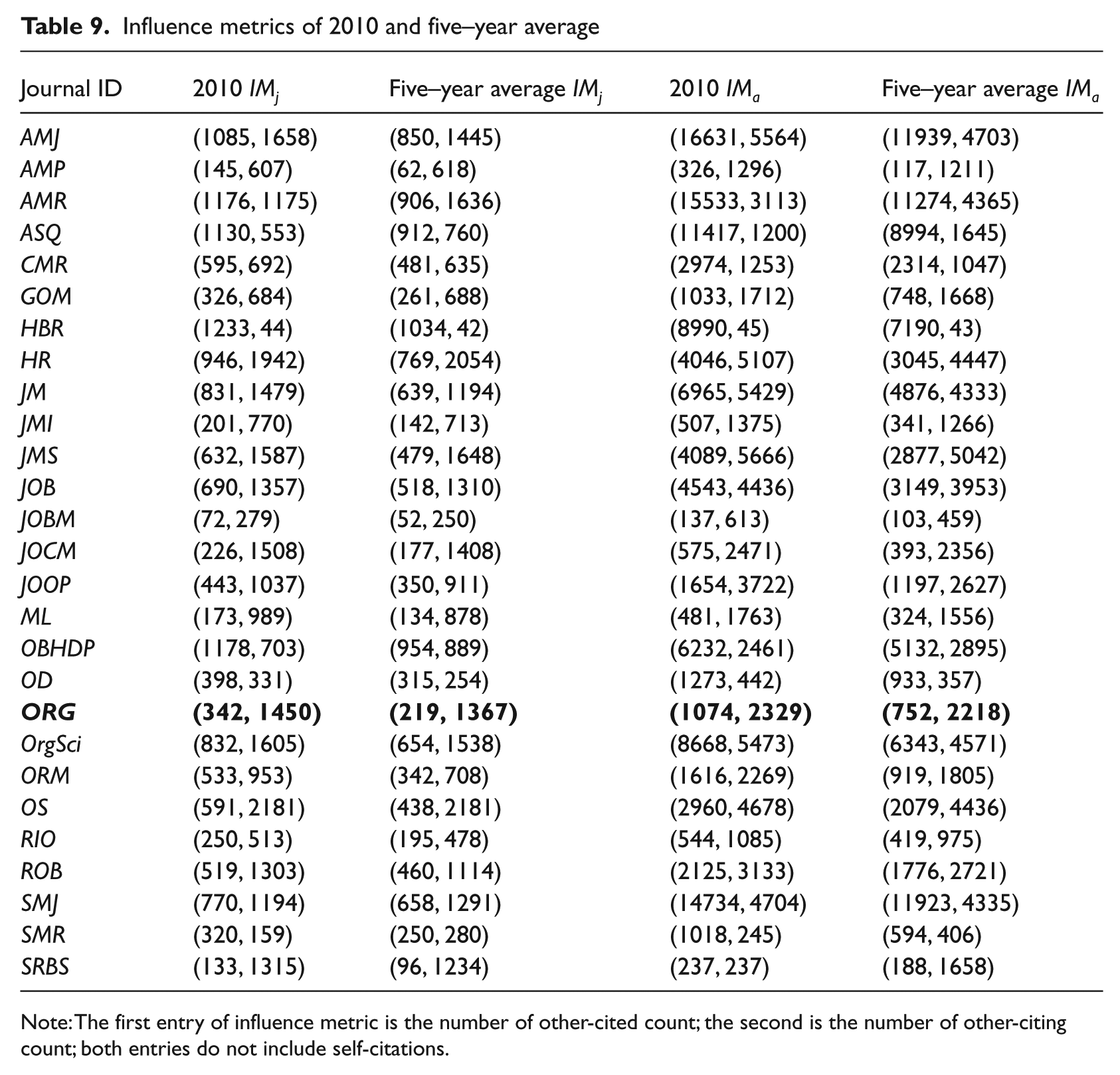

Table 9 shows the IM values for 2010 and the five-year averages for journal and article counts, and reveals some fairly clear patterns.

Influence metrics of 2010 and five-year average

Note: The first entry of each influence metric is the indegree (the number of other journals citing, or the other-cited count); the second is the outdegree (the number of other journals cited, or the other-citing count). Neither entry includes self-citations.

In terms of 2010 IMj, ASQ (1130, 553), HBR (1233, 44) and OBHDP (1178, 703) have a higher indegree than outdegree, while AMJ (1085, 1658), OrgSci (832, 1605) and OS (591, 2181) have the opposite, and AMR (1176, 1175) and OD (398, 331) are roughly balanced. Organization (342, 1450) falls in the high-outdegree group, which is to say that it cites much more than it is cited. When looking at the five-year average, most journals have a higher outdegree, except ASQ (912, 760), HBR (1034, 42), OBHDP (954, 889) and OD (315, 254). At the article level, the IMa patterns for 2010 and the five-year average are mostly the same, except that SRBS changes from balanced to high-outdegree. Taking Organization as an example, its citation network can be visualized as shown in Figure 4.

Citation network of Organization with five-year average IMj (219, 1367) and IMa (752, 2218)

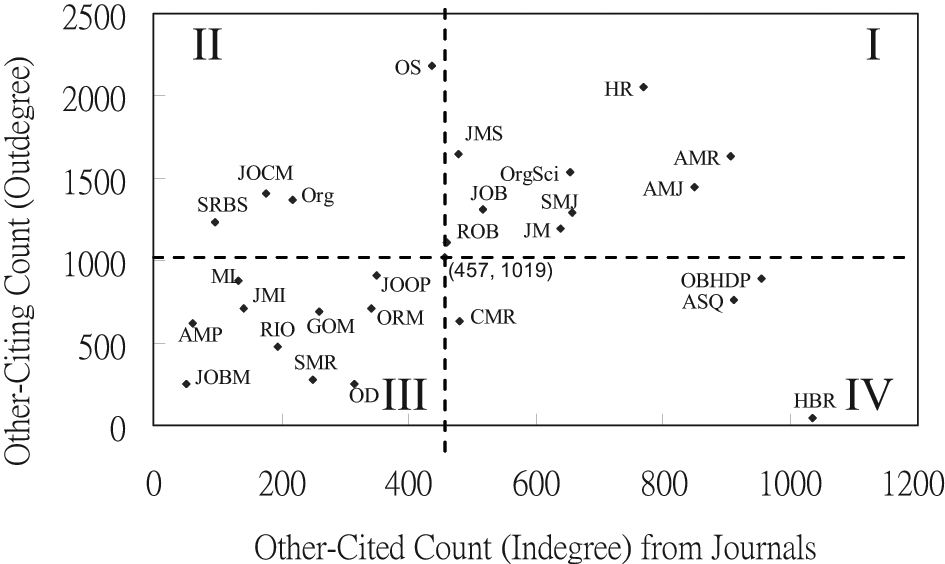

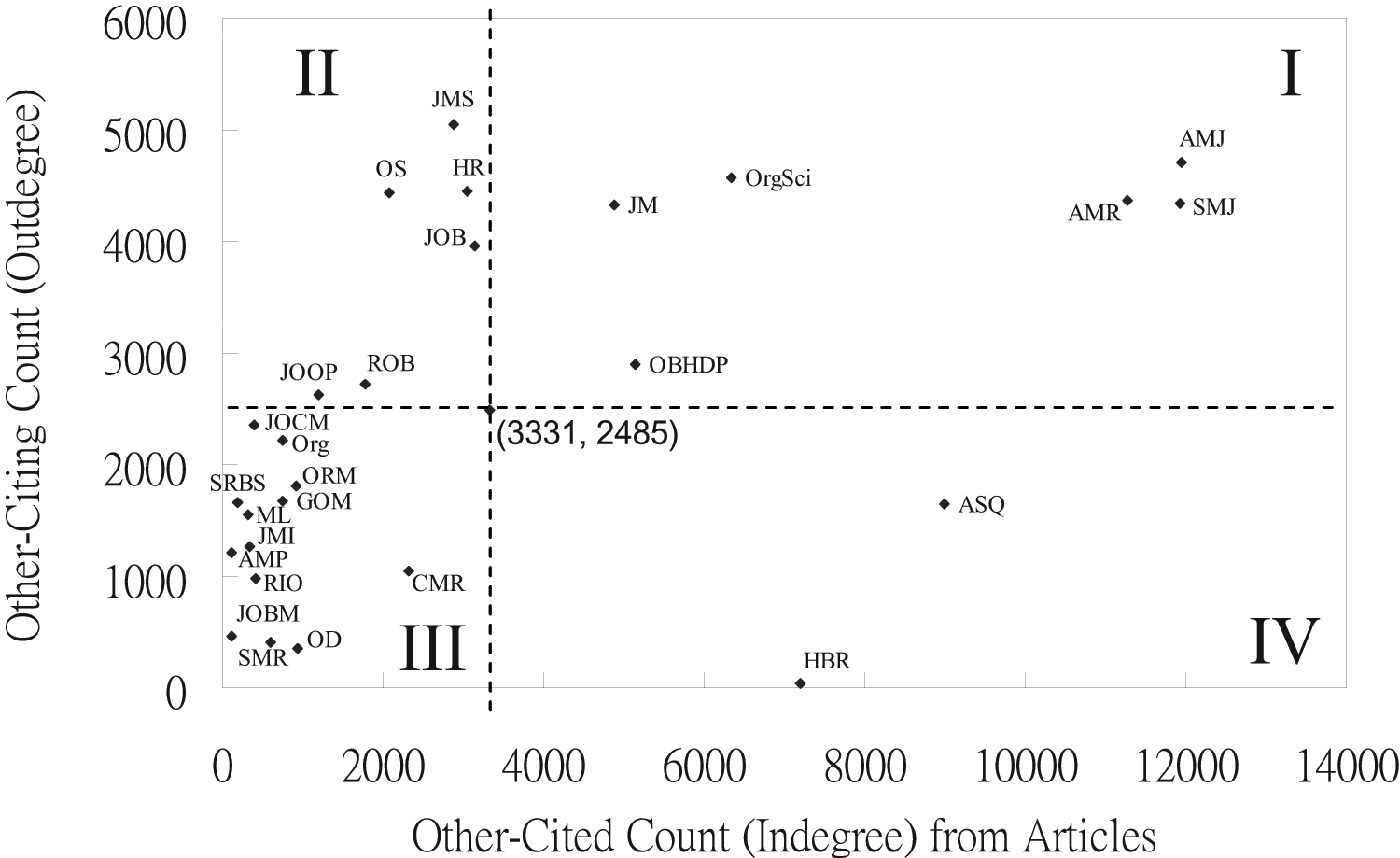

To visualize these influence patterns, we created two XY charts, shown in Figures 5 and 6: the former plots the five-year averages of IMj and the latter the five-year averages of IMa. The point (457, 1017) in Figure 5 represents the mean IMj values of all OM journals. Likewise, the point (3331, 2485) in Figure 6 represents the mean IMa values of all OM journals. In order to interpret the patterns, we divide the plane into four quadrants using the mean point as the origin, as in a Cartesian coordinate system. Journals in the first (I) quadrant have higher indegree and outdegree than the average, while those in the third (III) quadrant have lower indegree and outdegree than the average. Journals in the second (II) quadrant have a lower indegree but a higher outdegree than the average, and those in the fourth (IV) quadrant the reverse.
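The quadrant classification can be sketched in a few lines. This is an illustration rather than the procedure used to draw Figures 5 and 6: it assumes indegree on the x-axis and outdegree on the y-axis (consistent with Organization's IMj lying to the left of the mean point), and treating values exactly on the mean as above average is our own, hypothetical choice. The example uses five-year averages quoted for Organization and HBR in this section.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (indegree, outdegree), i.e. (x, y) on the chart


def mean_point(points: Sequence[Point]) -> Point:
    """Mean indegree and mean outdegree over all journals: the chart's origin."""
    if not points:
        raise ValueError("need at least one point")
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)


def quadrant(point: Point, origin: Point) -> str:
    """Cartesian quadrant of a journal relative to the mean point.
    Values exactly on the mean are treated as above average (an assumption)."""
    dx, dy = point[0] - origin[0], point[1] - origin[1]
    if dx >= 0 and dy >= 0:
        return "I"    # high indegree, high outdegree
    if dx < 0 and dy >= 0:
        return "II"   # low indegree, high outdegree
    if dx < 0 and dy < 0:
        return "III"  # low indegree, low outdegree
    return "IV"       # high indegree, low outdegree


# Five-year averages quoted in the text.
print(quadrant((219, 1367), (457, 1017)))   # II  (Organization, IMj)
print(quadrant((752, 2218), (3331, 2485)))  # III (Organization, IMa)
print(quadrant((1034, 42), (457, 1017)))    # IV  (HBR, IMj)
```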

Five-year average influence patterns at the journal level

Five-year average influence patterns at the article level

In social network analysis terms, a journal in the first quadrant should possess high degree centrality (Freeman, 1979), because its outdegree and indegree are both relatively high; that is, the span of its citation network is relatively large. This seems to suggest that such a journal exerts a high and wide degree of influence, especially when it falls in the first quadrant of both charts. Specifically, five journals have type-I journal networks as well as type-I article networks: AMJ, AMR, JM, OrgSci and SMJ. We could say that they are both knowledge producers and knowledge consumers, and have central roles in the reproduction and distribution of organization and management knowledge. Journals in quadrant III are simply less important, in terms of the raw numbers of citations. In contrast, ASQ and HBR consistently have unbalanced networks at both journal and article levels. They are more knowledge producers than knowledge consumers, with more incoming citations than outgoing, which seems to indicate a certain detachment from the field, but considerable levels of influence.

Meanwhile, Organization has a type-II journal network and a type-III article network; that is to say, in both charts its coordinates lie to the left of the mean point. Because of its type-II journal network, this journal is more of a knowledge consumer than a knowledge producer. That is to say, authors who publish in Organization are more influenced by work coming from outside OM, and have relatively little impact on OM citation networks. Finally, those journals which remain in the third quadrant are creating as well as consuming knowledge to a lesser extent, with a smaller citation network and a lesser degree of influence on the knowledge community. Essentially, the difference between type-I and type-III journals is influence in citation terms. The former have it, whilst the latter don’t.

Conclusions

So what does this sort of analysis tell us about the centrality and marginality of journals within this field? We are assuming here that journal citation patterns reflect the influence of a journal on other journals, and also that the citations are relevant to the article (Li, 2009). Then again, even ‘ceremonial’ or ‘legitimatory’ citations are relevant in a broad sense, since they indicate what counts in a particular field and hence what needs to be referenced. What is clear from the data is that flows of citations can show us something about where a journal stands within, in this case, OM. 2 Some journals are central to the field, both in terms of volume of citations and the places that their citations come from and go to. Other journals are less important, based on volume of citations, and yet others are both less important and more marginal, in terms of citing more outside the field. On this basis, Organization is a small and marginal journal in OM.

We’ll consider the broad implications of this position at the end of this conclusion, but before that we cover a few other matters. First, although self-citations could easily be manipulated by journal editors to increase IF values, we found no suspicious sharp increases in self-citations in any of the OM journals selected for this study. The steep jumps in IF we found during the 10-year period were not caused by sharp increases in self-citations. There certainly were high levels of self-citation in some journals, but they didn’t seem to be related to changes in IF. Of course, if an editor or professional association had influence over two or more related journals, they could encourage the author of an article in one journal to cite pieces from the related journal. Indeed, it might be that this is precisely how an author could establish the centrality of their contribution. Such practices could not be detected by the self-citation counts provided in the JCR database.

Second, more and more authors in other areas are citing OM journals. In this study, we found that both the numbers of articles published and the numbers of citations in the references sections remain relatively stable each year in each OM journal. Yet the rise in the number of citations from journals outside these 27 might suggest that OM has been becoming an increasingly important reference discipline since 2009, with top journals receiving citations from over 1000 non-OM journals. Whether these journals are outside the field of business and management in general is another matter, and it would be interesting to know just how many of these citations come from the social sciences more widely, or even the arts and humanities. That being said, as Oswick et al. (2011) argue, most organization theory ideas seem to be ‘foreign’ rather than ‘domestic’, with most OM journals citing more than they are cited.

Third, journals with high impact factor values tend to have a type-I journal network or article network, and vice versa. The only exception is ORM, which has a type-III journal network and a type-III article network. Since the self-citation percentage of ORM in 2010 is less than 10%, this is probably caused by its dramatic increase in the cited count from other journals, resulting in a steep ascent of its impact factor in 2010 (from 2.471 to 4.423). This case illustrates why the five-year average of IM values is a more stable basis for classifying and comparing the influence patterns of different journals than a single year's values. In general though, the higher the IF, the more central in citation terms a journal is to the field of OM.

In terms of the broader lessons for a journal like Organization, it seems clear enough that it is small and marginal. That is to say, in scale, it is a journal which is cited less often, which cites less often, which publishes relatively few articles and hasn’t been going for that long. Indeed, in the US journal field it barely registers in comparison to the large and older journals. Given its editorial policy (Parker and Thomas, 2011), this is exactly what we would expect, and in some sense it is a measure of success at positioning itself on the margins. If a journal claims to be ‘critical’, then we should expect (if the term means anything) that it will publish work which is critical of the dominant assumptions of the centre, and hence be seen as irrelevant or objectionable. In other words, we would expect fewer citations to a journal which was less involved with the core problems of a particular intellectual field.

What is also interesting is the sense in which Organization is an ‘interdisciplinary’ journal in terms of its citations. It appears to be looking outside OM much more often than inside for its inspiration and legitimation, which again could be both a cause and effect of its marginality. That is to say, any journal which looks outside its field for an influence network is very unlikely to be central to that field, simply because it is not centrally involved in its reproduction. This is also the case for any journal based outside North America, unless it makes huge efforts to become more North American in personnel and orientation (Grey, 2010). Of course, given the political and epistemological critiques which Organization has developed over the past twenty years, this isn’t surprising, and has often enough been noted in the pages of the journal. Perhaps what this article contributes is some evidence about the nature and features of that partly self-imposed marginalization.

We conclude by asking whether a journal could be critical of a field and still be central to it in citation terms. It seems unlikely, simply because a journal seems to need to reflect disciplinary orthodoxies (in method, epistemology and politics) in order to become an institution which reproduces a field, in terms of citation influence. In some sense, then, the cost of heterodoxy is the likelihood that work will not be read as much, will not be cited as much, and will count for less in ranking exercises. None of this might matter if the values of authors, readers and editors override more instrumental considerations, yet the question of influence still nags. Entirely beyond the scope of this article, but implied by it in any critical project, is the question of wider forms of influence, perhaps on the configuration and legitimacy of intellectual fields such as OM. What seems clear is that the audience for such writing is likely to lie outside the centre, which means that marginality and interdisciplinarity should be understood as strategic positions, not indicators of the failure to play a particular game.