Abstract

Young people are often assumed to be competent users of digital platforms; however, today's online environment is flooded with challenges, one of which is misinformation. We examine the social media practices of 14 Australian diaspora youth – a cohort often believed to be more vulnerable to digital risks and harms – and how they navigated online misinformation during the COVID-19 lockdowns. We find that while participants received some digital and media literacy education in school, this did not meet their specific needs or enhance their capabilities when it came to determining whether the information they encountered on social media was factual. We argue that school digital and media literacy education could more effectively support diaspora youth to navigate challenging content by building on the everyday competencies they enact in their social media use.

Introduction

Although misinformation is not new, the risks it poses to society have dramatically increased in recent years due to technological advancements and the prevalence of digital devices in people's everyday lives (Wardle and Derakhshan, 2017). As young people are so active on social media, they can be vulnerable to misinformation and can even be some of its most enthusiastic spreaders. Misinformation circulating during COVID-19 also showed that it could pose an immediate threat to diaspora communities. For example, the disproven conspiracy that COVID-19 was deliberately manufactured in China led to increases in hate speech and physical violence against people of Asian appearance, which prompted communities to respond by sharing hashtags such as #StopAsianHate (Tessler et al., 2020). Although there has been an increase in action by social media platforms to address misinformation since the outbreak of COVID-19, this has not been enough to disrupt the online misinformation environment (Johns et al., 2024). While platforms continue to face pressure to intervene in the spread of misinformation, there is also a need to better understand how users develop digital and media literacy skills through their everyday social media practices to respond independently and confidently when they encounter problematic information online.

This article examines the experiences of a group we describe broadly as diaspora youth, by which we mean those young people who migrated to Australia with their families or have one or both parents who arrived in Australia as a migrant, and who retain strong transnational community links, often through digital media. We focus on this cohort due to the disproportionate emphasis they have received in relation to policies that frame them as ‘at risk’ from online harms, including misinformation, but which often fail to acknowledge their competencies and agency in dealing with such challenges (Harris and Johns, 2021; Caluya et al., 2018).

There have been significant anxieties at the prospect of diaspora youth's digital activities becoming insufficiently supervised, managed, or controlled by adult guardians, rendering them particularly vulnerable (Centre for Multicultural Youth, 2021). A lack of digital literacy skills among migrant and refugee parents has been of central concern in Australian policy discourses around digital safety (Centre for Multicultural Youth, 2021; Harris and Johns, 2021). This has been accompanied by research and programs which have responded to increases in online hate speech targeting diaspora communities (e-Safety Commissioner, 2025). But, while this ‘at risk’ framing addresses an important need, there are also unintended consequences to such approaches (Johns et al., 2025; Harris et al., 2023) with diaspora youth often positioned in policy discourses through ‘deficit framings’, focused on their perceived lack of digital capabilities and safety, and their need for training, protection, and intervention (Livingstone and Third, 2017: 665).

In contrast, more critical approaches to refugee and migrant youth's digital engagement have emerged in digital and media literacy programs in Europe and other global contexts. Here, scholarship has recognised diaspora youth as critical readers and makers of digital media, with a unique skillset to address such challenges as online misinformation (Bozdağ, 2022; Bruinenberg et al., 2021). These changes reflect the push globally to increase young people's digital and media literacy to equip them to become informed citizens, with the ‘ability to effectively search for, evaluate and verify social and political information online’ (McGrew et al., 2018: 1), and to create safer digital environments.

In this article we explore, first, the digital and media literacy education that young people receive in Australian schools to address misinformation, and second, the kinds of informal resources or learning experiences that diaspora youth deploy to respond to misinformation in online environments. Although we define digital literacy as the technical and individual skills to capably manage one's conduct online (Pangrazio and Sefton-Green, 2021), and media literacy as the ability to understand and evaluate different forms of media including social media (Livingstone, 2014), we note that participants’ discussion of their experiences often revealed how these blended together; and as this article seeks to learn from participants, we deploy these definitions somewhat flexibly and propose a more integrated approach.

Our research suggests that while the diaspora youth who participated in our study were aware of the misinformation they may encounter online, their methods for distinguishing credible from non-credible information often varied from what they had been taught in schools. That teaching was limited, was not applicable to their circumstances, or tended to imagine young people as a culturally homogenous group who share the same digital education needs, rather than recognising that their learning is shaped by different experiences pertaining to race, cultural background, gender and sexuality, visa status, and more. This indicates that school digital and media literacy education is not currently being delivered in a way that meets the needs of young people in today's digital environment (see also Breakstone et al., 2021). Based on the findings, we argue that a more targeted level of digital education in schools that better addresses the needs and capabilities of diaspora youth is necessary to support them to confidently navigate contemporary digital environments.

Literature review

Recent efforts to support young people's digital literacy, especially in the school context, have been focused on managing risks of social media misuse with a strong focus on addressing the challenge of cyberbullying and other ‘inappropriate behaviour’ (Pangrazio and Sefton-Green, 2021: 22). The threat of ‘stranger danger’, that is, threats to safety and wellbeing that may arise through encounters with unknown people (usually adults) online, continues to be a major concern among parents (Zhang-Kennedy et al., 2016; Livingstone, 2014). Attention has been further drawn to young people's personal data and privacy, and the implications of this data being collected by platforms from ever-younger age groups, often without user knowledge (Livingstone et al., 2024: 2; Pangrazio and Cardozo-Gaibisso, 2020; Pangrazio and Selwyn, 2018; Pangrazio and Selwyn, 2019). However, while skills like password strength, privacy and data management, and avoiding potentially dangerous people online are valuable, such education addresses only one aspect of the challenges young people encounter online.

Another key concern is online misinformation, which poses a range of tangible risks to society. Vaccine misinformation that fuels vaccine hesitancy can lead to individuals refusing vaccinations for themselves or their children, allowing a resurgence of preventable diseases (Attwell et al., 2021). The manipulation of democratic processes globally since the election of Donald Trump in 2016, who shared false election stories from hoax websites, has also been a cause of concern for scholars who have long regarded digital literacy as a vital means of educating future citizens to be able to make informed decisions when participating in democratic processes (Wardle and Derakhshan, 2017). Indeed, Trump's 2024 re-election and his support for big tech operators’ preference to retreat from fact-checking and content moderation signals a worrying trend toward an even more acute ‘post-truth’ media environment (Swan, 2025; see also Pangrazio, 2018).

The broad range of content that falls under the overarching category of ‘misinformation’ also contributes to the complexity of addressing it. More than just errors in information or deliberately fabricated content like ‘fake news’ or conspiracy theories, this category also includes rumours, unverified information, and manipulated images that aim to distort truth, including the spread of misleading content for money or advertising revenue (Vraga and Bode, 2020; Tandoc Jr. et al., 2018). Today, many people encounter misinformation on a regular basis, especially if they are active on social media; however, most users dismiss it (El-Muhammady, 2019). While most research and commentary on misinformation has called for improving skills to identify when news and public health information has been manipulated, owing to the direct impacts this has on public health, wellbeing and democracy, the misinformation content that has been designed to target younger audiences and the risks this poses has received less attention at a national level.

A key example is the blend of misinformation and misogyny spread through social media by far-right influencers such as Andrew Tate, a conspiracy theorist and social media ‘life coach’ (Williamson and Wright, 2023). Tate's content has been reported to have resonated particularly with young men, such that Australian educators devised resources to deal with ‘Andrew Tate-style’ misogyny in Australian schools (Premier of Victoria, 2024). Feminist digital media scholars Banet-Weiser and Miltner (2016) and Haslop et al. (2024) have described Tate's content as appealing to marginalised youth. This is owing to its mix of misleading content that uses pseudo-scientific aesthetics to manipulate users into believing biased information to be ‘facts’ alongside the use of humour, including memes, which have broad appeal to young users, who, even if regarding the content critically may reserve their judgement to focus on its entertainment value. Although his YouTube channel was suspended, he is just one of many online lifestyle influencers who deliberately promote dangerous and inaccurate information to marginalised young people by drawing on affective and popular social media formats and cultures.

Similarly, wellness influencers, who blend personal, relatable content with medical advice, have been increasingly open to influence from conspiracy theorists and far-right actors since the onset of COVID-19 (Baker, 2022). Being a part of any online community can be affirming but also makes thinking critically about the information received more difficult (Milton et al., 2023). However, as creators on Instagram and TikTok chase ‘popularity and view counts before verifiable information’ (Milton et al., 2023: 10), a motivation supported by platform economies, the range of actors and agendas, and the communities that are built around them, can pose threats to people's safety. When considering the nature of the misinformation environments that young people are routinely exposed to, it is therefore important to consider not just news topics such as politics, vaccines, and climate change, but also the heavily distorted worldviews presented to increasingly young audiences through lifestyle and entertainment formats, and the way platforms are designed to promote and personalise such content.

A common strategy to counter misinformation is fact-checking, either as done by third parties and integrated into platforms, or as an individual strategy. This is despite its ‘significantly limited’ ability as a method to change people's perspectives when the correction runs counter to their worldview and personal ideology (Walter et al., 2020). Pangrazio (2018: 9) further argues that the value of fact-checking mechanisms is limited because ‘the fact [they] are arbitrated by traditional “authorities” undermines individual agency and the role of critical digital literacies in everyday life’, and that today's digital literacy education must examine the mechanisms and motivations of online platforms themselves, so that people are ‘discouraged from seeing platforms as neutral’ in what they promote.

However, educating young people to think critically about informational content and its distribution by platforms poses its own challenges. For example, a recent large-scale study of American high school students found that the majority considered misinformation shared on Facebook and other social media sites to be credible, with the authors attributing this finding to a willingness to take content at face value without looking ‘laterally’ to investigate the credibility of the source (Breakstone et al., 2021: 512). These international findings are also reflected in the Australian literature on news literacy among young people, with some variations. For example, in the first national study of young people's news media literacy, Notley and Dezuanni (2019: 689) found that, while social media was a preferred source of news for young people, participants were ‘not confident in spotting fake news’. Similar to Breakstone et al., they also found that participants ‘rarely or never check the source of news stories’ (Notley and Dezuanni, 2019: 691), a finding they attributed to how news media literacy is taught in the school curriculum, with an emphasis on ‘media production skills… over critical literacies that can support students’ capacity to reflect on and critique media content, distribution and consumption’. Also of concern is that young people predominantly seek out news only when it is of ‘personal relevance’, otherwise believing it will find them if it is important enough (Blakston and Waller, 2022: 111). This demonstrates the significance of contemporary social media platforms which have personalised news and other informational content to users, so that it has become common to accept that what is relevant will ‘find’ them rather than young people reflecting critically on the source and credibility of the content.

While this scholarship informs our study, there are some gaps that we seek to address in this paper. Firstly, the literature has consistently pointed to deficits in young people's capacities to discern credible and non-credible information. While our findings also highlight young people's concerns regarding being able to verify information appearing in their social media feeds, they also identify diaspora youth capabilities in navigating and evaluating information online, which often depart from school curricula and programs. Secondly, while the digital literacy literature has focused predominantly on keeping young people safe from harm, the media literacy scholarship has centred news literacy in digital environments, a skill that is viewed as vital for young people to learn to become ‘informed and engaged’ citizens (Notley and Dezuanni, 2019). But this reductive focus on news can ignore the full range of information sources accessed on youth-oriented platforms like TikTok where misinformation is part of the content ecosystem, including information on social and lifestyle issues and health issues. In addition, news, information and entertainment tend to be more blended in the content young people engage with on these platforms, meaning that critical appraisals may be less likely. This leads to a third gap, whereby young people tend to be regarded as a homogenous group when it comes to navigating and evaluating information online. We address this gap through an empirical study with diaspora youth: a diverse group themselves, whose needs and capabilities are not always attended to in the literature, but who demonstrated a range of digital and media literacy skills and needs related to their experiences.
Digital literacy education for diaspora youth, where it does exist, can sometimes overlook key areas of need, while at the same time failing to recognise the capabilities young people already possess and focusing instead on a deficit model, as outlined in the Introduction of this paper.

Notwithstanding this, there is a small body of research in which the focus is more squarely placed on migrant or diaspora youth capabilities in navigating complex information environments. In a study of the digital media practices of recently arrived young migrants in the Netherlands, Bruinenberg et al. (2021: 36) found that the participants already had a range of media literacy skills with which to navigate societal barriers and challenges. Additionally, the ability of migrant youth to learn Dutch more quickly upon arrival meant they frequently deployed their digital and social skills to act as translators on behalf of their families, aiding with the family's adjustment to life in a new country (Bruinenberg et al., 2021: 39). Young migrants may also maintain connections to their home countries through accessing the arts online, such as viewing TV shows or listening to popular music, which Stewart argues is a kind of media literacy for the purpose of entertainment and to maintain a sense of identity (2014: 361). Young people's use of social media also extends beyond community engagement and entertainment, as they also seek out health information, legal and lifestyle advice, and insights into gendered and cultural dynamics (Bozdağ, 2022: 310). Alford (2023) found that some Australian migrant youth who are still learning English adapt and apply the media literacy skills they learn in the classroom to the social media content they are exposed to outside of school. She argues that material used in the classroom could be expanded to include the types of content more frequently encountered by young people on social media, such as short video formats (2023: 275).
These capacities for digital and media literacy to be developed and deployed outside the classroom highlight the technological capabilities of migrant and diaspora youth who use digital media, and skills acquired through formal and informal education, to help them navigate the settlement process and sustain cultural connections and identity. However, these capabilities, as well as how they navigate the unique challenges presented by the contemporary information environment, are often overlooked in the literature. This paper addresses these gaps.

Method

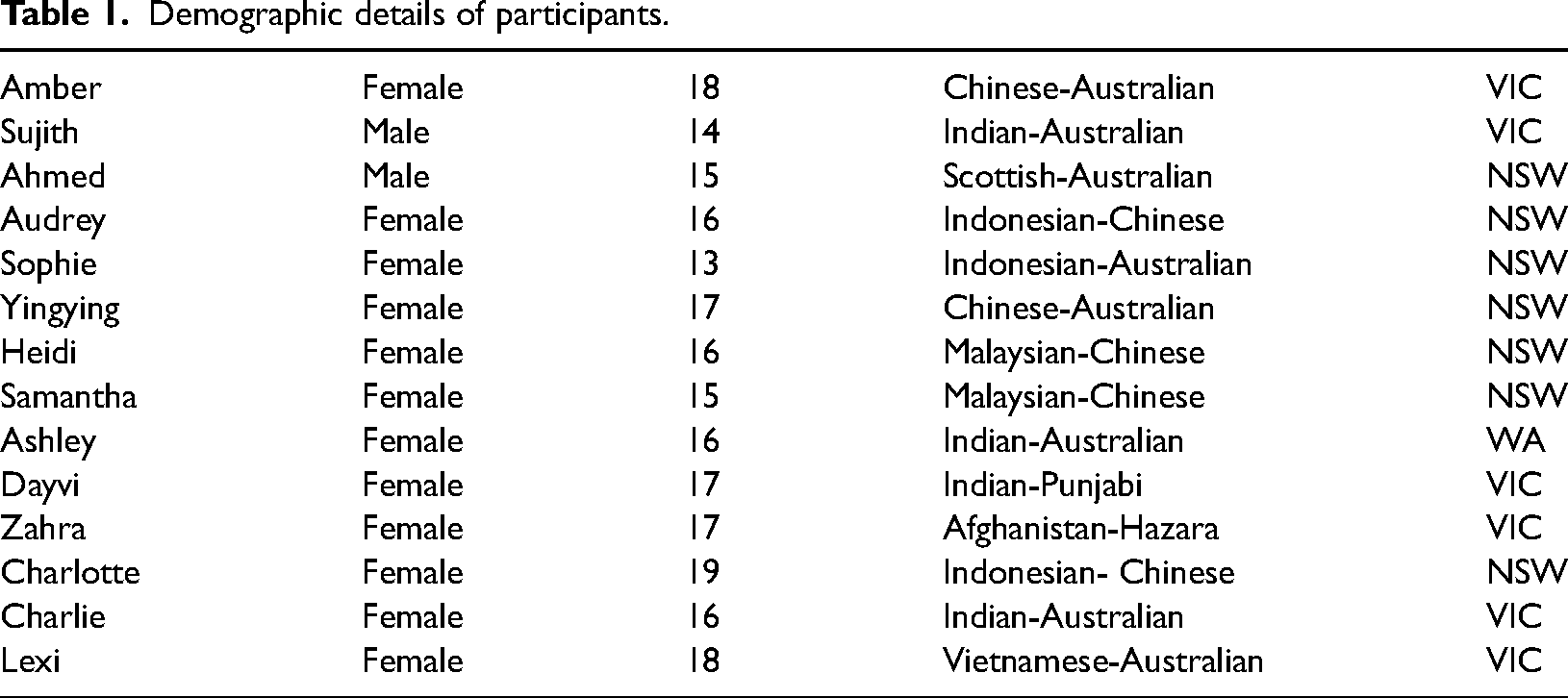

This paper draws on 14 semi-structured interviews with diaspora youth, aged 13–19 years old, undertaken as part of a three-year project examining diaspora youths’ everyday digital citizenship practices. The project received ethics approval from the University of Technology Sydney HREC (ETH19-4206 and ETH21-6565) and relevant state government approval (SERAP No. 2019520). Interviews were conducted between 2020 and 2022, covering the most intensive periods of COVID-19 lockdowns and tentative re-openings in Australia, during which time most participants experienced online schooling. The study involved an online survey with 356 students recruited through a NSW school and social research survey panels (survey data is not a focus of this paper), and interviews with 26 diaspora youth recruited through multicultural youth services. A subset also participated in digital ethnography activities via tasks sent through an online digital research platform (Indeemo). This included sharing screenshots or creating short videos about current issues they were engaged in on digital media. This paper analyses the qualitative interviews and illustrative digital ethnography data of 14 participants to explore these young people's experiences with education, encounters and strategies regarding misinformation online. All participants (and for those under 18, their parents/guardians) returned a signed consent form prior to the commencement of the research interviews, which were carried out via Zoom, and all interviews have been pseudonymised and de-identified for publication. The semi-structured interviews reflected the following themes: diaspora youths’ everyday social media use, their social networks and connections (local, transnational), how and where their social media use overlapped with civic and political practices, and how they defined such terms as digital citizenship.
Each interview was approximately 30 minutes in length, and participants received a $25 gift card in recognition of the contribution of their time. Interviews were thematically coded following transcription, with misinformation and school education about online safety and digital literacy arising as a distinct and intriguing topic. Participants were drawn from a broad cross-section of diaspora communities in Australia and included Australian-born diaspora youth and young people who had migrated to Australia on a humanitarian visa. Table 1 provides an overview of the demographic details of participants, including age, gender, cultural background, and location within Australia.

Table 1. Demographic details of participants.

Findings and discussion

The three broad areas of findings in this paper are: diaspora youths' concerns about the issue of misinformation itself as a part of the online environment; the types of education and learning experiences that informed their navigation of truth and potential misinformation online, and whether these were useful and relevant to their needs; and the actions they undertook when engaging with potentially non-credible content. Across these three categories, we find a range of topics where young people actively sought out information online and where they encountered misinformation, including on topics such as politics, forms of hate, such as misogyny and racism, and health. This sample of topics indicates the high stakes that misinformation can have for diaspora youth, such as contributing toward harmful experiences of racism and misogyny, negatively influencing health decisions, or developing distrust in news, thus potentially stifling their ability to be politically engaged. Overall, we found that participants drew on a range of different educational experiences to guide their actions online, yet most of the formal digital and media literacy education they received emphasised basic online technical skills to encourage safe use, along with lessons on how to fact-check content on static websites, and overlooked the types of challenges they actually encountered.

Concerns about the issue of misinformation

Although all participants used social media and considered it beneficial to their lives, particularly during COVID-19 lockdowns, several participants raised concerns about the increasing difficulty of knowing whether information was credible. The potential impacts of this on the ability to engage with political content were of particular concern to participants like Amber (aged 15, Chinese-Australian), who raised a then-recent example of having seen Russian propaganda on social media which used a deep fake to impersonate Ukrainian president Volodymyr Zelenskyy:

…It's becoming more realistic. Like for deep fakes too, I don't know if you saw the video of Zelenskyy […] saying how he surrendered, but it seems so much like him, but it wasn't him. So in the future, I think online, that kind of factor of […] differentiating between what's real and not […] I think we might need to become more knowledgeable about that.

Amber's alarm at how convincing the deep fake was led her to believe that it was an important topic young people needed to learn more about. However, she also felt that young people were becoming more aware of how fake news was being amplified by platforms, while some platform cultures also exposed young people to narrow and ‘toxic’ content:

…Because there's been a rise in people recognizing maybe incel culture or like toxic masculinity, or with, even with the rise of like, for example, Trump, that's brought a lot of like fake news topics on to the rise, onto the surface. And people began realizing how they should take note of what they're viewing actively. Or maybe as I mentioned, like Reddit, or Discord, depending on how you participate, it can provide a very narrow set of perspectives […] it might lead to a very toxic culture. That's something that I became more aware of […] And I guess my perspectives on how I behave on social media and the internet has become more, more responsible as well.

Thus, Amber was concerned about the potential impacts of dangerous AI technology and platform environments where ‘fake news’ tended to be more prevalent, contributing to an already challenging online environment. Even so, she was hopeful that more young people were aware of these issues, and that engaging critically and ‘actively’ was one way to navigate online misinformation.

Sujith (aged 14, Indian-Australian) expressed mixed feelings on the presence of misinformation on social media, considering it both the responsibility of the viewer and the poster to verify if something was true or not, but also describing contradictory impulses of not caring if content was true as long as it was funny, as he commented in an entry on Indeemo (24/8/22), ‘It's mainly funny memes, so I don’t necessarily need to check if its true … it's also very important to tell whether something is fake or real because that's what half of social media is about. The huge cycle in which somebody shares something, somebody receives it and then they have to decide whether to share it on again. Or if they think that it's fake and they don't share it or they don't see any point in sharing it. As long as its funny, I don’t really care where it comes from’. He considered this to be the way he and his friends engaged with social media. But, despite this admission, he also expressed an awareness of the importance of verifying political content and regarded this to be an important aspect of being a digital citizen online:

Although he considered the online environment to be enjoyable and a good place to find ‘lots of information’, this was coupled with the need to navigate the misinformation that crowded social media.

These responses indicate that the diaspora youth in our study, while wanting to access news and information and engage in Internet humour with their friends, also sometimes did so ambivalently, knowing that what they shared may be incorrect. At other times they engaged only minimally for fear of sharing misinformation. However, as Amber's response indicated, for other young people who wanted to participate fully online there was little option but to be active, critical and engaged, extending engagement beyond mere fact-checking or verifying sources to also think about the platforms’ priorities in distributing information, and to be mindful of which platforms can be more ‘toxic’ by virtue of their design.

Digital and media literacy education in and beyond the classroom

Despite the concerns that participants expressed about online misinformation and unverified information, most only had sporadic lessons on the topic handled by different areas of the school, rather than through a consistent and structured approach. For example, Lexi (aged 18, Vietnamese-Australian) said she ‘never really got actual lessons’, but she recalled one afternoon following a school swimming carnival where students were given ‘pamphlets about like online safety and stuff from the government’ to read. Lexi had received some lessons regarding online misinformation in primary school; however, these were infrequent, and led by the school's teacher-librarian during rare whole-class visits to the library:

[…] I remember having a lesson in primary school about going through like legitimate sources. […] I remember we had to read through these like websites and we were like, how'd you figure out what if it was fake or not? And like, by the end of it, like it turned out like this, it was like this octopus website and yeah, it turned out to be fake and stuff, [I] thought it was real. It was just interesting. I think there might have also been a class in secondary school, like to identify like a fake website from a real website and why it would be, and it was just like a class that was, yeah, I think that's pretty much it.

When asked whether any of these lessons from either primary school or high school had influenced how she used the internet or responded to potential misinformation, Lexi clearly expressed that they had not been of value to her:

Honestly, I feel like I learn more about my like digital citizenship and like how to protect myself from like online sources, from like social media and YouTube and stuff versus like anything that they've taught, honestly.

In the absence of more relevant school instruction on navigating truth in the online environment, it was everyday social media participation and self-directed learning that enabled Lexi to navigate the online environment with more confidence. This aligns with critiques of the current digital and media literacy curricula in schools (Pangrazio and Sefton-Green, 2021; Breakstone et al., 2021) where the focus is either on protection from danger or where learning to detect non-credible information relied on static websites and other sources young people are not frequently engaging with in their everyday social media participation.

Ahmed (aged 15, Scottish-Australian) similarly expressed that school instruction was limited to basic advice about password protection and how to critically evaluate websites and other content that wasn’t specific to what young people were more likely to encounter on TikTok or Instagram:

Ahmed: …They use the same old websites and the same things that aren’t really specific or relevant, but maybe helpful for some people. I don't know.

Interviewer: So it's not relevant because you already know all of it?

Ahmed: Yeah. Most of it's just common sense, but it's not like ‘if you're using TikTok, you shouldn't do this. If you're using Instagram, don't do this stuff like that.’ It's just, ‘don't give you password out. Don't do this. Don't do that.’ Which most people know.

When asked whether these lessons had influenced his use of digital platforms, Ahmed answered ‘No … Nope … Nope, not at all’. This view was echoed by Audrey (aged 16, Indonesian-Australian), who believed that by the time she was using social media, she already had some awareness of how to navigate the environment confidently according to the technical skills taught in primary school:

I sort of like knew all that stuff already. Like we're told it from like a young age and the way it's like repeated, I feel like it's very basic information. So I mean, I follow most of those rules, but it's not necessarily that was influenced by what we're learning at school.

By the time digital literacy education about being online was provided in Year 9, she had already been an active user for years and was familiar with the basic information they provided.

Despite participants’ concern about misinformation, the specific forms of misinformation that young people encountered on social media were rarely covered in school. For example, Sophie (aged 13, Indonesian-Australian) stated that in her classes they ‘haven't learned like a lot of things about like rumours on social media, about someone spreading something. But we have learned about like, don't give your information out to other people’, such as one's location, or in the case of Samantha, ‘keeping your passwords private and things like that’.

YingYing (aged 17, Chinese-Australian) shared that her education about using the internet and social media was ‘only in like primary school, but that's only about like cyberbullying and all of that’; likewise, Heidi (aged 16, Malaysian-Australian) said that only issues around cyberbullying had been taught to her, but that ‘people don't really pay attention to it’ anyway. The limited scope of school digital and media literacy education, and the narrow set of lessons addressing misinformation in particular, ultimately did not prepare participants for the threat of misinformation and the challenges it presents in their everyday social media use.

Amber shared an anecdote about how a Year 9 English lesson, although not framed as a digital or media literacy lesson, had profoundly influenced her understanding of social media and online environments: …I think [the lesson] was also a lot about your identity and being able to differentiate your ego and what you actually believe in, oh, what's true to you. And actually having that at the back of my mind, going forward nowadays, as I've become a little bit more mature, that's helped me like navigate issues, whether it's online or in-person about social issues, particularly, and knowing how to develop myself in that environment. Because I think that's the thing that's helped me be able to navigate online because there's a lot of inflated egos online and just knowing how I should present myself or curate my identity online […] that's been very helpful to me. I do remember in primary school, there's a lot […] about like basic lessons, not putting your personal information online. That's very basic lessons, knowing how to be safe online[…]. But I think it's knowing how to understand yourself online, cause your online identity is very different from your real-life identity. Like being able to understand yourself, that's very instrumental for safeguarding, how you present yourself online.

Amber's anecdote echoes other participants’ experience of finding that explicit digital literacy lessons in school were basic and ultimately had limited value. However, unlike other participants, she benefitted from a high school English lesson that tackled larger questions about navigating selfhood and identity, and applied this lesson to her interactions with online users. This greater understanding of herself on a personal level became ‘very instrumental for safeguarding’ herself online.

Actions and responsibility

When it came to determining the credibility of information on social media, participants developed a range of literacies and practices to evaluate the trustworthiness of news and political information and used these to determine whether or not to share content with others. For some interviewees like Amber, one important way to protect herself from fake news was to view social media as an inherently untrustworthy source, because of ‘how biased people's perspectives can be’, including people in her social network and the accounts she followed. This led her to only trust news from news websites rather than social media: It's very easy for me to just fall into that rabbit hole of a very closed viewpoint. So I always use, like, I go on websites to read news like The Guardian or like The New Yorker or like The ABC, The Conversation, they're news sites that I can trust their judgement in to provide a very analytical and non-biased viewpoint. Yeah. So I never use social media for news.

For Amber, the risks posed by both ‘fake news appearing more and more’ and the echo-chambers of ‘closed viewpoints’ that could be created by social media design were something to be increasingly mindful of as she got older. As part of her intention to develop ‘a more mature mindset’ to things in general, she felt she had ‘a personal responsibility to be able to develop my critical thinking skills on the internet’.

Sujith was also a keen user of social media, but had a different approach to navigating misinformation. Instead of treating news and related topics as specifically requiring fact-checking attention, he considered online content to be mostly for entertainment purposes. In fact, he tried to avoid engagement with politics, because ‘a lot of the times people make jokes and sometimes they can be like on the fence, but they’re meant for just like, as a joke,’ and he wanted to avoid negative reactions to his posts. This also influenced his decisions about what to post: ‘if I think it's a joke, then yeah. I'll share it. But otherwise if I think oh this might be controversial and then I just leave it.’ This demonstrates how difficult it is for young people, who use social media most often for entertainment, to switch into a critical mode when consuming news presented as humour or a joke. However, Sujith did sometimes find himself wanting to share posts about more serious topics. For example, he regularly checked in on news, particularly from India. But he was reluctant to trust the news he encountered and rarely shared it: ‘because things float around a lot, here and there, and to try to find the source of everything gets tedious. Most of the time I leave it and react, but sometimes I share it. It sort of depends on my mood.’

Samantha (aged 15, Malaysian-Australian) expressed a similar sense of responsibility to Amber when it came to sharing information, as well as an aversion to putting other people offside. This was especially the case with social issues, which she believed needed to be understood in depth in order to take ‘meaningful action’: Maybe, not just me, but taking the time to learn about something and then make sure that I'm telling people what I believe is right. But not forcing it onto them, taking time to make sure that what I'm reading is true instead of […] fake news. And then maybe figuring out what I can do to help people from there, or if I can get other people to help me as well, because sometimes it's not just something that you can do by yourself.

Samantha's strategy when confronted with potential misinformation about social issues was to refrain from acting until she came to understand it well. Ashley (aged 16, Indian-Australian) was similarly mindful of the perspectives of peers when it came to evaluating the truth of online information; however, her approach differed in that others’ opinions were in part the metric by which she determined reliability: I mean, if [posts] have like good sources. If it's … if … also a lot of timing, it's not a good way to decide, but if something's like, it's like a post is really popular. I think like, ‘Hey, so many people are saying that it's good’ and I'll be like, ‘okay, I trust this’…

Ashley's strategy for navigating the online environment was therefore partially dependent on the standard approach of checking sources, but for the most part, she relied upon the opinions of peers, despite being aware that it was not the most reliable method. This demonstrates that, although peer-based and informal learning often helps young people to critically navigate misinformation online and come up with strategies to guard against sharing misinformation, it can also lead young people to be swayed to trust information as long as it is shared by trusted members of their network.

Charlotte (aged 19, Indonesian-Australian) accessed mental health information from an Instagram influencer and shared it with friends, but had difficulty determining whether the information conveyed by health and wellness influencers could be trusted: Sometimes I share info from this account in my WhatsApp and Discord groups, mainly with people from Sydney. I think she offers a slightly different take on psychology when compared to other accounts but I do question what she says at times. She links her sources usually but sometimes it can be difficult to determine reliability because of how many journals there are that cover what she talks about, each with their own views and findings.

Charlotte engaged with the information she encountered by confirming that the poster was basing her messaging on academic journals, while retaining a healthy dose of scepticism when it came to interpreting the posts. However, the number and complexity of the academic journal references also made it more challenging for her to assess the reliability of the health information. As mentioned earlier, pseudo-scientific materials presented as evidence by online influencers are often difficult for young users of social media to verify, despite influencers often being a source of community- and health-specific news and information.

Charlie (aged 16, Indian-Australian), who was interested in information about both mental health and social movements such as Black Lives Matter, relied more on the prominence of information as well as the popularity of the posters to determine whether it was reliable: I share current affairs that are given a lot of attention in social media eg. BLM movement and mental health awareness posts to my close friends on Instagram. I don’t usually fact check this information as I only share it once I’ve seen various posts highlighting the same issue and as a result assume it is true. I also only share the information from popular activists accounts on Insta as I assume that because the owners are so passionate about human rights and awareness they will only give accurate information.

Rather than using the usual fact-checking method taught by schools, Charlie evaluated the trustworthiness of those who were posting the information and the consistency of the messages. While following trusted sources was enough for Charlie to accept information as credible, this process also carried the risk of believing potentially biased accounts or misinformation.

Devi (aged 17, Indian-Australian) was also passionate about learning about social issues such as feminism, and was active in responding to misogyny when it arose on her social media feed, as well as in engaging with other culturally relevant topics, such as diaspora-based TikTok content. Equally, she was under no illusion that the content she engaged with was always factual, given that much of it was opinion-based. However, this was not necessarily a negative experience for her, but rather an inherent part of the online environment and of connecting with community-based media: I love [social media]. I learn off it a lot. I see all these facts and stuff though. I am aware that a lot of this stuff is made up. […] I don't know if it's real or stuff, but you know what, at that time it's, for me, it's interesting. And I learn from it. So it's definitely a good place to learn from.

Although relaxed approaches to assessing unverified information were used with little consequence in some low-stakes scenarios such as those above, there were also circumstances in which participants’ difficulties in navigating online information environments caused more serious stress. For Zahra (aged 17), who had arrived in Australia from Afghanistan on a humanitarian visa prior to the Taliban regaining power in Kabul in 2021, watching the events in Kabul unfold online in real-time through platforms such as Twitter was both distressing and highly confusing to follow: I give up on following the news because it was so frustrating. There were like newsfeed that were fake and you couldn't actually rely on them. So I actually don't know what's happening in Afghanistan right now […] But I believe that Pakistan is like, like everyone else, said that it's Pakistan's fault side. I believe it's the same because who else can support them? And it's not only Pakistan. It might be some other countries that's, you know, behind. And that's why they created on social media, they created this hashtag #SanctionPakistan that just to raise awareness as well as like telling people that it's no one but Pakistan who supports them.

Despite not considering the news reliable and feeling she had little information to determine whether the claims she encountered were true or not, Zahra made social media posts at this time with the hashtag #SanctionPakistan. She emphasised that this was not to ‘hurt someone's feelings’, but out of a need to do something in a crisis, even if ‘it's not going to do any good’. She also reported seeing scams on TikTok where people claimed to be raising money to distribute medicines to people who had been hurt in the war, ‘who were like creating profiles to just, you know, get money’. These bad actors further obscured what events were genuinely unfolding in Afghanistan, and as a result she ‘couldn't actually decide which one was correct. Which one was like, you know, the fake. So it was hard.’ In a time of crisis that affected her and her community so closely, Zahra was understandably unable to navigate the information she encountered from an objective standpoint; yet all the same, she felt a need to contribute to activist campaigns online out of a sense of responsibility to do something.

Conclusion

Our participants valued basic online safety instruction, and, to a lesser extent, the media literacy education they had received, but expressed numerous concerns about navigating online environments that were more complex than what had been addressed in school. Additionally, while some participants responded to dubious content by putting in the time to educate themselves about a particular topic using consensus-based sources, this was not the only strategy. Although a few participants deferred to those they perceived as experts, like news websites, some simply accepted the ubiquity of misinformation, and viewed social media as predominantly a tool for leisure or engagement with social issues they cared about, and did not concern themselves with the credibility of the content they saw. The school-favoured tradition of weighing up the credibility of a source and its claims, which requires one to suspend their current activities for a potentially lengthy and dry undertaking, was limited in its uptake. This is unsurprising given the fast pace and complexity of today's social media environments, and the social and interest-based purposes for which young people used them.

We make three interconnected propositions on the basis of these findings, relevant to a range of digital and media literacy education providers. The first proposition is that digital and media literacy education about online misinformation and unverified content would benefit from incorporating the forms of media that young people are likely to be exposed to, such as short videos, deep fakes, and influencer content. The examples our participants provided, of media literacy lessons in school examining hoax websites or reading educational pamphlets, were not relatable to the content young people actually engaged with online, and what was applicable was widely considered common sense and a waste of time. Echoing Alford (2023), we suggest that basic materials like static images or websites be used as an initial foundation, from which educators could expand to incorporate examples from contemporary social media platforms that are much more relevant to young people's information environment. While this may be complicated by government initiatives to ban social media for young people aged 18 and under, we believe that there is a need to listen to young people and learn from their experiences as they engage with youth-focused social media and other types of media.

Second, we suggest that the topic scope be broadened to encompass the concerns that diaspora youth engaged with and saw being debated online, including content related to global social issues, political and social movements, and health information. Lessons should also address how this content was presented: not as formal news articles, but as highly emotional claims or opinions, often shared by influencers and content creators whose passionately held worldviews may lead them to inadvertently share misinformation.

Third, we suggest that there be more recognition of the diversity of online communities that diaspora youth and newly arrived migrant youth belong to, and the different challenges that they face when dealing with misinformation. For example, the results showed that many young people participating in the study, while worried about misinformation online, were also able to draw upon existing digital capabilities to navigate away from potential sources of political misinformation and to resist the algorithmic personalisation that may produce echo chambers. They also drew upon critical skills, often learned through more informal, peer-based learning or from social media itself, to discern where social issues and information may have been manipulated. These skills are important to acknowledge and we argue that they could be incorporated into digital and media literacy lessons through co-designed activities, drawing upon principles also practised in other contexts (Foster et al., 2024). We also acknowledge that, for newly arrived migrants, much of this peer-based learning takes time to build up, and misinformation might be more difficult to detect. In these contexts, formal education may be particularly important, so as to reduce the stress of navigating online environments. This further emphasises the importance of introducing principles of co-design, so that educators can invite young people to consider how to engage critically with the content they find in their newsfeed and help them to evaluate information encountered on social media. Such an approach can encourage young people to go beyond managing their personal profiles and responsibilities to others online, to recognising their power to act confidently in relation to assessing contentious debates in the online and offline world.
As the challenges posed by misinformation are only likely to grow more complex in an era of pervasive AI and questionable content, preparing young people to navigate this environment must include recognition of the skills and perspectives they already hold, and their potential to create change for the better.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Australian Research Council Discovery Grant DP190100635, ‘Fostering Global Digital Citizenship: Diaspora Youth in a Connected World’.