Abstract

This paper studies the elimination of undesired signals from a mixture of captured signals in body area sensing systems. A series of filtering techniques, including a priori and adaptive approaches, are explored in detail. Each technique decomposes the signals along the axes of a new coordinate system to separate the desired signals from other sources in the original data. Within a case study in body area systems, a motion capture scenario is designed, the signal decomposition techniques are critically evaluated, and a new one is proposed. Applying the studied filtering and signal decomposition techniques demonstrates that the functional approach outperforms the rest in reducing undesired changes in the collected motion data that are due to random changes in sensor positioning. The results show that the proposed technique reduces variations in the data by an average of 94%, outperforming the other techniques in the case study, although it adds computational complexity. Such a technique supports wider adoption of motion capture systems that are less sensitive to accurate sensor positioning and, therefore, more portable body area sensing systems.

Introduction

In body area signal processing systems, one of the challenges is the elimination of unwanted signals from a mixture of sources, which is called filtering. Techniques for removing undesired signals commonly use a linear signal decomposition. The method represents the data in a new space in which the original signal is projected onto different basis vectors or functions, so that the desired signal can be separated from the other signals. 1 By retaining the basis describing the desired signal and deleting the rest, signal decomposition is performed.

Body area sensing systems designed for motion capture of walking, or gait, measure human body kinematic variables, namely the angles of the pelvis, hip, knee, ankle, and foot in the X, Y, and Z axes. These measured signals, which are treated as time-domain vectors for each joint along each of the 3 axes, are prone to undesired components for several reasons, including varying sensor wearing sessions, inadvertent changes in sensor position, and differences in walking pattern and speed. 2 Therefore, the undesired components in the captured signals need to be removed, a problem that can be addressed from a signal decomposition perspective.

Signal decomposition techniques are categorized as a priori or adaptive, depending on how the basis vectors or functions are determined. In a priori approaches the basis is defined independently of the signal, as in finite impulse response (FIR) and infinite impulse response (IIR) filters. This approach is frequency-based and utilizes the Discrete Fourier Transform (DFT). 3 Such techniques are not suitable when the characteristic frequencies of the undesired and desired signals overlap. To tackle this challenge, adaptive frameworks for determining the basis vectors or functions have been introduced. 2 In adaptive approaches, the basis vectors are calculated from the statistical properties of the data; examples include signal decomposition methods based on Independent Component Analysis (ICA), Principal Component Analysis (PCA), and the Singular Value Filter (SVF).

The signal decomposition basic principles can be described by initially considering the signal, joint angle in this study, which is represented as a row vector

where

For linear transformation, the mutually orthonormal basis

A general linear filtering technique for discrete time system is represented through a matrix formulation,

By selecting the first M coefficient and rejecting the rest,

Figure 1. Signal decomposition-based filtering technique process.
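As a rough illustration of the decomposition-based filtering process summarized above, the following Python sketch projects a signal onto an orthonormal basis, keeps the first M coefficients, and reconstructs the filtered signal. The cosine (DCT-II type) basis, the helper names `cosine_basis` and `decompose_and_filter`, and the synthetic test signal are assumptions chosen for illustration only; they are not taken from the study.

```python
import numpy as np

def cosine_basis(n):
    """Return an n x n matrix whose rows are orthonormal cosine (DCT-II type) basis vectors."""
    k = np.arange(n)[:, None]          # basis index (one row per basis vector)
    t = np.arange(n)[None, :]          # sample index
    basis = np.cos(np.pi * (t + 0.5) * k / n)
    basis[0] *= np.sqrt(1.0 / n)       # scale so that every row has unit norm
    basis[1:] *= np.sqrt(2.0 / n)
    return basis

def decompose_and_filter(x, basis, m):
    """Project x onto the basis, keep the first m coefficients, and reconstruct."""
    coeffs = basis @ x                 # analysis: coefficients along each basis vector
    kept = np.zeros_like(coeffs)
    kept[:m] = coeffs[:m]              # reject all but the first m coefficients
    return basis.T @ kept              # synthesis: back to the original domain

# Illustrative data only: a smooth pattern plus random perturbation.
n = 200
t = np.linspace(0.0, 1.0, n)
rng = np.random.default_rng(0)
clean = np.sin(2 * np.pi * t)
noisy = clean + 0.2 * rng.standard_normal(n)

B = cosine_basis(n)
filtered = decompose_and_filter(noisy, B, m=10)
```

Keeping all n coefficients reproduces the input exactly, so the filtering effect comes entirely from which basis vectors are retained.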

The signals could have different characteristics, such as being deterministic or random, orthogonal, orthonormal, or independent. A signal is called deterministic if there is no uncertainty about its value at any time, which means it can be represented by a mathematical formula as a function of time. A signal is non-deterministic, or random, if there is uncertainty about its value at some time; such signals are modeled in probabilistic terms. As the aim of this paper is to address random components in the signal, the statistical properties of signals and processes need to be considered. A stationary process is a process whose unconditional joint probability distribution does not change over time; otherwise, the process is non-stationary. Signals are represented as vectors, and a vector space is considered as a set of vectors or functions

Having introduced the signal decomposition process, Section 2 explores and critically evaluates the principles of filtering and signal decomposition techniques, including a priori and adaptive approaches along with a functional one. In Section 3, the approach is validated in a case study on a body area sensing scenario, in which the motion signals are angles of joints, to investigate the applicability of the techniques within that context. In Section 4, results are presented, compared, and analyzed to validate the applicability of the approach for the case study. Section 5 concludes the paper by summarizing the findings and the validity of the techniques used.

Background of Filtering and Signal Decomposition Techniques

To reduce undesired components in the signals captured from body area sensing systems, a series of signal decomposition techniques are explored and critically reviewed in this Section. The signals are angles of joints captured for gait analysis, and the techniques are applied to find characteristic features that represent the main patterns of motion. Eliminating the undesired components reduces the variability in the signals of body area sensing systems. The applicability of on-body sensing systems for motion capture is limited by challenges related to the wearer’s comfort: the systems are sensitive to sensor positioning, which requires uncomfortable tight-fitting attachments and experts for placement and set-up.4,5 Signal decomposition techniques support wider adoption of motion capture systems that are less sensitive to accurate sensor positioning and, therefore, more portable body area sensing systems. Signal decomposition techniques are categorized as a priori or adaptive, depending on how the basis vectors or functions are determined. In a priori approaches the basis is defined independently of the signal, whereas in adaptive approaches the basis vectors or functions are derived from the statistical properties of the data.

A priori signal decomposition

The most common a priori filtering approach, in which the basis is independent of the data, is the Discrete Fourier Transform-based filter, whose basis functions for the different frequencies are a set of complex exponentials. Such filters are commonly used in the signal decomposition literature. 6 The frequency response of a filter is described by parameters such as the stopband, the passband, and the cut-off frequencies. In the stopband, the deviation from zero needs to be small, while in the passband all frequencies need to pass unchanged and the ripple needs to be minimized. The closer the passband and stopband cut-off frequencies are to each other, the closer the filter is to the ideal response. The two key a priori methods of signal separation, discussed in this section, are finite impulse response (FIR) and infinite impulse response (IIR) filters.

FIR filters have a finite-duration impulse response and are also called non-recursive or convolutional filters. From the time domain viewpoint, these filters are sometimes called moving average filters. A

The linear phase FIR filter frequency response is written as (6) where

If a signal x(n) consists only of frequencies in the passband, the filtered signal spectrum is

A Kth-order Infinite Impulse Response (IIR) filter is presented by equation (7) where each output sample depends on present and past input samples in addition to the past output samples.

Because of the recursive part, the impulse response of the filter does not fall to exactly zero after a finite number of samples, which is why these filters are named IIR filters. Considering the steady-state magnitude response characteristics, many design techniques are used, such as Butterworth, Chebyshev type I and II, and elliptic filters. Filter design involves a compromise between transient time and magnitude response. As discussed, an FIR filter can have linear phase, meaning a constant group delay, because its unit-pulse response can be symmetric. A causal IIR filter cannot have a symmetric unit-pulse response because of its infinite duration; therefore, an IIR filter cannot have exactly linear phase. When sharp transitions between frequency bands or large attenuation in the stopband are required, the required FIR filter length N increases. When a constant delay for all frequencies is required, linear-phase FIR filters are used. Minimum-phase FIR filters are a good alternative when the group delay is not important. With the same number of coefficients, an IIR filter gives a sharper transition between band edges than an FIR filter. In terms of realization, an IIR filter is more difficult to implement than the direct, non-recursive FIR filter because of its recursive structure. For similar magnitude characteristics, an IIR filter has fewer coefficients than an FIR filter, so less memory is required to store the coefficients, which is an advantage over FIR. 6
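The contrast between the two filter families can be sketched with a few lines of Python. The example below compares the impulse response of a 5-point moving average (an FIR filter with symmetric taps) with that of a first-order recursive filter. The filter lengths, the coefficient `a = 0.8`, and the helper names are illustrative assumptions and are not the filters used in the case study.

```python
import numpy as np

def fir_filter(x, taps):
    """Non-recursive filter: each output is a weighted sum of present and past inputs."""
    return np.convolve(x, taps)[: len(x)]

def iir_first_order(x, a):
    """Recursive filter y[n] = a*y[n-1] + (1-a)*x[n]; each output depends on the past output."""
    y = np.zeros(len(x))
    prev = 0.0
    for i, sample in enumerate(x):
        prev = a * prev + (1.0 - a) * sample
        y[i] = prev
    return y

impulse = np.zeros(50)
impulse[0] = 1.0

taps = np.ones(5) / 5.0                    # 5-point moving average, symmetric taps
h_fir = fir_filter(impulse, taps)          # zero after the first 5 samples
h_iir = iir_first_order(impulse, a=0.8)    # decays geometrically but stays non-zero

symmetric = np.allclose(taps, taps[::-1])  # symmetric taps give linear phase
group_delay = (len(taps) - 1) / 2          # constant delay, in samples, of the symmetric FIR
```

The symmetric FIR taps delay every frequency by the same number of samples, whereas the recursive filter reaches a long effective memory with a single coefficient, which reflects the trade-off described above.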

Adaptive signal decomposition

The filtering techniques reviewed in the previous part fail to separate signals when the frequencies of the undesired and desired signals overlap. Moreover, the signals may change through space and time; therefore, adaptive approaches for finding the basis functions are proposed in the literature. Common adaptive signal decomposition techniques for defining basis functions are Independent Component Analysis (ICA), Principal Component Analysis (PCA), and the Singular Value Filter (SVF), which are also used in blind source separation (BSS). BSS is an approach for retrieving unobserved signals, or sources, from mixtures of the signals. No prior information about the signals or their combination is available for separating the sources. Instead, the technique assumes that signals from different physical processes have predictable relationships and, therefore, that specific statistical relationships hold between the sources. 7 Such techniques are widely used because they need no prior information about the signals. The ability of these filtering techniques to decompose signals depends on the statistics of the source signals. PCA assumes orthogonality of the source signals, 6 while ICA assumes independence and a non-Gaussian distribution of the source signals. 8 Filtering is achieved by adaptively selecting the orthogonal or independent basis corresponding to the source signals. To remove the signal of interest, the input signal is “projected onto the complement of the predicted signal subspace while to keep the signal of interest and separate it from the other signals, the input signal is projected onto the basis selected to span the desired signal subspace.” 9

Independent component analysis

This section explains how ICA-based filtering techniques separate undesired sources of variation from the signal of interest by transforming the data linearly onto the ICA component axes and then maximizing the entropy of the data. In addition, PCA-based filters are explored, in which the basis consists of the orthogonal directions of greatest variance in the data, calculated adaptively from the covariance properties of the data, whereas ICA components can be non-orthogonal. The section also discusses how the singular value filter (SVF) differs from the other adaptive techniques by using a weighting function that adaptively calculates the filter coefficients from the data in the singular value domain.

Independent component analysis is a linear transformation into statistically independent components that captures the fundamental structure of the data in the observed signal; it therefore finds a linear representation of non-Gaussian data. It is based on the assumption that the sources are uncorrelated and mutually independent. If the original source signals are statistically independent and non-Gaussian, ICA can achieve an excellent signal separation in comparison to PCA. 6 If the source signals are not independent, the technique may not offer substantial superiority over PCA. 10

To estimate the mixing coefficients,

The assumption is that each mixture

For estimating the mixing matrix,

The meaning of independence can be summarized as following. “Variables”

“Because independence denotes uncorrelatedness, many ICA methods always give uncorrelated estimates of the independent components that reduces the number of free parameters and shortens the problem. The important constraint in ICA is that the independent components must be non-Gaussian for ICA to be possible. In the Gaussian case the density is completely symmetric which means it does not contain any information on the directions of the columns of the mixing matrix

Based on the Central Limit Theorem, the sum of a number of independent random variables tends, under certain conditions, toward a Gaussian distribution. In ICA, it is assumed that the data are a mixture of independent components and that the components have identical distributions; therefore, the estimation of one of the independent components involves a linear combination of the

A variable change is considered to determine the estimator giving an approximation for the transform which defines

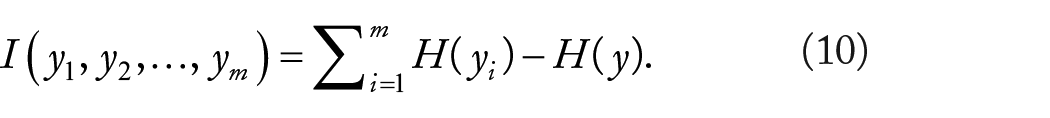
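The role of non-Gaussianity in ICA can be illustrated numerically. The sketch below, which is only an assumed toy example, draws independent uniform sources and compares the excess kurtosis of a single source with that of their sum: by the Central Limit Theorem argument, the mixture is closer to Gaussian (excess kurtosis closer to zero) than any single source. In theory the excess kurtosis of a uniform variable is -1.2 and that of the sum of 12 independent copies is -0.1; the sample estimates fluctuate around these values. The choice of uniform sources, the sample size, and the use of kurtosis as the non-Gaussianity measure are illustrative assumptions.

```python
import numpy as np

def excess_kurtosis(v):
    """Sample excess kurtosis: zero for a Gaussian variable, non-zero otherwise."""
    v = v - v.mean()
    return np.mean(v**4) / np.mean(v**2) ** 2 - 3.0

rng = np.random.default_rng(1)
# 12 independent, identically distributed non-Gaussian sources (assumed for illustration).
sources = rng.uniform(-1.0, 1.0, size=(12, 200_000))

kurt_single = excess_kurtosis(sources[0])            # clearly non-Gaussian
kurt_mixture = excess_kurtosis(sources.sum(axis=0))  # much closer to Gaussian
```

An ICA estimator exploits this in reverse: among linear combinations of the observed mixtures, the ones that are maximally non-Gaussian are taken as estimates of the independent components.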

Based on information theory, ICA mixing matrix would be estimated through minimization of mutual information. The mutual information I between m (scalar) random variables,

Entropy H is defined for a discrete random variable Y as (11), where the

A measure of the dependence between random variables is mutual information that is correspondent to the divergence between the joint density f(

In multivariate data analysis, the aim is to find a smaller number of less redundant variables that represent the original set as well as possible, which is called source separation. In PCA and the related Karhunen-Loeve transform, redundancy is measured by the correlation between data elements, whereas ICA uses the much stronger concept of independence and places less emphasis on reducing the number of variables. PCA, however, is less complex because it relies only on correlation, a second-order statistic.

Principal component analysis
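For reference, the entropy and mutual information used earlier in this section are commonly written in the ICA literature as follows. The symbols $a_i$ (the possible values of $Y$), $y_i$ (the scalar random variables), and $\mathbf{y}$ (the vector collecting them) are assumed here and may differ from the notation of the numbered equations of this paper.

```latex
% Entropy of a discrete random variable Y with possible values a_i
H(Y) = -\sum_{i} P(Y = a_i)\,\log P(Y = a_i)

% Mutual information between m scalar random variables y_1, ..., y_m
I(y_1, y_2, \ldots, y_m) = \sum_{i=1}^{m} H(y_i) - H(\mathbf{y})
```

In this form the mutual information is non-negative and equals zero exactly when the variables are statistically independent, which is why minimizing it is a natural criterion for estimating the ICA transform.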

To decompose data into its orthogonal basis, the Karhunen–Loeve (KL) transform is used, which specifies the eigenvalues of the autocorrelation matrix and the corresponding orthonormal eigenvectors. 12 PCA can be useful for filtering, such as in Negishi, 13 but its capability for adaptive regression filtering is restricted. The orthogonality of the PCA basis indicates that the components are uncorrelated, but this does not necessarily imply statistical independence. “Orthogonal basis are independent only if they are Gaussian or otherwise distributed random variables for which the second and higher order moments are zero.” 6 Consequently, multiple independent sources may be projected onto the same orthogonal basis, leading to an incomplete source separation.

The PCA transform makes no assumption about the probability density of the vector, provided that the first- and second-order statistics can be estimated. 6

Assuming the random vector

Variance maximization approach is about a linear combination is considered where as

The

where the matrix

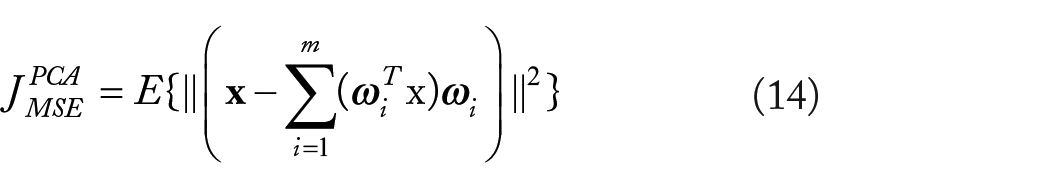

MSE approach defines compression of

Due to orthogonality of the vectors

Assuming orthonormality condition on the

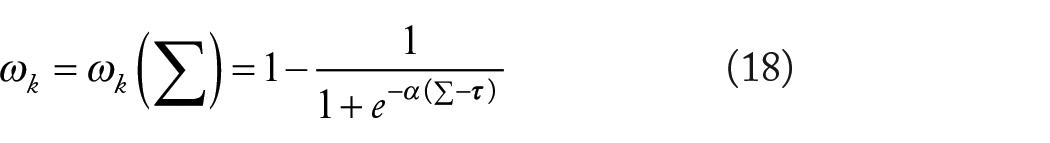
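A PCA-based filter of the kind described in this section can be sketched as follows: the data are centered, the orthonormal principal directions are obtained from a singular value decomposition, and the data are reconstructed from the first m components only. The function name `pca_filter`, the synthetic curves, and the choice m = 1 are assumptions made for illustration and do not reproduce the data or settings of the case study.

```python
import numpy as np

def pca_filter(data, m):
    """Reconstruct data (observations x samples) from its first m principal components."""
    mean = data.mean(axis=0)
    centered = data - mean
    # Rows of vt are orthonormal principal directions, ordered by decreasing variance.
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    scores = centered @ vt[:m].T            # projection onto the retained directions
    filtered = mean + scores @ vt[:m]       # back-projection to the original domain
    explained = s**2 / np.sum(s**2)         # fraction of variance per component
    return filtered, explained

# Illustrative data only: 10 curves sharing one pattern with varying amplitude, plus noise.
rng = np.random.default_rng(2)
t = np.linspace(0.0, 1.0, 101)
amplitudes = 1.0 + 0.3 * rng.standard_normal((10, 1))
curves = amplitudes * np.sin(2 * np.pi * t) + 0.05 * rng.standard_normal((10, 101))

filtered, explained = pca_filter(curves, m=1)
```

The `explained` vector plays the role of the eigenvalue spectrum: its leading entries indicate how many components are needed to capture the dominant mode of variation.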

The singular value filter (SVF) is another technique, in which weights are assigned to the basis of the PCA decomposition to produce the filter output. 14 In this approach, the filter coefficients are a function of the singular value spectrum of the data. In the PCA-based filtering techniques reviewed in the previous sections, the weights are restricted to 0 and 1, which means each basis function is either deleted or kept. In the SVF, in contrast, a weighting function based on the statistical properties of the signal is used. 15 The resulting filter coefficients give more consistent and better filtering results because undesired signal components are eliminated while the artifacts caused by strict thresholding are avoided. After decomposing the signal along a new set of PCA basis, filtering is performed by allocating a weight to each basis as in (17).

where y is the output signal of the filter with the same dimensions as

In this equation,
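The difference between hard selection (weights of 0 or 1) and a weighted singular value filter can be sketched as below. The smooth weighting function `soft_weights` is a hypothetical choice made purely for illustration; the weighting function of the cited singular value filter is not reproduced here, and the parameter `tau` and the random test data are likewise assumptions.

```python
import numpy as np

def singular_value_filter(data, weight_fn):
    """Weight each singular component of data by weight_fn(singular values) and rebuild."""
    u, s, vt = np.linalg.svd(data, full_matrices=False)
    weights = weight_fn(s)
    return (u * (weights * s)) @ vt, weights

def hard_threshold(s, keep=1):
    """PCA-style selection: weight 1 for the first `keep` components, 0 for the rest."""
    w = np.zeros_like(s)
    w[:keep] = 1.0
    return w

def soft_weights(s, tau=None):
    """Hypothetical smooth weighting in [0, 1]; larger singular values get larger weights."""
    tau = np.median(s) if tau is None else tau
    return s**2 / (s**2 + tau**2)

rng = np.random.default_rng(6)
data = rng.standard_normal((10, 101))       # placeholder data for illustration

hard, w_hard = singular_value_filter(data, hard_threshold)
soft, w_soft = singular_value_filter(data, soft_weights)
```

With all weights equal to 1 the input is recovered unchanged, so the filter behavior is determined entirely by the shape of the weighting function.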

Functional component analysis

Representing PCs by functions rather than vectors leads to Functional Principal Component Analysis (fPCA). By observing the entire waveform, this tool is able to recognize more detailed pattern differences. 16 PCs provide indications for identifying potentially important differences between the curves. Smoothness is at the core of this approach: in the time domain, nearby values are linked and do not differ greatly. fPCA is a useful tool for analyzing data, as it provides a means of recognizing the main sources of variability in a set of curves.

Linear transformations are computationally the simplest of the multivariate statistical transforms. Within them, functional techniques can provide additional insight into differences in the data, because treating the data as functions maintains all the information contained in the raw data. A set of functional building blocks are used

Parameters

The raw data vector,

There is no particular limitation on the number of variables N to fit the functions into. The number of basis functions K must be less than or equal to the number of sampled data points, n. An accurate representation of sampled data is achieved when K = n in selecting the coefficients

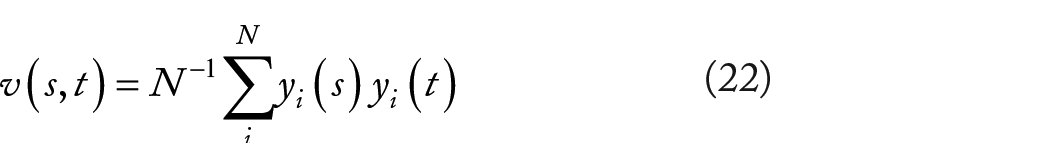
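The effect of the number of basis functions K on the representation of n sampled points can be checked with a small least-squares fit. In the sketch below, the cosine basis, the helper names, and the synthetic samples are assumptions for illustration; the basis system used in the study may differ. With K < n the fit is a smoothed version of the samples, while with K = n it reproduces every sample.

```python
import numpy as np

def basis_matrix(n, k):
    """Evaluate k cosine basis functions at n equally spaced points (n x k matrix)."""
    t = (np.arange(n) + 0.5) / n
    return np.cos(np.pi * np.outer(t, np.arange(k)))

def fit_coefficients(x, phi):
    """Least-squares coefficients c that minimize ||x - phi @ c||."""
    c, *_ = np.linalg.lstsq(phi, x, rcond=None)
    return c

n = 50
rng = np.random.default_rng(3)
x = np.sin(2 * np.pi * np.arange(n) / n) + 0.1 * rng.standard_normal(n)

phi_smooth = basis_matrix(n, 8)     # K < n: smoothed functional representation
phi_exact = basis_matrix(n, n)      # K = n: reproduces every sampled point
smooth = phi_smooth @ fit_coefficients(x, phi_smooth)
exact = phi_exact @ fit_coefficients(x, phi_exact)
```

Choosing K is therefore a trade-off between fidelity to the sampled data and the smoothness of the fitted function.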

The functional eigenequation is

where

where the PC score

A non-increasing sequence of eigenvalues

fPCA can be used to separate signals into deterministic and stochastic components. This is achieved by subtracting one or the other from the signal, which can be regarded as filtering the noise or the common parts, respectively. The approach is to represent the original data in a new coordinate system such that, when the signal is projected along the different basis, the signal of interest can be separated from other sources of variation in the original data. By retaining the basis describing the signal of interest and deleting the rest, the filtering is accomplished. 2

The signal processing pipeline consists of several stages. In the first step, the data need to be normalized to ensure the same number of samples. The data are then transferred to the fPCA domain using the fPCA transform and partitioned into 2 elements,

The filter characteristic depends on the data because the sum of the major PCs weight functions is given by

After applying fPCA to the data, the main source of variation is filtered by keeping the components that contain the most variability, that is, the most relevant PC functions or eigenfunctions, and removing the rest in the fPCA domain. The data are then returned to the original domain by projecting them onto the retained eigenfunctions. The dominant modes of variation can be selected by studying the ratio of the related eigenvalues to the total variance, after subtracting the mean from each observation. Consistent features are coherent components that generate common structures and follow deterministic rules, while the residual components have a degree of randomness or stochasticity. 18
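The stages of the fPCA-based filter described above can be sketched in Python under simplifying assumptions: each curve is expanded in K orthonormal cosine basis functions, PCA is applied to the basis coefficients (which corresponds to fPCA when the basis is orthonormal), the first m eigenfunctions are retained, and the curves are rebuilt in the time domain. The basis choice, the function names, the values of K and m, and the synthetic curves are all assumptions for illustration and are not the implementation used in the study.

```python
import numpy as np

def orthonormal_cosine_basis(n, k):
    """n x k matrix of cosine basis functions with orthonormal columns."""
    t = (np.arange(n) + 0.5) / n
    phi = np.cos(np.pi * np.outer(t, np.arange(k))) * np.sqrt(2.0 / n)
    phi[:, 0] /= np.sqrt(2.0)
    return phi

def fpca_filter(curves, k, m):
    """Filter curves (observations x samples) by keeping m functional PCs."""
    n = curves.shape[1]
    phi = orthonormal_cosine_basis(n, k)
    coeffs = curves @ phi                         # basis coefficients of each curve
    mean_c = coeffs.mean(axis=0)
    centered = coeffs - mean_c                    # subtract the mean function
    cov = centered.T @ centered / (len(curves) - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)        # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]             # reorder to a non-increasing sequence
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = centered @ eigvecs[:, :m]            # PC scores on retained eigenfunctions
    kept = mean_c + scores @ eigvecs[:, :m].T     # discard the remaining components
    return kept @ phi.T, eigvals                  # return to the time domain

# Illustrative data only: a common pattern with varying amplitude, plus noise.
rng = np.random.default_rng(5)
t = np.linspace(0.0, 1.0, 101)
common = np.sin(2 * np.pi * t)
curves = (1.0 + 0.3 * rng.standard_normal((10, 1))) * common
curves = curves + 0.05 * rng.standard_normal((10, 101))

filtered, eigvals = fpca_filter(curves, k=12, m=1)
variance_ratio = eigvals[0] / eigvals.sum()       # share of variance in the first PC
```

The ratio of each eigenvalue to the total variance, as in `variance_ratio`, is one way to decide how many eigenfunctions to retain.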

Methodology

The signal decomposition and filtering techniques are explored to compensate for the effect of random changes in sensor positioning on motion data, in order to find characteristic features that represent the main patterns of motion. To investigate the applicability of the signal decomposition techniques, a series of experiments is designed for a motion capture scenario for gait analysis, in which the signals are the angles of joints including the pelvis, hip, knee, ankle, and foot in the X, Y, and Z directions. Ten subjects are recruited to walk in 10 sensor wearing sessions with feigned changes in sensor positioning. 2 An appropriate number of subjects needs to be recruited for a valid analysis of motion data. In a similar gait analysis scenario in the literature, 7 subjects are used based on a statistical power analysis 17 ; therefore, in our experiments it is decided to recruit 10 subjects, 5 females and 5 males. A power analysis estimates the minimum sample size required for an experiment, given a desired significance level, effect size, and statistical power, which is the probability that a hypothesis test finds an effect if there is an effect to be found.

Inadvertent changes are emulated in the sensors position for each session while following the standard marker set. There are several standard marker-sets for placing markers on the human body such as Cleveland Clinic, Saflo, Helen Hayes, Codamotion, and so forth. The Cleveland Clinic marker set uses a rigid triad of markers in a plane parallel to the long axis of the bone to capture the motion of the thigh and shank in 3 dimensions. The Saflo marker-set consists of a total body marker-set with 19 retro-reflective markers fixed on specific anatomic landmarks. The Helen Hayes marker set is a relatively simple set of external markers developed for time-efficient video analysis of lower extremity kinematics. All the named marker placement protocols are used for clinical gait analysis. For measuring bilateral gait, the recommended Codamotion marker-set is used which comprises a total of 22 standard markers as shown in Figure 2 for the right side of the body. Markers shown in parentheses () are optional. The marker set determines ankle and knee joint centers and segment coordinate systems by means of a marker on a post or wand protruding from the anterior aspect of the thigh and shank, and by single markers placed over the lateral aspect of the joint flexion/extension axis.

Figure 2. Position of markers on the human body for the experiment setup. 6

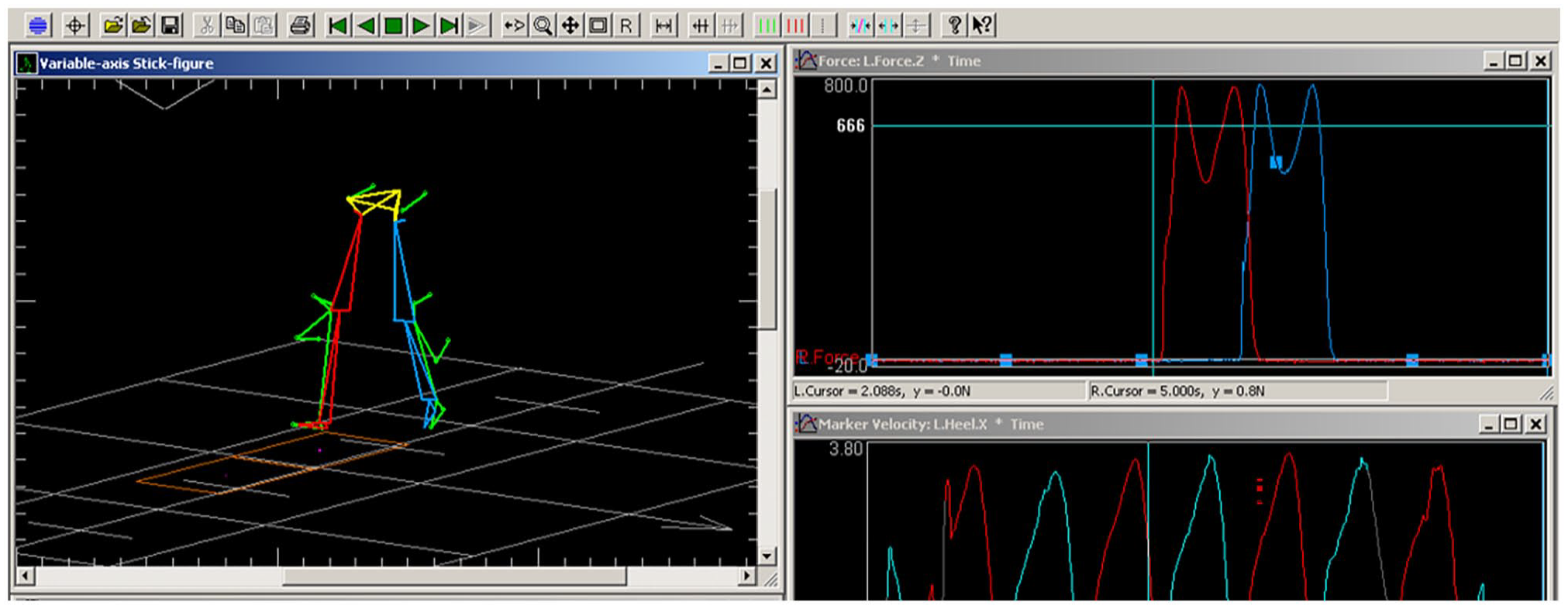

After installing the sensors on legs and pelvis of the subject according to the Codamotion marker-set, the body angles are measured. A motion capture system measures the angles of joints and provides a stick figure view for the subject under experiment as shown in Figure 3. The system is a general-purpose 3-dimensional (3D) motion tracking system called Codamotion. The measurement unit contains 3 pre-aligned solid-state cameras which track the position of a number of active markers, that is, infrared light emitting diodes (LEDs), in real-time. Sampling rates are selectable from 1 Hz up to 200 Hz, dependent on the numbers of markers in use. Each scanner unit contains 3 special cameras which detect infrared pulses of light emitted by the markers and locate the marker positions with very high resolution. The cameras are rigidly mounted on the scanner units so that the system can be pre-calibrated.

Figure 3. Stick figure and captured signals from force plate, left heel in Z direction to separate each walking step.

The calibrated system measures the positions of markers within a 3D coordinate system that is fixed in relation to the scanner unit. The active range of the capturing system is 1.5 to 5.2 m from the scanners and follows a Gaussian distribution function so that optimal visibility occurs at approximately 3 m from each scanner. The angular resolution of each camera is about 0.002°; this results in a lateral position resolution of about 0.05 mm at 3 m distance (horizontally and vertically), and a distance resolution of about 0.3 mm. The set-up of the motion capture system in the laboratory ensures that all experiments are carried out in this range. The motion capture system will generate angles of joints using the measured sensors/markers positions and the subjects’ body measurements including height and weight.

A random number generator with a uniform probability distribution is used to generate random position displacements that emulate inadvertent changes in the position of the sensors. The changes are within a radius of 2 cm in each of the 10 experiment sessions in which the sensors are worn. Despite the precision of the sensor placement regime, human error still exists in placing the sensors at the random positions obtained from the random number generator. However, we do not consider this source of error further, believing it to be relatively small. Each sensor wearing session consists of 6 trials in which the subject walks from the initial point to the end point of the walkway while the motion capture system records the subject’s motion. The duration of each trial is 5 seconds, with a 200 Hz sampling rate. Subjects are asked to walk at as normal a speed as possible, while different sensor perturbations are made across the different sensor wearing sessions.

The kinematic variables in the experiments on the human subjects are the angles of the pelvis, hip, knee, ankle, and foot in 3 Cartesian directions. To eliminate the effect of sources of variation that are not related to differences in sensor positioning, the kinematic variables of each sensor wearing session are averaged over the 6 trials. Differences in walking speed and walking style for each subject are potential reasons for such variability. Each subject is asked to walk for a specific time interval, and the walk is partitioned into cycles, each comprising 2 steps. Each walking cycle is considered as the time from initial contact of the heel with the ground to initial contact of the following step. Successive right heel contacts are used to separate the steps; they are determined from the right heel sensor position in the Z direction, as shown in Figure 3.

The number of samples can differ between walking cycles, which makes it challenging to identify features during action sequences. Each cycle is therefore normalized so that it is represented by the same number of samples. The data are time normalized to convert the time axis from the recorded time units to an axis representing the walking cycle from 0% to 100%. After time normalization, the data are standardized so that the results for different angles can be compared with each other in terms of the percentage of change before and after applying the filtering techniques. In this case study, the results of applying the fPCA, ICA, PCA, and SVF adaptive filtering techniques and an a priori FFT-based filtering mechanism are compared.
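One way to generate such displacements is sketched below. It assumes, for illustration only, that each displacement is a 2-D offset on the body surface drawn uniformly over a disk of radius 2 cm; the function name, the use of 22 sensors (matching the number of standard markers mentioned above), and the seed are assumptions, and the exact sampling scheme used in the experiments is not specified in this paper.

```python
import numpy as np

def random_displacements(n_sensors, max_radius_cm=2.0, seed=None):
    """Draw one 2-D offset per sensor, uniformly distributed over a disk (assumed model)."""
    rng = np.random.default_rng(seed)
    # The square root makes the points uniform over the disk area rather than
    # concentrated near the center.
    radius = max_radius_cm * np.sqrt(rng.uniform(0.0, 1.0, n_sensors))
    angle = rng.uniform(0.0, 2.0 * np.pi, n_sensors)
    return np.column_stack((radius * np.cos(angle), radius * np.sin(angle)))

# One set of offsets (in cm) for a single sensor wearing session.
offsets = random_displacements(n_sensors=22, seed=4)
distances = np.linalg.norm(offsets, axis=1)
```

Calling the function with a different seed for each session gives an independent set of perturbations per session.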
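The two normalization steps can be sketched as follows. The sketch assumes linear interpolation onto 101 points (0% to 100% of the cycle in 1% steps) and z-score standardization; both choices, the function names, and the synthetic cycles are assumptions for illustration, since the paper does not state the exact resampling or standardization method.

```python
import numpy as np

def time_normalize(cycle, n_points=101):
    """Resample one walking cycle onto a 0-100% axis with a fixed number of points."""
    original = np.linspace(0.0, 100.0, len(cycle))
    target = np.linspace(0.0, 100.0, n_points)
    return np.interp(target, original, cycle)

def standardize(x):
    """Z-score standardization (assumed): zero mean and unit standard deviation."""
    return (x - x.mean()) / x.std()

# Two illustrative cycles with different numbers of samples.
cycle_a = np.sin(np.linspace(0.0, 2.0 * np.pi, 215))
cycle_b = 3.0 * np.sin(np.linspace(0.0, 2.0 * np.pi, 187)) + 10.0

normalized = np.vstack([time_normalize(c) for c in (cycle_a, cycle_b)])
standardized = np.apply_along_axis(standardize, 1, normalized)
```

After these steps every cycle has the same length and scale, so cycles from different sessions and joints can be stacked into one data matrix for the decomposition techniques.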

Result and Discussion

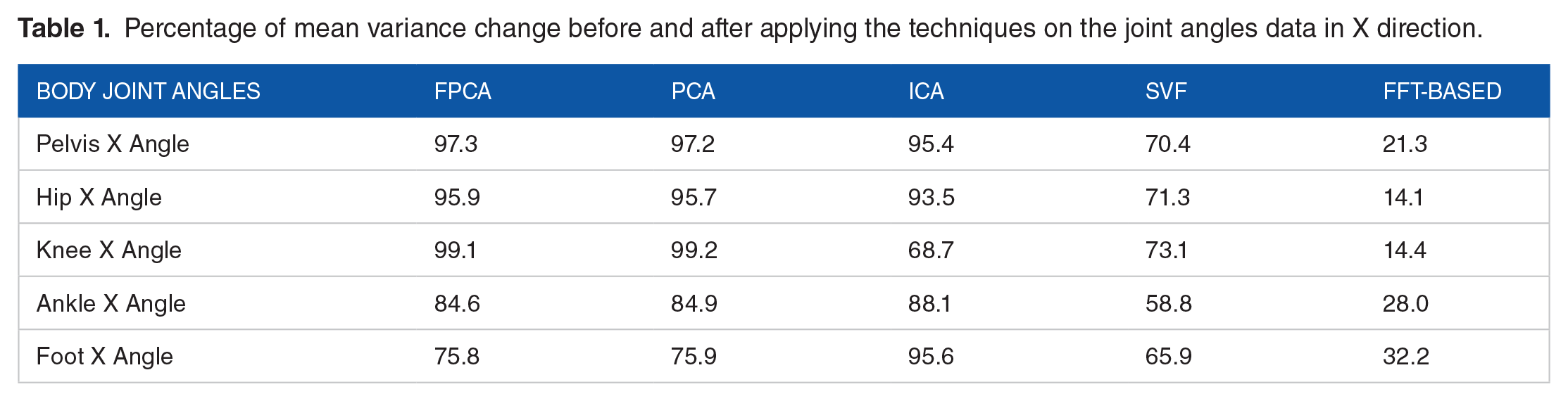

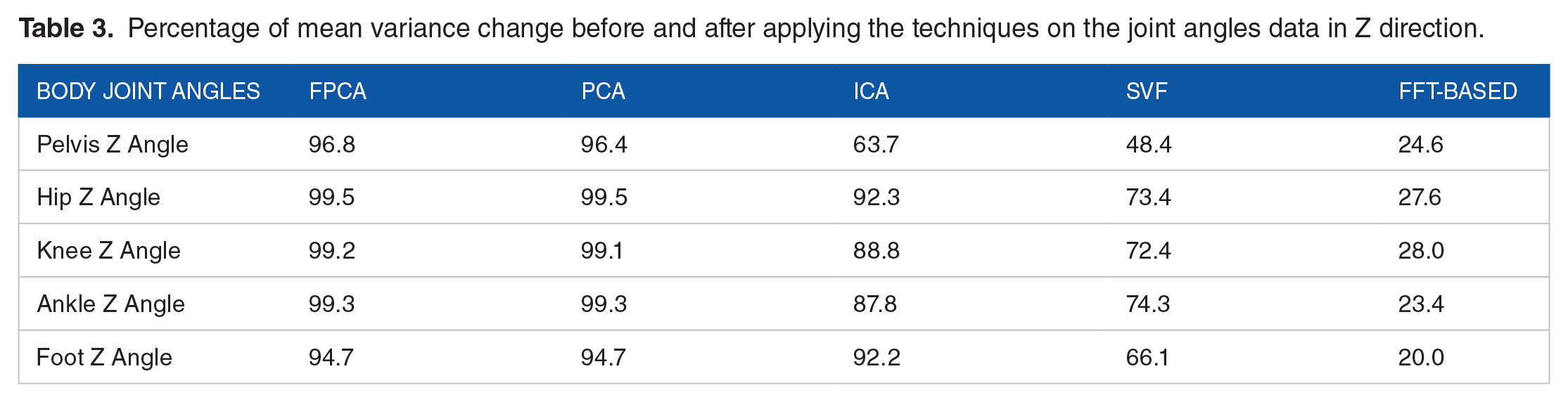

The effect of applying the filtering techniques (fPCA, PCA, ICA, SVF and FFT based) on different angles including pelvis, hip, knee, ankle, and foot as the percentage of mean improvement is listed in Tables 1 to 3 for normalized data in 3 different directions of the Cartesian Coordinate system, respectively. In addition, the right hip angle in the X direction for 10 subjects is shown in Figures 4 and 5 before and after applying the techniques. Motion data is averaged over 6 trials of 10 sessions of marker wearing for each joint angle on 10 human subjects. We can see that for each subject fPCA and PCA schemes are better at separating the main variation pattern in the motion data than other techniques. The Figures confirm consistency in performance of the proposed functional signal separation technique in compensating for positional uncertainties for all participants in the experiment.

Table 1. Percentage of mean variance change before and after applying the techniques on the joint angles data in X direction.

Table 2. Percentage of mean variance change before and after applying the techniques on the joint angles data in Y direction.

Table 3. Percentage of mean variance change before and after applying the techniques on the joint angles data in Z direction.

Figure 4. Right hip angle in X direction before and after applying fPCA, PCA, ICA, SVF and FFT for Subject 1 (S1), 2 (S2), 3 (S3), 4 (S4), and 5 (S5), respectively.

Figure 5. Right hip angle in X direction before and after applying fPCA, PCA, ICA, SVF and FFT for Subject 6 (S6), 7 (S7), 8 (S8), 9 (S9), and 10 (S10), respectively.

Among the a priori FFT-based filters, a Butterworth IIR filter is selected as the frequency-based filter, as it is commonly used as a baseline for comparison in the literature. A second-order low-pass digital filter is used with a normalized cut-off frequency of 0.1 to remove high-frequency noise. Normalized cut-off frequencies take values in the range 0 to 1, where 1 corresponds to the Nyquist frequency; at the 200 Hz sampling rate the Nyquist frequency is 100 Hz, so a normalized cut-off of 0.1 corresponds to 10 Hz. Results show that FFT-based filtering performs poorly on the motion data of our experiments, as expected: the mean percentage change in motion data variance before and after applying the technique is 21.94%. For singular value filtering, the mean variance change after applying the technique is 68.4% on average. For ICA-based filtering, the percentage of change before and after applying the technique is 86.2%. Although this percentage of change is high, new patterns of variation appear in the signal, which shows that ICA-based filtering techniques are not applicable to this type of data. 19 Applying the PCA-based filtering techniques shows that they work properly in reducing random patterns in the data. The mean percentage change for both PCA and fPCA is 94%. Looking specifically at the individual angles, the minimum variance reduction is 88.2%, for the pelvic angle in the Y direction, and the maximum is 99.6%, for the knee angle in the Y direction.
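For readers who want to reproduce a baseline of this kind, the sketch below builds a second-order Butterworth low-pass filter with a normalized cut-off of 0.1 using the bilinear transform, written in plain NumPy so that it is self-contained. The helper names are assumptions, and this is not the implementation used in the study; in practice a library routine such as `scipy.signal.butter` would normally be used instead.

```python
import numpy as np

def butter2_lowpass(wn):
    """Second-order Butterworth low-pass coefficients via the bilinear transform.
    wn is the cut-off normalized so that 1.0 corresponds to the Nyquist frequency."""
    k = np.tan(np.pi * wn / 2.0)                 # pre-warped cut-off
    norm = 1.0 + np.sqrt(2.0) * k + k**2
    b = np.array([k**2, 2.0 * k**2, k**2]) / norm
    a = np.array([1.0,
                  2.0 * (k**2 - 1.0) / norm,
                  (1.0 - np.sqrt(2.0) * k + k**2) / norm])
    return b, a

def apply_filter(b, a, x):
    """Difference equation y[n] = sum_k b[k] x[n-k] - sum_{k>=1} a[k] y[n-k]."""
    y = np.zeros(len(x))
    for n in range(len(x)):
        acc = 0.0
        for i in range(len(b)):
            if n - i >= 0:
                acc += b[i] * x[n - i]
        for j in range(1, len(a)):
            if n - j >= 0:
                acc -= a[j] * y[n - j]
        y[n] = acc
    return y

def gain(b, a, wn):
    """Magnitude of the frequency response at normalized frequency wn."""
    z = np.exp(-1j * np.pi * wn * np.arange(len(b)))
    return abs(np.dot(b, z) / np.dot(a, z))

fs = 200.0                          # sampling rate reported in the methodology
b, a = butter2_lowpass(0.1)
cutoff_hz = 0.1 * fs / 2.0          # normalized 0.1 of the 100 Hz Nyquist frequency
step_response = apply_filter(b, a, np.ones(400))
```

The gain is 1 at zero frequency and falls to about 0.707 (the -3 dB point) at the cut-off, so any undesired variation below roughly 10 Hz passes through unchanged, which is consistent with the poor performance reported for this baseline.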

The aim of this study is to separate the main pattern of variation in the data from the residual components in on-body sensing systems by using signal separation techniques. Results demonstrate that the variation in the motion data increases when random changes in sensor positioning, which are potentially due to undesired movement of wearable sensors, are introduced. Improvement is measured as the percentage change in the variance of the data before and after applying the filtering techniques. Since FFT-based filtering projects signals onto frequency components, as shown in the Results Section it cannot separate the main pattern of variation in the signal from the random variations, which lie in the same frequency range. After applying ICA-based techniques, some new ripples that are unrelated to the motion patterns appear in the data. As ICA works on non-Gaussian distributed data, 20 it cannot obtain appropriate results on the captured data.

The variation in the data is due to random changes in sensor positioning. Randomness in the position of the sensors is generated using a discrete uniform distribution, which is a symmetric probability distribution and results in a Gaussian distribution of the sampled motion data. SVF-based signal separation performs no better than the ICA-based one, because the dominant mode of variance in the data is reflected in the first few principal components. The performance of fPCA and PCA is similar, and both outperform the rest of the reviewed filtering techniques in separating the stochastic patterns in the data. This similarity can be explained in terms of sampling frequency. As the sampling rate is 200 Hz while the bandwidth of the movement patterns is around 10 Hz, given the normal walking speed of the subjects, fitting functions to such highly sampled data has no significant effect. Therefore, for highly sampled data the use of PCA instead of fPCA is suggested, as it is easier to perform and needs fewer calculations.
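A variance-based improvement measure of this kind could be computed as in the sketch below. The definition used here (variance across sessions at each point of the walking cycle, averaged over the cycle, and expressed as a percentage reduction) is an assumption for illustration; the paper does not give the exact formula, and the function name and synthetic data are likewise hypothetical.

```python
import numpy as np

def variance_change_percent(before, after):
    """Percentage reduction of the mean across-session variance (assumed definition).
    Both inputs are arrays of shape (sessions, samples per cycle)."""
    var_before = before.var(axis=0, ddof=1).mean()
    var_after = after.var(axis=0, ddof=1).mean()
    return 100.0 * (var_before - var_after) / var_before

# Synthetic check: halving the spread around the session mean quarters the variance.
rng = np.random.default_rng(7)
before = rng.standard_normal((10, 101))
session_mean = before.mean(axis=0)
after = session_mean + 0.5 * (before - session_mean)

reduction = variance_change_percent(before, after)   # 75% in exact arithmetic
```

A value near 100% means that almost all session-to-session variation has been removed, while a value near 0% means that the filter left the variation essentially unchanged.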

In terms of computational complexity of fPCA algorithms, we can find the coefficients

Conclusion

Within the context of on-body sensing systems, digital filters and signal decomposition techniques, including a priori and adaptive ones, were studied. A novel way of using functional principal component analysis was introduced. In this approach, the main source of variation in the data is filtered by retaining the most dominant principal components and deleting the rest in the projected domain. The data are then returned to the original domain by projecting them onto the retained eigenfunctions, with the eigenfunctions of lower variation removed. The drawback of the approach is that it adds processing complexity to the motion capture procedure. A case study on a body area sensing system for motion capture was presented, in which the effect of random changes in sensor placement was explored using the filtering techniques. The results showed that the proposed fPCA-based technique reduces variations in the data by an average of 94%, outperforming the rest of the techniques in the case study, although it adds computational complexity. However, recent advancements in the development of cheap, small, and powerful processors can address this problem. The focus of this paper was on human gait; future work could apply and investigate the introduced signal processing approach for other body movements to validate its applicability in such situations.

Footnotes

Author Contributions

The author confirms sole responsibility and contribution for the following: study conception and design, data collection, analysis and interpretation of results, and manuscript preparation.

Declaration Of Conflicting Interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Publication of this paper has received financial support from Bournemouth University, UK.