Abstract

Background:

Robotic devices have been used extensively in adults to quantify function, identify impairment, and rehabilitate motor function, but less so in younger populations. The ability to perform motor actions improves as children grow. It is important to quantify this rate of change in the neurotypical population before attempting to identify impairment and target rehabilitation techniques.

Objectives:

For a population of typically developing children, this systematic review identifies and analyzes tools and techniques used with robotic devices to quantify upper-limb motor function. Since most of the papers also used robotic devices to compare function of neurotypical to pathological populations, a secondary objective was introduced to relate clinical outcome measures to identified robotic tools and techniques.

Methods:

Five databases were searched between February 2019 and August 2020, and 226 articles were found, 19 of which are included in the review.

Results:

Robotic devices, tasks, outcome measures, and clinical assessments were not consistent among studies from different settings but were consistent within laboratory groups. Fifteen of the 19 articles evaluated both typically developing and pathological populations.

Conclusion:

To optimize universally comparable outcomes in future work, it is recommended that a standard set of tasks and measures is used to assess upper-limb motor function. Standardized tasks and measures will facilitate effective rehabilitation.

Introduction

Robotic devices can quantify characteristics of upper-limb function, identify indicators of impairment, and assist in rehabilitation.1-3 Repeatable tasks with a high degree of accuracy can be conducted to test various aspects of a person’s ability, from motor function to short-term memory.2,4 In adult populations, robotic devices have been used extensively to quantify typical age-related changes, as well as deficits caused by injuries or illnesses.2,4-7 Additionally, robots have been used for rehabilitation in adults, often for those who have experienced stroke.2

Robotic devices offer the same testing capabilities for younger populations but have not been used to the same extent. For example, 2 reviews of robotic interaction, 1 examining the adult population with stroke, and the other, the child population with cerebral palsy, resulted in 28 versus 9 articles, respectively.2,3

Robotic quantification of the adult population is straightforward as motor control parameters across the ages from 30 to 60 years display little variation.8 To identify pathological differences in motor control, the clinician or researcher can readily compare robotic outcome measures to a general database of members within the typical population. However, as children grow, motor control improves, making it difficult to generalize the parameters associated with each age. It is important to understand how function changes across the developmental stages from 5 to 18 years of age, to better identify anomalies related to pathology. Additionally, the goal of therapy is to return function to levels of typical development, and the age at which each developmental goal would typically be achieved must be identified.

The primary objective of this review was to identify the robotic tools and measures that have been used to quantify upper-limb function in typically developing children and youth. Since most papers also used robotic devices to compare function of neurotypical to pathological populations, a secondary objective was introduced to relate clinical outcome measures to robotic tools and techniques to allow comparison with pathological populations. Knowing which tools and measures are most clinically relevant can enable clinicians and researchers to choose the best methods of evaluating motor control of children with neurological conditions as compared to those who are typically developing.

Methods

Study design

This study is a systematic review that followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines.

Eligibility criteria

To be included, articles had to be written in English, be published in peer-reviewed journals, and discuss the use of a robotic apparatus to assess upper-limb function in typically developing children. Articles were excluded if they assessed only a pathological population, the mean age of participants was above 18 years, only lower-limb function was assessed, or the robotic device/system was evaluated rather than the function of children. To ensure a comprehensive review, there were no restrictions on publication date, study design, or types of outcomes/measures of upper-limb function. References in any review articles found within the search were used to verify that all relevant articles were included.

Information sources and search

To identify research that used a robotic apparatus to quantify upper-limb motor function in children and adolescents, a search was conducted between February 2019 and August 2020 among 5 databases (EMBASE, Engineering Village, OVID MEDLINE, PubMed, and Web of Science). The search was performed with the following search string: (Upper-Limb OR Upper—Limb OR Arm OR Upper-Extremit* OR Upper—Extremit*) AND ((Robot* OR Exoskeleton) AND (Test* OR Assessment OR Quantification OR Evaluation OR Examination)) AND ((Healthy OR Typical* Develop* OR Control OR Normal) AND (Child* OR Youth OR Adolescent OR School-Age OR Pediatric OR Teen)).

Study selection

The titles were first searched to eliminate any articles that did not meet the inclusion criteria, followed by abstract review. The titles and abstracts were reviewed by 1 reviewer. Typically, at least 2 reviewers would be involved at each stage to reduce the risk of bias in paper selection. To help reduce the chances of bias from 1 reviewer screening titles and abstracts, the reviewer was instructed to be lenient when judging the exclusion criteria. Leniency at this stage resulted in a greater number of full text articles being reviewed by multiple reviewers than if multiple reviewers had been involved at this stage in screening.

For each paper that was included after the abstract review, 2 reviewers assessed the papers using a modified version of the McMaster Quantitative Review Form (https://srs-mcmaster.ca/research/evidence-based-practice-research-group/). This form was used to identify any potential biases (ie, subject selection, measurement, and intervention biases), study design, study participants, ethics procedure, outcome measures, intervention, and study results. The form was also used to extract and record data about the measures and outcomes that were reported in each study. The agreement between the reviewers for article inclusion after full paper assessment was calculated using Cohen’s kappa statistic. When reviewers disagreed on whether to include an article, the reasons were discussed with a third reviewer and a decision on inclusion was reached.
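The agreement statistic described above can be illustrated with a minimal sketch. The function name `cohens_kappa` and the include/exclude decisions below are hypothetical and are not the data from this review; the sketch only shows the standard definition of Cohen's kappa, (observed agreement minus chance agreement) divided by (1 minus chance agreement).

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(ratings_a)
    # Observed agreement: share of items given the same label by both raters.
    p_observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement: product of the raters' marginal label frequencies.
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    p_chance = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_chance == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_chance) / (1.0 - p_chance)


# Hypothetical decisions for 10 full-text articles (illustration only).
reviewer_1 = ["include", "include", "exclude", "exclude", "include",
              "exclude", "exclude", "include", "exclude", "exclude"]
reviewer_2 = ["include", "include", "exclude", "exclude", "exclude",
              "exclude", "exclude", "include", "exclude", "exclude"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"kappa = {kappa:.2f}")
```

In this invented example the reviewers agree on 9 of 10 articles, but because agreement expected by chance is already 0.54, kappa is lower than the raw agreement of 0.90.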

Results

A total of 476 articles were identified from the initial search of databases. After removing duplicates, 226 articles remained and were assessed. After the complete selection process, 19 were included in this review. Cohen’s kappa was found to be 0.76, indicating substantial agreement between the investigators. The selection process is illustrated in Figure 1.

Flowchart showing article selection process.

Data about the robotic apparatus, tests of function, and outcome measures for the tests were recorded and summarized. Additionally, the demographic information of participant groups was recorded to compare among studies.

After reviewing all articles, general themes were established and summarized. It is important to note that due to differences in methods and populations studied, a meta-analysis could not be completed as there was insufficient replication of parameters. Correlations between clinical and robotic measures of function could have been combined in a meta-analysis had there been sufficient replication among studies.

Study populations and study design

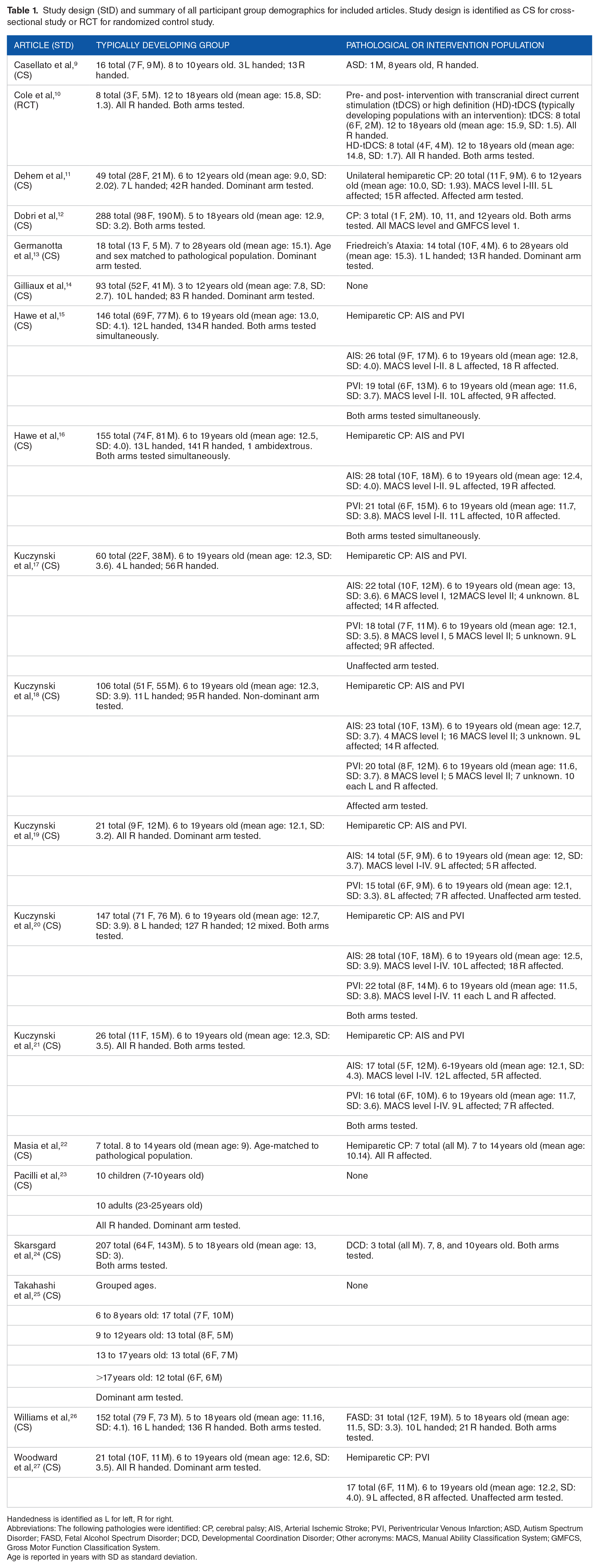

The demographics and the study design of each study are summarized in brief in Table 1.

Study design (StD) and summary of all participant group demographics for included articles. Study design is identified as CS for cross-sectional study or RCT for randomized controlled trial.

Handedness is identified as L for left, R for right.

Abbreviations: The following pathologies were identified: CP, cerebral palsy; AIS, Arterial Ischemic Stroke; PVI, Periventricular Venous Infarction; ASD, Autism Spectrum Disorder; FASD, Fetal Alcohol Spectrum Disorder; DCD, Developmental Coordination Disorder; Other acronyms: MACS, Manual Ability Classification System; GMFCS, Gross Motor Function Classification System.

Age is reported in years with SD as standard deviation.

Most of the articles included within this review discussed the study of typically developing children as compared to pathological populations (15 of 19 articles). In 11 of these articles, children with cerebral palsy provided the comparator.11,12,15-22,27 Four articles each evaluated a different pathological population: children with Friedreich’s Ataxia, Fetal Alcohol Spectrum Disorder (FASD), Autism Spectrum Disorder (ASD), and Developmental Coordination Disorder (DCD).9,13,24,26 The final 4 studied only typically developing populations.10,14,23,25

Of the included articles, 6 had sample sizes of 10 participants or fewer in at least 1 of the populations studied,9,10,12,22-24 and 8 had sample sizes of 50 participants or more in at least 1 of the populations studied,14-18,20,24,26 with 2 articles having over 200 participants in the control group.12,24 For larger sample sizes, the authors could make reliable group comparisons, whereas smaller sample sizes required authors to acknowledge the limitations of their results. Only 1 article justified the sample size with an a priori power analysis, while 1 other completed a power analysis of their results.17,25

Only 2 articles specifically stated age- and/or sex-matched control populations were used to compare against pathological populations.13,22 All other articles ensured that control populations and pathological populations had the same age range. The 2 articles with age- and/or sex-matched controls also had smaller population sizes (7 and 18 controls, respectively).13,22

Three articles explicitly stated that data were separated by participant sex or indicated that the analysis accounted for sex.12,24,26 Only 4 articles considered the effect of participant sex on performance, including the article with age- and sex-matched controls.12,22,24,26 One of the articles explicitly tested for significant differences between male and female performance and did not find significant differences in any of the parameters due to sex.26

One article was a randomized controlled trial,10 with all others being cross-sectional studies.

Robotic devices used

Four robotic devices were used for evaluation of motor control, each of which is briefly summarized in Table 2. All articles discussed a robot that was either already commercially available or, in the case of the MIT Manus, the version of the robot before it was commercialized. One of the 4 robots (the PHANToM) can enable evaluation within a 3D workspace, with 6 degrees of freedom (DOF), while the others work in a planar, 2D workspace with only 2 DOF. These differences in workspaces and DOF restrict each robot to specific tasks and measurements of function. Additionally, only 1 of the 4 robots (the Kinarm Exoskeleton Robot Lab) can test both upper limbs simultaneously (bimanual tests), while the others focus only on unimanual tasks.

Information on the robots from articles included in review.

Abbreviations: 2/3D, 2/3-dimensional; DOF, Degrees of freedom.

Aspects of function being assessed with robotic tests and measures

All aspects of function, robotic tests, robotic measures, and clinical measures used in each article are summarized in Table 3. Thirteen articles compared robotic measures of performance to clinical outcome measures.10,11,13-22,27 The definitions and descriptions of each robotic and clinical outcome measure can be found in their respective articles.

Summary of upper-limb functions assessed, and robotic/clinical tests and measures.

Overall motor performance was most commonly assessed, and occurred in 11 articles.10-16,20,21,23,26 Evaluation of position sense was reported in 4 articles,10,17,19,27 while both motor adaptation/learning9,22,25 and kinesthesia10,18,19 were each assessed in 3 articles.

Robotic tests and measures of function

Eight articles included reports of both arms,10,12,15,16,20,21,24,26 9 assessed only 1 arm,11,13,14,17-19,23,25,27 and 2 did not specify.9,22 Two articles found a significant difference in performance between dominant and non-dominant arms,20,26 while the other 6 articles that tested both arms did not state significance.10,12,15,16,21,24

Point-to-point reaching tasks (without perturbations) were the most common task type, appearing in 10 articles.10-14,16,20,21,23,26 The point-to-point reaching task was the only task that had a similar experimental paradigm among different laboratory groups and robots. Generally, a virtual target would appear, and the participant would reach toward the target. The target distances and orientation, as well as the calculations of parameters, differed among robotic platforms.

The robotic measures of participant performance for point-to-point reaching tasks were also consistent among articles. These included at least 1 measure of path smoothness; however, the measures of smoothness differed among robotic configurations. The most common measures were the number of speed peaks (indicating a change in direction to correct for an error in movement)10,13,20,21,24,26 and different speed ratios,10,11,13,14,18,19,23 while normalized jerk of the movement was also used.13,23 Ideally these measures would be able to be combined and assessed in a meta-analysis, but due to differences in how the parameters were calculated, the data could not be combined.
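To make the smoothness measure concrete, the following is a minimal sketch of how the number of speed peaks might be counted from sampled hand positions. It is an illustration under stated assumptions and is not the calculation used by any of the robotic platforms reviewed: the function names, the sampling interval, the `min_speed` threshold, and the example trajectory are all hypothetical, and real data would normally be low-pass filtered before peaks are counted.

```python
import math


def speed_profile(xs, ys, dt):
    """Hand speed between consecutive planar samples taken every dt seconds."""
    if len(xs) != len(ys) or dt <= 0:
        raise ValueError("xs and ys must have equal length and dt must be positive")
    return [math.hypot(x1 - x0, y1 - y0) / dt
            for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:])]


def count_speed_peaks(speed, min_speed=0.0):
    """Count strict local maxima of the speed profile that exceed min_speed."""
    peaks = 0
    for prev, cur, nxt in zip(speed, speed[1:], speed[2:]):
        if cur > prev and cur > nxt and cur > min_speed:
            peaks += 1
    return peaks


# Hypothetical reach along the x axis, sampled at 100 Hz (dt = 0.01 s),
# containing an initial movement followed by one corrective sub-movement.
xs = [0.00, 0.01, 0.03, 0.04, 0.045, 0.065, 0.075, 0.08]
ys = [0.0] * len(xs)
n_peaks = count_speed_peaks(speed_profile(xs, ys, dt=0.01))
print(n_peaks)
```

A smooth, single-peaked reach would give 1 peak; each additional peak in this sketch corresponds to an additional sub-movement. Differences in choices such as filtering and thresholds are one reason values may not be directly comparable across platforms.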

Path following was also a common robotic task; however, the paradigms differed among robotic platforms. For example, with the NEPSY-II protocol,35 a digitized version of a paper and pen task required participants to follow a curvilinear path,9 while other robotic systems had participants trace a circle and a square shown on the screen.14,23 The fundamental assessment and abilities being tested were the same between these 2 different paradigms, but the results could not be combined because of the differences in experimental setup and the methods by which measures were calculated.

Many other robotic tasks and measures were robot dependent and some were laboratory specific. For example, the free amplitude reaching task was only completed on the REAPlan robotic platform, and the test of kinesthesia was only completed using a Kinarm in a lab in Calgary, Alberta, Canada.10,14,18,19 While these tasks and measures were used in multiple studies, most of the overlap between populations and tasks was within specific research groups, not among groups.

Finally, the clinical measures used to assess different aspects of function were not standard among studies unless the studies were conducted by the same lab. Although this result is unsurprising when considering the number of potential clinical assessments that could be administered in typically developing populations, the limited overlap among studies that included the same pathological population is unexpected. For example, not all studies that included participants with CP recorded MACS level, which is a standardized self-assessment of upper-limb abilities.34

Summaries of study aims, statistical tests used, results, and biases/limitations

Table 4 presents the aims, statistical tests and comparisons made, summary of results, and noted biases or limitations for each study included in the review. The most common limitations were that sample sizes were not justified, and power analyses were not conducted. The most commonly noted bias was a sampling bias, as participants were often recruited from pre-existing research databases or through specific physicians.

Description of aims of studies, statistical tests used, summary of results, and noted biases or limitations.

Abbreviations: CP, Cerebral Palsy; AIS, Arterial Ischemic Stroke; PVI, Periventricular Venous Infarction; MACS, Manual Ability Classification System; ANOVA, Analysis of Variance; ANCOVA, Analysis of Covariance; BOTMP, Bruininks-Oseretsky Test of Motor Proficiency; CST, corticospinal tract; AHA, Assisting Hand Assessment; CMSA, Chedoke-McMaster Stroke Assessment; DCD, Developmental Coordination Disorder; FASD, Fetal Alcohol Spectrum Disorder; ASD, Autism Spectrum Disorder; PPB, Purdue Pegboard; (HD-)tDCS, (High Definition-)transcranial direct-current stimulation.

Discussion

This systematic review aimed to determine what robotic tools and measures are being used to quantify upper-limb function in typically developing children and youth. After duplicates were removed, 226 articles were assessed, 19 of which were included. Four robotic devices were used in the evaluations. Most of the articles (15 of 19) compared typically developing children to children with different pathologies, most commonly cerebral palsy. The most common robotic tests were point-to-point reaching tasks (without perturbations) and path following tasks. The most frequent measures of performance included movement smoothness, reaction time, and path length ratio (the ratio between a perfectly straight path between 2 points and the hand path of the participant).

Study populations

Study populations typically consisted of fewer than 50 participants per group, but 8 articles had more than 50 participants in at least 1 group. This size is similar to studies of the adult population as found by Sivan et al in their systematic review of robotic upper-limb therapy in people with stroke. 2 However, the sample sizes were typically much larger than in the Chen and Howard systematic review that evaluated upper-limb robotic therapy in children with CP. 3 The increased sample sizes are promising as larger sample sizes lead to higher power of statistical results and more confidence in results and conclusions.

A study by Schönbrodt and Perugini used mathematical simulations to determine what sample size is needed to stabilize correlations. Their results indicated that sample sizes upwards of 250 datapoints are needed to stabilize a correlation in most cases. 36 Only 1 article included in this review had a sample size this large; however, that was the control group and the pathological population (children with CP) had only 3 participants. 12 The results from Schönbrodt and Perugini are important to consider for future research into the efficacy of robotic therapies. While studies with smaller sample sizes can give an indication of the efficacy of robotic therapies compared to traditional methods, the true difference will only be known once sample sizes reach 250 participants or more.
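The effect of sample size on the precision of a correlation can be illustrated with a short sketch. This is not the simulation method used by Schönbrodt and Perugini; it swaps in the standard Fisher z approximation for the confidence interval of a Pearson correlation, and the function name `correlation_ci`, the example correlation of 0.3, and the sample sizes are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def correlation_ci(r, n, confidence=0.95):
    """Approximate confidence interval for a Pearson r using Fisher's z."""
    if n <= 3:
        raise ValueError("n must be greater than 3")
    if not -1.0 < r < 1.0:
        raise ValueError("r must lie strictly between -1 and 1")
    z = math.atanh(r)                 # Fisher transformation of r
    se = 1.0 / math.sqrt(n - 3)       # standard error on the z scale
    crit = NormalDist().inv_cdf(0.5 + confidence / 2.0)
    return math.tanh(z - crit * se), math.tanh(z + crit * se)


# Hypothetical observed correlation of 0.3 at three sample sizes.
for n in (20, 50, 250):
    low, high = correlation_ci(0.3, n)
    print(f"n={n:3d}: 95% CI [{low:.2f}, {high:.2f}]")
```

Under this approximation, the interval around a correlation of 0.3 spans zero at 20 participants and narrows to roughly 0.18 to 0.41 at 250, which is consistent with the point that correlations from small groups should be interpreted cautiously.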

While only 2 articles stated age- and/or sex-matched control populations, all research articles stated that the age demographics of the control group were similar to the pathological population. The similarities in age ranges are important for work with children and youth as motor performance is known to improve significantly with age. 37 Matching age will be important for future research comparing robotic therapies to traditional therapy methods to ensure confounding factors, such as age-related performance differences, are minimized. The article that tested differences in male and female performance 26 did not find significant differences, which is consistent with other previously published data. These results indicate that it may not be necessary to have sex-matched controls, or sex-matched intervention groups.37,38

Robotic assessments

Only 1 robotic device could assess both arms simultaneously, and 8 of the articles assessed both hands of each participant. Two of the articles that tested and compared performance between the dominant and non-dominant arms of participants found significant differences. These results indicate that it may be important to separate dominant and non-dominant arms in analysis of unimanual tasks and to ensure that the hand dominance is considered when comparing between groups. The studies did not compare right versus left dominance, only dominant versus non-dominant arms.

Point-to-point reaching tasks (without perturbations) are common assessments of motor function as goal-directed reaching is used in many aspects of daily living. These types of tasks are included in different clinical assessments such as the Melbourne Assessment of Unilateral Upper Limb Function, Purdue Pegboard (PPB) test, and the Bruininks-Oseretsky Test of Motor Proficiency.30,32,39 By adapting point-to-point tasks to robotic platforms, more precision and objectivity can be attained.

Point-to-point reaching tasks occurred on all 4 robotic platforms and were typically used to quantify overall motor function. These robotic tasks are similar in nature to accepted clinical assessments of motor function. For example, the PPB consists of goal-directed point-to-point reaching. 39 Speed and accuracy of movement are important and emphasized in both the robotic point-to-point reaching tasks and the PPB. Robotic tasks and the PPB quantify different aspects of the reaching movement. The PPB gives aggregate measures of function (the number of pegs placed, and assemblies built), which is also affected by manual dexterity, and can identify impairment but not what part of the movement is impaired. Most of the robotic tasks do not measure manual dexterity but can quantify and differentiate aspects of reaching movements to give precise information on motor control differences identifying impairment. For example, robotic measures include the path length ratio (the ratio between the path followed by the participant and the shortest line between the 2 points), path smoothness, and total movement time. These 3 measures give an idea of different ways the point-to-point reaching may be affected, rather than simply identifying that it is impaired. A clinician administering the PPB may be able to make qualitative assessments, but there is no quantification of which part of the movement is most impaired. The Melbourne Assessment and Bruininks-Oseretsky Test have more specific outcome measures than the PPB that can help identify aspects of movements that are most affected by a pathology.
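The path length ratio and total movement time described above can be sketched as follows. This is a minimal illustration, not the calculation of any specific robotic platform: the function names and the example trajectory are hypothetical, the straight-line distance is taken between the first and last recorded samples (a platform may instead use the start and target positions), and the ratio follows the ordering given in this section (hand path divided by shortest line), so a perfectly straight reach gives a value of 1.

```python
import math


def path_length(points):
    """Total distance travelled along a sequence of (x, y) hand positions."""
    return sum(math.dist(p, q) for p, q in zip(points, points[1:]))


def path_length_ratio(points):
    """Hand path length divided by the straight-line start-to-end distance."""
    if len(points) < 2:
        raise ValueError("at least 2 samples are required")
    straight = math.dist(points[0], points[-1])
    if straight == 0:
        raise ValueError("start and end positions coincide")
    return path_length(points) / straight


def movement_time(points, dt):
    """Duration of the recorded movement for samples taken every dt seconds."""
    return (len(points) - 1) * dt


# Hypothetical trajectories in arbitrary length units.
straight_reach = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
curved_reach = [(0.0, 0.0), (3.0, 4.0), (6.0, 0.0)]
print(path_length_ratio(straight_reach))   # straight path
print(path_length_ratio(curved_reach))     # indirect path, ratio above 1
print(movement_time(curved_reach, dt=0.5))
```

Reporting which points define the straight line, and which quantity is the numerator, would help readers compare values across studies.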

The addition of perturbations to point-to-point reaching and path following tasks allows robotic assessments to evaluate aspects of function that may not be easily tested with existing clinical tests. Specifically, motor learning/adaptation to perturbations could not be directly tested with the clinical tests used by any of the included studies; however, robots could apply perturbations to assess how participants adapt and learn to compensate for external perturbations. These types of tests allow researchers to determine different mechanisms for how a pathology may affect a person’s motor function. They may also be clinically relevant as the tasks can measure how well someone can recover from an unexpected perturbation (such as an object shifting when it is being carried), or can be included in therapies to improve someone’s ability to adapt to unexpected perturbations.

While the precision and specificity of robotic measures can be helpful, the number of measures taken from 1 test can be overwhelming, and not all measures may be clinically relevant. For example, the object interception and avoidance task used in Hawe et al has 17 different performance measures, 15 and the point-to-point reaching task on the same robotic platform has 11 performance measures.12,26 The Melbourne Assessment has 16 tasks in the entire assessment, 30 similar to the object interception and avoidance task, but many of the articles used multiple robotic tasks for similar evaluations. This increased the number of performance measures available with the robot as compared to a single clinical test. Additionally, clinical tests verify that all subtasks and measures are clinically relevant, whereas the robotic tasks and measures have not been tested to the same degree for clinical significance. Finally, these clinical tests can be compared to existing normative ranges to identify impairment with overall scores. Many of the robotic tasks do not have normative ranges of performance against which to compare. Those that do include normative ranges are confined to each specific measure rather than overall measures of performance making comparison to impaired populations more difficult. As noted by Dobri et al, individual measures of performance from 1 task are not sufficient to identify impairment. 12 If robots are to be used to identify impairment of upper-limb motor function in children and youth, normative ranges across platforms are needed as well as aggregate measures of performance for each task in a similar manner to what exists for clinical tests.

Many robotic measures did not correlate with clinical measures of motor performance. The lack of correlation could be due to differences in the actual measure between the robotic and clinical tests, as well as measurement limitations of each tool.14

Data analysis

Many of the statistical tests require specific criteria to be met for them to be valid. For example, t-tests, ANOVAs, and ANCOVAs require data distributions to be Gaussian and variances to be equal between datasets. Seventeen articles completed tests that required Gaussian distributions of data,10-13,15-27 whereas 13 stated that the distribution was evaluated.10-13,15,18-20,23,24,26,27 One article ignored the results of the test for a Gaussian distribution as the data appeared to follow this distribution upon “visual inspection.”15 Seven of these articles either used a different test that does not require a Gaussian distribution or applied transformations to make the distribution Gaussian.9,13,17,18,20,24,26 Only 1 article stated explicitly that methods were undertaken to correct for unequal variance between datasets.13
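As one illustration of how unequal variance between groups can be handled, the sketch below computes Welch's t statistic with Welch-Satterthwaite degrees of freedom. This is an assumed example of a common correction and is not stated to be the method used in any of the included articles; the function name `welch_t` and the two small datasets are hypothetical. A p-value would additionally require the t distribution, which is outside the Python standard library used here.

```python
import math
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    na, nb = len(sample_a), len(sample_b)
    if na < 2 or nb < 2:
        raise ValueError("each group needs at least 2 observations")
    # Squared standard error contributed by each group (sample variance / n).
    va = variance(sample_a) / na
    vb = variance(sample_b) / nb
    if va + vb == 0:
        raise ValueError("both groups have zero variance")
    t = (mean(sample_a) - mean(sample_b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (na - 1) + vb ** 2 / (nb - 1))
    return t, df


# Hypothetical measurements for two groups with clearly different spread.
group_1 = [1.0, 2.0, 3.0, 4.0, 5.0]
group_2 = [2.0, 4.0, 6.0, 8.0, 10.0]
t_stat, dof = welch_t(group_1, group_2)
print(f"t = {t_stat:.3f}, df = {dof:.2f}")
```

Unlike the pooled-variance t-test, the degrees of freedom here shrink when the group variances differ, which makes the comparison more conservative.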

Limitations of research

Many of the included articles did not explicitly state the necessary conditions for statistical analyses. It is therefore unknown if the results of these analyses are accurate.

While many articles have large study populations (more than 50 participants in at least 1 group), most articles were limited by small sample size in at least 1 group. The articles with small populations did report the sample size as a limitation and stated the results were a proof of concept, rather than an in-depth analysis.

Limitations of review

One limitation of this review is that only 1 reviewer screened article titles and abstracts. Typically, this process is completed by at least 2 reviewers to reduce potential biases in article selection. Another limitation was that a meta-analysis was not completed due to the non-homogeneity of the study paradigms and robotic measures. While a meta-analysis could be completed on tasks that were used in multiple studies, repetition of participant data was included in several articles from the same lab; analysis that included the same participants more than once would skew the analysis.

Recommendations

In future studies and robot design, the same robotic tasks and measures should be used to quantify motor function. Increased homogeneity of study methods would enable meta-analyses to be effectively conducted to increase the statistical power of the findings. The authors recognize that this recommendation may be difficult to achieve as the robotic apparatuses are all commercially available, and cooperation between competitors to ensure consistency of tasks and measures across platforms is unlikely. As the popularity of these devices grows, the diversity of groups using each apparatus may accommodate future meta-analyses.

Similarly, it is recommended that future studies compare the same clinical tools and measures to assess motor function to facilitate comparisons among studies. The use of the same clinical measures would be easier to achieve than the same robotic tasks and measures as the different measuring tools generally are not directly competitive and are significantly cheaper than robots. The lower cost would allow researchers to have access to multiple clinical assessment tools to ensure their findings can be compared to other research in the same area.

Additionally, future researchers should consistently report testing hand/handedness, justify sample size and compute power analyses, and state whether requirements for statistical tests have been met. Testing hand/handedness is important to report as it allows other researchers to fully reproduce experiments and make more complete comparisons between previous work and their own. It is also important to justify sample size and compute power analyses to better understand the statistical strength of the study results. Finally, clearly stating if requirements for statistical tests have been met increases the confidence other researchers have in the results of the study.
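The recommendation to justify sample size can be illustrated with a simple a priori calculation. The sketch below uses the common normal approximation for comparing the means of 2 equally sized groups, n per group = 2((z_{1-alpha/2} + z_{power}) / d)^2, where d is the standardized effect size. The function name `n_per_group` and the effect sizes are assumptions for illustration; an exact calculation based on the t distribution gives slightly larger values, and other study designs require different formulas.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate participants per group for a two-sided, two-group comparison."""
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = NormalDist().inv_cdf(power)          # quantile for desired power
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


# Hypothetical standardized effect sizes (Cohen's d).
for d in (0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} participants per group")
```

Under these assumptions, a medium effect (d = 0.5) needs roughly 63 participants per group, which is more than most groups in the included articles.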

Contributions of review and implications for practice and research

Unlike previous research, this review has compiled and summarized robotic devices, tasks, and outcome measures that have been used to quantify upper-limb motor function in typically developing children and youth. It further summarizes those articles whose data were compared to pathological populations and related clinical measures. This compilation will allow clinicians and researchers to quickly and easily identify the devices, tasks, and measures currently available to quantify upper-limb function. Clinicians can now make educated decisions about task type and outcome measures that may be most useful for patient measurement. Researchers can more easily identify methods to ensure effective comparison across research groups as well as identify gaps in knowledge with respect to upper-limb function.

This review identified the need for normative databases of robotic tasks and outcome measures to enable the identification of impairment in individual children and youth, similar to clinical tests. The lack of normative databases prohibits impairment from being identified by clinicians without first collecting and analyzing their own database of results from typically developing children and youth. To make robotic devices usable and effective for clinicians, researchers will need to create these databases and make them readily available. There is precedent for this, as some robots do have normative databases for adults that are used to identify measures outside the typical performance ranges. 40
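A minimal sketch of how a normative database might be used to flag a measure outside the typical range is given below. It is an assumption-laden illustration rather than the method of any robotic platform: the function names, the 1.96 standard deviation limit, and the movement-time values are hypothetical, the normative values are assumed to be approximately Gaussian, and they are assumed to come from typically developing children in the same age band as the child being assessed.

```python
from statistics import mean, stdev


def normative_range(values, z_limit=1.96):
    """Lower and upper limits of the typical range (mean +/- z_limit * SD)."""
    if len(values) < 2:
        raise ValueError("at least 2 normative values are required")
    centre, spread = mean(values), stdev(values)
    return centre - z_limit * spread, centre + z_limit * spread


def is_outside_range(value, values, z_limit=1.96):
    """True when a single measure falls outside the normative range."""
    low, high = normative_range(values, z_limit)
    return value < low or value > high


# Hypothetical movement times (s) from typically developing children
# in one age band; not data from any study in this review.
norm_times = [1.0, 1.2, 1.4, 1.6, 1.8]
print(normative_range(norm_times))
print(is_outside_range(2.5, norm_times))  # slower than the typical range
print(is_outside_range(1.5, norm_times))  # within the typical range
```

Because motor performance changes with age, a usable database would need separate ranges, or an age-adjusted model, for each developmental stage.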

Conclusion

This systematic review was conducted to identify robotic tools and tests used in published literature to quantify upper-limb function in typically developing children. It also related these tools and techniques to outcome measures used for comparison to clinical populations. Fifteen of the 19 articles studied both typically developing and pathological populations, while 4 quantified motor function in only typically developing populations. While there was some overlap among studies in terms of population, robotic apparatus and tasks, and clinical measures of motor function, there was insufficient overlap to conduct meta-analyses. Future work should aim to use the same robotic apparatuses, tasks, and clinical measures to allow meta-analyses to be conducted to increase the statistical strength of the overall findings.

Footnotes

Author Contributions

SCD Dobri was involved in all stages of the production of the review and was the reviewer who screened titles and abstracts. HM Ready was involved in assessing full articles, writing, and editing the review. TC Davies was involved in developing the research questions and search string, solving disagreements on article inclusion at the full-text stage, and writing and editing the review.

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported through funding from the Natural Sciences and Engineering Research Council (NSERC) grant numbers 513272-17 and RGPIN 2016-04669, and the Ontario Graduate Scholarship (OGS).

Declaration of conflicting interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.