Abstract

Background:

Nearly 65% of justice–involved youth have a substance use and/or mental health disorder. Although evidence–based practices have been established for adolescents with co–occurring mental health and substance use disorders, these practices are not widely used in juvenile justice agencies due to environmental and organizational complexities.

Methods:

Our study builds on Juvenile Justice—Translational Research on Interventions for Adolescents in the Legal System (JJ–TRIALS), a multi–site cooperative research initiative of juvenile justice and partnering behavioral health agencies. We also integrate state and county–level data to support broader assessment of key drivers of implementation success.

Results:

We present an economics/systems conceptual model describing how the environmental context, systems organization, and economic costs of implementation can affect implementation outcomes. Comparison of intervention condition (Core vs Enhanced) and pre–implementation costs (High vs Low) found differences in insurance reimbursements and types, as well as agency staffing characteristics.

Discussion:

Efforts to implement new procedures or policies at a systems level must consider implementation outcomes in a broad context. Factors such as population demographics, primary care and behavioral health treatment capacity, unemployment rates, and public funding for treatment and other services are important in determining intervention success and sustainability.

Background

More than half of arrested or detained youth have a behavioral health disorder, encompassing both mental health and substance use disorders (SUDs). Approximately 1 in 5 justice–involved youth (20%) has an SUD. 1 Despite this high prevalence, only 15% to 23% of these youth receive treatment during detention or are linked to behavioral health services upon release.2,3 There remains a substantial unmet need for SUD treatment and other behavioral health services among justice–involved youth, which can only be addressed through coordinated efforts between juvenile justice and behavioral health agencies to support evidence–based screening, assessment, and referral to services. While mental health and SUD are often addressed by the same service providers, many services are delivered in specialty centers that focus on only one of these two areas.

Implementing evidence–based practices (EBPs) in juvenile justice and behavioral health systems is complicated because key components of the systems (for example, the financing, payment mechanisms, and organizational structure) can overlap. 4 For example, juvenile justice agencies receive funding from federal and state sources as well as from donor organizations, and each of these funding sources is used to support and pay for different services. Similarly, SUD treatment, and behavioral health services more broadly, have clinical components that can be billed to insurance companies or reimbursed through Medicaid, but also have other components related to behavioral health that are not reimbursed through the same mechanisms.

Although several EBPs have been established for adolescents with SUD, these practices have not been widely adopted by juvenile justice agencies or their partner behavioral health agencies. 5 Therefore, even among those justice–involved youth who do receive services, many are likely not receiving evidence–based care. Calling upon these agencies to change current practices requires not only clinical evidence of best practices but also consideration of the organizational, financial, and environmental barriers to these changes.6–8 Research in this area falls within the discipline of implementation science.

To inform efforts to improve uptake of EBPs in juvenile justice settings, this study was funded as an ancillary study to Juvenile Justice—Translational Research on Interventions for Adolescents in the Legal System (JJ–TRIALS), a multi–site cooperative research initiative funded by the National Institute on Drug Abuse (NIDA). JJ–TRIALS featured 2 randomly assigned implementation interventions, Core and Enhanced, which were focused on improving screening, assessment, and referral to behavioral health services among justice–involved youth with SUDs. To support the evaluation of Core versus Enhanced, we conducted cost analyses to estimate total intervention cost and cost per implementation phase. These efforts provided data on the resources and financial burden of the interventions, but there are many other factors—at agency, county, and state levels—that likely affect implementation success. This article builds on the JJ–TRIALS study design and data elements by integrating primary data from JJ–TRIALS, including detailed implementation costs, with secondary data sources that describe systems–related elements outside of the intervention.

The purpose of this research is to present a more general model for considering implementation that emphasizes the importance of context and setting, using JJ–TRIALS as an example. While most multi–site trials focus on balancing randomization based on population characteristics, our premise is that other factors relating to the context (eg, financing, staff load, and reimbursement rates) are stronger policy levers that may link directly to improved implementation.

Conceptual model

Most of the implementation science literature featuring SUD treatment interventions has focused on efficacy or effectiveness of implementing new technologies (eg, mobile phone applications) in traditional modalities of SUD treatment or primary care. Only a few studies have examined the interplay between unique systems (eg, justice, health, and school), contextual factors (eg, organization characteristics and culture), environmental factors (eg, sociopolitical and financing), and implementation success.9–13

Systems analysis complements implementation research to inform practical questions regarding viability, scalability, and sustainability of adopting new practices in different settings. Only a few studies have examined these concerns—mainly pertaining to the adoption of new technologies in health services delivery,14,15 or examining EBP implementation barriers in child services sectors, including juvenile justice. 10 To expand this important body of research and promote interagency collaboration among different sectors, additional systems–focused studies are needed to inform stakeholders of what types of investments (eg, personnel, facilities, and data systems) lead to more efficient implementation and better outcomes. Understanding the extent to which resource allocation and barriers to different implementation strategies may vary by agency or setting is also important.

We developed a conceptual model to guide our research (Figure 1). The conceptual model builds on the following theories and frameworks from both implementation science and systems analysis that encompass the multi–level and overlapping nature of the juvenile justice and behavioral health services delivery systems: (1) Exploration, Preparation, Implementation, Sustainment (EPIS); (2) Stages of Implementation Completion (SIC) framework; (3) Andersen’s Healthcare Utilization Model; (4) Social–Ecological Model; (5) Control Knobs Framework; and (6) the Cost of Implementing New Strategies (COINS) model. There is naturally a considerable amount of overlap between these frameworks, but also gaps regarding economic analysis, budget impact, and funding/reimbursement mechanisms. Our model both integrates these frameworks and fills these gaps.

Figure 1. Overlapping systems conceptual framework to evaluate implementation of behavioral health interventions in juvenile justice settings.

The primary framework guiding the main JJ–TRIALS protocol is the EPIS framework developed by Aarons et al. 16 This framework establishes 4 phases of change across and within organizational systems. For each phase, there are measures describing system–level factors (outer context) or within–organization factors (inner context) that can be targeted for change based on selected implementation goals. Additional elements from the SIC framework 17 were incorporated to define JJ–TRIALS’ 3 implementation phases (Core Support Activities, Experiment, and Post–Experiment) as well as the benchmarks for evaluating agency transitions throughout the implementation process. The COINS model 18 provided us with an example of how to map economic variables to the stages of implementation completion identified in SIC.

Andersen’s 19 Healthcare Utilization Model describes how 3 interconnected factors (predisposing, enabling, and need) influence utilization of health care services. Predisposing factors relate to individual characteristics such as gender or race/ethnicity that can be associated with higher or lower levels of health care utilization. For example, among justice–involved youth, males are more likely to have an SUD, whereas females are more likely to have major depression. Race and ethnicity also affect the prevalence of SUD among justice–involved youth, with Non–Hispanic white and Hispanic youth having a higher prevalence of SUD than African American youth. 20 Enabling factors are external to the individual (eg, family support or health insurance) and promote access to health care services. Finally, need factors relate to the individual’s actual or perceived need for health care services. 21

The Social–Ecological Model and the Control Knobs Framework provided additional context for the economic/systems conceptual model. The Social–Ecological Model has been promoted as a framework for violence prevention by the US Centers for Disease Control and Prevention (CDC) as well as the World Health Organization (WHO). This model describes how 4 overlapping levels influence violence through an interaction between individual, relationship, community, and societal factors.22,23 This model has also been used to explain how SUDs and mental illness mediate the impacts of ecological factors in the commission of violence by youth. 24

The Control Knobs Framework takes a broader system perspective linking 5 main inputs to the system, also called control knobs, to intermediate indicators of system performance and system outputs. The 5 control knobs are financing, payment, organization, regulation, and behavior. The 3 intermediate indicators of system performance are access, efficiency, and quality. The 3 main outputs to measure the performance of a health system are health outcomes, financial risk protection, and user satisfaction. This framework has been used in countries around the world to understand and analyze how to improve health systems. 25

Table 1 summarizes each of these models as well as the factors that apply to our conceptual approach. Figure 1 shows our conceptual model, which builds on previous models. Our visualization of how environmental context affects organizations and downstream individual outcomes is informed by the EPIS model, Andersen’s Healthcare Utilization Model, and the Social–Ecological Model. Selection of environmental and organizational variables to include in our model is also informed by the EPIS model. Relevant variables from the EPIS model that we also included are as follows: funding, patient need, organizational policies, and staffing characteristics. Other organizational variables in our model, including financing and policy variables, are informed by the Control Knobs Framework. Finally, we rely on the COINS model to inform measurement and visualization of implementation costs as stratified across implementation stages identified in the SIC model.

Table 1. Foundational frameworks informing overlapping systems and economic analysis of JJ–TRIALS.

Abbreviation: JJ–TRIALS, Juvenile Justice—Translational Research on Interventions for Adolescents in the Legal System.

In our model, the 2 left boxes represent environmental inputs and organizational structure which are key drivers of behavioral health service outcomes. The focus of this article is on identifying key variables in the left boxes and data sources that can be integrated with implementation intervention trials to better control for—or measure directly—the broader context within which a randomized trial occurs. Inherent to these key inputs are the implementation intervention activities (by phase) and associated costs, which are often not measured alongside the implementation intervention trial. We see this as an urgent gap in the implementation science field, especially considering that demonstrating effectiveness does not mean the intervention is cost effective or fiscally sustainable over time.

Environmental context

Systems are by nature multi–level, multi–agency, and multi–stakeholder. A system can be defined broadly (for instance, the global technology system) or narrowly (for instance, a town’s public safety workforce). In JJ–TRIALS, the overlapping justice and behavioral health systems are at the county level. Organizational and environmental factors which likely affect the implementation of Core and Enhanced interventions are shown in Figure 1. Environmental factors could include federal or state policies that stipulate insurance coverage, block grant funding, and age of eligibility for juvenile justice services. Such factors would also directly affect practices surrounding behavioral health services (at the agency or systems level). For example, state policies such as expansion of Medicaid can create a more robust behavioral health services delivery system while also increasing access to that system 20 for youth exiting the juvenile justice system. Other environmental factors could include regional socio–demographics such as racial segregation, which can affect behavioral health service availability. 26

Organizational context

We also capture how an agency is structured including such factors as organizational culture, practices, and internal policies. Such factors are likely influenced by the greater environmental context and the details of program implementation. Program implementation includes the specific processes and costs associated with staffing and other delivery mechanisms of an organizational program or policy. The implementation of a behavioral health program would clearly affect the utilization of behavioral health services. However, as discussed in previous frameworks, there are also individual youth characteristics which interact with the organizational implementation to affect utilization. For example, multiple studies have found a history of racial disparities in referrals to behavioral health services from the juvenile justice system. 27 In this example, the individual youth characteristic of race interacts with organizational characteristics leading to differential access to treatment within the system.

Methods

JJ–TRIALS data

JJ–TRIALS recruited 36 juvenile justice agencies in 7 states to participate in the implementation intervention trial, with each agency (or “site”) representing a unique county. Two sites dropped out of the study, leaving a final sample of 34 juvenile justice agencies (34 counties), comprising juvenile probation offices and juvenile drug courts. The JJ–TRIALS protocol featured a cluster randomized design with a 3–wave roll–out. Within each state, participating counties were randomly assigned to Enhanced or Core during their respective wave. The final sample featured 17 Core and 17 Enhanced sites (across all waves) in 7 states. This design has been commonly used in service delivery and implementation research. 28

The JJ–TRIALS protocol covered 3 implementation phases: Core Support Activities (ie, pre–implementation/pre–randomization), Experiment (examined as early and late experiment phases), and Sustainment (following withdrawal of intervention activities). Under Core Support Activities, all sites received training in data–driven decision–making (DDDM) strategies to guide agencies through the process of implementing EBPs. This phase was conducted over a 6– to 9–month period before randomization to the study conditions Core or Enhanced. DDDM was a process by which key stakeholders within a system or agency collected, analyzed, and interpreted data/information to inform priorities and refine practices. 29 This process entailed selecting a goal (eg, increase referrals to evidence–based treatment) and incorporating a “goal achievement training” plan. While DDDM principles were expected to facilitate change, organizations needed additional support to apply these principles and to make changes that were to be successful and sustainable. The Enhanced arm included all Core Support Activities plus 12 months of active facilitation during the Experiment phase. Active facilitation was provided by an Implementation Facilitator, who worked directly with the juvenile justice agencies and their behavioral health partners to promote better screening, assessment, and linkage to care among youth identified as having an SUD. Knight et al 30 provided a full description of the JJ–TRIALS protocol.

The main outcomes being measured through JJ–TRIALS were defined along the Juvenile Justice Behavioral Health Cascade, 31 inspired by the HIV Care Cascade. 32 The Behavioral Health Cascade tracked unmet substance use treatment needs and gaps in service delivery through 6 activities: screening for SUD, assessment of need for SUD treatment, referral to SUD treatment, SUD treatment initiation, treatment engagement, and participation in continuing care.
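As an illustration of how the cascade can be summarized, the following minimal Python sketch computes, for each activity after screening, the share of youth at the previous step who reached it. The step labels, the function name `cascade_retention`, and any counts passed to it are hypothetical and are not JJ–TRIALS data or the study's measurement code. Treating the cascade as step–to–step conditional proportions is also an assumption.

```python
# Hypothetical labels for the 6 cascade activities named in the text.
CASCADE_STEPS = [
    "screened",
    "assessed",
    "referred",
    "initiated",
    "engaged",
    "continuing_care",
]


def cascade_retention(counts):
    """Share of youth at each step relative to the previous step.

    counts maps each label in CASCADE_STEPS to a number of youth.
    Returns a dict keyed by every step after the first; the value is
    None when the previous step has no youth (ratio undefined).
    """
    retention = {}
    for previous, step in zip(CASCADE_STEPS, CASCADE_STEPS[1:]):
        denominator = counts[previous]
        retention[step] = counts[step] / denominator if denominator else None
    return retention
```

A low ratio at a given step (for example, referred relative to assessed) would flag where youth are lost along the cascade, which is the kind of service gap the cascade is intended to expose.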

JJ–TRIALS provided several key data sources for this study. First, JJ–TRIALS conducted a national survey of juvenile justice agencies and behavioral health providers to understand the current state of juvenile justice and behavioral health systems, including county–level organizational characteristics, financing, youth case flows, and services provided. The national sample included all the Core and Enhanced counties within the JJ–TRIALS study. Data from the national survey were used to identify organizational variables from juvenile justice agencies and behavioral health partners. Second, we conducted supplementary economic analyses to estimate the costs incurred by sites during the pre–implementation phase. The cost analysis measured the activities during the Core Support Activities phase (pre–randomization/pre–implementation) and included time and other resources invested in meetings, calls, travel, and other activities during this period for both Core and Enhanced sites. A manuscript describing all aspects of the implementation intervention cost analysis of JJ–TRIALS is in preparation. 33

Secondary data sources

Secondary data to capture the spectrum of contextual factors were identified from national data sets, government reports, and other public sources.34–40 Data extraction was done for relevant years, counties, and states. County–level data were conceptually mapped directly to each Core and Enhanced site (geographic mapping was not conducted). Available data from 2010 to present were extracted to provide historical context for environmental factors when possible.
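The linkage described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the study's actual procedure: the field names (`county_id`, `year`), the function `attach_county_data`, and the tie–breaking rule are hypothetical. The 2010 cutoff comes from the text, and the default target year of 2015 follows the note to Table 3 ("2015 or closest available year").

```python
def _year_rank(record, target_year):
    # Smaller is better: distance from the target year first, then
    # prefer the later year on ties (the tie rule is an assumption).
    return (abs(record["year"] - target_year), -record["year"])


def attach_county_data(sites, county_records, target_year=2015, earliest_year=2010):
    """Return one merged dict per site.

    Each site is joined to the record for its county whose year is
    closest to target_year, ignoring records before earliest_year.
    Sites with no usable county record are returned unchanged.
    """
    chosen = {}
    for record in county_records:
        if record["year"] < earliest_year:
            continue  # the text extracts data from 2010 onward
        current = chosen.get(record["county_id"])
        if current is None or _year_rank(record, target_year) < _year_rank(current, target_year):
            chosen[record["county_id"]] = record

    merged = []
    for site in sites:
        county = chosen.get(site["county_id"], {})
        merged.append({**county, **site})  # site fields win on any key clash
    return merged
```

Keeping the selected `year` in the merged row makes it easy to report which data year backs each site, which matters when the closest available year differs across counties.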

Conceptually mapping variables and identifying databases

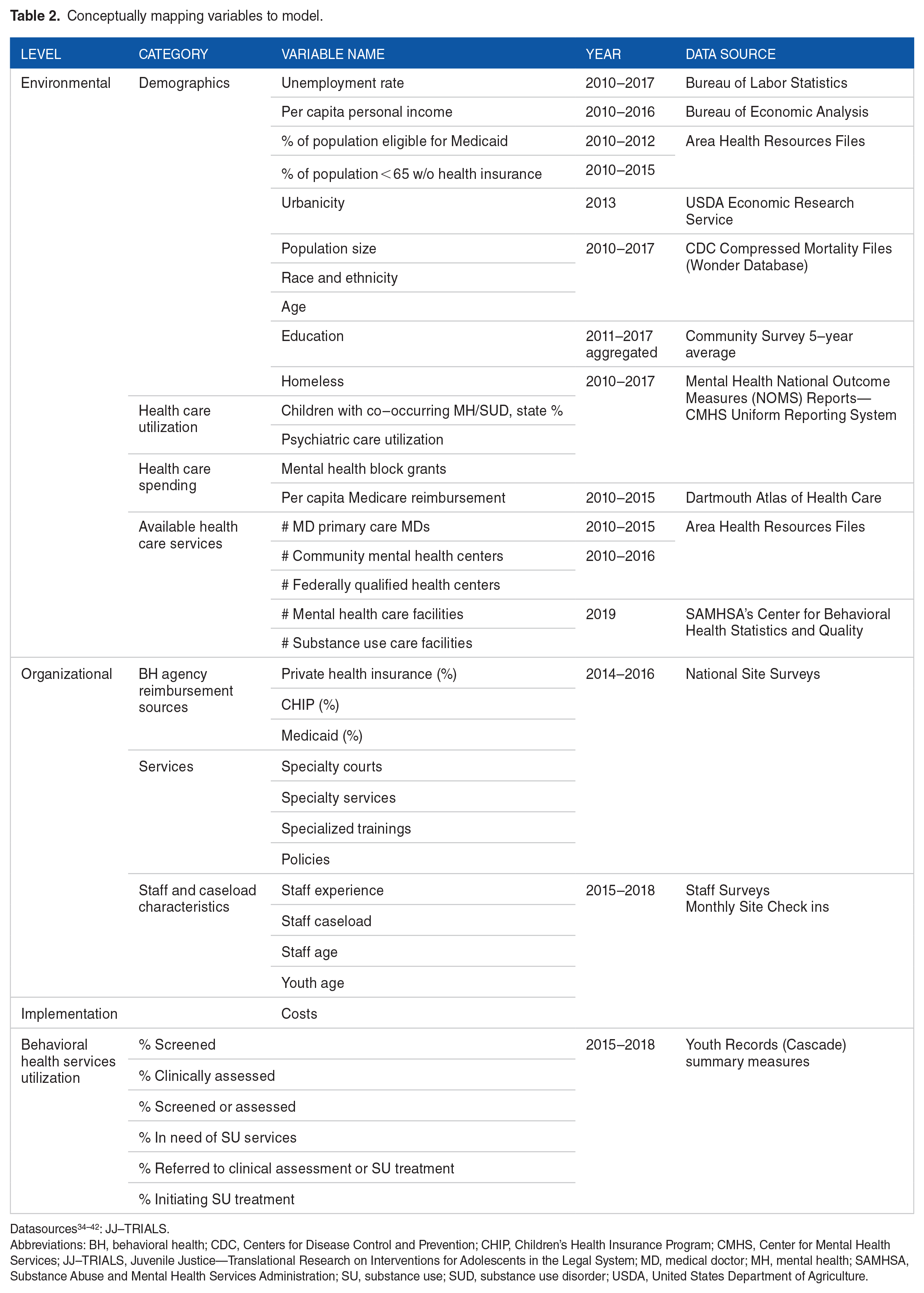

Data included primary data from JJ–TRIALS, cost data from the implementation intervention, and secondary data from public sources. All data were integrated within this study to provide an example of how to apply our proposed conceptual model. Table 2 presents an overview of the categories in our conceptual model, along with specific variables and data sources that conceptually map to each category. The data sources for each variable in Table 2 are listed in the final column.34–42

Table 2. Conceptually mapping variables to model.

Abbreviations: BH, behavioral health; CDC, Centers for Disease Control and Prevention; CHIP, Children’s Health Insurance Program; CMHS, Center for Mental Health Services; JJ–TRIALS, Juvenile Justice—Translational Research on Interventions for Adolescents in the Legal System; MD, medical doctor; MH, mental health; SAMHSA, Substance Abuse and Mental Health Services Administration; SU, substance use; SUD, substance use disorder; USDA, United States Department of Agriculture.

As shown in Table 2, environmental factors comprised population “need indicators” and were grouped into 4 broad categories: demographics, health care utilization, health care spending, and available health care services. Demographic variables included markers of socio–economic status such as unemployment rate, per capita income, and education status, all factors that could affect implementation. Other demographic variables identified included population size, race, county age breakdown, and homelessness. Finally, health insurance status was captured through the percentage of the population eligible for Medicaid and the percentage of the population below 65 years of age without health insurance. Both variables were included in the environmental category to help understand how the broader US health policy, such as the Affordable Care Act, could affect JJ–TRIALS implementation.

Other environmental variables were placed into the health care utilization category. These variables included percentage of children with co–occurring mental health and SUDs, and psychiatric care utilization. Health care spending encompassed per capita state mental health block grant expenditures and Medicare reimbursement rates. Finally, supply of health care services was measured through the number of primary care medical doctors (MDs), the number of community mental health centers, the number of federally qualified health centers, the number of mental health care facilities, and the number of substance use care facilities. The rate of co–occurring mental health and SUDs was included, both because the rates were not available separately and because co–occurring disorders increased the risk of justice involvement. 43 Existing data on psychiatric care utilization encompassed 2 separate areas which included community mental health inpatient utilization per 1000 and state hospital utilization per 1000.

Organizational factors captured both juvenile justice agencies and their behavioral health partners. Three categories were used to describe these factors: funding, services, and staff/caseload characteristics. Funding variables identified whether juvenile justice agencies and their corresponding behavioral health partners reported receiving reimbursements from various types of health care financing. Financing sources included private health insurance, Children’s Health Insurance Program (CHIP), Medicaid, and shared funding between the agencies. Service variables identified the types of specialty courts, services, trainings, and policies used by the agencies. Staff characteristics captured staff experience, while caseload characteristics captured the average size of staff caseloads.

Pre–implementation costs were measured directly and included the costs to implement Core Support Activities leading up to randomization. Core Support Activities featured in–person trainings and pre–/post–training conference calls. The costs of travel and supplies were also measured. The main components of these costs were staff time and, in some sites, travel to trainings.

Analysis strategy

We conducted basic bivariate analyses to look for differences between Core and Enhanced sites in their broader context. 44 Data were relatively normally distributed, so t–tests of all variables from Table 2 were calculated to compare means between Core and Enhanced sites. Differences in urbanicity were tested via chi–square. We first examined differences between Core and Enhanced sites at the environmental level to test the success of randomization. We then examined differences between Core and Enhanced sites for organizational variables to understand how characteristics within an agency may influence outcomes. Key environmental and organizational variables that overlapped with broader US health care policy (unemployment rate, income level, Medicaid eligibility, Medicare reimbursement, behavioral health funding, and select staff characteristics) were used to examine how the pre–implementation costs that agencies incur to implement new practices might be associated with these variables. To incorporate intervention costs, we stratified sites by high and low pre–implementation costs, as compared with the mean overall costs. These costs included the total costs of receiving Core Support Activities during the pre–implementation phase. Experiment phase costs and behavioral health services data are still being collected. Costs were also stratified by Core and Enhanced categorization for direct comparison.
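The three steps of this strategy (a two–group comparison of means, a chi–square test on a category–by–arm table, and a high/low cost split at the overall mean) can be sketched with the Python standard library. This is a minimal illustration, not the study's analysis code. The text says only "t–tests", so the use of Welch's unequal–variance form is an assumption, as are the function names and the rule that a site exactly at the mean is labeled low. The functions return test statistics and degrees of freedom only; p values would come from a statistics package.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(group_a, group_b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    var_a = variance(group_a) / len(group_a)  # squared standard error, group A
    var_b = variance(group_b) / len(group_b)  # squared standard error, group B
    t_stat = (mean(group_a) - mean(group_b)) / sqrt(var_a + var_b)
    df = (var_a + var_b) ** 2 / (
        var_a ** 2 / (len(group_a) - 1) + var_b ** 2 / (len(group_b) - 1)
    )
    return t_stat, df


def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom.

    table is a list of rows of observed counts, e.g. one row per arm and
    one column per urbanicity category. Every row and column total must
    be positive, otherwise an expected count of zero causes a division error.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for row, row_total in zip(table, row_totals):
        for observed, col_total in zip(row, col_totals):
            expected = row_total * col_total / n
            stat += (observed - expected) ** 2 / expected
    df = (len(row_totals) - 1) * (len(col_totals) - 1)
    return stat, df


def split_by_mean_cost(costs):
    """Label each site 'high' if its cost exceeds the overall mean, else 'low'."""
    cutoff = mean(costs.values())
    return {site: ("high" if cost > cutoff else "low") for site, cost in costs.items()}
```

With 34 sites and many variables tested, a reader applying this approach may also want to consider how multiple comparisons affect the interpretation of individual p < .05 results.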

Results

As shown in Table 3, there is considerable variation across all sites in many environmental and organizational variables, although the bivariate analysis finds few significant differences (p < .05) between Core and Enhanced sites. None of the demographic variables, relating to the environmental component of the conceptual model, differ significantly between Core and Enhanced sites. For example, the unemployment rate is 5.3% on average across all sites, with no significant difference between Core and Enhanced sites. Similarly, the mean per capita income is approximately $43 000, and an average of 23% of the population across both Core and Enhanced sites is eligible for Medicaid. The percentage of sites that are urban, adjacent urban, or rural also does not vary significantly by intervention type. However, the rate of rural sites is over twice as high in Enhanced sites as in Core sites. There is also no significant difference between Core and Enhanced sites for the other race, age, and education variables or for homelessness. Regarding the health care utilization variables included in the model, there are no significant differences between Core and Enhanced, but Core sites do have (on average) a higher percentage of children with co–occurring mental health and SUDs (Core 3.5%, Enhanced 3.2%), more primary care physicians (Core 433, Enhanced 385), more community mental health centers (Core 1.1, Enhanced 0.6), more federally qualified health centers (Core 9.1, Enhanced 6.8), more mental health care facilities (Core 10.7, Enhanced 9.4), and more substance use care facilities (Core 17.5, Enhanced 11.9). While not significant, there is a large difference in mental health block grant funding by site type (Core $664 102.30, Enhanced $1 343 493.00).

Table 3. Pre–intervention environmental and organizational variables.

Data from 2015 or closest available year; urban (rural–urban continuum codes 1–3 = 1), adjacent urban (codes 4, 6, and 8 = 2), and rural (codes 5, 7, and 9 = 3).

Abbreviations: AA, associate of arts; BH, behavioral health; CHIP, Children’s Health Insurance Program; FQHC, federally qualified health centers; JJ, juvenile justice; MD, medical doctor; MH, mental health; SUD, substance use disorder.

p < .05.
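The urbanicity coding given in the note to Table 3 can be written as a small lookup. The sketch below is illustrative only: the function name `urbanicity_category` and the label dictionary are hypothetical, while the grouping of rural–urban continuum codes follows the note exactly (codes 1–3 = urban, codes 4, 6, and 8 = adjacent urban, codes 5, 7, and 9 = rural).

```python
URBANICITY_LABELS = {1: "urban", 2: "adjacent urban", 3: "rural"}

# Rural-urban continuum code -> 3-level category, as stated in the table note.
_CODE_TO_CATEGORY = {
    1: 1, 2: 1, 3: 1,  # urban
    4: 2, 6: 2, 8: 2,  # adjacent urban
    5: 3, 7: 3, 9: 3,  # rural
}


def urbanicity_category(continuum_code):
    """Map a rural-urban continuum code (1 to 9) to category 1, 2, or 3."""
    try:
        return _CODE_TO_CATEGORY[continuum_code]
    except KeyError:
        raise ValueError(f"unexpected continuum code: {continuum_code!r}") from None
```

Counting sites per category within each arm yields the contingency table used for the chi–square test of urbanicity.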

Some notable differences between Core and Enhanced sites are evident in reimbursement sources and staffing. The number of behavioral health sites that report receiving reimbursements from CHIP and some staffing characteristics vary significantly between Core and Enhanced sites. For example, 68.8% of behavioral health sites in Enhanced areas report that they receive reimbursements from CHIP, compared with 33.3% of behavioral health sites in Core areas. Regarding staff, juvenile justice agencies in Enhanced sites tend to have more experienced staff as well as a higher caseload per staff than juvenile justice agencies in Core sites. All other organizational variables are relatively equally distributed across Enhanced and Core sites. However, there are some notable differences between Core and Enhanced sites in 3 key areas: (1) the percentage of juvenile justice agencies reporting pooled funding with behavioral health agencies (Enhanced 60%, Core 40%), (2) the percentage of juvenile justice agencies reporting no reimbursement for some services (Enhanced 12.5%, Core 0%), and (3) the percentage of behavioral health agencies reporting contracts with juvenile justice agencies (Enhanced 18.8%, Core 38.9%). Core sites have more specialty programs than Enhanced sites. Specialty programs include specialty courts, diversion programs, specialized pre–adjudication school, and re–entry programs. On average, there are about 2 specialized juvenile justice staff trainings per year across both Enhanced and Core counties, which have between 3 and 4 system–level reforms (Table 3). Pre–implementation costs are significantly higher in Enhanced versus Core sites (p < .05).

Figure 2 presents variables from Table 3 that differed significantly. Measures are stratified by both intervention type (Core and Enhanced) and pre–implementation costs (high and low). Results that compare intervention type show that the average juvenile justice caseload per staff is higher in Enhanced sites relative to Core sites (caseload in Core = 13.9, caseload in Enhanced = 23.7, p < .05). The average years of experience among justice agency staff are also higher in Enhanced sites relative to Core sites (15.5 years in Enhanced, 13.3 years in Core, p < .05). In addition, a higher percentage of Enhanced sites receive CHIP reimbursement (Enhanced = 68.8%, Core = 33.3%, p < .05). Results for pre–implementation costs show that a significantly lower percentage of sites with high pre–implementation costs receive CHIP reimbursement (high = 35.7%, low = 60.0%, p < .05) and Medicaid reimbursement (high = 78.6%, low = 95.0%, p < .05) as compared with low–cost sites. Regarding staff characteristics, the average behavioral health agency caseload per staff (high = 23.0, low = 7.0, p < .05) and the mean years of experience for juvenile justice staff (high = 15.2, low = 13.8, p < .05) were higher in high–cost sites than in low–cost sites.

Figure 2. Comparison of pre–implementation environmental and organizational variables by intervention type and costs.*

Discussion

To our knowledge, this is the first study to link a multi–site randomized trial of EBP implementation interventions with an economics/systems analysis to provide a more nuanced examination of the context in which the trial occurs. Juvenile justice and behavioral health stakeholders will benefit from a detailed description of how to conduct theoretically guided implementation research and use these results as a general model for considering implementation that emphasizes the importance of context and setting to make policy–driven decisions. Given the need for EBPs in behavioral health care, notably for justice–involved youth, this study fills an important gap by describing how factors typically considered outside of the service delivery system can be integrated into an analysis of care delivery, implementation, and sustainability. As previously discussed, while justice–involved youth enter the juvenile justice system with high prevalence of behavioral health disorders, they are rarely connected with the services they need.

To better understand the disconnect between juvenile justice and behavioral health systems, this study works from a novel implementation science trial using enhanced facilitation and DDDM to help juvenile justice agencies work more efficiently with their behavioral health partners and engage youth with needed SUD treatment and other services. We have broadly considered environmental factors, organizational factors, and implementation costs, and how they affect the utilization of behavioral health services. We have also operationalized social determinants of health within a juvenile justice context.

In our preliminary analysis comparing sites by intervention condition (Core vs Enhanced) and pre–implementation costs, there were few significant differences, demonstrating a robust randomization through the trial itself. We did, however, find differences in insurance reimbursements and types, as well as agency staffing characteristics. Given the relationship demonstrated in previous research between Medicaid insurance status and health outcomes for justice–involved youth, 45 this is an important finding that will be explored in future planned analyses. Lower reimbursement rates by both CHIP and Medicaid are also likely linked to the environmental context described in our model. For example, states that have expanded Medicaid through the Affordable Care Act may be more likely to also have lower costs as Medicaid reimbursements will be covering more individuals and more services. Another potential explanation is our observed, but not significant, difference in mental health block grant funding. Since CHIP is funded as a block grant, the higher CHIP funding in Enhanced sites could be a result of the higher amount of mental health block grants that those sites received. If this is true, then it demonstrates how environmental variables in our overlapping framework can affect organizational service implementation within the JJ–TRIALS context.

In addition, while we did not find a statistically significant difference in urbanicity, the proportion of rural sites in the Enhanced condition was more than double that in the Core condition. This has implications for implementation costs, as more rural sites likely face additional costs due to travel distance between agencies and to training centers. Rural sites also likely have less robust existing markets for behavioral health services. Given that these markets determine the availability of treatment services and staff, this finding also has important implications for service delivery.

Regarding agency staffing characteristics, it is perhaps not surprising that sites with more experienced staff have higher pre–implementation costs. However, future studies that link staffing characteristics with health services outcomes will investigate this relationship further. For example, more expensive, but also more experienced, staff may lead to higher agency efficiency and yield better behavioral health cascade outcomes for youth. Staff caseload at both juvenile justice and behavioral health agencies may also be important in explaining these outcomes. Sites with high pre–implementation costs also have much higher mean behavioral health caseloads per staff member. This may be because sites with high costs, stemming from factors they cannot control such as Medicaid/CHIP reimbursement rates and staff experience, seek to cut costs in areas that they can control, such as caseload size. If this were the case, results would support calls for increased reimbursement for behavioral health services. While examining this relationship was outside the scope of this article, future studies should examine how such contextual factors influence implementation costs and cost effectiveness in meeting goals along the behavioral health cascade and youth outcomes.

The results of the above analysis point to actionable policies and practices that overlap with the environmental and organizational context and can influence the implementation of EBPs. While the results of this research are descriptive in nature, more powerful models can be developed to causally predict which environmental and organizational factors have the largest impact on outcomes. The outcomes that might be considered include costs, as implementing agencies and providers are continually trying to understand the most efficient way to use funds. Outcomes will also include behavioral health outcomes and utilization, to understand how environmental factors, such as reimbursement rates and Medicaid enrollment, and organizational factors, such as staff workload, can be changed to improve outcomes for justice–involved youth with SUD.

Conclusion

The application of our conceptual model to the implementation intervention study in JJ–TRIALS demonstrates the importance of identifying environmental factors outside of the traditional behavioral health care delivery system to evaluate the impact and sustainability of these interventions. This study provides a conceptual overlay of how environmental, organizational, and economic factors affect the downstream delivery of behavioral health services for justice–involved youth. We also build on previous models to describe how variables drawn from different data sources in a systems analysis can be mapped onto our conceptual model. Beyond serving as a foundation for future systems analysis on this topic, this study can also help practitioners identify actionable policy levers to connect justice–involved youth to needed behavioral health services. Future empirical studies will estimate environmental, organizational, and economic impact on behavioral health services delivery processes and outcomes.

Footnotes

Acknowledgements

The authors would like to thank the members of the JJ–TRIALS Cooperative for their support. They also thank Dr Craig Henderson for his review and helpful suggestions on a previous version of the paper.

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the National Institute on Drug Abuse (NIDA) at the National Institutes of Health (NIH; R21DA044378). JJ–TRIALS data collection was funded under the JJ–TRIALS cooperative agreement, funded by NIDA at NIH. The authors gratefully acknowledge the collaborative contributions of NIDA and support from the following grant awards: Chestnut Health Systems (U01DA036221), Columbia University (U01DA036226), Emory University (U01DA036233), Mississippi State University (U01DA036176), Temple University (U01DA036225), Texas Christian University (U01DA036224), University of Kentucky (U01DA036158), and University of Miami (U01DA036233) subaward to Brandeis University. NIDA Science Officer on this project is Tisha Wiley. The contents of this publication are solely the responsibility of the authors and do not necessarily represent the official views of the NIDA, NIH, or the participating universities or JJ systems.

Declaration of conflicting interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Authors’ Note

Author Contributions

DM: Made substantial contributions to 1) manuscript conception and design, 2) acquisition of data, and 3) was involved in revising the manuscript critically for important intellectual content. BFH: Made substantial contributions to 1) manuscript design; 2) acquisition, analysis and interpretation of data and 3) drafted and revised the manuscript. KEM: Made substantial contributions to 1) manuscript conception and design, 2) acquisition of data, and 3) was involved in revising the manuscript critically for important intellectual content. All authors read and approved the final manuscript.

Ethical Approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of the institutional and/or national research committee and with the 1964 Helsinki Declaration and its later amendments or comparable ethical standards. Data collection for JJ–TRIALS was granted Institutional Review Board approval from all 6 research institutions and the coordinating center. This article does not contain any studies with animals performed by any of the authors. Informed consent was obtained from all individual (human) participants included in the study.