Abstract

Algorithm-based clinical decision support (CDS) systems associate patient-derived health data with outcomes of interest, such as in-hospital mortality. However, the quality of such associations often depends on the availability of site-specific training data. Without sufficient quantities of data, the underlying statistical apparatus cannot differentiate useful patterns from noise and, as a result, may underperform. This initial training data burden limits the widespread, out-of-the-box use of machine learning–based risk scoring systems. In this study, we implement a statistical transfer learning technique, which uses a large “source” data set to drastically reduce the amount of data needed to perform well on a “target” site for which training data are scarce. We test this transfer technique with

Introduction

The rapid rise in the collection of clinical data, made possible through the use of electronic health records (EHR), has unlocked the potential for machine learning techniques to provide warning of adverse patient events. One such opportunity is the prediction of in-hospital mortality. When equipped with early notice of high-risk patients, clinicians may be better able to allocate valuable hospital resources, provide appropriate care, and improve patient outcomes.

Increasingly, machine learning techniques are being used to detect adverse health events in hospital settings.1–9 Unlike risk scores developed on general populations, such as the Modified Early Warning Score (MEWS), 10 the Sequential Organ Failure Assessment (SOFA), 11 the Acute Physiologic Assessment and Chronic Health Evaluation II (APACHE II), 12 the Medical Emergency Team (MET), 13 and the systemic inflammatory response syndrome, 14 machine learning–based predictors can be customized to distinct patient subpopulations or specialist care facilities by training on data from a “target” population. However, such training is often ineffective, given that minimal amounts of retrospective data are available to serve as the target training set at most institutions. This is an especially challenging problem for relatively rare outcomes of interest because there are few positive class examples in those cases. Target data scarcity presents a significant barrier to the development of predictors using data science techniques and to the adaptation of these predictors to widespread clinical settings.

Data scarcity can be overcome by supplementing examples from the target population with a “source” population, for which data are more abundant, in a statistical process known as

Methods

In this section, we describe the source and target data sets, the means by which they were processed, and the definition of the mortality gold standard, which was used to assign a positive or negative class label to each patient. We also describe the machine learning model and training algorithm and the transfer learning method that we used in these experiments.

Data sets and preparation

The source data were drawn from the MIMIC-III database, version 1.3, which includes more than 50 000 intensive care unit (ICU) stays (≥15 years of age) from Beth Israel Deaconess Medical Center in Boston, MA, between 2001 and 2012. The target data set used in these experiments, obtained from University of California San Francisco (UCSF), included 109 521 adult inpatient encounters (≥15 years of age) in the UCSF hospital system. We used admissions from June 2011 to March 2016, and the EHR-derived patient charts for these encounters included data recorded in multiple wards. The original MIMIC-III and UCSF data collection did not affect patient safety, and all data were de-identified in accordance with the Health Insurance Portability and Accountability Act Privacy Rule prior to commencement of this study. Hence, this study constitutes nonhuman subjects research, which does not require Institutional Review Board approval.

These two data sets differ in a variety of ways, most notably the wards and departments from which they are drawn within the hospital. In principle, closely matched data sets should be more amenable to transfer learning. However, MIMIC-III is a large, well-curated, publicly available data set containing patients who have been the subjects of intense monitoring, such that it represents one of the best available source data sets, in terms of quality. Furthermore, in keeping with the motivation of this article, it will often be the case that potential source data sets are mismatched with the target data set in one or more ways, and that the range of patients over which the target site would like to use a predictive system (here, all wards of the hospital, including the emergency department) may be substantially greater than the directly comparable setting in the source collection (ICU only).

In both data sets, the outputs of database queries were processed with Dascena’s custom algorithms, implemented in MATLAB (Mathworks, Natick, MA, USA). All available measurements of a given type (eg, heart rate) were ordered and screened for anomalous outliers using physiological threshold values. The data were binned into hourly windows, and all measurements of each measurement type were averaged (or summed, if appropriate) within a window. Many windows contained no measurements for particular channels; we applied causal, carry-forward imputation (zero-order hold) to carry forward the most recent past bin’s value for averaged measurements. If there were no previous measurements in a channel, a NaN (“not-a-number”) value was retained for the channel. We used measurements of (1) Glasgow Coma Scale (GCS), (2 and 3) systolic and diastolic blood pressures, (4) heart rate, (5) body temperature, (6) respiratory rate, and (7) peripheral oxygen saturation (Sp
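The binning and imputation steps described in this paragraph can be illustrated with a minimal Python sketch. This is not the MATLAB implementation used in the study; the function name `hourly_series`, the `(time_in_hours, value)` input format, and the `low` and `high` plausibility limits are assumptions made purely for illustration, and only the averaged (not summed) case is shown.

```python
import math

def hourly_series(measurements, n_hours, low, high):
    """Bin (time_in_hours, value) pairs into hourly means, then carry forward.

    `low` and `high` are hypothetical physiological plausibility limits;
    the thresholds actually used in the study are not given in the text.
    """
    sums = [0.0] * n_hours
    counts = [0] * n_hours
    for t, value in sorted(measurements):
        hour = math.floor(t)  # index of the hourly window containing t
        if not (0 <= hour < n_hours):
            continue
        if not (low <= value <= high):
            continue  # screen out anomalous outliers
        sums[hour] += value
        counts[hour] += 1

    series = []
    last = math.nan  # stays NaN until the first observation in the channel
    for hour in range(n_hours):
        if counts[hour] > 0:
            last = sums[hour] / counts[hour]  # mean within the window
        series.append(last)  # causal zero-order hold for empty windows
    return series

heart_rate = [(0.2, 80.0), (0.7, 90.0), (2.5, 100.0), (2.9, 400.0)]
print(hourly_series(heart_rate, n_hours=4, low=20.0, high=300.0))
# [85.0, 85.0, 100.0, 100.0]
```

Because the hold is causal, a window is never filled with a value observed later in the encounter, so no future information leaks into an earlier bin.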

Gold standard

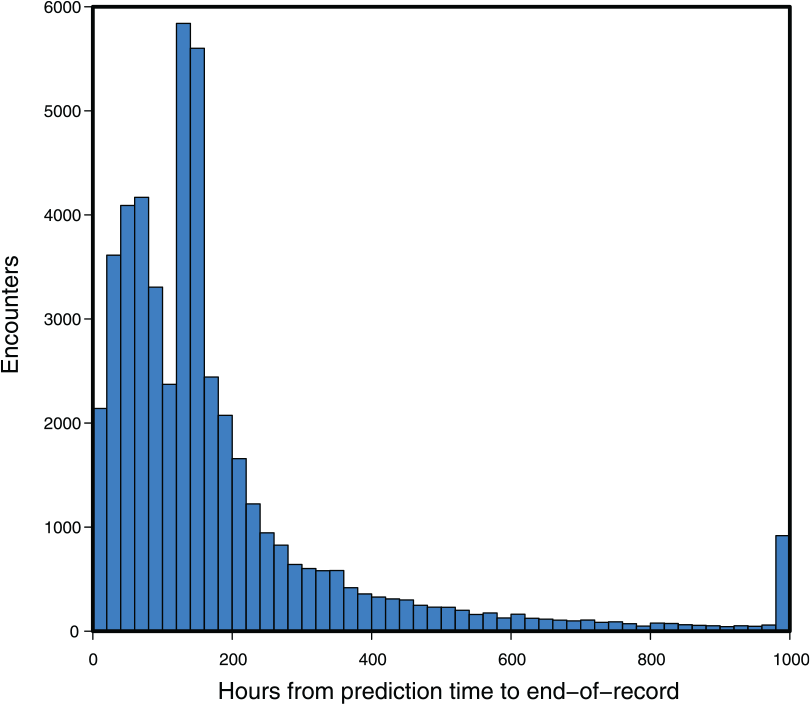

The clinical outcome of interest was in-hospital mortality. Each encounter was marked according to the following scheme: if the patient was recorded as dying in-hospital, and this death occurred during the present encounter, the encounter was marked as Class 1; otherwise, the encounter was marked as Class 0. Note that because the UCSF data set did not contain follow-up beyond the end of the encounter, some of the patients marked as Class 0 may have expired after leaving the hospital. The prediction was made at a particular point in the timeline of the encounter, defined for the UCSF patients as 5 hours after the first time the patient had any one of the measurements (1-7) recorded (excluding GCS). The choice of prediction time was designed to ensure that the patient was physically present and under observation for a number of hours before the prediction was computed. For the MIMIC-III set, the prediction time was selected as 24 hours preceding the last instance of the measurements listed above. These choices were necessary because both the MIMIC-III and UCSF data sets included inexact recording of admission, discharge, and transfer times. The time of the encounter’s end-of-record event (death or discharge) may have been close or distant, relative to when the prediction was made (Figure 1).
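As an illustration of the labeling and prediction-time rules above, the following Python sketch encodes them directly. The function names, the dictionary-based input, and the channel names (such as `"gcs"`) are hypothetical; times are expressed in hours from the start of the record.

```python
def class_label(died_in_hospital_this_encounter):
    """Class 1 if death occurred in-hospital during this encounter, else Class 0."""
    return 1 if died_in_hospital_this_encounter else 0

def ucsf_prediction_hour(first_seen, offset_hours=5.0):
    """UCSF rule: 5 hours after the first recording of any measurement except GCS.

    `first_seen` maps a channel name to the hour of its first recording,
    or to None if the channel was never recorded (hypothetical format).
    """
    times = [t for name, t in first_seen.items() if name != "gcs" and t is not None]
    if not times:
        return None  # prediction time cannot be determined
    return min(times) + offset_hours

def mimic_prediction_hour(last_measurement_hour, lead_hours=24.0):
    """MIMIC-III rule: 24 hours before the last recorded measurement."""
    return last_measurement_hour - lead_hours

print(ucsf_prediction_hour({"gcs": 0.0, "heart_rate": 1.0, "spo2": 2.5}))  # 6.0
print(mimic_prediction_hour(72.0))  # 48.0
```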

The time intervals between prediction time and the end-of-record event at University of California San Francisco (n = 48 249 with 1636 in-hospital mortality cases: 3.39% prevalence). As the record is discretized to 1000 hours at most, the end-of-record event in those cases beyond this limit was marked at 1000 hours. In such cases, the clinical outcome, death or discharge, still determined the gold standard label.

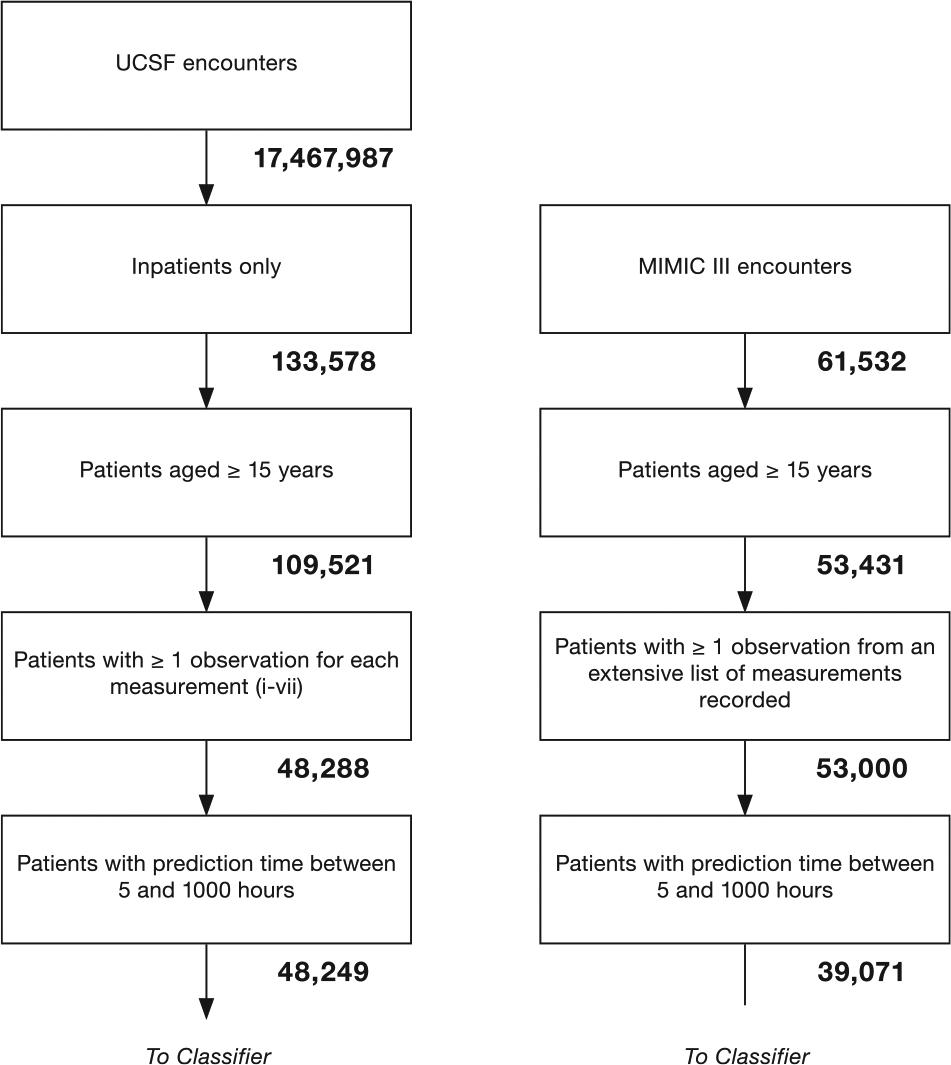

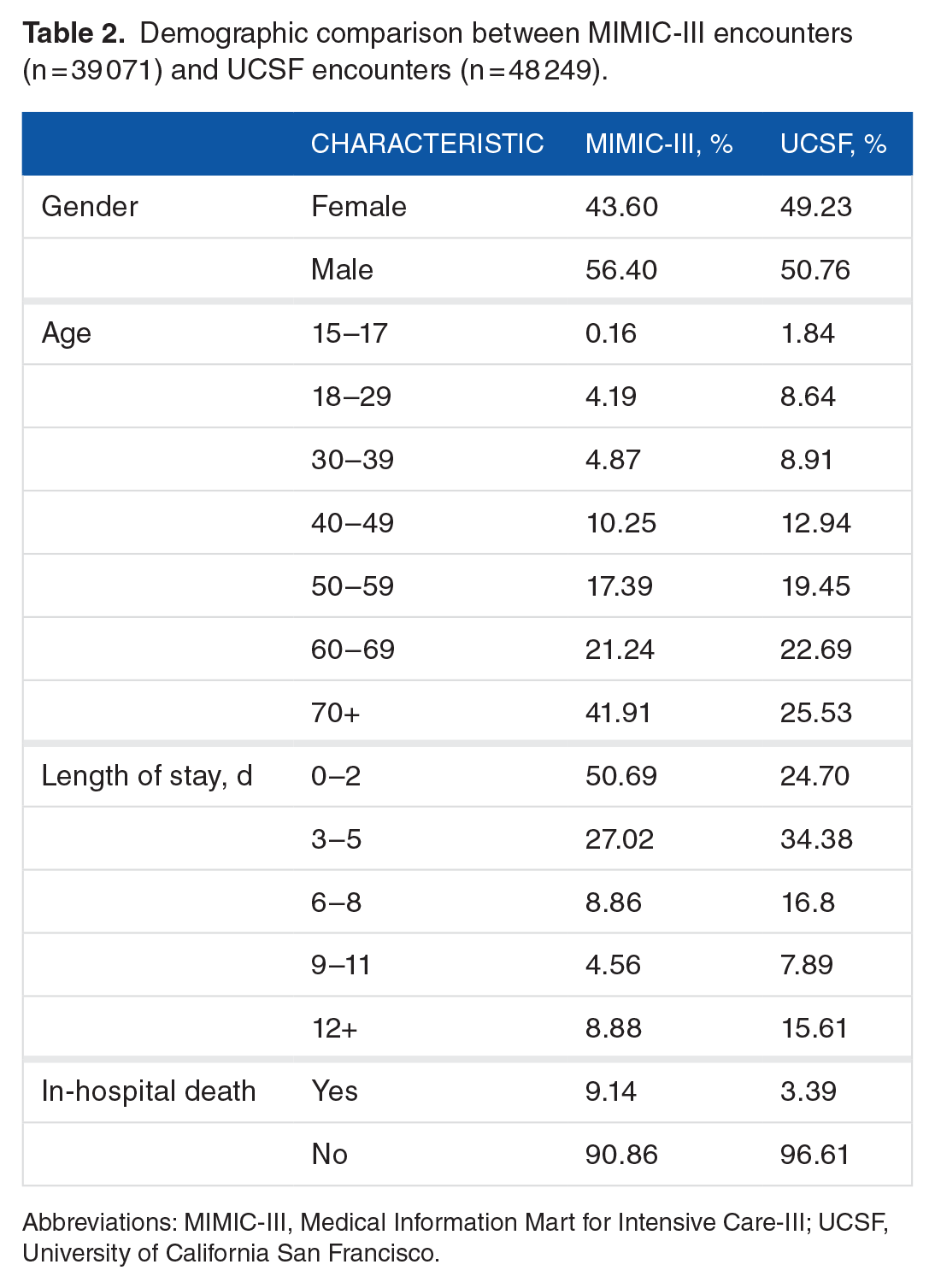

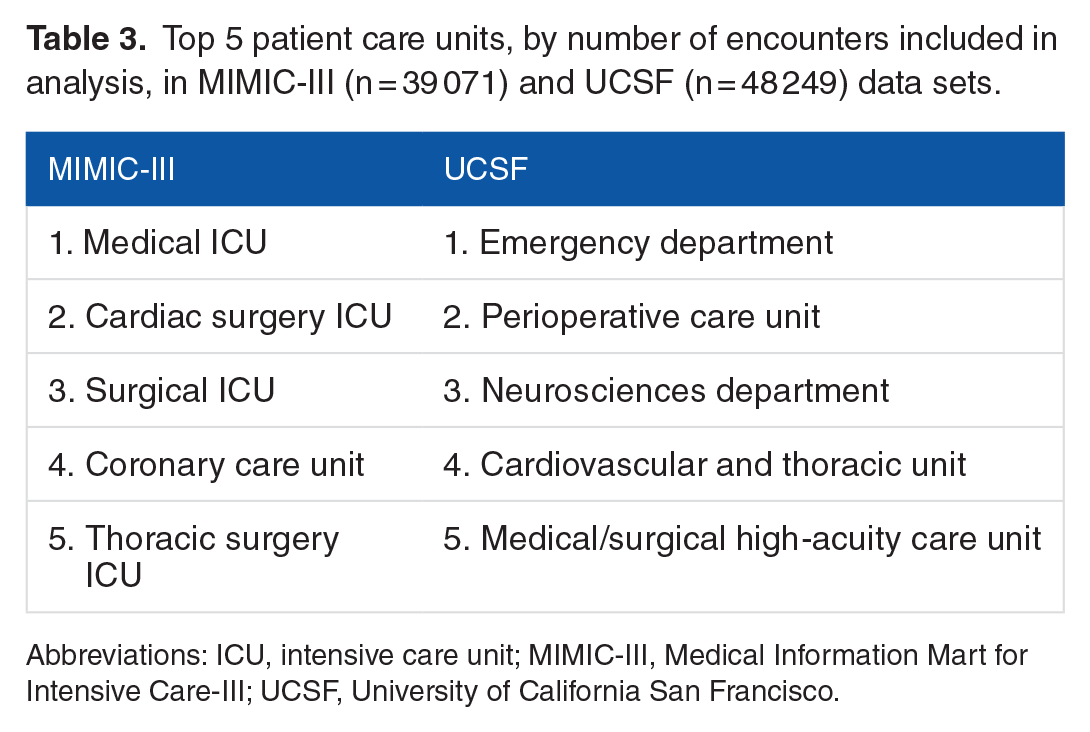

For both the MIMIC-III and UCSF data sets, we used the following procedures to determine which encounters should be excluded from further analysis. For UCSF, we first checked whether each patient had at least one of each of the measurements (1-7) at some point during the encounter. In total, 61 233 out of 109 521 encounters failed to have all of these measurements, leaving 48 288 UCSF encounters for further analysis. It was not required for patients to have measurements (8-14, laboratory test measurements) for inclusion; however, the data from these measurements were used when available (see Table 1). We next checked prediction time choices. In total, 13 929 of 53 000 MIMIC-III encounters and 39 of the remaining UCSF encounters were removed, as the prediction time either could not be determined or it was out of range (less than 5 or greater than 1000 encounter hours). This restriction was intended to ensure that only patients with sufficient encounter data for a prediction were included. For MIMIC-III encounters, when combined with the 24-hour pre-onset prediction time, this restriction also ensured that source set patients spent at least 29 hours in the ICU. The final data sets included 39 071 MIMIC-III encounters, of which 3570 were classified as Class 1 (9.14% prevalence), and 48 249 UCSF encounters, of which 1636 were classified as Class 1 (3.39% prevalence). We attribute the difference in prevalence to variance between the institutions and the corresponding patient populations. After the inclusion criteria were applied (see Figure 2), the UCSF and MIMIC-III patients had different age, length of stay, and sex distributions (see Table 2). In addition, MIMIC-III consisted of ICU patients exclusively, whereas the UCSF hospital system data consisted of patients across all hospital wards, which included ICUs, emergency departments, and general medical and surgical wards (see Table 3).
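The encounter counts and prevalences reported in this paragraph can be re-derived with simple arithmetic. The short Python sketch below does so, and also encodes the prediction-time range check; the variable names and the `in_range` helper are introduced here only for illustration.

```python
# Counts as reported in the text.
ucsf_total = 109_521
ucsf_missing_vitals = 61_233
ucsf_bad_prediction_time = 39
mimic_total = 53_000
mimic_bad_prediction_time = 13_929

ucsf_after_vitals = ucsf_total - ucsf_missing_vitals            # 48 288
ucsf_final = ucsf_after_vitals - ucsf_bad_prediction_time       # 48 249
mimic_final = mimic_total - mimic_bad_prediction_time           # 39 071

def in_range(prediction_hour, lo=5.0, hi=1000.0):
    """Keep an encounter only if its prediction time is known and within 5-1000 hours."""
    return prediction_hour is not None and lo <= prediction_hour <= hi

def prevalence_percent(n_positive, n_total):
    return round(100.0 * n_positive / n_total, 2)

print(ucsf_final, prevalence_percent(1636, ucsf_final))    # 48249 3.39
print(mimic_final, prevalence_percent(3570, mimic_final))  # 39071 9.14
```

For MIMIC-III, a prediction time of at least 5 hours combined with the 24-hour lead before the last measurement gives the minimum of 5 + 24 = 29 hours of record noted above.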

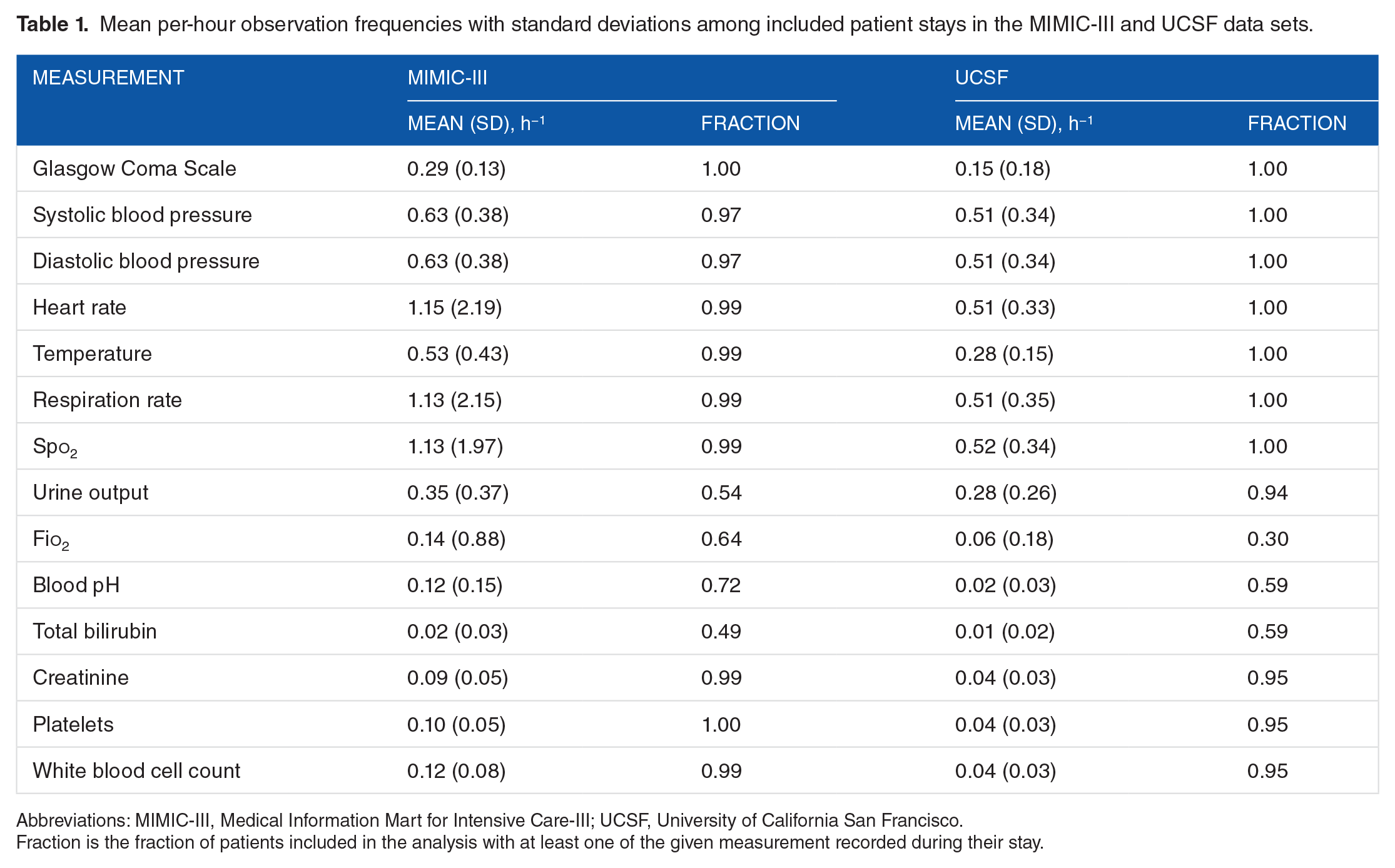

Mean per-hour observation frequencies with standard deviations among included patient stays in the MIMIC-III and UCSF data sets.

Abbreviations: MIMIC-III, Medical Information Mart for Intensive Care-III; UCSF, University of California San Francisco.

Fraction is the fraction of patients included in the analysis with at least one measurement of the given type recorded during their stay.

Inclusion flowcharts for UCSF target data (left) and MIMIC-III source data (right). These flowcharts illustrate the process used to obtain the final target and source data sets. The number of encounters remaining after each step is underneath the corresponding block. MIMIC-III indicates Medical Information Mart for Intensive Care-III; UCSF, University of California San Francisco.

Demographic comparison between MIMIC-III encounters (n = 39 071) and UCSF encounters (n = 48 249).

Abbreviations: MIMIC-III, Medical Information Mart for Intensive Care-III; UCSF, University of California San Francisco.

Top 5 patient care units, by number of encounters included in analysis, in MIMIC-III (n = 39 071) and UCSF (n = 48 249) data sets.

Abbreviations: ICU, intensive care unit; MIMIC-III, Medical Information Mart for Intensive Care-III; UCSF, University of California San Francisco.

To create the training and testing inputs for the classifier, the patient’s discretized state was sampled over a 5-hour period from time of admission at 1-hour intervals, for each measurement present. When combined with the patient’s age, this yielded a 71-dimensional input vector,

Model and training algorithm

For this work, we chose to use an ensemble of decision trees. Ensemble classifiers combine the output from many “weak” learners, each of which would be insufficient to solve the desired learning problem on its own. The decision trees used as the weak learners in this scheme were constructed as a series of binary conditions; for example, “Is heart rate in the current hour >100 beats per minute?” Depending on whether each of these conditions was true or false, further conditions may have been checked, and a risk score was eventually assigned. Within each tree, we limited the number of such logical “splits” to 8, in turn limiting the tree to at most 9 risk cohorts, such that each individual tree was a fairly weak predictor. However, by combining 200 such trees, the ensemble was able to produce a strong, flexible, and expressive classifier. We used a boosting algorithm22,23 to construct each ensemble, in which individual trees were created by splitting according to the Gini diversity index.24
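To make the splitting criterion concrete, the following Python sketch computes the Gini diversity index of a set of class labels and searches for the single threshold on one feature that minimizes the weighted index of the two resulting groups. It illustrates the criterion only, not the boosting procedure or the study's implementation; the function names and the toy heart rate data are assumptions.

```python
from collections import Counter

def gini(labels):
    """Gini diversity index: 1 minus the sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_threshold(values, labels):
    """Return (threshold, weighted_gini) for the best split 'value > threshold'.

    Returns (None, gini(labels)) if no split improves on the unsplit node.
    """
    pairs = sorted(zip(values, labels))
    best = (None, gini(labels))
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold separates equal values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2.0
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(pairs)
        if score < best[1]:
            best = (threshold, score)
    return best

MAX_SPLITS = 8                 # per-tree limit stated in the text
MAX_LEAVES = MAX_SPLITS + 1    # each binary split adds one leaf: at most 9 cohorts

heart_rates = [80.0, 90.0, 110.0, 120.0]
died = [0, 0, 1, 1]
print(gini(died))                         # 0.5
print(best_threshold(heart_rates, died))  # (100.0, 0.0)
```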

Transfer learning method

The transfer learning method provided the source (MIMIC-III) examples

To simulate a new deployment to a small care facility, while employing the large UCSF set as the target, we restricted the number of target training examples used to train the classifier. By sweeping this value from very small (0.25% of the target training set, fewer than 110 encounters) to the full size of the target training data set (43 424 encounters, 90% of the UCSF set), the performance traced a

Experimental procedures

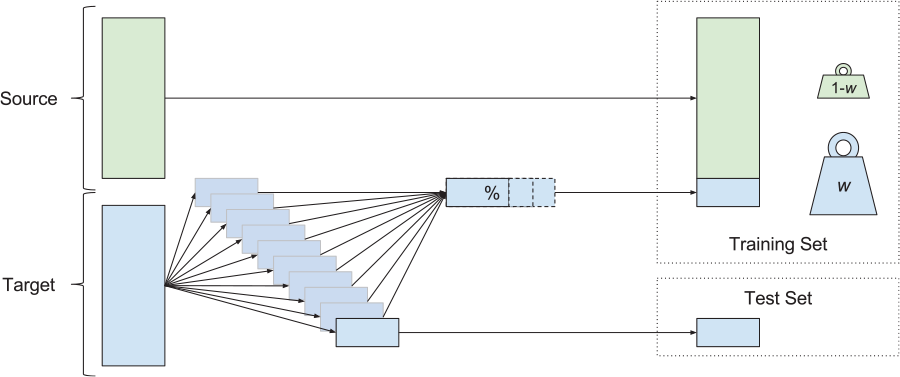

In the experiments that follow, we studied how the amount of available target training data and their weightings control the performance of the resulting predictor. We performed 10-fold cross-validation (CV), where the entire UCSF data set was used to construct the testing folds (ie, 10 partitions into which the whole set is divided). The results in this article show CV folds constructed without explicit regard to the temporal order of the patient encounters. We also executed a similar experiment investigating contiguous blocks of patient data; although this resulted in a very small decrease in performance, the results and trends were qualitatively similar. For each testing fold, a subset of the remaining, nontesting data was used to construct a target training set. This target training set was concatenated with all labeled examples from the source data, and these 2 training subsets were weighted against one another, forming a final training set. The procedure for preparing the training and testing sets is shown diagrammatically in Figure 3. This final training set was passed to the routine which trains the classification ensemble. This routine included a nested CV procedure to select

The construction of training and test sets for each cross-validation fold. Source data (green) and target data (blue) were both used in this procedure. For each of the 10 test folds, the corresponding training set was constructed using the whole source set and a variable portion of the remaining target data. During training, the examples from source and target sets received different weights. The performance of the resulting classifier was assessed on the test set.
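The fold construction and weighted concatenation described for Figure 3 might be sketched in Python as follows. The exact weighting scheme is not recoverable from the text here, so this sketch assumes that every source example receives weight 1 and every target example receives a fixed multiple `target_weight` (for example, 5); the function names, the random subsampling, and the seeds are likewise assumptions.

```python
import math
import random

def make_folds(n_examples, n_folds=10, seed=0):
    """Shuffle example indices and deal them into n_folds disjoint test folds."""
    indices = list(range(n_examples))
    random.Random(seed).shuffle(indices)
    return [indices[k::n_folds] for k in range(n_folds)]

def build_training_set(source, target_pool, fraction, target_weight, seed=0):
    """Concatenate all source examples with a subsample of the target pool.

    Assumption: source examples get weight 1.0 and target examples get
    `target_weight`; the study's actual weighting may differ.
    """
    n_target = math.floor(fraction * len(target_pool))
    chosen = random.Random(seed).sample(target_pool, n_target)
    examples = list(source) + chosen
    weights = [1.0] * len(source) + [float(target_weight)] * n_target
    return examples, weights

# Smallest setting in the text: 0.25% of a 43 424-encounter target training set.
print(math.floor(0.0025 * 43_424))  # 108, ie, fewer than 110 encounters

source = list(range(20))                 # stand-ins for source examples
target_pool = list(range(100, 1100))     # stand-ins for nontesting target examples
examples, weights = build_training_set(source, target_pool, 0.01, target_weight=5)
print(len(examples), weights[0], weights[-1])  # 30 1.0 5.0
```

The returned weights would then be passed as per-example weights to whatever routine trains the ensemble.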

The continuous-valued outputs which resulted when the ensemble was given the test examples (ie, the “scores” it gives, not binary labels) were then used, along with the ground truth labels on the test examples, to compute the receiver operating characteristic curve. The area under this curve (AUROC) was the key measure of classifier performance we assessed in this work. Statistical tests for AUROC comparison were conducted by pairwise
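For readers less familiar with the AUROC, the following Python sketch computes it from continuous scores and ground truth labels using its pairwise interpretation: the probability that a randomly chosen Class 1 example receives a higher score than a randomly chosen Class 0 example, with ties counted as one half. This is an illustrative quadratic-time version with a hypothetical function name, not the routine used in the study.

```python
def auroc(scores, labels):
    """Area under the ROC curve via pairwise comparison of positives and negatives."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one example of each class")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # positive correctly ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(positives) * len(negatives))

print(auroc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))  # 0.75
```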

Results

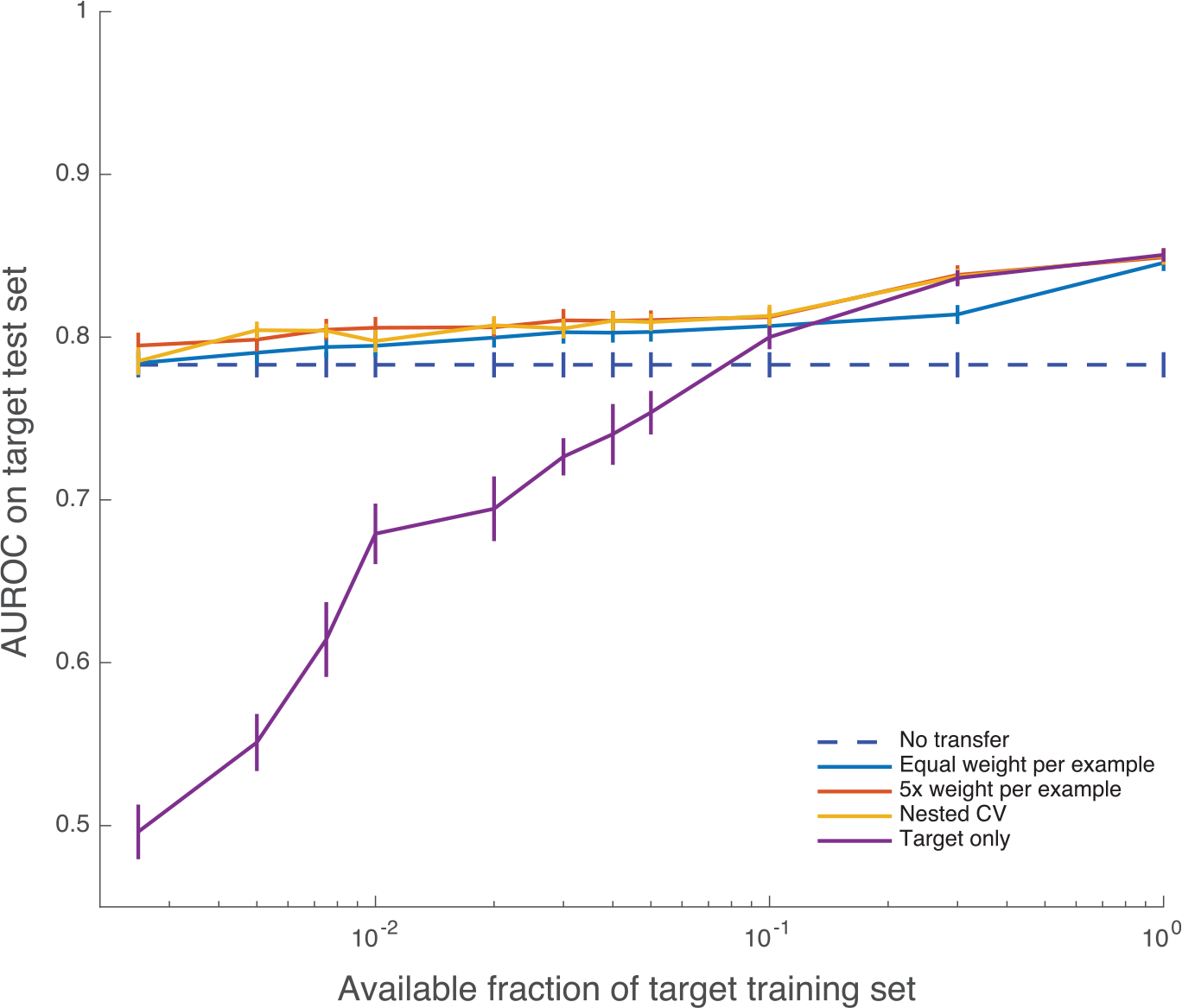

Learning curves for selected weighting choices (

Learning curves (mean AUROC) with increasing number of target training examples. Error bars are 1 SE. When data availability is low, target-only training exhibits lower AUROC values and high variability. AUROC indicates area under the receiver operating characteristic; CV, cross-validation. The maximum mean AUROC achieved by the nested cross-validation method is 0.8498.

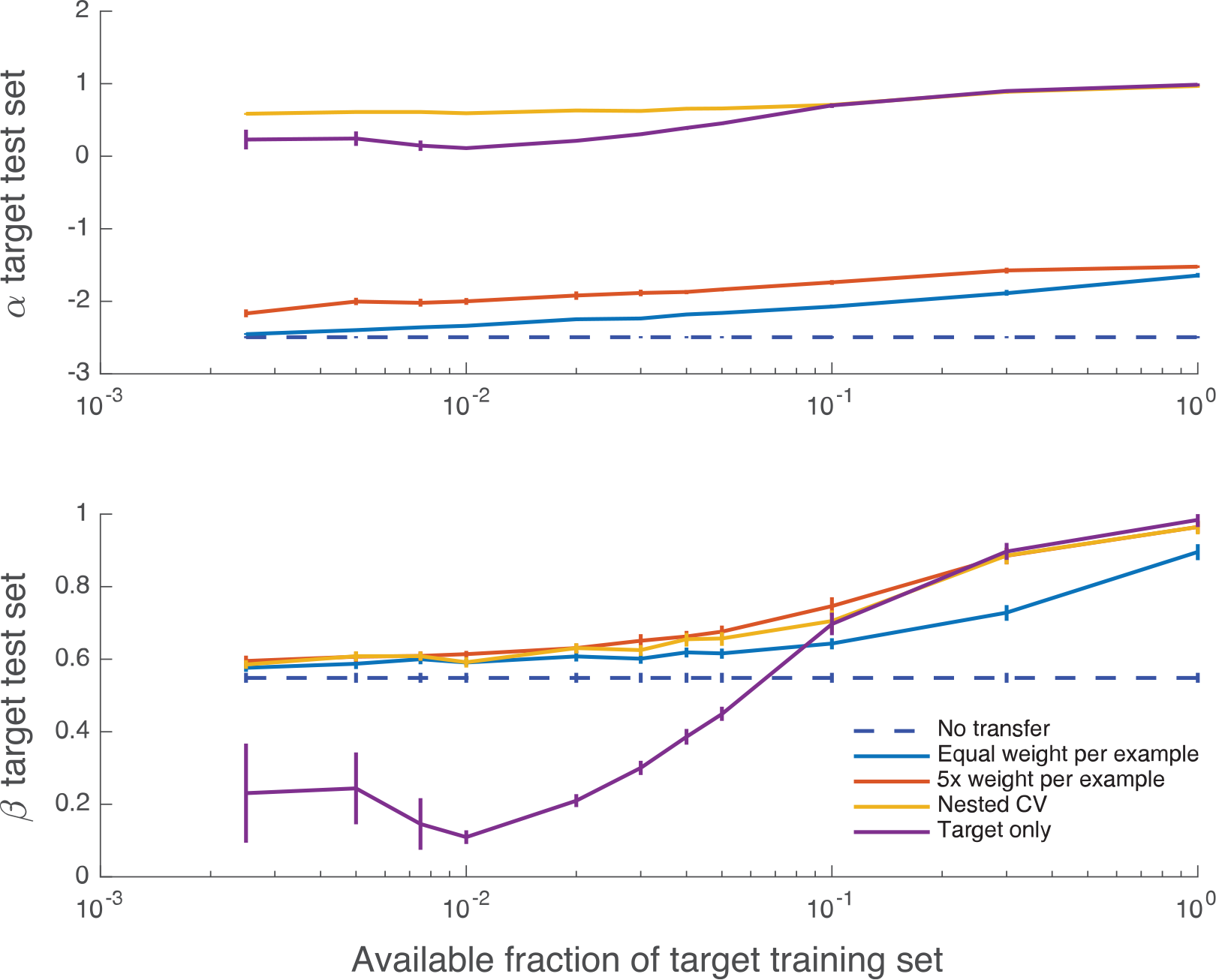

Figure 5 shows how Cox calibration coefficients α and β vary with the training set size. Although the bias coefficient α is typically far from the ideal value of 0, the measure of appropriate spread β is in 0 < β < 1 (indicating an over-responsive mortality predictor, relative to empirical risk) and approaches its ideal value of 1 for larger fractions of the training set and all weightings that include target data.

Calibration curves giving mean regression coefficients α (offset) and β (slope) between predicted and empirical log odds for mortality, as a function of increasing number of target training examples. Error bars are 1 SE; the mean and SE are calculated using CV folds. Perfect calibration corresponds to (α, β) = (0, 1). CV indicates cross-validation.
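One common way to obtain such coefficients is to fit a logistic regression of the observed outcome on the predicted log odds, so that α is the intercept and β the slope. The Python sketch below does this with Newton's method; it assumes this particular formulation, which may differ in detail from the procedure used in the study, and the function names and synthetic data are hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def cox_calibration(pred_probs, outcomes, max_iter=50, tol=1e-12):
    """Fit outcome ~ sigmoid(alpha + beta * logit(pred)) by Newton's method."""
    x = [math.log(p / (1.0 - p)) for p in pred_probs]  # predicted log odds
    alpha, beta = 0.0, 0.0
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, outcomes):
            mu = sigmoid(alpha + beta * xi)
            r = yi - mu              # residual (gradient contribution)
            w = mu * (1.0 - mu)      # curvature weight
            g0 += r
            g1 += r * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        if abs(det) < 1e-300:
            break  # degenerate design; give up rather than divide by ~0
        d_alpha = (h11 * g0 - h01 * g1) / det
        d_beta = (h00 * g1 - h01 * g0) / det
        alpha += d_alpha
        beta += d_beta
        if abs(d_alpha) + abs(d_beta) < tol:
            break
    return alpha, beta

# Synthetic check: the "true" risk has log odds t, but the predictor reports
# log odds 2t (too extreme). Expected outcomes are used in place of 0/1 labels.
true_log_odds = [-3.0, -2.0, -1.0, 0.0, 1.0]
expected_outcomes = [sigmoid(t) for t in true_log_odds]
over_responsive = [sigmoid(2.0 * t) for t in true_log_odds]
alpha, beta = cox_calibration(over_responsive, expected_outcomes)
print(abs(alpha) < 1e-6, abs(beta - 0.5) < 1e-6)  # True True
```

In this synthetic case the predictor's log odds are twice as extreme as the true log odds, and the fitted slope is 0.5, matching the reading of 0 < β < 1 as an over-responsive predictor.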

Discussion

According to these experimental results, our implementation of transfer learning substantially improves mortality prediction over classical, target-only training, and also over the source domain classifier. The source domain classifier may be considered a reasonable choice because the mortality prediction problems in separate medical centers are expected to be similar, but there are performance improvements to be obtained by transfer learning, in part due to demographic and care practice variation. However, what is most instructive is how few data are needed for transfer learning to provide gains over the source classifier. Using the smallest target training sets tested, 0.25% of the target training set, or fewer than 110 UCSF patients, the fixed, 5 times weighted (5×) transfer scheme shows clear gains over the source domain classifier (

The comparison between the 5 times weighted (5×) scheme and the nested CV scheme is also instructive. These 2 methods achieve very similar generalization performance on this pair of data sets, and it would appear that, without running nested CV, a 5× weighting is a reasonable and robust choice. However, because there is no way of knowing a priori that 5× weighting is indeed appropriate for a new target data set, the nested CV scheme is in principle the better choice for a new deployment.

Assessing the Cox calibration coefficients of the classifiers indicated that they were typically substantially biased (α ≠ 0), although the high AUROC indicates that post hoc calibration could improve these values, and the spread of mortality probabilities appears appropriate as the amount of training data increases. We note that the classifier training scheme used in this work is not explicitly intended to produce accurate mortality probabilities, but instead to reduce classification error and improve AUROC.

One limitation of these experimental results is that they were obtained with a single classifier architecture and training algorithm. In particular, there is the possibility that using other methods of regularization or ensemble pruning could reduce the overfitting observed when giving heavy weight to a small collection of target data. This could result in improved target-only training performance, even without using transfer learning and could similarly reduce the number of target examples required to produce effective classifiers, providing an alternative means of reducing the burden of data collection. We do not claim that transfer learning is the only way to obtain good performance with small target training sets; rather, as demonstrated by these experiments, it is an effective way of doing so. Our main finding is that transfer learning is a useful tool for increasing prediction performance in clinical settings with limited data availability.

There are several important lines of work suggested by the present experiment. More sophisticated transfer learning methods29,30 might yield increased performance or be even more economical in terms of required target data. These methods may be critical for other clinical tasks; although the present methods appear sufficient for mortality prediction among inpatients, mortality is relatively frequent (9.14% and 3.39% prevalence in the final source and target sets, respectively). Even with the present methods, longer periods of clinical data acquisition will likely be necessary for predicting especially rare events. This work also only addresses unidirectional transfer between 1 source and 1 target. In practice, it may often be necessary to select the best source data set from among a library of several, to aggregate examples from sets within the library, to subselect a population from within a larger set, or to simultaneously use data from several clinical sites in a bidirectional, multitask framework. Future studies are needed to address other important practical questions, such as how to verify that a performance specification has been achieved, how to estimate the remaining data that must be acquired to do so, and how to empirically answer the above design questions on a case-by-case basis.

Conclusions

We demonstrated the benefits of applying a simple transfer learning technique to our mortality prediction algorithm,

Footnotes

Acknowledgements

The authors wish to acknowledge Hamid Mohamadlou, who contributed to the MATLAB code used to run these experiments; Nima Shajarian, who aided with the introduction; and Keegan Van Maanen, who assisted with figure design. They also thank the anonymous reviewers for their many helpful suggestions, which substantially improved the quality of the manuscript.

Peer review:

Five peer reviewers contributed to the peer review report. Reviewers’ reports totaled 1738 words, excluding any confidential comments to the academic editor.

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Research reported in this publication was supported by the National Institute of Nursing Research, of the National Institutes of Health, under award number R43NR015945. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Declaration of conflicting interests:

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: All authors affiliated with Dascena report being employees of Dascena. Dr Chris Barton reports receiving consulting fees from Dascena. Dr Chris Barton and Dr Grant Fletcher report receiving grant funding from Dascena.

Author Contributions

TD, QM, and RD conceived and designed the study. TD, QM, and MJ prepared the requisite data and code tools. TD executed experiments and analyzed the data. TD, JH, JC, MJ, GF, YK, CB, UC, and RD interpreted the data. TD, JH, MJ, and JC wrote the first draft of the manuscript. TD, JH, JC, MJ, GF, YK, CB, UC, and RD redrafted the manuscript. All authors approved the final manuscript and assume joint responsibility for its content.