Abstract

Wearables, which can be considered devices worn on the body that capture dimensions of health, are commonly used in research. Wearables are useful because they can be employed in a number of environments for a variety of populations and can record over short or long periods. Recent advancements in technology have significantly improved the accuracy of sensors and the algorithms used to interpret their data. Commercial wearables, such as fitness trackers, smartwatches, and smart rings, have seen parallel advancements. Perhaps the most common application of wearables in research is the assessment of sleep and rest-activity rhythms, as most wearables include accelerometers, a sensor commonly used to infer sleep and activity from movement patterns. Commercial wearables are appealing for research because of their widespread use in the general population, real-time data syncing capabilities, affordability, and user-friendly, consumer-oriented design and interfaces. There are, however, several important factors to consider when selecting a commercial wearable for research. These include device specifications (durability, price, unique features, etc.), data accessibility, and participant factors. Keeping these considerations in mind can support the collection of high-quality data that can ultimately be used to improve population outcomes. The purpose of this methodological review is to describe considerations for using commercially available wearables in research to assess sleep and rest-activity patterns.

Introduction

The use of commercial wearables in research is rapidly expanding as the accuracy with which these devices assess dimensions of health continues to improve (Grandner & Rosenberger, 2019; Lujan et al., 2021; Robbins et al., 2024; Xie et al., 2018). Commercial wearables, such as fitness trackers, smartwatches, and smart rings, include a range of sensors that measure physiologic parameters of the wearer. For the purposes of this article, we consider “commercial wearables” to be devices designed to be continuously worn on the body (e.g., wrist, finger, ankle, or arm) that track aspects of health across a 24-hour day and are available for purchase without a prescription. Although specific sensors differ by wearable, almost all, at minimum, assess sleep and activity patterns using accelerometry.

Polysomnography (PSG) is the accepted standard for evaluating objective sleep parameters such as sleep stages, wake, and overnight sleep duration (Iber et al., 2007). Standard PSG may require expertise to apply and interpret, is usually done in a laboratory setting, and is not meant for long-term evaluation of sleep health. Self-report questionnaires and diaries of sleep and wake activity are widely used in research given their ease of administration and suitability for longitudinal assessment. However, ratings are subject to recall bias and cannot capture sleep/wake activity of which the person is unaware (e.g., episodes of wakefulness overnight; Chan et al., 2018; Moore et al., 2015). To address the limitations of PSG and diaries/questionnaires, actigraphs, which are devices worn on the body that detect movement, are often employed to estimate objective sleep and wake behavior in real-world and research settings (Lujan et al., 2021).

Historically, “research grade” wrist-worn actigraphs, which are not intended for consumer use, have been the primary type of actigraphy device used by clinicians and researchers to estimate sleep and activity (De Zambotti et al., 2019; Patterson et al., 2023). These devices and the companies that provide them largely allow researchers to customize settings to the research goals and provide access to raw movement data for analysis. However, research actigraphs can be more costly and less aesthetically appealing than commercial wearables, which can limit acceptability. Research actigraphs may also lack highly integrated wearer interfaces or real-time data uploads for evaluating data quality and adherence. Conversely, commercial wearables are already widely used by consumers, automatically upload and synchronize data in real time, can be more cost-effective, and are designed with user interests and usability at the forefront, thus providing easily accessible, in-the-moment evaluation of sleep and activity.

Although accuracy largely depends on the device, software iteration, and methodologic approach, use of commercial wearables in research has gained popularity in recent years, as many devices have demonstrated practical accuracy in determining sleep/wake parameters compared with both PSG and research-grade actigraphs (Doherty et al., 2024; Lujan et al., 2021; Robbins et al., 2024). When employing commercial wearables to evaluate sleep and activity patterns, researchers must consider a number of factors. The purpose of this methodological review is to describe considerations for the use of commercially available wearables in research for assessing sleep and activity patterns. Future directions are illustrated with a case exemplar.

Actigraphy Overview

Actigraphy Description and Specifications

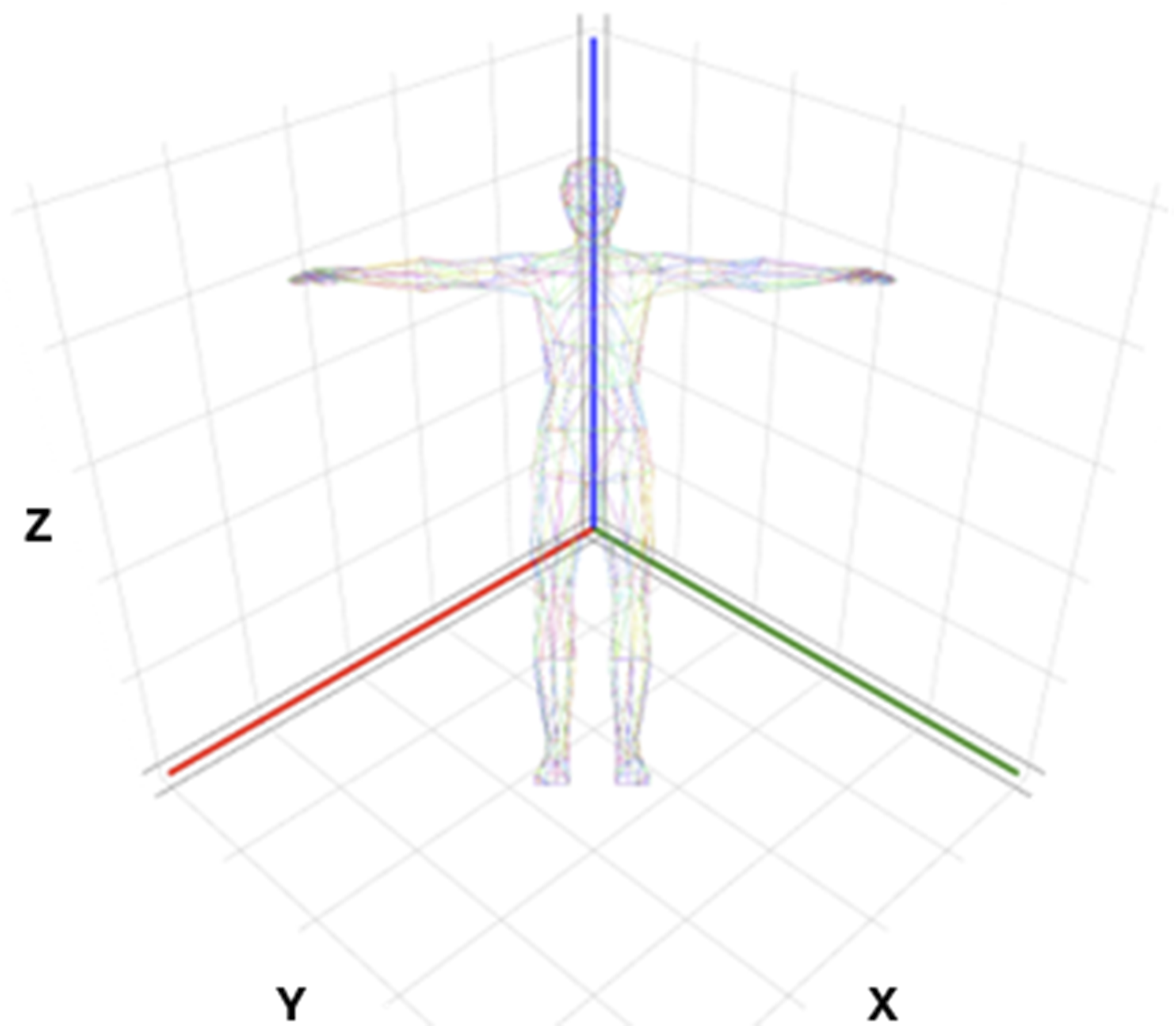

Triaxial planes of motion. Triaxial accelerometers capture motion in 3 perpendicular planes (x, y, z): left/right, up/down, forward/backward.

Actigraphy, in this context, is a method of measuring human rest and activity cycles (Patterson et al., 2023). An actigraph is a wearable device that collects actigraphy data using a built-in accelerometer, which measures acceleration from which movement (spatial displacement over time) is inferred. Historically, there have been several types of accelerometers. Triaxial accelerometers, which measure motion in 3 planes of movement (Figure 1), are the most common type employed in modern actigraphy devices (Lujan et al., 2021). Actigraphs can be worn at almost any location on the body, and the choice of location is motivated by population, ease of use, and outcome of interest. For example, actigraphs are most often placed on the ankles of infants for rest/activity evaluation or may be placed on the thigh if the primary interest is walking and sitting activity. For estimates of sleep and activity in adolescents to adults, wrist-worn actigraphy is the most common application (Patterson et al., 2023).

Wrist-worn actigraphy for objective sleep and activity assessment began gaining popularity in the 1970s. Improvements in sensors, memory storage, and wireless data transfer over the ensuing decades have further propelled interest in actigraphy. Concomitant improvements in algorithms that detect sleep and activity from acceleration data have made actigraphy even more appealing and accessible for research. Actigraphs can be employed in numerous research settings, including the laboratory, hospital, field (e.g., home and community settings), and even space (Basner et al., 2023; Flynn-Evans et al., 2016; Jaiswal et al., 2023; Meltzer et al., 2012; Patterson et al., 2023). An additional benefit for sleep/wake and activity assessment is that the actigraph can be worn 24 hr a day for short or long durations (days, months, or even years) during usual daily activities, thus providing a more integrated evaluation of actual rest-activity patterns over time. Lastly, actigraphs are more practical than PSG because they can be worn continuously without cords, leads, or multiple separate sensors. PSG is also largely used only during the main overnight sleep period, so naps and daytime activity are not captured.

Actigraphy itself does not directly measure sleep or sleep parameters. Instead, actigraphy indirectly infers sleep from movement patterns. For example, overnight sleep onset is inferred by the halting of body movement during the biological night, and sleep offset is recorded as the resumption of sustained activity. Bouts of movement recorded during the night could represent wake, and together with sleep onset and offset, are used to calculate parameters such as sleep duration, sleep efficiency, and wake after sleep onset. Similarly, activity is inferred from movement counts and movement patterns. Outcomes of interest may include number of steps, amount or duration of sedentary time, or timing of most and least active periods across the day (circadian outcomes).
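To make the derivation of these sleep parameters concrete, the following is a minimal sketch that summarizes one rest interval already scored epoch by epoch as sleep (1) or wake (0). It assumes the rest interval (time in bed) is known and takes sleep onset and offset to be simply the first and last sleep epochs; validated scoring rules typically add persistence criteria (e.g., a minimum run of sleep epochs) that are omitted here. The function and field names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class SleepSummary:
    onset_epoch: int          # index of the first epoch scored as sleep
    offset_epoch: int         # index of the last epoch scored as sleep
    total_sleep_min: float    # minutes scored as sleep
    waso_min: float           # wake minutes between sleep onset and sleep offset
    sleep_efficiency: float   # percent of the rest interval spent asleep


def summarize_sleep(epochs: list[int], epoch_min: float = 1.0) -> SleepSummary:
    """Summarize one rest interval of epoch labels (1 = sleep, 0 = wake)."""
    if 1 not in epochs:
        raise ValueError("no sleep epochs in this rest interval")
    onset = epochs.index(1)
    offset = len(epochs) - 1 - epochs[::-1].index(1)
    total_sleep = sum(epochs) * epoch_min
    # Wake after sleep onset: wake epochs between the first and last sleep epochs
    waso = epochs[onset:offset + 1].count(0) * epoch_min
    time_in_bed = len(epochs) * epoch_min
    efficiency = 100.0 * total_sleep / time_in_bed
    return SleepSummary(onset, offset, total_sleep, waso, efficiency)


# Example: 8 h in bed scored in 1-min epochs, with one 20-min awakening
night = [0] * 15 + [1] * 200 + [0] * 20 + [1] * 220 + [0] * 25
summary = summarize_sleep(night)
```

For this example night, the sketch yields 420 min of sleep, 20 min of wake after sleep onset, and a sleep efficiency of 87.5%.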

Data from actigraphy devices can be analyzed in a variety of ways. Currently, there is no single standard for calculating sleep and activity parameters from movement data captured by actigraphy. For sleep, several algorithms that classify sleep and wake from acceleration data have been developed; these may be applied by individual investigators or automatically by companies manufacturing actigraphy devices (Patterson et al., 2023). Sleep-wake classification can also be performed manually by direct inspection of raw acceleration data. Similarly, depending on the parameters of interest, automatic or manual scoring of daytime activity is common.
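Many published sleep-wake algorithms (Cole-Kripke is a well-known example) score each epoch from a weighted sum of activity counts in a short window around it and compare that sum with a threshold. The sketch below shows only this general form. The coefficients and scale factor are hypothetical placeholders, not the published values of any validated algorithm, and should not be used to score real data.

```python
def classify_sleep_wake(counts: list[float], weights: dict[int, float],
                        scale: float, threshold: float = 1.0) -> list[int]:
    """Score each epoch as 1 (sleep) or 0 (wake) from a weighted window of counts.

    weights maps an epoch offset (e.g., -2 = two epochs earlier) to its coefficient.
    An epoch is scored as sleep when scale * weighted_sum < threshold.
    """
    n = len(counts)
    labels = []
    for i in range(n):
        weighted_sum = 0.0
        for offset, weight in weights.items():
            j = i + offset
            if 0 <= j < n:  # epochs outside the recording contribute nothing
                weighted_sum += weight * counts[j]
        labels.append(1 if scale * weighted_sum < threshold else 0)
    return labels


# Placeholder coefficients for illustration only (NOT a validated algorithm)
HYPOTHETICAL_WEIGHTS = {-2: 0.5, -1: 1.0, 0: 2.0, 1: 1.0, 2: 0.5}
counts = [0] * 5 + [500] * 3 + [0] * 5
labels = classify_sleep_wake(counts, HYPOTHETICAL_WEIGHTS, scale=0.01)
```

Because neighboring epochs carry weight, the burst of movement in this example also causes the two epochs on either side of it to be scored as wake, which illustrates why the choice of algorithm and coefficients affects derived sleep parameters.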

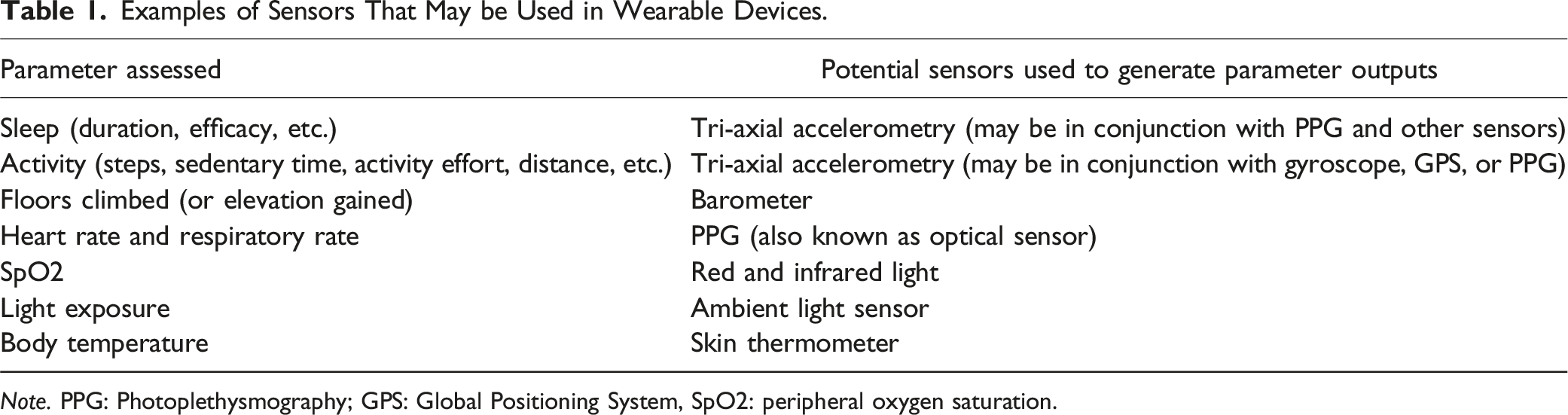

Examples of Sensors That May Be Used in Wearable Devices.

Note. PPG: photoplethysmography; GPS: Global Positioning System; SpO2: peripheral oxygen saturation.

Limitations of Actigraphy

Using actigraphy to evaluate sleep/wake activity is relatively low-cost and easy to employ in a number of research scenarios. However, there are several important limitations. Accuracy varies with the activity of interest (e.g., sleep vs. steps), where on the body the wearable is worn, and the scoring method used. For example, if the wearable is worn on the wrist or finger, walking activity (e.g., steps) may not be accurately captured when the wearer is pushing a stroller or using a walker, because there is little or no arm swing. Conversely, excessive wrist and arm motion while sitting may lead to overestimation of activity, such as step counts.

Since actigraphy estimates sleep indirectly through movement patterns (e.g., spatial displacement patterns of the device) rather than direct measurement, its accuracy in assessing true sleep parameters may be reduced, particularly with highly fragmented or abnormally timed sleep (e.g., patients with sleep disorders, shift-workers, or frequent nappers; Kainec et al., 2024). Actigraphy tends to overestimate total sleep duration, especially when time in bed before sleep is prolonged (e.g., lying in bed using a phone before trying to sleep) or in sedentary individuals. For example, actigraphy alone cannot distinguish between sitting quietly for an extended period of time (such as in a chair watching television) and taking a nap over the same time interval.

Incorporating additional sensors (e.g., PPG) and applying machine learning and other artificial intelligence algorithms to wearable data have improved sleep-wake classification accuracy (Sansom et al., 2023; Schwab et al., 2018). However, limitations in data accuracy remain, especially when sleep occurs outside the main overnight sleep period and when the user relies only on manufacturer-reported outputs (e.g., without reviewing raw data; De Zambotti et al., 2019; de Zambotti et al., 2024; Park et al., 2024; Sansom et al., 2023). Further, accuracy varies by sleep-wake classification algorithm, and sleep/wake activity may be challenging to evaluate accurately in some populations. Beyond the requirement for spatial displacement to interpret actigraphy outputs accurately (as described above), integrated algorithms that incorporate additional physiologic parameters such as heart rate to report sleep/wake patterns may be prone to inaccuracies in certain populations, for example, in patients with arrhythmias. Thus, it is imperative for researchers to consider which sleep or activity parameters are of interest, how each parameter is derived by the specific device chosen, and what may bias the accuracy of results.

Commercial Wearables

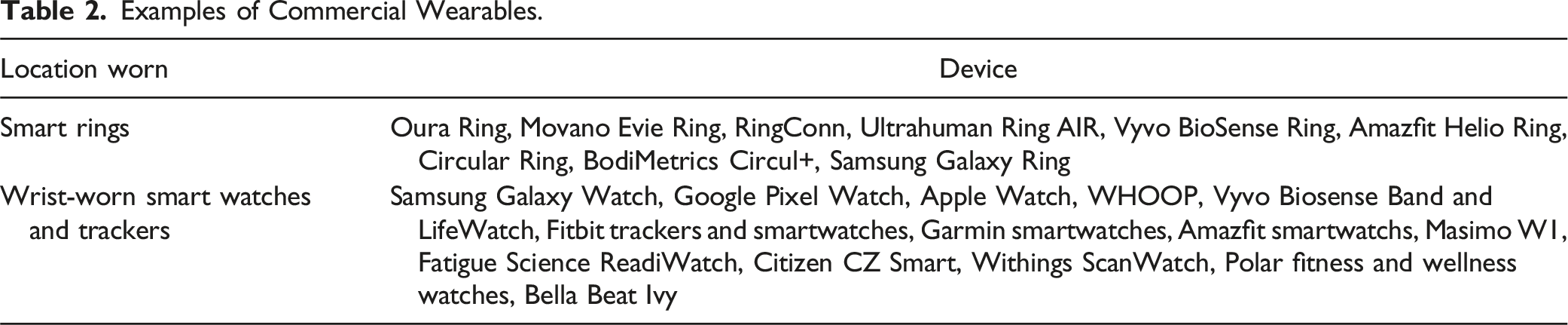

Examples of Commercial Wearables.

We are likely to continue to see improvements in commercial wearables for consumer and health applications, which makes them an attractive option for researchers. Importantly, research participants are often familiar with commercial wearables, which may support willingness to participate and adherence. Given the widespread public use of commercial wearables, it is also possible to collect data from participants’ personal devices or to use wearable data from public repositories (e.g., from the NIH All of Us Research Program) for research purposes.

Consumer wearables have all the limitations of research-specific actigraphs, with some additional caveats. Researchers have limited control over customization, restricted access to cloud-based data platforms, and less flexibility in the types of data collected. Perhaps most importantly, consumer companies use proprietary algorithms that combine data from one or more sensors to display sleep/wake activity (Lee et al., 2019). This means that researchers largely do not have access to raw sensor data and cannot independently validate the accuracy of data outputs. This may be changing, however, with third-party apps that allow some raw data to be viewed (such as SensorLog for iOS), although such options are not widespread and do not work with all commercial wearables. It is still imperative that the researcher understands how each reported value is defined. For example, sleep duration displayed by a wearable usually accounts only for the overnight, or “main,” sleep period. Naps or daytime sleep may be categorized as an “additional” sleep period. Thus, a researcher interested in total sleep time would need to add the main and additional sleep periods together to obtain the total sleep duration for a given day.
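The main-plus-additional calculation can be sketched as follows. The record fields (`dateOfSleep`, `isMainSleep`, `minutesAsleep`) are modeled on the sleep logs returned by the Fitbit Web API, but this is an assumption to verify against current documentation, and other manufacturers use different structures. How a platform assigns a sleep period to a calendar date (e.g., by the date the period ends) is itself a definition the researcher should confirm.

```python
from collections import defaultdict


def total_sleep_by_day(sleep_logs: list[dict]) -> dict[str, dict[str, int]]:
    """Sum main and additional (e.g., nap) sleep minutes for each date."""
    totals: dict[str, dict[str, int]] = defaultdict(
        lambda: {"main_min": 0, "additional_min": 0})
    for log in sleep_logs:
        key = "main_min" if log["isMainSleep"] else "additional_min"
        totals[log["dateOfSleep"]][key] += log["minutesAsleep"]
    # Add the combined total for each date, returned in date order
    return {
        day: {**parts, "total_min": parts["main_min"] + parts["additional_min"]}
        for day, parts in sorted(totals.items())
    }


logs = [
    {"dateOfSleep": "2024-03-02", "isMainSleep": True, "minutesAsleep": 402},
    {"dateOfSleep": "2024-03-02", "isMainSleep": False, "minutesAsleep": 48},
    {"dateOfSleep": "2024-03-03", "isMainSleep": True, "minutesAsleep": 375},
]
daily = total_sleep_by_day(logs)
```

Here the main sleep period on 2024-03-02 alone would understate that day’s sleep by the 48-min nap.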

Moreover, each wearable manufacturer uses slightly different algorithms, and each device and software update may change data outputs (De Zambotti et al., 2019; de Zambotti et al., 2024). These issues can limit comparisons between studies and between participants, especially if a major software update occurs during a study. A specific wearable may be purchased for a study at the outset, and that wearable could be discontinued or undergo a major software update without warning. While support for discontinued devices is typically offered, obtaining new stock may not be possible (de Zambotti et al., 2024). Software updates are especially important to consider when evaluating accuracy, as validation studies are specific to the device and software iteration, and any changes may alter findings. Further, validation studies of wearable sleep-wake classification have by and large included only healthy volunteers and/or only a single night in a laboratory setting (Chinoy et al., 2021, 2022; Robbins et al., 2024; Schyvens et al., 2025). Thus, pragmatic accuracy across a wide variety of populations is largely unknown.
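One practical safeguard is to log whatever device or app version the team can observe at each study contact and flag changes, so that analyses can account for them. The sketch below assumes a hypothetical log of participant, ISO-formatted date, and version string; how versions are obtained (e.g., noted from the companion app during a visit) depends on the device and is not specified here.

```python
def flag_version_changes(sync_log: list[dict]) -> list[tuple[str, str, str, str]]:
    """Return (participant, date, previous_version, new_version) for each change."""
    last_seen: dict[str, str] = {}
    changes = []
    # ISO-formatted dates ('YYYY-MM-DD') sort correctly as strings
    for entry in sorted(sync_log, key=lambda e: (e["participant"], e["date"])):
        pid, version = entry["participant"], entry["version"]
        if pid in last_seen and last_seen[pid] != version:
            changes.append((pid, entry["date"], last_seen[pid], version))
        last_seen[pid] = version
    return changes


# Hypothetical version log recorded by the research team
log = [
    {"participant": "P01", "date": "2024-03-01", "version": "1.0"},
    {"participant": "P01", "date": "2024-03-08", "version": "1.0"},
    {"participant": "P01", "date": "2024-03-15", "version": "1.1"},
    {"participant": "P02", "date": "2024-03-02", "version": "1.1"},
]
changes = flag_version_changes(log)
```

A flagged change marks the point after which a participant’s outputs may not be directly comparable with earlier data or with published validation results.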

Overall, commercial wearables have become a mainstay in sleep and activity research, and their use is likely to expand in the future. There are, however, important limitations that raise concerns about reproducibility, and commercial devices cannot, at this time, completely replace research-grade actigraphs. If choosing to use a commercial wearable for sleep and activity monitoring in research, there are several considerations that can maximize the collection of high-quality, complete, and accurate data.

Considerations for Use of Commercial Wearables in Research

Specifications

Commercial wearables can vary widely in their features and design. Researchers must consider each component to determine which device will meet the demands and goals of the study. Considerations include data collection options, data accessibility, battery life and sizing, and device durability and price.

Data Collection Options and Device Pairing

The data that a wearable collects is a paramount consideration. Wearables can collect and report different types of data, such as normal walking activity, vigorous activity, vital signs, and/or sleep. Additionally, devices can differ in the level of detail they provide for each variable. Researchers should select a wearable that can accurately measure the variables most prioritized for the study. For example, a study whose primary objective is tracking sleep may require a different device than a study that aims to track exercise. Notably, several commercial wearables perform similarly in assessing sleep duration, heart rate, and step counts (de Zambotti et al., 2024; Fuller et al., 2020; Robbins et al., 2024; Xie et al., 2018).

Most commercial wearables pair with a smartphone or tablet/computer via Bluetooth. Participants must have, or be given, one of these devices to take part in the study. If participants do not have an appropriate device for pairing, they could use the device of a household member, or, if the research team will have frequent contact with the participant (at least weekly), the participant’s wearable can be paired to a study device that is synchronized at study visits.

The wearable chosen may limit participation if the associated app is not compatible with the participant’s smartphone (or tablet/computer). For example, if the wearable is compatible only with iOS, participants with Android devices would not be able to synchronize their wearable data, and vice versa. Beyond compatibility, the account creation and Bluetooth pairing process may vary across wearable devices. Setting up a wearable, including downloading the associated app, signing in, completing the initial setup, and pairing the wearable to the app, may be challenging for some participants. If possible, it is recommended that the research team assist participants in person with setting up the wearable on their device. If in-person setup is not possible, detailed step-by-step written instructions with images (for both Android and iOS) are crucial. Having a well-trained team member walk the participant through setup over the phone or by video conference is also helpful.

Software reliability is also a consideration. Even when the wearable and pairing process are simple, researchers should consider how consistently data are uploaded from the wearable to the app. Some apps upload data from the wearable consistently and automatically. Other software is less consistent and may require the app to be opened for the wearable to synchronize. The more often participants need to open the app to synchronize their wearable data manually, the more opportunity there is for data loss and the greater the burden on participants.

As the wearable’s app is designed for consumers to use with their smartphone, it is not always possible to prevent research participants from viewing their wearable data when they open the app. Thus, there is a potential for bias as participants may change their behavior in response to viewing their data. For example, participants may increase their step count or go to bed at a different time after viewing and reflecting on their data as seen on the app. This may be a particularly important consideration when conducting a clinical trial or when participants need to otherwise be blinded to a study outcome.

Data Accessibility

Options for collecting data from commercial wearables include collecting user summary data directly through the respective applications or through data sharing options. Generally, commercial wearable companies do not provide access to raw data. However, they frequently provide data access through software development kits (SDKs) and application programming interfaces (APIs). An API is a mechanism that allows two software applications to communicate with one another using a specific set of rules and protocols. Many wearable manufacturers offer what is called an “open API,” that is, a publicly available and free API. If an API is available but not open (referred to as a closed or private API), access usually requires a private agreement with the company. In addition to potential cost, these private APIs may limit the type and volume of data that can be accessed. SDKs are sets of software tools that allow the user to build software for a particular platform (e.g., a developer builds an app that integrates with another program). Setting up API or SDK access is feasible for a nonspecialist, but it is recommended that the researcher work with a web developer or someone with expertise in this field, as setup can be quite technical. A number of third-party companies also specialize in assisting researchers with accessing wearable data, including Fitabase (Small Steps Labs LLC, San Diego, CA) and Arcawatch (Arcascope, Arlington, VA).
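In practice, an API call is usually an HTTP request authorized with an access token obtained through OAuth 2.0. The sketch below prepares such a request for one day of sleep data. The base URL and path are modeled on the Fitbit Web API sleep-log-by-date endpoint (where `-` denotes the currently authorized user), which should be confirmed against current documentation; other manufacturers use different endpoints, versions, and scopes. The token value is a placeholder.

```python
import json
import urllib.request

# Assumed base URL and path, modeled on the Fitbit Web API sleep-log-by-date
# endpoint; confirm both against the manufacturer's current documentation.
API_BASE = "https://api.fitbit.com/1.2"


def build_sleep_request(access_token: str, day: str) -> urllib.request.Request:
    """Prepare an authorized GET request for one day's sleep logs (day = 'YYYY-MM-DD')."""
    url = f"{API_BASE}/user/-/sleep/date/{day}.json"
    return urllib.request.Request(
        url, headers={"Authorization": f"Bearer {access_token}"})


def fetch_json(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON body (needs network access and a valid token)."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


req = build_sleep_request("ACCESS_TOKEN_PLACEHOLDER", "2024-03-02")
```

Separating request construction from sending makes it easier to check what will be requested before any participant data are retrieved.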

Accessing user data through an API enables the researcher to automatically retrieve data from the application associated with the wearable (e.g., the app that the wearable syncs to) that they otherwise would not have continuous access to. The available data are often more detailed than what is shown to users in the wearable app. For example, if interested in activity counts, the researcher may be able to extract activity counts at up to minute-level resolution through the API. Details such as the time and date of sleep onset/offset and the timing of recorded naps can also be extracted automatically. Further, the researcher can monitor wearable battery life, synchronization history, and off-wrist detection in real time, which helps facilitate protocol compliance.
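Once activity counts are available at fine resolution, circadian outcomes such as the timing of the most and least active periods of the day can be derived. The sketch below computes commonly used nonparametric rest-activity measures: M10 (mean activity in the most active 10 consecutive hours), L5 (mean activity in the least active 5 consecutive hours), and relative amplitude. It assumes the minute-level counts have already been averaged across days into 24 hourly means; windows wrap around midnight, and ties resolve to the earliest start hour.

```python
def m10_l5(hourly_means: list[float]) -> dict[str, float]:
    """Nonparametric circadian summary from 24 hourly mean activity values."""
    if len(hourly_means) != 24:
        raise ValueError("expected 24 hourly values")

    def window_mean(start: int, width: int) -> float:
        # Mean of `width` consecutive hours starting at `start`, wrapping past midnight
        return sum(hourly_means[(start + k) % 24] for k in range(width)) / width

    m10_start = max(range(24), key=lambda h: window_mean(h, 10))
    l5_start = min(range(24), key=lambda h: window_mean(h, 5))
    m10 = window_mean(m10_start, 10)
    l5 = window_mean(l5_start, 5)
    relative_amplitude = (m10 - l5) / (m10 + l5) if (m10 + l5) > 0 else 0.0
    return {"m10_start_hour": m10_start, "m10": m10,
            "l5_start_hour": l5_start, "l5": l5,
            "relative_amplitude": relative_amplitude}


# Illustrative profile: quiet night, active 08:00-17:59
profile = [5.0] * 6 + [50.0] * 2 + [200.0] * 10 + [50.0] * 6
result = m10_l5(profile)
```

A relative amplitude closer to 1 indicates a more robust contrast between active and rest periods.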

Summary or user-facing data from wearables are also accessible without an API. Users can export their data, which can then be accessed for analysis. If wearables are set up with study logins provided by the research team, the researcher may have access to user data through web-based platforms, if available (e.g., the researcher can log in remotely to the user account on the manufacturer’s website). Regardless of how data will ultimately be accessed, almost all wearables require syncing with the associated app (which requires a smartphone or tablet/computer) so that data can transfer from the wearable to the manufacturer’s cloud-based storage system. Wearables differ in how long data can be stored locally between syncs, usually about seven days depending on the type of data. Ideally, once the wearable and app are set up, synchronization happens automatically. However, this is not always the case, and the research team must continually monitor syncing and work with participants to ensure complete data collection.
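Sync monitoring can be partly automated by comparing each participant’s most recent sync time with the device’s local storage window. In the sketch below, the 7-day storage limit follows the typical figure above, while the 2-day contact threshold and the status labels are hypothetical choices a team would adjust for its device and protocol.

```python
from datetime import datetime, timedelta


def classify_sync_status(last_sync: dict[str, datetime], now: datetime,
                         warn_after: timedelta = timedelta(days=2),
                         storage_limit: timedelta = timedelta(days=7)) -> dict[str, str]:
    """Label each participant by time elapsed since the wearable last synchronized."""
    status = {}
    for participant, synced_at in last_sync.items():
        gap = now - synced_at
        if gap >= storage_limit:
            # Older data may already have been overwritten on the device
            status[participant] = "possible data loss"
        elif gap >= warn_after:
            status[participant] = "contact participant"
        else:
            status[participant] = "ok"
    return status


now = datetime(2024, 3, 10, 12, 0)
last_sync = {
    "P01": datetime(2024, 3, 10, 8, 0),
    "P02": datetime(2024, 3, 7, 12, 0),
    "P03": datetime(2024, 3, 2, 0, 0),
}
status = classify_sync_status(last_sync, now)
```

Contacting participants well before the storage limit leaves time to resolve pairing or app problems without losing data.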

Battery Life and Sizing

In terms of battery life, especially for studies requiring continuous monitoring over an extended period, it is helpful to set up standard reminders for participants about when to charge the wearable and to put it back on after charging. In certain studies, prioritizing a longer battery life may be more important than other considerations. For example, if participants are older adults with cognitive dysfunction, a long battery life reduces how often the participant must remember to charge the device and put it back on. If the study is using study-provided wearables, or the wearable is linked for remote monitoring, the research team may be able to monitor battery life remotely and provide directed participant instruction as needed.
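Where battery levels can be monitored remotely, charging reminders can be targeted to participants who actually need them. This sketch estimates days of charge remaining from a reported battery percentage and an assumed average daily drain; the drain rate, the 2-day lead time, and the input structure are hypothetical values for illustration, not specifications of any particular device.

```python
from typing import Optional


def charging_reminders(battery_pct: dict[str, Optional[int]],
                       drain_pct_per_day: float,
                       lead_days: float = 2.0) -> dict[str, float]:
    """Return estimated days of charge left for participants who need a reminder.

    Participants with no reported battery level are skipped here; they are
    better handled by checking when the device last synchronized.
    """
    reminders = {}
    for participant, level in battery_pct.items():
        if level is None:
            continue
        days_left = level / drain_pct_per_day
        if days_left <= lead_days:
            reminders[participant] = round(days_left, 1)
    return reminders


# Hypothetical: a device that loses about 10% of its charge per day
reminders = charging_reminders({"P01": 80, "P02": 12, "P03": None}, drain_pct_per_day=10)
```

A reminder to put the wearable back on after charging can be scheduled at the same time.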

Sizing options may limit who is able to wear a given wearable. Devices with sizing options allow multiple populations to participate in the study, such as pediatric populations and adults with varying body mass indexes (BMIs) and wrist sizes. Most fitness trackers and smartwatches have adjustable wristbands, come with more than one band for appropriate sizing, or have different band options available for purchase. Smart rings, by contrast, can be challenging to fit accurately because they are not adjustable. In this case, the researcher would have to size each participant individually, whereas wrist-worn devices more easily accommodate size variations. Sizing may also be a factor if the participant gains or loses weight or is prone to extremity edema during the study.

Device Durability and Price

The nature and purpose of the study warrants inspection of a device’s durability and cost. If participants need to wear the device in water, including the shower or water activities, the device should be waterproof/resistant and may need to have water lock capabilities. A water lock prevents water drops (like during a shower) from activating the touchscreen and sensing inaccurate movement. Researchers should consider more durable devices (and bands) for longer studies, and durability considerations also depend on the population. For example, healthcare workers frequently wash their hands and would be exposing the wearable to frequent soap, water, and sanitizing chemicals that may disrupt the device’s normal functioning. Someone working with machinery may have limitations on the type of device that can be used.

Commercial wearables can be relatively inexpensive compared with research-grade actigraphs (∼US$80–400 compared with ∼US$200–1000 per device). The growth and sophistication of commercial wearables have created large price ranges across and within brands, usually depending on the model or type of device. Fitness trackers tend to be the least expensive, with brand-name smartwatches and smart rings being the most expensive. At the higher end, some commercial wearables require a monthly subscription, which may limit their usability for research. Additional cost factors include the number of participants in the study (especially if participants will keep the wearable after the study concludes), the potential for lost devices, and the features needed. For example, if the researcher is interested only in sleep and activity data, it may not be necessary to purchase a smartwatch with phone or messaging features. With a lower-cost wearable, a device lost during a study may be less concerning and more easily replaced than a more costly device. Further, a commercial wearable could be used as a recruitment incentive and study retention tool, with participants keeping the wearable for their own personal use after the study (if the protocol allows), which also removes the burden of facilitating equipment return.
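The cost factors above can be combined into a rough device budget. In this sketch, the expected loss and spare percentages, unit price, and subscription fee are hypothetical planning inputs; percentages are whole numbers so that the rounding up to whole devices uses exact integer arithmetic.

```python
def device_budget(n_participants: int, unit_price: float, loss_pct: int = 0,
                  spare_pct: int = 0, monthly_fee: float = 0.0,
                  months: int = 0) -> dict[str, float]:
    """Rough device budget; percentages are whole numbers (e.g., 10 for 10%)."""
    expected_losses = -(-n_participants * loss_pct // 100)  # ceiling division
    spares = -(-n_participants * spare_pct // 100)          # ceiling division
    devices = n_participants + expected_losses + spares
    hardware = devices * unit_price
    subscriptions = n_participants * monthly_fee * months
    return {"devices": devices, "hardware": hardware,
            "subscriptions": subscriptions, "total": hardware + subscriptions}


# Hypothetical: 100 participants, US$100 per device, 10% expected losses, 5% spares
budget = device_budget(100, 100.0, loss_pct=10, spare_pct=5)
# Hypothetical subscription device: 40 participants, US$300 each, US$6/month for 8 months
subscribed = device_budget(40, 300.0, monthly_fee=6.0, months=8)
```

Even a modest monthly fee scales with both sample size and study length, which is one reason subscription-based devices may be less practical for research.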

Participant Considerations

There are technical factors that the researcher must consider regarding study outcomes and measurement accuracy, and there are also important factors related to participants themselves. These include how easy the wearable is to use, given its interface design and pairing process, and participant confidentiality. The interface design is an important consideration for participants, as it is the part of the device they will use and interact with most. Participants should be able to understand how to use the device itself, including the specific features required by the study. For example, if the study tracks exercise, participants would need to be able to start and stop a workout on the wearable. Also, for accurate outputs, the user must enter information such as age, sex, height, and weight. The participant must be able to enter this information correctly (if it is not done by the research team).

Devices may also have a small touchscreen, a large screen with push-buttons, or a combination of the two. If participants are unable to see the words on a small screen, or have difficulty toggling buttons, it may lead to frustration and ultimately data loss. Understanding how to navigate the device interface and turn on or off specific functions, including synchronizing the device, is vital for accurate and complete data collection.

Participant Confidentiality

Commercial wearables and their associated apps require user permissions and personal data to calculate their parameters. Researchers may have access to all or some of the wearable data, including metadata. To protect participant confidentiality, there are a number of options to consider. If accessing a participant’s own wearable data, the researcher can limit what data are shared with the research team to only that which are relevant for the study.

If providing a wearable specifically for the study, several steps can be taken to protect confidentiality. When setting up the app for a wearable, the participant is required to enter an email address and create a user account. The researcher can set up research-specific email accounts that participants use to sign in to the app. These email addresses are anonymous and not linked to any other applications on the smartphone or tablet/computer. App setup also often requires a birthdate, and an anonymized birthdate that preserves the participant’s age can be used. Conveniently, having the research team provide the login for participants allows the team to set up the birthdate and other necessary inputs (such as height and weight) remotely, which can improve data accuracy.
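One way to generate an anonymized birthdate that preserves age is sketched below. This is an illustrative approach, not a prescribed method: it computes the participant’s true age on a reference date (e.g., enrollment) and places the stand-in birthday on the day before that date, so the reported age stays constant for about a year afterward. A February 29 stand-in in a non-leap year falls back to February 28.

```python
from datetime import date, timedelta


def age_on(birthdate: date, on: date) -> int:
    """Age in completed years on a given date."""
    return on.year - birthdate.year - ((on.month, on.day) < (birthdate.month, birthdate.day))


def anonymized_birthdate(true_birthdate: date, reference: date) -> date:
    """Return a stand-in birthdate giving the same age on the reference date.

    The stand-in birthday falls on the day before the reference date. (In the
    rare case that this coincides with the true birthday, a team may prefer to
    pick a different day.)
    """
    age = age_on(true_birthdate, reference)
    anchor = reference - timedelta(days=1)
    try:
        return anchor.replace(year=anchor.year - age)
    except ValueError:  # Feb 29 does not exist in the target year
        return anchor.replace(year=anchor.year - age, day=28)


enrolled = date(2024, 3, 1)
stand_in = anonymized_birthdate(date(1960, 8, 15), enrolled)
```

In this example, the stand-in birthdate is 1961-02-28, which gives the same age (63) on the enrollment date as the true birthdate.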

Considering the above factors will make efficient data upload easier and less overwhelming for participants. Participants must be able to use and understand the device interface, successfully link their device to the paired software, and understand the troubleshooting process if something unexpected occurs. Participants who are confident in using their devices will, in turn, contribute to better data quality and more complete data acquisition for the study.

Case Exemplar

Here we outline a case exemplar from our research, in which we use a commercial wearable (Fitbit Inspire 3, Google, San Francisco, California; henceforth referred to as the “tracker”) to track sleep and rest/activity patterns in an ongoing study. This observational study enrolls patients undergoing planned or emergent surgery who are admitted to the intensive care unit (ICU) immediately postoperatively. If surgery is scheduled at least one week after study enrollment, we mail the tracker to participants and have them wear it at home preoperatively. We then have participants wear the tracker postoperatively for up to seven days in the hospital. Finally, some participants also wear the tracker for two consecutive weeks at 1 and 6 months post-hospitalization. We chose this tracker because of its cost, accuracy, and ease of use. We anticipated that some trackers would be lost and that equipment return would be difficult to facilitate given the population and the impending hospitalization. Thus, we planned to have participants keep the tracker for their own personal use after the study, eliminating the need for equipment return. This tracker has also demonstrated reasonable accuracy compared with PSG and research-specific actigraphy (Chinoy et al., 2023; De Boer et al., 2021; Hassinger et al., 2024; Lim et al., 2023). Although the user interface is small, participants in this study did not need to navigate to any screens once the tracker was set up. Finally, the tracker app is easy to set up and is compatible with both iOS and Android. Overall, we felt this tracker best met the needs of both the researchers and participants for this particular study.

Development of Data Collection Infrastructure

All data collected from the tracker are summarized and then automatically transferred to REDCap. To enable this, the first step was to identify what information was available from the tracker and then to build data collection questionnaires in REDCap that captured the information of interest. Some data needed for the study (such as 24-hr sleep duration) required formatting that was not readily available in the values returned by the tracker API. Thus, in some cases, we applied additional calculations and transformations to format the data in a way that met the needs of the study. For longitudinal data, we set up REDCap to capture tracker data for every calendar day of each data collection timepoint. For example, for the 7-day in-hospital timepoint, data are automatically downloaded from the tracker’s cloud-based storage system (using the API) every 24 hr for 7 consecutive days and transferred to the REDCap database.
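One possible way to structure this daily transfer is sketched below; it is not the code of our Research App. It assumes a longitudinal REDCap project with a repeating instrument holding one row per calendar day, and it builds the form-encoded body for a REDCap API record import (`token`, `content=record`, `format`, `type`, `data`). All field, event, and instrument names (`tracker_daily`, `tracker_date`, `sleep_24h_min`, `in_hospital_arm_1`) and the token are hypothetical placeholders.

```python
import json
from datetime import date, timedelta
from urllib.parse import urlencode


def collection_dates(start: date, n_days: int = 7) -> list[date]:
    """Consecutive calendar days for one data collection timepoint."""
    return [start + timedelta(days=i) for i in range(n_days)]


def build_redcap_import(record_id: str, event_name: str,
                        daily_sleep_min: dict[date, int], token: str):
    """Return (records, form-encoded body) for a REDCap API record import.

    Field, event, and instrument names below are hypothetical placeholders.
    """
    records = [
        {
            "record_id": record_id,
            "redcap_event_name": event_name,
            "redcap_repeat_instrument": "tracker_daily",
            "redcap_repeat_instance": str(instance),
            "tracker_date": day.isoformat(),
            "sleep_24h_min": str(minutes),
        }
        for instance, (day, minutes) in enumerate(sorted(daily_sleep_min.items()), start=1)
    ]
    body = urlencode({
        "token": token,
        "content": "record",
        "format": "json",
        "type": "flat",
        "data": json.dumps(records),
    })
    return records, body


days = collection_dates(date(2024, 3, 1))
sleep = dict(zip(days, [310, 295, 360, 402, 388, 415, 430]))
records, body = build_redcap_import("101", "in_hospital_arm_1", sleep, "API_TOKEN_PLACEHOLDER")
```

The body would then be sent as an HTTP POST to the institution’s REDCap API URL (typically ending in `/api/`), for example with `urllib.request.Request(url, data=body.encode("utf-8"))`. Whether repeating instruments or one event per day suits a given project depends on how it is configured.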

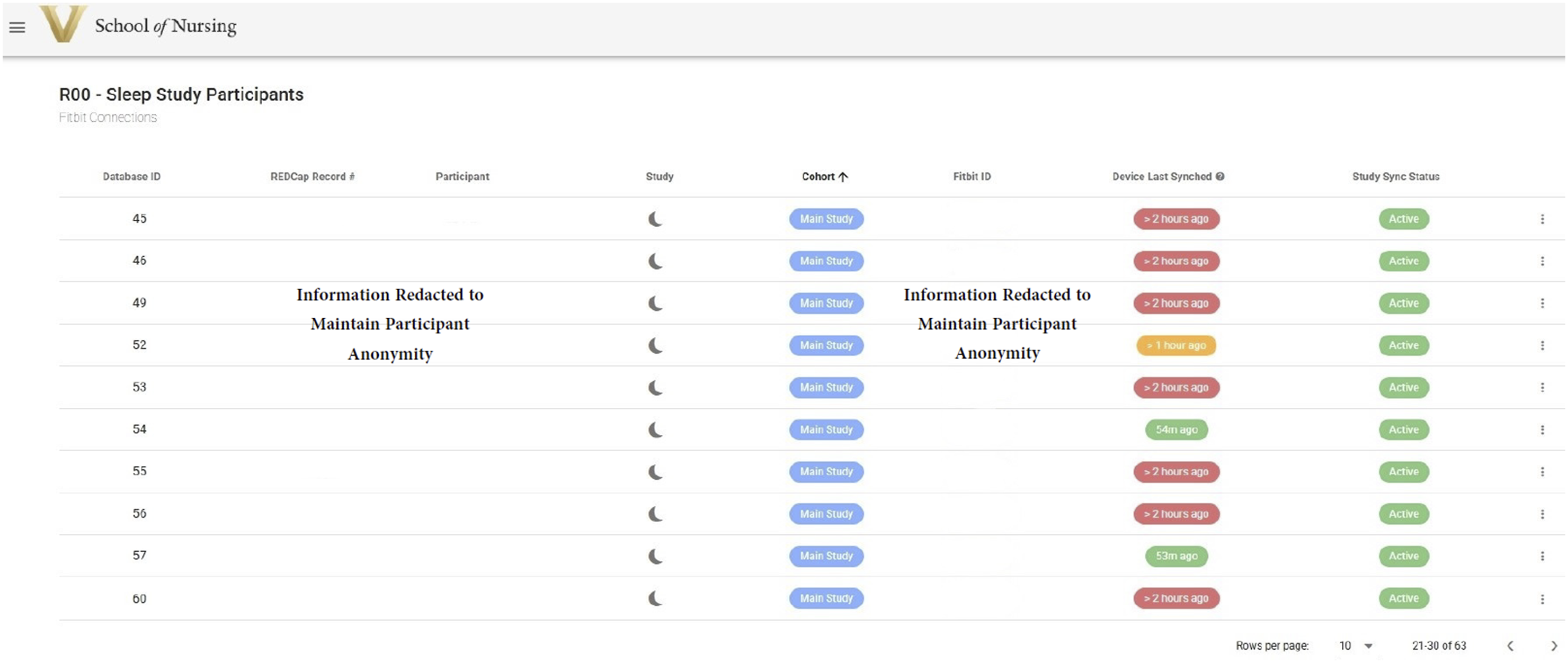

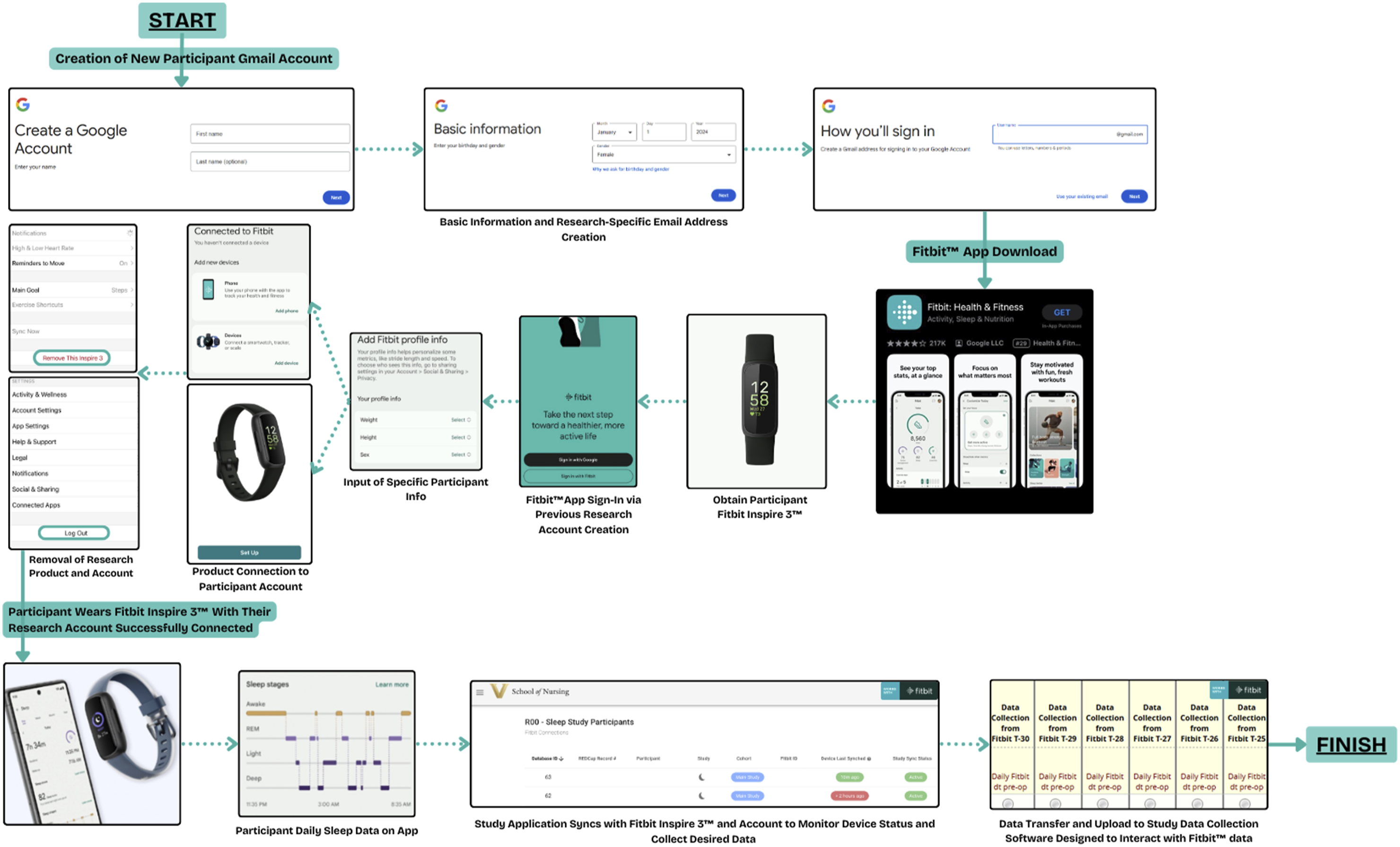

We worked with a web developer to build a web-based application (henceforth referred to as the Research App; Figure 2) that links each tracker to a participant, captures data from the tracker, and transfers those data to REDCap. We used APIs both between the Research App and the tracker’s platform and between the Research App and REDCap. Participants are added to the Research App automatically when they enroll in the study (triggered through REDCap). Once a participant is visible in the Research App, we associate that participant with their tracker account (i.e., sign them in to Fitbit using our research-specific email address and password), generating an authentication token. We then approve the Open Authorization (OAuth) request, which allows specified data to be shared between the tracker and our Research App.

Figure 2. Web-based research dashboard for managing tracker set-up and data collection. This is an example of the web-based research application that manages data acquisition for the tracker. The application connects both to the tracker’s web-based platform and to REDCap. From this application, we can see when new participants are added and when the tracker last synchronized, and we can manage OAuth access and approvals.
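To make the OAuth step more concrete, the sketch below shows how an application might build an OAuth 2.0 authorization URL with a PKCE (RFC 7636) code challenge, the pattern Fitbit documents for its Web API. It is a hedged sketch, not the Research App’s actual code: the client ID, redirect URI, and scope list in the usage example are hypothetical placeholders, and the endpoint URL should be confirmed against Fitbit’s current documentation. After the participant’s account approves access, the returned authorization code and the saved code verifier would be exchanged for tokens at Fitbit’s token endpoint (not shown).

```python
import base64
import hashlib
import secrets
from urllib.parse import quote, urlencode

# Fitbit's documented OAuth 2.0 authorization endpoint (verify before use)
AUTHORIZE_URL = "https://www.fitbit.com/oauth2/authorize"


def make_pkce_pair():
    """Return (code_verifier, code_challenge) using the S256 method."""
    # 64 random bytes encode to 86 URL-safe characters (limit is 43-128)
    verifier = secrets.token_urlsafe(64)
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    # Base64url without '=' padding, as RFC 7636 requires
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def build_authorization_url(client_id, redirect_uri, scopes, challenge, state):
    """Assemble the authorization URL; all arguments are caller-supplied."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": " ".join(scopes),  # scopes are space-separated
        "code_challenge": challenge,
        "code_challenge_method": "S256",
        "state": state,  # echoed back; lets the app match the callback
    }
    # quote (not quote_plus) encodes spaces as %20
    return f"{AUTHORIZE_URL}?{urlencode(params, quote_via=quote)}"
```

A hypothetical call such as `build_authorization_url("ABC123", "https://example.org/callback", ["sleep", "activity"], challenge, "p1001")` produces the URL to open while signed in with the research-specific account; the verifier must be stored until the token exchange.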

For ease of use and to protect participant confidentiality, we created research-specific email accounts that participants use to sign in to the tracker’s app on their smartphone. In our case, Fitbit is owned by Google, so a Gmail account was required for Fitbit sign-in. Once a participant was enrolled, we entered the participant’s age (using an anonymized birthday), height, and weight (from the electronic health record) into the tracker app. This meant that participants did not have to update any of their own information when using the tracker, and we could verify that the correct information was entered, which matters because the tracker’s algorithms are based on these metrics.
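The text does not specify how the anonymized birthday was generated. One hypothetical scheme, sketched below, sets the stand-in birthday to January 1 of the year obtained by subtracting the participant’s age from the enrollment year, so that the tracker computes the correct age at enrollment without the true birth date being stored. This is an illustrative assumption, not the authors’ documented method.

```python
from datetime import date


def anonymized_birthday(age_years, reference_date):
    """Hypothetical stand-in birthday: January 1 of (reference year - age).

    Because January 1 never falls after the reference date within the same
    year, the computed age on reference_date equals age_years.
    """
    return date(reference_date.year - age_years, 1, 1)


def age_on(birthday, on_date):
    """Age in whole years on on_date."""
    # Subtract one year if the birthday has not yet occurred in on_date's year
    before_birthday = (on_date.month, on_date.day) < (birthday.month, birthday.day)
    return on_date.year - birthday.year - int(before_birthday)
```

One trade-off of this choice is that the tracker’s computed age increments on January 1 rather than on the true birthday, so at later timepoints (e.g., six months post-hospitalization) it may differ from the true age by one year.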

Once the data infrastructure was built and tested, we developed standard operating procedures (SOPs) to promote consistency and ensure data completeness. SOPs specific to the tracker cover how to associate a participant with their tracker, how to prepare the tracker, how to help participants set up the tracker, and how to troubleshoot set-up and data collection. We review each participant’s data collection and synchronization status daily. Team member training is an important part of successful data collection: our team must be able to walk participants, who are often older adults with multiple comorbidities, through setting up the tracker and the app. We also need to identify quickly when data are not downloading or synchronizing so that we can troubleshoot in a timely manner.

Tracker Set-Up and Data Collection

Once a participant is enrolled and associated with the tracker as described above, we ensure that the tracker is fully charged and complete any software updates (which can sometimes take more than 15 minutes). We also choose the band size based on the participant’s BMI (the tracker comes with two band sizes). Preparing as much as possible in advance makes set-up with the participant easier and reduces complications. Following this preparation, we mail the tracker to the participant’s home address along with detailed, step-by-step written instructions. These instructions include color images for each step, covering setting up the tracker, downloading the tracker app, wearing and charging the tracker, and troubleshooting.

We facilitate tracker set-up with the participant over the phone, where they can follow along with the instructions and our guidance. If needed, we can also meet the participant at an upcoming medical appointment (e.g., if they have preoperative imaging scheduled) or schedule a video call. Importantly, we try to engage a household member or support person who can assist with tracker set-up, especially if the technology is unfamiliar to the participant. It is also helpful for team members to follow along with the set-up on their own smartphones running the same operating system as the participant’s, which makes troubleshooting easier.

Occasionally, participants have a current or previous tracker account. In that case, we have participants sign out of their existing account and sign in with our research login; we can remotely confirm that this step succeeded using the Research App. We also have participants use our trackers, even if they own one. These practices provide consistency across participants and maintain privacy and confidentiality.

Setting up the tracker requires downloading the tracker app to a smartphone, tablet, or computer. If the participant does not have a smartphone, we can set up the app on a household member’s smartphone (e.g., when a husband is enrolled in the study but his wife has the smartphone). We consider set-up successful when the participant has downloaded the tracker app to their smartphone, logged in using the study-provided email address and password, and synchronized the tracker to the app. On our end, we can see when syncing has started via the Research App. For in-hospital enrollments (e.g., participants who did not complete preoperative tracking), if the participant cannot set up the app on their own device, we sometimes synchronize the tracker to one of our research tablets instead; this is feasible because we have frequent contact with participants in the hospital.

If everything works as intended, data from the tracker are then automatically synchronized to the smartphone or tablet and downloaded to REDCap every 24 hr (Figure 3). Upon study completion, participants sign out of the study-provided login on the tracker app (e.g., sign out of the Fitbit app) and are then free to log in again with their own personal email address and password or to give the tracker to someone else. Once the participant logs out of the study account, we can no longer access data from the tracker (i.e., the OAuth authorization no longer applies).

Figure 3. Workflow for set-up and data collection. This is an example of the workflow used in this case exemplar to set up and collect data from a commercial tracker. First, the participant downloads the tracker app on their smartphone and signs in to the tracker account with a research-provided email address. The participant then synchronizes their tracker with the app. Once syncing is confirmed, the research team monitors tracker status via the Research App. Every 24 hr during data collection, data synchronized from the tracker through the tracker app are automatically downloaded to REDCap.
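The daily transfer into REDCap can be sketched with REDCap’s “Import Records” API method, which accepts a form-encoded POST containing a project token and the records as JSON. The sketch below is an assumption-laden illustration rather than the Research App’s implementation: the API URL, token, and every field name in the example record (e.g., `tracker_date`, `sleep_total_min`) are hypothetical, and a longitudinal project would additionally need fields such as `redcap_event_name` that match its own event and instrument design.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen


def build_import_payload(token, records):
    """Form fields for REDCap's 'Import Records' API method (flat JSON)."""
    return {
        "token": token,
        "content": "record",
        "format": "json",
        "type": "flat",
        "overwriteBehavior": "normal",  # blank values do not erase data
        "data": json.dumps(records),
        "returnContent": "count",  # ask REDCap for the number imported
        "returnFormat": "json",
    }


def import_records(api_url, token, records):
    """POST the records to a REDCap API URL and return the parsed response."""
    body = urlencode(build_import_payload(token, records)).encode("utf-8")
    req = Request(api_url, data=body, method="POST")
    req.add_header("Content-Type", "application/x-www-form-urlencoded")
    with urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

In a daily job, each participant’s summarized values for the previous calendar day would be collected into a list of dicts and passed to `import_records` with the institution’s API URL (for example, a hypothetical `https://redcap.example.edu/api/`); the token should be kept out of source code.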

Benefits and Challenges with Data Collection

There are many benefits to using a commercial tracker for this study. The tracker is lightweight and easy to wear, with wristband options to fit different wrist sizes. Given the length of data collection at each timepoint (∼1–2 weeks), participants often do not need to charge the tracker during data collection, which helps reduce data loss. Participants also enjoy keeping the tracker, and several report that they plan to use the data to support their postoperative recovery (e.g., to get more sleep and daily activity). The app is user-friendly, and we have successfully helped a wide variety of participants set up the tracker and app. Importantly, now that our Research App is built, we can easily gather data from the tracker. There are, however, ongoing challenges with data completeness that we troubleshoot regularly.

The biggest ongoing challenge is ensuring that the tracker synchronizes with the associated app so that tracker data can be retrieved. Some participants’ smartphone settings prevent automatic syncing. In this case, we can see when a participant’s tracker last synchronized (through our Research App), and if we do not see data being downloaded to REDCap, we contact the participant and ask them to open the tracker app manually. The tracker usually synchronizes automatically once the app is opened. Other troubleshooting options include making sure Bluetooth is enabled on the smartphone and logging out of and back into the tracker app. If we have synchronized the tracker to our study tablet, we must bring the tablet to the participant’s hospital room to synchronize. If a participant is discharged before we can bring the tablet, we are largely unable to capture data recorded after the last synchronization.

Other challenges include participants or nursing staff removing the tracker unnecessarily and participants forgetting to bring the tracker to the hospital after wearing it preoperatively. We regularly provide education to participants, caregivers, and nursing staff, and update our practices and procedures as we continue to learn. Occasionally, data are missing in REDCap when they should be present. In these cases, we work with our web developer to troubleshoot; in some instances, software scripts need to be updated or run manually for individual participants.
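Before re-running scripts manually for an individual participant, it helps to know exactly which calendar days are absent. The sketch below lists the expected days in a data collection window that have no record; it assumes the dates already stored in REDCap can be exported as ISO date strings, and the function name and inputs are hypothetical.

```python
from datetime import date, timedelta


def missing_days(start, n_days, dates_present):
    """List calendar days in [start, start + n_days) that have no record.

    dates_present holds ISO date strings (YYYY-MM-DD) already in REDCap.
    """
    present = set(dates_present)
    expected = (start + timedelta(days=i) for i in range(n_days))
    return [d.isoformat() for d in expected if d.isoformat() not in present]
```

For a 7-day in-hospital window, the returned dates would indicate which days a manual re-run should target for that participant.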

Conclusions

Interest in and use of wearables to track aspects of health in consumer, healthcare, and research applications are growing rapidly. Continued improvements in wearable technology and accuracy add to their appeal. Commercial wearables can be useful in numerous research applications and offer many attractive benefits for researchers, although important limitations remain. Researchers choosing a commercial wearable should weigh several key considerations, including device specifications (e.g., durability and price), data accessibility, participant factors, and confidentiality. Keeping these considerations in mind can assist in the collection of high-quality data that can ultimately be used to improve population outcomes.

Footnotes

Acknowledgments

The authors have no acknowledgements for the authorship of this article.

Author Contributions

M.M., J.A., O.T., and M.L.C. conceived of the idea and drafted the manuscript. J.N. wrote and provided feedback on the technical language and sections of the manuscript. M.B. provided detailed input on wearables. M.L.C. supervised the project. All authors reviewed and contributed to the manuscript and approved the final version.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was supported by the National Institutes of Health, National Institute of Nursing Research [R00 NR019862] and by Vanderbilt University’s Seeding Success Grant.

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.