Abstract

This study evaluated the quality and behavior change techniques (BCTs) included in 11 freely available mobile classroom behavior management applications (mCBM apps). We found that mCBM apps included a limited number of BCTs, with an average of 9 of 21 possible BCTs. Consequence-based BCTs like rewards and feedback were common, while antecedent-based strategies like prompts and reminders were less prevalent. Some mCBM apps also included BCTs related to sharing and exporting data. There was no significant correlation between the number of BCTs and the apps’ overall quality ratings based on the user version of the Mobile App Rating Scale. However, there was a positive correlation between the number of BCTs and the Engagement and Information subdomains of this scale. The findings contribute to understanding mCBM app design and functionality, providing insights for future development and evaluation. However, concerns were raised about some features of mCBM apps and the degree to which apps ensured data privacy and security. Further research is needed to assess the quality and benefits of mCBM apps for students and teachers.

Keywords

The growing prevalence of technology in schools has led to an increased interest in utilizing technology to promote positive behavior change in classrooms (Cho et al., 2020; Lim et al., 2013). Several studies have demonstrated the successful implementation of various technologies, such as mobile phones, pagers, timer apps, and audio players, in fostering positive behavior change among students (e.g., Bedesem, 2012; Gulchak, 2008; Legge et al., 2010; Radley et al., 2016). Recently, the use of mobile apps for encouraging positive behaviors in students has gained popularity. Although there has been an emergence of research on the use of mobile apps for promoting positive student behavior in classrooms (Mittiga et al., in press), a term to describe such apps has not yet been put forth. In the health literature, mobile apps for promoting positive health-related behaviors (Deng et al., 2018; Luxton et al., 2011; Short et al., 2018) have been termed mobile health (mHealth) apps. In the tertiary education literature, the integration of digital devices such as mobile phones and tablets into learning contexts to support student learning has been termed M-Learning (e.g., Alexander et al., 2019). Previous researchers evaluating classroom behavior management have used the acronym CBM to refer to skills and practices used by teachers to promote positive student behavior and address inappropriate student behavior (e.g., O’Neill & Stephenson, 2011). In this study, we use the term mCBM apps to refer to mobile classroom behavior management applications.

Mobile CBM apps have been recommended by educational researchers for their integration of behavior change techniques (BCTs) (Riden et al., 2019; Robacker et al., 2016). A BCT is a specific component of a program, intervention, or app (e.g., feedback, reinforcement, self monitoring, goal setting, prompting, modeling) that might support teacher or student behavior change (Morrissey et al., 2016). However, few studies have explored the number and type of BCTs included in mCBM apps. A recent systematic review on the use of mCBM apps in classrooms found most focused on positive reinforcement and self monitoring (Mittiga et al., in press). However, because few studies met What Works Clearinghouse (WWC) replication standards, conclusions about the overall efficacy of mCBM apps were limited.

The use of mobile apps to effect positive health-related behavior changes among adults has been more extensively researched. While some studies have found mHealth apps effective in encouraging patients to engage in positive health behaviors for diabetes and weight management (Hamine et al., 2015; Whitehead & Seaton, 2016), others have reported varied effectiveness due to technological difficulties, lack of BCTs, and time-consuming training requirements (Azar et al., 2013; Higgins, 2016; Nikolaou & Lean, 2016; Quinn et al., 2011; Ryan et al., 2012). Some researchers have suggested that the inclusion of BCTs in apps may be indicative of their quality (i.e., usability in terms of engagement, aesthetics, quality of information, and function), with a higher number of BCTs associated with better quality (Carmody et al., 2019; Furlonger et al., 2019, 2020). However, there is a need to further explore the relation between BCTs and quality. For example, users might find apps with a high number of BCTs difficult to navigate and use and thus may perceive them as lower quality. Alternatively, users might find apps with few BCTs appealing and more user-friendly, and thus perceive them as higher quality.

The quality of mCBM app design, user interface, and content, as well as the apps’ ability to support and stimulate behavioral changes in students, may influence the selection and use of mCBM apps by teachers. However, no published research has explored the quality of mCBM apps and their inclusion of BCTs. This study aimed to fill this gap by evaluating the number and type of BCTs included in free mCBM apps. In addition, this study examined the relationship between the quality of an app and its potential to influence behavior change, providing insights into how various aspects of mCBM app design might enhance or inhibit their effectiveness in eliciting positive behavioral changes in students.

Potential Benefits of mCBM apps

Mobile CBM apps may prove advantageous if they incorporate BCTs. For instance, they could provide guidance on creating a supportive learning environment encouraging appropriate behavior while discouraging inappropriate actions (R. Lewis, 2008; T. J. Lewis et al., 2013). Mobile CBM apps might also facilitate the delivery of evidence-based classwide interventions by integrating antecedent-based strategies, such as clear expectations and cues, and consequence-based strategies, like positive reinforcement (Murphy et al., 2019; Parsonson, 2012). ClassDojo, for example, enables students to exchange points for backup reinforcers (e.g., Dillon et al., 2019; Lipscomb et al., 2018; Lynne et al., 2017) simulating a token economy (Riden et al., 2019; Robacker et al., 2016). Several studies have demonstrated the effectiveness of ClassDojo combined with the Good Behavior Game (GBG) (e.g., Dillon et al., 2019; Lipscomb et al., 2018) and with Tootling (Lynne et al., 2017) in reducing disruptive classroom behavior. Other mCBM apps have incorporated elements of self management. For example, self monitoring is a key characteristic of SCORE IT, in which students self observe and record their behaviors when prompted by the app (Riden et al., 2019). Goal setting, another component of self management interventions (Locke et al., 1981; Lunenburg, 2011), has also been incorporated into some mCBM apps, including ClassDojo and SCORE IT (Robacker et al., 2016).

Mobile CBM apps could also facilitate data-based individualization (DBI) by providing a platform for collecting and analyzing student data, enabling the customization of interventions. Automated data collection through apps may improve the consistency and accuracy of data collection, allowing for more informed adjustments to interventions based on student needs. For example, Bruhn et al. (2015) used data generated by the SCORE IT app to monitor a student’s progress toward their academic engagement goal. When data showed the student was having difficulty meeting their goal, the goal was adjusted and additional intervention components were put into place for the specific student. In another example, Bruhn et al. (2016) used visual analytics generated by the SCORE IT app to monitor students’ progress toward their goals. The data were used to help the researchers make decisions about when to transition to a new phase (i.e., from intervention to maintenance phase) during the implementation of a self monitoring intervention. In this way, mCBM apps might facilitate the repeated cycle of monitoring and evaluation when implementing classwide or individual student interventions, and help teachers make decisions about the effectiveness of these interventions.

New mobile apps are routinely added to the market; however, most lack empirical support (Ayres et al., 2013). Consequently, teachers may rely on personal judgments rather than empirical evidence when incorporating mCBM apps in their classrooms (Sugar et al., 2004). Factors such as cost, accessibility, aesthetics, and preferred features may influence app selection. Initial research posits that employing mCBM apps with more BCTs may enhance student outcomes (Bruhn et al., 2015, 2017; Dillon et al., 2019; Lipscomb et al., 2018; Lynne et al., 2017). However, extant literature lacks evaluations of the number and type of BCTs included in mCBM apps, and this study attempted to fill this gap. The research questions were as follows:

Method

Content analysis enabled the quantification of textual, visual, and audio data within mCBM apps (Krippendorff, 2004). This approach involves categorizing data into smaller units for comprehensive understanding of the phenomenon under investigation (Cavanagh, 1997; Elo & Kyngäs, 2008). In this study, a content unit was defined as a single BCT within the ABACUS assessment framework (McKay et al., 2019). Krippendorff (2004) outlines the content analysis process as: (a) defining the context and formulating research questions; (b) selecting a sample relevant to the research questions; (c) identifying the unit for analysis; (d) describing the coding procedure; (e) training coders; (f) conducting coding; (g) evaluating trustworthiness; and (h) analyzing coding results.

Sample Selection

Search Strategy and Inclusion/Exclusion Criteria

The first author searched the Apple App Store (Apple Inc., 2022) on April 17, 2022 using the following keywords: classroom management, classroom behavior management, classroom gamification, behavior management, and self monitoring. We selected the Apple App Store for convenience and the large number of apps featured (Ceci, 2022). In addition, we selected apps from a single app store to reduce potential confounds introduced by marketplace-specific variations in app categorizations, demographics of users (e.g., regional differences, socioeconomic factors), and changes to apps. Finally, the Apple App Store enabled download and evaluation of apps on the researchers’ Apple mobile devices (iPhones). The search identified 24 mCBM apps.

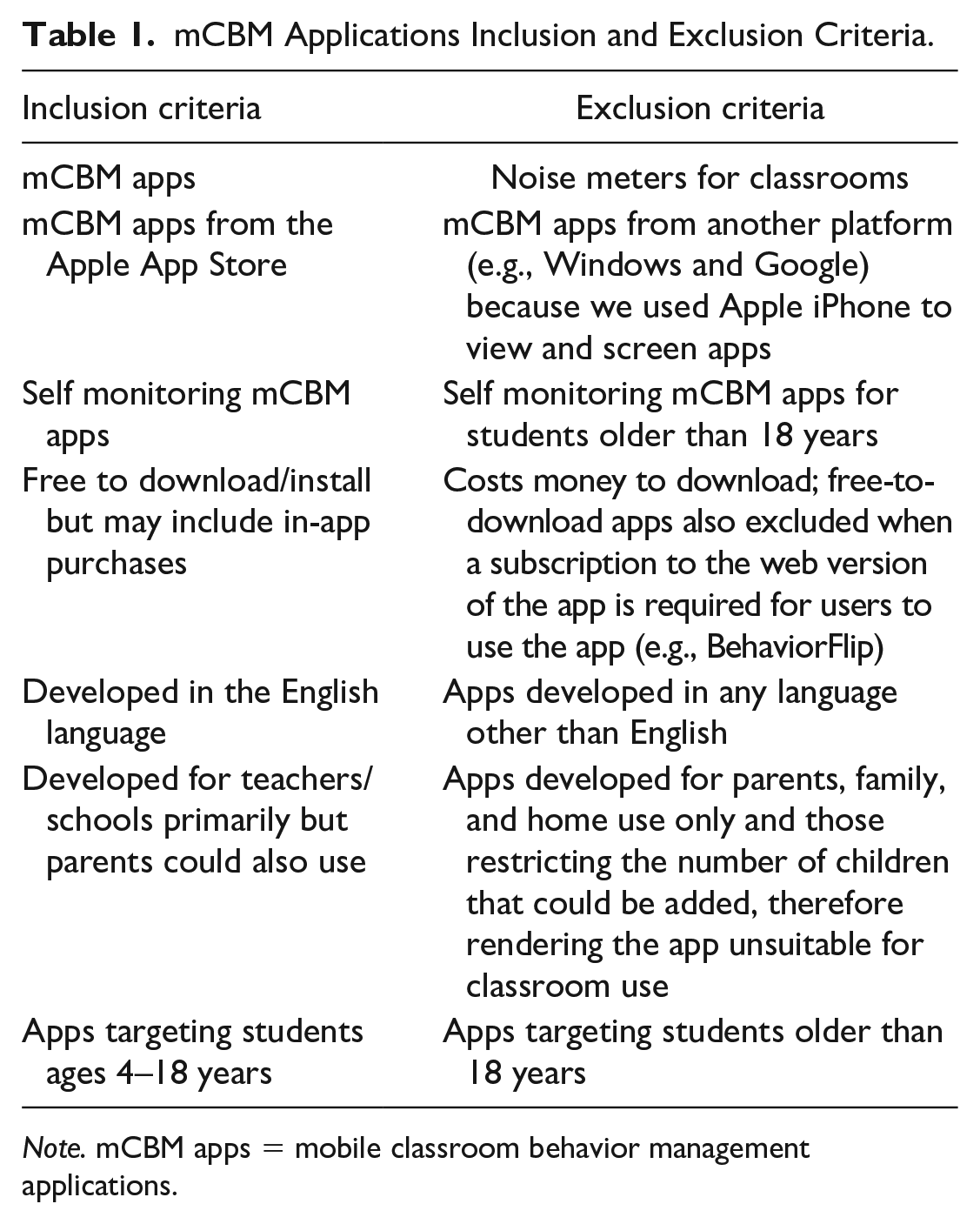

Table 1 presents the study inclusion and exclusion criteria. We only selected for evaluation mCBM apps free to download/install based on the assumption that these apps were most likely used by teachers (MacCallum & Parsons, 2019). We hypothesized teachers would be more likely to trial free mCBM apps because they would be easier to access and to discontinue if the app was unsuitable, presented technical difficulties, did not perform as expected, or was no longer supported by updates to the iOS.

mCBM Applications Inclusion and Exclusion Criteria.

Note. mCBM apps = mobile classroom behavior management applications.

Screening Procedure

The first author examined the previews of all 24 mCBM apps in the Apple App Store (Apple Inc., 2022). The preview included screenshots and information about the app. Based on the previews, each app was screened against the inclusion and exclusion criteria. Eight paid apps were subsequently excluded (costs ranged from US$1.49 to US$399.99). At the time of data extraction, two apps could no longer be found in the Apple App Store: Classroom Hero and SchoolMint Hero. One app, Classcraft, was excluded from further analysis despite meeting inclusion criteria because behavior management features were no longer accessible on the app (via desktop version only). Another app, Cleverbees, was excluded from further analysis despite meeting inclusion criteria because attempts to create an account failed and the developer could not be contacted (no contact information was provided on the website). The PerfectPass Mobile app was excluded from further analysis because, although the app was free to download, it required a paid subscription to use it. Following the screening process, a total of 11 mCBM apps were identified for inclusion. While all 11 apps were free to download, four apps included in-app purchases to access certain features (i.e., TrackCC, Points, TeacherKit, Bloomz, Alora). We did not evaluate content/features only accessible through in-app purchases. The first author downloaded these 11 apps on an iPhone 14 Pro.

Unit for Analysis and Description of Coding Procedure

ABACUS Coding

Each mCBM app was installed on the iPhone 14 Pro, examined, and coded by the first author in October 2022. The ABACUS coding framework evaluates the behavior change potential of mobile apps (McKay et al., 2019). The ABACUS allows for the evaluation of the presence or absence of 21 BCTs grouped into four categories: (a) knowledge and information; (b) goals and planning; (c) feedback and monitoring; and (d) actions (McKay et al., 2019). The ABACUS framework has strong interrater reliability (intraclass correlation coefficient [ICC] = .91, 95% confidence interval [CI] = [0.81, 0.97]) and excellent internal consistency (α = .93) (McKay et al., 2019). The first author created a data collection and rating form using the 21 BCTs provided by McKay et al. (2019). This researcher-developed ABACUS form (see Online Supplemental) provided detailed instructions on how to rate whether each app included each BCT. An operational definition was developed for each BCT (see Online Supplemental) to facilitate objective measurement and recording of data by multiple raters. Next to each BCT entry, the first author recorded the presence or absence of the BCT in a separate column. The first author used an adjacent column to note the specific information reviewed within each app to determine the presence/absence of the BCT. Once an app was scored against all 21 BCTs, the number of BCTs present was counted and the app was given a score out of 21.
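The scoring rule described above can be sketched in a few lines of code. This is a minimal illustration only, not the authors' data collection form: the item labels (`bct_01`, `bct_02`, and so on) are hypothetical placeholders rather than the ABACUS item names, and the example ratings are invented.

```python
from typing import Dict

TOTAL_BCTS = 21  # the ABACUS framework lists 21 behavior change techniques


def abacus_score(ratings: Dict[str, bool]) -> int:
    """Return the number of BCTs rated as present for a single app."""
    if len(ratings) != TOTAL_BCTS:
        raise ValueError(f"expected {TOTAL_BCTS} ratings, got {len(ratings)}")
    return sum(1 for present in ratings.values() if present)


# Hypothetical ratings for one app: placeholder labels, invented values.
example = {f"bct_{i:02d}": (i <= 9) for i in range(1, TOTAL_BCTS + 1)}

print(f"{abacus_score(example)} of {TOTAL_BCTS} BCTs present")
```

Requiring a rating for every one of the 21 items before a total is returned mirrors the procedure of scoring an app against all BCTs before counting.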

uMARS Coding

The first author employed the validated uMARS (Stoyanov et al., 2016) to assess and rate the quality of the included mCBM apps. The uMARS uses less technical terminology and fewer expert-oriented items than its predecessor, the professional version of the Mobile Application Rating Scale (MARS). The uMARS is designed specifically for end users of apps without training and expertise in health sciences. The uMARS has undergone readability evaluation using the Flesch Reading Ease test (which suggested an eighth-grade reading level) and was pilot-tested for usability with 13 Australian participants ages 16 to 25 years (Stoyanov et al., 2016). The uMARS consists of 20 items subdivided into five categories: Engagement, Functionality, Aesthetics, Information, and Satisfaction. The Engagement subscale measures the level of interest and engagement the app is likely to elicit in the user. It includes items such as entertainment value, interest, customization options, interactivity, and the target audience’s likelihood to use it repeatedly. The Functionality subscale assesses aspects like the app’s ease of use, navigation, gestural design, and the presence of any technical issues or glitches. The Aesthetics subscale evaluates the app’s visual appeal, considering aspects such as layout, graphics, and overall aesthetics. The Information subscale gauges the quality, credibility, and relevance of information contained within the app. The Satisfaction subscale measures overall satisfaction with the app, perceived worth, and willingness to recommend it. The Satisfaction subscale was excluded from this study because (a) the researchers did not use the app (only assessed the app) and (b) the subscale was based on user preference and therefore considered to be the most subjective of all subscales.
The uMARS has high internal consistency (α = .70–.90) and good test–retest reliability (Stoyanov et al., 2016), and was selected for use in this study due to its accessibility for nonexpert users and its validity and reliability. Importantly, the uMARS enabled a quantitative analysis of app quality, which facilitated direct comparison between different mCBM apps. A researcher-developed uMARS data collection sheet was used to assess the quality of each app (see Online Supplemental). Apps were rated on a Likert scale ranging from 1 (very poor) to 5 (excellent) for each item on the four included subscales.

Training, Coding, and Evaluating Trustworthiness

The first author appraised all 11 included mCBM apps using both the ABACUS and uMARS data collection and scoring sheets. At the time of data collection, the first author was a PhD student and provisional psychologist. A second independent rater examined 10 of 11 apps using identical data sheets. The independent rater (the fourth author), a registered psychologist, was selected because they had previously used the uMARS and a BCT evaluation framework as part of a published study evaluating mHealth apps for anxiety (Chan et al., 2022). Both familiarized themselves with each app and reviewed the ABACUS and uMARS data sheets before initiating the scoring process. The scores derived by both independent raters for these 10 apps were then compared. After initial interrater reliability scores were calculated, discrepancies were resolved through a consensus meeting between the two independent raters. Cohen’s kappa (κ) was computed to ascertain the concordance between raters for the ABACUS and uMARS scales. Interrater agreement scores and CIs for each app are depicted in Table 2. The agreement scores ranged from 0.60 to 1.00 indicating moderate to perfect agreement.

Interrater Agreement and 95% Confidence Interval for Each Scale.

Note. κ = Cohen’s kappa; ABACUS = App Behavior Change Scale (McKay, Slykerman, & Dunn, 2019); uMARS = user version of the Mobile Application Rating Scale (Stoyanov, Hides, Kavanagh, & Wilson, 2016).
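For readers unfamiliar with the agreement statistic, the following is a minimal sketch of unweighted Cohen's kappa for two raters. It is illustrative only: the rating vectors are invented, and the source does not state whether a weighted variant was used for the ordinal uMARS items, so the unweighted form is an assumption.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Unweighted Cohen's kappa for two raters coding the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must code the same non-empty set of items")
    n = len(rater_a)
    # Proportion of items on which the raters gave the same code.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    categories = set(counts_a) | set(counts_b)
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single, identical category throughout
    return (observed - expected) / (1.0 - expected)


# Invented presence (1) / absence (0) codes for ten hypothetical BCT items.
rater_1 = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
rater_2 = [1, 1, 1, 0, 0, 0, 0, 0, 1, 1]
print(round(cohens_kappa(rater_1, rater_2), 2))
```

In this invented example the raters agree on 8 of 10 items and chance agreement is .50, so kappa is (.80 − .50) / (1 − .50) = .60.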

Analysis

Descriptive statistics (M and SD) were used to obtain the mean total BCTs included in each mCBM app and to obtain the mean quality rating of each app. Mean uMARS subscale ratings were calculated by summing each item’s score and dividing by the total number of items per subscale. Total mean uMARS quality rating was calculated using IBM SPSS Statistics 26. Pearson correlations were calculated to examine whether there was a significant relationship between the number of BCTs and the uMARS ratings of each app.
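The two calculations described above, a subscale mean and a Pearson correlation, can be sketched as follows. The analyses in this study were run in IBM SPSS Statistics, so this is an illustrative equivalent rather than the authors' procedure; the function names and all data values are hypothetical, and significance testing is not shown.

```python
import math
from statistics import mean
from typing import Sequence


def subscale_mean(item_scores: Sequence[float]) -> float:
    """Sum the item scores of one subscale and divide by the number of items."""
    if not item_scores:
        raise ValueError("a subscale needs at least one item score")
    return sum(item_scores) / len(item_scores)


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation between two equal-length samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return sxy / math.sqrt(sxx * syy)


# Invented data for five hypothetical apps (not values from this study).
bct_counts = [4, 7, 9, 12, 15]
engagement_means = [1.8, 2.2, 2.6, 3.0, 3.6]

print(subscale_mean([3, 4, 5, 4, 4]))  # one app's ratings on a five-item subscale
print(pearson_r(bct_counts, engagement_means))
```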

Results

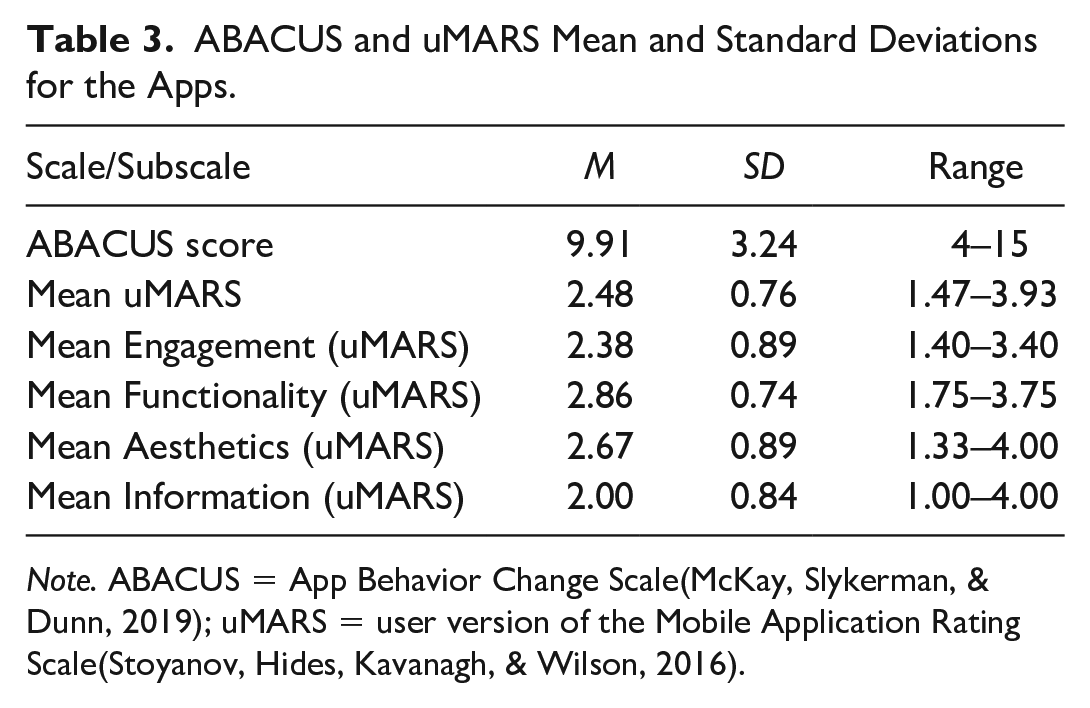

Table 3 depicts the average number of BCTs identified using ABACUS, and the mean uMARS score for each subscale across the 11 mCBM apps that were analyzed. On average, the included apps featured 9.91 BCTs (SD = 3.24, range = 4–15). The included mCBM apps obtained a mean uMARS rating of 2.48 (SD = 0.76) with ratings ranging between 1.47 and 3.93, indicating a quality rating from poor to good.

ABACUS and uMARS Mean and Standard Deviations for the Apps.

Note. ABACUS = App Behavior Change Scale (McKay, Slykerman, & Dunn, 2019); uMARS = user version of the Mobile Application Rating Scale (Stoyanov, Hides, Kavanagh, & Wilson, 2016).

BCTs Included in mCBM Apps

Table 4 depicts the total number of BCTs (i.e., ABACUS score) for each app together with their respective mean uMARS rating. ClassDojo contained the most BCTs (n = 15) and Alora contained the least (n = 4).

ABACUS Scores and Mean uMARS Ratings for Each App.

Note. ABACUS = App Behavior Change Scale (McKay, Slykerman, & Dunn, 2019); uMARS = user version of the Mobile Application Rating Scale (Stoyanov, Hides, Kavanagh, & Wilson, 2016); mCBM apps = mobile classroom behavior management applications.

Knowledge and Information

Each of the 11 apps allowed the user to Customize and Personalize certain features. For instance, some apps had a selection of preset behavior goals and allowed users to create new behaviors. Teachers could assign students different avatars as their profile picture. Teachers could set alerts or notifications for themselves or to be sent to students and/or their parents. Some apps allowed teachers to assign students to smaller groups. Eight of the 11 apps appeared to have been created by Credible Sources (e.g., professionals with subject matter expertise). Four apps included information consistent with national guidelines for supporting positive student behavior, as stipulated by the app developers. Teacher’s Assistant Pro 3 was created by a teacher, and iConnect specifically mentioned using behavior-based principles in its design. The developers of the Bloomz app stated on their website that the app was designed using the principles of Positive Behavioral Interventions and Supports (PBIS). None of the apps included a place to record Baseline Information about student behavior before implementing classwide behavior management strategies. Only ClassDojo included Instructions on How to Perform a Behavior, in the form of videos for teachers to watch about how to encourage positive student behavior. Of the 11 apps, eight included an option for teachers to provide students with information about Consequences for Continuing or Discontinuing a Behavior. For example, ClassDojo and TrackCC used a response cost system for inappropriate behaviors, but only ClassDojo allowed teachers to broadcast information on point loss (response cost) to students.

Goals and Planning

None of the apps asked students to describe their Readiness for Behavior Change. Nine of the 11 apps allowed users to set behavior goals (Goal Setting). For example, the iConnect self monitoring app allowed teachers to set behavior goals aligned with the school’s values. Five apps allowed teachers to Review, Update, and Change Behavior Goals based on student progress. For example, ClassDojo generated individual reports and statistics on the number of Dojo points awarded to students, and iConnect displayed summary data in a bar graph. Other apps, including Bloomz, ClassClap, and Points 4 Kids, provided data such as total points awarded.

Feedback and Monitoring

Five apps used visual analytics to help teachers understand differences between Current Action and Future Goals. Points 4 Kids and ClassClap showed points earned in a pie chart, while iConnect used bar charts. Bloomz tracked whole classroom progress toward behavior goals, and ClassDojo generated individual visual reports for each student. Five apps allowed students to self monitor their behaviors. For example, iConnect allowed students to self record their behavior by answering “yes/no” questions when prompted. Eight apps allowed sharing information about behaviors with others. TrackCC, Bloomz, and ClassClap included features to allow teachers to share information directly with parents. ClassDojo also allowed the teacher to broadcast the number of Dojo points awarded to each student on a whiteboard, to allow for social comparisons among students. A+ Teacher’s Aide, Teacher Kit, and Class123 apps enabled parents and students to collaborate by sharing information via email or giving parents and students access to their own versions of the app. iConnect allowed teachers to collaborate with other teachers who may share the same students and/or classrooms. All 11 apps allowed for user feedback. Two had automatic “coach” functions: ClassDojo used text notifications when points were awarded and deducted, and iConnect used a chime to cue students to self record their behavior. User feedback was externally managed by teachers in the remaining eight apps. Six apps allowed teachers to export data. For example, TrackCC allowed teachers to export behavior data to a comma-separated values (CSV) or text file, while the Bloomz and Teacher’s Assistant Pro 3 apps enabled teachers to share behavior data via email. Nine apps included rewards or incentives for achieving or making progress toward achieving behavioral goals. Most apps allowed teachers to deliver points electronically to students for desirable behavior, which could then be exchanged for backup reinforcers.
Using the Alora app, teachers could provide a virtual “thumbs up” to students for good behavior. Using ClassDojo, teachers could deliver points to students for desirable behavior and pair the delivery of points with virtual confetti. Five apps provided general encouragement. TrackCC and Class123 used audio cues when points were awarded to students, while ClassDojo used audio cues when awarding points and a visual feedback notification system when redeeming points.

Actions

Five of 11 apps allowed teachers to provide reminders, notifications, or prompts for specific behaviors. The iConnect app provided prompts in the form of chimes, flashes, or vibrations at variable intervals for students to respond to on-screen questions and self monitor their behavior. The A+ Teacher’s Aide app provided attendance reminders, while the TrackCC app had email notifications and in-app notifications to alert students and parents to changes in behavior or attendance. Two apps explicitly encouraged positive habits by prompting explicit practice of positive behaviors, not just prompts for tracking or self monitoring behavior. For example, ClassDojo had “Big Ideas” videos that encouraged positive student behaviors by delivering engaging, age-appropriate content on essential social-emotional skills through animated narratives, while allowing teachers to reinforce these lessons by rewarding students. Prompting in the iConnect app provided timely reminders and cues for positive student behaviors, which might foster the development of consistent, beneficial routines and practices. Eight apps allowed for unlimited practice of appropriate behavior, meaning there were no limits set on the number of times a student could, for example, engage in the behavior, self monitor their behavior, and/or receive reinforcement for the behavior. ClassDojo was the only app allowing for both planning for barriers and restructuring the environment. ClassDojo provided suggestions for teachers for anticipating impediments (e.g., classroom noise levels) and modifying learning contexts through features like tailored behavior monitoring, class communication utilities, and personalized student progress assessment. One app, iConnect, assisted with distraction/avoidance by providing programmed reminders to students to stay on task.

Relationship Between the Quality Rating and the Number of BCTs Included in the Apps

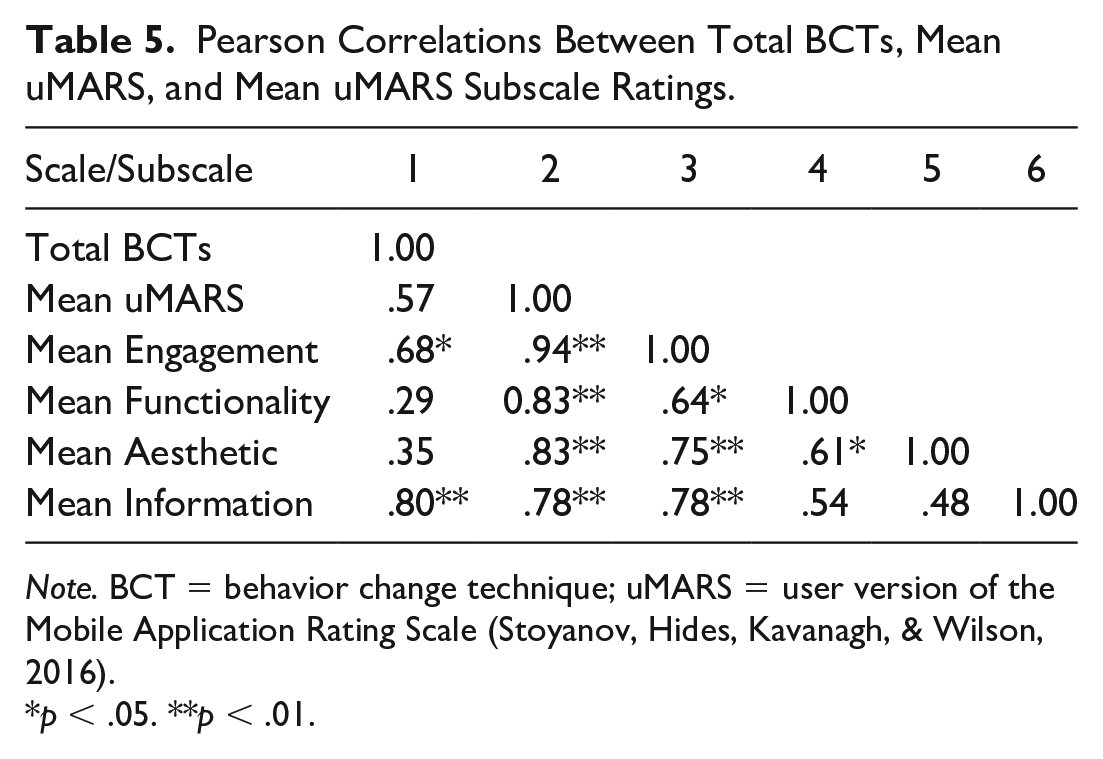

Table 5 presents the results from the Pearson correlation analyses between the number of BCTs and uMARS quality rating. There was no significant correlation between the number of BCTs and mean uMARS total rating (p = .07). Mobile apps that featured more BCTs were not necessarily rated as being of higher quality, as per the uMARS. There was a positive correlation between the number of BCTs and the mean uMARS Engagement rating (r = .68, p < .05). There was no significant correlation between the number of BCTs and mean uMARS Functionality rating (p = .40) or the number of BCTs and mean uMARS Aesthetic rating (p = .30). There was a positive correlation between the number of BCTs and mean uMARS Information rating (r = .80, p < .01).

Pearson Correlations Between Total BCTs, Mean uMARS, and Mean uMARS Subscale Ratings.

Note. BCT = behavior change technique; uMARS = user version of the Mobile Application Rating Scale (Stoyanov, Hides, Kavanagh, & Wilson, 2016).

*p < .05. **p < .01.
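As a rough check on the significance levels reported above, a Pearson coefficient can be converted to a t statistic and compared with the critical value for n − 2 degrees of freedom. The working below assumes n = 11 apps, two-tailed tests, and the rounded coefficients given in the text; these details are assumptions, as the source does not state them explicitly for the correlation analyses.

```latex
\begin{align*}
t &= \frac{r\sqrt{n-2}}{\sqrt{1-r^{2}}}, \qquad df = n - 2 = 9 \\[4pt]
r = .68:\quad t &= \frac{.68 \times 3}{\sqrt{1 - .4624}} \approx 2.78 \;>\; t_{.05}(9) = 2.262 \\[4pt]
r = .80:\quad t &= \frac{.80 \times 3}{\sqrt{1 - .64}} = 4.00 \;>\; t_{.01}(9) = 3.250
\end{align*}
```

Under these assumptions, both coefficients exceed the relevant critical values, which is consistent with the reported p < .05 for Engagement and p < .01 for Information.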

Discussion

The use of mCBM apps is increasingly common in classrooms (ClassDojo Inc, 2020; Williamson, 2017; Williamson & Rutherford, 2017), but little is known about the quality of these apps. This study is the first to comprehensively assess the quality and type of BCTs included in 11 freely available mCBM apps. Consistent with previous research investigating the inclusion of BCTs in mHealth apps (e.g., Azar et al., 2013; Hamine et al., 2015; Higgins, 2016; Nikolaou & Lean, 2016), results of this study revealed that mCBM apps included a limited number of BCTs. On average, mCBM apps included nine of 21 possible BCTs, with a range of four to 15 BCTs. Pearson correlation analyses indicated that apps with more BCTs were not necessarily rated as higher quality based on their uMARS scores. However, the number of BCTs included in apps was positively correlated with the Engagement and Information subdomains of uMARS. There was no significant relationship between the number of BCTs and the Functionality or Aesthetics subdomains. Improvement in Engagement and Information subdomains is likely to result in overall improvement in quality, but this may not be specifically related to the number of BCTs.

Interestingly, we found most apps were designed to be used by the teacher to facilitate the delivery of whole-class interventions. For example, ClassDojo allowed the teacher to manage the delivery of points for positive classroom behaviors displayed by students. Apps including Points 4 Kids, TrackCC, Class123, and TeacherKit provided teachers with an opportunity to prompt students to self monitor their behavior, but only the teacher could record whether or not students were on task or displaying appropriate behavior at specific intervals. Relatively fewer apps were designed to be used by the students themselves. One app, iConnect, was designed to be used by individual students and provided students with reminders to self monitor their own behavior. These findings suggest that mCBM apps might bring about positive behavior change in students by supporting teacher behavior change. In other words, apps might help teachers to plan classwide interventions and prompt teachers to implement various intervention components, such as goal setting, prompting, and reinforcement, rather than providing students with opportunities to self manage components of their own behavior change program.

We found that consequence-based BCTs, particularly rewards and feedback, were often included in mCBM apps. These techniques might support positive student behaviors if used effectively (e.g., Dillon et al., 2019; Lipscomb et al., 2018; Lynne et al., 2017). Feedback gives students regular, informative insights into their behavior, promoting self assessment. Rewards might function as positive reinforcement and thus increase future positive behavior. By contrast, antecedent-based strategies like prompts and reminders were less frequently incorporated. ClassDojo and iConnect integrated more antecedent-based strategies, such as reminders, notifications, and planning techniques. Goal setting was the most common antecedent-based strategy, present in nine mCBM apps. This aligns with prior research suggesting goal setting can improve performance when paired with other evidence-based strategies (Locke et al., 1981; Lunenburg, 2011).

Other commonly included BCTs in the mCBM apps were sharing behavior with others and exporting data, which might foster collaboration between professionals and parents. However, these features warrant careful use. While sharing behavioral information can promote behavior change (Luszczynska et al., 2004), it might also have negative impacts (e.g., Liu et al., 2016; Mollee & Klein, 2016). Broadcasting points earned or deducted might seem like an easy feedback and self monitoring tool, but McIntosh et al. (2020) highlighted potential issues. It can be ineffective for students needing extra behavioral support, stigmatize low-performing students, and rely on public corrections that may damage self esteem, increase anxiety, reduce engagement, and/or give attention to unwanted behaviors. Notably, these strategies may disproportionately affect students at risk for disciplinary exclusion, such as those with disabilities (Gage et al., 2019) or students of color (Okonofua & Eberhardt, 2015).

Caution is also warranted when using data sharing features included in mCBM apps. Exporting data requires informed consent, privacy protection, and adherence to laws and regulations. In recent years, as the use of technology in schools has become ubiquitous, concerns have been raised about the privacy and security of student data (Chanenson et al., 2023). Chanenson et al. (2023) noted that concerns have been raised about the harvesting of student data for commercial purposes (i.e., for showing the effectiveness of new educational technologies, such as apps) without parent or student consent. In a recent survey, parents of K–12 students reported growing concerns about the use, sharing, and protection of their child’s data in schools, although they largely trusted that schools had appropriate policies to protect student data (Center for Democracy & Technology, 2020).

Implications for Practice and App Development

To date, little research has explored the effectiveness of mCBM apps, although a recent systematic review of published research found some evidence of positive effects for student academic engagement (Mittiga et al., in press). Based on the main findings of this study, we provide three preliminary recommendations for using mCBM apps in schools. First, because we found that mCBM apps differ in their purpose and features, we recommend that teachers carefully review and select apps matched to class or individual student needs. For example, if the teacher’s goal is to increase positive behavior for all students in the classroom, an app that allows for the provision of rewards to all students for positive classroom behaviors, such as ClassDojo, may be most appropriate. By contrast, if the teacher’s goal is to support a student who needs more intensive intervention to remain on task during class activities, an app that prompts individual students to self-monitor their behavior, such as iConnect, may be suitable. Teachers may find our ABACUS data sheet (see Online Supplemental) useful when evaluating specific mCBM apps for use in their classrooms because it is brief, easy to use, and can highlight the types of BCTs included in apps. App developers could consider including an easy-to-understand description of the purpose and features of the app and instructions for teachers on how to best use it with groups and/or individual students. App developers might also consider ways to create versatile mCBM apps with features that can be tailored to both classroom and individual needs, allowing teachers to easily align app functions with specific behavioral goals.

Second, we recommend that apps complement, rather than substitute for, classwide and schoolwide positive behavior support practices. For example, teachers should define, model, teach, and practice age- and culturally appropriate positive behavior expectations within their classrooms. Mobile CBM apps might then be used as part of a positive reinforcement system, such as an interdependent group contingency, where positive behavior displayed by any student helps the group move closer to an end goal. Teachers could supplement the delivery of points or other reinforcers mediated by the app with personalized feedback, such as behavior-specific praise, to ensure that students know which behaviors are being reinforced. If teachers need to address challenging student behavior, they could do so privately and provide feedback that helps the student learn new behaviors. To assist teachers, app developers might consider including in-app features, such as video models, that demonstrate how to define, model, and reinforce appropriate behaviors and deliver personalized feedback to students. This may help teachers maximize the features of the app, as well as provide knowledge around BCTs, which could be particularly useful for early career teachers who are still learning about the principles of classroom behavior management.

Finally, we recommend that schools develop policies around the use of mCBM apps to ensure that the apps are evaluated for quality before their adoption and adhere to legal requirements. Given that many apps evaluated in this study included data sharing and data export features, schools may wish to consider ways to implement appropriate safeguards to protect the privacy and confidentiality of students. App developers should ensure that apps protect student data by offering robust data protection, particularly in light of increasing concerns about data breaches. App developers may wish to offer the option for users to store behavior data locally on the e-device, as is the case with SCORE IT. Apps for use in schools should use strong encryption algorithms to protect data both in transit and at rest and should include authentication mechanisms so that only authorized users can access the app and its data. App developers should also include easy-to-understand information about (a) what data the app collects and for what purpose, (b) how the privacy and confidentiality of student data are protected, (c) how/if data can be transferred from the app to a school-specific data storage system, and (d) how/if data can be permanently deleted from the app.

Limitations and Future Research

Some limitations of this study warrant mention. First, apps are rapidly added to and removed from the Apple App Store, which can raise questions about the generality of the findings of this study. App removal or obsolescence can hinder the evaluation process and affect teachers’ app usage. Technical difficulties further complicate evaluations, emphasizing the need for timely assessments and app support. Of note, this study evaluated only free-to-download mCBM apps under the assumption of teacher preference for cost-saving and convenience. However, these apps may have limited features and support, complicating troubleshooting for teachers. Future research should consider evaluating both free and paid mCBM apps, in-app purchases (which may provide additional components for teachers to use), and the technical support offered by the developers of apps. Given the rapid pace with which new apps are introduced to the market, future researchers may wish to explore ways to train teachers and other education professionals to appraise the quality and content of apps before selecting new apps for use in their classrooms (rather than focusing on empirical evaluation of each new app released to market).

Second, our search was confined to the Apple App Store and those apps available for download in Australia, potentially overlooking apps on other platforms and through other countries’ Apple App Stores and affecting the comprehensiveness of our findings. Moreover, we did not directly engage with teachers to understand their preferences for mCBM apps. Such consultation might have shed light on a more diverse set of apps actively used in Australian classrooms, further enhancing the validity of our conclusions. This omission is a notable limitation in our approach and should be weighed during result interpretation. Future studies should delve deeper into the quality of mCBM apps across different platforms and devices, including iPads and laptops, which are prevalent in educational settings.

Finally, more robust frameworks for assessing the quality and content of mobile applications are needed. For example, in the ABACUS, Credibility of Source was scored simply by the presence of information provided by the app developers about whether the development of the app was informed by expert input, research findings, national guidelines, or an evidence-based framework. However, it was not stipulated that researchers should independently verify these claims. Given that the uMARS is designed with the end user in mind, perhaps a more insightful assessment would have been garnered if teachers had rated the mCBM apps using this scale, presenting a potential avenue for future research. In addition, some aspects of the uMARS scoring system, such as Aesthetics and Satisfaction, were based on user perceptions and preferences, which may be subjective. In this study, having two raters independently assess 10 of 11 included apps increased the reliability of the data. However, not all included apps received a score of “near perfect” or “perfect” agreement when collecting interrater agreement data (see Table 2). In the future, the development of objective frameworks for assessing the content and quality of apps may help researchers identify those apps with stronger empirical support.

Conclusion

The findings of this study contribute to the understanding of the design and functionality of mCBM apps in promoting positive behavior change for students. By highlighting the prevalence of consequence-based BCTs and the relatively underutilized antecedent-based strategies, this study sheds light on areas for improvement in future app development. However, the findings of this study also reveal concerns about mCBM apps, such as whether the use of certain features of apps (such as sharing behavior with others) might negatively affect some students and whether the data collected and stored within these apps meet legal and ethical requirements around student privacy and confidentiality. The findings of this study should be considered preliminary and provide a starting point for further evaluations of the quality of mCBM apps and the potential benefits for students and teachers.

Supplemental Material

Supplemental material for A Content and Quality Evaluation of Mobile Classroom Behavior Management Applications by Sharon R. Mittiga, Nerelie C. Freeman, Brett E. Furlonger, Perrin Chan and Erin S. Leif in Journal of Positive Behavior Interventions:

sj-docx-1-pbi-10.1177_10983007241230594

sj-docx-2-pbi-10.1177_10983007241230594

sj-docx-3-pbi-10.1177_10983007241230594

Footnotes

Author Contributions

SRM and ESL conceived of the study. SRM conducted the search, reviewed all apps, undertook data analysis, and drafted the manuscript. PC independently reviewed 10 of the 11 apps. All authors provided feedback on the manuscript drafts and read and approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This research was supported by an Australian Government Research Training Program (RTP) Scholarship.

References
