Abstract

Teachers and clinicians report feeling underprepared to implement evidence-based behavior supports. As such, effective models of professional development that lead to improved outcomes for all individuals are required. Critical components of high-quality professional development include explicit instruction, modeling, practice, direct feedback, and, potentially, individual coaching. However, delivering all components may be resource intensive. Professional development approaches that extend the tiered logic of multi-tiered systems of support to adult learning may address these challenges. In such models, universal professional development is provided to whole-staff groups on specific skills using explicit instruction, modeling, practice, and feedback. Data are then collected and used to inform targeted, individualized supports. The current systematic literature review addressed a gap in the literature by identifying, summarizing, and appraising 20 published studies that examined tiered, responsive professional development models. While consistent practices were identified (e.g., group didactic training at Tier 1 and coaching at Tiers 2 and 3), the form and shape of specific interventions at each tier differed across studies. The same was observed in the data-based decision-making processes employed to determine the need for additional trainee support. Practical implications to inform the ongoing examination of tiered responsive professional development models are discussed.


Effective in-service professional development (IPD) is particularly important in school contexts to build the capacity of staff (Waitoller & Artiles, 2013) to meet the academic, social, and behavioral needs of their students, support the staff’s well-being, and ensure staff retention. School contexts can include mainstream classrooms as well as early childhood settings, alternative schools, and specialist programs for students with disability. In these contexts, professional development has been defined as “structured professional learning that results in changes to teacher [or paraprofessional] knowledge and practices” and results in improved outcomes for students (Darling-Hammond et al., 2017, p. 2). Darling-Hammond and colleagues (2017) conceptualized IPD as both embedded learning activities within a professional’s role and school and external provision of activities to staff through didactic and workshop-based learning opportunities. Multiple examinations of IPD have been undertaken to understand the components of professional development programs that lead to improved student outcomes (Darling-Hammond et al., 2017; Flynn et al., 2016; Sims et al., 2022). Extended duration of training, an explicit focus on content, active learning opportunities including modeling and opportunities to practice, delivery of performance feedback, and coaching and ongoing expert support were noted as important components of training across several studies (Darling-Hammond et al., 2017; Flynn et al., 2016; Sims et al., 2022).

Other studies have emphasized the importance of IPD that includes direct instruction of the target skill followed by further training components such as modeling, skill rehearsal, and performance feedback (e.g., behavioral skills training [BST]; Lerman et al., 2015). For example, Flynn et al. (2016) found that school staff who engaged in group workshops and individual coaching significantly decreased behavior incidents and school suspensions. In this study, following the initial skill instruction, school staff received direct coaching, which consisted of an expert coach observing the staff member implement the target skill and providing performance feedback. These findings are consistent with Fallon et al.’s (2018) systematic review examining the effects of direct training, wherein 45% of the included studies reported cases in which strong or moderate effects of direct training were observed.

Challenges With In-Service Teacher Professional Development

Ensuring that IPD is designed with the critical components described above and achieves the desired outcomes is a significant challenge. For example, while Fallon et al. (2018) described the overall positive effects of IPD programs that included components identified as critical to effective teacher learning, there were still multiple studies within the systematic review that did not achieve the desired outcomes. Multiple barriers to the successful transfer of learning from IPD relate directly to the context of implementation (e.g., time, resourcing, and alignment with existing practices; McIntosh et al., 2014; Sanetti & Collier-Meek, 2019), as well as to the practitioner (e.g., staff buy-in and disagreements about the practices; McIntosh et al., 2014). This aligns with the findings presented by Zhang et al. (2021), who reported that teacher motivation can influence the effectiveness of IPD. Studies have shown that teacher motivation might vary based on personal factors (such as the perceived value of IPD or alignment with teachers’ personal choices and interests; Zhang et al., 2021), school-level factors (such as workload, time, and support from colleagues; R. A. Fox et al., 2021), and systems-level factors (such as whether IPD is mandatory or optional, or whether IPD occurs during or outside of working hours; McMillan et al., 2016).

The challenge of delivering IPD that incorporates the components of effective PD programs, and builds motivation, within complex and dynamic school settings is significant. Coaching supports have been recognized as a critical mechanism for building teacher fluency to effectively implement evidence-based practices (EBPs) in context (Freeman et al., 2017). However, the resource demands of such coaching support are significant and come with no guarantee of success (Bastable et al., 2020). Once trained, teachers require sufficient time to prepare and implement new EBPs, as many require additional material resources. As a result, schools and school systems need efficacious approaches to improve the ability of teachers to implement evidence-based behavior supports that lead to positive outcomes for all learners.

Responsive Tiered Training Frameworks

The need to provide thorough training to staff so they can implement the trained skills with fluency must be balanced with the persistent resource constraints that have been identified across sectors (i.e., time, expertise, and money; Bastable et al., 2020). A tiered approach to staff training may be an effective means to support performance development in ways that are more cost- and time-efficient (Brock, 2022). This approach allows implementers to build on the positive outcomes of high-quality universal IPD with individualized support for those staff who need it. In doing so, limitations in the generality or sustainability of effects of IPD models for some staff may be addressed. This approach may also reduce some of the costs of delivering individualized coaching to all staff in the first instance (Pas et al., 2020). Finally, the provision of more individualized support may allow implementers to identify and address motivational factors that may be hindering the participation of some teachers.

Multitiered systems of support (MTSS) are often employed to support student behavior and academic performance (McIntosh & Goodman, 2016). In schools, this may be called positive behavioral interventions and supports (PBIS) or response to intervention (RTI) for behavior and academics, respectively. This is a framework defined as supporting all learners, which includes staff as well as students (Reddy et al., 2016). Following a similar framework as traditionally applied to students, staff development can be approached in a multitiered manner to adapt staff professional development supports to best align with staff needs (Simonsen et al., 2014). This proposed multitiered framework uses data-based decision-making of staff behavior in a problem-solving framework where staff have opportunities to receive more frequent feedback with less latency between their behavior and the specific feedback.

Responsive tiered training frameworks use a tiered logic of support provision and in many cases are underpinned by theoretical frameworks consistent with the response to intervention, PBIS, and integrated MTSS frameworks. For example, responsive tiered frameworks are designed to organize the prevention-focused practices, systems, and data-based processes along a continuum that provides increasingly intensive and individualized supports (e.g., universal behavioral expectations at Tier 1 PBIS, functional behavior assessment for individual students at Tier 3; Simonsen & Sugai, 2019). In addition, behavioral theory (i.e., radical behaviorism) and the principles of behavior derived from applied behavior analysis (ABA; e.g., reinforcement, punishment, and stimulus control) underpin the practices and systems as well as the implementation processes across all tiers (Simonsen & Sugai, 2019). Consistent with this logic, tiered responsive training frameworks are structured to provide all staff with high-quality group training at Tier 1 (e.g., incorporating the components of BST or effective group professional development outlined above). There is an expectation that this level of training and support will support most staff to effectively implement the practices with integrity. Within this multitiered framework, Tier 1 monitoring practices focus on routine progress monitoring of all teachers. Staff who are unable to effectively implement the practices as intended after Tier 1 training receive additional, more intensive, and increasingly individualized support practices. Tier 2 supports staff members struggling to implement instructional or behavior management practices with fidelity and fluency. Tier 2 training may include explicit individualized instruction, the provision of the rehearsal and feedback components of BST, self-monitoring or self-assessment procedures, and/or other direct coaching methods (Myers et al., 2020). Many of the monitoring strategies are similar to Tier 1; however, these are often individualized and more frequently used. Staff members who do not respond to these Tier 2 supports receive the highest level of intensive, individualized training support. Tier 3 supports are consistently based on observations of the staff member’s practice in context. Tier 3 assessments and supports reflect an increase in the intensity and duration of procedures to build staff skills and address motivation (Myers et al., 2020). This is typically accomplished through intensive coaching both in vivo and after observation (Reddy et al., 2016).

The increased focus on using tiered training models to deliver IPD for staff that leads to effective implementation in context has led to an increase in the published research examining the effectiveness, efficacy, and social validity of this approach. To this point, there has been no systematic review of these studies. Such a review of this emerging approach is both relevant and required, as there are currently no clear definitions of tiered training and little consensus in descriptions or definitions of the evidence-based training practices that may be selected and implemented at each tier.

The purpose of this systematic review was to:

RQ1. Identify, summarize, and appraise the extant literature examining the efficacy of tiered training models;

RQ2. Identify the training practices used at each tier across all studies;

RQ3. Summarize how each tier has been defined across studies; and

RQ4. Describe the decision-making processes to determine the provision of additional support.

Method

Inclusion and Exclusion Criteria

To be included in this review, articles needed to be empirical in nature and published in the English language. Articles published in peer-reviewed journals as well as dissertations and theses were included. To be included, articles must have evaluated a tiered responsive training program. We defined tiered training as a model of professional development wherein all trainees receive training in evidence-based behavior support practices with smaller groups of trainees receiving more intensified and individualized implementation support. Responsive means that data (e.g., frequency or rate of trainee skill use, student outcome data) are used to inform the need for, and type of, more individualized or intensified support for some trainees. In other words, additional levels of support are provided to trainees based on their response to earlier professional learning opportunities. Tiered responsive training is different from models that provide cascading training (e.g., train the trainer, pyramidal training) and that do not use data to inform the design of more individualized or intensified training for some members of the intervention group.
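The "responsive" element of this definition can be read as a simple decision rule. The following is a minimal sketch in Python, not a procedure drawn from any reviewed study: the 80% fidelity criterion, the `Trainee` fields, and the `next_tier` function are all hypothetical names and values chosen for illustration, and the included studies used a variety of data sources and decision rules.

```python
from dataclasses import dataclass

# Hypothetical criterion for illustration only; the review does not
# prescribe a single cutoff, and studies differed in their decision rules.
FIDELITY_CRITERION = 80.0  # percent of steps implemented as planned


@dataclass
class Trainee:
    name: str
    current_tier: int    # 1, 2, or 3
    fidelity_pct: float  # most recent observed implementation fidelity


def next_tier(trainee: Trainee, criterion: float = FIDELITY_CRITERION) -> int:
    """Return the tier of support the trainee should receive next."""
    if trainee.fidelity_pct >= criterion:
        # Responding to the current level of support: no intensification.
        return trainee.current_tier
    # Not responding: step up one tier, capped at Tier 3.
    return min(trainee.current_tier + 1, 3)


# Hypothetical trainees and data.
staff = [
    Trainee("A", 1, 92.0),
    Trainee("B", 1, 65.0),
    Trainee("C", 2, 70.0),
]
assignments = {t.name: next_tier(t) for t in staff}
```

In this sketch, only trainees whose data fall below the criterion move to a more intensive tier, which is what distinguishes a responsive model from cascading or fixed-sequence training.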

Studies were excluded from this review if they were published in a language other than English, were conference proceedings, or were published in non-peer-reviewed outlets (e.g., newsletters). Articles that only reported learner outcomes, rather than trainee outcomes, were excluded as well; given the focus on the training procedures, outcome data must have been reported on the training itself. As the tiered responsive training must be responsive to data (e.g., using data-based decision-making), included articles must have reported outcome data on the trainee. Any studies that delivered training not using a tiered training model were excluded, such as pyramidal training or sequentially offered training components outside of a responsive structure; some of these training models may have been labeled as tiered but did not meet our definition of a tiered responsive training model.

Search Procedures

Review procedures followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines. Searches were undertaken to identify influential or frequently cited peer-reviewed tiered training articles. The authors collated the following keywords for the search: tiered, training, professional development, coaching, and professional learning. To comprehensively gather related articles, the specific search terms were: “tier* training” OR “tier* professional development” OR “tier* professional learning” OR “tier* coaching” OR “tier* implementation support*.”

The authors used those exact search terms across the following databases: EBSCOhost, OVID, and ProQuest on February 21, 2023. The first and second authors conducted this initial search independently, verifying that both identified the same articles. Records were limited to English-language publications and no date restrictions were put in place. We conducted this review using Covidence systematic review software (2022). A total of 121 articles were found, of which 39 were duplicates and were removed. This resulted in 82 unique results screened for inclusion or exclusion in this review.

The first and second authors independently conducted a review of titles and abstracts for the 82 articles resulting in an agreement of 97.56% (Cohen’s κ = .94). For the two articles that resulted in disagreement, the authors met and discussed until a consensus was reached. As a result, 58 irrelevant articles were excluded and 24 articles were included at full-text review to assess eligibility.
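For readers less familiar with these agreement statistics, the sketch below shows how percent agreement and Cohen's κ can be computed from a two-rater include/exclude screening table. Only the total of 82 articles and the two disagreements come from the text; the split of the remaining cells (23 joint inclusions, 57 joint exclusions, one disagreement in each direction) is an assumption made for illustration, and the function names are hypothetical.

```python
def percent_agreement(both_include, both_exclude, only_rater1, only_rater2):
    """Percentage of records on which the two raters made the same decision."""
    total = both_include + both_exclude + only_rater1 + only_rater2
    return 100 * (both_include + both_exclude) / total


def cohens_kappa(both_include, both_exclude, only_rater1, only_rater2):
    """Cohen's kappa for two raters making a binary include/exclude decision."""
    total = both_include + both_exclude + only_rater1 + only_rater2
    observed = (both_include + both_exclude) / total
    # Marginal proportion of "include" decisions for each rater.
    rater1_include = (both_include + only_rater1) / total
    rater2_include = (both_include + only_rater2) / total
    # Agreement expected by chance from the marginals.
    expected = (rater1_include * rater2_include
                + (1 - rater1_include) * (1 - rater2_include))
    if expected == 1:
        return 1.0  # degenerate case: both raters gave one identical decision throughout
    return (observed - expected) / (1 - expected)


# Assumed cell counts (hypothetical split of the 82 screened records).
cells = dict(both_include=23, both_exclude=57, only_rater1=1, only_rater2=1)
agreement = round(percent_agreement(**cells), 2)
kappa = round(cohens_kappa(**cells), 2)
```

Under this assumed split, the values round to 97.56% agreement and κ = .94. Because κ corrects for chance agreement using each rater's marginal rates, a different distribution of the same two disagreements could yield a slightly different κ.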

Both the first and second authors read the full text of the 24 articles independently to determine if they met the inclusion criteria. Eleven articles met the inclusion criteria, and 13 articles were excluded because they did not present a responsive tiered training. The first and second authors had 100% agreement on the articles that met the inclusion criteria for this systematic review (Cohen’s κ = 1.0). One of the included articles was excluded at the article coding stage because further reading indicated that the intervention did not meet the inclusion criterion of being responsive (Hoston, 2022). Thus, the forward and backward searches were based on the 11 articles included at this stage of analysis; however, only 10 articles from the initial search are included in the results.

Upon identifying the final articles from the initial search, the first and second authors conducted a forward and backward search to determine if there were further articles relevant to this review but not captured in the original search. As this research area is emerging, this step was deemed necessary to capture articles that have been published but not yet indexed in the databases used in the initial search. For the backward search, the authors reviewed the articles cited in the 11 studies included at this stage. There were 515 articles cited in the included studies reviewed for initial screening. Following the screening, 30 articles were included for further review, with 98.44% agreement between the first two authors (Cohen’s κ = .85). All articles with disagreements were further reviewed. Twelve of the 30 articles remaining in the backward search were excluded as duplicates of articles already included, resulting in 18 articles for full-text review. Twelve further articles were excluded upon full-text review for not meeting the definition of a tiered responsive training, which resulted in six additional articles included in this systematic review.

The first author conducted a forward article review using Google Scholar on March 10, 2023; the first author searched each of the 11 included articles on Google Scholar and developed a list of other articles that cited each of those articles (n = 151). The first and second authors then independently reviewed all articles based on title and authors to identify those articles that met criteria for further review. Using this method, 18 additional articles were included for further review, with 99.34% agreement (Cohen’s κ = .97). Ten articles were excluded as duplicates of the previous searches, one article was excluded for not being data-based, and one article was excluded for not meeting the definition of tiered responsive training. This resulted in an additional six articles for inclusion in the systematic review. See the online supplemental Figure S1 for the PRISMA flowchart of the search procedures.
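The article counts reported across the three search strands can be cross-checked with simple arithmetic. The sketch below is only a bookkeeping aid built from the numbers stated in the text; the variable names are hypothetical and it is not a substitute for the PRISMA flowchart in the supplemental materials.

```python
# Initial database search (counts as reported in the text).
initial_found = 121
initial_screened = initial_found - 39          # duplicates removed
initial_full_text = initial_screened - 58      # excluded at title/abstract
initial_eligible = initial_full_text - 13      # excluded at full text
initial_retained = initial_eligible - 1        # one article excluded at coding

# Backward search: 30 screened in, 12 duplicates, 12 excluded at full text.
backward_retained = 30 - 12 - 12

# Forward search: 18 screened in, 10 duplicates, 1 not data-based,
# 1 not meeting the definition of tiered responsive training.
forward_retained = 18 - 10 - 1 - 1

total_for_coding = initial_retained + backward_retained + forward_retained
```

Tallied this way, the three strands contribute 10, 6, and 6 articles respectively, for 22 articles entering the coding stage.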

Article Coding

A total of 22 articles were included in the systematic review, including 15 peer-reviewed publications and seven dissertations. Two dissertations were subsequently published in a peer-reviewed journal, so they were removed from the final analysis (n = 20 total articles). We developed a coding sheet to permit the extraction of data from each included study on the following characteristics: (a) study information; (b) trainee participant characteristics; (c) learner participant characteristics; (d) trainer characteristics; (e) independent variables; (f) dependent variables; (g) treatment integrity; (h) social validity; and (i) design quality and characteristics. Design quality was based on the What Works Clearinghouse (WWC, 2020) reviewer standards for design inclusion in systematic literature reviews and meta-analyses; for more information on the design standards, we refer readers to the WWC standards handbook. Additional details regarding our coding procedures can be found in the online supplemental information (see online supplemental Table S2). The first and second authors coded the articles independently; they assessed interrater agreement on five of the 22 articles, representing 22.73% of the studies. Their agreement across all variables was 96.60%. The first and second authors reviewed the coding disagreements and came to a consensus.

Results

Participant Characteristics

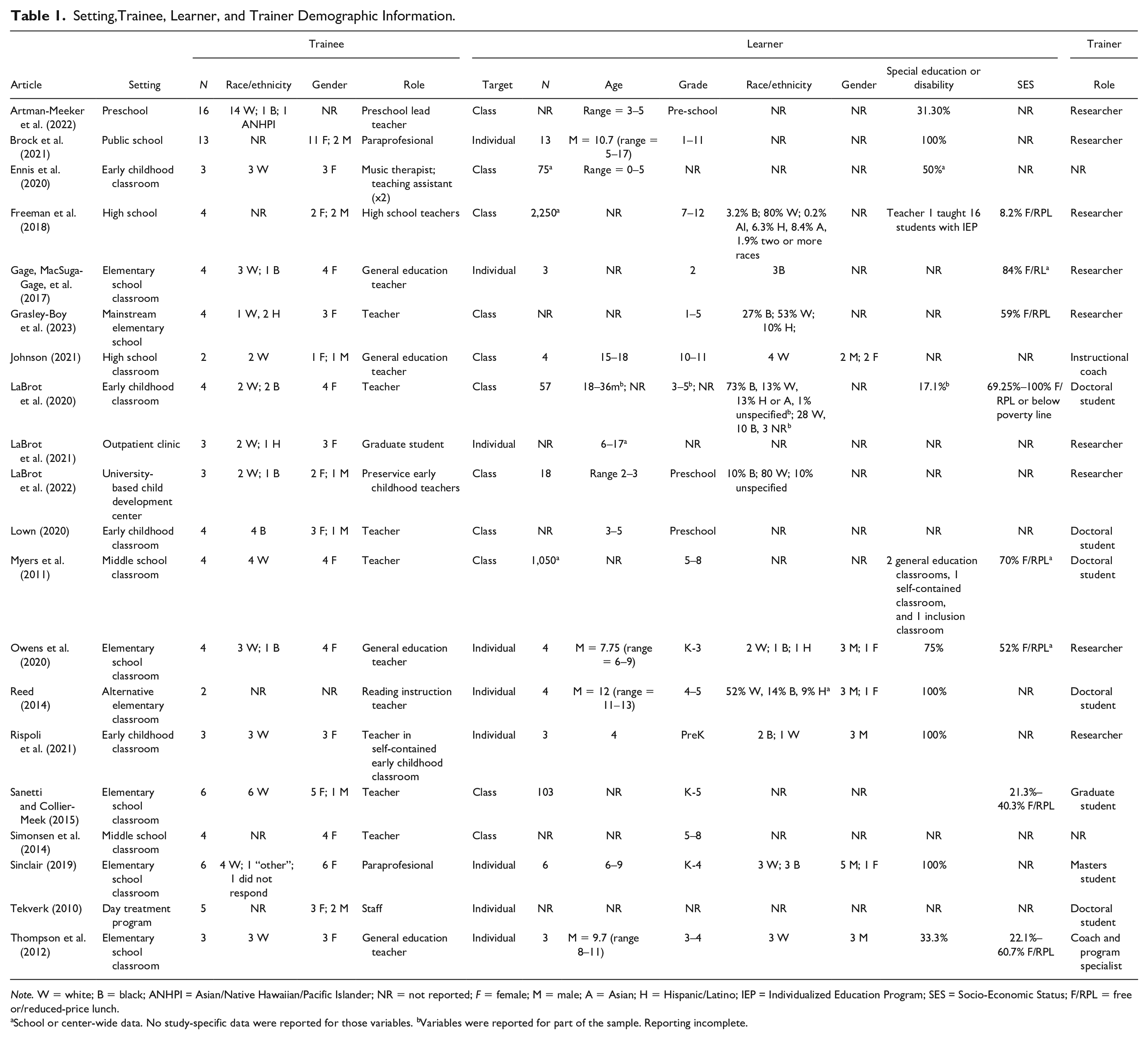

Trainee

Most trainees were White, female staff in schools (see Table 1). In five studies, researchers did not report the race/ethnicity data of their trainees (i.e., Artman-Meeker et al., 2022; Brock et al., 2021; Reed, 2014; Simonsen et al., 2014; Tekverk, 2010). Of those researchers reporting demographic variables, 76.4% of trainees identified as White, and 14.7% of trainees identified as Black. The remaining trainees identified as Hispanic (n = 3), Asian/Native Hawaiian/Pacific Islander (n = 1), or Other (n = 1), and one did not respond. In two studies researchers did not report the gender identity of their trainees (Artman-Meeker et al., 2022; Reed, 2014). Of the remaining studies reporting demographic data, 87.1% of trainees identified as female with the remaining participants identifying as male. Fifteen of the 20 studies reported teachers as participants with most remaining participants serving in a teaching assistant or paraprofessional role (n = 3). The remaining two studies reported training graduate students (LaBrot et al., 2021) and day treatment program staff (Tekverk, 2010).

Table 1. Setting, Trainee, Learner, and Trainer Demographic Information.

Note. W = White; B = Black; ANHPI = Asian/Native Hawaiian/Pacific Islander; NR = not reported; F = female; M = male; A = Asian; H = Hispanic/Latino; IEP = Individualized Education Program; SES = socioeconomic status; F/RPL = free or reduced-price lunch.

a. School- or center-wide data. No study-specific data were reported for those variables. b. Variables were reported for part of the sample. Reporting incomplete.

Learner

Nine of the 20 studies involved individual children as the learners to measure training outcomes, whereas the remaining studies were school-based and measured the impact of training on the class as a group (Table 1). The learners ranged in age from birth to 18 years old, yet six studies did not report learner age characteristics (Gage, MacSuga-Gage, et al., 2017; Grasley-Boy et al., 2023; Myers et al., 2011; Sanetti & Collier-Meek, 2015; Simonsen et al., 2014; Tekverk, 2010). Tekverk (2010) did not report any other learner demographics. Reporting of race/ethnicity varied across the included studies, with 45% not reporting this for their learner participants. For the studies that included information about the gender identity of their learner participants, 79.17% were reported to be male and 20.83% were reported to be female; 14 of the studies did not report gender demographics. All studies that described the special education status of their learner participants included at least some students with a disability in their learner sample.

Trainer

Limited information was provided about the trainer for many of the included studies (Table 1). In most cases, the trainer was the primary researcher of the study (n = 9) or a graduate student (n = 7); based on the article reporting, it is unclear if the graduate students were also the primary researchers in the studies. Thompson et al. (2012) used a coach and program specialist at the participating school district as the primary trainer in their work. Similarly, Johnson (2021) had a school-based instructional coach as the trainer.

Setting Characteristics

Across most of the included studies, the trainers supported educators in school-based settings; this included early childhood classrooms (n = 6), elementary (n = 6), middle (n = 2), and secondary school classrooms (n = 2; Table 1). Brock et al. (2021) conducted their study in a public school across students in primary and secondary classrooms. Sinclair (2019) evaluated the responsive training program in a day treatment program whereas LaBrot et al. (2021) evaluated a responsive training approach with graduate students in an outpatient clinic setting. LaBrot et al. (2022) conducted their study in a university child development and teacher training center.

Dependent Variables

Trainee Variables

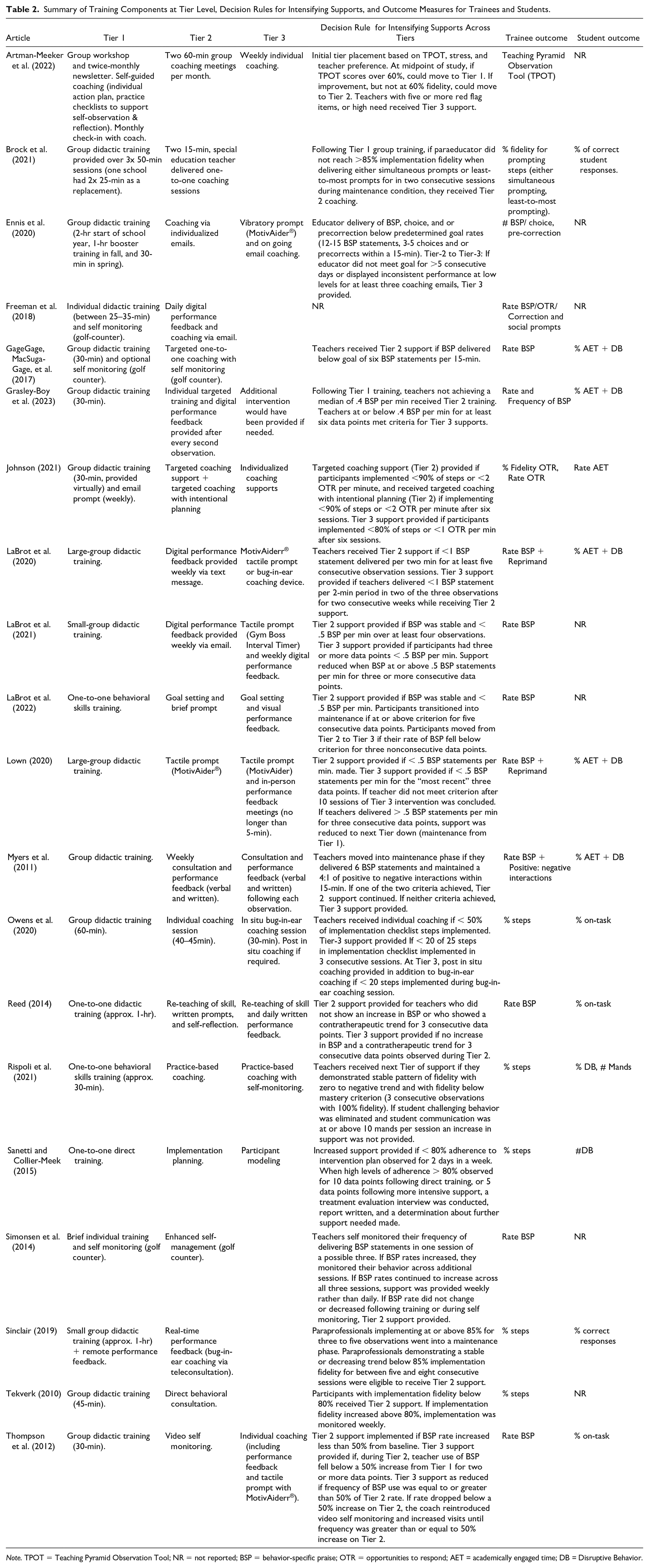

For the trainees, there were two primary outcome variables measured across all studies: fidelity of implementation and the implementation of behavior-specific praise (BSP; see Table 2). Seven studies measured the fidelity of implementation where they evaluated the impact of responsive training on the percentage of steps implemented as planned. Twelve studies evaluated the trainee’s implementation of BSP using either a count or rate per minute. One study used the Teaching Pyramid Observation Tool (TPOT; L. Fox et al., 2014) to measure the impact of tiered training on trainee practice (Artman-Meeker et al., 2022). Additionally, some studies included other outcome variables such as choice and pre-correction (Ennis et al., 2020; Freeman et al., 2018), opportunities to respond (OTR; Freeman et al., 2018; Johnson, 2021), reprimands (LaBrot et al., 2020; Lown, 2020), positive to negative interaction ratio (Myers et al., 2011), and social prompts (Freeman et al., 2018).

Table 2. Summary of Training Components at Tier Level, Decision Rules for Intensifying Supports, and Outcome Measures for Trainees and Students.

Note. TPOT = Teaching Pyramid Observation Tool; NR = not reported; BSP = behavior-specific praise; OTR = opportunities to respond; AET = academically engaged time; DB = disruptive behavior.

Learner Variables

While all included articles reported the target learner (Table 1), studies were less likely to report information about the learner outcomes (n = 13 of 20; Table 2). When studies reported information about the learner, they often reported the learner’s percentage of on-task behavior (n = 9). Some studies also reported on the level of disruptive behavior displayed by the learner (n = 7). Other outcomes for the learners include percentage of correct responses (Brock et al., 2021; Sinclair, 2019) or the number of mands (Rispoli et al., 2021). The remaining studies did not provide information about the learner outcome variables (n = 7 of 20).

Responsive Tiered Training

Tier 1

Didactic Training

All 20 included studies incorporated some form of didactic training at Tier 1 (Table 2). The most commonly used Tier 1 training approach was group didactic training (n = 14). This form of training was provided to groups of varying sizes, ranging from three trainees (LaBrot et al., 2021) to a whole-staff cohort from a large middle school (enrollment of 1050; Myers et al., 2011). Duration of Tier 1 didactic training ranged from approximately 30 min (Freeman et al., 2018; Gage, MacSuga-Gage, et al., 2017; Grasley-Boy et al., 2023; Johnson, 2021; Rispoli et al., 2021; Thompson et al., 2012) to 210 min across the school year (Ennis et al., 2020). Six studies delivered Tier 1 training to individuals using BST (LaBrot et al., 2022; Rispoli et al., 2021), one-to-one direct training (Sanetti & Collier-Meek, 2015), brief individual training (Simonsen et al., 2014), individual professional development (Freeman et al., 2018), and individual didactic training (Reed, 2014). These approaches, while delivered to individuals, were universally applied across the system in multiple studies (e.g., Freeman et al., 2018; LaBrot et al., 2022; Reed, 2014). In studies conducted by Sanetti and Collier-Meek (2015) and Rispoli et al. (2021), the focus of the training was tailored to match specific content foci of teachers.

Forty-five percent of studies (n = 9) included all components of a BST approach (e.g., Lerman et al., 2015). The most often used training practice was an oral description of the practice (n = 18). A written description of the target skill as well as opportunities for the trainee to rehearse skills were identified in 10 of the included studies. Direct observations (n = 9) and performance feedback (n = 10) were the least frequently identifiable training practices coded. A summary of the BST components included in the Tier 1 training for each study can be found in the online supplemental materials (see online supplemental Table S1).

Additional Tier 1 Practices

Six of the included studies supplemented Tier 1 training with some form of self-monitoring, prompt, or performance feedback. For example, Freeman et al. (2018), Gage, MacSuga-Gage, et al. (2017), and Simonsen et al. (2014) incorporated self-monitoring in Tier 1 training (i.e., using a golf counter). Sinclair (2019) supplemented Tier 1 training with performance feedback provided remotely, while Johnson (2021) delivered email prompts to trainees. Artman-Meeker et al. (2022) provided self-coaching resources (i.e., individual action plan, practice checklists, and self-observation and reflection sheets), as well as opportunities to check in with a coach monthly.

Tier 2

Coaching

Coaching (n = 14) was the most often described Tier 2 practice (Table 2). While coaching was frequently used at Tier 2, there was considerable variability in both the duration of coaching and the specific practices described across the included studies. For example, Artman-Meeker et al. (2022) provided coaching in a group format at Tier 2, while Owens et al. (2020) described a single 30- to 40-min session delivered to trainees using BST. Similarly, Grasley-Boy et al. (2023) provided participants with a brief training session using BST (i.e., approximately 20 min), following this with performance feedback delivered via email and text message. Brock et al. (2021) reported trainees receiving two 15-min coaching sessions that used a video recording of trainee practice to deliver corrective and positive feedback relating to the target skill. Myers et al. (2011) reported 10-min weekly coaching meetings in which graphed trainee performance data were reviewed and feedback delivered to trainees, while Rispoli et al. (2021) provided weekly 20-min sessions. In addition, Sinclair (2019) coached trainees at Tier 2 using real-time remote bug-in-ear coaching (i.e., the delivery of immediate performance feedback to a trainee within their work setting using bug-in-ear communication devices). The use of remote coaching at Tier 2 was specifically identified, with trainees coached using teleconsultation platforms such as Zoom or GoReact (Johnson, 2021), as well as by email (Ennis et al., 2020; Freeman et al., 2018).

Performance Feedback

Performance feedback (n = 7) was also a commonly used Tier 2 support for trainees. Performance feedback was frequently used in combination with other training practices such as coaching, self-monitoring, and prompting (e.g., Gage, MacSuga-Gage, et al., 2017; Grasley-Boy et al., 2023; LaBrot et al., 2022). Digital performance feedback was used in six studies (Freeman et al., 2018; Gage, MacSuga-Gage, et al., 2017; Grasley-Boy et al., 2023; Johnson, 2021; LaBrot et al., 2020, 2021), with this feedback provided by email (Freeman et al., 2018; Gage, MacSuga-Gage, et al., 2017; Johnson, 2021), text message (LaBrot et al., 2020, 2021), both email and text message (Grasley-Boy et al., 2023), and/or in GoReact (Johnson, 2021). Myers et al. (2011) provided written feedback.

Other Supports

Self-monitoring or prompting procedures were used in nine studies at Tier 2. Self-monitoring supports included the use of golf counters and recording of the rate of delivery of the target skill (Freeman et al., 2018; Gage, MacSuga-Gage, et al., 2017; Simonsen et al., 2014), as well as trainees watching recordings of their practice in context and assessing their own fidelity against a performance checklist (Owens et al., 2020; Thompson et al., 2012). Reed (2014) and Artman-Meeker et al. (2022) provided staff with a cue sheet and self-reflection form to allow trainees to monitor their performance.

Prompts for trainees included a tactile prompting aid (e.g., a MotivAider®; Lown, 2020), written prompts (Reed, 2014), and antecedent prompts sent weekly via email (Simonsen et al., 2014) or delivered daily in person (LaBrot et al., 2022). Goal setting was identified as a specific intervention in one study (LaBrot et al., 2022). Finally, video models of the target skills were provided to trainees in three studies to supplement Tier 2 support (LaBrot et al., 2020, 2021; Sinclair, 2019). It is important to note that while Reed (2014) and Simonsen et al. (2014) planned Tier 2 interventions, none were required.

Tier 3

Of the 20 studies included in the current review, 11 studies implemented Tier 3 training support for trainees (Table 2). Six studies did not include a third tier of support for trainees, while two studies included Tier 3 supports that were not required (Johnson, 2021; Reed, 2014). Grasley-Boy et al. (2023) did not describe Tier 3 supports but indicated that they would have been provided if necessary. In total, 21 participants across all studies received Tier 3 training, representing 21% of all participants. Of the 11 studies that delivered Tier 3 training supports, seven explicitly described maintaining existing Tier 2 trainee interventions while additional supports were added (e.g., Freeman et al., 2018; LaBrot et al., 2021). For example, in their studies, Ennis et al. (2020) and LaBrot et al. (2021) maintained email coaching and digital performance feedback respectively, while tactile prompts were added. LaBrot et al. (2022) added visual performance feedback to participants’ goal-setting and their delivery of daily prompts.

Coaching remained the most common Tier 3 intervention. LaBrot et al. (2021) and Thompson et al. (2012) included coaching at Tier 3, while Sanetti and Collier-Meek (2015) provided modeling and coaching within the trainees’ classrooms. Artman-Meeker et al. (2022) moved participants from group coaching to individual coaching supports in which their practice was observed and performance feedback was delivered. Similarly, Owens et al. (2020) provided bug-in-ear coaching for staff to allow for real-time feedback in context. In addition, Myers et al. (2011) increased the frequency of coaching sessions from weekly to following each session, increasing the frequency of written and verbal performance feedback to the same schedule.

Coaching supports at Tier 3 were repeatedly supplemented with tactile prompting devices (e.g., Ennis et al., 2020; LaBrot et al., 2020, 2021; Lown, 2020; Thompson et al., 2012). When provided with a choice of Tier 3 supports, participants in LaBrot et al.’s (2020) study chose tactile prompting over bug-in-ear coaching. Ennis et al. (2020) supplemented their coaching support with a trainee self-monitoring procedure using a task-analyzed performance checklist.

Decision-Making Processes for Increased Supports

Nineteen of the included studies described an a priori decision-making process to determine the provision of additional support to trainees (Table 2). Freeman et al. (2018) presented a study that provided increased and individualized support based on teacher response to training. However, they did not articulate the a priori criterion by which this decision was made. While all studies focused on a specific percentage of implementation fidelity or expected rate of target skill use, the performance criteria differed across studies. For example, while Brock et al. (2021) and Owens et al. (2020) both assessed implementation fidelity by measuring the percentage of required steps completed correctly, increased supports were provided by Brock et al. if the trainee did not reach at least 85% implementation fidelity, whereas Owens et al. provided Tier 2 supports if the trainee implemented less than 50% of the steps correctly. In addition, variability was observed in the number of observations required to demonstrate performance below the predetermined criterion. For example, LaBrot et al. (2020) required trainees to perform below the criterion for five consecutive observations, while Sanetti and Collier-Meek (2015) required two days of trainee performance at the criterion within a week. Thompson et al. (2012) made decisions about increasing support by assessing changes in performance relative to baseline. In their study, Artman-Meeker et al. (2022) made decisions for increased support provision based on the fidelity of implementation of key Pyramid Model practices, as well as the presence of “red flag” practices that are inconsistent with the implementation of the Pyramid Model, and/or high levels of challenging or disruptive behavior. Multiple studies included a decision-making process for maintaining or fading existing supports, or for moving trainees into a maintenance phase (e.g., Johnson, 2021; Lown, 2020; Rispoli et al., 2021).
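The a priori decision rules described above share a common shape: a performance criterion and a number of observations that must fall below it. The following is a minimal Python sketch of such a rule, offered as an illustration only and not as a procedure taken from any included study. The function names, the sample fidelity scores, and the three-observation window are hypothetical; only the 85% and 50% criteria echo the thresholds reported for Brock et al. (2021) and Owens et al. (2020).

```python
from typing import Sequence


def needs_more_support(scores: Sequence[float], criterion: float, consecutive: int) -> bool:
    """Return True once `consecutive` observations in a row fall below `criterion`.

    Hypothetical rule: the included studies differed in both the criterion
    and the number of observations they required.
    """
    run = 0  # length of the current streak of below-criterion observations
    for score in scores:
        run = run + 1 if score < criterion else 0
        if run >= consecutive:
            return True
    return False


def next_tier(current_tier: int, scores: Sequence[float], criterion: float,
              consecutive: int, max_tier: int = 3) -> int:
    """Move the trainee up one tier (capped at `max_tier`) if the rule is met."""
    if needs_more_support(scores, criterion, consecutive):
        return min(current_tier + 1, max_tier)
    return current_tier


# Hypothetical fidelity percentages across five observations.
fidelity = [90, 80, 70, 60, 88]

# Same data, two different criteria (assumed window of three observations).
tier_with_85 = next_tier(1, fidelity, criterion=85, consecutive=3)
tier_with_50 = next_tier(1, fidelity, criterion=50, consecutive=3)
```

Applied to the same hypothetical data, the stricter 85% criterion moves the trainee to Tier 2 while the 50% criterion leaves the trainee at Tier 1, which illustrates how the choice of criterion alone can change who receives additional support.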

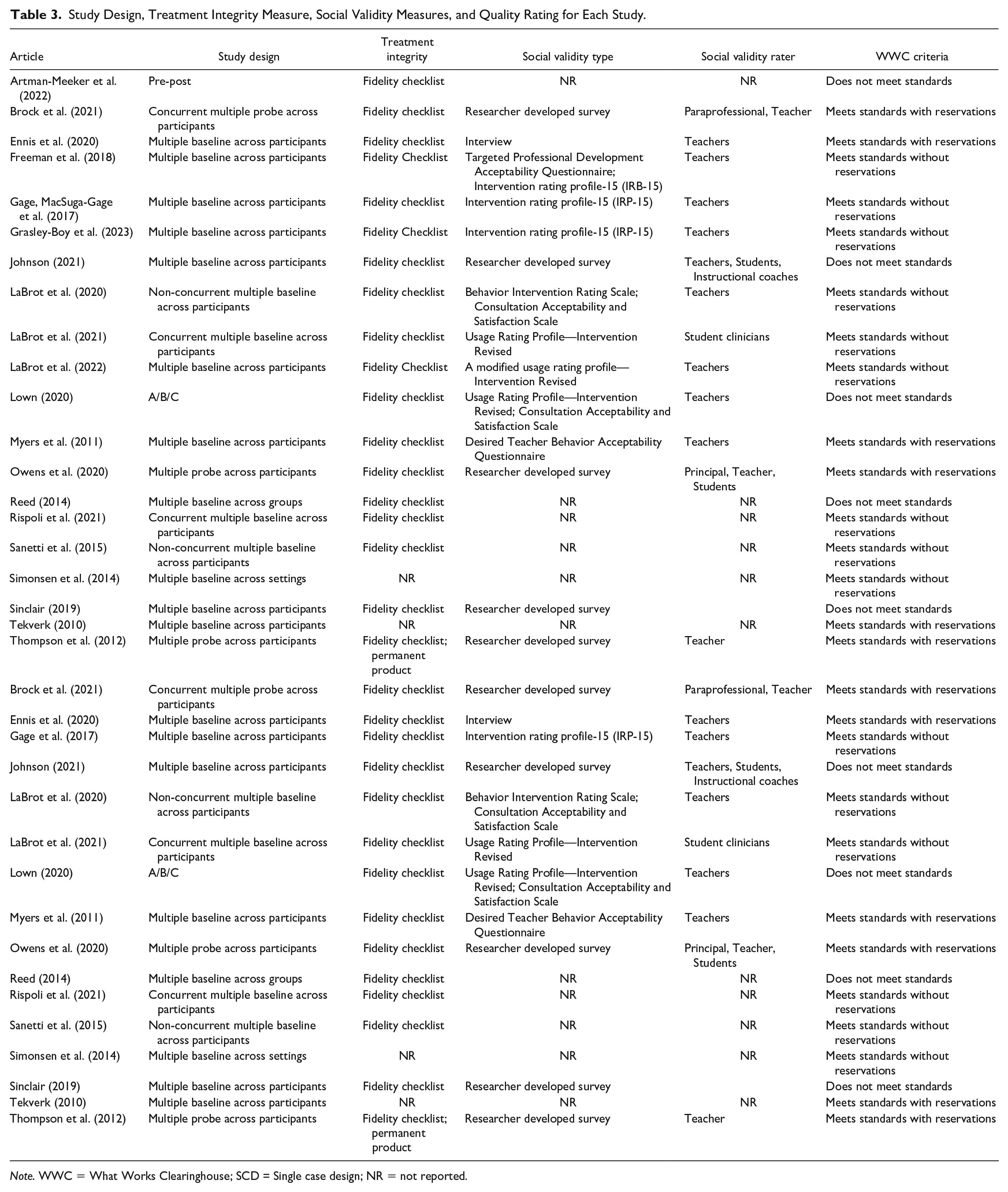

Study Quality

Study variables, including study design, measurement of treatment integrity, social validity assessment type and respondents, and whether the What Works Clearinghouse (2020) standards were met, are reported in Table 3.

Table 3. Study Design, Treatment Integrity Measure, Social Validity Measures, and Quality Rating for Each Study.

Note. WWC = What Works Clearinghouse; SCD = Single case design; NR = not reported.

Research Design

Nineteen of the 20 included studies used a single-case research design, with all but Lown (2020) using a variation of a multiple baseline or multiple probe design (see Table 3); Lown (2020) proposed a multiple baseline design, but the study resulted in an ABC design due to researcher error. Three studies used a multiple probe design, whereas 15 studies used a multiple baseline design. Of those designs, most were across participants (n = 16), with Simonsen et al. (2014) using a multiple baseline design across settings and Reed (2014) using a multiple baseline across groups design. Artman-Meeker et al. (2022) measured the impact of their tiered training framework using statistical analyses of teachers’ TPOT scores taken at three time points (i.e., pre-test, midpoint, and post-test), as well as results of a teacher survey that measured preferences for professional development and self-efficacy in discipline, amongst other variables.

Treatment Integrity

All but two studies reported the measure of treatment integrity of the training intervention using a fidelity checklist developed by the researchers. Simonsen et al. (2014) and Tekverk (2010) did not report any measure of treatment integrity within their studies. Thompson et al. (2012) also reported the use of permanent product data collection in the form of video recordings and email records as a measure of treatment integrity.

Social Validity

Most studies measured some form of social validity. In many cases, the study used a researcher-developed intervention acceptability questionnaire (n = 5). Alternatively, studies used the Intervention Rating Profile-15 (IRP-15; Freeman et al., 2018; Gage, MacSuga-Gage, et al., 2017; Grasley-Boy et al., 2023), Usage Rating Profile-Intervention Revised (URP-IR; LaBrot et al., 2021, 2022 [modified]; Lown, 2020), Behavior Intervention Rating Scale (BIRS; LaBrot et al., 2020), Targeted Professional Development Acceptability Questionnaire (Freeman et al., 2018), and Consultation Acceptability and Satisfaction Scale (CASS; LaBrot et al., 2020; Lown, 2020). One additional measure used was the Desired Teacher Behavior Acceptability Questionnaire (Myers et al., 2011). When measuring social validity, most studies asked the trainees themselves (n = 13). Additionally, Johnson (2021) and Owens et al. (2020) asked the student learners, as well as the trainees and instructional coaches, about the acceptability of the intervention developed. As Brock et al. (2021) trained teachers to deliver the training to paraprofessional staff, they measured the social validity reported by both the teachers and the paraprofessionals.

What Works Clearinghouse Design Standards

Of the 20 studies included in this systematic review, nine studies met What Works Clearinghouse (WWC) standards without reservations (Freeman et al., 2018; Gage, MacSuga-Gage, et al., 2017; Grasley-Boy et al., 2023; LaBrot et al., 2020, 2021, 2022; Rispoli et al., 2021; Sanetti & Collier-Meek, 2015; Simonsen et al., 2014; see Table 3). Six studies met standards with reservations. Four studies did not meet WWC single-case design standards (Johnson, 2021; Lown, 2020; Reed, 2014; Sinclair, 2019); all four of these studies were dissertations. They did not meet standards due to design constraints such as insufficient participants (e.g., Johnson, 2021; Reed, 2014; Sinclair, 2019) or research design errors that resulted in insufficient points of experimental manipulation (e.g., Lown, 2020). One study did not meet WWC design standards as it did not randomize group assignments (Artman-Meeker et al., 2022).

Discussion

MTSS was originally designed to support educators in selecting and adopting evidence-based academic and behavioral supports and interventions to meet the needs of learners of all ages and abilities (McIntosh & Goodman, 2016). This same tiered logic has increasingly been used to support the professional development needs of staff through the delivery of effective pre- and in-service training using formative data to guide the implementation of specific professional development activities that promote improved outcomes for staff (Reddy et al., 2015). Reddy et al. (2016) proposed that this process could be used to improve staff outcomes, focusing on collecting data to enhance staff performance (i.e., formative assessment) rather than evaluate their abilities (summative assessment). The current study evaluated the extant literature within the scope of this framework.

In this systematic review, 20 unique studies met the inclusion criteria for a multitiered, responsive professional development program. Across these studies, a wide array of professional development activities supported the training of staff in schools, clinics, and a day treatment program across three tiers of support. While most studies did explicitly include evidence-based, comprehensive training components (e.g., BST), variability was observed in the delivery of more specific, intensive, and individualized supports. This resulted in a high degree of overlap across different tiers of supports between studies, with some studies using a complete and individualized BST training model at Tier 1 (e.g., Rispoli et al., 2021), whereas others did not include those individualized components until Tier 3 (e.g., coaching; LaBrot et al., 2021). In addition, the use of more intensive coaching supports varied across studies. For example, Sinclair (2019) delivered bug-in-ear coaching to trainees at Tier 2, whilst Owens et al. (2020) used the same intervention at Tier 3. Importantly, even with the variability of duration, modality (e.g., face-to-face or remote), and Tier of use, a consistent increase in the frequency, duration, or intensity of coaching interventions was noted across studies at Tiers 2 and 3. In addition, there were examples in which the individualized supports were increasingly contextualized to the specific workspaces of the trainees (e.g., Sanetti & Collier-Meek, 2015). For example, Sanetti and Collier-Meek (2015) assessed an individual trainee’s barriers to implementation within their context and subsequently developed strategies to directly address these barriers, illustrating how a responsive tiered model of IPD might directly address implementation challenges related to time and cost. Conceptually, this aligns with the tiered logic of MTSS frameworks for students and ensures that supports are provided to match the level of individual needs (McIntosh & Goodman, 2016). In doing this, responsive tiered training models may allow for efficient allocation of the costliest resources (e.g., coaching) while minimizing the time commitment for busy teachers (R. A. Fox et al., 2021).

There was also notable variability in the use of prompting (including tactile), self-monitoring, and the delivery of performance feedback (in person and remotely) across all three tiers of responsive training. For example, Lown (2020) utilized a tactile prompt at Tier 2, while other studies implemented tactile prompts at Tier 3 (e.g., Ennis et al., 2020). The studies that delivered these additional supports at Tier 3 all provided some form of performance feedback and/or self-monitoring interventions at Tier 2, while Lown incorporated performance feedback at Tier 3. Taken together, this may indicate that tiered responsive training models incorporated both antecedent (e.g., tactile prompting) and consequence-based (e.g., performance feedback delivered at the end of the week) interventions to support staff. While an assessment of the logic or effectiveness of decisions to select specific antecedent or consequence-based supports was beyond the scope of this current research, it may represent a meaningful area for future research.

The decision-making procedures trainers use to assess when an increased level of support is required for trainees, as well as what interventions and supports are best matched to trainee needs, are critical components of responsive tiered training programs. As acknowledged in the student-focused MTSS literature, there can be a variety of choices of interventions that may be relevant at various tiers of instruction (e.g., Jimerson et al., 2016); it is not a one-size-fits-all approach. This is paralleled in the tiered responsive training literature, where components such as workshops (e.g., Ennis et al., 2020), individual training (e.g., Rispoli et al., 2021), self-monitoring (e.g., Gage, MacSuga-Gage, et al., 2017), tactile prompting (e.g., LaBrot et al., 2021), and graphic feedback (e.g., LaBrot et al., 2020) were used at different Tiers across different studies. In addition, trainees were offered choices in which support they would like to receive as part of their increased training intervention (e.g., bug-in-ear coaching or tactile prompting; LaBrot et al., 2020). By offering choices about the modality (or topography) of implementation supports provided, responsive tiered models of IPD may be uniquely suited to address barriers related to teacher motivation by ensuring that the supports provided align with trainees’ preferences, goals, and needs. However, future research is needed to explore when to provide choices of implementation supports to trainees (e.g., at the outset of training or only after more universal supports have been provided), whether providing choices leads to better outcomes for trainees (e.g., increased implementation fidelity), and whether providing choices can reduce the time and cost of training in the longer term (e.g., when compared to IPD that does not include choice opportunities). While the effectiveness and logic (i.e., whether antecedent, consequence, or combined strategies are selected) of specific supports may be an empirical question, qualitative methods of inquiry are also recommended to garner richer data sets exploring teacher preferences and the social validity of interventions to accompany data on cost and time effectiveness.

In the included studies, the decisions about when additional supports were required were predominantly focused on the trainee’s performance. For example, participants received additional support when their performance dropped below a specific rate (e.g., Myers et al., 2011) or fidelity percentage (e.g., Owens et al., 2020) over multiple observations or days. Nineteen of the 20 included studies described an a priori determination of the performance criteria at each Tier. As noted by Simonsen et al. (2019), data-based decision-making about professional support is required to ensure that evidence-based practices are implemented effectively in ways that are acceptable to trainees and learners. Ensuring that additional supports are matched to the skill and provided in a timely fashion (i.e., not leaving trainees implementing ineffectively for extended periods) may be critical to ensure effective and acceptable implementation of evidence-based practices using tiered training frameworks. It will remain an empirical question as to when increased support should be provided.

Given that this model of professional development can be used flexibly across settings and skills, it becomes important to consider how to measure success for each skill. For example, opportunities to respond (OTR) and BSP lend themselves to measures of the rate or frequency of use (e.g., LaBrot et al., 2020, 2021). In contrast, other more specific skills for a unique setting may require a measure of the percentage of opportunities implemented with accuracy using a fidelity checklist (e.g., Owens et al., 2020; Rispoli et al., 2021). The learner’s behavior of interest may vary as well, yet similar considerations are worth noting. These studies all measured an observable student behavior that may be susceptible to change over time, such as the rate of disruptive behavior or the percentage of time academically engaged. When conducting further evaluation of a responsive training model, individualized change-sensitive metrics are effective for student behavior change; however, they must relate to the expected behavior change in the corresponding trainee.
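The two kinds of trainee measures contrasted above reduce to simple calculations. As a hedged illustration only (the function names and sample values are hypothetical and not drawn from any included study), a rate can be computed as occurrences per minute of observation, and checklist fidelity as the percentage of steps implemented correctly:

```python
from typing import Sequence


def rate_per_minute(count: int, minutes: float) -> float:
    """Occurrences of the target skill per minute of observation."""
    if minutes <= 0:
        raise ValueError("observation length must be positive")
    return count / minutes


def percent_fidelity(steps_correct: Sequence[bool]) -> float:
    """Percentage of checklist steps implemented correctly."""
    if not steps_correct:
        raise ValueError("checklist must contain at least one step")
    return 100.0 * sum(steps_correct) / len(steps_correct)


# Hypothetical example: 12 praise statements in a 15-minute observation.
praise_rate = rate_per_minute(12, 15)

# Hypothetical example: three of four checklist steps completed correctly.
fidelity = percent_fidelity([True, True, False, True])
```

Either value could then be compared against whatever performance criterion a given training model specifies in advance.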

In this review, we used the language of trainer, trainee, and learner to refer to the person conducting the training, the person receiving the training, and the person with whom the trainee works, respectively. This terminology was chosen to encompass the different settings in which a responsive training model can be implemented. This framework can be applied to any setting where staff are in need of professional development; however, most of the included studies were conducted in Grades PK–12 school settings. Thus, the trainee and learner were most often a teacher or paraprofessional, and student, respectively. Further study is needed in other environments such as clinic training programs (e.g., LaBrot et al., 2021), hospitals and day treatment programs (e.g., Tekverk, 2010), or other alternative settings for learning (e.g., afterschool programs, clinics, and juvenile justice centers). Staff across all these contexts participate in and would benefit from further training to support the learners with whom they work. Additional evaluation in these contexts would better support the effectiveness of training modalities for settings outside of the school environment.

Each of the included studies focused on developing or expanding trainees’ repertoires of evidence-based practice implementation, as well as the interventions that supported these changes. Our systematic review was designed to include any evidence-based target skill that is relevant to the trainee’s role; however, the studies identified for inclusion primarily investigated the implementation of practices that might be considered behavioral in nature (e.g., BSP). As Noell and Gansle (2009) noted, trainee behavior will be strongly influenced by the environments within which they work (i.e., the contingencies of reinforcement or punishment for implementation within their context), as well as their existing evidence-based practice repertoires. By focusing on the development of repertoires and the interventions that support the effective development of trainees’ skills, there is the potential to underemphasize the impact of the implementation environment. Importantly, Sanetti and Collier-Meek (2015) included assessments of potential implementation barriers and the development of plans to overcome these barriers as part of their coaching model. Future assessments of tiered training models may also consider the impact of variables that have been associated with the successful and sustained implementation of PBIS (e.g., the impact of leadership, teaming, and staff buy-in; see McIntosh et al., 2014). However, given the potential impact of the implementation environment on the successful implementation of evidence-based practices within schools, school leaders might seek to include potentially more cost- and time-effective activities, such as personalized goal setting, peer-to-peer coaching and feedback, and regular team meetings to discuss implementation successes and challenges, in whole-of-school IPD programs.

While we reviewed the various training components included, we did not plan any component analysis of the training components. Thus, this systematic review cannot be used to discuss the differential effectiveness of training components. Future research should examine the effective and relevant components of this training model. We suggest that this be undertaken with a clear description of contextual needs and variables to assess the impact of components of tiered training within specific learning or work environments.

Limitations

With such flexibility in the decision-making model and the various settings in which it has been applied, some relevant studies may not have been found in this search. While we intended to use a broad search to capture all relevant studies, including dissertations and theses as recommended by Gage, Cook, et al. (2017), some may still have been missed. Further, many of the studies are recent publications that were not captured in the initial database searches; they may not have been indexed within the included databases. We conducted a forward and backward ancestral search to try to capture as many of these as possible; however, due to various labels for the training programs, some may not have been included. Future researchers should consider the terms with which they label their training models so that their work can be easily linked to previous studies in the field. Terms such as MTS (Simonsen et al., 2014) and multi-tiered implementation supports (Sanetti & Collier-Meek, 2015), along with descriptions such as multitiered systems of support for teachers (Ennis et al., 2020), have been used. We propose the title responsive tiered training to differentiate these training models from multitiered systems of support for students, establishing an independent title that reflects a conceptually systematic, tiered approach to professional learning.

When extracting the procedures of the included studies, we found that each study labeled its procedures in different ways. Given the various labels used to define the specific components of training and decision-making, and gaps in the reporting of training details, some studies may have included more than is reported in this review. This conservative approach to data extraction may underrepresent what was done. In the future, we encourage researchers to describe their procedures using consistent terms. By unifying the terms used to describe the training and decision-making procedures, the consistency and clarity of the procedures conducted can be increased. This may enhance efforts to conduct direct and systematic replication of studies.

In the evaluation of the design quality, we used the WWC (2020) design standards for single-case design. WWC design standards do not align well with responsive tiered training with multiple phases. As most included studies used a multiple baseline design, WWC design standards evaluate three or more replications with consecutive phases demonstrating sufficient measurement. In a responsive tiered training model, trainees might be participating in additional, sequential training components as they progress through added tiers of intervention. That may result in additional phases of intervention (e.g., ABCD design) to represent baseline, Tier 1, Tier 2, and Tier 3 intervention. Thus, in our evaluation of the WWC design standards, all assessment was based on the initial baseline and first intervention phase presented due to the overlapping baseline and systematic introduction of subsequent intervention phases.

Conclusion

Twenty studies were included in this systematic review of the emerging research literature examining the impact of tiered responsive training models on trainee and learner performance. While each model was unique, consistent features were identified across studies. Didactic training that included some or all components of BST was consistently used at Tier 1. In some studies, these didactic supports were supplemented with performance feedback, prompts, or self-monitoring interventions. As with other tiered frameworks, the supports provided to staff at Tiers 2 and 3 increased in frequency and were increasingly individualized for trainees. This was achieved by coaching, delivery of performance feedback, and the addition of self-monitoring interventions. The form and shape of specific interventions at each Tier differed across studies, reflecting the need for such tiered responsive training programs to be tailored to both the target training behaviors and the specific settings in which training and supports are to be delivered. Overall, the importance of data to inform decision-making is clear. If educators are to receive training that is truly responsive to the needs of their learners, and their own needs, and that matches the challenges and constraints of their work, then decision-making that is informed by direct behavioral data will be critical.

Supplemental Material

Supplemental material (sj-docx-1-pbi-10.1177_10983007231224028, sj-docx-2-pbi-10.1177_10983007231224028, and sj-docx-3-pbi-10.1177_10983007231224028) for Multi-Tiered Systems of Educator Professional Development: A Systematic Literature Review of Responsive, Tiered Professional Development Models by Bradley S. Bloomfield, Russell A. Fox and Erin S. Leif in Journal of Positive Behavior Interventions

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

References
