Abstract

The purpose of this study was to evaluate the effectiveness of training plus Practice-Based Coaching (PBC), delivered via text message, on teacher use of targeted Pyramid Model (PM) practices. A multiple baseline design across behaviors was replicated across three early childhood teachers. Following training on self-selected target practices, the coach watched observations recorded by the teacher and engaged the teacher in a back-and-forth coaching conversation via text message. Coaching sessions included supportive and constructive feedback from the coach as well as prompts for teachers to engage in reflection about their use of the target practice. Training plus PBC, delivered via text message, was both effective and efficient for increasing teacher use of targeted PM practices. Results were maintained up to 3 weeks after the withdrawal of coaching across all targeted practices, and there was some evidence of generalization.

Keywords

Professional development (PD) is used to increase teacher implementation of evidence-based practices (Gregson & Sturko, 2007). In early childhood education, PD has been associated with improvements in classroom quality and child outcomes (Egert et al., 2018; Schachter et al., 2019). While didactic PD (e.g., one-time workshop) alone is not sufficient for increasing sustained use of practices, PD that links knowledge with practice and includes strategies such as modeling and feedback is more likely to result in practice change (Zaslow et al., 2010). Effective PD should provide teachers with content knowledge, opportunities to practice in relevant contexts (e.g., classroom), and individualized feedback and self-reflection, and it should include goals addressing teacher-identified needs and practices to support positive child outcomes (Schachter et al., 2019).

Coaching has been shown to increase teacher use of teaching practices and promote both generalization and maintenance (e.g., Barton et al., 2013). One evidence-based model is Practice-Based Coaching (PBC; Snyder et al., 2015), a cyclical framework implemented in the context of a collaborative partnership and designed to increase the use of effective teaching practices. Key components of PBC are: (a) shared goals and action planning, (b) focused observation, and (c) reflection and feedback. In studies examining the effectiveness of PBC (e.g., Conroy et al., 2019; Hemmeter et al., 2016), coaching has been time and resource intensive, with live observations and in-person coaching sessions for up to 16 weeks. Resources needed to implement PBC as examined previously might be prohibitive for early childhood programs. There is a need to investigate approaches to coaching that are still impactful but require lower rates of coaching dosage. In the context of PBC, distance coaching could minimize disruptions, limit scheduling challenges, and increase availability in a wider geographical area (Schachter et al., 2019).

Three studies (Artman-Meeker et al., 2014; Conroy et al., 2022; McLeod et al., 2019) have examined PBC implemented remotely. Artman-Meeker et al. (2014) found non-significant differences between intervention and control groups when evaluating PBC implemented via email. Coaching focused on increasing teacher use of Pyramid Model (PM) practices (e.g., providing transition warnings) as measured using the Teaching Pyramid Observation Tool (TPOT; Hemmeter et al., 2014). McLeod and colleagues (2019) implemented the observation, reflection, and feedback components of PBC remotely with preservice teachers to increase the use of recommended practices (e.g., praise and choice), using a single case design. A functional relation was present for both participants, with some evidence of generalization and maintenance. Conroy et al. (2022) found significant effects on classroom quality in an underpowered investigation of the effectiveness of delivering PBC remotely to increase teacher use of BEST in CLASS practices using asynchronous training modules, a web-based application to embed feedback into observation videos, and reflection and feedback meetings over Zoom. Although all parts of the intervention were conducted remotely, teachers and coaches participated in live (via Zoom) debrief meetings in which they engaged in reflective conversations. The amount of time teachers and coaches spent in coaching therefore did not decrease, a key difference from other studies in which PBC has been delivered remotely. Mixed results across studies provide evidence that delivering PBC remotely could be effective, but additional research is needed.

Performance-based feedback has been used effectively in a distance format to increase practice use outside of the structure of PBC. Feedback has been sent via email to preservice and in-service teachers and teaching teams, while the observation component has most commonly been conducted in person (Barton et al., 2016, 2020). Across studies, email feedback included a request for teachers to respond as a measure of contact with the intervention. Responsiveness was variable (range 57%–100%). For most participants, the intervention resulted in practice change, and results generalized across settings and maintained after coaching was removed. In one study (Krick Oborn et al., 2015), distance coaching was used for both observation (i.e., video recordings) and feedback (i.e., email) to increase home visitor use of coaching strategies (e.g., problem-solving, demonstration). There was some evidence of increased use, but no participants reached the criterion (use of 70% of coaching strategies in a session). Email feedback included a request for a response but home visitors only responded to 33% to 66% of emails, indicating limited contact with the intervention, and practice use was maintained for only 33% of participants.

Given the lack of teacher response to email feedback (Barton et al., 2020; Krick Oborn et al., 2015), increasing responsivity and engagement is an important next step in this research. Only one study (McLeod et al., 2019) asked participants to respond with a reflection on their use of the target practice. Other studies asked teachers only to confirm the time for the next session with a simple yes or no (Barton et al., 2016, 2020). Engaging teachers in reflection requires the teacher to engage with coaching content. In addition to being a proxy for engagement, prompting teachers to reflect on their teaching practices can increase their ability to identify and think critically about their use of quality teaching (Schachter et al., 2019).

Text messaging, an easily accessible technology designed for short conversational exchanges, may support increased responsiveness. With 97% of Americans texting at least weekly, it is a well-known technology (Stroo & Shaw, 2018). Compared with emails, text messages are opened more often (98% versus 25%) and answered more quickly (average of 90 s versus 90 min; Stroo & Shaw, 2018), indicating text messaging may be an easier and more efficient way for teachers to engage with coaching. Text messaging has been used in the parent training literature, and parents who had higher levels of engagement tended to have more positive outcomes (Bigelow et al., 2008). We identified only one study (Barton et al., 2019) evaluating the effectiveness of text messaging in delivering feedback to early childhood teachers. A functional relation was identified for three of four participants with variable, but generally lower practice use during generalization and maintenance sessions. Researchers asked for confirmation of receipt of texts, but teacher engagement (i.e., responsivity to text messages) data were not reported. Because low engagement with the intervention has been identified as a potential barrier to improving practices (Barton et al., 2016, 2020; Krick Oborn et al., 2015), and text messaging has successfully been used to increase engagement in parent training studies (Bigelow et al., 2008), research on teacher coaching via text message is justified.

The purpose of this study was to evaluate the effectiveness of a coaching package, training plus PBC, implemented via text message (Training + Text-PBC) on teacher use of targeted PM practices. Implementation of the PM has been successfully supported with PBC and associated with improved classroom quality and positive child outcomes. Professional development, typically training plus PBC, has been effective in increasing teachers’ fidelity to the implementation of PM practices (e.g., Hemmeter et al., 2016; Hemmeter, Fox, et al., 2021). We addressed limitations of previous research by (a) evaluating the use of text messaging with teachers (Barton et al., 2016); (b) incorporating goal setting (Barton et al., 2020); (c) establishing expectations that participants respond by embedding reflective prompts (Barton et al., 2020; Krick Oborn et al., 2015); and (d) measuring fidelity of training sessions as well as the text messaging protocol during the baseline and intervention conditions (McLeod et al., 2019). Both the text messaging format (conversational form of remote communication) and PBC (embedded opportunities for teacher engagement within the coaching process) help address these limitations. This study addressed the following research questions:

Method

Participants, Implementers, and Settings

After obtaining approval from an institutional review board, three teachers were recruited by soliciting nominations from administrators of early childhood programs. Teachers were eligible to participate if they: (a) taught full-time in a preschool classroom, (b) provided in-person instruction, (c) had access to a reliable wireless internet connection in their classroom and to a device with a text messaging app, and (d) identified at least four discrete PM (Hemmeter, Ostrosky, & Fox, 2021) practices as targets. Once consented, teachers completed three surveys: (a) teacher demographics, (b) classroom demographics, and (c) technology (see the online Supplemental Materials). All teachers reported being comfortable to very comfortable with Zoom and texting. They reported texting was the most frequent electronic method of communicating with coworkers.

Jessa was a White 43-year-old teacher with 6 years of experience, a bachelor’s degree in education, and teacher licensure in early childhood and special education. She worked at the same Head Start program in a large southern state as Elizabeth, a White 47-year-old teacher with 11 years of experience, a bachelor’s degree in education, and teacher licensure for students in pre-kindergarten through fourth grade. Stephanie was a White, Latina 48-year-old teacher with 8 years of experience, a bachelor’s degree in sociology, and teacher licensure for children ages birth through kindergarten who worked at a university-based lab school in a mid-sized southeastern state. Jessa, Elizabeth, and Stephanie had 19, 13, and 11 children in their classes, respectively; 7, 1, and 0 children who received special education services outlined in Individualized Education Programs, respectively; and 0, 2, and 2 children who were dual language learners, respectively. Due to the global COVID-19 pandemic, two teachers reported the implementation of health and safety measures beyond what was typical in their classroom; Jessa and Stephanie reported that adults in the classroom wore masks throughout the day and Stephanie reported that enrollment was limited. All three teachers reported having no prior experience with coaching.

The first author conducted all training sessions and coaching activities and served as the primary data collector. She was a doctoral student in early childhood special education (ECSE) with a master’s degree in ECSE, teacher licensure, and behavior analysis certification. Three master’s students collected interobserver agreement (IOA) and procedural fidelity (PF) data.

Materials

Materials typically found in classrooms were present. Teachers were given an iPad, iPad stand, and Bluetooth microphone to facilitate remote data collection. Following initial training, all teachers were able to use the provided technology without barriers (e.g., issues related to Wi-Fi connections). Observations were recorded daily using Zoom (Yuan, 2012), and data collection occurred using those recordings. Training sessions were conducted and recorded using Zoom. Text messages between the coach and teachers were de-identified and saved to a secure server and coded for fidelity. Researcher-created spreadsheets were used to collect dependent variable and procedural fidelity data. The coaching component of the intervention, Text-PBC, was delivered directly to the teacher’s cell phone via the phone’s messaging application.

Response Definitions, Data Collection, and Experimental Design

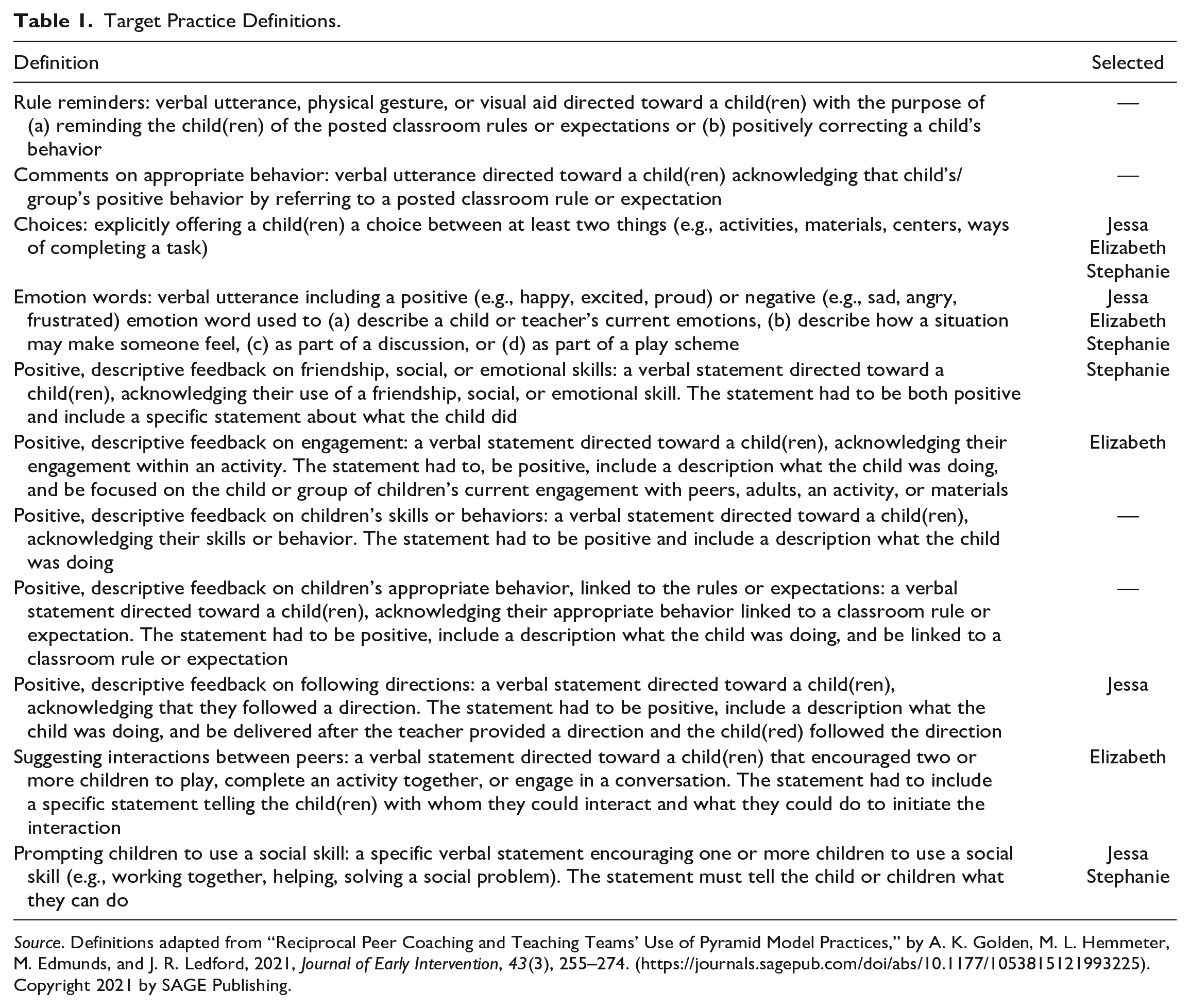

During the initial PM overview training, teachers used a modified version of the PM Practices Implementation Checklist to choose four practices to target with coaching (see online Supplemental Materials). See Table 1 for response definitions and information about which practices were chosen by teachers. While watching recorded sessions, the primary data collector marked each occurrence of targeted practices using timed event recording and a spreadsheet. Data on each practice were collected and graphed daily and were used to make phase change decisions. A concurrent multiple baseline design (MB; Gast et al., 2018) across behaviors, replicated across participants, was used to evaluate the effectiveness of Training + Text-PBC on teacher implementation of PM practices. To describe the magnitude of condition changes, we calculated a parametric effect size, the log response ratio (LRRi) using the SingleCaseES package (Pustejovsky et al., 2023) in the R statistical environment (R Core Team, 2018). This effect size is based on relative differences between baseline and intervention conditions and is less subject to variability due to procedural variation than other effect sizes (Pustejovsky, 2019).
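The log response ratio described above can be illustrated with a minimal sketch. It computes only the basic form, the natural log of the ratio of the intervention-phase mean to the baseline-phase mean, and is not the SingleCaseES implementation, which may apply a small-sample bias correction that is omitted here. The function name and the session counts are hypothetical and are not study data.

```python
import math
from statistics import mean


def log_response_ratio(baseline, intervention):
    """Basic log response ratio for an expected increase: ln(mean B / mean A).

    Simplified illustration only; no bias correction or standard error.
    """
    mean_a = mean(baseline)
    mean_b = mean(intervention)
    if mean_a <= 0 or mean_b <= 0:
        raise ValueError("phase means must be positive to take the log ratio")
    return math.log(mean_b / mean_a)


# Hypothetical counts of practice use per 15-min session (not study data).
baseline_counts = [1, 0, 2, 1]        # mean = 1.0
intervention_counts = [6, 8, 7, 9]    # mean = 7.5
lrr = log_response_ratio(baseline_counts, intervention_counts)
```

Because the measure is a ratio of phase means, it is undefined when a phase mean is zero, which is why the sketch raises an error in that case rather than returning a value.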

Table 1. Target Practice Definitions.

Source. Definitions adapted from “Reciprocal Peer Coaching and Teaching Teams’ Use of Pyramid Model Practices,” by A. K. Golden, M. L. Hemmeter, M. Edmunds, and J. R. Ledford, 2021, Journal of Early Intervention, 43(3), 255–274. (https://journals.sagepub.com/doi/abs/10.1177/1053815121993225). Copyright 2021 by SAGE Publishing.

Information on the dosage of coaching was collected by recording the time the coach spent preparing feedback (i.e., watching the recorded observation, preparing text prompts, and feedback statements) and time spent on the text message exchange, measured by recording the time each text message was sent. The percentage of response and reflection prompts responded to by teachers was calculated as a measure of responsivity.

Procedures

Pyramid Model (PM) Overview Training

Prior to beginning baseline data collection, teachers received a PM overview training. The training, provided to each teacher individually and averaging 72 min, included the use of a slide-based presentation with video and picture examples as well as opportunities for discussion and questions. The purpose of the training was to provide teachers with an overview of the key components of the PM (e.g., establishing classroom routines, having supportive conversations, and teaching social–emotional skills). During the training, the coach reviewed procedures, including how to set up the iPad and microphone and how to log in to Zoom for observations. At the end of the training, teachers reviewed the Implementation Checklist and, with the coach, chose four practices to target with coaching. Teachers also chose the primary activity, during which daily observations and coaching would occur, as well as the secondary (generalization) activity during which observations would occur without coaching. Elizabeth and Stephanie chose center time, and Jessa chose small group, as their primary activities. All three teachers chose large group as the generalization activity. All primary and generalization observations were 15 min in length.

Once activities were chosen, the teacher decided where to place the iPad to best capture the chosen activities. Due to the use of a stationary iPad for recording, the teacher and children were not always in the frame, particularly in Elizabeth and Stephanie’s classrooms where play centers extended further than the camera frame. Any obstructions to the view were consistent across target practices. Finally, the teacher and coach determined a set time each day when the text message coaching exchange would occur (all teachers chose their lunch break), with the goal of completing the entire exchange within a 30 min window. The training ended with the coach confirming the day and time of the first baseline recording.

Baseline

Following the PM overview training, the coach instructed teachers to continue teaching as they had prior to consenting to participate in this study; in other words, business as usual. Each day, during the designated activity, the teacher set up the iPad; logged in to the assigned Zoom meeting; and ensured the microphone was on, connected to the iPad, and attached to her shirt. Each teacher had a unique Zoom link for a recurring meeting set to automatically record.

During small groups, Jessa led an academic activity (e.g., name writing, rhyming game) with four to six children. Other children either worked independently with modeling clay or completed academic games on an iPad. During center time in Stephanie and Elizabeth’s classrooms, children played independently or with peers in centers (e.g., block center, writing center) and could move between centers. The number of children permitted in each center was limited in Elizabeth’s classroom but was not in Stephanie’s. In Jessa’s room, children gathered as a large group on the rug to write or draw on whiteboards between breakfast and movement activities. In Elizabeth’s classroom, large group consisted of attendance, a movement activity, and a shared writing activity. In Stephanie’s classroom, large group included a read-aloud and an additional activity (e.g., song, movement activity, and question of the day) that changed each day. Children and educational assistants were present during all data collection sessions.

The coach reviewed recordings daily. After each session, she sent a text message with (a) a positive greeting (e.g., “Hi, I hope you’ve had a great day”), (b) a reminder about the next observation with a request for response (e.g., “Our next session will be snack time at 9:15 am tomorrow. Please confirm this works for you.”), and (c) a closing statement with an opportunity for the teacher to ask questions (e.g., “Great! Let me know if you have any questions.”).

Intervention

Training

The independent variable in this study was Training + Text-PBC. Following the baseline on the first targeted practice, the coach provided training on the coaching process and the first target practice. Trainings, conducted remotely via Zoom, averaged 34 min in length and included the use of a presentation with seven components: (a) review of study timeline; (b) review of four chosen target practices; (c) definition of the first target practice with examples and non-examples; (d) video examples and non-examples from the teacher’s classroom; (e) creation of an action plan (i.e., goal setting); (f) review of the coaching process; and (g) confirmation of the recording schedule. Teachers were given opportunities to ask questions throughout the training. The action plan developed by the teacher and the coach included (a) the target practice, (b) steps for implementing the practice, and (c) supports (e.g., resources, materials) needed to implement the practice. During training on the first target practice, the coach used mock text message exchanges to introduce text messaging procedures. She reviewed when texts would be delivered and when and how the teacher was expected to respond. This training protocol was repeated prior to the implementation of Text-PBC for each subsequent target practice.

Coaching

The three key PBC components (i.e., action planning, focused observation, and reflection and feedback) were implemented. Action planning occurred during the training. Focused observations occurred daily when the teacher logged in to the assigned Zoom meeting during the chosen activity. Reflection and feedback occurred via text message. During the intervention, the text exchange consisted of the three components present during baseline: (a) positive greeting, (b) reminder about the next observation with a request for response, and (c) closing statement with an opportunity for the teacher to ask questions. Four additional components related to reflection and feedback were added to intervention text messages: (a) general reflection prompt (e.g., “On a scale of 1 to 5, how do you feel about your use of emotion words today?”), (b) supportive feedback statement (e.g., “You used 4 different emotion words, 2 more than yesterday! You labeled your own emotion when you said, ‘I’m so excited to go outside for recess!’ ”), (c) constructive feedback statement (e.g., “When you noticed children were smiling, you could have talked about how they were feeling”), and (d) constructive reflection prompt (e.g., “How do you think you could have used emotion words during that activity?”). Each reflection and feedback component was sent as an individual text message. Quantitative data were shared as supportive feedback statements rather than in a graphical format. See online supplemental Table S1 for definitions, examples, and non-examples of components.

Teachers were expected to respond three times during the exchange. If a teacher did not respond within 20 min to one of the prompts requiring a response by either providing the requested information or indicating she needed more time to respond, the same text was sent again. If the teacher did not respond to the repeated request after 20 min, the coach continued to the next step in the text sequence. Teachers used this procedure a total of six times (9% of all text exchanges). When there were more than 4 days between sessions (e.g., spring break), teachers received a reminder text the morning of the first session after the break including a reminder to record a session that day and the current target practice.
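The re-send rule described above can be sketched as a small decision function. This is an illustrative reading of the procedure, not the study's protocol document: the function name, argument names, and returned labels are hypothetical, and any reply (the requested information or a request for more time) is treated as a response.

```python
RESEND_AFTER_MIN = 20  # wait time stated in the text


def next_action(minutes_since_sent, teacher_replied, already_resent):
    """Decide the coach's next move for one prompt that requires a response.

    Returns one of "continue", "wait", or "resend" (hypothetical labels).
    """
    if teacher_replied:
        return "continue"   # teacher answered or asked for more time
    if minutes_since_sent < RESEND_AFTER_MIN:
        return "wait"       # still inside the 20-min window
    if not already_resent:
        return "resend"     # first timeout: send the same text again
    return "continue"       # second timeout: move to the next step
```

For example, a prompt that has gone unanswered for 20 min is re-sent once; if the repeated prompt also goes unanswered for 20 min, the exchange moves on to the next step in the sequence.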

To prepare for coaching, the coach watched the recording straight through without pausing or re-watching. Notes about the use of the target practices and opportunities for additional use were taken. After watching the video, the coach wrote one supportive feedback statement, one constructive feedback statement, and a constructive reflection prompt, all of which were used in the text exchange. The coach re-watched videos to collect data used to make phase change decisions.

Fading

Visual analysis was used to assess changes in level, trend, and variability of teacher use of targeted practices. A new target practice was introduced when teacher use of the previous practice was stable with an increase in level or trend. When a new target practice was introduced, the previously targeted practice entered a fading phase in which teachers were reminded to continue using the practices, but the focus of the reflection and feedback shifted to the new target practice (i.e., coaching was faded from the previous target practice). When applicable, the coach provided feedback around or prompted the teacher to reflect on how the current target practice could be used together with a previously coached practice.

Maintenance and Generalization

Maintenance data were collected in the primary data collection activity 1, 2, and 3 weeks after the completion of intervention on all four targeted practices. Maintenance data were collected once (i.e., 33%) in the secondary activity. Like baseline, the coach sent a text message with a positive greeting, a reminder about the next session, and a closing statement with an opportunity to ask questions. Teachers were not prompted to reflect, and the coach did not provide feedback on the target practices. After the intervention was complete on all four targeted practices, the coach informed the teacher the coaching portion of the study was complete, but the coach would check in once a week for 3 weeks to observe their use of the target practices.

Generalization data were collected in a secondary activity for a minimum of 33% (range 33–60%) of the number of primary observations. Generalization sessions were recorded using the same procedures used for primary observation sessions. During all conditions (i.e., baseline, intervention, fading, maintenance), a reminder about the generalization session was included with the reminder for the next observation in the regular text message exchange (e.g., “Tomorrow we have two sessions, 9 am during large group and 10:15 am during centers. Please confirm those times still work for you”). No coaching was provided for secondary activities.

Social Validity

Prior to baseline, participants completed a survey about experiences with coaching, comfort with technology, and the use of technology as a source of PD. All three teachers reported being “really comfortable” (M = 4.9, range 4–5) with common technology (e.g., email, text message, recording videos), indicating there was not a need for additional support from the coach around the use of technology. Post-intervention, teachers completed additional questions about the feasibility, effectiveness, and acceptability of Training + Text-PBC (see the online Supplemental Materials). In addition to the teacher report, 24 masked raters (see online supplemental Table S2) rated teacher use of PM practices. Each rater, masked to reduce biased ratings, was randomly assigned to one study participant and watched one randomly selected baseline session and one randomly selected intervention session from the final tier for their assigned participant.

Interobserver Agreement and Procedural Fidelity

Interobserver agreement data were collected for 50% to 66.7% of sessions across participants, target behaviors, and conditions using a 5-s agreement window. Interobserver agreement was calculated using the point-by-point method (agreements divided by agreements plus disagreements, multiplied by 100; Ledford et al., 2018). The first author trained the secondary observer on response definitions and the measurement system and observers reached 90% reliability for each target practice for three practice videos. During data collection, a reliability of 80% or greater was considered acceptable. When IOA fell below 80%, observers met to review definitions and discuss disagreements before resuming data collection. The mean IOA across participants, behaviors, and conditions was 92.13%. See online supplemental Table S3.
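As an illustration of point-by-point agreement with a 5-s window, the sketch below pairs each event time from the primary observer with the closest unpaired event time from the secondary observer that falls within the window. The greedy pairing strategy, the function name, and the timestamps are assumptions made for this example; the study's scoring rules for ties or closely spaced events are not described in the text.

```python
def point_by_point_ioa(primary, secondary, window=5.0):
    """Percentage agreement: agreements / (agreements + disagreements) * 100.

    primary, secondary: event times in seconds from each observer.
    Returns None when neither observer recorded an event (undefined ratio).
    """
    unmatched = sorted(secondary)
    agreements = 0
    for t in sorted(primary):
        # Candidate secondary events inside the agreement window.
        candidates = [s for s in unmatched if abs(s - t) <= window]
        if candidates:
            closest = min(candidates, key=lambda s: abs(s - t))
            unmatched.remove(closest)  # each event can be paired only once
            agreements += 1
    # Disagreements are events left unpaired for either observer.
    disagreements = (len(primary) - agreements) + len(unmatched)
    total = agreements + disagreements
    if total == 0:
        return None
    return 100.0 * agreements / total


# Hypothetical timestamps in seconds (not study data).
primary_times = [12, 40, 95, 130]
secondary_times = [14, 41, 101, 131, 200]
ioa = point_by_point_ioa(primary_times, secondary_times)
```

In this example three events are paired (12/14, 40/41, 130/131), while 95, 101, and 200 remain unpaired, so agreement is 3 of 6 scored events.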

Procedural fidelity data were collected for 100% of training sessions and text exchanges. Procedural fidelity was calculated by dividing the number of correctly implemented steps by total steps and multiplying by 100 (Ledford et al., 2018). IOA on fidelity was collected for 33–66.7% of training sessions and text message exchanges across teachers. To collect IOA on PF, the secondary coder viewed recorded training sessions and screenshots of text exchanges. Prior to collecting data, the primary and secondary PF coders were trained to reliability across all components and reached 90% reliability on three practice sessions. The average PF for trainings was 94.6% and IOA of training PF was 94.9%. PF of text message exchanges averaged 99.1% and IOA of text message PF averaged 98.1%. See online supplemental Table S4 for PF data.
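The fidelity calculation above (correctly implemented steps divided by total steps, multiplied by 100) can be sketched as follows. The checklist entries reuse the seven text-exchange components named earlier in the article, but the checklist structure, the function name, and the scored values are hypothetical; the study's actual fidelity forms are in its supplemental materials.

```python
def procedural_fidelity(steps):
    """Return the percentage of steps implemented correctly.

    steps: mapping of step name -> True if the step was implemented correctly.
    """
    if not steps:
        raise ValueError("at least one step is required")
    correct = sum(1 for implemented in steps.values() if implemented)
    return 100.0 * correct / len(steps)


# Hypothetical scoring of one intervention text exchange (not study data).
exchange = {
    "positive greeting": True,
    "general reflection prompt": True,
    "supportive feedback statement": True,
    "constructive feedback statement": True,
    "constructive reflection prompt": True,
    "reminder about next observation": False,
    "closing statement": True,
}
pf = procedural_fidelity(exchange)  # 6 of 7 steps correct
```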

Results

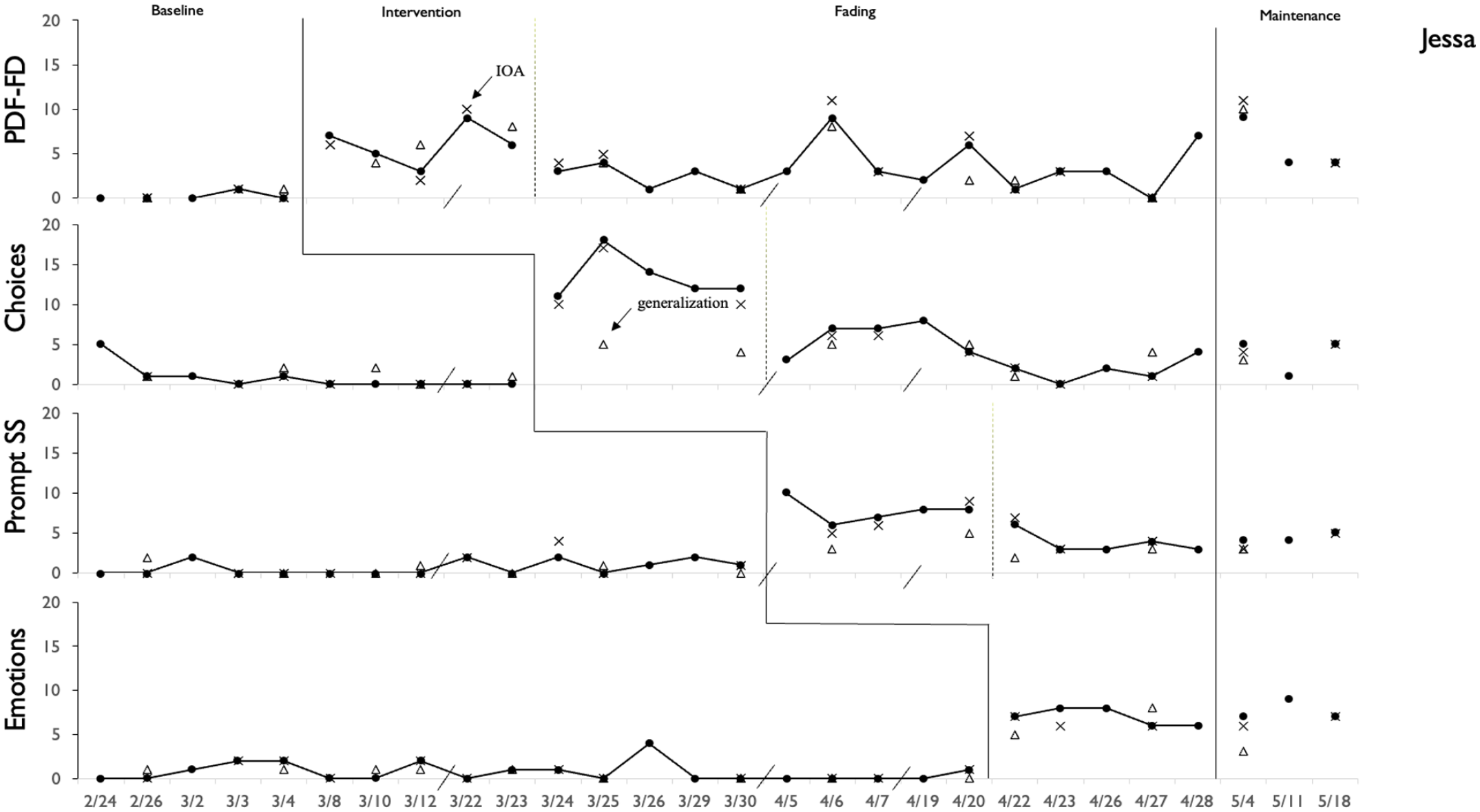

For Jessa, data were low and stable across all PM practices in the baseline condition. With the introduction of the intervention, Training + Text-PBC, there was an immediate shift in level in her use of positive, descriptive feedback about children following directions, with no overlap with baseline data. Once data were stable on the first PM practice, training and coaching were provided on the second target practice, and so on. For each PM practice, there was an immediate shift in level and trend with the introduction of the intervention. Although data were variable, Jessa used all practices during fading and maintenance phases. As shown in Figure 1, a functional relation was demonstrated between Training + Text-PBC and the use of PM practices.

Figure 1. Jessa’s use of targeted PM practices.

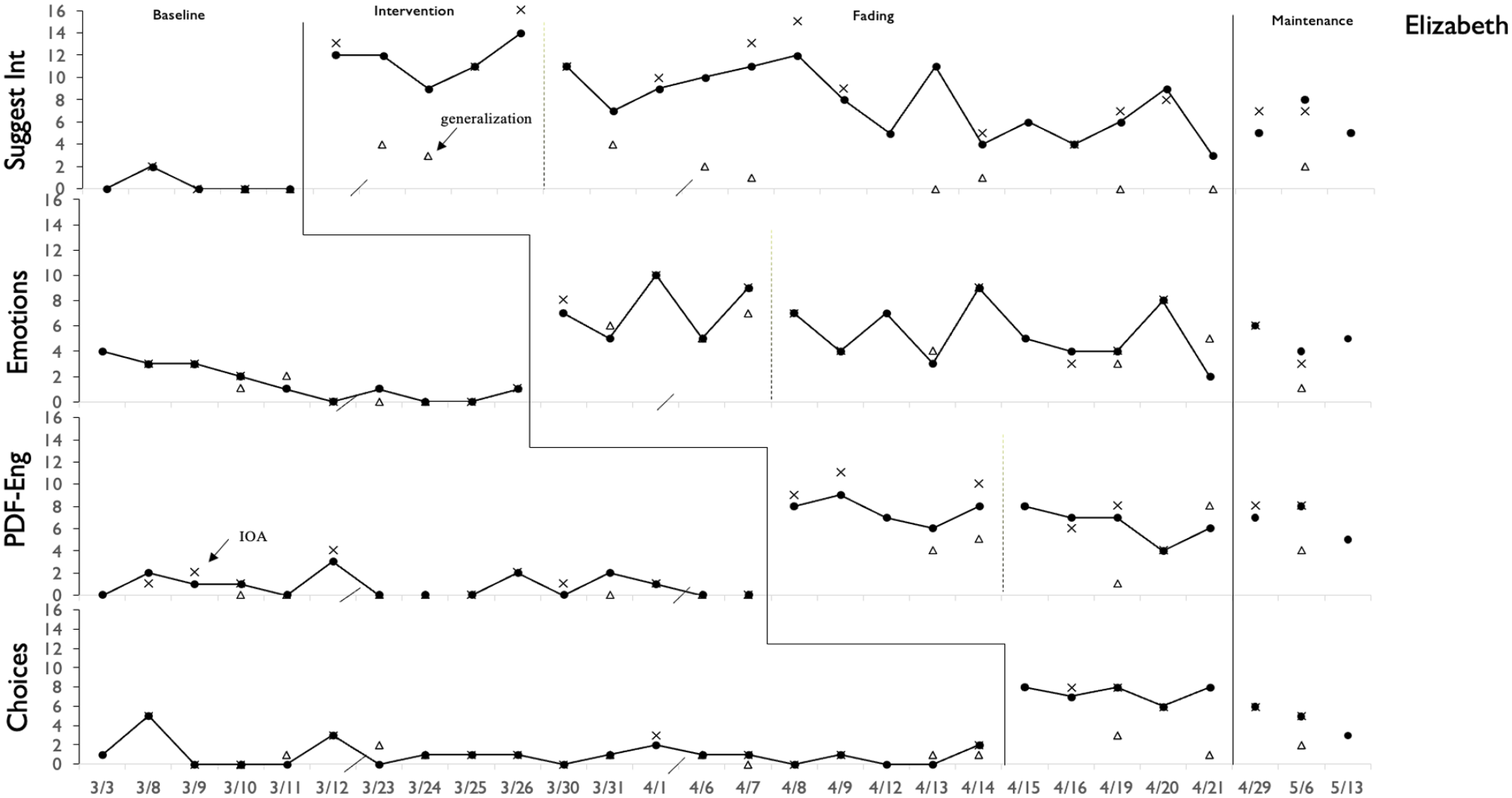

For Elizabeth, baseline data were low and stable across PM practices (see Figure 2). Across all practices, there was an immediate shift in level and trend with the introduction of Training + Text-PBC. Overall use remained high even when the focus of coaching shifted to a new practice and maintained above baseline up to 3 weeks after coaching ended. A functional relation was demonstrated between Training + Text-PBC and the use of PM practices.

Figure 2. Elizabeth’s use of targeted PM practices.

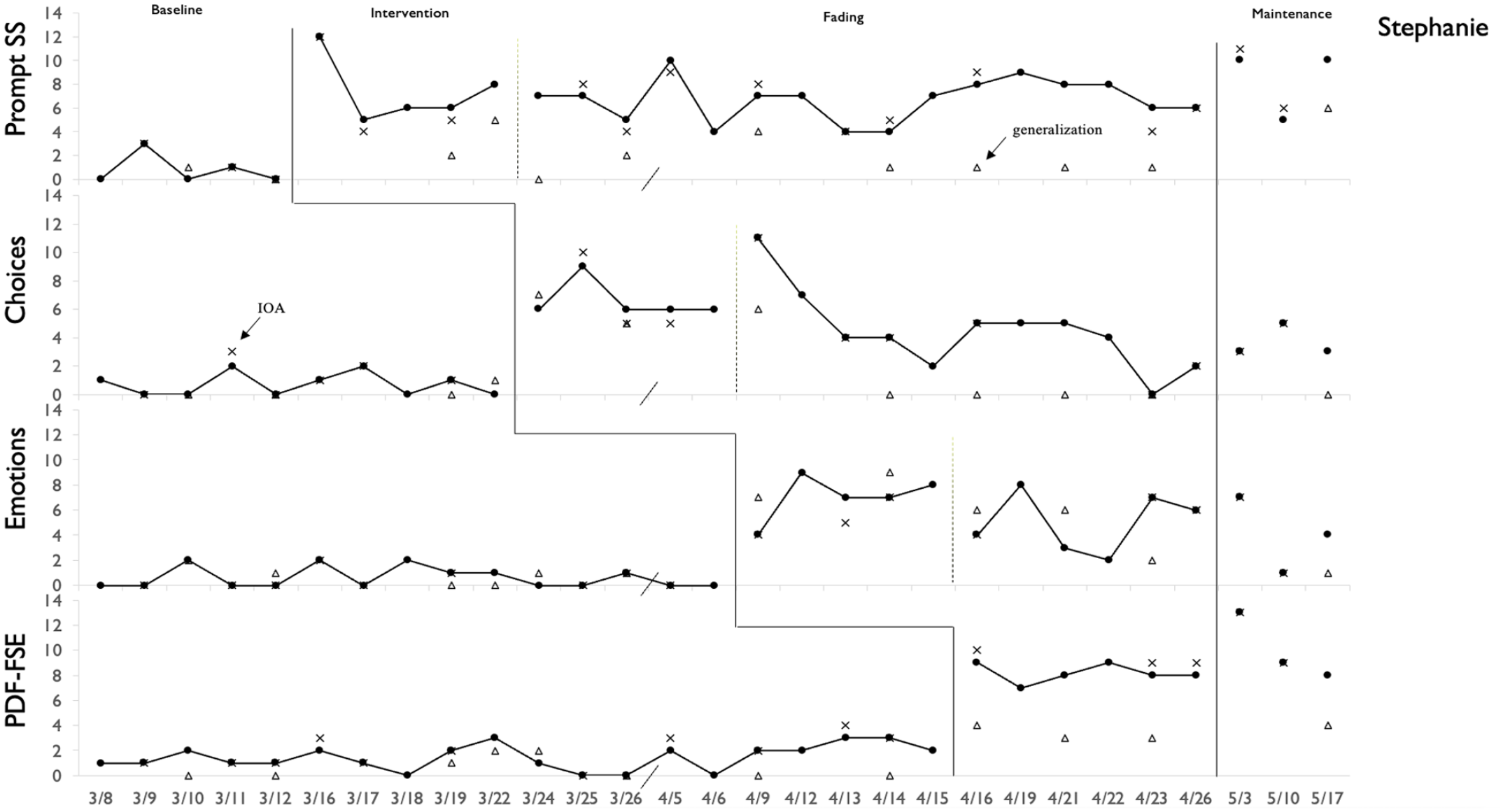

For Stephanie, baseline data were low and stable for all target practices (see Figure 3). With the introduction of the intervention for each practice, there was an immediate shift in the level of her practice use. Stephanie’s practice use was variable during the fading phase but remained above baseline levels except for one data point on the third PM practice (i.e., emotions). Practice use was sometimes maintained above baseline levels after coaching ended. A functional relation was demonstrated between Training + Text-PBC and the use of PM practices.

Figure 3. Stephanie’s use of targeted PM practices.

The average LRRi effect size estimate was 2.25 (range = 1.53–2.92; online supplemental Table S5), and the average percentage change was 932.73% (range = 363.19–1663.26%). The lower bounds of all 95% confidence intervals were greater than zero, suggesting that differences between conditions were statistically different from zero.
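A log response ratio can be converted to a percentage change with the standard transformation 100 × (exp(LRR) − 1). The sketch below shows that conversion and the confidence interval check described above. The function names and the interval bounds are hypothetical, and the conversion is applied to rounded values, so results may differ slightly from those reported.

```python
import math


def percent_change(lrr):
    """Convert a log response ratio to a percentage change from baseline."""
    return 100.0 * (math.exp(lrr) - 1.0)


def ci_excludes_zero(lower, upper):
    """True when a confidence interval for the LRR does not contain zero."""
    return lower > 0 or upper < 0


# Conversions applied to the rounded LRRi values reported in the text.
change_at_minimum = percent_change(1.53)
change_at_average = percent_change(2.25)

# Hypothetical 95% confidence interval bounds for one effect size.
interval_is_reliable = ci_excludes_zero(0.9, 2.1)
```

Because the exponential transformation is nonlinear, an average of per-case percentage changes need not equal the percentage change computed from the average LRRi.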

Generalization

Generalization data are presented via open triangles in Figures 1 to 3. Across teachers, practice use during baseline was low, with an average of less than one practice use per session. There was an increase in use in the secondary context when Training + Text-PBC was implemented in the primary context and a decrease in use, compared with intervention, during fading and maintenance. Practice use during these conditions generally exceeded baseline levels.

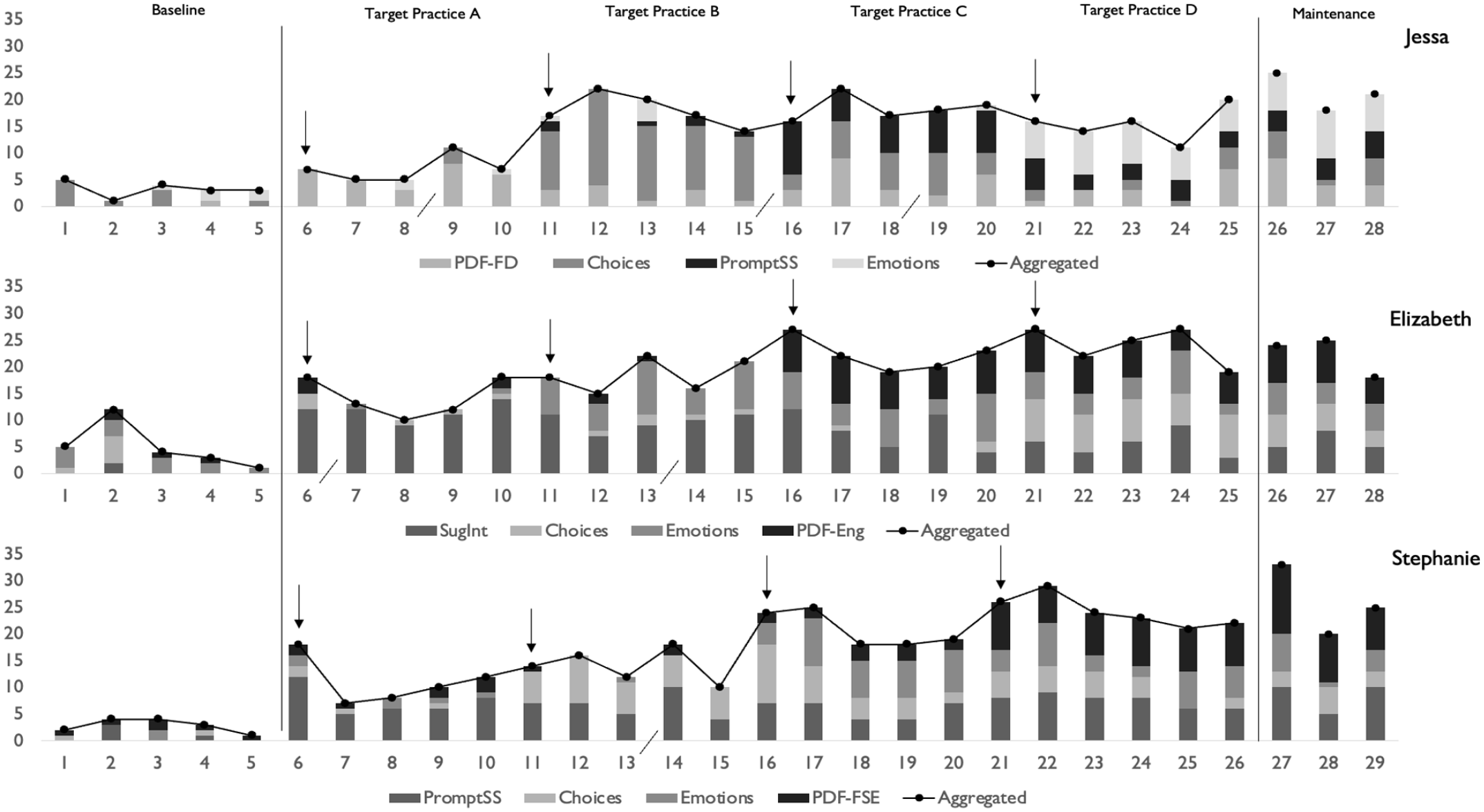

Combined Use of Practices

The combined use of practices is presented in Figure 4. Each practice is represented by a different shade of gray. Although individual practice use tended to decrease in the fading phase, combined use of practices increased substantially and remained high throughout the study. Jessa used an average of 3.2 practices per baseline session and 14.7 per intervention session. Elizabeth used an average of 5 practices per baseline session and 19.7 per intervention session. Stephanie used an average of 2.8 practices per baseline session and 17.8 per intervention session. Except for three sessions (Jessa 22 and 24, Stephanie 25), once coaching was introduced on a target practice, teachers used each practice in all subsequent intervention, fading, and maintenance sessions. See online supplemental Table S6 for practice use across teachers and conditions.

Figure 4. Combined use of targeted PM practices within a session across teachers.

Coaching Dosage

Data on the coaching process (i.e., training, coaching session preparation, coaching sessions) were collected to understand the efficiency of the package. See online supplemental Table S7 for a breakdown of coaching dosage across participants. The average length of training sessions across teachers was 52.88 min, including a longer PM overview training (M = 71.67, range = 69–77) and four target practice trainings (M = 34.08, range = 20–62). Data were collected on five components of the coaching process: (a) time coach spent watching the video, (b) time coach spent preparing for reflection and feedback, (c) duration of the coaching session (i.e., text exchange), (d) number of texts the coach sent within a session, and (e) number of texts the teacher sent within a coaching session. Across teachers and conditions, the coach spent an average of 17.97 min (range = 15–24) watching the focused observation and 10.55 min (range = 4–18) preparing the reflection and feedback. Across teachers and conditions, coaching sessions (i.e., text message exchanges) were 16 min (range = 4–66) in duration with the coach sending an average of 8.12 (range = 6–17) texts and the teacher sending an average of 5.26 (range = 3–18) texts. All teachers responded to 100% of texts in which a response was requested.

Teachers spent an average of 8 hr 58 min (range: 6 hr 15 min–10 hr 43 min) engaged in training and coaching sessions during the intervention condition. The coach spent an average of 15 hr 11 min per teacher on coaching, including watching observation videos (avg. 6 hr 6 min; avg. 18 min per session), preparing feedback (avg. 3 hr 35 min; avg. 11 min per session), and engaging in the text message exchange (avg. 5 hr 30 min; avg. 20 min per session).
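As a quick arithmetic check, the three per-teacher component averages reported for the coach can be summed. The sketch below is illustrative only; the variable names are our own, and it assumes the three components are non-overlapping and together account for all coach time.

```python
from datetime import timedelta

# Average coach time per teacher for each component, as reported in the text.
components = {
    "watching observation videos": timedelta(hours=6, minutes=6),
    "preparing feedback": timedelta(hours=3, minutes=35),
    "text message exchange": timedelta(hours=5, minutes=30),
}

# Sum the components (assumes they do not overlap).
total = sum(components.values(), timedelta())
total_minutes = int(total.total_seconds() // 60)
hours, minutes = divmod(total_minutes, 60)

print(f"Total coach time per teacher: {hours} hr {minutes} min")
```

Under these assumptions, the components sum to 15 hr 11 min per teacher.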

Social Validity

At the conclusion of the study, teachers completed a 10-item survey, rating the effectiveness and feasibility of the intervention on a scale of one to five. All three teachers rated text-based coaching, including the daily reflection component, as highly effective and feasible (M = 4.83, range 4–5). Jessa commented that the coach “was very responsive when I had questions or wasn’t sure about something.” Elizabeth said “the coaching was very constructive and did not focus on what I did wrong but praised what I did good [sic]” and “I have never been the type to reflect and give answers because I worried that there was a wrong answer. The text-based coaching helped me reflect because [the coach] asked appropriate questions that were more specific.” Stephanie expressed that “this is an easy and effective way to coach” and “the daily reminders of different ways I could incorporate the strategies helped me keep it foremost in my mind.” See online supplemental Table S8 for additional teacher comments.

Masked raters viewed randomly selected baseline and intervention videos and rated teacher use of PM practices (see online supplemental Table S9). Across all participants, teacher use of practices was rated higher in the intervention (M = 3.65, range 2–4.63) compared with baseline (M = 1.43, range 1–2.5). Differences in ratings between practice use in baseline and intervention averaged higher for practices targeted through coaching (mean increase in rating = 2.38) compared with non-target practices (mean increase in rating = 2.07), indicating Training + Text-PBC is most effective for increasing practices targeted with coaching but can also lead to increased use of practices related to those targeted through coaching.

Discussion

The purpose of this study was to evaluate the effectiveness of Training + Text-PBC on teacher use of targeted PM practices. A functional relation between Training + Text-PBC and increases in practice use was demonstrated for all participants. Practice use was maintained following the withdrawal of the intervention and generalized across activities. Teachers increased use of each targeted practice with a mean of 34 min of training and 81.6 min of coaching. These results provide evidence that Text-PBC is efficient for coaching teachers to use PM practices, and they extend evidence of the effectiveness of PBC and of text messaging as a method for delivering PBC.

Previous research has demonstrated that PBC is effective in increasing the use of individual practices (e.g., McLeod et al., 2019) and multi-component interventions (e.g., Conroy et al., 2019, 2022; Hemmeter et al., 2016). It has most often been delivered in person with teachers receiving extensive training (over 18 hr) and coaching (average of 91–124 min of observation and 30–44 min of coaching each week for 10–16 weeks; Hemmeter et al., 2016; Hemmeter, Fox, et al., 2021). In previous studies with remote PBC coaching (Artman-Meeker et al., 2014; McLeod et al., 2019), video recordings were collected by outside observers and coaching was delivered via email. We used teacher-recorded observations and delivered coaching via text message, demonstrating that PBC can be delivered completely remotely.

We addressed several limitations and recommendations reported in recent studies. We included a goal-setting component, as suggested by Barton et al. (2019), in which teachers worked with the coach to set a goal, define steps for implementing the practice, and identify supports needed to facilitate implementation. We also included a reflection prompt to increase engagement with the intervention. Previous studies using distance coaching (e.g., Artman-Meeker et al., 2014; McLeod et al., 2019) either did not report or reported low engagement. In this study, teachers responded to 100% of reflection and response prompts. Finally, this study included measures of PF across all study components and conditions.

All participants rated Text-PBC as effective and feasible and reported that the distance procedures (i.e., setting up the iPad and microphone, joining the Zoom meeting, and receiving coaching via text message) were feasible. A key to the feasibility may have been the teacher’s ability to choose when during the day to receive coaching as one teacher reported “we set a time that was convenient for me and did not take away from my students.”

In Artman-Meeker et al. (2014), coaches spent an average of 120 min per session reviewing the observation and preparing feedback emails. Barton and colleagues (2016) reported coaches engaged in 15 min of observation and 10 to 20 min of feedback preparation per session. Observation and feedback preparation time were not reported in other distance coaching articles. Compared with the studies in which these data were reported, the intervention implemented in the current study was more efficient than that of Artman-Meeker et al. (2014) and about as efficient as that of Barton et al. (2016). This study is the first in the early childhood coaching literature to report the amount of time teachers and coaches spent engaged in the debriefing component. The limited amount of time required by the teacher and the coach to effect change in teacher practice indicates Training + Text-PBC is efficient and feasible.
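The per-session comparison can be made concrete with a small calculation. The sketch below uses only figures reported in this article (17.97 min of observation review and 10.55 min of feedback preparation in the current study; 120 min in Artman-Meeker et al., 2014; 15 min plus 10 to 20 min in Barton et al., 2016). The function name and labels are hypothetical, and the sketch assumes the reported averages are directly comparable across studies.

```python
# Coach time per session (minutes) for observation review plus feedback preparation.
current_study = 17.97 + 10.55       # averages reported under Coaching Dosage
barton_2016 = (15 + 10, 15 + 20)    # 15 min observation + 10 to 20 min preparation
artman_meeker_2014 = (120, 120)     # combined review and preparation time


def compare(current, low, high):
    """Label the current study's time relative to a reported low-high range."""
    if current < low:
        return "more efficient"
    if current > high:
        return "less efficient"
    return "comparable"


print(f"Current study: {current_study:.2f} min per session")
print("vs. Artman-Meeker et al. (2014):", compare(current_study, *artman_meeker_2014))
print("vs. Barton et al. (2016):", compare(current_study, *barton_2016))
```

Under these assumptions, the current study's combined time of about 28.5 min per session falls within the 25 to 35 min range reported by Barton et al. (2016) and well below the 120 min reported by Artman-Meeker et al. (2014).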

Implications for Practice

Practice-Based Coaching is effective for increasing teacher use of recommended practices, but an analysis of dosage is needed (e.g., Conroy et al., 2019). This study provides evidence of the effectiveness and efficiency of Training + Text-PBC for increasing teacher use of targeted PM practices with considerably less support than provided in similar studies (e.g., Hemmeter et al., 2016; Hemmeter, Fox, et al., 2021). With an average of 34 min of training and 81.6 min of coaching per target practice, all participants increased and maintained the use of practices. The use of text messaging might make it feasible to provide high-quality coaching with large caseloads common to applied settings and allow coaches to reach teachers in a wider geographical area. Although text messaging is conversational, like face-to-face coaching meetings, it may also give teachers an opportunity to process feedback and organize their thoughts before responding.

Limitations and Future Research

Several limitations are noteworthy. First, because all components of the study were done remotely, it was not feasible to conduct TPOT observations to gain information about changes in teachers’ overall use of PM practices as has been done in previous research (e.g., Golden et al., 2021; Hemmeter et al., 2015). Pre-study TPOT scores would have provided descriptive information about each classroom, including the strengths and needs of each teacher. Anecdotally, Jessa had fewer universal practices in place, and children in her classroom engaged in higher rates of challenging behavior. Data related to teacher use of prevention and promotion strategies would help coaches understand who would benefit most from specific approaches to coaching as well as which target practices might be most meaningful and beneficial for individual teachers. Teachers needing support with foundational practices (e.g., teaching behavior expectations, developing routines) may need more intensive (e.g., more frequent, longer, and in person) coaching than teachers who implement or refine more specific practices (e.g., labeling emotions, providing positive descriptive feedback). In addition, future research could include a measure of teacher-coach alliance to understand the impact of rapport between the coach and coachee on the effectiveness and acceptability of the coaching intervention.

A second limitation relates to the use of technology. Teachers had one stationary iPad for recording, and depending on the movement of teachers and children in the classroom, the teacher was not always visible. Although the microphone allowed the teacher to be heard, some actions were not observable, meaning some targeted practices were not counted. More advanced technology that can track the movement of the teacher and children might be considered in future research. A third limitation was the lack of a child outcome measure. Because teachers and children were not always visible during the recording, it was not feasible to collect data on child outcomes (e.g., rates of challenging behavior, levels of engagement, and use of social–emotional skills). Research on coaching should, but rarely does, include measures of child behavior change so we can understand how coaching impacts child outcomes (Golden, 2020; Kraft et al., 2018).

With few exceptions due to teacher absences or school breaks, observations and coaching sessions in the current study were conducted daily by an expert coach. Although this intervention was not time intensive, it may not be feasible for coaches in the field to provide daily coaching to teachers. Additional research is needed with lower-density coaching (e.g., once per week) and with coaches with varying levels of training and coaching expertise. Additionally, while there was some evidence that targeted practices generalized to settings in which coaching was not provided, generalization data were variable and considerably lower than data in the primary activity across all teachers. Because no feedback was provided in the generalization setting, the teachers may not have made the connection that they were appropriately using the target practices in that setting. For example, when Elizabeth was working on suggesting interactions between peers, she added opportunities for children to partner during large group (i.e., the generalization activity). When the focus of coaching moved to the next target practice, Elizabeth did not continue embedding opportunities for children to interact with their peers. Future research should explore efficient ways for systematically programming for generalization.

The focus of the current study was increasing individual teacher use of target practices, measured using timed event recording. Results from this study indicate Training + Text-PBC is effective for increasing teacher use of practices; however, we do not yet know the ideal rate of practice use. A next step in this line of research is to examine how teachers can use these practices in the most salient way and at optimal levels to support the social-emotional development of young children.

Conclusion

The results of this study indicate that Training + Text-PBC is an effective and efficient coaching method for increasing teacher use of PM practices. Results were maintained up to 3 weeks after coaching was completed, and there was some evidence of generalization to un-targeted contexts. Future research should continue examining the effectiveness of text messaging as a mode for delivering coaching to build a bank of effective coaching practices that can be matched to the skills, characteristics, and needs of teachers; the type of practices being targeted with coaching (e.g., individual teaching practices, multi-component interventions); and other characteristics of the coaching context (e.g., caseloads, distance, and access to technology).

Supplemental Material

Supplemental material, sj-docx-1-pbi-10.1177_10983007231172188 for Evaluating the Effects of Training Plus Practice-Based Coaching Delivered Via Text Message on Teacher Use of Pyramid Model Practices by Adrienne K. Golden, Mary Louise Hemmeter and Jennifer R. Ledford in Journal of Positive Behavior Interventions

Footnotes

Acknowledgements

We thank Lila Buchanan, Maria Sanin, and Kelsey Rose for their support with data collection; the Hemmeter Lab at Vanderbilt for their support with planning and revising procedures; and Drs. ML Hemmeter, Jennifer Ledford, Ann Kaiser, and Kathleen Artman-Meeker for their support as doctoral committee members.

Authors’ Note

Portions of these findings were presented as a poster at the following conference: the 2021 Annual DEC Conference on Young Children with Special Needs and their Families, virtual.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was funded in part by U.S. Dept of Ed grant U411B170021 and OSEP grant H325B170003.

Supplemental Material

Supplemental material for this article is available on the Journal of Positive Behavior Interventions website with the online version of this article.

References
