Abstract

Building the evaluation capacity (EC) of individuals and organizations in early childhood and broader social-serving fields is key to enhancing their practices, programs, and policies and maximizing the impact of limited resources. While access to psychometrically valid tools for strengthening EC is growing, many existing tools were not developed specifically for early childhood. This paper details a community-based participatory approach to developing and validating the Evaluation Capacity Needs Assessment (ECNA) tool. To ensure the tool's relevance, our work engaged a sample of 329 participants responsible for evaluation across diverse early childhood and social-serving organizations in a western province in Canada. We describe the two phases involved in the development and validation process, including the exploratory three-factor models for individual and organizational EC measurement, scoring, and interpretation. Practical guidance and case applications demonstrate the ECNA tool's value in informing evaluation capacity-building strategies in early childhood and beyond.

Introduction

In recent years, the demand from funders for rigorous and reliable evaluation has intensified, requiring organizations to prove their effectiveness and determine whether investments in their work are cost-effective and impactful (Kavanagh et al., 2025; Nakaima & Sridharan, 2020). This is particularly evident in sectors that work with vulnerable populations, such as in the social-serving sector (Buetti & Bourgeois, 2024; Walters, 2021). Gathering useful evidence to evaluate and inform programs requires organizations to have individuals (or teams of individuals) with the collective capacity to conduct evaluations and use the findings generated, and to support a culture of learning (Buetti et al., 2023). At the organizational level, evaluations can be used to inform strategic decision-making, improve operations and practices, and encourage organizational members to think evaluatively—activities that also embed learning and adapt practices within the organization (Al Hudib & Cousins, 2022; Patton, 2011). Evaluations can also satisfy funding requirements, allocate funding, and inform policymaking (Kavanagh et al., 2025; Patton, 2011; Wilcox & King, 2014).

Unfortunately, many social-serving sectors and organizations do not have the necessary individual and collective capacity to conduct these critical evaluations and meaningfully use the results (e.g., Bourgeois et al., 2023; Gokiert et al., 2022; Preskill & Boyle, 2008; Puinean et al., 2022; Taylor-Ritzler et al., 2013). A further complicating factor for evaluation capacity (EC) is that many organizations lack the funding or time needed to undertake evaluation meaningfully, let alone build capacity among organizations’ members to evaluate their work internally in ways that meet evolving accountability and learning objectives (Cheng & King, 2016; Janzen et al., 2017). If resources allow, organizations that do not have the internal capacity to conduct evaluations can engage external evaluation consultants.

While the use of an external evaluator may seem like a good solution, it is not sustainable as organizations still need internal EC to ensure they can effectively hire a qualified evaluator with relevant contextual knowledge and expertise, engage in ongoing monitoring and evaluation, and use the evaluation results effectively (Bourgeois et al., 2023; Cousins et al., 2014; Preskill & Boyle, 2008). The more cost-effective and sustainable option is to build internal EC that is contextually relevant to an organization's specific needs. However, this option requires organizations to invest their limited resources against a background of ever-present funding and time constraints on programming and people (e.g., Cousins et al., 2014; Preskill & Boyle, 2008).

Furthermore, evaluation capacity-building (ECB) efforts need to consider individual and organizational needs and contexts (e.g., Taylor-Ritzler et al., 2013). Context plays an important role in defining and meeting organizational and individual EC needs. As argued by Al Hudib and Cousins (2022), “the sociopolitical, cultural, and economic contexts of an organization are critical and pervasively influence evaluation practice. Context is also the most salient, influential consideration shaping the ECB process” (p. 16). This view was further articulated by Nielsen et al. (2024), who found through a review of several EC instruments that EC has been applied to the individual (micro), organization (meso), and societal (macro) levels, and noted that EC instruments provide an important bridge between theory and practice and are central to furthering the practice of evaluation. They also argued that there is a continued need to explore the applicability of EC instruments across a broader range of populations and contexts.

Our research is situated within the context of early childhood development, where the demand for rigorous and reliable evidence has intensified and the potential of evaluation as a systematic approach to measuring the impact of investments in the early years remains untapped (Gokiert et al., 2017; Gokiert et al., 2022). Early childhood organizations and other entities that support children and families (i.e., the broader social-serving sector) can use evaluation to address the learning and accountability demands they face and improve their services (Puinean et al., 2022). We use a systems-level approach to the conceptualization and measurement of EC within the field of early childhood development, and expand on previously published work describing the initial validation of the Evaluation Capacity Needs Assessment (ECNA) tool (El Hassar et al., 2021). The objective of the current study is to extend this work to demonstrate the use of the ECNA tool to address the EC gap within the early childhood and broader social-serving fields. Specifically, the current study adds (1) a detailed description of the community-based participatory research (CBPR) approach we used in the development and validation of the ECNA tool; (2) updated validation evidence compared to previously reported work; and (3) three case applications and other practical guidance for the use and customization of the ECNA tool within and beyond the early childhood field.

Early Childhood as a Critical Setting for Evaluation

Early childhood (birth to 6 years of age) is a critical developmental period that lays the foundation for long-term health and social wellbeing (Gokiert et al., 2017; Shonkoff, 2017). The early childhood field, broadly defined, refers to the sectors and disciplines responsible for enhancing development in these early years, such as early childhood education (e.g., preschool, kindergarten), early learning and childcare (e.g., Head Start, licensed childcare), and health (e.g., early intervention) (Friendly et al., 2024).

Many children are entering early learning, childcare, and more formal early childhood education experiencing vulnerability in their development (Canadian Institute for Health Information, 2021; Perrigo et al., 2025; Public Health Agency of Canada, 2018), a phenomenon that has been exacerbated by the COVID-19 pandemic (O’Connor et al., 2025; Whitley et al., 2021). This vulnerability can manifest, for example, as delays in fine and gross motor skills, social-emotional regulation, or receptive and expressive language. This situation has resulted in an increase in mental health concerns, developmental learning gaps, and family stress (Mental Health Commission of Canada, 2021; Mulkey et al., 2023). Significant investments are being made to respond to these vulnerabilities (Seward et al., 2023). For example, in Canada the federal government has invested $30 billion over 5 years to build a Canada-wide early learning and childcare system that is accessible, affordable, and inclusive (Government of Canada, 2022). Such early childhood initiatives are seen as a necessary equalizer for a thriving society (McCain, 2020). However, their high costs increase the need for strong evidence to justify these investments and to inform the design of practices, programs, and policies that meet the needs of children and families in Canada (Akbari & McCuaig, 2017; Shonkoff, 2021).

Evaluation Capacity Building in the Early Childhood Development Field

Early childhood organizations are diverse and bring expertise across multiple sectors, systems, and disciplines (e.g., early education, early learning and childcare, early intervention and health). As additional resources are invested in the early years to address some of the challenges intensified by the COVID-19 pandemic (e.g., women leaving the workforce because of unmet childcare needs; mental health; and children's developmental and learning gaps), Shonkoff (2021) emphasizes the need to “make sure that all policies and services are guided by the best available knowledge (both scientific and pragmatic)—and evaluated rigorously to determine what works for whom in which contexts …” (para 5). Accordingly, early childhood organizations and other entities that support children and families (i.e., the broader social-serving sector) can use evaluation to address the learning and accountability demands they face and improve their services (Gokiert et al., 2022). However, many of these organizations lack capacity to conduct these critical evaluations and meaningfully use the results, as highlighted in two recent reviews of the ECB literature (Bourgeois et al., 2023; Puinean et al., 2022). Specifically, these reviews found that many early childhood and social-serving organizations lack access to the specialized skills, resources, and support needed to conduct evaluations that are well planned and able to achieve their intended objectives.

The Network

In 2014, the Evaluation Capacity Network (hereafter the Network), an interdisciplinary and intersectoral partnership, was established to provide a coordinated and tailored response to the early childhood field's EC needs by improving access to high quality evaluation knowledge, resources, expertise, and capacity-building opportunities (see Gokiert et al., 2017). Our Network draws on bioecological systems theory, which posits that the development of a child cannot be understood in isolation or as the result of a single factor; rather, development is shaped by complex environments and interrelationships between them (Bronfenbrenner, 1986; Bronfenbrenner & Ceci, 1994). For this reason, in building EC our Network considers the many systems, sectors, and disciplines that shape a child's development. Our approach to understanding and influencing the dynamic, non-linear changes that occur within the system is informed by complex systems theory (Waldrop, 1992). Although changes within such complex systems cannot be entirely predicted, it is possible to identify patterns and respond to areas of greatest opportunity (i.e., “high-profit” leverage points, points of innovation) to guide the system toward a more desired state. The creation, mobilization, and use of real-time evidence within the system can aid the identification of areas of opportunity and respond to uncertainty in a more adaptive way (Suárez-Herrera et al., 2009).

In tailoring ECB approaches to the dynamic and complex systems that influence early childhood development, it is important to be able to define and measure EC within this context, such as via validated EC instruments designed specifically for use in the early childhood field. In their recent article, Nielsen et al. (2024) reviewed several rigorous and accessible tools of this kind that can be used by organizations to measure and build their EC, and pointed out that EC instruments provide an important bridge between theory and practice and are central to furthering the practice of evaluation. They also argued that there is a continued need to explore the applicability of EC instruments across a broader range of populations and contexts.

The Development of the ECNA Tool

In consideration of the context of the early childhood field and the various systems that support and interact with children and families, our Network engaged in dialogue with individuals and organizations that support young children across the Canadian Province (see Gokiert et al., 2017; Gokiert et al., 2022 for a detailed description of this engagement process and outcomes). This was an important first step to enhance our understanding of the specific EC needs, challenges around EC, and capacity gaps and opportunities in the early childhood sector, as well as how to build EC effectively and efficiently. Our next step was to take this knowledge and build an instrument that could be used to measure individual and organizational EC, to inform and assess our ECB efforts in sectors that support young children.

In previously published work (El Hassar et al., 2021), we described the initial validation of the Evaluation Capacity Needs Assessment (ECNA) tool, an instrument we designed for the early childhood field, and tested some of the direct and indirect relationships among individual and organizational EC domain factors. To our knowledge, there is no other published EC instrument that has been developed with and for the early childhood and broader social-serving sectors that support children and families. Building on our prior work (El Hassar et al., 2021; Gokiert et al., 2022), this study responds to the growing demand for rigorous, reliable evidence in the early childhood field. Through three CBPR case applications, we update validation evidence for the ECNA tool and enhance it. In doing so, we demonstrate evaluation's value as a systematic approach for assessing early-years investments.

Methods

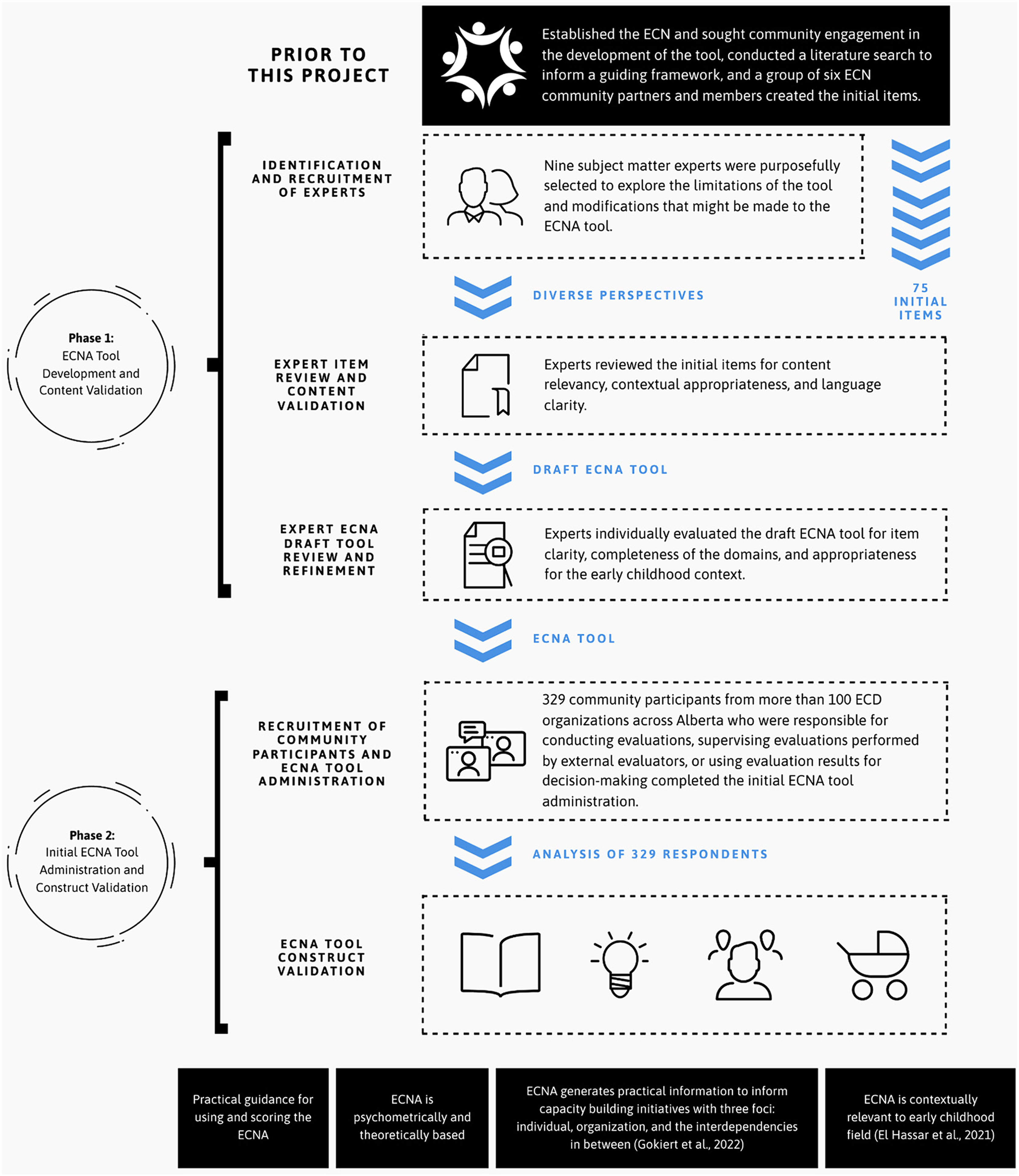

The principles of CBPR guided our approach to the development and validation of the ECNA tool (Mikesell et al., 2013; Wallerstein et al., 2018). This approach to research equitably involves key interest holders across the research and tool validation process (e.g., Bishop et al., 2018; Brush et al., 2024), ensuring that the tool is grounded in diverse community perspectives and expertise, represents the unique context in which it is intended to be used, and generates information useful to the community's needs (Wright et al., 2010). Six Network members who were part of a governance committee focused on research and evaluation formed a community-based advisory committee to oversee and engage in the tool development and validation process. These six individuals included academics and community partners, with expertise across the early childhood, measurement, and evaluation fields. Below, we describe the procedures involved in the two phases of the ECNA tool development and validation process, which are also summarized in Figure 1. The first phase focused on the initial item and survey instrument development and content validation, and the second phase focused on the pilot tool administration and construct validation. The study received approval from the University of Alberta research ethics board.

Figure 1. ECNA tool development, administration and validation.

Phase 1: ECNA Tool Development and Content Validation

The first step in our process was to conduct a comprehensive review of the literature, with a specific focus on EC theories and tools (e.g., Bourgeois et al., 2013; Cousins et al., 2008; Fierro, 2012; Nielsen et al., 2011; Preskill & Boyle, 2008; Taylor-Ritzler et al., 2013). Describing and defining the relevant components of EC has been an ongoing effort with little consensus for more than two decades (e.g., Bourgeois et al., 2023; Compton et al., 2001; King, 2020; Nielsen et al., 2024; Stockdill et al., 2002). Our comprehensive review of the literature on EC theories and tools was conducted as part of a doctoral dissertation and revealed that EC is typically measured across two distinct domains: individual EC and organizational EC (see El Hassar, 2019 for a detailed description of the methodology for this review).

At the individual level, three key components emerged from the review: attitudes toward evaluation, evaluation skills, and motivation to evaluate. While these concepts are referred to differently across theories and instruments, the essence of attitude is an individual's understanding of the purpose and benefits of evaluation, and how the evaluation process is perceived, including its relevance and importance (Cheng & King, 2016; Fierro, 2012; Taylor-Ritzler et al., 2013). Evaluation skills were delineated by general skills (the ability to conduct and understand evaluations, including the design, implementation, analysis and interpretation of findings) and specific skills (technical, analytical, and communications skills related to evaluation) (Galport & Azzam, 2017). Finally, motivation to evaluate is the willingness to invest resources (e.g., time, money) into conducting and using evaluations, and the reasons behind doing so (e.g., accountability, learning) (e.g., Labin et al., 2012; Preskill & Boyle, 2008).

At the organizational level, three key features emerged: organizational commitment, culture, and leadership. Organizational commitment focuses on the resources, learning climate, budget, and external factors that influence an organization (Bourgeois & Cousins, 2013; Taylor-Ritzler et al., 2013). The culture of an organization includes its openness to new ideas, staff involvement in planning, and a culture of reflection, all of which support a learning climate (Taylor-Ritzler et al., 2013). Finally, leadership traits such as collaboration, conflict resolution, celebrating achievements, and promoting reflective practice influence evaluation and foster a positive evaluation environment (e.g., encouraging evaluative thinking and discussing findings with staff) (Cousins et al., 2004; Labin et al., 2012). A 2024 review of 16 EC instruments across 20 studies found that EC can also be described in terms of structural (e.g., Nielsen et al., 2011) and dynamic (e.g., El Hassar et al., 2021; Taylor-Ritzler et al., 2013) models. Structural models focus on the components of EC, while dynamic models describe “the sequences of components that are interrelated and lead to EC (how to build EC)” (Nielsen et al., 2024, p. 454).

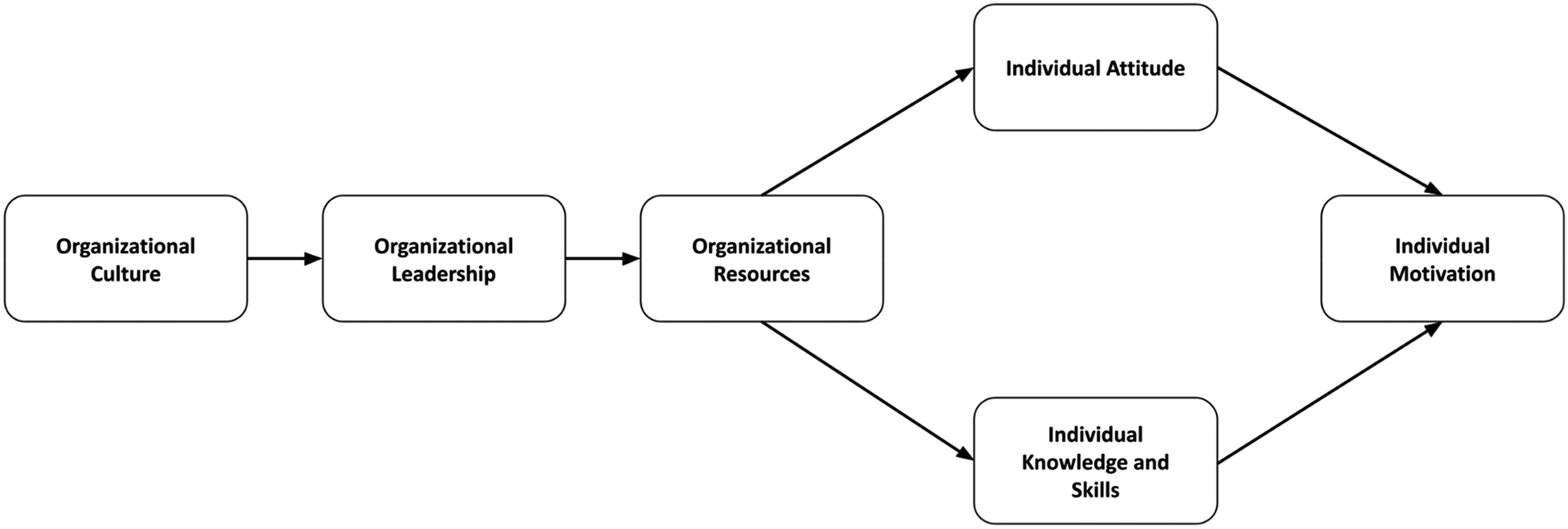

Based on the review of the literature on EC models (i.e., Preskill & Boyle, 2008), and using specific EC instruments as guides (e.g., Cousins et al., 2008), we developed a dynamic framework of EC that articulates the relationships among six EC components (see Figure 2). Further, the advisory committee drafted 75 scale items for measuring the six components of EC at the individual (micro) and organizational (meso) levels for the early childhood field: Individual Attitudes, Individual Knowledge and Skills, Individual Motivation, Organizational Culture, Organizational Leadership, and Organizational Resources. The framework represents linear relationships between and among the EC components, which were statistically tested using structural equation modelling in a related study (see El Hassar et al., 2021).

Figure 2. Guiding EC framework for the early childhood field.

Identification and Recruitment of Experts

Nine subject matter experts were purposefully selected using maximum variation sampling, a sampling process that begins by establishing criteria to differentiate participants and then selects those who differ across the specified criteria to increase the diversity of the group (Creswell, 2012). This sampling method was appropriate because the purpose of the review was to comprehensively explore the limitations of the tool and modifications that should be made, not to achieve consensus among experts. The sampling frame consisted of professionals with recognized expertise in evaluation and ECB, measurement, and the early childhood context within a western province in Canada. Experts were identified through the professional networks of the research team, prior collaborations, and referrals from leading organizations in early childhood and evaluation. These experts were also organizationally diverse (e.g., representatives of community agencies, government, nongovernment funding agencies, and academic institutions). We contacted potential experts, and each participant received an information letter and consent form describing the study purpose, the item review and tool refinement procedures, and their participant rights. Participants who agreed to participate documented their consent in writing.

Expert Item Review and Content Validation

Expert item review was used to assess the content relevancy of the initial 75 items for measuring EC constructs, contextual appropriateness for the early childhood development field, and language clarity. The experts met in person at the Network's office, which is situated on a university campus, and were divided into two groups to review and discuss each aspect of the tool (i.e., instructions, items, scales, and formatting) and to answer the questions listed in a protocol developed by the research team. After finishing this 45-minute review session, the two groups came together to discuss their suggestions, with a particular focus on areas of agreement and difference. We captured the processes and outcomes of the expert review through observation by two facilitators and in writing via the artifacts (e.g., post-it notes) generated by the experts and notetakers. The two facilitators also asked follow-up questions, as needed, about the clarity of the items, the completeness of the domains, the response scales, and any potential consequences of the content of the questions.

An influential conclusion that emerged from these discussions was that to be relevant to the early childhood context, an EC framework and tool must fulfill two roles. First, it should identify components at the individual and organizational levels. Second, it should focus on EC specific to conducting and using evaluations. Consistent with this reasoning, we relied heavily on Preskill and Boyle's (2008) ECB conceptualization because of its clear articulation of individual and organizational EC components, and Cousins and colleagues’ approach (2014) for its specification of the relationships among different EC components.

Expert ECNA Draft Tool Review and Refinement

The expert review also informed the refinement of the draft ECNA tool, which was composed of 75 items organized across three sections. The first section consisted of 26 items assessing self-reports regarding an individual's EC, including the knowledge, skills, and motivations associated with evaluation on a four-point Likert scale ranging from 1 (strongly disagree) to 4 (strongly agree). An additional 16 items assessed the respondent's evaluation skills and their perceived importance in the respondent's current job. These 16 skill-based items were rated on two three-point scales, from not at all skilled to very skilled and not at all important to very important, respectively. The second section of the instrument consisted of 33 items measuring the organizational EC domain, including respondents’ perceptions of their organization's attitude toward evaluation, the resources invested in evaluation, and leadership approaches that might enhance or deter ECB efforts. The organizational EC domain used the same four-point Likert rating scale as the individual EC domain. The final section of the draft tool asked respondents to provide demographic information (e.g., age, job title, years of experience with evaluation). The experts were asked to individually evaluate, by item, the draft ECNA tool for item clarity, completeness of the domains, and appropriateness for the early childhood context. Experts were also asked to note anything that may not have come up or been captured during the initial item review. Among the key outcomes was the elimination of double-barreled items (i.e., items that ask more than one question, making valid inferences challenging) and attention to the length of the tool for completion. Experts’ feedback was incorporated and resulted in the ECNA tool that was administered for validation purposes, which is described in Phase 2, below.

Phase 2: ECNA Tool Administration and Construct Validation

The objective of Phase 2 was to pilot the ECNA tool and gather data to test its construct validity.

Recruitment of Community Participants and ECNA Tool Administration

We used nonprobability purposive sampling to engage the wider early childhood community in our tool validation and ensure that key community needs and interest holders’ values related to the issue of capacity building were captured and used to inform tool refinement. We targeted individuals working in the early childhood field in a Western Canadian province who were involved in evaluation-related activities including conducting evaluations, supervising evaluations performed by external evaluators, or using evaluation results for decision-making. Recruitment occurred via email invitation involving two waves over four weeks during January to February 2016. The email described the study's purpose and participant rights. Participants were asked to indicate their consent to participate by completing the tool, which was administered using FluidSurveys (2015). The first recruitment wave yielded 101 participants, primarily comprising decision-makers in early childhood organizations at a provincial level. The second recruitment wave in May to June 2016 engaged an additional 228 participants working across different levels of early childhood organizations within a large municipality in the province. The lead researcher sent all email invitations, and encouraged staff responsible for evaluation-related activities to complete the instrument and forward it to appropriate individuals within their organization. The combined sample from both waves thus comprised 329 community participants. Because the study sample was drawn from an unknown population size, we were not able to calculate a response rate.

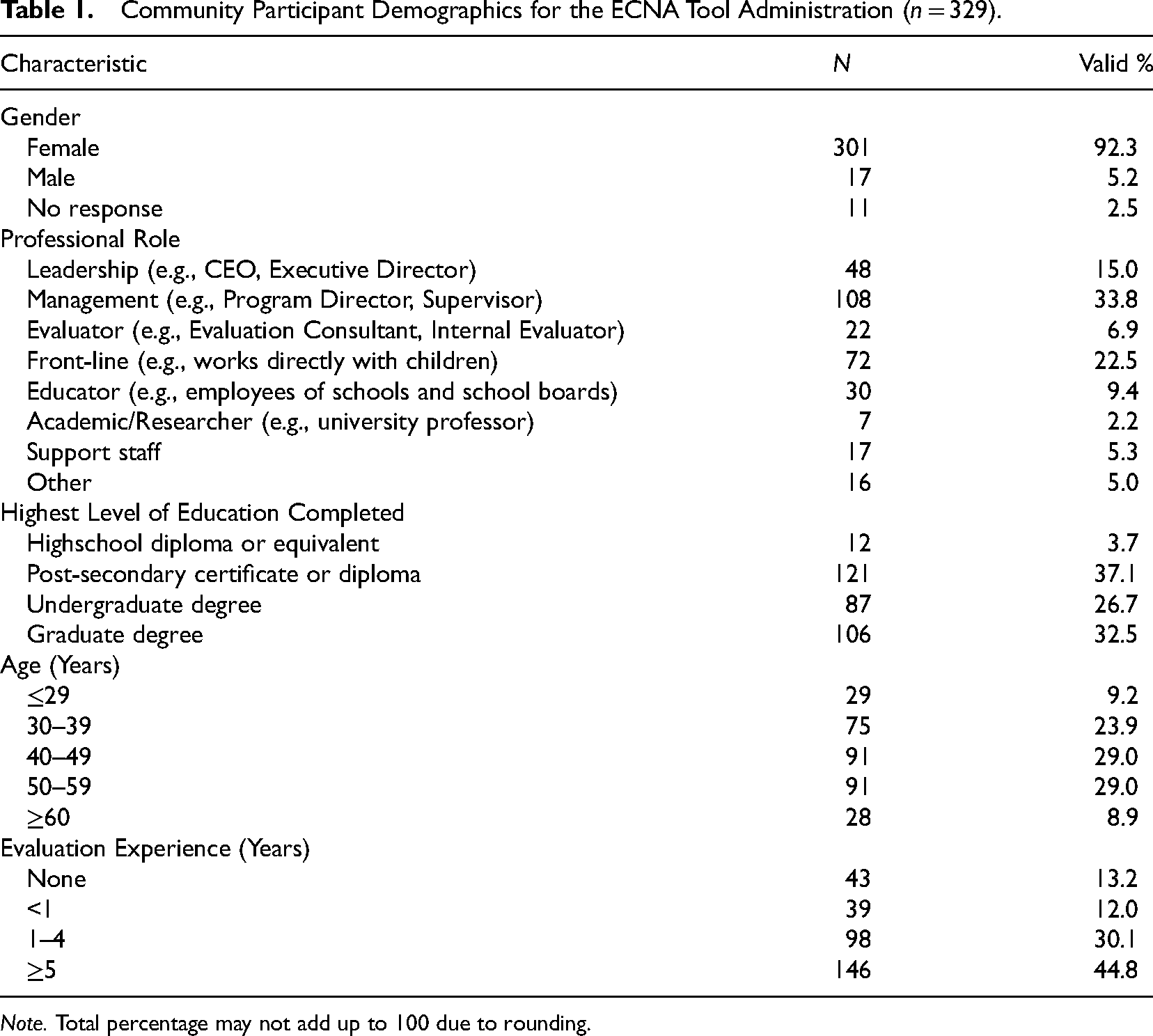

The 329 participants included individuals representing a variety of early childhood development organizations who are responsible for evaluation in some form. Participant demographics provided in Table 1 indicate that the majority of the participants identified as female (92%) and that the study sample reflected diverse professional roles, including 34% management, 23% front-line service delivery, and 15% leadership roles. The majority (87%) of the respondents reported having some evaluation experience (less than 1 year to more than 5 years), with 44.8% reporting 5 or more years of evaluation experience. More than half of the participants (59%) reported having at least an undergraduate degree as their highest level of education.

Table 1. Community Participant Demographics for the ECNA Tool Administration (n = 329).

Note. Total percentage may not add up to 100 due to rounding.

ECNA Tool Construct Validation

First, the data from the ECNA tool administration were checked for missing values and inconsistencies (Fabrigar, 2012). Fifty-two respondents (15%) had systematic missing data because they did not contribute to evaluation activities in their present position and thus they were not asked nine items in the individual domain and 12 items in the organizational domain. The data were also investigated to verify univariate normality, and new indicators were created as needed (Tabachnick & Fidell, 2006). We used SPSS version 23 (IBM Corp., 2015) to generate descriptive statistics for the individual and organizational domain items; as expected, the data were skewed toward positive responses.

Second, we used Mplus 7.4 (Muthén & Muthén, 1998) to conduct an exploratory factor analysis (EFA) to determine the underlying constructs, including principal component analysis as a data-reduction method to examine the underlying factor pattern (Floyd & Widaman, 1995). The purpose of EFA is to ascertain the nature and number of latent factors accounting for the variation and covariation that exist among items (Thompson, 2004). The EFA also acted as a test of construct validation, considering the internal structure of the ECNA tool. The 26 individual and 33 organizational EC items were analyzed separately with EFA, using the maximum likelihood parameter estimator to adjust for item non-normality and missing values and a Geomin rotation to adjust for inter-factor correlations (Treiblmaier & Filzmoser, 2010). The 16 skill-based items were not included in the EFA because of their interdependence, and because they were in essence a two-part question (i.e., what level of skill do you possess in evaluation, and how important are those skills to your work).

The number of factors for each EC domain (i.e., individual and organizational EC) was determined by comparing the goodness of fit and the comparative indices of six potential models, along with the theoretical interpretation of the factors. For goodness-of-fit indices, the root mean square error of approximation (RMSEA), comparative fit index (CFI), Tucker-Lewis index (TLI), standardized root mean square residual (SRMR), and chi-square test of model fit (Brown, 2006; Kline, 2011) were used. We used the Akaike information criterion (AIC; Akaike, 1987) and Schwarz's Bayesian information criterion (BIC) as comparative indices, allowing us to compare EFA models with different numbers of factors while considering the complexity of the models (Brown, 2006). Interpretation of both AIC and BIC, which are similar measures, is derived from comparing their values as the model changes. Items with loadings of 0.30 or less and those that loaded equally on multiple factors were eliminated. Factors that were measured by only one or two items were also eliminated, as the items lacked stability for measuring the factor (Floyd & Widaman, 1995). Decisions to eliminate items and factors were made following the statistical results and the theoretical understanding of EC.
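The item and factor screening rules described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' procedure: the loadings are hypothetical, the function name `retain_items` is invented for this sketch, and the 0.05 gap used to decide that two loadings are "equal" is an assumption, since the text does not give a numeric tolerance. In the study, these decisions were also weighed against the theoretical understanding of EC, which a rule-based sketch cannot capture.

```python
from collections import defaultdict

LOADING_CUTOFF = 0.30      # from the text: loadings of 0.30 or less are dropped
CROSS_LOADING_GAP = 0.05   # assumption: tolerance for treating two loadings as "equal"
MIN_ITEMS_PER_FACTOR = 3   # from the text: factors with one or two items are dropped


def retain_items(loadings):
    """Return {factor_index: [item names]} after applying the screening rules.

    `loadings` maps an item name to its list of rotated factor loadings.
    """
    assigned = defaultdict(list)
    for item, row in loadings.items():
        magnitudes = sorted((abs(v) for v in row), reverse=True)
        strongest = magnitudes[0]
        runner_up = magnitudes[1] if len(magnitudes) > 1 else 0.0
        if strongest <= LOADING_CUTOFF:
            continue  # weak item: no loading above the cutoff
        if runner_up > LOADING_CUTOFF and strongest - runner_up < CROSS_LOADING_GAP:
            continue  # ambiguous item: loads about equally on two factors
        primary = max(range(len(row)), key=lambda j: abs(row[j]))
        assigned[primary].append(item)
    # Drop factors measured by fewer than three surviving items.
    return {f: items for f, items in assigned.items()
            if len(items) >= MIN_ITEMS_PER_FACTOR}


# Hypothetical loadings for illustration only; these are not ECNA results.
example = {
    "q1": [0.72, 0.05, 0.10],
    "q2": [0.65, 0.12, 0.02],
    "q3": [0.58, 0.08, 0.15],
    "q4": [0.25, 0.20, 0.11],   # weak on every factor
    "q5": [0.45, 0.44, 0.03],   # loads about equally on two factors
    "q6": [0.04, 0.61, 0.09],   # the only item left on the second factor
}
kept = retain_items(example)
print(kept)
```

In this toy example only the first factor survives, with items q1 to q3. In practice the model would typically be re-estimated after items are removed, because loadings shift when the item set changes; the sketch applies the rules to a single loading matrix only.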

Findings

The findings are organized into three sections: First, we share validity evidence for the individual and organizational EC domains; second, we provide practical guidance for the use of the ECNA tool, including the available resources on the Network website for supporting scoring and interpretation; and finally, we share case applications of the ECNA's use by organizations that serve children and their families in Canada.

Validity Evidence of Evaluation Capacity Domains to Inform Capacity Building

Cattell's scree test (included as part of the EFA) revealed a three-factor solution for individual EC (consisting of 16 items from the list of 26) and a three-factor model for organizational EC (consisting of 12 items from the list of 33), which explained the majority of the variance (51.2% and 63.8%, respectively).

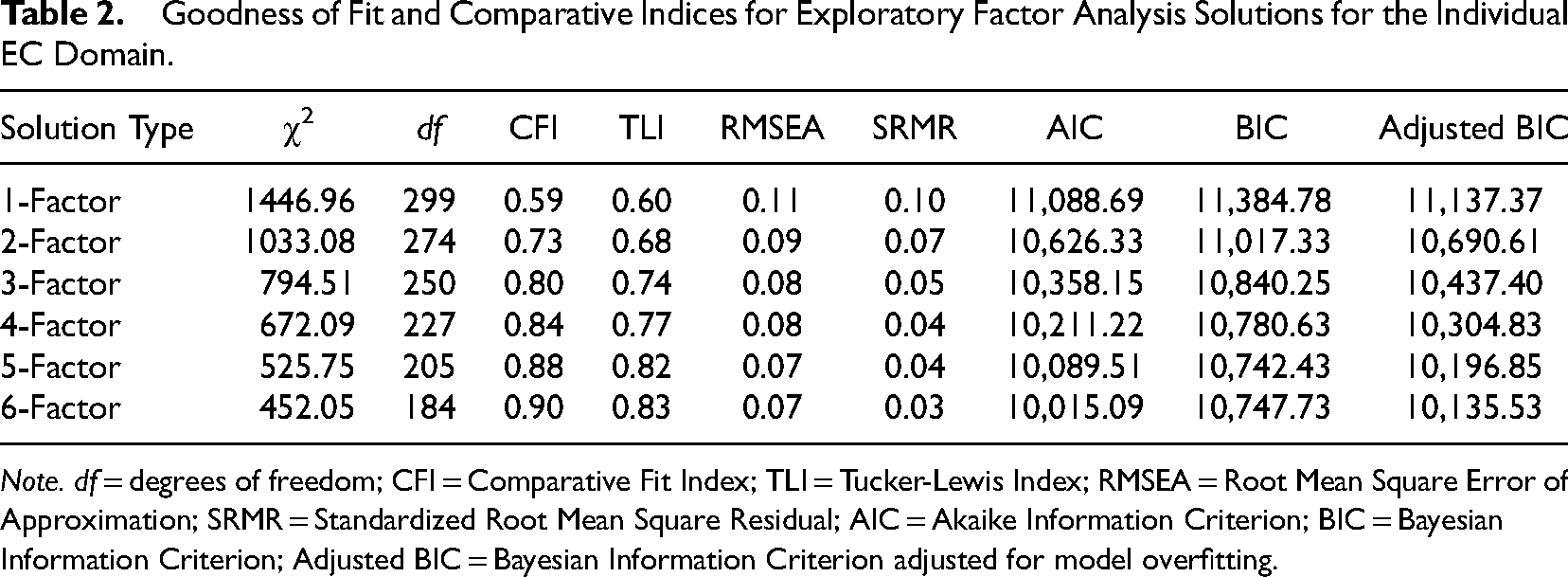

Individual Evaluation Capacity

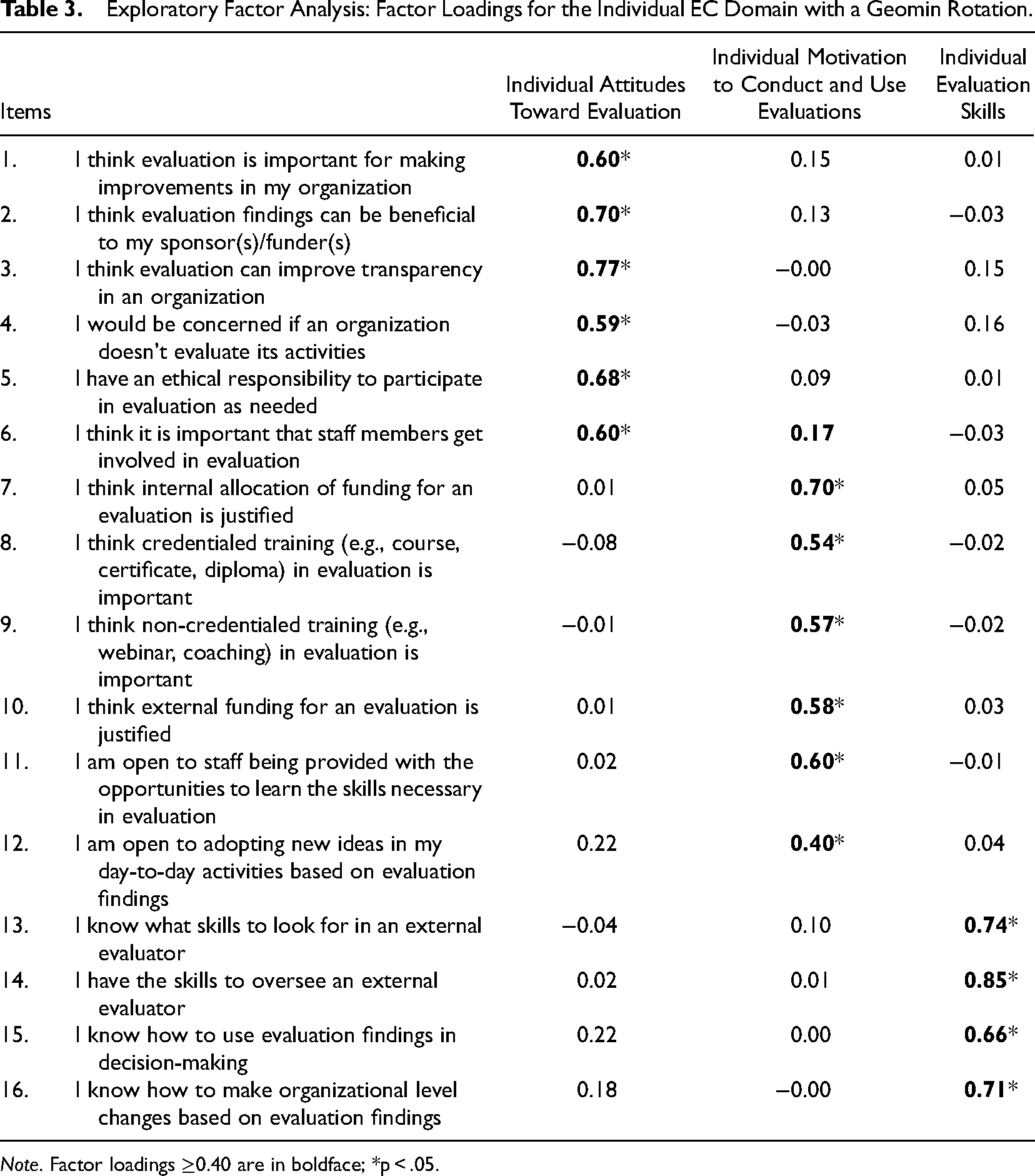

Table 2 presents the fit and comparative indices for each solution tested. According to the overall goodness-of-fit indices, the final EFA solution of the three-factor individual EC domain had a good fit with the data: SRMR = 0.041, RMSEA = 0.082 (90% CI [0.07, 0.094]), TLI = 0.82, CFI = 0.90. The chi-square test of model fit, χ²(75, n = 329) = 240.87, p < .001, was statistically significant, indicating a poor fit; however, this test is stringent and requires a perfect model fit (Brown, 2006), so it was not used to evaluate the goodness of fit for the model. Even though the fit and comparison indices provided support for five- and six-factor solutions, some of the factors in these models were measured by only one or two items, which is not adequate for measuring latent factors (Brown, 2006). Therefore, the three- and four-factor solutions provided better alternatives for further investigation, with the best solution being the three-factor individual EC domain.
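As a rough consistency check on the values reported above, the degrees of freedom and the RMSEA point estimate can be recomputed from the chi-square statistic. The sketch below assumes the standard degrees-of-freedom formula for an unrestricted EFA model and a common form of the RMSEA point estimate. The function names are illustrative, and software packages differ in details (for example, dividing by n rather than n - 1, or handling missing data differently), so small discrepancies with program output are possible.

```python
import math


def efa_degrees_of_freedom(n_items, n_factors):
    """Degrees of freedom of an unrestricted EFA model fit to a covariance matrix."""
    return ((n_items - n_factors) ** 2 - (n_items + n_factors)) // 2


def rmsea_point_estimate(chi_square, df, n):
    """Common RMSEA point estimate; some programs divide by n instead of n - 1."""
    return math.sqrt(max(chi_square - df, 0.0) / (df * (n - 1)))


# Values taken from the text: 16 retained items, three factors,
# chi-square = 240.87, n = 329.
df_individual = efa_degrees_of_freedom(n_items=16, n_factors=3)
rmsea_individual = rmsea_point_estimate(chi_square=240.87, df=df_individual, n=329)
print(df_individual, round(rmsea_individual, 3))
```

With 16 items and three factors the formula gives 75 degrees of freedom, and a chi-square of 240.87 with n = 329 gives an RMSEA of about 0.082, in line with the values reported for the three-factor individual EC solution.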

Table 2. Goodness of Fit and Comparative Indices for Exploratory Factor Analysis Solutions for the Individual EC Domain.

Note. df = degrees of freedom; CFI = Comparative Fit Index; TLI = Tucker-Lewis Index; RMSEA = Root Mean Square Error of Approximation; SRMR = Standardized Root Mean Square Residual; AIC = Akaike Information Criterion; BIC = Bayesian Information Criterion; Adjusted BIC = Bayesian Information Criterion adjusted for model overfitting.
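As a quick consistency check on the indices reported above for the three-factor individual EC solution, the sketch below recomputes the model degrees of freedom and the RMSEA point estimate from the reported chi-square value. It assumes the conventional degrees-of-freedom formula for an exploratory factor model and the common RMSEA definition with N − 1 in the denominator. The estimator and software used in the analysis are not stated in this section, so this is an illustration rather than a reproduction of the analysis. The function names are hypothetical.

```python
import math


def efa_degrees_of_freedom(n_items: int, n_factors: int) -> int:
    """Degrees of freedom for an EFA model: ((p - m)^2 - (p + m)) / 2."""
    p, m = n_items, n_factors
    return ((p - m) ** 2 - (p + m)) // 2


def rmsea(chi_square: float, df: int, n: int) -> float:
    """RMSEA point estimate (assumes the N - 1 convention in the denominator)."""
    return math.sqrt(max(chi_square - df, 0.0) / (df * (n - 1)))


# Values reported in the text for the three-factor individual EC solution.
df_individual = efa_degrees_of_freedom(n_items=16, n_factors=3)
rmsea_individual = rmsea(chi_square=240.87, df=df_individual, n=329)

print(df_individual)
print(round(rmsea_individual, 3))
```

Under these assumptions, the computed values agree with the reported 75 degrees of freedom and the reported RMSEA of 0.082.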

The individual EC domain EFA results are presented in Table 3. The three individual factors are made up of 16 items. Labels for each factor were chosen based on the items representing them. The first individual factor (items 1–6) was labelled Individual Attitudes toward Evaluation, referring to individuals’ willingness to invest their own time and resources in evaluation activities, with factor loadings ranging between 0.59 and 0.77. The second individual factor (items 7–12) was labelled Individual Motivation to Conduct and Use Evaluations, referring to an individual's willingness to participate in evaluation and positively perceive the role of evaluation for various purposes, with factor loadings ranging between 0.40 and 0.70. The third individual factor (items 13–16) was labelled Individual Evaluation Skills, referring to self-reported skills for conducting evaluations and using evaluation results, with factor loadings ranging from 0.66 to 0.85. The EFA yielded significant correlations between Individual Attitudes toward Evaluation and Individual Motivation to Conduct and Use Evaluations (r = .48, p < .05) and between Individual Motivation to Conduct and Use Evaluations and Individual Evaluation Skills (r = .46, p < .05).

Exploratory Factor Analysis: Factor Loadings for the Individual EC Domain with a Geomin Rotation.

Note. Factor loadings ≥0.40 are in boldface; *p < .05.

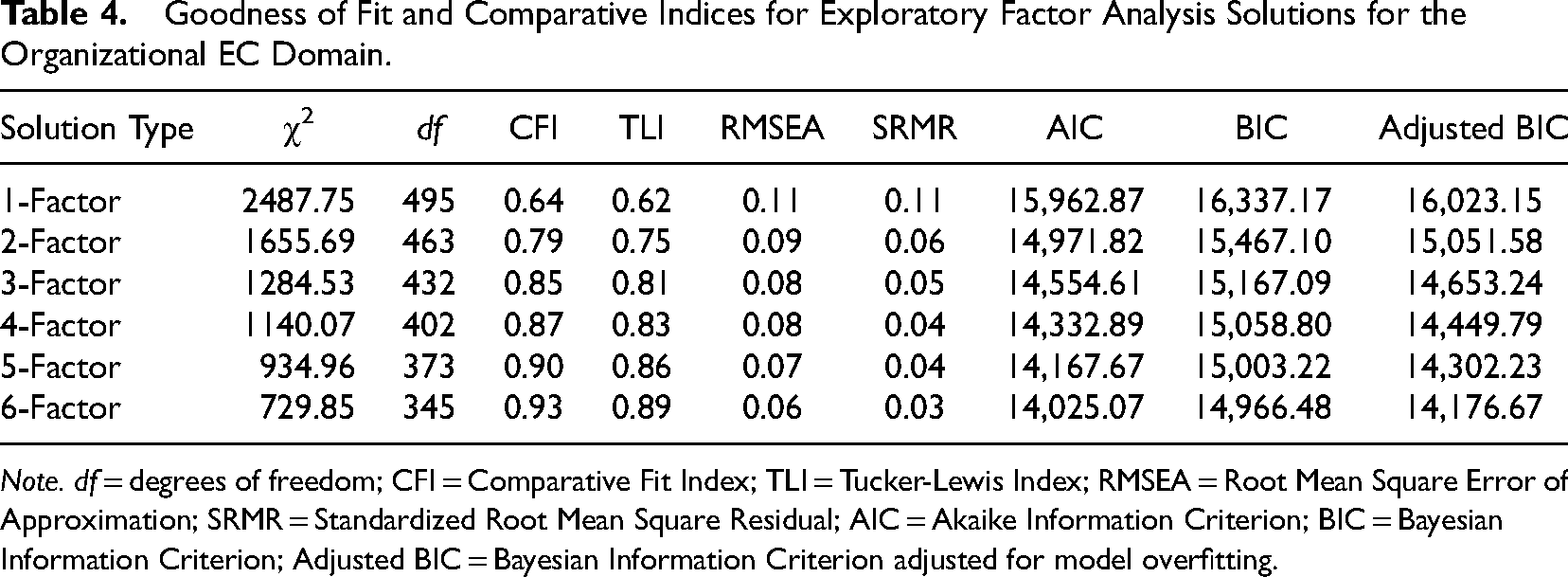

Organizational Evaluation Capacity

The fit and comparative indices are presented and compared in Table 4 for each of the six solutions tested. Examination of the item loadings revealed that the three- and four-factor solutions provide better alternatives for further investigation, similar to the individual EC domain. According to the overall goodness-of-fit indices, the final EFA solution of a three-factor organizational EC domain had a good fit with the data, SRMR = 0.022, RMSEA = 0.038 (90% CI [0.009, 0.060]), TLI = 0.98, CFI = 0.98.

Goodness of Fit and Comparative Indices for Exploratory Factor Analysis Solutions for the Organizational EC Domain.

Note. df = degrees of freedom; CFI = Comparative Fit Index; TLI = Tucker-Lewis Index; RMSEA = Root Mean Square Error of Approximation; SRMR = Standardized Root Mean Square Residual; AIC = Akaike Information Criterion; BIC = Bayesian Information Criterion; Adjusted BIC = Bayesian Information Criterion adjusted for model overfitting.

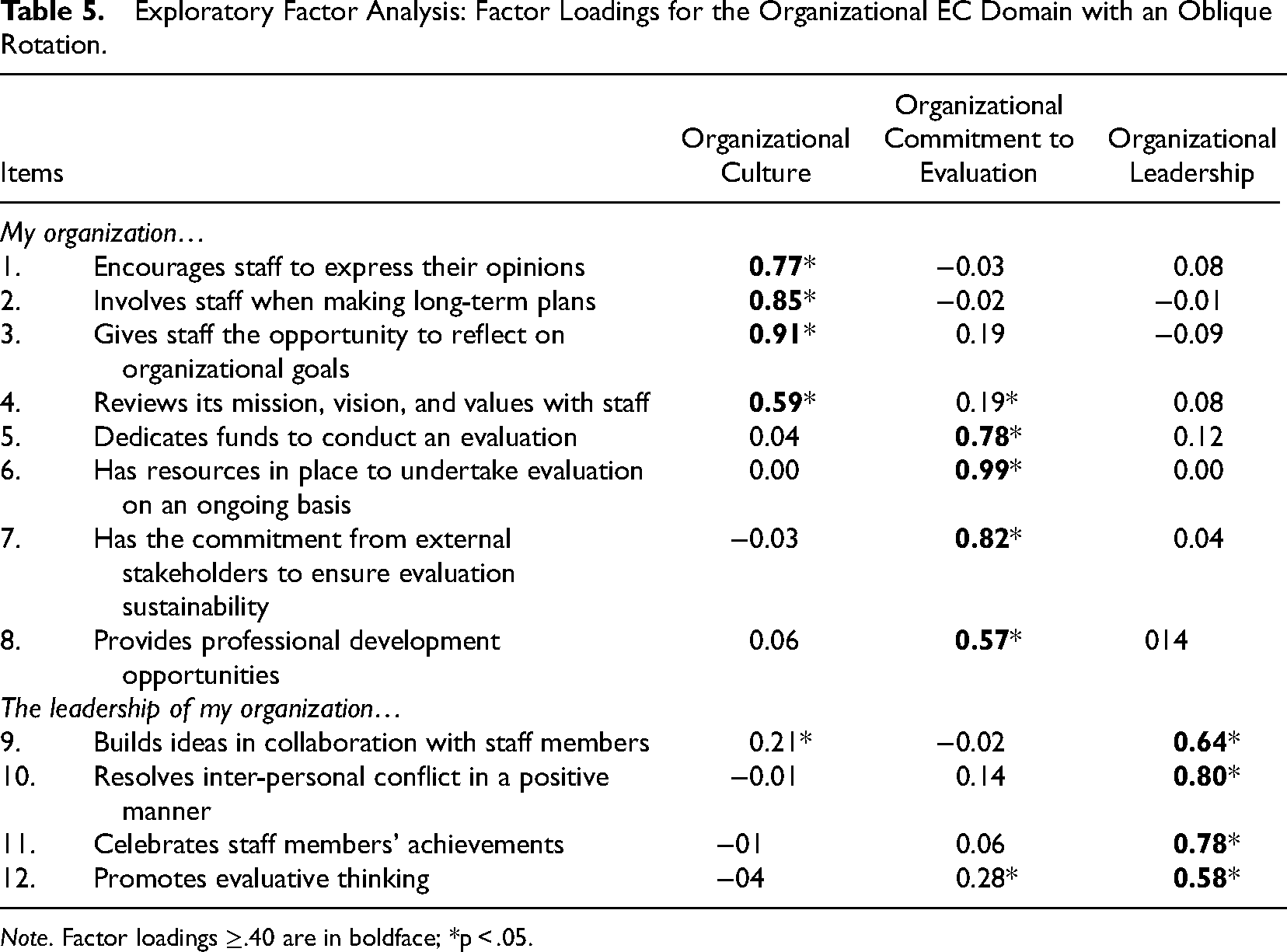

The organizational EC domain EFA results are presented in Table 5. The three organizational factors are made up of 12 items, and labels for each factor were chosen based on the items representing them. The first organizational factor, Organizational Culture (items 1–4), refers to the extent to which an environment enables evaluative thinking and collaboration, with factor loadings ranging from 0.59 to 0.91. The second factor, Organizational Commitment to Evaluation (items 5–8), refers to the resources, relationships, and expertise that organizations put in place to facilitate conducting and using evaluations. This factor had loadings that ranged from 0.57 to 0.99. The last factor, Organizational Leadership (items 9–12), refers to organizational leadership that is open to ideas and encourages risk taking, with factor loadings ranging from 0.58 to 0.80. The analysis yielded significant correlations between all three organizational factors: Organizational Culture was weakly associated with Organizational Commitment to Evaluation (r = .24, p < .05), there was a moderate to strong correlation between Organizational Leadership and Organizational Culture (r = .68, p < .05), and Organizational Commitment to Evaluation had a moderate association with Organizational Leadership (r = .32, p < .05).

Exploratory Factor Analysis: Factor Loadings for the Organizational EC Domain with an Oblique Rotation.

Note. Factor loadings ≥0.40 are in boldface; *p < .05.

ECNA Use, Scoring, and Interpretation

One important outcome of this paper is to provide access to the ECNA tool and guidance on how to practically use it to assess the EC of individuals and the organizations they work within. Essentially, this tool can be used to assess how ready individuals and organizations are to engage in evaluation activities. This tool can be completed independently, especially when assessing an individual's EC attitudes, motivations, and skills, but it can also be completed by a group of individuals who want to assess their organization's EC needs. The ECNA tool can be found on the Network webpage at the hyperlinked address and includes instructions, items, and response scales. The tool also includes a section with demographic questions that can be used to gather more information about participants’ previous evaluation experience, current roles in their organization, and the type of evaluation professional development they have received in the past and would like to receive in the future. We encourage users of this tool to ask these questions as part of the tool administration, but the questions in this section of the tool can also be tailored to the specific needs/context of the organization or field. While this tool was designed for use with early childhood practitioners and organizations, we believe it can also be used in different contexts, and share case applications of its use in the next section.

We recognize that it can be challenging for practitioners or others to effectively and easily use tools such as the ECNA in practice. To overcome this challenge, we have outlined simple and clear scoring instructions and score interpretations (see hyperlinked tool, above). In general, the lower the scores on overall and specific individual and organizational EC domains/items, the more limited an individual's or organization's EC. The higher the scores, the more EC individuals or organizations possess. For alternative scoring options that may be preferable to those with more experience in statistical analysis and research, please see El Hassar et al. (2021), where tool scoring and interpretation are described based on mean score calculations.
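The mean-score option mentioned above can be sketched as follows. The item-to-factor groupings follow the EFA results reported earlier (individual items 1–6, 7–12, and 13–16; organizational items 1–4, 5–8, and 9–12). The response values, the 1–5 scale, the function name, and the handling of skipped items are all assumptions made for illustration. The scoring instructions that accompany the tool take precedence.

```python
# Item numbers (1-based) per factor, as reported in the EFA results.
INDIVIDUAL_FACTORS = {
    "Individual Attitudes toward Evaluation": range(1, 7),
    "Individual Motivation to Conduct and Use Evaluations": range(7, 13),
    "Individual Evaluation Skills": range(13, 17),
}
ORGANIZATIONAL_FACTORS = {
    "Organizational Culture": range(1, 5),
    "Organizational Commitment to Evaluation": range(5, 9),
    "Organizational Leadership": range(9, 13),
}


def factor_means(responses, factors):
    """Return the mean score per factor, ignoring skipped items (None)."""
    scores = {}
    for label, items in factors.items():
        answered = [responses[i - 1] for i in items if responses[i - 1] is not None]
        # A factor with no answered items has no score.
        scores[label] = sum(answered) / len(answered) if answered else None
    return scores


# Hypothetical responses from one participant (assumed 1-5 scale; item 10 skipped).
individual_responses = [4, 5, 4, 3, 4, 4, 3, 3, 4, None, 3, 2, 2, 1, 2, 3]

individual_scores = factor_means(individual_responses, INDIVIDUAL_FACTORS)
for label, score in individual_scores.items():
    print(label, score)
```

In this hypothetical profile, the lower mean on Individual Evaluation Skills relative to the other two factors would point to skills training as a starting place for capacity building.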

ECNA Case Applications in Sectors That Support Children and Families

The ECNA tool has already shown promise within and beyond the early childhood field when assessing individual and organizational capacity. Below, we briefly showcase three Canadian capacity-building case applications, presented in chronological order. The first case application describes a national partnership to provide ECB opportunities (full-day workshops, webinars, online resources) to individuals and organizations working in the refugee settlement sector in Canada (Janzen et al., 2022). The second case application involved supporting a Mentoring Partnership in the Canadian province to build EC in three large mentoring organizations that provide supports and resources to children within and outside of school contexts (Gokiert et al., 2024; Keys et al., 2024). The final case application involved supporting a First Nation School Board (see www.fnsb.ca) in Canada to co-create an evaluation plan with School Board leadership (Executive Director, Literacy Director, Principals, and teachers) to evaluate their strategic education plan with a specific focus on early literacy. For each case application, we describe the EC initiative and how the ECNA tool was used to inform the development and implementation of the EC initiative.

Building the Evaluation Capacity of the Refugee Settlement Sector

The Network, in partnership with a Canadian community-based research organization, received funding to build EC for individuals and organizations in the refugee settlement sector. We made the ECNA tool available on the project website (www.eval4settlement.ca) and encouraged participants to complete it prior to attending full-day community-based evaluation training workshops, webinars, or coaching sessions that were offered across Canada from 2018 to 2021 (see Janzen et al., 2022). We used the results, which indicated sectoral needs and strengths, to develop or modify our targeted strategies and resources to build EC in the refugee settlement sector. We administered additional surveys, including some items from the ECNA tool, at the end of each year of the project with participants that had attended any of our tailored evaluation capacity strategies (n = 341). The survey was used to assess participants’ perceptions of their acquired knowledge and skills in planning and conducting evaluations in community-based settings. We found that the impact was greater at the individual rather than the organizational level. For instance, participants stated that the project enhanced their knowledge about the importance of involving clients in the evaluation process as well as building a culture of evaluation in their organization. This project was also successful in equipping participants with the necessary skills and tools to act on their learnings and start planning or conducting their evaluations. For example, some participants stated that they involved clients with lived experience as members of evaluation steering groups, as evaluation participants, or as community researchers in their evaluations. 
Some individuals and organizations indicated that they still need more support to confidently conduct evaluations on their own, particularly in the following areas: (1) examples of evaluations in the resettlement sector, including approaches and strategies; (2) more workshops, coaching sessions, and training on implementing community-based evaluations; and (3) more in-depth training on each phase of evaluation, especially data collection and analysis.

Building Evaluation Capacity with Child and Youth Mentoring Organizations

A large mentoring organization, the BGC Big Brothers Big Sisters of Edmonton and Area, participated in a 1-week intensive university program planning and evaluation course offered through the University of Alberta. The purpose of the course was to learn about evaluation while co-creating an implementable evaluation plan (Gokiert et al., 2021). The organization wanted to bring this opportunity to more mentoring organizations across the province, leading to a partnership between the local university and a larger umbrella mentoring collaborative (The Alberta Mentoring Partnership) representing 400+ mentoring organizations. The purpose of the partnership was to create a 6-month ECB intervention called E-Eval. The first 3 months of the intervention consisted of teaching the knowledge and skills mentoring organizations need to design an evaluation, including data collection strategies. The remaining 3 months were devoted to implementing the design, with support (i.e., individual and group coaching). For more information on this initiative, see Keys et al. (2024). Distinct learning cohorts have participated in two offerings of this intervention (2020–2021 and 2022–2023). In the second iteration, there was a desire to embed more Indigenous and culturally responsive approaches to evaluation in the ECB curriculum because of the nature of the participating organizations and the children and youth they serve. Each learning cohort completed the ECNA tool pre- and post-intervention to assess individual and organizational EC (Keys et al., 2024). Data from this assessment showed that after both offerings of E-Eval, participants felt that their individual evaluation skills and behaviours had substantially improved, as had their organization's capacity to effectively conduct evaluations and use evaluation results. In focus groups, participants shared that their expanded knowledge of Indigenous approaches in evaluation has helped them reframe evaluation planning and implementation.

Building the Evaluation Capacity of a First Nation School Board

In 2022, an FNSB in Canada approached the Network for support with evaluation design and implementation of a new early literacy program across their 13 schools. We borrowed heavily from the 6-month EC curriculum described above, and tailored it to an intensive 2-month training (four biweekly training sessions and four online modules), with follow-up coaching sessions each month for the remaining 4 months to best meet the unique needs and desires of the FNSB. Before we started the training, we had the leadership (i.e., Executive Director, Literacy Lead) complete the ECNA tool individually and then discuss it collaboratively in light of their understanding of their organizational EC and what approaches would be most effective as we moved forward in our work together (e.g., what they needed to learn, what support they needed, and what time and capacity they had to devote to the EC opportunity and beyond). The collaborative discussions revealed that while their individual EC was high as it related to student evaluation and assessment, their knowledge of program evaluation was limited. The School Board was just forming, so organizationally there was very limited capacity, but there was a significant openness to learning, evaluative thinking, and reflective practice. The use of the ECNA tool in this context is different from the examples shared above, but proved incredibly useful as a collaborative discussion tool that could be used to tailor EC training for leadership and their broader staff and community partners (e.g., teachers, principals, community members, and literacy coaches), who were engaged at different points throughout the 6 months of the EC initiative.

Discussion and Implications

In this paper, we present a CBPR approach to the development and validation of the ECNA tool and offer practical guidance for its use by organizations in early childhood and the broader social-serving sector. We also make the ECNA and its scoring protocol available, thereby advancing theory and practice in EC assessment while carefully considering the specific individual, organizational, and social-serving sectoral contexts in which the tool is intended to support ECB efforts.

A Novel CBPR Approach to EC Tool Development, Validation, and Use

In describing our novel CBPR approach, we highlight the essential role of intended users as well as content experts in the development and validation of EC tools. To enhance replicability, we elaborate on the truncated description of the systematic and collaborative development and validation procedures that was previously reported in El Hassar et al. (2021). In this way, we provide a detailed description of collaborative procedures not yet seen in other EC tool descriptions (Nielsen et al., 2024).

Unique to the development of the ECNA tool, context was integral throughout the validation process. The bulk of research in the EC area has focused more on defining and conceptualizing EC or ECB, and less on EC measurement and contextual relevance (Labin, 2014; Preskill, 2013; Taylor-Ritzler et al., 2013). For instance, some studies that created and validated EC frameworks and/or tools have made efforts to test the validity of their frameworks/tools (e.g., assess face validity, construct validity, convergent validity; Cousins et al., 2008; Nielsen et al., 2011; Taylor-Ritzler et al., 2013). However, the intended setting and environment for a tool's use are usually not considered in the validation process (Taylor-Ritzler et al., 2013). When the context is considered in these tools, very little information is provided about how this context influenced the tool's development and validation. Compared with previous efforts, the ECNA provides early childhood and social-serving organizations with access to a tailored, field-specific framework and tool. It enables users to gather information to make informed decisions to improve their EC and track improvement over time.

The CBPR approach offers promising collaborative processes for tool development and validation that enhance organizations’ ownership and use of EC tools. A collaborative process operationalizes the CBPR principles, in which meaningfully engaging the community deepens partners' commitment, motivation, and buy-in toward behavioural change (Mikesell et al., 2013). Leveraging the Network, as well as engaging diverse individuals and community organizations during ECNA development, significantly shaped the tool and its use to meet specific early childhood organizational needs. This is important because tools in other fields (particularly health education and health equity) have demonstrated promise in their use and influence when developed through a collaborative process (e.g., Brush et al., 2020; Henderson et al., 2013; Poureslami et al., 2011).

There is a growing recognition of the role that EC measurement can play in building strategies for organizational capacity to both conduct and use evaluation. However, a recent scoping review found a disconnect between the knowledge that organizations gain from a given EC tool and their ability to apply it to inform EC strategies within their organization (Bourgeois et al., 2023). The review's authors noted that an applied component, in which participants engage in the process of evaluation, boosts EC uptake at the organizational level. In the same way, by engaging members of the early childhood sector in developing the ECNA tool, its users were invested in its development. We anticipate that this collaborative approach will subsequently enhance both the ability and the motivation of these members to implement the tool.

Use of the ECNA Tool Within the Early Childhood and the Social-Serving Fields

In presenting our validity evidence for the ECNA tool, we demonstrate its content and construct validity within the early childhood field, while our case applications provide examples of its use more broadly within sectors that serve children and families. By identifying individual and organizational EC factors, we make theoretical assumptions explicit and measurable. Together, our results provide a basis for evaluating measurement models for both the individual and the organizational EC domains, using additional analyses to gather more validity evidence about the ECNA tool (e.g., CFA). For example, a previous study (El Hassar et al., 2021) explored the validity of the ECNA tool using CFA with a smaller subset of the current sample, and examined direct and indirect relationships among individual and organizational EC domain factors.

Because we drew on existing EC frameworks (i.e., Preskill & Boyle, 2008) and measurement tools (i.e., Cousins et al., 2014) from the literature, it is not surprising that the ECNA shares some similarities with other EC tools developed beyond the early childhood field. For example, like instruments developed for the Canadian and US contexts (e.g., Bourgeois et al., 2013; Cousins et al., 2008; Taylor-Ritzler et al., 2013), the ECNA assesses organizational commitment to evaluation, organizational culture, and organizational leadership. Organizational commitment is operationalized primarily through the availability of organizational resources that enable evaluation work. While the broader construct of organizational commitment includes leadership support, learning climate, and external influences, our tool focuses specifically on the resources dimension because it is the aspect most feasibly and reliably assessed across diverse early childhood organizations.

Our conceptualization of organizational culture, referring to the extent to which an environment enables evaluative thinking and collaboration, is similar to what Taylor-Ritzler et al. (2013) call Learning Climate, which focuses on openness to new ideas, staff involvement in long-term plans, and the practice of reflection. However, it is worth noting that our conceptualization of organizational culture reflects insights generated through mixed-methods community-based participatory research that integrated quantitative findings from the ECNA tool with qualitative themes from community consultations (Gokiert et al., 2022). We also encourage organizations that adopt the ECNA tool to discuss and articulate their own understanding of organizational culture, using the tool's questions to guide their reflection.

Our tool also emphasizes organizational leadership to assess whether leaders are inclined to collaborate with their staff, resolve conflicts positively, celebrate achievements, and promote reflective thinking. The organizational leadership attributes included in the ECNA tool have been reported by other studies to be valuable for conducting and using evaluations (Cousins et al., 2004; Labin et al., 2012). While others have also included organizational leadership as an EC component (e.g., Bourgeois & Cousins, 2013; Fierro, 2012; Taylor-Ritzler et al., 2013), it has often been defined differently. For example, Taylor-Ritzler et al. (2013) operationalize organizational leadership in terms of the quality of tasks completed by organizational leaders (e.g., clearly communicating goals and the organizational mission), which applies a slightly broader lens to the concept of leadership than the ECNA tool employs.

Our provision of the 28-item

Unlike Taylor-Ritzler et al. (2013) in their EC instrument development and validation for use with nonprofits, we included key demographic questions in our tool (e.g., size of participants’ organizations, years of evaluation experience, position) to facilitate the interpretation of results and the examination of how these characteristics may influence response patterns across social-serving sectors. Taylor-Ritzler et al. (2013) asserted that without these types of participant and organizational demographic characteristics to provide valuable context, caution would be necessary when interpreting results. Following their guidance, we included demographic questions in the ECNA to boost the reliability of results and their subsequent value to users, which also enhances the use of the ECNA in research as a tool for repeated measures over time or over the course of interventions.

The ECNA tool can be customized at both the individual and organizational levels and is adaptable to the needs and structure of organizations. For example, an organization may choose to have each staff member complete and score the individual and organizational portions, then discuss the results together to generate strategies to build their individual and organizational EC. Alternatively, staff can complete the individual portion on their own before completing the organizational questions as a team, deciding together how to rate each item. To facilitate discussion and accommodate varying perspectives on an organization's EC, these discussions are best led by someone within the organization with evaluation experience or by an external evaluator. Guided by the tool, the process of reflecting on and discussing individual and organizational capacity to both conduct and use evaluations can serve as an intervention in its own right. This process is often as valuable as the tool's results and highlights the ECNA's utility for research projects aligned with CBPR principles. This use of the ECNA tool was illustrated in our third case application, which involved building capacity in partnership with an FNSB. It would be important to explore this use of the tool further through additional data collection, similar to the multi-case study approach Bourgeois et al. (2015) used across levels of government and with a nonprofit.

Practical guidance on EC tool use is lacking (with some exceptions, such as Bourgeois et al. (2013), who promote this approach when using tools to measure organizational EC). Once an organization has information on areas of strength and areas needing improvement, it is important to transform scores into strategies for building EC, both for individual team members and the organization as a whole. It may be expedient to prioritize organizational EC first, as this approach will allow important organizational characteristics, such as a strong evaluation culture and supportive leadership for evaluation, to become established. This can ultimately create a solid foundation to support efforts to build individuals’ EC over time. Several resources, available in multiple formats, support both organizational and individual ECB. For example, Preskill and Russ-Eft (2015) have compiled several structured activities in their book to support individuals in both deepening their knowledge and applying principles of evaluation design and implementation. Further, as part of the [network name omitted for blind peer review]'s repository, a curated list of EC-related resources is available and applies to individuals or organizations. The resource list identifies ECB resources that are specifically relevant to early childhood development organizations, as well as those that apply to a more general audience. These resources include videos, web-based options, including resource libraries and online activities, and additional evaluation tools and instruments. A recent scoping review of EC also highlighted some unique approaches, such as establishing peer-to-peer communities of practice and drawing on evaluation instrument resource databases (Bourgeois et al., 2023). These resources can extend the ECNA scope by building on the tool's results to create and implement customized ECB strategies.

Limitations and Future Directions

Our findings should be considered in light of two limitations related to our community participant sample and study timeframe. First, the CBPR approach to the development and validation of the ECNA tool drew upon a sample of individuals and organizations from the early childhood and social-serving sectors that support children and families from one province in Western Canada. Future research should expand the sample within other early childhood and social-serving organizations across Canada and internationally, to test the generalizability of the ECNA tool. Applying the tool in various contexts within and outside early childhood, and gathering feedback from users, would help to refine and adapt the guidance to better support the tool's effective use. This approach aligns with Nielsen et al.'s call for “broadening the populations and settings in future validation studies” to allow for a better understanding of applicability across contexts, and benefits and use in ECB efforts. The validity and relevance of the tool and practical guidance, then, are uniquely strengthened by the community's contributions. In future work, we plan to assess how the ECNA tool performs when used by more geographically diverse participants. Such an investigation might elucidate the degree to which context influences the conceptualization and measurement of EC in closely related settings.

The second limitation is that the study timeframe limited our ability to test the structure of the EC over time with diverse early childhood and other social-serving organizations. As organizations invest time and money to develop their EC, a tool that allows organizations to track the impact of their ECB efforts over time would contribute to creating evidence-based strategies that target EC areas needing improvement (Taylor-Ritzler et al., 2013). We believe that there is an opportunity to use this tool to periodically reassess the EC needs of individuals and/or organizations, monitoring growth and the development of any new EC over time (e.g., changes in EC needs that may arise from changes in social or political climates). This can be accomplished by examining the degree to which the tool can accurately capture change in individual and organizational EC domains over time. Such an investigation would require evaluating the factor structure and longitudinal measurement invariance of the individual and organizational EC domains (Schumacker & Lomax, 2012). In addition, qualitative interviews and focus groups with individuals and organizations directly involved in the EC intervention would capture experiences of change over time (Bourgeois et al., 2015).

Conclusion

Evaluation is crucial to help meet organizational demands for accountability in early childhood environments with limited time and resources, yet the knowledge and skills necessary for effective evaluation often remain elusive. In response, we developed and validated the Evaluation Capacity Needs Assessment (ECNA) tool using a community-based participatory approach with diverse experts including early childhood organizations, measurement and evaluation specialists, and community representatives. The ECNA tool is unique in the procedures used for its development and validation, its availability on our Network website, and the practical guidance we provide for its use as a psychometrically valid tool to assess individual and organizational EC. Prioritizing context in developing and validating EC tools is critically important given the sociopolitical, cultural, and economic factors that affect an organization (Al Hudib & Cousins, 2022). We are encouraged by the initial uptake of the ECNA and look forward to exploring how it can be customized for use by other organizations both within and beyond the early childhood development field. This article serves as an essential reference for both processes and outcomes that practitioners, organizations, and scholars can use when seeking to contribute to the EC literature (e.g., measurement, interventions, theory, use). As a validated tool based on previous frameworks and using a CBPR approach, the ECNA holds the potential to be expanded and adapted both methodologically and practically so that it can be applied to other contexts and fields.

Footnotes

Acknowledgements

We would like to recognize the contributions of Btissam El Hassar as a graduate research assistant on this project.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported in part by a Social Sciences and Humanities Research Council (SSHRC) Partnership Development Grant and Partnership Grant (grant numbers 890-2013-0103 and 895-2019-1009).