Abstract

Evaluation can be a means for improving challenging problems and transforming systems in addition to determining worth. In the past decade, the field of evaluation has embraced more formative modes of evaluation, including systems thinking and complexity science approaches, that seek to improve a particular problem, situation, or system within which the problem is situated. As such, the evaluator’s initial role may be one that supports interest holders in identifying and defining a problem before seeking to improve it. However, the evaluation literature is limited in studies and discussions about problem definition. Through an illustrative case study, this research demonstrates how to facilitate problem definition in a systems change approach known as Problem-Bound Evaluation. The findings emphasize a need for structured yet flexible facilitation when using the tools and embracing multiple perspectives to understand a problem.

Keywords

Evaluators can serve as facilitators of social change. While this is nothing new, in the past decade or so, we have seen more pronounced recognition of evaluation as a means for innovation and transforming systems (Gates et al., 2021; Patton, 2011; Patton, 2019), improving persistent societal problems (Rohanna & Christie, 2023), and embracing formative approaches, such as improvement science and developmental evaluation, that are more focused on learning and adaptation than determining worth (Gates et al., 2021; Rohanna, 2022). Thus, for some, the role of the evaluator is moving away from one that typically bounds their inquiry to valuing and improving programs, policies, and processes to one that seeks a learning and adaptation orientation to improve problems and transform systems (Eoyang & Oakden, 2016; Gates et al., 2021; Rohanna & Christie, 2023).

This is especially true for evaluators engaging in systems thinking and complexity science (STCS) evaluation approaches. While STCS are distinct fields, the combination of these terms recognizes the various evaluation approaches that incorporate key systems and complexity concepts (Gates, 2016). These approaches help evaluators understand systems as real-world entities or as conceptual frameworks that guide the application of system analysis methods and adaptation to emerging systems dynamics (Bamberger et al., 2016; Gates, 2016; Rohanna & Christie, 2023; Senge, 2006; SETIG, 2018; Stroh, 2015). They also aid in comprehending complexity as a property of systems or problematic situations, which includes the complexity of interrelationships among system actors who identify and define the problematic situation (Gates et al., 2021; Rohanna & Christie, 2023).

In evaluation, this shift toward STCS represents a recognition of the complexity involved when using evaluation as a means for the betterment of society. More traditional modes of evaluation that center the program rather than the problem or system can be limiting if evaluators assume the program is operating within stable, organized system dynamics with predictable cause-and-effect linkages rather than in complex systems with uncertainty and unpredictability (Bamberger et al., 2016; Moore et al., 2019; Parsons, 2012; Rohanna & Christie, 2023). To improve persistent societal problems, evaluators need to center the problem (Patton, 2019; Rohanna & Christie, 2023; Walton et al., 2021) and build “capacity for a more iterative, adaptative approach” (Gates, 2016, p. 71).

Bounding evaluation to the problem rather than the program supports systems thinking and complexity because it creates space to continually learn how the system responds, i.e., what emerges, and adapt when new interventions are undertaken to improve a problem (Rohanna & Christie, 2023). And, symbiotically, it is crucial to acknowledge and understand the dynamics of the complex systems in which a problem is situated. The focus is not only on the issue but also on changing the system. As such, the evaluator's role in improving social problems broadens to one as a facilitator of learning, adaptation, and change (Gates, 2016; Rohanna & Christie, 2023).

In this role, the evaluator must first facilitate the defining of the problem by multiple interest holders. Although seemingly straightforward, problem definition can be in “itself problematic” (Archibald, 2020, p. 7). Problems to be improved are often represented as static, objective, data-informed challenges, commonly determined by organizational leaders and conceived as obvious to all (Archibald, 2020; Gates, 2016; Rohanna & Christie, 2023). Yet, social problems are perceptions, and thus, can differ depending upon who has a voice in defining them (Gates, 2016; Rohanna & Christie, 2023; Rossi et al., 2004). Therefore, for evaluators who facilitate STCS approaches for improving problems and the system in which they are situated, the importance of this crucial first step of defining the problem cannot be overstated. Even so, as Archibald (2020) indicates, discussions of problem definition are not common in evaluation literature.

We seek to add to the sparse literature base on problem definition in evaluation by providing a descriptive, illustrative case study of defining a problem within one STCS approach, problem-bound evaluation (PBE) (Rohanna & Christie, 2023). Using a PBE framework (Rohanna & Christie, 2023), we demonstrate how the evaluator (first author on this paper) used a participatory systems thinking approach with one school team to define and understand a problem for improvement and to develop a framework for improving it. While this case does not encompass the full PBE framework, it does illustrate a practical and accessible approach for evaluators to facilitate problem definition, including an understanding of system patterns and the system in which the perceived problem is situated, as a first step of STCS approaches.

By doing so, we also hope to address the need to engage in and use STCS theory and methods in practice more deeply (Bamberger et al., 2016; Barbrook-Johnson et al., 2021; Torres-Cuello & Pinzon-Salcedo, 2022; Walton et al., 2021). While Barbrook-Johnson and Penn (2021) offer a practical participatory system mapping tool, the evaluation literature is limited. Barbrook-Johnson et al. (2021) argue for documenting STCS approaches in evaluation and making them “accessible and practical” (p. 13); and, as noted and evidenced by Walton et al.'s (2021) Systems and Complexity-Informed Evaluation: Insights from Practice (New Directions for Evaluation, v170), there is a need for more systems change evaluation studies that aim to bridge theory and practice. Therefore, this paper also seeks to add to the evaluation literature in this way. The first part of this article provides more context and detail regarding problem definition in STCS approaches in evaluation, as well as background on PBE. Next, we introduce the methods and case before detailing the findings. Lastly, we end with a discussion of findings and implications.

Problem Definition in STCS Approaches in Evaluation

In short, problem definition is as it sounds: a determination of a specific condition to be improved, including for whom and within particular contexts (Archibald, 2020; Gates, 2016; Lipsey, 1993). In many program evaluations, the problem is already formulated before the evaluator enters the process, and the evaluation's focus is on the “solution” or program to improve the problem. Yet for many evaluators who are seeking a learning and adaptation orientation and/or STCS participatory approaches to support social problem solving, problem definition can be a crucial step in the evaluation (Gates, 2016). Thus, it is important to heed Archibald's (2020) warning that problem definition can be “problematic.” In his literature review of problem definition in evaluation, he cites Rossi et al.'s (2004) statement that social problems are “social constructions” rather than “objective phenomena” (p. 107) and forewarns evaluators not to treat problems as “self-evident.”

The process of defining a problem, although seemingly straightforward, is anything but (Gates, 2016). Social problems may be perceived differently depending upon someone's positionality, identity, or life experience, and rely on a “particular people in a particular place and time” (Gates, 2016, p. 65). Treating problems as static, objective, and self-evident, and thus not critically considering how they are conceived and by whom, risks not only solving the “wrong problem” but also further marginalizing the interest holders evaluators seek to serve when deficit framings of the problem are used (Archibald, 2020; Gates, 2016; Kirkhart, 2010; SenGupta et al., 2004).

In participatory STCS evaluation approaches, understanding core systems thinking concepts can support evaluators in facilitating problem definition among a team of interest holders. Using Meadows’ (2008) definition, we consider a system as “an interconnected set of elements that is coherently organized in a way that achieves something” (p. 11). Systems include three components: elements, interconnections, and a perceived function or purpose (Meadows, 2008; Rohanna & Christie, 2023). Rohanna and Christie (2023) added the term perceived to Meadows’ three components to acknowledge the various philosophical perspectives on systems and, specifically, to recognize the need for multiple perspectives and considerations of whose voice and values are privileged when seeking to understand a particular system and its purpose.

These ideas connect directly to the core system concepts of perspectives, boundaries, interrelationships, and dynamics (SETIG, 2018; Williams & Imam, 2007). In 2018, the American Evaluation Association's Systems in Evaluation Topical Interest Group (SETIG) released a document entitled Principles for Effective Use of Systems Thinking in Evaluation. It provides guidance for evaluators seeking to use systems approaches in evaluation. Although the principles based on the four core system concepts cannot be separated from each other (SETIG, 2018), we elaborate below on specific operating principles within each one as they are particularly germane to defining problems when considering them as problematic situations that rely on a “particular people in a particular place and time” (Gates, 2016, p. 65).

Multiple Perspectives and Considerations of Whose Perspectives are Included

Under SETIG's Perspectives Operating Principle P1, they counsel: “Identify and represent diverse perspectives and the values on which they are based. Seek dissent as well as consensus” (p. 10); and under Perspectives Operating Principle P2, they advise: “Attend to the types of power associated with each perspective and consider the consequences” (p. 10). A critical consideration of whose diverse perspectives to include, or whose are left out, means attending to the boundaries principles as well. Evaluators should “critically deliberate on, set, and explain the boundaries and boundary decisions that relate to the situation being evaluated and the evaluation itself” (SETIG, 2018, p. 11). Particularly relevant to problem definition, Boundaries Operating Principle B1 states, “Identify key boundaries that influence, and should influence, the situation being evaluated and the evaluation itself” (p. 11). Thus, as evaluators seek to ameliorate the potentially “problematic” process of defining problems (Archibald, 2020), they need to critically reflect on who participates in the defining of problems, including who is left out, and who makes the decisions about who is included within the bounds of the evaluation and why, as it is not usually the evaluator. The evaluator's role, then, may be helping decision-makers “see” who is being privileged or marginalized and the potential consequences of each, along with preparing how to facilitate potential power dynamics within the evaluation activities so that the multiple perspectives of the group emerge (Rohanna & Christie, 2023).

Facilitating Activities to Define and Understand the Problem and System

Under SETIG's Interrelationships Operating Principle I1, the authors state that evaluators should “identify, capture, map, and track key interrelationships that influence, could influence, and/or should influence the evaluand and the evaluation itself” (p. 10). Furthermore, Dynamics Operating Principle D1 counsels, “Consider how dynamics…interact to create patterns that are nonlinear and multi-directional to help understand how dynamics shape the systems relevant to the evaluation” (p. 11); and Dynamics Operating Principle D3 advises, “investigate how observers’ worldviews and their judgements about useful and convenient representations of system behavior influence the conceptualizations of dynamics” (p. 11). Attending to these principles, and incorporating multiple perspectives, suggests that evaluators need to facilitate causal systems activities that examine and unpack system behaviors, patterns, and mental models that are contributing to the perceived problem and the potentially unintended system design. Mental models, as defined by Senge (2006), are “our deeply ingrained beliefs, assumptions, values, and generalizations that help us makes sense of the world” and shape our individual and system behaviors (Rohanna & Christie, 2023, p. 4).

While the SETIG principles provide useful guidance, the question remains: What does facilitation of problem definition look like in practice? PBE, the STCS approach in which this case is grounded, provides a framework for enacting these principles.

Problem-Bound Evaluation

PBE is a participatory STCS approach that centers the perceived problem as the evaluand rather than the program (Rohanna & Christie, 2023). The approach prioritizes adaptation and learning through emergence when implementing new “programs” to improve the perceived problem and system. PBE incorporates improvement science, systems thinking, and the complexity science framework of Complex Adaptive Systems (CAS). As we have already defined systems thinking, we will not redescribe it here; however, for readers who are unfamiliar with improvement science and CAS, we briefly describe them. Improvement science, born out of quality improvement in manufacturing and now prevalent in health care and education, is a methodological approach for identifying, understanding, and improving problems and the system in which they are situated; essentially, it is the “science of improvement” (Hinnant-Crawford, 2020). Complex Adaptive Systems is a framework that describes system dynamics from organized to complex to unorganized based on a range of two factors: agreement among actors and certainty or predictability of the cause-and-effect relationships among actions, conditions, and consequences (Parsons, 2012; Rohanna, 2022; Stacey, 1996; Zimmerman et al., 1998; Zimmerman & Dooley, 2001).

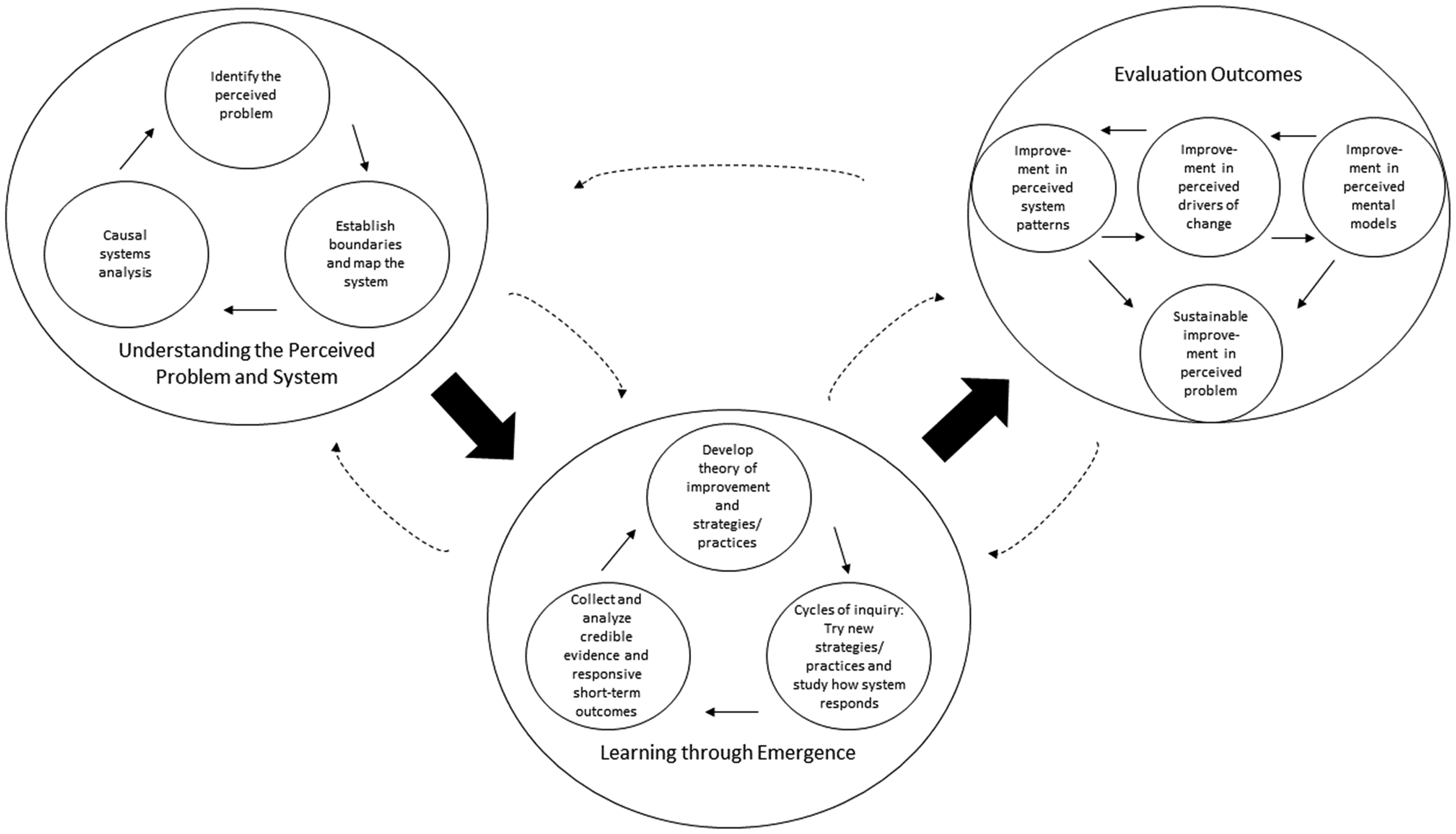

Building upon these frameworks, PBE has three components (Figure 1): understanding the perceived problem and the system, learning through emergence, and evaluation outcomes. Importantly, this model is not linear (Rohanna & Christie, 2023). The learnings within and among the components are iterative and inform each other. Even so, as Rohanna and Christie (2023) explain, the process typically begins with identifying and understanding the perceived problem to be improved. Within this component, the evaluator supports the critical reflection of who to include on the team to improve a problem and system, and then facilitates systems thinking and causal analysis activities to identify and frame a problem from multiple perspectives, map the system within which it is situated, and deepen understanding of the problem and the system before developing strategies to improve it. Once this understanding is developed, the team develops a theory of change and relevant strategies and practices for how to improve the problem, testing them through cycles of inquiry guided by evaluation questions. The team collects credible evidence to understand how the system responds and to guide the next steps. In addition to short-term outcomes informing these cycles, the process aims to improve evaluation outcomes related to system patterns, system drivers, relevant mental models, and the problem itself.

Figure 1. Problem-bound evaluation model.

While other STCS approaches, such as Adaptive Evaluation (Eoyang & Oakden, 2016) and Developmental Evaluation (Patton, 2011), attend to complexity, adaptation, and learning through emergence, PBE is unique in that the problem is the evaluand rather than a program or innovation. By centering the problem to be improved, PBE creates space for strategies and practices or “programs” to change based on how the system responds and whether the problem improves. PBE, building on the work of others (Parsons, 2012; Senge, 2006), also explicitly attends to changing system patterns and mental models to transform systems, and thus considers those underlying system structures as outcomes to improve. The explicit recognition of system patterns and mental models as concrete outcomes is another potential, albeit theoretical, benefit of PBE compared to many other STCS approaches, even though they incorporate these concepts. One notable limitation of this approach is that it requires an intensive time commitment on the part of both the evaluator and the team.

In this case, PBE provides a beneficial approach for illustrating problem definition in practice because of its explicit focus on evaluation activities seeking to understand the problem and system. Furthermore, it acknowledges that these understandings are perceptions informed by those who are included in these activities.

Methods

This study utilized a qualitative, descriptive, illustrative single case study of an evaluator facilitating one STCS approach—PBE—with a diverse school team to improve a local problem. Specifically, the case is bounded by the three workshops. It illustrates how the evaluator, throughout the workshops, facilitated the process of problem definition using accessible and practical systems thinking tools and practices. This case was selected because it provides a potentially typical or “common case” situation of facilitating systems thinking practices in an education setting (Hayes et al., 2015; Yin, 2014). The following research questions guided our study.

1. How did the evaluator facilitate the process of problem definition using systems thinking tools and practices?
2. What helped to facilitate the process?
3. What challenges emerged in the process, if any?

Although the questions are narrow, we present our results as an illustrative case study with a detailed description (Hayes et al., 2015) to increase evaluators’ understanding of how to facilitate problem definition as part of an STCS approach in a real-world context (Yin, 2014).

The Case and Context

In 2022, the evaluator, one of the authors of this article, conducted three half-day workshops with a team from Washington Middle & High School (pseudonym) to identify a problem and develop an approach for improving it using a systems thinking lens. Washington is a 6th to 12th grade school, with approximately 500 total students in 2021/22.¹ The school is located in a large Southern California metropolitan area. At the time of the workshops, the school demographics were 54.1% Hispanic or Latino, 43.4% African American, 1.3% Two or More Races, and 0.8% White.² The school was considered a “high-needs” school, with 96.0% classified as Socioeconomically Disadvantaged, 25.6% as Students with Disabilities, 25.2% as Emergent Bilinguals, 1.9% as Homeless Youth, and 1.7% as Foster Youth.³ The 2021/22 chronic absenteeism rate was 60.3%, which was almost double their pre-COVID-19 pandemic rate of 35.5%, following a similar trend as the district's.⁴

Washington is a university-partnered community school with committed teachers, administrators, and staff. As a community school, Washington seeks to support students and their families’ academic, physical, and mental health needs and provide access to community resources. The school had approximately 25 teachers at the time of the workshops.

The evaluator was well positioned to support Washington. She had an established relationship with Washington as faculty at the partnering university and had worked closely with Washington's math and science teachers in the previous years to build their improvement science capacity as part of a university grant. As a result of this relationship with teachers and administrators, the evaluator was aware of potential issues related to teacher burnout and sustainability. Numerous teachers indicated they wanted to focus on this issue. Therefore, as part of the grant's objective to engage the school in causal systems analysis, the evaluator suggested using a systems thinking approach to support and sustain teachers as they strived to meet the needs of their students. The evaluator had extensive experience conducting more conventional program evaluations and participatory, formative systems approaches, like improvement science, in education.

The first step was building a team to provide an inclusive and diverse understanding of the problem. The evaluator met with the principal and the coordinating teacher to discuss who should be included on the team. The goal was to include those who could see “across the system” and provide multiple perspectives on potential teacher burnout and lack of sustainability. The principal and coordinating teacher suggested staff and teachers who served various roles in the school. The teachers all participated in the school's Instructional Leadership Team and played a role in school decisions. The assistant principal was also important because she had the power to support any plan to improve teacher burnout. The evaluator recommended adding additional participants who did not sit within the traditional “boundaries” of the school, such as parent and community partners, and could bring an additional perspective that might impact others, i.e., families and students. In the end, the team included eight people: three teachers representing science, math, and social studies, the assistant principal, another school administrator, a school college counselor, a parent, and a university partner.

Once the team was convened, three online 3-hour workshops were held on Saturdays over one month in the winter of 2022. Initially, the plan was to conduct the workshops in person, but due to a surge in COVID-19 cases, it was decided to move them online. All participants were given a stipend for their time.

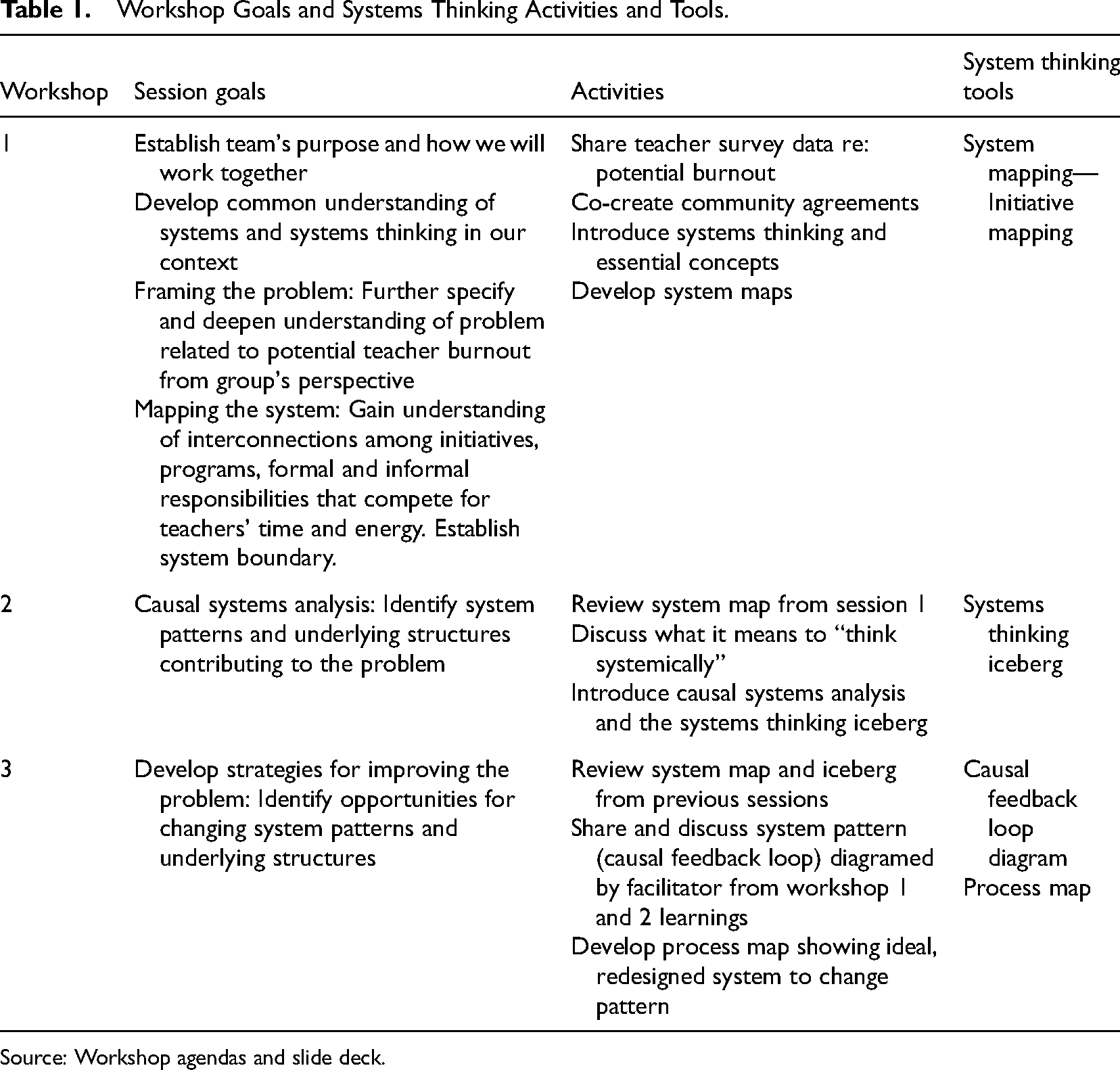

The workshops were grounded in the theoretical underpinnings of the PBE framework (Rohanna & Christie, 2023), discussed in the previous section. To be clear, the workshops’ purpose was not to change the system within three workshops. Table 1 shows the goals of each workshop. The workshops primarily focused on the first PBE component: Understanding the perceived problem and the system. As shown in the table, in addition to activities necessary to facilitate a productive team, e.g., establishing the team's purpose and developing shared understandings, the session goals focused on framing the problem, mapping the system around the problem, and identifying system patterns and underlying structures contributing to the problem. The workshops also included the first part of the second component, developing a theory of improvement and strategies and practices, but stopped short of the activities necessary for learning through emergence and evaluating outcomes, which limits our understanding of the whole PBE framework in practice. This limitation was due to the school's systems thinking initiative primarily focusing on causal systems analysis over the course of the three workshops rather than conducting a more comprehensive systems change evaluation. Therefore, the case study was bounded by the three workshops. However, even with this limitation, the focus on the first component provided us, as researchers, an opportunity to learn about the process of facilitating problem definition within an STCS approach.

Table 1. Workshop Goals and Systems Thinking Activities and Tools.

Source: Workshop agendas and slide deck.

Data Collection

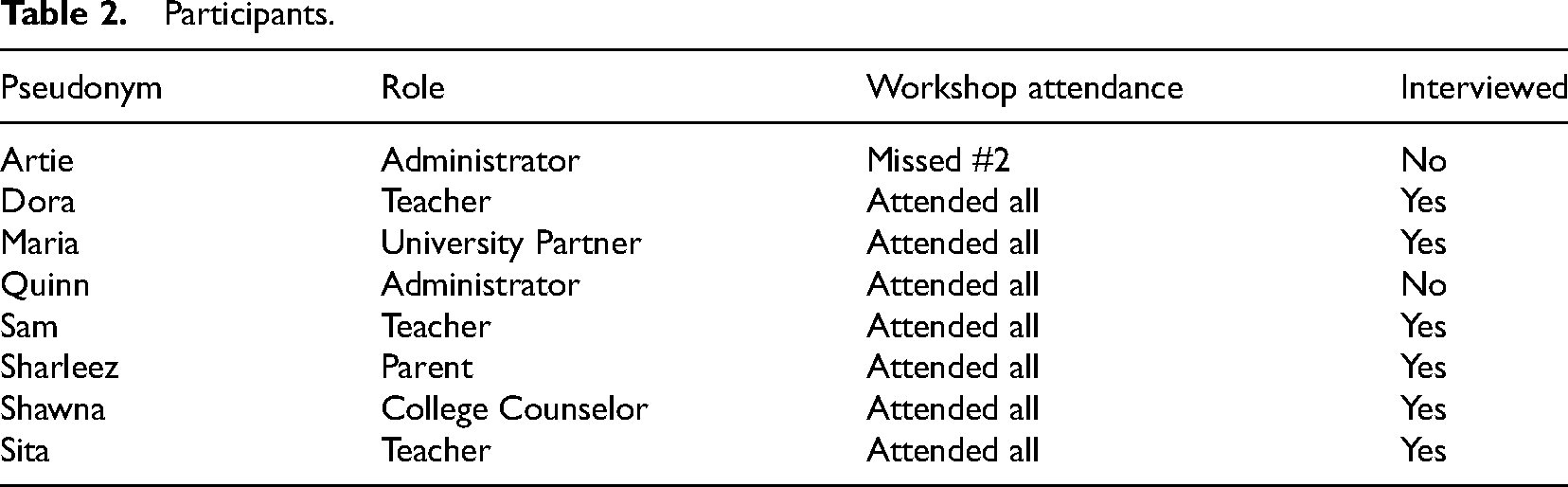

The case study incorporated multiple qualitative methods. Data were collected via workshop non-participant observations (3 workshops), semi-structured interviews (6 participants), and workshop document/artifact analyses (facilitator notes, agendas, artifacts: community agreements, systems initiative map, systems thinking iceberg, causal feedback loop diagram, prototype process map). While the first author of this paper served as the evaluator and facilitator, the second author was responsible for observations and conducting all the interviews. The second author observed the workshops but did not participate and served only in a research capacity.

Table 2 shows the sample of participants with their pseudonym, role, interview status, and workshop attendance status. While we hoped to conduct interviews with all eight participants, as shown in the table, we only interviewed six due to scheduling difficulties after multiple attempts to include everyone. This is a potential limitation since we did not interview the administrators, and thus, their voices and perspectives are not represented in the interview analysis; however, they are represented in the observations. The interviews were conducted on Zoom after the three workshops were completed and lasted approximately 20 to 50 minutes each.

Table 2. Participants.

Data Analysis

Both the PBE model and previously stated Principles for Effective Use of Systems Thinking in Evaluation (SETIG, 2018) provide a conceptual framework for analyzing the findings. We answered research question 1 by analyzing observation field notes and workshop documents/artifacts. We developed a narrative from these sources describing the process through which the evaluator facilitated the workshops and systems thinking tools and practices. Following Hayes et al.'s (2015) direction that illustrative case studies “should describe every element” (p. 8) and should include “self-contained descriptions of what the researcher observed and narratives about how the individual people or other elements involved in the situation acted during the length of the study” (p. 9), we developed a table that systematically established key events and facilitation activities using the PBE theoretical framework and identified evidence that could be used to build the illustrative narrative.

Research questions 2 and 3 were primarily answered through the analysis of the interviews and observations. First, we transcribed the interview data. Then, using MAXQDA, we inductively developed first-cycle codes utilizing a combination of values, process, and descriptive coding (Saldaña, 2021). First-cycle codes were combined into higher-level codes during second-cycle pattern coding (Saldaña, 2021). Next, we developed themes from the second-cycle codes. To strengthen the validity of the findings, we reviewed the initial first-cycle codes to determine if there was disconfirming evidence of the themes. One researcher coded all the interviews. Using the initial themes developed from the interviews, the observation field notes were deductively coded for supporting evidence. Through the writing and analysis process, the themes were further refined and connected to the theoretical SETIG (2018) principles.

Process in Practice—Findings

This section presents a narrative of the workshops. It is broken down into key events relevant to how the evaluator facilitated the process of problem definition using systems thinking tools and practices.

Day 1: Establish Workshop Purpose and Common Understandings

The first goal of the workshop was to establish the group's purpose and how they would work together. To do so, the evaluator asked the group to reflect on a relevant quote from the school's website and encouraged them to consider how they could apply this quote to supporting teachers and other adults in their system. Then, she displayed charts from a recent survey of Washington teachers that showed most teachers worked more than 40 hours a week and felt physically or emotionally drained at the end of the workday. She also shared a chart showing that most teachers still found teaching enjoyable “always” or “most of the time.” This slide included a question for the group: “Opportunity now = How can we support teachers before they get burnt out?” As part of establishing the group's purpose, she also displayed two slides that showed the group's overarching task, the objectives for all three workshops, and their ultimate goal to “…identify actionable ways to replenish teachers by removing systemic barriers and embedding supportive structures…” As part of this introduction, she also introduced terms and concepts related to systems and systems thinking and facilitated a small group sense-making activity to develop shared understandings around these concepts.

Day 1: Framing the Problem and Mapping the System

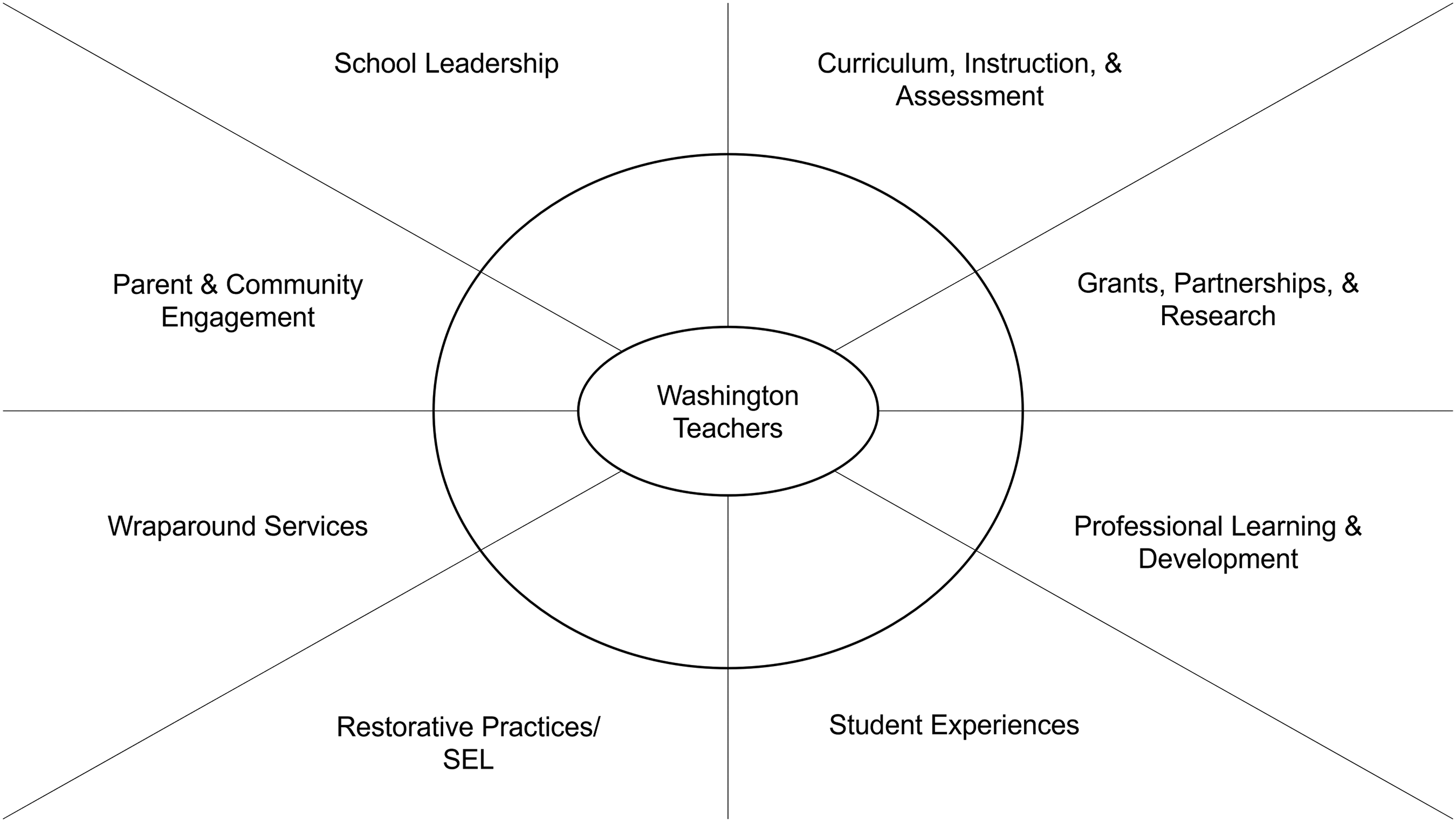

For the two objectives of (1) further specifying and understanding the initial problem of teacher burnout and sustainability, and (2) mapping the system to better understand the interconnection of initiatives that influence teachers’ time and energy, the evaluator engaged the group in a system initiative mapping activity. This system mapping activity was an adaptation of an actor mapping activity, which provides a “visual depiction of the key organizations and individuals that make up a system…” (FSG, 2015). The primary purpose was to identify initiatives and programs that fell within subsystems of Washington, which were bound to the school and nested within the district.

The first step was to frame their system using a Google Jamboard that all participants could access (Figure 2). Considering time constraints, the evaluator developed the initial framing before the workshop based on the school's transformation strategies and asked participants to revise as necessary. Next, the evaluator asked, “What programs and initiatives fall under each subsystem?” The participants populated yellow post-its across categories, with most of them placed on the right side across curriculum, instruction, & assessment; grants, partnerships, & research; professional learning & development; and student experiences. Then, the evaluator added “teacher” to the center circle. She asked the group which initiatives influenced teachers’ time the most, and to move those post-its closer to the center. Quickly, one by one, the yellow post-its moved toward the center, with the right side of the center sphere eventually filled with yellow post-its stacked on top of each other, suggesting that many initiatives required teachers’ time and energy.

Figure 2. System map frame.

For the next part of the activity, the evaluator asked the group to use pink post-its to add teachers’ formal and informal roles and responsibilities within each of the subsystems. Pink post-its populated the map, primarily in the curriculum, instruction, & assessment and wraparound services subsystems, with other pink post-its scattered across the map. After about 10 minutes, the facilitator asked the group, “What do you notice?” Dora, one of the teacher participants, answered, “A lot of pink is filling in where there weren’t a lot of yellow post-its. Teachers are getting creative on how to fulfill those needs.” The evaluator pointed out that in the wraparound services section pink post-its included “spend $$ for snacks and PB&J,” “supporting students with outside issues,” and “spend time mentoring students: navigating personal issues.” The evaluator invited other participants to add and ask questions. Initially, there was silence as participants stared intently at the screens. Sam, one of the teachers, asked the facilitator to repeat her question. She did, and then, more participants spoke up. One participant, Sharleez, who was the parent team member, added, “Things outside of the classroom. They are dealing with issues that aren’t in the classroom that aren’t learning. Did everyone realize there would be so much pink?” The other participants nodded in agreement. Others followed by adding their thoughts, including Quinn, an administrator:

“I’m starting to brainstorm where the pinks become the yellow. My goal in my position is how to make pinks yellow? Not that we need another system, but how do we change pink to yellow. They exist, but not that it is the responsibility of a teacher, but rather the responsibility of the system.”

Day 2: Causal Systems Analysis to Deepen Understanding of the Problem

The primary purpose for Day 2 was to identify system patterns and underlying structures contributing to the problem further specified in Workshop 1. After reviewing the system map from last time, the evaluator introduced a slide, asking the group, “What does it mean to think systemically?” The slide showed a table with conventional thinking on the left-hand side and systems thinking on the right (Stroh, 2015). The group engaged in a facilitator-led discussion about systems versus conventional thinking.

Next, the evaluator introduced the systems thinking iceberg as a causal systems analysis tool to dig deeper into their further specified problem. She confirmed with them the problem symptom at the top, “Teachers spending time, energy, and money responding to student needs regarding outside issues and wraparound services.” Using a Google Jamboard, the participants brainstormed around the patterns, behaviors, and trends section of the iceberg. Specifically, the evaluator asked: What is happening? What do students come to teachers for? What type of help and why? Are there trends? After the participants brainstormed on their own, the evaluator invited them to share and led them in a dialogue.

Maria, the university community school partner team member, noted that “It's a beautiful thing [that students come to teachers]. There is a relationship there.” She wondered if it should be categorized as a problem. Teacher participants joined in. Dora, a teacher, responded that it was “beautiful and awesome but use this [activity] to identify what they are coming to us for. I sometimes feel like I’m not the best person, I’m not a therapist.” Sita, another teacher, added that it “takes an emotional toll on teachers and [their] families.” Eventually the group began to categorize the “big issues” that took more of a toll, such as helping students with family and homelessness issues, versus “simple issues,” like teachers leaving their room open for lunch so students can have a quiet place or providing students with snacks and pencils.

Next, the evaluator directed them to the bottom of the iceberg: What ways of thinking and structures have contributed to these patterns? As part of this process, they brainstormed around mental models and system artifacts, such as policies, infrastructure, norms, and resources. While they struggled with the concept of mental models at first, demonstrated by their Jamboard post-its, they engaged in a facilitated, meaningful dialogue. The group discussed that even in some cases where students had access to the necessary resources, like the nurse, students still trusted teachers more than others. Additionally, teachers might be aware of school resources, but do not necessarily trust handing off students to external resources to help them, even if they have a partnership. Thus, access to resources was only part of the issue. They discussed the need for more local community partners and for those partners to build stronger relationships with the school, including with teachers.

Lastly, as the evaluator brought the workshop to a close, she asked, “What resonated with you from today's work? What do you still wonder?” Notably, both Maria, the university community school partner, and Dora, one of the teachers, demonstrated an appreciation for hearing each other's perspective and that of others.

Day 3: Develop Strategies for Improving the Problem

On Day 3, the primary purpose was to identify opportunities for changing system patterns and underlying structures in order to develop strategies for improving the problem. The evaluator began by reviewing the previous two workshops and what they learned. This review included walking them through a causal feedback loop developed by the evaluator using the learnings and artifacts from the two previous workshops. It indicated two patterns that needed changing: (1) Students often go to teachers for help with “big issues” because they trust them more than other resources. (2) The teachers help them because they care and do not trust handing off the students to others. This loop, while seemingly positive, continually reinforces students’ turning to teachers for help with significant issues, which takes an emotional and physical toll on teachers. Mental models related to not trusting others were an issue for both students and teachers. Then, the evaluator showed them the conventional thinking versus systems thinking table again. The group began discussing how they might change the causal feedback loop, with attention to improving the system rather than applying a more conventional way of thinking. When several of the teachers discussed the idea of the college counselor supporting teachers and spending more time in their classrooms to build trust with students, Dora responded:

“Here's an issue, let's fix it. Sounds like conventional thinking, but not a sustainable systems-wide thing that can be followed or tracked. If she leaves, how do we train the next person? Trying to push for things that are based not on who we are, but on the system.”

Through the facilitated discussion, the group landed on hiring a grant-funded wraparound services coordinator to develop processes and structures. While the position would only last through the grant period, their focus would be creating sustainable system structures. Sam and Dora, both teachers, suggested that the position could be served by a teacher, who would only teach part-time and spend the rest of their time in this position. Other participants expressed their interest in this idea. Sharleez, the parent, responded in the chat, “I think that is great because the trusting teachers are still in the equation!!!!! That is important for these students, and it also gives the teachers a break as well.” The evaluator cautioned how it might affect other parts of the system by asking, “Would you have to find other teachers [to fill the part-time teaching positions]?” The other teachers responded that they could.

Lastly, the group developed a process map to brainstorm the prototype process for students, teachers, and the wraparound services coordinator. A key to this approach was that both the wraparound services coordinator and service partners build relationships and trust with the teachers and school. After the activity, the workshop ended with everyone sharing final reflections on the three workshops.

Process: Facilitation and Challenges

The previous section provided an illustrative narrative of the facilitation process for problem definition. In this section, we address the remaining research questions: what helped to facilitate the process and what challenges emerged. When considering the SETIG Principles presented earlier in this paper, two themes emerged regarding the process: Participants appreciated the structured yet flexible facilitation style, and they learned more about the problem and system from their multiple perspectives. Additionally, two challenges emerged around clarifying everyone's role when involving multiple perspectives and facilitating mental models.

Structured yet Flexible Facilitation of Activities to Understand the Problem

Participants appreciated how the workshop was structured while still allowing space to listen to and respond to their emerging ideas and needs. Almost all interviewees (5) indicated the workshops were organized and productive. (Please note that interview questions were intentionally broad and did not specifically ask about this. As such, although the sixth participant did not indicate they felt this way, they did not indicate otherwise either.) Participants felt “each session built off each other” and it “all made sense at the end.” Sita, a teacher, shared that she was surprised “how much we were able to tease out in such a short amount of time.” Dora, another teacher, also appreciated the way the workshop was framed, “not venting, it was organized…It's just, like, [facilitator] did a great job framing and facilitating conversations that we’ve had 100 times before, but we were able to do them in a different way.” These data suggest that participants felt the workshops were constructive, and potentially more beneficial than other collaborative spaces they had experienced.

Additionally, in terms of structure, all interviewees (6) indicated the systems thinking tools used for defining and understanding the problem were helpful. Participants noted how these tools helped them to frame the system, organize and document their thinking, and “bring these ideas to life through these visuals.” Participants mentioned specific activities, including Maria, the university partner, who noted the strength of them as visuals to support their thinking: “So, the first exercise on Day 1 with the quadrants that was an amazing exercise. Loved it! It's super powerful to see the pink overlaid on the yellow. It was just such a powerful visual.” Dora compared this process to other improvement processes she had participated in in the past:

“I think if we had tried this without the systems modeling, like, we would have spent a lot of time problematizing and a lot of time solutionizing in a bad way…There's so much that forced us to slow down at all these steps of the process. And, I think that's really what made this process of systems thinking a lot more helpful than, you know, maybe something we've done in PD where we're like, we have Ds and Fs. Why do we have these Ds and Fs? Because of this. Okay, do this to fix Ds and Fs. … You get too many opinions. No one speaking the same language. Like a lot of those barriers were removed in this very, like, highly facilitated process that was still very open, if that makes sense.”

Shawna's quote also demonstrates the organic unfolding of the process; while there was structure there was still space for participants to dialogue and openly share their thoughts and ideas. In their interviews, three participants shared how they appreciated being listened to, that they could speak their mind, and the “truthfulness, just the openness, the thinking outside the box.” Participants appreciated the space to hear and learn from each other. This was further affirmed by Shawna, who noted, “I really was surprised that I participated so much, cause usually I'm pretty quiet. I just listen. I’m a thinker, but there's so many things to say, so many things came up.”

Understanding the Problem From Multiple Perspectives

As previously discussed, the workshop was designed to include participants with multiple perspectives on the problem and the system. This approach was recognized by participants. In interviews, almost all participants (5) expressed their appreciation for learning about, and from, another perspective. (The sixth participant did not comment on this either way.) These different viewpoints and experiences supported participants to understand the problem and see different parts of the system. The earlier exchange between Maria, the university partner who brought a community school perspective, and Dora, a teacher, illustrated this point. Maria elaborated on this moment during the interview. She shared it was important for her to hear about the physical and emotional toll that supporting students with “big issues” can have on teachers, and through that dialogue, she learned that community school teachers need more support.

Additionally, including both administrators and teachers in the process provided a deeper understanding of the problem from a classroom versus school or district perspective. In the interviews, two of the teachers indicated their appreciation for teachers’ voices being centered in this process. While the evaluator created space for all voices, there were times when the teachers’ perspective was prioritized due to their proximity to the problem. Dora expressed an appreciation for this during the initiative system mapping activity: “The sticky note thing, that is where I felt the teacher voice thing was the most prominent. Cause I was like, ‘I want you guys to just sit back for a second and just let [teachers] go to town on this.’” She also noted that she was surprised by how surprised Quinn, an administrator, was at the outcome of the activity, and felt it was a “great framing activity because even for people who had just left the classroom last year, I think those people also, kind of, had forgotten the day-to-day [work] of the teacher as well.” Conversely, Quinn's perspective also informed Dora's understanding of the system. In the interviews, Dora shared how she was “still learning a lot about the school system,” and gained a better understanding of the district bureaucracy they are “up against” and the pressures Quinn faces. Sam also shared an appreciation for both inside and outside classroom perspectives when seeing the system, i.e., the bigger picture:

“I guess that we tend to forget when we’re outside the classroom. But again, those of us that are inside the classroom, you know, like we don't get to see the bigger picture of what's going on. Like, how certain things have to be that way because the district or something.”

These data highlight the value of intentionally including multiple perspectives to see the system and understand the problem.

Challenges to the Process

Notably, while participants appreciated the diverse perspectives in relation to the problem, an important challenge emerged regarding role clarification in this process. During interviews, Shawna and Maria, the counselor and university partner participants, shared that they were uncertain of their role because the activities were so teacher- and administrator-focused. This led to them being unsure how to participate at times. Maria shared this paradoxical appreciation for teacher perspectives being prioritized while also being hesitant to engage because the evaluator did not explain the purpose and value of each person's role:

“I think like every gathering of teachers should look like this, right? Like how can we explore something deeply and come to some sort of potential solution for addressing these issues that have come up. So, anyway, I really, really appreciated being there. I will say that I wasn't very clear about my role. I was a little nervous about my engagement given that I really saw this as a teacher-led space, and I'm not sure if that was the intention. I know that [facilitators] were very intentional about, you know, who participated, but I wanted to learn from teachers and community members. Like I noticed that the parent there was very quiet for the most part.”

Lastly, surfacing mental models in relation to the problem was another challenge that emerged in this process. The observation of the systems thinking iceberg activity, along with the reflection notes after the facilitation, demonstrated this challenge. During the activity, participants added mental models, such as students believing that “teachers are problem solvers” and teachers feeling “if a need isn’t met, teachers are the only resource left to meet the need,” but the deeper mental models around trust only surfaced through the facilitation of the dialogue. This required the evaluator to continually make sense of participants’ dialogue to summarize, frame, and guide them toward the underlying mental model that was emerging around trust. This was a facilitation challenge because while the iceberg activity framed this dialogue, it required a heavier lift than expected from the evaluator to surface mental models not shown on the iceberg.

Discussion

This descriptive, illustrative case study aimed to demonstrate how evaluators can facilitate problem definition in practice using systems thinking tools, along with furthering our understanding of what supports evaluators in this work and what challenges may emerge. By doing so, we hope to contribute to the field's understanding of how to facilitate STCS approaches, and in particular, one approach, PBE, in ways that are both practical and accessible for evaluators and participants. This section discusses notable findings and their implications for evaluation practice.

First, when leading STCS evaluation approaches, the facilitation process to define and understand the problem and system needs to be emergent and responsive, and to do so, the evaluator needs to be reflective and adaptive. The findings suggest that using structured systems analysis tools and being flexible simultaneously supported the sessions to be productive while leaving room to respond to participant needs. The systems thinking tools used in the case provided practical examples of incorporating SETIG's (2018) Principles for Effective Use of Systems Thinking in Evaluation related to interrelationships and dynamics. Successfully facilitating these tools during the workshops required the evaluator to be flexible within their structures to foster and elicit dialogue to support everyone's learning, including the evaluator's. For example, it was from the emergent learnings about system patterns and dynamics related to lack of trust, along with the artifacts, that the evaluator developed the causal feedback loop to share at workshop 3. Surfacing mental models, which connects directly to Principle D3 (SETIG, 2018), investigating how worldviews and judgements of system behavior influence the conceptualizations of dynamics, was more challenging than initially anticipated but essential for identifying and understanding a system pattern to change.

The evaluators’ ability to be flexible and adaptive is grounded in reflective practice. Tovey and Skolits (2021) define reflective practice as “an iterative process of thinking and questioning, self and contextual awareness, focused on learning and improvement for both the evaluator and those involved in the evaluation” (p. 20). In this case, the narrative “process in practice” detailed how the evaluator sought ongoing questioning, thinking, and sensemaking with participants during the sessions. This case connects to Schön's (1983) concept of reflection-in-action, which can be likened to the evaluator making in-the-moment facilitation decisions based on what was happening in the session (flexible and adaptive) while keeping to the evaluation's objectives and tools (structure). The development of the causal feedback loop, although developed by the evaluator after the session, arose from the dialogue and systems thinking iceberg in workshop 2. In Rohanna and Christie's (2023) theoretical paper about PBE, they acknowledge that understanding system patterns and underlying structures may require evaluative thinking and an evaluator's expertise. They state:

“The importance of identifying and understanding potential patterns and underlying structures cannot be overstated, and this may be where the evaluator's expertise is most necessary. Unpacking perceived system patterns is challenging. Many evaluators have facilitation, questioning, and inquiry skills that elicit pertinent information; they may also have analytical skills that allow them to make sense of information quickly. In short, because of their evaluative thinking skills, they may see patterns across the system that others initially do not.” (p. 7)

This theoretical idea was borne out in practice. Surfacing the mental models and system patterns related to trust was more challenging than expected, even for the evaluator. This process involved evaluative thinking, described as “identifying assumptions, posing thoughtful questions, pursuing deeper understanding through reflection and perspective taking, and informing decisions in preparation for action” (Buckley et al., 2015, p. 378).

Second, the findings also confirm the importance of including multiple perspectives when understanding a problem and system. The participants appreciated the inclusion of multiple perspectives. Teachers, specifically, valued the opportunity for their voices to be centered while also learning about the pressures that administrators face when trying to make change. Again, these findings demonstrate an essential theoretical idea borne out in practice. Gathering multiple perspectives is a critical concept for understanding systems (Williams, 2015) and connects to SETIG (2018) principles related to perspectives and boundaries. People can have different truths about the same system and perceived problem. The observation of Maria and Dora provided empirical evidence of why including teacher voice, in this instance, was crucial for understanding the problem and how students turning to teachers for support with difficult issues can detrimentally affect teachers. They both perceived the problem in different ways.

In PBE, problem-defining necessitates creating space for dialogue and continual reflection on who is involved in framing the perceived problem and what contributes to it. At the same time, however, a challenge arose: the non-teacher participants, who also appreciated learning from others, were sometimes unsure what their role was in the process and how they should participate, suggesting a need for more clarity about why multiple perspectives were invited and how everyone could contribute. Thus, critical reflection on whose perspectives to include within the boundaries of the evaluation may not be enough on its own. The facilitator also needs to carefully consider how to continually invite voices into the dialogue as well as issues around power. In this case, teacher voices were privileged, which was an intentional choice by the evaluator since they were closest to the problem and too often not prioritized in school spaces. However, this intentional choice to elevate teacher positionality may have left others feeling marginalized in the activities, especially when considering that teachers were the first to identify the initial broad issue of teacher burnout. Upon further reflection, we could have engaged in more deliberation about starting with teacher burnout and the potential consequence of others being unsure how to contribute. Additionally, we wonder if student voice should have been included in the problem definition process. At the time, it did not seem necessary to include students because we were focused on teacher burnout. However, in hindsight, by not including them within the boundary of our evaluation, we missed the opportunity to learn students’ perspective on how and why they chose certain adults to discuss “big issues.”

Lastly, these findings suggest an implication around evaluation capacity building. An evaluator's ability to effectively facilitate problem definition and systems thinking activities in a structured, yet adaptable way, while also attending to implicit positionality issues, requires them to have numerous systems analysis tools in their evaluator toolbox and engage in continual reflective practice. Schön's (1983) notion of a reflective practitioner was born from the idea that practitioners may learn technical skills, in a university for example, but still need real-world experience to enact them in practice successfully. We agree. This study suggests the importance of facilitating systems thinking tools while also remaining responsive to in-the-moment needs. These needs include recognizing when activities are not creating space for all team members to participate. Therefore, it is crucial for evaluators desiring to do similar work to expand their professional toolbox and learn STCS tools, as well as to have opportunities to facilitate, practice, and reflect with an experienced evaluator. This professional learning could potentially take the format of course scenarios or on-the-job mentoring.

Conclusion

These findings contribute to our understanding of how to facilitate problem definition in STCS evaluation approaches by providing a case in practice of one specific approach: PBE. By doing so, we aim to bridge theory and practice. However, we also recognize that our case is limited in that it only includes the first part of the PBE model and does not investigate whether the process led to any changes in the outcomes. In their article, Rohanna and Christie (2023) encourage others to apply their model. As such, we propose that future research continue bridging theory and practice by applying and studying the other components of their model: learning through emergence and evaluation outcomes. In particular, we encourage an investigation of whether the approach can change system patterns as one of the desired outcomes, and whether the changed pattern leads to sustainable change in the perceived problem. PBE is grounded in this fundamental complexity science idea by Parsons (2012). Transforming complex systems requires identifying these patterns, which connect to deeper systemic structures. Changing these patterns and underlying structures will hopefully lead to “stickier” changes more likely to last because they are structural changes. However, more research should investigate to what extent important system patterns can be changed using STCS evaluation approaches, such as PBE.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Carnegie Corporation of New York.