Abstract

Realist evaluations, a form of theory-driven evaluation, aim to explain how, why, for whom, and to what extent a complex health intervention works (or does not work). Realist evaluations are often “methods-flexible” and encourage the appropriate use of a range of evidence types that provide explanatory value. However, there is a lack of guidance in the published literature on how to identify and develop the methods and tools for realist evaluation data collection to contribute to this purpose. This article contributes to evaluation research and practice by providing an in-depth reflection on the experience of a realist researcher in planning and conducting a recent realist evaluation by describing the critical decision points and considerations taken throughout the data collection process.

Introduction

Initially pioneered by Ray Pawson and Nicholas Tilley in their 1997 book Realistic Evaluation (Pawson & Tilley, 1997), so-called “realist evaluations” have since expanded in their use across disciplines (Downey et al., 2024). They have garnered substantial support from scholars and practitioners for evaluating complex health interventions in particular (Wong et al., 2016b). Realist methodologies employ context–mechanism–outcome (CMO) configurations to explain generative causation and pose explanations for how a program may or may not work, rather than just whether a program works (Pawson & Tilley, 1997). In this way, the realist evaluation is particularly useful because it enables learning regardless of whether a program succeeds or fails. Realist evaluations do this by focusing on generative causation and explaining the underlying processes and factors that lead to different results in different settings (Wong et al., 2016a). This understanding of generative causation, via the CMO configuration, is key to the realist approach and makes it suitable for studying complex interventions by asking the question “what works for whom, in what circumstances, how, and why?” (Astbury, 2018; Gilmore, 2019; Pawson et al., 2005; Pawson & Tilley, 1997).

The compatibility of realist approaches with complex health interventions and health systems research has led to the use of these evaluations to provide insight into the causal mechanisms that enable change (Chen & Chen, 1990; Marchal et al., 2012). By emphasizing generative mechanisms and the influence of context, realist evaluations move beyond simplistic cause-and-effect models and reflect the inherent complexity of social systems (Marchal et al., 2012; Pawson & Tilley, 1997). For example, they recognize that interventions may need adaptation to local circumstances and emphasize the role of stakeholder engagement (Pawson & Tilley, 1997; Wong et al., 2016b).

It is also important to acknowledge the theory-driven nature of the realist evaluation. This means that when planning a realist evaluation, the approaches to data collection are informed by how the questions are framed and the program theories being tested and refined (Manzano, 2016). The realist evaluation often uses a mixed-methods and multimethods approach to data collection, which can involve multiple groups of interested parties in order to uncover generative causation and contribute to theory building and refinement (Graham & McAleer, 2018; Pawson & Tilley, 1997). Therefore, the methods are selected based on what data can best test or refine the theories being explored.

A recent comprehensive review of 166 published realist evaluations (Renmans & Castellano Pleguezuelo, 2023) identified the vast range of data collection methods these evaluations employed, classifying the methods into four main categories: interviews (n = 161), observations (n = 92), surveys (n = 43), and innovative methods (n = 13). Although qualitative methods dominate the published realist evaluation literature, 65% of the realist evaluations used them in combination with another method, such as observations or surveys (Renmans & Castellano Pleguezuelo, 2023). The conduct and reporting of realist evaluations are largely informed by guidelines developed and published by the Realist And Meta-narrative Evidence Synthesis: Evolving Standards (RAMESES) II Project (Wong et al., 2016a, 2017). However, the complexity of realist methodologies means there is no formulaic protocol to replicate across realist evaluations, especially in terms of what data are collected and how, and more guidance is needed to facilitate putting these methods into practice (Cunningham et al., 2020). The purpose of this article is to do just that: to provide guidance for researchers by describing the contribution of varied data sources to realist evaluations. We illustrate with reflections on experiences in planning and conducting data collection for a multisite realist evaluation in Zambia (Dada et al., 2025a, 2025b).

Multisite Realist Evaluation in Zambia

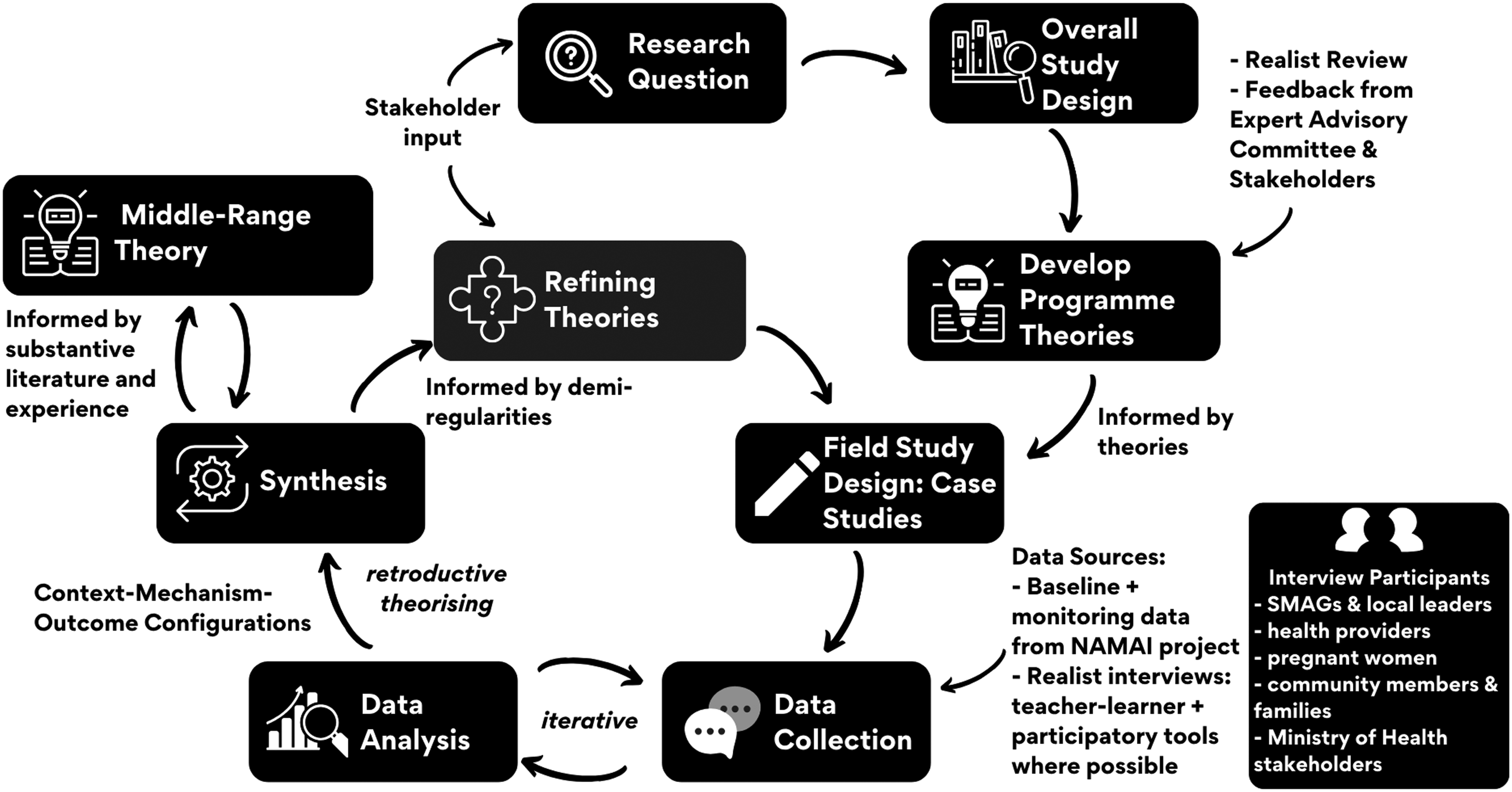

This article describes the considerations in planning and conducting a realist evaluation that aimed to investigate the causal mechanisms that influence how and why communication in community engagement works or does not work (Fryer, 2020; Mingers & Standing, 2017). The examples and reflections are based on a recently published realist evaluation (Figure 1) of how, why, for whom, and to what extent Safe Motherhood Action Groups (SMAG) in Zambia influence maternal health care–seeking behavior (Dada et al., 2025a, 2025b). The use of realist methodologies, including realist evaluations, has increased globally, including in Zambia (Mulubwa et al., 2020; Zulu et al., 2018, 2024). This growing body of work highlights the value in examining how realist evaluations are conducted in different contexts and how the methodological choices and practical considerations can vary depending on the setting. This article therefore contributes to the broader discourse on realist research by offering insights into how data collection has been planned, adapted, and implemented across diverse environments.

Figure 1. Realist evaluation cycle implemented in the Zambia study, informed by Pawson and Tilley (1997) and Gilmore et al. (2016).

In this realist evaluation, I sought to understand how these SMAGs, a community engagement initiative using local community volunteers, communicated and interacted within their communities with the ultimate aim of encouraging pregnant women to seek care at the facility during pregnancy and childbirth. I conducted this realist evaluation in two sites in the Eastern Province of Zambia, where the New Antenatal Model for Africa and India project used implementation research to test the applicability of an adapted antenatal care package in four countries (Group, 2023). In Zambia, the SMAGs conduct community engagement by serving as a link between the community and the health facility to support and improve maternal and newborn health by addressing critical delays in health-seeking and mobilizing the community (Ensor et al., 2014; Jacobs et al., 2018; Mutemwa, 2016; Sialubanje et al., 2017).

Reflections and Experiences from a Recent Realist Evaluation

While the full methodology and results of this study have been published elsewhere, this section describes key decision points by drawing upon the experiences and reflections from the realist evaluation in Zambia (Dada et al., 2025a). These reflections focus on: (1) considering the contributions of various data types to testing and refining theories in the realist evaluation, (2) designing relevant data collection tools, and (3) accounting for who will collect the data. These were key steps in the realist evaluation planning and conduct that may be approached in various ways based on the specific project, yet they are often not described in detail in the published literature.

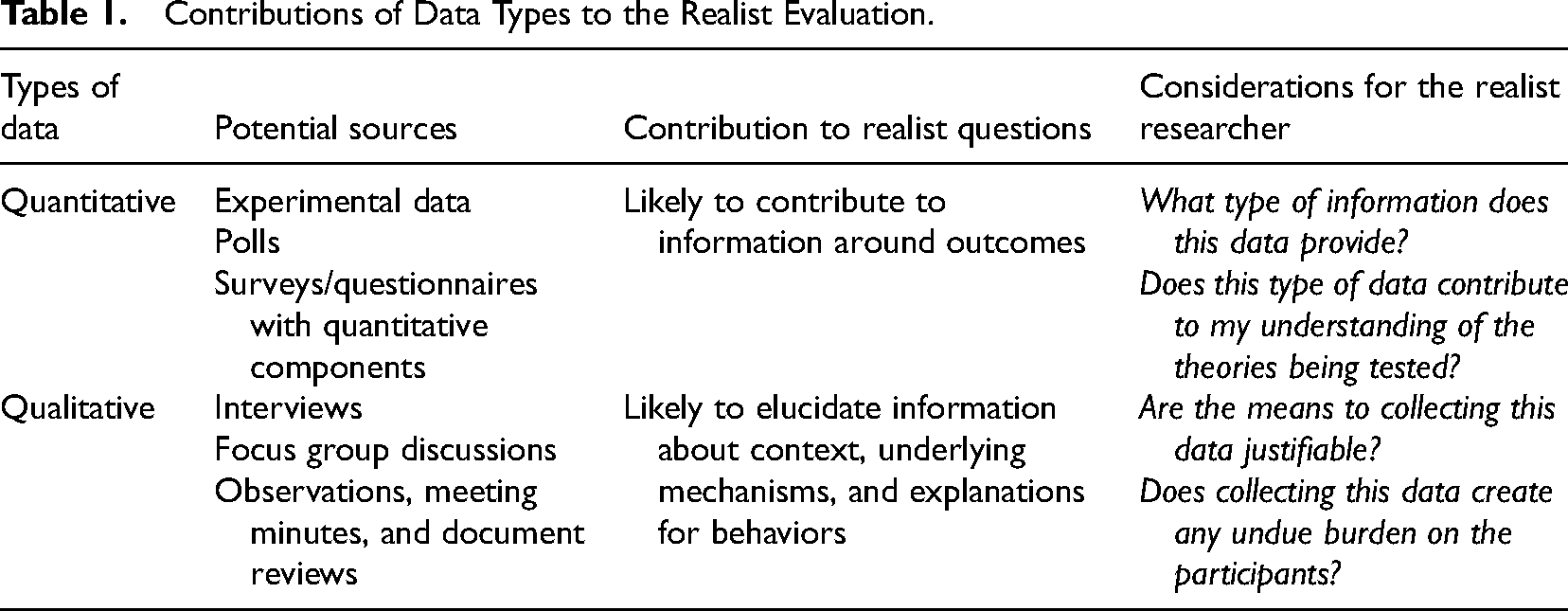

Reflection 1: Considering the Contributions of Different Data Types

While realist evaluations encourage a mix of varied evidence sources and data types (Wong et al., 2017), quantitative and qualitative data make different contributions to knowledge discovery and may be more or less appropriate in the realist line of inquiry (Johnson & Christensen, 2017; Renmans & Castellano Pleguezuelo, 2023). Table 1 describes how these contributions may apply to a realist evaluation and provides some of the initial questions I considered in designing the approaches to data collection for my realist evaluation of the SMAGs in Zambia.

Table 1. Contributions of Data Types to the Realist Evaluation.

In order to determine what data sources justifiably and ethically contribute to theory testing and refinement, I first considered what data are available: were there existing datasets or evidence that could help support the testing or refining of the program theories? In this evaluation, health facilities and other research organizations were collecting quantitative data relating to health outcomes and facility attendance. I then considered what data were still needed: what information, insights, or perspectives were missing and what data could be collected to fill this gap?

Reflection 2: Designing and Innovating Data Collection Tools

A vital step in every study is the development of data collection tools and determining what tools are fit-for-purpose. After considering the reflective prompts provided in Table 1, I determined that developing additional surveys or other tools to provide quantitative measures was not needed and that a data sharing agreement could be used to access any quantitative data on facility attendance. Therefore, I explored how qualitative interviews, focus groups, and other innovative qualitative approaches could facilitate collecting the needed data.

In considering what data are needed to test and refine theories, the realist researcher may also reflect on who can provide this information. For this realist evaluation case study, I wanted to understand the experiences of both pregnant women and community members as well as the community engagement actors and health providers. Understanding the experiences and perspectives of this range of participants could help paint a picture of how the community engagement program worked across these different populations. In the realist “teacher–learner style” interview, the researcher engages the interviewee with the conceptual or theoretical ideas that they are trying to better understand in order to elicit more explicit responses in regard to these relationships (Pawson, 1996). To do this, the researcher shares components, ideas, or hypotheses with the interviewee in order to encourage their participation and invite their feedback and reflections on the researcher's theory (Mukumbang et al., 2019). However, it is also important to consider how varied population groups might respond to different tools based on what is most relevant, feasible, or culturally appropriate. Individual interviews might be more appropriate for privacy or practical reasons, whereas focus groups might allow for further contrasting of ideas among different population groups or observation of how participants interact and explain their perspectives, verbally and nonverbally (Manzano, 2022). For example, in this case study, I conducted individual interviews with community volunteers and local leaders to discuss specific examples of how the SMAGs worked from an operational standpoint. In contrast, I found that separate focus group discussions with community men and community women were more appropriate in this context to enable open discussion among participants.

According to the mapping review (Renmans & Castellano Pleguezuelo, 2023), innovative data collection methods have included the use of diaries, photographs, vignettes, and social media analyses. In particular, participatory methods can provide a complementary approach to realist evaluations (Alliance, 2006; Roura, 2020). Similar to the teacher–learner style interview, “participatory research stresses the educational aspect of social investigation” supporting its potential compatibility with realist principles (Hall, 1981; Westhorp et al., 2016). The emphasis on the iterative nature of realist approaches allows opportunities to revise and revisit components of the theory, while participatory methods often provide opportunities to further explore participants’ insights in follow-up or future interviews (Powers et al., 2006). Therefore, incorporating participatory research practices into realist data collection could break down some of the barriers between the interviewer and participant and empower participants to guide and teach (Chambers, 1994).

With this in mind, I implemented the “realist photovoice technique” (Mukumbang & Dada, 2024) for a set of participants involved in the Zambia realist evaluation. In this approach, pregnant women participants received cameras and two prompts to consider while taking photos, which they later shared with the researcher in a follow-up interview. I decided to implement this tool with this population for two reasons. First, previous interactions and observations of this group indicated that it would be important to address potential power imbalances in the interview setting. Second, I specifically wanted to understand the perspectives of pregnant women in their care-seeking decisions and interactions with the SMAGs. In addition to developing the photovoice prompts to guide this process, I also considered the flow of the follow-up conversation where participants shared their photos, what supporting materials they needed (cameras and any other equipment), how to communicate instructions with participants, and the ethical implications of disseminating any resulting photos. Aligned with participatory methodologies, I aimed to empower participants to lead the follow-up conversation by determining which photos they wanted to share and what they wanted to say with them. However, I also included follow-up questions related to the realist program theories wherever components of the theory were alluded to or mentioned by the participant (Mukumbang & Dada, 2024). This required a deep and comfortable familiarity with the program theory areas so that they could be recognized and discussed outside of the traditional order of topic guide questions.

With the realist photovoice technique in this evaluation, the participants led the discussions in a different way than in the semistructured interviews. Most notably, participants shared photos that uncovered a theory area that was not previously captured by the program theories being tested or in the data collected through other avenues. For example, realist photovoice participants consistently emphasized the importance of receiving knowledge in the dynamic relationships with the SMAGs. This led to exploring an additional explanatory theory. Additionally, across qualitative data collection for realist evaluations, participants will seldom explicitly state a CMO configuration or explanation of generative causation (Gilmore, 2019; Manzano, 2022; Mukumbang & van Wyk, 2020). In this way, the realist photovoice technique contributed a creative way to indirectly discuss and uncover what was important to participants, enabling a “creative imagination of information sharing” (Mukumbang & Dada, 2024).

Reflection 3: Working in New Environments Across Different Languages

After finalizing the study design and approaches to data collection in the realist evaluation, I also had to consider the logistics of implementing this study as an international researcher. One of the most critical components was recruiting a research assistant who could provide not only important language translation skills but also experiential knowledge. However, in many cases, research assistants may have experience with qualitative data collection without having a background in realist research. This means another important consideration is training and supporting a research assistant to be comfortable conducting realist data collection. In the Zambia realist evaluation, I built off of existing realist research training materials to introduce a research assistant to the realist methodology and data collection tools. This training included an overview of the project, a brief introduction to realist terminologies and approaches, and the steps in conducting a realist evaluation. In addition to going over the research methodology, we conducted practice interviews together to trial and familiarize ourselves with the interview guides by identifying potential probing questions and rephrasing questions for clarity. Similarly, we conducted “debriefs” at the end of each interview or focus group to discuss key findings and review data together. This was a particularly helpful practice because it not only supported our understanding of complex data but it also helped us account for the implications of working across multiple languages: Nyanja and English (Gilmore, 2019).

Researchers working outside their usual home environments often face unfamiliar considerations and processes they might not be aware of at the outset. Working with local partners and experienced researchers can highlight some of these logistical components early so that they can be appropriately incorporated into planning. In line with ethical research, conducting courtesy calls or introductions to the local leaders and/or stakeholders is often a necessary first step in many contexts. This is also important when considering meaningful engagement and impact of research because connecting and collaborating with local stakeholders early can help identify opportunities for the useful dissemination of findings, which can influence policy and decision-making.

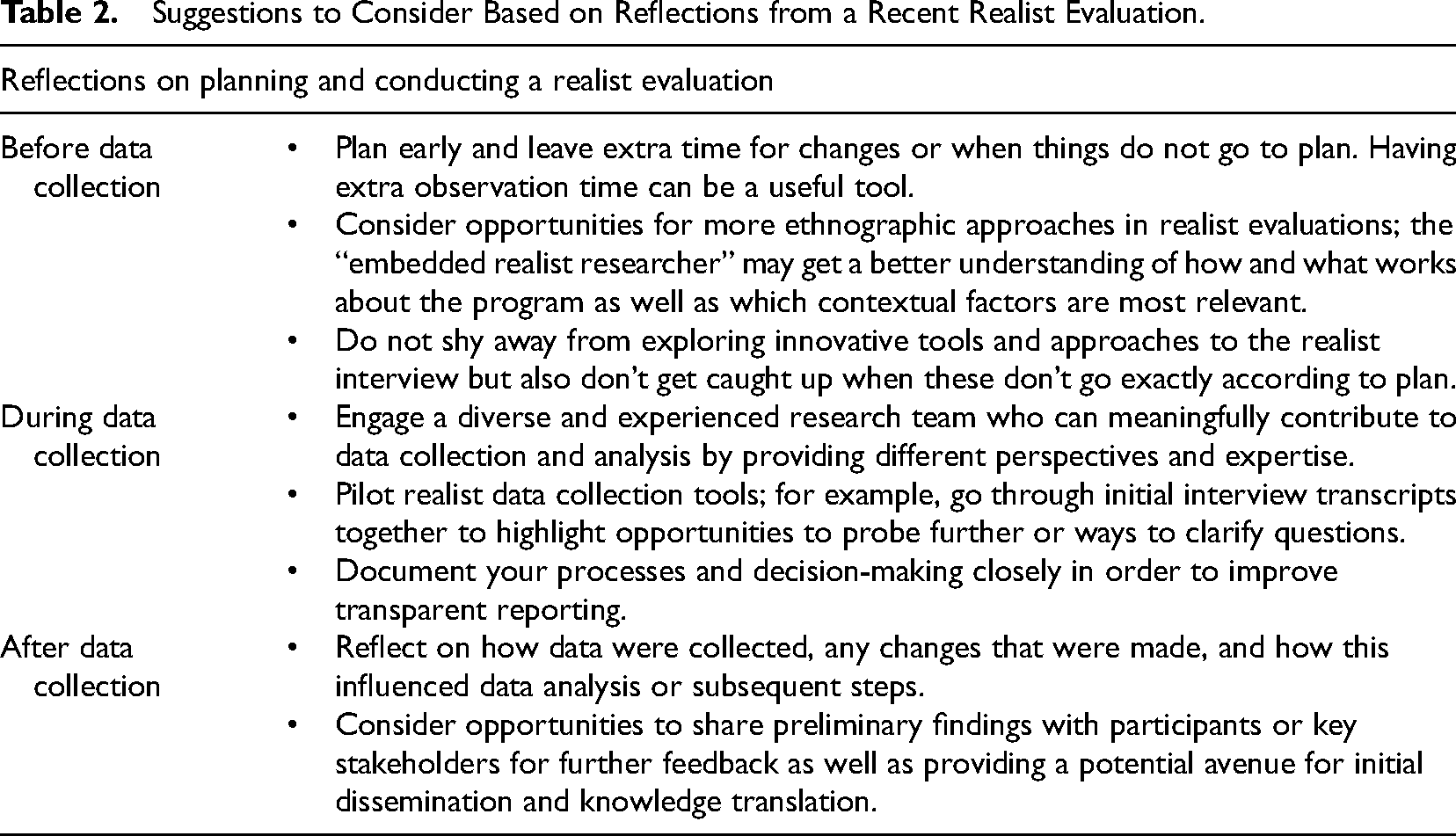

In the Zambia realist evaluation, I scheduled extra time in the region where I collected data to allow for these initial meetings with local stakeholders, as well as any potential changes or extenuating circumstances. Additionally, one of my priorities was to include time to feed back initial findings to the communities and local stakeholders who were involved in or enabled the research project. At the end of data collection at each case site, I developed one-page briefs summarizing the preliminary findings and highlighting the contributions of the participants in the overall study. I shared these briefs with district, provincial, and national Ministry of Health stakeholders as well as health providers and community members at the health facilities.

Discussion

The complexity of realist research means that designing and conducting a realist evaluation can pose a number of challenges, especially for novice realist researchers. Table 2 provides some suggestions for consideration based on the reflections shared in this article. These are not presented as formal recommendations but are simply some of the key learnings that I would have appreciated when embarking on my first realist evaluation.

Table 2. Suggestions to Consider Based on Reflections from a Recent Realist Evaluation.

Challenges and Suggestions

It is difficult to ignore the relationship and balance of power between the researcher and research participants in the conduct and planning of interviews. Power is inherent in all interactions and conversations and is not distributed evenly across participants, making it an important consideration across global health interventions and research (Gilmore, 2019; Wang, 2006). Experts have suggested a variety of approaches to address the challenges posed by power imbalances in interview processes, including for realist evaluations. One solution includes having researchers incorporate broader questions about program experiences and general reflections on program theory prior to specific theory-testing questions in order to limit respondent bias (Gilmore, 2019; RAMESES II Project, 2017). Other solutions may consider innovating and adapting approaches within realist philosophy as the methodology continuously evolves (Cunningham et al., 2020).

Involving users and stakeholders in realist evaluation data collection methods is becoming increasingly common as evaluators aim to address these power dynamics and exchange knowledge to capture the complexity of programs (Manzano, 2024). Creative participatory activities such as walking interviews or photovoice help build trust and disrupt the “traditional power structures” of the researcher–participant relationship (Carpiano, 2009; Mannay, 2010; Spicksley, 2018). Visual or drawing activities also allow individuals to participate more freely, without concern for literacy or language barriers that could influence the interview dynamic and therefore knowledge translation (Alliance, 2006). Both participatory and realist approaches bring the environment and populations being studied into the research process, breaking down traditional hierarchies and promoting engagement overall. This co-learning process enables active participation and remains in line with realist principles as long as the “realist logic of enquiry” continues to guide the discussions (Westhorp et al., 2016). For example, the novel realist photovoice technique may provide a useful avenue to address power inequities in the researcher–participant dynamic and to enable deeper understandings of underlying mechanisms through creative imaginations of information sharing led by the participant (Mukumbang & Dada, 2024).

A related challenge comes from the level of depth researchers strive for in realist evaluations. In attempting to uncover the underlying generative causation, realist researchers probe participants to explain why or how they experienced something. Participants rarely provide an entire CMO configuration in their responses, and accepting that data can still be useful without appearing like an overt CMO configuration during the interview is an important step in engaging with and getting the most out of the interview (Manzano, 2016, 2022). In the Zambia realist evaluation, conducting debriefs between the research assistant and myself after each interview informed future follow-up questions or rephrasing questions to improve clarity and specificity. Relatedly, one challenge in the teacher–learner style realist interview occurred when participants repeated back the theory or agreed without providing further detail. We addressed this by trying different approaches to asking questions. At times, we asked broader, background questions and moved away from the typical teacher–learner style approach. Instead of presenting the whole program theory to participants, we asked about elements of the theory in order to enable participants to provide their own ideas; for example, rather than presenting the theory on trust, we asked participants what made them trust someone. Aligned with other realist evaluation approaches (Haynes et al., 2021), we also incorporated concrete examples and anecdotes based on observations, asking if these experiences reflected their own. These adjustments illustrate the iterative nature of the realist evaluation process, emphasizing the need for flexibility and responsiveness to participant feedback during data collection. By refining or adapting questions, we aimed to encourage participants to engage more critically with the theories in order to elicit deeper insights.

In addition to these adaptations to data collection tools throughout the study, the most important component was the relationships between me, as a researcher, and the participants and environment. We aimed to develop a positive rapport with the communities and participants by being respectful, maintaining a consistent presence at the facility, and connecting with known and trusted community volunteers. Similarly, spending time in the case study community highlighted how embedding myself in the study setting improved my understanding of the programs, contexts, and their nuances (Gilmore, 2019).

Contribution to the Evaluation Field

Guidance on realist evaluation methodology emphasizes transparency and robust reporting (Wong et al., 2017). However, the complexity of conducting the methodology, coupled with word count limitations for most publications, often makes this challenging for both researchers and peer reviewers. As a result, many realist researchers may struggle with tackling how to conduct various practical aspects of the realist evaluation. With realist evaluations continuing to gain popularity, it may be useful to provide more detailed reporting on the process of developing and designing a realist evaluation, including the data collection approaches and tools used (Cunningham et al., 2020). This article contributes to this needed conversation by sharing anecdotal experiences, reflections, and challenges faced in this process. While this may be especially helpful for novice realist researchers, the challenges faced and approaches shared are applicable across mixed methods research projects and other types of theory-driven evaluations.

Finally, this article describes the process of considering and developing an innovative, participatory data collection tool, using the realist photovoice approach (Dada et al., 2025a; Mukumbang & Dada, 2024). While researchers have begun to incorporate participatory approaches in realist evaluations (Manzano, 2024), there is little consistency in how this is done and even less discussion around the ontological and epistemological implications that are a significant component of realist research. This article begins to bridge that gap by providing an in-depth reflection on why the participatory research approach of photovoice was chosen and how it aligned with the project's realist line of inquiry.

Conclusion

Realist evaluations play an important role in the field of implementation and evaluation research. The theory-driven nature and complexity of realist evaluations make them well-suited for a range of traditional and innovative methodological tools and data collection approaches. However, limited reporting of the methodological details involved in initiating and planning a realist evaluation can pose challenges for realist researchers. This article presents an in-depth reflection from one researcher's experience conducting a realist evaluation. By signposting critical decision points in the process, these reflections may be helpful for future realist researchers to consider and build on in planning their own mixed-method and multimethod realist evaluations.

Footnotes

Acknowledgments

The author would like to acknowledge Dr. Rebecca Hunter for her feedback on this manuscript. The author would also like to thank Dr. Brynne Gilmore for her advice relating to both this manuscript and general realist methodological guidance during doctoral supervision. Finally, as this article is based on reflections from a previous realist evaluation, the author would like to acknowledge the support of the additional advisors and collaborators from that study including: Associate Professor Aoife De Brún, Professor Bellington Vwalika, Nachela Chelwa, Natasha Okpara and Mirriam Zulu.

Ethical Approval and Informed Consent Statement

Due to the nature of this manuscript, no specific ethical approval was needed. The overall project this manuscript reflects on received ethical approval from the University of Zambia Biomedical Research Ethics Committee (Ref No. 3612-2023) as well as the University College Dublin Ethics Committee (LS-23-12-Dada-Gilmore). This study was registered with the Zambian National Health Research Authority (NHRAR-R-417/22/05/2023). All study participants received an information sheet with details about the study and their involvement and provided informed consent. The information sheets and consent forms were translated into the local language, Nyanja.

Data Availability Statement

No specific data informed this manuscript; however, data from the original project are available upon request.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The realist evaluation described in this article was funded by a University College Dublin Ad Astra PhD Scholarship.