Abstract

This article contributes to research on evaluation by examining how organizational actors respond to and use evaluation imposed on them within an evaluation system. Drawing on Henry and Mark's theory of evaluation influence, this study uses Q-methodology to explore how staff within English higher education providers experience evaluation and its influence on their widening participation practice and strategy decision-making. The experiences of organizational actors are examined and classified into four types: strategic practitioners, pragmatic practitioners, staff with indirect involvement in widening participation, and evaluation enthusiasts. Through analyzing these experiences, the findings illustrate the diverse ways organizational actors are influenced by evaluation within evaluation systems. To deepen our understanding of evaluation influence in the contexts of evaluation systems, this article recommends explicitly embedding organizational theories into future theories of evaluation influence and provides suggestions for future research on the topic.

Introduction

Research on evaluation use is ubiquitous (Searle et al., 2024). However, few studies have explicitly incorporated organizational theories into their frameworks for understanding the mechanisms that shape how evaluation is practiced and used once it becomes institutionalized, that is, within what are referred to as evaluation systems (Kupiec et al., 2023; Raimondo, 2018). Historically, research on evaluation has developed our understanding of how evaluation is used instrumentally or conceptually in policymaking (Alkin & King, 2018). This research typically grounds evaluation within a technical rationalist framework (Van Der Knaap, 1995) and treats it as an activity conducted by an evaluator using social science research methods for an audience of policymakers (Levelt & Pouw, 2022). However, evaluation is increasingly becoming institutionalized and embedded within organizational practices and routines (Dahler-Larsen, 2012; Leeuw & Furubo, 2008; Meyer et al., 2022). In these contexts, “evaluation becomes an organizational functional entity” and can be “exercised by interchangeable persons” (Dahler-Larsen, 2012, p. 36). Thus, to better understand the influences of evaluation, the field needs more research that considers the perspectives and experiences of all types of organizational actors.

Current literature has explored the effects of evaluation conducted within evaluation systems from macro-level organizational perspectives (Andersen, 2020) and international perspectives, as in the case of the European Union (Hojlund, 2014). Apart from research that has captured the experiences of evaluators (LaVelle et al., 2022; Teasdale et al., 2023) or that focuses on evaluation capacity building (Bourgeois et al., 2023), few studies have examined the viewpoints of actors responsible for enacting evaluation systems in their organizations. This gap matters because evaluation is rarely scrutinized as a practice (Dahler-Larsen, 2021), even though, when institutionalized, it can just as easily be used to maintain the status quo as to improve social conditions (Raimondo & Leeuw, 2021).

Moreover, studies of evaluation use and influence are mostly descriptive (Coryn et al., 2017). This study uses Q-methodology as an alternative approach to researching the subjective experiences of employees within organizations enacting an evaluation system. Q-methodology has been used to assess stakeholder perceptions of evaluation use (Harris et al., 2021) and to evaluate the outcomes of collective leadership (Militello & Benham, 2010). The combination of quantitative and qualitative methods within Q-studies allows researchers to systematically explore subjective viewpoints in their given contexts (Ho, 2017).

Although situated in the context of an evaluation system, this study does not examine the influences of evaluation systems themselves. Rather, the purpose of this study is to explore how actors within English higher education providers experience evaluation and its influence on their widening participation practice and strategy decision-making. In England, higher education providers are required to support disadvantaged and underrepresented people to access and succeed in higher education, and to evaluate the impact of the activities they deliver. This policy is known as widening participation, an internationally recognized policy area in higher education (Burke, 2016; Chorcora et al., 2023). To achieve their goals, higher education providers typically deliver activities, including campus visits, mentoring and tutoring programs, and summer schools, and provide information, advice, and guidance to targeted groups (Moore et al., 2013). These widening participation activities are similar to the college access and success, or “broadening participation,” activities delivered in the United States (Dill, 2022; Ives et al., 2024). Throughout this article, these activities are referred to as “access.”

This study also illustrates how Q-methodology can be used to examine a plurality of experiences of evaluation practices within organizations beyond the traditional evaluator/stakeholder relationship. To achieve this purpose, Mark and Henry's (2004) theory of evaluation influence is used as a framework to explore the potential pathways of evaluation influences at the individual, interpersonal, and collective levels of change. Then, the process for implementing Q-methodology is described. Finally, a discussion of the findings is provided, including the implications of the study for understanding how internal evaluation influences the decision-making of staff with different organizational responsibilities for higher education access.

Theoretical Framework: Evaluation Influence

It is well known that policy decisions are rarely made through the direct instrumental use of evaluation findings or report recommendations (Howlett & Giest, 2015). Weiss (1977) suggests that the use of research in policymaking is often “not deliberate, direct or targeted, but a result of long-term percolation of social science concepts, theories, and findings into the climate of informed opinion” (p. 534). Recommending the enlightenment model, Weiss encourages evaluators to consider conceptual uses of evaluation, including how controversial and challenging research and evaluation can be used to make policymakers challenge their assumptions and the status quo (Weiss, 1977). Through this work, Weiss touches on the fluidity and indirectness of evaluation use.

Beyond instrumental and conceptual forms of evaluation use, Knorr (1977) challenged the assumption that evaluation is used at all, arguing that for the most part, evaluation plays a legitimizing role when “data are used selectively and often distortingly to publicly support a decision that has been taken on different grounds or that simply represents an opinion the decisionmaker holds” (pp. 171–172). This legitimizing role corresponds to the symbolic use of evaluation, in which evaluation is used as a gesture (Alkin & King, 2018).

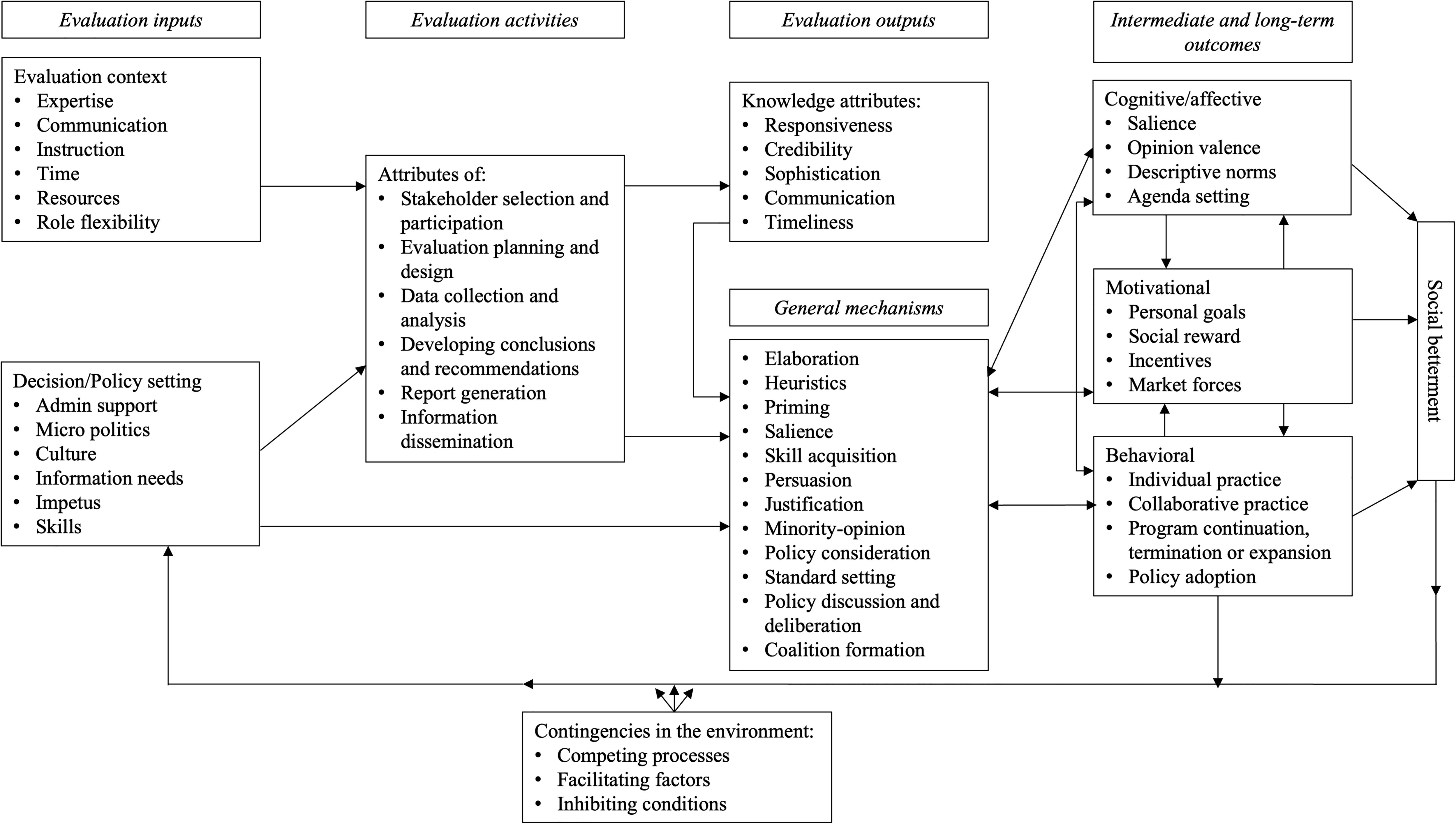

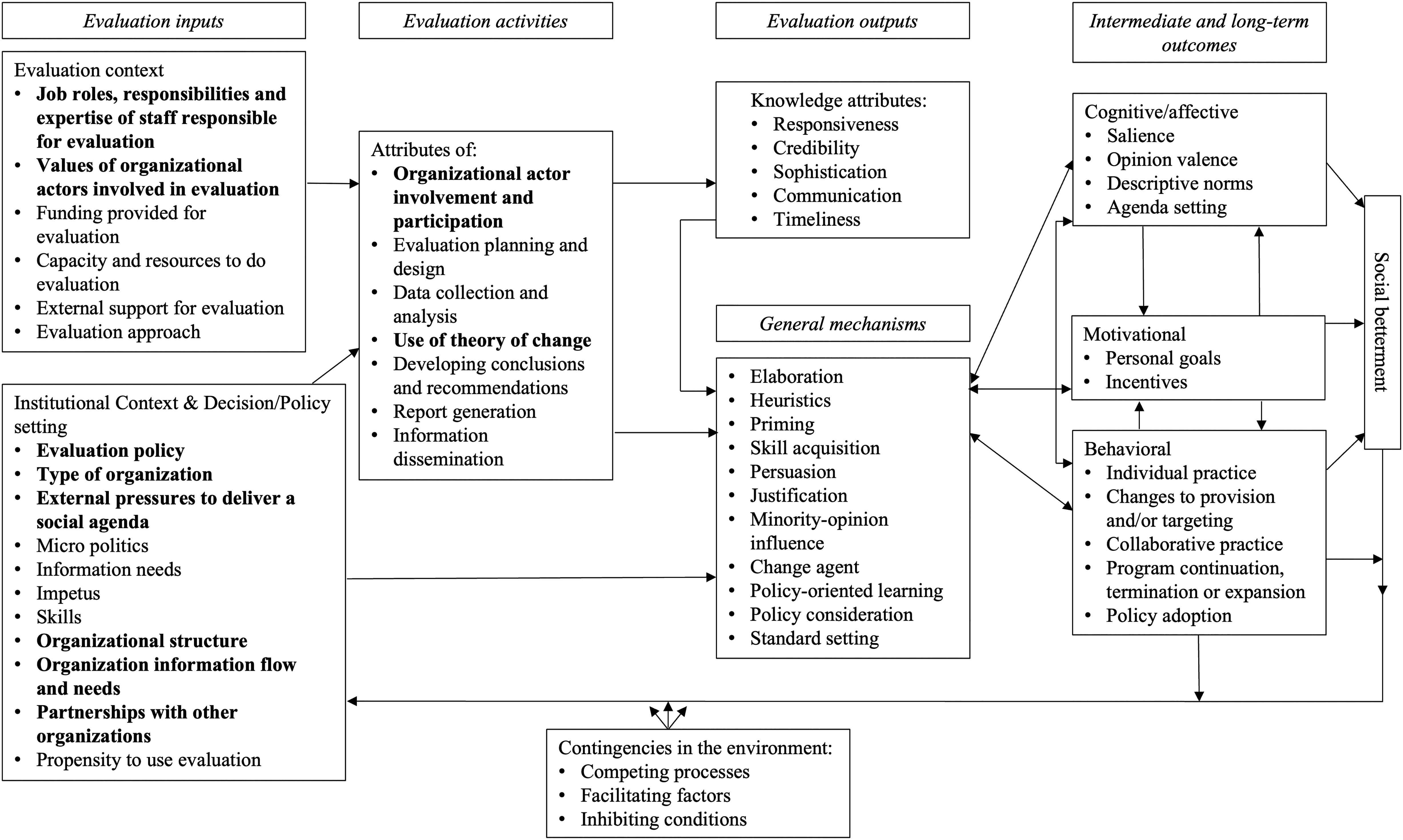

Rather than focusing on individual concepts and types of evaluation use, Kirkhart (2000) recommended the term evaluation influence because it better captures the multiple and gradual ways in which evaluation achieves impact. Similarly, Henry and Mark (2003b) suggest that definitions of evaluation use have left the field overgrown because these concepts are interrelated and overlapping. Henry and Mark (2003a) and, in a follow-up article, Mark and Henry (2004) built on Kirkhart's definition to develop a theory of change describing the potential pathways of evaluation influence (Figure 1).

Figure 1. Theory of evaluation influence.

Evaluation Inputs and Activities

Evaluation inputs, conceptualized broadly by Mark and Henry (2004) as the evaluation context and the decision-making/policy setting, shed light on an organization's capacity to do and use evaluation (Cousins et al., 2014). Understanding these inputs is especially important when evaluation is embedded within organizational practices, as it is in English higher education providers' evaluation of their access programs. The inputs, including organizational contexts and factors, provide the foundation necessary for different pathways of evaluation influence to occur (Mark & Henry, 2004).

Based on Leeuw and Furubo's (2008) definition of evaluation systems, evaluation for the policy of widening participation has become institutionalized within English higher education providers over the last twenty years. Since 2009, the internal evaluation of access programs has been a regulatory requirement for higher education providers as part of their reporting to the regulators of higher education: the Office for Fair Access (OFFA, 2004–2018) and, since 2018, the Office for Students (OfS). The epistemological perspective of evaluation for access has historically been rooted in an evidence-based policy framework and the belief that methodologies such as randomized controlled trials are the gold standard and should be used, whenever possible, to evaluate policies and programs (Crockford, 2020). For example, much of the evaluation of a flagship access program called Aimhigher did not use experimental methodologies capable of determining causal impact and was dismissed for lacking quality and rigor (Doyle & Griffin, 2012). However, some academics believe these assertions overlook the complex and emergent nature of the outcomes associated with access programs (Harrison, 2012). Indeed, some qualitative evaluation studies suggest that Aimhigher contributed to increases in attainment and progression (Passy et al., 2009). It has been argued that this perceived lack of rigorous evaluation contributed to the discontinuation of Aimhigher (Harrison, 2012). Nonetheless, higher education providers still implement activities similar to those delivered through Aimhigher, such as information, advice, and guidance; campus visits; and subject-related taster events (Moore et al., 2013).

Each provider's access and participation plan outlines its access activity and the evaluation of that activity, including its targets (the types and numbers of disadvantaged and underrepresented students it expects to access and succeed at the institution) and the investment it will make to deliver the plan (OfS, 2023). These plans allow higher education providers to translate national-level policy into their organizational contexts, establishing a shared organizational responsibility for delivering the agreed targets (Rainford, 2021). In addition, the evaluation of access activities has a degree of permanence, as the activities are delivered throughout the academic year and are subject to approval and monitoring by the regulators of higher education (OfS, 2023).

Overall, these contexts shape how higher education providers enact widening participation policy, including its evaluation. Since the policy was instigated by the New Labour government (1997–2010), evaluation at the organizational level has been largely influenced by evidence-based policymaking and by the principles underlying New Public Management and performance management, with their emphasis on setting and measuring targets (Crockford, 2020; McCaig, 2011). The regulator's published standards of evidence emphasize the types of evaluation that higher education providers should implement to determine “what works” in delivering on targets across the sector. These three types are (1) qualitative evaluation methods or a theory of change, (2) pre- and post-evaluations that do not establish causality, and (3) evaluation approaches that can establish causality, namely randomized controlled trials or quasi-experimental designs (OfS, 2019).

Individual, Interpersonal, and Collective Influences of Evaluation Practice

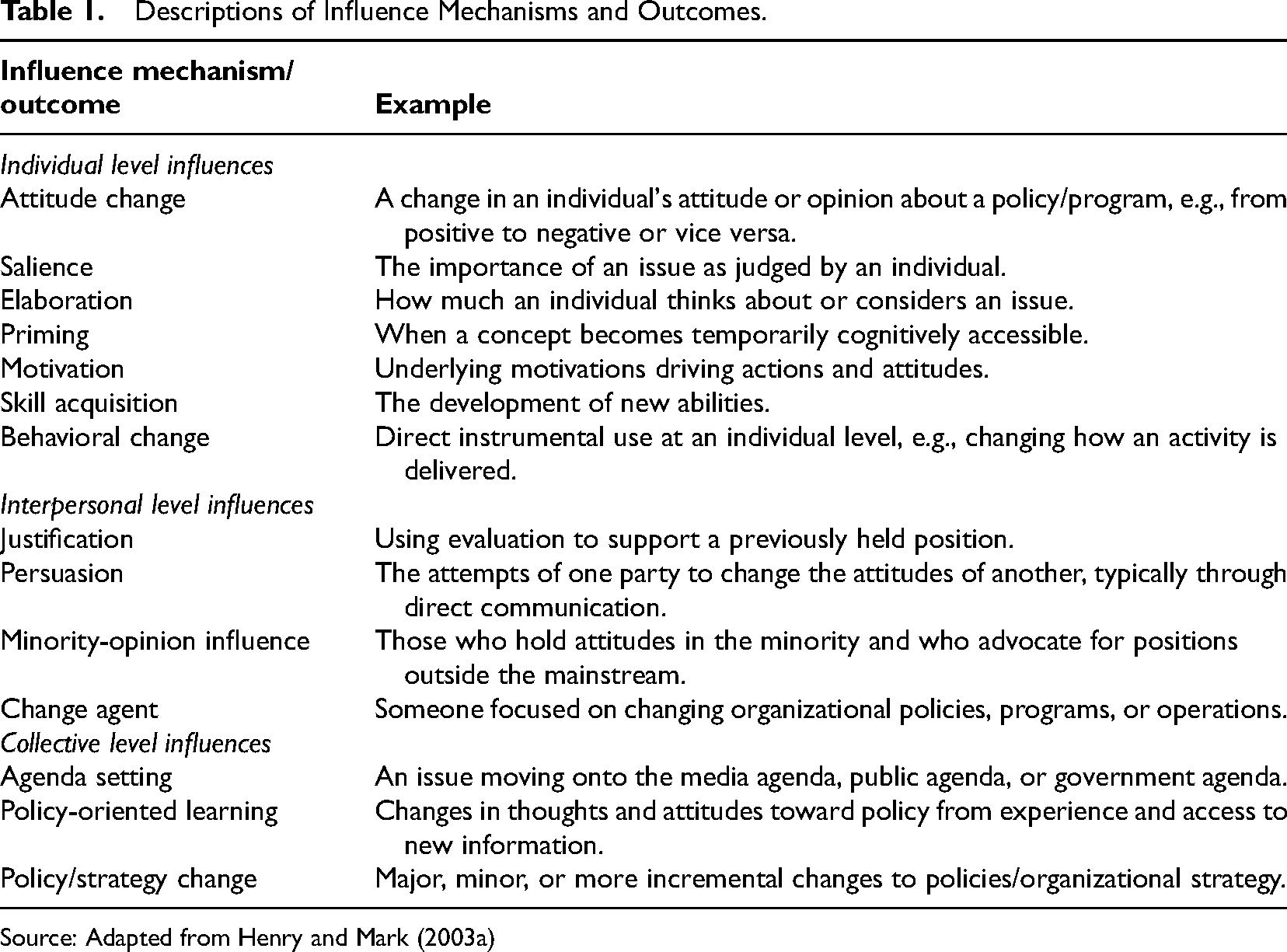

Mark and Henry (2004) distinguish evaluation influence mechanisms and outcomes based on whether they occur at the individual, interpersonal, or collective level. This section builds on the definitions in Table 1 to describe how mechanisms and outcomes at each level of influence can emerge within English higher education providers.

Table 1. Descriptions of Influence Mechanisms and Outcomes.

Source: Adapted from Henry and Mark (2003a)

Individual Level Influences

Individual-level influences, including elaboration, salience, and priming, are linked to attitude change: attitudes may change through the process of thoughtfully elaborating on information (Oliver, 2008) or because priming and salience bring a thought to the forefront of the mind (Fleming, 2011). Moreover, according to Fleming (2011), attitude changes produced through a thoughtful elaborative process are more likely to persist and to lead to changes in behavior. On the other hand, elaboration, priming, and salience can also strengthen already-held attitudes and beliefs (Barden & Tormala, 2014). When this is the case, evaluation can be used symbolically or as a means of legitimization.

Salience can arise through greater elaboration, through priming, or through attention to a particular issue presented in the media or through regulation. Thus, salience can contribute to agenda-setting at the collective level. For example, evidence published by Crawford and Greaves (2015) and UCAS (2015) highlighted to the regulator the underrepresentation of white working-class students in higher education. In 2017, the regulator released a topic briefing to inform higher education providers of the development of activities to support white working-class students (OFFA, 2017).

These descriptions of individual-level influences reflect the complexity of the pathways of evaluation influence. They are not unidirectional, and individual-level influences can trigger interpersonal and collective influences (Henry & Mark, 2003a; Mark & Henry, 2004). Moreover, individual-level influences can occur from process use, “stimulated not by the findings of an evaluation, but by participating in the process of evaluation” (Mark & Henry, 2004, p. 36).

Interpersonal Level Influences

The interpersonal level refers to change that occurs through interactions between individuals (Henry & Mark, 2003a). Mechanisms at this level include justification, persuasion, and minority-opinion influence.

Justification and persuasion can be supported through the individual-level mechanisms of elaboration and priming, particularly when evaluation is used to strengthen pre-held attitudes and beliefs (Henry & Mark, 2003a). These mechanisms emphasize the political aspects of evaluation and how evaluators develop persuasive arguments to convince policymakers to adopt their findings (Aston et al., 2022).

The next interpersonal level mechanism, minority-opinion influence, is most effective when the message is persistent, when the argument is strong and has a moral incentive, and when change is led by charismatic leadership. Thus, such influence may emerge over time as the opinion of the majority changes. In widening participation, minority-opinion influence has occurred through the deliberation and contestation of using “raising aspirations” as a key outcome of access programs (Harrison & Waller, 2018), which, according to Rainford (2023), positions young people in a deficit. Over time, practitioners and researchers involved in access programs have acted as change agents and challenged this viewpoint. Recently, the narrative has shifted to help young people realize their aspirations or raise their expectations of what they can achieve (Rainford, 2023).

Collective Level Influences

Finally, change at the collective level refers to modifications in the decisions and practices of organizations, brought about either directly or indirectly (Henry & Mark, 2003a). Historically, the media has influenced the policy agenda by supporting and reinforcing the widening participation discourse and its objectives for social mobility (Waller et al., 2014). For example, a report by the Social Mobility and Child Poverty Commission (2014) found that people in the most elite jobs were more likely to have attended Oxford or Cambridge (“Oxbridge”). This prompted the media, to varying degrees, to highlight issues related to black and minority ethnic (BAME) students accessing higher education (Martin & Levy, 2016). In 2018, the regulator released a topic briefing to support higher education providers in improving their practice to increase access and retention of BAME students (OFFA, 2018).

Despite a lack of evidence for direct influence, policy change has historically been seen as the expected end goal of evaluation (Henry & Mark, 2003a). Indeed, studies have shown that managers within organizations are more likely to use knowledge symbolically (Boswell, 2008). In the context of widening participation, McCaig (2015) asserts that institutional policy discourses have changed over time due to the increased marketization of higher education and guidance provided by the regulators.

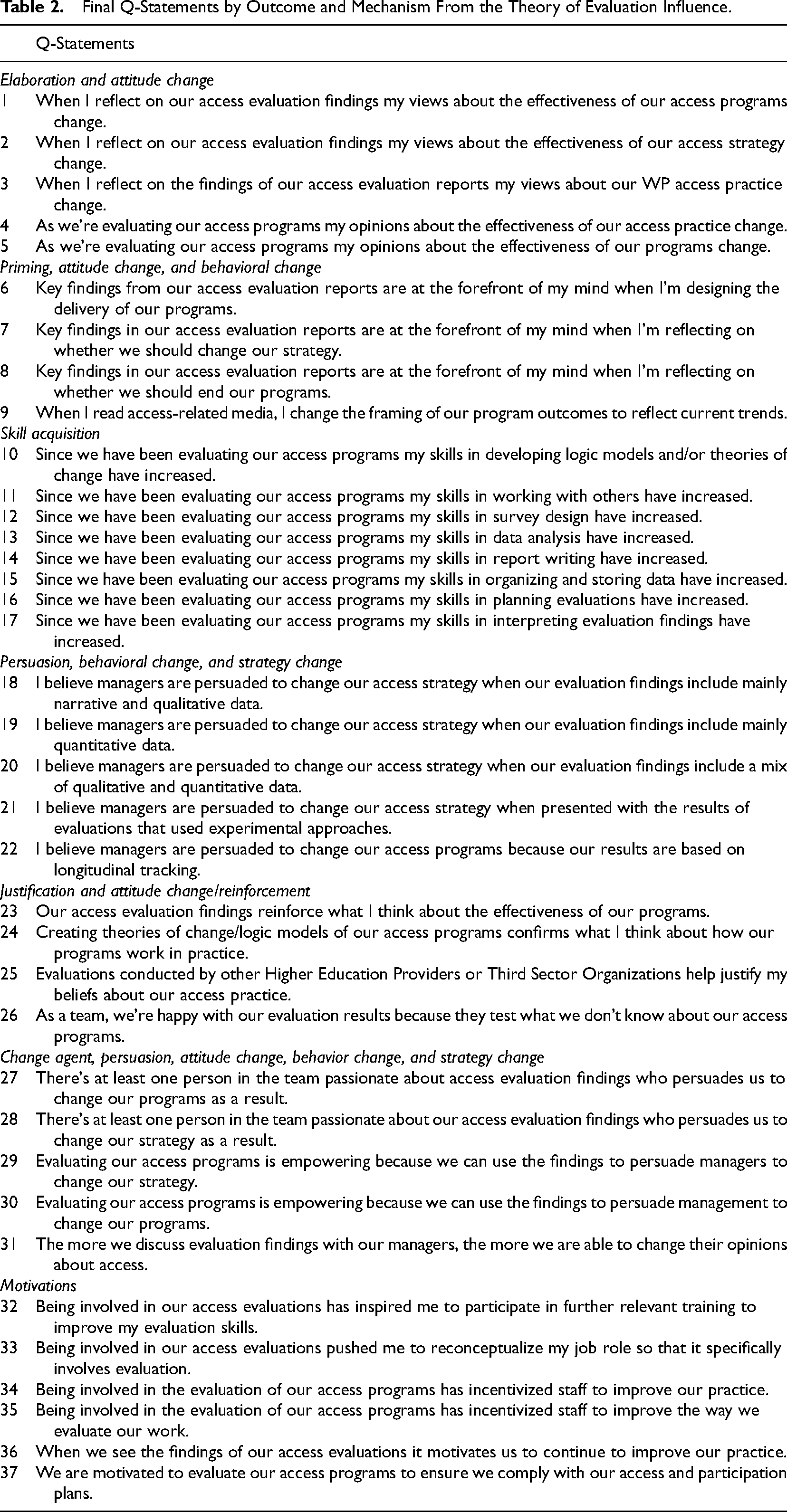

Q-Methodology

Q-methodology was chosen as the primary methodology of this study because it is rooted in the exploration of individual perceptions and subjective experience (Harris et al., 2021), and this study aims to explore staff experiences of evaluation and its influence on their practice and strategy decision-making. Rather than subjecting individuals to measurement, Q-methodology asks them to rank-order a set of statements about an issue or topic (the Q-sample); each participant's completed arrangement of the statements is known as a Q-sort. The Q-sample used in this study was designed in three phases. First, 71 Q-statements were drafted describing different potential pathways of evaluation influence. To ensure the statements reflected the subjective experiences of participants, the condition of instruction asked each participant to consider the statements in the context of their personal experience of evaluation. Second, to ensure readability, clarity, and representativeness, the statements were piloted with a small sample of practitioners, evaluators, and managers. Third, the statements were revised and reduced from 71 to a more manageable Q-sample of 37 (Table 2).

Table 2. Final Q-Statements by Outcome and Mechanism From the Theory of Evaluation Influence.

For data collection, EasyHTMLQ, online software for collecting Q-sort data (Banasick, 2021), was piloted with a small group of practitioners, evaluators, and managers involved in higher education access. Collecting Q-sort data online allowed participants to complete the sort on their own schedule, which was particularly convenient because data collection took place during the COVID-19 pandemic.

Instructions on how to complete the Q-sort procedure were provided through EasyHTMLQ (Banasick, 2021). Participants first sorted the statements into three piles labeled “most like my experience,” “neutral,” and “least like my experience,” according to how well each statement reflected their personal experience of evaluation within their institution. Next, participants placed the statements into a structured distribution ranging from most like my experience to least like my experience (Watts & Stenner, 2012). After completing the sort, participants were asked to explain, via an open-ended text box, why they placed particular statements as most and least like their experience. The data were downloaded as a text file and imported into KenQ for analysis (Banasick, 2019).
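The structured distribution in the second sorting step is a forced grid: each statement must be placed exactly once, and each column holds a fixed number of statements. The Python sketch below illustrates that rule for a 37-statement Q-sample. The column counts in `DISTRIBUTION` are a hypothetical quasi-normal grid, not the one used in this study, and the function names are illustrative only.

```python
from collections import Counter

# Hypothetical forced quasi-normal grid for a 37-statement Q-sample:
# column score (-4 = least like my experience, +4 = most like) -> number of slots.
# These counts are an illustrative assumption, not the study's published grid.
DISTRIBUTION = {-4: 2, -3: 3, -2: 4, -1: 5, 0: 9, 1: 5, 2: 4, 3: 3, 4: 2}
N_STATEMENTS = 37


def is_valid_qsort(qsort, distribution=DISTRIBUTION, n_statements=N_STATEMENTS):
    """Return True if a Q-sort (statement number -> column score) fills the grid exactly."""
    # Every statement 1..n must be placed exactly once.
    if sorted(qsort) != list(range(1, n_statements + 1)):
        return False
    # Each column must hold exactly the number of statements the grid allows.
    return dict(Counter(qsort.values())) == distribution


def example_qsort(distribution=DISTRIBUTION):
    """Build a trivially valid Q-sort by filling columns left to right with statements 1..n."""
    qsort, statement = {}, 1
    for score in sorted(distribution):
        for _ in range(distribution[score]):
            qsort[statement] = score
            statement += 1
    return qsort


if __name__ == "__main__":
    sort = example_qsort()
    print(is_valid_qsort(sort))  # True
    sort[1] = 0  # move one statement into the already-full neutral column
    print(is_valid_qsort(sort))  # False
```

Checking column counts rather than the order within a column reflects the fact that, in a forced distribution, statements sharing a column are treated as equally ranked.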

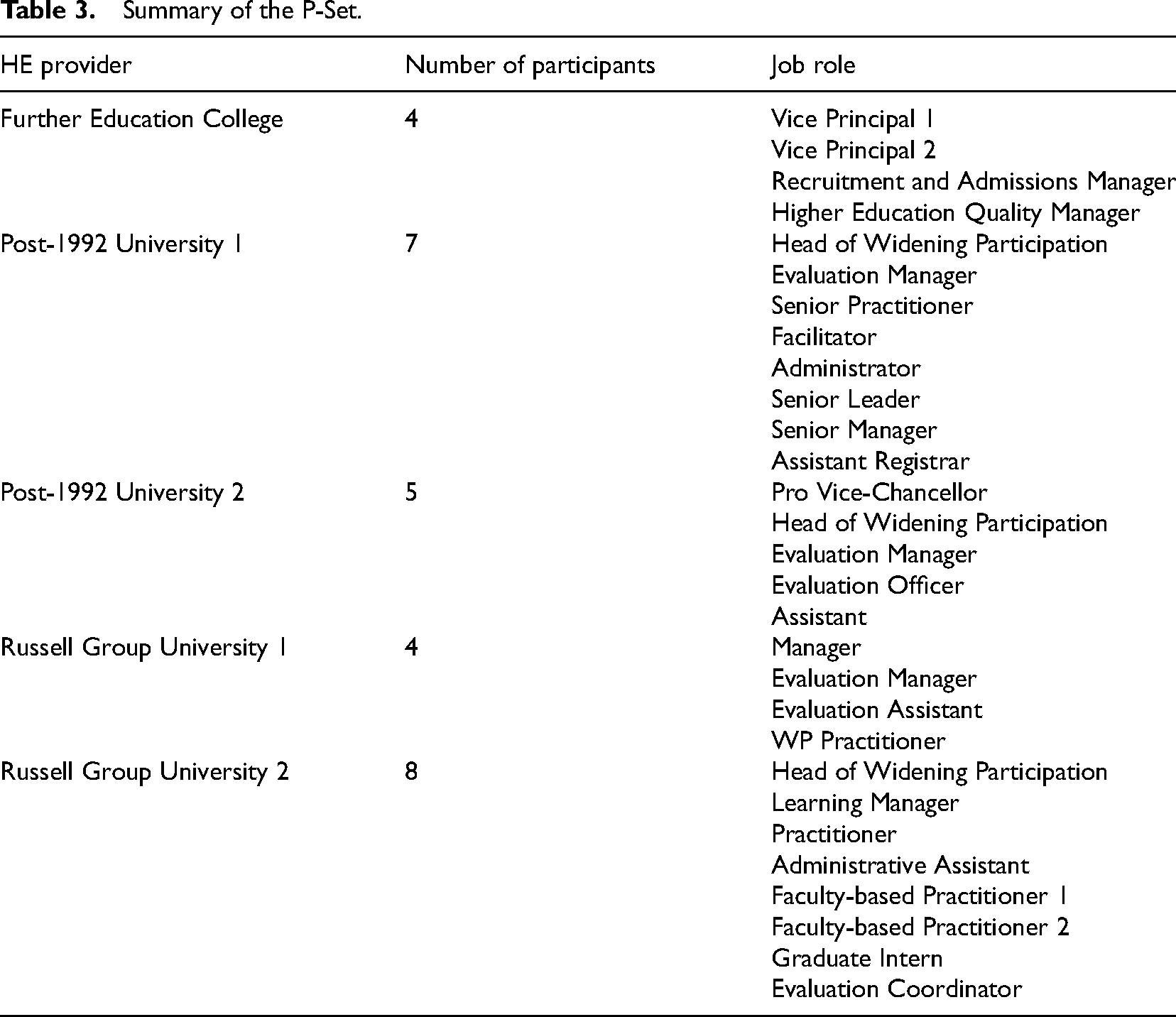

P-Set

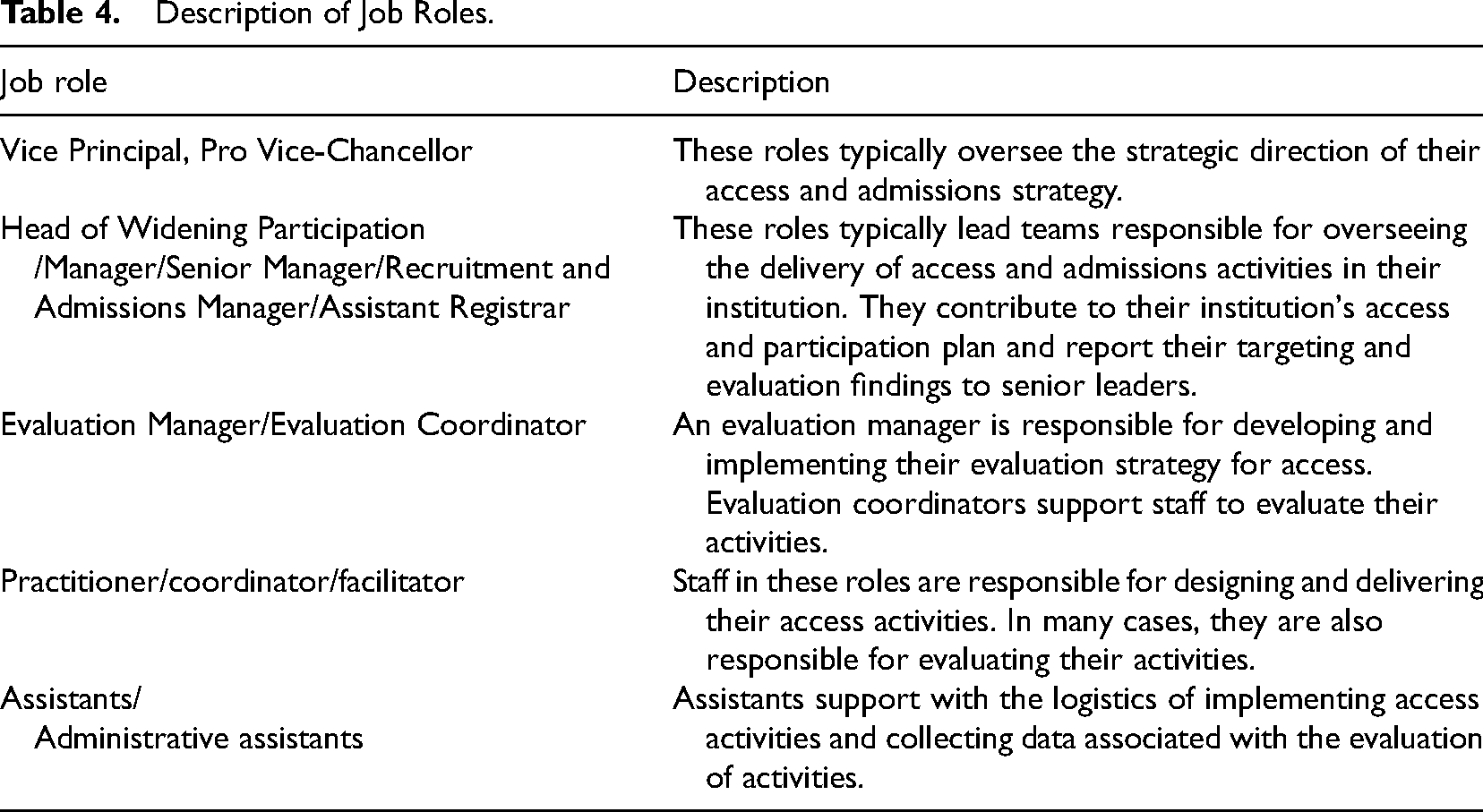

Referred to as the P-set in Q-methodology, the selection of participants for the study was strategic and purposeful to gain a variety of perspectives. This is because higher education providers differ depending on the social, environmental, and cultural factors that affect their approaches to their access programs (Greenbank, 2007). For example, Russell Group Universities, which are research-intensive universities (akin to the Ivy League), face heightened pressures to widen participation because they largely recruit students from high socio-economic backgrounds (Rainford, 2017). Consequently, Russell Group Universities tend to dedicate more resources but to a smaller proportion of target students than Post-1992 Universities and Further Education Colleges (FECs) (McCaig, 2016). Post-1992 Universities are modern institutions that typically recruit more students from local areas and low socioeconomic backgrounds (Chowdry et al., 2013). FECs also tend to recruit more students from local areas and low socioeconomic backgrounds and provide both vocational and higher education-level courses. Two research-intensive higher education providers in the Russell Group, two Post-1992, and one FEC were recruited for this study. In addition to sampling different higher education providers, participants were recruited to the study from various job roles related to access activity (Table 3). To maintain participant anonymity, some job roles have been generalized, e.g., practitioner, senior leader, and manager (Table 4).

Table 3. Summary of the P-Set.

Table 4. Description of Job Roles.

Data Analysis

Using KenQ (Banasick, 2019), each Q-sort was correlated with every other Q-sort, producing a correlation for each possible pair of participants; participants who sorted the statements similarly were positively correlated with one another (Brown, 1980). Next, principal components analysis was used to extract a small number of factors, each representing a group of similar viewpoints rather than an individual view. Following the initial factor extraction, varimax rotation and manual rotation were used to rotate the factors so that the Q-sorts loading onto each factor are as clearly distinguished as possible (Watts & Stenner, 2012). Finally, each factor was interpreted through its factor array. A factor array converts the normalized factor scores (z-scores) for the Q-statements into a single idealized Q-sort, presented as a Q-sort diagram, which identifies the statements most and least associated with the viewpoint the factor represents. Factor arrays were interpreted as distinctive shared viewpoints using the additional data collected through participants' qualitative descriptions of their Q-sorts (Albright et al., 2019; Watts & Stenner, 2012).
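The analysis steps above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not a reproduction of KenQ: the function names, the hypothetical 37-slot grid, and the 2.58/√N loading threshold (a common Q-methodology convention for flagging defining sorts at p < .01, which this study does not report using) are all assumptions. Only varimax is shown, because the manual rotation the study also applied is a judgment-based step. Factor scores use the weighting f / (1 - f²) for each defining sort, as described by Brown (1980), before conversion to z-scores and placement back into the grid.

```python
import numpy as np

# Hypothetical forced grid for 37 statements (column score -> number of slots).
# An illustrative assumption, not the study's published distribution.
DISTRIBUTION = {-4: 2, -3: 3, -2: 4, -1: 5, 0: 9, 1: 5, 2: 4, 3: 3, 4: 2}


def correlate_qsorts(sorts):
    """Pearson correlations between participants; `sorts` is (n_statements, n_participants)."""
    return np.corrcoef(sorts, rowvar=False)


def principal_components(corr, n_factors):
    """Unrotated loadings: leading eigenvectors scaled by the square roots of their eigenvalues."""
    eigvals, eigvecs = np.linalg.eigh(corr)  # eigh returns eigenvalues in ascending order
    top = np.argsort(eigvals)[::-1][:n_factors]
    return eigvecs[:, top] * np.sqrt(eigvals[top])


def varimax(loadings, max_iter=100, tol=1e-6):
    """Kaiser's varimax criterion, maximized with the standard SVD-based iteration."""
    p, k = loadings.shape
    rotation = np.eye(k)
    criterion = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        target = rotated ** 3 - rotated @ np.diag(np.sum(rotated ** 2, axis=0)) / p
        u, s, vt = np.linalg.svd(loadings.T @ target)
        rotation = u @ vt  # stays orthogonal, so communalities are preserved
        if s.sum() < criterion * (1 + tol):
            break  # the criterion has stopped improving
        criterion = s.sum()
    return loadings @ rotation


def significance_threshold(n_statements, z=2.58):
    """Loading needed for p < .01 under the convention SE = 1 / sqrt(N statements)."""
    return z / np.sqrt(n_statements)


def factor_scores(sorts, loadings_col, threshold):
    """Weighted sum of positively loading defining sorts, converted to z-scores."""
    defining = loadings_col > threshold
    f = loadings_col[defining]
    weighted = sorts[:, defining] @ (f / (1 - f ** 2))  # Brown-style weights
    return (weighted - weighted.mean()) / weighted.std()


def factor_array(z_scores, distribution=DISTRIBUTION):
    """Rebuild an idealized Q-sort: the lowest z-score goes to the most negative column."""
    columns = np.repeat(sorted(distribution), [distribution[c] for c in sorted(distribution)])
    array = np.empty(len(z_scores), dtype=int)
    array[np.argsort(z_scores)] = columns
    return array


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    grid = np.repeat(sorted(DISTRIBUTION), [DISTRIBUTION[c] for c in sorted(DISTRIBUTION)])
    # Synthetic data: six hypothetical participants sharing two underlying viewpoints
    # (the added noise means these are not strict grid placements).
    views = [rng.permutation(grid), rng.permutation(grid)]
    sorts = np.column_stack([views[i // 3] + rng.normal(0, 0.5, grid.size) for i in range(6)])
    rotated = varimax(principal_components(correlate_qsorts(sorts), n_factors=2))
    first = rotated[:, 0] if rotated[:, 0].sum() > 0 else -rotated[:, 0]  # factor signs are arbitrary
    z = factor_scores(sorts, first, significance_threshold(grid.size))
    print(np.round(rotated, 2))
    print(factor_array(z))
```

With N = 37 statements, the assumed threshold is about 0.42. Because varimax is an orthogonal rotation, each participant's communality (the row sum of squared loadings) is unchanged; rotation only redistributes variance across factors to make each Q-sort's alignment clearer.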

Results

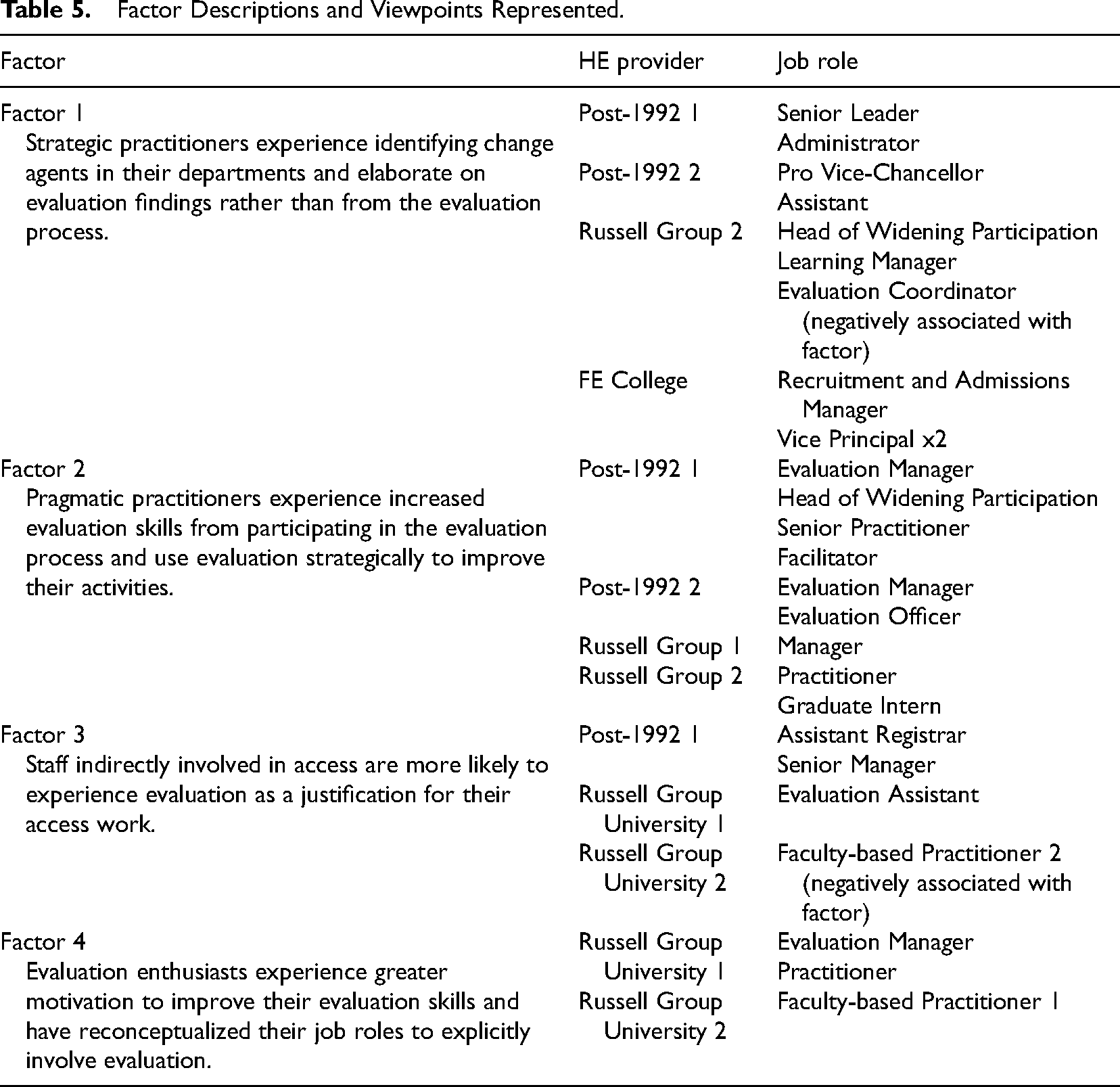

The factor analysis resulted in four factors, each representing distinctive experiences of potential pathways of evaluation influence (Table 5). In total, the Q-sorts of 26 of the 28 participants are associated with one of the four factors, with factor 2 accounting for the largest number of participants.

Table 5. Factor Descriptions and Viewpoints Represented.

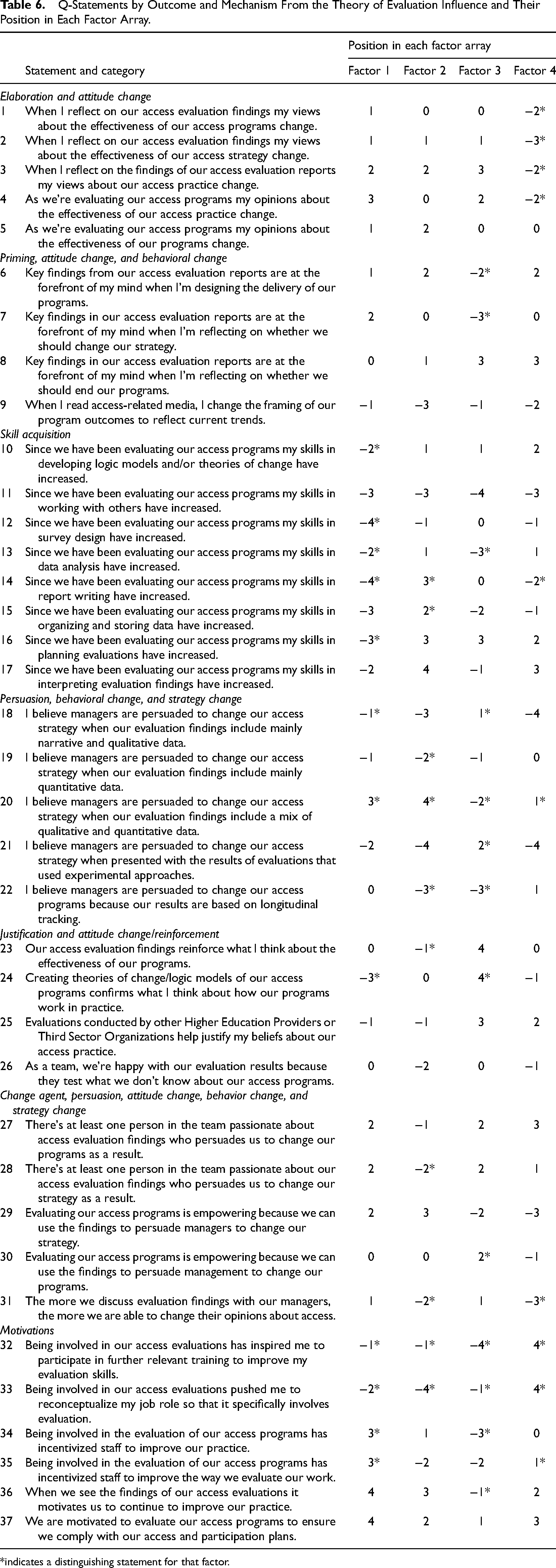

The factor arrays, including the position of each statement within each factor, are presented in Table 6 and described in detail in the subsequent sections.

Table 6. Q-Statements by Outcome and Mechanism From the Theory of Evaluation Influence and Their Position in Each Factor Array.

Note. Marked entries indicate a distinguishing statement for that factor.

Factor 1: Strategic Practitioners

Participants whose experiences align most with this factor are likely to be indirectly involved in the evaluation process within their institution. Seven of them work in managerial or senior leadership positions; of the other two, one is an Administrator and the other an Assistant.

Defining statements that are most like the experience of strategic practitioners include:

Statement 37: We are motivated to evaluate our access programs to ensure we comply with our access and participation plans
Statement 36: When we see the findings of our access evaluation, it motivates us to continue to improve our practice

Statements that are least like the experience of strategic practitioners include:

Statement 12: Since we have been evaluating our access programs, my skills in survey design have increased
Statement 14: Since we have been evaluating our access programs, my skills in report writing have increased

Strategic practitioners believe that evaluation findings motivate them to continue to improve their practice (statement 36,

Strategic practitioners are unlikely to experience evaluation influence through their involvement in the evaluation process, such as acquiring skills, including survey design (statement 12, distinguishing,

This factor represents the viewpoints of both administrative and managerial staff members, which suggests that experiences of evaluation influence are not predicated solely on job role and underlying professional values. For this factor, experiences of evaluation influence can also be shaped by how staff perceive their own involvement in the evaluation process and by their perceptions of their institution's underlying motivations for participating in evaluation.

Factor 2: Pragmatic Practitioners

The experiences represented by factor 2 are held by staff in managerial positions or practitioners responsible for developing and delivering programs and who perceive themselves to be actively participating in evaluation activities in their department. Defining statements that are most like the experience of pragmatic practitioners include:

Statement 17: Since we have been evaluating our access programs, my skills in interpreting evaluation findings have increased
Statement 20: I believe managers are persuaded to change our access strategy when our evaluation findings include a mix of qualitative and quantitative data

Defining statements that are least like the experience of pragmatic practitioners include:

Statement 33: Being involved in our access evaluations pushed me to reconceptualize my job role so that it specifically involves evaluation
Statement 21: I believe managers are persuaded to change our access strategy when presented with the results of evaluations that used experimental approaches

Pragmatic practitioners experience an increase in skills including interpreting evaluation findings (statement 17,

Indeed, pragmatic practitioners experience evaluation as an empowering tool to persuade management to change strategy (statement 29,

The findings from this factor illustrate the institutionalization of evaluation in this policy context. Although they do not feel the need to reconceptualize their job roles to specifically involve evaluation (statement 33, distinguishing,

Factor 3: Staff Indirectly Involved in Widening Participation

Participants whose viewpoints are associated with factor 3 include senior leaders and an evaluation assistant, all of whom are indirectly involved in both the implementation and evaluation of their institution's access programs.

Defining statements that are most like the experience of staff indirectly involved in widening participation include:

Statement 24: Creating theories of change/logic models of our access programs confirms what I think about how our programs work in practice
Statement 23: Our access evaluation findings reinforce what I think about the effectiveness of our programs

Defining statements that are least like the experience of staff indirectly involved in widening participation include:

Statement 11: Since we have been evaluating our access programs, my skills in working with others have increased
Statement 32: Being involved in our access evaluations has inspired me to participate in further relevant training to improve my evaluation skills

Unlike the other factors, for staff indirectly involved in widening participation, evaluation findings and the creation of theories of change reinforce and confirm what they think about the effectiveness of their programs (statement 23, distinguishing,

Although participants whose Q-sorts loaded onto this factor describe their lack of involvement in evaluation, they do receive evaluation reports. They perceive the process of elaborating on findings as generating a change in their views about their practice (statement 3,

There are some inconsistencies within the overall shared experience of staff indirectly involved in widening participation. On the one hand, evaluation is used to confirm their existing attitudes and beliefs about their institution's access programs. On the other hand, evaluation can also be used to change their viewpoints about their practice and whether to change programs. This illustrates the complexity of evaluation embedded within institutions: evaluation of the same policy can confirm pre-held beliefs and, at the same time, change opinions, particularly when it is routinized and conducted across all activities delivered within an academic year.

Factor 4: Evaluation Enthusiasts

As a result of their involvement in evaluation, participants whose viewpoints are best represented by factor 4 experience greater motivation to improve their evaluation skills and reconceptualize their role to specifically involve evaluation. Interestingly, all participants whose Q-sorts loaded onto this factor are from Russell Group Universities. This could be because these institutions tend to dedicate more resources to their access practice, but more research is required to understand why this pattern has emerged.

Defining statements that are most like the experience of evaluation enthusiasts include:

Statement 32: Being involved in our access evaluations has inspired me to participate in further relevant training to improve my evaluation skills
Statement 33: Being involved in our access evaluations pushed me to reconceptualize my job role so that it specifically involves evaluation

Defining statements that are least like the experience of evaluation enthusiasts include:

Statement 21: I believe managers are persuaded to change our access strategy when presented with the results of evaluations that used experimental approaches
Statement 18: I believe managers are persuaded to change our access strategy when our evaluation findings include mainly narrative and qualitative data

Evaluation enthusiasts are typically motivated to participate in more relevant training to improve their evaluation skills (statement 32, distinguishing,

From the perspective of evaluation enthusiasts, attitudinal change is most likely to be manifested in behavioral change via changes to programs (statement 8,

Like strategic practitioners, evaluation enthusiasts can identify change agents within their department, including staff who use evaluation to persuade them to change their access programs (statement 27,

Evaluation enthusiasts feel they experience increased skills because of their involvement in evaluation, including skills in interpreting evaluation findings (statement 17,

Consensus Across Factors

Participants across all factors are likely to be motivated to evaluate their activities so that they comply with their access and participation plans (statement 37). Moreover, factors 1, 2, and 4 generally perceive managers to be persuaded to change their strategy when evaluation findings include qualitative and quantitative data (statement 20). For example, the Pro Vice-Chancellor for Widening Participation at Post-1992 University 2, whose views are associated with factor 1, referred to their own experience overseeing the strategy behind access and participation, stating, “As a scientist, I value the information that both a qualitative and quantitative approach bring and am most convinced by such an approach.” Similarly, a Practitioner for BAME from Russell Group University 2, whose views are most aligned with factor 2, discussed how they need to be pragmatic about the type of data presented to senior management.

Statement 20 was also significantly associated with factor 3, but as least like its participants' experience. Rather, these participants generally experience managers as being persuaded by evaluations that use experimental designs (statement 21). These differences may reflect their indirect involvement in access and the influence of the OfS standards of evidence on staff perceptions of what constitutes quality evaluation.
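The distinguishing statements flagged in the factor arrays, and the consensus statements discussed in this section, rest on pairwise comparisons of factor z-scores. The Python sketch below assumes the convention described by Brown (1980) and implemented in common Q-methodology software: each Q-sort is assigned a reliability of 0.80, a factor's standard error shrinks as its number of defining sorts grows, and two factors differ on a statement at p < .01 when their z-scores differ by more than 2.58 standard errors of the difference. The study does not state its exact test, so these thresholds, the function names, and the example z-scores are assumptions for illustration.

```python
import itertools
import math


def factor_se(n_defining, sort_reliability=0.80):
    """Standard error of a factor's z-scores (SD = 1) given its number of defining Q-sorts.

    Assumes each Q-sort has reliability 0.80, so the factor's composite reliability is
    0.80 * n / (1 + 0.80 * (n - 1)) and SE = sqrt(1 - reliability).
    """
    reliability = sort_reliability * n_defining / (1 + sort_reliability * (n_defining - 1))
    return math.sqrt(1 - reliability)


def is_distinguishing(z_a, z_b, n_a, n_b, critical=2.58):
    """True if two factors' z-scores for one statement differ at p < .01."""
    se_diff = math.sqrt(factor_se(n_a) ** 2 + factor_se(n_b) ** 2)
    return abs(z_a - z_b) > critical * se_diff


def distinguishes_factor(factor, z_scores, n_defining, critical=2.58):
    """True if the statement separates `factor` from every other factor."""
    return all(
        is_distinguishing(z_scores[factor], z_scores[j], n_defining[factor], n_defining[j], critical)
        for j in range(len(z_scores))
        if j != factor
    )


def is_consensus(z_scores, n_defining, critical=2.58):
    """True if no pair of factors differs significantly on the statement."""
    return not any(
        is_distinguishing(z_scores[i], z_scores[j], n_defining[i], n_defining[j], critical)
        for i, j in itertools.combinations(range(len(z_scores)), 2)
    )


if __name__ == "__main__":
    # Hypothetical z-scores for one statement on four factors, six defining sorts each.
    n_defining = [6, 6, 6, 6]
    print(is_consensus([0.1, 0.3, -0.2, 0.0], n_defining))  # True
    print(distinguishes_factor(0, [1.5, 0.0, 0.1, -0.1], n_defining))  # True
```

Under these assumptions, six defining sorts give each factor a standard error of 0.20, so two factors' z-scores must differ by more than about 0.73 for the difference to count as significant.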

Discussion

These exploratory findings illustrate how staff responsible for access to higher education have experienced evaluation and its influence on their practice and strategy decision-making. Staff within higher education providers experience evaluation influence differently depending on their job role, their response to the evaluation system, and their motivations for being involved in evaluation activities. Each factor illustrates the varying pathways of evaluation influence at the individual, interpersonal, and collective levels. Figure 2 presents an adapted theory of evaluation influence that includes the additional inputs, contexts, and activities that this study highlights as important factors associated with evaluation influence; these are discussed next.

Figure 2. Adapted theory of evaluation influence.

Compliance and Adherence to the Evaluation System

While not explicitly covered in the theory of evaluation influence, organizational responsibility for evaluation is a core component of an evaluation system (Leeuw & Furubo, 2008). All factors experience motivation to evaluate their activities to comply with regulation. At the collective level, embedding evaluation activities into organizational structures can provide a sense of rationality and legitimacy (Raimondo, 2018). Staff associated with factor 3, while only indirectly involved in access programs, are more likely to use evaluation to confirm their pre-held beliefs about their institution's access work. Staff members therefore differ in how they enact and respond to the need to comply with regulation. In widening participation, policy enactment is known to be an interpretive and individualized experience, and practitioners enact policy differently depending on their values and prior experiences (Rainford, 2021). The findings suggest that the process of enacting a widening participation policy and its evaluation differs depending on whether staff work in operational or more strategic roles, and on the extent to which their roles involve active participation in both access programs and their evaluation.

Fulfilling Job Requirements

Staff with different job responsibilities experience evaluation influence differently, depending on their purpose for conducting evaluation. For example, participants whose views are associated with factor 2 have direct responsibility for delivering and evaluating access programs. Therefore, they experience individual- and interpersonal-level influences of evaluation that correspond with their job requirements. Evaluation empowers them to persuade management to change strategy through findings from mixed-methods evaluations.

Alternatively, as a way of adapting to the requirements of the internal evaluation system, factor 4 experiences individual-level influences, including reshaping job roles and building skills in evaluation. In their view, evaluation is unlikely to convince senior leadership to change strategy because there is often not enough data to make a persuasive argument. However, they understand that they can use evaluation strategically to make changes at the program level, a type of collective-level change.

Evaluation to Support Strategic Aims

The factors vary between staff who feel empowered to use evaluation to persuade management to change strategy (interpersonal-level influence), those who feel they can only use evaluation to make program-level change, and those who use evaluation to confirm their pre-held beliefs. On the one hand, this variation hints at a possible limitation of evaluation in the context of evaluation systems, which tend to provide information useful for informing everyday practice rather than for questioning the effectiveness of policies (Leeuw & Furubo, 2008). On the other hand, it highlights the complexity of the experiences and potential behaviors associated with evaluation and its influence (Mark & Henry, 2004). Organizational actors respond to evaluation in different ways. Some may feel empowered by evaluation to change strategy, while others may be more likely to use evaluation for symbolic purposes and be more prone to evaluation capture (Raimondo & Leeuw, 2021). These findings show that these behaviors can occur within the same organization and under the same policy requirements.

Conclusion

Limitations

This study is not intended to provide generalizable findings. Rather, the benefits of using Q-methodology include its ability to categorize experiences of evaluation influence and the specific contexts underpinning them. However, the Q-statements reflect only some influence pathways at the individual, interpersonal, and collective levels. This is because some types of influence are difficult to capture in a single statement in a way that is meaningful to a diverse range of participants within an organization. Additional data, including interviews with staff members and documentary analysis of their access and participation plans and evaluation reports, would have strengthened the study and allowed for a more holistic exploration of the theoretical framework. The flexibility for participants to complete the Q-sorting procedure online in their own time was a benefit of the study. However, future studies using these methods could consider scheduling video calls with participants to work through the Q-sort as they would in person. This approach would likely provide richer qualitative feedback because the researcher could probe and ask follow-up questions.

Implications

Despite these limitations, this study has contributed to our knowledge of evaluation when it is conducted internally in the context of an evaluation system. Unlike prior studies, which center on the experiences of evaluators and/or their stakeholders, this study categorizes organizational actors’ experiences of evaluation as they respond to regulatory pressures to evaluate their access programs. Using Q-methodology, this study identified four types of experience related to evaluation and its influence, from the perspectives of strategic practitioners, pragmatic practitioners, staff with indirect involvement in widening participation, and evaluation enthusiasts. Overall, organizational actors respond to evaluation differently depending on their job roles and responsibilities, how they react to the evaluation system and regulatory requirements, and their motivations for being involved in the evaluation of their access programs. These practices have broad implications for whether evaluation maintains existing inequalities or contributes to improving social conditions.

Moreover, with the increasing institutionalization of evaluation (Dahler-Larsen, 2012), the findings from this study may resonate with the experiences of organizational actors responsible for evaluation in similar contexts, such as other public sector domains with regulatory frameworks for evaluating policy. Drawing on these contexts, research on evaluation could benefit from continuing this conversation and examining the following research questions:

What factors influence how organizations enact evaluation policies and evaluation systems?

How do organizational actors respond to evaluation systems when evaluation is mandated as a regulatory requirement?

Addressing research questions such as these centers the experiences of the people who affect and are most affected by evaluations, thereby helping evaluators improve how they facilitate the enactment of evaluation systems. Moreover, these questions should encourage more research on evaluation that adopts frameworks explicitly considering organizational and political theories, to aid our understanding of the influences of evaluation. This type of research will be particularly useful if we are to support the enactment of evaluation systems that promote deeper levels of evaluative thinking and learning from evaluation.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Economic and Social Research Council.