Abstract

Evaluation competency frameworks across the globe regard evaluation approaches as important to know and use in practice. Prior classifications have been developed to aid in understanding important differences among varying approaches. Nevertheless, there is an opportunity for a new classification of evaluation approaches, in particular one that is practitioner-oriented, intended to guide decision-making in practice, and inclusive of all scholarship. The evaluation garden presented in this article begins to map approaches against eight dimensions of practice and situates them in their philosophical orientations and methodological dispositions. This allows for approach comparison, a more nuanced understanding of where they overlap and differ, and how and where they can be intentionally combined. The goal is to offer a visual classification that addresses prior criticisms, is useful to a wide range of audiences, and helps evaluation practitioners more easily integrate evaluation approaches in practice.

Evaluation approaches are essential to and for evaluation practice. From competency frameworks adopted by voluntary organizations for professional evaluation, we know that evaluation approaches are important for evaluators to know and use in practice. Knowledge of and ability to use evaluation approaches is explicitly identified, for example, in the professional practice domain of the AEA Evaluator Competencies (American Evaluation Association, 2018), in the evaluation knowledge domain of the Evaluation Capabilities Framework (European Evaluation Society, 2011), in the evaluation knowledge domain of the Framework of Evaluation Capabilities (UK Evaluation Society, 2012), and in the reflective practice domain of Competencies for Canadian Evaluators (Canadian Evaluation Society, 2018). The Canadian Evaluation Society even offers a 6-hour, self-paced course on the fundamentals of evaluation approaches (https://einstitute.evaluationcanada.ca/products/evaluation-theories-and-models/).

The importance of evaluation approaches is also reinforced by others within the evaluation ecosystem. The United Nations Evaluation Group's (UNEG's) Evaluation Competency Framework (2016) states that “a solid understanding of evaluation theory and practice in the context of United Nations evaluation practice, including the aims, processes and intended results of evaluation” is important for practice (p. 8, emphasis added). Recently, UNEG published a compendium of evaluation approaches, the first in a series of planned volumes on the subject. It is not unheard of for requests for proposals (RFPs) or terms of reference (TORs) issued by commissioners and funders to specifically request the use of a particular evaluation approach (UNEG, 2020).

We also know that to what extent, how, and to what end evaluation approaches inform practice is not fully understood (Christie & Lemire, 2019; Coryn et al., 2011; Hurteau et al., 2009). What evidence does exist suggests that the implementation of an approach does not always follow the prototype offered by developers (Alkin & Christie, 2023). One suggestion put forth for the seemingly weak link between evaluation approaches and practice is that many evaluator education and training efforts do not include serious attention to the subject (Dewey et al., 2008). Even if they do, learning how to use approaches as thinking tools to inform practice is even less frequently addressed (Bledsoe & Graham, 2005; Boyce & McGowan, 2019).

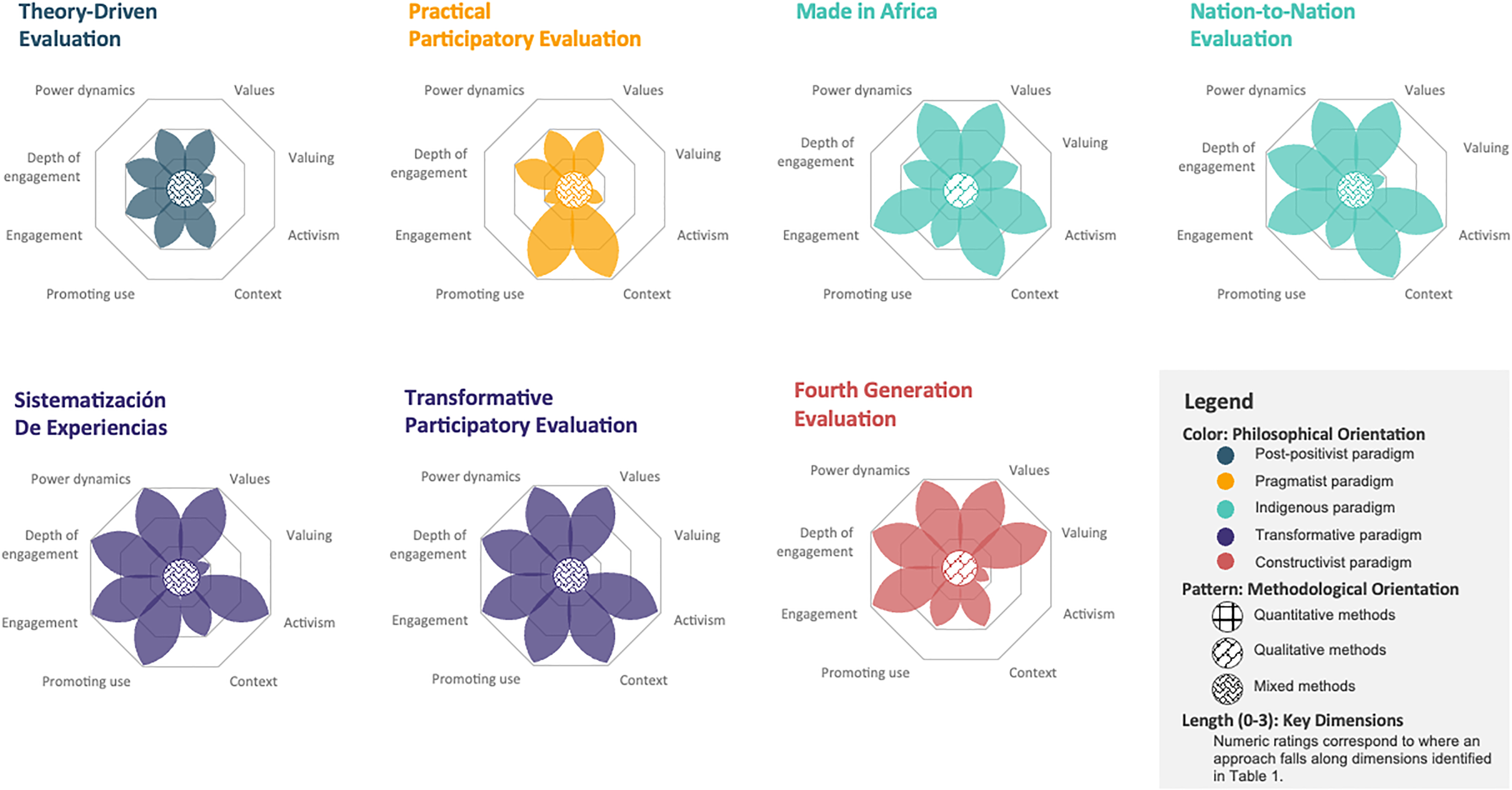

This article takes these barriers head-on by presenting a visual aid—an evaluation garden—that is intended to provide an organizing structure for evaluation approaches and to inform evaluation practice. The guiding question for this evaluation garden is: How do evaluation approaches compare in terms of dimensions that facilitate use and practical application? We examine eight dimensions of each approach for practical consideration. These include the approaches’ treatment of: (a) values; (b) valuing; (c) activism for social justice; (d) context; (e) promoting use; (f) who is engaged in the evaluation process and (g) at what depth; and (h) power dynamics in making evaluation decisions. Visually, we represent each approach as a flower and the eight dimensions as petals whose lengths correspond to each dimension's manifestation within the approach. Additionally, the evaluation garden sustains important consideration of paradigms and methods promulgated by particular approaches.

This article is a first introduction to the evaluation garden and illustrates its potential utility using seven approaches (fourth generation evaluation, made in Africa, nation-to-nation evaluation, practical participatory evaluation, theory-driven evaluation, transformative participatory evaluation, and sistematización de experiencias). It represents one part of our larger effort to classify a wider range of evaluation approaches. Our ultimate aim is to present a complete garden that captures historically prominent, underacknowledged, and emerging approaches to evaluation, and to do so in a way that facilitates understanding and use in practice.

Prior Scholarship on Classifications for Evaluation Approaches

The literature discusses evaluation approaches in three general ways: as prescriptions for what ought to be done in carrying out an evaluation; as thick descriptions of how sociological, political, or psychological perspectives apply to the conduct of evaluation; and as meta-theories about the nature of the discipline of evaluation itself (Scriven & Davidson, 2021). Here, we are concerned with the first and second of these meanings.

Evaluation approaches serve as a body of knowledge that provides foundational principles and practical guidance for program evaluation (Alkin, 2012; Mathison, 2004). This collection of literature provides a framework to understand what is unique about evaluation, what distinguishes it from other related fields and disciplines, and important elements to consider when conducting an evaluation (Montrosse-Moorhead et al., 2017; Shadish et al., 1991; Stufflebeam & Coryn, 2014).

In scholarship on classifications, three terms (approaches, theory, and models) are often used interchangeably. This is the case, for example, in iterations of the evaluation theory tree (Alkin, 2004, 2012; Alkin & Christie, 2023), in the articles included in a special section of Evaluation and Program Planning devoted to using logic models to map approaches as a way to facilitate comparisons (Alkin et al., 2013), and in major evaluation textbooks (e.g., Mertens & Wilson, 2018). In keeping with suggestions offered by others (Alkin, 2004; Fitzpatrick et al., 2023; Stufflebeam & Coryn, 2014), we prefer and use the term approach.

As evaluation grows as a discipline and practice, the number of existing approaches is proliferating. Acknowledging this variety, Miller put forth five criteria for guiding research on the evaluation approach-practice relationship, including operational specificity, range of application, feasibility of practice, discernible impact, and reproducibility (2010). However, approaches differ greatly in their empirical basis and completeness.

Correspondingly, multiple classification frameworks have been employed as organizational structures to communicate and teach diverse evaluation approaches. Defined by Merriam-Webster (n.d.) as a “basic conceptual structure,” frameworks are “a set of ideas, conditions, or assumptions that determine how something will be approached, perceived, or understood.” Each conceptual framework of evaluation presents assumptions of relationships and connections intended to aid a user's understanding and comprehension. Classification frameworks may be used not only to organize information, but also to purposefully provide an inherent analysis of or perspective on the information. For example, some classifications for evaluation approaches advance a historical understanding of approach development (Shadish et al., 1991), while others are based on philosophical orientations (e.g., Alkin, 2012). Each classification framework serves a unique purpose.

Existing classifications for evaluation approaches differ in their organization, formats, and assumptions. Some classifications for evaluation approaches are presented in the form of an object, such as a tree (e.g., Alkin, 2012; Mertens & Wilson, 2018) or a river (Azzam & Donaldson, 2015), while others mimic a historical timeline (e.g., Shadish et al., 1991). Each is organized around a motivating question and is particularly useful for specific purposes and audiences. Each classification also has limitations, leaving certain elements hidden or unaddressed. Given space, time, and perspective restrictions, no single classification for evaluation approaches can capture the totality of approaches. The dimensions of five highly cited classifications for evaluation approaches are summarized in Appendix A: Stufflebeam's coherence with evaluation definition classification (Stufflebeam & Coryn, 2014; Stufflebeam & Shinkfield, 2007), Fitzpatrick et al.'s (2023) evaluation approach orientation classification, Alkin's (Alkin, 2004, 2012; Alkin & Christie, 2023) evaluation theory tree, Mertens and Wilson's (2018) evaluation theory tree, and Shadish et al.'s (1991) evaluation theory stages classification. Other notable, albeit less cited, frameworks are not included in this appendix: Azzam and Donaldson's river (2015), Vaca's evaluation periodic table (2017), and Lemire, Peck, et al.'s evaluation tree in the policy analysis forest (2020).

Most of the existing classifications are focused on approaches created by white American, Western European, and Australian scholars. Some have added to existing classifications by incorporating missing approaches. For example, Thomas and Campbell (2020), building on the work of the No One Knows My Name project (Hood, 2001), explicitly identify hidden figures in their discussion of evaluation history and approaches. These hidden figures include U.S.-based African American and Black evaluators and evaluators who identify as women, all making laudable contributions to the field.

Nevertheless, there is an opportunity for a new classification for evaluation approaches, in particular one that is practitioner-oriented, intended to guide decision-making in practice, and inclusive of all scholarship. In contrast to current classifications for evaluation approaches, which often structure an understanding of the historical development and limited landscape of approaches, a practitioner-oriented classification would lead to easy comparison of dimensions across approaches and enable the weaving of multiple approaches in a single evaluation to maximize evaluation quality.

The Garden of Evaluation Approaches

Humans live by metaphors (Lakoff & Johnson, 2008). We experimented with several metaphors for this work and ultimately chose the metaphor of a garden of evaluation, with each approach represented by a flower. Prior published work to classify approaches inspired this decision. The garden metaphor was also stimulated by the work of Vidhya Shanker, Nicole Bowman, and Jara Dean-Coffey, who in presentations, discussions, or classes have proposed complex adaptive ecosystem classifications or metaphors to think about evaluation approaches (e.g., Shanker, 2023). Ideas, like pollen, spread, and we as a team of scholars have benefitted from prior work on evaluation approaches.

The flower metaphor, in our view, has several advantages. One: each petal stands for a particular dimension that distinguishes evaluation approaches in practice. Which dimensions are discussed and to what end are easily seen by the presence or absence of petals and by the lengths of petals. Two: the garden metaphor allows for flowers to evolve and change over time, making space for innovation, cross-pollination, and weaving. There is emerging work on the mixing of approaches. Bledsoe and Graham (2005), for example, offer a case example of how they combined elements of five evaluation approaches (empowerment evaluation, theory-driven evaluation, consumer-based evaluation, inclusive evaluation, and use-focused evaluation) to design and carry out an evaluation of an early childhood literacy program. Lemire and colleagues’ work on “theory knitting” shows how “one or more social science theories [can be used] as the conceptual grounding for both the design of the program and the program theory” and how social science theories and program theory can be used within a theory-driven evaluation approach (Lemire, Christie, et al., 2020). Our flower metaphor builds upon the idea that approaches can be mixed and can be useful for visualizing the influences of different approaches on practice. In a garden, new flowers can evolve, and new portions of the garden can be uncovered, e.g., other plants, insects that cross-pollinate, bushes, and weeds.

The evaluation garden described here is experiential, practical, and analytico-synthetic (Batley, 2014). It is experiential in the sense that it emerged from our experiences in teaching evaluation approaches in formal and informal settings. We have facilitated evaluation approach learning opportunities locally, nationally, and internationally—experiences ranging from bite-sized, hour-long sessions to multiday training opportunities facilitated at professional conferences and other events. In formal education settings, we have taught evaluation approaches as part of undergraduate and graduate coursework since 2005. The evaluation garden described here is intended to facilitate learning about differing approaches to evaluation as preparation for practice or to improve practice in dynamic and complex contexts.

The evaluation garden is practical in the sense that a key motivation for its development was to inform the practice of evaluation. One use for this new visualization is for instructors and trainers to engage learners of evaluation. We have successfully integrated this evaluation garden into a classroom exercise where students classify approaches according to their readings and interpretations of primary and secondary materials. This exercise aids students in identifying key dimensions that inform practice and comparing approaches. A second use for this new visualization is for practitioners to identify approaches that can be responsive to unique evaluation challenges and contexts, thus presenting solutions. It can also aid practitioners in effectively communicating with evaluation sponsors and audiences about the best approaches to choose and combine to optimize learning and improvement.

We have purposefully chosen to create a new visualization that is grounded in dimensions that make evaluation approaches unique, and that allows for a visual overview of approaches’ similarities and differences—a view that lets practitioners easily compare approaches across multiple dimensions. We perceive the easy comparability and visual exemplification of dimensions as core advantages that may facilitate learning and use. For all of these reasons, instructors, trainers, practitioners, learners, evaluation sponsors, and audiences may benefit from this visualization.

Lastly, this evaluation garden is analytico-synthetic. It is analytic in the sense that it aims to analyze a subject—in this case evaluation approaches—for use in sense-making and practice. In doing so, it synthesizes several key dimensions to fully describe and illuminate key aspects of evaluation practice. We describe each of these key dimensions in the next section.

Evaluation Flowers

Each evaluation flower consists of three layers: philosophical orientation (illustrated by color), methodological disposition (illustrated by center pattern), and key dimensions (represented by petals of different lengths; see Figure 1). The paragraphs that follow describe each of these.

Figure 1. The garden of evaluation approaches: A multidimensional mapping of the first seven flowers.

The philosophical orientations are based on those commonly discussed in evaluation literature (Alkin, 2012; Mertens & Wilson, 2018; Thomas & Campbell, 2020). Thus, the philosophies of social science or paradigms represented include post-positivism (teal), pragmatism/instrumentalism (yellow, herein referred to as pragmatism), constructivism/interpretivism (red, herein referred to as constructivism), and criticalism/transformatism (purple, herein referred to as transformatism). We also added a fifth orientation, Indigenous philosophy (cyan). While Indigenous philosophy is not yet considered mainstream in evaluation (Chilisa, 2019), we include this frame in light of the growing scholarship on and recognition of the contributions of First Nations peoples to the philosophy of science (Stevens, 2021; We All Count, n.d.).

Methodological dispositions, as traditionally categorized, relate to the extent to which a preferred research method is identified and described in the literature for a given evaluation approach. These research options broadly include quantitative, qualitative, and mixed methodologies (Mertens & Wilson, 2018; Thomas & Campbell, 2020). We made a deliberate decision not to include a fourth category called “transformative methods”; we elaborate on that decision in the text that follows.

There are many, many resources written on methodology, both within and outside of evaluation. Those that were most influential to our thinking were the writings of: Abbott and Abbott (2004); Harding (1986, 1987); Held (2019); Kemmis et al. (2014); Reason and Heron (2004); Romm (2015); and Schwandt (2015). Several key ideas emerged from engaging with these texts.

One: we agree with the general consensus in this set of writings that issues of methods, methodology, and epistemology have been intertwined, often confusingly so. Two: we find Harding's writing on whether there is a feminist method or methodology particularly persuasive, and recognize its implications for the broader question of whether there is a transformative method or methodology. Three: we agree with Abbott's observation that there is no one way to categorize methods or methodologies and no widespread agreement about the appropriate dimensions on which to array them. Thus, we have tried to represent our thinking as transparently as possible, understanding that others may have made different decisions or have divergent ways of thinking about and representing these ideas. We do not claim that ours is the way to view methods, but rather one way that helps illuminate ideas about evaluation practice.

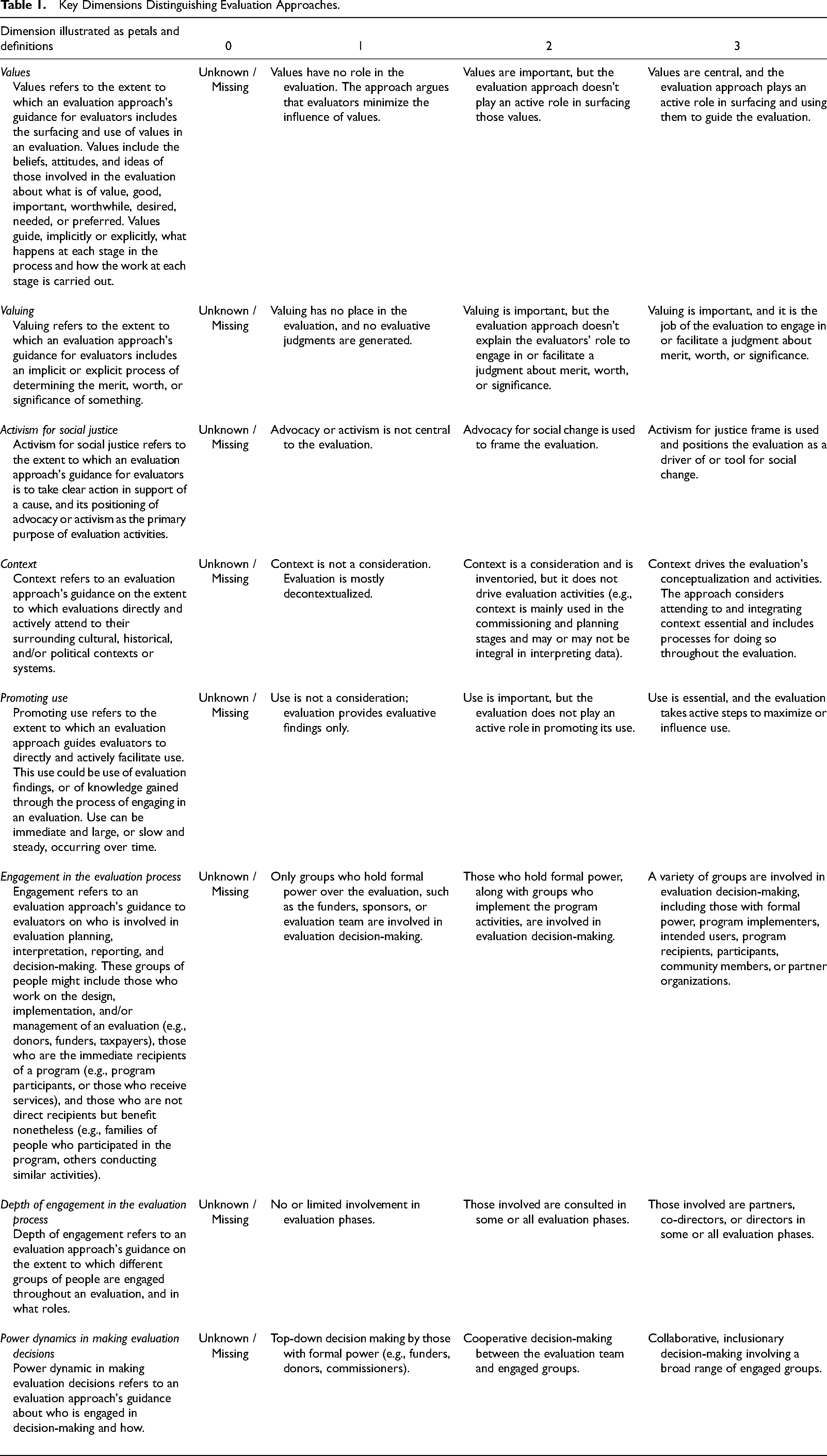

Returning to our evaluation flower, the eight petals, which represent the key dimensions of an evaluation approach, include: (1) values; (2) valuing; (3) activism for social justice; (4) context; (5) promoting use; (6) who is engaged in the evaluation process, and (7) at what depth; and (8) power dynamics in making evaluation decisions. Table 1 includes each of these eight dimensions illustrated as petals, a definition for each, and the rubric used to summarize each dimension for a particular approach.

Table 1. Key Dimensions Distinguishing Evaluation Approaches.

With the first two petals, the flower represents the approaches in terms of values (first petal) and valuing (second petal). Originally, we used one dimension (meaning one petal) to represent both values and valuing. However, after extensive discussion and use, we separated these dimensions to explicitly differentiate the identification of values from the evaluation-specific valuing process. Here, values are understood as the beliefs, attitudes, and ideas of those involved in the evaluation about what is of value, good, important, worthwhile, desired, needed, preferred, and so on (e.g., House & Howe, 2000; Julnes, 2012). Valuing is understood as the process of combining data and standards to reach evaluative conclusions (Gullickson, 2020). Within these petals, we differentiate approaches in which values and valuing have no place (a rating of 1), from those in which values and valuing are important but not necessarily described (a rating of 2), from those in which values and valuing are of central importance (a rating of 3). Guba and Lincoln's (1989) fourth generation evaluation is an example of an approach in which values and valuing are central.

The third petal, activism for social justice, expresses the extent to which an evaluation approach has social justice concerns at its core. An approach that is traditionally classified as an objective strategy for evaluation (e.g., a causal inference approach) would be characterized by its lack of consideration of social justice concerns, represented by a rating of 1. An approach that is advocacy-oriented in that it supports advocacy for social change, but that does not take clear steps toward direct activism, would receive a rating of 2. Approaches with a rating of 3 on this petal fully integrate issues of activism as a core component. Social justice concerns in evaluation were first discussed by Barry MacDonald (1976) and Ernest House (1980), and now include a wide range of approaches, such as critical race theory, feminist evaluation, queer theory, disability theory, transformative evaluation, empowerment evaluation, participatory evaluation, deliberative democratic evaluation, and equity-focused evaluation, to name but a few.

Scholarship on the importance of context in evaluation practice (Rog et al., 2012), including scholarship by Indigenous authors (Chilisa, 2019), influenced the fourth petal, context. Our classification distinguishes approaches that are decontextualized (these approaches receive a rating of 1); from approaches where context is inventoried or described, but it is not used as a major driver of evaluation activities (e.g., theory-driven evaluation; these approaches receive a rating of 2); from others where context is a major driver of evaluation procedures (e.g., made in Africa) and where attending to and integrating context is essential (these approaches receive a rating of 3).

The fifth petal, promoting use, acknowledges the long history and dedication of evaluation to use (King & Alkin, 2019). Here we distinguish approaches by how they characterize the need to encourage and facilitate use. At a rating of 1, approaches do not focus on use at all. At a rating of 2, approaches might acknowledge the importance of use but do not prescribe actions to go beyond providing information. At a rating of 3, approaches take active steps to maximize or influence use. The most widely known and influential approach related to use is Patton's utilization-focused evaluation, in which intended users and uses of an evaluation are engaged from the beginning (Patton & Campbell-Patton, 2021).

The next three petals were influenced by Cousins and colleagues’ work on participation in evaluation (Cousins & Chouinard, 2012; Cousins & Whitmore, 1998). The sixth petal, engagement in the evaluation process, shows who is included in the evaluation. Cousins and Whitmore's (1998) scholarship frames this issue as being on a continuum from only primary users being engaged to all legitimate groups being engaged. We, on the other hand, differentiate approaches in which only groups who hold formal power over the evaluation are involved (these approaches receive a rating of 1); approaches in which groups who hold formal power and groups who implement the program activities are involved (these approaches receive a rating of 2); and approaches in which a variety of groups are involved, including those with formal power, program implementers, intended users, program recipients, participants, community members, or partner organizations (these approaches receive a rating of 3). Youth-participatory evaluation is an example of an approach that engages a wider set of groups (Montrosse-Moorhead et al., 2019).

The seventh petal illustrates the depth of engagement in the evaluation process. Our classification distinguishes approaches in which those involved have no or limited involvement in any phase of the evaluation (these approaches receive a rating of 1) from approaches in which those involved are consulted in some or all phases (these approaches receive a rating of 2) and from approaches in which those involved are partners or co-directors in some or all phases of an evaluation (these approaches receive a rating of 3). Goal-free evaluation is an example of an approach where groups have no or limited involvement throughout the evaluation (Scriven, 1973).

The eighth petal, power dynamics in making evaluation decisions, centers on who controls what is done during the evaluation (process) and how it is done (conduct). Cousins and Whitmore (1998) defined it as being on a continuum from researcher-controlled to practitioner-controlled. In contrast, we differentiate approaches in which those with formal power retain decision-making authority (these approaches receive a rating of 1), from those that allow for cooperative decision-making between the evaluator and engaged groups (these approaches receive a rating of 2), and those that are truly collaborative, with inclusionary decision-making involving a broad range of engaged groups (these approaches receive a rating of 3). Fetterman et al. (2017) show, for example, that evaluator control is characteristic of collaborative approaches to evaluation, joint control is characteristic of participatory approaches, and control by others (staff, participants, community, etc.) is characteristic of empowerment approaches.
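For readers who wish to work with the rubric programmatically, the eight petals and their three-level ordinal scale can be held in a small data structure. The following is a minimal sketch and not part of our published materials: the class name `Flower`, the field names, and the validation logic are illustrative assumptions. Only the eight dimension names and the 1-to-3 scale come from the text.

```python
from dataclasses import dataclass

# The eight petal dimensions named in the text, in order.
# The identifier spellings are assumptions made for this sketch.
DIMENSIONS = (
    "values",
    "valuing",
    "activism_for_social_justice",
    "context",
    "promoting_use",
    "who_is_engaged",
    "depth_of_engagement",
    "power_dynamics",
)


@dataclass(frozen=True)
class Flower:
    """Hypothetical container for one approach's petal ratings."""

    approach: str
    ratings: dict  # dimension name -> ordinal rating (1, 2, or 3)

    def __post_init__(self):
        # Every flower must be rated on exactly the eight dimensions.
        missing = set(DIMENSIONS) - set(self.ratings)
        extra = set(self.ratings) - set(DIMENSIONS)
        if missing or extra:
            raise ValueError(
                f"missing={sorted(missing)}, unexpected={sorted(extra)}"
            )
        # Each petal length is an ordinal rating of 1, 2, or 3.
        for name, value in self.ratings.items():
            if value not in (1, 2, 3):
                raise ValueError(
                    f"{name}: rating must be 1, 2, or 3, got {value!r}"
                )


# Placeholder ratings for illustration only; NOT the published ratings.
example = Flower("hypothetical approach", {name: 2 for name in DIMENSIONS})
```

Rejecting ratings outside 1 to 3 mirrors the decision that every petal rests on the same ordinal scale.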

The Garden of Evaluation Approaches: Seven Flowers Up Close

To create a practical resource based on this conceptual classification system, we began to create evaluation approach flowers based on the approach descriptions in the literature. In this section, we first lay out how we decided which approaches to map first in the evaluation garden. Next, we describe our process for generating ratings. Finally, we share our flowers for seven approaches and focus the discussion on what our evaluation garden makes visible.

How Approaches Were Selected

The landscape of evaluation approaches is proliferating, and while we extensively cover a vast selection of evaluation approaches in our formal and informal teaching, the small size of our team did not allow for a full mapping of all extant approaches at this time. Therefore, we needed to make decisions about which approaches to map first. Five aspects, considered jointly, guided our decision about which approaches to select. One: we knew that our garden metaphor needed to work equally well for approaches developed early on in the field's history and contemporary approaches. Two: we wanted to choose approaches that varied in terms of their philosophical foundations and preferred research methods, building on Mertens and Wilson's (2018) work. Three: we wanted to select approaches that were anchored around methods, values, use, or social justice, as had been done in other classifications (e.g., Alkin, 2012; Mertens & Wilson, 2018). Four: we were aware of ongoing critiques of existing classification systems as being exclusionary (Thomas & Campbell, 2020). Five: practically, we wanted to select at least a few approaches that most evaluation practitioners would know. Together, these considerations led us to choose seven approaches to start creating flowers in our evaluation garden (listed alphabetically): (1) fourth generation evaluation, (2) made in Africa, (3) nation-to-nation evaluation, (4) practical participatory evaluation, (5) theory-driven evaluation, (6) transformative participatory evaluation, and (7) sistematización de experiencias.

How Ratings Were Generated

Before we rated the selected approaches, we made several decisions. The first was which version of the approach to rate. We were aware that approaches developed early on had been refined over time. Because we were interested in visualizing approaches as they are currently understood rather than their historical evolution, we opted to use the most recent writing of each particular approach.

We also needed to decide which types of sources we would use to develop our ratings and subsequent evaluation flowers. There has been much writing on these approaches, encompassing not only the perspectives of those who created the approaches, but also others’ understanding and interpretation of them (e.g., Mertens & Wilson, 2018; Shadish et al., 1991), and we needed to decide if we would include these writings in our analysis. Grounded in our guiding purpose to develop a visualization of approaches as promulgated by their major authors, we pulled only from primary sources as we generated the ratings.

Originally, we had tried to include petals for the philosophical foundation and methodological disposition of each approach. However, upon looking at our rating rubric, we noticed that we had an issue with mixing levels of measurement (i.e., some ratings were ordinal; others were nominal). To fix this, we made several changes to our rating rubric. One: all petals would be based on a rubric with an ordinal scale. Two: the philosophical foundation and methodological disposition of each approach would be incorporated differently. The color of the flower would now reflect the underlying philosophical foundation of the approach (teal represents approaches within a post-positivist frame, yellow represents approaches within a pragmatist frame, red represents approaches within a constructivist frame, purple represents approaches within a transformative frame, and cyan represents approaches within an Indigenous frame). The pattern within the central disc of each flower would now reflect the methodological disposition promoted by the approach. Approaches favoring quantitative methods are visualized by a disc filled with a large grid, inspired by the graph paper often used in mathematics. Approaches favoring qualitative methods are visualized by a disc filled with diagonal brick, inspired by the bricolage concept used in qualitative methods. Approaches favoring mixed methods are visualized by a disc filled with a woven pattern, inspired by the weaving of methods.
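The separation of nominal attributes (color and disc pattern) from ordinal petal ratings can be illustrated with a short sketch. The enum and function names below are hypothetical and chosen only for illustration. The color and pattern assignments are those described in the preceding paragraph.

```python
from enum import Enum


class Orientation(Enum):
    """Philosophical foundation (nominal), shown as flower color."""

    POST_POSITIVIST = "teal"
    PRAGMATIST = "yellow"
    CONSTRUCTIVIST = "red"
    TRANSFORMATIVE = "purple"
    INDIGENOUS = "cyan"


class Disposition(Enum):
    """Methodological disposition (nominal), shown as disc pattern."""

    QUANTITATIVE = "large grid"
    QUALITATIVE = "diagonal brick"
    MIXED = "woven"


def flower_style(orientation, disposition):
    """Return the two nominal visual attributes of a flower.

    Hypothetical helper: the petal lengths (ordinal ratings) are
    deliberately kept out of this function.
    """
    return {
        "petal_color": orientation.value,
        "disc_pattern": disposition.value,
    }


# An approach in a post-positivist frame that favors mixed methods.
style = flower_style(Orientation.POST_POSITIVIST, Disposition.MIXED)
```

Keeping color and pattern as categorical labels, rather than as petals, is what avoids mixing levels of measurement in the rubric.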

Once these decisions were made, we each selected approaches to review and identified primary sources to read. To document and make transparent why we were rating each approach the way we were, we created a handout for each approach. Each handout included our eight dimensions, ratings, and evidence from primary sources used to justify the ratings. We used these ratings to create the flower for each approach, and we included each approach's flower at the top of its handout. Below the rating rubric on each handout, we also included information on steps for implementing the approach in practice, our critical reflection on the philosophical foundation of the approach, a list of references we read and used to create the ratings, and a list of additional readings. Final versions of the rating handouts, which present a summary of evaluation approaches as currently represented in the literature, are available online at https://osf.io/mkxgc/.

What Is Visible Across Seven Approach Flowers

Figure 1 illustrates the seven flowers that we have mapped to date in our evaluation garden. A close-up of each flower can be viewed online at https://osf.io/mkxgc. Several important ideas for practice are illuminated through our multidimensional mapping.

At first glance, it is evident that no flower looks the same as any other. Each evaluation approach is different in practice, and in the evaluation garden these differences are immediately visible. In the following we discuss differences according to each dimension.

As shown, the evaluation garden has flowers in each of the five philosophies or paradigms. For example, theory-driven evaluation represents the post-positivist paradigm; practical participatory evaluation is pragmatist; fourth generation evaluation reflects constructivism; sistematización de experiencias and transformative participatory evaluation represent transformatism; and nation-to-nation evaluation and made in Africa reflect the Indigenous paradigm. Particularly interesting here is the example of practical participatory evaluation and transformative participatory evaluation. These two approaches are often discussed in tandem, but our garden illuminates that, philosophically, the two are not located in the same paradigm. Moreover, the two flowers match one another in only three of their eight petals (context, promoting use, and valuing).

Additionally, extant evaluation literature often frames (post-)positivism as preferring quantitative methods, constructivism as preferring qualitative methods, pragmatism as preferring mixed methods, and transformatism as preferring transformative methods (Mertens & Wilson, 2018). Our framework demonstrates that, in practice, these distinctions do not hold as neatly in evaluation as they do in the social sciences. For example, theory-driven evaluation is rooted in a post-positivist frame and at the same time advocates for the use of mixed methods. Made in Africa is rooted in an Indigenous frame and also positions qualitative methods as being preferred. As another example, while nation-to-nation evaluation and made in Africa share the same philosophical foundation (Indigenous), they differ in their preferred methods, as evidenced by different discs at the center of each flower. On the other hand, both sistematización de experiencias and transformative participatory evaluation are rooted in transformatism and both advocate for the use of mixed methods. In short, our garden adds nuance to the conversation about how the philosophies of science literature show up in evaluation approaches.

Focusing on the petals also highlights important similarities and differences. Activism for social justice is either central (a score of 3) or not central (a score of 1) for the approaches included in this paper. For example, theory-driven evaluation, practical participatory evaluation, and fourth generation evaluation do not position advocacy or activism as central to the evaluation. The remaining approaches (nation-to-nation evaluation, sistematización de experiencias, transformative participatory evaluation, and made in Africa) take the opposite position: advocacy or activism is central.

Context inventories are important in all approaches we reviewed; none of the approaches included in our list are decontextualized. Context is particularly essential throughout the evaluation process within practical participatory evaluation, nation-to-nation evaluation, transformative participatory evaluation, and made in Africa. While theory-driven evaluation, fourth generation evaluation, and sistematización de experiencias inventory contextual aspects of the evaluation object, in these approaches context does not serve as a driver of all aspects of the evaluation as it does in the other approaches.

Promoting use is essential, to some extent, in all approaches included in this article. Use is a central feature of practical participatory evaluation, sistematización de experiencias, and transformative participatory evaluation. All of these approaches discuss the active role the evaluation must play in promoting use. Theory-driven evaluation, fourth generation evaluation, nation-to-nation evaluation, and made in Africa position use as important, but the evaluation does not play an active role in trying to maximize use.

Values have a role in all approaches included in this paper. Values are particularly central in fourth generation evaluation, nation-to-nation evaluation, sistematización de experiencias, transformative participatory evaluation, and made in Africa. In these approaches, values are explicitly surfaced and used throughout the evaluation process. On the other hand, theory-driven evaluation and practical participatory evaluation discuss the importance of values, but not how to surface and use them.

Valuing is not addressed in the literature of three of the approaches discussed here. Another three approaches suggest that valuing has no place in evaluation. Only fourth generation evaluation explicitly discusses the importance of valuing and that it is the job of the evaluation to facilitate an evaluative judgment. The remaining approaches either take the position that evaluative judgments are not generated under the purview of the evaluation (theory-driven evaluation, nation-to-nation evaluation, sistematización de experiencias), or they offer no commentary on valuing at all, meaning that their position is unknown (practical participatory evaluation, transformative participatory evaluation, made in Africa).

Engagement in the evaluation process is important in many approaches. Fourth generation evaluation, nation-to-nation evaluation, sistematización de experiencias, transformative participatory evaluation, and made in Africa all take the position that a variety of groups are involved in evaluation decision-making, including those with formal power, program implementers, intended users, program recipients, participants, community members, and/or partner organizations. Theory-driven evaluation takes a narrower position, arguing that those who hold formal power, along with groups who implement the program activities, ought to be involved in evaluation decision-making. Practical participatory evaluation takes the narrowest position, including only groups who hold formal power over the evaluation, such as the funders, sponsors, or evaluation team, in evaluation decision-making.

All approaches discussed here call for some depth of engagement in the evaluation process. Fourth generation evaluation, nation-to-nation evaluation, sistematización de experiencias, and transformative participatory evaluation include those involved as partners, co-directors, or directors in some or all evaluation phases. On the other hand, theory-driven evaluation, practical participatory evaluation, and made in Africa consult those involved in some or all evaluation phases.

Finally, despite being rooted in different philosophical foundations, fourth generation evaluation, nation-to-nation evaluation, sistematización de experiencias, transformative participatory evaluation, and made in Africa all hold the same position on the power dynamics involved in making evaluation decisions. They all advocate for a collaborative, inclusionary decision-making process involving a broad range of engaged groups. In contrast, theory-driven evaluation and practical participatory evaluation advocate for cooperative decision-making between the evaluation team and engaged groups. Notably, none of the approaches discussed here suggest a top-down decision-making process only.
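The petal-by-petal comparison above can also be checked mechanically. The following Python sketch is illustrative and rests on stated assumptions: the dimension names and the short position labels are our shorthand for the qualitative descriptions in this section, not the ordinal scores from the rating rubric, and the `matching_petals` helper is hypothetical. It encodes only practical participatory evaluation and transformative participatory evaluation and lists the petals on which the two hold the same position.

```python
# Positions paraphrased from the dimension-by-dimension discussion above.
# The labels are shorthand for this sketch, not the rubric's ordinal scores.
PETALS = {
    "practical participatory evaluation": {
        "activism for social justice": "not central",
        "context": "essential throughout",
        "promoting use": "central, active role",
        "values": "discussed, not surfaced",
        "valuing": "no commentary",
        "breadth of engagement": "formal power holders only",
        "depth of engagement": "consulted",
        "power dynamics": "cooperative",
    },
    "transformative participatory evaluation": {
        "activism for social justice": "central",
        "context": "essential throughout",
        "promoting use": "central, active role",
        "values": "explicitly surfaced",
        "valuing": "no commentary",
        "breadth of engagement": "variety of groups",
        "depth of engagement": "partners, co-directors, or directors",
        "power dynamics": "collaborative",
    },
}


def matching_petals(first: str, second: str) -> list[str]:
    """Return the dimensions on which two approaches hold the same position."""
    a, b = PETALS[first], PETALS[second]
    return [dimension for dimension in a if a[dimension] == b[dimension]]


matches = matching_petals(
    "practical participatory evaluation",
    "transformative participatory evaluation",
)
print(matches)  # ['context', 'promoting use', 'valuing']
```

Under this encoding, the two participatory approaches agree on three of the eight petals, which is consistent with the observation made earlier in this section.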

Conclusions

In this final section, we turn our attention to the advantages we see in this new visual aid for categorizing evaluation approaches. We also discuss potential uses of the evaluation garden. With any classification system, there are challenges, and ours is no exception. We highlight these challenges and conclude with opportunities for further development.

Advantages and Opportunities

There are several advantages of our evaluation garden metaphor and corresponding flowers. One is that, to the best of our knowledge, our classification is the first to call attention to and visualize the multidimensional nature of practice. While it is true that other classifications do talk about more than one aspect of evaluation practice, none have incorporated that information into their corresponding classifications or visualizations.

The nascent evaluation garden is the only classification that includes nation-to-nation evaluation and made in Africa approaches. While it is true that one other classification system previously included sistematización de experiencias (Carden & Alkin, 2012), the most recent iteration excludes it (Alkin & Christie, 2023). This makes the evaluation garden the only classification where it is currently included. Collectively, these purposeful additions highlight how the evaluation garden is responsive to criticisms of other classifications as being exclusionary, especially for approaches developed by evaluators of color and women and approaches developed outside of American, Western European, and Australian contexts (Shanker, 2023; Thomas & Campbell, 2020).

By taking this approach, it becomes easier to make observations about several approaches, including those that have similar names (e.g., practical participatory evaluation and transformative participatory evaluation). We highlight two important observations from our evaluation garden to further illustrate this point.

It is commonly understood that valuing is fundamental to evaluation practice (Fournier, 2005; Gates & Schwandt, 2023). Of the approaches included in our garden, only one (fourth generation evaluation) provides an explicit discussion of valuing in evaluation. The rest either provide no statement at all (practical participatory evaluation, transformative participatory evaluation, and made in Africa), or they state that valuing has no place in the evaluation, and they shy away from generating evaluative judgments (theory-driven evaluation, nation-to-nation evaluation, and sistematización de experiencias). This observation was unexpected. Our view is that this observation is deeply problematic and has implications for evaluation practice and education, and for evaluation theoreticians. There is some literature supporting the claim that valuing is an understudied area, fraught with conceptual confusions, and that it is not being adequately taught in evaluation programs (Ozeki et al., 2019). Even those who disagree will surely see value in this observation; it warrants further work.

Another observation when looking across the garden is that when it comes to the issue of activism for social justice, approaches either fully embrace it or take a position that it is not central to the work of evaluators. There are no approaches in our current garden that take an “advocacy stance,” which some might frame as a middle ground. We recognize that discourse on an evaluator's role in advancing social justice is ongoing (Neubauer et al., 2020), and the evaluation garden adds an important observation to this debate.

Beyond these two examples of observations that come out of our evaluation garden, we also contend that comparability is especially helpful for new and emerging evaluators and for seasoned evaluators wishing to learn about newer approaches to practice. Moreover, by anchoring the evaluation approach flowers to detailed handouts for each approach, the classification provides users with clearly conceptualized, literature-based, empirically derived, and visually accessible summaries of nuanced approaches to evaluation.

Potential Uses

Recognizing that the final evaluation garden we are in the process of mapping is intended to be inclusive of all possible approaches, there are several potential uses. Using the garden, the flowers and summaries of evaluation approaches as currently represented in the literature (see handouts posted at https://osf.io/mkxgc/), users will also be able to have thoughtful conversations about which approaches to use, and whether and how to combine approaches. For example, users will be aware of what they might miss by opting for one choice over another. It might spur discussions about what works best for the social context in which their evaluation work is occurring. If they created their own flowers based on these discussions, it would allow for greater transparency about where mixing or weaving is occurring. Our hunch, based on anecdotal evidence, is that evaluation practitioners view approaches as “tools” and use aspects of different approaches that work well in a particular context. So our visualization can both help guide those conversations and help evaluators be transparent about their decisions.

We also believe the garden metaphor will be helpful in evaluation education and training efforts. Instructors may be better able to showcase the variability of practice using the garden. Even when instructors cannot present every approach in a context, the garden metaphor can assist in building awareness around other ways to approach evaluation practice. Alternatively, instructors might have students try to create their own evaluation flowers and associated handouts based on close readings of relevant texts. Those offering professional development likewise could facilitate the creation of each practitioner's evaluation flower.

Researchers could use our evaluation garden as a conceptual framework for research focused on evaluation practice and approaches. For example, scholars could create flowers that represent the idealized version of an approach (its prototype) versus what it looks like when applied in the real world. Similarly, there is an opportunity to add dimensions (i.e., additional petals) to the visualization based on new (or newly reviewed) evaluation literature. Furthermore, scholars could map the evolution of an approach across time. They could use it to map how practitioners make decisions about how to choose, and if applicable, combine approaches.

Finally, visualizing approaches based on extant literature identified gaps in the scholarship for particular approaches, reflected by missing petals. These present opportunities for scholars to elaborate and expand discussions on existing approaches. In essence, our visualization might also inspire evaluators to build upon extant literature. For example, missing petals provide opportunities for scholarship that addresses an approach's stance on a particular dimension. Similarly, practitioners might be inspired to write about how they addressed an approach's missing petal in practice by intentionally combining several approaches. Either way, there is still much work to do.

Challenges

A novel way of mapping and classifying approaches is not without its challenges. One challenge is deciding when the framework is ready to share. We debated how lush our garden needed to be before it was shared more widely. Ultimately, we decided that we had done enough for the field to see our garden and consider its merits, usefulness, and practicality. At the same time, we acknowledge there is more work to do: not all extant approaches are mapped. We see this garden as part of our research agenda and plan to add more flowers over time to surface new observations. For example, use was essential, albeit to varying degrees, across all approaches included in this article. Will this observation hold across a wider set of mapped approaches? We also invite other scholars to help us more fully map the garden of evaluation and the flowers within it.

We are also still wrestling with several assumptions embedded in our classification system. One is that we have used a Western frame to anchor our flowers. We are engaged in conversations about what these flowers would look like if they were anchored by other frames. We are grappling with what it means to be us (three white women, two from the US and one from Germany) and to do this work. Related, but separate, because all three of us are educators (two are tenured faculty, and one builds others' evaluation capacity through a federally funded evaluation center), we are also thinking about what it means to use a Western frame in the training of evaluators. For example, one of us routinely teaches international students who bring a variety of backgrounds and experiences into the classroom (Schröter, 2022). In this context, is a Western frame rooted in the philosophy of science the right one to use? If we believe that there is a case for using a Western frame, then what is that case? Alternatively, if we believe that there is a case for using a different frame, what frame makes sense, and what does it illuminate that was previously hidden?

We are also still wrestling with some of the petals. For the context petal, we still are not sure if culture should be considered part of context (as it is in the visualization's current iteration), or else be called out alongside context so the petal is renamed “context and culture,” or become its own separate petal. We are also considering adding a petal to capture the primary purpose of the evaluation and another that shows the timing at which an approach is considered the most useful. We are not yet sure whether and how to add these.

What's Next

We hope our framework will inspire evaluation practitioners to think about how evaluation approaches guide their work, along with the benefits and limitations of creating hybrid flowers from two or more approaches. We also see our evaluation garden and its flowers as a natural evolution from prior classification systems, one that we hope others will welcome.

Our next steps are to continue mapping out the evaluation garden. We are currently working on creating the flowers for adaptive evaluation, outcome harvesting, success case method, and realist evaluation. Future work will balance mapping traditional (e.g., utilization-focused evaluation, empowerment evaluation) and contemporary approaches (e.g., youth-participatory evaluation). Longer term, our vision is for the garden to be inclusive of all extant approaches to evaluation, with flowers and the larger ecosystem (e.g., weeds, climates) included. As the garden continues to be mapped, we will share it through articles, conference presentations, and learning events. Perhaps our work will inspire other evaluation scholars to map out their own gardens or flowers.

Supplemental Material

Supplemental material, sj-pdf-1-aje-10.1177_10982140231216667 for The Garden of Evaluation Approaches by Bianca Montrosse-Moorhead, Daniela Schröter, and Lyssa Wilson Becho in American Journal of Evaluation

Footnotes

Acknowledgments

We thank Brittany Hernandez for her support in early stages of this project.

Author Contribution

Bianca Montrosse-Moorhead: Conceptualization; Methodology; Formal analysis; Investigation; Data curation; Writing—Original draft; Writing—Review and editing; Visualization; Supervision; Project administration. Daniela Schröter: Conceptualization; Methodology; Formal analysis; Investigation; Data curation; Writing—Original draft; Writing—Review and editing; Visualization; Project administration. Lyssa Wilson Becho: Conceptualization; Methodology; Formal analysis; Investigation; Data curation; Writing—Review and editing; Visualization; Project administration.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
