Abstract

Evaluation is essential to achieving program outcomes, especially when stakeholders engage with evaluation and make use of the findings. Both of these activities require evaluation capacity that might not be present in community-based organizations. In this paper, we describe how community-university partnership models can support evaluation capacity building (ECB). The basic framework for our ECB initiative was a semester-long, master's-level university course in which 5–6 community partners worked with small groups of 3–4 students to design an evaluation plan. We used mixed methods to assess (1) whether organizations implemented the evaluation plans developed in the course; (2) how organizations used the findings; and (3) what evaluation skills participants continued to use after the course ended. We found that organizations that implemented their evaluation plans gained intended outcomes of ECB, such as improving practice and communicating with stakeholders. These results suggest that community-university partnerships for developing ECB can be effective.

Evaluation has much to offer non-profit organizations (NPOs). It can improve organizations’ abilities to assess the impact of the interventions they deliver and may lead program processes to be more effective, efficient, and less costly (Rossi et al., 2019). It can be used to examine and describe effective interventions for replication elsewhere, and can generate knowledge that will improve programs, initiatives, and organizations for future participants, thus contributing to the organization's quality and adaptability (Rossi et al., 2019). When focused on process questions such as fidelity to intervention models, evaluation can facilitate higher-quality implementation, which improves program outcomes (Ditty, 2013; Sexton & Turner, 2010). Furthermore, NPOs are increasingly called upon to demonstrate outcomes to external entities (Carnochan et al., 2014; Ebrahim & Rangan, 2010) and are often required to conduct evaluation in order to qualify or compete for funding (Ostrower, 2005; Thomson, 2010). Not surprisingly, then, evaluation is one of the most commonly identified needs of NPOs participating in community–university partnerships (Ahmed et al., 2016).

At the same time, designing, implementing, and reporting evaluation can be a challenge for NPOs that lack the time, staff, expertise, and/or confidence to conduct evaluation (Carnochan et al., 2014; Kwon et al., 2012). Festen and Philbin (2007) cite three main reasons that organizations hesitate to conduct evaluations: fear and apprehension (of conducting evaluation itself and of what they might find), lack of resources to support evaluation, and the belief that evaluation is an “add-on” that detracts from the organization's primary mission. Alternatively, Liket et al. (2014) found that many organizations are doing evaluation, but “the utilization of these evaluations is often low and frequently results in organizations finding themselves ‘drowning in data’ that do not contribute to their strategic decision making” (p. 172). In each of these cases, improving knowledge, resources, and attitudes/beliefs about evaluation has the potential to enhance evaluation use and increase the likelihood that its benefits will be realized (Preskill & Boyle, 2008; Stevenson et al., 2002).

Evaluation Capacity Building

In recent decades, evaluation capacity building (ECB) has emerged as a sub-field of evaluation. Labin et al. (2012) define ECB as “an intentional process to increase individual motivation, knowledge, and skills, and to enhance a group or organization's ability to conduct or use evaluation” (p. 308). Organizations that have participated in ECB initiatives report improvements in knowledge and skills related to evaluation, as well as the development of tools and resources to support evaluation, uptake of evaluation practices, and increased belief that evaluation is valuable for the organization (Akintobi et al., 2012; Frantzen et al., 2018).

The intended outcomes of ECB efforts range from preparing organizations to work with an external evaluator to equipping organizations to conduct independent evaluations (Stockdill et al., 2002). In most cases, however, one of the primary goals of ECB is to produce a “cognitive shift” wherein organizations become more likely to trust in and adapt to evaluation findings (Fierro, 2012; Taut, 2007). More broadly, ECB initiatives can contribute to the practice and acquisition of “evaluative thinking,” a mindset that includes inquisitiveness, trust in the value of evidence, ability to identify assumptions, using reflection to deepen understanding, and linking decision making with action (Archibald, 2021; Buckley et al., 2015).

ECB can be accomplished through a variety of strategies including training, technical assistance, coaching, inclusion in evaluation, and communities of practice; it can also be delivered in-person, on-line, and/or via self-paced studies (Preskill & Boyle, 2008; Taylor-Powell & Boyd, 2008), all of which suggests considerable design flexibility for intervention planners. Importantly, however, at least one study has found that ECB participants prefer face-to-face training and hands-on-applications and perceive them to be more effective than remote or self-paced strategies (Akintobi et al., 2012). In addition to the importance of designing ECB interventions for individual participants’ learning, organizations that participate in ECB must be “ready” for evaluation for these interventions to be successful at the organizational level (Bourgeois & Cousins, 2013; Preskill & Boyle, 2008).

ECB Through Community-University Partnerships

Since ECB initiatives require change at the individual and organizational levels, they often require long-term engagement among trainers and NPO staff, but marshaling the time and financial resources required for such engagement can be challenging (Lennie et al., 2015). Community-university partnerships can be an effective strategy for developing ECB, because universities are often well-positioned to provide financial and human capital in exchange for benefits like research participation (Bakken et al., 2014; Forden & Carrillo, 2015). Community-university partnerships that involve research, teaching, service, or some combination of the three have long been recognized for their potential to improve the quality of engagement in and impact of research (Kwon et al., 2018; Silberberg & Martinez-Bianchi, 2019), build community capacity (Behringer et al., 2018), and facilitate student learning (Warren et al., 2016). Furthermore, community-university partnerships create mechanisms through which universities can share and exchange resources with the communities that surround them, thus contributing to their social missions (Goodhue, 2017). In recent years, community engagement has grown to be a central aspect of university research and teaching strategies as more faculty appreciate its value (O’Meara & Jaeger, 2016) and more funders require it (Han et al., 2021).

ECB initiatives that are delivered through community-university partnerships vary in format and intended outcomes. Despite this variance, community-university ECB efforts typically involve the transfer of research and evaluation-related knowledge and skills from university affiliates to community partners in exchange for engagement in projects (Akintobi et al., 2012) and/or support for student learning (Bakken et al., 2014). Community-university partnerships focused on ECB encounter similar challenges to community-university partnerships in general, including the time-intensive nature of building and sustaining relationships, ensuring reciprocity, communication, and sustaining benefits to the community once the research is completed (Han et al., 2021; Ross et al., 2010). This paper serves as a teaching and learning tool grounded in evidence. We explain an ECB intervention that was implemented within the context of a community-university partnership model, and we describe the findings of our research study about this ECB intervention and the intended outcomes for local NPOs.

ECB Intervention

Our ECB initiative took the form of a semester-long, master's-level university course in which five to six community partners per semester were invited to participate. Community partners attended class weekly but were not required to complete assignments and assessments, and did not earn formal university credit for the course. Each community partner was matched with three to four students who worked with them for the duration of the semester to develop an evaluation plan for the partner's organization. Evaluation plans were the primary course deliverable and consisted of context and stakeholder analyses, a program logic model, process and outcome evaluation questions, study design, data collection tools, data management resources (e.g., creation of REDCap databases, Excel shells), participant recruitment and tracking plans, and plans for data analysis and reporting/dissemination. Over six semesters between 2014 and 2019, 29 community partners and 136 students from 11 academic programs participated in the course. All students were in master's or doctoral degree programs but differed considerably in their exposure to program evaluation, knowledge of research methods, and participation in previous community-university-based partnerships. Student outcomes are reported elsewhere (Suiter et al., 2023).

Design

Course objectives and weekly topics can be viewed in Appendix 1, but the overarching goal of the course was to equip community partners and students with the knowledge and skills required to design and carry out basic program evaluations. Beyond the course content, we understand the five primary attributes of our intervention to be: (1) intentional strategies to facilitate community partner participation, (2) course design that introduces participants to basic evaluation concepts in the order they might be applied when developing an evaluation plan, (3) small, project-based groups, (4) weekly class sessions that each combine multiple learning strategies, and (5) on-going engagement among community partners, students, and the course instructor, supported by class structure.

Facilitating community partner participation

Recruitment of community partners began six to eight weeks before each semester. An invitation to apply to participate in the course was sent out over local listservs. The invitation included information about the nature of the course and emphasized the time commitment (three hours/week for 15 weeks). We required that an organizational leader participate in the class, intending to ensure that the person who participated had enough power within the organization to shape organizational practices and/or implement the evaluation once designed. As part of the application, we asked for the organization's mission and a clearly identified evaluation need, which allowed us to assess whether the course was capable of meeting interested organizations’ needs. In recognition of the time and expertise that community partners contributed to the course, each community partner was given free course materials (e.g., textbook, articles), free on-campus parking on course days, and a $250 stipend. Funding for these resources was provided by the Meharry-Vanderbilt Community Engaged Research Core. Though we did not have the capacity to offer childcare, community partners who needed to bring their children to class were encouraged to do so.

Course strategy

Course topics primarily focused on evaluation concepts and competencies (e.g., context analysis, logic modeling, research design, data collection, and analysis), and presented these sequentially, as one might use them when developing an evaluation plan for an evaluation client (see Appendix 1). Throughout the course, the instructor emphasized that one week's content should inform the next; for example, the logic models were used to develop process and outcome evaluation questions, the evaluation questions were used to select study designs and data collection strategies, and so on. The applied focus and project-based nature of the course gave students and community partners practice in the soft skills of evaluation (e.g., making sense of ambiguous “real world” problems, navigating conflict) as they learned the more technical skills (e.g., study design, survey development) (King et al., 2001; Russ-Eft et al., 2008). Furthermore, it gave students and community partners the opportunity to implement what they were learning in real-time with support and coaching from the instructor, who is an experienced evaluator. Finally, working in dedicated groups throughout the course gave students and community partners the opportunity to develop relationships and deep knowledge of a specific case (i.e., the community partner's organization) over time.

Weekly class sessions

Each class session combined different strategies to support evaluation learning. Assigned readings and classroom lectures provided opportunities for informational learning through direct instruction (Bergmann & Sams, 2014). Small group discussions encouraged analysis of and reflection on reading and lecture content (Davidson et al., 2014). Small group application activities provided the opportunity for students and community partners to synthesize and apply what they were learning (Bell, 2010). For example, during the class session that focused on logic models, students and community partners read two articles about logic modeling before class, listened to a lecture on logic modeling in class, then spent the remainder of class time participating in a guided activity through which they built a logic model for the program or organization they were evaluating. These opportunities for “transfer of learning” (Holton & Baldwin, 2003) were built into every class session.

On-going engagement

One challenge of community-engaged research and teaching is developing a structure to facilitate and sustain relationships for the duration of the project (Behringer et al., 2018). Indeed, maintaining mutually beneficial relationships is one of the most important and most difficult aspects of community-university partnerships (Israel et al., 2005; Matthews, 2016). A course-based ECB intervention like this one provides the benefit of conferring consistency and structure on participants’ interactions, thus increasing their likelihood of success (Ahmed et al., 2016). No one had to plan meetings, find a location, or coordinate schedules. Rather, all members of the partnership convened in the classroom once per week to learn and work on a shared task. During class, students and community partners interacted face to face. During in-class work time, the instructor circulated among groups to coach, answer questions, and ensure that groups were functioning properly. Foregrounding relationship maintenance in the structure of the course itself was the most “experimental” component of the intervention and turned out to be one of its strongest and most important features according to both student and community partner feedback.

Theories of Change

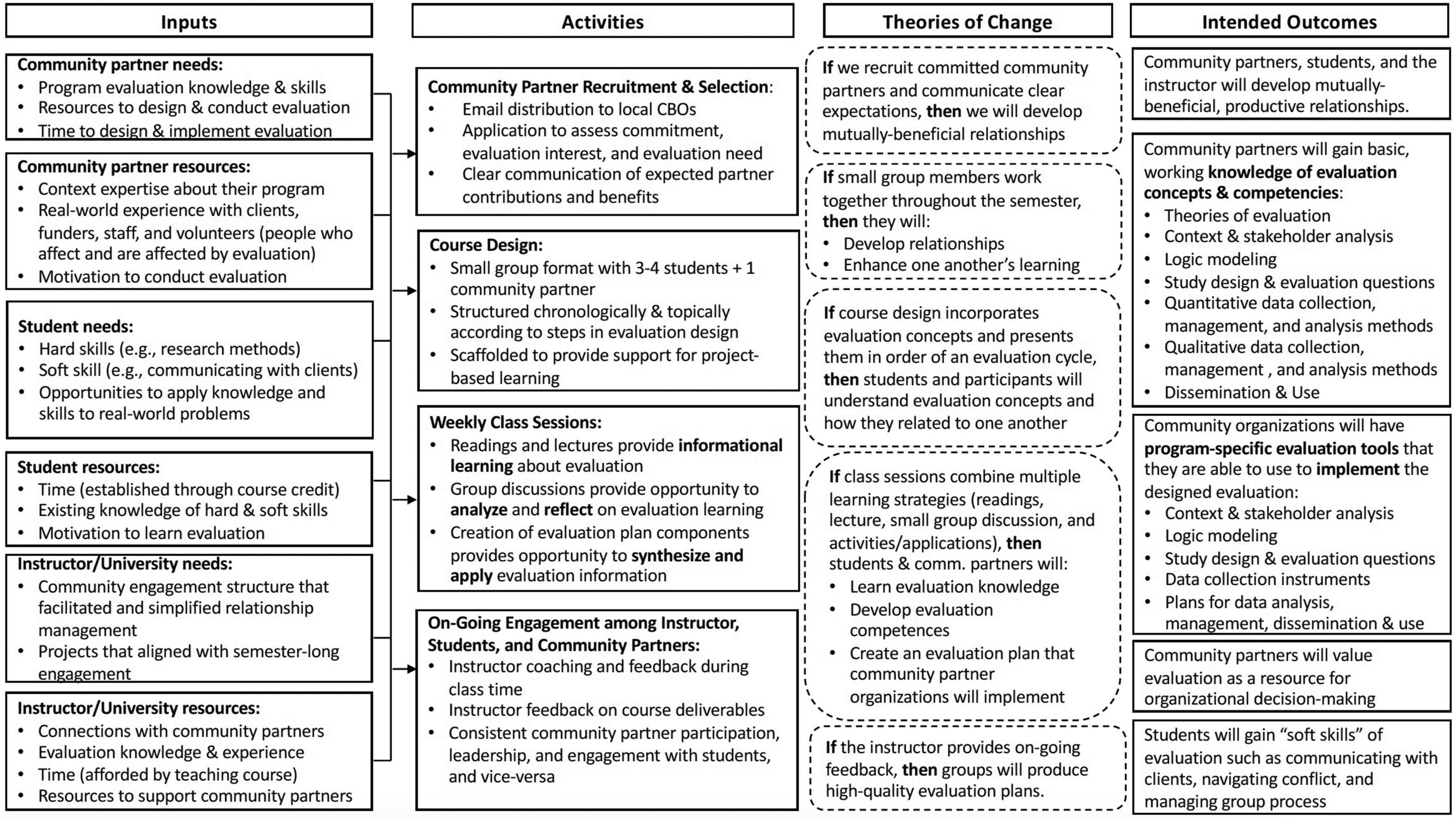

ECB literature demonstrates that improvements in evaluation capacity require change at both the individual and organizational levels (Labin et al., 2012). Our individual-level change strategies were informed by theories of adult learning such as Holton and Baldwin's (2003) work on transfer of learning, cited above, and theories of project-based learning. Project-based learning emphasizes the importance of aspects such as authenticity (the perception of learners that their project involves real-world concerns related to something of value to them), sustained inquiry (an on-going process of asking questions and seeking solutions), and student—and in our case, community partner—voice and choice in driving learning outcomes (Larmer et al., 2015; Lennie et al., 2015). These theories of change and their intended outcomes are depicted in our logic model (Figure 1). We integrated our theories of change into this model to clarify the underlying mechanisms and assumptions we believe are driving the changes we hope to see (Ebenso et al., 2019; Pawson, 2013).

Figure 1. Logic model and theory of change.

Fidelity of Implementation

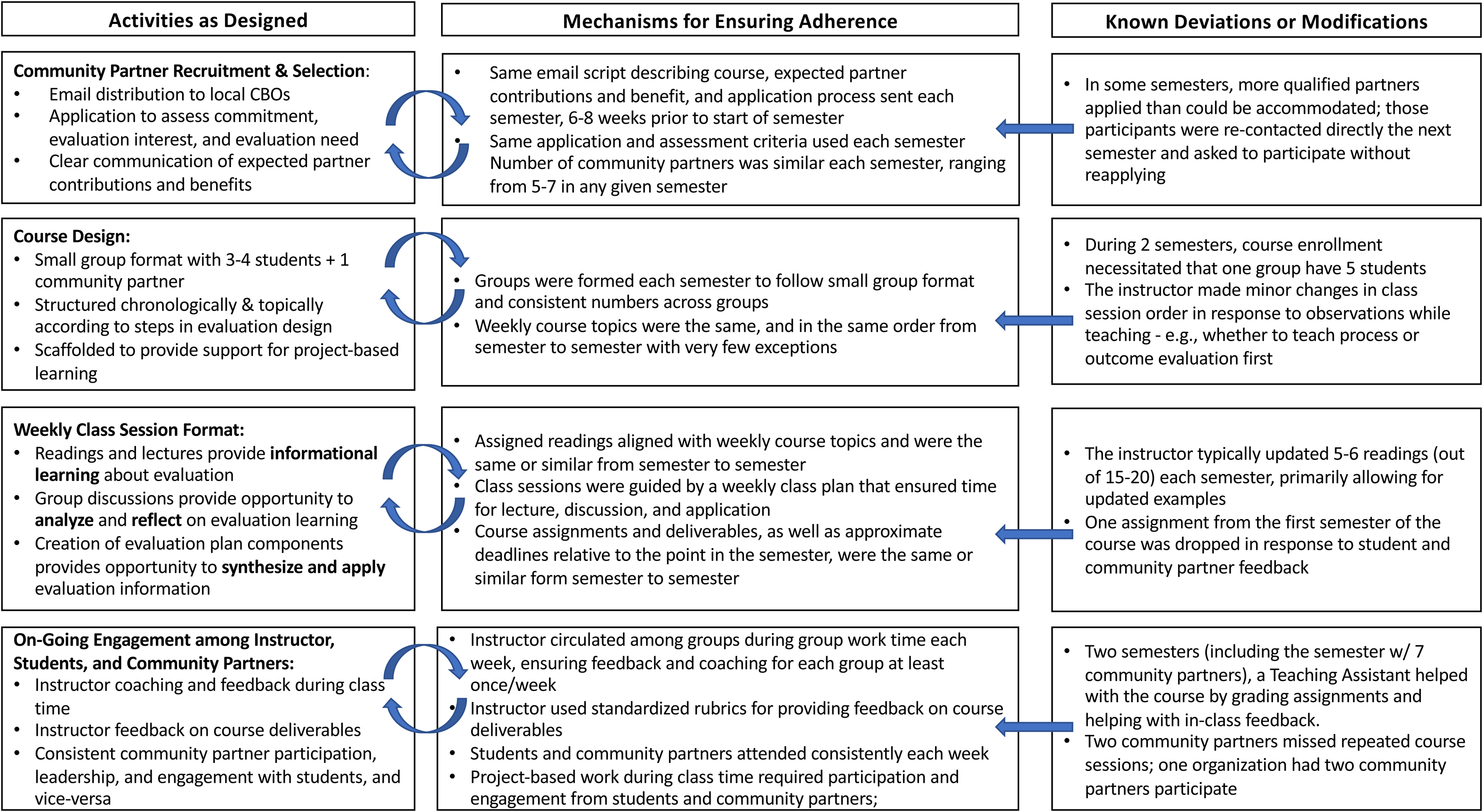

The first author (Suiter) worked to ensure fidelity of implementation throughout each individual course and semester. Implementation literature often identifies five dimensions of implementation fidelity: adherence, exposure or dose, quality of delivery, participant responsiveness, and program differentiation (Carroll et al., 2007). Our controls for adherence—i.e., whether the course was being delivered as designed—and known deviations from the original course design are outlined in Figure 2.

Figure 2. Activities and adherence.

Dose was consistent from semester to semester: Each semester involved 15 weeks of class, and each weekly class session lasted three hours. Structured class plans were used for each week and transferred from semester to semester so that time spent on various activities was also relatively constant across semesters. The same instructor taught the course each semester using the same or similar readings, lecture slides, activities, rubrics, and commitments to student learning and engagement. To the extent that student assessment of course quality is a reliable indicator of quality of delivery (Benton & Ryalls, 2016; Boysen, 2016), students consistently rated the course and instructor highly (Suiter et al., 2023).

Course design included mechanisms to ensure participant responsiveness and engagement, including required attendance and structured in-class activities such that participation from each group member was more or less required. That said, participant responsiveness is likely the aspect of implementation that varied the most, especially from group to group and with respect to community partners. Program differentiation, or the process of identifying which elements of the course are essential (Dusenbury et al., 2003), is hypothesized in the theory of change (Figure 1).

Evaluation of ECB Intervention

On-going evaluation of this ECB intervention took place in multiple ways and focused on various aspects of the initiative, aligned with evaluation and community-university partnership best practices. For example, regular check-ins with organizations and students allowed us to monitor process-related issues such as workload, course pacing, needs for additional content or support, or conflict among group members. Additionally, the first author (Suiter) and other colleagues conducted a pilot study of the course the first time it was taught to explore short-term outcomes of the course on community partners and students (Suiter et al., 2016). Finally, students assessed the course at the end of each semester using the university's course evaluation system, which provided regular, anonymous feedback from students regarding the strengths and weaknesses of the approach. In addition to these evaluation components, we sought to understand the longer-term impact of this ECB initiative on the NPOs that participated in the course through the study described in this paper.

Research Study

We followed a mixed methods case study design (Tashakkori & Creswell, 2007) and combined data from participant questionnaires and publicly available records. Mixed methods case studies are well-suited for studies in which researchers are seeking to understand the “how” and “why” of a particular program or intervention as well as the “what” (Tashakkori & Creswell, 2007). Our case study was guided by the following questions about the intervention:

1. What types of organizations participated in the evaluation course?
2. How and to what extent did community partner participants use evaluation concepts and skills after the course?
3. Did community organizations use the evaluation designs developed in the course and, if so, how?
4. What course-related factors influenced whether and how community partners used their evaluation plans?
5. What context-related factors influenced whether and how community partners used their evaluation plans?

Prior to beginning the study, the project was given exempt status from the university IRB.

Research Participants

Because this ECB initiative sought to make change at both the individual and organization levels, we think of “organizations” and “individuals” as two different types of participants. Below, we describe the attributes of each, acknowledging that one type (individuals) was embedded within and representative of the other type (organizations).

Organizations

Students and community partners created a total of 29 evaluation plans for 26 different organizations (three organizations participated twice, in different years, to develop evaluation plans for different programs; in these cases, different staff members participated in the class). Of the 26 organizations, 23 were community-based service, advocacy, or policy organizations, one was a state government department, one was a professional organization, and one was a university. The catchment area for 19 of the organizations was local (focused on the metropolitan area and/or only slightly beyond), six organizations operated statewide, and one organization operated internationally.

Individuals representing community organizations

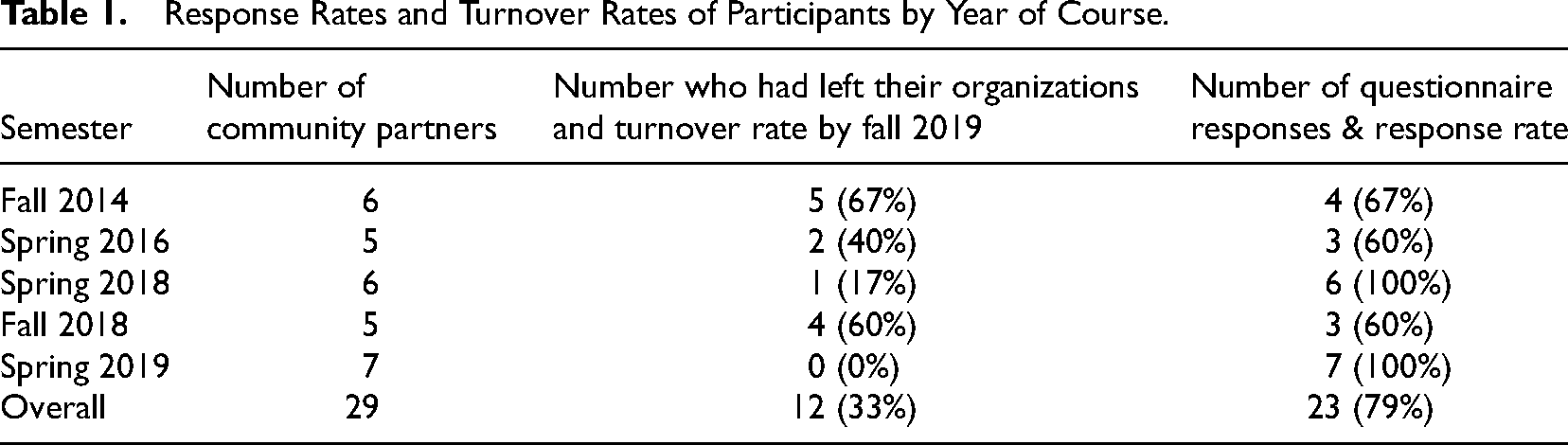

Community participants from courses that were taught in Fall 2014, Spring 2016, Fall 2018, Spring 2018, and Spring 2019 were invited to complete the course follow-up questionnaire in Fall 2019 (n = 29). Of the 29 community partners eligible to complete the questionnaire, 23 responded (response rate = 79%). Table 1 displays course participation, questionnaire response rates, and staff turnover rates (i.e., number and percentage of staff who had left their organization since their participation in the class) by semester cohort.

Table 1. Response Rates and Turnover Rates of Participants by Year of Course.

Data Collection and Instrumentation

We acknowledge that surveying each organization at a consistent time point after its participation would have been a stronger research design. After pilot-testing the course following the Fall 2014 semester, we did not initially set out to study it as an intervention. We conducted the questionnaire in 2019 at the request of our funder. We were pleasantly surprised by both the response rate and our longer-term findings, and we came to believe that these findings had important implications for the fields of ECB and community engagement. The one-shot design likely affected response rates (which were lower for more distant cohorts) as well as turnover rates (which were higher for more distant cohorts). At the same time, surveying participants at varying intervals after they completed the course gave us the opportunity to explore the durability of what they had learned.

Questionnaire

The questionnaire was designed and administered using REDCap, an electronic data capture tool hosted at Vanderbilt University (Harris et al., 2009). Various features of REDCap allowed us to see whether a given organization had responded to the questionnaire (and thus have knowledge of response rates by cohort), but we could not see which respondents contributed which set of responses, thus keeping the results deidentified. Questionnaire respondents were informed in writing of the purpose of the study and the intended use of the collected data.

Questionnaire items were quantitative and qualitative and included questions about whether the evaluation plan had been implemented within the organization, whether the organization had continued to use evaluation over time, whether the participant had remained with their original organization, and whether the participant had continued to use concepts from the course in their professional life. The goal of the questionnaire was to assess ECB at the organizational level and at the level of the individual participant. The questionnaire was designed to align with course content and goals, and thus is quite different from other existing measures of ECB. We did not pilot the questionnaire with potential participants because our sample size was already relatively small; however, one author (Morgan) participated in the course as a student and community partner, and provided feedback on the questionnaire from her role as a community partner.

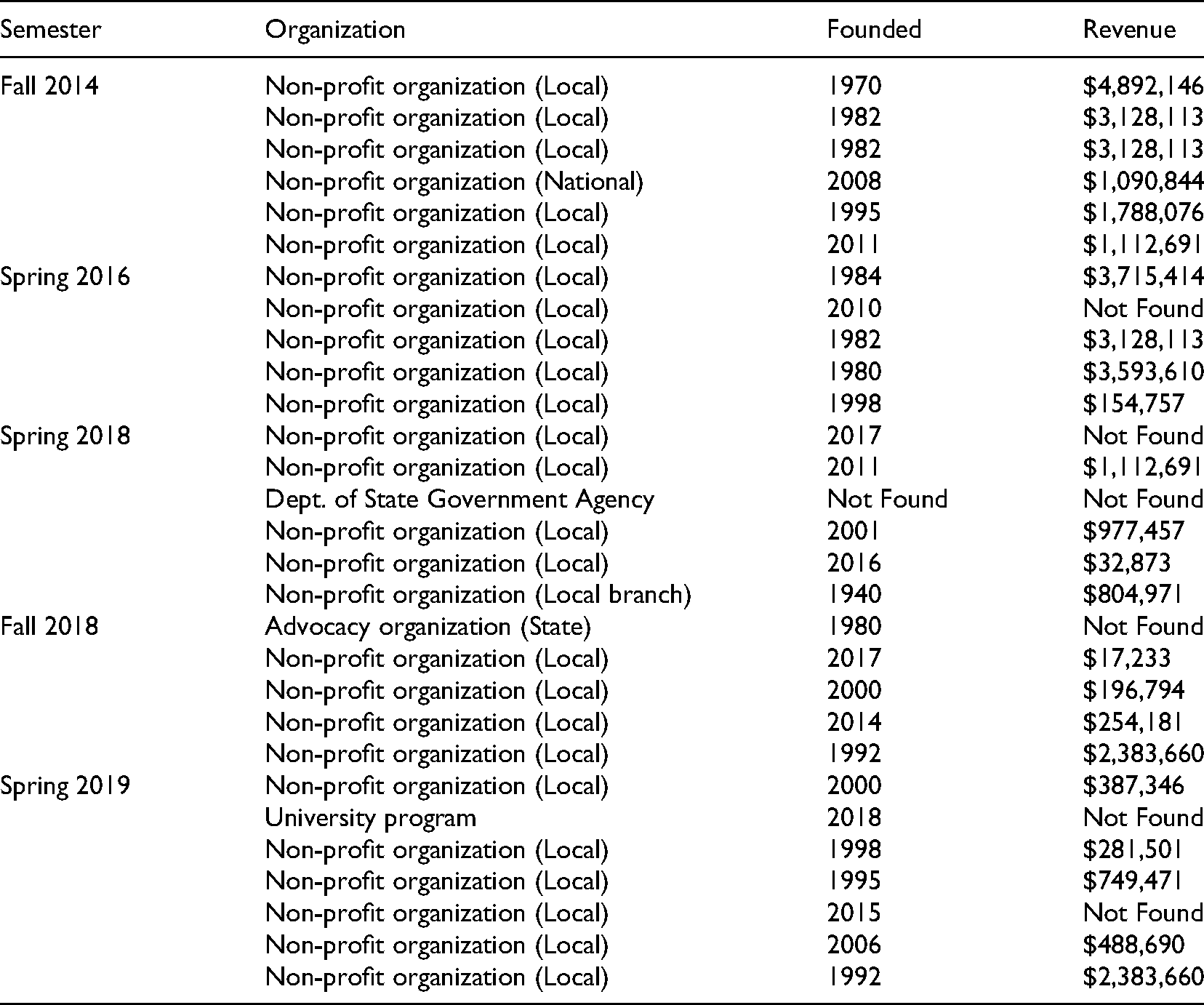

Document review of publicly available data

Document reviews of publicly available data about community partners (e.g., mission statement, target populations, number of staff) were used to gather additional descriptions of the organizations (Centers for Disease Control [CDC], 2018). Data on founding date, reported revenue, target population, and cause area were obtained from publicly available sources, primarily GuideStar and Charity Navigator. Data that could not be found in public sources were omitted from the analysis. Based on background knowledge of the organizations for which data were unavailable, we infer that most of these organizations were relatively young and/or small, or were government or advocacy organizations (i.e., not NPOs). A list of de-identified organizations, their founding date, and their annual revenue is available in Appendix 2.

Analysis

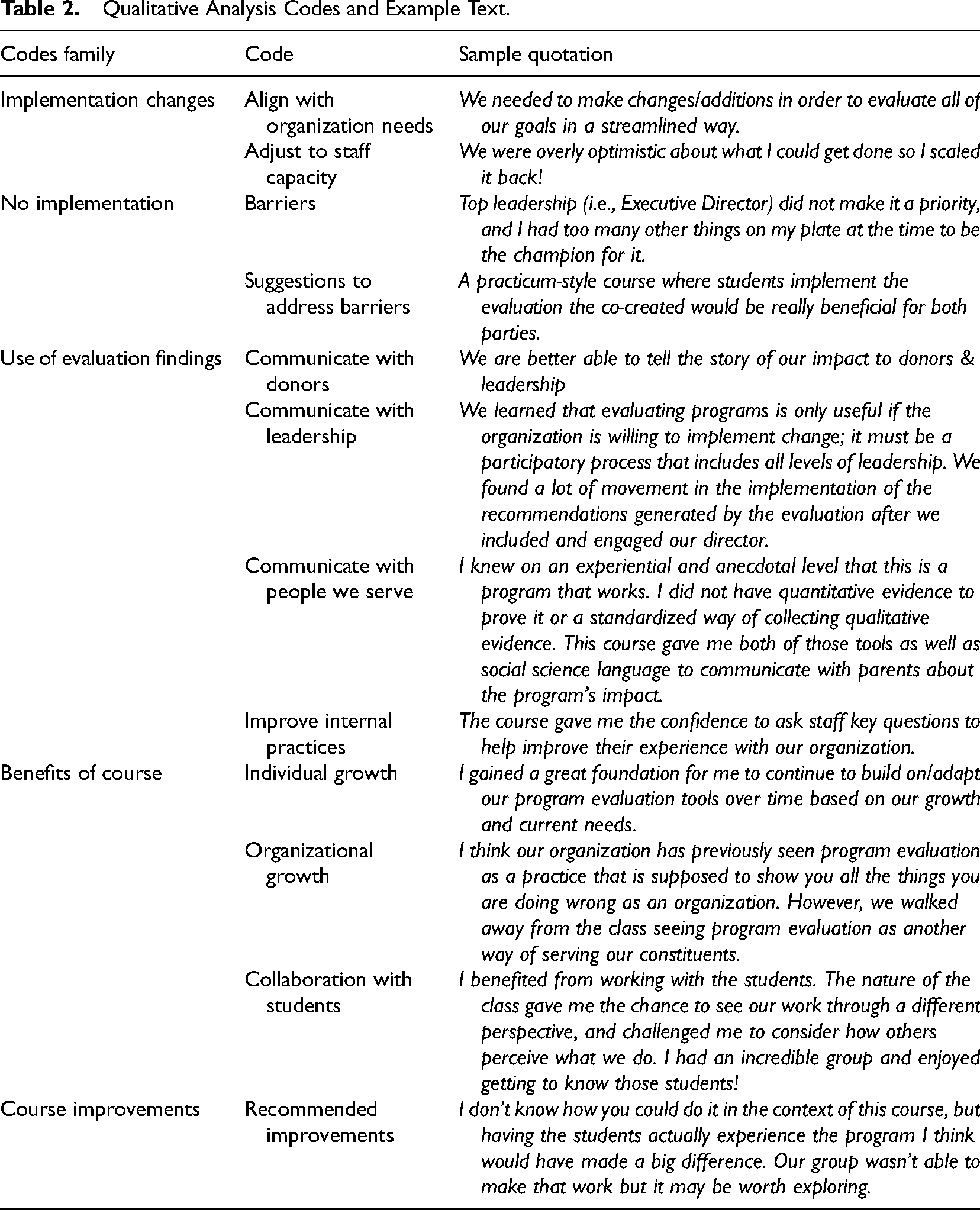

Quantitative questionnaire items were analyzed using descriptive statistics. The second author (Morgan) conducted a thematic analysis of the qualitative data, drawing on the research questions and questionnaire prompts to develop initial thematic domains. Data were analyzed in NVivo (QSR International, Version 12, 2018) and an inductive codebook was created. The second author then discussed the codes with the other authors (Suiter and Eccleston), who served as critical friends (Kember et al., 1997). The codebook was revised by the research team and organized into overall themes that fell within the domains. Codes and example quotations are displayed in Table 2. We used qualitative findings to better understand and explain the quantitative findings. This triangulation strategy was key to exploring our “how” and “why” research questions (Tashakkori & Creswell, 2007).

Table 2. Qualitative Analysis Codes and Example Text.
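For readers who wish to check the descriptive statistics, the following minimal Python sketch recomputes the whole-number percentages reported in this paper from the counts given in the text (29 partners invited, 23 respondents, 15 implementing organizations). It is an illustration only and not the authors' analysis script; the function and variable names (`pct`, `summarize`, `IMPLEMENTATION`, `USE`) are hypothetical.

```python
# Illustrative only: recompute reported percentages from counts stated in the text.
# All names below are hypothetical and are not taken from the authors' analysis.

def pct(count: int, total: int) -> int:
    """Return count/total as a percentage rounded to a whole number."""
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * count / total)

def summarize(counts: dict[str, int], total: int) -> dict[str, int]:
    """Map each category label to its whole-number percentage of total."""
    return {label: pct(n, total) for label, n in counts.items()}

INVITED = 29       # community partners eligible for the questionnaire
RESPONDENTS = 23   # partners who responded

# Implementation status among respondents, as reported in the findings
IMPLEMENTATION = {
    "implemented as designed": 7,
    "implemented with minor adjustments": 6,
    "implemented with major adjustments": 2,
    "not implemented": 8,
}

# Uses of findings among the 15 implementing organizations
IMPLEMENTERS = 15
USE = {
    "communicated with donors": 8,
    "communicated with leadership": 10,
    "reported to clients": 9,
}

if __name__ == "__main__":
    print("response rate:", pct(RESPONDENTS, INVITED), "%")
    for label, value in summarize(IMPLEMENTATION, RESPONDENTS).items():
        print(f"{label}: {value}%")
    for label, value in summarize(USE, IMPLEMENTERS).items():
        print(f"{label}: {value}%")
```

Because the implementation categories are mutually exclusive, their counts sum to the 23 respondents. The use categories are not mutually exclusive, so their percentages need not sum to 100.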

Researcher Reflexivity

The authors had different relationships to the course and its evaluation which reflexively informed our interest in this inquiry (Etherington, 2004). The first author of this paper (SS) was the course instructor, the second author (KM) was a student and community partner in the course, and the third author (SE) was unaffiliated with the course but has an interest in community-engaged teaching and research. These varied roles provided different vantage points into the focus of this study and strengthened the validity and trustworthiness of our analysis (Goodrick & Rogers, 2015). For example, when we began to discuss the implications of our findings, the second author focused on implications for improving evaluation use in organizational settings, whereas the first author focused on implications for teaching evaluation and community-university partnerships. We were able to put these areas of focus in conversation with one another, leading to more robust findings about the relationships among the three.

Findings

Findings suggest that our ECB strategy was attractive to and effective for community partners from NPOs ranging in size and focus. Most organizations implemented their evaluation plans and reported multiple ways in which evaluation findings were used by their organizations. Community partners themselves reported using their new evaluation skills in their work roles.

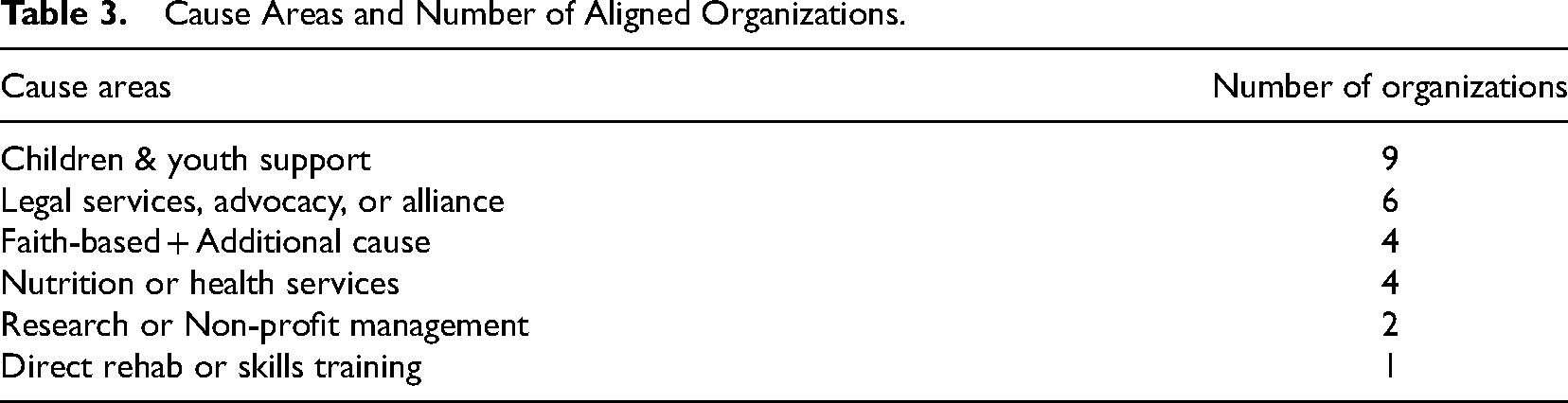

Organizational characteristics

The organizations that participated in the class varied considerably in terms of age, size, budget, and organizational purpose and mission. Ten of the 26 organizations (38%) were founded before 2000 and thus brought decades of experience to the class. Another 11 organizations (42%) were relatively young and founded after 2010 (nine were less than 5 years old). Organizational budgets also varied widely (see Appendix 2). We used 2018 reported revenue as the measure of budget size, because this information was available for most organizations. Annual revenue ranged from $17,233 to $4,892,146 (M = $1,157,696, SD = $1,402,863). Five organizations did not have revenue data available. Most organizations had multiple foci, but for the purposes of standardization, we used the “cause area,” indicated by the organization's National Taxonomy of Exempt Entities (NTEE) code. Table 3 displays cause areas and the number of organizations that aligned with each.

Table 3. Cause Areas and Number of Aligned Organizations.

Although we lack a comparison data set demonstrating similar indicators for the universe of NPOs in our city, these data suggest that interest in evaluation exists across organizations of varying sizes, budgets, and focus areas.

Program evaluation implementation results

Fifteen of the organizations (65%) implemented some form of the evaluation plan designed in the class: Seven community partners (30%) reported implementing the program evaluation exactly as it was designed, and eight (35%) reported making either minor (6, 26%) or major (2, 9%) adjustments to the plan before implementation. A few organizations, inspired by the planning process they had engaged in with their team of students, chose to expand the scope of the evaluation plan from a single program to “specific desired outcomes across all aspects of [their] work” in order to “evaluate all of [their] goals in a streamlined way.” Although these groups used the planning process as a starting point for more robust evaluation, most organizations that changed the scope of the evaluation plan did so because of lack of staff or capacity to carry out the original plan. For example, organizations noted being “overly optimistic about what we could get done,” often due to “unforeseen internal circumstances” that required scaling back or eliminating elements of the evaluation plan.

The remaining eight organizations (35%) were unable to implement the program evaluation at all. Reasons for not implementing reflect barriers to evaluation capacity outlined in the literature, including high staff turnover, competing priorities, and lack of expertise (Cashman et al., 2008; Festen & Philbin, 2007). In some cases, restructuring resulted in programs “no longer being offered due to changes in organization strategy.” In others, changes to the organization's strategic plan “superseded the need for many elements in the evaluation.” Staff capacity issues also arose, as the burden to implement often fell exclusively on the individual who participated in the course. For instance, one respondent noted that when he left the organization, leadership “didn’t commit to the process outlined,” while another respondent “didn’t have the capacity to implement, on top of the other responsibilities [they] had to carry out.” Despite barriers to implementation, some participants noted that elements of the evaluation plan were able to be integrated into the organization's work in nonevaluative ways:

“I really wish we had implemented it. Top leadership did not make it a priority, and I had too many other things on my plate at the time to be the champion for it. That being said, some of the things we learned from the team's work did shape our work and approach moving forward.”

The sentiment that an additional “champion” for the evaluation plan would improve implementation capacity was shared by many organizations. For example, some participants suggested that it would be beneficial to have an additional course or internship opportunity through which the students who helped to design the evaluation could also work with the partner to implement it. This type of ongoing support, it was noted, would not only help with implementation but also demonstrate the value of evaluation to others within the organization.

Evaluation use results

Among the 15 participants (65%) whose organizations implemented their evaluation plan, evaluation findings served a range of process- and outcome-oriented purposes. Participants indicated that evaluation results had been used to communicate with donors, support organizational change, report to clients, and improve services.

Communication with donors

Eight participants (53%) shared that they had communicated evaluation findings to donors. One community partner described the value that quantitative evaluation capacity brought her organization, noting “how difficult it is to quantify the impacts of our funding! [The organization] has great stories, but getting numbers was still hard.” Others valued the way that qualitative evaluation data helped their organization become “better able to tell the story of [their] impact to donors and leadership.” For these partners, ECB has helped them communicate the value of their programming and thus to obtain, sustain, and diversify their funding.

Supporting organizational change

Ten participants (67%) communicated their evaluation findings to leadership, which one participant noted was crucial for leveraging the evaluation data toward systems change: “We learned that evaluating programs is only useful if the organization is willing to implement change; it must be a participatory process that includes all levels of leadership. We found a lot of movement in the implementation of the recommendations after we included and engaged [the Executive Director]. Once he was involved, the evaluation was given proper value and was actually considered to be a vital new tool that would improve our programs and greater organization.”

Reporting to clients

Sharing findings back with groups served by the organization was noted by nine community partners (60%), who described using the “tools and social science language” they learned in the course to communicate with those who use their services. Through evaluation, they were able to share findings regarding program benefits they felt were true “on an experiential and anecdotal level … but did not [previously] have quantitative evidence to prove.” They noted that their clients gained “important insights” about their own experiences through reflecting on evaluation findings. These data were useful both for their recruitment efforts and for building community within their organizations.

Improving services

The most-cited use of evaluation findings was improving practice or programs: 14 participants (93%) noted that they used findings to “understand where [their] program was going” and to support evidence-based decision-making. For example, one community partner found that the evaluation process was integral to supporting the school-based intervention they were implementing: “These tools helped reveal patterns in what teachers were excluding from the curriculum, and we were able to use that data to talk to teachers about why they were skipping these sections of the curriculum, which ultimately helped us refine the curriculum this summer as we enter our second year of implementation.”

By “reveal[ing] patterns” in their practices, the evaluation process not only supported “organizational growth and change,” but also allowed groups to “see program evaluation as another way of serving [their] constituents.”

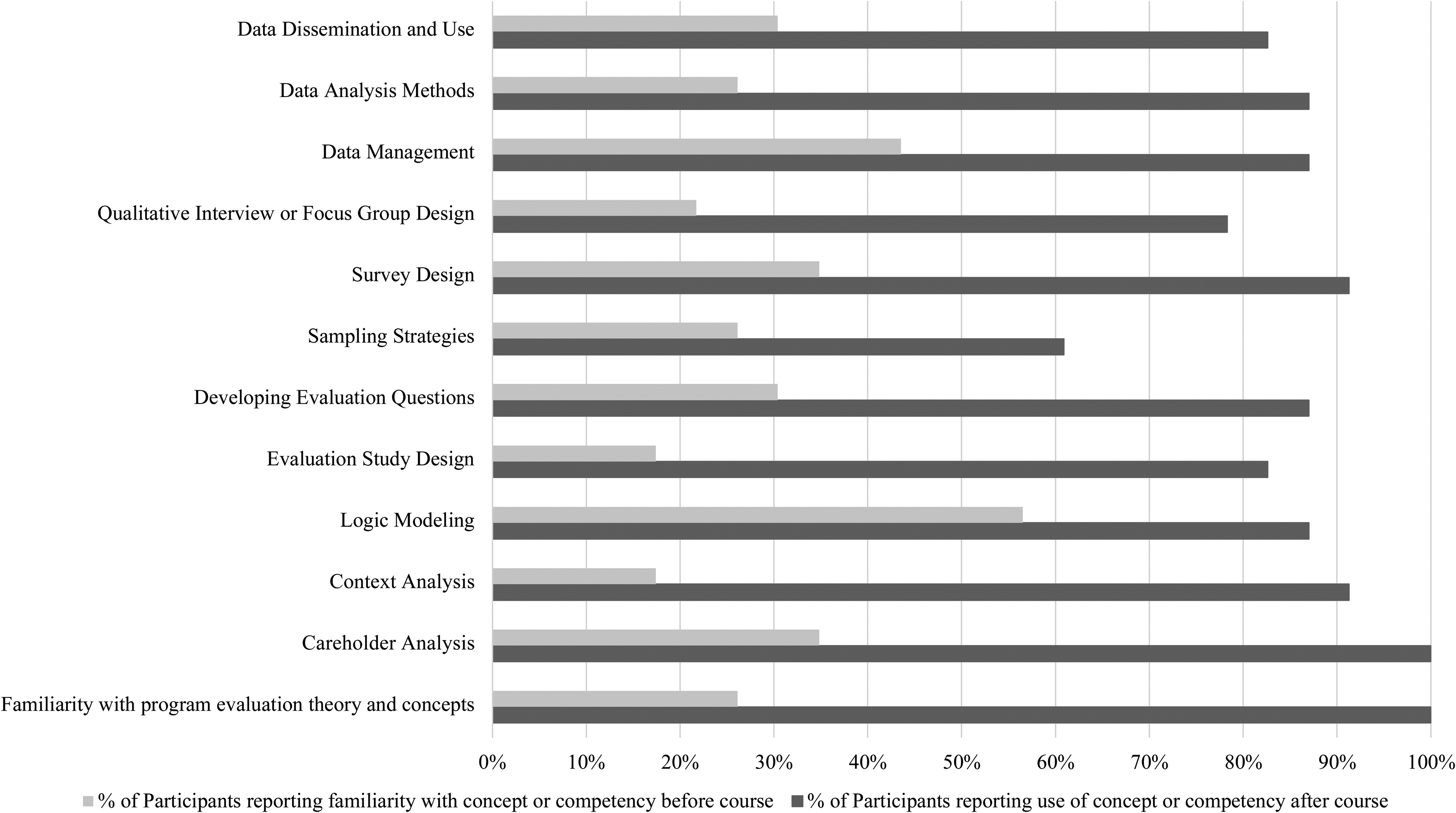

Individual level results

As part of the questionnaire, community partners reflected on the level of familiarity they had with each course concept before participating in the program. Results outlined in Figure 3 indicate that many community partners entered the course with some cursory knowledge of program evaluation, but still reported most concepts as “new” or “somewhat new.” Concepts most familiar to community partners before entering the course included logic modeling and data management.

Figure 3. Familiarity with course content before participation and use of course content after participation.

Overall, results suggest that participants developed new skills through the course and continued to use these skills in their professional settings. For instance, 15 participants (65%) indicated that they now develop evaluation questions “a lot” as part of their current work; this is a notable result because only seven participants (30%) listed this skill as familiar prior to the course. Many of the processes that were least familiar to participants before the course, including data management, data analysis, and dissemination of findings, were among the concepts that they reported using most often after the course. Additionally, although over 65% of participants indicated that program evaluation theory and stakeholder analysis were “somewhat new” or “completely new” when they entered the course, all participants indicated using skills in those areas after the course. Taken together, these findings suggest that community partners brought new conceptual and analytical techniques to bear in their practice as a result of course participation.

Course reflections

Community partners’ reflections on the value of the course suggest that both individuals and organizations built evaluation capacity as a result of participating. Participants noted several professional benefits, including the development of “a foundation … to continue to build on/adapt our program evaluation tools over time based on our growth and current needs.” This suggests that the process of planning a program evaluation supports a “deeper understanding of the logic of the program and what its goals should be,” which may improve participants’ efficacy at work. Some participants also noted that they were “able to apply concepts learned” from the course in other jobs, because skills from this course are now in their professional toolbox. In this way, the course supported not only organizations but also non-profit professionals, who may use their evaluation skills in multiple organizations over the course of their careers.

Partners also shared many ways that the course contributed to their organization as a whole, including developing an understanding of the “history of the organization” and “where the organization is going.” For example, one organization used the course to reflect on and revise its programming outcomes, discovering a mismatch between its goals and the outcomes it had historically tracked. The participant explained, “Through creating the logic model and theory of change, we realized our program's goal should be to create highly effective partnerships and highly competent volunteers that can engage in [Local School District] initiatives. We should not be trying to tie our program's goal or outcomes directly to student performance changes.”

Discoveries like this helped organizations develop a more nuanced understanding of the importance of program evaluation capacity in NPOs. They also serve as evidence of the development and practice of “evaluative thinking” regarding program outcomes and operations. After the course, one partner noted that they moved from “see[ing] program evaluation as a practice that is supposed to show you all the things you are doing wrong” to understanding it as a vital mechanism for illuminating successes and addressing setbacks quickly.

Participants attributed much of this capacity-building to the collaborative, interdisciplinary teams they were part of during the course. Community partners both “benefited from … the chance to see [their] work through a different perspective” and noted that having a team of students who initially knew little about the program “challenged [them] to consider how others perceive what we do.” They also commented on the valuable relationships they built “getting to know those students” and “learning from a different generation.”

Discussion

By asking former community partners about their course experiences and their outcomes, we gained a deeper understanding of the benefits and limitations of community-university partnerships for ECB. Findings suggest that organizations that implemented their evaluation plans gained some of the intended outcomes of ECB. Combined with our study of student outcomes, these results suggest that the course was successful in building evaluative thinking in both types of participants (Archibald, 2021; Buckley et al., 2015). For example, community partners reported specific examples of questioning the assumptions built into their program, sometimes causing them to shift or revise organizational practices. They reported increased use of data collection and analysis, and of their findings as evidence to guide decision-making. Finally, they shared that the course exercises prompted reflection that yielded a deeper understanding of their own work.

Course Limitations

This course model may not work for all organizations, because it places very specific demands on the time and availability of community partners, who must attend class each week. We built in supports (stipend, opportunity to bring children to class) to facilitate participation but acknowledge that these might not have been enough for all prospective participants. The intervention is also very specific in terms of the content covered—it is possible that community partners spent time learning things they already knew (or didn’t need to know) or that they would have benefited more from different content. Participating organizations did not complain about the requirements of the course, but it is possible that some organizations did not apply because they could not commit the necessary time or did not think the course would benefit them.

Extending the intervention so that students also take part in the implementation of evaluation plans, as suggested by community partners, may reduce implementation barriers while expanding the impact of this work. Furthermore, the fact that 35% of participating organizations (eight of the 15 that implemented their evaluation plans) made some kind of modification to them points to a need for more instruction in designing an evaluation that is commensurate with the various capacities of the organization. On one hand, it is positive that community partners felt confident adapting the evaluation plan on their own; on the other hand, being able to assess feasibility is an important skill in evaluation design.

Implications for ECB

One benefit of the intervention described above is that it requires relatively few financial resources to implement. The total cost of the intervention, which to date has reached 29 individuals and 26 organizations, was approximately $10,000. As a comparison point, a recent study cited $250,000 in total costs for an ECB program that served 8 organizations (Frantzen et al., 2018). Though this comparison program was more tailored to individual organizations than ours, its reported outcomes were similar. Because of the resource efficiency of this model, and the willingness of our university to support it, it is an ECB initiative that has the potential for substantial longevity.
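To make the cost comparison above concrete, the totals can be expressed as a rough cost per organization. The short Python sketch below is only an illustration: it uses the two totals and organization counts quoted in the text, while the variable names and the per-organization division are our own assumptions. Because the $10,000 figure is approximate and the two programs differed in how tailored they were, the result should be read as an order-of-magnitude comparison rather than a precise estimate.

```python
# Totals and organization counts are the figures quoted in the text.
# The dictionary keys and the per-organization division are illustrative assumptions.
programs = {
    "course_model": {"total_cost": 10_000, "organizations": 26},
    "comparison_program": {"total_cost": 250_000, "organizations": 8},
}


def cost_per_organization(total_cost, organizations):
    """Return the average cost per participating organization."""
    return total_cost / organizations


per_org = {name: cost_per_organization(**p) for name, p in programs.items()}

for name, cost in per_org.items():
    print(f"{name}: about ${cost:,.0f} per organization")

# How many times more the comparison program cost per organization.
ratio = per_org["comparison_program"] / per_org["course_model"]
print(f"ratio: roughly {ratio:.0f}x")
```

Under these assumptions, the course-based model works out to a few hundred dollars per organization, compared with tens of thousands of dollars per organization for the comparison program.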

An additional benefit of this intervention was that it supported individual and organizational learning simultaneously. Future research could seek to assess the scale of this impact on a local nonprofit landscape, especially given the ability of non-profit professionals to transport evaluation knowledge and skills to new/different non-profit employers within the landscape over time. Literature on ECB has emphasized the importance of organizational capacity and readiness for evaluation, and we agree. However, our data indicate that investing in non-profit professionals as individuals may be uniquely valuable, because the participants in our study tended to remain in non-profit settings even when they left their current positions, and they tended to continue to use evaluation skills, in some cases bringing them to new organizations.

Implications for Community-University Partnership

One of the biggest surprises in teaching and researching this course has been the continued interest in and commitment to the course on the part of community partners. Although the literature on community engagement often emphasizes the importance of being cautious of the time demands such engagement places on community partners, we have found that partners are willing to commit their time when they perceive themselves to be gaining important skills and resources in return. The relatively rigid structure of the course meant that we could communicate our expectations and anticipated benefits to community partners before they became engaged. As a result, the course served as a scaffold to support one of the most challenging aspects of community-university partnerships: the time and intentionality required to invest in trusting, productive relationships. We propose that the course-based format and the strategies cited in the theory of change could be adapted to a number of fields and competencies.

This course-based model, developed and practiced in the years prior to the coronavirus disease 2019 (COVID-19) pandemic, relies heavily on sustained, in-person collaboration. In March 2020, however, that semester's version of this course moved online for the duration of that academic term. In the Fall of 2020 and Spring of 2021, this course was taught in a hybrid format, with community partners participating virtually and students participating in person or virtually, depending on their disease risk and level of comfort with in-person interactions. We have not combined data from those semesters with the data presented in this study because many aspects of the implementation were meaningfully different. Nevertheless, we found the virtual and hybrid formats to be viable options for continuing to offer the course to students and community partners. Although anecdotal evidence leads us to believe that in-person engagement is better for learning and satisfaction with the course, the viability of virtual and hybrid formats speaks to expanded possibilities. For example, such formats allow for engagement of non-local partners and minimize travel time to and from campus for local partners. Additionally, as online programs for professional students continue to grow, offering a course like this one in a virtual format could increase opportunities for experiential learning in online courses.

Despite the major changes in our world that COVID-19 introduced, we believe the lessons from this paper still have relevance. Community organizations continue to face internal and external demands for evaluation and often have even more limited resources to meet them. The design of this intervention, which provided real-world evaluation learning opportunities and a structure for mutually beneficial community-university relationships, is adaptable and can be effective in meeting those demands.

Conclusion

The intervention described above is one model for bolstering evaluation capacity within local organizations, while teaching evaluation to master's-level students. Community-university partnerships are a modality for achieving mutually beneficial outcomes, and this study aimed to demonstrate the effectiveness of one strategy to increase evaluation capacity. In addition to demonstrating the relative effectiveness of this intervention in particular, we recommend course-based partnerships as a change mechanism for building evaluation and other forms of research capacity in students, community partners, and community-based organizations.

Footnotes

Acknowledgements

The authors would like to thank the anonymous reviewers for their thoughtful and helpful feedback on previous drafts of this article. They would also like to thank Donna Smith for the critical support she provided to the course described in this article, and Meredith Meadows for her skillful editing.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Center for Advancing Translational Sciences (grant number UL1TR000445).

Appendix 1: Course Objectives and Topics

Appendix 2: Participating Organizations and their Attributes

| Semester | Organization | Founded | Revenue |
| --- | --- | --- | --- |
| Fall 2014 | Non-profit organization (Local) | 1970 | $4,892,146 |
| | Non-profit organization (Local) | 1982 | $3,128,113 |
| | Non-profit organization (Local) | 1982 | $3,128,113 |
| | Non-profit organization (National) | 2008 | $1,090,844 |
| | Non-profit organization (Local) | 1995 | $1,788,076 |
| | Non-profit organization (Local) | 2011 | $1,112,691 |
| Spring 2016 | Non-profit organization (Local) | 1984 | $3,715,414 |
| | Non-profit organization (Local) | 2010 | Not Found |
| | Non-profit organization (Local) | 1982 | $3,128,113 |
| | Non-profit organization (Local) | 1980 | $3,593,610 |
| | Non-profit organization (Local) | 1998 | $154,757 |
| Spring 2018 | Non-profit organization (Local) | 2017 | Not Found |
| | Non-profit organization (Local) | 2011 | $1,112,691 |
| | Dept. of State Government Agency | Not Found | Not Found |
| | Non-profit organization (Local) | 2001 | $977,457 |
| | Non-profit organization (Local) | 2016 | $32,873 |
| | Non-profit organization (Local branch) | 1940 | $804,971 |
| Fall 2018 | Advocacy organization (State) | 1980 | Not Found |
| | Non-profit organization (Local) | 2017 | $17,233 |
| | Non-profit organization (Local) | 2000 | $196,794 |
| | Non-profit organization (Local) | 2014 | $254,181 |
| | Non-profit organization (Local) | 1992 | $2,383,660 |
| Spring 2019 | Non-profit organization (Local) | 2000 | $387,346 |
| | University program | 2018 | Not Found |
| | Non-profit organization (Local) | 1998 | $281,501 |
| | Non-profit organization (Local) | 1995 | $749,471 |
| | Non-profit organization (Local) | 2015 | Not Found |
| | Non-profit organization (Local) | 2006 | $488,690 |
| | Non-profit organization (Local) | 1992 | $2,383,660 |

Appendix 3: Course Follow-up Questionnaire