Abstract

The relationship between stability and change is a central paradox of administration that pervades all forms of organizing. Evaluation is not unfamiliar with paradoxical objectives and roles, which can result in tensions for evaluators and stakeholders. In this article, paradoxes between stability and change in the implementation of evaluation, and responses to them, are investigated through the case of social investment funds in Swedish local government. From interviews with staff, managers, and evaluators, findings show how responses to four main paradoxes give priority to top-down summative evaluation that produces instrumental knowledge on outcomes and costs for decision makers. The responses show that the concept of social investment fund evaluation is elastic to contain paradoxes and address different audiences. Also, paradoxes within the structure of the organization develop into paradoxes concerning the roles and goals of evaluation, raising the question of whether individual actors can deal with paradoxes.

Introduction

The administration of public programs contains deep-rooted and recurring types of dilemmas and contradictions (Pollitt & Bouckaert, 2017). For example, public sector organizations are expected to be reliable and consistent while at the same time being innovative and responsive to their changing surroundings. This relationship between stability and change is a central paradox of administration that pervades all forms of organizing (Farjoun, 2010). Evaluation is one form of organizing where paradoxical situations within goals and roles may appear, which can result in tensions for evaluators and stakeholders. The high expectations of evaluation ideals such as objectivity, neutrality, and instrumental use of evaluation results have often proved to be difficult to realize when confronted with the complexities of practice (see Dahler-Larsen, 2012). Governance today calls for collaboration between numerous stakeholders on multiple levels to deal with multidimensional and interlinked social problems (see Peters, Pierre, Sørensen, & Torfing, 2022), presenting evaluators with challenges of competing stakeholder values. Evaluation is an important and desirable concept consisting of different parts that actors may emphasize and understand differently (see Gallie, 1955).

In this article, paradox theory (Jarzabkowski, Lê, & Van de Ven, 2013; Putnam, Fairhurst, & Banghart, 2016; Smith & Lewis, 2011) is used to explore tensions that arise from paradoxes and how they are responded to in the organization and practice of evaluation. Evaluation is the careful assessment of the merit, worth, and value of organization, content, administration, output, and effects of ongoing or finished government interventions, which are intended to play a role in future, practical action situations (Vedung, 2012). Paradoxes have seldom been studied systematically in evaluation research. Investigating which elements—or values—are selected, ignored, or emphasized in responses to paradoxes can illustrate how evaluation policy is shaped and negotiated by actors such as decision makers and professionals. In this sense, evaluation is understood as socially situated. Evaluative solutions represent certain worldviews and framings of a problem and its causes, as well as normative rationales that justify this solution (Høydal, 2021; Nordesjö, 2019).

The empirical case of interest in the research presented below is social investment funds (SI funds) in Swedish local welfare government. Together with, for example, social impact bonds (SIB), they are policy instruments within a social investment perspective. In the last few decades, they have emerged as an answer to shortcomings of service design, delivery, and accountability and the need for innovative organizational solutions to preemptively handle deep-rooted social problems in the public sector. Investing in social services, youth policies, and health is regarded as an investment, aiming to reduce future government spending. Social policies are a productive factor, it is argued, essential to economic development and employment growth (Morel, Palier, & Palme, 2012).

Evaluation is intended to be an integrated part of the selection, implementation, calculation, and decision-making of SI fund interventions. They are assessed prior to implementation, during implementation through a monitoring system, and afterward through outcome evaluation and cost calculations, in order to find out which intervention “works,” that is, reduces future costs. The SI idea is thus closely related to contemporary ideas of evidence-based policymaking and the “what works movement” (Boaz et al., 2019; Cairney, 2016), as well as social impact measurement (SIM; Rawhouser, Cummings, & Newbert, 2019) and social finance and impact investing (Chiapello & Knoll, 2020). Evaluation's integrated role in public management and its strong focus on outcomes also tie it to New Public Management (Dahler-Larsen, 2007).

However, policy instruments within SI have been shown to be framed and adapted differently as solutions to different political problems (Nicholls & Teasdale, 2021), resulting in paradoxes between, for example, flexibility and evidence-based services (Maier, Barbetta, & Godina, 2018). Thus, it is not unreasonable to explore the SI fund idea as potentially paradoxical. Although it requires rigid administrative, budgetary, and organizational set-ups to produce different kinds of “evidence,” it simultaneously asks for new and innovative as well as flexible and collaborative solutions. Such paradoxes may create tensions for the organization and practice of evaluation, and investigating them may help explain the substantial variation in evaluation approaches in SI evaluation practice (Nordesjö, 2021; Vo, Christie, & Rohanna, 2016).

The aim of this article is to analyze paradoxes of stability and change in the implementation of social investment fund evaluation in Swedish local government, and how local government actors respond to these paradoxes in the organization and practice of evaluation. I continue by describing how paradoxes are discussed in the evaluation literature and the paradox theory used. Next, the case and methods are described followed by the findings and a discussion and conclusion.

The Study of Paradoxes in the Evaluation Literature

Research on evaluation that explicitly mentions paradoxes refers to unrealistic modern ideals of evaluation, unforeseen implementation problems, and conceptual issues within evaluation ideas themselves. Rodriguez and Acree (2020) argue that the legitimacy of evaluation is based on a modern idea with claims of neutrality and objectivity through measurement and statistics, despite it being a “human endeavor of meaning-making and interpretation” (p. 465). As such, evaluation is always contested and aligned to values, politics, and ideologies among the evaluator and stakeholders in an evaluation context. Moreover, Termeer and Dewulf (2019) speak of the evaluation paradox of wicked problems, where evaluation should assess interventions to problems that are difficult to define, are constantly changing, involve actors with conflicting values, and where there is disagreement on when they are solved (see Rittel & Webber, 1973). The most discussed paradox in evaluation literature may concern evaluation use, where evaluation is intended to be used instrumentally but seldom is in practice (see Dahler-Larsen, 2012). Consequently, trust in the instrumental use of evaluation may contradict its own claims and cause damage and frustration (Schwarz & Struhkamp, 2007). Relatedly, the evaluation and performance management literature has described a range of unintended and constitutive consequences from indicator use that can take the form of paradoxes (e.g., Pollitt, 2013; Rijcke, Wouters, Rushforth, Franssen, & Hammarfelt, 2016; Thiel & Leeuw, 2002). The evaluation literature has also dealt with paradoxes that consist of contradictions between parts or values within an evaluation concept itself. An example is Van Der Knaap's (2006) description of performance indicators as, on the one hand, “frozen ambitions” and, on the other hand, aimed to facilitate dialogue and learning.
Paradoxes can also emerge from translation where an evaluation concept gradually changes its meaning through actors’ continuous interpretations (Nordesjö, 2019). Relevant to the SI evaluation literature is how the two paradoxes “flexible but evidence-based services” and “cost-saving risk transfer” in reports on SIB contribute to the charm of the SIB idea: they enable several good things to happen at once that would otherwise be incompatible, and the concept can be adapted depending on the audience (Maier et al., 2018).

Although there are several examples of evaluation paradoxes within the evaluation literature, the concept of paradox has rarely been used analytically to investigate more closely the different kinds of evaluation paradoxes. This article seeks to contribute to this literature, by applying paradox theory to the case of SI funds.

Paradox Theory in Evaluation

Paradox theory is used to investigate paradoxes of evaluation and their responses through the case of SI funds. A paradox consists of “contradictory yet interrelated elements—elements that seem logical in isolation but absurd and irrational when appearing simultaneously” (Lewis, 2000, p. 760). Four kinds of paradoxes that may arise in organizations are commonly described in the paradox literature (Jarzabkowski et al., 2013; Smith & Lewis, 2011). Paradoxes of organizing relate to structural contradictions and tensions between organizational parts and tasks and the need for the organization to cohere as a collective system. Paradoxes of belonging or identity refer to actors’ conflicting experiences between the values and beliefs of their immediate group while also belonging to a wider organization. Paradoxes of performing concern contradictory goals and aims of an organization that force individuals on a microlevel to perform multiple and inconsistent roles and tasks, resulting in contradictory interpretations and actions. Lastly, paradoxes of learning refer to contradictions when “building on the past while simultaneously needing to destroy it in order to move forward during change” (Jarzabkowski et al., 2013, p. 248), involving specific modes of knowledge acquisition. However, learning occurs within both actors and organizations and is likely an underlying condition for the other three paradoxes.

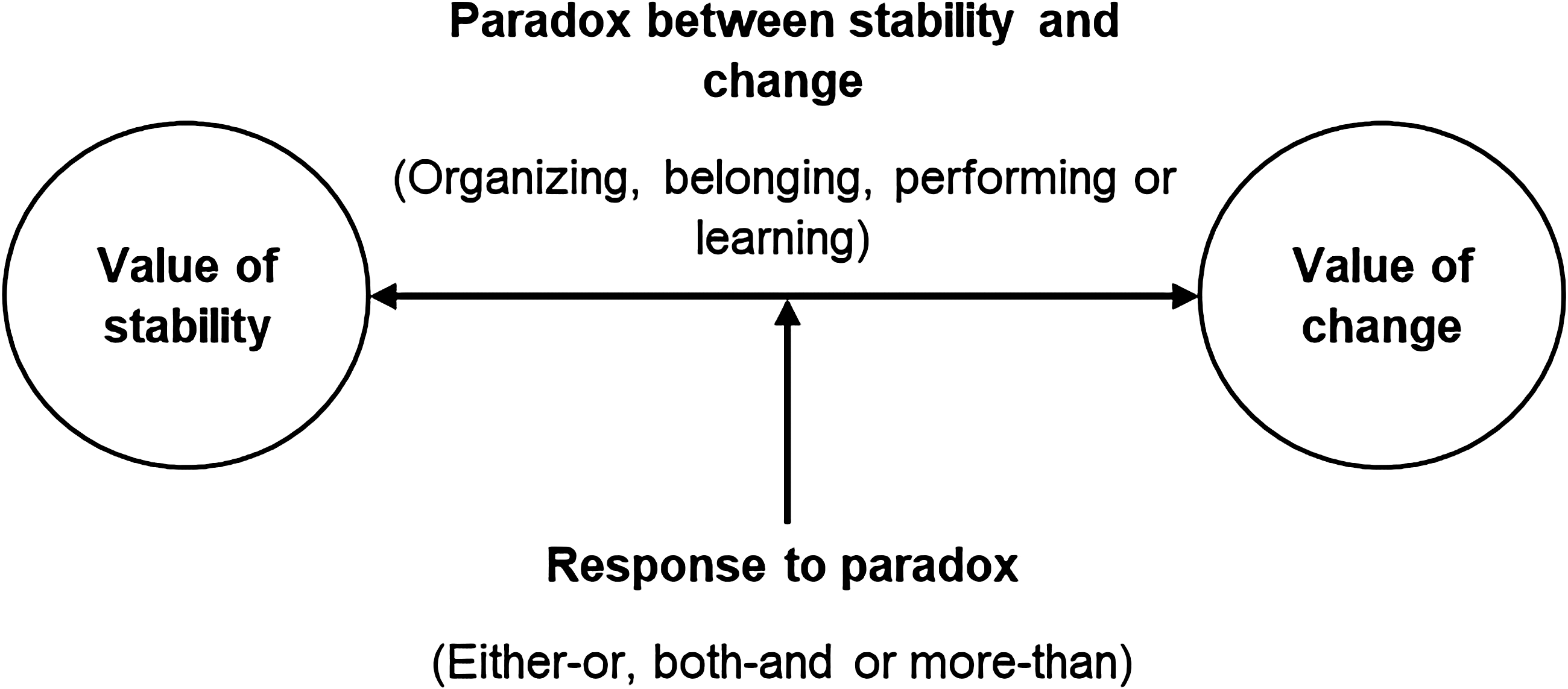

Paradoxes are not good or bad, productive or unproductive, but actors may react to paradoxes in different ways, which in turn give rise to various personal and organizational effects (Tracy, 2004, p. 120). Cunha and Putnam (2019) argue that paradoxes should be studied in relation to how organizational actors respond in paradoxical situations. Such responses differ from controlling, resolving, or managing a paradox and instead focus on how tensions emerge, evolve, and transform and incorporate responses aimed at sustaining the dynamic interplay among opposites (Putnam et al., 2016). The variety of responses in the paradox literature can be broadly described through either-or, both-and, and more-than (Putnam et al., 2016, pp. 125–128). Either-or responses prioritize one pole over the other, for example, by separating them temporally or functionally, by favoring one pole, or by suppressing and ignoring the tension altogether. Both-and responses are characterized by combinations and synergies such as paradoxical thinking, where differences are valued, and vacillation, radically shifting between poles at different times. Finally, more-than responses focus on “connecting oppositional pairs, moving outside of them, or situating them in a new relationship” (p. 128). Examples include reframing opposites so that they are encompassed in a new whole, or engaging in exploration through trial and error for reflective practice. The relationship between paradoxes and responses is schematically illustrated in Figure 1.

Figure 1. Relationship between paradoxes and responses.

Paradoxes are related to each other and should not be studied in isolation. Jarzabkowski et al. (2013) argue that the responses to the paradox of organizing, which operate at a macrolevel, are embedded within organizational procedures that condition the paradox of belonging on a meso level. Responses to this paradox may in turn condition individuals’ roles and goals that constitute the performing paradox on a microlevel. Specifically, the authors argue, “the paradoxes of performing and belonging are mutually reinforcing as managers attempt to perform their roles, which require them to interact over their interpretations of goals and the group and divisional identities they attribute to those goals” (p. 266).

Against this theoretical background, I argue that paradox theory is relevant to the study of tensions in evaluation. It is possible to draw attention to the larger organizational context while still being responsive to the field of evaluation. For example, the governing logic of the intervention to be evaluated may be based on contradictory assumptions such as simple/complex and top-down/bottom-up (paradox of organizing). How such paradoxes are handled can result in ambiguous stakeholder roles and responsibilities (paradox of belonging) and contradictory evaluation goals (paradox of performing). How the evaluation is used and learned from may feed back, in single- or double-loop ways, into the other paradoxes (paradox of learning). Using paradox theory in evaluation can thus identify what paradoxes are present in an evaluation context, how stakeholders respond to these paradoxes, and how these responses give rise to and relate to other paradoxes within an evaluation context. It is also relevant to acknowledge Pollitt and Bouckaert's (2017) view that there is no fundamental or universal contradiction between stability and change in public management. Innovation may be needed to maintain stability, they argue, and tensions may also be seen as trade-offs where one value is chosen over the other. From a paradox theory standpoint, combining innovation and stability and choosing one value over the other are both types of responses that can help researchers and evaluators identify and understand tensions from paradoxes.

In the analysis, the four kinds of paradoxes presented in this section will be used as themes to identify paradoxes within the larger paradox of stability and change in SI. Responses to these paradoxes in the organization and practice of evaluation will then be explored inductively and the literature on responses to paradoxes will inform the analysis.

Case and Methods

The SI perspective has internationally materialized in practices where private capital and entrepreneurs contribute to and profit from solving complex societal challenges by meeting predetermined targets (e.g., SIB) (see Berndt & Wirth, 2018). In Sweden, the SI perspective has been less about financing welfare through private capital and more of a municipal answer to a lack of innovation and to public sector silos that complicate complex problem-solving, which requires the participation of several sectors and levels. Also, the SI perspective draws attention to rigid short-term budgetary practices in municipalities that hinder preventive and long-term welfare work that is more cost-effective (Jonsson & Johansson, 2018). Therefore, the SI perspective in Sweden emphasizes the coordination of different local bodies and a focus on preventive interventions (act today to lower the costs tomorrow), and thus combines elements of control and efficiency with coordination and trust (Balkfors, Bokström, & Salonen, 2019).

On a national policy level, SI is defined by the interest group SALAR (The Swedish Association of Local Authorities and Regions) as “a limited investment which, in relation to the usual working methods, is expected to give a better outcome for the target group and at the same time lead to reduced socio-economic costs in the long run” (UPH, 2017, p. 6). Half of Sweden's 290 municipalities claim they work with SI in some way (Balkfors et al., 2019). This paper focuses on SI funds, financial resources that are set aside for certain projects. They exist primarily on a municipal level and are characterized as being (1) implemented through temporary projects, (2) separate from day-to-day public administration, (3) a collaborative effort, (4) evidence- or knowledge-based, and (5) possible to monitor and assess from a societal as well as a financial perspective (UPH, 2015). Furthermore, they can (6) contribute to social innovation by developing or testing new or previously tested methods and interventions (Jannesson & Jonsson, 2015).

Based on these definitions, the role of SI fund evaluation may range from supporting collaboration and innovation to determining whether evidence-based interventions are successful or not and connecting them to different cost scenarios. The latter role is most often highlighted in literature on SI evaluation, where evaluation can work as a basis for decisions for managers or politicians from two aspects: identifying a choice between specific measures and identifying the impact of these measures (including costs) for citizens’ well-being (Hultkrantz, 2015).

Case Study Design

Against this background, the SI perspective is explored as a critical case of potential paradoxes between stability and change in the organization and practice of SI evaluation (see Flyvbjerg, 2011). The range of potential evaluation approaches and designs within the concept of SI may on the one hand be aligned to an experimental, structured, and summative evidence perspective, and on the other hand to evaluation designs supporting innovation and collaboration.

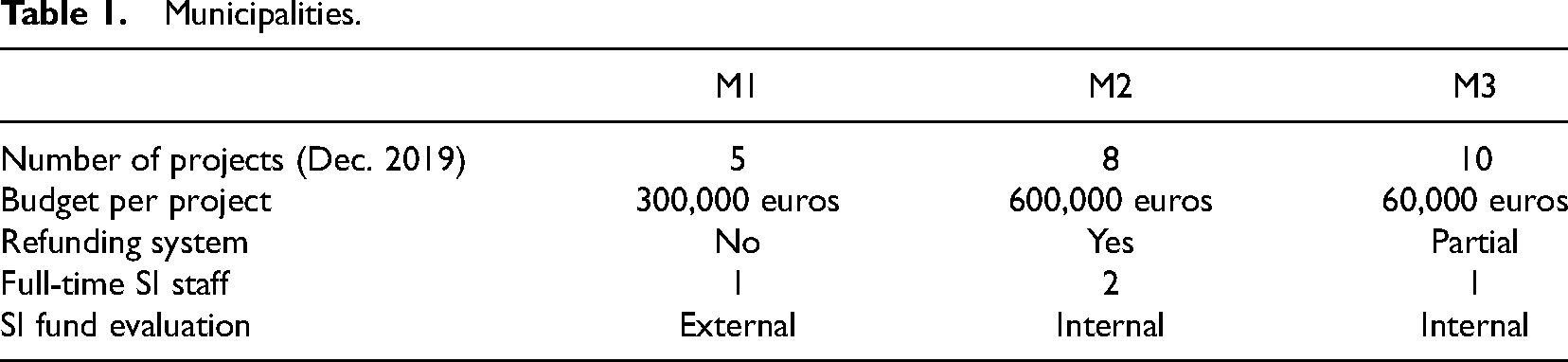

The study was carried out in three municipalities (M1, M2, and M3) in the Swedish public sector (pop. 70,000–150,000, see Table 1). This local level is relatively large in terms of employees and resources, being responsible for public services such as schools, social services, and elderly care. Several completed SI fund projects had been carried out and evaluated in the three municipalities. The municipalities’ SI funds were similarly organized. They set aside financial resources from the municipal budget in a fund that finances projects related to one or more specialized departments (e.g., social services). SI staff handle the SI project applications that have been submitted by these departments. An application should describe the social problem to be dealt with, the available evidence or research about the problem and the proposed intervention, the target groups, the intervention itself, the evaluation exercises, the costs and anticipated refunds, and the future implementation. The elected politicians in the municipality ultimately decide which projects to initiate. If an intervention is successful, it (or parts of it) should be implemented as part of the regular department and receive no additional funding.

Table 1. Municipalities.

A feature of the SI funds is the refund system, in which the projects refund parts of what they have initially received from the fund. In theory, since the social problem is reduced through a successful intervention, it does not burden the municipality financially as much as before and the corresponding resources can be returned to the fund. Once back in the fund, resources can be applied for anew and allocated for new purposes. M2 comes closest to this ideal, where projects refund an amount corresponding to the calculated cost reduction. In M3, refunds only apply if there are evident cost reductions such as a reduced workforce due to new ways of working. In M1, no refund system is in place. Other differences were that M2 had the largest SI fund organization and projects and consequently more potential interviewees, and that M2 and M3 performed evaluations internally while M1 had external evaluators.
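The three refund rules described above can be sketched as a small illustrative function. This is a minimal sketch under stated assumptions, not the municipalities' actual accounting procedure: the names `ProjectOutcome`, `calculated_saving`, and `evident_saving` are hypothetical, the figures are invented for illustration, and the assumption that M3 refunds exactly the evident cost reduction is not stated in the interview material.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProjectOutcome:
    """Hypothetical record of one completed SI project (names are illustrative)."""
    municipality: str          # "M1", "M2", or "M3"
    calculated_saving: float   # cost reduction estimated in the cost calculations
    evident_saving: float      # directly observable reduction, e.g. a smaller workforce


def refund(outcome: ProjectOutcome) -> float:
    """Return the amount paid back to the fund under each municipality's rule."""
    if outcome.municipality == "M1":
        # M1 has no refund system in place.
        return 0.0
    if outcome.municipality == "M2":
        # M2 refunds an amount corresponding to the calculated cost reduction.
        return max(outcome.calculated_saving, 0.0)
    if outcome.municipality == "M3":
        # M3 refunds only when cost reductions are evident.
        # Assumption: the refund equals the evident reduction.
        return max(outcome.evident_saving, 0.0)
    raise ValueError(f"unknown municipality: {outcome.municipality}")


# Invented figures: the same project result evaluated under the three rules.
same_result = {"calculated_saving": 100.0, "evident_saving": 20.0}
refunds = {m: refund(ProjectOutcome(m, **same_result)) for m in ("M1", "M2", "M3")}
```

Under these assumptions, an identical evaluation result returns very different amounts to the fund depending on the municipality, which is one reason the outcome and cost figures in an evaluation report carry different financial weight for departments in M1, M2, and M3.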

The interventions carried out within the SI fund projects ranged from promising professional practices that are getting attention and are scaled up within the local government, to more research-based practices that SI staff or politicians pick up from other parts of the country due to good results from research or evaluation. Although some interventions are said to have undergone randomized controlled trials (RCTs) in other contexts and countries than the Swedish SI funds, no intervention is claimed by the municipalities to be strictly evidence-based, in the sense that it has had multiple RCTs across heterogeneous populations demonstrating its effectiveness (Nutley, Davies, & Hughes, 2019, p. 229).

In the three municipalities, there are several interrelated evaluation activities that are carried out regardless of intervention. A monitoring system is created by SI staff, tracking project goals and indicators for activities and for social and economic outcomes. Project staff provide the monitoring data, which work as a baseline for the outcome evaluation. These evaluations are carried out internally by SI staff or externally and should, if possible, include control groups that provide a counterfactual. Quasi-experimental evaluation is most common, especially in school-related interventions where students’ primary characteristic is age and students from other grades or schools work as a reference. Where target groups’ characteristics are, for example, language or social problems, pre–post analysis tends to be used. Outcome evaluation is conducted at the end of the projects and possibly after 1–3 years. Some evaluations (especially in M2) are supplemented with qualitative interviews to give a broader account of the intervention. Overall, formative evaluation may complement and explain quantitative evaluation results or be used as stand-alone evaluation designs. Cost calculations such as cost–benefit analysis are also performed to assess whether the interventions have reduced future spending. The final evaluation reports draw on all these activities and provide information for decision makers such as department managers and the municipal executive committee.

Data

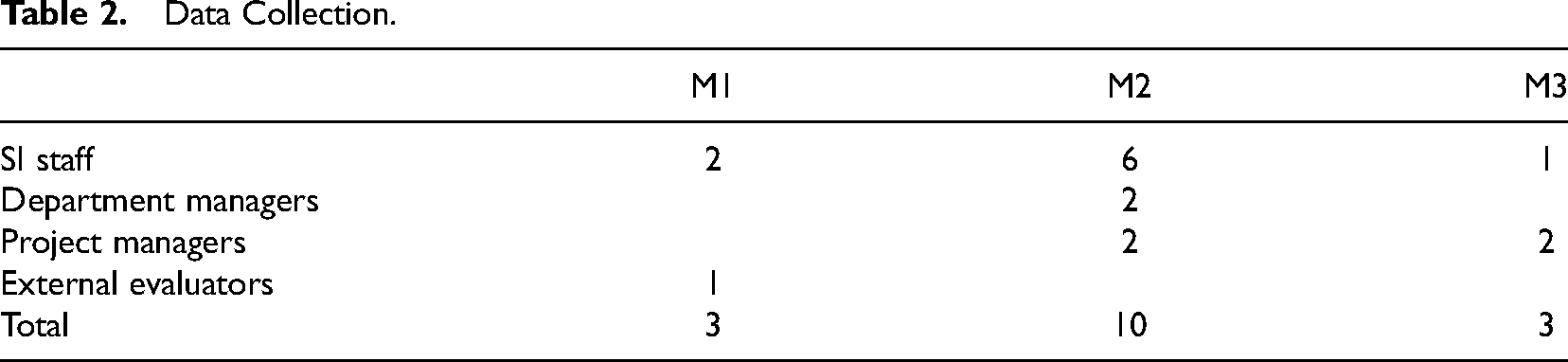

Sixteen people were interviewed (see Table 2) as part of a multisite study of the formulation and implementation of SI initiatives in Sweden 2017–2021 (see also Nordesjö, 2021). The interviews were intended to capture the core elements of the idea of SI, how different values may create tensions in practice and how such tensions are responded to. Interviewees were contacted in a combined network and strategic sample. Interviewees on a national level were easily identified as implementing and supporting actors who promoted SI nationally. Municipalities were approached through a national network of SI coordinators, after which SI staff, project leaders, and department managers were contacted and asked to suggest other interviewees. SI staff work at the top of the municipalities and manage and oversee SI fund projects in different departments, handle applications, design monitoring systems, and may perform evaluations. Department managers are responsible for the acquired funds and are the owners of the SI projects. Project managers work with the interventions.

Table 2. Data Collection.

Interviews were semistructured around two themes: governance through SI and evaluation of SI funds. Questions revolved around the need for SI funds and how the local SI fund system worked, and interviewees’ experiences of SI fund evaluation practice: how it was performed, and its consequences for evaluation practice, the organization, and different stakeholders. The interviews were held in 2018–2019, conducted in person or on the telephone, and lasted 45–120 min. They were recorded and transcribed verbatim and organized using NVivo software. Information about the study and the interview was sent in advance, and verbal consent was received from all interviewees. Sixteen evaluations from completed projects in the three municipalities supplemented the interviews.

Data Analysis

Braun and Clarke's (2012) six-phase approach to thematic analysis was used to identify and organize patterns of meaning across the data. The approach has been abductive to create a reflective dialogue between the researcher, earlier research, paradox theory, and the empirical data (Alvesson & Sköldberg, 2009). In phase 1, I became familiarized with the transcribed empirical data. This directed attention to interviewees’ conceptions of tensions and challenges in the evaluation of SI funds. In phase 2, a long list of tentative codes was generated that could capture the tensions between values that interviewees described. The codes were arranged in pairs to represent opposites or poles (e.g., process vs. outcomes, external vs. internal, professionals vs. managers). In phase 3, codes were reformulated, grouped, and regrouped in tandem with paradox theory to make sense of the tensions (e.g., Jarzabkowski et al., 2013; Putnam et al., 2016). The paradox of stability and change emerged as a master paradox containing four types of paradoxes (paradoxes of performing, belonging, organizing, and learning). They became themes to reduce and organize the long list of codes. For example, the paradox of organizing, “Organizing projects as top-down experiments and bottom-up initiatives,” originally consisted of three pairs of codes, which were synthesized to reflect a core paradox of organizing. All four paradoxes were found in all municipalities, although a particular paradox often was more evident in a particular municipality. Responses to paradoxes were identified within the four paradoxes and characterized as either-or, both-and, or more-than. In phase 4, when reading and rewriting the themes and quotes, themes could be adjusted to be made more specific. This continued in phase 5, where the titles of the themes were modified to reflect a theme's unique content and not to overlap with other themes.
Finally, the analysis was an integrated part of the writing process and is described in this methods section (phase 6). After the main thematic analysis, the relationships between paradoxes were investigated from the proposition that responses to the paradox of organizing created conditions for the paradoxes of belonging and performing. In all, the abductive data analysis is both deductive in its dependence on, and guidance through, four theoretical paradoxes, and inductive in the analysis of responses within these paradoxes. This ensures the data are interpreted within paradox theory without overriding the experiences of the interviewees (Braun & Clarke, 2012).

Results

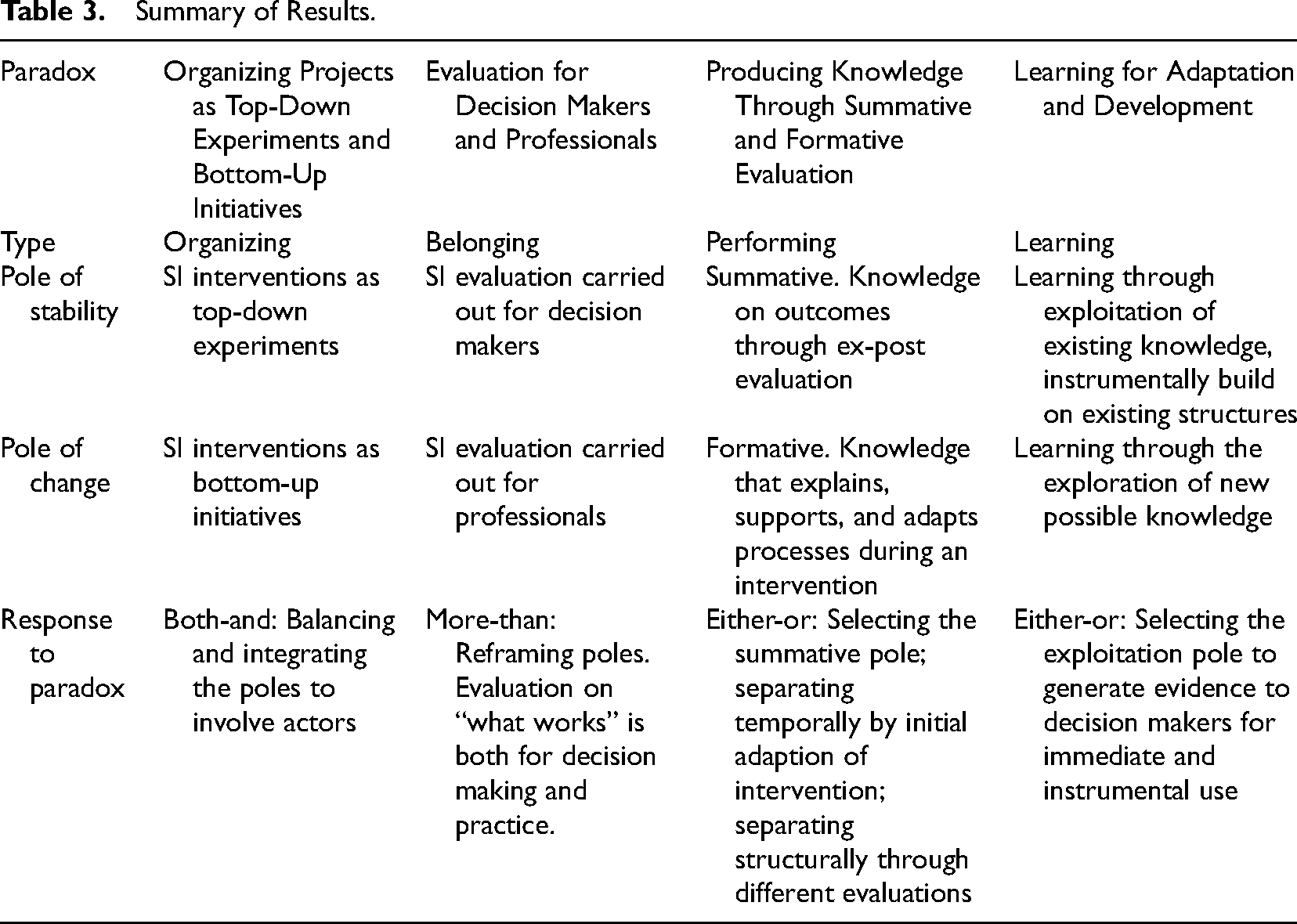

Below, the results are presented in four sections corresponding to the four paradoxes. The results are summarized in Table 3.

Table 3. Summary of Results.

Organizing Projects as Top-down Experiments and Bottom-up Initiatives

In the first paradox of organizing, tensions may arise as subsystems act independently but still are part of an overarching interdependent organization (Jarzabkowski et al., 2013). On the one hand, the objective is to carry out and manage SI interventions as top-down experiments from a central administrative level similarly for all projects and departments. On the other hand, SI interventions are intended to be implemented as bottom-up initiatives at department level.

On the one hand, SI projects should ideally be centered around an evidence-based intervention and organized and managed as experiments with a clear baseline, defined and measurable indicators and goals to accurately measure outcomes and costs. Project applications from departments are subject to extensive review by SI staff and municipal top management through detailed written routines and criteria. “We are not close to the departments in that sense,” an interviewee from the SI staff in M2 says, who describes the work as enabling forums and workshops and finding people who see the problems and challenges that should be addressed. On the other hand, both departments and SI staff argue that ideas for SI projects should be formulated from a professional level to ensure that interventions are relevant and based on professional experience. A department manager in M2 describes the tension that arises from the paradox: “there is a strong political will in social investments,” but it is not as efficient as “when the idea comes from beneath.” Another department manager in M2 similarly argues that the departments should be given a bigger role in the application process.

The paradox is intensified by the fact that evidence-based interventions are difficult to find: “In general, there isn’t much evidence-based [intervention] in social work, and that is a weakness” (SI staff, M3). The paradox thus relates to the metatheoretical paradox of developing interventions for “wicked” and “tame” problems (Rittel & Webber, 1973) where the municipalities seek to develop projects with evidence-based interventions where no such interventions can be found. Still, the municipalities respond, not by abandoning the top-down pole, but by trying to combine the two poles. SI staff in all municipalities say that methods that have shown good results from a practice standpoint may suffice, although applications should refer to research to improve chances for funding: “You can also try out a whole new idea, we can’t just close the door … if there hasn’t been a study, the idea can still be good” (SI staff, M2).

By not abandoning either pole but instead balancing them, the municipalities try to integrate the ambitions in the hope that both top-down experiments and bottom-up influence can be achieved. However, such responses may be temporary or unstable. It might be an efficient way of balancing the interests in the short term, but not necessarily an effective long-term strategy for dealing with the paradox (Putnam et al., 2016, p. 124ff). As will be shown, the integration of the poles in this organizing paradox may have consequences for the following two paradoxes on the meso and micro levels.

Evaluation for Decision Makers and Professionals

The paradox of belonging refers to the evaluation's stakeholders who experience tensions between values and beliefs of their group while also belonging to a wider organization (Jarzabkowski et al., 2013). The paradox consists of SI evaluation being carried out simultaneously for decision makers (managers and politicians) and for professionals. To simplify, decision makers more often seek information that may inform a decision, for example, whether intervention A is less costly and more effective than intervention B. Professionals more often seek knowledge that improves and explains the processes of practice. So, who is SI evaluation for?

On the one hand, SI evaluation is a tool for managers and politicians to govern from the top. It is described in both local SI policy and by SI staff as being of use to decision makers to know which interventions to continue with based on outcomes and costs. For example, in M2, the goal of evaluation has been to “gain better knowledge of what has been done and what it has led to … and to give a suggestion for what we think could be implemented and become permanent” (SI staff, M2). Another important aspect for decision makers is the way evaluation results may increase costs for the departments—both in terms of further implementation of successful projects and in terms of the departments’ obligation to pay back financial resources to the municipality corresponding to the outcomes achieved. On the other hand, SI evaluation is of value for professionals since they are partly the originators of the ideas. The projects are also built on cooperation between professionals in different departments where a “silo mentality” is described as harmful. Evaluation could thus illuminate how different professional perspectives on the same problem could contribute to a potential solution.

However, municipalities respond by adhering to the decision-making pole. Evaluators are perceived as experts who stand outside the intervention, looking in at and assessing the carefully rigged intervention, and who ensure the objectivity and scientific integrity of the collected data that is fed back to decision makers. This idea is so dominant that even interviewees at a professional level frame their knowledge interests within this kind of “what works” rhetoric:

Because when you ask the question whether or not to implement this, you can’t go by your gut feeling … if we don’t know if it's good or bad we don’t know if we should implement it (project manager M3).

It varies … some find measurements annoying and can’t see the use of them … while others say wow, now we can show results, it's great for us to know how to organize our department (project manager M2)

In this way, improving professional practice is not about knowledge for improving processes, but whether what you do has the intended outcomes or not. It is a response to the paradox by successfully situating the opposites in a new relationship, that is, reframing (Putnam et al., 2016). In this frame, knowledge for decision makers is not a contrast to knowledge for professionals, and evaluation that decides on what intervention works is as much a question that settles a decision as one that develops practice. This response is likely related to the paradox of organizing where the integration of top-down and bottom-up calls for ways to involve several actors while still being aligned to a decision-making perspective. An implication is that SI evaluation primarily seeks to produce results that are consistent with this frame, although there are also other knowledge interests in demand. This is illustrated in the next paradox.

Producing Knowledge Through Summative and Formative Evaluation

In the third paradox, the paradox of performance, individuals are forced to perform multiple and inconsistent roles and tasks to achieve goals and aims, resulting in contradictory interpretations and actions (Jarzabkowski et al., 2013). This paradox refers to the goals of SI evaluation. On the one hand, evaluation should give a final assessment by producing knowledge in terms of outcomes through summative ex-post evaluation. On the other hand, evaluation should produce knowledge that explains, supports, and adapts processes during an intervention, that is, formative evaluation. An SI staff member in M2 captures the paradox: “It's controlled development work, to get a structure.”

On the one hand, the goal of SI evaluation is to understand whether a project “has given the expected result and the outcomes that we wanted to achieve when we started … to see that we match the needs that we saw initially” (SI staff, M2). These findings may then be related to costs and show what interventions are most cost-effective and beneficial to a certain social problem in the long run. On the other hand, knowledge of how to understand and improve the interventions as they progress is also in demand. A focus on outcomes may overshadow the content of the project, SI staff in M3 argues, requiring ways to understand “what is it that you’ve done to reach the outcomes?” Moreover, SI staff are thought to be too rigid by the departments when not supporting a change of direction based on monitoring:

I’ve stepped in as project owner sometimes, dear god, we have to deliver, we will be evaluated as a successful project, then we’ll have to be successful … and if that requires adjusting the project directive, that is what we will do (department manager, M2).

This tension threatens the stability of the experimental design. All municipalities respond by favoring the summative pole. SI staff in M2 separates the poles temporally by allowing adaptation of the intervention for a short initial period. The summative design is what matters: “We want to describe the outcomes that the interventions have contributed to for the target group and for the municipality's economy” (SI staff, M2). Although M2 also conducts interviews, they are part of the summative evaluation rather than for formative improvement. Similarly, M3 separates the poles structurally by inviting researchers and students to investigate their projects independently of the municipal organization, for example, through trailing research. M1 selects the summative pole, although there sometimes were project intentions of formative evaluation:

It ended up in an irritated atmosphere … they thought we were annoying … “shouldn’t you evaluate this?” “Yes, but it is a social investment, we will evaluate in relation to if you had not done this.” They thought we would go with “Is this going well? What is not going well?”, some kind of qualitative evaluation … (Evaluator M1)

The quotation also highlights the difficulty of setting up a monitoring system needed for summative evaluation, which has made several outcome evaluations suffer from a lack of data and attribution issues. In M1, project staff were unaware of the monitoring requirements for performing outcome evaluation. Evaluations in M2 state that “sufficient data are not yet available” (2018: 2) or data “have been hard to interpret or shown results that cannot be directly related to the project's work” (2017: 2). In M3, “objective points of measurement have been hard to find” (2019b: 3). These problems can make cost calculations difficult: “[It is] difficult to demonstrate that the intervention has generated the economic effects that surpass the costs” (Evaluation M2, 2019: 2). Also, the quotation highlights a common view among interviewees: outcome evaluation equals quantitative data. Although this is often the case, it leads to a false dichotomy where alternatives are described as vague and subjective “qualitative evaluation.” Thus, it is not difficult to understand the prevalence of either-or responses. It appears that it is safer to respond in a way that aligns with the integrity and formal ambition of the summative outcome evaluation, even if it results in data problems and ambiguous evaluation results. This has implications for the ultimate goal of evaluation—learning.

Learning for Adaptation and Development

Finally, the paradox of learning refers to building on the past and needing to destroy it to move forward during change (Jarzabkowski et al., 2013). It is about learning from evaluation through the exploitation of existing knowledge such as the application of experimental designs of enough evidence-based knowledge; or, learning from evaluation through the exploration of new possible knowledge (March, 1991). The paradox can also be conceptualized as between instrumental single-loop learning where knowledge is used to correct and adjust existing actions, and disruptive double-loop learning, where the foundations for the first loop are questioned and reformulated.

On the one hand, SI evaluation is described by interviewees as instrumentally informing decision makers on the outcomes and costs of the intervention. The evaluations are used “To make political decisions on how to proceed,” an SI staff member in M2 says. In this sense, evaluation use is about verifying and exploiting existing knowledge and informing actors about how they can instrumentally build on existing structures. On the other hand, evaluation may contribute suggestions for improvement during the intervention and new innovative ways of performing and organizing welfare work that breaks with earlier ways. Carrying out supplementary interviews or implementing new and, for the specific municipality, untried interventions may be seen as the exploration of new and uncharted territory. Here, “experiment” takes on a different meaning, as in “testing” and “trying out” new solutions and innovations: “we have to experiment, and that's why evaluation is so important, so we get an understanding of what works and what doesn’t work” (SI staff M3).

Still, municipalities adhere to the exploitation and single-loop learning pole where evaluation settles and determines whether the intervention works and lowers costs. The outcome evaluation is fundamental for knowing how much money is being fed back to the municipality and what parts of the intervention are supposed to be implemented: “Through evaluation they were able to prove that it had made a difference … we want to continue supporting this … and we have also received money now for a new school” (department manager, M2). Besides determining distribution, evaluation has a settling effect and gives a legitimate assessment: “It is a good knowledge addition for the implementation so that everyone is aware that [the intervention] is successful” (SI staff, M2), not least because SI evaluation provides “hard facts that cannot be questioned” (project manager, M2).

This selection of one pole over the other resonates with a linear knowledge transfer model in which evaluation and research generate evidence as a product that can be disseminated to decision makers for immediate and instrumental use (Best & Holmes, 2010; Boaz & Nutley, 2019). It thus consciously ignores the explorative part of learning and may hinder evaluation from problematizing or opening up for discussion. Generally, this learning type will not develop the organization but adjust it to predetermined goals (Jarzabkowski et al., 2013). This is not to say that insights cannot be gained by individuals or that the interventions have not been subject to exploration and innovation prior to the implementation in the investigated municipalities or through complementary evaluations. But such learning processes are not prioritized in response to the paradox.

Finally, as learning occurs among both individuals and organizations, the selection of a linear knowledge transfer model may affect and reproduce the focus on decision-making and summative evaluation in the other paradoxes. However, this has not been investigated. What is discussed by some informants is instead the problems of incentives of the feedback system and the ambiguous evaluations with data problems. Such a discussion can challenge the very foundations of the SI fund idea and potentially create double-loop learning where the conditions for SI evaluation are questioned.

Discussion and Conclusion

The findings show how municipalities respond in much the same way when faced with the paradoxes of SI evaluation. They try to integrate the ambitions of top-down experiments and bottom-up innovation processes in the hope that both can be achieved, although evidence-based interventions are hard to find. Consequently, actors reframe the “what works” rhetoric to resonate not only with decision makers but also with professionals, and select a summative focus rather than a formative one, although it may result in data problems and ambiguous evaluation designs. Lastly, municipalities adopt a linear knowledge transfer model in which evaluation is intended to generate evidence as a product that is disseminated to decision makers for immediate and instrumental use. All in all, the responses give priority to top-down summative evaluation that produces instrumental knowledge on outcomes and costs for decision makers. These findings and the limitations of the study are discussed below.

There are three important limitations in the study. First, the professional level is represented by project managers and department managers. Interviews with other professionals would have given more insights into the expectations and consequences of evaluation on a professional level and could have made responses and paradoxes more distinct. This would also have mitigated the second limitation, the relatively low number of interviews. Still, since all interviewees were asked if they knew of other potential interviewees related to SI in their municipality, the sample is thought to have captured both formal decision makers and other relevant interviewees. Third, follow-up interviews would have to be carried out to more adequately capture the evolving relationships between paradoxes, for example, between the paradox of learning and the other paradoxes.

Overall, the paradoxes explored in this paper revolve around the meta-theoretical issue of the (unfulfilled) modern expectations of evaluation as an objective, impartial truth-telling activity. The struggle for certain and objective knowledge on “what works” is indeed a return to a science-driven wave of evaluation (Vedung, 2010) where evaluation as social science contributes to the avoidance of decisions based on ideology and prejudice (Stame, 2019). Although quantitative cost and outcome measures are necessary to assess the value of SI interventions, in this case they are challenged by methodological problems which may produce a false image of “evidence.” The findings also support research on evaluation paradoxes where evaluation may fail to capture the particular wicked problem (Termeer & Dewulf, 2019) and is always contested and aligned to values, politics, and webs of power among the evaluator and stakeholders in an evaluation context, thus hindering the ambition of an objective and value-neutral assessment (Rodriguez & Acree, 2020). However, this study has gone further than identifying paradoxes to also investigate how actors respond to these paradoxes. Two conclusions are drawn.

First, the different responses show that the concept of SI fund evaluation is elastic enough to contain paradoxes and address different audiences. On the one hand, the prioritization of stability in the paradoxes does not make the paradoxes go away and instead produces, for example, data problems for outcome evaluation, which raises questions about how SI fund evaluation can be carried out successfully in terms of its own logic. On the other hand, the reframing of the “what works” argument of SI fund evaluation is a way to make evaluation relevant also for a professional level. Of course, the original “critical appraisal” idea of evidence-based practice (EBP) is a bottom-up approach, in which research and evaluation, professional knowledge, and service users’ conceptions are assessed jointly (e.g., Sackett, 1997; Trinder & Reynolds, 2000). However, improving professional practice through SI fund evaluation is now reframed as a question of whether what you do gives the results you want, rather than as knowledge of the processes and mechanisms of professional practice. It is an alignment to the top-down model of EBP that has dominated in Sweden (Bergmark, Bergmark, & Lundström, 2011). By knowing what works, professionals can know whether their practice is effective and can feel confident. SI evaluation may thus legitimize social work practice. Consequently, findings show a dominance of summative outcome evaluations rather than a broad range of evaluation practices found in earlier research on SI evaluation (e.g., Vo et al., 2016).

Thus, as Maier et al. (2018) illustrate through the related concept of SIB, paradoxes can enable desirable but incompatible things at once. Paradoxes can be bent in different ways depending on the audience and in order to serve different needs and interests. Hence, as long as the idea of instrumental use and “evidence” remains convincing to stakeholders and attracts the relevant actors, paradoxes can be overlooked and delegated to evaluators. This also resonates with research on SIM where social enterprises deal with the pressure of measuring social impact. Research shows that stakeholder involvement in SIM is active from the preliminary stages in order to define the orientation of evaluation (Dufour, 2019). Social enterprises may try to increase the legitimacy of their own “bricolaged” approaches to SIM by delegitimizing formal methodologies (Molecke & Pinkse, 2017). Understanding the different values at play can illuminate actors’ strategic intents and help to design evaluation accordingly, as well as to make evaluation relevant rather than symbolic (see Dufour, 2022; Nordesjö, 2021). Although the findings in this study suggest that evaluation approaches are legitimate within a larger concept of SI outcome evaluation, the research mentioned raises the question of stakeholders’ roles and responsibilities in evaluation design. The inclusion of different competing values within the idea of SI funds may not only be essential to implementation but also give rise to paradoxes. Future research could investigate how stakeholder participation creates paradoxes and contributes to responses for evaluation practice.

Second, the integration of top-down and bottom-up within the paradox of organizing conditions other paradoxes. This is supported by the evaluation literature where evaluation in public administration is often conditioned by the governance logic of the intervention or evaluand (Hansen, 2005; Hertting & Vedung, 2009). The absence of a single logic of governance draws attention to how attractive both poles are to public organizations. All governance logics are in favor of stability and change, although they tend to focus on different aspects (see Pollitt & Bouckaert, 2017). Further research could compare how the same paradox is manifested in different governance logics, and whether they give rise to other paradoxes and responses with relevance for evaluation. Furthermore, actors are not necessarily equipped to respond to paradoxes of organizing. The influence of the paradox of organizing raises the question of how reasonable it is for different members of the organization to be able to respond proactively to paradoxes that are grounded in the basic structures of the organization. As Berti and Simpson (2019) argue, power asymmetries grounded in, for example, resources and position limit the options for responses.

Footnotes

Acknowledgments

The author would like to thank the three anonymous reviewers for their constructive comments. Mats Fred, Patrik Hall, and Dalia Mukhtar-Landgren all gave valuable comments on early drafts.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Swedish Research Council for Health, Working Life and Welfare under Grant 2017-02151.