Abstract

Organizational evaluation policies describe how evaluation practices should be structured and implemented. As such, they provide key insights into organizational priorities and values regarding evaluation. However, how evaluation policies translate into concrete practices has seldom been explored. Our study examines the implementation of two Canadian federal government evaluation policies over a 10-year timespan, through the secondary analysis of reports produced on behalf of governmental evaluation functions. Our findings show that some policy elements have been fully implemented, but the way in which these have been implemented varies between organizations. Further, we observed that time, as well as the level of control held by the organizational members responsible for implementing policy elements, can influence the implementation of certain policy requirements. We conclude by proposing further directions for research to examine the policy-practice link.

Governmental evaluation functions are responsible for the development and implementation of studies to assess the relevance, effectiveness, and efficiency of policies and programs (House, Haug, & Norris, 1996). In many countries, a decentralized approach to evaluation has been implemented over time; this approach involves setting up evaluation functions that bring together evaluation experts responsible for coordinating external evaluations or conducting them internally on behalf of their organization (Lahey, Elliott, & Heath, 2018; Lemire, Fierro, et al., 2018; Lemire, Nielsen, & Christie, 2018). Evaluation functions are typically guided by departmental and government-wide evaluation policies and thus serve as important mechanisms through which government evaluation priorities are brought into practice.

Despite their importance in directing and guiding the work of evaluation functions within governments, evaluation policies have seldom been examined empirically (Al Hudib & Cousins, 2022; Christie & Fierro, 2012; Christie & Lemire, 2019; Grob, 2006; House et al., 1996). Most notably, a need exists for knowledge and insight about how governmental evaluation policies are implemented by evaluation functions: “…limited empirical work has been conducted to better understand how they are interpreted and implemented by the evaluators and practitioners whose work they are likely to affect” (Christie & Fierro, 2012, p. 65).

Our study seeks to address this issue by examining the implementation of two Canadian federal government evaluation policies over the past 10 years, as seen through external assessments of departmental evaluation functions. More specifically, our primary objective was to determine the extent to which evaluation policy shapes departmental evaluation practice, as well as the facilitators and barriers to policy implementation in the departments. This work, although situated within a particular country's context, can inform the way in which government organizations around the world conceptualize and develop evaluation policies, and how these policies are then applied by departmental evaluation functions. Through our analysis, we will also probe the adequacy of existing typologies of evaluation policy and investigate specific areas of noncompliance or variations in policy implementation.

The paper begins with an overview of evaluation policy and its elements, followed by a few examples of governmental evaluation policies. Next, we describe the context for our study, including a detailed description of the two policies examined. We then describe our methods and present our findings. Finally, we examine implications for policy development and implementation by evaluation functions.

Defining Evaluation Policy and its Elements

Mark, Cooksy, and Trochim (2009) define evaluation policy as a set of rules or principles embedded in legislation or other documents that are used to guide evaluation practice. These rules “help set the content, characteristics, and context of evaluation itself” (Mark et al., 2009, p. 5). Such policies can have varying degrees of formality and can be set through various means, depending on organizational context (Bicket, Hills, Wilkinson, & Penn, 2021; Mark et al., 2009; Trochim, 2009).

Evaluation policy can serve multiple purposes: first, it may guide the work of evaluators by identifying the types of programs and initiatives that must be evaluated, the frequency at which they should be evaluated, and the evaluation approaches and methods that should be used by evaluators (Christie & Lemire, 2019; Trochim, 2009). In this way, evaluation policy can guide as well as transform organizational evaluation practices by focusing on specific requirements and directing decision-making related to evaluation. Second, evaluation policy can be a communications tool, used to signal the importance of evaluation in supporting organizational learning, decision-making, and accountability (Trochim, 2009). Third, it may facilitate organizational conversations about evaluation by structuring expectations and requirements: “Establishing an evaluation culture requires work; it depends on a community of people who talk to each other about evaluation in a convivial and critical manner … Expectations are important, especially that people should think about and do evaluation as a professional activity” (House et al., 1996, p. 139). This vision of policy reflects a top-down approach as conceptualized by Pressman and Wildavsky (1973) and requires organizational leaders and managers to implement policy requirements, thus translating the initial vision into concrete actions bound by resources and hierarchical control (Barrett, 2004; May & Wildavsky, 1978).

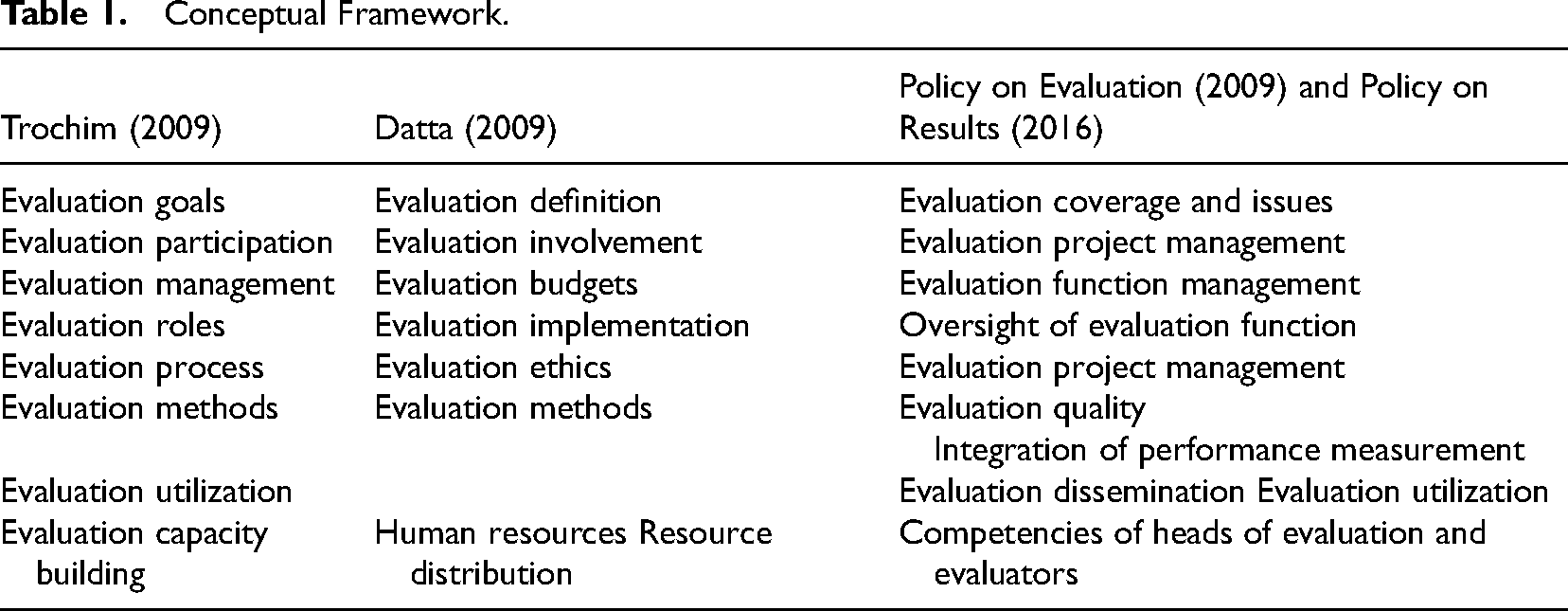

A taxonomy of evaluation policy areas proposed by Trochim (2009) identifies the various elements that can be included in evaluation policies. These refer to evaluation goals (purposes of evaluation), participation (stakeholder involvement in evaluation), management (how evaluations are managed within the organization), roles (in conducting and reviewing evaluations), process (procedures required to conduct evaluation), methods (data collection and analysis), as well as evaluation utilization and capacity building. Along the same lines, Datta (2009), paraphrasing the 2007 AEA Policy Task Force, identifies seven areas of evaluation policy. These include: evaluation definition (distinguishing evaluation from other functions), requirements (what to evaluate and when), methods (approaches for data collection), human resources (training, experience, and background of evaluators), budgets (financial resources required to evaluate), implementation (roles and responsibilities), and ethics. To these, she adds involvement and resource distribution toward evaluation capacity building. This latter point is also emphasized in the empirical work conducted by Al Hudib and Cousins (2022), which links evaluation policy to evaluation capacity building through several connection points and describes some of the factors moderating the relationship between the two. Dillman and Christie (2017) recognize the value in these typologies of evaluation policy but add a systems lens to broaden our understanding of how evaluation policies can guide evaluation practice. This view provides a better sense of the interconnections that can exist between various elements of the policy and how these interconnections can be leveraged to accomplish policy and organizational objectives.

Examples of Governmental Evaluation Policies

In the United States, government-wide evaluation policies include the Government Performance and Results Act (1993), which was revised and expanded in the form of the Government Performance and Results Modernization Act (2010). These policies emphasized performance measurement, as well as outcomes measurement, through evaluation activities (Lemire, Fierro, et al., 2018). More recently, the Foundations for Evidence-Based Policymaking Act (United States Congress, 2019) was signed into law in 2019 and seeks to improve the use of evidence and data in policymaking and program development across the federal government. Elsewhere, evaluation policies such as the United Kingdom's Magenta Book provide concrete guidance on evaluation practice, in order to enhance decision-making (Bicket et al., 2021). Others, such as the National Evaluation Policy Framework in South Africa, set the approach for establishing a national evaluation system on which provincial-level evaluations are conducted (Ishmail & Tully, 2020). All of these examples demonstrate governmental interest in evaluation policies and thus highlight the relevance of conducting research on their development and implementation.

In Canada as in the United States, the federal government is a key driver of demand for evaluation (Lahey et al., 2018; Lemire, Nielsen, et al., 2018). Requirements related to evaluation activities and reporting have been in place since 1977 in the Canadian federal government; over time, these requirements have evolved and have largely been subsumed within broader management and reporting trends and policies (e.g., New Public Management influenced the development of performance measurement and evaluation functions in the 1990s). Following two other evaluation-related policies, the Policy on Evaluation was implemented in 2009 across government, with some exemptions based on organizational size and mandate. This policy outlined the role of evaluation within departments and sought to improve the provision of “credible, timely, and neutral information on the ongoing relevance and performance of direct program spending” (Government of Canada, 2009, n.p.) to Ministers, central agencies, administrators, and the public. This policy was developed and implemented following a government-wide spending review in 2007, which sought to identify potential budgetary reallocations. The results of the review pointed to the fact that most departments and agencies did not have credible data on program relevance and cost-effectiveness, and that evaluation studies only covered a small percentage of programs. The Policy on Evaluation sought, among other things, to ensure that all direct program spending was evaluated every 5 years, in order to provide timely and accurate information on programs when needed by senior managers (Dumaine, 2012).

The Policy on Evaluation was rescinded in 2016 with the adoption of the Policy on Results. This broader policy replaced several management policies and sought to improve the achievement of results across government, following a change in the political environment. Evaluation remains a key component of the Policy on Results, but is linked to requirements related to performance measurement and program management. Notably, the requirement to evaluate all direct program spending was dropped, given the challenges of implementing it in the previous period. The Policy on Results also provides more flexibility in terms of the issues that must be addressed in evaluations led by departmental evaluation functions, in order to support the production of relevant and timely evaluations that feed into the department's decision-making processes.

Linking Evaluation Policy to Practice Through “Neutral Assessments”

In 2009, the Policy on Evaluation required, for the first time, that large federal departments and agencies conduct a “neutral assessment” 1 of their evaluation function every 5 years (Government of Canada, 2009). This requirement was reiterated in the Policy on Results (Government of Canada, 2016) and, thus, has been in place for more than 10 years. The neutral assessment can be conducted in various ways and should focus on the compliance of the evaluation units with policy requirements. In other words, neutral assessments are meant to investigate how these two policies have been implemented by evaluation functions and how they have influenced evaluation practices within federal government organizations.

The neutral assessments provide a unique measure of the interplay between evaluation policy and practice; although a rich body of empirical research has been developed regarding the extent to which evaluations are used to account for public expenditures and to support organizational learning, systematic assessments of organizational evaluation functions are rare. In other words, “The use of evaluation has been studied empirically, but the production of evaluation has not” (House et al., 1996, p. 136).

Our study, therefore, sought to capture the rich data described in neutral assessment reports to answer the following questions:

How have the elements of the Policy on Evaluation (2009) and the Policy on Results (2016) been implemented by federal evaluation functions? To what extent do these policies appear to shape evaluation practice in the Canadian federal government? What are some of the barriers faced by evaluation functions in implementing evaluation policy?

Examining the specific case of the Canadian federal government can, in our view, make a valuable contribution to the ongoing discussions about the policy-practice link occurring across governments and other types of organizations. To the best of our knowledge, the neutral assessment requirement in place in the Government of Canada is unique and provides us with a distinct view of how evaluation policy is implemented in a real-world setting.

Conceptual Framework

The conceptual framework for our study is drawn from the typologies proposed by Trochim (2009) and Datta (2009), as well as from the Policy on Evaluation (2009) and the Policy on Results (2016). Our objective in designing this conceptual framework was to capture the general and specific elements that constitute evaluation policies, so that these could inform our analysis of the neutral assessment reports. In other words, our conceptual framework defines and describes the policy statements that are then implemented by evaluation functions. These elements are summarized in Table 1 and described in the section that follows.

Table 1. Conceptual Framework.

The specific elements contained within each of these categories are introduced in the findings section; they directed our data analysis by providing a coding structure for the content analysis. We were not able to directly associate some of the elements taken from the federal policies with the Trochim (2009) and Datta (2009) typologies. In a few instances, such as Oversight of evaluation function, we approximated these linkages. This suggests that further work on establishing a typology of evaluation policy elements might be needed, either to clarify some of the earlier work or to add new dimensions to existing typologies.

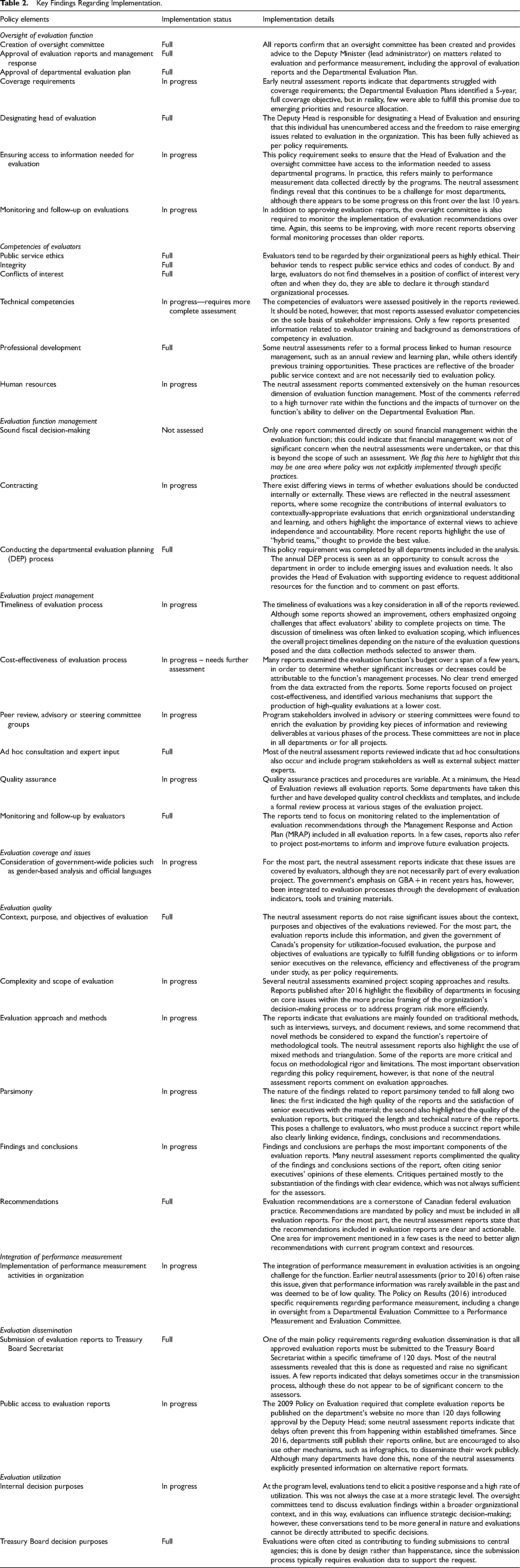

Table 2. Key Findings Regarding Implementation.

Methods

Materials and Procedures

In collaboration with the Division on Results of the Treasury Board Secretariat, a call was issued to all Heads of Evaluation, asking them to provide, on a voluntary basis, the neutral assessment reports published by their department or agency. In total, 41 reports were submitted from 28 different federal organizations. All of the reports were published between 2013 and 2020. The extent to which this sample of reports represents the entire population of neutral assessments produced over the study period is difficult to establish; changes in department names and functions are frequent in the Canadian federal government, and only certain departments and agencies are required to conduct neutral assessments, based on their size and mandate. In some cases, departments did not share their neutral assessment reports because of concerns related to national security. All reports obtained were kept in a secure repository, and a tracking document was created to capture data on year of publication and department or agency. This information is not shared here, at the request of some organizations that did not want to be identified as part of the study.

The way in which neutral assessments have been conducted over the past 10 years is an important issue that deserves further explanation, in order to judge the validity of the assessment reports as a data source. In 2014 and in 2018, the Treasury Board Secretariat issued guidance on how to conduct neutral assessments. According to the most recent version of the document, neutral assessments should demonstrate whether the evaluation unit under study complies with the various aspects of the Policy on Results (Government of Canada, 2016) and should offer actionable recommendations in the case of noncompliance. The guidance does, however, provide flexibility in terms of the approach used to conduct the assessment, including: (a) an audit-like, requirement-by-requirement assessment of compliance, (b) an assessment by theme that includes a review of governance, management practices, evaluation practices, and evaluation use, (c) a values-based assessment that could include credibility, independence, and the usefulness of evaluation products, or (d) a risk-based and targeted assessment that might focus on certain requirements, selected according to various risk factors. The guidance also provides flexibility in terms of the assessment period: neutral assessments can cover the entire 5-year period since the previous assessment or focus on a shorter period of time for some specific aspects of the policy. As in the case of program evaluations, neutral assessors can choose data collection methods, data sources, and analytical frameworks. Finally, by its very definition, the neutral assessment should be conducted by experts who do not have a direct relationship with the organization. Again, the guidance offers some flexibility in terms of who can conduct the assessment, and final approval of the report rests with the Deputy Head of the organization.
An in-depth description of how the neutral assessments have been conducted, based on findings from this study, can be found in Bourgeois and Maltais (2022).

Research Design

Our study used a nonexperimental, qualitative research design to extract secondary data from the 41 reports. The analysis was conducted in NVivo, according to the guidelines for concept coding qualitative analysis set out by Miles, Huberman, and Saldaña (2014). This specific approach involves using the exact words of participants (in our case, these were sentences or word groups extracted directly from the reports). Data extraction was guided by the conceptual framework presented earlier. The main categories correspond to the policy elements captured in the “Policy on Evaluation and Policy on Results” column in Table 1. More precise codes were developed based on the specific policy statements (n = 44). Each of these codes was defined and associated with examples drawn from the reports in order to create a codebook. In total, 1,278 data points were extracted from the 41 reports by one team member, with validation checks conducted by the other team member.

Data Analysis

After the extraction was completed, we examined the data within each code for quality assurance purposes. Minor modifications were made after this examination and involved moving some data points between codes within the same category. A thematic analysis of the data contained within each code was then conducted in order to reduce and interpret the data. This analytical stage involved a careful review of the extracted data for each code, in order to highlight recurring issues or aspects. This work was conducted in accordance with best practices for using qualitative secondary data outlined by Ruggiano and Perry (2019). Our approach to data analysis supports a new use for the data contained within individual neutral assessment reports, which are usually not shared outside of the organization and not aggregated with corresponding data from other departments.

Analytical Quality and Methodological Limits

Various mechanisms were implemented to ensure methodological quality and validity (Creswell, 2014). For example, the team members communicated their understanding of the subject and engaged in peer debriefing to clarify data and analysis results at all stages of the study. This helped ensure clarity in the codebook. The main methodological limit of the study was, as described earlier, that we had an incomplete set of neutral assessments, since the Heads of Evaluation submitted their reports voluntarily. In addition, although we analyzed the methodology used to conduct each neutral assessment (Bourgeois & Maltais, 2022), we could not control or monitor the quality of the methodological approaches and findings presented in the neutral assessment reports. However, we attempted to mitigate this limitation by extracting evidence and examples, rather than opinions, from the reports. This evidence does pose some additional limitations, since the original assessors chose the elements that they felt were relevant to their reports and may have left other elements out of their analysis.

Findings

The overall picture obtained through our analysis is that, by and large, the two federal evaluation policies have been implemented by all of the organizations featured in the reports examined. The neutral assessments have served their purpose in determining which of the policy elements were implemented as intended, and in highlighting variations and nuances specific to each organization assessed. More importantly, our analysis shows that some policy elements have been implemented to a greater extent than others, and that the policy elements closer to the locus of control of senior executives tend to be more fully implemented than those further down the executive line. The paragraphs that follow explain these nuances in detail and provide examples from the neutral assessment reports.

Policy Implementation Status

The first observation stemming from our analysis is that none of the categories, based on the previous typologies and identified in our conceptual framework, has been fully implemented in the past 10 years. It is only at the code level of our analysis that we can see policy elements that have been implemented and that are stable in all of the organizations reviewed. These policy elements tend to focus on specific actions, such as the creation of an oversight committee, the development and approval of the Departmental Evaluation Plan (DEP), the designation of a Head of Evaluation, and the submission of evaluation reports to the Treasury Board Secretariat. For instance, as seen in Table 2, the category “Oversight of evaluation function” can be divided into seven policy elements. Some of these elements, such as “Creation of oversight committee” and “Approval of DEP,” have been fully implemented by all of the organizations studied. Other policy elements, such as “Ensuring access to information needed for evaluation and performance measurement,” have not yet been fully implemented. In this particular example, the policy elements that have been fully implemented are under the direct purview of the Deputy Heads, who are accountable for reporting on them to Treasury Board officials on a regular basis. It is not surprising, therefore, that these policy elements have been fully implemented.

Table 2 describes our main findings for each of the policy elements and their current implementation status. In some cases, data on several policy elements are reported together for the purposes of brevity and to show the interrelationships between these elements.

Variability in How Policies Are Implemented

Beyond reporting on the extent to which these policy elements were implemented, the neutral assessments also describe how they have been implemented. For example, all reports reviewed confirm that an

The

As a core component of evaluation practice,

Evaluation

Evaluation

Time and Level of Control Influence Policy Implementation

The preceding overview of findings highlights the time dimension in policy implementation: not all policy elements can be implemented at once, and some take longer than others to translate into concrete practice. Our findings show that the level of control of those tasked with implementing policy elements, among other factors, can influence what policy elements are implemented and when they are implemented. As mentioned previously, elements that fall directly under the purview of the Deputy Head tend to be implemented more quickly than other elements which may be under the responsibility of the Head of Evaluation. Another example pertains to the

Barriers Faced by Evaluation Functions in Implementing Policy

The neutral assessment reports highlight specific barriers in implementing evaluation policies. These barriers, like some of the other factors presented previously, often fall outside of the purview of the evaluation functions. One example of an enduring challenge faced by federal evaluators relates to the

Another barrier to policy implementation is the availability and retention of qualified evaluators, who are directly responsible for translating policy into practice. Many neutral assessment reports commented extensively on this issue by referring to a high

How Practice Can Also Shape Policy

Although our findings generally show that Canadian federal evaluation policies have largely been translated into concrete practices by the evaluation functions, the reverse is also sometimes true: evaluation practices have influenced certain aspects of evaluation policies. The most striking example of this relates to “coverage requirements.” Coverage requirements refer to the obligation of the evaluation function to evaluate (or “cover”) all required programs within a specific timeframe. These requirements have varied over the years; under the 2009 Policy on Evaluation, organizations were required to evaluate 100% of direct program spending every 5 years. 2 The neutral assessment reports examining the 2009 to 2016 period indicate that departments struggled with these coverage requirements. Specifically, the Departmental Evaluation Plans identified a 5-year, full-coverage objective, but in reality, few departments were able to fulfill this promise due to emerging priorities and available resources. In the 2016 Policy on Results, this requirement was changed to reflect organizational capacity and informational needs. In other words, the more recent policy reversed the earlier requirement and recommended instead that evaluators focus on program risk and upcoming funding decisions to select the programs that should be evaluated. This particular issue provides an interesting example of how practice can influence policy, rather than the other way around.

Discussion

This descriptive study highlights the importance and role of evaluation policy in shaping evaluation practices in organizations, as defined by Christie and Lemire (2019) and Trochim (2009). The two evaluation policies examined are meant to guide the work of evaluators and the practice of evaluation at various levels of the organization. The policies do so by describing the role of senior executives in creating and supporting an evaluation function, the role of evaluation managers in developing an evaluation plan and ensuring that evaluations are conducted appropriately, and the role of evaluators in designing and conducting evaluations. In this way, these evaluation policies appear to support the establishment of an organizational evaluation culture as described by House et al. (1996).

Our findings confirm that the taxonomies of evaluation policy areas proposed by Trochim (2009) and Datta (2009) include elements found in other jurisdictions. Our analytical framework, based on the evaluation policies adopted since 2009 in the Canadian federal government, provides a deeper dive into these taxonomies by identifying specific policy elements that are one step closer to evaluation practice. These elements also support the argument put forth by Dillman and Christie (2017) about the need to describe the connections that exist between the elements in a systems-oriented analysis. We found, in some cases, that policy elements were difficult to distinguish when looking at practice (e.g., coverage of core evaluation issues is discussed at the organizational level and at the evaluation project level but refers to the same practice).

Our findings describe not only the extent to which policy elements have been translated into practice, but more importantly, how this was done in different organizations. They also bring to light the level of control and time required to implement certain elements and the barriers faced by evaluation functions during implementation. These findings are important in that they enable us to think of policy implementation not as one broad action, but as a series of specific acts dependent on various stakeholders and organizational context. They echo Lipsky's notion of “street-level bureaucrats,” in that managers and evaluators interpret and implement the policy elements differently based on their context and characteristics (Winter, 2012). Finally, our findings also show how practice can influence policy changes over time, and how this reverse policy-practice link is important to sustainable organizational improvement. This is consistent with the “bottom-up” approach described by Sabatier (1986).

The findings presented in this paper are unique in that they are founded on policies and practices implemented over a 10-year timespan (and in fact, may reflect more than 40 years of federal evaluation policy and practice), in organizations sharing a similar structure and with similar accountability requirements. This is a departure from previous efforts presented by Trochim (2009), Datta (2009), and Dillman and Christie (2017) but is close to the multiorganizational work conducted by Christie and Fierro (2012) and Christie and Lemire (2019). Despite their contextual framing, our findings also help illuminate the scalable link that can exist between evaluation policy and practice in organizations of all shapes and sizes. Our findings may also provide some food for thought to organizations interested in making this link more explicit in their own context.

Although this was not the original intention, our study also points to significant progress in several areas of evaluation practice since the implementation of the Policy on Evaluation in 2009. Many reports have applauded the attention given to enhancing staffing practices and the professional development of evaluators in recent years, as well as the efforts deployed by evaluation functions to support the integration of performance measurement across their organizations. Along the same lines, the neutral assessments have also described changes in how evaluations are reported to better meet the informational needs of senior managers through brief, targeted documents written with the evaluation user in mind. All of these findings demonstrate an evolution of the evaluation function over time. Many of these changes may be attributable to the adoption of the Policy on Results in 2016, as well as an increased understanding of the role that evaluation can play in government organizations. Some questions remain, however, about the added value of the evaluation function and how it can best support decision-making at the organizational level. This is a fundamental issue that future iterations of the policy should address explicitly. Additionally, given that our analysis did not include all neutral assessments produced since 2009, and that not all evaluation functions have undergone a neutral assessment, our findings must also be understood within the context of available neutral assessments rather than be generalized to all departments and agencies of the federal government.

In terms of future directions in this field, we argue that the call to action issued by Trochim in 2009 continues to ring true. The challenges and opportunities he described more than a decade ago continue to apply to today's evaluation landscape, and we support and reiterate the need for continued research on the topic of evaluation policy. Our study contributes to the field by analyzing neutral assessment reports, which roughly correspond to the “Evaluation Policy Audits” suggested by Trochim. It may be time to consider the other activities he suggested, such as evaluation policy analysis and evaluation policy clearinghouses and archives, to facilitate the sharing of best practices and provide a knowledge base for future policy development and harmonization across organizations.

Conclusion

This study sought to examine how evaluation policy translates into organizational practices. The analysis of neutral assessment reports produced over the last decade enabled us to extract data pertaining to whether and how policy requirements are implemented, as well as the barriers that can sometimes be faced by evaluators charged with policy implementation. Overall, the two most recent evaluation-focused policies adopted by the Canadian federal government have been implemented, or are in the process of being implemented, across the organizations reviewed. Our analysis also showed that in some instances, practice (or the inability to fulfill policy intentions) can also influence policy. Although our findings are situated within a particular context, we argue that the question of policy implementation through enacted evaluation practices is relevant to governments and organizations all over the world, and we reiterate the need for continued research and analysis on this topic.

In his description of what constitutes evaluation policy, Trochim (2009) establishes a clear distinction between what he calls “Substantive Policy,” or the national policies meant to address specific social or economic problems, and “Evaluation Policy,” which provides guidance to evaluators on the programs that should be evaluated and how evaluations should be conducted. Our findings confirm this role for evaluation policies, although we suggest that the body of knowledge on public policy and its implementation also applies to evaluation policy and should be examined further to identify lessons learned and best practices.

Finally, our work provides concrete examples of how evaluation policy is implemented in organizations, based on documentation developed for a different purpose. Although the neutral assessment reports were comprehensive in most cases and focused on policy elements, we acknowledge the limits of analyzing secondary data as part of our study. Future studies could delve deeper into the mechanisms of policy implementation in order to provide a more detailed picture of how policy is translated into practice, as well as the role of policy in driving organizational evaluation efforts.

Footnotes

Acknowledgments

The authors wish to thank all of the Heads of Evaluation who provided their organization's neutral assessment reports, thus making this study possible. Their thanks also go to Cédric Ménard who provided useful background information and coordinated the request for the neutral assessment reports, and to Sebastian Lemire and the reviewers, who provided invaluable advice throughout the publication process.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Social Sciences and Humanities Research Council of Canada (grant number 435-2019-0725).