Abstract

The increasing number of anti-trafficking organizations and the growth in funding for anti-trafficking services have greatly outpaced evaluative efforts, resulting in critical knowledge gaps that have been underscored by recent recommendations to develop greater evaluation capacity in the anti-trafficking field. In response to these calls, this paper reports on the development and feasibility testing of an evaluation protocol to generate practice-based evidence for an anti-trafficking transitional housing program. Guided by formative evaluation and evaluability frameworks, our practitioner-researcher team had two aims: (1) develop an evaluation protocol, and (2) test the protocol with a feasibility trial. To the best of our knowledge, this is one of only a few reports on evaluations of anti-trafficking housing programs, and particularly of one with many foreign-national survivors as evaluation participants. In addition to presenting evaluation findings, the team documented decisions and strategies related to conceptualizing, designing, and conducting the evaluation to offer approaches for future evaluations.

Keywords

Human trafficking (HT) for labor and/or sexual exploitation is associated with an array of detrimental consequences for trafficked persons (Dell et al., 2019; Muftić & Finn, 2013). Accordingly, trafficking survivors often benefit from comprehensive services to address legal and immigration needs, ensure their safe and permanent housing, establish fair and living-wage employment, and promote their physical and mental health (Kaufman & Crawford, 2011; Macy & Johns, 2011). To address survivors’ needs, growing numbers of non-governmental organizations (NGOs) are providing services to promote survivors’ safety and well-being and to prevent their re-trafficking. Governmental and private funders have made substantial expenditures to support such anti-trafficking services. For example, in 2020, the United States (US) Department of Justice (DOJ) awarded more than $100 million to initiatives and programs focused on HT (DOJ Office of Public Affairs, 2020). Nonetheless, the increasing number of such organizations and related funding have greatly outpaced evaluative efforts, resulting in critical knowledge gaps concerning anti-trafficking services that have been underscored by recent recommendations for the development of greater evaluation capacity in the anti-trafficking field (Davy, 2015; Dell et al., 2019; Krieger et al., 2020; Okech et al., 2018). In response to these calls, this paper seeks to help address these gaps by reporting on the development and feasibility testing of an evaluation protocol to generate practice-based evidence concerning a transitional housing program for trafficking survivors.

Evaluation of Anti-Trafficking Services

Despite the gaps noted above, evaluations of anti-trafficking services have been undertaken and presented both in reports and in the peer-reviewed literature. Although the types of programs evaluated, the methods and data used, and the targeted outcomes have varied considerably, findings from this prior work provide valuable context for the current effort.

HT program evaluations have included, as a few examples, those related to arrest diversion (Roe-Sepowitz et al., 2014), comprehensive case management (Goździak & Lowell, 2016; Potocky, 2010), comprehensive initiatives (Caliber, 2007), shelter-based interventions (Crawford & Kaufman, 2008; Munsey et al., 2018), mentoring (Criswell, 2014; Rothman et al., 2020), rehabilitation (Aborisade & Aderinto, 2008; Honeycutt, 2012), trauma-focused cognitive behavioral therapy (Bass et al., 2011; O’Callaghan et al., 2013), and services tailored to trafficked minors (Gibbs et al., 2014).

Methods have included analyses of administrative data (Goździak & Lowell, 2016; Roe-Sepowitz et al., 2014), case and chart reviews (Aborisade & Aderinto, 2008; Aderinto & Aborisade, 2008; Caliber, 2007; Crawford & Kaufman, 2008; Potocky, 2010), evaluability assessments (Caliber, 2007), field work (Goździak & Lowell, 2016), qualitative interviews with key informants and survivors (Aborisade & Aderinto, 2008; Aderinto & Aborisade, 2008; Potocky, 2010; Roe-Sepowitz et al., 2014), participatory process evaluation (Gibbs et al., 2014), pre-posttest surveys (Bass et al., 2011; Criswell, 2014), longitudinal data tracking over the course of service provision (Munsey et al., 2018; Rothman et al., 2020), and randomized studies (O’Callaghan et al., 2013).

Outcomes targeted for change have included survivors’ adjustment patterns (Aderinto & Aborisade, 2008), re-victimization (Rothman et al., 2020), arrest and re-arrest (Roe-Sepowitz et al., 2014; Rothman et al., 2020), community re-integration (Honeycutt, 2012), service goal attainment (Potocky, 2010), physical and mental health (Bass et al., 2011; Crawford & Kaufman, 2008; Honeycutt, 2012; Munsey et al., 2018; O’Callaghan et al., 2013), social support and coping skills (Rothman et al., 2020), social networks (Criswell, 2014), and vocational changes (Criswell, 2014).

Although these prior evaluation efforts constitute a beneficial foundation, critical evaluation evidence gaps persist, and many of the HT services currently being delivered remain uninvestigated (Davy, 2015; Krieger et al., 2020; Okech et al., 2018). Delivering untested services may be benign, but it can also have negative consequences if well-meaning service providers unintentionally do more harm than good in their efforts to support survivors (Kaufman & Crawford, 2011). Even benign services can cause harm when scarce resources are spent on ineffective programs. For these reasons, increased efforts to evaluate services for survivors of all ages, genders, cultural backgrounds, social circumstances, and trafficking types are needed (Davy, 2015; Dell et al., 2019; Felner & DuBois, 2017).

Nonetheless, evaluations of anti-trafficking services are hindered by several related factors (Krieger et al., 2020). First, service settings are frequently complex and dynamic. Second, service providers are often overworked, lack financial resources and/or institutional support for evaluation, and have little guidance regarding the collection and treatment of data. Third, service users may be reasonably distrustful of macro-level institutions and may also be in urgent need of resources to promote their safety and well-being. Fourth, service funders are heterogeneous and have only recently expressed interest in evaluation-derived outcomes. For all these reasons, a key part of the evaluation challenge lies in the nature of the evaluation work itself.

One important approach to addressing this challenge, especially as HT services proliferate, is an emphasis on practice-based evidence, which is developed through research with NGOs (Green, 2006; Nnawulezi et al., 2018a) and often generated using community-based and participatory research strategies (Ammerman et al., 2014). While practice-based evidence is developed systematically and rigorously with attention to internal validity, it also emphasizes ecological validity by attending to the complex, dynamic contexts in which service provision occurs. Thus, informed by practice-based evidence approaches, evaluators can develop methods for investigating anti-trafficking programs that fit these contexts.

Restore NYC and Transitional Housing Services

Restore NYC, a 501(c)(3) NGO in New York, New York, is a service provider for HT survivors in the US. Operating since 2009, Restore NYC focuses on serving adult, self-identified women who have been trafficked into the greater New York City area. Since its inception, Restore NYC has served over 2,000 HT survivors, and those at risk of trafficking, from over 80 countries, with approximately 200 HT survivors served annually in its housing, economic empowerment, counseling, and case management programs (Restore NYC, 2019). To help ensure that programs work as intended, Restore NYC has developed an ongoing commitment to evaluation.

One of Restore NYC's primary targets for evaluation is its transitional housing program. Residents meet the federal criteria for trafficking and are either self-referred or referred by attorneys who represent HT survivors, law enforcement (e.g., Homeland Security, the Federal Bureau of Investigation), and other not-for-profit organizations. Once referred, potential residents are screened for eligibility and assessed via a comprehensive intake process led by the Restore NYC housing manager using a low-barrier framework. That is, survivors who might be excluded from traditional housing services due to challenges such as substance abuse are not turned away (Nnawulezi et al., 2018b). At program entry, program staff work with each resident to create an individualized service plan to address immediate, ongoing, and long-term needs based on recommended practices (e.g., Macy & Johns, 2011). Plan development is guided by a detailed program theory of change, a logic model, and extensive staff training. In addition to housing, residents receive comprehensive and coordinated case management that includes connecting survivors to services outside of the housing program, both at Restore NYC and in the greater community, based on their individualized goals and needs. Examples of such services include (a) job readiness training and placement, (b) English as a second language instruction, (c) immigration legal services, (d) medical services, and (e) mental wellbeing services. Residents typically live in the housing program and receive services for 12 months, with stays of up to 18 months as needed on an individual basis.

Evaluation of the housing program has been an organizational-level priority for three reasons. First, transitional housing is a cornerstone of the organization, both philosophically and fiscally, comprising upward of 20% of total annual expenses in recent years (Restore NYC, 2019). Second, the resources required to deliver transitional housing, given its intensive nature, are substantial and could be wasted if the program's helpfulness is not demonstrated. Third, despite laudable efforts to investigate housing programs for survivors of trauma, violence, and/or trafficking, there is a documented dearth of rigorous evidence (i.e., experimental, quasi-experimental) concerning the outcomes of such programs (Klein et al., 2021).

Despite a strong desire to evaluate this program, Restore NYC had limited evaluation capacity. This changed in 2014, when Restore NYC connected with a team at the School of Social Work at the University of North Carolina at Chapel Hill (UNC-CH). This partnership combined Restore NYC's significant on-the-ground practice expertise with a university-based research team experienced in conducting community-engaged and trauma-informed evaluations with violence survivors, forming the practitioner-researcher team described herein.

Aims and Research Questions

Guided by formative evaluation and evaluability frameworks (e.g., Bowen et al., 2009; Dehar, Casswell, & Duignan, 1993; Leviton et al., 2010; Orsmond & Cohn, 2015; O’Cathain et al., 2019; Trevisan & Walser, 2014), the practitioner-researcher team held two broad aims. First, the team sought to develop a comprehensive evaluation protocol specifying guiding principles for evaluation, outcome measures, and data collection, management, and analysis procedures. Second, the team sought to test the practicability of the evaluation protocol with a feasibility trial and to generate formative, practice-based evidence about the housing program.

In addition to these aims, the project was guided by three research questions (RQ). RQ1: Can an evaluation protocol be developed for this type of HT service provision, particularly considering the complexity inherent in the service setting, service delivery, and service users’ needs? RQ2: Can a feasibility evaluation trial be practically and successfully implemented among a sample of survivor service users? RQ3: Can results inform practice with HT survivors in this program and setting?

The entire team viewed the development and feasibility testing of a formal protocol as a necessary step toward codifying its ongoing evaluative efforts. Moreover, given the challenges the anti-trafficking field faces, documenting the decisions and strategies related to conceptualizing, designing, and conducting the evaluation was expected to be helpful for the anti-trafficking field broadly, particularly in light of current recommendations from the field to develop greater evaluation capacity (Davy, 2015; Dell et al., 2019; Krieger et al., 2020; Okech et al., 2018).

Aim One Methods—Develop an Evaluation Protocol

For the first project aim, two activities were initially undertaken to develop the evaluation protocol. First, the team conducted a literature review of relevant research. Second, the project sought to solicit feedback from Restore NYC's administrators, staff, and survivors. These development activities occurred from 2014 to 2018, with the primary work being conducted from 2016 onwards. This latter stage was funded by an award through the Office for Victims of Crime, Office of Justice Programs, U.S. Department of Justice. All research procedures were reviewed by the Institutional Review Board (IRB) of the Office of Research Ethics at the UNC-CH (IRB #17-422; IRB #19-2744).

Literature Reviews

The literature reviews comprised (a) a narrative review of substantive and methodological research related to HT evaluation, and (b) a systematic review of substantive research concerning measures for evaluating anti-trafficking services. The narrative review focused on determining key evaluation practices, while the systematic review focused on key targets (i.e., evaluation outcomes).

The narrative review covered two general domains. First, substantive research related to HT evaluation was surveyed, including (a) Davy's review of existing HT program evaluations (2015), (b) Macy and Johns's overview of trafficking services and programs (2011), and (c) Zimmerman and colleagues’ conceptual model of HT (2011). Second, methodological research related to social services evaluation was surveyed, including (a) Shulha and colleagues’ principles to guide collaborative evaluations (2016), (b) Bowen and colleagues’ suggestions for designing feasibility studies (2009), and (c) Shadish and colleagues’ technical information regarding quasi-experimental designs (2002). Research for this review was selected based on the expertise of all team members. All articles were scrutinized by the team, and key findings were noted to inform the evaluation protocol.

Details concerning the systematic substantive review component can be found in the article summarizing the review itself (Graham et al., 2019). Briefly, the search involved two strategies: (a) a systematic search of electronic databases housing peer-reviewed journal articles and (b) reference harvesting of identified studies. Articles were included if they had (a) been published in English in a peer-reviewed journal, (b) included data collection pertinent to the needs and/or outcomes of trafficking survivors, (c) acknowledged that some portion of their sample included trafficking survivors, and (d) included specific details regarding constructs (i.e., variables) and/or measures (e.g., instruments). Articles were systematically searched, tracked, and reviewed in accordance with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) statement (Moher et al., 2009). In all, 22 (41.5%) of the reviewed articles identified a total of 34 specific published measures relevant for outcome evaluations of anti-trafficking programs.

Administrative Leadership, Staff, and Survivor Feedback

The second protocol development activity was to gather feedback from key groups, including Restore NYC administrative leadership, as well as staff and survivor residents in the housing program. The team conducted guided feedback sessions facilitated by the research team lead (first author) and coordinated by the practice team lead (second author). Prior to each feedback session, the team worked collaboratively to determine specific questions of interest, including questions about the appropriateness and feasibility of data collection, potential outcomes and measures, and means to ensure survivor input, confidentiality, and safety in the evaluation.

In total, seven such sessions were conducted during evaluation protocol development. As noted above, the feedback sessions included three groups, individually and collectively: (a) survivors who were current residents in the housing program at the time the sessions were held (N ≈ 15), (b) administrative leadership (e.g., Executive Director; N ≈ 5), and (c) housing program staff (e.g., Housing Director; N ≈ 12). In addition to the two feedback sessions with survivor residents, Restore NYC's purposive employment and survivor empowerment strategies meant that the staff included individuals with lived experience of human trafficking, though the identities of these staff members were blinded to the university researchers. Detailed notes were taken at each session and then used to guide evaluation protocol development.

In addition to these efforts to ensure that survivors had input into the design of the evaluation, a post-assessment feedback form was implemented during the feasibility trial (detailed subsequently) so that survivor residents could share their experiences with completing the data collection assessments and make suggestions for improvement. To ensure that the feasibility trial was conducted in survivor-centered ways as it was implemented, questions on the feedback form included “How do you feel about the length of the time taken to complete the assessments,” “How did you feel at the end of the assessment,” and “Did you feel all the questions were clear?” among others. Trial procedures were refined in minor ways based on this regularly collected feedback from survivors.

Aim One Results—Evaluation Protocol

Findings from the aim one activities were compiled into three distinct yet overlapping domains including (a) characteristics of the evaluation, (b) methodological best practices to guide the implementation of the protocol, and (c) targets for the evaluation. Together, the team conceptualized these results as comprising the evaluation protocol itself.

Evaluation Characteristics

Four characteristics were determined to define the internal operations of the evaluation protocol, including (a) relationship-focused (i.e., promoting constructive working relationships in the team), (b) mutually-understanding (i.e., bridging differences between practitioners and researchers on the team), (c) participation-promoting (i.e., ensuring active involvement by all team members), and (d) progress-monitoring (i.e., ensuring that planned activities were carried out in timely ways and to completion). Likewise, four characteristics were determined to define the external implementation of the evaluation protocol: (a) strengths-based (i.e., with an emphasis on survivors’ capacities and resources), (b) trauma-informed (i.e., with an understanding of how violence and victimization might impact survivors’ lives, including their research participation; Elliot et al., 2005), (c) culturally-sensitive (i.e., research approaches that are responsive to all survivors no matter their background, languages, and situations), and (d) survivor-centered (i.e., research approaches that are carried out with survivors’ perspectives and recommendations at the forefront). All eight characteristics were included in the protocol and woven into all subsequent evaluation activities and products.

Evaluation Practices

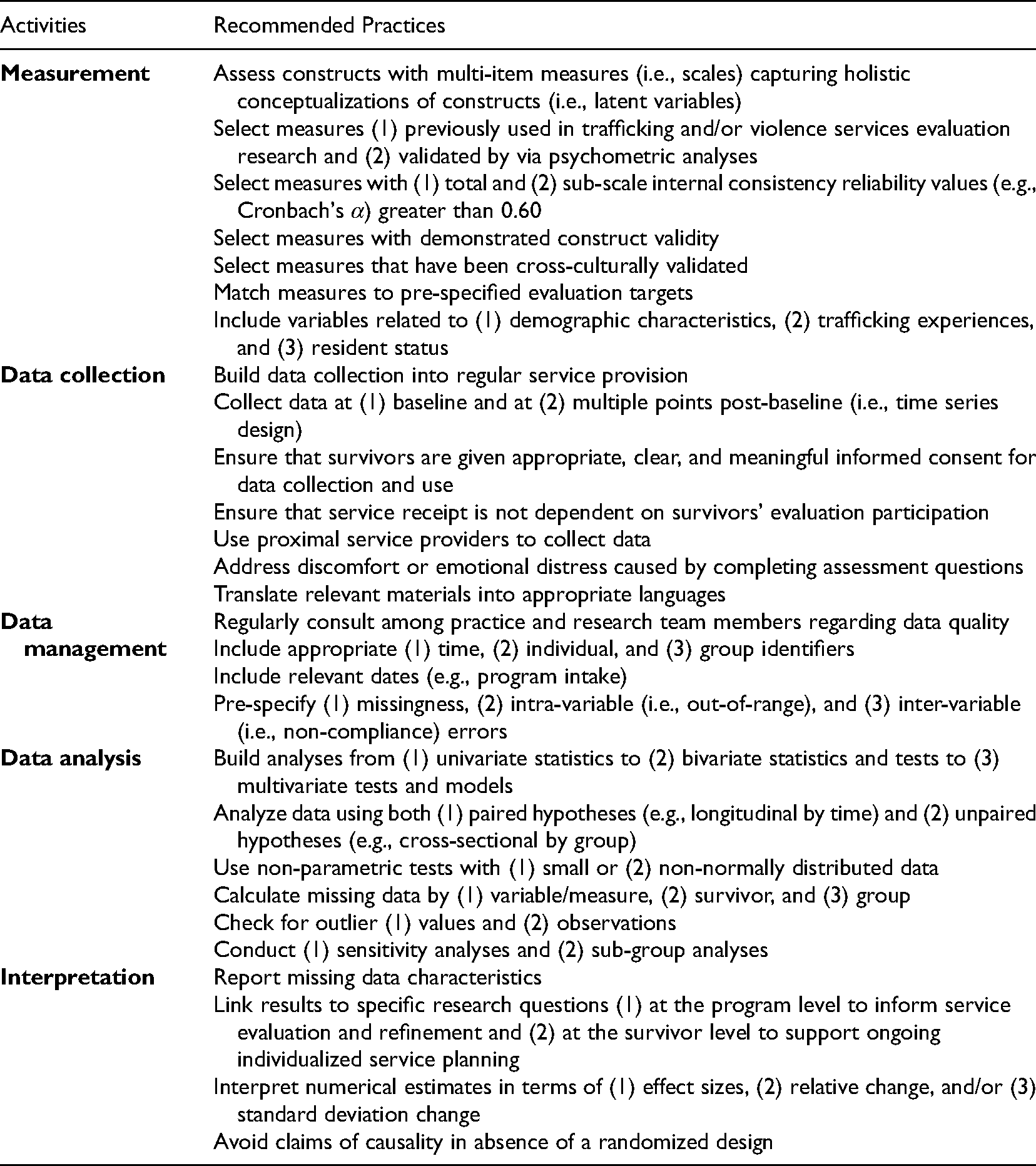

Five sets of evaluation practices were determined to define the evaluation protocol. Because Table 1 presents the overall domains and related recommendations in full, only key examples are briefly presented here: (a) measurement selection (e.g., whenever possible, select measures that have been cross-culturally validated); (b) data collection (e.g., build data collection into regular service provision); (c) data management (e.g., regularly consult among practice and research team members regarding data quality); (d) data analysis (e.g., use non-parametric tests with small or non-normally distributed data); and (e) interpretation of results (e.g., link results to specific research questions at the program level to inform service evaluation and refinement, and at the survivor level to support ongoing individualized service planning). Like the evaluation characteristics noted above, all five evaluation practice domains were included in the protocol and woven into all subsequent evaluation activities and products.

Anti-Trafficking Program Evaluation Recommendations—Activities and Practices.

Evaluation Targets

Overall, the team identified six evaluation target domains that were deemed potentially modifiable by the housing program given its existing theory of change, logic model, and service strategies: (a) physical and mental well-being, (b) stress and post-traumatic stress disorder (PTSD), (c) depression, (d) coping, (e) post-traumatic growth, and (f) employment and housing. These key constructs have also been used both in typical anti-trafficking service provision and in prior evaluations (Graham et al., 2019). In addition, as described above, the review determined that 34 identified measures have been used in extant evaluations of anti-trafficking services (Graham et al., 2019). Accordingly, while favoring measures previously used in HT research as much as possible, the team selected measures for the evaluation target domains that had shown reliability and validity, had been cross-culturally validated, and captured holistic conceptualizations of the evaluation outcomes whenever possible.

Aim Two Methods—Feasibility Trial

Guided by the protocol, the team conducted the feasibility trial, which occurred from 2018 to 2019 and was conducted under the same IRB auspices as the evaluation development.

Participants

The feasibility trial was pre-specified to include all 33 residents enrolled in Restore NYC's transitional housing program who entered the program from March 2014 to October 2019. Residents were adult, self-identifying women, primarily those with either pre-certification or certification in accordance with the Trafficking Victims Protection Act (TVPA; 2000), which allows adult HT survivors who are not US citizens or Lawful Permanent Residents to receive federal and state benefits to the same extent as refugees. Importantly, Restore NYC's program features (a) rolling admissions, such that participants begin the program whenever they are referred, and (b) varying lengths of stay, as participants are allowed to exit when they choose.

Data Collection

To ensure that the program tailors services to residents’ needs, transitional home staff have collected data on residents on a quarterly basis since the program began. Consequently, data collection for the feasibility trial occurred by adapting Restore NYC's existing assessments and practices. Consistent with typical program practices, assessments included (a) a comprehensive intake assessment at program entry and (b) regular 3-month follow-up assessments throughout the entirety of a survivor's time in the program, which may be up to 18 months.

All assessments were conducted by trained Restore NYC staff in private settings, with attention to confidentiality, and in residents’ first language. Restore NYC has translated the assessments into multiple languages so that they can be conducted in survivors’ preferred languages. Restore NYC also has bicultural and bilingual staff who can address most survivors’ language preferences, and when necessary, Restore NYC engages interpreters to assist in administering the assessments. Restore NYC staff report that the assessments typically took 15 to 30 minutes to complete.

Prior to any assessments and data collection, Restore NYC staff review new residents’ rights with them via a standardized Notice of Clients’ Rights document and discuss informed consent via a standardized Informed Consent document. Survivors are informed that they may withdraw from any assessment and/or decline to answer any specific assessment question without any consequences for their services and/or program participation.

After conducting the assessments, staff entered data into a secure, cloud-based case-management database using Apricot (Social Solutions, Austin, TX). Also, as noted earlier, a post-assessment feedback form was implemented for survivor residents to share their experiences with the assessments.

Measures

Guided by the evaluation protocol (i.e., aim one), the trial assessments comprised standardized instruments to enable a holistic evaluation of residents’ needs. Moreover, the assessment instruments were developed and selected to provide meaningful information about changes in survivors’ needs, improvements, and outcomes over time at both the (a) survivor level, to inform ongoing service planning with individual survivors, and (b) program level, to inform program evaluation in the six evaluation target domains determined during protocol development (i.e., physical and mental well-being, stress and PTSD, depression, coping, post-traumatic growth, and employment and housing).

Resident characteristics

The feasibility trial collected information related to residents’ (a) demographic (e.g., age, gender identity), (b) immigration (i.e., documentation status), and (c) employment (i.e., current employment status) characteristics at intake/entry to the program to characterize the sample.

Well-being

Six validated instruments were used to collect information on well-being. Generalized well-being was assessed using the Short-Form Health Survey (SF-12; Ware et al., 1996), a 12-item scale measuring well-being via two primary subscales for (a) physical well-being and (b) mental well-being, each ranging from 0 to 100. Within these subscales are eight domains: (a) “Physical functioning,” (b) “Role-physical,” (c) “Bodily pain,” (d) “General health,” (e) “Mental health,” (f) “Role-emotional,” (g) “Social functioning,” and (h) “Vitality.” The SF-12 has two versions with different recall periods, asking respondents to consider either (1) the past week or (2) the past month when answering questions (Ware et al., 2007). The one-month recall version was used in this evaluation.

Stress was measured with the Perceived Stress Scale (PSS; Cohen et al., 1983), a 14-item scale of individuals’ perception of the degree to which situations in their life are stressful. Scores range from 0 to 40, with scores ≥ 20 indicating a “high” level of stress.

Post-traumatic stress was assessed with a measure pegged to the Diagnostic and Statistical Manual of Mental Disorders (DSM) titled the PTSD Checklist for DSM-5 (PCL-5; Blevins et al., 2015). This is a 20-item scale of post-traumatic stress with a score range of 0 to 80. A score ≥ 38 indicates the potential for a formal diagnosis of PTSD.

Depression was assessed using the Center for Epidemiologic Studies Depression Scale (CES-D; Eaton et al., 1977; Radloff, 1977), a 20-item scale pegged to the DSM-5 that measures depressive symptoms in the past week, with scores ranging from 0 to 60. A score ≥ 16 indicates a potential clinical level of depression.
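As an illustration of how a total score and the ≥ 16 screening threshold might be computed, the minimal Python sketch below scores the CES-D. It assumes complete responses coded 0 to 3 in instrument order, with the four positively worded items (4, 8, 12, 16) reverse-scored as in standard scoring of the original Radloff item set; the reverse-scored set should be checked against the version actually administered. The function and constant names are hypothetical, and handling of declined items is left out.

```python
# Hypothetical CES-D scoring helper (not the authors' code).
# Assumes the original 20-item CES-D with responses coded 0-3 in item order;
# the positively worded items 4, 8, 12, and 16 are reverse-scored.
CESD_REVERSED_ITEMS = frozenset({4, 8, 12, 16})
CESD_CUTOFF = 16  # a score >= 16 suggests a potential clinical level of depression


def score_cesd(responses):
    """Return (total score 0-60, at_or_above_cutoff) for 20 complete responses."""
    if len(responses) != 20:
        raise ValueError("the CES-D requires exactly 20 item responses")
    total = 0
    for item_number, value in enumerate(responses, start=1):
        if value not in (0, 1, 2, 3):
            raise ValueError(f"item {item_number}: responses must be coded 0-3")
        # Reverse-score positively worded items so higher always means more symptoms.
        total += 3 - value if item_number in CESD_REVERSED_ITEMS else value
    return total, total >= CESD_CUTOFF


# Example: every item answered "1" gives 16 * 1 + 4 * 2 = 24, above the cutoff.
print(score_cesd([1] * 20))  # (24, True)
```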

Coping was assessed using the Brief COPE (Carver, 1997), a 28-item scale of coping styles comprising two broad subscales for adaptive (e.g., reframing) and maladaptive (e.g., denial) coping strategies. Within these subscales are 14 domains: (a) “Active coping,” (b) “Emotional support,” (c) “Instrumental support,” (d) “Positive reframing,” (e) “Planning,” (f) “Humor,” (g) “Acceptance,” (h) “Religion,” (i) “Self-distraction,” (j) “Denial,” (k) “Disengagement,” (l) “Venting,” (m) “Self-blame,” and (n) “Substance use.”

Post-traumatic growth was assessed using the Post-Traumatic Growth Inventory (PTGI; Tedeschi & Calhoun, 1996), a 21-item scale measuring positive changes experienced in the aftermath of a traumatic event, such as trafficking, with total scores ranging from 0 to 105. Within the total measure are five subscales: (a) “Appreciation of life,” (b) “New possibilities,” (c) “Personal strength,” (d) “Spiritual change,” and (e) “Relating to others.”
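A similar sketch for the PTGI sums 21 responses coded 0 to 5 into the five subscales and the total (0 to 105). The item-to-subscale mapping below follows the assignment commonly reported for Tedeschi and Calhoun's (1996) inventory, but it is an assumption here and should be verified against the instrument version administered; all names are hypothetical.

```python
# Hypothetical PTGI scoring helper (not the authors' code).
# Assumes 21 complete responses coded 0-5 in item order. The item-to-subscale
# mapping is the commonly reported one and should be checked against the
# version of the instrument actually administered.
PTGI_SUBSCALES = {
    "Relating to others": (6, 8, 9, 15, 16, 20, 21),
    "New possibilities": (3, 7, 11, 14, 17),
    "Personal strength": (4, 10, 12, 19),
    "Spiritual change": (5, 18),
    "Appreciation of life": (1, 2, 13),
}


def score_ptgi(responses):
    """Return a dict of subscale sums plus the total score (0-105)."""
    if len(responses) != 21:
        raise ValueError("the PTGI requires exactly 21 item responses")
    if any(value not in range(6) for value in responses):
        raise ValueError("responses must be coded 0-5")
    scores = {
        name: sum(responses[item - 1] for item in items)  # items are 1-indexed
        for name, items in PTGI_SUBSCALES.items()
    }
    scores["Total"] = sum(responses)
    return scores


print(score_ptgi([5] * 21)["Total"])  # 105
```

Because every item belongs to exactly one subscale under this mapping, the five subscale sums add up to the total.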

Employment and housing

Two categorical measures were used to assess changes in survivors’ employment and housing: (a) a measure of employment (e.g., “Are you currently employed?” No = 0, Yes = 1) and (b) a measure of housing (e.g., “What is your current housing status?” Homeless = 0, Supportive housing = 1, Community dwelling = 3). Restore NYC identified both measures as feasible to collect and potentially subject to change by the housing program.

Data Analysis

The outcome analysis focused on descriptive univariate characterization of residents’ outcomes at intake, at the 3-, 6-, 9-, and 12-month intervals, and at case closing when a resident exits the home (which occurred at varying points up to 18 months), as well as on the calculation of outcome change over time. Change was calculated as relative change percentage (Δ%) using the formula ((post-intake score − intake score)/intake score) × 100 for each post-intake interval. The 3-, 6-, 9-, and 12-month comparisons were conducted on pooled resident data compared with pooled intake data, whereas the case-closing comparison was restricted to the subset of residents with both intake and closing data. Relative change, which expresses the percentage by which a score changed from its intake value, was used so that resident change across the 35 scale and subscale measures could be standardized despite the variability in the measures’ ranges.
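The relative change calculation described above can be sketched in Python as follows. This is a minimal illustration, not the team's Stata code: it assumes that a pooled comparison contrasts the mean of all available scores at a period with the mean of all available intake scores, and that the closing comparison first restricts both means to residents with data at both time points. The function names and example scores are hypothetical.

```python
from statistics import mean


def relative_change(intake_value, later_value):
    """Relative change percentage: ((later - intake) / intake) * 100."""
    if intake_value == 0:
        raise ValueError("relative change is undefined when the intake value is 0")
    return (later_value - intake_value) / intake_value * 100


def pooled_relative_change(intake_scores, period_scores):
    """Unmatched comparison: mean of all period scores vs. mean of all intake scores."""
    return relative_change(mean(intake_scores), mean(period_scores))


def matched_relative_change(intake_by_resident, closing_by_resident):
    """Matched comparison restricted to residents with both intake and closing scores."""
    shared = sorted(intake_by_resident.keys() & closing_by_resident.keys())
    if not shared:
        raise ValueError("no residents have both intake and closing scores")
    return relative_change(
        mean(intake_by_resident[r] for r in shared),
        mean(closing_by_resident[r] for r in shared),
    )


# Hypothetical scores for illustration only (not study data).
print(pooled_relative_change([10, 20, 30], [25, 25]))  # 25.0
# Residents r3 (no closing score) and r4 (no intake score) are excluded.
print(matched_relative_change({"r1": 10, "r2": 30, "r3": 40},
                              {"r1": 15, "r2": 15, "r4": 99}))  # -25.0
```

For measures where lower scores are desirable (e.g., PSS, PCL-5, CES-D), a negative Δ% indicates improvement; Δ% is undefined when the intake value is 0.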

Also, because the above scales have not been widely used with HT survivors, internal consistency reliability was estimated for each assessment period using Guttman's λ2, with estimates of λ2 ≥ 0.70 sought as evidence of acceptable reliability within this population. Because of the small sample of residents, bivariate and multivariate tests comparing residents’ outcomes across time (e.g., McNemar's tests, multilevel growth curve models) were not conducted in this feasibility trial; consequently, no tests of statistical significance are reported. All analyses were conducted by the research team using Stata 16.1 (StataCorp, College Station, TX).
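Guttman's λ2 can be computed directly from the item covariance matrix. With k items, item covariances c_ij, and total-score variance σ²_X (the sum of all entries of the covariance matrix), λ1 = 1 − Σ c_ii/σ²_X and λ2 = λ1 + √(k/(k − 1) · Σ_{i≠j} c²_ij)/σ²_X. The pure-Python sketch below implements that formula for one assessment period, assuming complete cases (respondents with any missing item dropped beforehand); it is an illustration, not the team's Stata procedure, and the names are hypothetical.

```python
from math import sqrt


def _sample_covariance(x, y):
    """Sample covariance (n - 1 denominator); the divisor cancels out of lambda-2."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    return sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / (n - 1)


def guttman_lambda2(item_scores):
    """Guttman's lambda-2; rows are respondents, columns are items (complete cases)."""
    if len(item_scores) < 2:
        raise ValueError("need at least two respondents")
    k = len(item_scores[0])
    if k < 2:
        raise ValueError("need at least two items")
    columns = list(zip(*item_scores))
    cov = [[_sample_covariance(columns[i], columns[j]) for j in range(k)] for i in range(k)]
    total_variance = sum(sum(row) for row in cov)  # variance of the summed scale score
    if total_variance == 0:
        raise ValueError("total-score variance is zero")
    item_variance_sum = sum(cov[i][i] for i in range(k))
    off_diagonal_sq = sum(cov[i][j] ** 2 for i in range(k) for j in range(k) if i != j)
    lambda1 = 1 - item_variance_sum / total_variance
    return lambda1 + sqrt(k / (k - 1) * off_diagonal_sq) / total_variance


# Hypothetical data: three perfectly consistent items give lambda-2 of 1.0.
print(round(guttman_lambda2([[1, 1, 1], [2, 2, 2], [4, 4, 4]]), 6))  # 1.0
```

A period-level estimate would then be compared against the 0.70 benchmark; with uncorrelated items, λ2 falls to 0.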

Aim Two Results—Feasibility Trial

Participant Characteristics

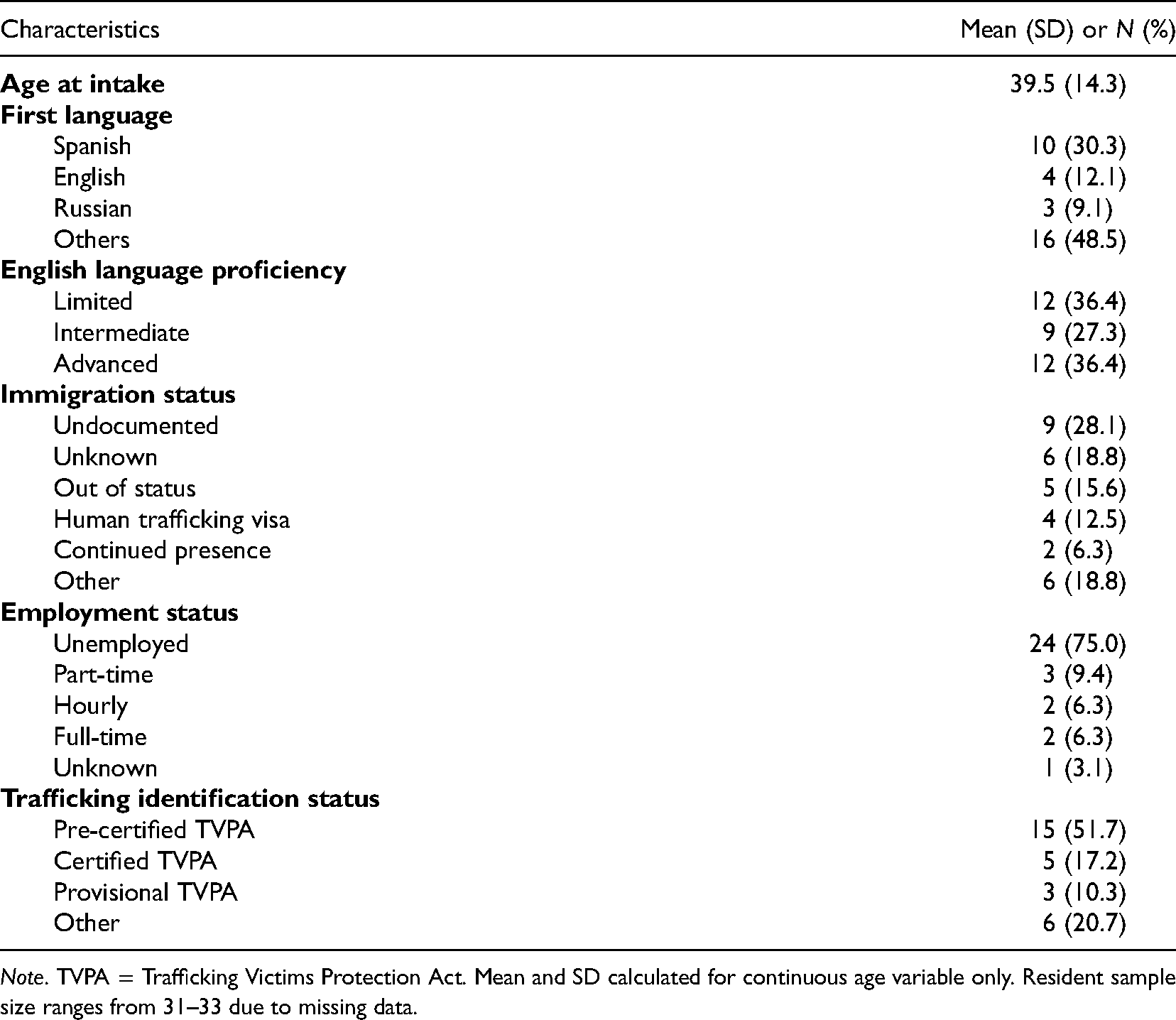

Table 2 shows survivor characteristics at program intake. All residents were self-identifying women, consistent with the program model, with a mean age of almost 40 years. Residents came from 23 different countries of origin, mostly in East Asia and Latin America, and spoke 18 different languages. Almost two-thirds (64%) had limited or intermediate English language skills. At program entry, only about 19% had an immigration status that enabled them to work and/or receive federal benefits (e.g., human trafficking visa, lawful permanent resident status). In addition, over 90% were working less than full-time, and 75% were unemployed. In terms of Victims of Trafficking and Violence Protection Act (TVPA) certification¹, most residents (79%) were certified, pre-certified, or provisionally certified.

Anti-Human Trafficking Housing Program Evaluation—Survivor Intake Demographic Characteristics (N = 33).

Note. TVPA = Trafficking Victims Protection Act. Mean and SD calculated for the continuous age variable only. Resident sample size ranges from 31 to 33 due to missing data.

Well-Being

The number of available observations for each well-being outcome varied at intake because Restore NYC does not make data collection a condition of service delivery. Among the 32 residents with any intake data, intake observations numbered 22 (69%) for the PCL-5, 23 (72%) for the SF-12, 29 (91%) for the PTGI, 30 (94%) for the CES-D and PSS, and 32 (100%) for the Brief COPE. Completeness tended to be higher at the post-intake intervals: 22–24 observations (88–96%) among the 25 residents with 3-month data, 23–24 (96–100%) among the 24 residents with 6-month data, 22–24 (92–100%) among the 24 residents with 9-month data, and 21 (100%) among the 21 residents with 12-month data. In total, 24 residents had any closing data, with 16 to 18 well-being observations per variable.
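The completeness percentages above are simple ratios of observed scores to the residents with any data at that period. A minimal Python sketch using the intake counts reported above (the function and variable names are hypothetical):

```python
def completeness(observed: int, eligible: int) -> int:
    """Percent of eligible residents with an observed score, rounded to a whole percent.

    Note: Python's round() sends exact halves to the nearest even integer
    (e.g., 12.5 -> 12), which can differ from rounding conventions in other software.
    """
    if eligible <= 0:
        raise ValueError("eligible must be a positive count")
    return round(100 * observed / eligible)


# Intake observation counts reported above, out of 32 residents with any intake data.
intake_counts = {"PCL-5": 22, "SF-12": 23, "PTGI": 29, "CES-D": 30, "PSS": 30, "Brief COPE": 32}
intake_completeness = {measure: completeness(n, 32) for measure, n in intake_counts.items()}
print(intake_completeness)
```

Using the residents with any data at each period as the denominator, rather than the full sample, is what makes the post-intake percentages look higher even when the raw counts are similar.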

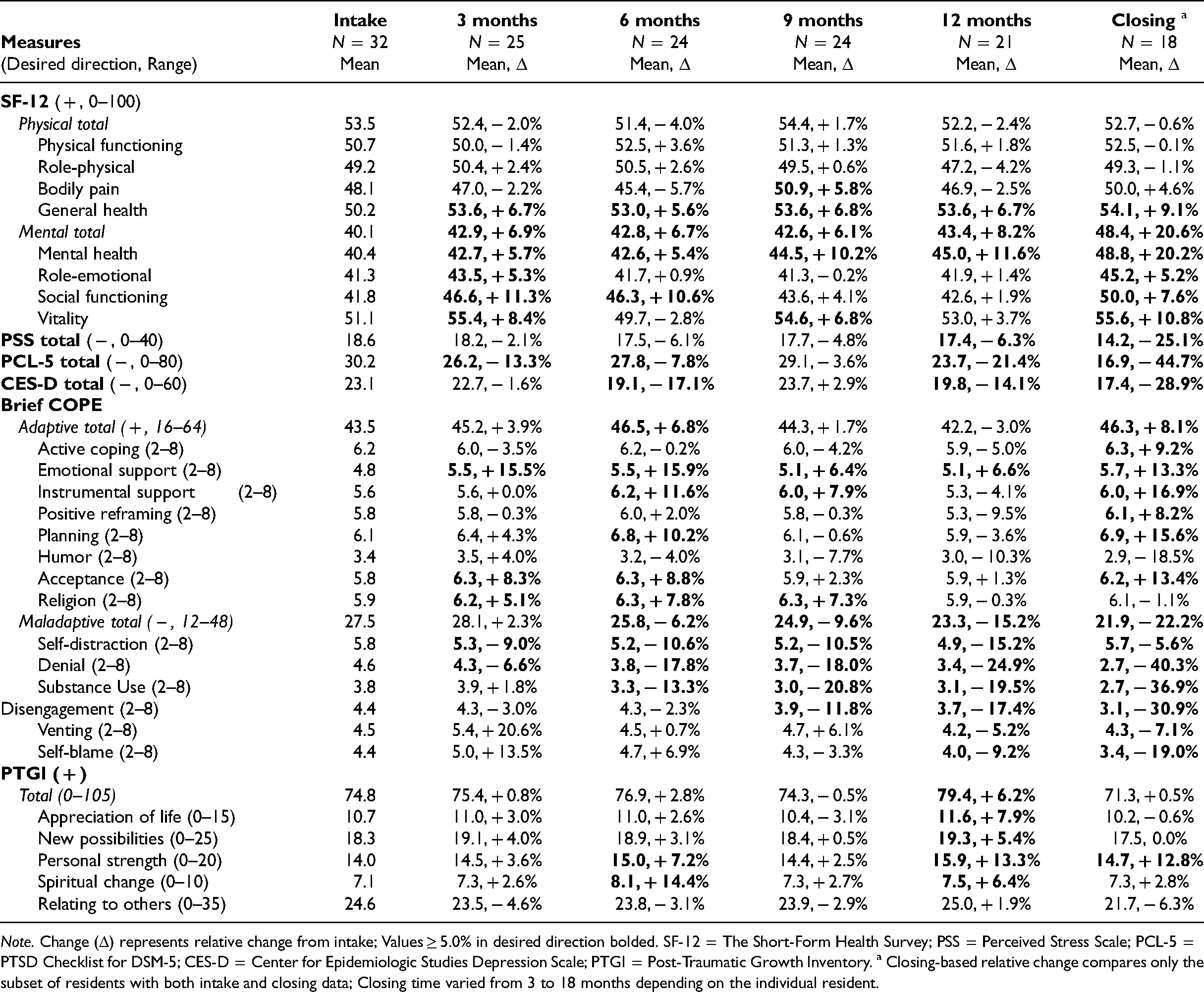

Residents’ mean well-being scores and relative change percentages are presented in Table 3. The primary comparisons are at the 3-, 6-, 9-, and 12-month periods, where pooled resident data are compared; a secondary comparison is made at case closing on matched data only.

Anti-Human Trafficking Housing Program Evaluation—Preliminary Survivor Well-Being Outcomes, by Measure and Period.

Note. Change (Δ) represents relative change from intake; Values ≥ 5.0% in desired direction bolded. SF-12 = The Short-Form Health Survey; PSS = Perceived Stress Scale; PCL-5 = PTSD Checklist for DSM-5; CES-D = Center for Epidemiologic Studies Depression Scale; PTGI = Post-Traumatic Growth Inventory. a Closing-based relative change compares only the subset of residents with both intake and closing data; Closing time varied from 3 to 18 months depending on the individual resident.

For the SF-12, residents showed consistent improvements in the desired direction on “General health” (≥ +6%), “Mental total” (≥ +6%), “Mental health” (≥ +5%), and “Social functioning” (≥ +2%) at 3, 6, 9, and 12 months. Internal consistency was acceptable for nine of the 10 SF-12 measures across intake and the four primary time periods (λ2 range: 0.78–0.91); only “Bodily pain” was low (λ2 = 0.59).

For the PSS, all post-intake scores changed consistently in the desired direction (≤ −6%), and internal consistency across 3, 6, 9, and 12 months was good (λ2 = 0.84).

For the PCL-5, all post-intake scores were consistently lower than at intake (≤ −21%), the desired direction, with good internal consistency across the same periods (λ2 = 0.82).

The CES-D did not show consistently desired change, but residents’ mean scores at 6 months (−17%) and 12 months (−14%) were notably lower than at intake, suggesting notable decreases in depressive symptoms. The change in CES-D scores at closing was also notably large (−29%). Internal consistency of the CES-D across the four primary time periods was acceptable (λ2 = 0.76).

For the Brief COPE, residents showed consistent changes in the desired direction on “Emotional support” (≥ +6%), “Self-distraction” (≤ −6%), “Denial” (≤ −7%), and “Disengagement” (≤ −2%). Internal consistency was acceptable for 13 of the 16 Brief COPE measures across time (λ2 range: 0.70–0.92); only “Planning” (λ2 = 0.66), “Self-distraction” (λ2 = 0.65), and “Venting” (λ2 = 0.56) were low.

Finally, for the PTGI, residents showed consistent improvements in the desired direction on “Personal strength” (≥ +3%) and “Spiritual change” (≥ +3%). Internal consistency was acceptable for all six PTGI measures across time periods (λ2 range: 0.83–0.93).

Also, in Table 3, any change at any post-intake time that was ≥ 5.0% in the desired direction has been highlighted. These changes represent potential small-to-large improvements in residents’ outcomes over the course of their participation in the program. Across all 35 measures and 4 post-intake intervals, 63 (45%) of the 35 × 4 = 140 possible primary post-intake scores changed by ≥ 5.0% in the desired direction. Changes of ≥ 5.0% in an undesirable direction (not shown) accounted for just 9 (6%) of these scores. Secondarily, the case closing comparison, restricted to the subset of residents with paired intake and closing data, found that 24 (68.6%) of the 35 outcomes changed by ≥ 5.0% in the desired direction.
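The tally above follows a simple rule: a change is flagged when its magnitude is at least 5.0% and its sign matches the measure's desired direction. The Python sketch below is hypothetical; the direction codes and example values are illustrative and are not the Table 3 data, which are not reproduced here.

```python
HIGHER_IS_BETTER = +1  # e.g., PTGI subscales
LOWER_IS_BETTER = -1   # e.g., PSS, PCL-5, CES-D


def classify_change(delta_pct: float, desired_direction: int, threshold: float = 5.0) -> str:
    """Return 'desired', 'undesired', or 'small' for one relative change."""
    if abs(delta_pct) < threshold:
        return "small"
    return "desired" if delta_pct * desired_direction > 0 else "undesired"


def tally(changes):
    """Count and percentage of each label over (delta_pct, desired_direction) pairs."""
    counts = {"desired": 0, "undesired": 0, "small": 0}
    for delta_pct, direction in changes:
        counts[classify_change(delta_pct, direction)] += 1
    total = sum(counts.values())
    if total == 0:
        raise ValueError("No changes to tally.")
    return {label: (n, round(100 * n / total, 1)) for label, n in counts.items()}


# Hypothetical measure-by-interval changes, not the Table 3 values.
example = [(-10.0, LOWER_IS_BETTER), (6.0, HIGHER_IS_BETTER),
           (7.0, LOWER_IS_BETTER), (2.0, HIGHER_IS_BETTER)]
print(tally(example))
```

As an arithmetic check on the reported figures, 63 of 140 is 45.0%, 9 of 140 is about 6.4%, and 24 of 35 is about 68.6%, consistent with the rounded percentages given above.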

Economic Empowerment

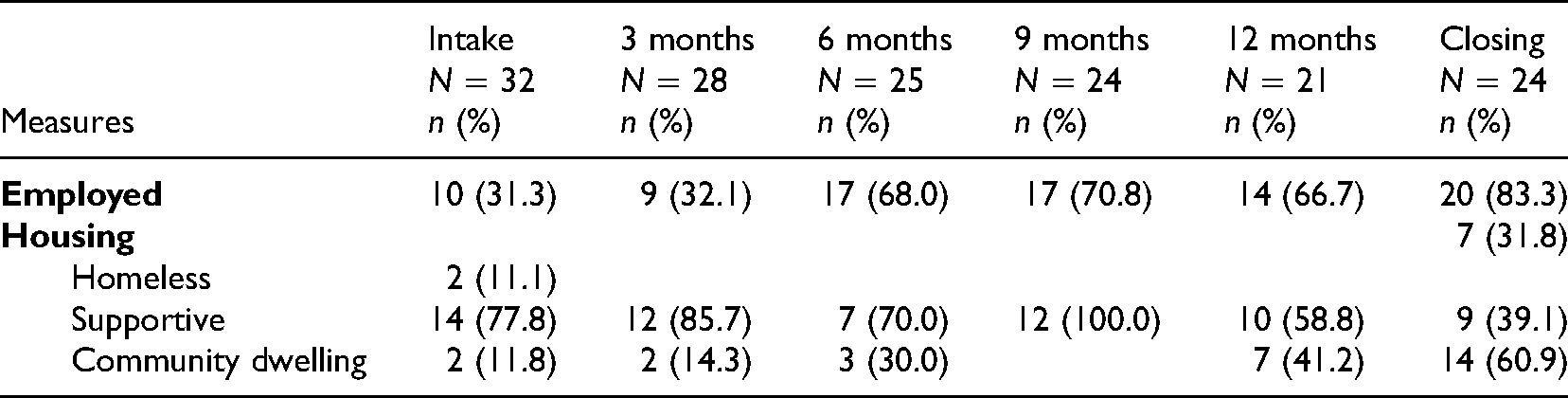

Table 4 shows that residents’ employment status improved over time, from 31% of residents employed at intake to 67% after 12 months and 83% at closing. In terms of housing, at 12 months nearly 59% were in some form of supportive housing and 41% were in a community dwelling; none were homeless. At closing, 61% were in a community dwelling, a 49-percentage-point increase from intake.
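The housing result is stated in absolute terms (percentage points), whereas the well-being results use relative change. The Python sketch below, with hypothetical function names, contrasts the two metrics for proportions.

```python
def percentage_point_change(pct_before: float, pct_after: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return pct_after - pct_before


def relative_change_pct(pct_before: float, pct_after: float) -> float:
    """Relative change between two percentages, as a percent of the starting value."""
    if pct_before == 0:
        raise ValueError("Relative change is undefined when the starting percentage is 0.")
    return (pct_after - pct_before) / pct_before * 100


# Employment figures reported above: 31% at intake, 67% at 12 months.
print(percentage_point_change(31, 67))        # 36 percentage points
print(round(relative_change_pct(31, 67), 1))  # about a 116% relative increase
```

Applied to the employment figures, the same change is 36 percentage points in absolute terms but about 116% in relative terms, so it matters which metric a report uses.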

Anti-Human Trafficking Housing Program Evaluation—Preliminary Survivor Employment and Housing Outcomes, by Measure and Period.

Discussion

Despite the growth of anti-trafficking services, increasing funding for such services, and growing evaluation efforts, the anti-trafficking field has a dearth of high-quality program evaluations (Davy, 2015; Dell et al., 2019; Krieger et al., 2020; Okech et al., 2018). Consequently, the field faces significant knowledge gaps, including whether services are working as intended and whether they are making positive differences for survivors. This situation is worrisome given that even well-intentioned programs can do harm, and that resources may be wasted if programs do not work as intended. Nonetheless, the lack of evaluations of anti-trafficking programs is understandable given the considerable challenges such efforts face, including the overall lack of guidance on how programs can best be evaluated (Krieger et al., 2020). To help respond to calls for developing evaluation capacity in the anti-trafficking field, this paper presented our team's efforts to develop (aim one) and feasibility test (aim two) a program evaluation protocol, including our decisions and strategies in conceptualizing, designing, and conducting the evaluation.

Limitations

Before reflecting on the research questions that guided our efforts, we encourage readers to be mindful of the study's limitations. Like many other anti-trafficking programs, Restore NYC aims to connect survivors residing in its housing program to key services (e.g., English instruction, job readiness training, legal services, physical and mental health services) via comprehensive case management. Nevertheless, this effort represents one evaluation of one program. Given the heterogeneity of anti-trafficking programs, the protocol, evaluation strategies, and recommendations generated here may have limited applicability to other programs, communities, or contexts. Also, the data collected herein are unique to this program and have important limitations due to participants' varying enrollment and participation periods. Likewise, the findings may have been shaped by the particular practitioner-researcher team that came together for this project. Even if the findings do not translate readily to other settings, we hope that the protocol and recommendations presented here, including those in Table 1, offer helpful strategies for future evaluations. Finally, this evaluation protocol did not include longitudinal follow-up after survivors exited the housing program, which is a limitation of our current effort and a recommendation for future ones.

Evaluation Protocol—Discussion and Implications

In response to our first research question (RQ1: Can an evaluation protocol be developed for an anti-trafficking housing program?), our team found that we were able to develop a meaningful protocol (i.e., aim one), which was guided by the activities and recommendations summarized in Table 1.

Here we note that the literature review efforts greatly informed our protocol. The extant evaluation literature, particularly the guidance on community-engaged, participatory, and formative evaluations, helpfully guided the protocol's development (e.g., Bowen et al., 2009; Leviton et al., 2010; Orsmond & Cohn, 2015). Likewise, the emerging evaluation literature on anti-trafficking programs was helpful (e.g., Davy, 2015; Gibbs et al., 2014; O’Callaghan et al., 2013). In particular, the review findings underscored that practitioner-researcher evaluation partnerships should be relationship-focused, grounded in mutual understanding, participation-promoting, and progress-monitoring. In addition, evaluations of anti-trafficking programs should be strengths-based, trauma-informed, culturally sensitive, and survivor-centered. Moreover, evaluation teams must ensure that: (a) measures capture holistic conceptualizations of evaluation outcomes; (b) data collection strategies are practical and respect regular service provision; (c) data management is active and regular and strives to enhance data quality; (d) data analysis is robust to the types of data typically collected in such evaluations (e.g., small samples, non-normally distributed data, and missingness); and (e) results are interpreted in ways that are meaningful for programs, practitioners, and survivors.

In addition to the value of the literature reviews for developing the protocol, we underscore the importance of gathering feedback from other practice leaders and providers, as well as from survivors. The feedback sessions with survivors, administrative leadership, and program staff enabled our team to receive pragmatic guidance from those who would be most affected by the data collection activities and who stood to benefit from the evaluation findings. Notably, survivor input at key points in the protocol's development and implementation (i.e., in both the feedback sessions and the post-assessment feedback forms) especially shaped the data collection activities and evaluation outcomes.

Feasibility Trial—Discussion and Implications

Overall, the feasibility trial findings (i.e., aim two) show that the second research question (RQ2: Can a feasibility evaluation trial be practically and successfully implemented among a sample of survivor service users?) and the third research question (RQ3: Can results inform practice with HT survivors in this program and setting?) were also answered affirmatively.

The trial showed that comprehensive data across key outcomes (i.e., physical and mental well-being, stress and PTSD, depression, coping, post-traumatic growth, employment, and housing) could be collected not only at program entry and program completion but also at intervals throughout service delivery. Notably, these data came from survivors of diverse national origins and primary languages, many of whom had limited English proficiency and uncertain immigration status.

Although the trial did not yield complete data for all survivors, enough data were collected for a meaningful, albeit preliminary, analysis. Likewise, to the best of our knowledge, this is one of only a few reports concerning anti-trafficking housing program evaluations, particularly one with many foreign-national survivors as participants. Accordingly, this trial adds to the preliminary, though growing, evaluation evidence base showing that, even in the context of a complex, dynamic service environment, comprehensive evaluation data can be collected from survivors with diverse backgrounds, experiences, and situations using standard survey instruments. The evaluation findings also suggest that such data can be useful in indicating changes in survivors’ outcomes over time. In turn, such findings hold implications for informing practice both at the individual level (i.e., informing survivors’ individual service plans) and at the program level (i.e., guiding changes to service content, delivery, and strategies for the program overall).

In considering recommendations for future evaluations, we reflect that the feasibility of the evaluation may have been helped by: (a) an emphasis on survivors’ capacities and resources, as well as their needs; (b) responsiveness to survivors regardless of their backgrounds, languages, and situations; and (c) efforts to put survivors’ preferences and recommendations for data collection at the forefront.

In addition, as our team worked together over the course of developing and implementing the evaluation, we recognized the importance of our practitioner-researcher collaboration, in that both sides brought critical expertise and capacities to the partnership (Ammerman et al., 2014). The practitioners brought deep knowledge of survivors’ service needs and research preferences, as well as expertise concerning data collection strategies that could be successfully implemented in the context of typical service delivery. The researchers brought expertise in data organization and management, data analysis strategies, and the interpretation of statistical findings. Likewise, both sides of the partnership brought organizational resources that augmented the project. For example, the practitioners ensured that the evaluation protocol was survivor-centered by including people with lived experience, both program staff and program participants, in the feedback groups. The researchers augmented the project by including students on the team to support the evaluation at no cost. Also, project protocols were independently reviewed for human research protections by the research team's university-based IRB.

Recommendations for the Future and Conclusion

Although some robust evaluations have been carried out in anti-trafficking programs, including longitudinal data collection over the course of service provision (e.g., Munsey et al., 2018; Rothman et al., 2020) and randomized studies (e.g., O’Callaghan et al., 2013), the use of such research methods is still rare in the anti-trafficking field. Accordingly, and considering calls to develop greater evaluation capacity (Davy, 2015; Dell et al., 2019; Krieger et al., 2020; Okech et al., 2018), our team sought to document the decisions and strategies related to conceptualizing, designing, and conducting a longitudinal program evaluation of an anti-trafficking housing program. Although this paper offers only one protocol concerned with one program, we hope that other evaluation teams will develop and share their protocols so that the entire anti-trafficking field can benefit from the lessons learned by a growing number of projects.

Also, for the future, we recommend that practice, policy, and research leaders in the anti-trafficking field promote practice-research partnerships in evaluation, as well as evaluation and data capacities within organizations that serve HT survivors. If growing numbers of anti-trafficking programs were able to undertake evaluations in coordinated ways, the field could develop multi-site data and evaluation efforts, which, in combination, could support rigorous quasi-experimental studies with samples large enough to be adequately powered to detect statistical effects on key outcomes. Moreover, such multi-site efforts would be community-based, ecologically valid, and firmly grounded in practice.

While a multi-site data and evaluation project may not be feasible in the short term, this study adds to others in showing that comprehensive, longitudinal program evaluation can be carried out successfully in the context of dynamic anti-trafficking service provision. Likewise, this study suggests that the current situation of limited program evaluation is no longer adequate. Accordingly, we hope that the findings and recommendations from this study help offer a long-term vision for the potential of anti-trafficking program evaluation. In the short term, we also hope that this paper will encourage practitioners, researchers, and survivor-leaders to partner together to develop and carry out evaluations in their own communities and programs.

Footnotes

Acknowledgments

The authors wish to thank all the survivors who gave their feedback and data to facilitate this project. The authors also acknowledge and thank Kristin Weschler, Victim Justice Program Specialist at the Office for Victims of Crime, U.S. Department of Justice, for her review of and feedback on an earlier version of this manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Office for Victims of Crime, Office of Justice Programs, U.S. Department of Justice (grant number #2016-VT-BX-K057). The opinions, findings, and conclusions or recommendations expressed in this product are those of the contributors and do not necessarily represent the official position or policies of the U.S. Department of Justice. Additional support for the development of this manuscript was provided by the L. Richardson Preyer Distinguished Chair for Strengthening Families fund of the School of Social Work at the University of North Carolina at Chapel Hill.