Abstract

Implementation fidelity is the degree of compliance with which the core elements of program or intervention practices are used as intended. The scientific literature reveals gaps in defining and assessing implementation fidelity in early intervention: lack of common definitions and conceptual framework as well as their lack of application. Through a critical review of the scientific literature, this article aims to identify information that can be used to develop a common language and guidelines for assessing implementation fidelity. An analysis of 46 theoretical and empirical papers about early intervention implementation, published between 1998 and 2018, identified four conceptual frameworks, in addition to that of Dane and Schneider. Following analysis of the conceptual frameworks, a four-component conceptualization of implementation fidelity (adherence, dosage, quality and participant responsiveness) is proposed.

Documenting and understanding the implementation processes of program or intervention practices (PIP) through fidelity assessment is useful for improving and strengthening both research and practice (Lloyd et al., 2013). Fidelity assessment is a process used to collect data to document the extent to which PIP are implemented as intended (Dane & Schneider, 1998; Hulleman et al., 2013; Snyder et al., 2013). The need to conduct this assessment process has been established (Bond & Drake, 2019) by a number of early intervention researchers (Guo et al., 2016; Knoche et al., 2010; Luze & Peterson, 2004; Thomas & Marvin, 2016).

First, it is important to assess implementation fidelity to determine whether practitioners implement PIP faithfully as intended by their developers (Century et al., 2010; Dane & Schneider, 1998). This evaluative process makes it possible to extract information about how practitioners use new PIP (Dusenbury et al., 2003). This information is important to understand the challenges or facilitating factors practitioners encounter regarding implementation fidelity of PIP (Dusenbury et al., 2003; Knoche et al., 2010). Moreover, when implementation fidelity is evaluated during the process, the data collected allow for adjustments to continuously improve utilization of the PIP (Fixsen et al., 2013; Franks & Schroeder, 2013; Luze & Peterson, 2004). These data on implementation as planned are useful to determine practitioners’ training needs based on the difficulties encountered (Ledford & Wolery, 2013).

Assessing implementation fidelity can also shed light on the reasons and factors that could explain the presence or absence of intervention effects (Dusenbury et al., 2003). For Dusenbury et al., fidelity assessment can identify, among other things, changes in the use of PIP and their impacts on the effects. This information is necessary, given the documented links between high degrees of intervention fidelity and positive effects of an intervention (Durlak & DuPre, 2008; Guo et al., 2016; Hamre et al., 2010; Ruble et al., 2013).

Therefore, assessing the implementation fidelity of PIP in an intervention setting is considered essential (Durlak, 2015; Knoche et al., 2010). However, too few studies in the field of early childhood education in general, and early intervention specifically, focus on fidelity of implementation (Knoche et al., 2010). Those that do only provide partial information (Caron et al., 2017; Sanetti et al., 2011). The data collected do not cover all components of fidelity (Sutherland et al., 2013). Research mainly documents quantity rather than the quality of interventions (Domitrovich et al., 2010; Sanetti et al., 2011).

This shortcoming may stem from gaps identified in the scientific literature with regard to guidelines for implementing and assessing the implementation fidelity of PIP. These gaps include the lack of clearly defined conceptual frameworks and their limited use (Century et al., 2010; Durlak & DuPre, 2008; Sutherland et al., 2013). Nevertheless, as noted by Caron et al. (2017), Dane and Schneider’s (1998) conceptual framework is the one most frequently used to document fidelity of PIP implementation.

Dane and Schneider’s Conceptual Framework

For Dane and Schneider (1998), program integrity is “the degree to which specified program procedures are implemented as planned” (p. 44). Dane and Schneider identify different factors and components of fidelity to consider for effective implementation. In terms of factors, it is important that PIP be consistent with the needs of the intervention setting. PIP must also be clearly explained in reference materials. Furthermore, training and supervision must make it possible to reduce resistance to change while promoting the acquisition of new practices. In a literature review of studies that examine the evaluation of fidelity of implementation, Dane and Schneider retain five components. Their conceptual framework (1998, p. 45) defines these five components as follows: adherence (the extent to which specified program components are delivered as prescribed), exposure (number, frequency, or length of interventions), quality (implementor enthusiasm; perception of effectiveness…), participant responsiveness (participant response to program sessions: levels of participation and enthusiasm), and program differentiation (manipulation check performed to safeguard against the diffusion of treatments, i.e., to ensure that the subjects in each experimental condition received only planned interventions).

Over the years, authors from various fields have used Dane and Schneider’s (1998) conceptual framework to assess fidelity of implementation (Paquette et al., 2009) or to conduct literature reviews on this subject (Durlak & DuPre, 2008; Dusenbury et al., 2003; O’Donnell, 2008). However, in the past decade, the lack of consensus on a definition of fidelity of implementation and its components was raised again by various authors (Century et al., 2010; Durlak & DuPre, 2008; Sutherland et al., 2013). For those reasons, Century et al. (2010) developed a new conceptual framework that can be used to assess fidelity of implementation in various areas of intervention.

Century et al.’s Conceptual Framework

Century et al. (2010) define fidelity as, “the extent to which an enacted program is consistent with the intended program model” (p. 202). Two main categories of components form their conceptual framework: (1) structural and (2) instructional. The structural components are procedural (process to follow, how often and for how long) and educative (theoretical knowledge necessary to implement new practices). Therefore, the procedural and educative components depend on professional development. As Century et al. point out, the procedural component is similar to Dane and Schneider’s (1998) exposure component. The instructional components are pedagogical (intervention strategies based on a theoretical ideal as well as interactions between teacher and student) and student engagement (participant responsiveness).

It should be noted that various aspects of Century et al.’s (2010) framework differ from those of Dane and Schneider (1998). They consider adherence, as defined by Dane and Schneider, and fidelity as synonymous. For this reason, they do not view adherence as a component of fidelity. Dane and Schneider then include practitioners’ enthusiasm, attitudes, and perceptions of the intervention’s effectiveness in the quality component. Century et al. perceive these elements as moderating variables that can influence fidelity and that should be taken into account. According to Century et al., these elements do not define the fidelity component. Similarly, education levels and years of experience of stakeholders should also be moderating variables to consider. Also, Century et al. view differentiation as a method for distinguishing the presence or absence of an intervention or for differentiating two types of practices. In this perspective, they do not consider it a fidelity component, and therefore, differentiation is outside their conceptual framework.

Despite Century et al.’s (2010) contribution, to our knowledge, their conceptual framework has not yet been used as a guideline to evaluate PIP implementation in early intervention. In fact, in the field of early intervention, the lack of a conceptual framework for assessing fidelity of implementation that takes contextual factors into account remains a concern (Dunst et al., 2013; Halle, Zaslow, et al., 2013; Sutherland et al., 2013). Several authors (Century et al., 2010; Franks & Schroeder, 2013; Halle, Metz, & Martinez-Beck, 2013) raise the need for a common language and conceptual framework to guide assessments of implementation fidelity of PIP in early intervention.

For all these reasons, reviewing and assessing implementation fidelity frameworks are important for evaluation practitioners. The purpose of this study is to compare fidelity implementation frameworks. The field of early intervention is used as the context for the analysis. Dane and Schneider’s (1998) and Century et al.’s (2010) conceptual frameworks serve as points of comparison for the analysis.

The research questions posed in this article are as follows: (1) What are the different conceptual frameworks used to guide implementation and assess fidelity of implementation in early intervention? (2) What proportion of theoretical and empirical articles describe one or more conceptual frameworks? (3) What definitions of fidelity of implementation are associated with each conceptual framework? and (4) What points of convergence and elements differ from Dane and Schneider’s (1998) and Century et al.’s (2010) frameworks, for each conceptual framework targeted in the literature review?

Method

Search for Articles

A literature review was conducted using the Education Resources Information Center (ERIC), PsycINFO, and MEDLINE databases. The key words used were fidelity, implement*, and early intervention; the search criteria applied were publication date (1998–2018) and language (French and English). A total of 267 articles were identified (199 after removal of duplicates). Inclusion criteria were (1) participants (preschoolers aged 0–5 years), (2) type of literature (empirical studies and theoretical literature), and (3) early intervention contexts (daycare center, preschool class, and stimulation workshop). Exclusion criteria were (1) prevention/promotion programs and (2) parent- or school-based interventions.

Article Selection and Identification of Conceptual Frameworks

The titles and abstracts of the 199 papers were read to identify the most relevant articles according to inclusion and exclusion criteria. A total of 54 documents emerged from this first selection and were read in full. In the end, 46 papers were selected for their relevance to the theme: 22 book chapters and theoretical articles as well as 24 empirical articles, all of which were then synthesized using a reading sheet. A first grid was used to extract the following data: reference, or not, to a conceptual framework; the name of the conceptual framework and authors; and frameworks used or presented and described. The conceptual frameworks analyzed in this article are those that are presented or used in the selected articles and whose components are partially or completely described.

Conceptual Framework Analysis Grid

Each identified conceptual framework is described below based on the writings of the authors who developed it. A content analysis was carried out using the approach proposed by Aubert-Lotarski (2007). An analytical grid was systematically applied to the descriptions of all conceptual frameworks. The grid consists of the following categories: definition of fidelity of implementation, number and names of fidelity components, definitions of fidelity components, and moderating variables including professional development. Results for each objective are presented below.

Results

Identified Conceptual Frameworks

In response to the first research question, four conceptual frameworks emerged from the review: (1) implementation stages and drivers (Fixsen et al., 2005), (2) conceptual implementation model (Huang et al., 2014), (3) conceptual model of treatment implementation (Sutherland et al., 2013), and (4) framework for showing the relationships between the fidelity of evidence-based implementation and intervention practices and the outcomes and consequences of the practices (Dunst et al., 2013). Dane and Schneider’s (1998) framework is also included in this literature review. Century et al.’s (2010) conceptual framework is not used or presented in any of the 46 articles reviewed.

Proportion of Articles Describing Conceptual Frameworks

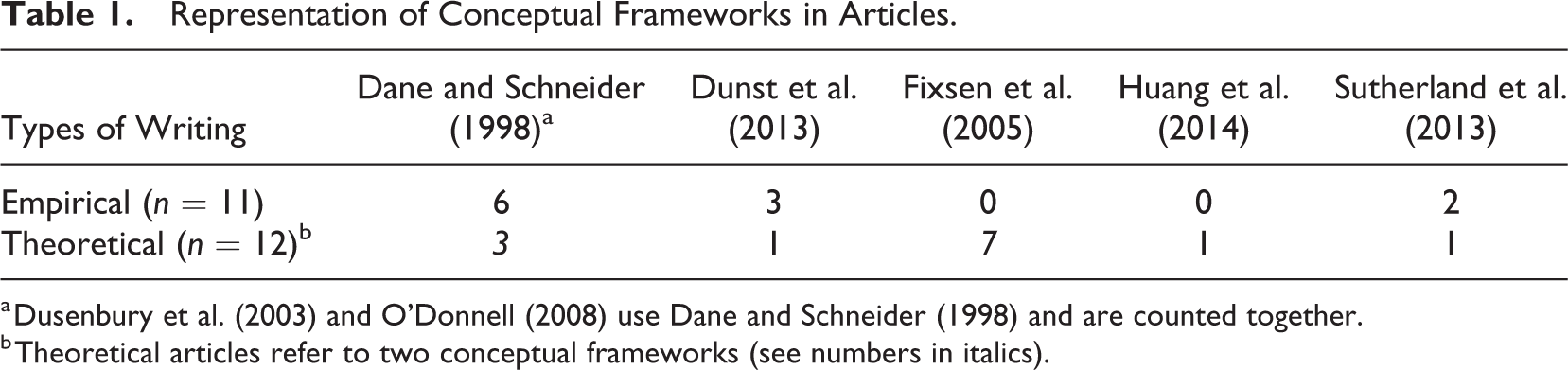

Results for the second research question show that fewer than half (11 of 24, about 46%) of the empirical articles present and use a conceptual framework. Table 1 summarizes the representation of conceptual frameworks in the articles. The most commonly used framework is that of Dane and Schneider (1998). Of the 11 empirical articles, six refer to Dane and Schneider (1998), O’Donnell (2008), or Dusenbury et al. (2003). The latter two (Dusenbury et al., 2003; O’Donnell, 2008) base their work on Dane and Schneider’s (1998) framework. Dunst et al.’s (2013) framework is used to outline fidelity of implementation in three studies (Dunst et al., 2013; Ruble et al., 2013; Snyder et al., 2018) and is also presented in a theoretical paper (Trivette & Dunst, 2013). In the theoretical literature of this review, the implementation stages and drivers framework (Fixsen et al., 2005) is the most frequently represented.

Table 1. Representation of Conceptual Frameworks in Articles.

a Dusenbury et al. (2003) and O’Donnell (2008) use Dane and Schneider (1998) and are counted together.

b Theoretical articles refer to two conceptual frameworks (see numbers in italics).

Definitions of Implementation Fidelity

The third question served to identify definitions of implementation fidelity. First, Fixsen et al. (2005) provide two definitions, one for intervention fidelity and the other for organizational fidelity. For Fixsen et al. (p. 85), intervention fidelity consists of “descriptions or measures of the correspondence in service delivery parameters (e.g. frequency, location, foci of intervention) and quality of treatment processes between implementation site and the prototype site.” Organizational fidelity refers to “descriptions or measures of the correspondence in overall operations (e.g., staff selection, training, coaching, and fidelity assessments; program evaluation; facilitative administration).” For Sutherland et al. (2013), “Treatment integrity refers to the degree to which an evidence-based practice (EBP) was delivered as intended and is composed of four dimensions: treatment adherence, treatment differentiation, competence, and relational factors (McLeod et al., 2009)” (p. 130). Huang et al. (2014) describe fidelity as: “[…] fidelity (including adherence and quality of program implementation, and trainees’ engagement and exposure to the program as defined in the TTIM […]” (p. 4). Finally, Dunst et al. (2013) define implementation fidelity of professional development practices as follows: “[…] the degree to which coaching, in-service training, instruction, or any other kind of evidence-based professional development practice is used as intended and has the effect of promoting the adoption and use of evidence-based intervention practices (Trivette & Dunst, 2011)” (p. 89).

Points of Convergence and Difference

The fourth research question focused on points of convergence and elements that differ, for each conceptual framework targeted in our literature review, from Dane and Schneider’s (1998) and Century et al.’s (2010) frameworks.

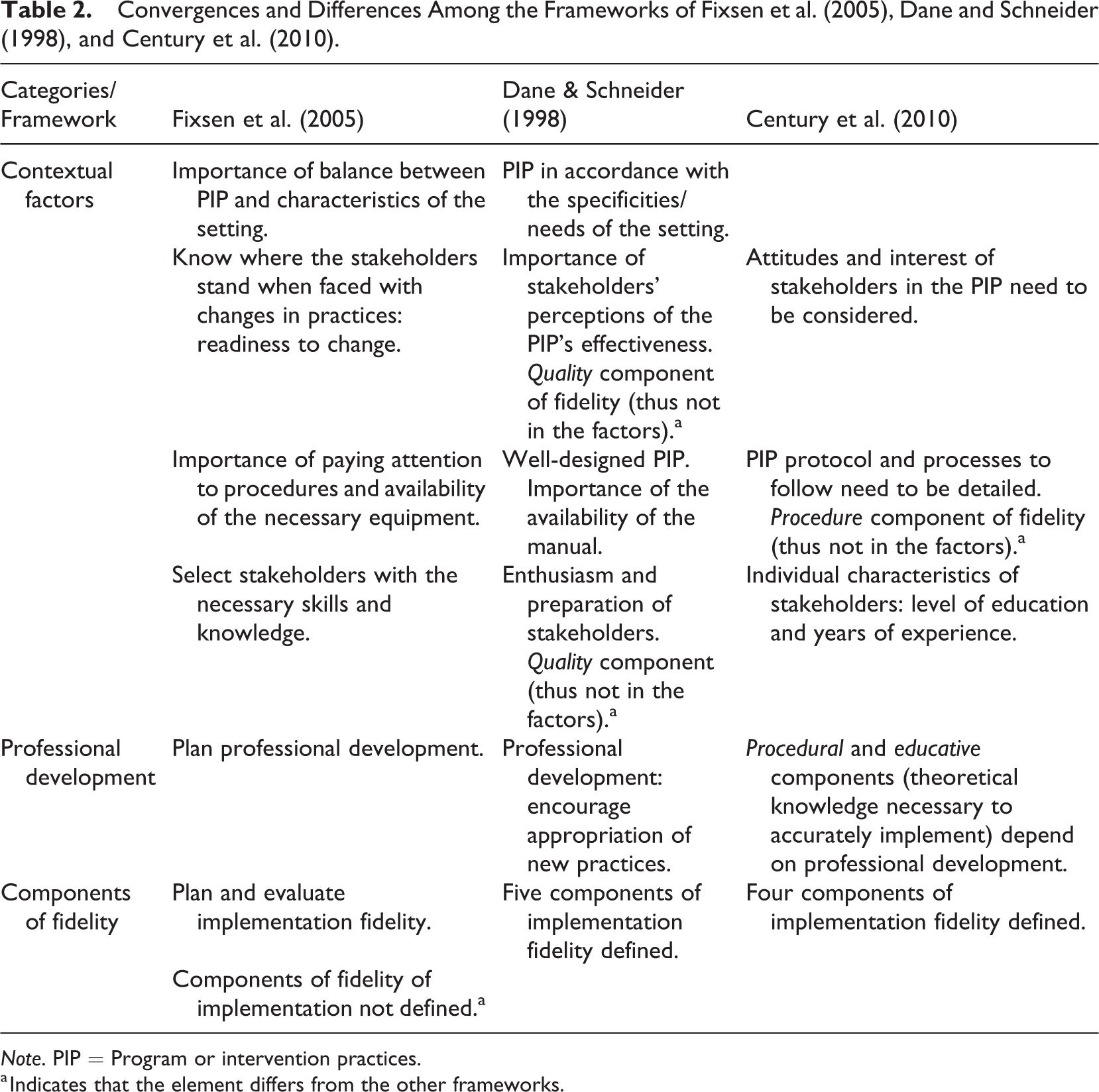

Analysis of the conceptual framework—Implementation stages and drivers

Fixsen et al.’s (2005) conceptualization results from a literature review designed to highlight factors and conditions that influence PIP implementation. Fixsen’s conceptual framework makes it possible to track implementation according to major steps by considering different contextual factors, all based on an iterative process. Implementation planning and assessment follow these steps: exploration, installation, initial implementation, and full implementation. Planning and evaluation of fidelity of implementation are part of this iterative process. Table 2 presents the main elements of convergence and difference among the frameworks of Fixsen et al., Dane and Schneider (1998), and Century et al. (2010).

Table 2. Convergences and Differences Among the Frameworks of Fixsen et al. (2005), Dane and Schneider (1998), and Century et al. (2010).

Note. PIP = Program or intervention practices.

a Indicates that the element differs from the other frameworks.

According to Fixsen et al. (2005), the exploration phase is necessary to ensure that the PIP to implement match the characteristics of the setting (Fixsen et al., 2013), which is in line with Dane and Schneider (1998). In addition, in this first step, Fixsen et al. (2013) stress that readiness to change must be considered. It is important to know where the organization and stakeholders stand in the face of change (Fixsen et al., 2005). Educators’ perceptions of the effectiveness of the PIP could be relevant information. Perception of effectiveness is included in Dane and Schneider’s framework within the quality component and not in the contextual factors. For Century et al. (2010), perceptions, attitudes, and interest in PIP are also factors that influence fidelity. In addition, during the exploration phase, the availability of equipment needed to implement PIP must be verified (Fixsen et al., 2005). Dane and Schneider mention the importance of the presence of reference literature. Another factor to consider for Fixsen et al. (2005) is that staff must have the necessary knowledge to implement new practices. However, for Dane and Schneider, the degree of stakeholders’ preparedness comes under the quality component and not under contextual factors.

Professional development planning (training and coaching) and evaluation of implementation fidelity are done during the installation phase. Professional development planning is part of Century et al.’s (2010) procedural and educative components. The theoretical concepts and protocol to follow to implement PIP fall within these two components. For Century et al. (2010), these elements are used to plan professional development, a factor whose importance is also highlighted by Dane and Schneider (1998).

Data collection on the fidelity of implementation begins at the same time as the initial implementation phase. Data collected are used for feedback during professional coaching to improve the fidelity of implementation (Fixsen et al., 2005). Finally, when fully implemented, the practices should be used as intended, that is, as planned in terms of quantity and quality (Fixsen et al., 2013). One difference is that, unlike Dane and Schneider (1998) and Century et al. (2010), Fixsen et al. (2005) do not define the components of fidelity in their framework. However, Sutherland et al.’s (2013) conceptual framework, analyzed next, breaks down fidelity of implementation into components: adherence to treatment, competence, differentiation, and relational factors.

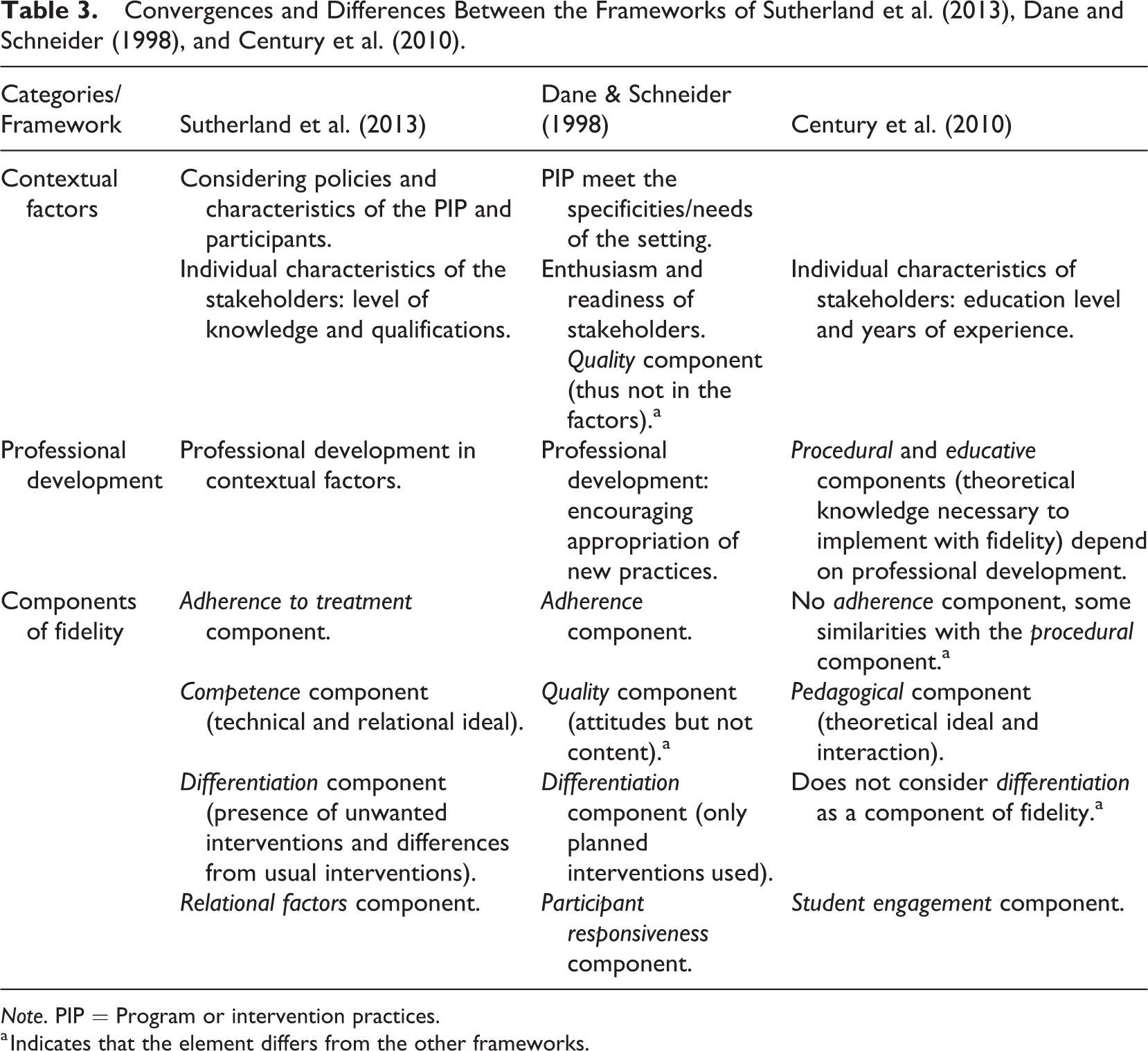

Analysis of the conceptual framework—Conceptual model of treatment implementation

The conceptual model of treatment implementation by Sutherland et al. (2013) relates to the implementation of intervention practices for children at risk of socio-emotional difficulties. Sutherland et al.’s conceptual framework is divided into three main sections: (1) structure, (2) implementation of EBPs, and (3) outcomes. Each section affects the next. Table 3 summarizes the information stemming from the analysis of Sutherland et al.’s conceptual framework.

Table 3. Convergences and Differences Between the Frameworks of Sutherland et al. (2013), Dane and Schneider (1998), and Century et al. (2010).

Note. PIP = Program or intervention practices.

a Indicates that the element differs from the other frameworks.

Some factors from Sutherland et al.’s (2013) framework are also identified by Dane and Schneider (1998) and Century et al. (2010). Indeed, according to Dane and Schneider (1998), PIP characteristics must be clear and in line with the specificities of the intervention setting. For Sutherland et al. (2013), PIP characteristics, policies regarding the setting, children, and educators are considered. Stakeholders’ knowledge and qualifications are also considerations, similar to Century et al. (2010). The latter describe stakeholders’ experiences and education as moderating variables for the fidelity of implementation. Dane and Schneider (1998) discuss the enthusiasm of stakeholders in their quality component and not as a factor.

The central part of Sutherland et al.’s (2013) framework is a conceptualization of fidelity of implementation that comprises four components: (1) treatment adherence, (2) competence, (3) differentiation, and (4) relational factors. Sutherland et al. define adherence as the degree to which different intervention components are implemented as intended (e.g., according to a specific protocol). This component is synonymous with Dane and Schneider’s (1998) definition of adherence. For Century et al. (2010), the definition of fidelity is the same as that of adherence, but there is no adherence component in their framework. However, Century et al.’s (2010) procedural component is similar to Sutherland et al.’s treatment adherence component. The competence component, on the other hand, underlies the use of intervention practices according to a technical and relational ideal (receptivity and sensitivity to participants). Competence is similar to Dane and Schneider’s (1998) quality component for the relational aspect but differs in terms of technical compliance. For Dane and Schneider (1998), quality is not about the implementation of content but rather about the attitudes and enthusiasm of implementors. In Century et al.’s (2010) conceptual framework, the competence or quality components of fidelity are part of the pedagogical component. Pedagogy encompasses intervention strategies used according to a theoretical ideal and interactions between practitioners and participants. The third component of Sutherland et al.’s (2013) framework is differentiation, which is the level of difference between planned and unplanned interventions (presence of unwanted interventions). As a result, Sutherland et al.’s (2013) definition is similar to Dane and Schneider’s and is a way of conceptualizing fidelity that differs from Century et al.’s (2010) terms of reference. For the latter, differentiation is not a fidelity component. The fourth component, Sutherland et al.’s relational factors, is similar to the participant responsiveness component in Dane and Schneider’s (1998) and Century et al.’s (2010) frameworks. The third framework analyzed is Huang et al.’s (2014) implementation conceptual model.

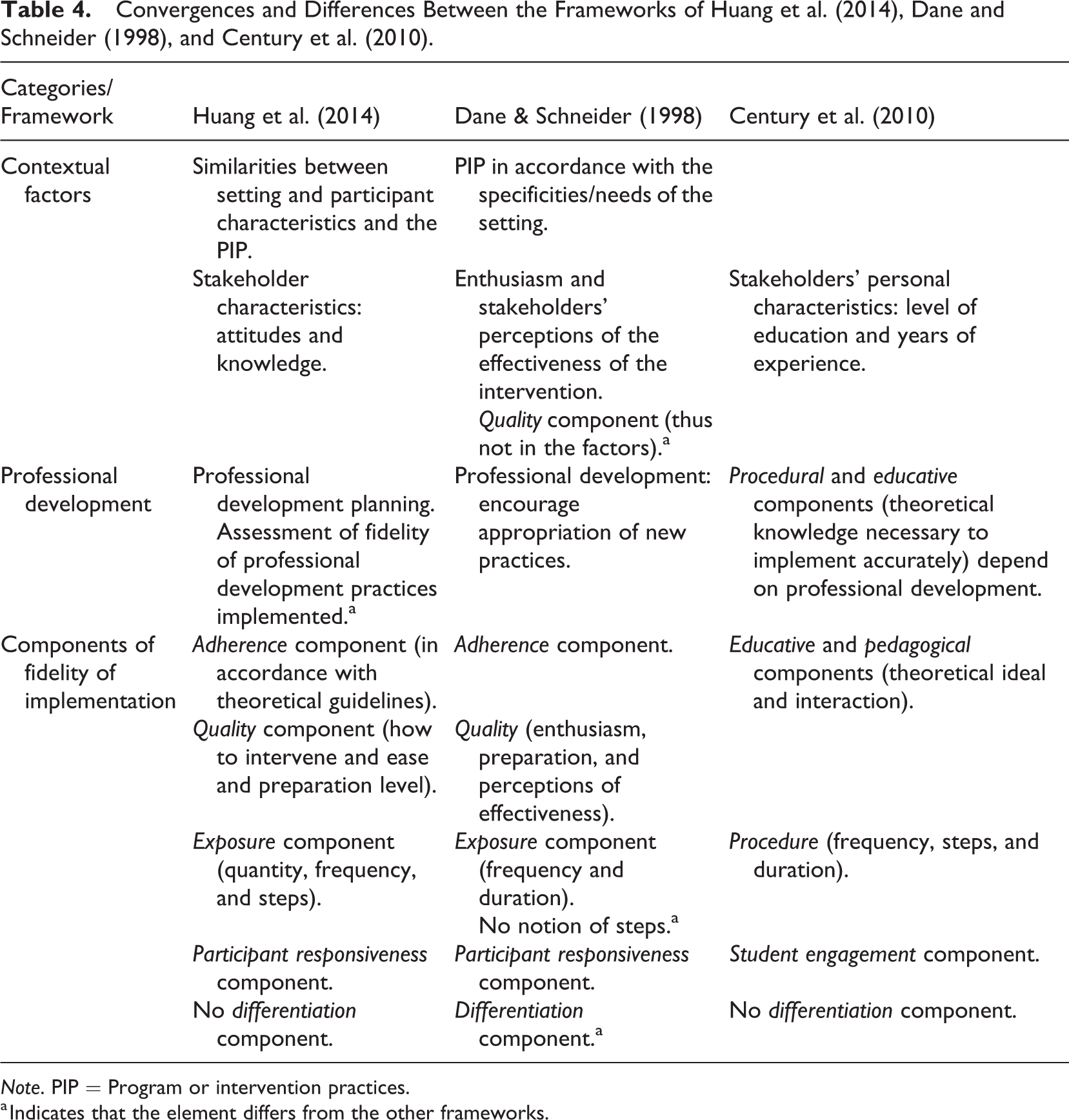

Analysis of the conceptual framework—Implementation conceptual model

Huang et al.’s (2014) conceptual framework consists of three sections linked by unidirectional arrows: (1) contextual factors, (2) fidelity of professional development and implementation outcomes, and (3) services and consumer outcomes. Table 4 summarizes the points of convergence and difference with Dane and Schneider’s (1998) and Century et al.’s (2010) frameworks. Some contextual factors that may influence implementation fidelity as presented by Huang et al. are discussed by Dane and Schneider (1998) and Century et al. (2010). For Huang et al. (2014) and Dane and Schneider (1998), the characteristics of the PIP to implement must converge with the clients’ needs and the interventions already used in the intervention setting. Huang et al. (2014) and Century et al. (2010) mention that practitioners’ attitudes toward and knowledge of new practices must be considered. For Dane and Schneider (1998), some personal characteristics of the stakeholders, such as their enthusiasm, are contained in the quality component. Huang et al. (2014) also identify organizational support, such as professional development planning, as a factor. Dane and Schneider (1998) note the important role of training and supervision in implementing new practices.

Table 4. Convergences and Differences Between the Frameworks of Huang et al. (2014), Dane and Schneider (1998), and Century et al. (2010).

Note. PIP = Program or intervention practices.

a Indicates that the element differs from the other frameworks.

In terms of conceptualizing fidelity of implementation, three of Huang et al.’s (2014) components, namely adherence, quality, and participant responsiveness, are similar to Dane and Schneider’s (1998). Huang et al.’s (2014) description of exposure, which includes quantity, frequency, and duration of interventions received by participants, is similar to Dane and Schneider’s (1998) exposure component. Unlike Dane and Schneider (1998), Huang et al. (2014) include the notion of intervention steps in their definition of exposure. This notion echoes the definition of the procedural component in Century et al.’s (2010) framework. According to Huang et al. (2014), adherence and quality are also included in Century et al.’s educative and pedagogical components. Another element of convergence with Century et al.’s framework is that Huang et al. do not have a differentiation component in their conceptualization of fidelity of implementation; this absence diverges from Dane and Schneider, who do include differentiation within their framework.

Finally, in Dane and Schneider’s (1998) and Century et al.’s (2010) frameworks, the fidelity of implementation focuses on intervention practices. However, for Huang et al. (2014), the fidelity of implementation refers to professional development practices. The objective of professional development is to use and maintain intervention practices that have an impact on the system (school environment) and on children. This concept of implementation fidelity is very similar to the last conceptual framework analyzed, that of Dunst et al. (2013). These authors have developed a conceptual framework that links fidelity of implementation practices, fidelity of intervention practices, and results.

Analysis of the conceptual framework—Framework for showing the relationships between the fidelity of evidence-based implementation and intervention practices and the outcomes and consequences of the practices

Dunst et al.’s (2013) conceptual framework illustrates the relationships between two aspects of fidelity and their effects. These two aspects are fidelity of implementation practices (professional development) and fidelity of intervention practices. Dunst et al. (2013) represent the main interrelated parts of an implementation process, namely, effective professional development used as intended and which allows for faithful use of new intervention practices. These new and accurately implemented intervention practices have an impact on participants (e.g., developmental improvements in children). Consequently, there is a point of convergence with Dane and Schneider’s (1998) and Century et al.’s (2010) frameworks, that is, the importance of professional development in the implementation of practices as intended. The main difference with Dane and Schneider’s (1998) and Century et al.’s (2010) frameworks is that Dunst et al. (2013) do not specify the components of fidelity. The next sections discuss the principal findings and offer recommendations for research.

Discussion

First, in this review, most empirical articles lack detailed descriptions of, or references to, a conceptual framework used to guide the assessment of implementation fidelity. This does not necessarily imply that those studies are not based on conceptual frameworks but rather that the authors do not specify or clearly refer to one. Second, the most commonly represented and used framework in those studies is Dane and Schneider’s (1998), which is in line with Caron et al. (2017) and Bérubé et al. (2014). A number of reasons could explain this framework’s popularity. As Tabak et al. (2018) note, a framework and its concepts must provide guidance to help researchers in the PIP assessment process. Dane and Schneider’s (1998) framework seems to fulfill this purpose. Indeed, many researchers in a variety of fields use it as a guide to assess and explain the different aspects of PIP implementation (Caron et al., 2017; Dusenbury et al., 2003). According to Bérubé et al. (2014), this framework makes it possible to document different aspects of PIP implementation and to establish links with the changes observed.

Fixsen et al.’s (2005) framework stands out in theoretical articles but not in empirical articles on the fidelity of implementation. On the one hand, the very nature of the framework to chart the implementation process can explain this result. On the other hand, those authors gathered information on the assessment of fidelity from their literature review on implementation research. However, the information on the fidelity of implementation is presented neither as a model nor a conceptual framework.

Next, Dunst et al.’s (2013) framework is the one most used in empirical studies after Dane and Schneider’s (1998). The importance of the former’s framework relates to its comprehensive vision of fidelity of implementation. Dunst et al. (2013) highlight the importance of fidelity of professional support practices as well as fidelity of intervention practices. However, having access to a definition of implementation fidelity is the required first step when delineating research related to this topic.

Definitions of Implementation Fidelity

In this literature review, we identify various definitions of fidelity of implementation that could act as starting points for assessments. Dane and Schneider (1998) and Century et al. (2010) provide simple and similar definitions of implementation fidelity as found in other writings (Hemmeter et al., 2013; Luze & Peterson, 2004; Thomas & Marvin, 2016). However, Dane and Schneider (1998) use the term integrity instead of fidelity. Sutherland et al. (2013) also use integrity to refer to the extent to which an intervention is implemented as planned. These examples from this literature review illustrate one of the shortcomings in ways of defining implementation fidelity: the lack of shared language. Indeed, in the literature, various terms are used interchangeably to discuss implementation fidelity: intervention integrity, treatment integrity or fidelity, reliability, and compliance (Dunst et al., 2013; Luze & Peterson, 2004). Furthermore, for some authors (Bryk, 2016; LeMahieu, 2011), differentiating fidelity of implementation from integrity of implementation is important. For LeMahieu (2011), fidelity concerns the use of PIP exactly as intended, while integrity refers to implementing what is useful and important while considering the context and needs of the intervention settings. When PIP move from theory to practice, adaptations may be necessary to respond to the realities of the intervention settings (Franks & Schroeder, 2013). For several authors (Bryk, 2016; Dane & Schneider, 1998; Durlak, 2015; Mowbray et al., 2003), those adaptations are inevitable and even desirable if the new PIP are to be adopted and used effectively. For some authors (Bryk, 2016; LeMahieu, 2011), it would be more appropriate to use the term integrity rather than fidelity to speak of adaptive integration of the PIP.

This literature review also highlights that certain authors (Century et al., 2010; Dane & Schneider, 1998; Dunst et al., 2013; Fixsen et al., 2005) do not include components (e.g., adherence, quality) in their definitions of fidelity while others do (Huang et al., 2014; Sutherland et al., 2013). In addition, for Huang et al. (2014) and Sutherland et al. (2013), the incorporated components are different. Finally, Dunst et al. (2013) define fidelity according to two aspects: fidelity of intervention practices and fidelity of implementation practices (professional development). It is a way of defining and conceptualizing fidelity that differs from the other frameworks in this review. To sum up, the information from this review supports the concerns of various authors. Indeed, for more than 30 years, several authors (Century et al., 2010; Dane & Schneider, 1998; Durlak & DuPre, 2008; Sutherland et al., 2013) have identified a lack of common language concerning the definitions of implementation fidelity and its components. As Dusenbury et al. (2003) stated: “The field needs to adopt universally agreed upon definitions of fidelity of implementation.” A definition of fidelity that incorporates the main concepts to delineate this construct could be an avenue to consider. Some authors (Huang et al., 2014; Sutherland et al., 2013) seem to follow this path by integrating the components into their definitions of implementation fidelity. Several authors (Domitrovich et al., 2010; Guo et al., 2016; Sutherland et al., 2013) underline the importance of conducting a thorough assessment of fidelity that considers several components of fidelity, not only exposure. Adopting such a definition could thus guide and inspire those assessing implementation fidelity. Indeed, most frameworks discussed in this article use several components to conceptualize fidelity.

Components of Fidelity

Dane and Schneider (1998) present five components of implementation fidelity. For Century et al. (2010), Huang et al. (2014), and Sutherland et al. (2013), the conceptualization of implementation fidelity consists of four components. Results of our review indicate the relevance of defining implementation fidelity as being composed of these four components: adherence, exposure, participant responsiveness, and quality. These components are integral to Dane and Schneider’s (1998) framework. This makes sense, given that Dane and Schneider’s (1998) terms for the five components are widely used in the literature, according to Hulleman et al. (2013). The component with the least consensus is differentiation, which is absent from the frameworks of Huang et al. (2014) and Century et al. (2010). In Sutherland et al. (2013), the differentiation component documents implemented intervention practices that are not recommended. From this perspective, it does not provide information on the reliability of implementation fidelity of interventions. Moreover, when evaluating programs, it is essential to verify whether implemented PIP differ from intended PIP. However, this is a step in an evaluation process and not a component of fidelity, as noted by Century et al. (2010), which could explain why differentiation is not widely used in studies that assess fidelity of implementation (Caron et al., 2017; Dane & Schneider, 1998). For these reasons, differentiation can reasonably be left out of the conceptualization of fidelity.

This literature review also points out differences in the names or definitions of the other components. For instance, in Sutherland et al.’s (2013) framework, the component related to quality is termed competence and is defined as the use of practices according to a theoretical ideal. This definition differs from Dane and Schneider’s (1998), who define quality as mainly a relational ideal that does not deal with the intervention contents. Although the relational aspect between the implementor and the person targeted by the PIP can prove to be an indicator of the quality of delivery, evaluators cannot limit themselves to this component. For example, underlying the quality of intervention practices designed to support children’s development is the integration of learning opportunities through routines and daily activities, with a goal to foster generalization of learning (Division for Early Childhood, 2014). This indicator must be evaluated to get a more accurate picture of the implementation fidelity of this type of practice. Therefore, it is important that the definition of quality includes both these aspects, that is, the use of PIP according to a theoretical and a relational ideal.

Another example of the differences in terms used is the participant responsiveness component, which Sutherland et al. (2013) call relational factors. It is interesting to note that Sutherland et al. stress the importance of relational factors, which underlie participants’ responsiveness and responses to the intervention. For Sutherland et al., without this participation, even compliant implementation of an EBP will not produce the expected results. For this reason, evaluation of participants’ responsiveness cannot be left out or be limited to collecting participant attendance.

Contextual Factors or Moderating Variables

Finally, our literature review highlights factors or moderating variables to consider when assessing fidelity of implementation. A number of contextual factors are points of convergence among the frameworks: the importance of well-detailed PIP and the balance between PIP characteristics and those of the intervention setting (Dane & Schneider, 1998; Fixsen et al., 2005; Huang et al., 2014; Sutherland et al., 2013). Characteristics or elements specific to educators should also be considered, namely, training and experience as well as perceptions of effectiveness (Century et al., 2010; Dane & Schneider, 1998; Fixsen et al., 2005; Huang et al., 2014; Sutherland et al., 2013). Those results support the conclusions of Dusenbury et al. (2003) regarding elements that can influence high implementation fidelity of intervention practices: training provided, characteristics of implementors, and PIP and organizational characteristics. In short, evaluators who undertake to evaluate the fidelity of implementation must consider and document those factors. Special attention must also be paid to professional development practices, whether they are an integral part of implementation fidelity (Dunst et al., 2013; Huang et al., 2014) or considered as contextual factors (Century et al., 2010; Dane & Schneider, 1998; Fixsen et al., 2005; Sutherland et al., 2013). For Dunst et al. (2013), professional development practices must be recognized as effective and used with fidelity. The research carried out by Dunst et al. (2013) shows a direct effect of professional development practices on preschool educators’ accurate use of intervention practices that lead to improving child development. Thus, focusing on the use of professional development practices as intended should be a concern when evaluating implementation fidelity of intervention practices (Barton & Fettig, 2013; Dunst et al., 2013; Powell & Diamond, 2013).

Conclusion

As Tabak et al. (2018) noted, “Frameworks guide researchers to consider constructs in systematic efforts to develop and evaluate interventions” (p. 73). Regarding implementation research, Tabak et al. recommend looking to existing conceptual frameworks or models to further advance knowledge in this field. In addition, these authors suggest that exploring available frameworks to identify common concepts is preferable to developing new ones to respond to issues in this research field. It is from this perspective that the studies and works are presented in this article.

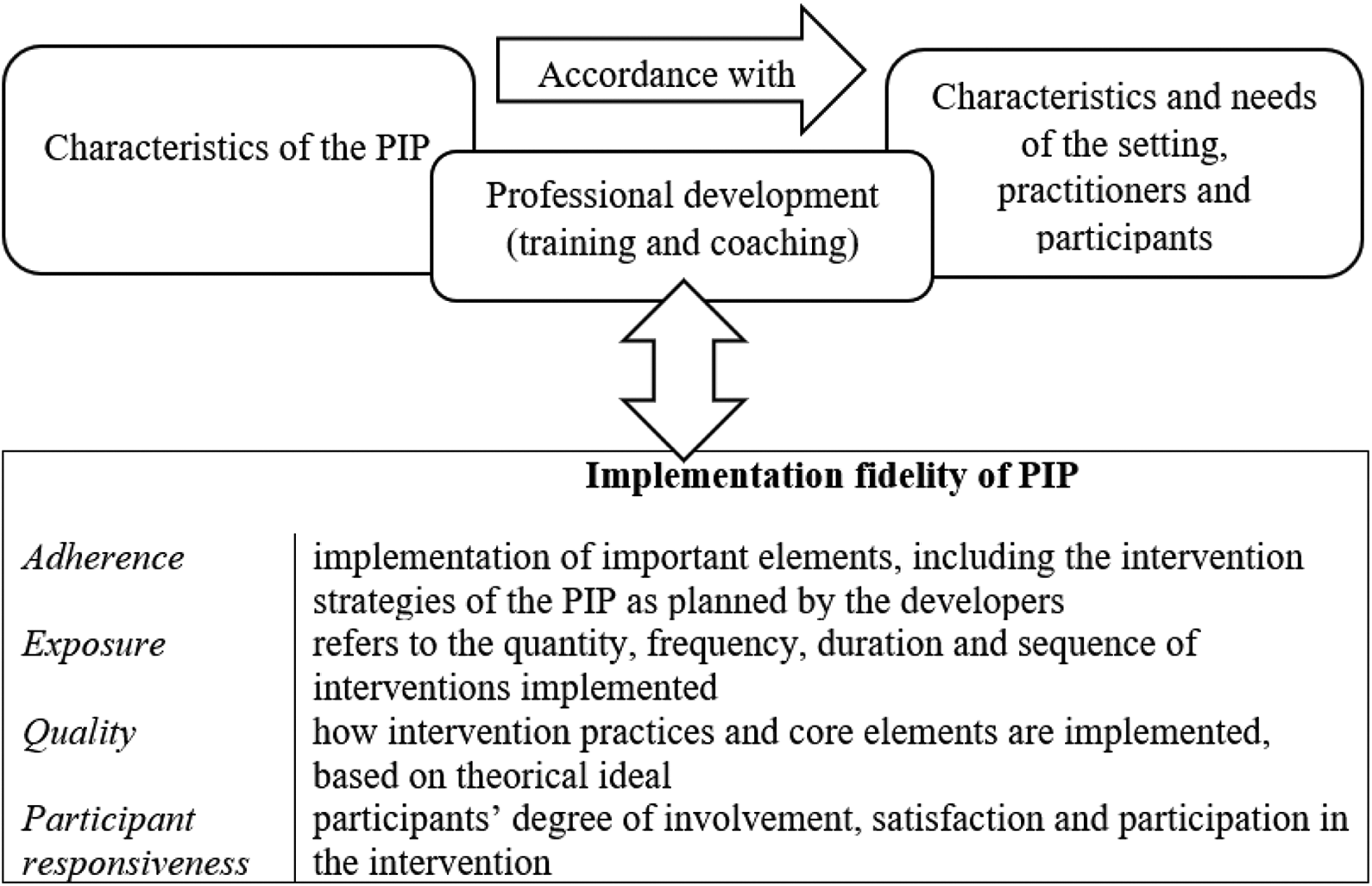

In light of this study’s results, the following definition of implementation fidelity is proposed: use of PIP as planned by the developers based on four components: adherence, exposure, quality, and participant responsiveness. Defining fidelity by specifying its components is useful because it clarifies the term and provides markers. Therefore, the components are incorporated into the proposed definition. Moreover, each of the four components has been defined by integrating the contribution of the conceptual frameworks (Century et al., 2010; Dane & Schneider, 1998; Dunst et al., 2013; Huang et al., 2014; Sutherland et al., 2013) analyzed in this literature review. These components and their definitions constitute the conceptualization of implementation fidelity, which is illustrated in Figure 1 along with contextual factors.

Figure 1. Conceptualization of implementation fidelity and contextual factors.

The proposed definition and associated conceptualization can guide the essential process of assessing fidelity of PIP implementation to support early childhood development and other areas of intervention. When evaluating implementation fidelity, the evaluators’ first step is to identify key PIP elements and dimensions (Chen, 2015). The conceptualization and proposed definition invite evaluators to ensure they have a complete view of all aspects of PIP while also considering moderating variables. In addition, component definitions are useful to the operationalization process and indicator development. When evaluating fidelity of implementation, these steps are essential to highlight key elements of PIP and their use by practitioners (Hulleman et al., 2013).

Assessing fidelity of implementation is the first step in the process of documenting adaptations in the use of PIP to gain a clearer picture of implementation integrity. Assessing the implementation fidelity of a PIP is useful to identify challenges and facilitators encountered when applying a program, collect data to make continuous adjustments, understand the presence or absence of intervention effects, and identify related key elements of the PIP. Assessing implementation fidelity is a complex but essential process that requires clear markers. The information in this literature review provides a step toward a common language regarding the definition and conceptualization of implementation fidelity. This article does not claim to be reinventing the wheel. The suggestions are consistent, build on previous work, and could be a basis for agreement in the future.

Finally, this article presents some limitations and strengths. It is based on a review of French- and English-language literature published between 1998 and 2018, using the ERIC, PsycINFO, and MEDLINE databases. This review could not track all publications. Relevant articles in languages other than English and French were not included, nor were publications from the gray literature. Also, the search was limited to articles in the field of early intervention. Inclusion of empirical articles dealing with implementation, but in other areas of intervention such as education or youth mental health, could have been relevant. It could also have been interesting to examine the scientific literature related to knowledge transfer. Nevertheless, the selected databases provide an overview of the literature on implementation fidelity of early interventions. Moreover, a considerable number of theoretical (22) and empirical (24) articles were selected to provide a representative portrait of evaluation of fidelity of implementation in the field of early intervention.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported in part by grants from the Chaire de recherche du Canada (CRC) en intervention précoce, from the Fonds de recherche du Québec–Société et culture (FRQSC; grant ID: 189836), and from the Social Sciences and Humanities Research Council (SSHRC; grant ID: 767-2016-8004).