Abstract

Evaluation policy has been identified as an important means of shaping and influencing organizational evaluation practice, yet, to date, little empirical research has been conducted to deepen our understanding of this relationship. The purpose of this study was to illuminate evaluation policy’s role in leveraging organizational capacity to do and use evaluation. We interviewed 18 published evaluation scholars and practitioners from North America and Europe about this topic. A thematic analysis of findings underscores the importance of context, policy attributes, enablers, and organizational benefits. Based on the findings, we developed an ecological conceptual framework to guide thinking about the role of evaluation policy in capacity building. We discuss these findings in terms of practical implications of understanding context, redressing the imbalance between learning and accountability purposes of evaluation, and organizational leadership, and we conclude with some implications for research.

Introduction

Evaluation scholars who are concerned with building evaluation culture and practices in organizations have been adding to a growing literature on evaluation capacity building (ECB). Organizational ECB is commonly defined as “the intentional work to continuously create and sustain overall organizational processes that make quality evaluation and its uses routine” (Stockdill et al., 2002, p. 14). Historically, organizational evaluation capacity has been thought of as the competencies and structures required to conduct high-quality evaluation studies (i.e., the

The enhancement of organizational evaluation capacity is essential to the production of quality evaluations that enable organizations to meet their accountability requirements and enhance organizational learning and decision making (e.g., Bourgeois et al., 2013; Boyle et al., 1999; Cousins et al., 2004; Sanders, 2003; Stockdill et al., 2002). In this study, we are interested in the extent to which and how evaluation policy is implicated in building and maintaining organizational evaluation capacity.

Evaluation policy remains an obscure and underdeveloped research area (Dillman & Christie, 2017; Trochim, 2009). It has been defined as “any rule or principle that a group or organization uses to guide its decisions and actions when doing evaluation” (Trochim, 2009, p. 16). Based on this definition, evaluation policy is inherently collective and could be written and explicit or unwritten and implicit. Despite recent, growing interest, there is a particular paucity of research examining the impact of evaluation policy on organizational evaluation culture and practice (Mark et al., 2009).

The purpose of this exploratory study is to investigate the extent to which and how evaluation policy has the potential to improve organizational capacity to implement evaluations and use them. Does evaluation policy have the potential to leverage such capacity? If so, under what conditions or circumstances?

Conceptual Background

The Nature and Consequences of Evaluation Policy

Evaluation policies exist within public, private, and nonprofit sectors. They are created and applied in specific political, economic, and cultural contexts, whether at organizational, national, or global levels. Trochim (2009) categorized their content into specifications about evaluation purposes, participation, capacity building, management, process and method, role, use, and meta-evaluation or quality assurance. In general, evaluation policy is intended to govern the way evaluation is conducted and used. It carries an organization’s intentions to be accountable to stakeholders, to support organizational learning, and to use evidence for decision making (Datta, 2009).

Evaluation policy has the potential to influence decision making in a range of ways (Chelimsky, 2009; Datta, 2009; Mark et al., 2009; Trochim, 2009). For example, consider the following policy attributes and potential influences:

Policy thus sets an important frame of reference for how funders and evaluators define, implement, report, and use evaluations. For instance, in politically contentious organizational contexts, evaluation policy can help address challenges to evaluation credibility or accuracy (Chelimsky, 2009). In hyper-political contexts, policies related to evaluation use and its links to decision making can help organizations to meet international transparency and accountability demands (Stern, 2009). In challenging economic conditions, policies requiring evaluation to include “reconsideration study” can help decision makers understand potential consequences for goal achievement in the hypothetical event of a significant intervention budget cut (Leeuw, 2009). These examples show how evaluation policy is influenced by and can influence contextual conditions.

Evaluation Policy as Part of the Evaluation System

The role and consequences of evaluation policy can be examined using a systems lens. According to Liverani and Lundgren (2007), the term evaluation system refers to “the procedural, institutional and policy arrangements shaping the evaluation function and its relationship to its internal and external environment” (p. 241). Implicated are evaluation independence, its resources, and cultural attitudes toward it. Using a systems lens highlights influences on evaluation demand and its use, including the dissemination and integration of results. Dahler-Larsen (2006) argued that an evaluation system acts as “a continuous stream of information” (p. 1) that plays a role in the production of evidence to support decision making. To a great extent, system functioning depends on evaluation policy, organizational design, and staffing structures that allow the flow of information. According to Dillman and Christie (2017), policies constructed from a systems perspective, rather than isolated rules and guidelines, help organizations achieve desired outcomes, increase evaluation use, and support organizational learning.

As Leeuw and Furubo (2008) asserted, an evaluation system must represent an organization’s internal consensus about the meaning of evaluation, the purpose it serves, and the type of knowledge it produces. To that end, internal responsibility for evaluation, as opposed to outsourcing, may act to enhance evaluation capacity and promote institutionalization. We now turn to a review of what we know about organizational evaluation capacity and how to enhance it.

Organizational ECB

Evaluation scholars have developed a number of conceptualizations of the meaning and the practices that constitute ECB. For example, Taylor-Powell and Boyd (2008) introduced a three-component framework that focuses on structuring strategies used in ECB. Professional development, resources and supports, and organizational environment are the three main dimensions of ECB practice. Along the same lines, Preskill and Boyle (2008) developed an ECB model that outlines a range of factors (e.g., evaluation knowledge, skills, attitudes) that may influence the development and impact of ECB processes on evaluation practice. Such elements may be intentionally integrated into organizational evaluation policy. In doing so, the focus would be on enhancing organizational capacity to practice evaluation or engage with it in an oversight function. Yet, as Cousins et al. (2004) asserted, “the integration of evaluation into the culture of organizations…has as much to do with the consequences of evaluation as it does the development of skills and knowledge of evaluation logic and methods” (p. 101). As such, “ECB is about developing the capacity to do evaluation, as well as the capacity to use it” (Levin-Rozalis et al., 2009, p. 194).

Organizational Capacity for Evaluation

Cousins et al. (2008), Naccarella et al. (2007), and Nielsen et al. (2011) lamented that while much had been written about the role of ECB, scholars had directed considerably less attention toward the concept of evaluation capacity itself. Whereas organizational evaluation capacity manifests as visible, enacted evaluation practices and processes, ECB is the process by which an organization develops its understanding of, and ability to undertake, such practices and processes. As has been the case with scholarship on ECB, an emphasis on understanding evaluation use as an integral part of organizational evaluation capacity has been missing (Cousins & Bourgeois, 2014).

With this gap in mind, Bourgeois and Cousins (2008, 2013) developed and validated a comprehensive conceptual framework of the organizational dimensions of evaluation capacity. The framework consists of six subdimensions: three associated with the organizational

While evidence is beginning to accrue as to how organizational evaluation capacity can be enhanced, evaluation policy’s role in this regard is not well understood. This study sought to explore the role of evaluation policy in building (or impeding) organizational capacity to do and use evaluation through one-on-one interviews with knowledgeable evaluation scholars and practitioners. Next, we briefly describe the study participants and the research method.

Method

The study relied exclusively on in-depth interviews with participants with high degrees of knowledge about evaluation policy and/or ECB. What follows is a description of the strategies we used for sampling, data collection/processing, and analysis.

Study Participants

We selected the participants on a reputational basis. Each participant had published books and/or peer-reviewed journal articles on evaluation policy and/or ECB or, to a lesser extent, had significant professional experience in at least one of those areas. We identified candidates from the latter group through consultation with special interest group leadership, evaluation conference presentation descriptions, and collegial nomination. Including knowledgeable practitioners enabled deeper first-hand interpretations of both the role of evaluation policy in building organizational evaluation capacity and the multiple and varied dimensions at play within an organizational context.

Of 22 invited evaluation scholars and practitioners from North America and Europe, 18 agreed to participate in a remote interview. While the majority of the participants were university professors, some were working as evaluation consultants and/or with government or private organizations. The participants had between 15 and 30 years of experience working in evaluation in regional, national, and international contexts. All participants resided in the global West, but some worked in international development.

Interview Methods

Relying on teleconference/telephone technology, the lead author interviewed all 18 participants once they formally agreed to the terms of a letter of informed consent. The sessions were audio-recorded with permission and lasted 60–90 min.

The interviews were semi-structured. To mitigate interview bias, we asked participants for their definition of evaluation policy and organizational capacity for evaluation and refrained from introducing them to our conceptualizations, as described above. Listed in Box A are sample questions from the interview protocol.

Box A. Sample Interview Questions

So, first of all, I’d like to ask you about your own definition of:

Evaluation policy

Organizational capacity

What impact do you think evaluation policy has on organization capacity for evaluation?

How does evaluation policy influence evaluation practice?

How does evaluation policy influence evaluation use?

In your opinion, what evidence should organizations collect on the impact of the evaluation policy on evaluation practice and use?

In your opinion, what factors contribute to benefitting from evaluation policy in building/enhancing organizational capacity for evaluation? (Repeat for “impede benefitting.”)

Analysis

The lead author transcribed the audio recordings verbatim into individual Word files, which were then integrated into NVivo (Version 12) for analysis. The analytic strategy we employed was to use the previously described conceptual aspects of evaluation policy and dimensions of organizational evaluation capacity as the basis for an initial list of “start codes” while remaining open to identifying additional emergent codes.

The work was carried out in two stages. During the first stage, the lead author read through each transcript, flagging specific pieces of information to which she applied start and emergent codes. She then identified patterns and connections between specific codes and subsequently developed emergent themes based on the implicated concepts. The themes included nuanced elaborations of the concepts and their relationship to other variables. This process was iterative. The second stage involved the classification of the themes according to their focus on the relationship between evaluation policy and organizational capacity for evaluation. Both authors collaborated throughout this stage in order to “support, strengthen, modify, or disconfirm the findings” (Saldana, 2009, p. 229). Joint analysis included interrogation and resolution of coding discrepancies, theme identification, and relationship verification. As a measure of data quality assurance, we include rich, thick verbatim descriptions of participants’ accounts in order to support our interpretation of the findings. We now turn to a thematic summary of the findings.

Findings

In this section, we begin with an analysis of participants’ understandings of the main constructs of interest: evaluation policy and organizational evaluation capacity. We then turn to a thematic analysis of the variables that moderate the relationship between policy and capacity and close with the presentation of an integrative conceptualization of the relationship.

Defining Evaluation Policy

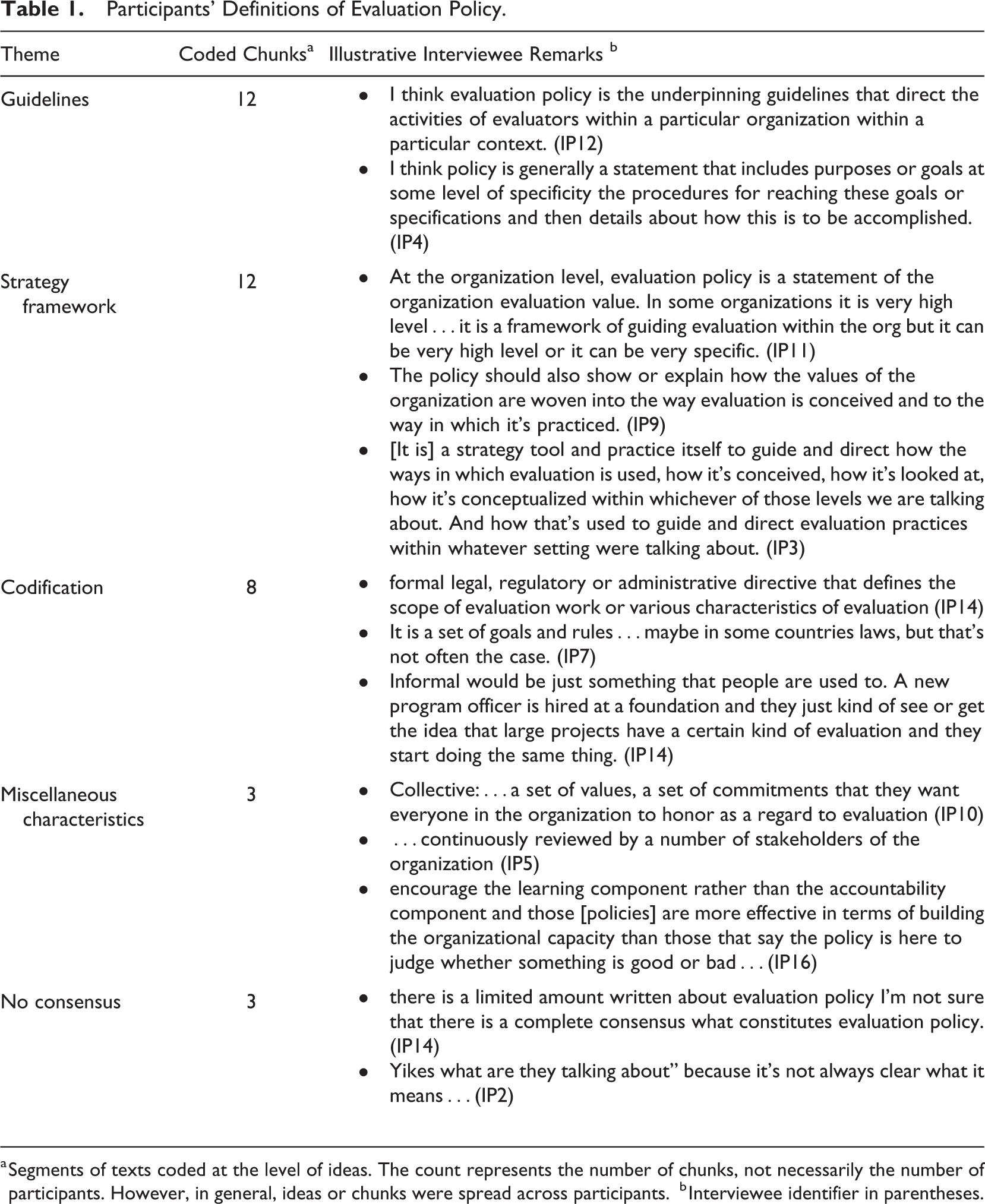

There was some variation in participant responses to the question of how they define evaluation policy. Table 1 shows the results of a content analysis of the answers given by the interviewees.

Table 1. Participants’ Definitions of Evaluation Policy.

a Segments of texts coded at the level of ideas. The count represents the number of chunks, not necessarily the number of participants. However, in general, ideas or chunks were spread across participants.

b Interviewee identifier in parentheses.

Participants often talked about evaluation policy in terms of a set of rules or guidelines or as a strategic framework within the organization. In referencing guidelines, participants focused on descriptive statements about how evaluation is to be conducted within the organization, including roles and responsibilities. Such statements are intended to guide evaluator practices. Participants also talked about evaluation policy at the level of organizational strategy; it was not so much about when and how to do evaluation but what evaluation is expected to accomplish within the organization. There were sometimes references to organizational values embedded within the evaluation policy. When conceived as a strategic framework, evaluation policy could be either quite high level or very specific.

Several participants also referred to the explicit nature of evaluation policy or the extent to which it was codified. Of the eight instances of such references, all but one referred only to explicit statements or formal legal, regulatory, or organizational directives. Our own definition, provided above, encompasses the notion that policy can be implicit or hidden. Yet only one participant suggested that evaluation policy can be implicit or informal and that new members of the organization develop an understanding of evaluation expectations over time. In a few instances, miscellaneous characteristics of evaluation policy were referenced, such as its collective nature, its being subject to ongoing review and revision, and its relative emphasis on learning, as opposed to accountability, with attendant implications for ECB. Finally, there were three references to a lack of clarity or consensus about how evaluation policy is defined.

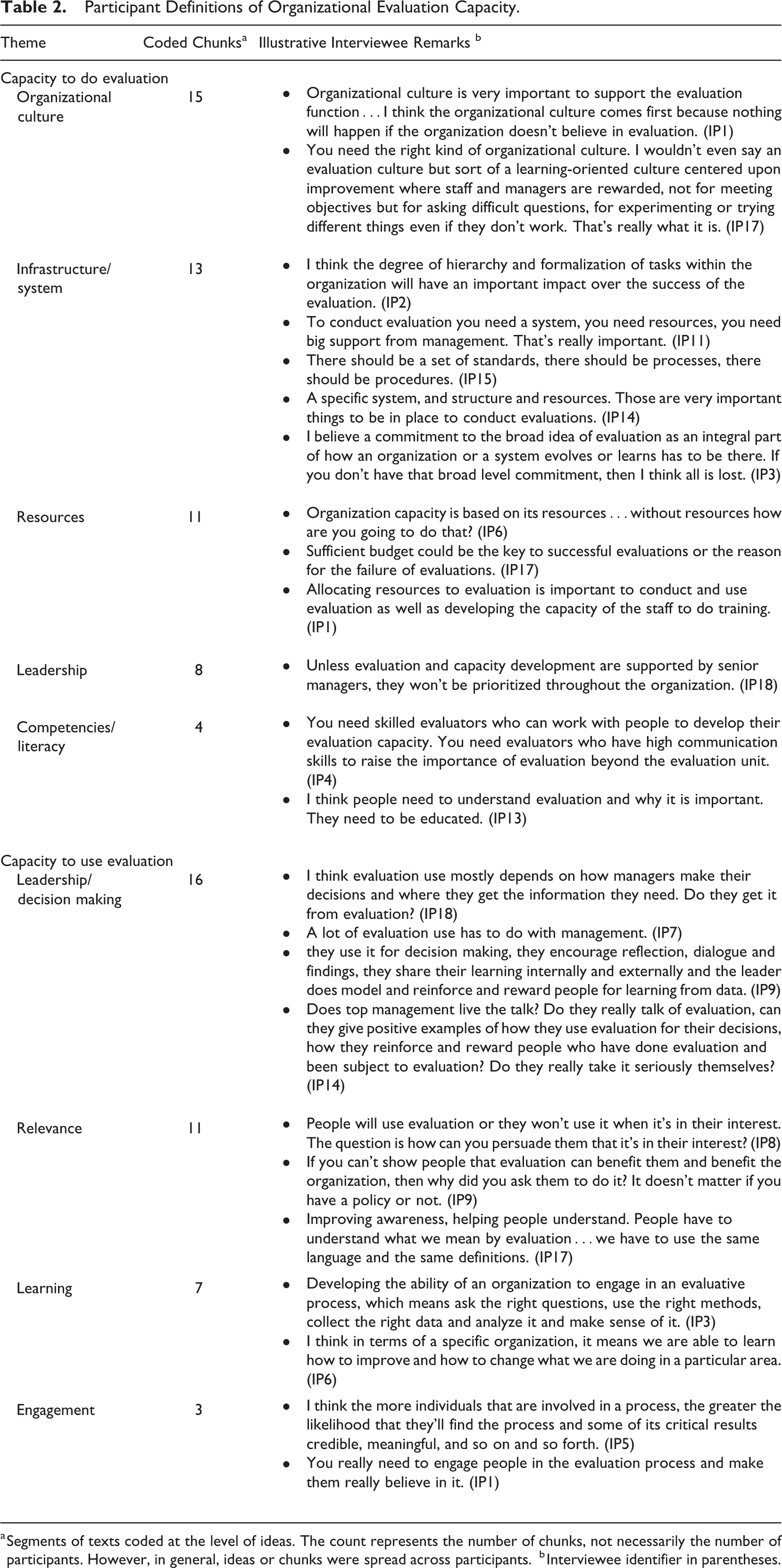

Defining Organizational Evaluation Capacity

To capture the meaning of organizational evaluation capacity, we asked participants to define the capacity to do evaluation and the capacity to use it. Our analysis of the responses shows widespread agreement among the participants that the two aspects are interactive and inform one another. Further, their comments align to a substantial degree with our own conceptualization as described above (see also, Bourgeois & Cousins, 2008, 2013). Appearing in Table 2 are the results of our content analysis along with illustrative quotations.

Table 2. Participant Definitions of Organizational Evaluation Capacity.

a Segments of texts coded at the level of ideas. The count represents the number of chunks, not necessarily the number of participants. However, in general, ideas or chunks were spread across participants.

b Interviewee identifier in parentheses.

The majority of the participants referred to the capacity to do evaluation in terms of organizational culture, infrastructure, and resources. Participants focused on a learning-oriented culture that accepts experimentation for development and growth. They also highlighted the importance of a supportive system to set the stage for the evaluation function activities. Participants tended to commingle thoughts about ECB with organizational evaluation capacity in their definitions. For example, the availability of resources was identified as essential when thinking about ECB. Participants also noted that leadership support is necessary to establish an organizational environment for ongoing learning and improvement. To a lesser extent, evaluation literacy and competencies were identified as critical to understanding and promoting ECB activities.

Almost all of the interview participants referred to the organizational capacity to use evaluation in terms of management’s application of evaluation to support decision-making processes. There were also several references to the relevance of evaluation and the benefits it brings to organization members. Seven references were made to the learning that takes place during and after the evaluation processes as having significant implications for use. Finally, three participants mentioned the engagement of stakeholders in the evaluation process in their definition of the capacity to use evaluation.

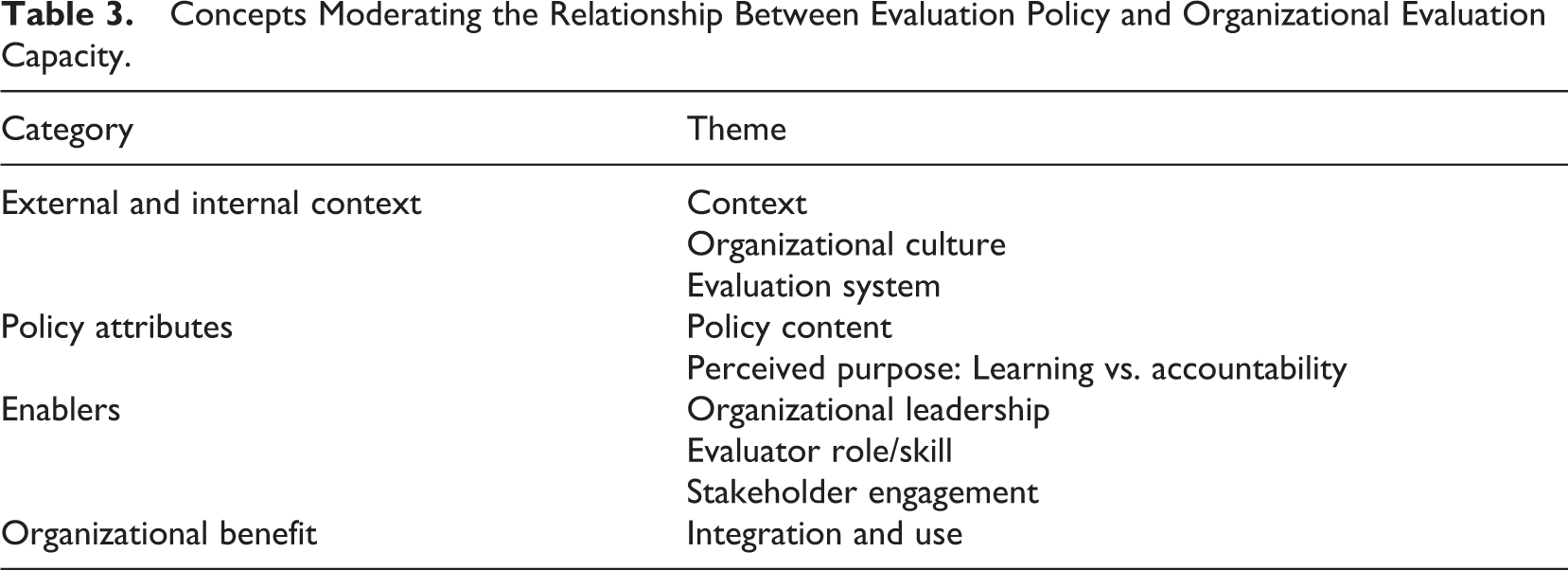

It is interesting to note that many of the participants’ definitions of evaluation policy and capacity touched on variables that moderate the relationship between the two. That policy was framed in strategic terms and that capacity for evaluation use was grounded in considerations of organizational culture, ensuing benefits, and learning are cases in point. Table 3 shows the themes that emerged about this topic. We take up these themes, organized by higher order categories—context, attributes, enablers, benefits—in turn.

Table 3. Concepts Moderating the Relationship Between Evaluation Policy and Organizational Evaluation Capacity.

Context

Contextual factors and conditions affect the development, implementation, and outcomes of policies and programs because they shape the way in which evaluation is approached, practiced, and used. For example, some participants shared the view that evaluation policy is likely to have a major impact on evaluation practice and capacity building in developing country contexts. This is because the needs and demands for evaluation focus on supporting the development of evaluation capacity and strengthening related systems of management, learning, and accountability. As one of the participants suggested:

If you want to develop evaluation capacity in a sub-Saharan African country, you better have an evaluation policy that tells people there what evaluation is and what they need to know and what they are supposed to do. They need something to refer to; it will guide them through the process. (IP8)

On the other hand, in the global West (i.e., developed countries), evaluation policy is mostly perceived as another level of bureaucracy and an additional requirement that actors need to fulfill. This is especially the case in political contexts where there is a high demand for evaluation for accountability purposes, as is implied by the following comment: “In Europe, we have evaluations, we have performance audits, we have oversight, we have supervision, we have inspections…we are evaluated to death” (IP2). It seems likely that in contexts where evaluation is mainly used for performance measurement and performance accountability, evaluation policy becomes the dominant accountability instrument. This can hinder learning, improvement, and, ironically, performance:

If policy is not well thought out, it can damage practice. In England, strict policy that focused mainly on accountability was a disaster because it was driving teachers out of the profession. (IP1)

Some participants also pointed to the influence of the historical and economic aspects of the evaluation policy context and the ways in which they affect the practice of evaluation and people’s perceptions of evaluation. For example, some argued that the European Structural Funds were a major driver for spreading the practice of evaluation throughout Europe in the 1980s. They indicated that the introduction of evaluation into many European countries was a result of the requirements of the structural fund regulations to assess value for money, requirements that continue to influence how people think about evaluation today. In such contexts, evaluation is associated with a high level of accountability, with the main motivation being the fulfillment of external requirements accompanied by little or no interest in learning in organizations.

Many of the contextual variables referenced were external to organizations, but their influence on internal variables is often quite evident. As a case in point, significant external accountability demands influence and shape internal organizational perceptions about evaluation being exclusively accountability oriented.

Organizational Culture

Evaluation policy will have little to no effect on capacity building if the organization lacks a culture that supports making evaluation strategic and useful, which in many cases is established at the management level. Many participants argued that when organizations are committed to evaluation, having limited resources is not an obstacle for evaluation; instead, it is a matter of management being strategic with the available resources and more intentional about learning and evaluation activities. According to most participants, to benefit from a well-thought-out evaluation policy to create or support a dynamic environment, organizations must have a culture that encourages learning, risk-taking, and dialogue to improve their performance. Many participants also shared the view that the type of organization and the stability of its management and governance structure affect its culture and influence both the role of policy in promoting evaluation capacity and the feasibility and sustainability of capacity-building efforts.

Evaluation System

A supportive evaluation system within the organization is essential for setting the stage for evaluation policy to foster ECB activities. As one participant put it, “Evaluation policy can light a flame, but the flames are only going to catch it if there’s dry timber and so a certain infrastructure has to be in place to see an impact” (IP16).

An essential aspect of the organizational infrastructure is the degree of coordination within the system to ensure access to evaluation resources and information, which is affected by the alignment of work processes and data sharing between units and departments. For example, some interviewees indicated that responsibility for evaluation data collection and use could implicate different units within the organization. Without proper coordination, the potential for evaluation policy to leverage ECB activities remains unrealized. This is particularly the case with the ongoing collection of performance measurement data by program staff and its consequent suitability for evaluation purposes.

Another important moderator is the availability of appropriate resources to implement the evaluation policy and to turn its strategies into actions that can accomplish its objectives and, ultimately, achieve the desired impact on organizational capacity. This includes supporting staff participation in capacity building activities and allocating sufficient time to integrate the policy into the organizational system. Some participants noted that resources are needed in order to bring organization members on board. They must have enough time to implement any evaluation activities that they are not currently performing.

Policy Content and Type

Participants suggested that, whether evaluation policy is descriptive or prescriptive, its content, particularly its requirements, significantly affects evaluation practice and capacity. Some interviewees were of the view that descriptive evaluation policies are more favorable for guiding evaluation practice because they provide general guidance for the evaluation function without restricting practice choices. Prescriptive policies, on the other hand, include extensive details and instructions that effectively constrain evaluation practice and reduce the flexibility needed to learn from it. As one participant explained,

Evaluation policy at the federal government is considered to be very prescriptive, and it results in evaluations that are so focused to meet the requirements of the policy that they set aside the actual informational needs of stakeholders. So evaluators really struggle because the policy is so explicit about the fundamental issues and the key evaluation questions to be asked. They are so focused on those that they are unable to match [them] to the actual needs of stakeholders. So they produce evaluations, and they check the boxes and are able to say yes, we did it. (IP16)

Further, participants thought that overly detailed evaluation policies not only restrain capacity and are difficult to maintain but are also deleterious to the quality of evaluation and thus may potentially do more harm than good. As one of the participants pointed out,

The EU is prescribing its evaluation policy and the rats pop out of the earth; not bureaucrats but rats. Folks that have no idea what they are doing, but they use the right words because the evaluation policy is prescribed and articulated. These guys are copying the words and putting them out to tender, and then they get it done. This is really a perverse effect of evaluation policies, and I see it happen all the time. (IP2)

Nonetheless, many participants agreed that effective evaluation policy is both a source of information and a tool for highlighting potential areas of concern and offering guidance without restricting evaluation practice. When there are restrictions on evaluation practice, capacity can be limited. Thus, recognizing the effect of these contextual factors is critical for understanding the dynamics of the role of evaluation policy in building evaluation capacity and identifying the challenges involved in the process.

Perceived Purpose: Learning Versus Accountability

Organization members’ perceived purpose of the evaluation function is critical for the role of evaluation policy in fostering evaluation use. As a participant noted: “Evaluation is actually something that means something when you’re tasked with either carrying out evaluations or contracting them out or managing them” (IP17). A common understanding of the meaning of evaluation within the organization and the familiarity of organization members with evaluation principles moderate the extent to which evaluation policy will impact use. Another participant explained:

There are different ways of viewing evaluation, and then there are different ways of understanding what policy is. As a strategy tool to guide and direct how the ways in which evaluation is used, it depends on how it’s conceived, how it’s looked at, how it’s conceptualized within the organization and how that’s used to guide and direct evaluation practices. (IP5)

Organization members tend to conflate evaluation and audit, treating them as a single concept or function within the organization, with deleterious effects. Such confusion undermines support for and use of evaluation as a mechanism for learning and improvement and, ultimately, limits the role of evaluation policy. While the purpose of evaluation is to understand and assess the merit and worth of programs and to identify the best alternatives to inform decision making and generate improvement, the purpose of auditing is to assess the degree of compliance of a program with predetermined standards or objectives. When evaluation is done in an audit-like fashion, its meaning, value, and purpose diminish; the knowledge that the evaluation eventually produces is likely to be either useless or irrelevant for capacity building activities. For many participants, the primary focus on accountability as the major purpose of evaluation has significant, potentially negative, implications for evaluation, especially in terms of its learning benefits and use. Nevertheless, a number of participants acknowledged that accountability is an integral part of evaluation and that evaluation policy should reflect this reality. Yet, for evaluation policy to foster evaluation use, it should make accountability meaningful in a way that reflects the true value of evaluation.

Organizational Leadership

Several interviewees underscored the importance of organizational leadership to the evaluation policy–capacity building relationship. They suggested that leaders must support evaluation and communicate to both staff and partners its importance for improving the organization’s effectiveness. Such practice can make a significant difference in establishing an organizational environment for ongoing learning and improvement in which organization members consistently want to perform at their best. A participant explained as follows: “Unless evaluation and capacity development are supported by senior managers, they won’t be prioritized throughout the organization” (IP18). The right blend of leader pressure and support can breathe life into evaluation policy and move it from a static document to actions that boost opportunities for learning and capacity building.

Evaluator Role and Skill

The role evaluators play within the organization and their evaluation knowledge and skills influence the effectiveness of evaluation policy and enable or hinder its role in building organizational capacity. Essential technical skills include the identification of evaluation issues, the use of appropriate data collection methods, and the generation of evidence-based recommendations. Softer interpersonal skills such as building client trust, communicating evaluation messages with clarity and transparency, and being responsive to stakeholders’ information needs were also mentioned as salient. A few participants also shared that evaluators have an important role in planning for and facilitating learning from the evaluation. Evaluators play a key role in educating organization members and improving their knowledge and skills regarding evaluation. As a participant noted: “Evaluators are the best people to talk about the policy. They are the passionate people to talk about it. They are able to answer questions and explain things, so they are best placed to do that” (IP18).

Another participant further argued that evaluators could act like coaches to guide organization members to achieve the goals of the evaluation policy and support them in building their capacity and knowledge about evaluation. Indeed,

When the organizations have a policy or guidelines, I think the evaluator needs to take on the role of a coach, coaching people about evaluation to keep them moving along. The last thing you want is this whole document to sit on the shelf because people don’t know what to do with it. (IP5)

Evaluators should also work with the organizational leadership to promote the value of evaluation as a management tool. One interviewee put it this way:

The directors of the organizations are not trained in evaluation, and they don’t have the skills, so they do the best they can to be compliant, but they don’t have the background or training like evaluators. They are not evaluators. Evaluators need to work with them to get there. (IP7)

The evaluator’s communication skills are critical for promoting evaluation and using the policy to foster evaluation capacity. One of the participants commented that “people need to be pulled into evaluation more than pushed to evaluation. You can push them to evaluation through mandates and policies, but I don’t think that’s going to get you much more than sort of ‘check-off-the-box’ answers” (IP1).

Communication between evaluators and other organization members is an important factor that affects not only whether they are supportive of the evaluation but also their perception of the value of the evaluation and its use. Thus, evaluators with the right skill set, and perhaps especially soft skills, play a critical role in helping organization members to understand the value of evaluation and develop their knowledge to address the long-term change the policy aims to achieve.

Stakeholder Engagement

Through our analyses, we identified the engagement of policy stakeholders in the policy-making process and/or the evaluation process and the learning that takes place during and after such processes as enabling moderating variables. A large majority of the participants emphasized the importance of ensuring the responsiveness of evaluation policy by actively involving those who will be affected by it.

Stakeholder engagement can enhance voluntary compliance with the policy for two reasons. First, stakeholders may adjust to changes more easily when those changes are made with opportunities for input, which allows them to anticipate and overcome challenges quickly. Second, stakeholder engagement engenders a sense of legitimacy and shared ownership that motivates stakeholders to abide by the policy.

Some participants also affirmed that the evaluation policy should set clear objectives for stakeholders’ engagement in the evaluation process in order to strengthen the relevance and use of evaluation findings. They explained how evaluation policy could leverage participation in evaluation activities that will eventually increase stakeholders’ evaluation capacity. One of the participants indicated that if evaluation policy relies on a participatory approach and promotes the inclusion of all relevant actors in the evaluation process, more attention will be paid to marginalized, disadvantaged, or less powerful groups: “Participation is crucial. It is everything. If you don’t have the agreement of the people who are going to use it, who are going to endure the change, I don’t think it’s worth doing it.” Thus, evaluators can use the evaluation policy to take on a purposeful role in stimulating evaluation capacity use by engaging stakeholders, communicating with them throughout the evaluation process, and identifying teachable moments when participants can learn and apply various evaluation skills.

Integration and Use

We learned from participants that the impact of evaluation policy on the organizational capacity to use evaluation is moderated by its integration into decision-making processes and by how the purpose of the evaluation function is conceptualized within the organization. The ability of evaluation policy to positively foster evaluation use depends on the extent to which the organization’s management relies on evaluative information and uses it for decision making. If management does not demonstrate to staff how they can integrate evaluation and use it to make decisions, take action, and learn, evaluation policy’s potential to enhance evaluation use remains unrealized. This claim is illustrated nicely by the following comment:

It’s not just the policy that is going to make that difference in itself. It’s one way, but we’re talking about evaluation use. If it is in the policy but you never hear about it from top management, then other managers are not going to take it seriously themselves. (IP14)

Some participants also pointed out that when there is an evaluation policy at the government level, evaluation may be thought of as a burdensome “fill-in-the-form” and “check-the-box” exercise. As such, it will have no impact on building organizational capacity because it is not integrated or used for strategic decision making. The mentality around evaluation use in most government organizations is more summative or accountability-focused than formative. It is perceived as a control mechanism rather than as a learning and improvement process. In such cases, the role of evaluation policy in building evaluation capacity is compromised; it is most likely perceived as a mere tool for enforcing control.

Some participants also explained that the symbolic use of evaluation as a strategy for retrospectively justifying decisions already made within an organization might be prevalent. Such practice not only limits the role of evaluation policy in fostering evaluation capacity but also reflects the persistence of an unfavorable gap between evaluation practice, on the one hand, and the use of evaluation as a mechanism for learning and improvement, on the other. In this sense, many participants expressed concern that evaluation and evaluation policy are used as tools to preserve the status quo rather than to explore and challenge assumptions. Alternatively, some participants noted that when evaluation information is clearly integrated and connected to decision-making processes, the evaluation policy can signal to staff and the organization what the evaluation is going to be used for and on what values the evaluation practice is based.

Summary and Integrative Conceptualization

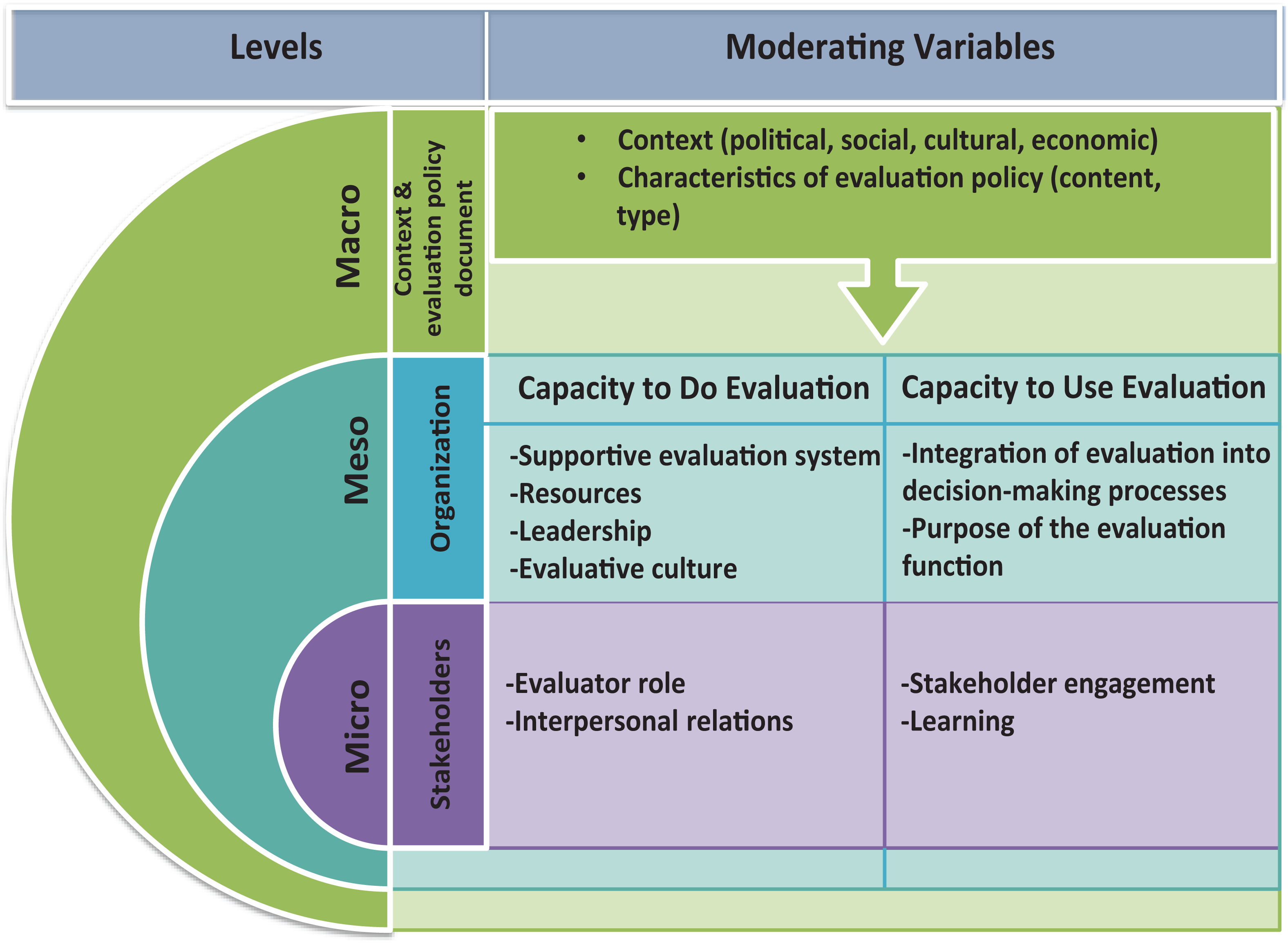

The above thematic analysis shows a range of variables that interview participants discussed as moderators of evaluation policy’s influence on organizational evaluation capacity. Described were variables associated with external and internal organizational contextual conditions, attributes and perceptions of the policies themselves, enabling factors associated with policy implementation, and reciprocal effects of organizational benefits from policy. Several of these variables touch multiple levels (individual, organizational, and extra-organizational) and tend to interact across these levels. With this in mind, we developed a three-level ecological framework (see Figure 1) that illuminates the multiple interconnected variables/conditions that moderate the relationship between evaluation policy and organizational capacity to do and use evaluation.

Figure 1. Ecological framework of moderating variables that explain the relationship between evaluation policy and organizational capacity for evaluation.

This framework comprises the following: the

As shown in Figure 1, moderating variables operate at one of three levels. At the macro level, our analyses revealed that the complexity of organizational context and characteristics of the evaluation policy itself were operative. At the meso and micro levels, moderating variables were associated with the organizational capacity to do evaluation and to use it, respectively. Having a supportive evaluation system and required resources helps to foster capacity to implement and/or oversee evaluation studies at the organizational level. To the extent that the organizational culture is conducive to evaluation and leaders are inclined to promote it, capacity building is enhanced. These variables influence and are influenced by evaluator roles and skills and their relations with other organizational members at the stakeholder level.

Organizational capacity to use evaluation, on the other hand, is enhanced as evaluation becomes more integrated into the decision-making processes and the purposes of evaluation extend well beyond meeting accountability demands. Specifically, embracing a learning orientation with regard to evaluation use is likely to be a powerful means for organizations to develop capacity. At the stakeholder level, stakeholder engagement and learning from evaluation processes and results are salient elements of building the capacity for use. We now turn to a discussion of these findings and their implications moving forward.

Discussion

In this section, we discuss three significant themes informed by the findings of this research pertaining to evaluation policy’s role in developing organizational capacity for evaluation: (1) evaluation context, (2) evaluation purposes (accountability vs. learning), and (3) leadership. These three themes interconnect with, and arise from, those emerging from the interview findings. We conclude by considering some limitations of our study and the implications for ongoing research.

Evaluation Context

Over the course of this empirical study, the undeniable role of context and its influence on the relationship between evaluation policy and organizational capacity for evaluation became increasingly evident. The sociopolitical, cultural, and economic contexts of an organization are critical and pervasively influence evaluation practice. Context is also the most salient, influential consideration shaping the ECB process. Many scholars have argued that understanding an organization’s context can generate insights that are important for explaining how the interaction between hierarchies, systems, structures, and people influences evaluation processes and use within organizations (Chelimsky, 2012; Coldwell, 2019; Vo, 2013; Vo & Christie, 2015).

Organizations considering the development and installation of evaluation policy would benefit from a thorough contextual analysis. First, such analysis can identify where gaps exist in the organizational capacity to implement evaluation, including in human resources, organizational, and administrative systems. Second, it can help illuminate why these gaps exist in relation to sociopolitical, economic, and cultural factors, as well as specific statutory and regulatory systems that constrain or enable capacity-building efforts. Evaluation policies based on the premise that capacity building is essentially a matter of “replicating best practices” regardless of organizational context have little chance of being effective.

Evaluation Purpose: Accountability and Learning

Study participants almost uniformly insisted that evaluation policies are mostly symbolic and accountability-driven and that the requirements of evaluation policies add to the existing tension between the accountability-oriented needs of funders and the learning needs of other evaluation stakeholders (e.g., program developers, managers, and organization staff). They argue that a prevailing emphasis on accountability can have considerable adverse effects on the evaluation function precisely because it undermines the learning value of evaluation. Organizations that are only producing evaluations to satisfy the funders’ needs and leverage ongoing funding unwittingly increase the incentive for “strategic misrepresentation” of evaluation findings (Lehtonen, 2014). As such, evaluation becomes a time-consuming, menial task likely to be irrelevant for internal use within the organization (Ebrahim, 2005).

This tension between accountability and learning as competing justifications for evaluation has been a subject of interest for a number of scholars in recent years because of its significant impact on evaluation practice (Chouinard, 2013; Feinstein, 2012; Guijt, 2010). Some evaluation theorists assert that “accountability-based” and “learning-based” evaluations are qualitatively different (Armytage, 2011; Lennie & Tacchi, 2014). The question for many is how to reconcile the imbalance of these two forces, especially in the face of seemingly ever-increasing demands for accountability (Armytage, 2011; Lennie & Tacchi, 2014; Regeer et al., 2016). This is not an either/or question. It is a question of redressing the imbalance and highlighting the potential power of learning-oriented evaluation; to a substantial degree, the enhancement of organizational capacity for evaluation depends on it. By implication, there is a role for evaluation policy in reconciling this tension.

Organization Leaders’ Evaluation Capacity

We learned about the indispensable role of leadership in fostering the capacity to do and use evaluation. Leadership must be seen as supporting and promoting evaluation in order for policy to leverage organizational capacity for evaluation. Leaders can do this by: (i) understanding how strategy and evaluation are interconnected (or should be interconnected); (ii) ensuring adequate resources for evaluation; (iii) being active consumers of evaluation information; and (iv) using evaluation as a means for ongoing organizational learning (Preskill, 2014). Leaders should place a high value on evaluation by consistently seeking evaluative information for planning, implementation, and decision-making processes—in other words, leaders have to walk the talk (Norman, 2002).

Within our ecological framework, leadership not only moderates the role of evaluation policy but also serves to link the capacity to do evaluation and the capacity to use it, particularly at the organizational level. Leadership emerges as a variable critical for supporting or leveraging the capacity to plan and implement evaluation effectively, on the one hand, while being responsible for integrating evaluation into decision-making processes, on the other. Leaders (especially senior organizational leaders) have the power and the influence to support the ongoing development of organizational capacity for evaluation.

As such, the implications for leadership development are significant. Yet, as is demonstrated by the findings of Labin et al. (2012) regarding ECB, “leadership was the least frequently targeted organizational factor and the least frequently reported organizational outcome” (p. 321). At the same time, as Preskill (2014) noted, we have not “paid enough attention to the role senior leaders play in organizations, and how they influence, shape, and sustain an evaluation and learning culture” (p. 117). Our research underscores the need to delve more deeply into leadership as a moderating variable than is presently the case.

Limitations of the Study

The context within which this research was undertaken entails three noteworthy limitations on the interpretation of its findings. First, the design of the study leaves the findings and interpretations open to researcher bias. To address this, we kept systematic records of all data collection sources and efforts. Further, our analyses were informed by previous scholarship, and the conclusions are linked to extant contributions.

A second limitation is that our findings are somewhat evaluation-centric. Those who participated in the interviews were evaluation scholars and practitioners who have made significant contributions to the advancement of the evaluation field. The study could have benefitted from a more deliberate inclusion of evaluation users, such as organization managers and senior decision makers, to ensure the integration of multiple points of view. Nevertheless, we can say with confidence that the findings of this study represent points of view of participants from many different regions and backgrounds, which touches on a third limitation.

Our data emerged from interviews with professionals located in Canada, the United States, and Europe. While we have highlighted the importance of context, a comparison of, for example, North American versus European perspectives was beyond the scope of the present study, and our data do not provide latitude to support such comparative analyses. We note that several participants, although located in North America or Europe, work in international development. In the literature, there is some evidence to show that global Western approaches to evaluation are pervasive, not only in the West but also in international development contexts (Chouinard & Hopson, 2016). Still, it would be beneficial for ongoing inquiry to look more closely at organizational context and its implications for evaluation.

Implications for Research

This exploratory research breaks new ground in the ECB domain of inquiry. As a field, we have developed a reasonably deep understanding of what constitutes effective ECB. Our research takes the next step with this knowledge by situating it within the context of evaluation policy influence. We hope that the evidence-based ecological framework we developed (see Figure 1) will serve to guide ongoing inquiry in this area. Important research questions—not the least of which is the role of context in moderating the influence of policy on organizational evaluation capacity—might arise from the constructs identified in Figure 1 and the suggested relationships among them.

Our understanding of evaluation policy could be enhanced by more conceptually informed investigations. Thus far, much of the research on evaluation policy has focused on aspects of evaluation practice that these policies might be used to govern. Findings from the present research suggest that the field may benefit from continued probing into how specific aspects of evaluation practice interact with various features of evaluation policy and how such interaction influences ECB. For instance, how does evaluation policy affect evaluation design? How does it affect ECB activities? How does it affect stakeholders’ level of engagement?

Another implication for research is methodological. We used qualitative interviews as the primary means of data collection in this study. Future research might benefit from using mixed and/or quantitative methods to test the variables in the ecological framework, thereby providing a different level of analysis. For example, questionnaire survey methods could yield interesting findings in terms of weighting the importance of variables, understanding interrelations among them, and providing insights into the existence of other variables. Particularly rewarding might be studies featuring stakeholder perceptions about these variables or even comparisons with those of evaluators. Alternatively, case studies of varying organization types would provide a deeper understanding of what evaluation policy means in practice.

In conclusion, the findings presented here serve as an important first step in broadening our knowledge of the impact of evaluation policy on evaluation practice. If we agree that ECB has an explicit goal of developing organizational capacity for evaluation, it is important that we expand and deepen our knowledge about ECB processes by situating them within a broader context of evaluation policy.

Footnotes

Authors’ Note

This study was part of the doctoral dissertation of the first author completed at the University of Ottawa. The authors are grateful to thesis committee members for their input.

Acknowledgments

The authors thank the Saudi Cultural Bureau and the University of Ottawa for support provided during the research.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.