Abstract

Open science aims to enhance the integrity, transparency, and openness of research to improve the reproducibility and accessibility of scientific knowledge. It has received renewed attention due to reported concerns about questionable research practices across multiple scientific disciplines. While various open science practices, such as preregistration and data sharing, have been developed, their effectiveness remains unclear. This paper provides a review of current meta-research on open science practices, assessing their effectiveness and identifying key initiatives that promote transparency and openness in research. Next, we report the results of a preregistered retrospective observational analysis of 517 studies from 254 papers published in the Journal of Service Research and Journal of Service Management between 2019 and 2023. This analysis evaluates which open science practices are already in use and to what extent, as well as whether these practices align with the recommendations derived from the meta-research review. Finally, we present actionable guidelines and resources aimed at encouraging authors, reviewers, and editors to adopt effective open science practices in service research, both in the short and long term.

Keywords

Open science promotes a research culture based on integrity, transparency, and openness (Nosek et al. 2015). Open science involves a range of practices aimed at making research more transparent, including increased transparency in the study objective and design (e.g., open materials), transparency in intentions (e.g., preregistration), transparency in data and analysis (e.g., open data and scripts) and transparency in production (e.g., open access; Miguel et al. 2014). These initiatives aim to make research as understandable, evaluable, and accountable as possible, and—above all—to strengthen the reproducibility of science (Nosek et al. 2015). Although open science is not new, it has gained renewed focus due to growing concerns over questionable research practices (QRPs), including data manipulation, selective reporting, and data fabrication (Banks et al. 2016; John et al. 2012). These concerns have been raised across many scientific fields, including neighboring fields like management, economics, marketing, and operations management (e.g., Aguinis et al. 2020; Camerer et al. 2016; Davis et al. 2023; Motoki and Iseki 2022).

The move toward open science brings both benefits and challenges, creating a debate about the overall efficacy of these practices (Suls et al. 2022). Advocates argue that practices like preregistration enhance transparency in studies and alleviate QRPs (Nosek et al. 2015; Simmons et al. 2021), while critics caution that such measures are not a panacea for the reproducibility crisis or a guarantee of better science in general (Pham and Oh 2021; Souza-Neto and Moyle 2025). The literature remains inconsistent on the effectiveness of various open science practices. Without clear evidence, service researchers may struggle to decide whether open science is worth pursuing, and if so, which specific practices should be adopted. Addressing these uncertainties is crucial to determining the most effective and beneficial open science practices for the service research community.

To address this gap in understanding, we review current meta-research on open science practices, published across a large variety of research fields (e.g., communication, general science, political science, psychology, medicine). Meta-research provides valuable insights by evaluating the effectiveness and impact of open science practices across various disciplines (e.g., Hardwicke et al. 2022; Weiss et al. 2023). A review of these studies allows us to identify key open science initiatives and assess the available (or the absence of) evidence for their effectiveness. This effort will enable us to offer service researchers evidence-based recommendations regarding what works and what doesn’t, guiding researchers in adopting strategies that best support credible, transparent science.

In the next phase of our study, we estimate the prevalence of open science practices in service research by conducting a preregistered retrospective observational analysis of articles published in the Journal of Service Research and Journal of Service Management between 2019 and 2023. This analysis allows us to evaluate which open science practices are already in use and whether these practices align with those recommended based on our meta-research review. By establishing a baseline for current open science practices, we can identify areas where further efforts may be beneficial and provide a foundation for tracking future progress. Our goal here is to present an overview of current practices in service research, without implying prescriptive or evaluative judgments.

Finally, although many researchers are aware of open science, its practical application is often not straightforward (Diederich et al. 2022). We therefore connect the insights gained from our review and observational study to propose practical guidelines and tools for advancing effective open science practices in service research, while acknowledging that not all approaches may be equally effective within this field. Ultimately, we hope to encourage all stakeholders (authors, reviewers, and editors) to contribute to bridging the open science-practice gap (Aguinis et al. 2020).

This paper is organized as follows. First, we provide a historical overview of the open science movement, exploring its potential applications in service research. Next, we synthesize previous meta-research on open science, offering an evidence-based discussion of the effectiveness of various open science practices. We then detail the methods used in our retrospective observational study and present our findings. Finally, we discuss implications for service researchers and offer a roadmap for implementing open science practices within this field in both the short and long term.

Open Science: Origins and Developments

At the heart of open science is the commitment to promoting transparency and openness across all stages of scientific work to enhance the quality and trustworthiness of scientific knowledge (Munafò et al. 2017). The origins of the open science movement date back to the 17th century when knowledge creation began to be institutionalized and professionalized through the formation of scientific associations, or academies, and the development of the journal publication system (Bartling and Friesike 2014). The establishment of academic journals such as Philosophical Transactions of the Royal Society in 1665 marked the beginning of a formalized system that aimed not only to publicly share scientists’ ideas and disseminate knowledge but also to establish priority and provide appropriate credit for scientific discoveries and inventions (Hull 1985). This scientific revolution introduced new norms that encouraged scientists to rapidly and transparently share their findings, enabling others to evaluate and build upon them, while appropriately acknowledging the value of their contributions (David 2004).

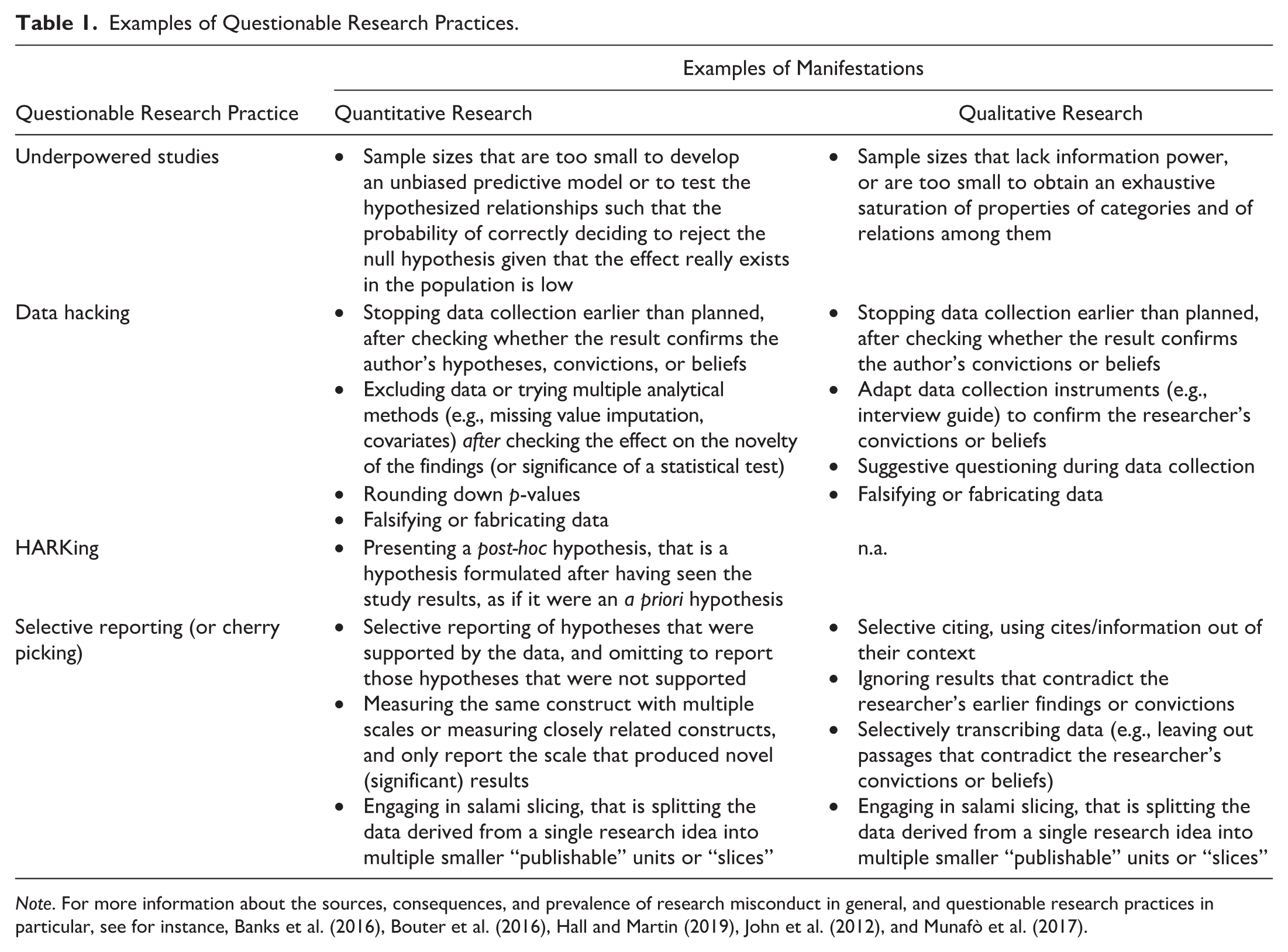

Building on the core concept of publishing, a variety of institutionalized and commercialized structures soon emerged, leading to an increase in proprietary research and closed systems that gradually diverged from the principles of transparency and openness (Bartling and Friesike 2014; Hull 1985). As the evaluation of scientific impact evolved around this new publishing system, competition intensified both among individual scientists and among the institutions funding their work (Bartling and Friesike 2014). This competitive climate, coupled with substantial rewards tied to measurable outputs such as publications and citation indexes, created an environment where researchers might pursue novel findings—or “significant” relationships in quantitative research—potentially through “questionable research practices” (Banks et al. 2016; John et al. 2012). Table 1 provides an overview of such practices in both quantitative and qualitative research. These practices, along with a lack of transparency in the research process, contributed to what is known as the “reproducibility crisis” (Aguinis et al. 2024; Munafò et al. 2017).

Table 1. Examples of Questionable Research Practices.

Note. For more information about the sources, consequences, and prevalence of research misconduct in general, and questionable research practices in particular, see for instance, Banks et al. (2016), Bouter et al. (2016), Hall and Martin (2019), John et al. (2012), and Munafò et al. (2017).

In response to these challenges in modern science, a renewed movement has emerged to reestablish open science as the standard in research. This movement has gained momentum across numerous scientific disciplines, including fields closely related to service research. For example, in early 2023, both the Journal of Marketing and Journal of Marketing Research adopted a new research transparency policy. Both journals require authors to submit replication packages—including materials, data, and code—with each round of invited revisions. Marketing Science and Management Science implemented a similar policy in 2013 and 2019, respectively. Additionally, The Leadership Quarterly adopted the registered report model in 2017 as an alternative to traditional publishing options. This journal is currently involved in a pilot program testing a registered revisions process (Gerpott et al. 2024). In 2023, MIS Quarterly introduced a special issue dedicated to registered reports to evaluate the model’s applicability.

The open science movement has also gained strong support from various stakeholders, including funding agencies, political entities, and intergovernmental organizations. In the EU, for example, the Horizon Europe program mandates that all funded scientific research be made openly accessible immediately following peer-reviewed publication. Additionally, related research outputs, such as data and instruments, must be made available as soon as possible to support reproducibility (European Commission n.d.). Similarly, the United States’ National Science Foundation (NSF) has launched the “Public Access Initiative,” which aims to make NSF-funded publications openly accessible upon publication by 2025. The United Nations Educational, Scientific and Cultural Organization (UNESCO) regards open science as “a critical accelerator for achieving the United Nations Sustainable Development Goals and a true game changer in bridging the science, technology, and innovation gaps, as well as fulfilling the human right to science” (UNESCO 2023).

In summary, open science is not a new concept; rather, it has regained interest following the reproducibility crisis. Over the past decade, various practices have been proposed to enhance the transparency and reproducibility of scientific work. However, the literature remains mixed regarding the effectiveness of open science, leaving researchers uncertain about which practices are most beneficial. The following section addresses this issue.

Meta-Research on the Effectiveness of Open Science Practices

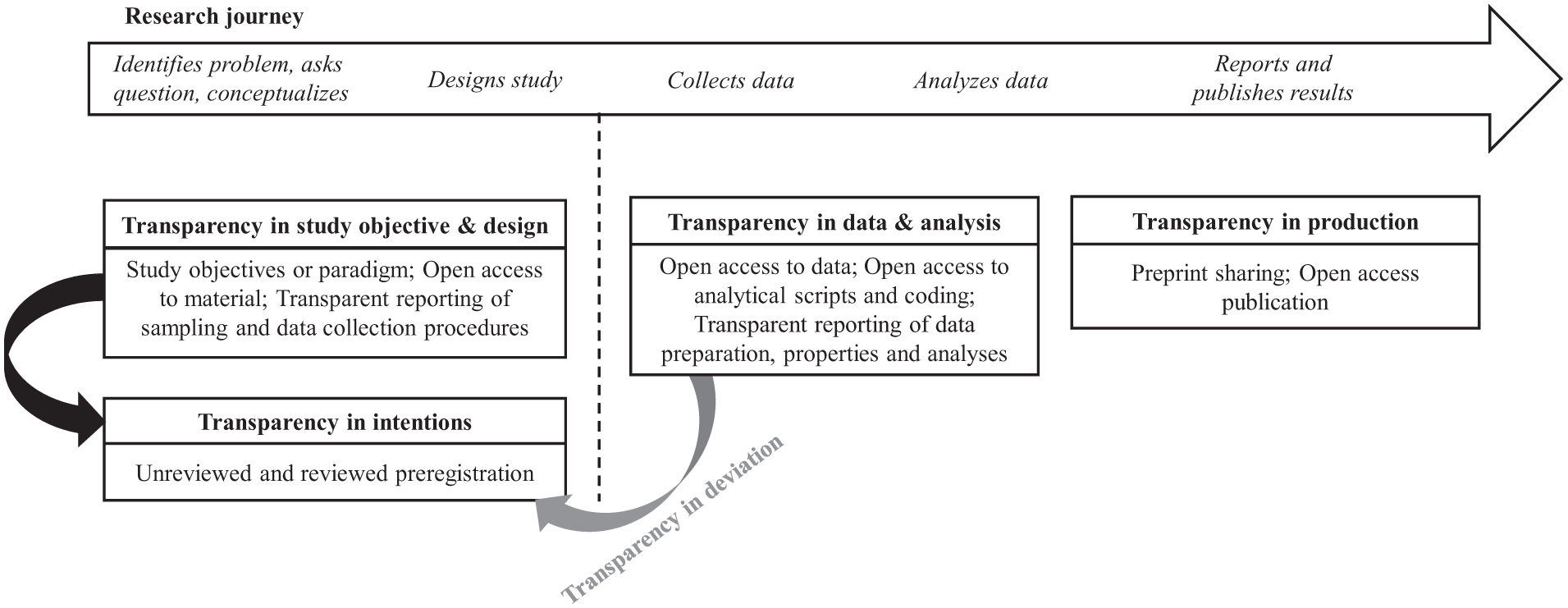

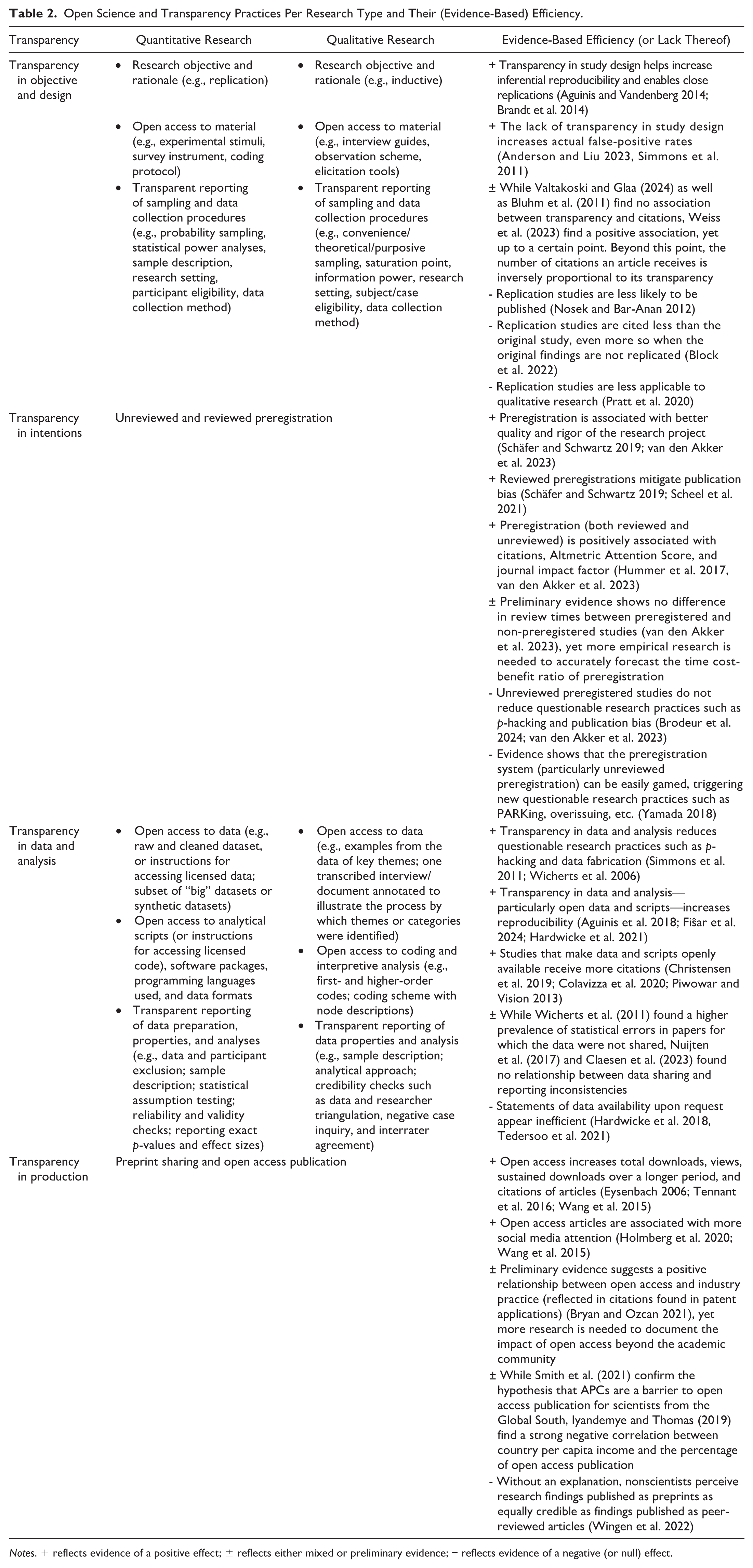

The open science movement has led to the development of various recommendations aimed at enhancing the transparency and openness of research. Following Miguel et al. (2014), we categorize these practices into four groups: (1) Transparency in study objectives and design; (2) Transparency in intentions; (3) Transparency in data and analysis; and (4) Transparency in production. Figure 1 provides an overview of these transparency practices at various stages of the research journey. In the following sections, we focus on practices that are considered prevalent observable indicators of open science, discussing evidence—or the lack thereof—for their effectiveness. To enhance readability and address space constraints, we present a synthesis of the evidence on the effectiveness of open science practices in Table 2 and draw major conclusions here. Unless specified differently, most of the open science practices we discuss are broad enough to apply to all types of research.

Figure 1. Transparency-related practices along the research journey.

Table 2. Open Science and Transparency Practices Per Research Type and Their (Evidence-Based) Efficiency.

Notes. + reflects evidence of a positive effect; ± reflects either mixed or preliminary evidence; − reflects evidence of a negative (or null) effect.

Transparency in Study Objective and Design

What

The first tenet of the open science movement is to enhance transparency in study objectives and design. This includes clearly specifying a priori study objectives and hypotheses (if any), sampling methods, and sample size rationale. Transparency in study objectives and design also includes sharing relevant materials for data collection and/or extraction (Aguinis and Solarino 2019; Aguinis et al. 2021; Hardwicke et al. 2022). For quantitative research, it involves clearly articulating the research objective and rationale, such as the aim of replication, and providing open access to materials like experimental stimuli and coding protocols. Furthermore, transparent reporting of sampling and data collection procedures (such as using probability sampling, conducting statistical power analyses, and detailing participant eligibility) enhances the rigor and reproducibility of findings (Aguinis et al. 2021; Hardwicke et al. 2022). In qualitative research, transparency in study objectives and design involves articulating the research objective and rationale, often inductive in nature, and ensuring open access to materials like interview guides and observation schemes (Aguinis and Solarino 2019). Reporting on sampling methods, such as convenience or purposive sampling, as well as on data collection procedures (including saturation points and eligibility criteria), further supports the credibility of qualitative findings (Aguinis and Solarino 2019; Verleye 2019).

The open science movement also advocates for “close” replications to validate findings across studies, highlighting a critical need for replication. This recommendation was reinforced following the study by the Open Science Collaboration (2015), which found only 36 of 100 prominent psychological experiments could be replicated successfully. Similar studies were also conducted in economics (Camerer et al. 2016), operations management (Davis et al. 2023), and entrepreneurship (Crawford et al. 2022), among others.

Evidence About the Effectiveness

Transparency in study design significantly enhances inferential reproducibility and facilitates close replications, thereby contributing to the credibility of research findings (Brandt et al. 2014). Conversely, a lack of transparency can lead to increased false-positive rates, undermining the integrity of scientific conclusions (Anderson and Liu 2023; Simmons et al. 2011). While some studies found no clear association between transparency and downloads or citation rates (Bluhm et al. 2011; Valtakoski and Glaa 2024), Weiss et al. (2023) identified a positive correlation up to a certain threshold; excessive transparency in research can have negative effects when authors report too many details. Standardized reporting practices may also restrain methodological diversity (Symon et al. 2018). While transparency is essential for reproducibility, accurately conveying all methodological nuances is challenging (Bowman and Spence 2020). Full transparency raises concerns about the misuse of materials (Kuhlau et al. 2013), and space limitations often lead authors to omit details that could be shared in public repositories.

Replication studies, in particular, face publication bias: they are less likely to be published and tend to receive fewer citations than original studies, particularly when original findings are not replicated (Block et al. 2022; Nosek and Bar-Anan 2012). These limitations may lead researchers to refrain from conducting replication studies, or to prioritize small, easily replicable studies over large-scale data efforts aimed at testing complex theories, a phenomenon Guzzo et al. (2022) refer to as the “paradox of replication.” In addition, the results of replication studies are often not straightforward to interpret, as replication failures can also result from methodological challenges or misinterpretation, rather than indicating that the original findings are incorrect or fraudulent (Brandt et al. 2014). Finally, replication logics developed largely in the positivist, deductive field of (quantitative) research. Applying these logics to the inductive, theory-generating side of (qualitative) research is argued to be ontologically problematic and potentially harmful (Pratt et al. 2020).

Transparency in Intentions

What

A second key tenet of open science is the need to specify the research plan before starting the research. Transparency in study design promotes transparency in intentions (Miguel et al. 2014). Preregistration serves this purpose by allowing authors to specify their objectives, hypotheses, planned research design, and analysis plan using a time-stamped protocol before data collection (Wagenmakers et al. 2012). There are two models of preregistration: unreviewed preregistration, where authors reference a preregistration document in a trusted online repository, and reviewed preregistration (or registered reports), where studies and protocols undergo peer review before data collection (e.g., van’t Veer and Giner-Sorolla 2016). Once studies or protocols have been revised following the reviewers’ comments and judged appropriate for publication, authors receive an In Principle Acceptance. This acceptance ensures publication if the authors follow the registered procedures, regardless of the significance or nature of the findings.

Evidence About the Effectiveness

Preregistered studies are generally associated with higher citation rates and Altmetric Attention Scores, suggesting that they gain greater visibility within the academic community (Hummer et al. 2017; van den Akker et al. 2023). Preregistration of research studies is recognized for increasing the quality and rigor of research projects (e.g., Schäfer and Schwartz 2019; van den Akker et al. 2023). Preregistration also serves as a valuable tool in mitigating questionable research practices and publication bias by committing researchers to their predefined study designs and analysis plans (Schäfer and Schwartz 2019; Scheel et al. 2021). These benefits, however, seem to apply mainly to reviewed preregistration (van den Akker et al. 2023). Unreviewed preregistration may not adequately reduce questionable research practices like p-hacking. On the contrary, it could even encourage new questionable research practices, including preregistering after results are known (“PARKing”) and overissuing (i.e., preregistering multiple studies or versions of the same study privately or on different platforms, and only reporting the preregistered studies that produced significant findings while abandoning the rest; Brodeur et al. 2024; Yamada 2018).

Additionally, preregistration can be time-intensive (Suls et al. 2022). Researchers need more time to prepare and submit their preregistrations, to anticipate all the analyses they may want to run while factoring in how the data may turn out, and to document any unexpected changes to the initial preregistration (referred to as “Transparency in deviation” in Figure 1). Editors and reviewers, in turn, need time to do the “verification work” and evaluate the alignment between preregistrations, amendments, and the submitted manuscript. The demands of preregistration are likely to be higher for qualitative researchers, as making the preregistration a “living document” is often necessary to accommodate the flexible nature of qualitative research (Haven et al. 2020; Pratt et al. 2020). Preliminary evidence, primarily from quantitative studies, suggests that preregistered and non-preregistered studies do not differ significantly in review times (van den Akker et al. 2023), indicating no immediate time-cost benefits of preregistration.

Transparency in Data and Analysis

What

The third tenet of open science is to make data, analytical scripts or coding schemes, data cleaning procedures, reliability and validity information, and analytical approaches as transparent and openly available as possible (Aguinis et al. 2018; Nosek et al. 2015). Of course, access can be restricted and justified based on confidentiality, personal information protection, or intellectual property rights (European Commission n.d.). To ensure the reproducibility of findings, open science advocates for transparent disclosure of data properties and analysis details, as analysis choices can influence and even alter results (Miguel et al. 2014; Silberzahn et al. 2018). For quantitative research, relevant details include data cleaning procedures, statistical assumption testing, reliability and validity checks, reporting exact p-values and effect sizes, or reduction and justification of alpha (e.g., Aguinis et al. 2018; Weiss et al. 2023). As for qualitative research, features include the number of data coders, the process of categorizing data for subsequent analysis (e.g., pattern and axial coding), and credibility checks (e.g., intercoder agreement, negative case inquiry), among others (e.g., Aguinis and Solarino 2019; Tong et al. 2007; Verleye 2019).

Evidence About the Effectiveness

Transparency in data and analysis provides significant benefits, including the reduction of questionable research practices like data fabrication (Wicherts et al. 2006) and enhanced reproducibility, which in turn builds trust in scientific findings (Munafò et al. 2017). For instance, Fiŝar et al. (2024) demonstrated that a data and code disclosure policy greatly improved reproducibility in Management Science, with 95% of accessible articles achieving reproducibility, compared to just 55% before the policy. Data availability statements alone may be ineffective though: studies show that less than half of datasets said to be available upon request were accessible (Hardwicke et al. 2018; Tedersoo et al. 2021). Despite high reproducibility rates in some fields, cross-discipline differences highlight variations in effectiveness of data-sharing policies (Fiŝar et al. 2024; Hardwicke et al. 2021).

Evidence regarding whether non-disclosure correlates with higher error rates is mixed. While Wicherts et al. (2011) found a higher prevalence of statistical errors in papers for which the data were not shared, Nuijten et al. (2017) found no relationship between data sharing and reporting inconsistencies across three large retrospective observational studies. In a similar vein, Claesen et al. (2023) did not find robust empirical support for the claim that not sharing research data is associated with consistency errors. Thus, while transparency in data and analysis holds advantages, the advantage mainly lies in the ability to detect errors rather than providing authors an incentive to make results error-free.

Transparency in data and analysis also offers a citation advantage, increasing visibility and impact for researchers who share their work. Studies indicate that articles with data in public repositories receive significantly more citations (Christensen et al. 2019), with increases ranging from 9% (Piwowar and Vision 2013) to as much as 25% (Colavizza et al. 2020). This transparency promotes follow-up studies, such as meta-analyses, and fosters collaboration, leading to enhanced research productivity (Gilmore et al. 2018). These findings underscore the benefits of creating transparency in data and analysis.

Despite its benefits, data and analysis transparency poses challenges and concerns for many researchers. One barrier is the significant resources needed to prepare, document, and share data in accessible formats (Perrier et al. 2020; Tenopir et al. 2011). Some disciplines face additional challenges, as privacy, intellectual property, and ethical concerns may prevent complete transparency, particularly when human subjects are involved (Guzzo et al. 2022). Resource-intensive datasets, fears of potential embarrassment if errors are exposed, and fears of being “scooped” further discourage data sharing (Gleditsch et al. 2003).

Transparency in Production

What

The fourth and perhaps most visible tenet of open science is making the “products” of scientific inquiry more broadly and quickly available, free of charge. By removing obstacles to the dissemination of knowledge, transparency in production would facilitate extensive knowledge sharing, promote equality and collaborations within and between communities, and bridge the gap between academic and general audiences through the endorsement of science in public discourse (Tennant et al. 2016; UNESCO 2023). Preprints and open access publications serve this purpose. Preprints are early-stage, non-peer-reviewed manuscripts posted by authors on dedicated public servers (e.g., SSRN, arXiv). Open access publications are peer-reviewed manuscripts, made publicly available after acceptance or publication. “Green” open access refers to the practice of authors self-archiving a version of their published work in a free, publicly accessible repository, often after an embargo period. Most publishers now offer open access publication options, with payment of an article processing charge (“gold” access). “Diamond” open access eliminates these fees and paywalls, allowing academic work to be published, distributed, and preserved by research institutions and their faculty members, with no fees for either readers or authors. Even though international policies like UNESCO’s (2023) recommendations on open science support diamond open access, this model remains underrepresented to date.

Evidence About the Effectiveness

Transparency in research production enhances the dissemination and impact of scientific knowledge, with a clear citation advantage for open-access articles. Open-access publications receive significantly more citations and visibility. For example, Eysenbach’s (2006) longitudinal study of a cohort of articles published in the Proceedings of the National Academy of Sciences revealed that non-open access articles were twice as likely to remain uncited compared to open access articles. The average number of citations for the latter was more than double that of non-open access articles. Similarly, Wang et al. (2015) reported that open-access articles in Nature Communications saw higher citation counts, downloads, and broader readership. Moreover, open-access research reaches a wider audience beyond academia, gaining social media attention and fostering private-sector innovation (Holmberg et al. 2020; Wang et al. 2015). For instance, Bryan and Ozcan (2021) discovered that open-access articles mandated by funders are more frequently cited in patent applications, highlighting the broader societal impacts of open access. Nevertheless, while the benefits of open access seem established, studies suggest variability in citation advantages across disciplines, indicating a need for further research to clarify these effects (Langham-Putrow et al. 2021; Tennant et al. 2016).

Transparency in production initiatives faces challenges, particularly concerning affordability and quality control. For example, many open-access journals charge substantial article processing fees. These fees potentially disadvantage researchers from low-income regions, though findings on this are mixed (Iyandemye and Thomas 2019; Smith et al. 2021). Predatory journals, which exploit the “author-pays” model by charging fees for minimal peer review, further complicate open-access publishing by compromising research quality (Khalil et al. 2022). Preprints, for their part, have not undergone any peer-review quality control, creating risks if findings are taken as definitive by nonspecialists (Soderberg et al. 2020; Wingen et al. 2022). During the COVID-19 pandemic, for instance, bioRxiv introduced disclaimers on preprints to clarify their non-peer-reviewed status (Brierley 2021). Such measures reflect ongoing efforts to balance transparency with scientific rigor, underscoring the complexities inherent in open-access publishing.

Key Takeaways

The shift toward open science brings both benefits and challenges, with varying effectiveness among practices. Transparency in study objectives and design (e.g., sample size rationale, materials availability) shows promise in reducing questionable research practices and boosting reproducibility. Two challenges must be noted. First, authors need to strike the right balance between being transparent and being too transparent, especially in the paper itself. Second, replication studies remain challenging, as they are more difficult to publish. In terms of transparency in intentions, unreviewed preregistration appears ineffective in reducing questionable research practices and may even prompt others (e.g., PARKing), whereas reviewed preregistration is more successful. Transparency in data and analysis (e.g., data sharing, analysis script sharing) increases reproducibility but faces practical limits: privacy issues, extensive documentation needs, and cross-disciplinary variations. Data availability statements often fall short, with fewer than half of declared datasets truly accessible, highlighting the need for stronger data-sharing policies. Open-access publishing benefits research dissemination, but its citation advantage varies across disciplines. Finally, high article processing fees and predatory journals risk increasing inequities, while preprints, though rapid, may mislead if taken as peer-reviewed.

Adopting open science practices not only enhances the transparency and openness of research findings but also strengthens their credibility and trustworthiness, though their impact varies across different audiences. Studies show that students and academics perceive research that follows open science practices—such as preregistration, replication, and data sharing—as more credible and trustworthy (Schneider et al. 2022; Song et al. 2022). Among the general public, findings are more mixed, with studies reporting no (Schneider et al. 2022) to moderate effects (Song et al. 2022) of open science practices on credibility and trustworthiness. These findings suggest that open science practices enhance credibility and trust among key audiences of academic publications, such as students and academics, while also potentially improving credibility and trust perceptions among the general public.

Overall, these insights provide a balanced view of the effectiveness of open science practices. The extent to which open science practices have been adopted within the service research community remains unclear. Estimating their prevalence is essential to assess the current state of open science in service research and to guide future efforts. Drawing from meta-studies in various social science disciplines, we conducted a retrospective observational study of published service research papers, which we will discuss next.

Adoption of Open Science Practices in Service Research

Protocol and Sample

The study protocol was preregistered on the Open Science Framework on June 4, 2021. We made minor amendments to the original protocol and preregistered these amendments on November 22, 2022, October 6, 2023, and October 2, 2024, following reviewers’ feedback. The preregistration, amendments, coding forms, and dataset can be found in our OSF repository at https://osf.io/2fxt9/.

To assess the adoption of open science and transparency-related practices in service research, we retrieved all articles published in the Journal of Service Research (JSR) and the Journal of Service Management (JOSM) from 2019 to 2023. These two journals are widely regarded as “the key service research journals” (Furrer et al. 2020, p. 8), and hold the highest 2022 two-year impact factors in Web of Science® among service journals (JSR: 12.4; JOSM: 10.6). At the time of this study, they also had similar transparency policies. It is important to note that our aim was not to evaluate the field’s performance relative to other disciplines, and thus we did not include articles from non-service journals. As Fiŝar et al. (2024) observe, comparing transparency (and thereby reproducibility) rates across fields is complex and can lead to misinterpretation. Different journals “often apply different definitions and standards of reproducibility, and reasons for non-reproducibility may vary between journals due to differing policies, enforcement procedures, and data availability conditions in their fields” (p. 1345).

We deliberately chose 2019 as the starting point for our paper selection for the following reason: The reproducibility crisis gained significant attention around 2015, marked by publications that highlighted reproducibility issues in scientific research and proposed potential solutions (e.g., Camerer et al. 2019; Open Science Collaboration 2015). Given that research projects often take several years from initiation to publication, we anticipated that the impact of these recommendations on research practices would become evident a few years after their introduction to the scientific community.

We used Web of Science® to identify studies meeting our eligibility criteria. Due to the specificity of these criteria, our search strategy was limited to a computerized bibliographic search in the Web of Science® database. Using the advanced search option, we retrieved all articles published in JSR and JOSM with the SO field tag and applied a temporal filter: PY = (2019–2023). The resulting full records were exported to Microsoft Excel (v.2408) for analysis. We then downloaded the full text of each article and manually screened them. A total of 391 papers were published between 2019 and 2023, with 171 in JSR and 220 in JOSM. Since our scoping review aims to assess the prevalence of open science and transparency practices in study objectives, design, intentions, analysis, and production, articles that were not considered “empirical papers” or that did not involve primary or secondary data collection and analysis were excluded. Specifically, we removed conceptual papers (n = 97, 24.8%), editorials (n = 23, 5.9%), commentaries and viewpoints (n = 13, 3.3%), methods tutorials (n = 3, 0.77%), and analytical modeling papers (n = 1, 0.26%), leaving a final sample of 254 articles (see Supplemental Web Appendices A and B for sample characteristics).
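
The screening arithmetic above can be re-checked with a short script. This is a minimal sketch that uses only the counts reported in the preceding paragraph; the variable and function names are illustrative and are not taken from the authors' materials.

```python
# Counts as reported in the text; names are illustrative.
retrieved = {"JSR": 171, "JOSM": 220}
excluded = {
    "conceptual papers": 97,
    "editorials": 23,
    "commentaries and viewpoints": 13,
    "methods tutorials": 3,
    "analytical modeling papers": 1,
}

total_retrieved = sum(retrieved.values())
total_excluded = sum(excluded.values())
final_sample = total_retrieved - total_excluded


def share(count, total):
    """Return count as a percentage of total."""
    return 100 * count / total


# Each exclusion share is computed against all retrieved papers.
for reason, count in excluded.items():
    print(f"{reason}: n = {count} ({share(count, total_retrieved):.2f}%)")
print(f"retrieved = {total_retrieved}, excluded = {total_excluded}, "
      f"final sample = {final_sample}")
```

Running the script confirms that the exclusion counts and the final sample of 254 articles are internally consistent with the 391 retrieved papers.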

Coding Process and Analysis

The coding process followed a two-step approach. In the first step, the first author automatically extracted the year of publication, open-access designation, and citation count for the retained papers from Web of Science, with data obtained on July 10, 2024. In the second step, all three authors developed and agreed on a standardized coding protocol (see Supplemental Web Appendix C), which outlines the open science and transparency-related practices to be recorded. This protocol was designed based on similar studies in other scientific domains (e.g., Aguinis and Solarino 2019; Hardwicke et al. 2022) and reporting guidelines from the EQUATOR network (https://www.equator-network.org/).

All 254 articles were coded manually according to this coding protocol. Notably, these 254 articles reported 517 studies. Because multi-study papers are common, the study (not the paper) serves as our unit of analysis. The retained articles were randomly assigned to one of the three authors for coding. All three coders have expertise with meta-analysis and/or systematic literature reviews. To assess intercoder agreement, 52 randomly selected studies were coded twice. This number is in line with the recommendation to use a minimum of 10% of the sample to ensure representativeness when assessing and reporting intercoder reliability (Lombard et al. 2002). Intercoder agreement reached 85.4% across 676 individual codes. Any disagreements were resolved through discussion.
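
The two quantities in this step (the size of the double-coded subsample and the percentage of agreement) can be sketched as follows. The function names and the toy codes are hypothetical and serve illustration only; they are not the study's data or the authors' scripts.

```python
import math


def double_coded_minimum(n_units, fraction=0.10):
    """Smallest whole number of units that covers `fraction` of the sample."""
    return math.ceil(n_units * fraction)


def percent_agreement(codes_a, codes_b):
    """Percentage of paired codes on which two coders agree."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must supply the same, non-zero number of codes")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100 * matches / len(codes_a)


# Hypothetical toy codes for illustration only (not the study's data).
coder_1 = ["yes", "no", "partial", "yes", "no", "yes", "n/a", "yes"]
coder_2 = ["yes", "no", "yes", "yes", "no", "yes", "n/a", "no"]

print(double_coded_minimum(517))             # 10% of 517 studies, rounded up
print(percent_agreement(coder_1, coder_2))   # agreement on the toy codes
```

Simple percent agreement does not correct for agreement expected by chance, so it is best read as a descriptive figure.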

We stored the results of our coding process in a Microsoft Excel (v.2408) file, which we later converted to an .omv file. The data file is accessible in multiple formats on our dedicated OSF page. Following similar retrospective observational studies in the service research domain (e.g., Furrer et al. 2020), we conducted frequency analyses on the data, focusing on the proportion of studies (or articles, where relevant) that meet each evaluated indicator; the denominator used was the number of studies (or articles) applicable to each indicator. The frequency analyses were performed using jamovi (v.2.6.13.0). Additionally, in line with Hardwicke et al. (2022), we report 95% confidence intervals (CIs) based on the Sison-Glaz method for multinomial proportions (Sison and Glaz 1995). These CIs were derived using the DescTools package (v.0.99.57; Signorell et al. 2024) in R (v.4.3.3).
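
To illustrate the frequency analysis with indicator-specific denominators, the sketch below recomputes three proportions from counts reported in the Results. As a loudly stated assumption, it uses the Wilson score interval for a single binomial proportion as a simpler stand-in; it does not implement the Sison-Glaz method used in the paper, so the intervals it prints will differ somewhat from the reported CIs. All names are illustrative.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(successes, n, confidence=0.95):
    """Wilson score interval for one binomial proportion (NOT Sison-Glaz)."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


# (indicator, studies meeting it, studies for which it was applicable),
# with counts taken from the Results section.
indicators = [
    ("sampling approach reported", 287, 517),
    ("sample size rationale reported", 62, 481),
    ("data cleaning procedures reported", 208, 404),
]

for name, k, n in indicators:
    low, high = wilson_interval(k, n)
    print(f"{name}: {k}/{n} = {100 * k / n:.1f}% "
          f"(Wilson 95% CI [{100 * low:.1f}%, {100 * high:.1f}%])")
```

Note that the denominator changes per indicator (517, 481, 404), which mirrors the rule that only applicable studies enter each proportion.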

We are not conducting confirmatory tests of a priori hypotheses. Instead, we present descriptive results. It is important to interpret these results with caution. Although some articles in our sample may not report all the information needed to fully ensure inferential reproducibility, it is possible that authors nonetheless followed appropriate protocols in conducting their studies. Hence, the reported estimates should be considered conservative.

Results

Transparency in Study Objectives and Design

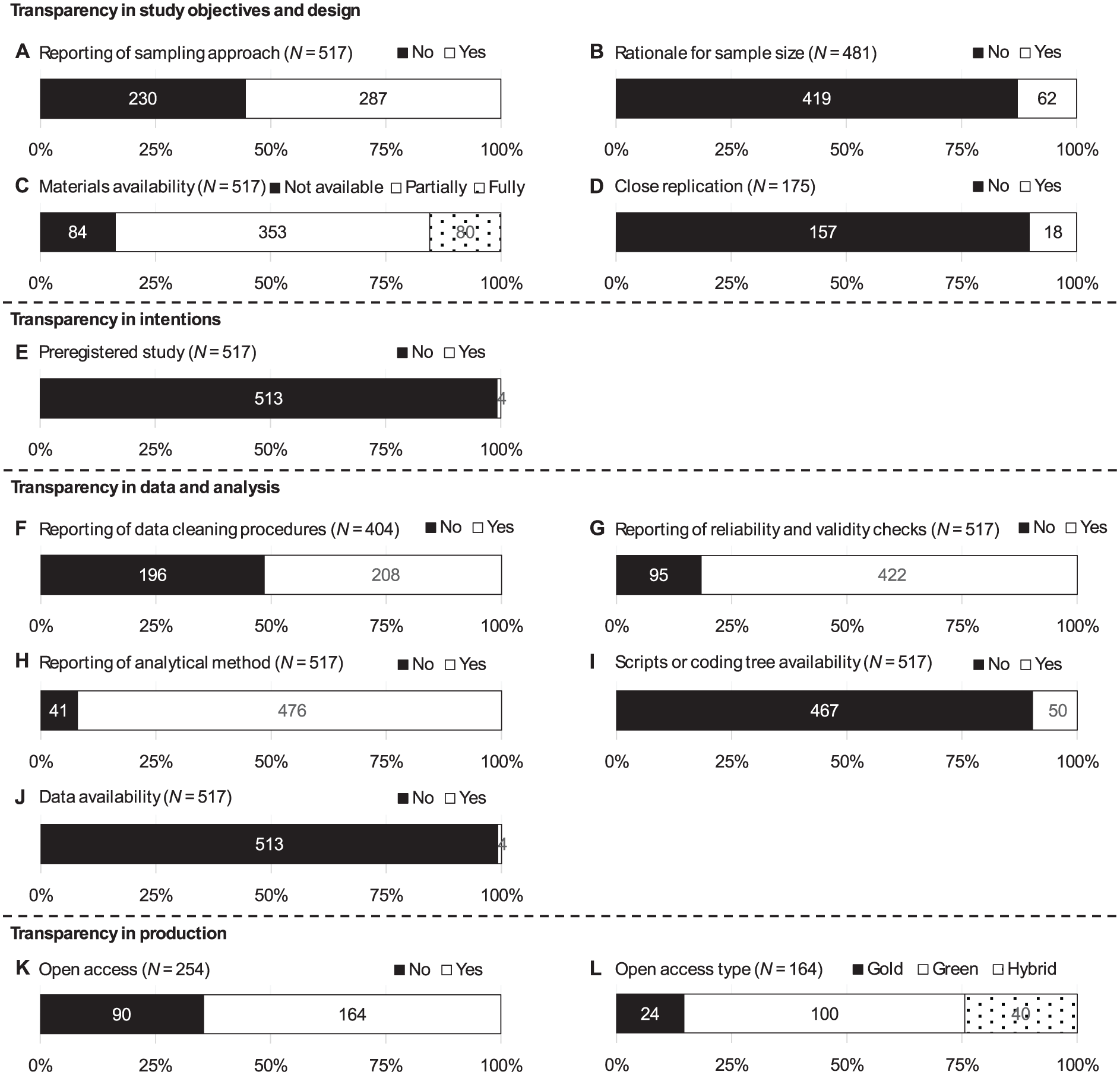

As Figure 2 shows, of the 517 empirical studies that involved primary or secondary data, 287 reported the sampling approach and described how study objects (e.g., participants, cases) were selected (55.5%, CI = [51.3%, 60.1%]), whereas 230 studies did not (44.5%, CI = [40.2%, 49.1%]). Of the 481 studies for which providing a rationale for the sample size was deemed relevant, 62 (12.9%, CI = [10.2%, 16.0%]) transparently reported such information, whereas 419 did not (87.1%, CI = [84.4%, 90.2%]).

Figure 2. Transparency in service research.

Among the 517 empirical studies, the materials were fully available for 80 studies (15.5%, CI = [11.6%, 19.6%]) and partially available for 353 studies (68.3%, CI = [64.4%, 72.4%]). Eighty-four studies (16.3%, CI = [12.4%, 20.4%]) did not provide the materials that would be needed to repeat the collection or extraction of the data. Of the 175 articles that involved quantitative data, 18 (10.3%, CI = [6.3%, 14.9%]) included at least one close (intra-article) replication study (Figure 2D).

Transparency in Study Intentions

Among the 517 empirical studies included in our sample, 4 (0.8%, CI = [0.2%, 1.5%]) included a preregistration statement (Figure 2E).

Transparency in Data and Analysis

As Figure 2F shows, of the 404 studies for which reporting data cleaning procedures was deemed relevant, 208 (51.5%, CI = [46.5%, 56.7%]) transparently reported such information, whereas 196 did not (48.5%, CI = [43.6%, 53.7%]). Of the 517 empirical studies that involved primary or secondary data, 422 (81.6%, CI = [78.3%, 84.9%]) reported information related to validity and/or reliability checks, whereas such information was not reported for 95 studies (18.4%, CI = [15.1%, 21.7%]; Figure 2G). Among the 517 studies, 476 (92.1%, CI = [89.9%, 94.3%]) transparently reported information about the analytical method or model used in the study, whereas 41 did not (7.9%, CI = [5.8%, 10.2%]; Figure 2H).

Of the 517 empirical studies that involved primary or secondary data, the analytical scripts or coding trees were available for 50 studies (9.7%, CI = [7.3%, 12.2%]) and not available for 467 studies (90.3%, CI = [88.0%, 92.9%]; Figure 2I). Among those 517 studies, 4 (0.8%, CI = [0.2%, 1.5%]) included a data availability statement (Figure 2J).

Transparency in Production

Of the 254 articles retained in our sample, we obtained a publicly available version for 164 of them (64.6%, CI = [58.7%, 70.6%]), whereas 90 were only accessible through a paywall (35.4%, CI = [29.5%, 41.5%]; Figure 2K). Among these 164 open access articles, 24 (14.6%, CI = [7.3%, 22.5%]) were published under gold open access, 100 (61.0%, CI = [55.7%, 68.9%]) under green open access, and 40 (24.4%, CI = [17.1%, 32.3%]) under both gold and green open access (Figure 2L).

Discussion

Our analysis of the adoption of open science practices in two key service research journals—Journal of Service Research and Journal of Service Management—revealed that certain aspects of open science, such as reporting data cleaning procedures, validity and reliability checks, and analytical methods, are widely used. However, significant gaps remain in other areas. In particular, areas such as providing a rationale for sample size, replication efforts, and data accessibility require greater attention. To further advance open science in service research, we propose a set of concrete recommendations for authors, reviewers, and editors in the next section, aimed at addressing the gaps identified in our analysis. Drawing on both our review of the literature on open science effectiveness and our assessment of its current adoption in service research, these recommendations offer actionable guidance on how researchers can better integrate open science principles into their work.

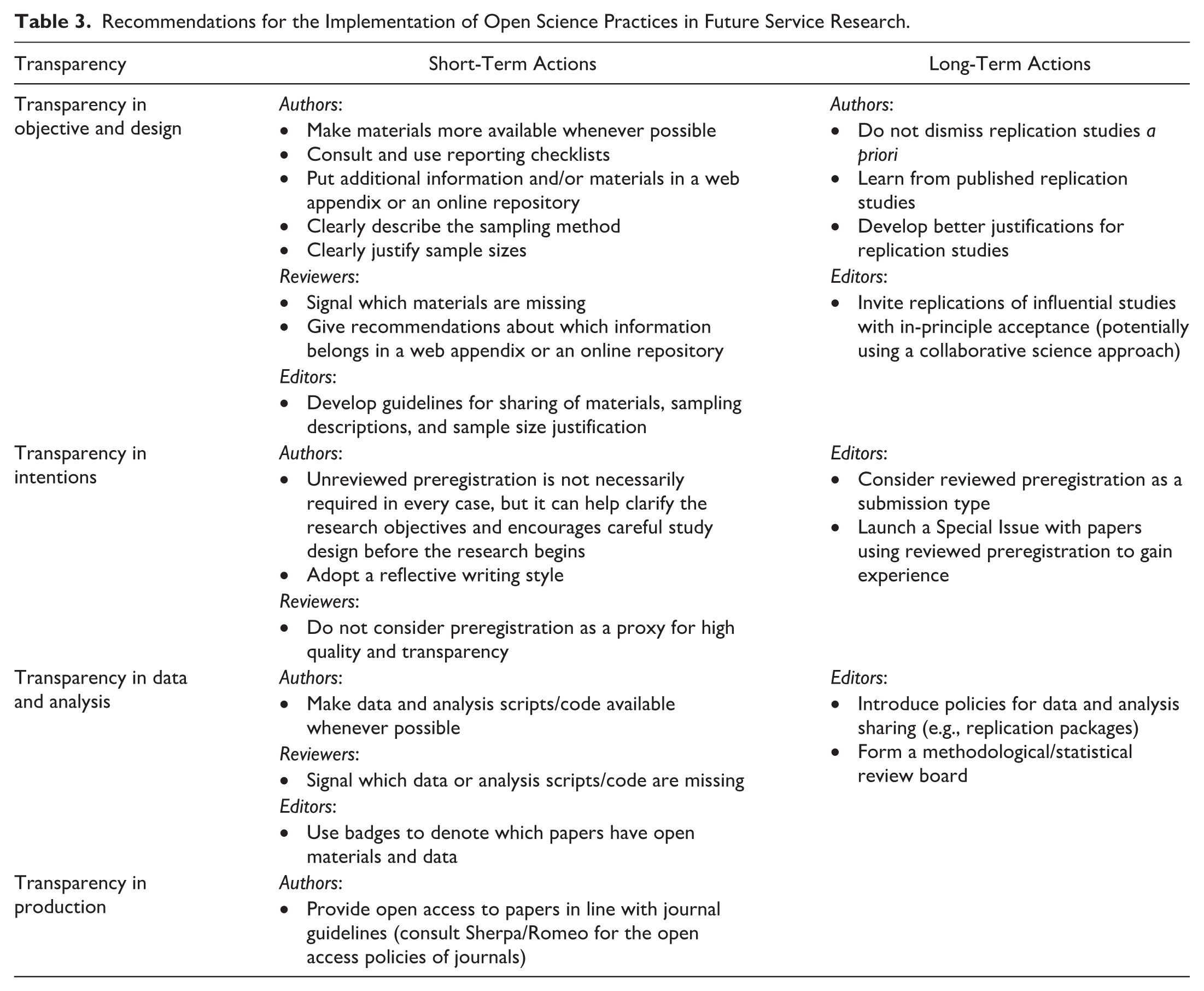

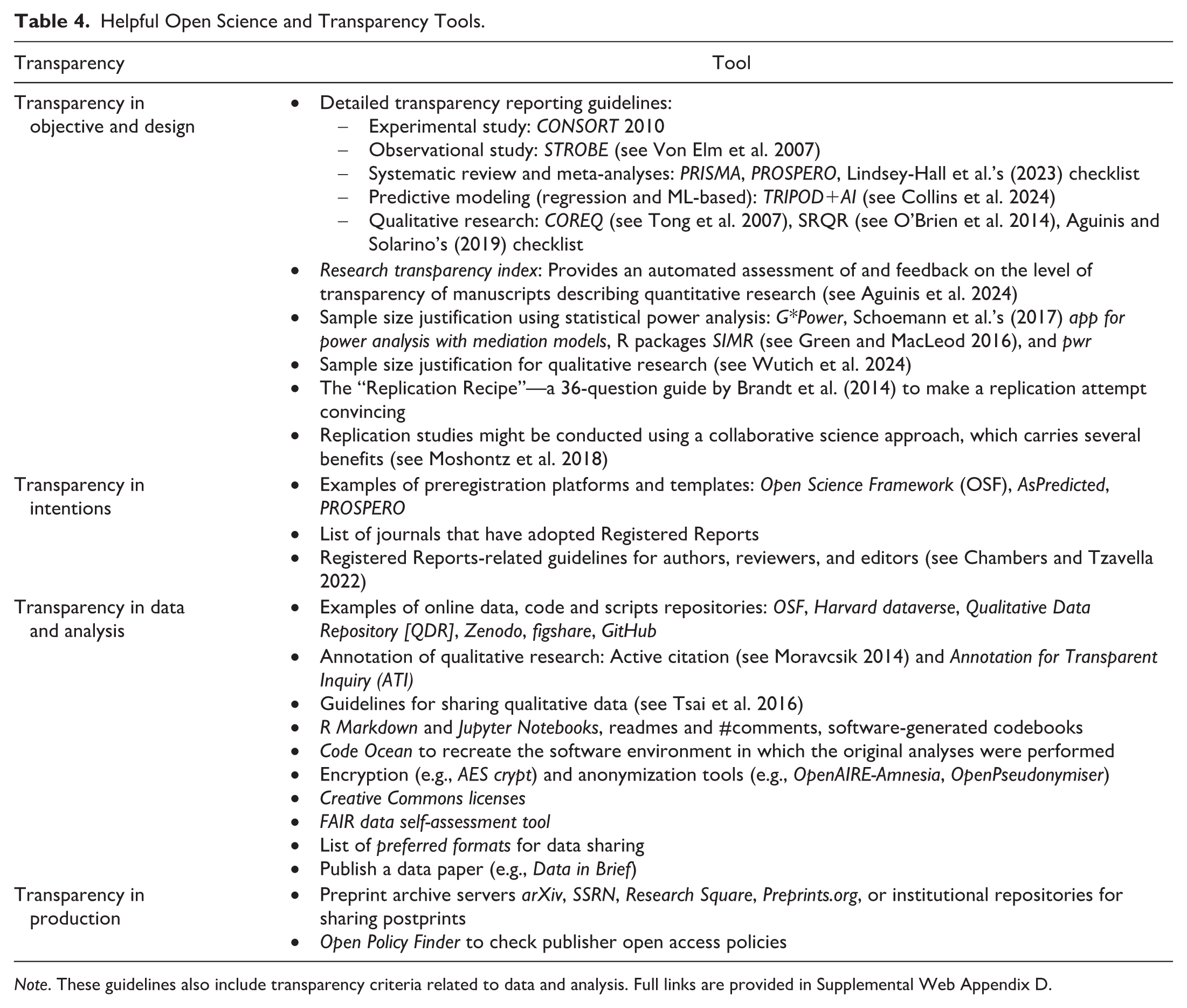

Open Science in Future Service Research: Recommendations

In the following, we propose guidelines for the further implementation of open science in future service research. Given that science is a process that involves authors, reviewers, and editors, we provide specific recommendations for each of these actors. Moreover, given that some open science practices are easier to implement than others, we distinguish between short-term and long-term efforts. While we consider the following guidelines reasonable, their implementation may pose challenges—beyond those introduced earlier in our review of meta-research. We briefly discuss these challenges and urge all stakeholders to carefully consider them before adopting our recommendations. Table 3 summarizes the key future recommendations for the field. Table 4 provides helpful tools to implement these recommendations. We provide full links to these tools in Supplemental Web Appendix D. We also created a checklist that authors can use to facilitate the implementation of open science practices (see Appendix A). Journal editors and reviewers can use this checklist to assess the extent to which a paper lives up to open science practices.

Table 3. Recommendations for the Implementation of Open Science Practices in Future Service Research.

Table 4. Helpful Open Science and Transparency Tools.

Note. These guidelines also include transparency criteria related to data and analysis. Full links are provided in Supplemental Web Appendix D.

Most evidence on the effectiveness of open science practices discussed in our literature review stems from fields outside service research. Thus, we recognize the need to assess whether similar outcomes apply to our domain. For instance, service researchers frequently rely on field and third-party data to capture complex, context-dependent service experiences, making certain practices (e.g., replication under identical conditions) more challenging to implement. Likewise, while open-access publishing has increased visibility and citations in some disciplines, its specific impact on service research requires further study. However, other practices (e.g., transparency in study design, data availability, and methodological reporting) have been addressed in prior service research (e.g., Valtakoski and Glaa 2024; Verleye 2019) and remain highly relevant. By acknowledging these distinctions, we aim to provide a nuanced discussion of how open science can be meaningfully integrated into service research—while considering its methodological and contextual constraints—and to encourage service researchers to explore the knowledge gaps and issues raised in this paper.

Transparency in Study Objectives and Design

Transparency in study objectives and design practices spans four primary areas, each with varying levels of prevalence in service research. Our findings show that material availability is relatively high in service research, yet studies typically report a subset of their materials. While full transparency is generally advantageous, excessive disclosure can have unintended consequences, making it important to find a balanced approach. Authors can enhance transparency by carefully deciding which details to include in the main paper versus supplementary materials. These supplementary materials can accompany the article on the journal website. Alternatively, the authors can provide a link to an online repository that contains their full materials. Reporting checklists can serve as helpful guides in the decisions about which materials to make available. Table 4 provides links and references to checklists for commonly used research methods. Additionally, Aguinis et al. (2024) recently introduced the Research Transparency Index, an automated tool for assessing the transparency of quantitative research papers. Authors can use these tools to evaluate a paper’s transparency prior to submission. Editors and reviewers can support this process by advising on which elements belong in the paper versus supplementary materials.

Clear reporting on sampling approaches and sample size justification seems essential for enhancing reproducibility. While service researchers have made strides in this area, our review of service research reveals further progress is still possible. Authors are encouraged to detail their sampling methods and provide robust justifications for their sample sizes. For instance, quantitative researchers can increase transparency by including sample size justifications based on statistical power analysis. Qualitative researchers can justify sample sizes according to the type of saturation (e.g., thematic, meaning-based, theoretical). For further guidance on sample size justification in quantitative and qualitative studies, authors may refer to Lakens (2022) and Wutich et al. (2024), respectively. Table 4 offers links to tutorials and tools created by meta-researchers to help authors adopt these practices. Reviewers can support this practice by requesting further sampling details when needed, ensuring studies include essential information. Editors can reinforce these practices by specifying the sampling information needed in the main paper and requiring sample size justification for submission.
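
As an illustration of a sample size justification based on statistical power analysis, the following minimal sketch approximates the required sample size per group for a two-sided comparison of two independent means. It assumes a standardized effect size (Cohen's d), equal group sizes, and a normal approximation; these assumptions and the function name are ours, not the paper's. Dedicated power analysis tools that rely on the t distribution typically return slightly larger values.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Normal-approximation formula: n = 2 * ((z_{1-alpha/2} + z_{power}) / d)^2.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Conventional small, medium, and large effect sizes (illustrative inputs).
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} participants per group")
```

Reporting the exact inputs (expected effect size and its source, alpha, and target power) alongside the resulting number lets readers reproduce the justification.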

While replication studies are beneficial for verifying the reproducibility of service research, their lower chances of publication and citation rates (Block et al. 2022; Nosek and Bar-Anan 2012) warrant a more concerted, long-term effort. Editors, as gatekeepers in the publication process, have substantial influence in fostering a culture of replication. They can emphasize the importance of replication through editorials and organize Special Issues dedicated to replication studies, as recently seen in the Journal of International Business Studies (Dau et al. 2022). These editorials can enhance the visibility and impact of replication research within the academic community.

As Brandt et al. (2014) point out, one of the key reasons why replication studies are not published is that the argument in favor of the replication attempt is not convincing. Rather than attempting to replicate every study, the focus should be on those that are particularly significant or widely referenced. Brandt et al. (2014) offer a 36-question guide that can help improve the argumentation for a replication study. If motivated (and executed) well, replication studies can be published and attract citations. Some examples of well-cited replication studies in service research include Keiningham et al. (2007) on the effectiveness of the Net Promoter Score in predicting growth, which explores a meaningful phenomenon, and De Keyser et al. (2015) on customers’ multichannel choices, which combines replication with new extensions. An alternative approach is to engage in the replication of various influential studies rather than replicating one specific study. Similar initiatives were taken recently in operations management (Davis et al. 2023) and entrepreneurship (Crawford et al. 2022), leading to publication success in Management Science and Entrepreneurship Theory and Practice, respectively. Authors can examine these studies in more detail to become more familiar with published replication studies.

Transparency in Intentions

Our review shows that preregistration is currently uncommon in service research, though meta-research on preregistration indicates it is not necessarily required in every case. Preregistration offers benefits, such as clarifying research objectives and encouraging researchers to carefully design their study before data collection begins. However, our review of meta-research revealed that preregistration is not a comprehensive solution to all questionable research practices, especially when unreviewed. The primary purpose of preregistration is to help readers distinguish between findings that were hypothesized in advance (confirmatory) and those that emerged because of the research process (exploratory). This distinction can also be achieved without formal preregistration by adopting a reflective writing style that clearly differentiates between anticipated and unanticipated findings. For example, authors can use sentences like “in further exploratory (not hypothesized) analyses, we examined ...” Given the evolving nature of the service context and the growing availability of big data, exploratory research is increasingly necessary. From a transparency standpoint, it is important that such findings are explicitly labeled as exploratory.

Reviewers play an important role in evaluating a study’s quality on its own merits. While some studies suggest that preregistration is sometimes seen by reviewers as a proxy for high quality and transparency (Lakens et al. 2024), preregistration is not a panacea for all problems. Hence, we recommend that reviewers neither rely too heavily on nor demand (unreviewed) preregistration when evaluating manuscripts. Authors can also adopt a reflective writing style when dealing with reviewers’ requests. For example, Wald et al. (2024) write: “Following a reviewer’s request, we also analyzed our data [...]. We did not conceptualize our experiment in this design, and hence these analyses were not preregistered.” These approaches allow the reader to understand what was anticipated a priori, and which insights (or, e.g., new hypotheses) emerged as part of the data analysis or the review process.

Our review of meta-research on open science practices reveals that reviewed preregistrations show greater promise in improving research transparency and limiting questionable research practices than unreviewed preregistrations. This form of preregistration, which involves preliminary feedback on research questions and methods before data collection, can add significant value to service research. Even when results differ from the original hypotheses, this process raises valuable questions about the factors influencing these findings. After all, all involved parties (authors, reviewers, and editors) agreed upon the research question, hypotheses, and approach prior to data collection. Editors might advance reviewed preregistration and consider this type of paper as a submission type for their service journals, particularly in the longer term. However, implementing this submission format may come with challenges, such as the need to educate authors (reviewers) on how to write (review) registered reports, find expert reviewers who are prepared to deeply engage with the theoretical justification and study design of the manuscript, and ensure that authors follow the agreed plan (Chambers and Tzavella 2022).

To gradually implement this submission format and build familiarity with it, some journals, like MIS Quarterly, have experimented with this approach through Special Issues focused on registered reports. Table 4 provides a link to a list of journals that have already adopted reviewed preregistration as a submission format. We recommend exploring the best practices in these journals when developing guidelines for these new submission types.

Transparency in Data and Analysis

In service research, there is a high level of transparency in reporting reliability and validity checks and in describing analytical methods. Further improvements, however, are needed in documenting data cleaning procedures, including cases where no cleaning was required, as well as in making data, scripts, and coding trees accessible. The main advantage of open data sharing lies in allowing other researchers to validate findings and expand upon them. Meta-research shows that data-sharing policies alone do not ensure that shared data are free from errors (e.g., Claesen et al. 2023). Authors can therefore improve reproducibility by providing more comprehensive documentation of their data and analyses, as demonstrated by increased reproducibility rates at Management Science after implementing the replication package policy. Despite these benefits, implementing open data practices presents challenges. Researchers may lack the time or expertise to properly document their datasets, and concerns about confidentiality, proprietary data, and ethical considerations can further complicate data sharing. Table 4 lists several tools to share, annotate, and anonymize data. It also lists tools to make the data analysis itself more transparent, such as R Markdown or Code Ocean. Authors can also use the FAIR data self-assessment tool by the Australian Research Data Commons to assess whether their dataset is Findable, Accessible, Interoperable, and Reusable.
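
One low-effort way to document a shared dataset is a codebook that describes every variable. The sketch below checks that each column in a data file has a codebook entry before the files are uploaded to a repository. The dataset, column names, and codebook entries are hypothetical and serve illustration only; they do not reproduce any tool listed in Table 4.

```python
import csv
import io

# Hypothetical dataset and codebook, for illustration only.
DATA_CSV = """study_id,prereg,open_data,sample_size
S001,0,1,212
S002,1,0,88
"""

CODEBOOK = {
    "study_id": "Unique study identifier",
    "prereg": "Preregistration statement present (1 = yes, 0 = no)",
    "open_data": "Data availability statement present (1 = yes, 0 = no)",
}


def undocumented_columns(csv_text, codebook):
    """Return the dataset columns that have no codebook entry."""
    header = next(csv.reader(io.StringIO(csv_text)))
    return [column for column in header if column not in codebook]


missing = undocumented_columns(DATA_CSV, CODEBOOK)
if missing:
    print("Add codebook entries for:", ", ".join(missing))
else:
    print("All columns are documented.")
```

A check like this can be run whenever the dataset changes, so that the documentation does not drift from the data.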

Reviewers have an essential role in assessing analytical quality, although this thorough checking can add to the already demanding review workload. Journals can help by assigning specialized statistical or methodological reviewers who focus on technical assessments, as practiced by journals like Psychological Science (Hardwicke and Vazire 2024). This approach allows other reviewers to concentrate on theoretical contributions, with the confidence that the methods have been reviewed by an expert. Editors can encourage data and script sharing in the short term and eventually introduce policies that require replication packages, following the examples set by Journal of Marketing and Journal of Marketing Research. Forming a dedicated methodological review board could further enhance transparency and reproducibility in research across the field; however, this would require substantial long-term investment.

Another basic, low-cost initiative that journals can easily implement is the use of open science badges, which serve as a kind of quality label acknowledging open science practices. By using such badges, journals signal their values and support for openness and transparency. As an example, the journal Psychological Science undertook important changes to its publication policy back in 2014. Among these changes, the journal introduced several badges that appear in the article itself and signify open materials and open data, among others (Eich 2014). Before badges were introduced, less than 3% of articles published in Psychological Science reported open data. After badges, 23% reported open data in 2014, and 39% in the first half of 2015. These numbers went up to 69% for open data and 55% for open materials in 2022 (Bauer 2023). These badges appear to be a useful yet temporary measure. Psychological Science recently decommissioned the use of badges, as they were always intended as an interim nudge (Hardwicke and Vazire 2024).

Transparency in Production

Our observational study of the service research literature reveals that publicly accessible versions of articles are available in approximately two-thirds of cases. Service researchers are generally providing open access to their published work, either through gold or green open access, or both. We recommend that authors increase open access to their post-prints, for example, by using institutional repositories or alternative free platforms such as SSRN (Accepted Paper Series) or OSF. Researchers can use the “Open Policy Finder” website (https://openpolicyfinder.jisc.ac.uk/) to make informed decisions about open access publication and compliance with journal policies. Regarding preprints, our review of meta-research on open science suggests that preprints do not always deliver the anticipated benefits. Sharing preprints may not be the most effective path at this time.

Futuristic Outlook

The previous sections have focused on open science practices that primarily address current research processes. While our recommendations provide actionable steps for immediate implementation, it is also important to acknowledge broader developments that are beginning to reshape the research ecosystem. Emerging trends—such as collaborative science, open review, the decline of hypothesis testing, and the transformation of the academic system—are likely to influence the future direction of open science. We briefly discuss these advances next.

Collaborative Science

In some fields, we are witnessing a surge in the number of collaborative (or big-team) science projects. Originally, these projects were mainly aimed at replicating a specific theory or relationship, or several prominent studies. For example, in a so-called “Many Labs” project, 64 researchers from 23 research groups collaborated to replicate the well-known ego depletion effect in psychology, which ultimately did not show any significant effect (Hagger et al. 2016). Such collaborative projects can also be set up to test for novel phenomena and would offer several benefits, such as more transparent procedures, materials, and data, as well as fewer errors and biases as many researchers have been reviewing the materials before collecting the data, higher statistical power, and more robust population parameter estimation across samples (Moshontz et al. 2018). In addition, collaborative science projects can help deepen our understanding of how analytical choices influence research outcomes. For example, the International Journal of Research in Marketing recently launched a collaborative science project to estimate price elasticities for meat substitute products across multiple brands and countries (www.elasticity-open-science.com). Overall, collaborative science initiatives may offer a valuable pathway for generating scientific insights in the field of service research.

Open Peer-Review

Another aspect of the open science movement that may become a common practice in the future is open peer review, where “review reports and reviewers’ identities are published alongside the articles” (Wolfram et al. 2020, p. 1033). Early evidence shows that reviewer comments in open peer-review journals tend to be longer, more courteous, and of higher quality than those in closed-review journals (e.g., Bornmann et al. 2012), which may explain the recent steady growth in open peer-review journals in medical disciplines (Wolfram et al. 2020).

Novel Data Analytical Approaches

In recent years, alternatives to (frequentist) hypothesis testing—such as Bayesian estimation methods and machine learning techniques—have gained traction, with some even advocating for the abandonment of hypothesis testing altogether (McShane et al. 2024). These approaches could significantly enhance research replicability, and the adoption of open science practices can facilitate their dissemination and integration. These methods do not necessarily make open science practices obsolete. By openly sharing research designs, data, and analytical workflows, authors can support fellow researchers in navigating the learning curve and expanding the accessibility of these methods. For instance, researchers interested in Bayesian analysis or in using Bayesian tests alongside frequentist approaches to assess the robustness of their findings can benefit from openly documented methodologies and insights from prior studies that follow open science principles.

Challenges remain, however, particularly when dealing with novel data analysis approaches, such as unsupervised machine learning techniques (e.g., deep learning algorithms). While researchers can engage in transparent reporting for supervised machine learning techniques—by providing labels and the rationale for their use—many unsupervised techniques remain black boxes, where analytical decisions are made by the algorithm rather than the researcher. This presents significant challenges for transparency and reproducibility. Even if authors report all necessary data, model fitting considerations, and syntax for replication, reviewers may encounter different results due to variations in the analytical decisions made by the algorithm. While progress is being made toward improving the transparency of these black boxes through post-hoc interpretability techniques (see Rai 2020), this is still not a common practice.
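
One well-understood contributor to run-to-run variation in many learning algorithms is random initialization. The minimal sketch below shows how fixing and reporting a random seed makes a stochastic step repeatable. It is a toy stand-in, not an actual machine learning model, and it does not address the broader interpretability problem discussed above; the function name and data are hypothetical.

```python
import random


def random_starting_points(values, k, seed=None):
    """Pick k random starting points, as many clustering routines do."""
    rng = random.Random(seed)  # a dedicated generator keeps the seed local
    return sorted(rng.sample(values, k))


# Hypothetical toy data for illustration only.
data = list(range(100))

run_a = random_starting_points(data, k=3, seed=2024)
run_b = random_starting_points(data, k=3, seed=2024)
run_c = random_starting_points(data, k=3)  # unseeded: may differ between runs

print(run_a == run_b)  # same seed, same starting points
print(run_c)
```

Reporting the seed together with software and package versions in the replication package gives reviewers a better chance of obtaining the same results.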

Academic System

For the aforementioned recommendations to be followed and become mainstream, these open science practices must also be recognized and incentivized. Various researchers call for a revision of the current academic system, which seems disconnected from the evidence on the causes of the reproducibility crisis and still mainly rewards researchers based on quantitative bibliometric indicators such as the number of publications (preferably in prestigious journals) or citation indices (De Rond and Miller 2005). According to Moher et al. (2018), an increasing number of institutions worldwide are adopting novel research assessment principles, including evaluating researchers based on responsible indicators that fully reflect their contributions to the scientific enterprise (e.g., reproducible research reporting, data sharing). Examples of research career evaluation tools that are yet to be widely adopted include the European Commission’s (2017) Open Science Career Assessment Matrix (OS-CAM) and the Coalition for Advancing Research Assessment’s (CoARA) agreement. When widely adopted, these efforts can realign incentives so that what benefits individual scientists also advances the quality and trustworthiness of science as a whole (Munafò et al. 2017).

Conclusion

This research aimed to offer service researchers informed, evidence-based recommendations regarding what works and what doesn’t in terms of open science, and provide a reasonable path forward for greater transparency and openness in our field. Our review of meta-research identified several open science practices that are effective in enhancing transparency and reproducibility, such as sharing of materials, policies related to sharing of data and analysis code, and reviewed preregistration. Other practices, like unreviewed preregistration, seem less effective. These insights provide a balanced view of the need to adopt open science practices. At the same time, our review of meta-research on open science revealed that there are several outstanding gaps in our knowledge concerning the effectiveness of open science practices, highlighting the need for continued monitoring of future studies in this area. As new evidence emerges, it will be crucial to reassess and refine our recommendations to ensure they remain aligned with the latest insights and best practices.

Our analysis of the adoption of open science practices in two key service research journals (Journal of Service Research and Journal of Service Management) revealed that while some open science practices are well adopted in the service research community, other practices are adopted to a lesser extent. Combining the insights from our balanced view of the literature with the insights from our observational study of service research, we propose several future directions for the service research community. These directions require efforts from authors, reviewers, and editors in the short term and the long term and can help guide the implementation of effective open science practices. It is important to note that our review spanned multiple scientific fields, making the implementation of some open science practices potentially more challenging for service researchers.

We recommend that service researchers examine the references and tools provided in this paper and consider how they can implement the recommended open science practices in their current and future research. As Diederich et al. (2022) pointed out, the practical implementation of open science practices is sometimes complicated. We sincerely hope that our review serves as a handy reference for authors, reviewers, and editors to implement open science in their research.

Supplemental Material

Supplemental material, sj-docx-1-jsr-10.1177_10946705251338461, for Open Science: A Review of Its Effectiveness and Implications for Service Research by Yves Van Vaerenbergh, Simon Hazée and Thijs J. Zwienenberg in Journal of Service Research

Supplemental material, sj-docx-2-jsr-10.1177_10946705251338461, for Open Science: A Review of Its Effectiveness and Implications for Service Research by Yves Van Vaerenbergh, Simon Hazée and Thijs J. Zwienenberg in Journal of Service Research

Footnotes

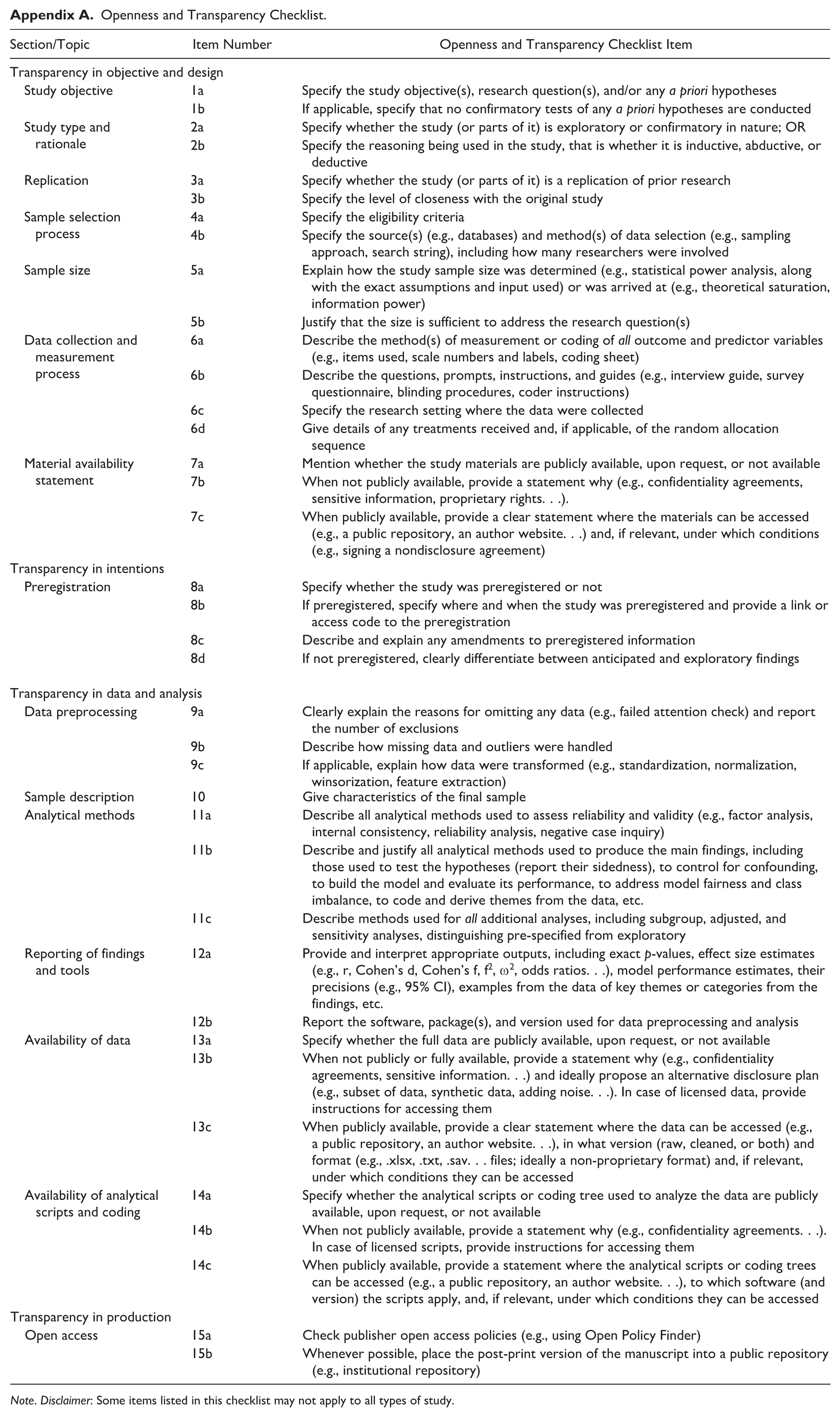

Appendix

Openness and Transparency Checklist.

| Section/Topic | Item Number | Openness and Transparency Checklist Item |
|---|---|---|
| Transparency in objective and design | | |
| Study objective | 1a | Specify the study objective(s), research question(s), and/or any a priori hypotheses |
| | 1b | If applicable, specify that no confirmatory tests of any a priori hypotheses are conducted |
| Study type and rationale | 2a | Specify whether the study (or parts of it) is exploratory or confirmatory in nature; OR |
| | 2b | Specify the reasoning being used in the study, that is whether it is inductive, abductive, or deductive |
| Replication | 3a | Specify whether the study (or parts of it) is a replication of prior research |
| | 3b | Specify the level of closeness with the original study |
| Sample selection process | 4a | Specify the eligibility criteria |
| | 4b | Specify the source(s) (e.g., databases) and method(s) of data selection (e.g., sampling approach, search string), including how many researchers were involved |
| Sample size | 5a | Explain how the study sample size was determined (e.g., statistical power analysis, along with the exact assumptions and input used) or was arrived at (e.g., theoretical saturation, information power) |
| | 5b | Justify that the size is sufficient to address the research question(s) |
| Data collection and measurement process | 6a | Describe the method(s) of measurement or coding of all outcome and predictor variables (e.g., items used, scale numbers and labels, coding sheet) |
| | 6b | Describe the questions, prompts, instructions, and guides (e.g., interview guide, survey questionnaire, blinding procedures, coder instructions) |
| | 6c | Specify the research setting where the data were collected |
| | 6d | Give details of any treatments received and, if applicable, of the random allocation sequence |
| Material availability statement | 7a | Mention whether the study materials are publicly available, upon request, or not available |
| | 7b | When not publicly available, provide a statement why (e.g., confidentiality agreements, sensitive information, proprietary rights...) |
| | 7c | When publicly available, provide a clear statement where the materials can be accessed (e.g., a public repository, an author website...) and, if relevant, under which conditions (e.g., signing a nondisclosure agreement) |
| Transparency in intentions | | |
| Preregistration | 8a | Specify whether the study was preregistered or not |
| | 8b | If preregistered, specify where and when the study was preregistered and provide a link or access code to the preregistration |
| | 8c | Describe and explain any amendments to preregistered information |
| | 8d | If not preregistered, clearly differentiate between anticipated and exploratory findings |
| Transparency in data and analysis | | |
| Data preprocessing | 9a | Clearly explain the reasons for omitting any data (e.g., failed attention check) and report the number of exclusions |
| | 9b | Describe how missing data and outliers were handled |
| | 9c | If applicable, explain how data were transformed (e.g., standardization, normalization, winsorization, feature extraction) |
| Sample description | 10 | Give characteristics of the final sample |
| Analytical methods | 11a | Describe all analytical methods used to assess reliability and validity (e.g., factor analysis, internal consistency, reliability analysis, negative case inquiry) |
| | 11b | Describe and justify all analytical methods used to produce the main findings, including those used to test the hypotheses (report their sidedness), to control for confounding, to build the model and evaluate its performance, to address model fairness and class imbalance, to code and derive themes from the data, etc. |
| | 11c | Describe methods used for all additional analyses, including subgroup, adjusted, and sensitivity analyses, distinguishing pre-specified from exploratory |
| Reporting of findings and tools | 12a | Provide and interpret appropriate outputs, including exact p-values, effect size estimates (e.g., r, Cohen’s d, Cohen’s f, f², ω², odds ratios...), model performance estimates, their precisions (e.g., 95% CI), examples from the data of key themes or categories from the findings, etc. |
| | 12b | Report the software, package(s), and version used for data preprocessing and analysis |
| Availability of data | 13a | Specify whether the full data are publicly available, upon request, or not available |
| | 13b | When not publicly or fully available, provide a statement why (e.g., confidentiality agreements, sensitive information...) and ideally propose an alternative disclosure plan (e.g., subset of data, synthetic data, adding noise...). In case of licensed data, provide instructions for accessing them |
| | 13c | When publicly available, provide a clear statement where the data can be accessed (e.g., a public repository, an author website...), in what version (raw, cleaned, or both) and format (e.g., .xlsx, .txt, .sav... files; ideally a non-proprietary format) and, if relevant, under which conditions they can be accessed |
| Availability of analytical scripts and coding | 14a | Specify whether the analytical scripts or coding tree used to analyze the data are publicly available, upon request, or not available |
| | 14b | When not publicly available, provide a statement why (e.g., confidentiality agreements...). In case of licensed scripts, provide instructions for accessing them |
| | 14c | When publicly available, provide a statement where the analytical scripts or coding trees can be accessed (e.g., a public repository, an author website...), to which software (and version) the scripts apply, and, if relevant, under which conditions they can be accessed |
| Transparency in production | | |
| Open access | 15a | Check publisher open access policies (e.g., using Open Policy Finder) |
| | 15b | Whenever possible, place the post-print version of the manuscript into a public repository (e.g., institutional repository) |

Note. Disclaimer: Some items listed in this checklist may not apply to all types of study.
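As an illustration of how checklist items 5a (sample size determination) and 12a (effect sizes with their precision) could be documented in a shared analysis script, the following is a minimal sketch and not the procedure used in this paper. It assumes a simple two-group comparison of means. The function names `n_per_group` and `cohens_d_with_ci` and all input values are hypothetical. Both functions rely on normal approximations, so dedicated power-analysis software may return slightly different numbers.

```python
import math
from statistics import NormalDist, mean, variance


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * ((z_(1-alpha/2) + z_power) / d) ** 2.
    An exact t-test calculation typically requires a slightly larger n.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value of the two-sided test
    z_power = z.inv_cdf(power)          # quantile matching the target power
    return math.ceil(2 * ((z_alpha + z_power) / d) ** 2)


def cohens_d_with_ci(group_a, group_b, level=0.95):
    """Cohen's d (pooled SD) with a large-sample, normal-approximation CI."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    d = (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)
    # Common large-sample approximation of the standard error of d
    se = math.sqrt((n_a + n_b) / (n_a * n_b) + d**2 / (2 * (n_a + n_b)))
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    return d, (d - z_crit * se, d + z_crit * se)


if __name__ == "__main__":
    # Hypothetical assumptions and data, for illustration only
    print("Required n per group:", n_per_group(d=0.5, alpha=0.05, power=0.80))
    treatment = [5.1, 6.0, 5.8, 6.3, 5.5, 6.1]
    control = [4.9, 5.2, 5.0, 5.6, 4.7, 5.3]
    d, (lower, upper) = cohens_d_with_ci(treatment, control)
    print(f"Cohen's d = {d:.2f}, 95% CI [{lower:.2f}, {upper:.2f}]")
```

Reporting the exact inputs (assumed effect size, alpha, and power) alongside such a script is what item 5a asks for, and stating the software and version used covers item 12b.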

Acknowledgements

We would like to thank the Managing Editor, Associate Editor, and three anonymous reviewers for their valuable feedback. Our gratitude also goes to Filip Germeys, Lisa Brüggen, Michael K. Brady, and the participants at the 2017 SERVSIG Doctoral Consortium, the 2023 Frontiers in Service Conference, and the 2024 SERVSIG Conference for their insightful contributions and resources on this topic. We used ChatGPT (v4.o) for language editing. After utilizing ChatGPT, the authors reviewed and refined the content as needed and take full responsibility for the final text. We would like to emphasize that this article is not intended to critique past research or diminish prior findings. Rather, our aim is to initiate a conversation on the role of open science in service research. Like many others, we recognize our own opportunities for growth in embracing open science practices. We hope this paper raises awareness and encourages fellow service researchers to further adopt open science practices in their work.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
