Abstract

Digitization, artificial intelligence, and service robots carry serious ethical, privacy, and fairness risks. Using the lens of corporate digital responsibility (CDR), we examine these risks and their mitigation in service firms and make five contributions. First, we show that CDR is critical in service contexts because of the vast streams of customer data involved and digital service technology’s omnipresence, opacity, and complexity. Second, we synthesize the ethics, privacy, and fairness literature using the CDR data and technology life-cycle perspective to better understand the nature of these risks in a service context. Third, to provide insights on the origins of these risks, we examine the digital service ecosystem and the related flows of money, service, data, insights, and technologies. Fourth, we deduce that the underlying causes of CDR issues are trade-offs between good CDR practices and organizational objectives (e.g., profit opportunities versus CDR risks) and introduce the CDR calculus to capture this. We also conclude that regulation will need to step in when a firm’s CDR calculus becomes so negative that good CDR is unlikely. Finally, we advance a set of strategies, tools, and practices service firms can use to manage these trade-offs and build a strong CDR culture.

Introduction

Although digital technology, artificial intelligence (AI), and service robots have the potential to bring unprecedented improvements in service quality and productivity (e.g., Bock, Wolter, and Ferrell 2020; Huang and Rust 2018, 2021; Wirtz et al. 2018), these technologies also carry serious ethical, fairness, and privacy risks for service users (Belk 2021; Breidbach and Maglio 2020; Koshiyama et al. 2021; Puntoni et al. 2021). For example, the negligible costs and increasing possibilities of capturing data, observing customers, and harvesting value, a practice also referred to as surveillance capitalism (Zuboff 2015, 2019), are well beyond what George Orwell could have imagined when writing his classic book “Nineteen Eighty-Four: A Novel” and can be considered inconsistent with human rights (United Nations 2021).

Furthermore, advances in AI and its unprecedented power of predictive analytics, automated decision making, and machine learning (ML) (Bornet, Barkin, and Wirtz 2021) pose critical ethical dilemmas (Belk 2021; Breidbach and Maglio 2020). These technologies “allow more and more inferences about individuals,” which are “plagued by biases” and “are, in turn, used to manipulate, assess, predict, and nudge individuals – often without their awareness and nearly always without any oversight or accountability” (Gawer 2021, p. 12). At a higher level, service robots and AI cause concerns related to dehumanization, social isolation, loss of autonomy and dignity, social engineering, and more (Belk 2021; Čaić, Odekerken-Schröder, and Mahr 2018; Leidner and Tona 2021; Vandemeulebroucke, de Casterlé, and Gastmans 2021).

Extant research that guides service organizations on the use of digital technologies in the face of ethical dilemmas remains scant, yet the call for further research is mounting. Bock, Wolter, and Ferrell (2020) called for examining the implications of AI on ethics in service firms, and Gawer (2021) suggested “more in-depth academic research that will benefit from cross-fertilization across academic silos” (p. 13) in digital businesses. This article follows these calls for further research by examining these issues using the concept of corporate digital responsibility (CDR). We define CDR in the context of service as the principles underpinning a service firm’s ethical, fair, and protective use of data and technology when engaging with customers within their digital service ecosystem.

This article makes five key contributions. First, we explore CDR in a service context and advance that it is of particular importance to service organizations and their customers because of the vast streams of customer data involved and digital technology’s omnipresence, opacity, and complexity. Second, we synthesize the multi-disciplinary ethics, privacy, and fairness literature using the lenses of CDR in the digital service ecosystem and the data and technology life-cycle perspective (i.e., their creation, operation, refinement, and retainment) to provide a more comprehensive understanding of the nature of consumer risks and concerns. Third, we provide insights on the origins of these risks, examining service business models, their digital service ecosystems, and the related flows of money, service, data, insights, and technologies with customers at the front-end and business partners at the back-end. Fourth, we examine why CDR issues occur and advance that their underlying causes are trade-offs between good CDR practices and organizational objectives (e.g., multiple profitable uses of customer insights in an ecosystem that are based on more and better data and related analytics, but which carry a gamut of ethical, privacy, and fairness risks). To capture these trade-offs, we introduce the CDR calculus. Finally, we outline a set of strategies, tools, and practices service organizations can use to navigate these trade-offs and build a robust CDR culture, processes, and behaviors (e.g., through shared values, norms, and artifacts, a supporting management structure, and digital governance).

Why is CDR So Important in Service?

It is widely accepted that human behavior and decision-making should be governed by moral norms and ethical considerations (Bailey and Shantz 2018). As data combined with digital technologies are increasingly deployed to make service decisions, serve customers (e.g., through virtual and physical robots), and generate additional revenue streams, we advance that there is a need to make these behaviors and decisions accountable to moral norms and ethical considerations. These considerations are the core focus of CDR.

Corporate digital responsibility has been developed at the firm and society levels (Lobschat et al. 2021; Mihale-Wilson et al. 2022), but has not been explored more deeply in a service context. However, CDR is of particular importance for service markets because the potential for ethical concerns arising from the disruptive force of digital technology is especially high (Bock, Wolter, and Ferrell 2020). Service firms tend to have closer relationships with customers, have more touchpoints, and rely more heavily on processing customer data and information than goods companies. Further, services have many intangible elements that are easier to digitize than the components of physical goods. The high affinity of services to digitization and potential ethical concerns can be seen in examples such as financial services, healthcare, telecommunications, and servitized goods (e.g., platforms with wearable technologies), which increasingly migrate to digital business platforms (e.g., Rangaswamy et al. 2020). These points relate to two underlying factors that intensify CDR issues for service firms. First, many services are deeply ingrained in people’s lives and generate a wealth of data and insights that offer exciting profit opportunities, yet often cause customer vulnerabilities. Second, service increasingly involves autonomous and learning AI and service robots, and we do not yet have a good understanding of the potential harms associated with these technologies. We discuss both issues next.

A New Perspective on Data in Service

Given that new technology and AI depend heavily on large amounts of data, the importance of customer data and constant access to it has increased significantly for service firms. They rely on data, analytics, and AI to train models and optimize their market offerings and associated value propositions (Wixom and Ross 2017). Integrating data, analytical insights, and digital technologies into service experiences provides both companies and customers with proven benefits (Wixom et al. 2020). In particular, they directly contribute to service efficiency and productivity, and provide informational advantages that can help translate novel insights into customer likings and preferences (Huang and Rust 2018; Kozinets and Gretzel 2021; Wirtz et al. 2018). In addition, enhanced customization, personalization, and optimized and flexible real-time customer interactions tie customers closer to the firm and thereby contribute to a firm’s competitive edge (Cukier 2021; Rangaswamy et al. 2020). For these compelling reasons, investments in data, analytics, technology, and talent (e.g., data scientists) have been a top priority for executives in service firms for the last ten years (Kappelman and Sinha 2021).

The traditional view on data has provided a relatively static picture of the customer in the form of, for instance, contact and purchase data in a customer database. However, many services are deeply ingrained in people’s lives (from healthcare and fitness, to finance and entertainment; Martin 2015), built on repeat encounters (e.g., subscription services, memberships, and regular consumption), and are often customized and personalized. Therefore, capturing these data has become integral to service delivery, and always-on digital technology has made it easy to collect such behavioral data automatically in real-time, resulting in dynamic customer, usage, and preference insights (Gawer 2021). Thus, firms can now observe previously unobservable behaviors and use these insights to achieve competitive advantage without paying the data source (Varian 2014). Further, the trends towards virtualization, digitization, servitization (e.g., integrating services and physical goods through mobile technologies), and moving services onto digital platforms offer firms full transparency over customer and market behaviors (Rangaswamy et al. 2020).

The increased digitization of service results in an ever-expanding data stream and integration of various types of data (Puntoni et al. 2021; Zuboff 2015). These can include individual (e.g., profile data, transaction data, likes, and comments) and collective data (e.g., the aggregated and segmented behavior of many individuals that allows training of algorithms and identification of deeper trends in segments and the entire customer population); direct (i.e., provided by the customer, such as clickstream data and uploaded photos) and inferred data (i.e., not provided by the customer but inferred from user data, such as the building of a customer’s daily routine based on a live-camera feed); static and real-time data (e.g., many adaptive AI services depend on a constant stream of real-time customer data to update AI models); private (i.e., customers own the rights to data and can grant usage rights to the service firm as is often done with profile and transaction data) and public data (i.e., generally available for use such as weather data, maps, and public ratings); and “trial and error” data (e.g., A/B testing is commonly used in digital services to improve customer behavior predictions using quick field experiments with two variants, A and B). It is often the collection and combination of these various data types that make analytics and AI so powerful in service.

To fully leverage their digital capabilities, service firms continually strive for more and different data to capture and integrate from every customer and every interaction (Zuboff 2015). There are many tools widely used to achieve this. For example, service firms update their customer privacy policies and contractual forms to gain consent to online monitoring and automation. This allows firms to extract more consumer data automatically and widens their scope of data usage (e.g., the expanded usage rights that are applied to photos uploaded on social media platforms), even allowing integration with other (public) data (Zuboff 2015). For example, an analysis of eBay’s privacy policy showed that its users agreed to share their data with 909 eBay partners and that the privacy policies involved count some 2 million words (Kurtz et al. 2020). In addition, gamification approaches and social reputation systems (e.g., number and profiles of followers and likes) can generate additional insights into specific customer attitudes and behaviors, including their social network.

The vast accumulation of personal and non-personal information and the combination of various customer data types are viewed with increasing concern (Andrew and Baker 2021; Bleier, Goldfarb, and Tucker 2020; Martin 2015). Privacy breaches, extensive individual profiling, algorithmic biases, and discrimination have been widely discussed in public forums and media (Someh et al. 2019). Although personal data receives increasing regulatory protection [e.g., by the General Data Protection Regulation (GDPR)], non-personal information (e.g., anonymized search queries, clicks, and time spent on sites) can offer similar value to personal data without breaching current privacy laws. Furthermore, advanced algorithms combined with big data can infer personal information from anonymous static and behavioral data, thus circumventing current regulation (Andrew and Baker 2021). For example, data brokers in the US were found selling profiles of individuals pegged as “actively pregnant” or “shopping for maternity products.” Instead of collecting this data from end-users, these individuals were identified through integration and analysis of various data sources (Wodinsky and Barr 2022). Hence, the boundaries for good practice in data mining are getting blurry.

Finally, the use of combinations of data can offer significant profit opportunities (along the lines of “data are the new currency”; Quach et al. 2022). This makes it tempting for service firms to press ahead with capturing and utilizing data, and worry only later about potential risks (Bleier, Goldfarb, and Tucker 2020; Breidbach and Maglio 2020). This is of specific concern in service firms as there is frequently a power imbalance and information asymmetry between service firms and their customers (Lwin, Wirtz, and Williams 2007), and because today’s customers often do not have much choice of opting out, as they lack realistic alternatives to lead their lives without these digital services and their algorithms (Rahwan et al. 2019).

Digital Technology and AI in Service

Digital technologies and AI show increasing ubiquity, complexity, opacity, and unobservability, and are implemented in almost all areas of daily life (e.g., social media, credit ratings, stock markets, healthcare, and transportation; cf. Rahwan et al. 2019). Moreover, the combination of these advanced technologies leads to significant value creation in the form of, for instance, enhanced productivity, cost reduction, and personalized and optimized customer experiences. In fact, it is expected that service will increasingly be delivered by autonomous and learning AI and service robots (Huang and Rust 2018; Wirtz et al. 2018).

The complexity of AI is high and increasing. Contrary to traditional programming methods that typically follow human-specified rules, AI can derive decision rules autonomously and learn from data (Athey 2020). Even if the code for a specific application and training of a model is simple, the results can be highly complex, unpredictable, and often result in uninterpretable “black boxes.” This is especially so when the technologies interact with and learn from customers, the environment, and even other technologies (LeCun, Bengio, and Hinton 2015; Rahwan et al. 2019). It is this evolution through learning that shapes the individual and collective activities of these technologies (Rahwan et al. 2019). Thus, even the designer of an AI system does not always know why and how the results of their own system were generated (Voosen 2017). As such, we do not yet fully understand the potential harms associated with these technologies and their increased use (Bock, Wolter, and Ferrell 2020; Puntoni et al. 2021; Vandemeulebroucke, de Casterlé, and Gastmans 2021).

Therefore, “inevitably costs are involved with delegating power to algorithmically based systems” (Elliott et al. 2021, p. 1), especially so in services where ever more sophisticated technologies can be used to influence and nudge consumers (Davenport et al. 2020; Rangaswamy et al. 2020), achieve “behavioral modifications” (Zuboff 2015), and shape people’s decision-making and wellbeing (e.g., Belk 2021; Breidbach and Maglio 2020; Puntoni et al. 2021). This has triggered increasing criticism as these practices can lead to issues such as price fairness, service access inconsistencies, and coerced consumer decision-making, which can be viewed as a control mechanism by digital providers (Zuboff 2015). Furthermore, these technologies are malleable. Even if introduced with the best intentions, malleable AI can be at risk of exploitation for unintended purposes (Koshiyama et al. 2021). For example, social media were not designed to spread fake news, but their algorithms, designed to build engagement, contribute to this issue (Vosoughi, Roy, and Aral 2018).

Given the vast amount of data generated, and the expansive scope of rapidly advancing digital technologies and related business models, ethics, privacy, and fairness are increasingly highlighted as pressing concerns for service firms (Belk 2021; Rahwan et al. 2019; Vandemeulebroucke, de Casterlé, and Gastmans 2021). We therefore believe that service customers are particularly vulnerable to poor CDR practices.

Ethics, Privacy, and Fairness Across the Data and Technology Life-Cycles

Three broad areas, that is, ethics, privacy, and fairness (or bias), are important to ensure service firms balance their own interests with those of consumers in the context of AI and digital technologies (Davenport et al. 2020). First, ethics in AI has attracted increasing research attention in the service community. Ethical issues raised include coercion of data disclosure, dehumanization and threat to human dignity, social deprivation, disempowerment, and social engineering (Belk 2021; Breidbach and Maglio 2020; Čaić, Odekerken-Schröder, and Mahr 2018; Wirtz et al. 2018). Thus, Bock, Wolter, and Ferrell (2020) called for examining the implications of digital technology and AI on ethics in service firms.

Second, within the marketing literature, consumer privacy and personal data protection relate to consumers’ autonomy and control over collecting, storing, and using their data (Beke, Eggers, and Verhoef 2018). Here, violations of consumer privacy include, but are not limited to, unwanted marketing communications, highly targeted advertising, surreptitious online tracking, ubiquitous surveillance, privacy failures such as data breaches, identity theft (e.g., Martin and Murphy 2017; Quach et al. 2022), and undisclosed commercialization of data (Martin 2015).

Third, fairness in the context of AI has been primarily examined in the information systems and management literature (e.g., Awad et al. 2022; Ekstrand et al. 2022; Mehrabi et al. 2021). Biases can be introduced in the programming and operation of a system (Herden et al. 2021; Rahwan et al. 2019), and can be classified as data-to-algorithm or data biases (e.g., measurement, representation and aggregation, and sampling and linking biases), algorithm-to-user or method biases (e.g., emergent and correlational biases) and user-to-data biases (e.g., population, historical, and social biases; Mehrabi et al. 2021).

In management, fairness has been defined as a social construct related to equitable treatment of individuals (e.g., in promotion decisions) and encompasses issues such as biases in algorithm-based decision-making and perceptions of procedural unfairness (De Cremer 2020; Hunkenschroer and Luetge 2022). Finally, in an interdisciplinary review of ML and algorithms, van Giffen, Herhausen, and Fahse (2022) identified a total of eight types of biases that can lead to unfair decisions and concluded that there is “no bias-free ML” (p. 105).

The literature discusses the three constructs of ethics, privacy, and fairness in isolation rather than at a more integrated and higher-level perspective. Thus, although research has been undertaken toward specific use of data and technologies in service, such as data management and consumer privacy (Martin and Murphy 2017; Wirtz and Lwin 2009), the foci of these research streams are too narrow to capture the broader scope of emerging technologies, such as ML and AI, and the rapid trend towards digitization and data capture. We believe that a more integrated perspective allows researchers and managers alike to see the bigger picture and better understand the interdependencies between data and digital technologies and the issues that arise.

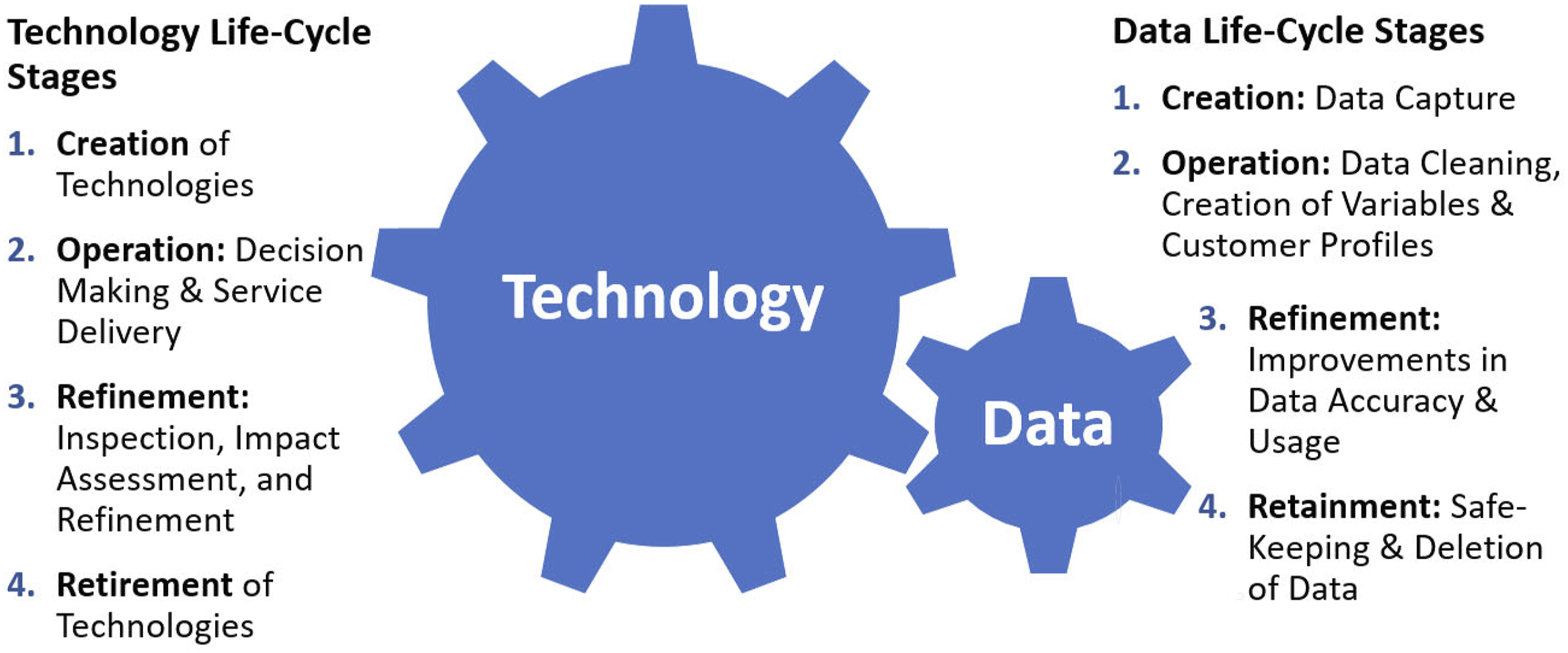

We therefore synthesize key literature on ethics, privacy, and fairness in the social sciences, information systems, law and government, management, marketing, and service (for the key literature reviewed see Table A.1 in the online appendix) using the lens of CDR to provide a more comprehensive understanding of the nature of consumer risks and concerns emerging from digital technologies in service. Specifically, by using this approach, we can begin to further understand the breadth and nature of digital issues as CDR separates data and technology-related issues and distinguishes four life-cycle stages (i.e., their creation, operation, refinement, and retainment; Koshiyama et al. 2021; Lobschat et al. 2021; Sullivan and Wamba 2022). This emerging perspective helps to provide clarity in structuring research on CDR as data and technologies face very different issues in each of the stages, and their life-cycles tend to run at different speeds. As shown in Figure 1, the data cycle moves fast (depicted by the small, fast-moving cog) as data are continuously captured and processed, whereas the technology cycle moves slower (depicted by the larger, slower-moving cog) as the creation and deployment of technology takes more time.

Figure 1. Corporate digital responsibility life-cycles of technology and data.

When examining CDR issues related to ethics, privacy, and fairness across the life-cycle stages, one can pinpoint precisely where specific data and technology concerns originate. For example, capturing data (i.e., in the creation stage) relates to very different ethical, privacy, and fairness issues than building variables as input (i.e., in the operation stage) for algorithm decision making. Furthermore, data and technologies also relate to different CDR issues. For example, while data and the variables derived from it (e.g., credit scores) are inputs for algorithms, it is these algorithms that process the variables and make the decisions. Finally, different units within a service organization tend to be responsible for different life-cycle stages. For example, the creation of technologies would be with research and development (R&D) and new service development, whereas the operation of technologies rests with the service delivery units. As such, we believe that a higher level and more integrated understanding of where exactly particular CDR issues reside in the life-cycles of data and technologies enhances our understanding, management, and mitigation of these issues. Table A.2 in the online appendix shows the organization of the extant ethics, privacy, and fairness literature in relation to both data and technologies across their four life-cycle stages in a service context. We provide a summary discussion of this table next.

The first life-cycle stage covers the capture of data (e.g., through observation, biometric identification, recording of transaction data, and integrating data from third parties such as social media and credit rating agencies) and the creation of technologies (e.g., designing elderly care robots and ML algorithms). The second stage relates to the actual use of these digital assets, whereby data and digital technologies are tightly intertwined. It is then the technologies that inform service providers and customers, make decisions, and execute both intangible (e.g., making a fund transfer) and tangible processes (e.g., providing guests access to hotel facilities using biometrics). The third stage covers the refinement of these digital assets and includes inspection, optimization, and impact assessment of operating them (e.g., through specified optimization cycles, and defined ownership and governance rules) as well as their intended and unintended consequences. The final retainment stage involves data storage and the retirement of data and technologies (e.g., how long can customer data, credit scores, and transaction data be kept?).

Examining Table A.2 in the online appendix shows that the life-cycle stages of data and technologies contain different activities and relate to different CDR issues and concerns for service firms (e.g., data capture refers to privacy concerns, whereas algorithm decision making mainly concerns issues such as fairness or biases). Some concerns relate to more than one category (e.g., indiscriminate data collection can be unethical, unfair, and violate privacy, all at the same time) and were listed in the category that seemed most relevant (e.g., privacy in this example).
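
The classification logic described here (two asset types, four life-cycle stages, and a single listed category for concerns that touch several) can be sketched as a small data structure. This is a minimal illustration only and not a reproduction of Table A.2: the class names, the `primary` field, and the two example entries are hypothetical and are drawn from the examples mentioned in the text.

```python
from dataclasses import dataclass
from enum import Enum


class Asset(Enum):
    DATA = "data"
    TECHNOLOGY = "technology"


class Stage(Enum):
    CREATION = 1
    OPERATION = 2
    REFINEMENT = 3
    RETAINMENT = 4


class Category(Enum):
    ETHICS = "ethics"
    PRIVACY = "privacy"
    FAIRNESS = "fairness"


@dataclass(frozen=True)
class Concern:
    description: str
    asset: Asset
    stage: Stage
    related: frozenset   # every category the concern touches
    primary: Category    # the single category it is listed under

    def __post_init__(self):
        # A concern can only be listed under a category it actually relates to.
        if self.primary not in self.related:
            raise ValueError("primary category must be one of the related ones")


def group_by_cell(concerns):
    """Group concerns by (asset, stage), i.e., one cell of the life-cycle grid."""
    grid = {}
    for concern in concerns:
        grid.setdefault((concern.asset, concern.stage), []).append(concern)
    return grid


# Hypothetical entries based on the examples in the text.
concerns = [
    Concern(
        "indiscriminate data collection",
        Asset.DATA,
        Stage.CREATION,
        frozenset({Category.ETHICS, Category.PRIVACY, Category.FAIRNESS}),
        Category.PRIVACY,
    ),
    Concern(
        "biased algorithmic decision making",
        Asset.TECHNOLOGY,
        Stage.OPERATION,
        frozenset({Category.FAIRNESS}),
        Category.FAIRNESS,
    ),
]

grid = group_by_cell(concerns)
for (asset, stage), items in grid.items():
    for concern in items:
        print(f"{asset.value}/{stage.name.lower()}: "
              f"{concern.description} -> {concern.primary.value}")
```

Keeping the full set of related categories alongside the single listed one preserves the information that a concern such as indiscriminate data collection spans ethics, privacy, and fairness, even though it appears only once in the grid.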

Furthermore, the life-cycle stages illustrate vastly different technology-related CDR issues regarding ethics and fairness. For example, the creation phase shows many violations in data management regarding privacy issues (e.g., over-collecting of data, lack of informed consent for collection). In contrast, the operation phase raises issues such as discrimination against specific customer groups, unintended errors, incorrect algorithm training, and learning oversights (LeCun, Bengio, and Hinton 2015), issues with automation of decision oversight (Schildt 2017), and lack of transparency and explainability of AI outcomes (Gillis and Spiess 2019; Rai 2020).

Given the gamut of CDR risks discussed in different streams of literature and their many contexts, the synthesis provided by the CDR life-cycle perspective can help researchers and practitioners alike to put a particular issue in context and understand how it relates to the wider universe of CDR, and the other risks in the same stage across data and technologies.

Origins of CDR Issues in Digital Service Ecosystems

Although the life-cycle perspective helps to understand and categorize the nature of CDR issues, it does not explicitly consider that, in addition to the focal service firm, several other players are often involved, and that CDR concerns can originate from different corners of a firm’s digital service ecosystem.

The Nature of Digital Service Ecosystems

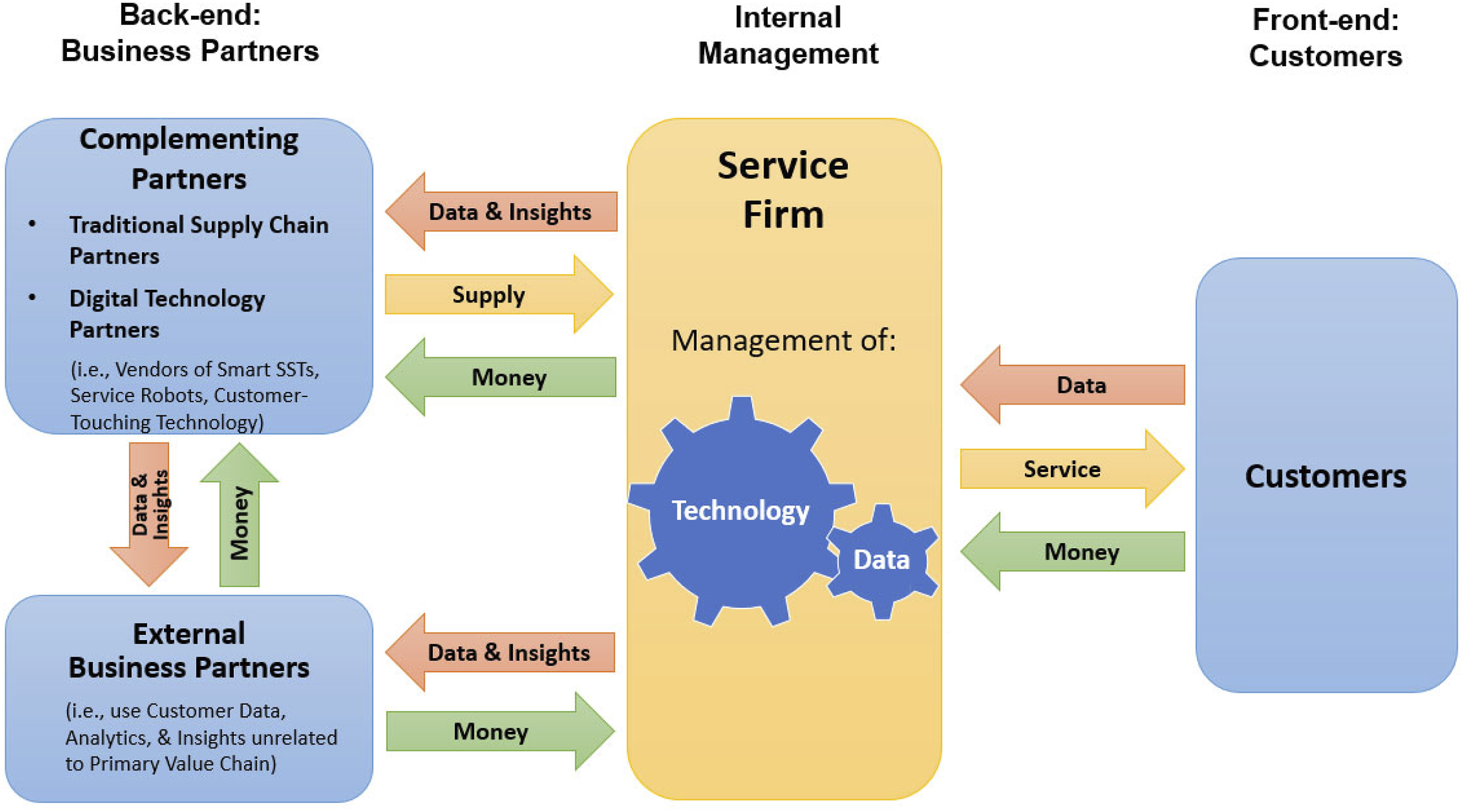

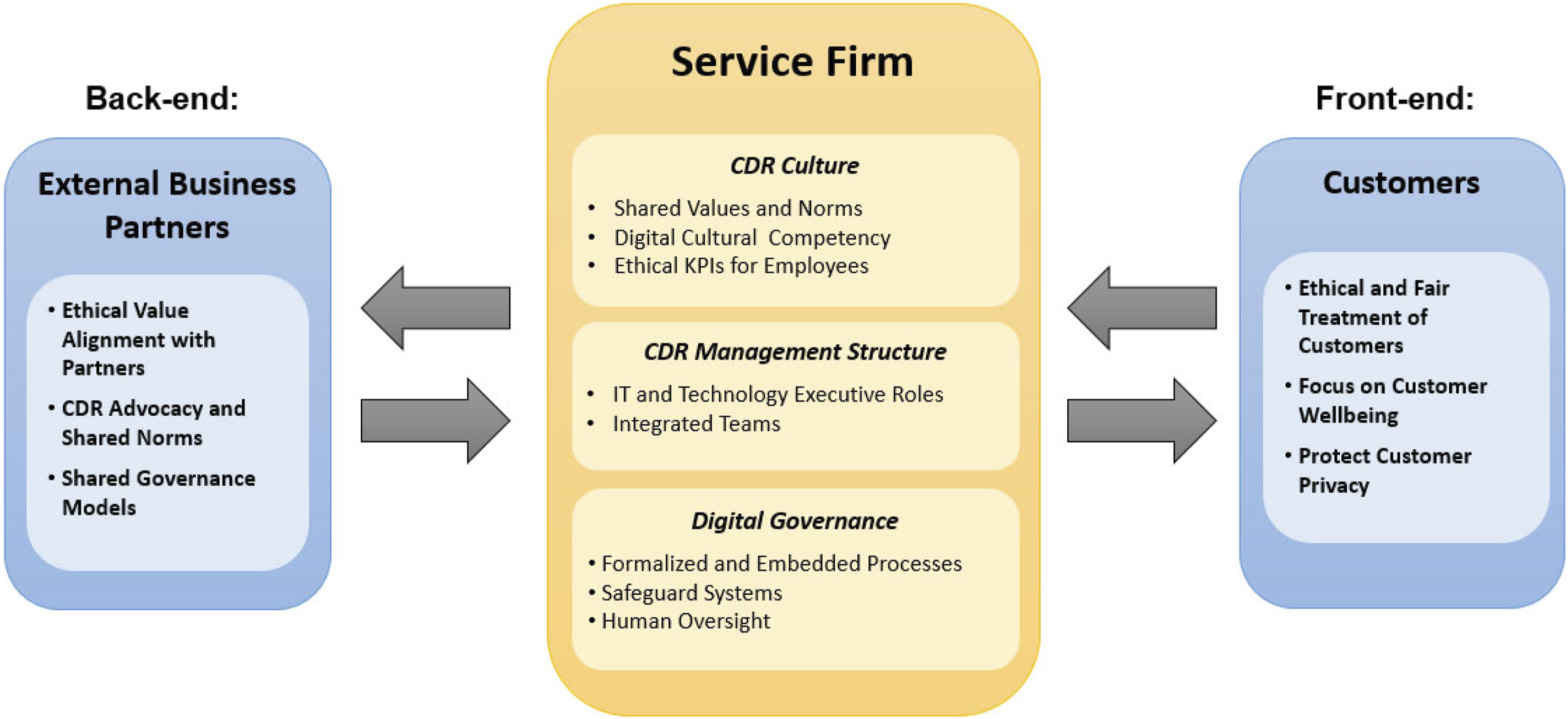

Service organizations have many touchpoints in a customer’s journey with vast opportunities to capture data, deploy technology, and generate insights. These capabilities have led to new digital service ecosystems with the service firm in the center orchestrating value creation, exchange and capture, and governing the ecosystem of customers, complementors, and external business partners. Informed by the digital platform model by Wirtz et al. (2019) and Rangaswamy et al. (2020), we examine the central value exchanges present within the digital service ecosystem as shown in Figure 2. This ecosystem depicts the service firm’s business model from two sides: a front- and back-end with related flows of money, service, data, insights, and technologies. The front-end consists of the customer-facing side containing the service delivery system and customer interface, while the back-end covers the service firm’s relationship to business partners. The dynamics within this ecosystem are fundamental to understanding the origins of CDR issues.

Figure 2. The digital service ecosystem model.

The Front-end: Customer and Service Firm Exchanges

The front-end in our model shows the customer interface, illustrating the value exchanges between customers and the service firm. Customers pay money for the service but also allow capturing of their data by the service firm. Through this, they receive value through digitally optimized service delivery via, for instance, customization, personalization, and enhanced convenience (Huang and Rust 2018; Rangaswamy et al. 2020). Intriguingly, these indisputable customer benefits lead directly to CDR concerns as customers find it increasingly difficult to opt out and not be subjected to potential ethical, privacy, and fairness risks (Puntoni et al. 2021; Rahwan et al. 2019).

The data are not only useful for the firm’s own service delivery but can also serve as input for business partners on the back-end of the ecosystem, where they open up opportunities for training AI models, optimizing predictive models, and gaining insights. In fact, the value created can be so significant that customers can even be offered the service for free (Gu, Kannan, and Ma 2018). Although consumers may sometimes be able to opt out of their data being used by the firm and its business partners, this typically does not apply to anonymized data. This kind of data can be re-purposed for various outputs (e.g., predictive analytics) unrelated to the service transaction they are based on (Andrew and Baker 2021).

The Back-end: Business Partner Exchanges

The back-end of the digital service ecosystem covers two main groups of business partners and their interactions with the focal firm. These parties fulfill various roles.

Complementing Partners

There are two types of complementors that support the back-end ecosystem. First, traditional supply chain partners complement and provide needed services and goods as contributions to the service firm’s value chain (e.g., vendors who supply drinks, food, and equipment to a fast-food chain) and receive important market information and money for their goods and services. Sharing market information and analytics makes a supply chain more productive, effective, and efficient (Lee, So, and Tang 2000; Quach et al. 2022; Wamba et al. 2020). However, this sharing is also subject to CDR concerns related to data capture, consumer privacy, and algorithmic biases (Hermann 2022; Kordzadeh and Ghasemaghaei 2021). Although anonymizing individual customer data may mitigate some issues (e.g., if only aggregated data are required for supply chain optimization), this may not always be possible (e.g., customer addresses for delivery services) and CDR concerns will emerge (Martin 2015).

Second, digital technology partners provide services and technologies such as AI and robots that are typically trained with, and powered by, service firm-provided data. We expect that most of these technologies will not be designed and developed by service firms in-house. Instead, they are likely to be sourced from increasingly global providers of these technologies that have cutting-edge R&D capabilities and the scale required to deliver high-quality and cost-effective solutions. This is akin to what is common practice for traditional technologies such as automatic teller machines (ATMs) and point-of-sale (POS) equipment. Banks do not develop and manufacture their own ATMs, nor would retailers generally do so for their POS equipment.

Here, new types of vendors arise that innovate and deliver customer-facing digital technologies (Bornet, Barkin, and Wirtz 2021). Examples include vendors of smart and AI-enabled self-service technologies (SSTs), retail solutions based on service robots (e.g., SoftBank’s Pepper), customizable platforms for digital agents (e.g., ANZ Bank’s digital agent Jamie is powered by Soul Machines, a vendor of “digital people”) and chatbots (e.g., IBM’s Watson Assistant, a leading provider of conversational AI) that can be tailored to a service organization’s needs. The design and development of these customer interfacing technologies often dictate how a service firm interacts with its customers and the types of data to be captured. Consequently, CDR risks need to be discussed between service firms and these partners. For example, should biometric customer identification be a design feature of a particular technology?

Finally, digital technology partners not only supply solutions that are beneficial for the service firm, but the provided data are also valuable for improving their own market offering, training, and the optimization of their algorithms (Martin 2015). That is, service firms need to work closely with these vendors not only to ensure that technologies and equipment deliver on quality and budget, but also that they mitigate potential CDR risks and address questions such as “Who owns the inferences that are created by customer data?” and “What happens if digital technology partners use the customer-data-based insights to provide services to third parties?”, as illustrated in the exchange pathways in Figure 2.

External Business Partners (Third-Party Users of Data and Insights)

The increasing availability of data and insights can lead to lucrative applications and revenue opportunities for service firms unrelated to the services delivered at the front-end. Such a business represents a secondary market for the focal firm (e.g., an online retailer can sell consumer data and insights to other firms such as financial institutions and data brokers). That is, while traditional supply chain partners and digital technology partners fulfill a complementor role in service delivery, the back-end can also involve external business partners who are not part of the primary value chain. Instead, they offer additional revenue streams to the service firm by monetizing customer data, associated insights, and prediction models (Beke, Eggers, and Verhoef 2018; Martin, Borah, and Palmatier 2017).

This activity is labeled in the literature as value harvesting (Andrew and Baker 2021; Gawer 2021), which occurs when the data streams and insights gained from the customer interface (Breidbach and Maglio 2020) enter a hidden value chain, and where they are shared with and sold to data brokers, aggregators (Martin 2015), and other users. The data and insights are then repackaged and integrated into new service offers, frequently for yet another user group (Leidner and Tona 2021).

These value exchanges are often neither unidirectional nor solely dyadic. For example, data brokers not only buy data and insights from service firms; they frequently also purchase and collate data directly from consumers (both private and public, and direct and inferred data), and as such, are often commissioned by service firms to provide consumer lists and insights. Brokers such as Quotient and AlikeAudience, for instance, provide consumer lists to various service firms from data extrapolated from multiple (and often non-disclosed) data sources (e.g., mobile app downloads and usage, geolocations, and online purchases). The sheer magnitude and complexity of these data-sharing ecosystems mean it is often impossible to determine exactly where data are derived from and whether consumer privacy regulation is upheld.

These practices have received significant criticism. Zuboff (2019) calls this “surveillance capitalism” and defines it as “a new economic order that claims human experience as free raw material for hidden commercial practices of extraction, prediction, and sales” (p. 8). The issue with surveillance capitalism is not just one of privacy: this new market is based on the monitoring and surveillance of consumers and often leads to manipulating their perceptions, beliefs, and behaviors (frequently in the secondary markets of the ecosystem) for the economic gain of those in control (Zuboff 2015). Interestingly, if a firm acts as a private regulator within the digital ecosystem (e.g., controlling access, relationships, data exchange, and access to data and insights), it has the potential to harvest a significant proportion of the value generated, often without even owning the underlying resources (e.g., the customer data; Gawer 2021).

In sum, the discussion on the digital service ecosystem shows that service firms will face CDR issues not only in their interactions with customers (front-end), but also in the back-end with their many and varied supply chain, technology, and other business partners. There is the potential for significant value creation and capture, and as service firms have effectively become private regulators of their ecosystems, they are at risk of abusing that position for their own advantage and creating CDR issues.

Why Do Service Firms Engage in Poor CDR Practices?

One can assume that digitally sophisticated service firms would want to follow good CDR practices. Given the risks associated with poor practices, we examine next the question of why firms engage in them.

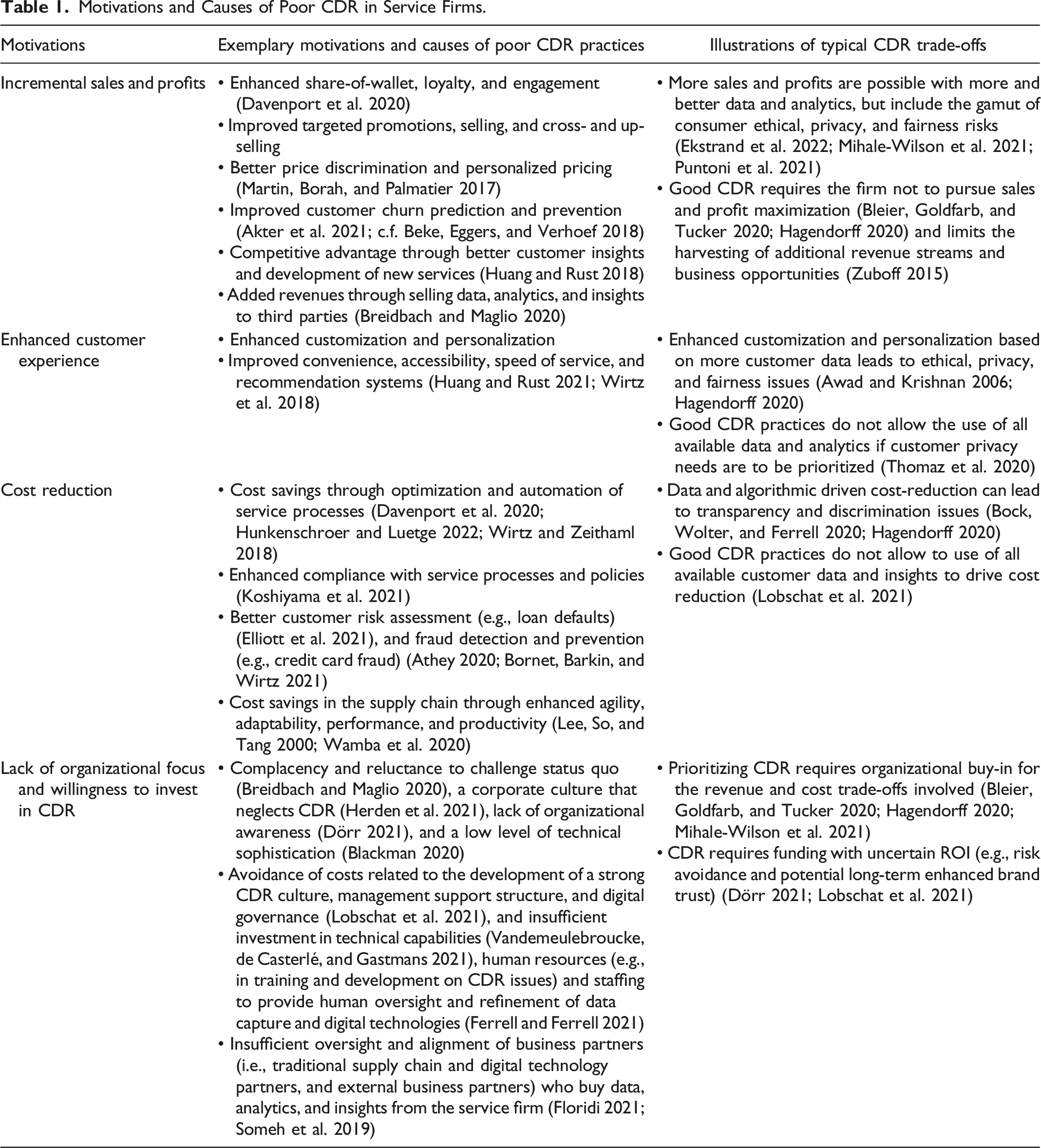

Table 1. Motivations and Causes of Poor CDR in Service Firms.

The first category relates to discussions in many AI articles that show a clear sales and profit opportunity for service firms in capturing and utilizing consumer data and embedding technology. For example, data, analytics, and AI help improve key marketing processes ranging from identifying promising prospects, crafting highly tailored messages to sell, cross- and upsell, and personalized pricing, to initiatives that reduce churn and increase share-of-wallet, engagement, and stickiness (e.g., Akter et al. 2021; Davenport et al. 2020; Huang and Rust 2021; Martin, Borah, and Palmatier 2017). That is, incremental sales and profits can be achieved with more and better data and analytics, but they are also susceptible to ethical, privacy, and fairness risks (Ekstrand et al. 2022; Mihale-Wilson et al. 2021; Puntoni et al. 2021). Furthermore, the financial value of data appropriation and use includes data monetization in various permutations of data-based business models (e.g., advertising, selling insights derived from data and analytics) and the outright selling of data to third parties (Breidbach and Maglio 2020; Zuboff 2015).

Following good CDR practices often does not allow service firms to maximize sales and profits from their core business (Bleier, Goldfarb, and Tucker 2020; Hagendorff 2020) and limits the harvesting of additional revenue streams and business opportunities (Zuboff 2015). These tensions between CDR and business objectives can be seen clearly in sophisticated digital platform businesses such as Facebook, Google, and Amazon. These firms face criticism for driving the stickiness of their platforms, which causes CDR-related issues that range from privacy violations and encouraging addiction to causing social isolation and depression (Keles, McCrae, and Grealish 2020; Leidner and Tona 2021). Moreover, these tensions will grow in the future with the increasing sophistication and deployment of data capture and digital technologies such as biometrics (Breidbach and Maglio 2020; cf. Rangaswamy et al. 2020).

The second category relates to enhancing the customer experience through customization, personalization, convenience, enhanced accessibility, and speed of service (Huang and Rust 2017; Wirtz et al. 2018). For example, chatbots are almost always available without wait, and customers are offered tailored recommendations based on their profiles (e.g., as used by Amazon and Netflix). However, while customers receive direct benefits, each of these practices can have CDR-related issues such as coercion, privacy concerns, lack of autonomy, fairness, and discrimination issues, amongst others (Awad and Krishnan 2006; Hagendorff 2020). In addition, good CDR practices do not allow the use of all available data and analytics if customer wellbeing is to be prioritized (Thomaz et al. 2020). AI focused on delivering personalized service may also result in suboptimal outcomes for customers as the technology may optimize in unpredicted ways (Bock, Wolter, and Ferrell 2020) and may even be explicitly designed to trade off firm profitability against consumer welfare. For example, “Recommended” or “Our top picks” features on travel websites appear high in search results because they maximize the expected profit of the online travel agent (e.g., commission and booking likelihood) rather than because they offer the best value to the customer (Hunold, Kesler, and Laitenberger 2020).

Third, service firms are interested in deriving cost savings from optimizing and automating customer service processes (Davenport et al. 2020; Hunkenschroer and Luetge 2022; Wirtz et al. 2018). This might include improving customer risk assessments (e.g., loan defaults and insurance claims), fraud detection and prevention (e.g., credit card fraud) (Athey 2020; Bornet, Barkin, and Wirtz 2021; Elliott et al. 2021), higher compliance with processes and policies to mitigate risks, and cost reduction through fewer exceptions (e.g., as in medical claims processing; Koshiyama et al. 2021). Furthermore, sharing data and analytics offers service firms exciting cost-saving potential in their supply chains through enhanced agility, adaptability, performance, and productivity (Lee, So, and Tang 2000; Wamba et al. 2020). That is, increasing digitization, data capture, analytics, and AI make the supply chain more effective and lead to benefits including reduced overstocking, wastage, and stock outage. Although these objectives are frequently aligned with customer needs, they can also carry CDR risks related to privacy, fairness, transparency, and discrimination (Breidbach and Maglio 2020; Bock, Wolter, and Ferrell 2020; Hagendorff 2020).

Finally, service organizations may be reluctant (or unable, in the case of start-ups or small firms) to spend the necessary funds on building a strong CDR culture (e.g., training and communications), management support structures, and governance (e.g., “Who is responsible for developing, monitoring, and enforcing CDR-related policies?” and “How are business partners aligned and governed with regard to CDR?”). Low investments in technical capabilities (Vandemeulebroucke, Casterlé, and Gastmans 2021), human resources (e.g., in training and development on CDR issues), and sufficient staffing to provide human oversight of AI and refinement of data capture and technologies (e.g., impact review and redesign) (Ferrell and Ferrell 2021; Lobschat et al. 2021; Mueller 2022) can lead to errors in judgment, unforeseen and accidental CDR issues, and human negligence (Vandemeulebroucke, Casterlé, and Gastmans 2021). Relatedly, an unwillingness to invest in CDR may also be caused by complacency, a low level of technical sophistication (Blackman 2020; Breidbach and Maglio 2020; Herden et al. 2021), and a low level of organizational awareness of CDR (Dörr 2021).

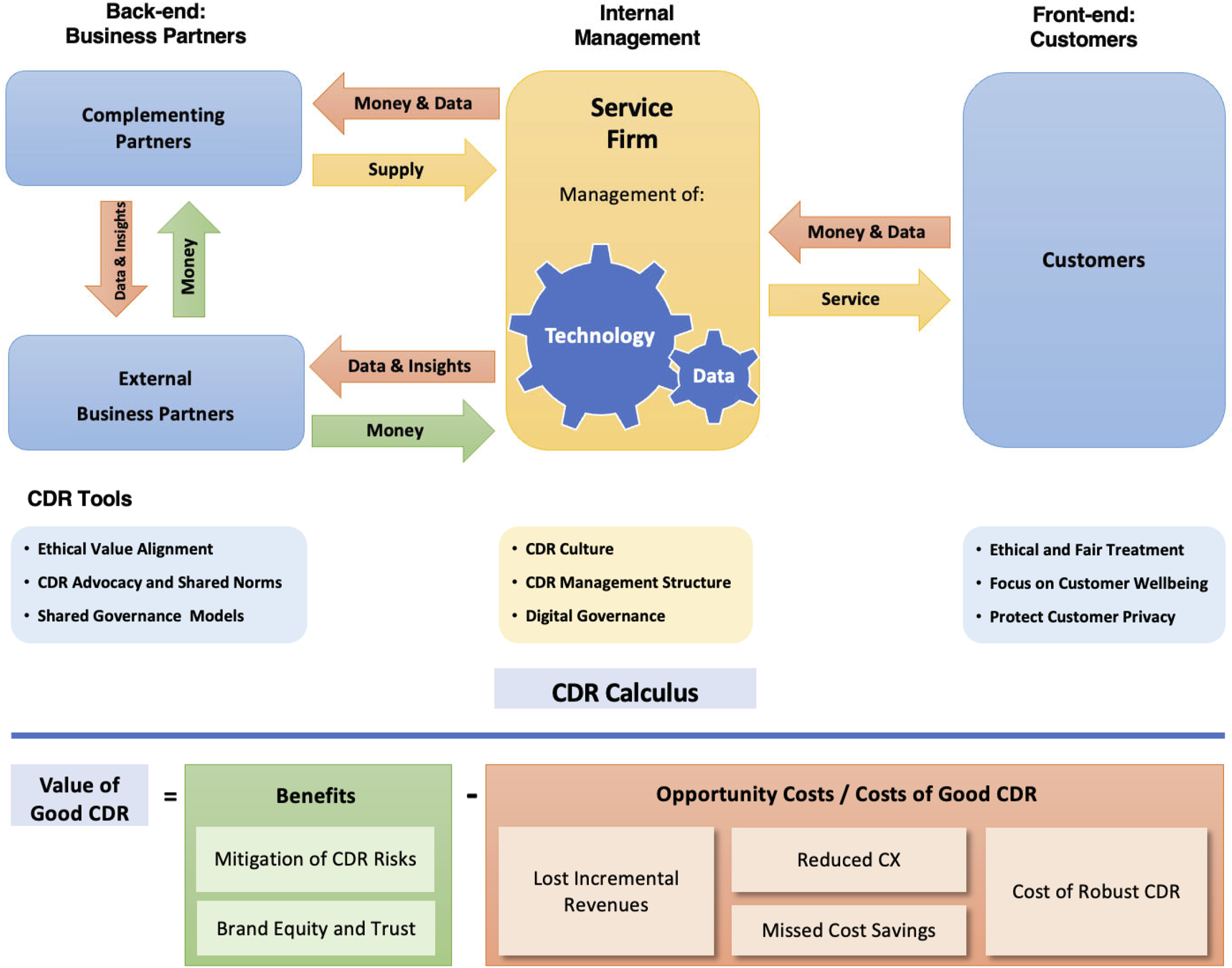

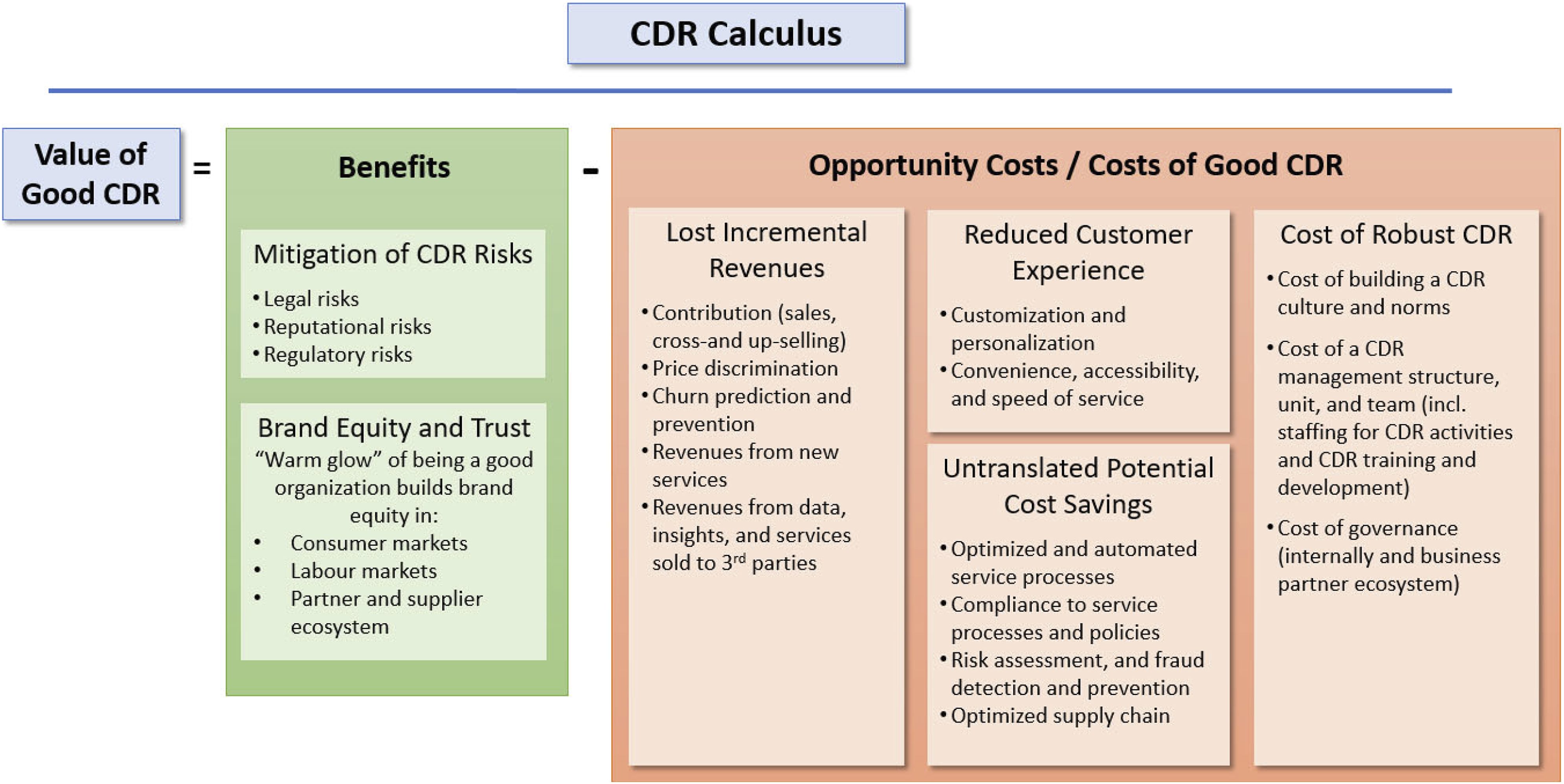

A Service Firm’s CDR Calculus

It is evident from the previous discussion that organizational objectives are often opposed to the adoption of good CDR practices. The resultant tensions can be viewed as trade-offs that service firms need to navigate in order to be profitable yet ethical or as a “utilitarian perspective weighing the benefits and costs” (Hermann 2022, p. 44). The privacy literature developed the “privacy calculus” to address such trade-offs from the consumer perspective and suggests that consumers weigh the benefits and costs, which then determine their privacy responses (Gouthier et al. 2022; Smith, Dinev, and Xu 2011). Likewise, we argue that service firms weigh the benefits and costs of good CDR to determine how much they will engage in CDR. Table 1 lists the underlying motivations of poor CDR behavior, which show that good CDR practices often come at a cost that requires service managers to navigate challenging trade-offs between profit impact (i.e., “doing well”) and customer wellbeing (i.e., “doing good”). These costs can be summarized as opportunity costs (e.g., lost incremental revenues, reduced customer experience, and unrealized potential cost savings) and the costs of setting up and operating good CDR (e.g., cost of building a CDR culture, the management structure and team, and the cost of CDR governance). Figure 3 provides an overview of these costs.

Figure 3. A service firm’s corporate digital responsibility calculus.

Engaging in good CDR also offers benefits for service firms. First, CDR helps avoid and mitigate legal, reputational, and regulatory risks (Bock, Wolter, and Ferrell 2020; Mueller 2022). Several high-profile cases have shown this, including a regulatory investigation of Goldman Sachs for potentially using an algorithm that gave men higher credit lines than women, which led to accusations of discrimination (Blackman 2020). Second, brand equity and trust are critical for service firms to thrive in the service economy as customers surrender ever more privacy, decisions, and even behavioral autonomy to their digitally enhanced service providers (Breidbach and Maglio 2020; Martin 2015; Martin, Borah, and Palmatier 2017). Having good CDR as a dimension of one’s brand image may become a competitive advantage for building brand equity, consumer trust, and loyalty (e.g., Bleier, Goldfarb, and Tucker 2020; Seele and Schultz 2022).

No service firm is immune to CDR-questionable behaviors and judgment calls, but we feel that it is crucial that the future service organization has an image and reputation of being CDR trustworthy. Using the CDR calculus and viewing service firms’ CDR behaviors as trade-offs between benefits and costs offers critical insights that extend beyond the current commonplace view on risks and concerns. Service organizations should assess their CDR calculus specific to their data practices and technologies. We expect service firms to engage in good CDR if the calculus is positive, or at least not too negative, so that firms are willing to forgo some profit for not “doing bad.” However, if forgone profits become too high, good CDR behaviors seem less likely, and regulation will have to step in. Floridi (2021) shared this somewhat pessimistic view and concluded that the era of self-regulation to address ethical challenges related to AI is over. Although self-regulation has its advantages (e.g., it is faster and more agile, and fosters an ethically constructive dialogue, which are important as digital technology develops rapidly), he concluded that “the time has come to acknowledge that, much as it was worth trying, self-regulation did not work. …Self-regulation needs to be replaced by the law; the sooner, the better” (Floridi 2021, p. 622).
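The weighing of benefits and costs described in this section can be sketched as a simple expression. The notation is a hypothetical shorthand introduced here for illustration only; it is not a formal model from the cited literature, and the terms are not assumed to be directly measurable or commensurable in practice.

```latex
% Illustrative sketch only: all symbols are hypothetical labels
% for the benefit and cost categories named in the text.
\[
  \text{CDR calculus}
  \;=\;
  \underbrace{\bigl(B_{\text{risk}} + B_{\text{brand}}\bigr)}_{\text{benefits of good CDR}}
  \;-\;
  \underbrace{\bigl(C_{\text{opp}} + C_{\text{ops}}\bigr)}_{\text{costs of good CDR}}
\]
% B_risk  : avoided legal, reputational, and regulatory risks
% B_brand : brand equity, consumer trust, and loyalty
% C_opp   : opportunity costs (lost incremental revenues, reduced
%           customer experience, unrealized cost savings)
% C_ops   : costs of setting up and operating good CDR
%           (culture, management structure and team, governance)
```

Read this way, good CDR is expected when the expression is positive or only mildly negative, whereas a strongly negative value corresponds to the situation in which regulation would have to step in.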

Conclusions, Implications, and Further Research

Conclusions and Implications for Theory

This article shows first that digital service technologies carry serious CDR risks because of the vast streams of customer data involved and the expansive scope of rapidly advancing technologies and their omnipresence, opacity, and complexity in service delivery (e.g., Wixom and Ross 2017). Furthermore, this article provides a number of frameworks for understanding the “what,” “where,” and “why” of these risks. First, using the data and technology life-cycles, we have extended multi-disciplinary understandings of ethics, privacy, and fairness as they pertain to the inherent risks of these digital assets in a service context. The life-cycle perspective provides researchers and practitioners alike with a framework for organizing the many CDR-related risks and helps to understand “what” (i.e., data and technologies and whether the type of risk involved relates to ethics, privacy, or fairness) and “when” (i.e., the stage in the life-cycle from creation all the way to retainment) issues need to be addressed.

Second, we explain the flows of money, service, data, insights, and technologies in the digital service ecosystem model. These flows are at the center of value creation, exchange, and capture in the ecosystem of service firms, customers, complementors, and external business partners (Rangaswamy et al. 2020; Wirtz et al. 2019) and answer the question of “where” CDR issues originate: internally, at the customer interface, with supply chain and technology partners (e.g., Martin 2015), and with external partners (Beke, Eggers, and Verhoef 2018; Breidbach and Maglio 2020). The dynamics within this service ecosystem are fundamental to understanding where CDR issues originate. In particular, this ecosystem perspective shows that data and insights lead to lucrative value creation opportunities that are unrelated to the service delivered on the front end (cf. Andrew and Baker 2021; Beke, Eggers, and Verhoef 2018; Martin, Borah, and Palmatier 2017). Profit opportunities are attractive and, although value is created for all stakeholders, it is not always clear if customers receive a fair share of that value created through the data and insights they contribute (Gawer 2021).

Finally, we advance that service firms face hard trade-offs between good CDR practices and organizational objectives. We introduce the CDR calculus to formally acknowledge these value trade-offs by weighing the benefits and costs of good CDR. The CDR calculus makes the trade-offs explicit and answers the question of “why” service firms engage in poor CDR practices. It also shows that the widely discussed normative (i.e., deontological) approach that tells companies what they should do needs to be supplemented by a utilitarian perspective (cf. Hermann 2022).

Managerial Implications

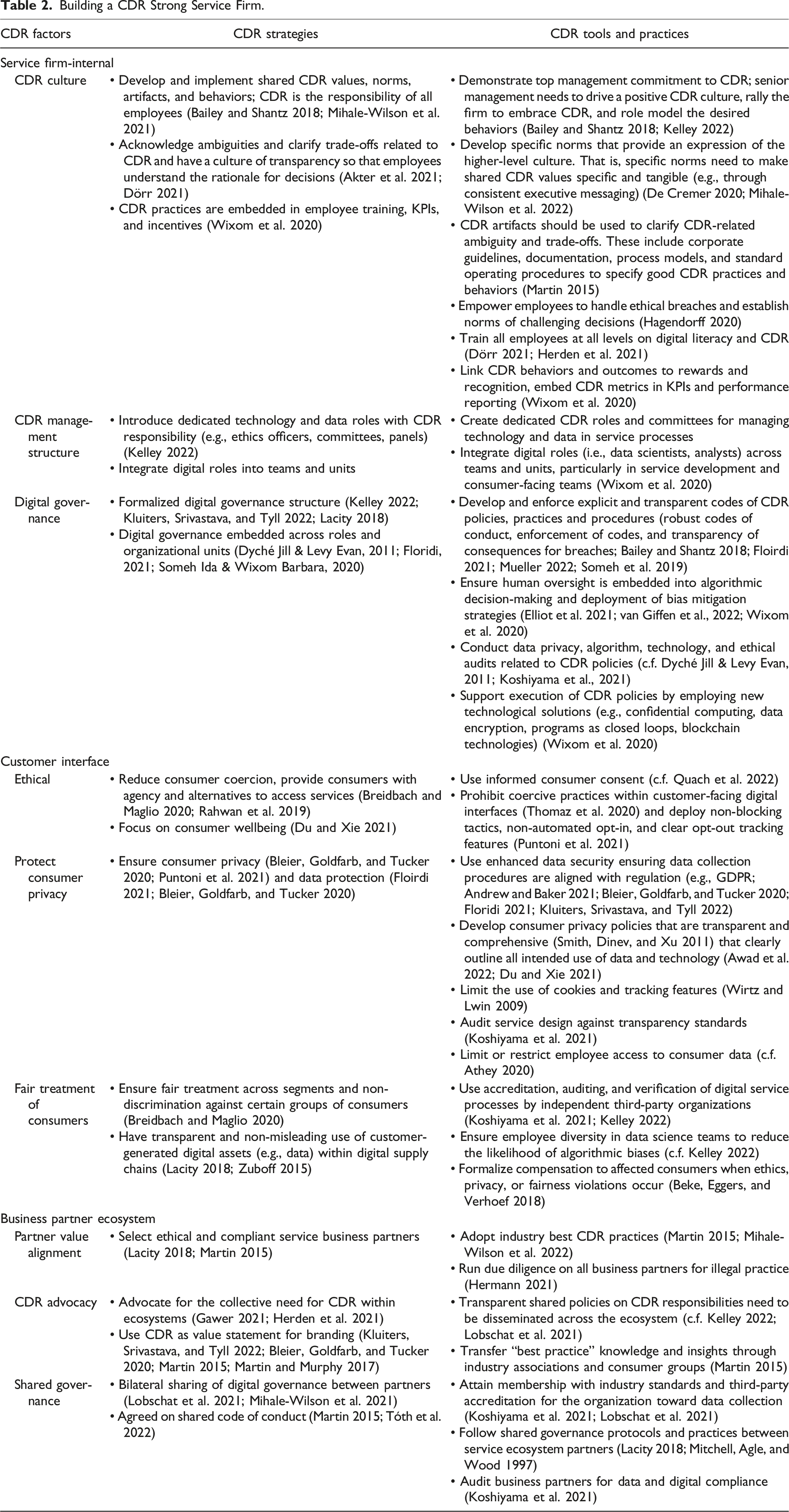

Building on the digital service ecosystem model, we propose that a service firm’s CDR behaviors can be shaped and enhanced by addressing CDR tensions that arise from factors related to customers on the front-end and business partners at the back-end. Figure 4 provides an overview of these factors, while Table 2 offers service managers tangible strategies, practices, and tools to help service firms build and sustain robust CDR. Some CDR practices can be implemented quickly and easily because they do not have significant (opportunity) costs in terms of sales and profits. In contrast, others involve serious trade-offs that need careful consideration, as outlined in the CDR calculus. We provide an overview of these salient CDR strategic imperatives and their sources of tension for service firms next.

Figure 4. Factors shaping corporate digital responsibility in service firms.

Table 2. Building a CDR Strong Service Firm.

Internal Service Firm Design

“Managing ethical digital processes is not a technological but a corporate challenge” (Kluiters, Srivastava, and Tyll 2022, p. 3). Here, service firms need to focus on building company-wide norms where ethical practices are paramount (Bailey and Shantz 2018; De Cremer 2020), yet the challenge lies in their ability to embed CDR as a cultural competency that takes responsibility for their ethical digital footprint. For this, organizational stakeholders, particularly employees, must fully understand the practices they need to uphold, and be rewarded and motivated to commit to them (Mihale-Wilson et al. 2021; Bailey and Shantz 2018). A critical element of embedding a CDR culture is its prioritization within the firm, where good CDR development should be made an important KPI for leaders and their teams (Wixom et al. 2020). This should go beyond box checking, window dressing, and even worse, machinewashing (i.e., intentionally misleading behaviors and communications about a firm’s ethical AI practices; Seele and Schultz 2022).

Additional supporting management structures such as the appointment of IT and technology executive roles (i.e., chief information officers, chief data officers, and chief technology officers) as well as integrated teams (e.g., data scientists and analysts embedded into new service development and marketing teams), can be used to disseminate organizational commitment to CDR practices effectively (Kelley 2022).

Unfortunately, for many service firms, the decisions and processes around data and technology creation and usage are not transparent (Someh and Wixom 2020). Digital governance should be formalized and embedded across organizational units and roles, and include practices and systems as outlined in Table 2 (see Dyché and Levy 2011; Kluiters, Srivastava, and Tyll 2022; Lacity 2018; Mueller 2022; Someh and Wixom 2020; Wixom et al. 2020). Additionally, safeguard systems that respond to any grey areas and CDR concerns that arise should be made explicit, especially as AI entities are introduced into service processes and human supervision of such systems remains a critical component of digital service exchanges.

Customer Interface

Ethical and fair treatment of consumers is increasingly viewed as a fundamental human right, especially in services such as healthcare and utilities (World Health Organization 2017). Therefore, a focus on customer wellbeing (Du and Xie 2021) and non-discrimination (Breidbach and Maglio 2020) should be applied to the design and integration of digital services.

Further, consumer privacy and customer data protection are core principles of good CDR. To address violations of consumer data integrity (e.g., Amazon’s Ring providing private camera footage to public authorities; Facebook’s WhatsApp sharing user data with advertising partners), service organizations must align their privacy policies with (and go beyond) industry standards and regulation (e.g., GDPR) and ensure their robust execution over the entire data and technology life-cycle.

Finally, an organization’s transparency about its business model and how it uses customer data is essential (Lacity 2018; Bleier, Goldfarb, and Tucker 2020). The customer should not be coerced or forced, particularly in automated processes, to partake in CDR-questionable behaviors such as surrendering privacy and data to access value within a service (Puntoni et al. 2021). In the future, service firms may require external auditing of service design characteristics against industry standards and regulatory requirements of CDR (Koshiyama et al. 2021; Martin and Murphy 2017).

Business Partner Ecosystem

Service firms play a critical role in advocating for the collective need for CDR across and within their ecosystems. In particular, large service firms that can exert power within an ecosystem should be “responsible for the conduct and treatment of users throughout the chain” (Martin 2015, p. 75). That is, they need to maintain due diligence in selecting ethical and compliant service technology partners and govern the ecosystem with aligned values and norms, focusing on the risks surrounding data misappropriation, exploitation, and unintended use and analytics (Lacity 2018). Furthermore, they should transfer knowledge about responsible CDR practices and take a leading role in advocating ecosystem and industry norms and expectations (Martin 2015). Such signaling can lead to far-reaching adoption and acceptance of standardized practice that values CDR at the organizational, industry, and societal level (cf. Maignan, Ferrell, and Ferrell 2005).

To ensure a representative and fair power dynamic between service firms and their business partners, and to reduce the likelihood of accidental or coerced CDR issues, external industry practice guidelines and mutual internal regulation systems should be adopted to govern their interactions (Koshiyama et al. 2021). Shared governance models may be based on existing regulation, external non-profit auditing organizations, and industry codes of conduct to reduce managerial friction and potential conflicts of interest between partnering organizations (Mitchell, Agle, and Wood 1997).

Finally, it seems that the CDR orientation of a service firm can be placed on a continuum from an extreme profit orientation (and neglect of CDR) to a strong CDR orientation (relinquishing of significant profit potential). Judging by the many scandals and the reluctance of big tech to give in to various calls for enhanced privacy and consumer protection, we believe that the current tilt is too heavily focused on profits. However, we proffer that no service firm is immune to CDR-questionable behaviors and judgment calls. That is, for service firms to reap the long-term benefits from this digital service revolution, they need to win over their customers and other key stakeholders and keep them “on board” to build trust in the firm’s CDR. Having a robust CDR framework in place (i.e., culture, management structure, and governance) and visibly addressing CDR issues is likely to be rewarded by customers and business partners alike.

Further Research

Given the infancy of CDR exploration in the service literature, we propose multiple areas for future research that collectively provide an exciting and impactful research agenda. Although Table A.3 in the online appendix provides a comprehensive overview of future research streams and key research questions, we emphasize next a few of the most pertinent research gaps surrounding CDR in service.

First, this notion of a trade-off between conflicting goals has not received much attention in the academic literature (Mihale-Wilson et al. 2022) but seems to be a key underlying reason for poor CDR practices. The CDR calculus makes these tensions explicit and offers substantive scope for further empirical investigation into the benefits and associated costs of CDR not only within service firms but in their broader service ecosystems. Significant work in the management literature on organizational ambidexterity (e.g., Raisch and Birkinshaw 2008) and in service research on cost-effective service excellence (Wirtz and Zeithaml 2018) shows how firms can deal with conflicting objectives. We believe that research on CDR-related tensions, and on the role that organizational ambidexterity (i.e., leadership, structural, and contextual ambidexterity; Raisch and Birkinshaw 2008) can play in implementing robust CDR, offers exciting opportunities.

Second, it is not clear how customers perceive, value, and respond to a service firm’s CDR performance. Specifically, how do a service firm’s CDR behaviors and practices influence normative, affective, and behavioral consumer responses such as engagement, trust, and loyalty (Martin and Murphy 2017)? For example, would more CDR-trustworthy service firms enjoy greater consumer advocacy, and would their customers be more willing to provide data and allow tracking across service industries and applications?

Third, the business partner ecosystem has attracted scant research attention beyond data and insights being sold to third parties. Issues in the supply chain and with technology partners have yet to be explored (cf. Wixom and Ross 2017). For example, it would be important to understand what governance procedures are most effective in encouraging CDR-compliant practices of ecosystem business partners (Lacity 2018).

Fourth, it is worthwhile to explore how technology can be used to enhance a service firm’s CDR performance. With advancements in digital technology and increased access to data, it seems fruitful to explore how recent developments in AI, encryption, ML, blockchain, and other technologies could mitigate CDR risks for customers and service firms in every stage of the data and technology life-cycle. For example, AI can already identify deep-fake photos and, rather than causing discrimination, AI can be designed to overcome it (Cukier 2021). It would be essential to understand better the role AI can play in encouraging, monitoring, and enforcing CDR-compliant behaviors.

Finally, it is essential to explore the impact of CDR performance on service firms’ outcomes and understand better how and under what circumstances good CDR can be a competitive advantage (Martin and Murphy 2017). What industry, customer, and other parameters can moderate the relationship between a service firm’s quality of CDR practices and the perceived “warm glow” and related brand equity with its various stakeholders (McLeay et al. 2021)? Furthermore, as with greenwashing in the environmental, social, and governance (ESG) domain, firms may be tempted not to implement costly CDR strategies but instead engage in “machinewashing” (Seele and Schultz 2022). In their review of stakeholder theory, Parmar et al. (2010) concluded that “the tension between financial and normative/social demands on the firm is real and needs to be examined in greater detail” (p. 413). We concur and suggest that research on the costs and benefits of good vs. poor vs. “machinewashed” CDR is needed.

In closing, this article deals with a new but increasingly important topic for our service firms’ sustainability and customers’ wellbeing. It contributes to understanding the importance, gamut, origins, and causes of CDR risks in service, the CDR trade-offs service firms face, and how firms can mitigate them. This is important, as “the future winners in business are organizations that address these topics accordingly” (Kluiters, Srivastava, and Tyll, 2022, p. 21). We hope that our article provides a starting point for further investigation into this critical topic.

Supplemental Material

Supplemental Material - Corporate Digital Responsibility in Service Firms and Their Ecosystems

Supplemental Material for Corporate Digital Responsibility in Service Firms and Their Ecosystems by Jochen Wirtz, Werner Kunz, Nicole Hartley, and James Tarbit in Journal of Service Research

Footnotes

Acknowledgments

The authors gratefully acknowledge the valuable input and feedback provided by Ida Asadi Someh (Senior Lecturer in Business Information Systems at UQ Business School) and Jamie Ford (Head of Partnerships at Five Good Friends, Brisbane) to earlier drafts of this article. Furthermore, the authors would like to acknowledge the following experts for their valuable input during various stages of this research project (in alphabetical order): Catherine Bailey (King's Business School); Russell Belk (York University); Felix Eggers (Copenhagen Business School); Karen Elliott (Newcastle University); Michael Frese (Asia School of Business; Leuphana, University of Lueneburg); Mauro Gotsch (University of St Gallen); Erik Hermann (IHP Leibniz Institute); Cristina Mihale-Wilson (Goethe University Frankfurt); Irene Ng (University of Warwick); Daniele Scarpi (University of Bologna); Jeroen Schepers (Eindhoven University of Technology); Karim Sidaoui (Radboud Universiteit); Mahesh Subramony (Northern Illinois University); Małgorzata Suchacka (University of Silesia in Katowice); Christopher S. Tang (UCLA Anderson School of Management); Zsofia Toth (Durham University); Gaby Odekerken-Schroeder (Maastricht University); and Simona Š. Žižek (University of Maribor).

We are also grateful to the following industry experts who spent time to discuss CDR in practice with the author team. They are (in alphabetical order): Ian Barkin (entrepreneur in call center automation and RPA); Pascal Bornet (Chief Data Officer, Aera Technology); Elisa Janiec (General Manager, Taiwan, Uber Eats); Sue Keay (Robotics Technology Lead, Oz Minerals and Chair of Board of Directors, Robotics Australia Group); Joanna Marsh (General Manager, Innovation and Advanced Analytics, Investa Property Group); and Ryan van Leent (Vice President, SAP Global Public Sector).

Finally, this research was presented at a number of conferences and seminars (in chronological order): the Service Innovation Alliance Digital Transformation Summit in 2020 in Brisbane (Australia), the Naples Forum on Service 2021 in Naples (Italy), the 17th International Research Seminar in Service Management, La Londe Conference (2022) in Porquerolles (France), the 12th SERVSIG Conference (2022) in Glasgow (UK), the 2022 Frontiers in Service Conference in Boston (USA), and the Journal of Interactive Marketing Workshop on Information Technologies and Consumers’ Well-Being (2022) in Bologna (Italy). The authors are grateful to the organizers and participants for the lively discussions, comments and ideas generated during and after these presentations.

Author Contributions

All authors contributed equally to this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
